Abstract

Misconceptions about psychological phenomena are prevalent among students completing college-level psychology courses. Although these myths are often difficult to eliminate, efforts incorporating a refutational focus have demonstrated some initial promise in dispelling these beliefs. In the current quasi-experimental study, four sections of an online undergraduate Abnormal Psychology course (n = 113 total students) were randomly assigned to receive either a myth-debunking poster assignment or class as usual. Students in the myth-debunking sections were assigned one of five mental health-focused myths and corresponding refutational readings to guide their development of posters aimed at informing their classmates about the misconception, disputing the misconception, and citing relevant evidence as support. Beliefs about common misconceptions (five directly addressed in the assignment and five filler myths) were measured at the beginning and end of the semester. Results indicated that students in the myth-debunking condition were significantly (p < .001, d = 1.09) more likely to know the truth, at the conclusion of the course, compared to the control group. Overall, the myth-debunking intervention appears to have been effective at reducing students’ misconceptions about popular psychological myths, perhaps even some non-targeted psychological misconceptions.

Introduction

Many people hold the erroneous belief that psychological knowledge is largely based on common sense (Lilienfeld, 2012). As a corollary, additional misconceptions about psychological phenomena are widespread and frequently endorsed by the general public (Furnham, 2018; Furnham & Hughes, 2014) including college students (Gaze, 2014; Lyddy & Hughes, 2012). Among the various topics within psychology, misconceptions about mental illness rank as the most commonly endorsed (Gardner & Brown, 2013). Unfortunately, completing an introductory psychology course may be an inadequate means of reducing misconceptions, as data have suggested mistaken beliefs often persist at the conclusion of those courses (Kuhle, Barber, & Bristol, 2009). Generally, research has shown that misconception endorsement appears to diminish as the number of psychology courses taken increases (Arntzen, Lokke, Lokke, & Eilertsen, 2010; Hughes et al., 2015; Taylor & Kowalski, 2004). However, optimism for this pattern should be restrained as upper-division psychology students have been shown to still endorse a large percentage of misconceptions (Gaze, 2014) as well as even revert back later in their education to endorsing popular myths (Lyddy & Hughes, 2012).

There is growing interest in how psychological misconceptions develop in students, in addition to concern over the potential consequences of holding such beliefs (Bensley & Lilienfeld, 2017; Hughes, Lyddy, & Lambe, 2013a). Importantly, the number of misconceptions students endorse has been shown to be negatively associated with course grade (Kuhle et al., 2009) with some evidence to suggest this relationship is moderated by critical thinking ability (Taylor & Kowalski, 2004). Some scholars have reasoned that holding these erroneous beliefs impedes learning and makes subsequent instruction about scientifically based psychological information more challenging (Chew, 2006). Moreover, Lilienfeld, Lynn, Ruscio, and Beyerstein (2010a) contend that, without making a point of disconfirming misconceptions, students are vulnerable to misinformation spread by the popular psychology and self-help industry and may be potentially harmed by some decisions and behaviors based upon these erroneous beliefs (e.g., misallocation of finances/time, foregoing vaccinations).

Our sense of how truly prevalent misconceptions are has been limited by the approaches taken to measure them, with increasing attention directed at the potential impact of response format and wording of test items (e.g., Bensley, Lilienfeld, & Powell, 2014; Gardner & Brown, 2013; Hughes, Lyddy, & Kaplan, 2013b). Research in this area has often relied upon a binary true–false (T/F) format (e.g., LaCaille, 2015; Taylor & Kowalski, 2012), which requires respondents to choose between completely true and completely false. Consequently, this response format may not adequately reflect the strength of the belief or the likelihood of affirming the misconception. In fact, Hughes et al. (2013b) systematically varied item response choices and found that when presented with a 7-point scale (strongly disagree to strongly agree), versus a true–false format, students were more likely to endorse the misconception. Another study (see LaCaille, 2015) recently highlighted this issue by noting that 42% of introductory psychology students agreed with the “most people only use 10% of their brain” misconception when using a true–false format, in contrast with 71% of the students affirming the myth when queried with a 6-point scale (definitely true to definitely false). Thus, the binary response format appears less sensitive in detecting the true rate of endorsement. In addition to the binary true–false response format, items have routinely been worded such that the correct answers to the misconception statements in the questionnaire are either all false or all true (e.g., Furnham, 2018; Gardner & Dalsing, 1986; McCarthy & Frantz, 2016), which may make the instrument susceptible to response bias and demand characteristics. Given these limitations, continued attention directed toward improved myth measurement is warranted.

Interest in recognizing and exposing these misconceptions has grown recently, as evidenced by the publication of 50 Great Myths of Popular Psychology: Shattering Widespread Misconceptions About Human Behavior (Lilienfeld, Lynn, Ruscio, & Beyerstein, 2010b), which takes a refutational approach (i.e., misconception is activated, then refuted with evidence) to myth debunking. Notably, the use of refutational readings and/or lectures has been shown to reduce myth endorsement in both controlled experimental (Lassonde, Kendeou, & O’Brien, 2016) and classroom settings (Kowalski & Taylor, 2009, 2017). Myth-debunking efforts and assignments (e.g., “PsychBusters”; Blessing & Blessing, 2010) have also been designed to enlist students in the process, with some evidence of these interventions improving students’ ability to think more critically about information. More recently, Lassonde, Kolquist, and Vergin (2017) involved students in developing refutational posters based on the Lilienfeld et al. (2010b) book, and then assessed the impact of a brief presentation of these on a small group of volunteer students’ misconception knowledge. Large effects for reduced myth endorsement were detected for the poster intervention immediately following exposure as well as 7–10 days later. Unfortunately, the myth items in the instrument were all worded as false and may be susceptible to response-set bias. Moreover, the potential effects on misconception knowledge for the students developing the posters were not assessed.

LaCaille (2015) similarly engaged upper-level students in creating refutational-based posters and presenting these to students outside of the course, though these were displayed on lecture hall walls and rotated over a 5-week span rather than via accompanying oral presentations. Students exposed to the posters showed an improvement in misconception knowledge over the semester as well as relative to a control group. Notably, the students who created the posters demonstrated large effects for misconception knowledge compared to other students uninvolved with poster development (i.e., introductory psychology students, upper-division comparison group) and reported enjoyment and value in the assignment. The psychology myth-debunking assignment that follows in the current study was, in part, modeled upon this poster intervention as well as public-service-announcement-style messaging (e.g., flyers, posters), but targeted mental health misconceptions because of their noteworthy prevalence. The posters were designed to be refutational in nature and based upon text found within Lilienfeld and colleagues’ (2010b) book. Students were encouraged to be highly involved with the assignment by providing peer feedback during the poster development process as well as by incorporating this feedback in a later version of the poster. It was anticipated that such an approach would facilitate more engagement and deeper processing of information among students, and ultimately improve revision of prior knowledge and reduction of misconception endorsement.

Purpose

Collectively, it appears that students often endorse psychological misconceptions, and that these beliefs may be somewhat resistant to change, depending upon the pedagogical strategies utilized. Recent efforts to incorporate refutational-focused strategies, particularly via myth-debunking posters (e.g., LaCaille, 2015; Lassonde et al., 2017), have demonstrated some initial evidence for reductions in myth endorsement among students. Our research extends and strengthens previous evaluations by utilizing a 6-point Likert-type scale to measure misconceptions, targeting a set of mental health myths within an Abnormal Psychology course, and including multiple sections over the span of 2 years, alternating with a control group. Moreover, we explored the potential change in non-targeted myths as an additional quasi-control and examined all of these components within the context of an online course format. Because of the asynchronous nature of interaction with the instructor and classmates in most online courses, as well as the importance of greater self-regulated learning (Broadbent & Poon, 2015), it may be particularly important to use innovative approaches to engage students with the course material and each other (Johnson & Aragon, 2003), potentially enhancing deeper processing, connectivity, and ultimately learning.

Because of recent studies showing some impact from refutational strategies, we expected that students participating in the myth-debunking assignment would experience a greater reduction in myth endorsement (i.e., greater knowledge of the truth and accuracy) following completion of the course and relative to a control group at post-test (Hypothesis 1). Given that research has suggested misconceptions are resistant to change and that students may revert back to believing myths, we did not expect to find any differences in endorsement of non-targeted myths for students completing the assignment over time or compared to a control group (Hypothesis 2).

Method

Participants

The sample was drawn from four sections of online undergraduate Abnormal Psychology courses at a midsized Midwestern university over the span of 2 years. Overall, 113 students (Age M = 21.3 ± 3.2 yrs., 84.1% female, 93.8% Caucasian, cumulative GPA M = 3.25 ± 0.49) initially enrolled in and completed the course and the measures. Thirteen (10.3%) other students were enrolled in the course, but did not complete either the course or sufficient measures to be included in the analyses. Attrition from the classes was balanced, such that seven students did not complete the intervention sections and six students did not complete the control sections.

Design, Courses, and Pedagogical Intervention

This study used a quasi-experimental design with students in either the intervention (n = 57) or standard class control condition (n = 56). The order of the conditions was counterbalanced over the four terms that the courses were offered to control for potential season/term effects. The Abnormal Psychology classes were taught online using the Moodle platform and had identical textbooks. The standard and intervention courses each included weekly quizzes, three unit exams, a cumulative final exam, and six written assignments—several of which focused upon case studies and relevant diagnostic criteria (which are excluded from the current discussion and analyses). Thus, the two courses were similarly structured in all aspects, with the exception of two assignments. That is, the intervention group completed the myth-debunking assignment, whereas the control group worked on an assignment that involved viewing five brief videos about study skills followed by responding to questions designed to encourage reflecting upon a previous exam in the course. The assignment in the control class did not address psychological misconceptions. Some topics relevant to mental health misconceptions (e.g., suicide, electroconvulsive treatment) were covered within the textbook that was used in both the standard instructional and intervention courses. However, with the exception of three sentences refuting the mental illness and violence misconception, surprisingly none of the misconceptions were explicitly addressed in the course textbook.

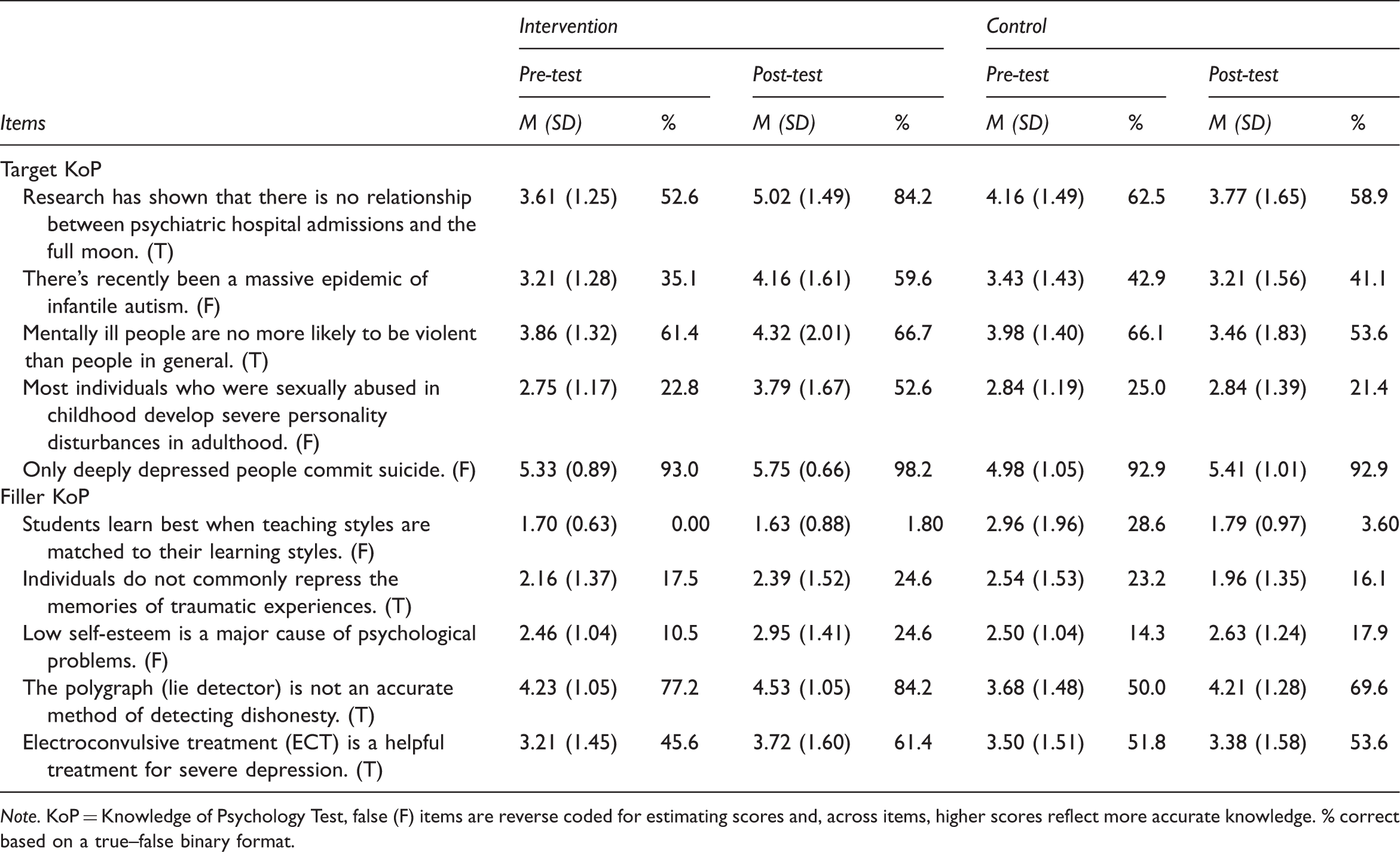

Table 1. Accuracy with Knowledge of Psychology Items by Condition.

Note. KoP = Knowledge of Psychology Test, false (F) items are reverse coded for estimating scores and, across items, higher scores reflect more accurate knowledge. % correct based on a true–false binary format.

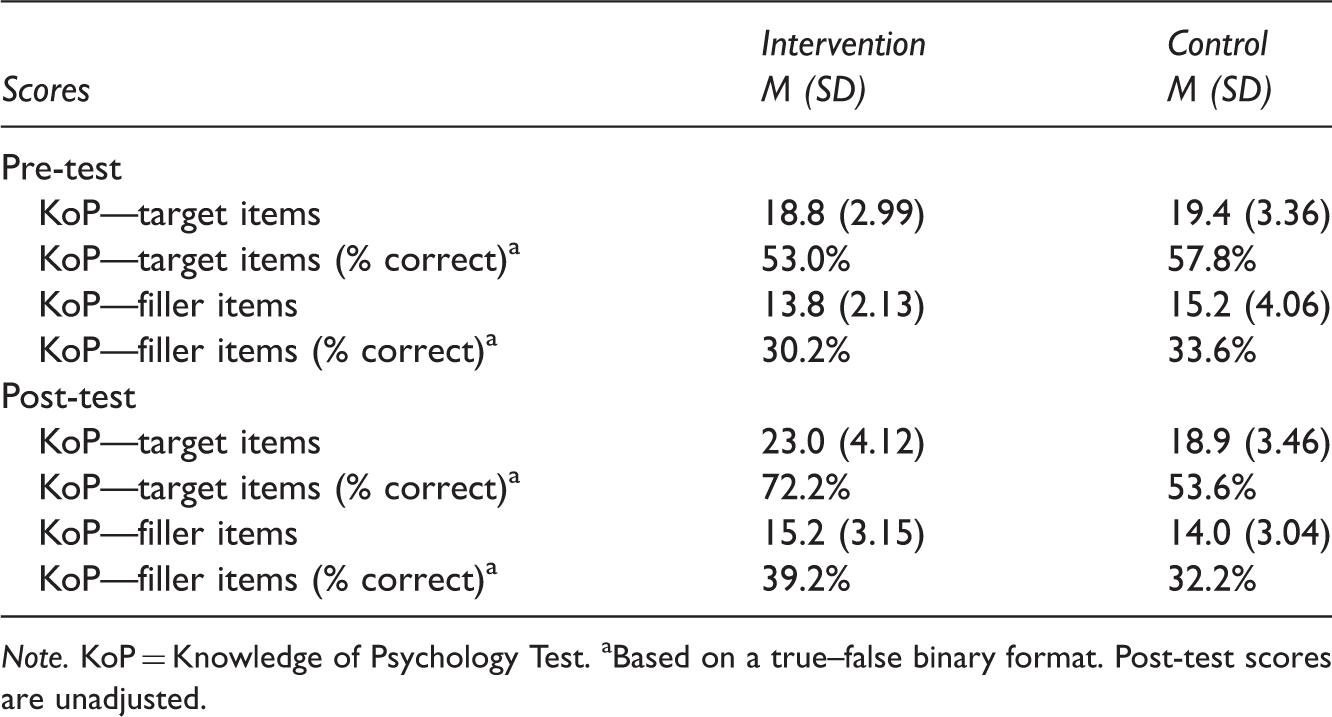

Table 2. Knowledge of Psychology Scores by Condition.

Note. KoP = Knowledge of Psychology Test. (a) Based on a true–false binary format. Post-test scores are unadjusted.

The instructor assigned each student to cover one of the five myths and then placed students into groups of five or six students, such that each group contained at least one student covering each myth. Thus, every student in the intervention condition had exposure to all five myths via either their own poster or from reviewing other students’ posters. The refutational-style readings were posted in the online intervention class at the same time as the assignment guidelines (about one week before the assignment was due). The readings were assigned directly from Lilienfeld and colleagues’ (2010b) book, 50 Great Myths of Popular Psychology: Shattering Widespread Misconceptions About Human Behavior, which served as the refutational text for this assignment because each reading explicitly activated the myth, clearly debunked it, and provided evidence supporting the correct information. The interested reader may see Kowalski and Taylor (2009) for additional clarification between standard and refutational text. Readings for each of the myths were approximately three to four pages in length, and students were expected to read about all five myths. In addition, students were given a brief reading, The Debunking Handbook (Cook & Lewandowsky, 2011), to alert them to potential backfire effects and how to develop more effective myth-debunking posters. These two documents were students’ only specific sources of myth debunking refutational information related to the assignment and the basis for their posters and feedback to each other. In order to give accurate peer feedback about poster content, reading about all of the myths was necessary. However, the degree to which students fully read about each myth could not be ascertained.
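The grouping constraint described above (one myth per student, groups of five or six, every myth represented in every group) can be illustrated with a short sketch. This is not the instructor's actual procedure, which the article does not describe; the function name `form_groups` and the placeholder myth labels are hypothetical, and the round-robin scheme is only one simple way to satisfy the constraint.

```python
# Hypothetical labels; the article does not list all five targeted myths here.
MYTHS = ["myth_1", "myth_2", "myth_3", "myth_4", "myth_5"]

def form_groups(students, myths=MYTHS):
    """Assign one myth per student and build groups that each cover every myth.

    Myths are handed out round-robin. Each consecutive block of len(myths)
    students becomes one group, and any leftover students are added one per
    group, so group sizes are len(myths) or len(myths) + 1.
    """
    k = len(myths)
    n_groups, extras = divmod(len(students), k)
    if n_groups == 0 or extras > n_groups:
        raise ValueError("class size cannot be split into groups of k or k + 1")
    groups = [[] for _ in range(n_groups)]
    for i, student in enumerate(students):
        myth = myths[i % k]
        if i < n_groups * k:
            group_index = i // k            # full blocks: one group per block
        else:
            group_index = i - n_groups * k  # leftovers: one extra per group
        groups[group_index].append((student, myth))
    return groups

roster = [f"student_{i}" for i in range(28)]  # made-up roster for illustration
groups = form_groups(roster)
```

With 28 students and five myths, this produces five groups (three of six students and two of five), and each group contains at least one student for every myth.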

Each of the selected myths was also explicitly debunked in student-generated posters that were based upon the refutational assigned readings. That is, students activated the misconception, clearly refuted it, and provided more factual information to replace the myth (see Appendix A for an example of the myth-debunking posters). A PowerPoint template was given to the students to create the 18 x 24-inch poster; beyond this, students were free to alter the appearance and text on the template to suit their messages. When both constructing their posters and providing specific feedback, students were encouraged to focus on the following factors:

- Accuracy of information (based on the 50 Great Myths of Popular Psychology)
- Clarity of information and layout (based on guidelines in The Debunking Handbook)
- Use of images or effects to supplement the message and help clarify concepts
- Message captured attention while being sensitive to diverse perspectives and potentially someone who may be affected by this misconception
- Free from misspellings, typos, or blurry/poor-quality images

Procedures and Measure

All students completed a set of questionnaires at the start and end of the course. At the start of the semester, questionnaire items inquired about background information (e.g., age, cumulative grade point average (GPA)) as well as students’ knowledge of psychology (i.e., identification of misconceptions). At the end of the course, approximately 5–6 weeks following completion of the myth-debunking assignment for the intervention group, the students again completed an assessment of their knowledge of psychology.
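The table notes indicate that false-keyed items were reverse coded so that higher scores reflect more accurate knowledge, and that percent-correct values were derived from a true–false binary version of the responses. A minimal sketch of such scoring follows. It rests on two assumptions that the article does not state: that raw ratings run from 1 (definitely false) to 6 (definitely true), and that an accuracy score in the upper half of the scale (4 to 6) counts as correct in the binary conversion.

```python
SCALE_MIN, SCALE_MAX = 1, 6  # 6-point response scale (direction assumed)

def accuracy_score(rating, keyed_true):
    """Return an accuracy score where higher always means more accurate.

    Items keyed true keep the raw rating; items keyed false are reverse
    coded (1 <-> 6, 2 <-> 5, 3 <-> 4).
    """
    if not SCALE_MIN <= rating <= SCALE_MAX:
        raise ValueError("rating outside the response scale")
    return rating if keyed_true else SCALE_MIN + SCALE_MAX - rating

def is_correct(score):
    """Binary conversion (assumed cutoff): upper half of the scale is correct."""
    return score > (SCALE_MIN + SCALE_MAX) / 2

def percent_correct(scores):
    """Percentage of accuracy scores that count as correct."""
    return 100 * sum(is_correct(s) for s in scores) / len(scores)

# Made-up ratings for one false-keyed item, for illustration only.
raw = [1, 2, 5, 6]
scores = [accuracy_score(r, keyed_true=False) for r in raw]
```

Under these assumptions, a respondent who rates a false statement as 2 receives an accuracy score of 5 and is counted as correct in the binary format.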

Results

Independent samples t-tests and Chi-square tests were used for initial group comparisons of equivalency. Hypothesis-driven ANCOVAs, controlling for baseline knowledge of psychology scores, were performed on the post-course knowledge of psychology scores. We also examined effect size estimates (Cohen’s d) to determine the actual magnitude of differences for the comparisons, with the effect sizes generally interpreted as being small (d = 0.2), moderate (d = 0.5), or large (d = 0.8).
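For readers less familiar with the effect size reported here, the sketch below computes Cohen's d for two independent groups using the pooled standard deviation. The article does not specify which variant of d was used (for example, whether it was based on adjusted or unadjusted means), so this is the textbook form rather than a reproduction of the authors' calculation; the function name and data are illustrative.

```python
from math import sqrt
from statistics import mean, variance

def cohens_d(group_a, group_b):
    """Cohen's d: mean difference divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    # Pool the sample variances, weighting each by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)

# Made-up scores, for illustration only.
d = cohens_d([2, 4, 6], [1, 3, 5])
```

In this toy example the groups differ by one point and share a standard deviation of two, so d is 0.5, a moderate effect by the benchmarks cited above.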

No differences (ps > .05) were observed between the intervention and control conditions on age, gender, ethnicity, or cumulative GPA. Moreover, no statistically significant differences (ps > .05) were detected on baseline characteristics between students completing the courses (n = 113) and those not completing the course or sufficient measures (n = 13) to be included in the post-course comparisons.

Knowledge of Psychology

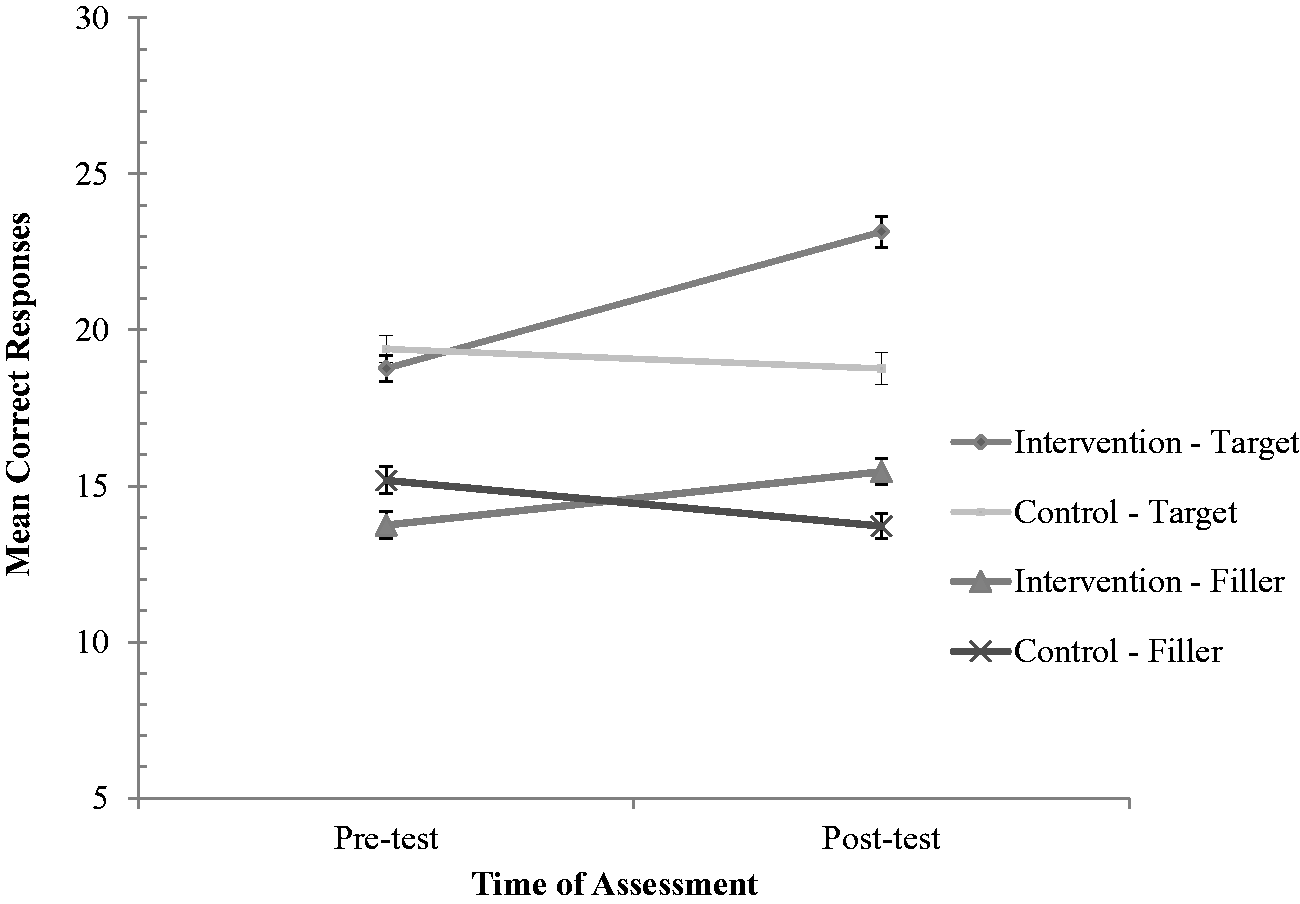

As seen in Figure 1 and Table 2, students in the intervention group showed significantly greater accuracy of psychological knowledge, based on the misconceptions targeted by the assignment, at the end of the course, F(1, 110) = 40.4, p < .001.

Figure 1. Mean correct knowledge of psychology scores for the intervention and control groups, adjusted for pre-test scores. Standard errors are represented by error bars at each point.
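The adjusted means and the F test for the group effect come from an ANCOVA with the baseline score as covariate. The sketch below shows how these quantities can be computed for two groups with one covariate, using the standard pooled within-group slope. It is an illustration under that textbook formulation, not the authors' analysis script; the function names and data are hypothetical. The error degrees of freedom are N − 3, which for N = 113 gives the 110 reported in the text.

```python
from statistics import mean

def _cross(x, y):
    """Sum of cross-products of deviations from the means."""
    mx, my = mean(x), mean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y))

def ancova_two_groups(pre_a, post_a, pre_b, post_b):
    """One-covariate ANCOVA for two groups.

    Returns (adjusted mean A, adjusted mean B, F for group, error df).
    """
    # Pooled within-group sums of squares and cross-products.
    sxx_w = _cross(pre_a, pre_a) + _cross(pre_b, pre_b)
    sxy_w = _cross(pre_a, post_a) + _cross(pre_b, post_b)
    syy_w = _cross(post_a, post_a) + _cross(post_b, post_b)
    slope = sxy_w / sxx_w  # common within-group regression slope

    pre_all = list(pre_a) + list(pre_b)
    post_all = list(post_a) + list(post_b)
    grand_pre = mean(pre_all)

    # Shift each group's post-test mean to the grand pre-test mean.
    adj_a = mean(post_a) - slope * (mean(pre_a) - grand_pre)
    adj_b = mean(post_b) - slope * (mean(pre_b) - grand_pre)

    # Residual sums of squares with and without the group term.
    sse_full = syy_w - sxy_w ** 2 / sxx_w
    sse_reduced = (_cross(post_all, post_all)
                   - _cross(pre_all, post_all) ** 2 / _cross(pre_all, pre_all))
    df_error = len(pre_all) - 3  # N minus intercept, covariate, and group
    f_group = (sse_reduced - sse_full) / (sse_full / df_error)
    return adj_a, adj_b, f_group, df_error

# Made-up data, for illustration only.
result = ancova_two_groups([1, 2, 3], [2, 4, 3], [1, 2, 3], [1, 0, 2])
```

Because the two toy groups have identical pre-test means, the adjusted means equal the raw post-test means (3 and 1); with unequal baselines, the adjustment moves each mean along the common slope.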

In terms of magnitude, the difference between the intervention and control groups was large (d = 1.09). This pattern was robust to the response format (i.e., when items were converted to a true–false binary format), F(1, 110) = 25.9, p < .001, d = 0.90, with the intervention group correctly identifying 72.2% of the items and the control group correctly identifying 53.6% of the items. Moreover, the intervention group showed a nearly 20% improvement from the beginning of the course, whereas the control group did not show an improvement (4% decline in scores). Three of the targeted myths demonstrated improvements of 25% or greater (see Table 1) for the students in the intervention condition. No such gains were observed in the control group for any of the corresponding myths. Because we noticed a high rate of knowing the truth about one of the misconceptions (“Only deeply depressed people commit suicide,” Correct M = 5.16 ± 0.99, 92.3% correct across both groups) at baseline, we adjusted the measure to exclude that item and compared the groups again. Importantly, the previous pattern persisted, F(1, 110) = 38.1, p < .001, d = 1.05.

We compared the student groups on their post-course myth filler scores, and unexpectedly found that there was a significant difference, F(1, 110) = 9.79, p = .002, between the intervention and control groups (See Figure 1 and Table 2). The students in the myth-debunking sections performed moderately better (d = 0.40) on these filler items at the end of the course than did those in the control condition. However, an independent samples t-test, t(111) = 2.33, p = .02, d = 0.44, demonstrated the control condition students scored somewhat higher than the intervention group at the beginning of the semester. We again examined the effect for the alternate true–false binary format and found a similar significant group difference, F(1, 110) = 5.51, p = .02, d = 0.36, at post-course. The scores at the beginning of the semester, however, were not significantly different, t(111) = 0.90, p = .37, d = 0.17, between the two conditions. As can be seen in Table 2, the scores for the baseline and final scores differed by about 3% and 7%, respectively, between the two conditions. Individual filler item inspection revealed that scores varied greatly between items (e.g., 0% to 84.2%; see Table 1), but much less so within items from baseline to the end of the semester.

Discussion

The primary goal of the myth-debunking assignment was to dispel common mental health misconceptions held among students in an online undergraduate Abnormal Psychology course. We found that students in the assignment condition reported more accurate knowledge, with regard to the targeted myths, than the control group at the end of the course and showed an improvement over their baseline scores on the targeted myths. These findings are supportive of more recent efforts to engage students in myth debunking with poster assignments (e.g., LaCaille, 2015; Lassonde et al., 2017). The current examination of myth-debunking posters differs from previous efforts in that it targeted mental health-related misconceptions, which are a frequent area of erroneous beliefs (Gardner & Brown, 2013; Lilienfeld et al., 2010b). Importantly, increasing individuals’ accurate knowledge about mental health may also reduce negative stereotypes and stigma attitudes (Burns et al., 2017). The findings also demonstrate the viability of using a refutational-based assignment in an online class and its pedagogical effectiveness in reducing students’ beliefs in psychological misconceptions.

Even though effects were notable in the current pedagogical intervention, not all targeted mental health myths were equally influenced. Surprisingly, there was a high rate of accuracy in our sample at baseline for one of the myths (“Only deeply depressed people commit suicide”). Despite this knowledge, modest gains on this item were still observed for the intervention group relative to the control group (d = 0.40, 5.2% vs. 0.0%, respectively). We also found only modest gains for another myth (“Mentally ill people are no more likely to be violent than people in general”) that was initially endorsed by nearly 40% of the students. Interestingly, students in the myth-debunking intervention showed an improvement, whereas those in the control condition endorsed the misconception more strongly at the end of the course (5.3% improvement vs. 12.5% deterioration). This myth may prove more challenging to refute for individuals, even for students in an abnormal psychology course, due to media attention involving mass shootings and mental illness (Ross, Morgan, Jorm, & Reavley, 2019) or backfire effects secondary to existing belief systems (Lewandowsky, Ecker, Seifert, Schwarz, & Cook, 2012).

Although the targeted myths were generally affected by the debunking assignment as we had anticipated, the findings from the filler items, which served as a quasi-control, presented a more complex pattern. For instance, regardless of condition, students appeared to have more accurate misconception knowledge of the targeted myths at the start of the course. Unexpectedly, students in the myth-debunking sections performed moderately better at the end of the course on the filler items. Although speculative, perhaps this general effect was triggered by students in the myth-debunking assignment examining some assumptions more critically and revising their knowledge of these misconceptions. Such a possibility is consistent with Blessing and Blessing’s (2010) finding that a myth-debunking assignment improved students’ ability to think more critically about information. However, accuracy varied considerably among the filler items, with more notable gains observed in some items. For instance, accuracy about electroconvulsive treatment appeared to differentially improve in the assignment condition (15.8% gain vs. 1.8% gain), even though the textbook only provided a description of its use and application for treating depression. Other filler items, such as the polygraph misconceptions, provide more ambiguous patterns between and within the groups and may reflect a measurement artifact that is in need of further study over time. Overall, however, some items suggest that unless explicitly refuted, the misconception endures at a rather high rate. The myth about learning styles and teaching represents this perseverance well, as more than 95% of students across both conditions continued to believe this at the conclusion of the course. Perhaps it is not surprising such a high rate of students subscribe to this particular myth, as data have pointed out that the misconception continues to thrive within the pedagogical literature, despite being repeatedly discredited by empirical research (Newton, 2015).

Recent scholarship has emphasized the influences of response format (and language) when developing misconception measures and subsequently interpreting findings from these data (Hughes et al., 2013a; Taylor & Kowalski, 2012). As a result, we attempted to improve on previous efforts of examining and debunking myths by measuring beliefs using a 6-point scale rather than a binary true–false format. Such an approach makes it more evident that even though students may still endorse the misconceptions after the intervention, they may not be as confident in their endorsements. We also attempted to reduce the risk of response bias and demand characteristics by counterbalancing the correctness of the statements so that they were not worded as all false or true. However, some of our wording of correct information in the negative (e.g., “Individuals do not commonly repress the memories of traumatic experiences”) may have proved to be more ambiguous to students than anticipated and could have inadvertently influenced responding to the measure. That said, in our sample, belief in psychological misconceptions at the beginning of the semester generally ranged from about 40% to 70%, which appears largely consistent with rates reported elsewhere (e.g., Hughes et al., 2013a). For those interested in broadly assessing misconceptions held among their students, we would encourage consideration of recent measures developed by Gardner and Brown (2013) or Hughes et al. (2013b). Another recent measure, which offers a more novel forced-choice response format that pits a misconception against a scientifically supported alternative, is the Test of Psychological Knowledge and Misconceptions (TOPKAM; Bensley et al., 2014).

Although we attempted to address limitations evident in past studies examining psychological misconceptions, it should be pointed out that the current intervention is not without limitations as well. We speculated, based upon past myth-debunking efforts (e.g., Blessing & Blessing, 2010), that the assignment may have improved students’ critical thinking, but there was no critical thinking instrument used for comparison of student groups. Future myth-debunking campaigns may want to consider including an assessment of critical thinking as an outcome, mediator, or moderator of pedagogical effects (for a more detailed examination of misconceptions and critical thinking, see Bensley et al., 2014). Additionally, our data and conclusions about the assignment’s effect are limited to a relatively brief follow-up period (i.e., 5–6 weeks following completion of the assignment) and we cannot speak to the impact of our myth-debunking assignment beyond the term of the course. Despite the notable effect sizes seen with the intervention students, it is possible these were not sustained long-term. Although it is challenging to collect data on pedagogical interventions beyond the semester’s conclusion, or assess misconception persistence and modification longitudinally, recent efforts have suggested that students report fewer misconceptions 1–2 years following an introductory psychology course (Kowalski & Taylor, 2017; McCarthy & Frantz, 2016). However, given the potential for students to revert back to holding psychological misconceptions (Lyddy & Hughes, 2012), assessing the durability of myth-debunking efforts is an important future direction.

It should be noted that assessment of pedagogical strategies with quasi-experimental designs has the potential for contamination between the intervention and standard courses (i.e., students discussing the content). Although it is possible some students in our standard course may have known of other students who had taken the course with the myth-debunking assignment, it seems an unlikely scenario that the students would take the time to request from them and read the refutational readings and posters. Moreover, comments from students did not provide any indication that they felt resentment about the assignments. Thus, contamination effects do not seem to be present, at least not on a scale that would threaten the internal validity of this study. Another limitation of the present investigation is that our design did not allow us to disentangle the relative contribution of the assignment components (i.e., refutational readings, poster development, small group discussion forum and feedback). Work examining refutational text and lectures in an introductory psychology course has suggested that the assigned text appeared to contribute little to the observed change in misconception endorsement (Kowalski & Taylor, 2009). Discerning the degree to which students read and engaged with the assigned text (refutational or standard), in that study as well as in ours, poses an added challenge in natural setting designs.

While our findings indicate it is possible to reduce mental health misconceptions in an online abnormal psychology course with a myth-debunking assignment, it is important to keep in mind that the rates among the myths still suggested a high level of erroneous beliefs and the need for continued efforts at knowledge revision. Thus, students may benefit from myth debunking and refutational teaching methods incorporated throughout the curriculum, beginning with introductory psychology. Bernstein (2017) recently proposed an intriguing revision to students’ first encounter with the psychology curriculum; replace the standard entry-level introductory psychology with a course entitled “Myths and Illusions about Human Behavior in Everyday Life.” Such a course would emphasize more actively engaging students to interact with refutational information and, as a consequence, develop critical thinking skills and alter their perceptions of psychology as an empirical science rather than merely an endeavor in common sense. Given the current state of affairs with the spreading of misinformation, we believe that such an emphasis in our pedagogy would not only benefit future psychologists, therapists, and classroom educators, but more generally be of value by inoculating our students who are to become members of our citizenry.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.