Abstract

Writing has long been recognized as both an outcome and method of successful pedagogy in psychology. Accordingly, there are a number of methods that successful instructors have employed to teach psychology students how to write. One such method is to facilitate students’ reviewing each other’s written work (i.e., to engage in peer review), although the research on this as an efficacious classroom intervention has thus far been limited. In this study we examine the benefits of implementing student reviews of peers’ written work in a senior-level undergraduate psychology course (N = 59). Results suggest that the more critical students were of their peers’ writing, the higher their grades were on their own writing, an effect that persisted when controlling for grades on previous written assignments and the effect of feedback received from peers on their written work. These findings extend previous research on the effect of student peer reviewing and highlight the utility of implementing peer review in the psychology classroom.

Engaged learning has been recognized as a touchstone of successful acquisition of knowledge and skills related to psychology (McGovern, 2002; McKeachie, 1999, 2002; Miserandino, 1999). One commonly used method of engaged learning is writing, which is not only a means of learning, but also a useful skill in and of itself (Noodine, 1999; for historical review see Haswell, 2008). Discerning ways to improve teaching students how to write has thus remained an active area of pedagogical research.

Master teachers of psychology have noted that writing is an effective way of learning about psychology (e.g., Noodine, 1999, 2002; Pinker, 2014). Indeed, a number of studies have suggested that prompting students to write both inside and outside the classroom leads to increased learning (e.g., Butler, Phillmann, & Smart, 2001; Radmacher & Latosi-Sawin, 1995). While this research suggests that writing may improve student learning about psychological content, writing activities did not necessarily improve the quality of writing in and of itself. To the contrary, teaching students to improve their writing is a difficult thing to do.

Some popular writers of fiction have suggested that reading the work of others is an effective means of improving one’s own writing (e.g., S. King, 2000; Prose, 2006). Such writers note that their reading is not passive, but close and critical such that they read not only to identify aspects of writing that they like and want to emulate (e.g., vocabulary, turns of phrase), but also things they want to avoid (e.g., excessive elaboration, poor sentence structure). These themes are consistent with the idea that successful writing entails a dialectic between writing and reading in which each reinforces the other (Elbow, 2000). Critical reading of others’ writing is also a method endorsed by psychologists interested in writing (e.g., Goddard, 2002; Pinker, 2014). For example, in her guidance on how to teach writing to psychology students, Noodine (2002) suggests that in addition to the instructor offering one or more model papers for students to emulate, students should be offered structured guidance for how to review papers and spend time reading and critiquing the writing of their peers.

There is some evidence suggesting that structured peer review of papers can be effective in improving students’ writing. Fallahi, Wood, Austad, and Fallahi (2006) found that peer review combined with didactic instruction on writing, formal feedback, and in-class practice was effective at improving writing skills for psychology students. Earlier research suggests that one possible mechanism by which student peer review might improve writing skill is that students tend to provide more critical feedback on their peers’ papers than course teaching assistants do (Kottke, 1988). Given that course instructors (or other course staff like teaching assistants who assign grades to students’ written work) are those who ultimately decide what “good” writing looks like (i.e., they, not students, control the standard of what a good paper is), these latter findings may suggest that critical peer reviews are more beneficial for the reviewer than for the reviewed, a finding that has also been demonstrated in subsequent studies (e.g., Cho & MacArthur, 2011; Li et al., 2010; Lundstrom & Baker, 2009). In other words, as writers who have written about their writing process (e.g., S. King, 2000) have suggested, it may be that the more critical students are in their reviews of peers’ papers, the more attentive they are to their own writing. However, there is insufficient evidence demonstrating this empirically.

Current Study

In this study we examined the influence of peer review on students’ writing. We hypothesized that the more critical students were in their review of other students’ paper drafts, the better the grades on their (the reviewers’) papers would be.

Method

Participants

Participants in this study were students in a senior-level undergraduate course on child psychopathology (N = 59) at a large Midwestern university. The class met twice weekly (80 minutes per class) for 15 weeks. Students had a mean age of 22 years and were predominantly female (n = 48, 81%). Demographics of the students in the class were as follows: 86% White, 7% Asian, 2% Black, 5% Multi-racial or Other. The mean self-reported grade point average (GPA) of students participating in this study was 3.37. All students included in this study provided consent for their data to be used, and all study procedures were approved by the local Institutional Review Board.

Measures

Student papers

As the course was focused primarily on developmental influence on and diagnosis of child psychopathology, and secondarily on the development of writing skills, the primary assessment method of the course consisted of written papers (4–6 pages each) on the theory of and diagnostic application of theory to the development of child psychopathology. The first paper students wrote was primarily a test of content knowledge: students were asked to compare and contrast two theoretical approaches used in the clinical formulation of child psychopathology (e.g., classical psychoanalysis, cognitive behavior therapy). The second paper was a test of application: students used a theory discussed in the class to interpret and diagnose a character or family system from a novel, A. King’s (2014) Reality Boy. For both papers, the course instructor provided students with a scoring rubric, which allocated 30 points across five domains of grading (introductory paragraph, body, concluding paragraph, references, writing quality). For each paper, the course instructor also provided a model paper for students to use as an example (a comparison of psychodynamic and family systems theories for the first paper and an analysis of Shriver’s [2003] We Need to Talk About Kevin as material for the second paper), which the course instructor reviewed using the respective rubrics in class when each paper was assigned as part of didactic instruction on how to write.

In-class peer review workshops

Prior to submission of the final draft of each paper, students participated in a peer review workshop in which each student printed out a copy of his or her semi-final paper draft to be peer reviewed. The format for each of the two workshops was the same: students broke up into 3–4-student groups, read and critiqued their peers’ papers (2–3 each), and in turn had their papers read and critiqued by these peers (2–3 each). As students read their peers’ papers, they graded them using the grading rubric provided by the course instructor. The rubric was specific, consisting of 17 grading areas (worth between one and four points each) grouped into five categories (introductory paragraph, body, concluding paragraph, references, and writing quality; see Appendix). The instructor advised students to be as judicious as possible in assigning points across these grading areas and as constructively critical as possible, trying to grade papers just as the instructor or teaching assistant would. In addition to assigning points as per the rubric, students were also asked to write subjective comments about the paper. After reading and grading peers’ papers, students reviewed their completed grading rubric and subjective comments with their peers. Each workshop lasted one full class period.

Student paper proposals

Prior to each peer review workshop, students submitted a 2–3-page proposal for each paper in which they described their general approach to understanding and applying the theory(ies) in the paper. The paper proposals were graded by the course instructor and teaching assistant but were not included in the writing workshops.

Data Analysis

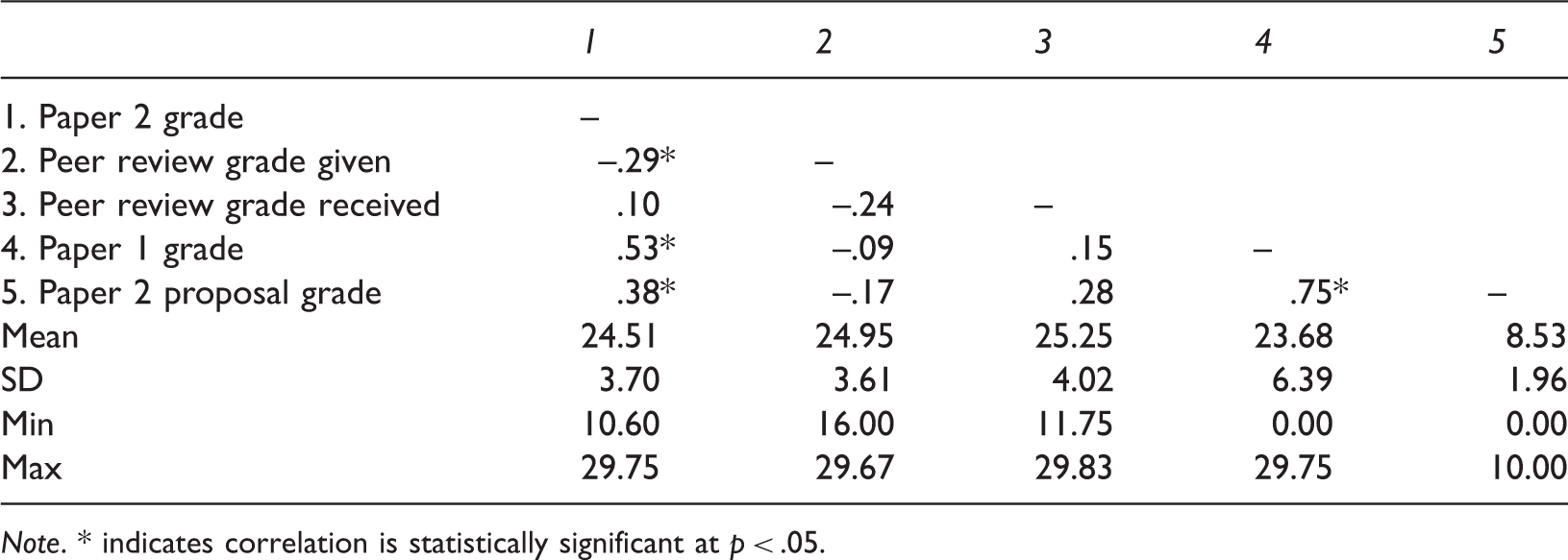

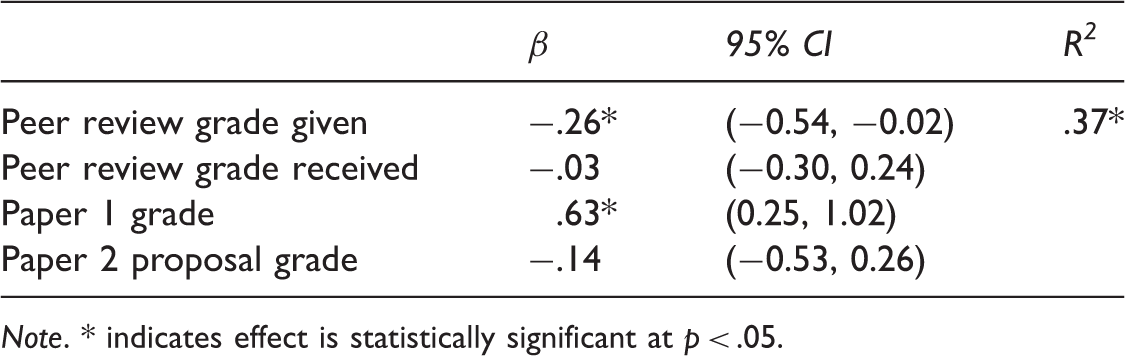

We tested our hypothesis using multiple regression. The dependent variable in the regression model was the final grade on the students’ second paper and the primary predictor was the informal grade students gave to their peers in the in-class peer review workshop. We also entered into the model the informal grades students received from peers during the workshop (to control for help given from peers), students’ final grades on their first paper (to control for previous writing quality), and their grades on the proposal for the second paper (to control for clarity of thought going into the assignment). Prior to computing the multiple regression model we conducted a preliminary analysis of variables in the model using bivariate correlations. Because students graded and received grades from more than one peer during each workshop (2–3 peers each), we used the mean of grades given/received in our analyses. We converted all variables to standardized z-scores prior to analysis and entered all variables into the regression model simultaneously.
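The analysis steps described above can be illustrated with a minimal sketch. It is not the analysis code used in this study: all data below are randomly generated placeholders, the variable names and the proposal score scale are hypothetical, and ordinary least squares via NumPy is assumed as the estimation method. The sketch averages the 2–3 informal grades each student gave and received, computes bivariate correlations, converts all variables to z-scores, and enters all predictors simultaneously.

```python
import numpy as np


def zscore(x):
    """Standardize a 1-D array to mean 0 and SD 1 (sample SD)."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std(ddof=1)


def standardized_regression(y, predictors):
    """OLS on z-scored variables with all predictors entered simultaneously.

    `predictors` maps a name to a 1-D array. Returns name -> standardized beta.
    """
    names = list(predictors)
    X = np.column_stack([zscore(predictors[name]) for name in names])
    X = np.column_stack([np.ones(len(X)), X])  # intercept column
    coef, *_ = np.linalg.lstsq(X, zscore(y), rcond=None)
    return dict(zip(names, coef[1:]))  # drop the intercept


# --- Synthetic placeholder data (NOT the study's data) ---
rng = np.random.default_rng(0)
n = 59  # class size reported in the study

# Each student gives and receives 2-3 informal grades (30-point rubric);
# a missing third grade is coded as NaN.
given_raw = rng.normal(24, 3, size=(n, 3))
received_raw = rng.normal(24, 3, size=(n, 3))
given_raw[rng.random(n) < 0.5, 2] = np.nan
received_raw[rng.random(n) < 0.5, 2] = np.nan

# Use the mean of grades given/received, ignoring missing entries.
grades_given = np.nanmean(given_raw, axis=1)
grades_received = np.nanmean(received_raw, axis=1)

paper1 = rng.normal(25, 3, size=n)    # final grade, first paper
proposal2 = rng.normal(8, 1, size=n)  # proposal grade (hypothetical scale)
paper2 = rng.normal(25, 3, size=n)    # final grade, second paper (outcome)

# Preliminary analysis: bivariate correlations among all study variables.
corr = np.corrcoef(
    np.vstack([grades_given, grades_received, paper1, proposal2, paper2])
)

# Multiple regression predicting second-paper grades.
betas = standardized_regression(
    paper2,
    {
        "grades_given": grades_given,
        "grades_received": grades_received,
        "paper1": paper1,
        "proposal2": proposal2,
    },
)
for name, beta in betas.items():
    print(f"{name}: beta = {beta:.3f}")
```

Because every variable is standardized before fitting, the coefficients are standardized betas and can be compared across predictors on a common scale. With random placeholder data the printed values carry no substantive meaning.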

Results

Psychometric Information for and Correlations between Study Variables.

Note. * indicates correlation is statistically significant at p < .05.

Results of Multiple Regression Analysis Predicting Grades on Second Paper.

Note. * indicates effect is statistically significant at p < .05.

Discussion

In this study we examined the influence of peer review on students’ writing. We found that the more critical students were of their peers’ writing during an in-class peer review workshop, the higher their paper grades were even when controlling for their previous grades on written assignments and informal grades they received from peers during the peer review workshop. These results extend previous research on student peer reviewing and have implications for teaching writing to students of psychology.

Study findings suggest that peer reviewing can be an effective means of improving students’ grades on written assignments. These findings are consistent with previous research on student peer reviewing suggesting that (a) peer review is useful for improving student writing (Fallahi et al., 2006) and that (b) the degree to which students are critical during peer review is a possible mechanism for this improvement (Cho & MacArthur, 2011; Li et al., 2010; Lundstrom & Baker, 2009). Current findings extend those of previous studies by showing that it is the degree to which students are critical of their peers’ writing, rather than peers’ critiques of their writing, that drives the effectiveness of peer review. This finding is also consistent with findings that unfavorable feedback provided for developmental purposes is often not perceived by the receiver as useful and does not lead to a willingness to change behavior based on that feedback (Steelman & Rutkowski, 2004). In addition, that the effect of peer review persists despite controlling for students’ grades on previous writing assignments suggests that being more critical in peer reviews may help students become better writers (e.g., rather than more critical readers simply being better writers to begin with). This idea of self-improvement is further underscored by the finding that informal grades given during peer review are uncorrelated with official grades on previous written assignments.

These results have implications for pedagogical practice. The most obvious implication is that one way to facilitate self-improvement in writing is to get students engaged in critically reviewing the writing of their peers. A more subtle implication arises from a result that was not statistically significant: current findings suggest that although students benefitted from giving feedback on peers’ writing, they did not benefit from receiving feedback from their peers. This suggests that learning from peer review runs in only one direction and that there is thus work for instructors to do to get students to benefit from the feedback they receive from their peers rather than just using their peers’ work to help sharpen their own editorial skills. Providing the scoring rubric may therefore not be enough for a student to provide a critical review. Indeed, one study showed that even with a rubric, several (e.g., three or more, and optimally at least six) peer ratings are necessary to establish reliable peer reviews (Cho, Schunn, & Wilson, 2006). Research further suggests that certain techniques used by peer reviewers (e.g., localizing comments, focusing on higher vs. lower level writing issues) are more likely to improve future draft quality for those reviewed (Patchan, Schunn, & Correnti, 2016) and that explicit instruction to peer reviewers is often necessary to facilitate their utilization of these techniques (e.g., Baker, 2016; for meta-analytic review of instructional approaches to scaffolding peer review, see Hoogeveen & van Gelderen, 2013). There is also evidence that the best reviewers provide critical, problem-focused feedback (Patchan & Schunn, 2015), although students may need some training both on the usefulness of this kind of feedback and on how to resist the pull to be too lenient and reliant on praise in their reviews in order for reviewers to implement this feedback (Vinton & Wilke, 2011).
In addition, instructors may want to give students additional time after critiquing others’ work to reflect on their own paper and how to improve it based on the feedback they gave to others (Baker, 2016). Future research may also focus on the effect of feedback specificity (Goodman, Wood, & Hendrickx, 2004) and on having students take a strengths-based approach to delivering feedback on papers in addition to the weakness-based approach that is typically employed (Aguinis, Gottfredson, & Joo, 2012).

This study benefitted from a longitudinal design and from using relevant, real-world metrics of classroom behavior as variables in the study. However, this study also had limitations that point to directions for future research. Some of these limitations have to do with the study’s correlational design, which precludes causal interpretations of findings. For example, although our data suggest that reviewing peers’ written work leads to better writing on the part of the reviewers, this interpretation cannot be conclusive without comparison with a control group (e.g., a group in which students only read peers’ work without evaluating it). Another limitation to this study was that we examined only the quantitative feedback reviewers provided (e.g., final tabulated score on a rubric) and did not examine qualitative comments, either written (on the paper draft or rubric) or spoken (during the feedback session). Analysis of this latter form of feedback could not only yield rich contextual information, but also provide information about possible mediators of the effects observed. For example, one reason for the lack of benefit of receiving peer reviews may be that qualitative comments on the lowest quality drafts were out of the zone of proximal development of the students reviewed whereas comments on higher quality drafts were more easily integrated. Finally, and on a related note, in this study we did not measure other potential mediators of the effects of this study (e.g., overall GPA, level of motivation in general and for peer review in particular). Future research can thus improve upon and extend this study by examining possible alternative hypotheses and possible mediating/moderating influences on the effects found in this study using an experimental design including both quantitative and qualitative data.

Conclusion

In this study we examined the effect of peer review on students’ grades in a writing-focused, senior-level psychology course. Results suggest that the more critical students were when reviewing their peers’ writing, the better grades they received. These findings highlight the utility of peer review as a pedagogical tool in the psychology classroom.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.