Abstract

Psychology graduates are employed in a wide variety of workplace roles. The broad nature of these future workplace requirements can make it difficult for students to learn and apply classroom knowledge and challenging for educators to develop authentic and engaging materials. In research methods and statistics courses in particular it can be difficult to develop specific context exemplars. This article describes the design of a series of case studies and linked learning activities based on the experiences of psychology graduates in their real-world employment roles. These case studies were embedded in an undergraduate research methods and statistics course with the aim of improving engagement by more clearly articulating the link between classroom learning and future workplace roles. Student feedback suggests that the case studies were motivating and supportive of student learning; however, there was no evidence of an improvement in performance. This approach provides a rewarding, flexible template within which students and educators are able to explicitly discuss the relationship between current learning and future real-world roles.

Graduates from undergraduate psychology programs are employed in a broad range of roles—from health and carer careers, to education, public policy, and technical and financial professions (Graduate Careers Australia, 2015). These roles are often not “psychology” careers per se, but instead capitalize on generic skills acquired during the undergraduate psychology degree such as communication, information literacy and problem solving (Roberts, Heritage & Gasson, 2015). However, diversity in career outcomes can make it difficult for educators to design content- and context-specific materials, limiting students’ ability to engage in authentic learning through real-world problem-solving (Blumenfeld et al., 1991; Parsons & Ward, 2011; Willems & Gonzalez-DeHass, 2012). As potential professionals, students require a learning environment in which they can practice “sound judgement and appropriate professional action in complex, context-dependent situations” (Davis & Arend, 2013, p.213), through application that maps learning from theoretical framework to professional practice (Davis & Arend, 2013). The teaching challenge is how to facilitate authentic learning experiences for a student cohort destined for disparate working roles across a wide variety of professions.

These issues are particularly salient in psychology research methods and statistics courses. Research methods training, incorporating both understanding of research and statistical expertise, is the core of undergraduate psychology programs internationally, but is renowned for high levels of student anxiety (Chew & Dillon, 2014; Onwuegbuzie & Wilson, 2003). This anxiety may affect students’ ability to successfully interpret or complete quantitative research (Onwuegbuzie et al., 2010). Students will often defer completing statistical courses (Dunn, 2014; Dykeman, 2011), and statistical knowledge can be the weakest graduate skill with which students leave university (Huntley, Schneider, & Aronson, 2000). Crucially, students have great difficulty seeing the relevance of the skills they are being taught in these courses to their current studies and future careers (Druggeri, Dempster, Hanna & Cleary, 2008). Typically, research methods courses in undergraduate psychology use textbook-based learning, with examples drawn from traditional psychological research, yet only a very small proportion of students go on to research roles within the field (Graduate Careers Australia, 2015). Druggeri et al. (2008) found that “… most [psychology students] saw it [statistics] as an unnecessary aspect meant only for the limited few who ever intended to do research” (p.79).

One potential means of engaging students and improving outcomes in research methods and statistics is through the use of real-world examples and applications (Blumenfeld et al., 1991; Forte, 1995; Pan & Tang, 2005; Parsons & Ward, 2011). Within the teaching of psychology, case studies have primarily been used in personality psychology and forensics (e.g., Ashcraft, 2012; De Ruiter & Kaser-Boyd, 2015). Thistlethwaite et al. (2012), in their review of case-based learning in health education, argue that the case-based approach is positively viewed by students and educators as a motivating and engaging gateway between surface- and deep-level learning experiences. Schmidt and King (2010) suggest that the effectiveness of case studies is derived from the incorporation of many higher order thinking skills, leveraging application to real-life contexts. Use of case studies reflecting real-world problem solving may thus increase engagement and support learning (Blumenfeld et al., 1991; Garfield, 1995; Parsons & Ward, 2011), and consequently deliberate use of a diverse set of case studies may aid in increasing motivation (Willems & Gonzalez-DeHass, 2012) and in the teaching of concepts with an undergraduate psychology cohort.

This article describes the development of a case-study approach to learning in a research methods and statistics environment in which students are asked to engage with a number of scenarios and associated activities drawn from a wide breadth of possible working roles. The aim of the case studies was to encourage students to consider the content in the context of the scenarios, and by doing so increase engagement with the material, and improve students’ learning experience by encouraging them to reflect on real-world workplace demands. The current article presents the teaching context, the development and implementation of the case studies and activities, feedback from students and teaching staff, and discusses the potential application of this approach more widely.

Teaching Context and Approach

The current project was developed for a second-year undergraduate research methods and statistics course at the University of Canberra, Australia. The University of Canberra is based in Canberra, the national capital of Australia, and is one of four universities in the city. The largest local employer is the Australian Public Service (Australian Bureau of Statistics, 2016). The University of Canberra markets itself as an applied university, with a focus on practical work-based projects and high employability of graduates (University of Canberra, 2018).

The course is a compulsory element of the Bachelor of Science in Psychology program. The program is accredited by the Australian Psychological Accreditation Council (2018) and is similar in structure and content to other undergraduate research methods and statistics courses in Australia and internationally. The course is completed over a 12-week semester and includes a two-hour lecture and a one-hour tutorial each week. The content of the course is aimed at second-year undergraduate students and builds on material learned in the first year of study. It is focused largely on experimental research methods and typical analyses conducted with experimental data. The research design issues covered include development of hypotheses, study design, sampling and ethics. Analyses covered include analysis of variance (ANOVA), Repeated Measures ANOVA and Factorial ANOVA, and nonparametric statistical analyses. Power and effect size are also discussed. Use of SPSS is taught during the course.

The first author began convening and teaching the course in 2012. Feedback from previous years had indicated (consistent with other statistics and research methods courses reviewed above) that students felt a high level of anxiety in the course, and a low level of engagement with the material. Written feedback suggested students failed to see the material as relevant to their university studies or future employment roles. However, the content and delivery mode of the material were largely mandated by existing structures. Given this context, a means of improving engagement with the material, and adding meaning and real-world problem solving to the course, was sought that would wrap around the existing delivery and content. Case studies were identified as a means of adding such engagement, understanding and real-world context.

The key foci of the initial project were to:

- Create specific and concrete examples of possible future workplace roles, including tasks, expected outputs, pressures and interactions with others.
- Use these case studies to highlight the importance of generic skills in student learning, emphasizing creativity, leadership, the ability to cope with uncertainty, and interdisciplinary communication.
- Use these case studies throughout the course in both lecture and tutorial activities to engender engaging and content-specific problem-solving.

Materials: Development and Implementation

Case Studies

The case studies were derived through data on outcomes for psychology graduates (Graduate Careers Australia, 2015), and discussion with psychology graduates working in the Canberra region. Eight roles were identified that reflected common occupations among psychology graduates in Australia and/or the local community, or which exemplified the diversity of roles available to graduates. The eight roles were: Clinical psychologist in private practice, Clinical Manager at a local government service, Policy officer for a Federal Government department, Postdoctoral researcher at a university-based research institute, Marketing officer at an advertising agency, School counsellor at a local public high school, Human Resources consultant at a business development consultancy, and a Magician. While not in itself a common graduate role for psychology students, the “magician” was included to represent the small but significant number of graduates who go on to use their skills in areas outside traditional scientific/health disciplines, such as the arts.

Psychology graduates working in each of these roles were identified and interviewed by the first author to gather content for the case studies. The case studies generated were simplified versions of the working roles described by graduates. Given the intended use of the case studies in a research methods and statistics course, all descriptions highlighted research design and statistics-related activities; however, all interviewees spontaneously generated research-related examples in discussing their roles.

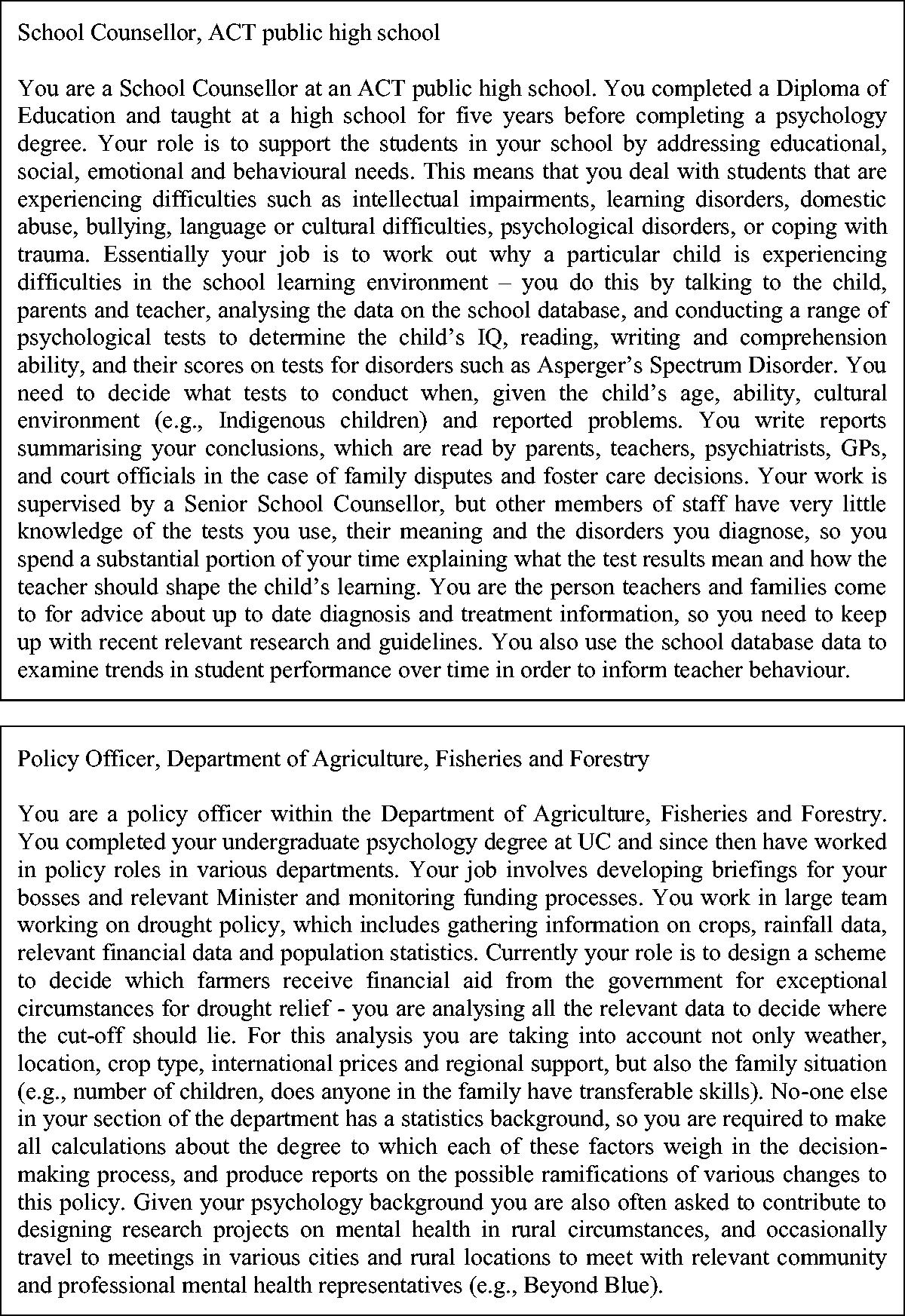

The case studies consist of 200- to 300-word descriptive paragraphs including the name of the role, a description of the workplace, and the qualifications and experience required to be appointed to the position (examples of the case studies are provided in Figure 1). They are written in language accessible to undergraduate students, although they deliberately introduce key terminology relevant to that role. Everyday elements of the role are described, including required duties and outputs, but also potential difficulties, nature of collegial relationships, and other relevant issues. Long-term goals and stressors are raised, and leadership or decision-making roles are made explicit. In particular, the way statistical and research expertise is used in the role is described. Common requirements were identification, interpretation and use of published research in practice, developing and engaging in research studies, and analysis and interpretation of data. The importance of communication in these roles is highlighted, particularly with other stakeholders without psychology training. Simplified, specific projects and problems are used as exemplars. Note that the case studies were not designed to be exhaustive descriptions of workplace roles, but rather to provide a starting point for students to begin to consider workplace contexts with very different requirements, pressures, and outputs from their current student experiences.

Figure 1. Two examples of the case studies embedded in the course.

Introductory Activity

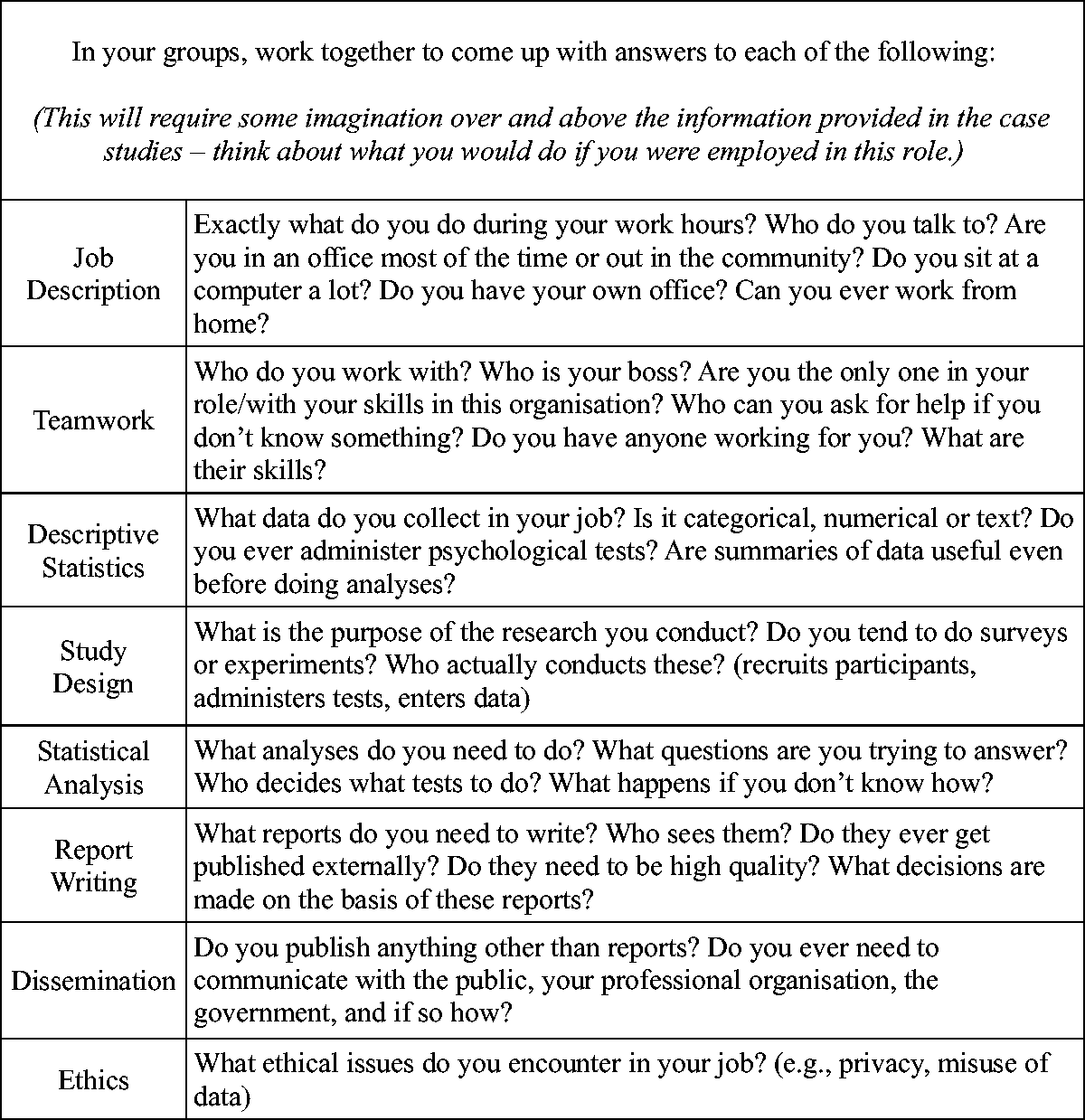

In the first lecture session of the course, students were presented with the case studies displayed around the classroom. In groups they were asked to read the case studies and extrapolate answers to a series of questions. The question set (see Figure 2) was designed to trigger discussion of generic skills in the workplace including leadership, communication, problem solving and ethics, as well as course-specific concepts such as research design and data analysis. Consolidation of statistical terms and concepts was explicitly linked with material learned in the previous year’s introductory course. These questions were designed to encourage students to more fully “flesh out” each of the case studies in their own minds and start them thinking about whether they would like to engage in these roles themselves. Students completed two of the case studies in small groups and were then asked to review and answer the questions for all case studies before the next class. This focus on groupwork was deliberately introduced as part of the case studies, with the activities to be completed in groups, and group-based problem-solving highlighted as a necessary component of future employment roles. All materials were made available on the course Learning Management System.

Figure 2. Question set provided in the first lecture session to encourage discussion of the case studies.

Tutorial and Lecture Activities

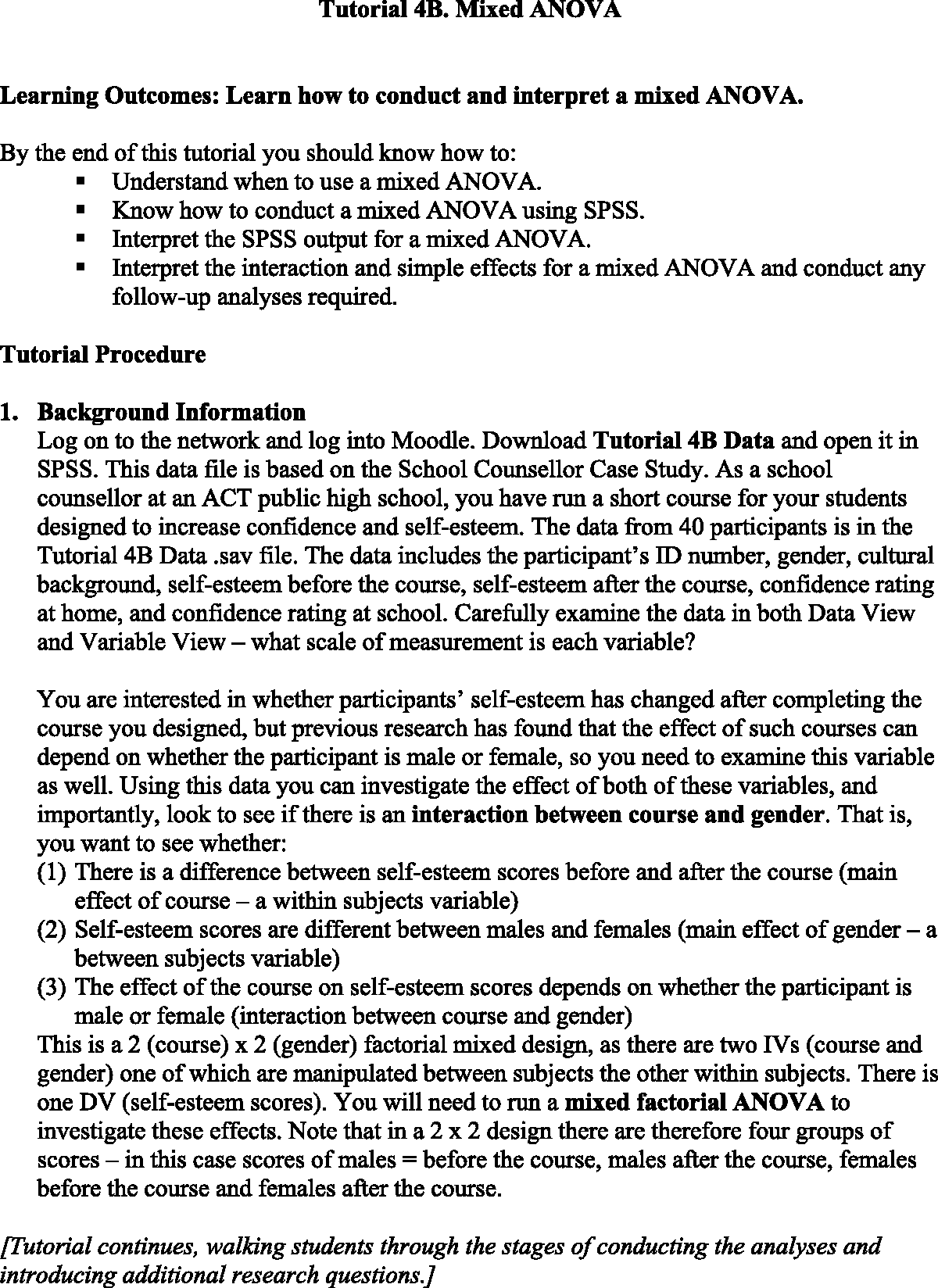

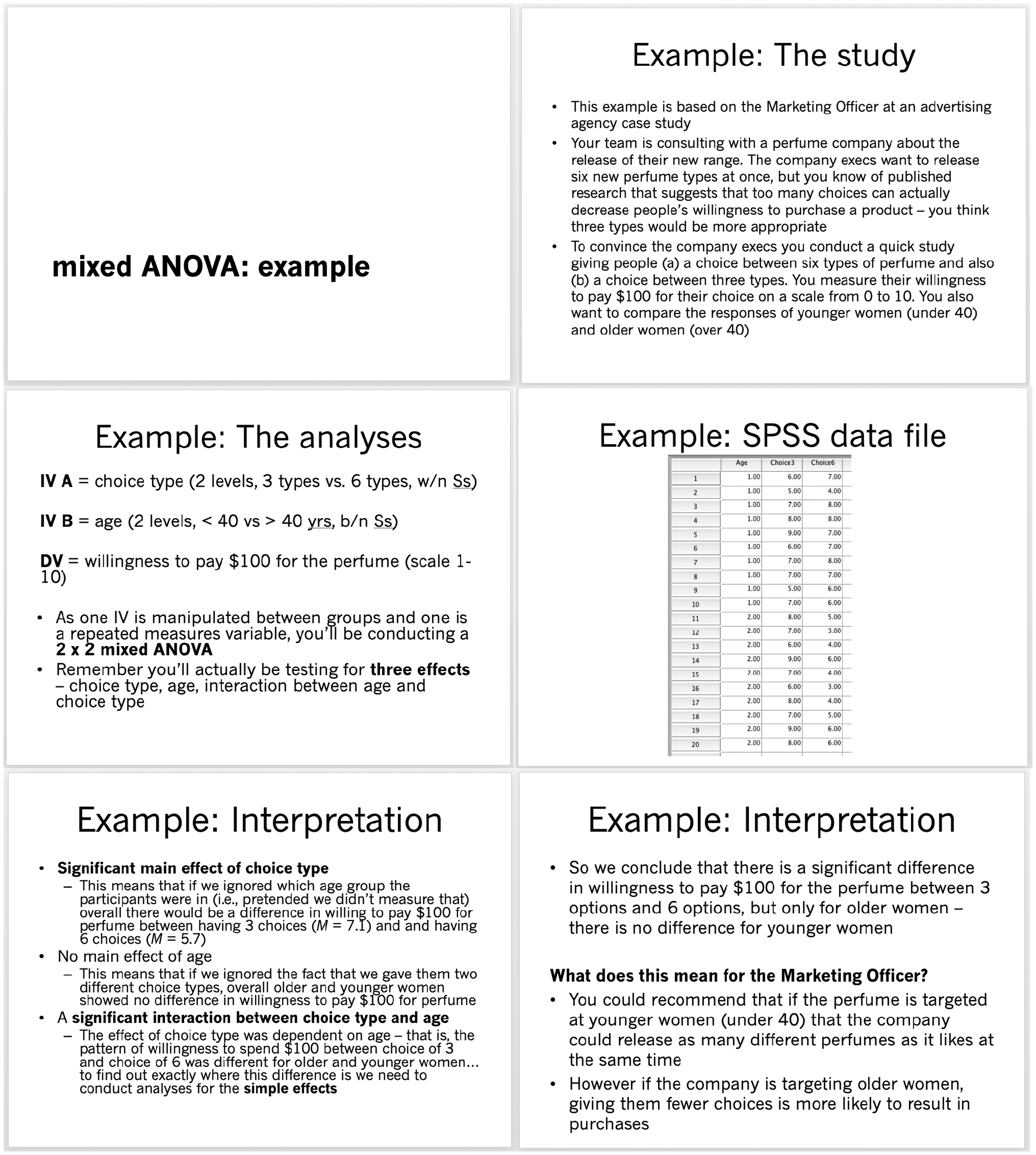

Research and statistical examples used throughout the course were rewritten to be placed in the context of one of the case studies. Tutorials in the course are held in a computer laboratory and typically involve practicing statistical analyses using the program SPSS. Tutorial materials, including data sets and associated instructions, were constructed around the case studies. All tutorials state clearly which case study they are based on and provide specific contextual background, with the students asked to imagine they are working in that role and solving a particular problem or question. Figure 3 provides an example of the use of the school counsellor case study in a tutorial designed to teach students to conduct mixed ANOVA. Students are encouraged to talk through the role and the research questions at the beginning of the tutorial, and to consider the communication of the outcomes and the use of this information after the analysis is conducted. Similarly, lecture exercises (example provided in Figure 4) explicitly use the case studies as context for each example and allow ongoing discussion of why students are learning the concepts presented, and how they could be used in a workplace setting.

Figure 3. An example of the tutorial materials with case studies embedded used during the course.

Figure 4. Use of case studies within a lecture to demonstrate a statistical analysis (selected slides presented; the example also included a number of slides demonstrating use of SPSS and interpretation of output).

Evaluation

The case studies were first developed and introduced in 2012 by the first author, and have since been implemented (with minor revisions) in every year from 2014 to 2018. The data from 2013 are not available for unrelated reasons. Teaching has been undertaken by the first author in each of these years, with support from tutorial staff and institutional learning and teaching staff (the second author). When the case studies were introduced in 2012, no specific research project was implemented to assess their effectiveness. However, retrospective evaluation of data routinely collected by the institution, as well as personal reflections by the first author, can give insight into their usefulness for both students and staff. Changes from 2011 to 2012 in particular provide a measure of the effect of their introduction, although these data have to be interpreted carefully because there was also a change of staff during that period, meaning that changes in feedback and other scores could be due to differences in teaching style rather than the case study content. Where possible, data relating to the case studies and related concepts are isolated and discussed.

Student Satisfaction

Student responses to institution-wide satisfaction scales can be used to assess student feedback on the course from 2011 to 2018 (excepting 2013). Student enrollment varied within those years from 114 to 149, and response rates to the student satisfaction questionnaires from 29% to 50%.

From 2011 to 2016 the satisfaction questionnaire was distributed once per year at the end of the teaching semester (but before examinations were held) and consisted of 19 questions grouped into five topic areas: course satisfaction (five questions), good teaching (six questions), generic skills (six questions), overall satisfaction (one question), and student experience (one question).

The course satisfaction scale included:

- I found this course intellectually stimulating.
- The course was well organized.
- The course helped me to develop skills and knowledge.
- The methods of assessing student work were fair and appropriate.
- I received feedback that assisted my learning.

The good teaching scale included:

- The teaching staff put a lot of time into commenting on my work.
- The teaching staff normally gave me helpful feedback on how I was doing.
- The teaching staff of this course motivated me to do my best work.
- My lecturers were extremely good at explaining things.
- The teaching staff worked hard to make the course interesting.
- The teaching staff made a real effort to understand difficulties I might be having with my work.

The generic skills scale included:

- The course helped me develop my ability to work as a team member.
- The course sharpened my analytic skills.
- The course developed my problem-solving skills.
- The course improved my skills in written communication.
- As a result of the course I feel confident tackling unfamiliar problems.
- The course helped me to develop the ability to plan my own work.

The overall satisfaction scale had a single measure:

Overall I was satisfied with the quality of this course.

The student experience scale single item was:

The course made a positive contribution to my overall experience at the university.

Responses were made on a five-point scale (“Strongly Agree” to “Strongly Disagree”). Responses are reported here as either percentage agreement (collated “Agree” or “Strongly Agree”) for individual questions, or as a scale (coded as values 1–5; the mean for these responses was then calculated and rounded, with “Agree” and “Strongly Agree” representing mean scores of “4” or “5”). The responses were collated and reported by the institution.
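The two reporting formats described above can be illustrated with a minimal sketch. This is not the institution's actual collation procedure, which is not documented here. The responses are hypothetical, the label for the scale midpoint ("Neutral") is an assumption, and half-up rounding of respondent means is also an assumption, since the rounding rule is not stated.

```python
import math
from statistics import mean

# Coding assumed from the text: 5 = "Strongly Agree" down to 1 = "Strongly Disagree".
# The midpoint label "Neutral" is hypothetical; the source does not name it.
LIKERT_CODES = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def percentage_agreement(responses):
    """Percentage of responses to one item that are Agree or Strongly Agree."""
    agree = sum(1 for r in responses if LIKERT_CODES[r] >= 4)
    return 100 * agree / len(responses)


def scale_agreement(coded_by_respondent):
    """Percentage of respondents whose rounded mean across a scale's items is 4 or 5.

    Rounding is half-up (an assumption; the source only says 'rounded').
    """
    rounded_means = [math.floor(mean(items) + 0.5) for items in coded_by_respondent]
    agree = sum(1 for m in rounded_means if m >= 4)
    return 100 * agree / len(rounded_means)


# Hypothetical responses from five students to a single item.
item_responses = ["Strongly Agree", "Agree", "Agree", "Neutral", "Disagree"]
item_pct = percentage_agreement(item_responses)

# Hypothetical coded responses from three students to a five-item scale.
scale_responses = [
    [5, 4, 4, 5, 3],
    [3, 3, 4, 2, 3],
    [4, 4, 3, 4, 4],
]
scale_pct = scale_agreement(scale_responses)

print(f"Item agreement: {item_pct:.1f}%")
print(f"Scale agreement: {scale_pct:.1f}%")
```

Under this reading, a student counts as agreeing with a scale when their rounded mean across its items reaches "Agree", so the scale figure can differ from the average of the item-level percentages.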

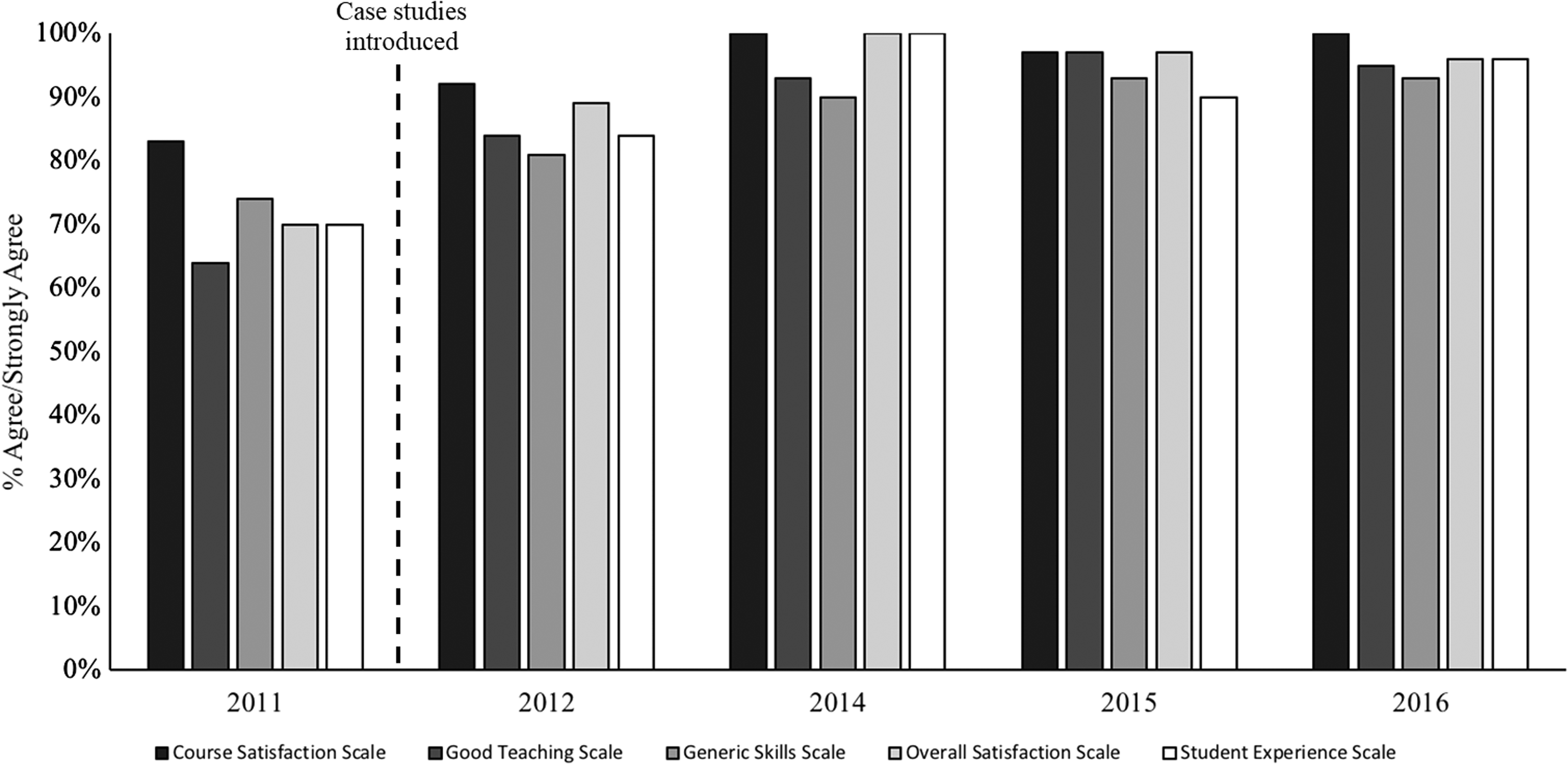

Figure 5 depicts the percentage of agreement with each of the scales. With the introduction of the case studies between 2011 and 2012, increases in all five scales were noted, with large increases in the overall satisfaction, student experience and good teaching scales, and moderate increases on the generic skills and course satisfaction scales. Further improvements and maintenance in feedback scores can be seen in years 2014 to 2016.

Figure 5. Feedback on the course provided by students in years 2011–2016. Case studies were included in years 2012, 2014–2016 (no data is available from 2013). For 2011, N = 47, 36% RR; for 2012, N = 63, 50% RR; for 2014, N = 41, 29% RR; for 2015, N = 61, 41% RR; for 2016, N = 45, 38% RR. RR = response rate.

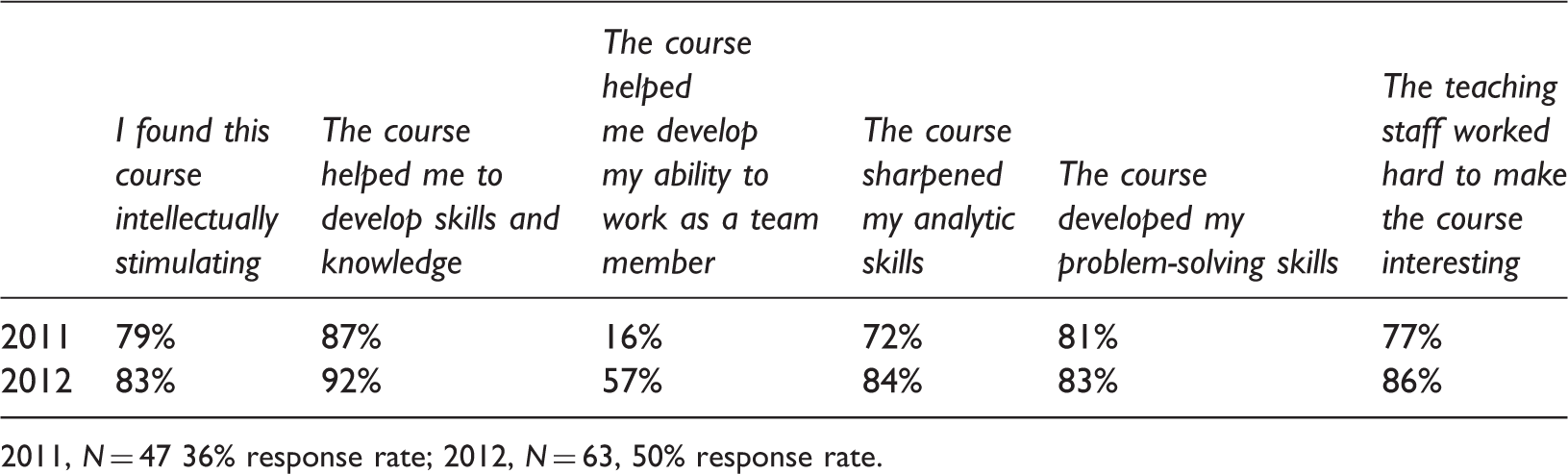

Percentage Agree/Strongly Agree to individual statements in Institution-Wide Satisfaction Survey in 2011 (pre-case study implementation) and 2012 (case study).

2011, N = 47, 36% response rate; 2012, N = 63, 50% response rate.

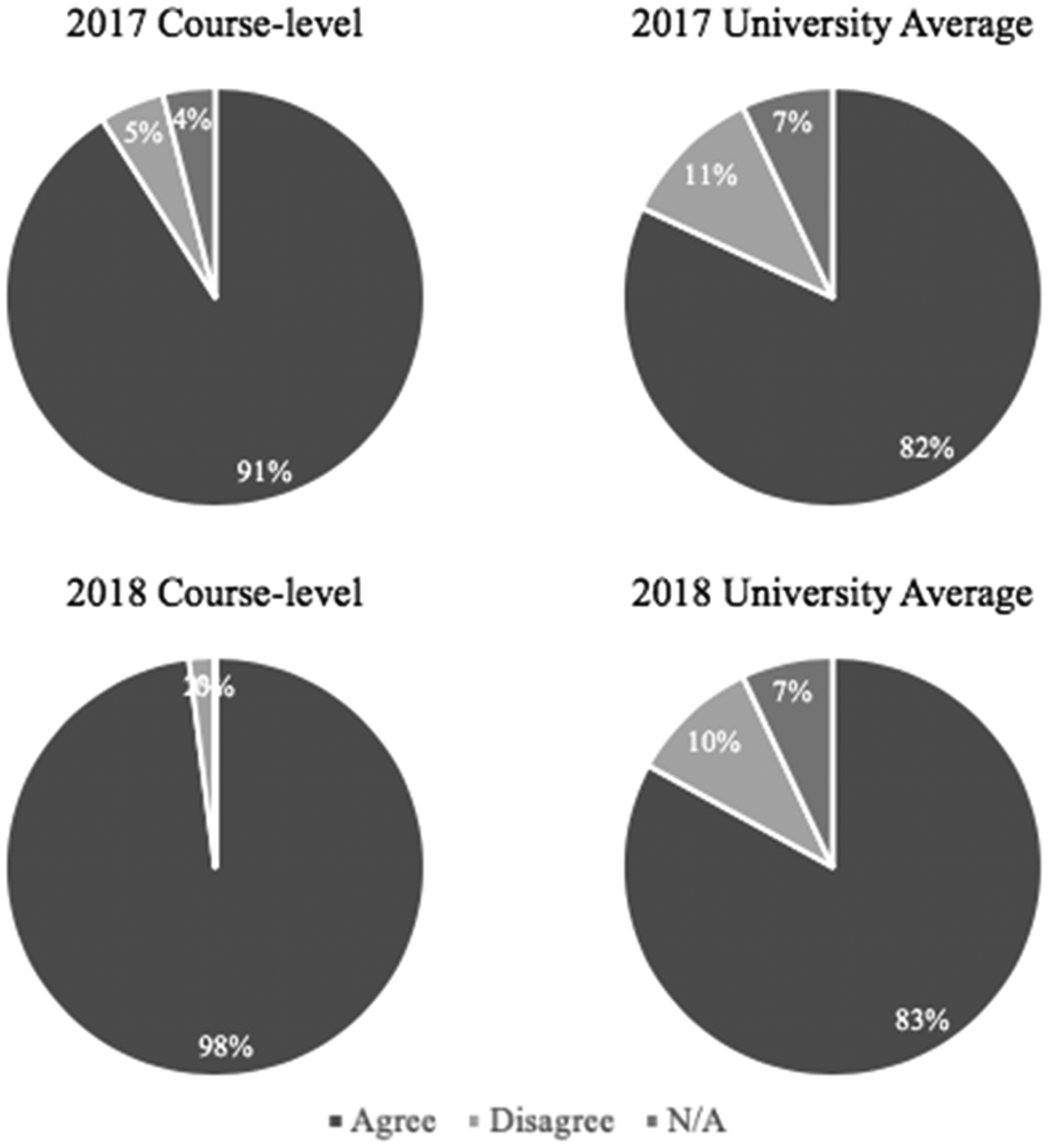

In 2017 and 2018, a new student feedback system was implemented by the university. The overall satisfaction item remained the same as previous years, and was maintained at the high rate seen in previous years (98% in 2017 and 96% in 2018). A new item relevant to the aims of the case studies was implemented asking students their agreement with the statement, “My learning in this course will help me achieve my personal, professional or educational goals,” using the same response scale as reported previously. Figure 6 presents the student responses to this item for both this course and the university average. Across the two years students were more likely to rate the course as relevant to their goals (2017, 91%; 2018, 98%) than the university average (2017, 82%; 2018, 83%).

Figure 6. Percentage agreement with the statement “My learning in this course will help me achieve my personal, professional or educational goals” (Agree; Disagree; Not Applicable) for the course and the university average for 2017 and 2018.

Student Feedback

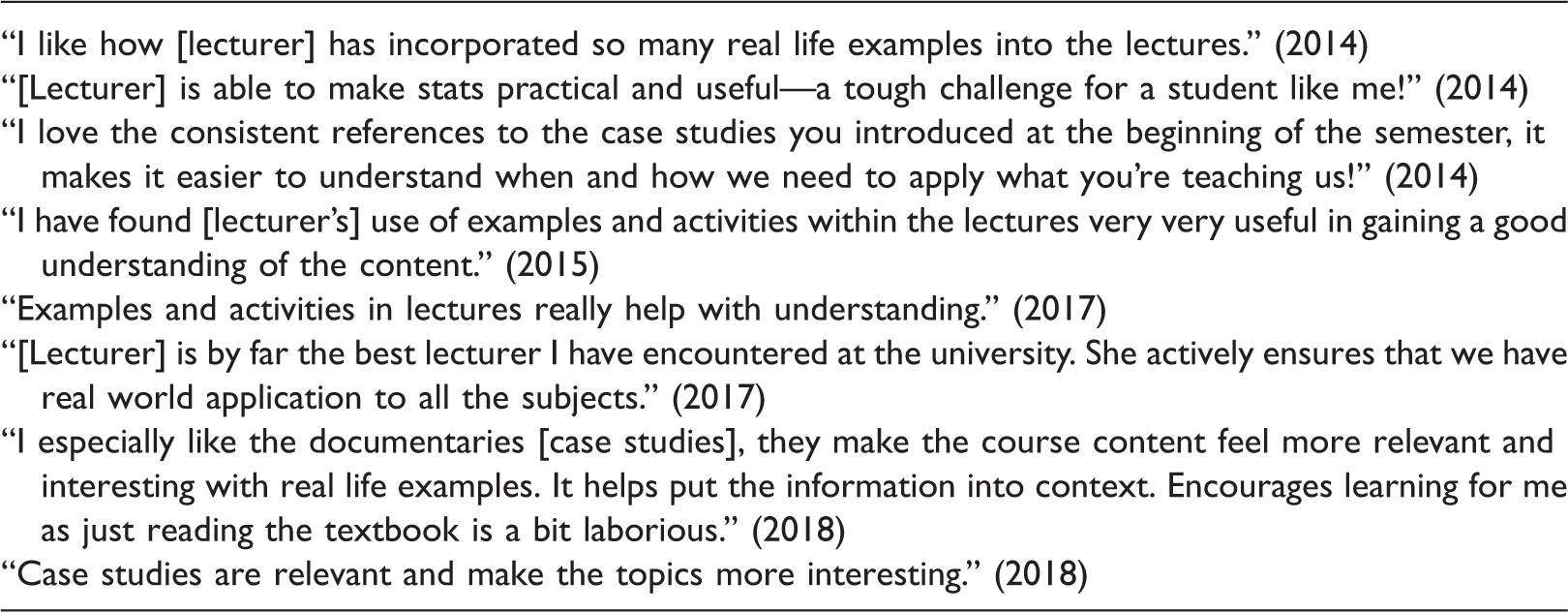

Examples of written feedback from anonymous student satisfaction assessments.

Student Performance

Student marks and grades from 2011 and 2012 were compared to assess the possible impact of the case studies on student outcomes. There was no significant difference in marks between the two years (for 2011, M = 67.35, SD = 13.92, N = 114; for 2012, M = 68.18, SD = 13.34, N = 106; t(218) = −0.44, p = 0.66). Breakdown by grades similarly indicated no change in grade distribution across the cohort, although there was a small, nonsignificant drop, from 7.4% to 5.3%, in the percentage of students who completed all elements of the course but failed it.
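As a rough check, the comparison of marks can be approximately reproduced from the reported summary statistics alone. The sketch below assumes a pooled-variance independent-samples t-test, which is consistent with the reported 218 degrees of freedom (114 + 106 − 2), although the exact procedure is not stated. The two-tailed p-value uses a normal approximation to the t distribution, which is reasonable at this sample size but is a simplification. Cohen's d is added for illustration only and is not reported in the article.

```python
import math
from statistics import NormalDist


def pooled_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Pooled-variance t statistic, degrees of freedom and Cohen's d from summary data."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    t = (m1 - m2) / standard_error
    cohens_d = (m1 - m2) / math.sqrt(pooled_var)
    return t, df, cohens_d


# Summary statistics as reported for 2011 (before) and 2012 (case studies introduced).
t, df, d = pooled_t_from_summary(67.35, 13.92, 114, 68.18, 13.34, 106)

# Two-tailed p-value via a normal approximation (an assumption made to stay
# within the standard library; an exact t distribution would differ slightly).
p_approx = 2 * (1 - NormalDist().cdf(abs(t)))

print(f"t({df}) = {t:.2f}, approximate p = {p_approx:.2f}, d = {d:.2f}")
```

Because the published means and standard deviations are rounded, the recomputed values should be close to, but need not exactly match, the reported t(218) = −0.44 and p = 0.66. The very small standardized difference is in line with the conclusion that marks did not change between the two years.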

Educator Reflections

The first author created the case studies and all associated course materials, and taught the course in 2012 and 2014–2018 with assistance from sessional tutorial staff and advice from teaching and learning staff at the university (the second author). The case studies were initially time-consuming to create, as they involved interviewing graduates and reworking their responses into a single, appropriately targeted case study. All tutorial exercises (including data sets) and lecture examples were also created to fit within one of the case studies provided. Once the materials were developed, implementing the case studies required additional class time only in the first lecture, to introduce and discuss them. In all other tutorial and lecture settings, the case study materials simply replaced previous examples used in the course. In each year of the course, the students have been highly engaged with the case studies and have used them to start conversations with each other and staff. Students commonly question terminology used in the workplace (e.g., post-doctoral researcher, clinical manager). The breadth of outputs required in different roles is also a common point of discussion among students (e.g., client reports, feedback on government policies, court testimony, advertising strategy documents, media releases, grant applications).

Conclusions and Future Directions

The case studies were developed as a teaching tool to improve engagement in research methods and statistics material for undergraduate psychology students, one that could be implemented without introducing major changes to assessment or delivery structure. This article has described the design process for the case studies and when and how they were implemented in the classroom setting. Retrospective assessment of feedback data suggests the case studies were moderately successful in increasing student satisfaction, including on measures specifically related to skill development, teamwork, and engagement. Qualitative feedback from students also suggests the case studies provide a means of adding meaning and improving learning; however, there was no evidence of improved outcomes in the course. With the addition of new feedback scales, the most recent cohort reported 98% agreement with the statement that this course would help them achieve their personal, professional or educational goals. This suggests a very high level of recognition of the relevance of the content to students’ lives, one of the specific goals of the initial introduction of the case studies. The ease of use of the case studies in a classroom setting has also been noted.

One advantage of the case-study approach is the potential for tailoring to particular geographical areas or teaching contexts depending on student expectations and graduate prospects. At this university, gaining a public service role or government policy role is common post-graduation, but this case study could easily be exchanged if a different industry accounted for a sizable proportion of graduate outcomes in a particular region. Similarly, over time as the workforce changes, other roles are likely to become more common, with employment at an aged-care provider (Hugo, 2014) and with an entrepreneur both good candidates for inclusion in future revisions of these case studies.

However, the conclusions that can be drawn about the efficacy of the case studies are limited, due to the lack of structured assessment to date. The retrospective analysis of institutional-level data presented here is suggestive of positive experiences for students and staff; however, the change of teaching staff that occurred simultaneously with the case studies being implemented introduces a confound, such that changes in the feedback scores could reflect either the case study intervention or other instructor-related factors. The lack of targeted assessment of the outcomes of the case studies also limits the interpretation of these findings. Future studies should examine the effects of exposure to the case studies by comparing engagement, satisfaction, and outcomes with those for students exposed to traditionally delivered materials, where both types of learning are delivered by the same staff.

In summary, the use of diverse, workforce-based case studies in research methods and statistics teaching appears beneficial for undergraduate psychology students in terms of engagement and satisfaction, but did not improve learning outcomes. Future studies are needed to provide a more detailed assessment of such interventions. Educators struggling to address issues of how to add real-world meaning to statistical material for a student body with a wide range of employment outcomes can use this approach to enable students to discuss course material with reference to a wide array of workplace contexts.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.