Abstract

Validity is an important issue when measuring teaching quality using student evaluations. This study examined the effects of psychology students’ subjective clarity about study contents and their prior interest in the subject as variables possibly biasing the evaluations of psychology courses. German students (N = 292) evaluated lectures and seminars over five years with a standardized questionnaire, yielding 3,348 data points. In cross-classified multilevel models, we separated the total variance into the variance components of course, teacher, student, and the Teacher x Student interaction and found evidence for biasing effects with regard to the students’ clarity about study contents and prior subject interest. These effects were small overall and were stronger for lectures than for seminars. These results suggest that the validity of evaluations of teaching in psychology can be improved by creating realistic expectations of what psychology is about before students choose psychology as a study subject.

Introduction

Teaching quality in university courses is often measured via students’ evaluations of teaching (SETs). Such evaluations can be collected quickly and easily using standardized questionnaires (e.g., Staufenbiel, 2000; Toland & De Ayala, 2005). These questionnaires are usually based on the assumption that teaching quality is a multidimensional construct (e.g., Cohen, 1981; Remedios & Lieberman, 2008) with dimensions referring to teaching methods, interaction with students, enthusiasm of the teacher, and feedback (Marsh, 2007; Rantanen, 2013). SETs are conducted at many universities and their outcomes are used for curriculum development and even hiring decisions. Despite their widespread use, SETs have often been criticized for a potential lack of validity (e.g., Kulik, 2001). One major concern has been that students are not capable of evaluating teaching quality because their judgments are biased by background variables that are external to the criterion that needs to be assessed (for an overview, see Spooren, Brockx, & Mortelmans, 2013; for a critical discussion, see Marsh, 1984, 2007). In this study, we used a broad range of psychology course SETs to examine two possible yet ubiquitous sources of bias: The students’ subjective clarity about study contents and their prior subject interest. The effects of these potential sources of bias on SETs will first be discussed to elicit the research questions examined in our study.

Students’ Subjective Clarity About Study Contents and Students’ Evaluations of Teaching

Psychology is one of the most popular undergraduate study programs in Germany. The number of applicants by far exceeds the number of places (e.g., the ratio was 16:1 at the University of Kassel in 2016). Students who are newly enrolled on a psychology program hold more or less elaborate conceptions of what psychology as an academic discipline is about (e.g., Goedeke & Gibson, 2011; Remedios & Lieberman, 2008). These conceptions influence students’ predictions about what psychology courses will be like, including predictions about the content that will be taught. Such conceptions may be formed by different sources, such as commonsense everyday psychology (e.g., Fletcher, 1984), the portrayal of psychology in the media (Holmes & Beins, 2009), input from peers (Pruitt, Dicks, & Tilley, 2010), and knowledge about the professional activities within the discipline (Rowley, Hartley, & Larkin, 2008).

Studies exploring students’ conceptions of study contents with regard to the subject matter of psychology found that they are quite variable, with some students holding unclear or even unrealistic expectations. For example, in a survey of German psychology students (Orlik, Fisch, & Saterdag, 1971), 33% stated that they were studying the wrong subject, and 67% stated that they could not do what they wanted in their studies. This finding is in line with more recent studies suggesting that psychology students expect practical and skill-focused content in their curriculum (Goedeke & Gibson, 2011; Hertwig & Stoltze, 2001). Many students expect that their undergraduate studies should provide them with knowledge directly relevant for helping people with psychological problems (Gaither & Butler, 2005; Hofmann & Stiksrud, 1993). In Germany, a considerable number of students aim to work following their studies in therapy and counselling (estimates range between 50% and 73%, see Handerer, 2014; Hertwig & Stoltze, 2001; Hofmann & Stiksrud, 1993). The expectations of these students are likely to be at odds with the typical content of undergraduate psychology programs that focus on research methods, statistics, diagnostic techniques, and theory-based research on a variety of psychological subjects. One might suspect that the initial unclear conceptions would be corrected after students have started their studies. However, Gardner and Dalsing (1986) found that students stick to some of their initial views of psychology even after undertaking several psychology courses.

Besides their conceptions of psychology as an academic discipline, students’ subjective clarity about study contents may affect SETs. Previous research focused on the effects of expectations regarding teachers (Bejar & Doyle, 1976; Pruitt et al., 2010) or expected grades (e.g., Feldman, 1997; Holmes, 1972; Remedios & Lieberman, 2008) on SETs. In contrast, the effect of students’ subjective clarity about study contents on SETs has received little attention in research. In the present study, we were interested in the question of whether students holding clearer conceptions of the subject matter of psychology would evaluate the courses more positively than students holding less clear conceptions. We assessed the self-reported clarity of first-semester students with regard to the general and specific study contents of psychology before they took their first course. The clarity of expectations concerning the subject matter of psychology in general, knowledge about the professions for which psychologists are suitable, and the intended careers of the students are subsumed under students’ subjective clarity about the general study contents of psychology.

Students’ subjective clarity about the specific study contents of psychology refers to the clarity of expectations concerning the content of psychological subfields, such as social or clinical psychology. This clarity translates into expectations about the contents that will be taught in a given psychology course. We assume that students with clear conceptions of psychology will be more satisfied, leading to more positive evaluations compared to the evaluations provided by students with less clear conceptions.

Prior Subject Interest and Students’ Evaluations of Teaching

Prior subject interest is a kind of individual interest (Hidi & Renninger, 2006) that is defined as a personal disposition to reengage in particular content over time (to be distinguished from situational interest that is created externally by factors present in a given situation). In more detail, prior subject interest embodies the general interest in a subject area rather than the topic of a specific lesson taught on a particular course (Flowerday & Shell, 2015; Krapp & Prenzel, 2011). Prior subject interest defined in this way has been shown to be an important precondition of intrinsically motivated learning and a strong predictor of learning achievements (e.g., Hidi, 2001) and has been found in some studies to positively predict SETs (e.g., Feldman, 1976; Marsh, 1980; Staufenbiel, Seppelfricke, & Rickers, 2016). In addition, findings indicating that elective courses receive moderately higher ratings than compulsory courses (e.g., Feldman, 1978) might be in part due to the greater interest in the content of these courses. In contrast to these results, other studies yielded no evidence in favour of a relationship between prior subject interest and course ratings (e.g., Olivares, 2001). The inconsistency of results could be due to the dimensions of teaching quality that were investigated. In a study by Marsh (1980), prior subject interest accounted for most of the variance in SETs in relation to 16 background variables (including expected grades and workload). However, the relationship differed with regard to different dimensions of teaching quality. Marsh found relatively strong relationships of prior subject interest with the perceived learning value of a course and the general course rating, and weaker relationships with more objective dimensions of teaching quality, such as course organization. Conceptually, prior subject interest is a characteristic of individual students and not an aspect of teaching quality. Nevertheless, interest might colour how individual students experience a course, which, in turn, might bias their assessment of teaching quality (Marsh & Cooper, 1981; Paget, 1984). Therefore, prior subject interest may be considered a potential threat to the validity of SETs as a measure of teaching quality.

Rationale of the Present Study

The present study used Staufenbiel’s (2000) FEVOR questionnaire, a typical standardized multidimensional instrument, and newly developed questionnaires to examine students’ subjective clarity about the study contents of psychology and their prior subject interest as potential threats to the validity of SETs. The FEVOR questionnaire is based on a theoretical conception of teaching quality. It is psychometrically sound and used in higher education in German-speaking countries.

We focused our analyses on the teacher performance item and the planning and presentation scale of the FEVOR, the two criterion variables. Teacher performance is a variable found in most SET questionnaires because it is a broad indicator of teaching quality. However, despite its pervasiveness and intuitive accessibility, the measure is difficult to interpret, because it may comprise many (mostly unknown and possibly varying) components, including instinctive ratings (Merritt, 2008). Thus, teacher performance might be prone to the biasing effects of students’ subjective clarity about study contents and prior subject interest. In contrast, the second criterion variable, planning and presentation, is a central construct of teaching quality that appears with different names in most multidimensional models of teaching quality (e.g., Marsh, 1983) and is assessed with similarly worded items in all of the well-established SETs (e.g., “Course materials were well prepared and carefully explained.”). The items of the planning and presentation scale focus on course details (e.g., “The lecture is clearly structured”), possibly triggering rather reflective ratings (Merritt, 2008). Accordingly, planning and presentation might be less prone to biasing effects of students’ subjective clarity about study contents and prior subject interest than teacher performance.

Students’ subjective clarity about study contents was assessed for general and specific study contents of psychology, measured with specially constructed scales that were applied before newly enrolled students of psychology attended their first course. Prior subject interest was assessed with one item from the FEVOR questionnaire. Considering that all three predictors are likely to overlap and compete for explained student variance, we investigated the unique contribution of each predictor in the context of the other predictors.

Each course was evaluated by several students, each student took several courses, teachers usually taught several courses, and some courses were taught by several teachers. Thus, the data have an imperfect hierarchical (or crossed) structure. On this account, we used cross-classified multilevel analyses (Baayen, Davidson, & Bates, 2008), which included random effects (random intercepts) of all three possible sources of variance: teacher, course, and student (Feistauer & Richter, 2017). Separate models were estimated for both criterion variables: teacher performance and planning and presentation.

Within the framework of cross-classified multilevel models, potential biasing effects of students’ subjective clarity about study contents and prior subject interest may be evaluated by estimating and testing the fixed effects of these variables on the criterion variables. Significant effects were interpreted by examining changes in the variance components of teacher, course, student, and the Teacher x Student interaction caused by including the bias variables as predictors in the model. Finally, we also estimated models in which the biasing effect of students’ subjective clarity about study contents and prior subject interest was included as random effects (i.e., effects varying randomly between students). These models allowed for the testing of the possibility that the magnitude of biasing effects varies between students, with some students exhibiting greater bias than others.

We ran separate analyses for lectures and seminars because of the didactical and organizational differences between the two course formats (cf. Staufenbiel et al., 2016). Lectures have a much more teacher-centered course format in comparison to seminars, which include more contributions from the students. Therefore, we expected biasing effects on teacher performance to be greater for lectures than seminars, because unfulfilled expectations about or unsatisfied interest in the course might be attributed more readily to teacher behavior in lectures.

Method

Sample

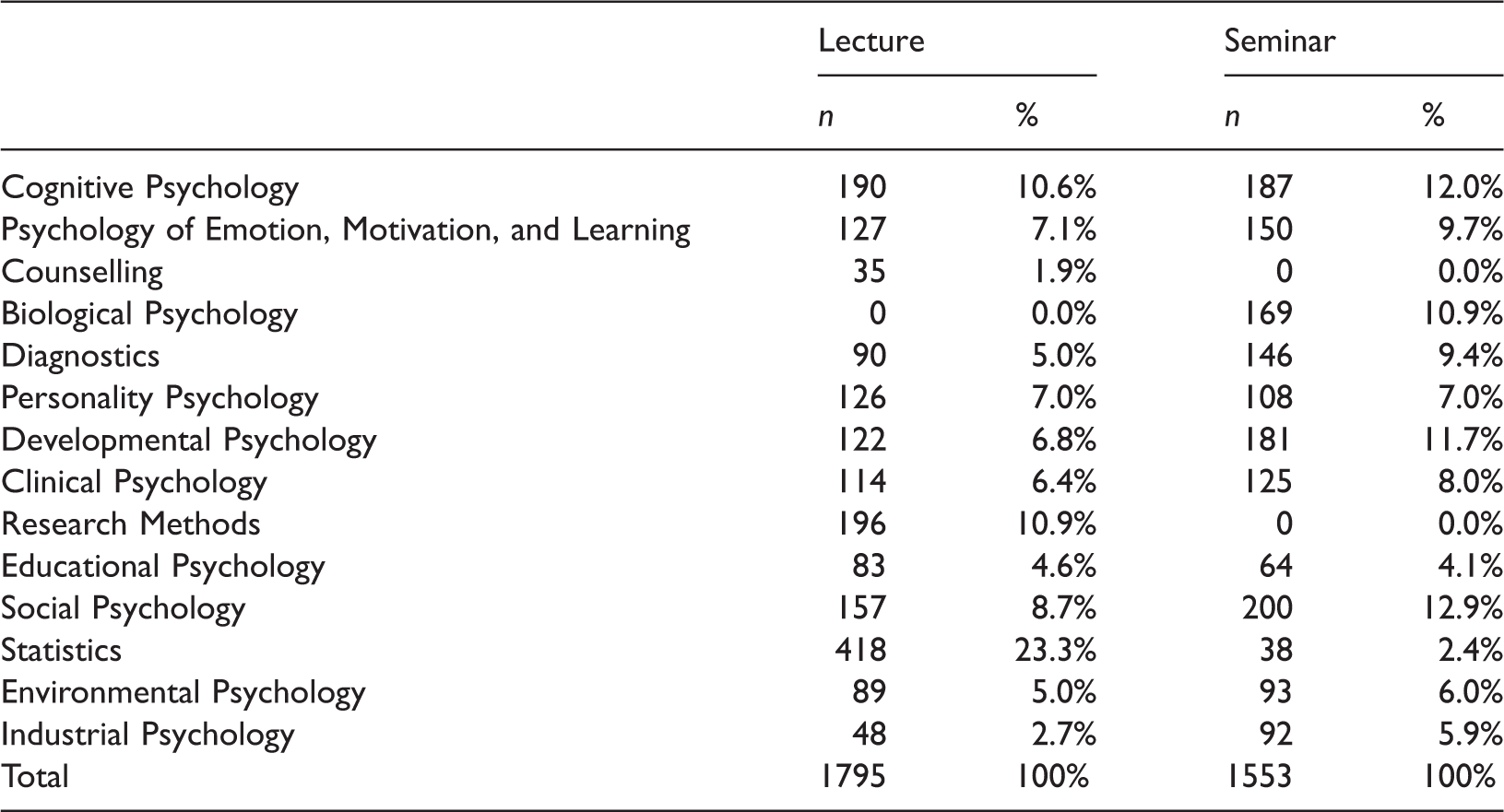

Numbers of Evaluation Questionnaires Split by Course Type and Course Subject

Note. N = 3348.

Procedure

The questionnaires were distributed by the teachers in the final third of each semester (in the second half of January or June), and the students were given 5–10 minutes to complete them. Data were scanned using the software Remark Office OMR 8, and a student research assistant checked the accuracy of the data. In addition to providing course evaluations, students completed a questionnaire on the first day of introduction, one week before the start of the first semester, which asked about their subjective clarity about the study contents of psychology.

Criterion Variables

The study was based on a standardized questionnaire (FEVOR) used in German-speaking countries for the evaluation of university courses (see Staufenbiel, 2000). Different versions of the questionnaire exist, depending on the course type. The questionnaire has 31 items for lectures and 34 items for seminars. Responses were provided on a Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree), with “not applicable” as an additional response option. The two versions contain 26 identical items. Eight additional items in the seminar questionnaire refer to the quality of presentations as perceived by students, and four items in the questionnaire for lectures refer to the teacher’s presentation style. Students provided an individual alphanumeric code that allowed multiple questionnaires completed by the same student to be related to one another but could not be linked to the students’ identities, thus protecting their anonymity. The questionnaire items can be aligned with four psychometrically distinct scales. In this study, we focused on the teacher performance item and on the planning and presentation scale, which consists of five (lectures) or eight (seminars) items.

Teacher performance

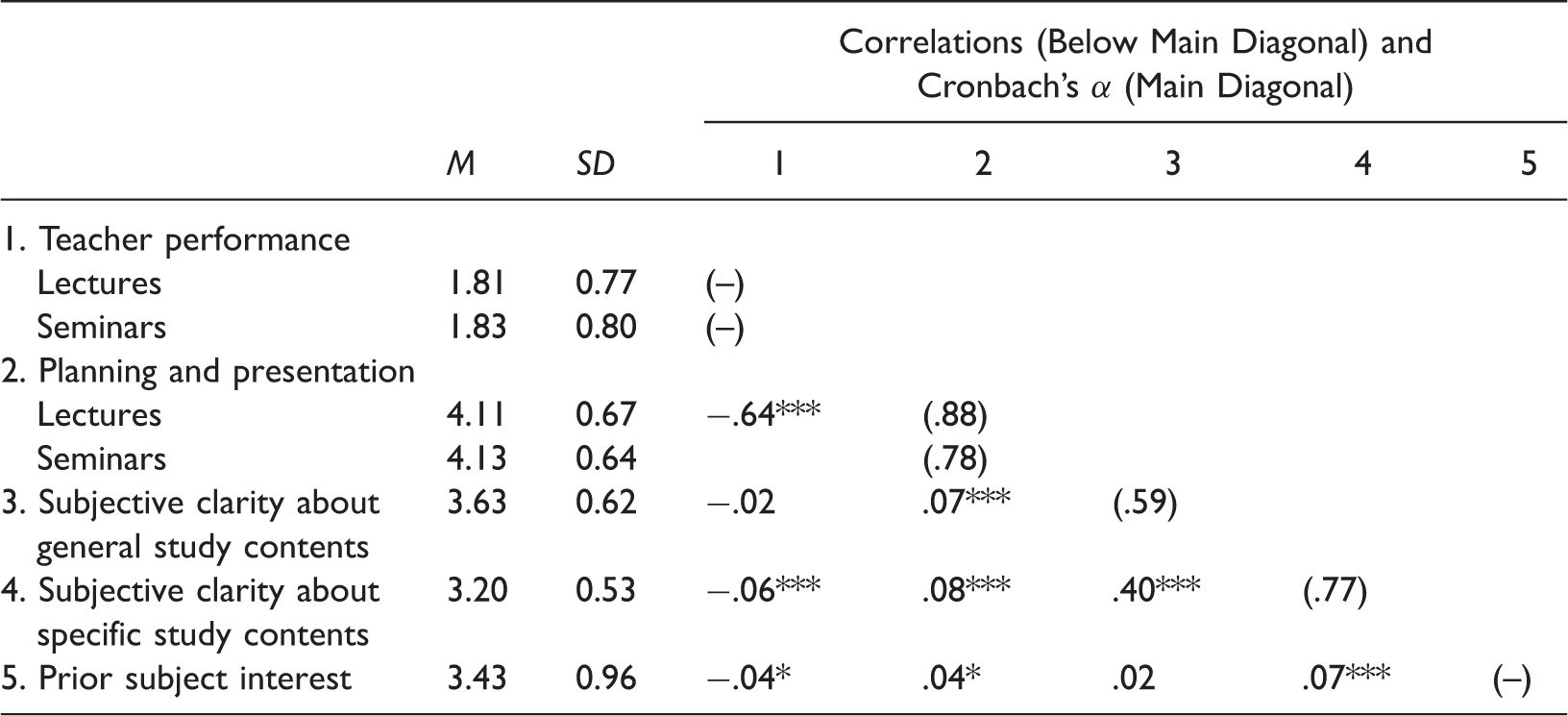

Descriptive Statistics, Correlations, and Reliability Estimates (Cronbach’s α) for all Variables

*p < .05, ***p < .001.

Planning and presentation

The scale assesses the extent to which students perceive a course to be well prepared and structured and the extent to which the contents are presented in a meaningful way. It contains items such as “The seminar provides a good overview of the subject area” and “The lecture is clearly structured.” The scale consists of five items for lectures (M = 4.11, SD = 0.67, Cronbach’s α = .88) and eight items for seminars (M = 4.13, SD = 0.64, Cronbach’s α = .78). See Table 2.

Predictor Variables

Students’ subjective clarity about the general study contents

This scale assesses the clarity of a first-semester student’s concept of psychology as a study subject and profession. It contains items such as “I have a clear concept of the contents of the psychology program.” Students rated responses on a Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree) during the first day of introduction, one week before the courses begin. The scale consists of five items that are provided in Appendix A (M = 3.63, SD = 0.62, Cronbach’s α = .59). See Table 2.

Students’ subjective clarity about specific study contents

This scale measures students’ subjective clarity about the subfields in psychology. The scale consisted of 10 items such as “I have a precise idea about the contents of social psychology.” Likert scale responses range from 1 (strongly disagree) to 5 (strongly agree). All items are provided in Appendix B. Responses to this scale were also collected on the first day of introduction (M = 3.20, SD = 0.53, Cronbach’s α = .77). See Table 2.
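The reliability estimates reported for the scales are Cronbach’s α values. As a reminder of what this coefficient computes, here is a minimal sketch in Python. The function name `cronbach_alpha` and the toy responses are illustrative assumptions and are not taken from the study’s data or analysis scripts.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a scale.

    `items` is a list with one inner list of scores per item; all inner
    lists hold the same respondents in the same order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sum of the sample variances of the single items.
    item_variance_sum = sum(variance(scores) for scores in items)
    # Sample variance of the total score (sum over items) per respondent.
    totals = [sum(responses) for responses in zip(*items)]
    total_variance = variance(totals)
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical responses of five students to three 5-point items.
toy_items = [
    [4, 3, 5, 2, 4],
    [4, 2, 5, 3, 4],
    [3, 3, 4, 2, 5],
]
print(round(cronbach_alpha(toy_items), 2))
```

Alpha increases with the number of items and with the average inter-item correlation, which is one reason why short scales tend to yield lower values.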

Subjective clarity about general and specific study contents was assessed before the first semester started. We included students’ current semester at the time of evaluation as a possible moderator variable to consider a possible change of the effects of subjective clarity over time (Md = 2, Range = 1–10).

Prior subject interest

Prior subject interest was measured with one item (“What was your level of interest in the course subject before the course started?”) in the evaluation questionnaire for each course. Response options were from 1 (very low) to 5 (very high) (M = 3.43, SD = 0.96). Based on a sample collected during the summer semester 2017 (680 questionnaires completed by 279 students), retest reliability was estimated by correlating prior subject interest measured before the course started and about 10 weeks later at the time of evaluation. In this sample, the measure reached an acceptable retest reliability of .62.

Results

Analyses were performed with cross-classified multilevel models (Baayen et al., 2008), which allowed for the separation of the variance components of teacher, course, and student. These three sources of variance and the Teacher x Student interaction were included as random effects in the analyses. Separate models were estimated for teacher performance and planning and presentation as criterion variables. The models were estimated with the statistical software R version 3.3.2 (R Core Team, 2016) and the full maximum likelihood estimation procedure included in the lmer function of the R package lme4 (Bates, Mächler, Bolker, & Walker, 2015). Models were compared with the anova function of the R package stats (R Core Team, 2016), which compares the fit of nested models that differ in the structure of fixed or random effects. Data were analysed separately for lectures and seminars.
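The comparison of nested models rests on the difference between their deviances (−2 log-likelihood), which is referred to a χ2 distribution. The following sketch reproduces this logic in Python for the case of one additional parameter (1 df). It is not the authors’ R code; the function names and the deviance values are hypothetical and serve only as an illustration.

```python
import math


def chi2_upper_tail_1df(x):
    """Upper-tail probability of a chi-square variable with 1 df.

    For 1 df, P(X > x) equals P(|Z| > sqrt(x)) for a standard normal Z.
    """
    if x <= 0:
        return 1.0
    return math.erfc(math.sqrt(x / 2.0))


def deviance_difference_test(deviance_smaller_model, deviance_larger_model):
    """Chi-square difference test for two nested models differing by one parameter."""
    difference = deviance_smaller_model - deviance_larger_model
    return difference, chi2_upper_tail_1df(difference)


# Hypothetical deviances (-2LL); these are NOT values from the study's tables.
difference, p_value = deviance_difference_test(4210.6, 4204.1)
print(f"chi2(1) = {difference:.2f}, p = {p_value:.4f}")
```

Because the deviances must be comparable across models that differ in their fixed effects, such tests require full maximum likelihood estimation rather than restricted maximum likelihood, which matches the estimation procedure named above.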

Estimated Models

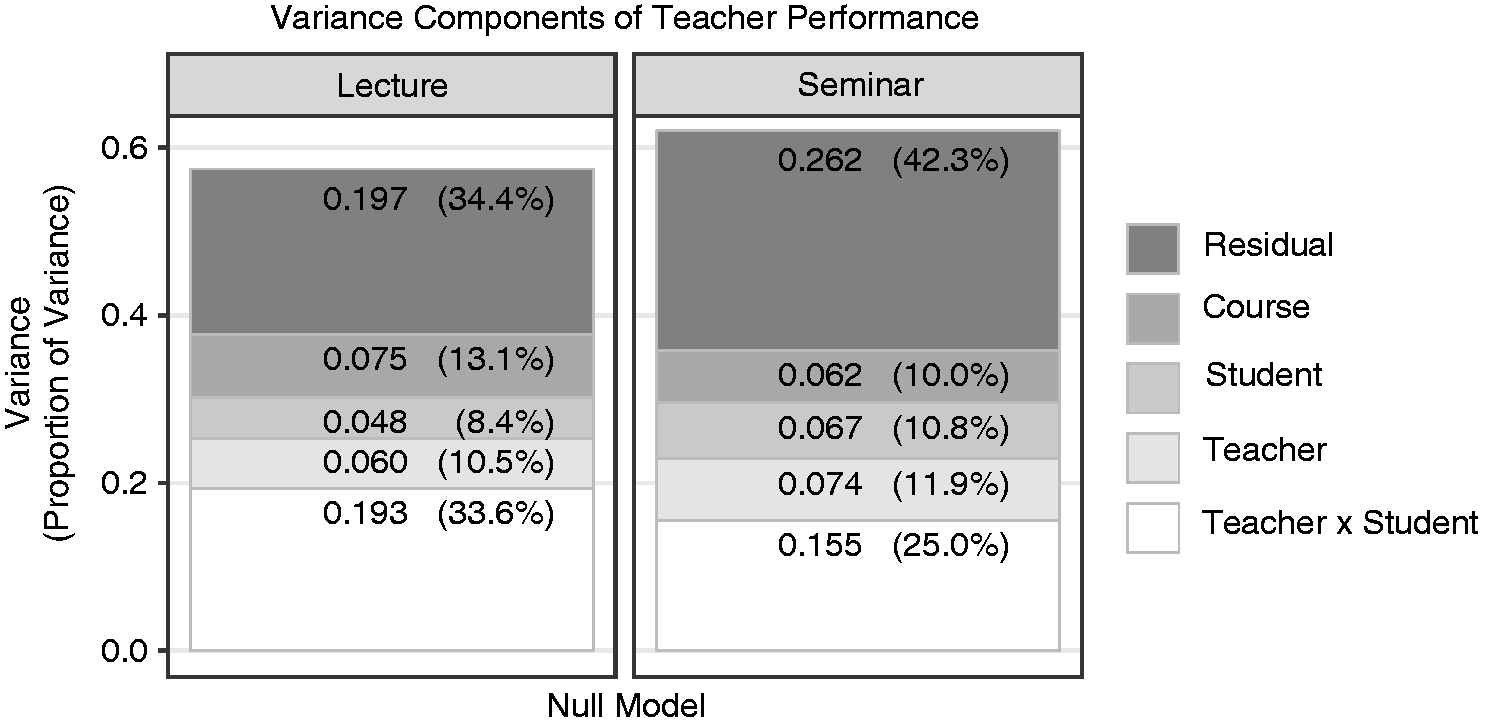

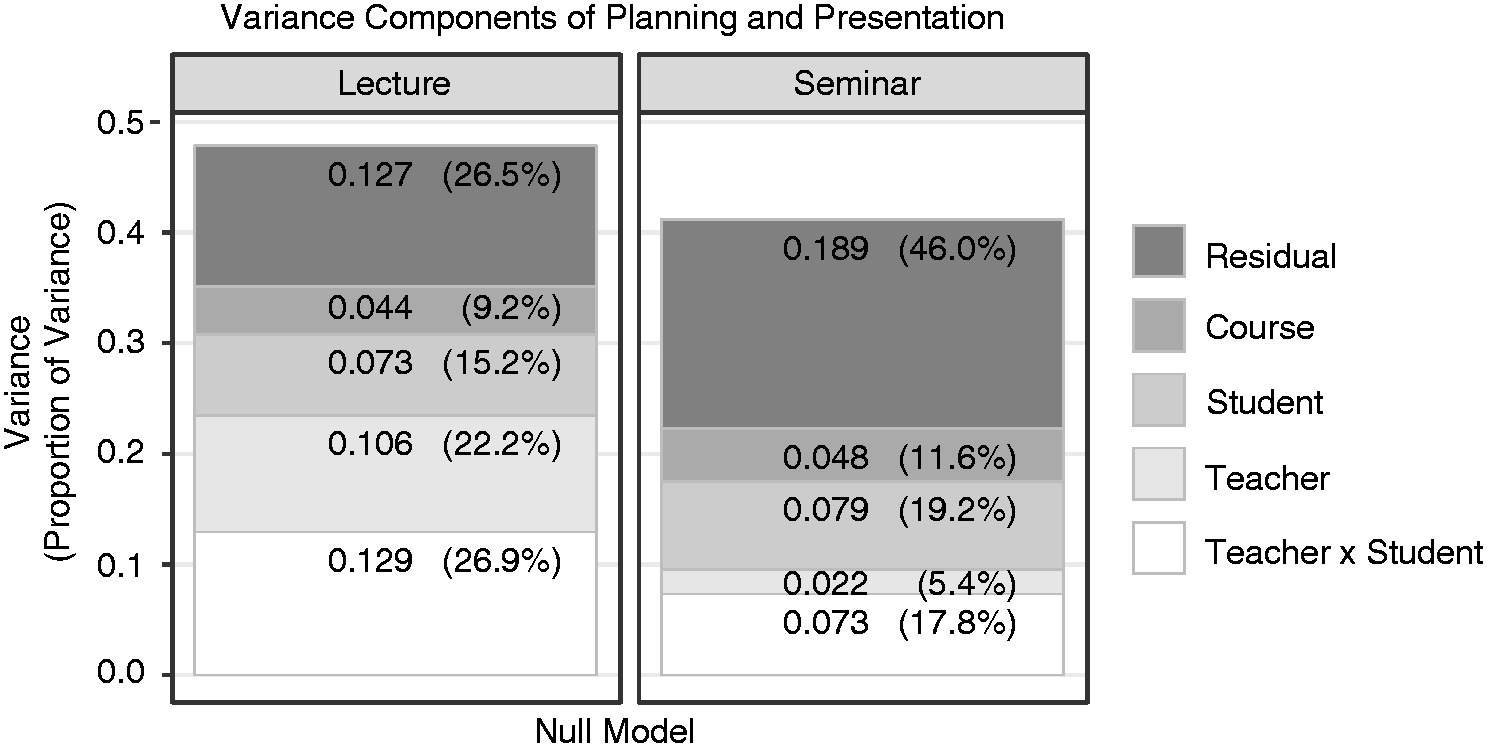

We estimated a sequence of five nested models for both criterion variables. In the first step, we estimated a null model with no fixed effects but with the variance components student, teacher, course, and Teacher x Student interaction (Feistauer & Richter, 2017). Figures 1 and 2 display the variances and the proportions of variance of both criterion variables.

Figure 1. Variance and proportions of variance reflecting the different variance components for the criterion variable teacher performance (null model).

Figure 2. Variance and proportions of variance reflecting the different variance components for the criterion variable planning and presentation (null model).
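The null model and the proportions of variance displayed in Figures 1 and 2 can be written compactly as follows. The notation is introduced here for illustration only and is not taken from the original tables: the rating of student s for teacher t in course c is decomposed into a grand mean, four random intercepts, and a residual, and the proportion of variance of a component is its variance divided by the total variance.

```latex
% Notation is an assumption made for this sketch, not the authors' own symbols.
% y_{stc}: rating given by student s to teacher t in course c
\begin{align}
y_{stc} &= \gamma_{0} + u_{s} + v_{t} + w_{c} + z_{ts} + e_{stc}, \\
u_{s} &\sim N(0, \sigma^{2}_{\text{student}}), \quad
v_{t} \sim N(0, \sigma^{2}_{\text{teacher}}), \quad
w_{c} \sim N(0, \sigma^{2}_{\text{course}}), \\
z_{ts} &\sim N(0, \sigma^{2}_{\text{teacher} \times \text{student}}), \quad
e_{stc} \sim N(0, \sigma^{2}_{e}), \\
P_{k} &= \frac{\sigma^{2}_{k}}
{\sigma^{2}_{\text{student}} + \sigma^{2}_{\text{teacher}} + \sigma^{2}_{\text{course}}
 + \sigma^{2}_{\text{teacher} \times \text{student}} + \sigma^{2}_{e}}.
\end{align}
```

Here k stands for any of the five variance sources, so the proportions sum to 1.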

We used the null model as a base for testing the effects of student background characteristics, which were entered as fixed effects and centered at the grand mean. We added students’ subjective clarity about general study contents in Model 1, students’ subjective clarity about specific study contents in Model 2, and prior subject interest in Model 3 as fixed effects. In Models 1–3, the effects of the student-level predictors were assumed to be fixed effects that are constant across all students. However, it may be that the effects of student characteristics on SETs vary randomly across students. To address this possibility, we ran preliminary models with random coefficients for the student characteristics. Only the random coefficient of prior subject interest was significantly different from zero in these analyses, whereas the effects of students’ subjective clarity about study contents did not vary across students. Therefore, in Model 4, we additionally included prior subject interest as a random coefficient.
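The model sequence described above can be summarized in one equation for the most complex model, Model 4. This is a sketch in notation introduced here for illustration: GC and SC denote the grand-mean-centered clarity about general and specific study contents, PI denotes grand-mean-centered prior subject interest, and the subscripts s, t, and c index students, teachers, and courses. Whether a covariance between the random intercept and the random slope was estimated is not stated in the text, so the sketch leaves it out.

```latex
% Notation is an assumption made for this sketch, not the authors' own symbols.
\begin{align}
y_{stc} &= \gamma_{0} + \gamma_{1}\,\mathit{GC}_{s} + \gamma_{2}\,\mathit{SC}_{s}
         + (\gamma_{3} + u_{1s})\,\mathit{PI}_{stc}
         + u_{s} + v_{t} + w_{c} + z_{ts} + e_{stc}, \\
u_{1s} &\sim N(0, \tau^{2}_{\mathit{PI}}).
\end{align}
```

Setting the slope variance τ² to zero yields Model 3, dropping γ3 as well yields Model 2, dropping γ2 yields Model 1, and dropping all fixed slopes yields the null model, which is why the five models are nested.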

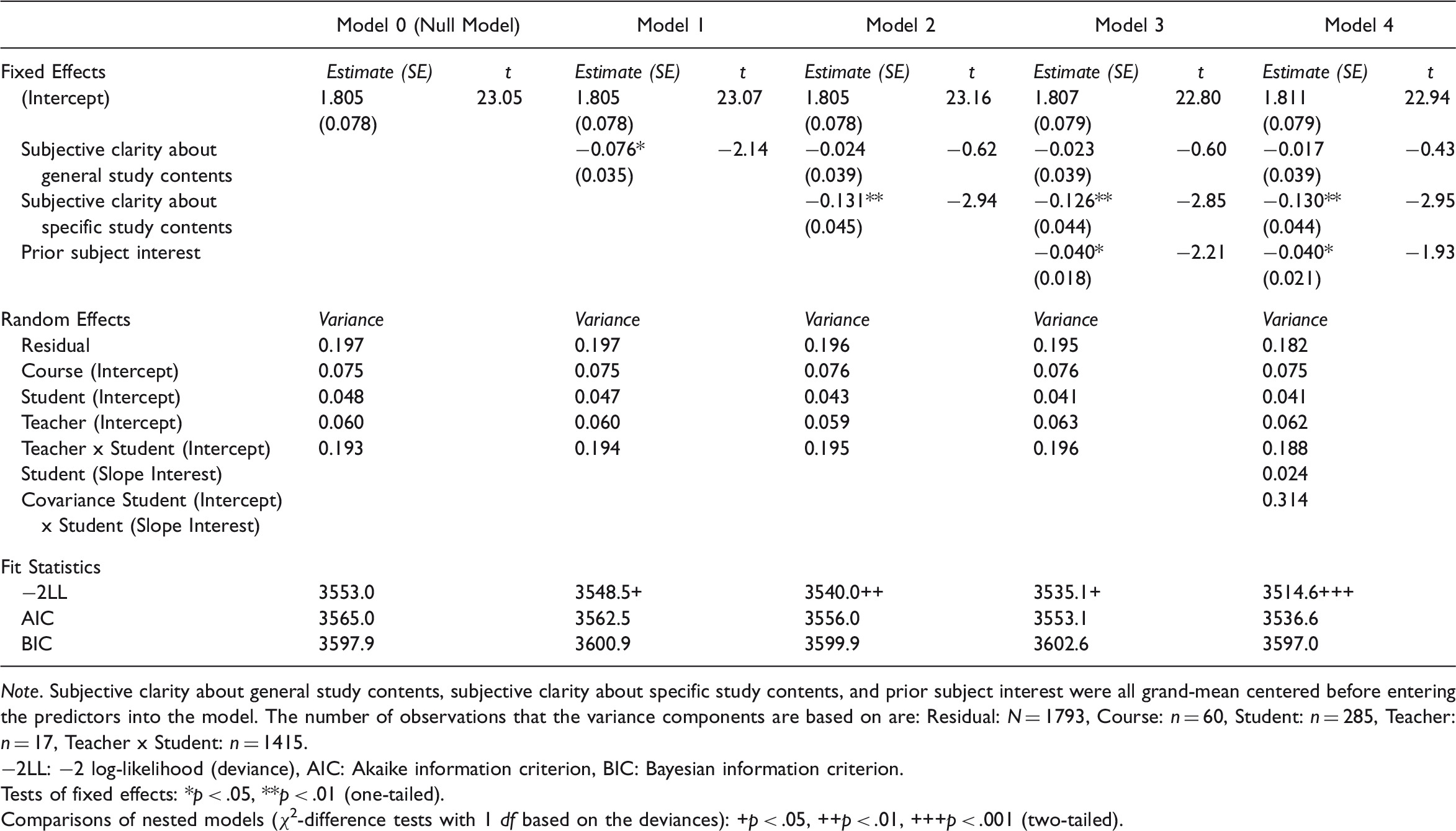

Teacher Performance

Estimates for the Cross-Classified Multilevel Models for Teacher Performance in Lectures

Note. Subjective clarity about general study contents, subjective clarity about specific study contents, and prior subject interest were all grand-mean centered before entering the predictors into the model. The number of observations that the variance components are based on are: Residual: N = 1793, Course: n = 60, Student: n = 285, Teacher: n = 17, Teacher x Student: n = 1415.

−2LL: −2 log-likelihood (deviance), AIC: Akaike information criterion, BIC: Bayesian information criterion.

Tests of fixed effects: *p < .05, **p < .01 (one-tailed).

Comparisons of nested models (χ2-difference tests with 1 df based on the deviances): +p < .05, ++p < .01, +++p < .001 (two-tailed).

Including students’ subjective clarity about general study contents as the predictor in Model 1 led to a significantly improved model fit. The higher students estimated their clarity about the general study contents of psychology, the higher they evaluated teacher performance (β = −0.076; t(289.8) = −2.14; p < .05). Adding this predictor led to a decrease of 2.1% in the variance component of students.
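The percentage changes in variance components reported in this section compare the estimate of a component before and after a predictor is added. A minimal Python sketch of this computation follows. The function name and the variance values are hypothetical, because the estimates themselves appear only in the tables.

```python
def proportional_reduction(variance_before, variance_after):
    """Share of a variance component that disappears after adding a predictor.

    Positive values indicate a decrease and negative values an increase
    of the component relative to the previous model.
    """
    if variance_before <= 0:
        raise ValueError("variance_before must be positive")
    return (variance_before - variance_after) / variance_before


# Hypothetical student variance components of two consecutive models.
student_variance_previous = 0.200
student_variance_current = 0.183
change = proportional_reduction(student_variance_previous, student_variance_current)
print(f"{100 * change:.1f}% decrease in the student variance component")
```

Note that in cross-classified models a component can also increase when a predictor is added, which is why some of the reported changes are increases.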

We entered students’ subjective clarity about specific study contents as an additional predictor in Model 2. The correlation between both predictors was .40. Inclusion of students’ subjective clarity about specific study contents led to a considerable increase in model fit. The clearer students were about the specific study contents of psychology, the higher they evaluated teacher performance (β = −0.131; t(275.8) = −2.94; p < .01). Inclusion of this predictor led to a decrease of 8.5% in the student variance component. However, the effect of students’ subjective clarity about general study contents was no longer significant after including students’ subjective clarity about specific study contents as an additional predictor. Adding the students’ current semester as a moderator did not change these results. The current semester showed neither a main effect on teacher performance in lectures (β = 0.00; t(289.2) = 0.00; p > .05) nor an interaction with the clarity predictors.

We added students’ prior subject interest as a predictor to Model 3. This third predictor was basically uncorrelated with students’ subjective clarity about general (r = .02) and specific (r = .07) study contents. Adding prior subject interest also led to a significantly improved model fit. Greater student interest indicated more favourable evaluations of teacher performance in lectures (β = −0.04; t(1755.4) = −2.21; p < .05). Inclusion of this predictor led to an additional decrease of 4.7% in the student variance component but an increase of 6.8% in the teacher variance component.

In Model 4, we included prior subject interest as a random coefficient. A significant random coefficient would mean that students were influenced to different degrees (and possibly in different directions) by their prior subject interest in their evaluations of teacher performance. Including the random coefficient of prior subject interest significantly improved the model fit. The slope variance was 0.024, indicating that the magnitude of the effect of this variable on teacher performance varied considerably between students, with some students even showing negative effects (Leckie, 2013).

For seminars, none of the effects specified in Models 1 to 4 for the teacher performance criterion variable was significant.

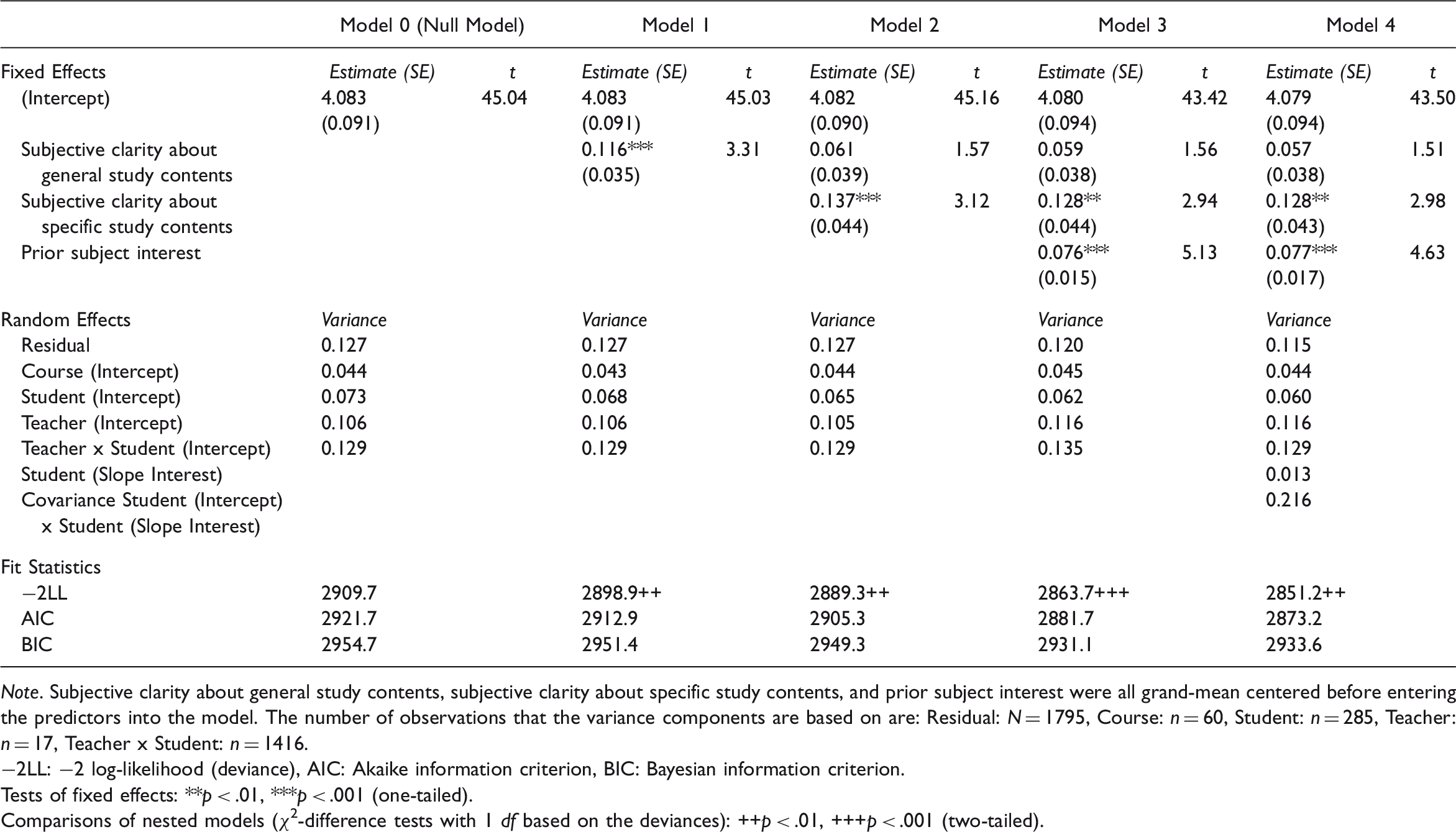

Planning and Presentation

Estimates for the Cross-Classified Multilevel Models for Planning and Presentation in Lectures

Note. Subjective clarity about general study contents, subjective clarity about specific study contents, and prior subject interest were all grand-mean centered before entering the predictors into the model. The number of observations that the variance components are based on are: Residual: N = 1795, Course: n = 60, Student: n = 285, Teacher: n = 17, Teacher x Student: n = 1416.

−2LL: −2 log-likelihood (deviance), AIC: Akaike information criterion, BIC: Bayesian information criterion.

Tests of fixed effects: **p < .01, ***p < .001 (one-tailed).

Comparisons of nested models (χ2-difference tests with 1 df based on the deviances): ++p < .01, +++p < .001 (two-tailed).

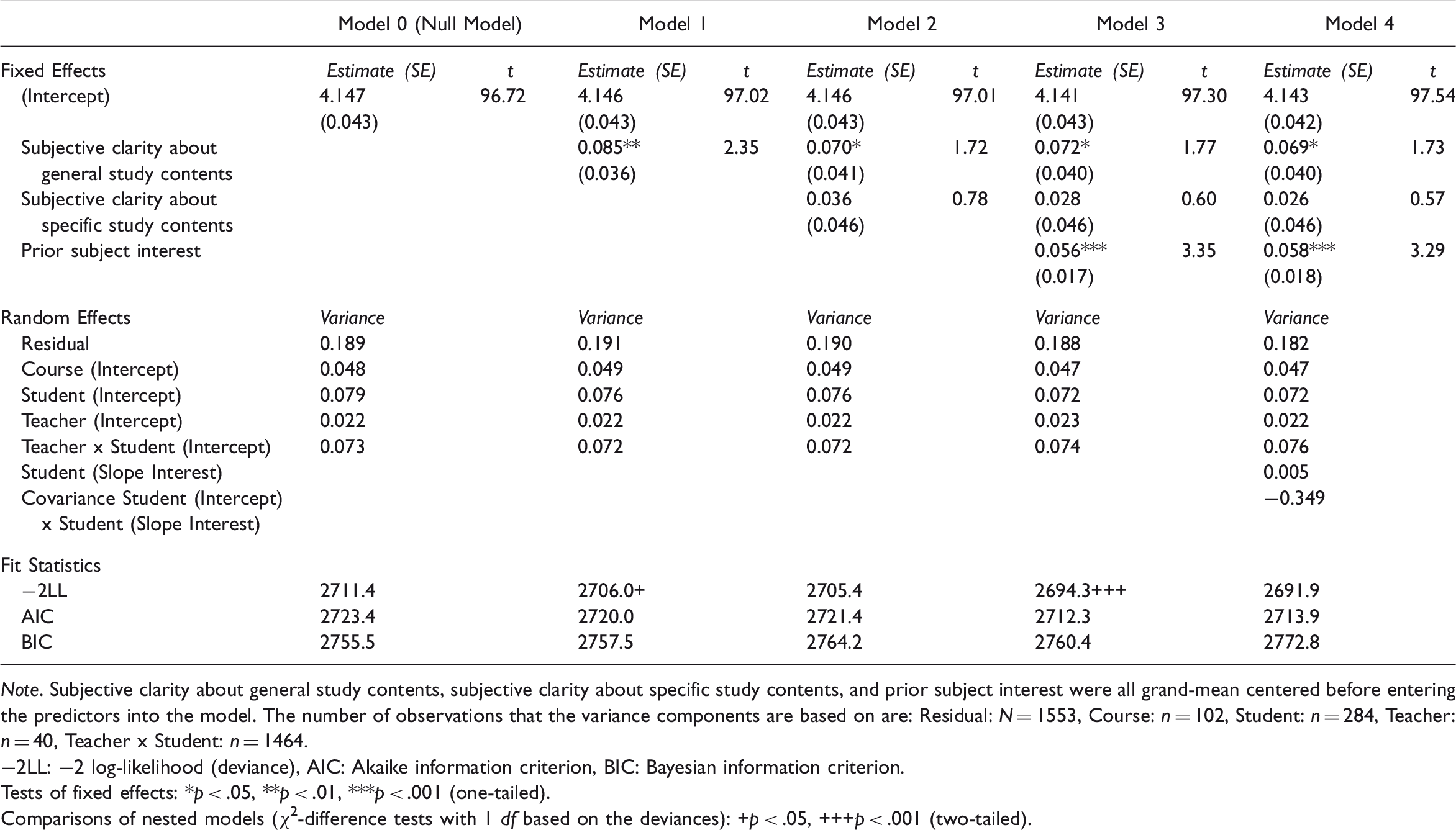

Estimates for the Cross-Classified Multilevel Models for Planning and Presentation in Seminars

Note. Subjective clarity about general study contents, subjective clarity about specific study contents, and prior subject interest were all grand-mean centered before entering the predictors into the model. The number of observations that the variance components are based on are: Residual: N = 1553, Course: n = 102, Student: n = 284, Teacher: n = 40, Teacher x Student: n = 1464.

−2LL: −2 log-likelihood (deviance), AIC: Akaike information criterion, BIC: Bayesian information criterion.

Tests of fixed effects: *p < .05, **p < .01, ***p < .001 (one-tailed).

Comparisons of nested models (χ2-difference tests with 1 df based on the deviances): +p < .05, +++p < .001 (two-tailed).

Including students’ subjective clarity about general study contents as a predictor in Model 1 led to a significantly improved model fit. The higher students estimated their clarity about the general study contents of psychology, the higher they evaluated planning and presentation (lectures: β = 0.116; t(266.8) = 3.31; p < .001, seminars: β = 0.085; t(246.4) = 2.35; p < .01). Adding this predictor led to a decrease in the student variance component (lectures: 6.8%; seminars: 3.8%).

We added students’ subjective clarity about specific study contents as a further predictor to Model 2. Including it led to an improved model fit for lectures but not for seminars. The clearer students were about the specific study contents of psychology, the higher they evaluated planning and presentation in lectures (β = 0.137; t(260.3) = 3.12; p < .001). Including this predictor for lectures led to a decrease of 4.4% in the student variance component. The effect of students’ subjective clarity about general study contents was also no longer significant after including students’ subjective clarity about specific study contents as an additional predictor in Model 2. Addition of the students’ current semester as moderator did not change the results.

Entering prior subject interest as an additional predictor in Model 3 significantly improved the model fit. A higher prior subject interest indicated more favourable evaluations of planning and presentation (lectures: β = 0.076; t(1728.4) = 5.13; p < .001, seminars: β = 0.056; t(1514.6) = 3.35; p < .001). Adding this predictor to the model led to a decrease in the student variance component (lectures: 4.6%; seminars: 5.3%), a decrease of 4.1% in the course variance component for seminars, an increase of 10.5% in the teacher variance component for lectures but a decrease of 4.5% in the teacher variance component for seminars, and an increase in the Teacher x Student variance component (lectures: 4.7%; seminars: 2.8%).

Including the prior subject interest as a random coefficient in Model 4 led to a significantly improved model fit for lectures but not for seminars. The slope variance for lectures was 0.013, implying that the individual effects of prior subject interest on planning and presentation varied and were even negative for some students.
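To see why a slope variance of 0.013 implies negative effects for some students, the student-specific slopes can be treated as normally distributed around the average slope. The sketch below does this in Python. It rests on two assumptions that are not confirmed by the text: the average slope in Model 4 is taken to be about the same as the fixed effect reported for Model 3 (0.076), and the slopes are assumed to be normally distributed. The function names are illustrative.

```python
import math


def slope_interval(mean_slope, slope_variance, z=1.96):
    """Range that covers about 95% of student-specific slopes (normality assumed)."""
    slope_sd = math.sqrt(slope_variance)
    return mean_slope - z * slope_sd, mean_slope + z * slope_sd


def share_below_zero(mean_slope, slope_variance):
    """Expected share of students whose slope is negative (normality assumed)."""
    slope_sd = math.sqrt(slope_variance)
    return 0.5 * math.erfc(mean_slope / (slope_sd * math.sqrt(2.0)))


# 0.013 is the reported slope variance for lectures; 0.076 is the Model 3
# fixed effect, used here as an ASSUMED stand-in for the Model 4 average slope.
lower, upper = slope_interval(0.076, 0.013)
print(f"95% of slopes between {lower:.2f} and {upper:.2f}")
print(f"share of negative slopes: {share_below_zero(0.076, 0.013):.2f}")
```

Under these assumptions, the interval runs from roughly −0.15 to 0.30, so it includes zero and a range of negative slopes, in line with the statement that the effect was negative for some students.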

Discussion

Our study examined the validity of SETs by analysing how students’ evaluations were affected by their subjective clarity about the study contents of psychology, assessed one week before the start of their first semester, and by their prior subject interest. The results revealed that students’ subjective clarity about the general and specific study contents of psychology and prior subject interest positively affected the evaluations of teacher performance in lectures and the evaluations of planning and presentation in relation to both lectures and seminars. In more detail, the two moderately correlated components of students’ subjective clarity explained overlapping proportions of variance in SETs. After including students’ subjective clarity about specific study contents in the model, the clarity about general study contents was no longer significant. These results were not moderated by the students’ current semester. In contrast, prior subject interest, basically uncorrelated with both components of students’ subjective clarity, provided a unique contribution to the explained variance in SETs.

These results support our hypothesis that the potential biasing effects of students’ subjective clarity about the study contents of psychology and their prior subject interest are more likely to occur in relation to lectures than seminars. As far as seminars are concerned, neither students’ subjective clarity about the study contents of psychology nor their prior subject interest were significantly related to teacher performance. This finding might be interpreted as support for a bias-free measurement. However, biasing effects were found in relation to the planning and presentation scale used for seminars.

Our hypothesis that evaluations of teacher performance would be more prone to the biasing effects of students’ subjective clarity about study contents and prior subject interest than evaluations of planning and presentation was not supported. Apparently, the detailed items used for the assessment of planning and presentation failed to foster more reflective judgments (Merritt, 2008) that would prevent biasing effects. Our results are more in line with research by Pinto and Mansfield (2010), who found that in group discussions students seem to rely mostly on emotionally charged responses (“gut reactions”) and not so much on reflective judgments when evaluating teaching quality. Even when students were prompted with the question, “Rate the logical arrangement of the course material”, which corresponds to the planning and presentation scale used in our study, 33% of the responses were of the emotionally charged type.

Our results show that planning and presentation, as a central dimension of SETs for measuring teaching quality, which should be completely dependent on teacher performance, is biased to some extent by students’ subjective clarity about study contents and prior subject interest. Although this conclusion casts some doubt on the validity of these evaluations, note that in the models in which students’ subjective clarity about study contents and prior subject interest exerted significant effects on SETs, the three predictors explained small proportions of the total variance of the criterion variables.

In this study, we did not investigate the psychological mechanisms that underlie the observed biasing effects of students’ subjective clarity about study contents and prior subject interest. However, the pattern in relation to variance components that were reduced or not reduced after including the predictors suggests specific hypotheses concerning mechanisms that could be tested in further research. First, students with a higher subjective clarity about study contents might also have more prior knowledge of the subject (Tobias, 1994), follow more functional learning goals (Seijts & Latham, 2011) and, therefore, experience faster progress in learning. Learning goals possibly lead to a higher course commitment (Mikkonen, Ruohoniemi, & Lindblom-Ylänne, 2013; Seijts & Latham, 2011). The considerable decrease in the student variance component (9%) when students’ subjective clarity about study contents was included, together with the absence of a decrease in the variance component that reflected the Teacher x Student interaction, is consistent with this explanation. Apparently, students’ subjective clarity about study contents affects SETs regardless of the teacher. In contrast, including prior subject interest explained variance in all variance components. The effects of this variable cannot be explained by the commitment to a course and a teacher but instead indicate that other factors at the student level must be present. For example, prior subject interest is likely to alter students’ motivation or emotions with regard to learning (Park, Plass, & Brünken, 2014; Pekrun, 1992). Motivated students experience the course as more satisfying, which would increase SETs (Filak & Sheldon, 2008). Another possibility is that prior subject interest, as a kind of individual interest, is not necessarily the type of interest that has the strongest effect on the learning experience. In certain subjects, such as statistics, fostering situational interest (Park, Flowerday, & Brünken, 2015) might be more important because individual interest in these subjects is initially very low, whereas situational interest can be raised by didactic measures. Further research seems necessary to disentangle the possible effects of different types of interest on SETs.

In the random coefficient models estimated in the final step of the analysis, only the random coefficient of prior subject interest in lectures was significantly different from zero. In other words, the degree to which prior subject interest was related to SETs varied between students, ranging from a strong positive association for most students to a slightly negative association for some. The positive associations might have occurred because higher interest led to more positive evaluations, whereas the negative associations could be due to highly interested students who were disappointed with the way the subject matter was presented in the lecture. Future research is necessary to evaluate these assumptions. In contrast to the random coefficient of prior subject interest, the random coefficients of the two components of students’ subjective clarity about study contents were not significant in any of the models. Although null results should be interpreted with caution, this finding provides some indication that the positive associations of students’ subjective clarity about study contents with SETs, albeit not very strong, vary little among students.

Limitations of the Present Study

We believe that our results are informative, but the conclusions that may be drawn from them are limited in several ways. One limitation is that prior subject interest was measured retrospectively at the time of the SETs. Thus, the measurement of prior subject interest could have been affected by teacher performance to an unknown extent. This makes a causal interpretation of the relationship between prior subject interest and SETs difficult. In a future study, we plan to assess subject interest at the beginning of a course to clarify the direction of the causal relationship.

A second limitation is that prior knowledge, which is often confounded with individual interest (Tobias, 1994), was not controlled for in this study. Considering that individual interest develops slowly over time and affects a person’s knowledge (Hidi, 1990), it would also be valuable to assess the effect of prior knowledge on SETs in future research. Most likely, higher prior knowledge has a positive effect on SETs because it increases the flow of learning and allows individual students to make the most of a course.

A third limitation concerns the relatively low effect sizes found for students’ subjective clarity about the study contents of psychology and their prior subject interest. One possible interpretation of these findings is that the validity of SETs is only slightly compromised by these biasing variables. However, the effect sizes might also have been artificially lowered by the ceiling effects from which SETs commonly suffer (Menges & Brinko, 1986; Zhao & Gallant, 2012). Our study is no exception. The grand means of both criterion variables were relatively close to the maximum obtainable values, which limited the variability and, hence, possibly the effect sizes. Another possible explanation for the weak effect of students’ subjective clarity about general study contents is the low internal consistency (Cronbach’s α = .59) of this scale. Likewise, low reliability might have constrained the effects of prior subject interest because a one-item measure was used. The reported retest reliability of .62 for a 10-week interval (based on a different sample) is acceptable and in line with the retest reliability reported in other studies (e.g., Linnenbrink-Garcia et al., 2010). Nevertheless, measuring prior subject interest with a multiple-item scale would have been desirable in terms of reliability and might have increased the magnitude of its effect on SETs.
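The reasoning about low reliability can be illustrated with Spearman's classical attenuation formula, under which the expected observed correlation equals the true correlation multiplied by the square root of the product of the two reliabilities. The following is only a minimal sketch and not part of the reported analyses: the true correlation of .30 is a purely hypothetical value, the criterion is assumed to be perfectly reliable, and the retest coefficient of .62 is treated as a reliability estimate for the one-item interest measure. The formula applies to simple correlations, so it can only approximate what happens to coefficients in the cross-classified multilevel models.

```python
import math


def attenuated_r(true_r, rel_x, rel_y=1.0):
    """Expected observed correlation under Spearman's attenuation formula.

    true_r : correlation between the error-free variables (hypothetical here)
    rel_x  : reliability of the predictor
    rel_y  : reliability of the criterion (assumed to be 1.0 by default)
    """
    if not (0.0 < rel_x <= 1.0 and 0.0 < rel_y <= 1.0):
        raise ValueError("reliabilities must lie in the interval (0, 1]")
    return true_r * math.sqrt(rel_x * rel_y)


# Hypothetical true correlation, chosen only for illustration.
TRUE_R = 0.30

# Reliability estimates taken from the text: Cronbach's alpha = .59 for the
# clarity scale, retest reliability = .62 for the one-item interest measure.
for label, reliability in [("clarity scale", 0.59), ("interest item", 0.62)]:
    observed = attenuated_r(TRUE_R, reliability)
    print(f"{label}: expected observed r = {observed:.2f}")
```

Under these assumptions, a true correlation of .30 would be expected to shrink to roughly .23 for either predictor, which shows how unreliability alone could account for part of the small effects.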

A fourth limitation, shared with most other studies in the field, is that the standards students apply to their evaluations are not clear and likely vary among students (perhaps even between different evaluations given by the same student). Previous research suggests that students compare the course being evaluated with other courses (Darby, 2008), with an ideal course (Dunegan & Hrivnak, 2003; Goldstein & Benassi, 2006), or with an average course (Grimes, Millea, & Woodruff, 2004). In many contexts, it is common to instruct or even train raters in using the same standards (Lehmann, Ban, & Donald, 1965), but this procedure is normally not practiced in educational institutions that use SETs. Researchers, therefore, need to develop instruments that include standards in the items to avoid ceiling effects in SETs.

Finally, our study was based on data from psychology courses at one university using only a few student cohorts. We included students, teachers, and courses in our models as random effects because they were drawn from larger populations. However, whether and to what extent the results are generalizable to other study programs remains an open question. This problem is complicated by the fact that our study was based on a convenience sample of only the students who were present when the evaluation was conducted. Nevertheless, the effects found in this study are comparable to those reported in other studies (e.g., Spooren, 2010; Rantanen, 2013; Staufenbiel et al., 2016), which makes us optimistic that the results can be generalized at least to some extent.

Conclusion

Our study has shown that students’ subjective clarity about the study contents of psychology and their prior subject interest can affect SETs to some extent. These variables can be regarded as bias variables because evaluations of teaching quality should be influenced only by the performance of the teacher, not by students’ individual characteristics. However, the biasing effects seem to be rather small, implying that the threat they pose to the validity of SETs is not very large.

Previous research on the effects of expectations on SETs has focused on expectations concerning grades (e.g., Centra, 2003; Marsh, 2007), course difficulty (Addison, Best, & Warrington, 2006), and workload (e.g., Gursoy & Umbreit, 2005; Kreber, 2003). To our knowledge, this study is the first to examine students’ subjective clarity about study contents as a potential source of bias in SETs. Students’ subjective clarity about the study contents of psychology (and other study subjects) can be influenced by giving prospective students a realistic preview, for example, by allowing them to attend a trial study program or informative meetings before they sign up for the study program. Our results suggest that such measures might increase the fit between students’ expectations and course content, thereby improving the validity of SETs.

Footnotes

Authors’ Note

We would like to thank Verena Lössl for her contributions to some of the instruments used in this study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.