Abstract

The aim of this study is to investigate whether worked examples are effective in fostering psychology students’ explanation competence. Explanation competence is a context-specific cognitive disposition that enables a person to construct a causal model of an observable psychological phenomenon by drawing on psychological theories. We set up a training intervention using worked examples to demonstrate how the observed psychological phenomenon (e.g., cognitive dissonance) is represented in an explanation. Instructional support was implemented using a fading procedure. We investigated the effects of worked examples on explanation competence using a sample of psychology students (

Introduction

Using psychological theories to explain psychological phenomena or, simply put, explaining a psychological phenomenon, is not only a part of the definition of psychology as a science (e.g., Gerrig & Zimbardo, 2010), but also a component of scientific competence in psychology (Dietrich et al., 2015). In psychology teaching, learning

The nature of scientific explanations and their structure

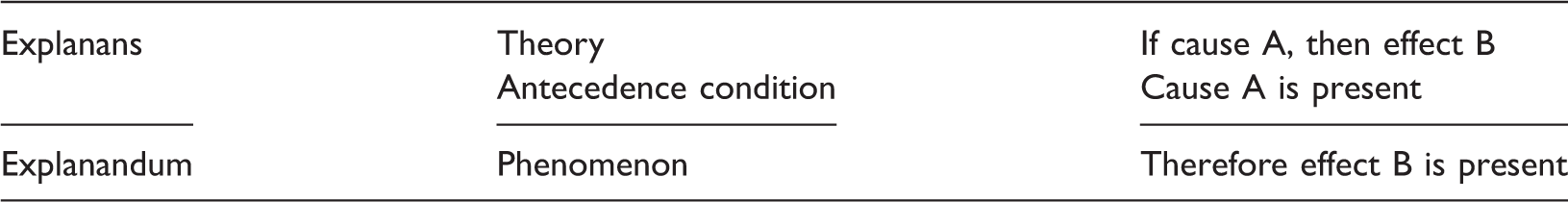

Scientific explanations answer the question of why a certain phenomenon happened (Ohlsson, 2010). Ohlsson’s (1992) concept of theory articulation conceptualizes an explanation as the application of a scientific theory to a situation by

The DN model of scientific explanations.

At this point, we have to distinguish between situation and phenomenon. In this paper, “phenomenon” always refers to part of the explanandum, whereas “situation” refers to the phenomenon together with its antecedence condition, which is part of the explanans. In the example, the phenomenon is that Eva talks more favorably about her choice, whereas the situation is that Eva has to choose between two universities and afterwards talks more favorably about her choice.

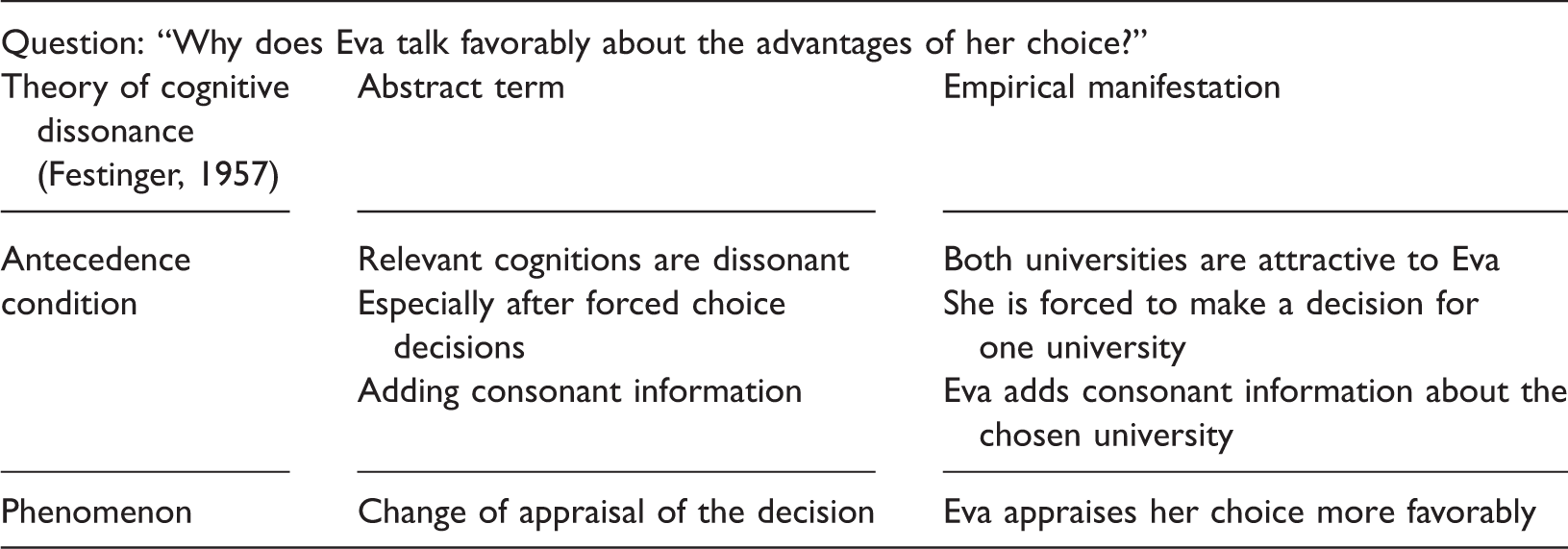

The application of the DN model to the example of Eva given above is straightforward. The theory of cognitive dissonance states that if two or more relevant cognitions are dissonant, then there is a motivation for dissonance reduction. This is especially relevant after a forced choice. Dissonance reduction can be attained by adding consonant information. In our example, both universities are appealing to Eva, which results in two dissonant cognitions. She is thus forced to make a decision that causes cognitive dissonance. In order to reduce this dissonance, she adds favorable information (consonant cognitions) about one of the universities, thus devaluing the other and easing the decision process. Taken together, this leads to Eva appraising her choice more favorably after the decision.

DN model applied to the theory of cognitive dissonance (Festinger, 1957).

Norms for scientifically valid explanations

Constructing an explanation is a form of deductive reasoning in which theories and the observed situation are coordinated (see Tytler & Peterson, 2003). For this deductive inference to be considered valid, an explanation has to adhere to several norms (Rosenberg, 2012; Westermann, 2000). The first norm stipulates that the theory used in the explanation must be empirically proven, which rules out the use of non-scientific “everyday” or subjective theories (see Stark, 2005). Based on this norm, we set up a second norm: a sound explanation has to mention the theory used

A third norm requires a detailed elaboration of the relationship between the

Logical correctness is a fourth norm to which a good explanation must adhere. As the explanandum constitutes a deduction from the two parts of the explanans, an explanation must not contain circular reasoning (Woodward, 2014). The logical correctness of the explanation is inherent in an adequate theory-evidence-coordination. Thus, a logically correct explanation requires a proper mapping between the antecedence condition and the phenomenon and its respective empirical manifestation. Circular reasoning, for example, would be evident if the same empirical observations were mentioned both in the antecedence condition and in the phenomenon.

The fifth and final norm refers to the consideration of alternative theories that: (a) also explain the situation; (b) can only explain a part of the situation; or (c) cannot explain the situation at all. We call this norm “multiperspectivity,” and it draws on argumentation theory (Walton, 1989). Because explanations are used in scientific argumentative discourse, it is important to protect or to support one’s own argument. This can be achieved by mentioning other potentially relevant theories or by ruling out theories that do

To exemplify these norms, we revisit Eva’s example. When compiling an actual (i.e., written or oral) explanation, the first two norms require that the explanation contains an explicit reference to Festinger’s (1957) theory of cognitive dissonance. This requirement may be fulfilled by simply adding a citation referring to Festinger’s work. A more elaborate form of this requirement could include an additional summary of Festinger’s theory. Furthermore, to fulfil the norm that a theory must be empirically proven, the explanation has to contain references that support Festinger’s theory. This norm is easily met by adding citations of suitable studies.

The next norm, which refers to theory-evidence-coordination, requires a mapping of the elements of Festinger’s theory onto the observed situation, as shown in Table 2. To provide an adequate theory-evidence-coordination in a written or oral explanation, it must be evident that theoretical statements are coordinated with the appropriate elements of the situation. For instance, the theoretical statement that relevant cognitions must be dissonant has to be mapped onto the related statement that both universities are attractive to Eva. Such a mapping has to be completed for all parts of the DN model, that is, the theory and the antecedence condition in the explanans as well as the phenomenon in the explanandum. Thus, a valid explanation must contain statements expressing this mapping. If these mappings are correct, the explanation automatically complies with the norm of logical correctness; the two norms are therefore related.
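The mapping described above (cf. Table 2) and the circularity criterion of the logical-correctness norm can be illustrated with a small data structure. This is a minimal Python sketch under our own assumptions, not the authors' explanation tool: the class name `ExplanationTool`, its fields and the wording of the mapped statements (paraphrased from Eva's example) are hypothetical. Each theoretical statement is mapped onto an element of the situation, either in the antecedence condition or in the phenomenon; the explanation is flagged as circular when the same observation appears on both sides.

```python
from dataclasses import dataclass, field


@dataclass
class ExplanationTool:
    """Hypothetical DN-model explanation tool (illustration only).

    Keys are abstract statements of the theory; values are the elements of the
    observed situation that instantiate them (theory-evidence-coordination).
    """

    theory: str
    antecedence_condition: dict[str, str] = field(default_factory=dict)
    phenomenon: dict[str, str] = field(default_factory=dict)

    def unmapped(self, theory_statements: list[str]) -> list[str]:
        """Theory statements that have not yet been mapped onto the situation."""
        mapped = set(self.antecedence_condition) | set(self.phenomenon)
        return [s for s in theory_statements if s not in mapped]

    def is_circular(self) -> bool:
        """True if one observation serves as both antecedence condition and phenomenon."""
        causes = set(self.antecedence_condition.values())
        effects = set(self.phenomenon.values())
        return bool(causes & effects)


# Eva's example, with statements paraphrased for illustration.
eva = ExplanationTool(
    theory="Theory of cognitive dissonance (Festinger, 1957)",
    antecedence_condition={
        "Two relevant cognitions are dissonant": "Both universities are attractive to Eva",
        "A choice has to be made": "Eva has to choose between the two universities",
    },
    phenomenon={
        "Dissonance is reduced by adding consonant cognitions":
            "Eva talks more favorably about her choice",
    },
)
statements = [
    "Two relevant cognitions are dissonant",
    "A choice has to be made",
    "Dissonance is reduced by adding consonant cognitions",
]
print(eva.unmapped(statements))  # []
print(eva.is_circular())  # False
```

A complete mapping with disjoint antecedent and phenomenon elements is exactly the situation in which, as argued above, logical correctness follows from theory-evidence-coordination.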

To fulfil the final norm, the explanation should consider other relevant theories. In the case of cognitive dissonance, Heider’s (1958) balance theory is an example of a potentially relevant theory. A sound explanation should therefore explain why balance theory is less suitable than the theory of cognitive dissonance for explaining the situation.

Explanation competence and learning from worked examples

According to Klieme and Leutner (2006), competences are context-specific cognitive dispositions necessary to cope with situations and demands in specific domains. In their model of scientific competences in the social sciences, Dietrich et al. (2015) conceptualize explanation as a cognitive process in which a causal relation is constructed. Following these two lines of reasoning, we define explanation competence as a context-specific cognitive disposition that enables a person to construct a causal model (see Kim, 1994) of an observable psychological phenomenon (like cognitive dissonance) by drawing on psychological theories. Explanation competence, as defined here, can be further divided into distinct competences necessary to apply each of the norms for sound explanations outlined in the previous section. Thus, explanation competence is an overarching construct that has several facets. These facets form a set of cognitive dispositions enabling the application of the norms of good explanations.

A promising approach to foster explanation competence is learning with worked examples (hereafter WE). This approach is mainly used for initial knowledge application in well-structured domains (Renkl, 2014), where the effectiveness of WE has been frequently documented. For example, learning with WE supports knowledge acquisition in mathematics (Durkin & Rittle-Johnson, 2012). However, WE have also been shown to be effective in teaching skills in the complex and ill-structured domain of scientific argumentation (Hefter, Berthold, Renkl, Riess, Schmid & Fries, 2014; Schworm & Renkl, 2007). As explanations in psychology also belong to a complex and ill-structured domain, WE should be an appropriate tool to foster students’ explanation competence.

WE present to the learner the problem itself, the steps involved in the problem-solving process, and the solution. The learner’s task is to elaborate the WE. As the learner does not have to find the solutions themselves, they are able to focus their attention on understanding the solution and, most importantly, the problem-solving steps. The lower cognitive demands of example elaboration allow the learner to allocate more cognitive resources to the acquisition of problem-solving schemata (Sweller, 2005). Furthermore, it is standard practice to present several isomorphic WE to the learner (Renkl, 2014). The repeated presentation of isomorphic WE should support the learner’s construction of a problem-solving schema and provide practice for the automation of the problem-solving process (Renkl, 2014). Didactically, WE constitute so-called double content examples. According to Hefter et al. (2014), double content examples consist of two domains, that is, the

WE do not, however, ensure learning success per se. Using WE alone often results in a so-called illusion of understanding (Renkl, 2014). Therefore, learning with WE is usually combined with different forms of cognitive activation and additional instructional support. One such form of activation is the presentation of

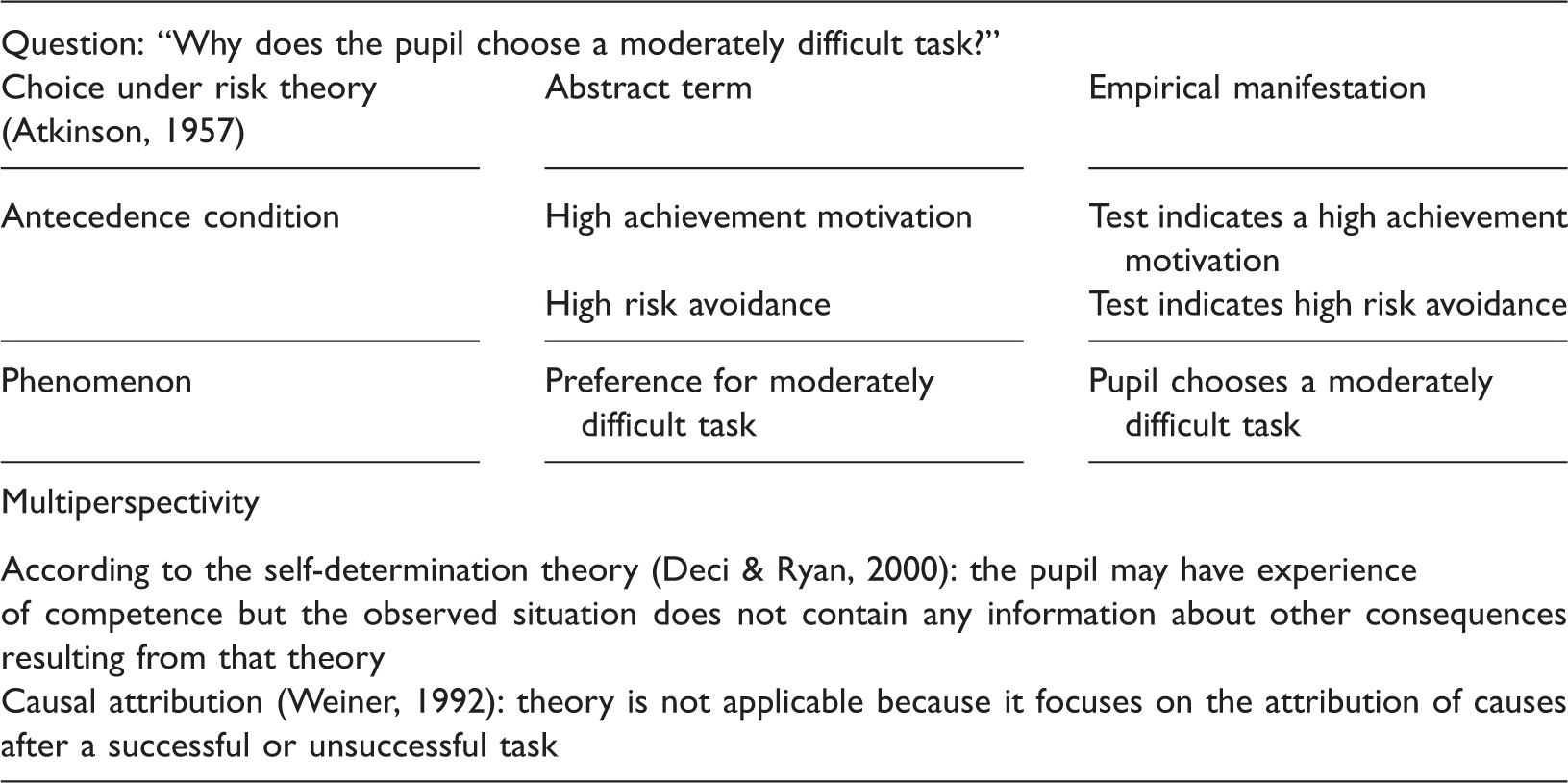

Drawing on WE principles, Hefter et al. (2014) developed a short-term training intervention to enhance argumentation competences. Their intervention featured examples of an argumentative discourse. In order to cope with low prior knowledge about argumentative discourse, the authors presented an introductory instructional text explaining the elements of such a discourse (see Schworm & Renkl, 2007). Inspired by Hefter et al.’s (2014) short-term training intervention, we set up a similar training intervention to foster psychology students’ explanation competence. As we did not expect our participants to have declarative knowledge of the basics of explanations and the respective terminology, we also presented an introductory instructional section explaining the DN model. The introduction presented information about the basic concepts and the various parts of an explanation. In the following section, WE were used to foster explanation competence. In these WE, we used the DN model to illustrate the solution steps necessary to compile a written explanation. The first solution step consists of relating the elements of the DN model to the elements of the situation and the theory. The second and final solution step requires the compilation of a written narrative form of the DN model. Furthermore, the WE were double content examples: a given situation and a suitable psychological theory were used in the exemplifying domain, whereas the DN model was used in the learning domain. In order to implement a fading procedure, we used two structurally isomorphic WE: the first WE was completely worked out, whereas in the second WE several solution steps were omitted.

Hypotheses

The goal of this study is to evaluate the effectiveness of a training intervention to foster psychology students’ declarative knowledge about the DN model and the norms as well as their explanation competence. Following the argumentation above, WE seem to be an effective tool for improving psychology students’ explanation competence and for enhancing their declarative knowledge about explanations. Because explanation competence as an overarching competence can be divided into a set of cognitive dispositions to apply the norms for scientifically sound explanations, we furthermore expect WE to have positive effects on these distinct competence facets. Thus, we assume that our training intervention will:

(a) foster psychology students’ declarative explanation knowledge; (b) foster psychology students’ explanation competence in general; and (c) foster psychology students’ competence to apply the norms for sound explanations as facets of explanation competence.

Methods

Sample size planning and sample

Sample size was planned using GPower Version 3.1.3 software (Faul, Erdfelder, Buchner & Lang, 2009). For a mixed ANOVA with two measurements in two groups and an interaction between the within and between factors, we assumed a nominal significance level of α = .05, a power of 1 − β = .95 and a moderate effect of

However, only 46 psychology students (12 male, 34 female) from a German university participated in the study. The mean age was 21.5 years (SD = 2.63) and the median semester was 3 (range 1–7). The participants received a two-hour credit for participation. Since not enough students volunteered for the study, we weighted the assignment scheme so that approximately two-thirds of the participants were randomly allocated to the experimental condition, resulting in an experimental group of 30 participants (8 male, 22 female) and a control group of 16 participants (4 male, 12 female).
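The weighted allocation can be illustrated with a short sketch. The article does not describe how the weighting was implemented, so the following assumes the simplest variant: an independent weighted coin with a probability of 2/3 per participant. The function name `allocate` and the seed are hypothetical. Under this scheme the group sizes vary between runs, so a 30/16 split is one plausible outcome rather than a fixed result.

```python
import random
from collections import Counter


def allocate(participant_ids, p_experimental=2 / 3, seed=None):
    """Weighted randomization (hypothetical sketch).

    Each participant is independently assigned to the experimental condition
    with probability p_experimental and otherwise to the control condition.
    Group sizes are therefore random, not fixed in advance.
    """
    rng = random.Random(seed)  # private generator, reproducible with a seed
    return {
        pid: "experimental" if rng.random() < p_experimental else "control"
        for pid in participant_ids
    }


# Hypothetical usage for 46 participants; the seed is arbitrary.
allocation = allocate(range(1, 47), seed=2015)
print(Counter(allocation.values()))
```

A blocked scheme (e.g., two experimental slots and one control slot per block of three, shuffled within each block) would keep the realized ratio closer to 2:1 at every point of recruitment; which variant the study used is not stated.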

The mean age in the experimental condition was 21.43 years (SD = 2.32) and the median semester was 3 (range 1–6), whereas the mean age in the control condition was 21.63 years (SD = 2.47) and the median semester was 4 (range 1–8).

Design and procedure

The study had a pre–post control group design, with training intervention (experimental vs. control condition) as the between-subjects factor and time of measurement (pre-test vs. post-test) as the within-subjects factor. The pre- and post-tests consisted of identical tests measuring declarative explanation knowledge and explanation competence. In addition, the pre-test included a demographic questionnaire. In the experimental condition, participants worked with the training intervention, whereas the participants in the control condition received a fake training intervention in which they had to evaluate arguments. Its content was unrelated to the contents of the training intervention. All materials were in a paper-and-pencil format, and the participants worked through the entire session at their own pace.

The sessions took place in a laboratory. We offered participants several possible time frames to participate from which they could choose. A student research assistant guided each session.

A session consisted of the pre-test, the training intervention and the post-test. After the participant finished either the pre-test or the training intervention, they signaled to the examiner, who then handed the next portion of the material to the participant. Standardized instructions were contained in the material, but participants were free to ask comprehension questions at any time. There was no time limit for the individual parts of a session, but we informed the participants that the overall time limit was 120 minutes. No participant reached this time limit. The pre-test consisted of a self-constructed multiple-choice test to measure declarative knowledge about explanations and an open-response task to measure the participant’s competence to compile an explanation. After the pre-test, participants received the training intervention (experimental condition) or the fake training intervention (control condition). Allocation to the training and the fake intervention was randomized for each participant according to the allocation scheme. Finally, participants received the post-test, which consisted of the same self-constructed instruments as the pre-test.

The procedure was the same for participants in the experimental and control conditions, except for the fake training intervention in the control group. The fake training intervention consisted of texts regarding the history of rhetoric. In between these texts, argument evaluation tasks (see Stanovich & West, 1997) were presented. The fake training intervention had the same number of text pages as the training intervention, and we verified in a pilot test that the reading times for the training and the fake training intervention corresponded.

Training intervention

The intervention was carried out via a booklet consisting of three parts. The first part contained an introductory text on explanations. The text provided information about the DN model, the structure of explanations, the function of scientific theories in explanations and the norms for valid explanations. The DN model was explained using a simple example analogous to the one shown in Table 1. The introduction also featured a heuristic presenting how an explanation can be constructed if the description of an observed situation is given. This heuristic was exemplified using the same example as the one used to explain the DN model. At the end of the first part, students had to answer some multiple choice (MC) items asking about the contents of the instructional part. Feedback about the solutions was provided.

The second part contained two WE, each structured as follows. First, we provided a situation to be explained as well as the theory that was used in the WE. After that, the generation of the explanation was modeled by means of a so-called explanation tool (McNeill & Krajcik, 2007). The explanation tool is a didactic aid that helps learners abstract the problem-solving steps. It is structured according to the DN model and assists the participants in contrasting the abstract theoretical terms with their situational manifestation. The structure of the explanation tool for the first WE is shown in Table 2. Thus, the explanation tool firstly helped the learner to state clearly which theory was used to explain the observed phenomenon and, secondly, to contrast the abstract elements of the theory with the elements of the observed situation.

The first WE consisted of the cognitive dissonance example from above and thus contained only one theory. Also, the first WE was completely worked out. At the beginning of the example, participants were prompted to figure out the question to be answered by the explanation. We provided feedback containing the correct response. After that, the WE presented a written form of the explanation. The first WE focused on theory-evidence-coordination and the compilation of a written explanation from the components of the explanation tool.

Explanation tool for the second worked example.

Dependent variables

Declarative explanation knowledge

“The DN model consists of the following three components:
(a) Scientific theory, the causes of the phenomenon and its consequences;
(b) Core statement of a phenomenon, abstract premise and specific premise;
(c) Scientific theory, observed phenomenon and the causes of the observed phenomenon.”

where option (c) is the correct answer (the attractor).

Participants were instructed not to guess. Answers were coded dichotomously as right or wrong. A maximum of 12 points could be attained. The participants completed the same test as both the pre-test and the post-test. Guttman’s split-half reliability was .71 for the pre-test and .60 for the post-test.
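Guttman's split-half coefficient compares the variances of the two half-test scores A and B with the variance of the total score X = A + B: 2 × [1 − (var(A) + var(B)) / var(X)]. The minimal sketch below assumes the items are split into a first and a second half; the article does not state which split was used, and the function name `guttman_split_half` and the response data are hypothetical. When both halves have equal variances, the coefficient coincides with the Spearman–Brown corrected split-half correlation.

```python
from statistics import variance


def guttman_split_half(scores, half_a=None):
    """Guttman's split-half coefficient for a persons x items score matrix.

    2 * (1 - (var(A) + var(B)) / var(A + B)), where A and B are the sum scores
    on the two test halves. By default (an assumption), part A holds the first
    half of the items and part B the remaining items.
    """
    n_items = len(scores[0])
    part_a = set(range(n_items // 2) if half_a is None else half_a)
    a = [sum(row[i] for i in part_a) for row in scores]
    b = [sum(row[i] for i in range(n_items) if i not in part_a) for row in scores]
    total = [x + y for x, y in zip(a, b)]
    return 2 * (1 - (variance(a) + variance(b)) / variance(total))


# Hypothetical right/wrong (1/0) responses of five persons to four items.
responses = [
    [1, 0, 1, 1],
    [1, 1, 1, 0],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
print(round(guttman_split_half(responses), 2))  # 0.74
```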

Explanation competence

The participants’ ability to explain a real-world situation using psychological theories was measured with a self-constructed open-response task. The same task was used in both the pre- and post-tests. The task contained a description of a pupil showing physiological symptoms of test anxiety and a decline in academic performance in mathematics. The physiological symptoms were shivering and illness before a math exam. The decline in academic performance consisted of a series of bad grades in successive math exams. The situation also described the pupil’s family background: a father who had high expectations for his son’s math performance and exerted pressure on him to achieve good math grades. The pupil was characterized as having been a very shy and anxious toddler. The description was 534 words long. Furthermore, we provided a summary of theories of test anxiety (e.g., Zeidner, 1998; 232 words), summaries of two theories that could not explain the situation (causal attribution [Weiner, 1992] and social learning theory [e.g., Bandura, 1971]), and a summary of a theory that could only explain part of the situation (the transactional model [e.g., Sameroff & Chandler, 1975]); the descriptions of these three alternative theories totaled 137 words. Students were instructed to write down their explanation of the situation using the given theories and to write in complete sentences. In the post-test, participants were additionally instructed to apply the knowledge about explanations that they had learned in the training intervention.

To assess explanation competence, we rated the presence of the explanation norms in the participants’ writings. In order to write an adequate explanation, students should explicitly mention the theory of test anxiety, provide a logically correct deduction from the explanans to the explanandum and elaborate on the theory-evidence-coordination. First, we rated whether the explanation mentioned the theory (

Second, we rated whether the explanation contrasted the abstract elements of the theory with their manifestation in the observed situation (

The norm of logical correctness depends on theory-evidence-coordination: if the elements of the observed situation are correctly related to the elements of the theory, the explanation is automatically logically correct. Thus, the participants had to recognize that the described family background constitutes the antecedence condition, whereas the physiological symptoms and the decline in academic performance follow from the antecedence condition when considered in conjunction with the theory of test anxiety. Therefore, there was no separate variable for logical correctness.

Third, we rated the multiperspectivity of the explanation. To fulfil the criterion of multiperspectivity, the explanation was required to exclude the theory of causal attribution as well as social learning theory. Furthermore, the explanation must acknowledge that the transactional model explains only a part of the situation. Thus, multiperspectivity splits into three parts:

Finally, in order to get an overall measure for explanation competence we calculated the sum score of all facets of explanation competence. Consequently, the dependent variable
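The total score can be sketched as a plain sum of facet codes. The facet names below are hypothetical labels for the facets described in this section (mentioning the theory, theory-evidence-coordination, and the three parts of multiperspectivity), and scoring each facet 0/1 is an assumption; the actual coding criteria are given in Appendix 2 (Table 8). In line with the rating procedure, logical correctness receives no separate code.

```python
# Hypothetical facet labels; the real coding criteria are in Appendix 2 (Table 8).
FACETS = (
    "theory_mentioned",
    "theory_evidence_coordination",
    "excludes_causal_attribution",      # multiperspectivity, part 1
    "excludes_social_learning_theory",  # multiperspectivity, part 2
    "transactional_model_partial",      # multiperspectivity, part 3
)


def total_score(codes):
    """Sum the facet codes of one explanation (no separate logical-correctness code)."""
    missing = [facet for facet in FACETS if facet not in codes]
    if missing:
        raise ValueError(f"missing facet codes: {missing}")
    return sum(codes[facet] for facet in FACETS)


def multiperspectivity(codes):
    """Sum of the three multiperspectivity parts."""
    return sum(codes[facet] for facet in FACETS[2:])


# Hypothetical codes for one explanation (1 = criterion met, assumed 0/1 scale).
example = {
    "theory_mentioned": 1,
    "theory_evidence_coordination": 0,
    "excludes_causal_attribution": 1,
    "excludes_social_learning_theory": 0,
    "transactional_model_partial": 1,
}
print(total_score(example), multiperspectivity(example))  # 3 2
```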

The rating procedure was as follows. Two independent raters (the first author and a student assistant holding a bachelor’s degree in psychology) coded seven randomly drawn explanations from the pre- and post-tests in three rounds. We used Cohen’s κ as the measure of inter-rater agreement. In the first round, the two raters coded the explanations and afterwards discussed their ratings. Cases of disagreement between the raters were discussed, which led to an adaptation of the coding scheme after the first round. In a second round, the same explanations were rated again and the codings compared once more. Again, cases of disagreement were discussed. Afterwards, we conducted a third coding round with the same explanations. As the inter-rater agreement was sufficiently high, the second rater coded the remaining explanations. For
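Cohen's κ corrects the observed proportion of agreement p_o for the agreement p_e expected by chance from the two raters' marginal frequencies: κ = (p_o − p_e) / (1 − p_e). The following minimal sketch computes it for two raters; the function name and the seven codes are invented for illustration and do not reproduce the study's ratings.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters coding the same cases into nominal categories."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same, non-empty set of cases")
    n = len(rater_a)
    # Observed agreement: share of cases coded identically by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over categories.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes (1 = criterion met) of two raters for seven explanations.
rater_1 = [1, 1, 0, 1, 0, 0, 1]
rater_2 = [1, 1, 0, 1, 0, 1, 1]
print(round(cohens_kappa(rater_1, rater_2), 2))  # 0.7
```

In this example the raters agree on six of seven cases (p_o ≈ .86), but because both code "1" frequently, chance agreement is already about .53, which pulls κ down to about .70.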

For further information about the assessment of explanation competence, Appendix 2 contains a table (Table 8) with an overview of the coding criteria as well as an explanation tool featuring the core elements of the required explanation.

Statistical analyses

We used SPSS Version 20 (IBM Corporation, 2011) for all statistical analyses and applied the following analysis strategy. For every dependent variable we conducted a mixed ANOVA with pre- and post-tests as within-factor and the intervention (experimental vs. control condition) as between-factor. Afterwards, we calculated comparisons between the pre- and post-tests in each condition by means of a pairwise
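The text breaks off at this point. Assuming the pairwise pre–post comparisons within each condition were paired-samples t tests, the test statistic and a within-subject effect size can be sketched as follows. The function `paired_comparison` and the scores are hypothetical, and d_z (mean change divided by the standard deviation of the changes) is only one of several effect size conventions; the article does not say which one was reported. A p value would additionally require the t distribution with n − 1 degrees of freedom, which is not part of Python's standard library.

```python
from math import sqrt
from statistics import mean, stdev


def paired_comparison(pre, post):
    """Paired-samples t statistic, degrees of freedom and Cohen's d_z
    for pre-test vs. post-test scores of the same participants."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff, sd_diff = mean(diffs), stdev(diffs)
    t = mean_diff / (sd_diff / sqrt(n))  # mean change over its standard error
    d_z = mean_diff / sd_diff  # standardized mean of the change scores
    return t, n - 1, d_z


# Hypothetical pre- and post-test scores of five participants.
t, df, d_z = paired_comparison([3, 4, 2, 5, 4], [6, 7, 5, 7, 6])
print(f"t({df}) = {t:.2f}, d_z = {d_z:.2f}")  # t(4) = 10.61, d_z = 4.75
```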

Results

Internal validity

Experimental and control condition did not differ significantly in the distribution of sex, χ2(1) = 0.02, n.s.; nor in the mean semester,
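The reported test on the distribution of sex can be checked from the group counts given in the Sample section (experimental: 8 male, 22 female; control: 4 male, 12 female). The sketch below computes the uncorrected Pearson χ² for a contingency table; the function name is ours. The result, about 0.015, is consistent with the reported χ2(1) = 0.02 after rounding to two decimals.

```python
def pearson_chi2(table):
    """Uncorrected Pearson chi-square statistic and df for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # expected count under independence
            chi2 += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(col_totals) - 1)
    return chi2, df


# Rows: experimental, control; columns: male, female.
chi2, df = pearson_chi2([[8, 22], [4, 12]])
print(f"chi2({df}) = {chi2:.3f}")  # chi2(1) = 0.015
```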

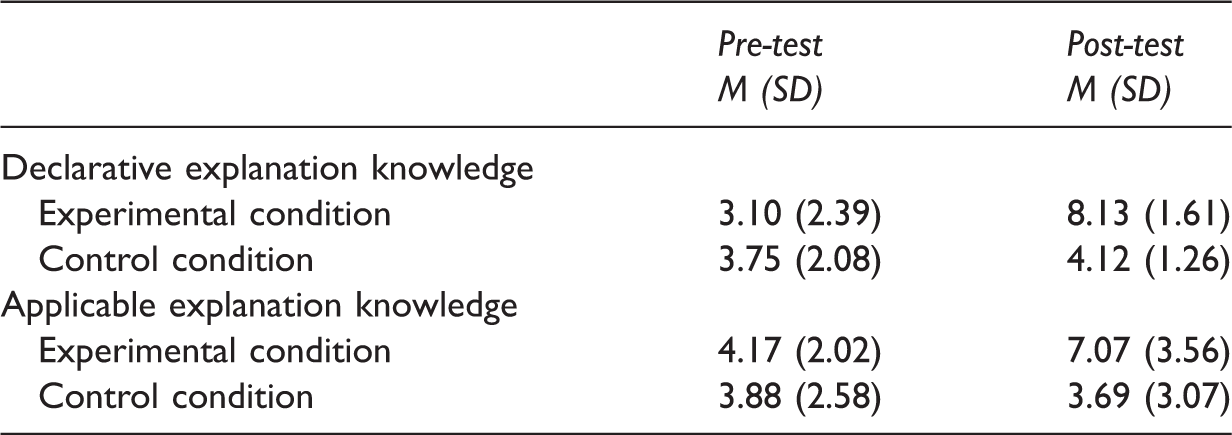

Declarative explanation knowledge

Means and standard deviations for declarative explanation knowledge and explanation competence total score in the pre- and post-tests.

Explanation competence

A similar picture emerged with respect to

Facets of explanation competence

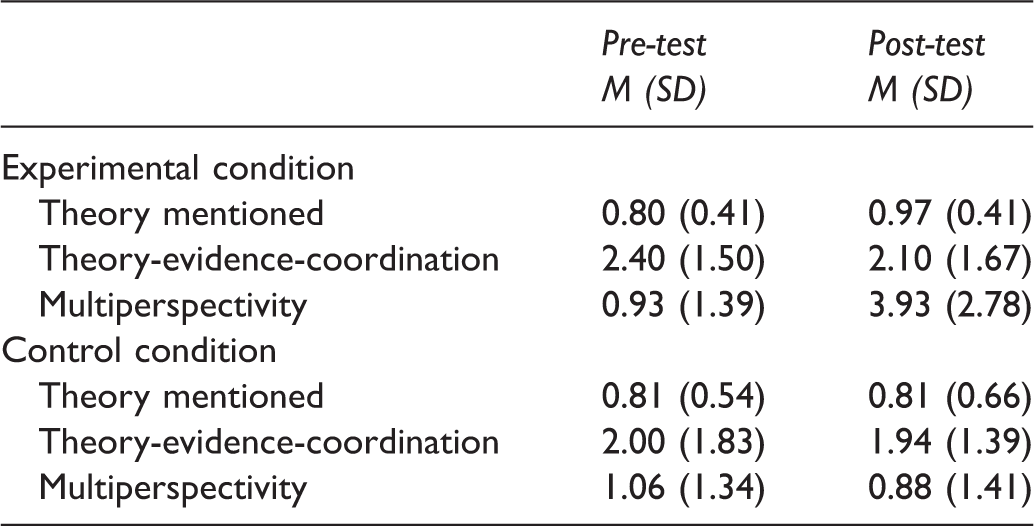

Means and standard deviations for the facets of explanation competence in the pre- and post-tests.

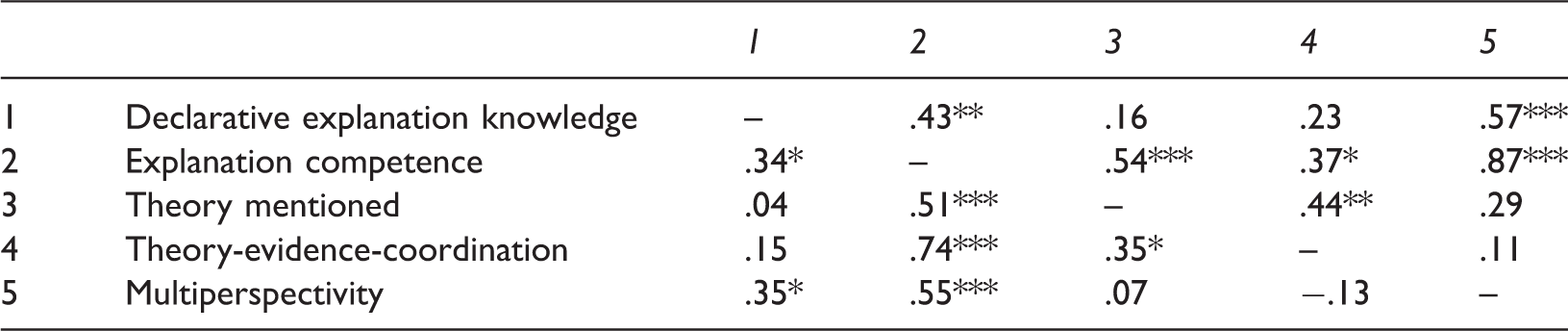

Correlations between declarative explanation knowledge, explanation competence total score and explanation facets.

The correlations for the pre-test are below the main diagonal, and the correlations for the post-test are above (**

A similar picture emerged for

For

Discussion

Overall, the results demonstrate that the training intervention fostered, in particular, the participants’ declarative explanation knowledge, but only parts of their explanation competence. Regarding declarative explanation knowledge, the pre-test results support our assumption that psychology students do not have declarative knowledge about scientific explanations. The strong effects in the experimental condition show that our training intervention effectively enhanced the participants’ declarative knowledge of scientific explanations. The same picture emerged for the overall score for explanation competence: there was a strong effect in the experimental condition, providing evidence for the effectiveness of our training intervention.

At the facet level of explanation competence, however, a positive effect only emerged for

Another possible explanation of this finding lies in how the participants understood the task demands. Instead of changing their naïve concept of explanation to a scientific one, the participants may have interpreted the demands of the explanation task according to the requirements of explanations presented in the WE. In this case, there is no conceptual change (see Lombrozo, 2012) from a naïve, everyday concept of explanation to a scientific one; the participants merely fulfilled the task requirements. This view is supported by the descriptive statistics in Table 5, indicating a shift from the application of

One more possibility besides simply fulfilling task requirements is that the participants kept their naïve concept and only

This interpretation, again, assumes that students associated the scientific concepts with the norm of

A striking result on the facet level is the absence of an effect on

This situation is analogous to the development of expertise in the domain of medical diagnosis (Charlin, Boshuizen, Custers & Feltovich, 2007). In medical diagnosis, novices learn various symptoms of illness. When trying to make a diagnosis, a novice simply compares a patient’s symptoms with the list of symptoms typical for some illnesses. During the development of expertise, the knowledge of typical illness symptoms is incorporated into networks, a process known as knowledge encapsulation (Rikers, Schmidt & Boshuizen, 2000). Through working with patients, physicians develop so-called “illness scripts,” which contain relevant information regarding the illness symptoms and their possible manifestation in real cases. These illness scripts therefore facilitate the diagnostic process. An analogous development may apply to the acquisition of expertise in the application of psychological theories. In working with psychological theories, students acquire knowledge about what a theory “looks like in practice.” So, in the development of expertise, the students’ content knowledge of psychological theories is enriched with knowledge on how the abstract theoretical terms may manifest in specific situations. As indicated by the pre-test data, the participants were novices regarding the application of psychological theories (Birke et al., 2016), as most of them were in the first half of their undergraduate studies. This could explain why the participants in our training intervention failed to acquire adequate theory-evidence-coordination skills.

This perspective has two important consequences. First, from an instructional point of view, if the goal is to foster students’ explanation competence, it seems necessary not only to provide worked examples demonstrating the compilation of an explanation, but also to provide examples of how psychological theories are applied in practice. Second, from a cognitive point of view, more fine-grained analyses of the various kinds of knowledge involved in explanation competence are necessary. For example, the types of knowledge involved in explanations could be analyzed with regard to Anderson et al.’s (2001) knowledge taxonomy. Such an analysis would allow specific instructional measures to be derived that support the acquisition of the various types of knowledge presumably involved in explanation competence.

Besides such an explanation drawing on expertise in the use of psychological theories, it is also possible that our training intervention did not effectively foster the competence to express a generated explanation in written form. In the training intervention, theory-evidence-coordination was always demonstrated by means of the explanation tool. The transition from the explanation tool to a written explanation was not, however, instructionally supported. Thus, the participants may have been able to construct a causal model of the phenomenon drawing on a psychological theory but unable to express this model in the form of a written explanation. As explanation competence was assessed solely by means of a written explanation, the assessment does not allow us to separate the competence to construct such a model from the ability to verbally express it.

Lastly, another potential explanation of the missing effect on theory-evidence-coordination is that two WE might simply not be enough to automatize the steps required in theory-evidence-coordination, and the participants might not have received enough training to coordinate theory with evidence without the use of the explanation tool.

Since we do not have data on the learning process itself, however, further studies should supplement data on learning outcomes with process data. Furthermore, a more fine-grained assessment of explanation competence is needed.

Limitations

One of the major limitations of the present study is the use of the same tests for declarative explanation knowledge and explanation competence in the pre- and post-tests. Although this ensures, at least superficially, the equivalence and thus the comparability of the pre- and post-tests, it also limits the generalizability of the results. This is less problematic for declarative explanation knowledge, but more so for explanation competence. The current results provide no evidence that the participants can transfer the acquired explanation competence to novel situations.

Another possible limitation is that the participants were simply trained to the task. Since the explanation task in the pre- and post-tests differed from the task in the worked examples, however, such a training process seems unlikely. Besides, if teaching-to-the-task had happened, it seems plausible that all facets of explanation competence would have been affected and not only

A third limitation refers to the

Conclusions

The results indicate that it is possible to foster declarative knowledge about explanation and explanation competence with our training intervention. More research is needed concerning the number of necessary WE and the role of content knowledge of psychological theories for explanation competence. Besides fading, further research could investigate whether instructional explanations are also helpful in fostering explanation competence.

From a didactic point of view, our results offer hints about how to implement the concept of explanation competence in psychology teaching. Our results suggest providing declarative knowledge about the scientific concept of explanation before the compilation of an explanation is demonstrated. Instructors should first use structural aids like the explanation tool to demonstrate how explanations of psychological phenomena are constructed. Furthermore, to ensure correct theory-evidence-coordination, instructors should also emphasize content knowledge and its relation to the observed situation, and use an adequate number of examples to ensure that students acquire the necessary solution steps. Fading procedures are also easily implemented in classroom settings; the fading used in our training intervention, for example, could be realized by means of classroom questions or exercises.

Lastly, we want to emphasize that explanation competence is not only an important construct with regard to psychology as a science and scientific competences in psychology. Constructing explanations is also a vital part of applied psychology, for example, when presenting expert opinions in court cases. Thus, the instructional possibilities for fostering the explanation competence of psychology students should be studied in more detail.

Footnotes

Acknowledgements

We want to thank Martin Klein and Stephanie Knoll for their support in the preparation of the manuscript and Neele Oetjen for her support in coding the explanation task.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.