Abstract

This study examined the effectiveness of a study skills training session offered at midterm to students enrolled in a large section of Introductory Psychology. In the training session, students watched a series of five short videos on effective learning and answered related clicker questions that encouraged them to reflect on their own study strategies and beliefs about learning. Students across all levels of course performance rated the training as helpful and effective. Although there were no differences in subsequent study time and metacognitive skills between students who attended the training and those who did not, students who attended the training gained insight into some of the limitations of their own past study strategies. Additionally, although students who chose to attend the training had lower scores on exams taken prior to training, the difference between those groups disappeared for exams that followed training. Embedding study skills training into an existing course at a point in the semester when students are highly motivated to change and can meaningfully reflect on their own past course performance appears to be a useful pedagogical strategy for introductory-level students.

Introduction

The importance of study skills and habits to academic performance is well-documented (e.g., Hassanbeigi et al., 2011; Marrs, Sigler, & Hayes, 2009; Prevatt, Petscher, Proctor, Hurst, & Adams, 2006). According to a large meta-analytical study by Crède and Kuncel (2008), the study skills students bring with them to college are just as predictive of academic achievement as are high school grade-point average and SAT scores. Unfortunately, many incoming college students lack the study skills needed for success in college-level courses. Retention of studied material is best when students engage in elaborative study techniques that promote effective encoding (Craik & Tulving, 1975); however, many of the study techniques most commonly used by college students (e.g., rewriting notes and reviewing highlighted material) involve shallow processing of course material and are not predictive of successful performance in college courses (Gurung, 2005; Gurung, Daniel, & Landrum, 2012). Thus, though many first-year college students take their first exams with high expectations for success (Twenge & Campbell, 2008), many are surprised and disappointed to realize that the study strategies that led to high grades in high school are largely ineffective in college.

In order to help students develop more effective study strategies and habits, numerous colleges and universities offer basic study skills courses for first-year students. Research on the effectiveness of such courses has shown that study skills training can positively influence students’ beliefs and attitudes regarding learning, including their interest in college achievement (Urciuoli & Bluestone, 2013) and academic self-efficacy (Wernersbach, Crowley, Bates, & Rosenthal, 2014). Study skills courses and programs have also been shown to improve academic performance and student success rates. For example, Arnott and Dust (2012) found that study skills training can improve performance by helping to mitigate negative effects of harmful study practices (i.e., massed rather than spaced practice). Additionally, Jordan, Parker, Li, and Onwuegbuzie (2015) found participation in a six-week study skills program to be associated with gains in academic performance, as measured by improvement in grade point average. They also found participation in the program to be associated with improved graduation rates, though the effect size of this finding was very small. Jordan et al. (2015) failed to find effects on retention; however, other studies have found study skills training to positively influence retention rates, particularly among students deemed to be most at risk for dropping out (Braunstein, Lesser, & Pestracice, 2008; Polansky, Horan, & Hanish, 1993). While study skills training is typically offered in a seated, face-to-face format, it can also be effective when offered online (Prygmachuk, Gill, Wood, Olleveant, & Keeley, 2012) and when targeted at very specific academic skills, like avoiding plagiarism (Newton, Wright, & Newton, 2014).

Some types of study skills training have been shown to be more effective than others. In particular, results of Hattie, Biggs, and Purdie’s (1996) meta-analysis show that study skills training works best when highly contextualized and integrated into an existing course. Many study skills training programs are presented in a “bolt-on” fashion and are thus independent of students’ other academic courses. With this decontextualized approach to study skills training, students may fail to see the relevance of the training to their own, specific coursework. Moreover, the training cannot provide students with opportunities to self-reflect on their own course performance and to then develop specific strategies to improve (Wingate, 2006). By embedding study skills training into an existing introductory-level course, instructors can present first-year college students with effective alternatives to their current study strategies, and students have opportunities to consider and practice new strategies as they relate to the demands of a specific course.

An additional benefit of embedded study skills training, particularly if it is presented after students have already received feedback on their course performance, is that it can enhance students’ metacognitive skills. Metacognition can be defined as one’s knowledge of one’s own cognitive processes (Flavell, 1976). While there are exceptions in the literature (e.g., Sperling, Howard, Staley, & Dubois, 2004), metacognition has generally been shown to be predictive of academic performance (Hartwig, Was, Isaacson, & Dunlosky, 2012; Sperling, Richmond, Ramsay, & Klapp, 2012; Young & Fry, 2012). Schraw and Dennison (1994) posit two components of metacognition: one’s knowledge of cognition and one’s ability to regulate cognition. One of the assumptions of self-regulated learning models is that as students work to reach a learning goal, they must monitor their own progress and then regulate and adjust their cognition, motivation, and behavior in order to reach that goal (Pintrich, 2004). To do this, students must have an accurate grasp of the boundaries of their understanding of course material so they can then gauge the amount of study time they need and select effective study techniques to master the material. Students with poor metacognitive skills lack this grasp and tend to overestimate their knowledge of course material. Consequently, these students often stop studying prematurely and underprepare for exams. An embedded study skills training that encourages students to self-reflect on the specific study strategies they have used in the past for that particular course, consider the effectiveness of those strategies, and plan future approaches to learning can strengthen these important metacognitive skills (Ambrose, Bridges, DiPietro, Lovett, & Norman, 2010).

The current study examines the effectiveness of a study skills training session, embedded at midterm into a large section of an Introductory Psychology course. During the training session, students watched a series of five short videos on effective studying (Chew, 2011). These videos present cognitive principles of learning and how they apply to study techniques such as note-taking and text highlighting, and they challenge common misperceptions many students hold about learning (e.g., learning is fast, and intelligence is a fixed, rather than a fluid ability). Before and after each video, students answered a number of clicker questions that required them to self-reflect about their own prior performance in the course, their current study habits and strategies, and their beliefs about learning. While it would be possible for the instructor to deliver the same content presented in the videos (see Chew, 2010 for lecture suggestions), we chose to use the videos as the basis for the training class, as we have found that the videos hold students’ attention well and serve as effective springboards for class discussion.

The class instructor invited all students to attend the training session but placed special emphasis on recruiting lower-performing students for two main reasons. First, previous research has shown that improvements related to study skills training are greatest among students who enter the training more academically disadvantaged (Kennett & Reed, 2009; Urciuoli & Bluestone, 2013). Second, because the study skills training session was optional, we recognized the possibility that the students we believed to be most in need of training (i.e., those with lower scores at midterm) would be least likely to attend. Research has shown that students who are struggling in a course are less likely than are those who are doing well to take advantage of optional opportunities to receive additional help outside of class (Jensen & Moore, 2009; Moore, 2008). In order to enhance the likelihood that students presumed to be most in need of the training would attend, we sent emails to all students in the class encouraging them to attend the training session. We personalized those invitations based on students’ grades at midterm and most strongly encouraged students with midterm grades of ‘D’ or ‘F’ to attend. Deslauriers, Harris, Lane, and Wieman (2012) found that sending personalized emails to low-performing students at midterm, inviting them to visit with the instructor to discuss study skills, led the majority of invited students to attend the meeting.

We believed that making the study skills training session an optional, rather than required, component of the course might also make students more likely to alter their attitudes and behaviors related to academic work. It is possible that the very act of choosing to attend an optional study skills class might lead students to subsequently think of themselves differently, and this change in thinking might lead to behavior change. Both self-perception theory (Bem, 1972) and cognitive dissonance theory (Festinger, 1957) posit that engaging in behaviors, particularly when they are voluntary, self-selected behaviors, leads individuals to adopt beliefs about themselves that are congruent with those behaviors. Individuals then feel the need to act in ways that are consistent with those newly-formed self-beliefs (Freedman & Fraser, 1966). Thus, a student who chooses to attend an optional study skills training class might consequently have an enhanced belief that he or she is the kind of student who cares about academic excellence, and this change in self-belief might then lead the student to spend more time studying.

We predicted differences between students who attended versus those who opted not to attend the study skills training session. Those predicted differences included the following:

1. Students presumed to be most in need of study skills training (i.e., students with lower exam scores at midterm) would be more likely to attend the training session than would those with higher exam scores.
2. Students who attended the training would report spending more time studying for the next unit exam than would those who did not attend.
3. Students who attended the training would show greater metacognitive awareness by being more accurate in their estimates of their own performance on the next exam than would those who did not attend.
4. Students who attended the training would show greater evidence of improved exam performance on subsequent course exams compared to classmates who did not attend.

We also wanted to assess the impact of the self-reflective interventions during the study skills class to determine if students were engaged during the discussion, and if students perceived the study skills class to be effective and helpful. Therefore, we made the following additional predictions:

5. Students would demonstrate increased awareness of their own strengths and weaknesses related to study skills and exam preparation.
6. Students would rate the study skills training class as helpful and effective, both immediately after the training and at the end of the semester.

Method

Participants

We received Institutional Review Board approval for this study. Three hundred and four students enrolled in one section of Introductory Psychology at a mid-sized Midwestern state university served as participants for this study, and all were invited at midterm to attend an optional, extra credit class on study skills. The course was offered in a blended (hybrid) format, so although the course was officially listed as having Tuesday and Thursday meeting times, class typically met only on Tuesdays, leaving students free during class time on Thursdays to complete their online work. Thus, both the classroom and students’ schedules were free during the optional study skills class, which was scheduled on a Thursday during the allotted class time.

All students had the opportunity to earn up to 30 extra credit points in the class (out of 1,000 points possible for the semester). Students who attended the optional study skills class received 10 of the 30 possible extra credit points. One hundred and seventy-three students (57% of the class) attended the optional class.

Materials and Procedure

Email reminders

The date of the optional study skills training class appeared on the course syllabus at the start of the semester, and the instructor sent email reminders to all students the day before the study skills class encouraging them to attend. In order to reinforce the message that the optional class would be beneficial to all students, and in order to even more strongly encourage students with low midterm grades to attend, the instructor sent each student one of four different email invitations. The version of the invitation email students received depended on their letter grade at midterm (i.e., one version was for students with a midterm grade of ‘A’, one was for students with a ‘B’, one was for students with a ‘C’, and one was for students with a ‘D’ or ‘F’).

Study skills videos

We prepared the optional class around the video series, “How to Get the Most out of Studying” (Chew, 2011). The series consists of five videos, all approximately 7–8 minutes in length. The first video, Beliefs That Make You Fail…Or Succeed, presents common false beliefs many students hold about learning (e.g., learning is fast, people are either good or bad at certain subjects, students can multitask while studying without impeding learning). The video then describes how these commonly-held beliefs can undermine learning and it corrects those beliefs. The second video, What Students Should Understand About How People Learn, presents the levels of processing theory of memory, gives examples of shallow and deep study techniques, and explains how deep processing of course material leads to better retention. It also discusses the relationship between students’ metacognitive abilities and their study habits and course performance. The third video, Cognitive Principles for Optimizing Learning, presents specific ways in which students can process course information at a deeper level and focuses on the importance of overlearning. The fourth video, Putting the Principles for Optimizing Learning into Practice, presents highlighting and note-taking strategies designed to encourage deep processing and describes the importance of generating questions, making concept maps, and practicing recall while studying. The final video, I Blew the Exam, Now What?, provides tips for students who perform poorly on an exam, such as reflecting on preparation methods and developing a plan for future study. We presented the five videos in the same order they are presented within the video series.

Timing of training

The study skills training class took place during the ninth week of the 16-week semester, which was the week students received their midterm grades. We presented the optional training session at midterm for two reasons. First, students had already covered course material related to memory and cognition, and they could therefore apply knowledge of that course content to their consideration of effective learning. Second, presenting the training at midterm meant students had received feedback on their academic performance in the course in the form of two exam scores and online assignments covering six chapters from the text. Thus, we were able to encourage students to reflect on the effectiveness of their past study behaviors while considering the new approaches to learning presented in the training.

Self-reflective questions

Table 1. Descriptive Statistics for the Self-Reflective Clicker Questions Administered during the Study Skills Training Session

Note: Responses made on a 7-point scale of (1) strongly disagree to (7) strongly agree.

Dependent measures

Students completed a total of four unit exams over the course of the semester. Each exam included 60 multiple-choice questions covering three chapters from the text, and each was worth 120 points out of 1,000 points possible for the semester. Students completed the first two unit exams prior to the midterm study skills training. As we did not randomly assign students to condition, we used scores on the first two unit exams to control for students’ prior course performance when comparing those who attended the study skills class with those who did not. In the weeks following the study skills training class, students completed two additional unit exams. We used scores on these two exams as our main measures of academic performance in the course.

Immediately after the third unit exam, which occurred two weeks after the training session, all students completed a short questionnaire. Students first estimated the number of hours they spent preparing for the exam by selecting one of eleven response options ranging from “under an hour” to “more than 10 hours.” Students then estimated the percentage of questions they believed they had answered correctly on the exam they had just completed (from 0% to 100%). Global monitoring accuracy, which is the difference between students’ prediction of their exam performance and their actual performance, is a commonly used measure of metacognition (Nietfeld, Cao, & Osborne, 2005). We used students’ global monitoring accuracy (estimated percentage correct minus actual percentage correct) as our measure of metacognitive awareness.
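
As a minimal sketch of how this measure can be computed (the function names and the example student are illustrative assumptions, not data from the study), the signed difference indicates over- or underconfidence, while its absolute value gives the size of the estimation error regardless of direction:

```python
def monitoring_accuracy(estimated_pct: float, actual_pct: float) -> float:
    """Signed global monitoring accuracy: estimated minus actual percent correct.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    return estimated_pct - actual_pct


def absolute_monitoring_error(estimated_pct: float, actual_pct: float) -> float:
    """Unsigned size of the estimation error, ignoring its direction."""
    return abs(monitoring_accuracy(estimated_pct, actual_pct))


# Hypothetical student: predicted 85% correct, actually answered 45 of 60 items.
actual = 45 / 60 * 100
signed = monitoring_accuracy(85, actual)          # positive: overconfident
unsigned = absolute_monitoring_error(85, actual)  # magnitude of the error
print(signed, unsigned)
```

A score near zero on the unsigned measure reflects an accurate estimate; larger values reflect poorer calibration in either direction.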

We assessed students’ perceptions of the effectiveness of the study skills training at two points during the semester. First, students answered a final clicker question during the study skills training class that asked them to rate their level of agreement with the statement, “Today’s class was helpful” on a scale from (1) strongly disagree to (7) strongly agree. Second, during the last class of the semester, we asked students to rate the effectiveness of a number of components of the course, including the study skills training class. Students first indicated whether or not they had attended the optional study skills training class, and those who indicated that they had attended then rated its effectiveness on a scale from (1) extremely ineffective to (7) extremely effective.

Results

A total of 287 (94.4%) students enrolled in the course had complete data for all four exams at the end of the semester and were included in the analyses. Of these students, 173 (60%) attended the study skills training class, and 114 (40%) did not. Table 1 presents descriptive statistics for clicker questions that students answered before and after viewing specific videos. Data are shown in the same order in which students viewed the videos and answered the clicker questions.

Data supported hypothesis 1, such that students with lower exam scores at midterm (mean (M) = 182.59, standard deviation (SD) = 25.38) were more likely to attend the training than were those with higher exam scores (M = 190.18, SD = 26.22), t(285) = 2.45, p = 0.015, d = 0.29, 95% confidence interval (CI) [−13.70, −1.49]. However, it should be noted that more than half the class attended the study skills training, indicating that it was not only lower-performing students who attended.
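
The comparison above can be approximately reproduced from the reported summary statistics alone. The sketch below assumes a pooled-variance (Student's) independent-samples t test and assumes that the reported means correspond to the 173 attendees and 114 non-attendees; the function name is illustrative, and the authors' actual analysis software and options are not stated.

```python
import math


def pooled_t_and_d(m1, sd1, n1, m2, sd2, n2):
    """Student's t and Cohen's d for two independent groups, from summary stats."""
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    se_diff = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    t = (m1 - m2) / se_diff
    d = (m1 - m2) / pooled_sd
    return t, d, df


# Non-attendees (assumed n = 114) versus attendees (assumed n = 173).
t, d, df = pooled_t_and_d(190.18, 26.22, 114, 182.59, 25.38, 173)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")
```

Under these assumptions the sketch gives a t value of about 2.45 on 285 degrees of freedom and an effect size close to the reported d; small discrepancies are expected because the inputs are rounded.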

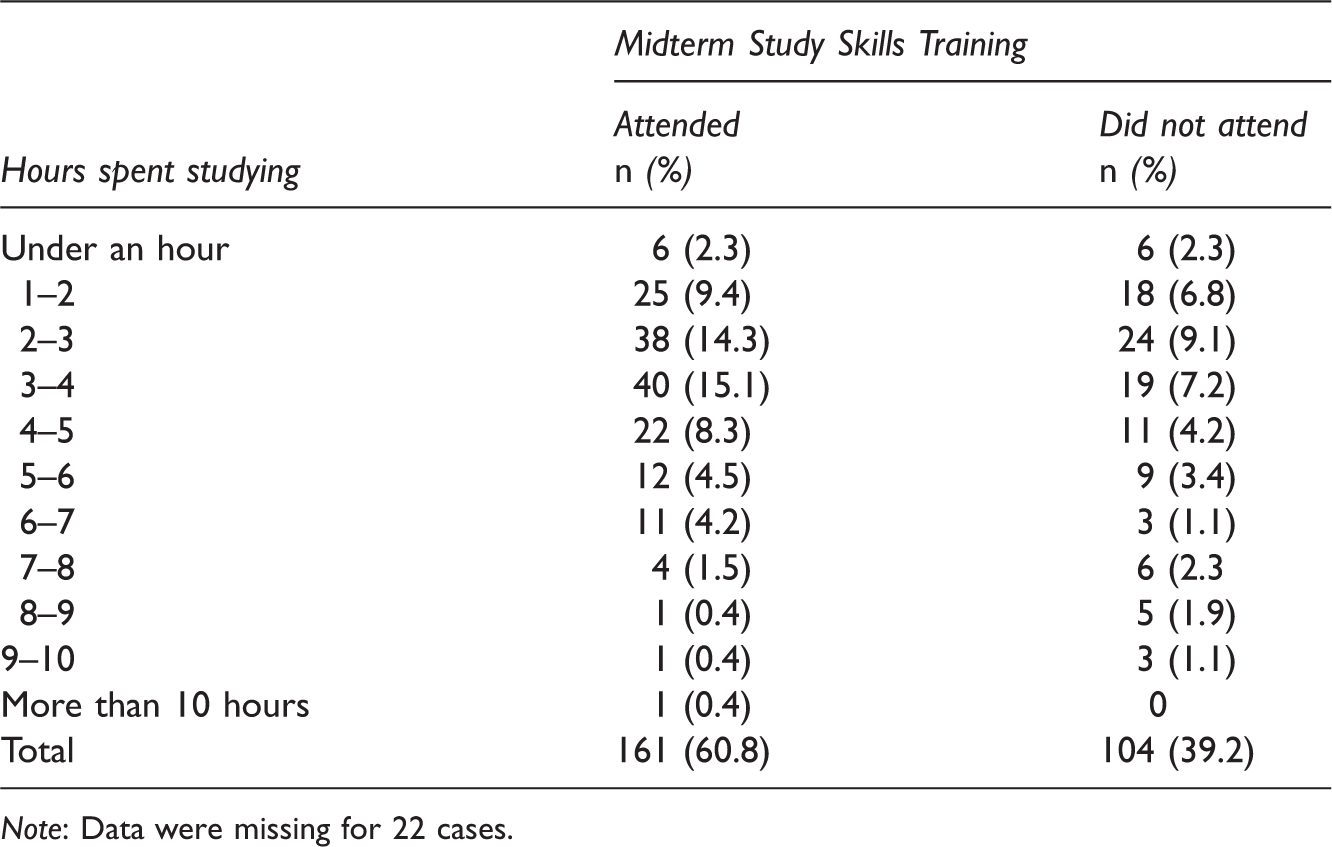

Table 2. Self-Reported Hours Spent Studying for Exam 3 by Study Skills Training Attendance

Note: Data were missing for 22 cases.

We also predicted that students who attended the training would show greater metacognitive awareness by being more accurate in estimating their own performance on the next exam (exam 3). We used the absolute value of the difference score calculated as students’ estimate of the percent correct on the exam minus students’ actual percent correct on the exam. There was no significant difference between those who attended the study skills session and those who did not attend in the accuracy of their performance estimates for the third exam, t(235) = 1.76, p = 0.084, d = 0.24, 95% CI of the difference [−0.24, 3.73]. Although the difference between the two groups was not statistically significant, students who did attend the training were less accurate in their estimate (M = 9.45, SD = 8.24, 95% CI [8.09, 10.82]) compared to students who did not attend the training (M = 7.71, SD = 6.45, 95% CI [6.39, 9.03]). Therefore, contrary to hypothesis 3, students who attended the study skills training were not more accurate in predicting exam scores compared to the students who did not attend the training.

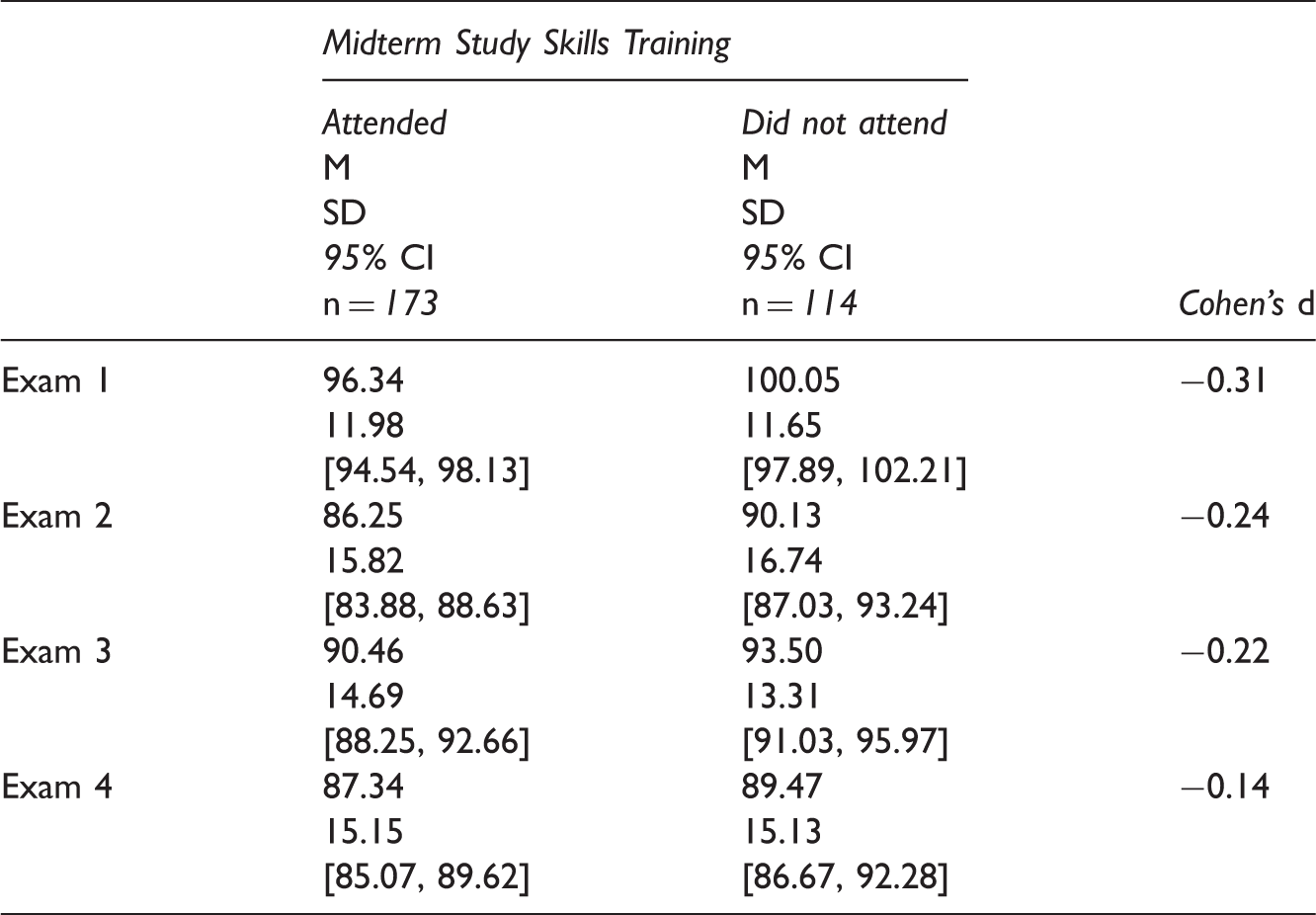

Table 3. Means (Ms), Standard Deviations (SDs), Confidence Intervals (CIs), and Effect Sizes for the Four Unit Exams by Study Skills Training Attendance Group

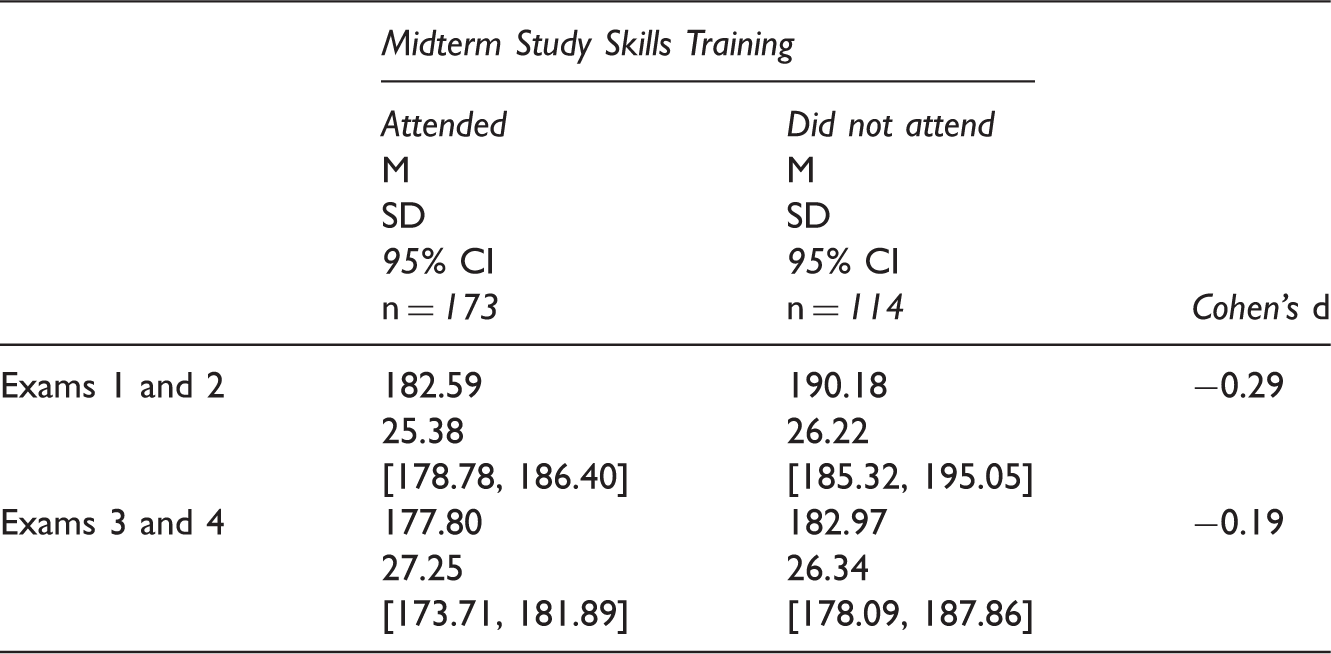

Table 4. Means (Ms), Standard Deviations (SDs), Confidence Intervals (CIs), and Effect Sizes for the Average of the First Two Unit Exams and the Last Two Unit Exams by Study Skills Training Attendance Group

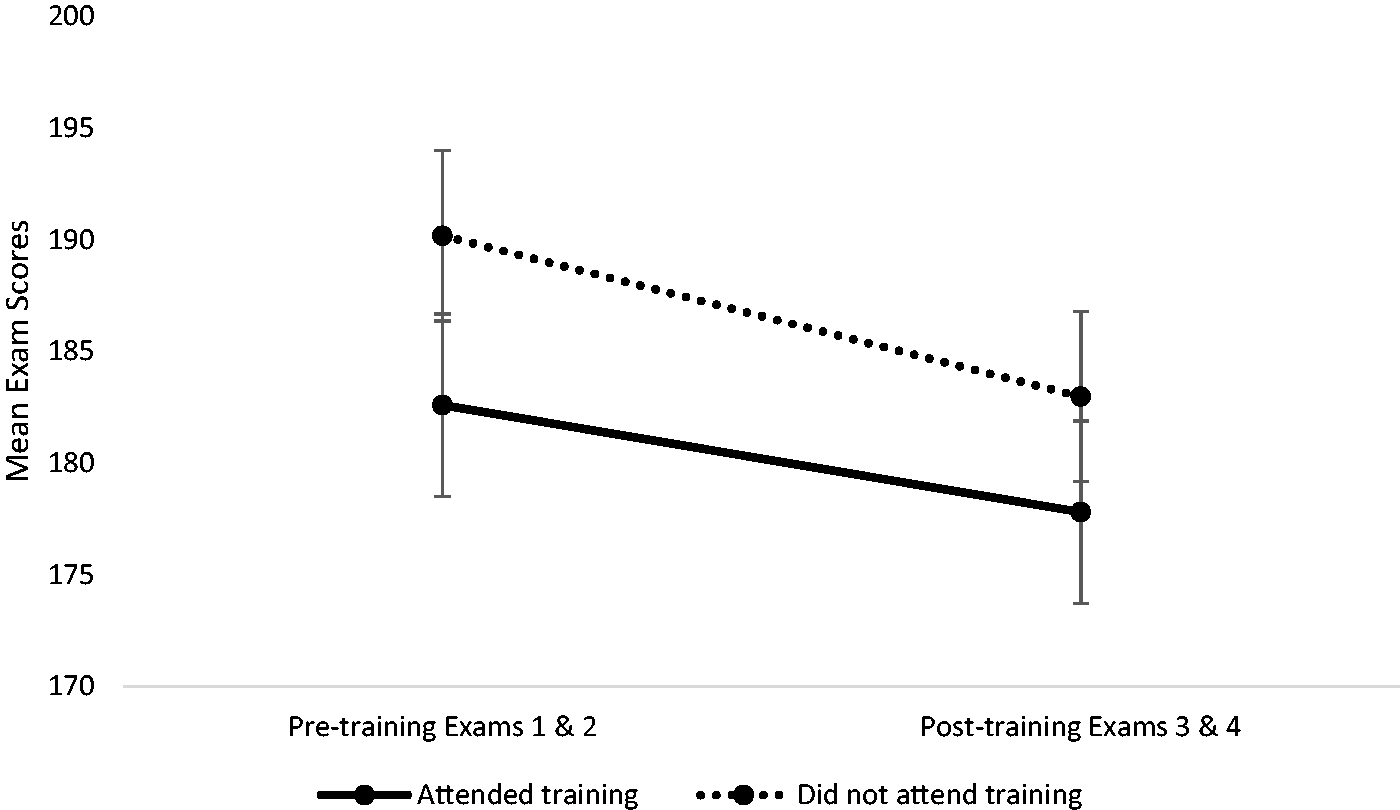

To evaluate hypothesis 4, we used a 2 (attended, did not attend study skills training) × 2 (combined mean of exams 1–2, combined mean of exams 3–4) repeated measures analysis of variance. We found a main effect for time (F(1, 283) = 35.19, p < 0.001, partial η² = 0.11) such that scores on exams 1–2 were higher than scores for exams 3–4. We also found a main effect for study skills training (F(1, 285) = 4.51, p = 0.035, partial η² = 0.016), where post hoc tests revealed a statistically significant difference between exam scores for students who attended the training and students who did not attend the training for the first two exams (t(285) = 2.45, p = 0.015, d = 0.29); these results further support hypothesis 1, demonstrating that more of the lower performing students elected to attend the optional study skills training class. Despite a significant difference between the groups on performance on the exams prior to the study skills class, there was no statistically significant difference between the two groups for the last two exam scores (t(285) = 1.60, p = 0.112, d = 0.19). The interaction between study skills attendance and time was not significant (F(1, 285) = 1.43, p = 0.233, partial η² = 0.005); however, these results suggest that although the average grade for the last two exams for all students was lower than the first two exams, students who attended the study skills class minimized the decrease in their exam scores between exams 1–2 and exams 3–4 compared to those students who did not attend (see Table 4). We cannot say that the smaller effect size for the average of the last two exams compared to the average of the first two exams is the result of the study skills training, but it is in the direction we predicted (see Figure 1), and would be an interesting question to pursue in future research.
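
For readers who want to check the reported effect sizes, partial eta squared can be recovered from an F ratio and its degrees of freedom as F × df_effect / (F × df_effect + df_error). The sketch below applies that standard identity to the values reported above; the function name is an illustrative assumption, and the degrees of freedom are used exactly as reported.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F ratios and degrees of freedom as reported in the text.
effects = {
    "time": (35.19, 1, 283),
    "training": (4.51, 1, 285),
    "training x time": (1.43, 1, 285),
}
for name, (f_value, df_effect, df_error) in effects.items():
    print(f"{name}: {partial_eta_squared(f_value, df_effect, df_error):.3f}")
```

The recovered values round to the effect sizes reported for the two main effects and the interaction.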

Figure 1. Exam Scores for Students Who Attended Compared to Those Who Did Not Attend Study Skills Training.

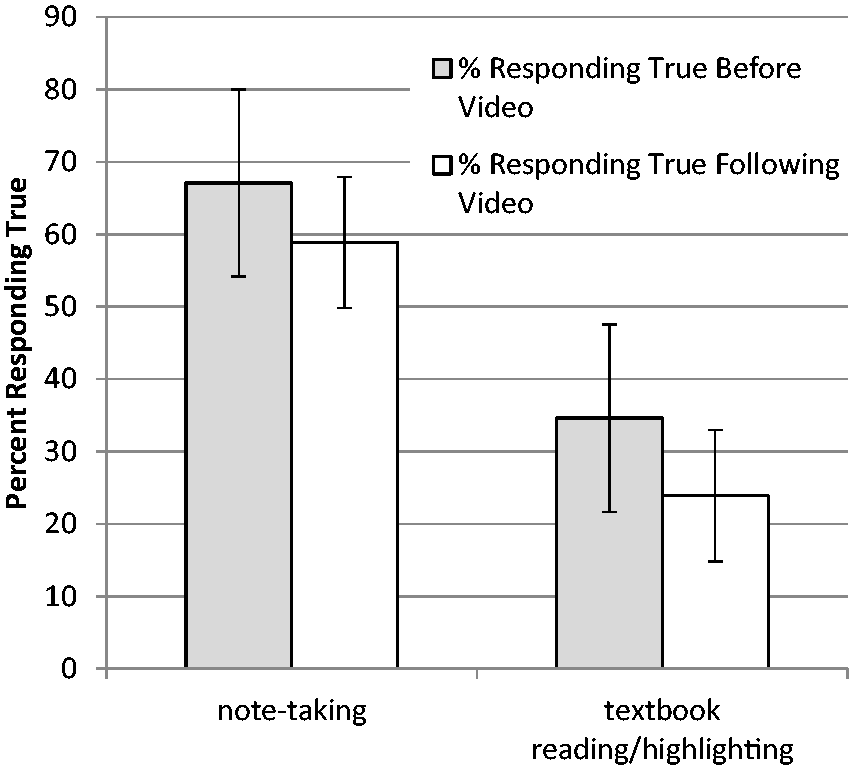

We also predicted in hypothesis 5 that students would demonstrate increased awareness of their own strengths and weaknesses related to study skills and exam preparation during the study skills class. To assess students’ awareness of their strengths and weaknesses, we asked them to rate their own note-taking and textbook reading/highlighting strategies prior to viewing the fourth video, which specifically addresses how to effectively implement these skills. After viewing the video, we asked students to re-assess their note-taking and textbook reading/highlighting strategies. Prior to watching the video, a greater proportion of students indicated they believed they took good notes in class (66.7%, 95% CI [57.64, 75.76]) compared to the proportion of students who indicated they thought they took good notes after watching the video (59.6%, 95% CI [49.64, 69.57]), χ²(1) = 47.93, p < 0.001, Cramér’s V = 0.55. Similarly, we found a greater proportion of students who indicated their belief that their textbook reading and highlighting strategies were good before watching the video (33.3%, 95% CI [20.37, 46.23]) compared to the percent indicating their strategies were good after they saw the information presented in the video (23.5%, 95% CI [9.65, 37.35]), χ²(1) = 41.85, p < 0.001, Cramér’s V = 0.52.

In addition, at the beginning of class, we asked students to list the specific study strategies they employed while studying for the first two unit exams. After students viewed the second video, which addresses the importance of selecting study strategies that encourage deep processing of information, we asked students to classify each of the study strategies they listed as either deep or shallow. Students then used their clicker to indicate whether their list included more shallow study strategies, more deep study strategies, or an equal number of shallow and deep processing strategies. The majority of students (51.6%; n = 83) reported that they had been using more shallow than deep study strategies, and only 15.5% (n = 25) of students reported that they had been using more deep than shallow study strategies. In support of hypothesis 5, these results suggest that students who attended the study skills training gained a better understanding of the effectiveness of their note-taking and reading/highlighting skills and gained insight into the effectiveness of their own study skills strategies.

To evaluate hypothesis 6, we examined students’ ratings of the study skills training class, which they evaluated both at the end of the training session and again at the end of the semester. Both times, students rated their perception of the training on 7-point Likert-type scales, with higher scores indicating a more positive perception of the training. Of the 155 students who answered this item immediately after the training, 93.5% (M = 5.89, SD = 0.92) agreed the class was somewhat helpful to very helpful (see Figure 2). At the end of the semester, students who attended the study skills training class rated the effectiveness of the training (rather than helpfulness). On average, the 140 students who responded rated the study skills training class as being generally effective (M = 5.19, SD = 1.21).

Student Responses to the Questions: “I take good notes in class” and “My textbook reading/highlighting strategies are good.”

Discussion

Formal study skills training for college students is typically offered as a discrete topic, separate from students’ other academic courses. Although such training can be effective at improving academic performance and attitudes toward learning, study skills training is optimally effective when presented in context, as an embedded part of an existing course (Hattie et al., 1996). In this study, we examined the effectiveness of a study skills training embedded into a section of Introductory Psychology at midterm. Overall, our results suggest that offering an optional, extra credit class is a relatively effective way to recruit a broad range of students, including many lower-performing students, to take advantage of study skills training. Although attending the training did not seem to influence subsequent study time or metacognitive accuracy, we did find evidence that students who attended gained insight into some of the weaknesses of their past study strategies. Students who attended rated the training as generally effective, and exam score differences that existed prior to training between students who chose to attend the training and those who did not attend disappeared for exams that followed training.

As predicted, students who chose to attend the study skills training had lower performance on the two exams taken prior to the training compared to students who chose not to attend. This finding is in contrast with previous research showing that lower-performing students are less likely to take advantage of optional opportunities to receive additional help outside of class than are students who are already doing well (Jensen & Moore, 2009; Moore, 2008). We attribute our contrary finding to the fact that we invited students to attend via emails that were personalized based on midterm grades, and we most strongly encouraged students whom we presumed to be most in need of the training (i.e., those with grades of ‘D’ or ‘F’ at midterm) to attend. Our results support findings by Deslauriers et al. (2012), which highlight the value of personalized emails in encouraging low-performing students to take advantage of opportunities to receive help. While there may be reasons, other than those related to prior exam performance, why students opted to either attend or not attend the optional training, scheduling the study skills class during an “off day” (since the class was offered in a blended format) likely removed some of the scheduling barriers students may typically face when optional classes are offered.

Contrary to our hypothesis, students who attended the study skills class did not report spending more time studying for the following exam than did students who chose not to attend. In fact, there were no differences in reported study time for exam 3 between these two groups. It is possible, however, that there were differences in study time between the two groups that existed prior to training. This possibility is likely, given that students who did not attend the training performed better on the first two exams than did students who did attend. It is therefore possible that students who attended the optional class actually did increase their study time as a result of the training they received. Unfortunately, with a single-item, post-training measure of self-reported study time, and without baseline measures of study time from both groups prior to training, we cannot draw strong conclusions regarding the impact of the training. In the future, using multiple assessment items, including measures of the time students spend studying prior to the first two exams, would be ideal in order to adequately evaluate changes within and between groups over time.

One of the important goals of the study skills class was to introduce students to the importance of metacognition. One of the videos students watched during training detailed the importance of metacognition with an example of how overconfidence (e.g., predicting a higher exam score than that which was obtained) can be associated with inadequate exam preparation due to the mistaken assumption that mastery has been achieved (Chew, 2011). We hoped that exposure to this information, combined with the fact that students who attended the optional class had the opportunity to self-reflect on their previous metacognitive accuracy, would lead to improvements in metacognitive skills, as measured by accuracy in exam score predictions. Contrary to our hypothesis, students who attended the study skills training class did not have more accurate estimates of their performance on the exam that followed the training compared to the students who did not attend. As with results related to time spent studying, it is possible that students who attended the study skills training did improve in their accuracy of exam score predictions, but did not surpass the accuracy of the students who did not attend. Not having baseline data as a comparison is a noteworthy methodological limitation of this study. Therefore, gathering data on study strategies, time devoted to studying, and metacognitive abilities prior to any study skills intervention would be an important methodological step in future research. It is also important to point out that the time between the study skills training class and exam 3 was quite short (i.e., two weeks). Developing an accurate understanding of metacognition and its importance during learning, and taking active steps to improve metacognitive skills, can be a long, and sometimes difficult, developmental process (Kuhn, 2000; Miller & Geraci, 2014). It is possible that students may not demonstrate significant changes over a short time period, but that does not necessarily indicate that they will not develop these skills over time. Assessing these types of skills over long periods of time that span a student’s entire university career might provide interesting information about how and when such skills become more established.

Our prediction that students who attended the training would show greater evidence of improved exam performance on subsequent course exams compared to classmates who did not attend was partially supported. In reality, exam scores for both groups decreased on exams 3 and 4, compared to the first two exams. However, this result is largely driven by the fact that exam 1 scores were relatively high (see Table 3) compared to the other three exams. While students who attended the study skills training class did not show improvement in exam scores compared to students who did not attend, there was an interesting shift in the pattern of exam scores between the groups. Students who did not attend the study skills training had significantly higher mean scores on exams 1–2 than did students who chose to attend, but there was no significant difference between the groups on exams 3–4. These findings, although not supported by a significant interaction, suggest the possibility that the students who chose to attend the study skills training were underperforming compared to the other students on exams prior to the training, but afterwards, those students caught up with the group of students who chose not to attend. Of course, there might be reasons, other than the study skills training, that led to this attenuation in exam score difference between the two groups. For example, it is possible that students who did well on the first two exams (i.e., those less likely to have attended the training class) were less motivated to improve their performance for the final two exams compared to students who did not do well on those exams.

While we may not have evidence of major metacognitive or academic changes as a result of this brief study skills intervention, it is important to examine if there were any more subtle changes in student perceptions and/or behaviors that may be indicative of possible study skill improvements in the future. It appears that students who attended the study skills training challenged some of their beliefs about their own study strategies. A significant number of students, who initially rated their own strategies as effective with respect to note-taking and reading/highlighting the text, reduced their judgments of the efficacy of their strategies after watching a brief video targeting these behaviors. Of course, since these data were self-reported, in a situation in which the objectives were clear, it is possible that participant response bias played a role in the students’ decreased ratings of the effectiveness of their study skills. Students may have watched the video and realized that one of the underlying goals of the presentation was to make students more aware of the problems inherent in their study strategies. When answering follow-up questions asking them to rate the effectiveness of their study strategies, they may have reduced their ratings in order to “comply” with the goals of the study. On the other hand, it is also possible that the consistency motif, or people’s desire to remain consistent in ratings over time, may have played a role in suppressing any changes in ratings after watching the video (e.g., Podsakoff & Organ, 1986). These are limitations common to most self-report data, highlighting the need to find objective ways to collect data regarding study skills behaviors in the future (e.g., using “time on task” data from online course materials to measure study time).

Students were also able to successfully classify their own study strategies as “deep” or “shallow” after watching a video describing the importance of deeply processing information. Not surprisingly, most students found that their past study strategies consisted of activities that would primarily promote shallow processing of information (e.g., highlighting text, and memorizing definitions in isolation). In an attempt to rule out response bias and validate the findings, we evaluated the accuracy of the students’ judgments of their own study strategies. We compared scores on the first two exams between students who indicated they used primarily shallow strategies and those who indicated they used primarily deep strategies. Validating their self-assessments, the 25 students who reported that they had used more deep processing strategies performed significantly better on the first two exams (M = 80%, SD = 12.34) than did the majority of students, who reported using more shallow than deep strategies (M = 74%, SD = 10.15), F(2, 158) = 4.84, p < 0.010, ω² = 0.045. These results suggest that both groups of students were able to accurately evaluate their own study strategies in terms of depth of information processing. Interestingly, the difference between those groups was not significant on exams 3 and 4, which occurred in the weeks following the study skills training class. One possible explanation for this finding is that some students who initially reported using mostly shallow strategies changed their study habits to include more deep strategies following the study skills training class.
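
For readers who wish to check the reported effect size, the sketch below recovers omega-squared from the F statistic and its degrees of freedom alone. It assumes a one-way, between-subjects ANOVA and uses the standard identity ω² = df_between(F − 1) / [df_between(F − 1) + N], with N = df_between + df_within + 1. The function name `omega_squared` is our own illustrative choice and is not taken from the original analysis, which may have been computed differently.

```python
def omega_squared(f_stat: float, df_between: int, df_within: int) -> float:
    """Estimate omega-squared for a one-way between-subjects ANOVA.

    Assumption: the total sample size is recovered from the degrees of
    freedom as N = df_between + df_within + 1.
    """
    n_total = df_between + df_within + 1
    numerator = df_between * (f_stat - 1.0)
    return numerator / (numerator + n_total)


# Values reported in the text: F(2, 158) = 4.84
estimate = omega_squared(4.84, 2, 158)
print(f"omega-squared is approximately {estimate:.4f}")
```

With the rounded F value reported above, the estimate comes to roughly 0.0455, which agrees with the reported value of 0.045 to within the rounding of F. By common benchmarks this is a small-to-medium effect.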

While positive student perceptions of the study skills training may not be the primary goal of such interventions, it is encouraging that the students who attended the class believed that it was helpful. Students were able to earn 10 out of a maximum of 30 extra credit points (or 1% of their final grade in a class with 1000 possible points) for attending the study skills class. Therefore, it is possible that students chose to attend with a primary motivation of receiving extra credit. If that were the case, students who were already performing well in the class (e.g., those earning an ‘A’ or ‘B’ at midterm) would have been less likely to rate the study skills training as helpful, if they perceived themselves to already possess adequate study skills and had only attended the class for the extra credit points. To evaluate this possibility, we compared students’ ratings of the helpfulness of the study skills training across groups of students with different midterm grades. We found that students with ‘A’s, ‘B’s, ‘C’s, ‘D’s, and ‘F’s at midterm all rated the study skills training class as equally helpful, F(4, 150) = 1.37, p = 0.25. This finding is useful to consider when designing these types of interventions. Rather than appealing only to the students who are struggling, this intervention appears to be well-received among all students.

College students’ study skills are important predictors of academic performance (Crède & Kuncel, 2008), yet many students lack those essential skills (Gurung, 2005; Gurung et al., 2012). Finding ways to address students’ skill deficits by capturing their interest at a time when they are listening (i.e., when midterm grades become available) and embedding that content into an existing and relevant course (e.g., in close proximity to discussing learning and memory in Introductory Psychology) provides students with the opportunity to make meaningful changes to their study skills in a way that can impact the remainder of their college career.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.