Abstract

This study investigates knowledge structures and scientific communication using bibliometric methods to explore scientific knowledge production and dissemination. The aim is to develop knowledge about this growing field by investigating studies using international large-scale assessment (ILSA) data, with a specific focus on those using Programme for International Student Assessment (PISA) data. As international organisations use ILSA to measure, assess and compare the success of national education systems, it is important to study this specific knowledge to understand how it is organised and legitimised within research. The findings show an interchange of legitimisation, where major actors from the USA and other English-speaking and westernised countries determine the academic discourse. Important epistemic cultures for PISA research are identified, the most important of which are situated within psychology and education. These two research environments are epicentres created by patterns of referrals to and referencing of articles framing the formulation of PISA knowledge. Finally, it is argued that this particular PISA research is self-referential and self-authorising, which raises questions about whether research accountability leads to ‘a game of thrones’, in which the rivalry within the scientific field over how and on what grounds ‘facts’ and ‘truths’ are constructed is a continuing process with no obvious winner.

Scientific knowledge production and ILSA research

In recent decades, international large-scale assessments (ILSAs) have gained a key position worldwide in the movement of evidence-based education policy and practice (Pettersson, 2014b). However, the interplay between international and national levels is relatively complex, and the impact multidirectional (Forsberg and Pettersson, 2014), which can be described in terms of borrowing and lending. Numerous examples of learning from elsewhere are manifested in the transfer of policy and programmes. In international organisations like the Organisation for Economic Co-operation and Development (OECD) and the European Union (EU), and in many countries, ILSA is used as a tool to measure, assess and compare the success of national education systems and schools and to distribute praise, shame and blame (Steiner-Khamsi and Waldow, 2012).

Science does not stand still, especially as new research creates new knowledge structures (see, e.g., Börner et al., 2003). As ILSA research is a growing, inter-disciplinary and increasingly international field of study (Lindblad et al., 2015), the scientific development of this field is highly relevant and important to analyse. Knowledge production and dissemination are cornerstones in the development of science and indicate how scientific knowledge is constructed and academic facts are spread, for example, in the form of citations. Various studies (Domínguez et al., 2012; Luzón and Torres, 2011; Owens, 2013) have shown that researching the international large-scale test known as the Programme for International Student Assessment (PISA), staged by the OECD, can be both a viable and an important aspect of science. There has been a lot of discussion about whether or not PISA changes education (e.g. Carvalho, 2009; Grek et al., 2009; Hanberger, 2014; Mangez and Hilgers, 2012) and whether changes in countries’ rankings indicate that the quality of education has been compromised (e.g. Gorur, 2014). However, what has not been widely discussed is how knowledge is organised through PISA research and how various actors disseminate this research.

One way of investigating ILSA research is to examine its output in terms of books, journal articles and other kinds of publications. We are especially interested in, and therefore limit our investigation to, scientific journals, because they function as the new research currency (Rovira-Esteva et al., 2015). Our analysis of the organisation and legitimisation of knowledge is conducted on a number of articles based on PISA data. The focus is on authors, affiliations, journals and research areas in scientific articles, examined through a bibliometric study (e.g. Leydesdorff, 2001), which makes it possible to highlight some of the characteristics of ILSA research. We explore who inhabits and cultivates academic knowledge (Becher and Trowler, 2001) and how researchers refer to each other and, as such, establish legitimacy for their arguments and knowledge (Kandlbinder, 2015). It can be argued that assessing such impact is challenging. Indeed, numerous analysts and researchers have tried to identify the problems of judging the influence of ILSA. When exploring why researchers who are active in the field of evaluation have had very little impact on policy matters, Caplan notes that the two communities – theorists and policymakers – live in different worlds, are divided by different values, reward systems and often different languages (Caplan, 1979) and probably have different styles of reasoning (Hacking, 1992). The result is that researchers who are involved in judging impact are often forced to draw pessimistic conclusions about the efficiency of such a research endeavour (Burkhardt and Schoenfeld, 2003).

In science, as well as in knowledge production, the diffusion of the produced knowledge is in many respects dependent on socio-cognitive networks, which have been referred to as ‘invisible colleges’ (Crane, 1972) or scholarly tribes (Becher and Trowler, 2001). This organisation of knowledge within science has a rather long history of study. Notable scholars like Robert K Merton (1973), Bruno Latour (1988), Latour and Steve Woolgar (1979), Jasanoff (2004), and Shapin and Schaffer (1985) have described the organising and rivalry among scholars around the production of scientific ‘facts’ and ‘truths’. Knorr Cetina and Cicourel (1981) discuss the same issue and state that science is largely a socially organised cognitive activity made up of various epistemic cultures. The same can be said to be true about knowledge production. Scholarly tribes or tightly connected authors can be understood as representing separate epistemic cultures in which notions and concepts are produced or even ‘fabricated’ (cf. Carvalho, 2012) on more or less ‘objective’ grounds. From disseminated research, different ideas are formulated as to what should be perceived as ‘facts’ and ‘truths’ in specific issues, creating what we call episteme. In our usage of the term, episteme is understood as legitimised knowledge where the ‘facts’ and ‘truths’ that are embedded in the notion of this specific knowledge are perceived as ‘common sense’ (cf. Gramsci, 1971). Hence, every single episteme in a discourse contributes to the rationale and totality of a specific epistemic culture.

The aim of the study is to explore and develop knowledge about the rapidly growing research field of ILSA. Here, the focus is on PISA research, which is the largest and most viable sub-section of ILSA research. The study investigates knowledge related to scientific communication using bibliometric methods in order to measure and assess the production and dissemination of scientific knowledge. Scientific articles focusing on PISA, as an example of ILSA-research, are used to address the research question: how is research using PISA data scientifically organised and legitimised? This is investigated by exploring which authors, research fields, journals and countries are active in this section of PISA research. Consequently, we systematically map how a section of PISA research and knowledge is produced and how author referrals create legitimacy.

The article is organised in four sections. First, ILSA as a field of study is presented. Second, the methodology of the study is described and discussed. The third section contains the study’s findings under the following headings: Journals and research fields; Main countries; and Authors and nodes of research. Finally, the findings are discussed and conclusions drawn about the organisation and legitimacy of knowledge in this particular area of PISA research.

ILSA as a field of study

Education has been characterised as a central requirement for social and economic development. In this, international benchmarking has been identified as an important basis for improvement. An example of this is evident in the OECD statement: ‘It is only through such benchmarking that countries can understand relative strengths and weaknesses of their education systems and identify best practices and ways forward’ (OECD, 2006: 18). The statement exemplifies the significance that international organisations ascribe to comparative international tests as a tool for the standardisation and legitimacy of national educational systems (Kamens and McNeely, 2009; Waldow, 2012). Further, international testing and national assessment are regarded as stimuli for cycles of educational reforms (Baker and LeTendre, 2005).

Over the years, international organisations have developed a common discourse on schooling with a view to influencing national education systems (Dobbins and Martens, 2012) using comparisons and different kinds of data (Pettersson, 2014a). A shift from governing to governance involving actors at different levels within and outside the national governing system has also been identified (Ball and Junemann, 2012). Organisations like the OECD function as mediators for exchange and create and reshape ideas and programmes fabricating a specific knowledge-policy instrument (Carvalho, 2012). Travelling international policy diffuses and reforms societies with different social and political histories. In this context, international organisations collide and intertwine with national embedded policy and history (cf. Ozga and Jones, 2006). ILSA is regarded as a powerful instrument for change, standardisation and the legitimisation of national educational systems. The next section describes the development of testing, comparisons and the use of data for educational governing and how this later emerged into a focus on ILSA.

The development of ILSA

The International Association for the Evaluation of Educational Achievement (IEA) was the first organisation to focus on large-scale assessments of students’ achievements in the late 1950s and early 1960s (Pettersson, 2014a). The first IEA study differed from other comparative education studies undertaken at that time in that it tried to introduce an empirical approach into a field dominated by cultural analysis (Foshay et al., 1962). The study concluded that cross-national studies of educational performance could produce comparable results, which at that time was startling (Purves, 1987). The IEA continued to develop several assessments influencing both policy and research (Pettersson, 2014a), the most well-known of which are TIMSS, TALIS, CIVED and ICCS (for a further discussion see Lindblad et al., 2015). Eventually, criticism of the IEA emerged with regard to the lack of policy relevance. In response, the OECD started to develop indicators and assessments of its own (Pettersson, 2008).

Since the introduction of PISA 2000 by the OECD, both the programme and its results have been widely disseminated in bureaucratic, political and media contexts (Carvalho, 2009; Grek et al., 2009; Pettersson, 2014a). It is important to acknowledge that PISA is not just a test. In conjunction with the tests, meetings are held to discuss both the tests and the results. The results are then published in reports and disseminated worldwide. A number of actors from various countries are involved in PISA activities; for example, public and private research centres, OECD professionals, policymakers, bureaucrats and technicians, as well as independent researchers using the data or results from PISA to investigate different fields of knowledge. The involved actors have different knowledge, interests and perspectives. From this point of view, PISA is a conglomerate of activities, objects and actors that generates diverse actions in different social spaces at different levels (Carvalho, 2012). This means that the effects of PISA are diverse and not always as linear as they might first appear.

ILSA in policy and research

One of the most obvious connections in discussions about ILSA is that between policy and research. Wagemaker (2014) describes this connection in the following way: Common conclusion is that stakeholders need to recognize that major policy initiatives or reforms are more likely to result from a wide variety of inputs and influences rather than from a single piece of research. Research is also more likely to provide a heuristic for policy intervention or development rather than being directly linked, in a simple linear fashion, to a particular policy intervention. This outcome is due not only to the competing pressure of interests, ideologies, other information, and institutional constraints, but also to the fact that policies take shape over time through the actions of many policymakers. (Wagemaker, 2014: 12–13)

The research activities generated by ILSA are not always explicit. Even though researchers and policymakers may appear to live in different worlds, there are connections and overlaps.

The expansion of ILSA is well documented (e.g. Kamens and McNeely, 2009), but questions continue to be asked about the extent to which ILSA has an impact on policy and research. The question that is most frequently asked is whether ILSA contributes to educational reforms and improvements in different national settings. The question is understandable, considering that most of the organisations behind ILSA have more or less explicit aims. However, it is also relevant in relation to the vast production of grey-zone literature produced on the impact of policy at different levels (cf. Lindblad et al., 2015). In the Handbook of International Large-Scale Assessment, Wagemaker (2014) summarises the impact of different ILSA tests and identifies seven effects: i) the growth of ILSA; ii) a widening discourse; iii) changes in educational policy; iv) curriculum changes; v) changes in teaching; vi) capacity building and research endeavours; and vii) global and donor responses. What is striking is that the impact of ILSA on research communication is not obvious, but is instead described as sub-categories under certain headings. Although research on this issue is sparse, it does exist. In order to understand the impact of ILSA, we have to understand research communication in terms of how educational concepts, data and results are developed, disseminated and interchanged.

Methodology

Inspired by a study conducted by Lindblad et al. (2015), which has systematically mapped the field of ILSA, our investigation is carried out using a corpus of scientific articles. This corpus of research contains a selection of core articles, namely the 15 most cited articles using PISA data in the scientific database Scopus. The corpus includes the articles to which the core articles refer (in their reference lists) and the articles that refer to the core articles (in their reference lists). In total, the corpus consists of 578 articles. This corpus is explored using retrospective and prospective analysis. The retrospective analysis investigates to whom and what the core articles refer, and the prospective analysis identifies which researchers refer to the core articles. This then facilitates the location of organisational frames, which is important for conceptualising knowledge within the field. The notion of nodes is used as a concept to explore the connections and centrality of research activities between authors in order to illuminate epistemic cultures within PISA research.
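The retrospective and prospective views described above can be sketched as two queries over a citation edge list. This is a minimal illustration under stated assumptions, not the procedure used in the study: the article identifiers and the `citations` list are hypothetical, and each pair `(citing, cited)` is assumed to mean that the first article lists the second in its references.

```python
# Hypothetical citation edges: (citing article, cited article).
citations = [
    ("core_1", "ref_a"),
    ("core_1", "ref_b"),
    ("core_2", "ref_b"),
    ("later_x", "core_1"),
    ("later_y", "core_1"),
    ("later_y", "core_2"),
    ("later_z", "ref_a"),  # never touches a core article, so it stays outside the corpus
]
core_articles = {"core_1", "core_2"}


def retrospective(core, edges):
    """Articles that the core articles refer to (their reference lists)."""
    return {cited for citing, cited in edges if citing in core}


def prospective(core, edges):
    """Articles that refer to the core articles."""
    return {citing for citing, cited in edges if cited in core}


referred_to = retrospective(core_articles, citations)
referring = prospective(core_articles, citations)

# The corpus is the core plus both neighbourhoods.
corpus = core_articles | referred_to | referring

print(sorted(referred_to))
print(sorted(referring))
print(len(corpus))
```

Using sets means that an article cited by several core articles enters the corpus only once; whether the study's total of 578 articles was deduplicated in this way is not stated in the text.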

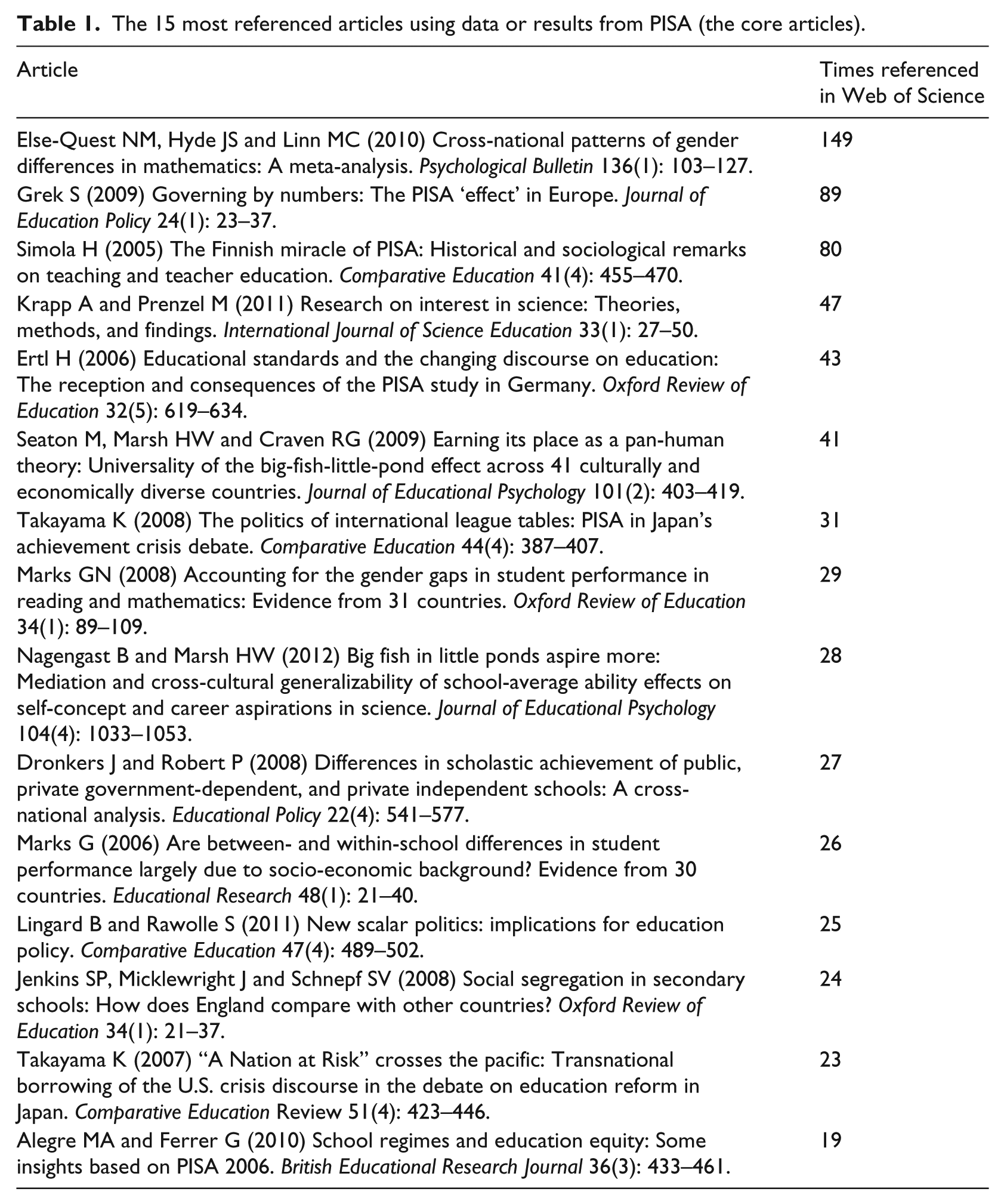

For the study’s retrospective and prospective analyses, bibliometric data was extracted from the scientific database Web of Science (WoS). This database was chosen because it is easier to download the separate data necessary for the analysis from here than from Scopus. The 15 core articles are presented in Table 1.

Table 1. The 15 most referenced articles using data or results from PISA (the core articles).

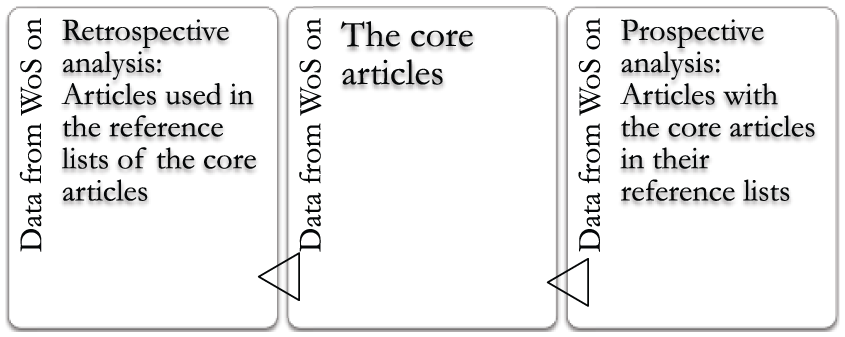

After identifying the 15 core articles, the articles used in their reference lists were also downloaded from WoS. Following this, articles referring to the 15 core articles in their reference lists were downloaded. This provided us with quantified data on research fields, journals, countries and authors for the retrospective and prospective analyses. By using bibliometric methods, it was possible to construct a sub-section of a research field, the corpus, and describe this corpus retrospectively and prospectively and thereby analyse a specific field of ILSA research. See Figure 1 for a visualisation of both the corpus and the retrospective and prospective analyses.

Figure 1. Visualisation of the corpus and the retrospective and prospective analyses.

Figure 1 illustrates the constructed corpus of research and the data used for the retrospective and prospective analyses. The data downloaded from WoS consisted of individual files for the 15 core articles on research fields, journals, countries and authors for the articles used in the reference lists (retrospective analysis). Individual files on the research fields, journals, countries and authors in the articles having the core articles in their reference lists (prospective analysis) were also downloaded. These files were then merged so that there was one file for research fields, one for journals and so forth for the retrospective analysis and likewise for the prospective analysis. Hence, in the end, eight tables provided us with a quantified version of the corpus. WoS, Scopus and Google Scholar were also used to investigate the co-authors indicated by the analyses as important. This provided data that facilitated further elaboration on the active authors in the corpus. The bibliometric analysis highlighted various epistemic cultures within PISA research and identified a process by which knowledge is legitimised.
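The merging step described above, from one file per core article to one table per attribute, can be sketched as follows. The data structure, attribute names and counts are hypothetical stand-ins for the downloaded WoS files; the sketch covers one direction of analysis and would be run once for the retrospective files and once for the prospective files.

```python
from collections import Counter

# Hypothetical per-core-article exports: attribute -> {value: number of articles}.
per_article_exports = {
    "core_1": {
        "countries": {"USA": 12, "Australia": 4},
        "journals": {"Journal A": 3},
    },
    "core_2": {
        "countries": {"USA": 5, "Germany": 2},
        "journals": {"Journal A": 1, "Journal B": 2},
    },
}


def merge_exports(exports):
    """Sum the per-article counts into one Counter per attribute."""
    merged = {}
    for tables in exports.values():
        for attribute, counts in tables.items():
            # Counter.update adds counts rather than overwriting them.
            merged.setdefault(attribute, Counter()).update(counts)
    return merged


merged = merge_exports(per_article_exports)
print(merged["countries"].most_common())
print(merged["journals"].most_common())
```

Summing in this way counts an article twice if it appears in the files of two core articles. The text does not say how such duplicates were handled, so this is a simplification of the sketch rather than a description of the study.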

Data losses are always a concern in bibliometric studies. The databases that were used (Scopus, Google Scholar and WoS) are designed as self-promoting environments, which means that they regularly boost the scientific materials that are embedded in them. There is, therefore, a bias in favour of the articles and journals that are indexed in the database used. Research that is dependent on data from a number of databases also has its limitations and possibilities. The limitations of our study connect to the loss of data when it comes to publications referred to by the corpus. As a way of establishing a self-review of our research process, we went through all the articles referred to by the corpus in WoS and concluded that more than two-thirds of all the referred-to literature was not covered by the database. The missing literature is not primarily categorised as peer-reviewed scientific articles published in journals, which is the standard form for tabulations in WoS. This observation is probably not unique to our selection and may be valid for many scientific fields, especially the social sciences and humanities, where a shift can be observed from one way of publishing research results to another. Another limitation is the language bias; that is, the database predominantly contains journals with articles in English. However, the database was chosen because it is one of the best known and because it is particularly appropriate for this investigation. The question of language bias is discussed in more detail later in the article.

When using WoS it has to be acknowledged that the educational sciences are under-represented and that other databases are more sensitive in their coverage of such material. However, the challenge for us was to find a tool that was better suited to handling this bias against the educational sciences. Despite these limitations, some of the main actors framing PISA research have been identified and nodes of research within the field in focus have also been discerned. Like all bibliometric studies, the analysis has its limitations, the most obvious of which concern the selection of the most cited articles and the language used. ILSA research is a continuously growing scientific and cross-disciplinary field. By narrowing down the study to the most cited articles, it is only possible to discuss the most disseminated PISA research. Thus, it is important to state that this study does not present all the ILSA or PISA research that has been conducted. Rather, it is suggested that the results could serve as a useful reference for further studies of PISA research.

Results

The corpus is used as a stepping-stone to investigate a specific field of PISA research and to map and highlight the contexts in which PISA research operates. This is conducted by investigating selected characteristics of how the research is organised, starting with journals and research fields. This is followed by the countries of origin of the authors, the authors and the nodes of research illuminating different epistemic cultures in PISA research.

Journals and research fields

The first stage of the analysis mapped the journals and research fields involved in the corpus. The most referred-to journal is Comparative Education, which is also the second most used journal referring to the core articles. Moreover, the second most referred-to journal by the core articles is the Journal of Educational Psychology (also the sixth most referring journal). The journal that refers to the core articles the most is the Journal of Education Policy, which is also the seventh most referred-to journal. In order to investigate the research question further, the research environments in which the journals were active were mapped using the classifications available in WoS of the total number of referred-to and referring articles:

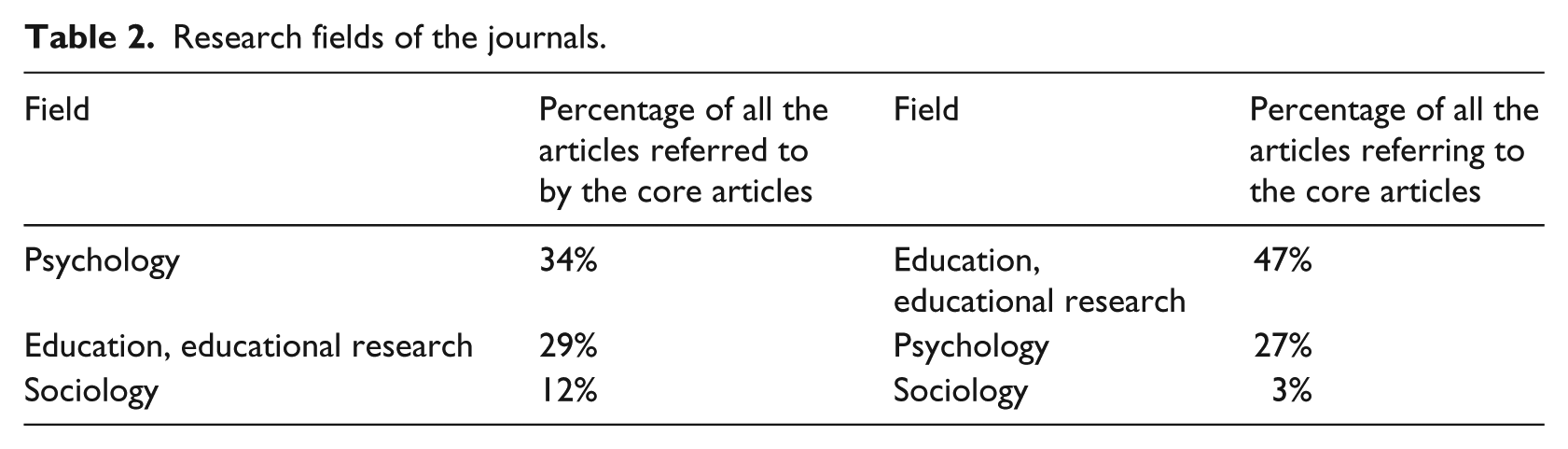

In Table 2, psychology is the primary field for the referred articles. The second most common is educational research. Further, the field of educational research refers more substantially to the core articles than any other field. The field of psychology is the second most referring field. Sociology is also present as a field that is referred to by the core articles and that also refers to them. Business economics, science technology, social sciences and women’s studies, which are not indicated in the table, all appear in the top 10 as referred-to fields. Based on the table, the referred-to and referring journals and the fields of educational research and psychology frame the corpus.

Table 2. Research fields of the journals.

Main countries

In order to determine the activity of different countries in PISA research, we mapped the affiliation countries in the corpus. This provides information about the cultural frames and possible bias in the PISA research, which are important when explaining how knowledge is established and disseminated.

As Table 3 shows, the USA is the primary country. Other countries in the table – Australia, Germany, England, Finland, China, Belgium, Italy, Canada and the Netherlands – appear as both referring to and referred to by the core articles. This makes the lists of countries referred to by the core articles and those referring to them highly similar, with 11 out of 15 countries on both lists, thus indicating the supremacy of some countries in the field. It is noteworthy that the referred-to and referring authors mostly come from English-speaking countries, thus providing a bias towards values and ideas connected to these societies.
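The similarity between the two country lists can be expressed as a simple set overlap. The sketch below uses short, hypothetical lists rather than the top-15 lists in Table 3, and the overlap ratio is only one of several possible similarity measures.

```python
# Hypothetical ranked lists; the actual lists are those in Table 3.
referred_to_countries = ["USA", "England", "Australia", "Germany", "Canada"]
referring_countries = ["USA", "Australia", "England", "Finland", "China"]

# Countries that appear on both lists, regardless of rank.
shared = set(referred_to_countries) & set(referring_countries)

# Share of the referred-to list that also appears on the referring list.
overlap_ratio = len(shared) / len(referred_to_countries)

print(sorted(shared))
print(overlap_ratio)
```

Applied to lists of 15 countries, 11 shared countries would correspond to an overlap ratio of 11/15, or roughly 0.73.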

Table 3. Country of affiliation of PISA researchers.

Authors and nodes of PISA research

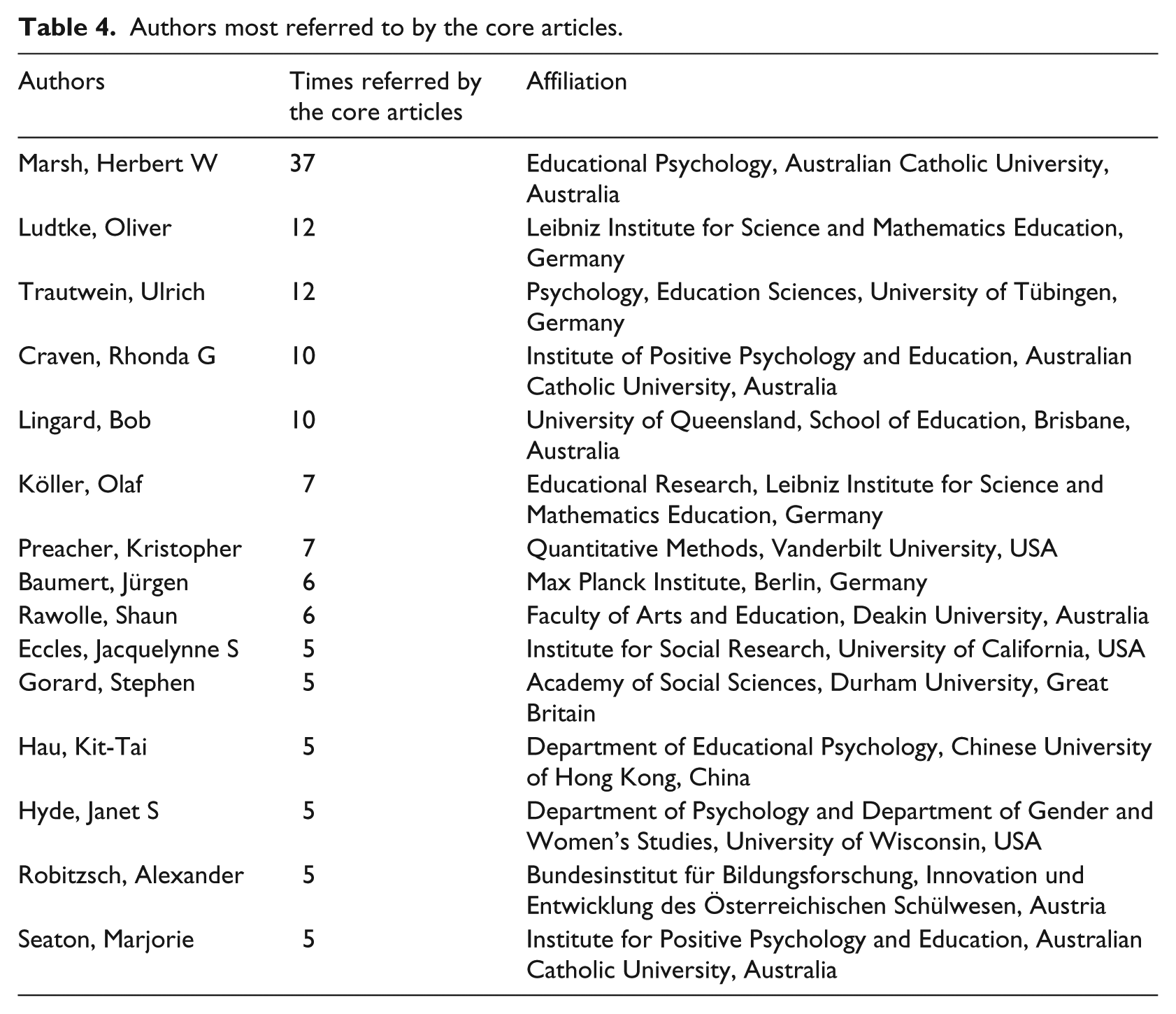

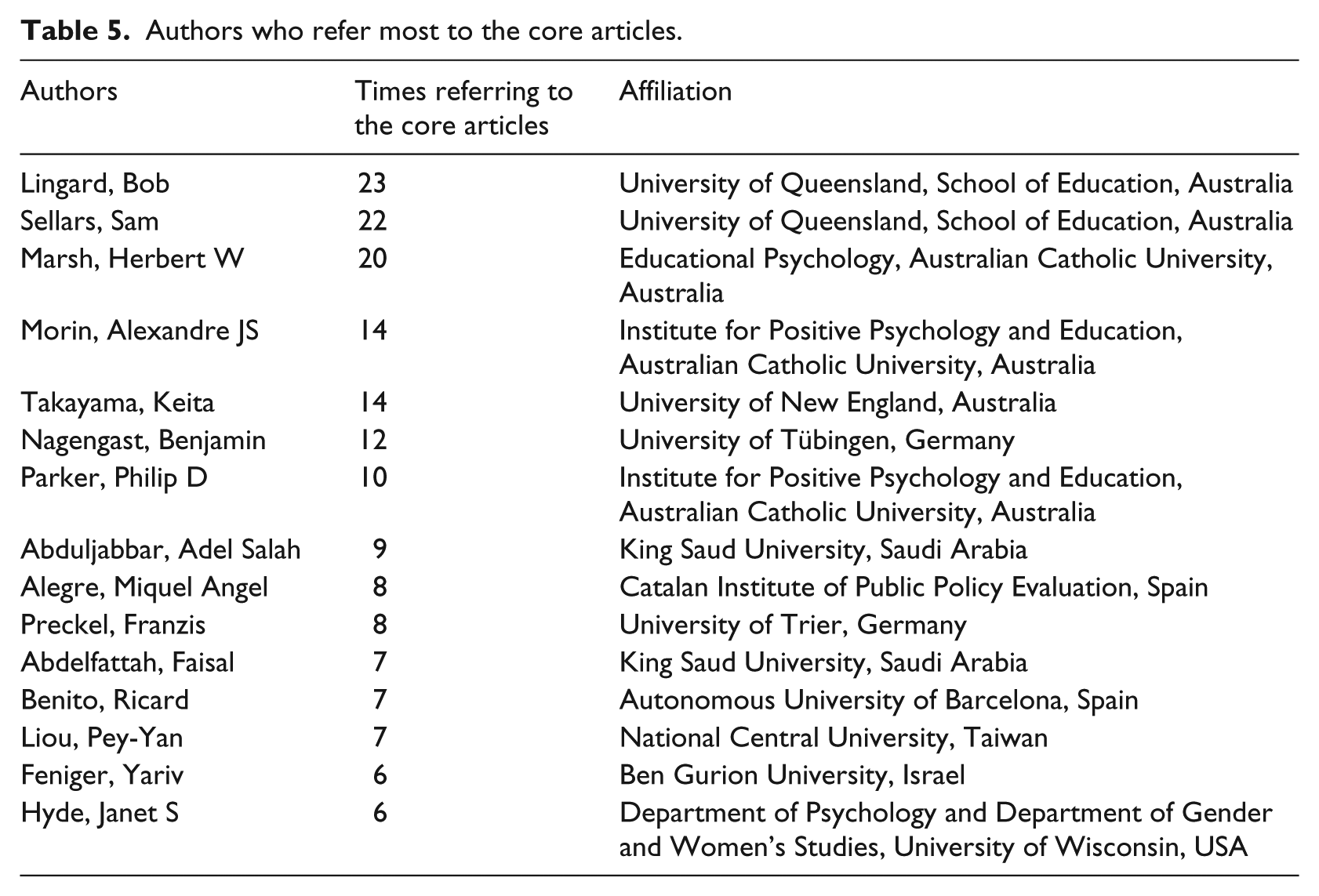

In order to investigate nodes of research and make claims on epistemic cultures, the authors referred to by the core articles and referring to the core articles were mapped. It became clear that some authors were more used and active than others in establishing nodes of research. The most referred-to authors in the core articles are presented in Table 4, and the authors referring to the core articles in Table 5. In these tables, the 15 most referred-to or referring authors are portrayed in order to provide manageable information and illuminate the major actors in the field.

Table 4. Authors most referred to by the core articles.

Table 5. Authors who refer most to the core articles.

Lingard, Sellars and Marsh refer to the core articles the most (Table 5). Marsh is also the author most referred to by the core articles (Table 4). Moreover, Craven, Seaton and Rawolle are referred-to authors (Table 4) and are also authors of core articles. Takayama and Nagengast refer (Table 5) to the core articles and to the authors of the core articles. Taken together, this indicates an overlap between the referred-to authors, the authors of the core articles and the referring authors. This observation confirms that some epistemic cultures seem to be more important than others in framing PISA research.
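The overlap between the three author roles can be made explicit by counting, for each author, the number of roles in which they appear. The names and memberships below are hypothetical placeholders, not the contents of Tables 4 and 5.

```python
# Hypothetical author names and role memberships, for illustration only.
roles = {
    "referred_to": {"Author A", "Author B", "Author C"},
    "core_author": {"Author A", "Author C", "Author D"},
    "referring": {"Author A", "Author B", "Author D"},
}


def role_count(author, roles):
    """Number of roles (0 to 3) in which the author appears."""
    return sum(author in members for members in roles.values())


all_authors = set().union(*roles.values())

# Authors present in every role are candidates for the centre of a research node.
in_all_roles = {a for a in all_authors if role_count(a, roles) == len(roles)}
in_two_roles = {a for a in all_authors if role_count(a, roles) == 2}

print(sorted(in_all_roles))
print(sorted(in_two_roles))
```

An author who is referred to by the core articles, writes a core article and also refers to the core articles would fall into `in_all_roles`.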

Nodes of research

The authors Lingard and Marsh are referred to by the core articles, are authors of core articles and refer to the core articles. Consequently, Lingard and Marsh can be regarded as important establishers of research nodes in the investigated selection of PISA research. Marsh is a psychologist from Australia and in his role as a professor at the University of Oxford has had many articles published on, for example, PISA results. When mapping Marsh’s co-writers, not only in relation to the corpus, many of the other authors listed in Tables 4 and 5 appear; for example, Morin, Nagengast, Craven, Parker, Lütke, Trautwein, Seaton, Abduljabbar and Köller. Even though many of Marsh’s co-writers are Australian, he also cooperates extensively with researchers in England and Germany. Further, Marsh publishes mostly in the fields of psychology and the social sciences, and primarily in the Journal of Educational Psychology.

Another node of research is concentrated around Lingard, an educationalist from Australia, who has a global network and is mostly published in policy journals. Two of Lingard’s co-writers also appear in Tables 4 and 5, namely Sellars and Rawolle. Another important co-writer is Grek – one of the authors of a core article selected for this study. In relation to Marsh, there is a major difference in terms of scientific field, in that most of Lingard’s contributions relate to the social sciences. Lingard also has an extensive cooperation with researchers in different countries in the English-speaking world. Most of these articles are published in the Journal of Education Policy.

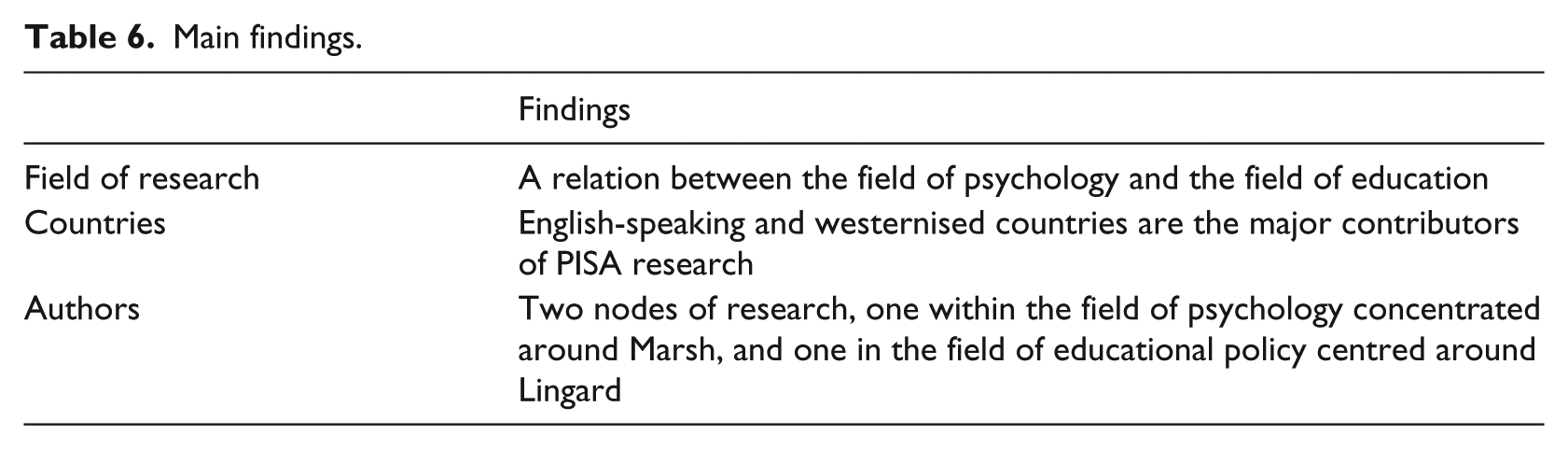

By combining the retrospective and prospective analyses, we have been able to highlight some of the characteristics of a part of PISA research. These features are illustrated in Table 6.

Table 6. Main findings.

In this selection of PISA research, some authors tend to be more visible than others. Two especially important nodes of PISA research are highlighted, both of which are located in English-speaking countries. One node relates to psychology and the other to education. A relation between the field of psychology and the field of education has also been identified. These findings are discussed in the next section.

Organisation and legitimisation of knowledge in PISA research

As already stated, the aim of this study is to explore and develop knowledge about the rapidly growing research field of ILSA, with a focus on PISA research, which is the largest and most viable sub-section of ILSA research. The expansion of ILSA (e.g. Kamens and McNeely, 2009) and ILSA-research (e.g. Lindblad et al., 2015) is well documented. This study contributes to the field of ILSA research by exploring the organisation and legitimisation of knowledge about PISA research in peer-reviewed journals. This is done by addressing the research question: how is research using PISA data scientifically organised and legitimised? This is investigated by exploring which authors, research fields, journals and countries are active in this section of PISA research. Consequently, we systematically map how a section of PISA research and knowledge is produced and how author referrals create legitimacy. The research question is answered and discussed in the following section.

A relation between psychology and education

One of the study’s major findings relates to the connection between the field of psychology and that of education. Thirty-four percent of the articles referred to by the core articles represent the field of psychology, and 29 percent the field of educational research, which indicates that psychology is the dominant field. It is important to remember that by its very nature, WoS may prioritise psychology rather than education, which means that the result could be biased due to software construction. The situation is reversed when it comes to articles referring to the core articles. Here, 47 percent of the articles referring to the core articles are assigned to the research field of education and 27 percent to the field of psychology. This could indicate, in relation to the limitation concerning WoS, that WoS may not be overly biased in favour of psychology. In order to explore this, a more detailed database analysis would have to be performed.
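The reversal between the two analyses can be illustrated by converting article counts per field into percentage shares. The counts below are hypothetical and were chosen only so that the resulting shares match the percentages reported above; they are not the study's raw data.

```python
def percentage_shares(counts):
    """Convert raw article counts per field into whole-number percentages."""
    total = sum(counts.values())
    return {field: round(100 * n / total) for field, n in counts.items()}


# Hypothetical counts, scaled to reproduce the reported shares.
referred_to_counts = {"psychology": 68, "educational research": 58, "other fields": 74}
referring_counts = {"psychology": 54, "educational research": 94, "other fields": 52}

retro = percentage_shares(referred_to_counts)
prosp = percentage_shares(referring_counts)

# Positive gap: psychology leads; negative gap: educational research leads.
retro_gap = retro["psychology"] - retro["educational research"]
prosp_gap = prosp["psychology"] - prosp["educational research"]

print(retro, retro_gap)
print(prosp, prosp_gap)
```

The sign change between the two gaps is the reversal described in the text: psychology leads among the articles referred to, while educational research leads among the referring articles.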

The third significant research field to emerge in the analysis is sociology, for both referred-to and referring articles, with a third place on both lists. Publishing patterns for the less referred-to and referring research fields are more scattered. It is noteworthy that the corpus manifests in the field of psychology in terms of inspiration (retrospective analysis) and disseminates to the field of education (prospective analysis). These findings indicate a relation between the epistemic cultures of psychology and education in the construction of PISA research. We argue that PISA research is situated in-between these two scientific cultures. In this, based on the publications referred to by the core articles, it is possible that psychology has a slightly more predominant role in the construction of the field. It can also be conjectured that in the publications referring to the core articles, education becomes increasingly more dominant in the field of PISA research. The findings also indicate that among those who refer to PISA research, educational research is important. The question then arises as to whether the status of the field of psychology and the knowledge production in psychology are used to gain legitimacy within the field of education.

English-language-framed PISA research

As might be expected, the authors of the articles in the corpus are primarily based in English-speaking and westernised countries. The US has 37 percent of the referred-to authors and 16 percent of the referring authors. The next highest countries in these categories are England (referred-to authors 16 percent) and Australia (referring authors 13 percent). China is the only non-westernised country to be included in both the lists of referred-to and referring countries. This result can be interpreted in different ways, although the favouring of authors from English-speaking countries is clear and underlines the influential position of English-language research journals. As such, the result is perhaps not particularly striking, although the bias in favour of English literature and westernised countries within the field is significant, especially if language and language-dependent concepts are considered as important for how knowledge is created and legitimised. A result of these observations is that the establishment and development of knowledge in these specific epistemic cultures is highly biased in favour of English concepts and is also framed in that particular language. Field-specific concepts are, therefore, framed by the language. A domination of western ideas can also be highlighted and discussed. It is fair to say that the research field is rather narrow, even though PISA is regarded as international. This narrowness is further strengthened by the fact that the PISA assessment itself is normally presented in English. What is not specifically investigated in this study is PISA research presented in languages other than English. Instead, the study is framed by research embedded in WoS, which is biased in favour of English-language journals. 
Examining non-English-language research would be a worthwhile study, given that research has shown that when PISA concepts meet national contexts, translations take place that give PISA new directions and new foci. However, further studies would need to be conducted in order to trace the trajectories of PISA reasoning in different languages and contexts.

Nodes of research: two dominant epistemic cultures

By exploring Marsh and Lingard and their co-authors, it is possible to trace the construction and legitimisation of knowledge. This appears in the two nodes of research identified in the bibliometric analysis: one situated within educational policy thinking and one active within the field of psychology. The two authors appear as referred-to authors, as authors of the core articles and as authors referring to the core articles. It is also clear that co-writers, especially those of Marsh and Lingard, are referred to by the core articles, are authors of the articles in the corpus and are referred to by the corpus. This can be discussed in the light of Knorr Cetina and Cicourel (1981), who argue that science is largely a socially organised cognitive activity made up of various epistemic cultures. Marsh and Lingard seem to be important in the organisation of cognitive activity and thereby function as epicentres of two different epistemic cultures of the investigated PISA research. They are also highly cited, which is important for the accountability and legitimation of the presented arguments and knowledge (e.g. Kandlbinder, 2015).

In a situation in which the most central articles of PISA research tend to represent a somewhat narrow field in terms of authors, there is also a risk that the field will become self-authorising. Self-authorising means that author A says that x is a scientific fact by referring to author B and that x is, therefore, presented as an evidence-based fact. This makes us wonder whether self-authorising over-emphasises specific reasoning within the field and, as such, is self-generating and self-referential, as Hacking (1992) pointed out in his important work. This question is prompted by the overlap that is observed in the studied PISA research, as exemplified in the analysis of the corpus. For example, the same authors are found both in the list of referred-to articles and as authors of the referring articles. The author of the 10th most referred-to article in the corpus is also fifth on the list of authors referring to the corpus. Thus, there is a tendency for PISA research to be constructed and managed by a clique of researchers in dominant positions with regard to the volume and impact of their publications and the opportunities to guide the narrative surrounding the scientific debate about PISA. In our analyses, two important nodes for PISA research are identified. Both these nodes are well established, with international cooperation, high productivity and numerous co-writers further establishing their centrality in PISA research. This could indicate the importance of PISA research for capacity building, as previously described in relation to ILSA tests (see Wagemaker, 2014). We argue that this capacity building includes the legitimisation of knowledge and actors through nodes of research constructed by self-authorising and self-referential publishing patterns and co-authorship created in specifically demarcated epistemic cultures.

It is noteworthy that the PISA corpus looks very similar whether one considers the authors who inspire it (retrospective analysis) or its dissemination in the field of education (prospective analysis). This may not be all that surprising, considering that scientific authors tend to promote their own careers and positions by referring to themselves and their colleagues/collaborative partners for research accountability. This trend is perhaps also acceptable, given that it is normal to think about your own work as important for answering different research questions and creating new ones. Individual researchers’ work can be interpreted as work in progress, where previous work always plays an important role. In our view, this system of self-referencing by individual researchers is perfectly normal in contemporary science, but can lead to problems when it comes to the validity and reliability of individual works and of a research field at large. This has to do with the system of accountability, as well as intellectual reasons of positioning. As such, we wonder whether the system of accountability actually fuels the construction of nodes of research in a race for legitimacy, which in itself creates two dominant epistemic cultures.

The characteristics of PISA research

It is interesting to reflect on Wagemaker’s (2014) statement that one impact of ILSA is a widening of discourse. Based on our findings, we highlight that, in comparison to many other scientific fields, very few articles and actors are connected to the two nodes of research dominating the scope of PISA research in scientific peer-reviewed journals. Moreover, this also indicates that many research articles are rarely cited as references, which invites speculation as to how wide the PISA discourse really is. Yet, the few articles that are produced have a disproportionate impact on the creation of a specific PISA reasoning. This can also be discussed in relation to the dominance of English as the language of publication, which may mean that concepts are discussed differently.

This study has discussed the role that scientific articles play in the creation of knowledge structures (Börner et al., 2003) in a specific section of ILSA research. As PISA tests and PISA research are constantly used in arguments for reform initiatives and curriculum development, it is important to study and analyse this specific knowledge field in order to understand how such knowledge is organised and legitimised. This ought to lead to a better understanding of how notions such as knowledge, curriculum, assessment and evaluation are discursively formed inside and outside a scientific community and how different research fields function as interfaces, where intellectual yielding occurs and academic capital is interchanged. The findings suggest an interchange of legitimisation in which the USA and other English-speaking countries set the scene for academic discourse and transformation. Therefore, PISA knowledge seems to be organised in publication patterns that favour the English language and the field of education. Moreover, it could be that authors draw on knowledge constructions in psychology to gain legitimacy within the field of education. An indication of this is the publication pattern in which psychology is a major field for the referred-to authors, but where the field of education is becoming more important through the authors of the core articles and those who refer to them. How this relation between psychology and education changes or affects the field of education, and vice versa, is an interesting question that is worthy of further research.

Two further important nodes of PISA research have been located: one dominated by psychology and the other by the educational sciences. The two research nodes function as epicentres in different epistemic cultures of the publishing patterns, in that the authors and co-authors refer to and reference each other’s work to the extent that only a few actors control what is formulated in terms of PISA knowledge. Both nodes establish thrones in PISA research, where the interchange of legitimacy is conducted through the cultivation of each other’s knowledge. This development can be discussed in terms of assortativity and preferential attachment. Assortativity is the tendency to connect to actors who are similar (Guns, 2014), whereas preferential attachment is the tendency to connect to successful actors (cf. Barabási and Albert, 1999). Hence, the corpus appears as a self-referential and self-authorising activity. As such, the study presents two nodes, which can be characterised as two different epistemic cultures and described as two ‘thrones’ in PISA research on which PISA discourses are constructed. Moreover, we wonder whether research accountability leads to what we metaphorically call, in line with the well-known George RR Martin novels and the HBO series Game of Thrones, a ‘game of thrones’. The reason for this is, just as Merton (1973) stated, that rivalry occurs within the field concerning which actors get to constitute knowledge. The rivalry can be seen by using the theoretical concept of epistemic cultures to understand that there are different groups or ‘houses’ that all claim supremacy over how specific concepts and claims within the field are to be understood as ‘facts’ and ‘truths’. The ‘thrones’ then establish specific epistemic cultures.
As a result, we believe that the metaphor of a game of thrones is suitable for our findings because, just as in the series and novels, there is rivalry within the scientific field concerning how and on what grounds ‘facts’ and ‘truths’ are constructed, in a continuing process with no obvious winner.
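
For readers who wish to see how the two network notions used above, assortativity and preferential attachment, might be made concrete, the following is a minimal illustrative sketch and not the procedure used in this study. It assumes a hypothetical toy citation network: the author labels, field assignments and citation links are invented, and the two function names are our own. Assortativity is approximated here, very crudely, as the share of citations that stay within one field, and preferential attachment as the chance that a new citation goes to an author in proportion to the citations that author has already received.

```python
from collections import Counter

# Hypothetical toy data, invented for illustration only.
# FIELD maps each (fictional) author to a research field.
FIELD = {
    "A": "psychology", "B": "psychology", "C": "psychology",
    "D": "education", "E": "education",
}
# Each pair is (citing author, cited author).
EDGES = [("A", "B"), ("B", "A"), ("C", "A"),
         ("D", "E"), ("E", "D"), ("D", "A")]


def same_field_share(edges, field):
    """Share of citations linking two authors from the same field.

    A crude stand-in for assortativity: values near 1 mean that
    authors mostly cite others who are similar to themselves.
    """
    same = sum(1 for citing, cited in edges if field[citing] == field[cited])
    return same / len(edges)


def attachment_probabilities(edges):
    """Probability that a new citation goes to each already-cited author.

    A simplified reading of preferential attachment: the probability is
    proportional to the number of citations already received.
    """
    received = Counter(cited for _, cited in edges)
    total = sum(received.values())
    return {author: count / total for author, count in received.items()}


if __name__ == "__main__":
    print(same_field_share(EDGES, FIELD))
    print(attachment_probabilities(EDGES))
```

In this invented example, five of the six citations stay within one field, and the most cited author would attract half of any new citations under the proportional rule, which is the kind of concentration the notion of ‘thrones’ is meant to capture.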

Footnotes

Declaration of conflicting interests

None declared.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.