Abstract

The starting point of this study is the argument that not only are rankings of higher education institutions (HEIs) inescapable, but so is the constant criticism to which they are subjected. Against this background, the paper discusses how HEIs from Central and Eastern European countries (CEECs) are (non)represented in the main global university rankings. The analysis adopts two perspectives: 1) From the point of view of higher education in CEECs – what are the specific features, basic problems and prospects of higher education in CEECs as seen through the prism of the global ranking systems? 2) From the point of view of the ranking systems – what strengths and weaknesses of the global ranking systems can be identified through the prism of higher education in CEECs? The study shows that most of the HEIs from CEECs remain invisible in the international and European academic world and tries to identify the main reasons for their (non)appearance in global rankings. It is argued that although global rankings are an important instrument for measuring and comparing the achievements of HEIs by certain indicators, they are only one of the mechanisms – and not a perfect one – for assessing the quality of higher education.

Introduction

In its 2011 report on progress towards the common European objectives in education and training, the European Commission commented on the global rankings of higher education institutions (HEIs) and how European HEIs are ranked. After highlighting the European countries that present themselves best, including Germany, Great Britain, Holland and Sweden, the report devoted special attention to the countries of Central and Eastern Europe and found that, out of the Central and Eastern European Member States, only Poland, Hungary, the Czech Republic and Slovenia had universities in the top 500 (EACEA/Eurydice, 2011: 61). Underlying this comparison is a clear message: the HEIs of Central and Eastern Europe¹ are lagging behind and fail to be competitive, not only at the global level, but also at the European level. Seeking a deeper meaning in the comparison, we may find a long-expressed concern that achieving the goals of European Union (EU) policies in the field of higher education ‘may also be particularly challenged by the concurrent enlargement of the EU with 10 new countries in Central and Eastern Europe’ (Van der Wende, 2003: 15). There is no doubt that, in the global rankings, the ‘old’ Europe looks better than the ‘new’ one. Thus, the presence (or absence) of HEIs in the global rankings raises the obvious question: does higher education in the former socialist countries hold a useful potential for the development of the European higher education area, or does it represent a challenge, and even a threat, to that development?

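Against this background, the present paper looks more closely at how HEIs from Central and Eastern European countries (CEECs) are presented, or not, in the main global university rankings: the Academic Ranking of World Universities of Shanghai Jiao Tong University, the Times Higher Education World University Rankings, the QS World University Rankings and the Leiden Ranking.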

The paper shows that most of the HEIs from CEECs remain invisible in the international and European academic world. It argues that these results not only outline the specific features and some of the problems of higher education in CEECs, but also raise questions that matter beyond the development of ranking systems, such as: How can, and should, quality of higher education be defined? How can the values of diversity, quality and social justice be simultaneously maintained in higher education? How can strategic thinking in higher education be promoted in the context of the daily struggle with financial constraints and bureaucracy?

The paper proceeds as follows. The next section reviews the relevant literature on university rankings. Data on the (non)appearance of HEIs from CEECs in global rankings are then presented. A discussion follows of higher education in CEECs in the mirror of global rankings and, vice versa, of global ranking systems through the prism of higher education in CEECs. The final section offers concluding remarks.

Is there a paradox here? The inevitability of the highly criticized university rankings

In recent years, the rankings of HEIs, and especially the global rankings, have become an intensely discussed topic. On the one hand, there is a generally shared conviction that ‘rankings are here to stay’ and there is ‘nowhere to hide’ from them (Hazelkorn, 2014: 23; Marginson, 2014: 45). On the other hand, rankings have been the target of constant criticism (Dill and Soo, 2005; Marginson, 2009; Marginson and Van der Wende, 2007; Teichler, 2011a; Usher and Savino, 2007; Van der Wende, 2008; Van Dyke, 2005). At first glance, this situation appears paradoxical. However, in view of the specifics of higher education and its developments over the last few decades, the appearance of rankings and their constant application are inevitable, as is the constant criticism levelled at them.

‘If rankings did not exist, someone would invent them’. These are the words of Philip Altbach (2011: 2), one of the best-known researchers of higher education and Founding Director of the Center for International Higher Education, Lynch School of Education, Boston College. The growing interest in rankings of HEIs and the establishment of increasingly numerous and diverse rankings are an inevitable result of the radical changes emerging in the sphere of higher education in all countries of the world in the second half of the 20th century and especially at the beginning of the present century. The trends in question are (Boyadjieva, 2012; Teichler, 2011b):

- massification of higher education and the growing diversity of students in HEIs;

- the increased competition within national systems of higher education and at international level;

- the internationalization of higher education;

- the commercialization of higher education and the entry of market mechanisms;

- the diversification of institutions offering post-secondary education; and

- the change of status of knowledge in modern societies.

In these circumstances, the potential ‘clients’ of HEIs, such as students and their families and employers, are seeking information so as to make a better-informed choice amidst the diversity of offered programmes. For its part, every HEI needs common, objective criteria and indicators for measuring its performance in comparison with other HEIs and to ascertain its specific place on the market of education services amidst the numerous institutions that enrich the body of scientific knowledge. Not least, the need for a comparative view of how the different HEIs are functioning is deeply felt at policy level, when concrete higher education policies are grounded and elaborated. That is why ‘railing against the rankings will not make them go away’ (Altbach, 2011: 5).

Rankings are also linked to the specificity of higher education as an institution. Higher education is defined as a ‘fertile ground for rankings’, because it is a field in which it is very difficult to define quality, and there are no objective measures explicitly linked to the quality and quantity of its outputs. Thus, it is argued that ‘rankings provide a seemingly objective input into any discussion or assessment of what constitutes quality in higher education’ (Morphew and Swanson, 2011: 186).

Rankings have emerged as an instrument for the evaluation of HEIs within national systems of higher education. Today, most rankings are at the national level. With the massification of higher education, and especially the unfolding processes of globalization, the need arises for a common comparative assessment of higher education in all countries.

The first global ranking of HEIs was conducted in 2003 by the Institute of Higher Education at Shanghai Jiao Tong University. The next was that of Times Higher Education, published in 2004. In the following years, several other global rankings established themselves, including the Leiden University ranking; the Scimago ranking of institutions; the QS world ranking of universities; and the European ranking U-Multirank.

The different ranking systems are based on different kinds of information and data received from different sources. An in-depth comparative study of the six global rankings was published recently (Marginson, 2014). Simon Marginson’s analysis shows that there is no perfect ranking, and each of the best known global rankings has its advantages and shortcomings. Nevertheless, the author believes that the practical issue is not how to get rid of university rankings – which is impossible in the foreseeable future – but how to develop rankings so as to minimize their negative effects and have them serve the general interest better in providing the best comparative information (Marginson, 2014: 47).

As soon as they appeared, the ranking systems were subjected to numerous criticisms by representatives of different social groups, and especially by the academic community. To generalize, we may say there are nine major arguments as regards the endemic weaknesses of rankings: (a) the vicious circle of increasing distortion; (b) endemic weaknesses of data and indicators; (c) the lack of agreement on quality; (d) ‘imperialism’ through rankings; (e) the systemic biases of rankings; (f) preoccupation with aggregates; (g) praise and push towards concentration of resources and quality; (h) reinforcement or push towards steeply stratified systems; and (i) rankings undermine meritocracy (Teichler, 2011a: 62–66). Very importantly, according to many authors, there is an accumulation of biases in rankings. Undoubtedly, global rankings favour research-intensive institutions with strengths in hard sciences, universities that use English, older institutions in countries with long-ranking traditions, HEIs in countries with steep hierarchies and with little intra-institutional diversity (Altbach, 2011: 3; Kehm, 2014; Teichler, 2011a: 67). University rankings are defined as ‘unfair’ – which is not due to the technique of measurement, but to their usages and the rationale for their existence. Rankings are seen as a driver of ‘a market-like competition in higher education’ (Marginson, 2014: 47) and as an instrument to ‘confirm, entrench and reproduce prestige and power’ in higher education (Marginson, 2009: 600).

Hence, not only are rankings inescapable, but so is the constant criticism to which they are subjected. This situation creates a need for careful scrutiny of how HEIs in CEECs present themselves in the world rankings, and especially how world rankings are used to reflect and evaluate, through a comparative perspective, the achievements and problems of HEIs in CEECs. The serious criticisms levelled at world rankings show that they create a somewhat distorted image of universities. Like distorting mirrors, they enlarge certain features, reduce others and make yet others crooked. However, they also allow us to see the situation from different angles. Thus, in most cases, the pictures drawn by rankings are not an occasion for complacency and calm contemplation but for serious reflection, which must not be postponed or underestimated.

The (non)presence of HEIs from CEECs in the global rankings: some facts

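Systematized below are some facts about the (non)presence of HEIs from CEECs in the main global rankings, namely in the Academic Ranking of World Universities of Shanghai Jiao Tong University, the Times Higher Education World University Rankings, the QS World University Rankings and the Leiden Ranking.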

Academic Ranking of World Universities of Shanghai Jiao Tong University

The Shanghai ranking is based on assessments of achievements in scientific activity using six objective indicators: alumni of an institution who have won Nobel Prizes and Fields Medals; staff of an institution who have won Nobel Prizes and Fields Medals; highly cited researchers in 21 broad subject categories; papers published in

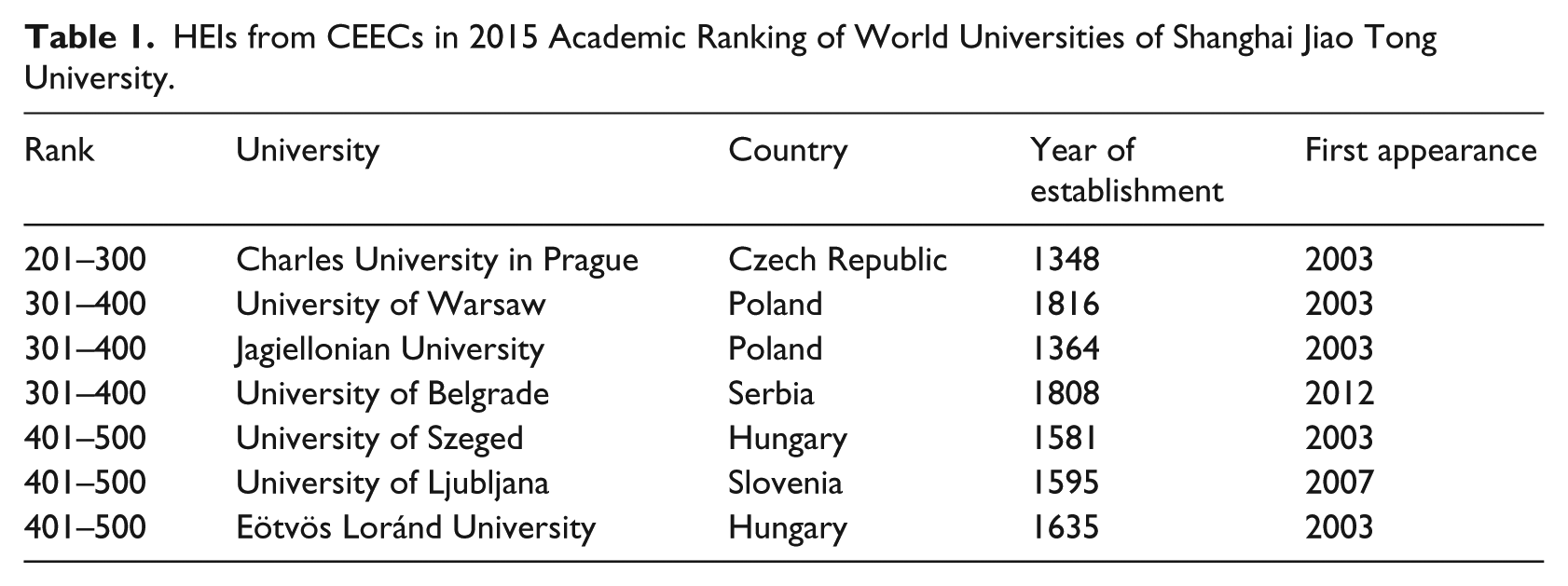

The analysis of the latest edition of the

Table 1. HEIs from CEECs in the 2015 Academic Ranking of World Universities of Shanghai Jiao Tong University.

The data show that the Czech Republic has one university among the top 300; Poland has two universities among the top 400; Serbia has one among the top 400; Hungary has two universities among the top 500; and Slovenia has one university among the top 500. Not a single university from the other CEECs is present in this ranking.

The ranking by separate fields – science, engineering, life, medicine and social science, which classifies the top 200 – does not include a single university from CEECs. In the ranking by separate subjects – mathematics, physics, chemistry, computer science, and economics and business – Charles University is in the top 200 for Mathematics (151–200) and Physics (101–150), Eötvös Loránd University is in the top 200 for Physics (101–150) and the University of Warsaw is in the top 200 for Physics (151–200).

Times Higher Education World University Rankings

The latest editions of the Times Higher Education World University Rankings include the following HEIs from CEECs:

- Charles University in Prague: placed 351–400 in the ranking for 2013/2014 and 301–350 for 2014/2015.

- University of Warsaw: placed 301–350 in the ranking for 2013/2014 and 2014/2015.

QS World University Rankings

Table 2 indicates the HEIs in CEECs included in the 2015 edition of the QS World University Rankings.

Table 2. HEIs from CEECs in the 2015 QS World University Rankings.

The data show that Poland is represented with six universities; the Czech Republic, Hungary, Romania and Lithuania each with four; Estonia with two; and Bulgaria, Croatia, Serbia, Slovakia, Slovenia and Latvia with one each. As regards positions in the ranking, the best universities are those in the Czech Republic and Poland.

Leiden Ranking

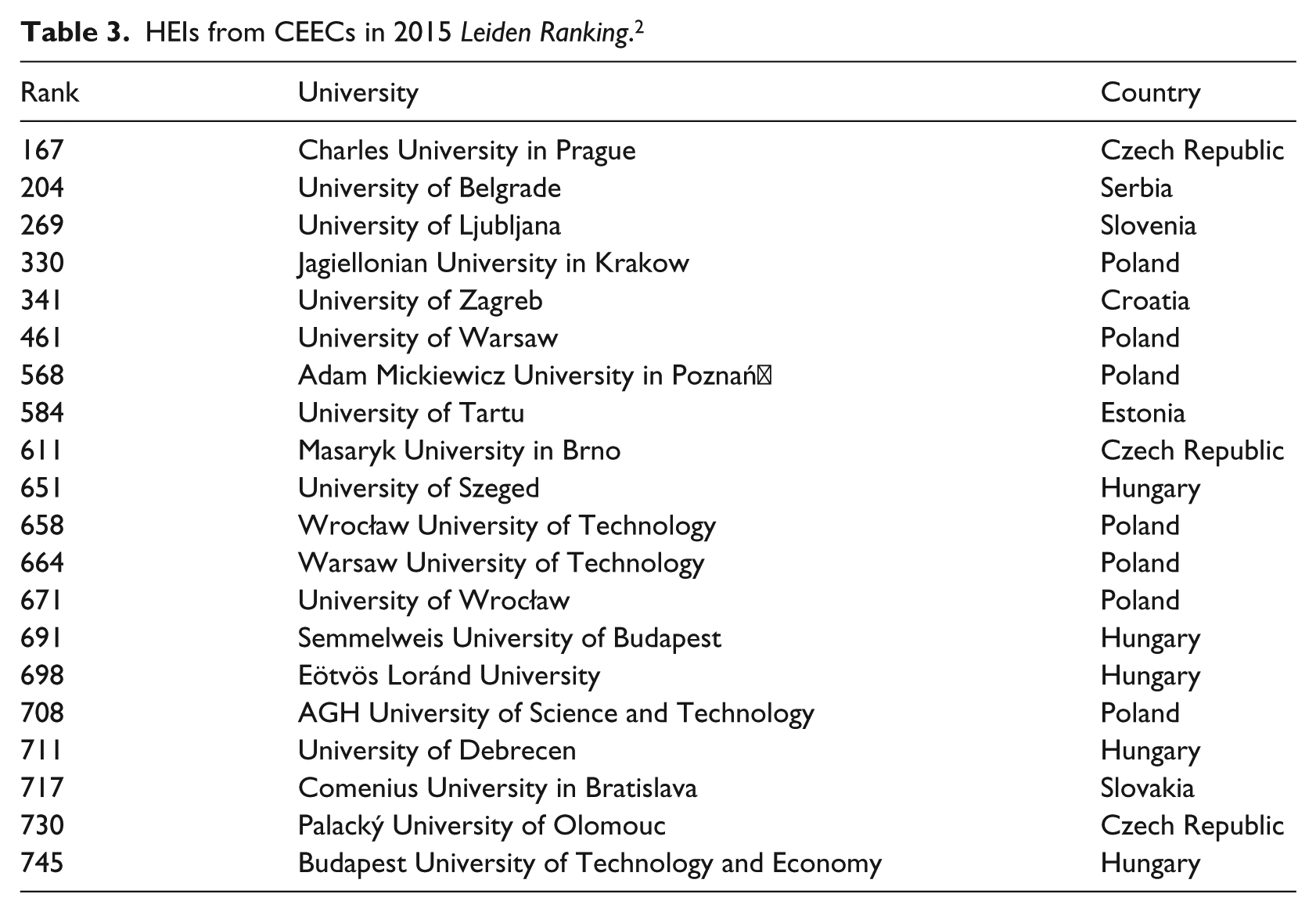

Table 3 presents the HEIs in CEECs included in the 2015 edition of the Leiden Ranking.

Table 3. HEIs from CEECs in the 2015 Leiden Ranking.

The data in Table 3 show that Poland is represented with seven universities; Hungary with five; the Czech Republic with three; and Croatia, Serbia, Slovakia, Slovenia and Estonia with one each. The other CEECs included in the analysis have no universities in the ranking.

Higher education in CEECs in the mirror of global rankings

A mere glance at the data from the four global rankings makes it evident that a very small share of the HEIs in CEECs are present there, and with a few exceptions, only in the second half or at the bottom of the rankings. These data show both the specificity of the development of higher education in these countries and the specificity of the rankings themselves. The latter are indicators not only, and not invariably, of the (relatively low) quality of higher education in CEECs, but above all, of some important structural characteristics related to the system of higher education in post-socialist European countries and of the problems these characteristics engender.

Recent transformations of higher education in CEECs

There is no doubt that the development of higher education in each of the CEECs has its peculiarities and occurs in different socio-political and cultural contexts,³ which should be carefully analysed and taken into consideration when discussing how HEIs from each of the countries are (not) present in the global rankings. Nevertheless, there are some characteristics which, although to a different degree, outline the specific picture of higher education in CEECs. Following the radical social transformations which took place in 1989 and the early 1990s, higher education in the countries of the former Eastern bloc appeared to be in a unique and highly complex situation. It had to go through two deep changes simultaneously, both of which had an essential impact on national higher education systems. The first change was related to the general social transformation of the countries that had been under communist regimes and the concurrent profound change in the principles of functioning of HEIs and regulation of their relations with the state and society. In the same period, the higher education systems in almost all countries in the world underwent major and intensive innovations in response to globalization, internationalization and the increasingly wide dissemination of higher education. As a result, the following significant innovations were introduced in the higher education systems of all CEECs, which caused qualitative changes in their character: i) emergence of the private sector; ii) introduction of new structural elements, such as the three-cycle degree system (Bachelor’s, Master’s and PhD degrees), the credit system and university quality assurance systems; iii) restoration of university autonomy and academic freedom; iv) the encouragement of mobility of students and staff; and v) introduction of competitive research funding and tuition fees for students.

In all CEECs, the expansion of higher education has led to a transformation of their higher education systems from elitist and unified to diversified systems with broad enrolment. However, the diversification and liberalization of higher education have followed different patterns: Poland and Estonia introduced very liberal rules for establishing new HEIs; Slovakia stuck to more conservative legislation; whereas Bulgaria, Hungary and Slovenia adhered to a more balanced policy (Boyadjieva, 2007; EACEA/Eurydice, 2012; Kwiek, 2013a; Simonová and Antonowicz, 2006; Slantcheva and Levy, 2007).

The qualitative transformation of higher education in CEECs has been accompanied by significant quantitative changes. In line with the worldwide trend (Schofer and Meyer, 2005), and driven by political and economic opening and liberalization (Cerych, 1997), higher education has been expanding in all CEECs. The expansion has taken place in a context of underfunding of the old public institutions and the emergence of new private institutions opening their doors to hundreds of thousands of new students (Kwiek, 2013b). Despite the general trend of expansion, the countries differ in the speed of expansion of higher education. Thus, Slovakia is the country with the highest growth in the absolute annual number of graduates per 1000 population for the period between 2000 and 2008 (14.1% per year), followed by the Czech Republic with a growth of 11.1%. In Bulgaria and Hungary, this growth is the lowest – 2.0% and 0.7% respectively. For all other countries, the growth achieved for this period is higher than the EU 27 average of about 4.5% per year (EACEA/Eurydice, 2011: 66). In terms of the Europe 2020 target for tertiary educational attainment in the age group 30–34, Hungary and Slovenia are the countries in which the percentage of graduates in this age group almost doubled between 2000 and 2010. Notwithstanding its high speed of expansion between 2000 and 2008, in 2010 Slovakia had the lowest share of graduates among those aged 30–34 (22.1%). The increase in the share of higher education graduates in this age group in Poland was almost threefold: while in 2000 the share of graduates was only 12.5%, in 2010 it amounted to 34.8%. In Bulgaria and Croatia, the increase was relatively modest. In 2010, 27.7% of people aged 30–34 in Bulgaria had a higher education degree, as compared to 19.5% in 2000. In Croatia, the share grew from 16.2% to 24.5% (Ilieva-Trichkova and Boyadjieva, 2016: 213).
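The attainment figures quoted above can be checked with simple arithmetic. The following is a minimal sketch that uses only the 2000 and 2010 shares cited in this paragraph; the names `ATTAINMENT`, `fold_change`, `point_change` and `summarize` are illustrative assumptions and are not part of the original analysis.

```python
# Shares of people aged 30-34 with a higher education degree (%), as quoted
# in the paragraph above for 2000 and 2010. All names are illustrative only.
ATTAINMENT = {
    "Poland": (12.5, 34.8),
    "Bulgaria": (19.5, 27.7),
    "Croatia": (16.2, 24.5),
}


def fold_change(start: float, end: float) -> float:
    """Return how many times larger the end share is than the start share."""
    return end / start


def point_change(start: float, end: float) -> float:
    """Return the difference between two shares in percentage points."""
    return end - start


def summarize(data: dict) -> dict:
    """Map each country to (fold change, percentage-point change), rounded."""
    return {
        country: (
            round(fold_change(start, end), 2),
            round(point_change(start, end), 1),
        )
        for country, (start, end) in data.items()
    }


if __name__ == "__main__":
    for country, (ratio, points) in summarize(ATTAINMENT).items():
        print(f"{country}: x{ratio} ({points:+} percentage points)")
```

On these figures, Poland's ratio of about 2.8 is consistent with the description 'almost threefold', whereas the ratios for Bulgaria and Croatia are roughly 1.4 and 1.5, which matches the description of their increase as relatively modest.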

Structural characteristics of the higher education systems in CEECs

The fundamental qualitative and quantitative changes that took place in higher education in CEECs, and the social-economic context of these changes, were connected with and, in turn, influenced the formation of some specific structural characteristics of the higher education system. We are referring to traits that are directly related to how these HEIs are present or absent in the global rankings, namely: i) the place of research within higher education; ii) the inherited model of specialized HEIs; iii) the existence of a large number of small and specialized HEIs; iv) the persistent underfunding of higher education; and v) the brain drain of academic staff and scientists.

The place of research within the higher education system of a given country is very important, inasmuch as in all global rankings, the indicators related to scientific production are of leading or even unique importance. However, in many CEECs (for example, Bulgaria, Czech Republic, Poland, Romania, Slovakia), there is a continued reproduction of the division, inherited from the time of the communist regimes, between research institutes united in academies of sciences, and the sector of higher education. Although to a lesser degree, the separation of teaching and research is still prominent in countries which emerged from former Yugoslavia (Vukasovic, 2016: 112). Despite the evident continuing trend of integration between teaching and research in higher education noted by Peter Scott (2007: 437), this trend is uneven (most pronounced in the three Baltic countries due to the radical transformation of their academies of sciences) and ‘the place of research within higher education continues to be unstable in contrast to the better-understood and accepted relationships between research and teaching characteristics of Western European and North American systems’. One of the results of this division is the concentration of researchers in research institutes and a sort of decreased scientific potential and capacity of HEIs. The effects of this division should be assessed against the background of the differences in research capacity within CEECs and between them and the other countries as shown by the evaluation report of the FP7 and Horizon 2020 Programme (European Commission, 2015).

It is well known that the network of HEIs in CEECs developed in the communist period included only state institutions and was characterized by significant institutional specialization. The model of the specialized HEIs (also called polytechnics or professional HEIs) emerged at the beginning of the 20th century, but was established as a dominating institutional model in most CEECs after 1944 when socialist/communist parties came to power. This model was perceived as being the most appropriate one for the implementation of the political goals of the communist parties and their ideological ambitions to achieve mass industrialization. After 1989, gradual changes were introduced in the structure and status of these specialized HEIs in all CEECs. In Hungary, mergers to create larger institutions were encouraged. In Bulgaria, many of the specialized HEIs legally acquired university status. Although this process was accompanied by real changes, in some cases, behind the displayed labels of full, multi-faculty universities, these HEIs continued to function (mainly due to the lack of qualified faculty) as specialized institutions offering specialized education in the old-fashioned disciplines and poor-quality education in the newly established ones (Boyadjieva, 2007). As a result, ‘the survival of many “Soviet-era” specialized HEIs and the comparative weakness of what might be called the “generalist” university tradition have influenced the form of restructuring in Central and Eastern European higher education systems’ (Scott, 2007: 437).

The expansion of higher education in the CEECs has been realized mainly through a significant increase in the number of HEIs. If we take into account the size of the population in these countries, the number of HEIs in each one of them is really impressive. According to the relevant ministries and national agencies for accreditation and quality assurance, there are 51 HEIs in Bulgaria, 50 in Croatia,⁴ 50 in Slovenia, 89 in Serbia, 24 in Estonia, 39 in Slovakia, 74 in the Czech Republic and 460 in Poland. In the perspective of global rankings and inclusion in those rankings, an important fact is that most newly created HEIs are small in size, narrowly specialized and offer training only in a limited number of specialties; and some of those schools have very limited scientific research activity. Thus, although there are significant differences between countries, as a rule the average size of HEIs in CEECs is much smaller than in Western Europe or North America (Scott, 2007: 427). Consequently, a considerable proportion of HEIs from CEECs prove to be uncompetitive; but even by definition, they cannot figure in the global rankings as they do not meet, for instance, the criterion of the

The presence (or absence) of most HEIs from CEECs in the global rankings is also influenced by the chronic underfunding of higher education and ‘brain drain’ of researchers and academic staff, inasmuch as these are directly related to the quality of research and the publication activity of HEIs. The chronic underfunding of higher education in CEECs is clearly evident from statistical data, regardless of whether funding is measured as the percentage of GDP devoted to higher education, as the percentage of GDP devoted to research or as funding per student (European Commission/EACEA/Eurydice/Eurostat, 2012; European Commission DG EAC, 2014a, 2014b).

Data show that the emigration rates among tertiary-educated people tended to increase in the period between 1990 and 2010 in all CEECs, the highest increase being in Bulgaria, where it was eightfold (from 1.53% to 12.22%). As of 2010, the emigration rate among the tertiary-educated is highest in Romania (20.36%) and lowest in Slovenia (9.59%) (Brücker et al., 2013). The migration of highly skilled professionals from CEECs to the Western parts of the European Union has additionally increased after the financial crisis (Nedeljkovic, 2014). It is acknowledged that ‘all Central and Eastern European higher education systems have suffered from “brain drain” to the West, currently estimated to be 15% of teachers and researchers’ (Scott, 2007: 434) and that one of the fields in which the brain drain has created specific shortages in CEECs is science and research (Ionescu, 2014).

While the global rankings make visible some important structural characteristics of the higher education systems in post-communist countries, they also highlight the basic problems in the development of higher education in CEECs. That is why they are often used as an external reference point in policy debates. For instance, the debates on the quality of education in Poland present a case in which the rankings are perceived as a feature of modern higher education as opposed to the undesired communist legacy, which lends them credibility (Erkkilä, 2014: 97). The generally unsatisfactory performance of Polish HEIs in the global rankings has proved to be an important motor for reforms in higher education, inasmuch as ‘[t]op decision-makers explicitly state that their objective is to give Polish universities a decisive push to improve their position in leading international rankings’ (Dakowska, 2013: 109). The use of global rankings as an external reference point is fixed in basic strategic documents that outline the policies and directions for reform of higher education in Poland (Dakowska, 2013; Kwiek, 2016). The absence of Bulgarian HEIs in the global rankings was also one of the arguments adduced both by members of the academic community and by journalists in the debates surrounding the adoption of the new Strategy for the Development of Higher Education in Bulgaria (Boyadjieva, 2012). It should also be pointed out that in CEECs ‘there is no “obsession” with rankings at any institutional level’ (Kwiek, 2016: 167). The causes of this can be sought in the lack of confidence of HEIs from the CEECs that they can be competitive in the global academic area (Kwiek, 2016: 167), and also in the perception that the global rankings are unfair with regard to HEIs from post-communist countries.

The feeling of unfairness is born out of the strong connection between the position of HEIs in global rankings and their budget, while HEIs from CEECs have been underfunded for decades; the feeling is also due to the fear that global rankings may contribute ‘to the brain drain and to a further marginalization of the Central and East European academic space’ (Dakowska, 2013: 120, 126).

Global ranking systems through the prism of higher education in CEECs

The analysis of the ways in which HEIs from CEECs are present, or absent, in the global rankings defines a specific perspective for considering and distinguishing the important problems related to the development of higher education, but also concerning the rankings themselves as monitoring instruments. The most important problem is related to defining the concept of quality of higher education.

Meaning and measuring of quality of higher education

Comparative studies on rankings, of which the largest-scale ones are those of Nina Van Dyke (2005), Alex Usher and Massimo Savino (2007) and Simon Marginson (2014), have definitely shown that the ranking systems differ in their goals, scope, methodology and the kind and reliability of data used. These differences are so large that no two systems are alike, and between some systems, there is not even a single coinciding indicator (Usher and Savino, 2007: 28). Most importantly, the systems are based on different concepts of quality of higher education. All classifications claim to rank HEIs by quality – ‘the best universities’, ‘top 100 universities’, ‘top 500 universities’. But not a single ranking gives a definition of quality of higher education, and there is no generally shared understanding on this concept. Looking at how the indicators used are related to the concept of quality of higher education, we can identify the following trends:

- Although to a lesser degree than the other rankings, the global rankings include indicators related to the conditions, prerequisites and results of quality education. For instance, they include the following: the amount of research activity, citations of publications, awards won by teachers, the number of students per teacher, the proportion of international students, the proportion of international teachers, the income of teachers, the funds for research and the prestige of the institution among the academic community and employers.⁵

- All global rankings employ indicators for measuring research activity; moreover, these indicators are given very great weight and, in some cases, are the only ones used.

- Unlike the national rankings, the global ones have no indicators based on the opinion of students involved in the teaching process.

- The global rankings use no indicators that directly reflect the quality of education results, whether this is assessed by an external, independent assessment of the knowledge and skills of graduates or by a consideration of professional realization. The only exception to this feature is found in the Academic Ranking of World Universities of Shanghai Jiao Tong University, which includes as an indicator the alumni of an institution who have won Nobel Prizes and Fields Medals.

In recent years, through the project ‘Assessment of Higher Education Learning Outcomes’ (AHELO) of the Organization for Economic Co-operation and Development (OECD), an attempt was made to achieve some form of unified measuring of knowledge and skills of graduates, similar to the Programme for International Student Assessment (PISA) survey of students in secondary education (Tremblay et al., 2012). Following the feasibility study, which was conducted and evaluated in 2012, the OECD envisaged a full-scale implementation of the project, to be realized in 2015. The assessments obtained by the feasibility study are rather contradictory, and many scholars have defined it as a failure. According to Philip Altbach (2015), ‘it seems highly unlikely that a common benchmark can be obtained for comparing achievements in a range of quite different countries’. He also raises the question, ‘Who is to determine what the “gold standard” is in different disciplines across institutions and countries?’ and argues that, because courses and curricula vary significantly across countries, ‘AHELO would be testing apples and oranges, not to mention kumquats and broccoli’. Some of the most prestigious universities were strongly opposed to AHELO. For instance, the presidents of leading US and Canadian universities, in a letter to the OECD, pointed out that ‘AHELO fundamentally misconstrues the purpose of learning outcomes, which should be to allow institutions to determine and define what they expect students will achieve and to measure whether they have been successful in doing so’ (Husbands, 2015). In turn, the supporters of AHELO feel that ‘[i]nstitutional opposition to AHELO, for the most part, plays out the same way as opposition to U-Multirank, it’s a defence of privilege: top universities know they will do well on comparisons of prestige and research intensity. They don’t know how they will do on comparisons of teaching and learning’ (Usher, 2015: 1).

The intensive discussion about the attempt to develop a higher education equivalent of PISA, and the rejection of this idea by some of the universities holding top places in the league tables, which led to the failure of AHELO, have once again clearly demonstrated the difficulties in defining and measuring the quality of higher education. They have also shown that the ranking systems are not a neutral technical instrument, but are part of, and the basic mechanism for, the affirmation and redistribution of positions in higher education. These positions have great symbolic significance, and they provide an advantage in the competition for attracting high-quality academic staff and new material resources.

Drawbacks in the global rankings from the CEECs’ perspective

The way in which HEIs from CEECs are present or absent in the global rankings provides further proof for the basic criticisms levelled at those rankings.

[r]anking reinforces the advantages enjoyed by leading universities. It celebrates their status and propels more money and talent towards them, helping them to stay on top. It is difficult for outsiders, emerging universities and countries to break in. Rankings are not ‘fair’ to competing universities. The starting positions are manifestly unequal.

Other authors also argue that global rankings privilege older, well-resourced universities, whose comparative advantages have accumulated over time (Hazelkorn, 2015).

A mere glance at the performance of HEIs from CEECs in global rankings shows that the highest-scoring universities are also some of Europe’s oldest universities – Charles University in Prague (1347), Jagiellonian University in Cracow (1364), Vilnius University (1579), University of Tartu (1632), University of Ljubljana (1595), University of Szeged (1581), University of Belgrade (1808) and University of Warsaw (1816). Yet it is also evident that not all the universities in CEECs with a long history have found a place in the global rankings, and some are at the bottom of the ranking – for instance, the University of Wroclaw (1505), Palacký University (1573) and the University of Pecs (1367). The conclusion is clear: the universities that are included in the global rankings have a long and well-established history, but a long history alone is not enough to guarantee a presence in them.

Conclusion

HEIs from CEECs find themselves in a strongly competitive academic environment. Global rankings are a result of increased competition between HEIs at the European and world level and, at the same time, are instruments that reinforce and further stimulate competition. Despite the numerous and constant criticisms of rankings, coming from the academic community and from the public at large, there is a growing conviction that the purpose of this critical attitude towards ranking systems is to perfect them, not to reject them (Marginson and Van der Wende, 2007; Van der Wende, 2008; Van Dyke, 2005). Under these conditions, it is not a matter of choice whether a university will or will not be included in the international scope of competitive comparisons. As a rule, global rankings use indicators for which the data are contained in international research platforms or in international studies. This means that all HEIs, including those from CEECs, are subject to global ranking. It is obvious that for the most part they are not present in the rank lists; however, this does not mean that they do not participate in the global competition and classification, but that their results on the indicators in question are not good enough to make these institutions easily, or at all, recognizable.

The present analysis shows that the achievements and the problems of HEIs from CEECs should not be interpreted one-sidedly or in a simplified way, but from different perspectives and along different dimensions that are viewed as interconnected. Above all, the fact that a considerable part of HEIs from CEECs does not appear in the global rankings clearly demonstrates that their development does not meet the global ranking criteria. Although this does not necessarily show that the quality of higher education in CEECs is unsatisfactory or poor, it does point to the existence of concrete problems in the functioning and the performance of those HEIs, especially regarding scientific research. The HEIs from CEECs which have the highest positions in the global rankings are those with high scientific achievements. There is also a distinct problem regarding the level of prestige and the reputation of HEIs of the former communist countries. It is well known that reputation is one of the most important intangible organizational assets – one difficult to build and easy to lose (Morphew and Swanson, 2011: 191). In this respect, the challenges facing HEIs from CEECs are all the greater inasmuch as they have to build a reputation on the basis of achievement while often struggling with a stigmatized image inherited from the past: that of ideologically bound institutions in which change is difficult.

The presence of HEIs from CEECs in global rankings no doubt reflects the specificity of the development of higher education in these countries. As leading researchers on higher education have recognized:

[h]igher education in the region has had to be reconstructed on a scale, and at a speed, never attempted in Western Europe. Adjustments that have required long gestation in the West have had to be accomplished within four or five years. For example, in the West complex issues such as the relationship between universities and other higher education institutions and between higher education and research have been managed by a lengthy process of reform and negotiation stretching over several decades; in Central and Eastern Europe, such issues had to be immediately resolved after 1989. (Scott, 2007: 436)

An important criterion used in global rankings is research results, measured mainly by the number of publications indexed in world research platforms and by the number of citations. This raises the urgent issue of the conceptual framework on which the national higher education policies in CEECs are based and within which they develop. These conceptual frameworks should offer a clear answer to two basic questions: What is the place of research in higher education? What kinds of HEIs figure in the concrete national system of higher education and how are they defined in terms of the research they perform? In formulating their stances on these questions, policymakers and the academic community in each country should bear in mind the opinion of leading researchers that ‘[un]evenness in research performance is inevitable, if not necessary to creativity itself’ (Marginson, 2011: 32). But they should also take into consideration that ‘[e]xperience indicates that it is not possible to create several world-class universities (with prospects for a sustainable future) in any one country without investing in the national higher education system as a whole’ (Yudkevich et al., 2015: 415).

The examination of global rankings and the indicators they use, through the prism of the positions held by HEIs from CEECs in those rankings, makes the specificity and limitations of the rankings all the more visible. This analysis enables us to formulate new arguments in support of the need to improve the methods, and the field of application, of rankings. Global rankings are certainly an important source of information and an instrument for measuring and comparing the achievements of HEIs by certain indicators. But they are only one of the mechanisms – and not a perfect one at that – for assessing the quality of higher education. A great challenge for policymakers and for the academic community is to strengthen and use global rankings to stimulate, not to penalize, the development of concrete HEIs and national systems of higher education.

Acknowledgements

I would like to thank the editors of the issue and the two anonymous reviewers for their valuable comments on an earlier version of the paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: The author gratefully acknowledges the support of the project ‘Culture of giving in the sphere of education: Social, institutional and personality dimensions’ (2014–2017) funded by the National Science Fund (contract number K02/12, signed on 12 December 2014), Bulgaria.