Abstract

The rapid advancement of artificial intelligence (AI) is transforming global industries, yet its adoption in developing economies raises urgent questions about fairness, transparency, accountability, and legal oversight. This multidisciplinary empirical study examines the regulatory challenges of AI adoption in Bangladesh's Ready-Made Garment (RMG) sector, a labour-intensive industry central to national economic growth. Drawing on stakeholder insights, the research highlights key concerns relating to fairness and transparency, accountability and liability, privacy and data protection, risk regulation, and the critical need for education and awareness. The findings expose significant gaps in sector-specific governance and institutional readiness. In response, the study proposes a seven-step process to guide the development of effective and context-sensitive regulatory frameworks. While focused on Bangladesh, the recommendations offer broader relevance to other common law countries such as India and Malaysia. This article contributes to building a more inclusive, ethical, and enforceable foundation for AI governance in emerging economies.

Introduction

Artificial intelligence (AI) represents a paradigm shift in contemporary technological development, offering unprecedented opportunities to transform how humans interact with machines and data. 1 Defined broadly as the ability of machines to perform cognitive functions traditionally associated with human intelligence, such as perception, reasoning, learning, and creativity, AI has evolved from a theoretical concept into a practical and increasingly pervasive force in global economic and social systems. 2 Although AI has garnered significant attention in recent years, it is not a novel innovation. The term ‘artificial intelligence’ was coined in 1955 by John McCarthy and his research team, who hypothesised that every aspect of learning or intelligence could be so precisely described that a machine could simulate it. 3 This vision was built upon foundational contributions by pioneers such as Alan Turing and has since developed into a complex field that now underpins numerous technological applications. 4 Some scholars have likened the significance of AI to that of electricity, underscoring its transformative and foundational impact across sectors. 5

The contemporary wave of AI advancement, particularly the proliferation of generative AI technologies, has accelerated the adoption of AI in diverse domains, including 6 manufacturing, marketing, transportation, finance, law, healthcare, agriculture, energy, education, and retail. 7 According to Grand View Research, the global AI market is projected to grow at a compound annual growth rate of 37.3% between 2023 and 2030. 8 Organisations increasingly utilise AI for data analysis, automation, and decision-making, enabling improvements in efficiency, precision, and cost-effectiveness. 9 However, the diffusion of AI technologies remains uneven. The majority of case studies, use cases, and commercial deployments originate in high-income, digitally advanced economies. In contrast, many developing countries have not yet achieved comparable levels of AI integration. 10 This disparity invites critical inquiry into the structural, institutional, and legal constraints that inhibit AI adoption in lower- and middle-income contexts.

Among the factors contributing to this lag are inadequate technological infrastructure, limited availability of skilled professionals, weak policy coordination, and underdeveloped legal and regulatory systems. Understanding how these challenges interact is crucial for formulating effective national strategies for AI adoption. Our study adopts a multidisciplinary lens to examine these issues, with a particular focus on Bangladesh. Bangladesh has witnessed sustained economic growth in recent decades, underpinned by the Ready-Made Garment (RMG) industry, which remains the country's largest export sector and a major source of employment.

Although Bangladesh has sustained economic growth through the RMG industry, the country has also started to feel the pressure of higher labour costs, low productivity, exploitation, and skill deficits. 11 The question is: Can AI be the solution to these challenges? International agencies, including the World Bank, have identified the potential for AI and robotics to modernise this labour-intensive industry by addressing high labour costs, low productivity, and skill deficits. 12 This assessment does not negate the sector's economic success; rather, it reflects structural vulnerabilities that coexist with growth and pose risks to long-term competitiveness in an increasingly automated global supply chain. Moreover, the adoption of AI in a labour-intensive sector raises legitimate concerns about employment displacement and workforce adjustment, underscoring the need for an effective legal and regulatory framework. The RMG sector thus presents a compelling case for studying AI adoption in a developing, industrialising economy.

The Government of Bangladesh has taken concrete steps to integrate AI into its national development agenda. 13 The National Strategy for Artificial Intelligence (2019–2024) outlined an ambitious framework for using AI to enhance public service delivery, economic competitiveness, and data-driven policymaking. A draft National Artificial Intelligence Policy 2024 is currently under consideration, with proposed objectives including the development of a skilled AI workforce, the promotion of AI research, and the establishment of ethical and legal frameworks to guide AI development and deployment. 14 Significantly, the draft policy acknowledges that effective AI governance necessitates supportive regulatory infrastructure. 15 However, as our study demonstrates, the regulatory mechanisms necessary to support these ambitions remain underdeveloped and fragmented.

An empirical study by Md. T. Islam and others surveyed employees across multiple business organisations in Bangladesh with experience in AI implementation. 16 The study revealed that while 96% of respondents recognised the operational benefits of AI, including gains in efficiency, competitiveness, and supply chain optimisation, 93.3% expressed serious concerns regarding the lack of regulatory frameworks and technical infrastructure. 17 Participants further highlighted challenges related to data privacy, cybersecurity, and ethical governance. 18 These findings indicate a growing recognition of the potential benefits of AI, coupled with widespread unease about the institutional and legal capacity to regulate its use effectively.

Although prior studies have identified various barriers to AI implementation in Bangladesh, few have sufficiently explored the legal and regulatory dimensions in detail. Much of the existing literature tends to focus either on the socio-organisational aspects of AI adoption 19 or on narrow doctrinal issues, such as data protection, 20 intellectual property, 21 liability, 22 or algorithmic bias. 23 Such compartmentalised analyses offer only fragmented insights into the broader regulatory landscape. Moreover, research on AI in developing countries has largely been confined to sectors such as healthcare, 24 agriculture, 25 or generic manufacturing, 26 with limited attention to high-employment, export-oriented industries such as garments, where the social and economic stakes of AI adoption are particularly pronounced.

At a general level, the legal and regulatory challenges posed by AI are now widely recognised across jurisdictions. These include the protection of fundamental rights, including freedom of thought, conscience, and expression, which in an AI-mediated environment increasingly manifest as demands for transparency and accountability in algorithmic decision-making and the safeguarding of privacy and personal data, alongside unresolved questions of intellectual property and copyright in AI-generated content. These rights-based concerns are embedded within broader governance principles of fairness, transparency, and trustworthiness that underpin responsible AI deployment. While these challenges are widely acknowledged in the literature, their regulatory salience varies across jurisdictions. In Bangladesh, factors such as the economy's heavy reliance on labour-intensive manufacturing, institutional capacity constraints, and export-oriented regulatory pressures shape which AI-related risks are prioritised and how they are articulated in practice.

Hence, while these challenges are global in nature, their legal articulation, institutional enforcement, and practical impact vary significantly across national contexts. This article examines these core challenges within the specific regulatory, constitutional, and policy framework of Bangladesh, with a particular focus on how existing laws address the realities of AI adoption in a developing economy. While this article uses the RMG industry as a focal empirical case, the legal and regulatory issues examined are not confined to that sector. Issues of fairness and transparency, accountability and liability, privacy and data protection, and institutional readiness arise across AI applications in both public and private sectors. The RMG industry is employed in our study as a high-employment, export-oriented sector where the social and economic implications of AI adoption are particularly visible. Accordingly, the analysis generates insights of broader relevance for AI governance in Bangladesh and offers lessons applicable to other sectors and comparable jurisdictions. While labour impacts form an important background concern, our study focuses on legal and regulatory readiness rather than providing a sector-specific labour market analysis.

Our study employs a multidisciplinary and empirical methodology to identify critical regulatory deficits, evaluate the adequacy of existing policy instruments, and propose strategic legal reforms tailored to the socio-economic context of the country. The findings have broader relevance for other common law jurisdictions that share similar economic structures and regulatory trajectories.

The remainder of this article is structured as follows: Section ‘AI policy and Law in Bangladesh’ reviews recent developments in AI policy and legal frameworks in Bangladesh, with particular attention to the national strategy documents and draft legislation. Section ‘Methodology’ explains the methodological design, including the rationale for the qualitative and empirical approach. Section ‘Discussion and suggestions’ presents and analyses the findings, offering critical reflections on stakeholder perspectives and legal-policy gaps. Finally, Section ‘Conclusion and recommendations’ synthesises the key insights and proposes recommendations for enhancing AI governance in Bangladesh and comparable jurisdictions.

AI policy and Law in Bangladesh

Bangladesh is diligently working to maintain its position as a leading global player in the realms of Information Technology (IT) and IT-enabled Services (ITeS). A central goal of the current government is to elevate this South Asian country into one of the most technologically advanced nations on the global stage in the coming years. 27 Under the visionary initiative known as ‘Digital Bangladesh’, which epitomises the idea of incorporating modern technology into every aspect of a nation's life, 28 esteemed organisations such as JP Morgan, Goldman Sachs, and Gartner have recognised Bangladesh as an exemplary model for the future of IT and ITeS. 29

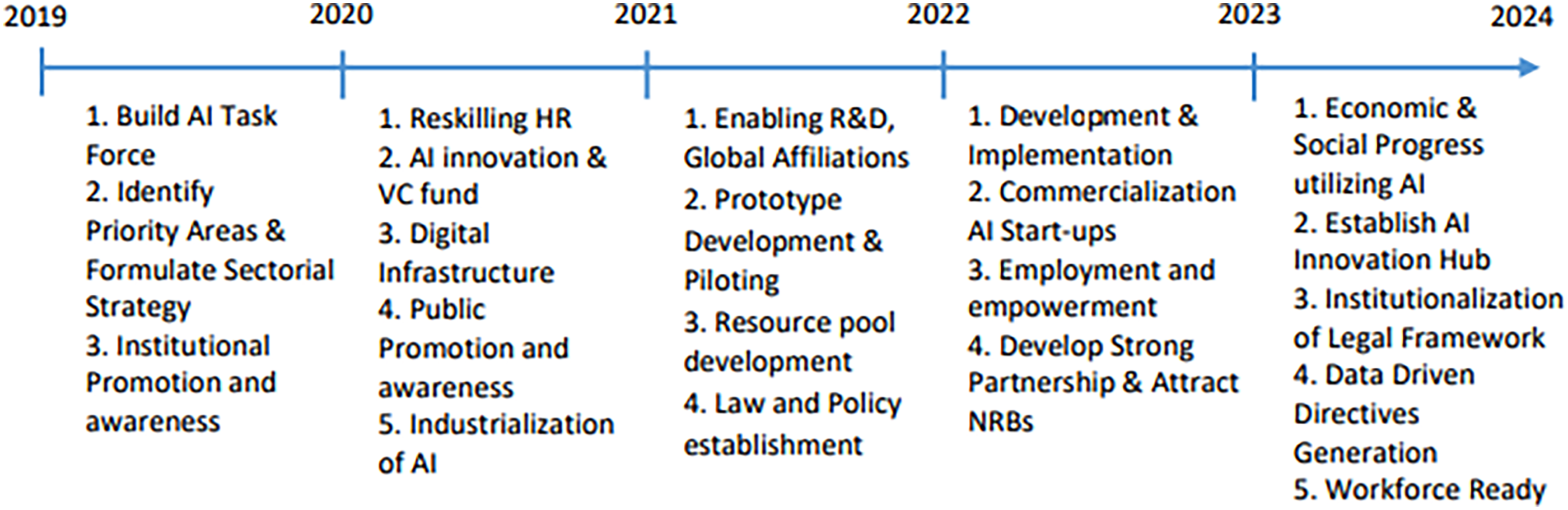

Additionally, the Information and Communication Technology (ICT) Department of Bangladesh has formulated the National Strategy for Artificial Intelligence 2019–2024 (hereinafter ‘the strategy’), which provides a comprehensive plan aimed at harnessing the power of AI to accelerate the country's digital transformation and economic development. 30 A summary roadmap outlining the broader strategy steps for Bangladesh's AI development over the years is presented in Figure 1.

Information and Communication Technology Department (ICTD), national strategy for artificial intelligence Bangladesh (2019) p. 4.

According to the strategy, AI is seen as a key accelerator for Bangladesh's digitisation efforts, aligning with the government's vision of adopting future technologies such as AI, Robotics, Big Data, Blockchain, and IoT. The strategy recognises the substantial economic benefits of AI, including the potential to double annual economic growth rates by 2035, enhance labour productivity by up to 40%, and drive economic growth through increased customer demand and product innovation. 31 The strategy is built on six strategic pillars: research and development; skilling and reskilling the AI workforce; data and digital infrastructure; ethics, data privacy, security, and regulations; funding and accelerating AI start-ups; and industrialisation for AI technologies. Each pillar has its own roadmap and action plan. The strategy also considers infrastructure readiness, awareness, resources, social and legal challenges, and other critical factors. 32

Furthermore, the draft National Artificial Intelligence Policy 2024 endeavours to establish a ‘Smart Bangladesh’ that effectively utilises AI for the betterment of citizens, economic growth, and sustainable development. Selected sectors, such as the RMG industry, will experience the advantages of AI integration, leading to improved productivity and quality through various applications such as predictive maintenance and process optimisation. The policy underscores the importance of upholding principles such as equality, fairness, transparency, and accountability in AI implementation, while also recognising and addressing challenges related to risk, trustworthiness, and privacy concerns. It also emphasises the necessity of updating the strategy with a comprehensive framework outlining goals, policies, and initiatives for AI development. 33

There are also several existing legal frameworks applicable to AI development within the country. Article 39 of the Constitution of Bangladesh ensures citizens’ fundamental rights to freedom of thought, conscience, and speech, with the right to information being an integral part of these rights. Freedom of thought and conscience implies that individuals have the right to form their own opinions and beliefs. When it comes to AI, this means that individuals have the right to use, develop, or interact with AI systems in ways that align with their personal values and beliefs. It also means that AI should not be used to manipulate or control individuals’ thoughts and beliefs. Governments and organisations using AI should respect this principle and ensure transparency and accountability in AI systems. 34

On the other hand, freedom of speech entails the right to express one's opinions and ideas. In the context of AI, this means that AI should not be used to suppress free speech or promote harmful content. 35 Governments and organisations should strike a balance between regulating harmful AI uses and protecting free speech. 36

Consequently, the right to information is crucial in the AI context because it relates to access to data and knowledge. Citizens should have access to information about AI systems that impact their lives, such as how AI algorithms are used in decision-making processes. Governments and organisations that use AI should be transparent about their AI systems, including data collection, processing, and decision-making. 37 They should provide citizens with the necessary information to understand and challenge decisions made by AI.

Another significant legal aspect related to AI is the issue of privacy and the protection of personal information. The regulations surrounding privacy and AI can be quite intricate, yet they are pivotal in determining how AI systems gather, utilise, and handle personal data. 38

Although the Constitution does not explicitly state that citizens have a fundamental right to privacy, the judiciary in the case of

Furthermore, the ICT Act (2006), the Right to Information Act (2009) (the RTI Act), and the Digital Security Act (2018) (the DSA) contain specific privacy regulations. These regulations may come into play if AI poses a threat to individuals’ privacy and the security of their data. Building on these frameworks, Bangladesh has also moved beyond an initial Data Protection Bill towards a dedicated Personal Data Protection Ordinance (PDPO) that adopts a more comprehensive, rights‑based approach to data governance. This emerging regime draws on global standards such as the EU’s General Data Protection Regulation (GDPR) by emphasising consent, purpose limitation, data minimisation, accuracy, and security safeguards, and by defining the responsibilities of data controllers and processors handling personal information relating to individuals in Bangladesh.

Yet these instruments still do not specifically address AI‑generated misinformation, discriminatory outputs, or other algorithmic harms. Assigning liability to AI for generating bias or misinformation remains a global concern, 41 and Bangladesh is no exception. As in most jurisdictions, Bangladesh relies on general ICT, cybersecurity, and data‑protection laws to respond to AI‑related risks, and clear, AI‑specific liability rules for misinformation and bias are still largely undeveloped.

Beyond the realms of privacy and misinformation, AI also presents a significant concern in the domain of copyright. AI can generate text, music, art, and other creative content autonomously. This raises questions about the ownership of such content. Who owns the copyright when AI generates something? Is it the AI creator, the user, or nobody? Clear legal frameworks are needed to address these issues. The Copyright Act of 2000 of Bangladesh (Copyright Act) offers protection for various creative works, including computer databases, considering them a form of ‘literary work’ within the law. 42 However, the Copyright Act in no way addresses the challenges of AI-generated content. Determining whether AI-generated content qualifies as ‘fair use’ or ‘transformative’ under copyright law can be intricate. 43 Courts may need to assess if AI-created works significantly transform or repurpose copyrighted material. Then again, AI often relies on datasets that contain copyrighted material. Ensuring proper licensing and attribution for these data sources can be challenging, and failing to do so may result in copyright violations. Additionally, defining liability and ownership concerning AI-generated content remains a challenge. 44

When it comes to penalties for AI-related offences, the Penal Code of 1860 may serve as an effective tool. The Code imposes penalties, including imprisonment and fines, for offences such as data theft, property misappropriation, and criminal breach of trust. While the Penal Code primarily addresses movable property, its definition broadly encompasses tangible assets of ‘varied kinds’, except for land and structures permanently affixed to the ground. Given the breadth of this definition, computer databases can fall under the protective purview of the Penal Code.

While these constitutional provisions, statutes, and draft instruments provide points of legal relevance for AI governance, they do not constitute a coherent or comprehensive regulatory framework for AI. Existing laws address AI-related issues only indirectly or incidentally and were not designed to respond to algorithmic opacity, automated decision-making, or distributed accountability across AI value chains. As a result, fundamental concerns such as fairness, transparency, liability allocation, and AI-specific data governance remain inadequately addressed. This fragmentation significantly constrains the capacity of the current legal framework to respond effectively to the challenges identified in our study.

Methodology

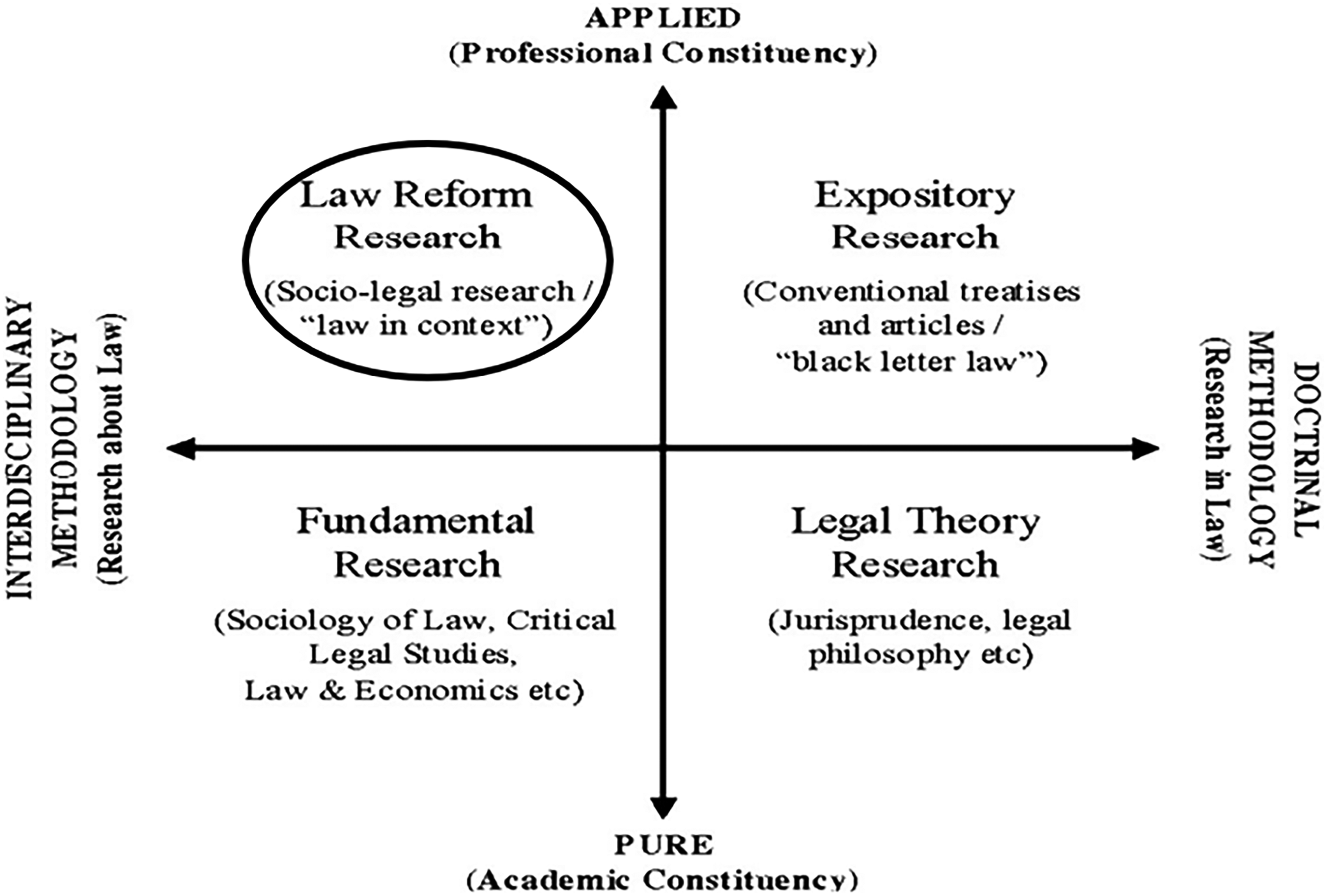

In a field where comprehensive research on AI integration from a legal perspective is scarce, our study draws insights from Arthurs’ 45 taxonomy of legal research styles, as outlined in his report on legal education and research in Canada (Figure 2). It aligns with the field of law reform research (Figure 2), often referred to as socio-legal research. Law reform research is ‘research about law rather than research in law’. 46 Its aim is to explore how the law functions within society and to propose evidence-based reforms that address real-world issues. Law reform research actively engages various stakeholders in this process, with the goal of improving legal systems and their impact on individuals and communities.

Legal research styles, Arthurs, 1983.

Based on the review of extant literature, Hanley and others 47 argued that despite its potential in law reform research, the role of qualitative research methods has not been examined for different law reform tasks. They highlighted that using a qualitative research method is useful in law reform research in the areas of identifying the need for law reform, finding and examining strengths and limits of law reform options, evaluating and testing a law reform proposal, and evaluating the outcomes of an implemented law reform. They argued that stakeholder and expert consultation-based qualitative research through interviews or focus group discussions with organisations involved in law reform facilitates the gathering of ‘bottom-up’ perspectives in law reform research.

Linos and Carlson 48 also noted that qualitative empirical methods have not been systematically used to review law. In this regard, we found that law reform studies have increasingly been using qualitative research methods. For example, Keogh and others 49 conducted qualitative semi-structured interviews about abortion law reform in Australia with experts in abortion provision from a range of health care settings. Rustin 50 interviewed women and conducted focus groups with them in her law reform research on gender legislative reforms in South Africa. Based on these arguments and applications of qualitative methods in law reform research, our project used a qualitative research method by interviewing diverse stakeholders who are involved in organisations that provide notable legal perspectives on AI integration.

We employed a non-probability convenience sampling technique to recruit participants from diverse stakeholders in Bangladesh with expertise and experience related to AI adoption. Participants included individuals affiliated with RMG organisations responsible for overseeing AI integration within their respective organisations (4), senior officials from government bodies (5), legal academics specialising in AI (4), and an AI specialist from a civil society organisation (1). In total, 14 semi-structured interviews were conducted. Collectively, the research participants had substantial knowledge and extensive experience in AI and Big Data analytics in a range of roles.

All interviews were conducted in Bengali with the consent of participants and subsequently translated and transcribed into English. The interview data were then systematically coded using a combination of coding summaries and manual interpretation, in harmony with the established coding structure. 51 A thematic content analysis of the entire data set was then undertaken to locate recurrent themes.

Discussion and suggestions

AI is a rapidly evolving field with distinct challenges, both globally and within Bangladesh. As indicated in the ‘AI policy and Law in Bangladesh’ section, the government has already implemented an AI policy. 52

In this regard, participants working for the government to promote the adoption of AI mentioned:

With the goals of elevating Bangladesh to a middle-income country by 2030 and achieving a ‘smart’ status by 2041 in mind, there is a strong desire to assess the state of artificial intelligence (AI) readiness. To do this, specific areas of focus have been identified in the strategic plan. These areas include: (1) Manufacturing, with a special emphasis on the garment sector; (2) Trade and commerce; (3) Transportation; (4) Public service delivery; (5) Agriculture. [P3]

We are dedicated to realising Vision 2041 by focusing on … four pillars: e-governance, connectivity, human resource development, and ICT business promotion…. Our digital transformation foundation, initiated through Digital Bangladesh, includes updated ICT policies (2009, 2015, 2018) encompassing areas like digital government, security, equity, education, innovation, skill development, and AI. An Action Plan for AI and Robotics outlines ministry roles, aligning with our broader ICT strategy…. It is important to clarify that we are indeed engaging with the industry. While there is room for improvement and we acknowledge that, we are committed to progressing steadily and consistently to ensure our policies are effective. [P1]

In the course of a conversation, a senior representative from the RMG sector noted that, although the government has made progress in digitisation and AI adoption, the RMG sector should be prioritised:

The government is consistently emphasising digitalisation in Bangladesh… I strongly believe that, for better economic growth, the government should prioritise the ready-made garment (RMG) sector, as it accounts for over 80% of our exports. [P4]

Concerns relating to AI

Unlike traditional algorithms, AI, particularly through machine learning (ML) algorithms, autonomously learns from extensive datasets, relying on intricate correlations rather than predefined rules. This autonomy reduces human control over decisions, posing significant challenges for governments in crafting effective policies to govern AI. 53 ML systems’ inherent opacity and unpredictability present technical hurdles for ensuring fairness and transparency, accountability and liability, and privacy and data protection. This section of the article deals with these issues by utilising data obtained from interviews, analysing legal positions overseas, and incorporating scholarly recommendations. The aim is to address these challenges within the specific context of Bangladesh, providing valuable insights and potential solutions.

Fairness and transparency

All participants were of the view that ‘AI will play a pivotal role in shaping critical decisions in our society and daily lives’ [P8]. However, it ‘poses a unique challenge for Bangladesh, a country where fairness and transparency in day to day public service has often been questioned’ [P6].

AI, ML algorithms, and automated decision-making systems 54 are frequently employed to make crucial decisions in various government and non-government sectors. 55 However, issues related to unfairness, bias, and prejudiced treatment consistently arise and are widely recognised as significant challenges associated with their usage:

The use of different data sets and algorithms in analysing and predicting data in the world of big data can raise concerns about potential violations of individuals’ fundamental rights. Moreover, it can unintentionally result in unequal treatment and indirect bias against groups that share similar characteristics. This becomes especially evident when considering fairness and equal opportunities in areas like education and employment. These challenges can surface when evaluating job candidates, conducting recruitment procedures, or studying changing consumer behaviours. [P14]

Brintrup and others 56 argue that in order to ensure fairness in the usage of AI in manufacturing, AI development cycles should include methods for checking bias in both data and decision-making processes. They refer to ML fairness toolkits that have been developed to assist practitioners in identifying and mitigating unfairness in ML systems. These toolkits, such as Fairlearn, AIF360, Themis-ML, What-If Tool, FairML, and Fair-Test, offer various functionalities and guidelines for promoting fairness in AI applications. However, practitioners need more practical guidelines from these toolkits to communicate fairness issues effectively. They look for tools that allow quick use, integrate with existing pipelines, and provide access to code repositories for implementing fairness algorithms. 57
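To make the idea of checking decisions for bias more concrete, the following is a minimal sketch of two group-fairness measures that are commonly used for this kind of audit: the difference and the ratio between the selection rates of different groups. It is an illustration only and is not drawn from Brintrup and others or from any of the toolkits named above; the function names, the group labels, and the sample recruitment decisions are all hypothetical.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the share of positive decisions (1 = selected) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(decisions, groups):
    """Lowest group selection rate divided by the highest (1 = parity)."""
    rates = selection_rates(decisions, groups)
    highest = max(rates.values())
    # If no group received any positive decision, the groups were treated alike.
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical shortlisting outcomes for ten applicants from two groups.
sample_decisions = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]
sample_groups = ["A"] * 5 + ["B"] * 5

print(selection_rates(sample_decisions, sample_groups))
print(round(demographic_parity_difference(sample_decisions, sample_groups), 2))
print(disparate_impact_ratio(sample_decisions, sample_groups))
```

A large gap or a low ratio does not by itself establish unlawful discrimination, but it may indicate where a closer review of the data and the decision process is warranted.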

Just like fairness, transparency is vital for the responsible and ethical use of AI. As one participant mentioned: ‘One significant concern is the lack of transparency in how algorithms make decisions’ [P11].

The use of AI has broader implications, prompting questions about its nature, audience, and purpose. A number of authors have mentioned that the lack of transparency in algorithms stands out as a significant concern that takes centre stage in legal discussions about AI. 58

One participant reiterated the sentiments expressed by others with this statement: It becomes imperative to ensure that individuals who may have been denied opportunities, or denied benefits can understand why these decisions were made, beyond the explanation that ‘some software processed the decision’. In such a context, it's essential to consider how AI implementation in the public sector can remain free from biases and uphold fairness and transparency. [P10]

Brintrup and others argue that two important pillars of transparent AI are interpretability and explainability. 59 Interpretability in ML concerns understanding model outputs in relation to data. 60 ML interpretability involves three aspects: ex-ante understanding how algorithms make decisions, ex-post identifying the training data used, and metrics to validate results, often employing uncertainty measures. 61 Explainability includes broader explanations beyond just data and ML, incorporating expert knowledge and other psychological aspects. 62 While transparency may not directly resolve technological issues, it can support recourse, repair, and accountability. 63
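As a rough illustration of what an ex-post explanation of an individual decision might look like, the sketch below breaks the score of a simple linear model into per-feature contributions, so that an affected person can see which factors counted against them. This is an assumption-laden example rather than a method proposed in the literature cited here: the feature names, weights, bias, and threshold are hypothetical, and more complex ML models generally require dedicated explanation techniques.

```python
def explain_decision(weights, bias, features, threshold=0.0):
    """Explain a linear scoring decision through per-feature contributions.

    Each contribution is weight * feature value; the final score is the
    bias plus the sum of contributions, compared against the threshold.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    # Sort so that the factors that lowered the score the most come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return {"approved": score >= threshold, "score": score, "ranked": ranked}


# Hypothetical screening model and applicant; all values are illustrative.
example_weights = {"years_experience": 0.5, "test_score": 0.25, "absences": -1.0}
example_bias = -4.0
example_applicant = {"years_experience": 2, "test_score": 8, "absences": 1}

result = explain_decision(example_weights, example_bias, example_applicant)
print("Approved:", result["approved"])
for name, contribution in result["ranked"]:
    print(f"{name}: {contribution:+.2f}")
```

An output of this kind goes beyond the statement that ‘some software processed the decision’ and gives the individual a concrete basis on which to seek review or correction.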

In response to our query about how Bangladesh should prepare for the usage of fair and transparent AI, one participant well-versed in the anticipated data protection regulation of the country said: Understanding the importance of tackling algorithmic discrimination and bias, it becomes vital to identify and put into action measures to reduce these problems. This includes creating a strong ethical framework rooted in legal principles to ensure transparent handling of personal data and automated decision-making. It can be well addressed by the anticipated Data Protection Regulation of the country as well. [P14]

Undoubtedly, clear legal frameworks can help address unfairness, bias, and discrimination in AI by setting guidelines and standards that AI developers and investors must adhere to. These frameworks can include provisions that require AI systems to be developed and used in a way that avoids discrimination or bias against particular groups or individuals. They can also outline consequences for AI systems that are found to be unfair or biased. Additionally, by providing legal certainty to AI developers and investors, these frameworks can encourage responsible innovation. Developers are more likely to invest in AI technologies and algorithms that align with legal requirements, reducing the likelihood of unfair or biased outcomes.

Accountability and liability

All participants were united in their belief that AI holds the power to independently make decisions, a quality that sets it apart from previous technologies. They pointed to its potential not just to match but to surpass human intelligence, highlighting its ability to learn from data, adapt to dynamic changes, and discern intricate patterns from vast datasets to make informed judgments. One participant went as far as drawing a parallel between AI's potential impact and that of nuclear technology, highlighting the profound transformative power that AI possesses:

Think of AI like nuclear technology; it has both positive potential, such as nuclear energy, and negative potential, like nuclear weapons. If AI is not regulated similarly to nuclear technology today, there will be a gap in accountability. [P12]

Duffy 64 highlights the legal complexity associated with AI making independent decisions: does the responsibility for its actions lie with the designer, operator, or programmer? What if the AI's behaviour was unforeseeable? Can the causation of damage by the AI system be attributed to a legal entity, or is the AI system's conduct considered an unpredictable intervening event? Other legal experts also argue that the ‘accountability gap’ poses more significant challenges than initially understood and has repercussions in three crucial areas: determining the source of a problem, ensuring fairness, and providing compensation for damages. 65

Bangladesh's legal framework, like many others, 66 harbours ambiguity regarding the assignment of accountability and responsibility for the consequences arising from the utilisation of AI applications. Ensuring accountability and liability for AI involves establishing mechanisms to oversee the development, deployment, and use of AI systems. [P13]

AI systems are a combination of one or more machines and, in the current legal framework, have no legal personality of their own. Courts primarily hold owners, operators, vendors, designers, and manufacturers accountable, often based on negligence principles under tort law, where these parties are deemed to have a duty of care towards potential victims of machine-related harm. In some cases, strict liability has been applied. 67

One participant highlighted the legal complexity associated with AI making independent decisions: Although tort law can be applicable for determining liability relating to AI, however, the application of tort law to AI liability can be complex and may require adaptations or developments in legal frameworks to address the unique challenges presented by AI technology. The specific application of tort law in AI-related cases can vary by jurisdiction and is an evolving area of law. In the case of Bangladesh, while the legal system is based on English common law principles, the courts seem hesitant to apply torts. Rather, they are more willing to provide statute-based civil remedies. [P10]

Using AI technology can pose risks to both individuals and their property. There have been instances where self-driving cars have injured pedestrians, drones that lack full control have caused mishaps, and AI software has provided inaccurate medical diagnoses. 68 Assigning liability in AI-related mishaps is challenging due to the numerous individuals and elements implicated, including those responsible for data provision, design, manufacturing, programming, development, usage, and the AI system itself. 69

The recent enactment of the EU AI Act presents a significant development in the regulatory landscape of AI. This legislation offers valuable insights and lessons that Bangladesh can consider and potentially integrate into its own regulatory framework concerning AI technologies. 70

The EU AI Act establishes obligations for providers, deployers, importers, distributors and product manufacturers of AI systems, with a link to the EU market. It will apply to providers and deployers of AI systems in third countries if the output produced by an AI system is being used in the EU (Article 2(1) EU AI Act). Therefore, the AI Act will apply to providers (developers) of AI systems in Bangladesh whose AI systems are used by the RMG industry, as well as to the RMG industry itself as the user (deployer) of the AI system, if the garments produced are sold in the EU. Certain types of AI systems are prohibited (Article 5), and non-compliance may have a maximum penalty of 35 million Euros (AUD 56.6 million) or 7% of worldwide annual turnover, whichever is higher.

However, it must be noted that the EU AI Act primarily focuses on high-risk AI systems. 71 If developers 72 and deployers 73 fail to fulfil their obligations under the EU AI Act, they may be subject to a maximum fine of 15 million Euros (AUD 24.3 million) or 3% of worldwide annual turnover, whichever is higher.
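The two penalty ceilings described above follow the same arithmetic rule: the cap is the higher of a fixed amount and a share of worldwide annual turnover. As a minimal illustrative sketch, the rule can be expressed as follows. The function name, tier labels, and turnover figures are hypothetical and are not drawn from the Act or from the study's data; only the fixed amounts and percentages come from the text above.

```python
# Illustrative sketch only: tier labels and turnover figures are hypothetical.
# The fixed amounts and percentages are those quoted in the surrounding text.

PENALTY_TIERS = {
    "prohibited_practices": (35_000_000, 7),  # EUR 35 million or 7% of turnover
    "operator_obligations": (15_000_000, 3),  # EUR 15 million or 3% of turnover
}


def maximum_fine_eur(tier: str, worldwide_annual_turnover_eur: int) -> float:
    """Return the penalty ceiling: the higher of the fixed amount and the turnover share."""
    fixed_amount, percent = PENALTY_TIERS[tier]
    turnover_based = worldwide_annual_turnover_eur * percent / 100
    return float(max(fixed_amount, turnover_based))


if __name__ == "__main__":
    # Two hypothetical firms: EUR 100 million and EUR 1 billion in worldwide turnover.
    for turnover in (100_000_000, 1_000_000_000):
        for tier in PENALTY_TIERS:
            print(tier, turnover, maximum_fine_eur(tier, turnover))
```

On these assumptions, a firm with a worldwide turnover of EUR 100 million would face the fixed ceilings (EUR 35 million and EUR 15 million), whereas at EUR 1 billion the turnover-based ceilings (EUR 70 million and EUR 30 million) would apply.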

The EU AI Act also applies to providers of general-purpose AI (GPAI) models, which are defined as: An AI model, including when trained with a large amount of data using self-supervision at scale, that displays significant generality and is capable to competently perform a wide range of distinct tasks regardless of the way the model is placed on the EU market and that can be integrated into a variety of downstream systems or applications. 74

Bangladesh can also consider the liability regime suggested by Nichols, albeit for non-high-risk AI systems. 77 Nichols contends that liability for harm caused by such systems should mirror that of harm caused by domestic animals, suggesting the application of the common law rule of the ‘first bite’. This principle acknowledges that a dog owner should not be held accountable for the initial bite, as they may not have been aware of the dog's aggressive tendencies beforehand. Nichols uses three main justifications to support this rule. Firstly, there is a societal presumption of the general safety of domestic animals. Secondly, the benefits of animal ownership are substantial enough to warrant legal support. Lastly, the accessibility of domestic animal ownership ensures fair distribution of benefits and costs across society. Nichols argues that, similarly, non-dangerous AIs are widely assumed to be safe and offer significant benefits, leading to widespread adoption. Adopting established animal liability rules for AIs would aid businesses in anticipating legal challenges and encourage AI innovation, ultimately boosting productivity and efficiency for society. 78

Privacy and data protection

All the participants representing the RMG sector emphasised that they prioritise the implementation of minimal data protection policies. This priority is primarily driven by their clientele from European countries. By adhering to these policies, RMG companies aim to foster trust and confidence among their European clientele, demonstrating their commitment to safeguarding sensitive information and upholding privacy rights. However, participants from the RMG sector showed a lack of awareness regarding the specifics of the GDPR and the upcoming data protection law. 79 (Note that at the time of the interviews Bangladesh had a Data Protection Bill, which was subsequently replaced by the Personal Data Protection Ordinance in 2025, as discussed above.)

Several participants acknowledged the challenge presented by AI regarding personal data control, noting that individuals may not be aware of how their data is collected and used. It can also create detailed profiles including sensitive information, making the data vulnerable to cyber threats if not secured properly: Big Data, AI, and machine learning have the capability to gather extensive information about individuals. The challenge lies in the difficulty of understanding how these technologies operate, which can be problematic for privacy. [P14]

Legal professionals and data protection agencies globally share apprehensions regarding the substantial privacy and data protection hurdles presented by AI. Similar concerns were echoed by a participant, knowledgeable in the area of privacy and data protection law: While Bangladesh currently lacks a dedicated personal data protection law, it is important to note that both the draft law and the country's Constitution inherently uphold our fundamental rights. These rights include our entitlement to privacy, the safeguarding of our data against potentially harmful misuse, and the assurance that decisions affecting us are not solely determined by automated processes devoid of human oversight. [P14]

On a global scale, legislators and policymakers underscore the need to protect AI systems with measures such as encryption, access controls, and regular security audits, in order to safeguard the data they process and prevent potential breaches. 79 This requires businesses to identify the relevant regulatory frameworks and plan the deployment of AI carefully. Hence, establishing a robust foundation for AI usage that conforms to diverse regulatory environments is paramount. This entails aligning AI systems with recognised standards, such as those of the National Institute of Standards and Technology (NIST) and the International Organisation for Standardisation (ISO), and with other regulatory directives. 80 Compliance planning should extend throughout the entire AI lifecycle, supported by systematic privacy impact assessments (PIAs) or data protection impact assessments (DPIAs). 81 Organisations can integrate privacy into their AI systems by incorporating Privacy by Design principles and adhering to standards such as the ISO 31700 Privacy by Design Assessment Framework. 82

In various jurisdictions, including the UK and the EU, conducting a PIA/DPIA is not merely a legal requirement but also a foundational step for integrating AI considerations. 83 Developers and external vendors must reassure clients and regulatory bodies of their unwavering commitment to constructing reliable AI through audits that align with recognised standards, regulatory frameworks, and best practices. In the context of the fashion industry in the UK and Europe, which heavily relies on vendors from the RMG sector in Bangladesh, it becomes even more critical for developers and external vendors to reassure clients and regulatory bodies about their steadfast commitment. Hence, the EU AI Act and GDPR will impact the Bangladesh RMG sector, despite the country's privacy law still awaiting approval from Parliament.

Even in cases where AI systems claim to utilise anonymised or non-personal data, as observed in the RMG sector in Bangladesh, privacy risks can surface. This encompasses the potential for re-identification from training datasets and downstream consequences on individuals and communities. For instance, anonymised factory worker data may inadvertently disclose personal details when linked with external data.

Moreover, downstream impacts on communities are another aspect of privacy risk associated with ostensibly anonymised or non-personal data. Consider a scenario where an AI system, trained on anonymised data related to factory locations and community demographics, makes decisions that indirectly affect the allocation of resources or government initiatives in those areas. While the data used are ostensibly non-personal, the consequences could have a profound impact on the privacy and well-being of individuals within those communities. Moreover, in the age of interconnected data sources, combining apparently non-personal data with external datasets may lead to unintended privacy breaches. For instance, merging anonymised data from the RMG sector with publicly available information, such as social media profiles or local news reports, could potentially result in the re-identification of individuals.
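The linkage risk described above can be made concrete with a small sketch. All records, field names, and the choice of quasi-identifiers (district, age band, job role) are invented for illustration and do not come from the study's data. The sketch assumes that a record is re-identifiable when its combination of quasi-identifiers is unique in both the anonymised dataset and the external source.

```python
from collections import Counter, defaultdict

# Illustrative sketch only: every record and field name below is invented.
QUASI_IDENTIFIERS = ("district", "age_band", "job_role")

anonymised_workers = [
    {"district": "Gazipur", "age_band": "20-29", "job_role": "operator", "overtime_hours": 52},
    {"district": "Gazipur", "age_band": "20-29", "job_role": "operator", "overtime_hours": 40},
    {"district": "Savar", "age_band": "40-49", "job_role": "line supervisor", "overtime_hours": 61},
]

public_profiles = [
    {"name": "Person A", "district": "Savar", "age_band": "40-49", "job_role": "line supervisor"},
    {"name": "Person B", "district": "Gazipur", "age_band": "20-29", "job_role": "operator"},
    {"name": "Person C", "district": "Gazipur", "age_band": "20-29", "job_role": "operator"},
]


def quasi_key(record):
    """Build the tuple of quasi-identifier values used to link two datasets."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def reidentify(anonymised, external):
    """Return (name, anonymised_record) pairs whose quasi-identifier
    combination is unique in both datasets, so the link is unambiguous."""
    anonymised_counts = Counter(quasi_key(r) for r in anonymised)
    external_by_key = defaultdict(list)
    for profile in external:
        external_by_key[quasi_key(profile)].append(profile)

    matches = []
    for record in anonymised:
        candidates = external_by_key.get(quasi_key(record), [])
        if anonymised_counts[quasi_key(record)] == 1 and len(candidates) == 1:
            matches.append((candidates[0]["name"], record))
    return matches


if __name__ == "__main__":
    for name, record in reidentify(anonymised_workers, public_profiles):
        print(name, "->", record)
```

In this toy example, the two operators in the same district and age band cannot be told apart, but the single line supervisor is linked to a named profile even though the anonymised dataset contains no names.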

Risk regulation

The regulation of AI depends on evaluating the potential risks associated with its use. According to Australia's Productivity Commission, ‘governments should consider the nature of the potential harms and the risk of harms measured against appropriate real-world counterfactuals’. 84 Risk assessment includes considering both the probability of harm and the severity of potential consequences. For example, in the RMG industry, using AI for basic inventory management may not seem to have significant consequences: AI can optimise inventory levels, predict demand, and automate restocking processes, and although errors in these functions may be more likely to occur, the resulting harm is likely to be limited. By contrast, using AI in quality control and defect detection during the manufacturing process could have significant consequences. If there are errors in the AI algorithms responsible for identifying defects or ensuring product quality, flawed garments could reach the market. This could raise questions of liability and compensation, including who should be held responsible for any losses incurred.
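One common way to combine probability and severity is a simple multiplicative score. The sketch below uses that convention purely for illustration; it is not a method prescribed by the Productivity Commission or used in this study. The 1 to 5 scales and the values assigned to the two RMG use cases are hypothetical.

```python
from dataclasses import dataclass

# Illustrative sketch only: the scales and the scores assigned below are
# hypothetical, and likelihood x severity is one convention among several.


@dataclass(frozen=True)
class UseCase:
    name: str
    likelihood: int  # 1 (rare) to 5 (frequent)
    severity: int    # 1 (minor) to 5 (severe)

    def risk_score(self) -> int:
        """Combine probability and severity of harm into a single score."""
        return self.likelihood * self.severity


def rank_by_risk(use_cases):
    """Order use cases from highest to lowest risk score."""
    return sorted(use_cases, key=lambda u: u.risk_score(), reverse=True)


use_cases = [
    UseCase("inventory management", likelihood=4, severity=1),
    UseCase("quality control and defect detection", likelihood=2, severity=4),
]

if __name__ == "__main__":
    for use_case in rank_by_risk(use_cases):
        print(use_case.name, use_case.risk_score())
```

Under these assumed values, quality control ranks above inventory management even though errors are assumed to be less frequent there, because the severity of the consequences dominates the score.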

Hence, a risk-based approach to AI is needed, where the laws can be developed based on the assessment of potential harm, with the goal of minimising harm without imposing excessive regulatory burdens. 85 Many experts agree that implementing AI rules for each industry is necessary. 86 However, this can result in increased costs, inflexible laws, and added complexity. Therefore, specific AI laws may be necessary, but they should not be so specific that they only address one industry's implementation of AI and ignore other industries. As of now, Bangladesh does not have any immediate plans to develop legislation specifically focused on AI. A participant involved in the A2i project explained: Indeed, the successful implementation of artificial intelligence relies on both public acceptance and a well-structured legal framework. Some time ago, in collaboration with our ICT adviser and Deputy Minister, we contemplated the idea of crafting a specific AI-focused law, believing it could effectively address the unique challenges of AI. However, we soon realised that this approach might become increasingly complex as technology evolves over time, potentially rendering the legislation outdated by the time it passes through Parliament. Consequently, we decided to take a different approach. We resolved to first develop a strategic plan for the next five years, focusing on sectors that are rapidly evolving, before proceeding with the creation of AI-related laws. [P5]

Considering the absence of immediate plans for an AI law in Bangladesh, it is imperative for authorities to assess how current laws can be applied before introducing new regulations. To begin with, the government should refrain from assuming that addressing the risks associated with AI use necessitates the immediate creation of new regulations. Instead, a thoughtful approach should be undertaken that involves contemplating the application and enforcement of existing regulations, potentially with some adjustments. 87 Laws related to privacy, consumer protection, and discrimination carry substantial relevance for AI use. Furthermore, negligence and tort laws in English common law countries are already in effect, establishing a certain level of accountability for developers, users, and other parties involved in cases where harm arises from technology use. The determination of liability on a case-by-case basis is likely to take time before courts collectively clarify how specific uses of AI would be handled.

Furthermore, relying on existing regulations imparts a sense of certainty for businesses, allowing them to operate within established frameworks and rules without the need for significant adjustments to AI-specific laws. The regulatory focus should pivot around decisions and actions causing harm, rather than fixating on the technology itself.

We believe that a regulatory sandbox can come into play for AI usage in Bangladesh. Regulatory sandboxes 88 are already implemented in sectors such as fintech, as they offer temporary and conditional relief from existing regulations. The primary objective of these sandboxes is to empower regulators to understand and assess unforeseen risks and harms associated with new technologies, ultimately contributing to the formulation of regulations addressing these issues. 89 The utility of regulatory sandboxes can also extend to providing regulators with insights into how AI learns and makes decisions. 90 Through practical application, regulators can discern the underlying causes of generated outputs. Additionally, these sandboxes create an environment for innovators to explore, experiment, and promote the adoption of AI and the development of complementary innovations. 91

Nevertheless, it is crucial to acknowledge that there are significant challenges in effectively implementing AI regulatory sandboxes. One challenge revolves around ensuring adequate technical expertise among the responsible regulators. Another challenge is designing sandboxes that allow broad participation without distorting competition. Since 2024, sandboxes have moved from conceptual proposals to more structured implementation in some regions. Within the European Union, Article 57 of the Artificial Intelligence Act mandates that Member States establish at least one AI regulatory sandbox by mid-2026, reflecting an institutionalisation of these mechanisms as part of a supranational regulatory regime. Spain has been among the first to pilot a national AI regulatory sandbox, producing implementation guidance through collaborative testing with industry and regulators. 92 France is preparing alongside others in the EU; 93 and the UK has launched its AI Growth Lab and sector‑specific sandboxes. 94

The UK has adopted a decentralised and iterative approach, enabling sector-specific regulators to promptly address sudden AI-related disruptions using targeted measures. 95 The UK Government is exploring the establishment of a central body that sector-specific regulators would consult before implementing AI-specific guidelines. 96

Both in the EU and the UK, small- and medium-sized enterprises will be given priority access to the sandbox, aiming to alleviate the challenges encountered by these companies when introducing their AI systems under the new regulation. Regulators will document the responsibilities of participants, including details such as liability structures and testing standards. 97

While these initiatives are still in their early stages, they serve as potential examples for sandbox design.

Another approach that Bangladesh can adopt involves the creation of industry codes outlining specific AI requirements tailored to a particular industry. 98 However, Bangladesh, like many other countries, has faced challenges in effectively implementing voluntary codes of ethics, especially in the RMG industry. 99 Nevertheless, the adoption of industry codes generally recognises the importance of industry expertise in establishing appropriate standards for high-tech sectors. It also highlights the ongoing relationships between companies and regulators as crucial for maintaining current enforcement practices. Additionally, industry codes can offer a more adaptable and lenient approach compared to rigid legal frameworks.

Bangladesh could also consider an approach similar to that of the United States, where voluntary industry standards are supported through executive action rather than comprehensive AI-specific legislation. President Biden’s Executive Order 14110 of 30 October 2023 on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence initially promoted safety testing, standards development, and international cooperation without adopting a risk-tiered regulatory structure comparable to the EU AI Act. However, following the 2025 change in administration, that Executive Order was rescinded, and the current federal approach has shifted towards innovation-driven policy, minimally burdensome oversight, and continued reliance on agency guidance and voluntary commitments. Unlike the EU’s risk-based framework, the United States continues to favour a flexible, executive-led and sector-specific model of AI governance. 100

Education and awareness

AI is a rapidly evolving field with distinct challenges, both globally and within Bangladesh. As indicated in the ‘AI policy and Law in Bangladesh’ section, the government has already implemented an AI policy. Our objective was to evaluate the level of awareness about this policy among government employees and industry stakeholders. We initiated this process by conducting an interview with a government official, coded as A3. 101 He said: With the goals of elevating Bangladesh to a middle-income country by 2030 and achieving a ‘smart’ status by 2041 in mind, there is a strong desire to assess the state of artificial intelligence (AI) readiness. To do this, specific areas of focus have been identified in the strategic plan. Recognising that it's not feasible to encompass every aspect of AI comprehensively, a practical approach has been devised to ensure that each ministry tailors its AI efforts to its unique requirements. Five key priority areas were established during this planning phase. These areas include: (1) Manufacturing, with a special emphasis on the garment sector; (2) Trade and commerce; (3) Transportation; (4) Public service delivery; (5) Agriculture. [A3] A framework has been created to outline the roles and responsibilities of government agencies in relation to these priority areas. This framework serves as the foundation for shaping and developing the government of Bangladesh's AI strategy. The strategy is carefully designed with a concentrated focus on these priority areas, aligning efforts to achieve the nation's 2030 and 2041 aspirations. [A3]

As AI priorities become more crucial in various industries, the question arises: Do these industries know about the commitments made by the government? When we asked if there was any government support for Big Data or AI, B1, a leading employee in the garment industry, responded: No, the government is consistently emphasising digitalisation in Bangladesh. But, I have not heard of any new government support for my industry. I strongly believe that, for better economic growth, the government should prioritise the ready-made garment (RMG) sector, as it accounts for over 80% of our exports. [B1]

This is when we began to wonder why the industry is unaware of the Government's AI policy, even though the government appears to believe it is engaging with the industry. A government employee, A1 from the Ministry of Information, Communication & Technology, stated: We are dedicated to realising Vision 2041 by focusing on grassroots services and universal information access, guided by four pillars: e-governance, connectivity, human resource development, and ICT business promotion. Our digital transformation foundation, initiated through Digital Bangladesh, includes updated ICT policies (2009, 2015, 2018) encompassing areas like digital government, security, equity, education, innovation, skill development, and AI. An Action Plan for AI and Robotics outlines ministry roles, aligning with our broader ICT strategy. We are engaged in the Smart Citizen project, Smart Government initiatives (healthcare, education, agriculture), and shaping a Smart Society. We are also bolstering the smart economy industry, supporting startups, and fortifying tech infrastructure, all in pursuit of Vision 2041, with full government commitment. It is important to clarify that

To gain a deeper understanding of the communication gap we observed between the government and the industry, we conducted an interview with C1, an economics and policy academic currently affiliated with BRAC University in Bangladesh. The aim was to gather insights on how to bridge this communication gap. C1 observed: We must turn our policies into action. Take the ready-made garment industry, for instance. To make things work smoothly, with AI adoption it’s crucial for groups like BGMEA [Bangladesh Garment Manufacturers and Exporters Association], BKMEA [Bangladesh Knitwear Manufacturers and Exporters Association], and government agencies like DIFE [Department of Inspection for Factories and Establishments] to work together closely and also collaborate effectively with the government. [C1]

It is worth mentioning here the Victorian Law Reform Commission's consultation paper, released in October 2024, which addresses the use of AI in courts and tribunals. Although the paper specifically focuses on AI in the judicial context, its insights are also relevant to the RMG sector in Bangladesh. The paper underscores the need for education to build awareness around AI policies and guidelines. Given AI's novelty and complexity, stakeholders are likely to require continuous and comprehensive education. Such efforts will be essential to ensure awareness and encourage a safe approach to AI implementation. 102

Conclusion and recommendations

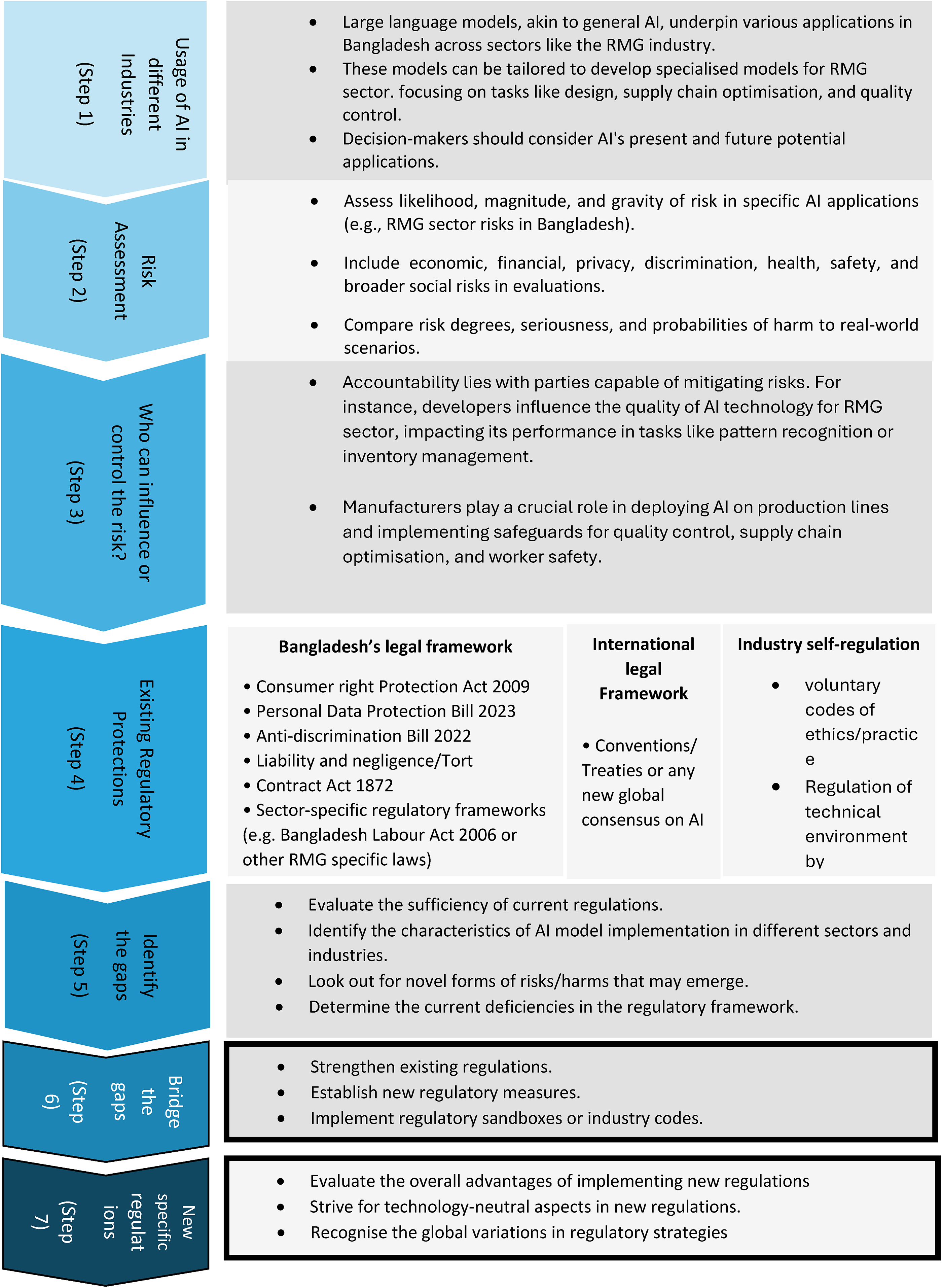

Large language models, such as GPAI, are foundational to diverse applications across sectors, providing adaptability for industry-specific tasks. To assess the feasibility and impact of AI in specific contexts, such as Bangladesh's RMG sector, a comprehensive evaluation is essential, particularly regarding risk assessment and the suitability of existing or new regulatory measures. Drawing from the Australian Government Productivity Commission's research paper on AI regulation, we outline a seven-step process to guide effective regulatory approaches for AI adoption in the RMG sector in Bangladesh (Figure 3).

Adapted from the Australian Government Productivity Commission, making the most of the artificial intelligence (AI) opportunity, research paper 2, the challenges of regulating AI, p. 7.

The first step in this journey is to contemplate both the current and prospective applications of AI in specific sectors. The second step is to gauge the level of risk against real-world scenarios to accurately determine the degree of risk exposure, the seriousness of potential consequences, and the likelihood of these risks materialising. This holistic risk assessment approach is vital for making informed decisions regarding the adoption and management of AI technologies, ensuring that benefits are maximised while mitigating potential harms (Step 2 – Figure 3).

The next step is to identify who can influence or control the risk. Accountability should rest with parties whose decisions or actions can effectively mitigate risks. For instance, developers influence the quality of AI technology for the RMG sector, impacting its performance in tasks such as pattern recognition or inventory management. However, RMG manufacturers play a significant role in determining where AI systems are deployed on the production line and ensuring that appropriate safeguards are in place to address concerns like quality control, supply chain optimisation, and worker safety (Step 3 – Figure 3).

In Steps 4 and 5, decision-makers should scrutinise existing regulatory protections and pinpoint any gaps that may exist. The Bangladesh government should not rush to draft a new AI-specific regulation. 103 If the current frameworks are deemed adequate and effective in managing AI-related risks, there may be no immediate need for additional regulations. However, if deficiencies are identified during the assessment process, three possible courses of action are recommended (Step 6 – Figure 3):

Strengthening existing regulations: Review existing regulations to determine if they can be clarified or modified to address gaps in regulation or enforcement related to AI development or deployment. If viable, the recommendation is to clarify or amend existing regulations, along with providing ample resources and training to regulators, instead of introducing new regulations.

Establishing new regulatory measures: In cases where existing regulations fall short of adequately addressing identified risks, a risk-based approach should be employed to assess the net benefits of introducing new regulations. This evaluation should consider relevant outcomes and risks, comparing them to a real-world counterfactual.

Implementing regulatory sandboxes or voluntary codes: Regulatory sandboxes are another viable option. Sandboxes are structured frameworks established by regulatory bodies within specific sectors, such as the financial sector. The main goal of these sandboxes is to enable regulators to comprehend and evaluate unforeseen risks and harms linked to emerging technologies, thus aiding in the development of regulations to address these concerns. Additionally, industry codes that delineate precise AI requirements tailored to specific sectors could also be contemplated.

When considering new regulations, it is crucial to evaluate their overall advantages to the regulatory landscape. Additionally, aiming for technology-neutral aspects in these regulations fosters fairness and adaptability across different technological domains. Furthermore, recognising the diverse regulatory strategies worldwide helps tailor approaches that resonate with global standards and practices (Step 7 – Figure 3).

In conclusion, AI has the potential to transform a wide range of industries, with early adoption offering significant advantages. For countries such as Bangladesh, it is essential to address the legal and regulatory challenges associated with AI. By adopting a proactive approach, Bangladesh and its RMG sector can fully leverage AI's capabilities while ensuring compliance with legal and regulatory standards. This strategy will not only foster innovation but also create a solid foundation for sustainable, long-term growth in the AI sector.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a grant from Monash Data Futures Institute at Monash University.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.