Abstract

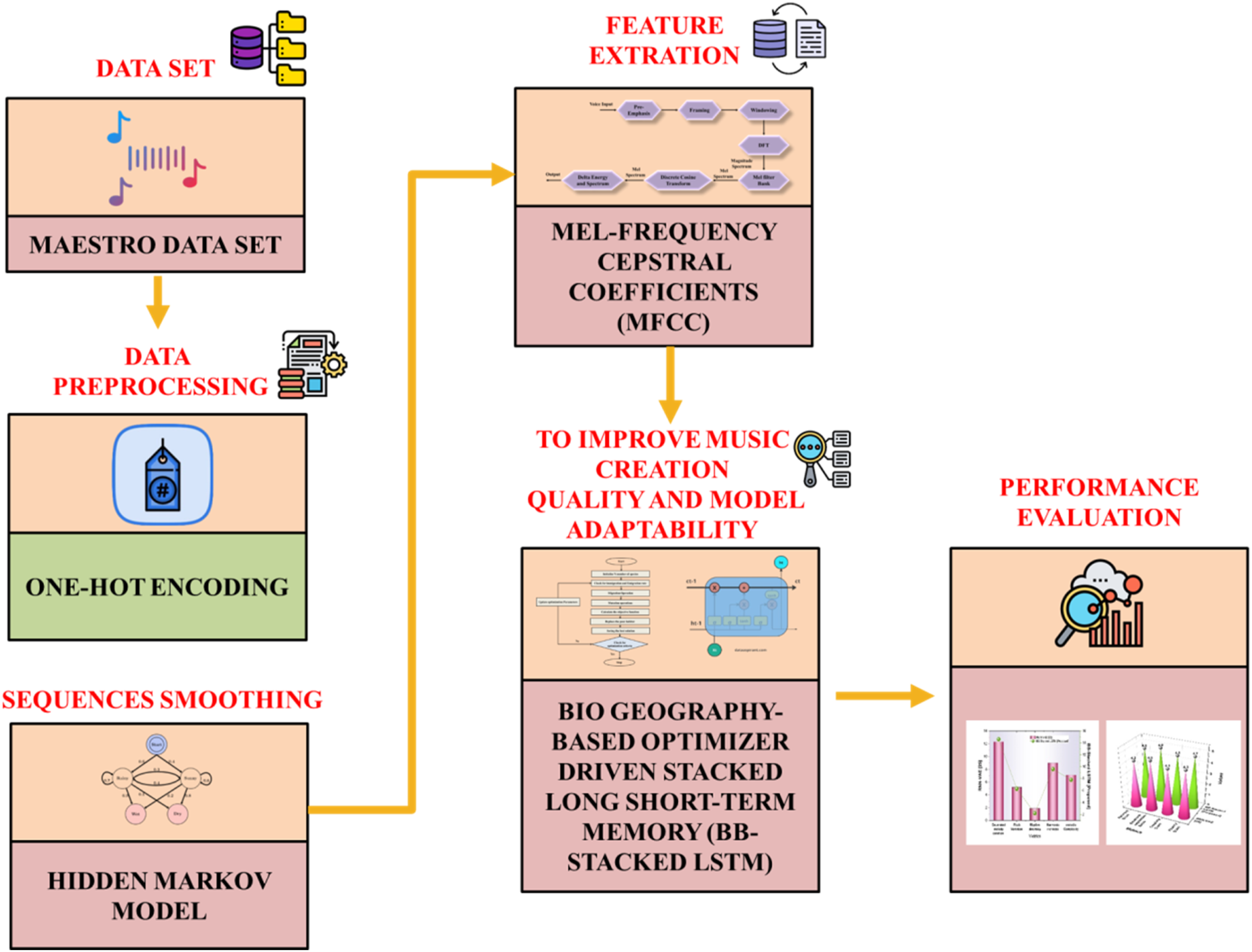

Real-time collaborative music creation requires dynamic systems that can understand musical sequences, tonal structure, and rhythmic flow in live contexts. Conventional sequence generation methods based on static deep learning models often struggle to adapt to changing musical input, and their limited use of audio features and non-optimized architectures reduces the fluency and stylistic coherence of generated improvisations. To enable adaptive, context-sensitive real-time music improvisation that responds fluidly to symbolic and audio-based inputs, a Biogeography-Based Optimizer (BBO)-driven stacked Long Short-Term Memory (BB-Stacked LSTM) model is introduced, combining evolutionary optimization with temporal deep learning to improve improvisation quality and model adaptability. The BB-Stacked LSTM system uses evolutionary principles to optimize sequence modeling parameters, enhancing both accuracy and expressiveness in music generation. Performance-oriented datasets featuring paired audio and symbolic data are used, covering genres such as jazz and classical. Symbolic note sequences are one-hot encoded, and sequence smoothing is achieved through a Hidden Markov Model. Mel-Frequency Cepstral Coefficients (MFCC) are extracted from the audio to capture spectral and timbral properties, and the symbolic and audio data are time-aligned for temporal modeling. The stacked LSTM learns sequence progression, while BBO tunes architectural parameters, including layer depth, unit count, and learning rate, to maximize musical coherence. Generated sequences exhibit an improved harmony score of 4.6. The BB-Stacked LSTM approach enhances real-time music generation by integrating evolutionary optimization with deep temporal modeling.
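To make the BBO tuning step concrete, the following is a minimal sketch of biogeography-based optimization over the three parameters named above (layer depth, unit count, learning rate). The abstract does not specify the migration model, search bounds, population size, or fitness function, so everything here is an assumption: `BOUNDS`, `decode`, and `toy_fitness` are hypothetical, and `toy_fitness` is only a stand-in for the musical-coherence score that would come from training and evaluating a stacked LSTM.

```python
import random

# Hypothetical search space (assumption, not from the paper):
# layer depth, units per layer, learning rate.
BOUNDS = [(1, 4), (32, 256), (1e-4, 1e-2)]


def decode(habitat):
    """Map a real-valued habitat to (layers, units, learning_rate)."""
    return int(round(habitat[0])), int(round(habitat[1])), habitat[2]


def toy_fitness(habitat):
    """Stand-in for a coherence score (higher is better).

    A real run would train a stacked LSTM with these settings and score
    its output; this toy surface simply peaks at 3 layers, 128 units,
    and a learning rate of 1e-3.
    """
    layers, units, lr = decode(habitat)
    return (-((layers - 3) / 3) ** 2
            - ((units - 128) / 224) ** 2
            - ((lr - 1e-3) / 1e-2) ** 2)


def bbo(fitness, pop_size=20, generations=50, p_mutate=0.05, n_elite=2, seed=0):
    """Basic BBO with a linear migration model and elitism (assumed variant)."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)  # best habitat first
        history.append(fitness(pop[0]))
        # Fitter habitats emigrate more and immigrate less.
        mu = [(pop_size - i) / (pop_size + 1) for i in range(pop_size)]
        lam = [1.0 - m for m in mu]
        new_pop = [h[:] for h in pop]
        for i in range(n_elite, pop_size):  # elites are copied unchanged
            for d, (lo, hi) in enumerate(BOUNDS):
                if rng.random() < lam[i]:
                    # Migration: pick a source habitat by roulette wheel
                    # on emigration rate and copy one feature from it.
                    src = rng.choices(range(pop_size), weights=mu, k=1)[0]
                    new_pop[i][d] = pop[src][d]
                if rng.random() < p_mutate:
                    # Mutation: resample the feature uniformly in bounds.
                    new_pop[i][d] = rng.uniform(lo, hi)
        pop = new_pop
    best = max(pop, key=fitness)
    history.append(fitness(best))
    return best, history


if __name__ == "__main__":
    best, history = bbo(toy_fitness)
    print("decoded best habitat:", decode(best))
    print("best fitness:", history[-1])
```

Because the elite habitats are carried over unchanged, the best fitness never decreases from one generation to the next. In practice each fitness call would involve a full training run, so the population size and generation count would likely need to be much smaller than in this toy setting.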
