Abstract

Although a wealth of consumer research literature has examined privacy, the majority of this research has been conducted from a micro-economic or psychological perspective. This has led to a rather narrow view of consumer privacy, which ignores the larger socio-cultural forces at play. This paper suggests a shift in research perspective by adopting a consumer culture theory approach. This allows an in-depth look into the micro, meso and macro levels of analysis to explore privacy as a subjective, lived experience but also as a representation of cultural meanings that are further shaped by marketplace actors. The paper synthesizes how privacy has been conceptualized within consumer theory and advances three necessary shifts in research focus: from (1) prediction to experience, (2) causality to systems and (3) outcome to process. Specific theories or focus areas are explored within these shifts, which are then utilized to build a future research agenda.

Introduction

In today’s technology-dominated and constantly connected world, it is arguably impossible to live our everyday lives without leaving traces of data behind for firms to utilize. For marketers, this shift towards a data-driven society has been a celebrated change—data enables businesses to target their marketing actions based on very specific needs, leading to personalized products and services (Martin and Murphy, 2017; Wedel and Kannan, 2016) and more relevant marketing content (e.g. Bleier and Eisenbeiss, 2015).

However, as the technologies through which data can be collected continue to develop and the volume of data continues to grow, more attention is being paid to the dark side of data harvesting, and concerns about privacy are becoming more central (Bleier et al., 2020; Martin and Murphy, 2017). Privacy concerns are further fuelled by large international data breaches, which have become a regular occurrence in today’s environment. Recent examples include Facebook in 2019, with 533 million accounts affected, and LinkedIn in 2021, with 700 million accounts affected (Hill and Swinhoe, 2021).

Privacy is interdisciplinary in nature and has been studied in fields such as information systems, public policy, marketing and sociology. Within consumer research, the topic has frequently been the subject of attention (Martin and Murphy, 2017); however, the focal point of interest has been on the micro-economics or psychology of privacy (Martin and Murphy, 2017; Stewart, 2017), with studies focusing on the measurement of behavioural and attitudinal aspects of privacy or its sub-concepts. A prevalent consensus is that privacy is a complicated construct that is difficult to define, which has steered scholars away from exploring it as a construct in itself (Stewart, 2017) towards examining concepts that are more easily conceptualized, defined and measured, such as the privacy calculus (Culnan and Armstrong, 1999) or the privacy paradox (Norberg et al., 2007).

Various technologies are so tightly intertwined with everything that we do that consumers have lost the ability to keep track of when and where their data is being collected (Darmody and Zwick, 2020). We should therefore ask: what exactly is privacy from the consumer perspective, and how does it emerge within this complex and constantly connected reality? We need to examine this question from the consumer standpoint in order to gain insight into the way in which consumers understand and experience privacy, as this can differ quite drastically from the marketers’ view. Further, we need to make sense of the way in which this experience stems from and is embedded in the surrounding socio-cultural environment. Consequently, a shift in the current research perspective is needed, and in this paper, I propose a consumer culture theory (CCT) approach to studying consumer privacy. Through a CCT approach, we can explore, in a novel way, the multi-layered, fluid and intricate nature of privacy, which is shaped through both personal and cultural meanings that are further negotiated and constructed in the market environment by marketplace actors. Thus, a CCT approach to privacy allows one to explore the micro, meso and macro levels of the concept as well as the connections between these three levels.

The purpose of this conceptual paper is to introduce a CCT approach to consumer privacy studies. This is achieved by proposing three shifts in research focus that redirect our attention from prediction to experience, from causality to systems and from outcome to process. Through these shifts, we can challenge some of the prevalent assumptions related to the concept of privacy within the consumer research literature and start building an alternative set of assumptions. In recent years, scholars have discussed the integral role of conceptual papers in advancing marketing theory through their influential nature and long-lasting impact (Hulland, 2018; MacInnis, 2011; Yadav, 2010). In the same vein, this paper contributes to privacy research in the consumer research domain by revising the current frame of reference, and in this way, it aims to advance theory and offer guidance for future work.

I will begin by examining how privacy has been conceptualized in the consumer research literature and discuss the benefits of a CCT approach to studying the construct. Thereafter, I will elaborate on the three necessary shifts in research focus and introduce specific perspectives–partly inspired by recent inquiries into privacy in other disciplines–which could open new fruitful avenues for studying the construct within consumer research. The perspectives introduced are chosen for their potential to offer tools and inspiration for studying privacy specifically through a consumer lens. Lastly, I will discuss the implications of the shifts for consumer theory and their potential in guiding future research.

Privacy in consumer research

The first definitions of consumer privacy date back to the legal scholars Warren and Brandeis (1890), who defined it as ‘the right to be let alone’. This referred specifically to protecting one’s personal space, as the focus was on protecting physical privacy (Beke et al., 2018). With the rise of the Internet and other technological advancements from the 1980s onwards, the focus has shifted to informational privacy, which refers to questions of privacy in relation to the collection and storage of information (Beke et al., 2018). Since then, consumer privacy has commonly been defined as the ability to control one’s personal information and access to it (Belanger et al., 2002; Stone et al., 1983). Clarke (1999) saw privacy as a moral or legal right, and Smith et al. (2011) noted that the literature treats privacy either as a right or as a commodity, the latter referring to the way in which it is subject to the economic principles of cost–benefit analysis and trade-off (Campbell and Carlson, 2002).

As discussed, privacy has been studied extensively in the consumer research literature, a point echoed in the comprehensive overview by Martin and Murphy (2017) of the role of data privacy in marketing, which collates the various theoretical perspectives that have been explored. In recent years, studies have examined, for instance, the link between personalization and privacy (Aguirre et al., 2015; Walrave et al., 2018), the link between trust and privacy (Bleier and Eisenbeiss, 2015; Fortes et al., 2017; Gana and Koce, 2016), consumers’ privacy choices (Johnson et al., 2020; Krafft et al., 2017) and businesses’ privacy practices and policies (Fox and Royne, 2018; Martin et al., 2017).

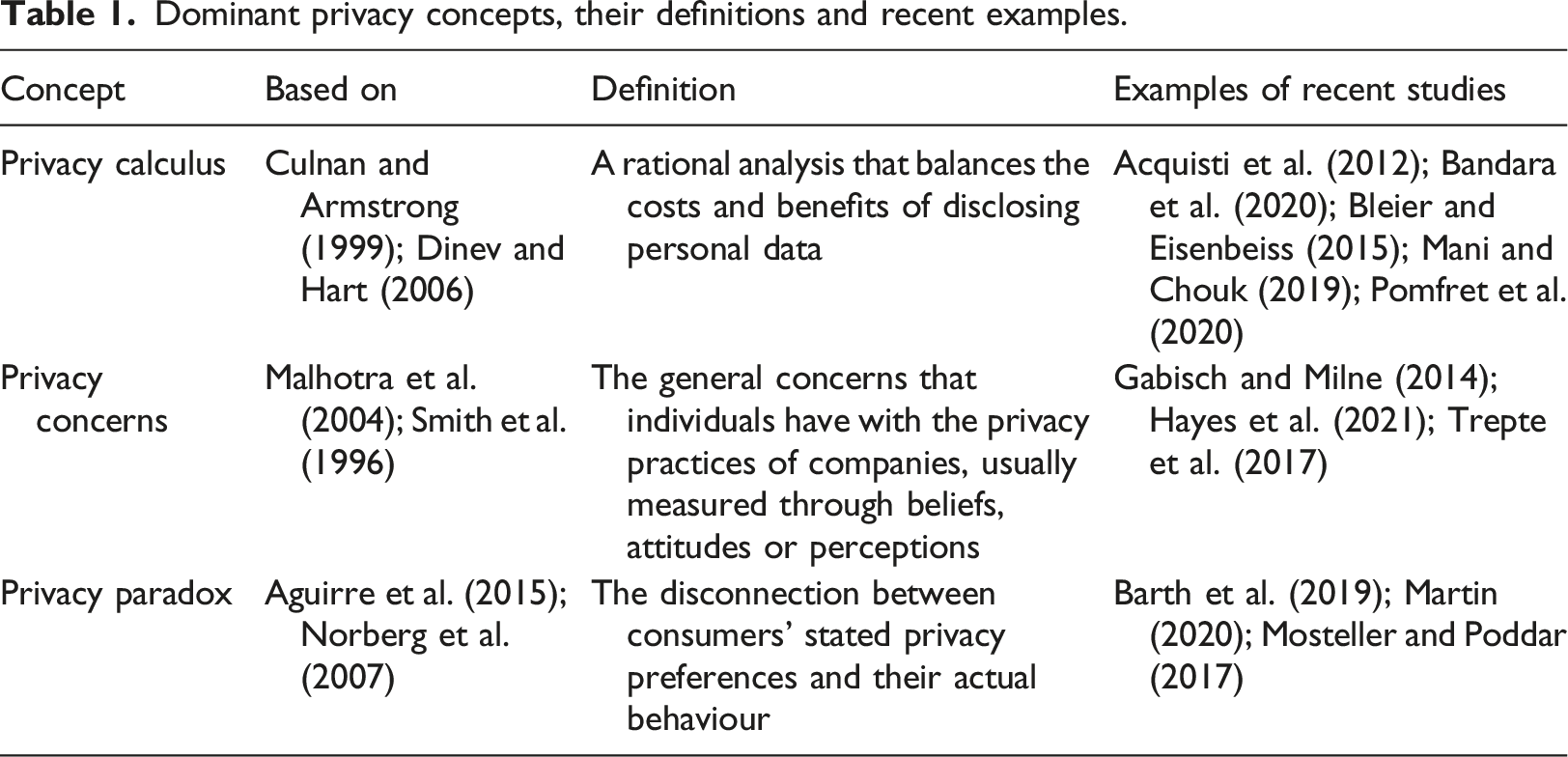

Dominant privacy concepts, their definitions and recent examples.

First, a great deal of research has been dedicated to the privacy calculus.

More recently, studies have focused on the impact of monetary compensation on the calculus and consumers’ expectations for privacy protection (Gabisch and Milne, 2014), the differences between individualistic and collectivist cultures in the perception of privacy risks (Trepte et al., 2017) or the influence of the consumer–brand relationship on the perceived risks and benefits of information disclosure (Hayes et al., 2021), among other foci.

Second, a large body of research has examined privacy concerns.

Recent studies on privacy concerns have explored how trust in the retailer affects the way in which personalized ads are perceived and whether they elicit privacy concerns (Bleier and Eisenbeiss, 2015), and how companies can manage consumers’ privacy concerns through increased trust and privacy empowerment (Bandara et al., 2020). Furthermore, the outcomes of these concerns, mainly the willingness to disclose information, have been examined. Pomfret et al. (2020), for instance, studied the socio-demographic and attitudinal influences on disclosure choices, and Acquisti et al. (2012) found that consumers will disclose sensitive information if they believe that others have done likewise.

Third, consumers’ privacy attitudes and actual behaviour often do not align, a phenomenon called the privacy paradox.

Kokolakis (2017) has conducted a comprehensive literature review of the privacy paradox. He divides the literature into studies that present evidence for the phenomenon and studies that challenge its existence, and he reviews the various explanations offered for it. Indeed, the construct has also received ample criticism. One of its most vocal critics, Solove (2020), argues that the whole concept of the privacy paradox is a myth based on faulty logic. When consumers are asked about their attitudes concerning privacy, they think about the question on a very general level, and naturally, most would assert that they generally do value their privacy. Yet the situations in which consumers make privacy-related decisions are very context-specific. Thus, distinct decision-making contexts should not be used to make larger generalizations about how people value their privacy.

In conclusion, in consumer research scholarship, privacy-related studies have focused primarily on privacy as a micro-economic or psychological construct, with the privacy calculus, privacy concerns and the privacy paradox serving as the most frequently researched concepts. In these studies, the goal has been to examine the relationships among various factors to explain and predict consumers’ behaviour in privacy-related situations. Through this focus, some underlying assumptions about what privacy is and how it can be studied have formed, resulting in a rather one-sided perspective of the construct, which ignores the larger socio-cultural systems that shape behaviours. Next, I will discuss the necessary shift in research perspective so as to broaden our understanding of privacy.

Shifting the research perspective

Darmody and Zwick (2020) propose that data and digital marketing will become ever more embedded in our lives, to the point that marketing might disappear as it extends through life without limit. From this point of view, privacy could become a completely irrelevant concept, or at least take on a very different shape compared to what we are used to. Indeed, consumers are living in complex hybrid environments where the lines between the online and offline worlds (Humayun and Belk, 2020; Šimůnková, 2019) and between market and non-market modes (Eckhardt and Bardhi, 2016; Scaraboto, 2015) are fading. In these complex networks, movement between domains is fluid and strongly embedded in socio-technical arrangements, that is, networks in which technological hardware and software come together with social practices (Šimůnková, 2019; Thompson, 2019). As researchers, we need to find ways to explore phenomena such as privacy as emerging from and being shaped by this reality.

Against this backdrop, I argue that we need to approach privacy from a CCT perspective, as this will enable us to explore the various nuances of the current hybrid and dynamic environment in which consumers live and within which privacy takes shape. Just as CCT has revealed the new shape of concepts such as brand communities (Arvidsson and Caliandro, 2016), luxury (Bardhi et al., 2020), social status (Eckhardt and Bardhi, 2020) and consumer experience (Hoffman and Novak, 2018), a CCT approach could help reinvigorate the outlook on consumer privacy, given the concept’s relevance in the lives of always-online consumers.

CCT refers to a set of perspectives and theories that explore consumer culture, consumption and consumers as embedded in and arising from various socio-cultural systems (Arnould et al., 2021; Joy and Li, 2012). Various market-mediated meanings are affected by broader socio-historical and cultural meanings negotiated in social situations and contexts (Arnould and Thompson, 2005). Consequently, employing a CCT approach will allow us to see privacy as arising through the interaction of consumer actions, the marketplace and socio-cultural meanings (Arnould and Thompson, 2005). From this perspective, consumer privacy is considered a constantly negotiated social construct, not an inherent individual preference (Altman, 1975; Mulligan et al., 2020). A CCT approach provides the means to explore privacy through an emic perspective as a lived experience as well as to account for the larger socio-cultural context. Moreover, between these two levels, it also allows an in-depth look into the market dynamics and actors that shape both the individual experience and cultural understandings.

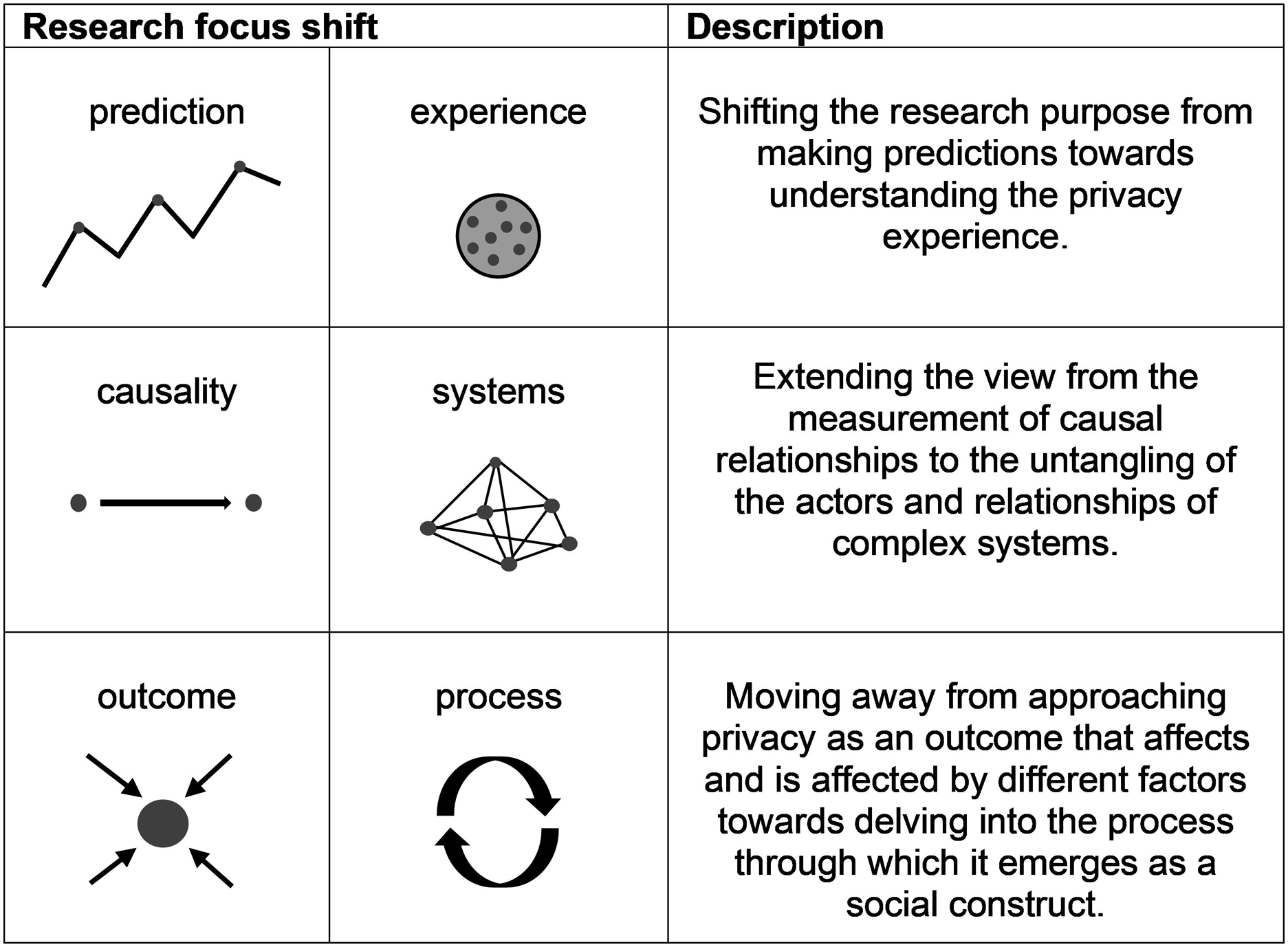

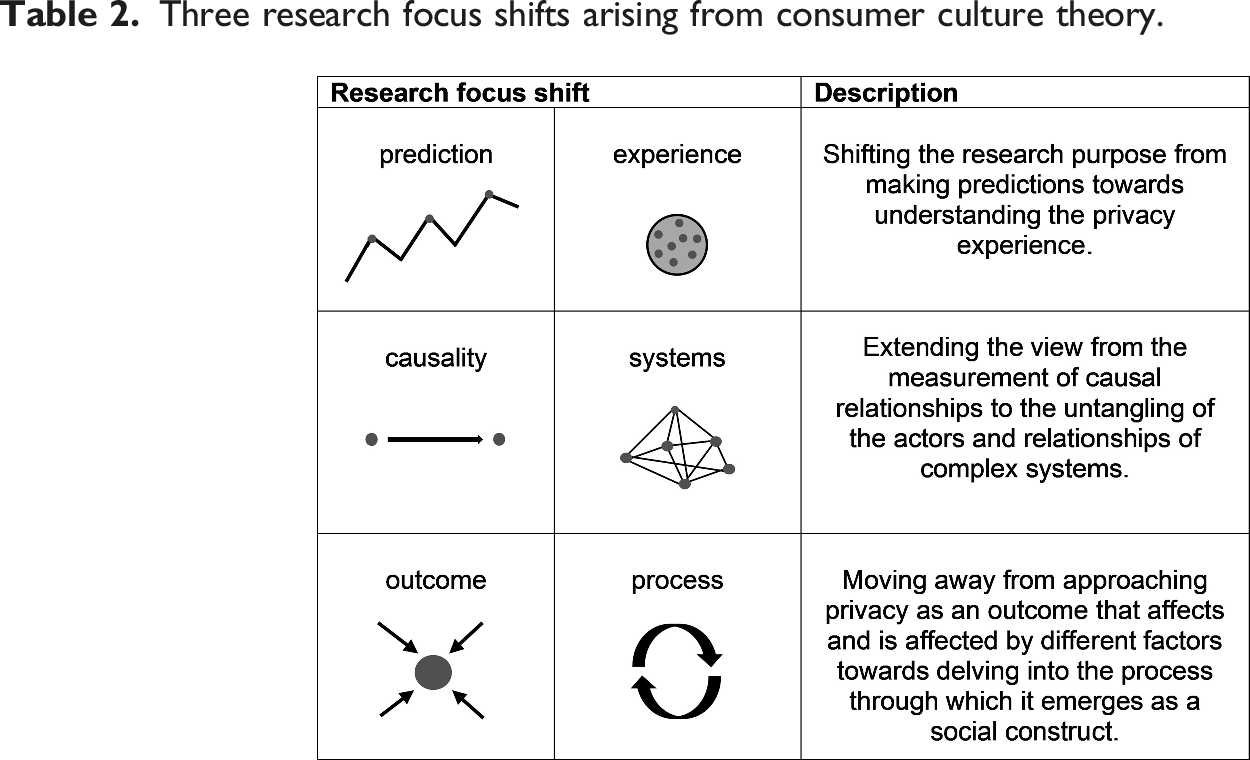

Three research focus shifts arising from consumer culture theory.

Research focus shift 1: From prediction to experience

The first shift steers the research purpose away from trying to predict how consumers will behave in different privacy-related situations towards trying to understand the experience of privacy. A large body of consumer privacy research has focused on predicting behaviour, for instance, disclosure choices (Pomfret et al., 2020), resistance to new products (Mani and Chouk, 2019) and social media engagement (Bright et al., 2021), through sub-concepts of privacy such as the privacy calculus or privacy concerns. However, with this narrow focus on prediction and context-specific decision-making processes, the question of what privacy is, and more specifically how consumers come to understand and give meaning to it in their fluid and always-online everyday lives, remains largely unexplored. To appreciate and uncover the fuzzy and complex nature of privacy, we need to move beyond condensing it into related concepts that can serve as a basis for prediction and, instead, delve deeper into what the construct means for consumers and how it is experienced.

The answers to these questions are likely to differ considerably from what they used to be, as privacy in the current hybrid world takes on very different shapes from those that prevailed before. For example, health information has traditionally been considered private and personal, but nowadays, through wearable devices, consumers are willing to share data such as their heart rate and sleep patterns with service providers. Thus, to understand the reasons behind these behaviours, it is essential to place the privacy experience at the heart of research. I will now discuss how a phenomenological approach to studying privacy, and, stemming from that, a focus on everyday encounters and affects as configuring its boundaries, could provide the means to tap into the essence of the concept from the consumer point of view.

A phenomenological approach to privacy

Delving deeply into the consumer experience can be achieved by employing a phenomenological approach. Phenomenology is the study of the lifeworld (Van Manen, 1990); it is concerned with phenomena, that is, things and experiences as they occur, free from pre-set explanations or assumptions (Moran, 2000; Smith et al., 2009). Phenomenology aims to gain a deep understanding of everyday lived experiences by capturing them as they happen, before they have been categorized, classified or conceptualized; in this way, the internal meaning structures of these experiences can be uncovered (Smith et al., 2009; Van Manen, 1990). The emphasis is not on trying to explain the world but, rather, on deepening our contact and connection with it (Smith et al., 2009; Van Manen, 1990).

From a phenomenological perspective, the goal should be to come as close as possible to the individual’s everyday experience of privacy and the meanings this experience is given. A phenomenological approach informs both theory and methodology. Within CCT, phenomenology has served as a leading epistemology, originally employed to bring a more humanistic perspective into consumer research by highlighting the consumer’s subjective experience (Askegaard and Linnet, 2011).

From a phenomenological standpoint, I suggest that one way of approaching privacy is through consumers’ sense of privacy.

Privacy configured in everyday life

Cohen (2012) asserts that privacy is always an embodied experience and that we come to understand and experience it through our lived experiences in the digital and physical networks that we are part of and within which the self is configured. In this way, in addition to experiences, the emphasis is on the everyday. Neal and Murji (2015) suggest that by focusing on the everyday and mundane, we can come to understand how social structures are lived and formed. Accordingly, when we explore privacy as a social construct, it seems fitting to turn our attention to the everyday encounters within which the cultural meanings surrounding privacy are negotiated and internalized.

According to Lyon (2017), surveillance is part of our everyday lives, and consumers themselves actively engage in it, thereby constructing a culture of surveillance. Within this culture, understandings of surveillance and, consequently, conceptions of privacy are configured in day-to-day encounters. The core of this surveillance culture is built on two components: surveillance imaginaries and surveillance practices (Lyon, 2017). Lyon (2017) bases the concept of surveillance imaginaries on Taylor’s (2004, 2007) social imaginaries, which refer to shared understandings of the social world. These form a basis for implicit understandings of how the world works and what is normal.

More specifically, surveillance imaginaries concern the shared understandings of privacy and visibility in daily encounters and social relationships. Surveillance imaginaries are constructed by engaging with the surveillance culture in encounters with market actors, technologies and the larger cultural narratives circulating through mass and popular media (Lyon, 2017). Through these imaginaries, consumers start to make sense of privacy and attribute value to it.

Surveillance imaginaries inform the other main building block of the surveillance culture: surveillance practices. Surveillance practices can be either responsive, that is, acting against being surveilled, or initiatory, that is, actively engaging in the surveillance culture. Using a VPN when browsing the Internet is an example of a responsive practice, while using social media to check up on strangers is an example of an initiatory practice (Lyon, 2017).

Surveillance imaginaries and practices have been explored in the literature by, for example, Duffy and Chan (2019), who used the concept of ‘imagined surveillance’ to describe how individuals perceive the various possibilities of surveillance on social media. The way in which individuals respond to this imagined surveillance through different practices depends on both imagined audiences (Litt, 2012; Litt and Hargittai, 2016) and the imagined affordances of platforms (Nagy and Neff, 2015). Duffy and Chan (2019) explored how young people learn to anticipate or imagine institutional monitoring on social media and demonstrated how the surrounding social context affects our privacy perceptions through imaginaries and practices.

Surveillance imaginaries and practices are firmly intertwined, as imaginaries provide the means to engage in surveillance practices, which in turn reproduce the imaginaries. These imaginaries and practices take many forms, and as a result, individuals have varying responses to surveillance (Lyon, 2017). Therefore, Lyon (2017) stresses that the experience of surveillance is not as simple and straightforward as compliance or resistance but, instead, more variable and multi-layered. Consequently, consumers’ sense of privacy is also an intricate concept that cannot be reduced to, for instance, ‘caring about privacy’ or ‘not caring about privacy’; rather, it is something that is constantly shifting. By shining a spotlight on these imaginaries and the daily practices of individuals, we can begin to grasp these multi-layered responses and move closer to understanding the privacy experience.

Privacy configured by affects

Emotions are intricately intertwined with the phenomenological perspective. To date, privacy research in the consumer research field has overlooked the role that emotions play in privacy. In fact, the prevailing underlying assumption is that privacy is based on cognitive and utilitarian thought processes. This is evident in the significant focus on studies of the privacy calculus, the core of which is based on the idea of a rational cost–benefit analysis (Culnan and Armstrong, 1999). Rationality likewise features in studies focusing on privacy concerns, as they highlight how consumers make careful considerations about whether to disclose their information based on specific factors, such as the knowledge that others have done the same (Acquisti et al., 2012). Conversely, other scholars have found that consumers make very hasty and irrational decisions when it comes to their privacy. As studies of the privacy paradox demonstrate, rational calculations are replaced by heuristics and other non-conscious processes (John et al., 2011; Plangger and Montecchi, 2020). Nevertheless, both groups of studies disregard the role of emotions in forming a sense of privacy.

Studies conducted in other disciplines suggest that the spotlight should be on affective states as part of the privacy experience (Watson and Lupton, 2020). I therefore assert that affects are focal in establishing a sense of privacy. Within sociology, Kennedy and Hill (2018) discuss how encounters with data are not only cognitive but also emotional experiences and how, therefore, emotions are vital components when consumers are making sense of data. Kennedy and Hill draw on the sociology of emotions and, following Jaggar (1989), consider emotion an ‘epistemic resource’, a way of uncovering the most important components of the social world and the behaviours of individuals. Therefore, when we want to explore and analyse social structures and arrangements, we need to acknowledge the value of emotion (Kennedy and Hill, 2018).

Moving closer to the topic of privacy, Leaver (2017) asserts that affects play a key role in permitting and normalizing surveillance in the digital world. In online social networks, affective states and emotions override other factors in driving continuous use and encouraging deeper engagement (Bucher, 2017), and the affective impact is stronger and more effective than that of other forms of knowledge (Leaver, 2017). Ruckenstein and Granroth (2020) build on this notion and explore emotional reactions to algorithms and targeted advertising. They show how consumers desire opposing things: on the one hand, consumers find tracking technologies and targeted advertising creepy and intrusive, but on the other hand, they want advertisements to be relevant. Thus, emotions range from feeling disturbed and frightened to the pleasure of ‘being seen’ by the market.

Watson and Lupton (2020) propose that the boundaries of appropriate and inappropriate data use are largely established by the way that different privacy practices make consumers feel. When faced with privacy dilemmas, consumers want first and foremost to feel comfortable and avoid harm. Affects are entangled with affordances and with everyday capacities and limitations, and while feelings of anxiety and discomfort do occur, they do not take centre stage.

CCT as a stream was originally born as an objection to the view of consumers as rational decision-making computers, instead highlighting the more hedonic side of consumption (Thompson et al., 2013). I argue that this view should similarly be applied to privacy research. As discussed, in many cases, emotions override other forms of knowledge, and therefore, they are bound to play a role in privacy-related situations. Thus, it may be that the emotions that data practices elicit themselves configure the boundaries of privacy.

It is not possible to capture the essence of individuals’ experiences without acknowledging the role of emotions within these experiences; thus, we cannot begin to understand the experience of privacy without exploring the emotions attached to it. Exploring privacy through consumers’ sense of privacy likewise paves the way for allowing a more central role for affects as the word ‘sense’, as noted earlier, refers to ‘feeling or experiencing something without being able to explain exactly how’. Here, the emphasis is on the word ‘feeling’ guiding us towards the study of emotions instead of utilitarian and rational decision-making.

Research focus shift 2: From causality to systems

The second shift extends the view from causal relationships to systems of actors. Previous privacy research has focused on uncovering and measuring causal relationships and correlations between factors, such as trust and privacy concerns (Bleier and Eisenbeiss, 2015) or technical knowledge and paradoxical behaviour (Barth et al., 2019). However, these relationships should instead be viewed as complex systems within which the connections between components are dynamic and entangled. The way in which consumers come to understand and experience privacy is not just affected by companies’ privacy practices (Martin et al., 2017) or by the trust and relationship between consumers and brands (Fortes et al., 2017; Hayes et al., 2021), nor is it based solely on individual factors such as personal skills and experiences (Barth et al., 2019; Smith et al., 2011).

Instead, the conception of what is and should be private is moulded through the interaction of a myriad of actors: not only private meanings and practices but also marketplace actors and dynamics, technological systems and the media (Giesler and Fischer, 2017). Deriving from this, I assert that the research focus should shift to teasing out the multiplicity of actors involved in configuring a sense of privacy and to exploring and untangling the interlaced relationships between them.

This adds another layer to the first shift as it extends the view from the individual to the surroundings and contexts within which the lived experiences are embedded. Next, I discuss how the networked and emerging nature of privacy can be approached through assemblage theory, which also highlights the interconnections between various actors. Thereafter, I explore two important actors: socio-technical systems and marketers.

Privacy as an assemblage

Privacy has recently been explored in the field of sociology through the study of assemblages (Watson and Lupton, 2020), drawing on more-than-human theory (Lupton, 2019). Watson and Lupton (2020) discuss the way in which other consumers and technologies affect both how privacy is conceived and the actions taken to overcome issues related to it. Socio-material affordances play a focal role in configuring individuals’ knowledge about privacy and their agency related to it.

Building on Deleuze and Guattari (1987), DeLanda (2006) notes that assemblage theory involves the study of compositions or constellations of heterogeneous actors or components. Assemblages emerge through the interaction between material, discursive and symbolic actants (DeLanda, 2006; Figueiredo, 2015), including humans, non-humans, devices and narratives (Canniford and Bajde, 2015). In these dynamic networks, the properties of diverse actants become the capacities of the assemblage as they interact with the properties of other actants (DeLanda, 2006). Assemblage theory has commonly been deployed in CCT as a theoretical framework to study phenomena such as the Internet of things (Hoffman and Novak, 2018), brand audiences (Parmentier and Fischer, 2015) and consumer tribes (Ruiz et al., 2020). However, privacy has not yet been explored through an assemblage lens in consumer research.

In an assemblage, the relationships between actants are symmetrical, meaning that object agency, that is, an actor’s capacity to act upon other actors, is also symmetrical. This means that the influence of human actants within the assemblage is no greater than that of non-human actants (Canniford and Bajde, 2015; Canniford and Shankar, 2013; Figueiredo, 2015). As a result, we can appreciate the role of each component in the assemblage and its influence over other components, thereby moving away from an overemphasis on humans as the centre of phenomena. Indeed, Thompson (2019) stresses the need for marketing to detach itself from the human-centred view and, instead, acknowledge the way in which consumption and consumer identities are embedded in and enabled through socio-technical arrangements.

Studies utilizing CCT from a postmodern perspective emphasize the dynamism of individuals and the fast pace of change when it comes to their identities and the environments within which they live and consume (Bardhi and Eckhardt, 2017; Bauman, 2000; Šimůnková, 2019; Thompson, 2019). Digital technologies further add to the speed of change and the complexity of the world and consumption culture (Arvidsson and Caliandro, 2016; Bardhi and Eckhardt, 2017; Bode and Kristensen, 2016; Kozinets et al., 2017). According to Thompson (2019), this intricate, multi-layered and dynamic reality can be understood through the study of market assemblages.

Consequently, an assemblage theory lens provides an alternative to the prevailing assumption of privacy as a static construct. From this perspective, a sense of privacy emerges through the interaction of material and symbolic components and is affected by the constant flux of these components. Indeed, assemblages are characterized by the principle of emergence (DeLanda, 2006) and are, thus, ‘always in process, in a state of becoming and never complete’ (Figueiredo, 2015: 82). In the same vein, privacy is ever-changing and constantly in a state of becoming as the contexts and components involved change.

In conclusion, assemblage theory provides the means to understand consumers’ sense of privacy as an ambiguous concept formed through the interaction of a myriad of material and non-material actors. This assemblage is ever-changing, always in a state of becoming and is strongly entangled in socio-technical systems. As consumers live in a world where technology is deeply embedded in everyday practices and where they constantly and fluidly move from one space, context and situation to another, assemblage theory offers a useful map to guide us through this complex reality. Thus, with the help of assemblage theory, we can start to make sense of the complex and fluid nature of privacy.

Privacy shaped by socio-technical arrangements

As noted earlier, assemblage theory emphasizes the agency of non-human actors as well. When it comes to privacy, technological systems such as digital devices and platforms play a focal role within the assemblage, as it is through encounters with these technologies that the sense of privacy takes shape and is given meaning. The interactions and relationships between users and platforms are two-way, multi-layered and in a constant feedback loop and are, therefore, best understood as socio-technical arrangements (Bucher and Helmond, 2017). For example, through our actions on social media, we leave behind data that are immediately fed into recommender algorithms. These algorithms are constantly learning and adapting, filling our feeds with content and advertisements considered either relevant or intrusive, and thereby instantaneously affecting our sense of privacy.

Thus, socio-technical arrangements constitute a key contextual dimension within which privacy is negotiated and reshaped. The architecture of this dimension is better understood through the study of affordances. Affordances dictate what actions are available to users and, thus, affect the ways in which people can engage, for instance, on social media (boyd, 2010; Bucher and Helmond, 2017). To illustrate, boyd (2010) distinguishes four affordances of social media platforms: persistence, replicability, scalability and searchability. Persistence refers to the way in which online expressions are automatically recorded and archived; replicability is the way in which content can be duplicated; scalability is the potential visibility of content; and searchability is the way in which all content can be found through search. The affordances of a platform create the framework and boundaries for consumers’ privacy practices and their more general perceptions of privacy.

The affordances elaborated by boyd (2010) are of an abstract, high-level nature. This means that they are not tied to specific features or buttons but, instead, enable or constrain various communicative practices and habits – more specifically, the ways in which users can interact (boyd, 2010; Bucher and Helmond, 2017). Simply looking at affordances as technical features that allow specific actions ignores the larger meanings attached to these actions and what they communicate (Bucher and Helmond, 2017; Meier et al., 2014). Further, what matters is not only what features do but also what users believe and expect them to do (Bucher and Helmond, 2017). The notion of imagined affordances captures this: they emerge between users’ perceptions, attitudes and expectations, on the one hand, and the actual functionalities of technologies and the intentions of their designers, on the other. Users’ expectations and beliefs about the function of certain features further shape the way these features are approached (Nagy and Neff, 2015).

Privacy shaped by marketplace actors

Consumers are part of an information environment composed of technologies (systems, platforms and their affordances) and the companies and marketers that create them. This information environment forms the market in which consumers provide their data to gain access to various services, and it creates the immediate use context that affects consumer actions and choices. It has traditionally been assumed that consumers can freely and independently make decisions regarding the way they use the systems provided by market actors. This places the responsibility for privacy on individuals, which is why law and regulation have relied on notice and consent regimes and privacy self-management (Hull, 2015).

According to Hull (2015), privacy self-management is not a useful principle because, first, users do not and cannot understand what they are consenting to. An information asymmetry exists as consumers, and sometimes even companies, do not know what the data will be used for in the future (McDonald and Cranor, 2008). In addition, privacy statements are long, complicated and difficult to read, largely because keeping them this way is in the interest of data mining companies (Hull, 2015). Second, even if consumers wanted to act on their privacy preferences and not use services such as Google or Facebook, the choice is not really theirs. As work, free time and social relationships increasingly move online, it is extremely difficult for individuals to resist joining these platforms and, as such, to resist consenting to data harvesting. Thus, the cost of non-consent is too high (Hull, 2015).

Hull (2015) maintains that using privacy self-management as a starting point in trying to understand consumers’ privacy behaviours has led to a problematic perception of privacy as an individual, commodified good that can be traded for other market goods. This notion of economic rationality proposes that all actions taken by individuals directly represent their preferences and, more importantly, that these preferences have been formed autonomously. In reality, technology companies are constantly trying to normalize surveillance by connecting it with notions of convenience and well-being (Bettany and Kerrane, 2016) and directing behaviours through nudging (Puntoni et al., 2020). This way, marketers are constructing the information environment for their own purposes, and individuals do not come to it fully informed; instead, they are constituted by the very environment (Cohen, 2012; Hull, 2015).

This relates to the idea of hypernudging (Yeung, 2017). Through hypernudging, digital marketers construct the information environment to the point that they shape not only the conscious decision-making process but also the unconscious intention itself. Thus, the goal is not only to know the consumer subject but also to co-create it (Yeung, 2017). Marketers frame this as hyper-relevance, a metaphor implying that these actions could lead to a world where consumers and marketers share the same ultimate goal: to create a unique and personalized world for each individual, which reinforces, rather than dilutes, consumer autonomy and power (Darmody and Zwick, 2020).

Therefore, marketplace actors play a crucial role in impacting both the formation of our privacy preferences and the ways in which we act on them. Placing emphasis on the context within which decisions regarding privacy are made means that we need to consider how the context has been constructed by different agents with varying objectives. Through specific affordances and social cues, marketers create an environment that affects both consumers’ actual chances of taking action and their conception of these chances.

Shift 3: From outcome to process

Building on the actors and relationships addressed in the second shift, the third shift moves us away from studying privacy as a given outcome towards studying the process through which it emerges. The focus on causal relationships, which treat privacy concepts as either affecting or being affected by other factors, ignores the question of how privacy takes shape in the minds of consumers in the first place.

This adds the larger socio-cultural context into the mix as the conception of what is and what should be private is constructed in society through prevailing narratives and meanings negotiated in cultural and social contexts (Watson and Lupton, 2020). As Solove (2008: 74) maintains, privacy is not an inherent preference; it is socially constructed and, thus, ‘a product of norms, activities and legal protections’. No concept or piece of information is inherently private. Instead, it is transferred into the private realm through time and in interaction with cultural and social contexts. Although the influence of the context or the surrounding culture in relation to privacy concerns has been acknowledged (e.g. Martin and Murphy, 2017; Pavlou, 2011; Smith et al., 2011), these studies treat the socio-cultural environment as merely a factor impacting an outcome. This approach fails to address the way in which the conceptions and meanings of privacy are de facto constructed within the socio-cultural environment.

Societal conceptions of privacy are rooted in the larger discussion about surveillance in society, and I shall now consider two distinct takes on this: surveillance capitalism (Zuboff, 2019) and surveillance culture (Lyon, 2017). These two approaches have very different views on consumer agency within the surveillance system and, thus, also produce very different cultural narratives about privacy and its place in society. At the end of this part, I will touch on these contradicting views and their connection to the social context within which they are reproduced.

The narratives of surveillance capitalism and surveillance culture

In the context of sociological scholarship, Zuboff (2019) approaches the surveillance society through surveillance capitalism, which refers to a new marketplace in which consumer data is the main capital. Within the surveillance capitalist system, human experience is treated as raw material that can be translated into behavioural data. This data is then packaged into what Zuboff (2019) calls prediction products, which are sold to business customers interested in anticipating individuals’ behaviour. Zuboff (2019) explains that Google was the pioneer of surveillance capitalism and discovered the ‘behavioural surplus’: the capture of more data than is needed for the upkeep of the service. These systems of surveillance capitalism are firmly tied to the idea of hypernudging (Yeung, 2017) and to the construction of the information environment and consumer subjects by market actors.

According to Zuboff (2019), the systems of surveillance capitalism gained momentum through the neoliberal economic and political environment, a prevailing need for individualization and personalized experiences, and the idolization of Silicon Valley ideologies and entrepreneurs. She maintains that another essential ingredient was the way in which individuals were kept ignorant of the changes occurring, which was achieved by selling them the idea that these economic practices were an inevitable consequence of digital technology. In the Western world, the narrative goes, technological progress is inextricably bound up with economic growth and social development and should therefore not be resisted. Thus, if there are some side effects to this progress, they are something that society must learn to live with. However, according to Zuboff (2019), even if technological development is inevitable, the systems of surveillance capitalism are not.

Zuboff (2019) further argues that individuals within the new capitalist system are passive agents who have been made to feel defeated and stagnant. However, this view has also been challenged. Lyon (2017) maintains that consumers engage as active members in what he describes as surveillance culture. Watching has become a way of life as individuals check up on others on social media or install home security systems. More importantly, the picture of passive acceptance of the inevitability of surveillance capitalism is not entirely accurate, as individuals respond to surveillance in varying ways. Micro-level responses to surveillance occur in everyday online interactions, even if individuals are not fully aware of them. In addition, there are larger, more organized movements and shared agendas that seek to resist the current condition. Thus, individuals are not only subject to power but also have agency as subjects of power (Lyon, 2017).

This resonates with CCT scholarship, as many studies stress consumer agency, painting a picture of an empowered consumer who can live freely within market environments and take advantage of the opportunities they provide (Askegaard and Linnet, 2011). Similarly, in relation to privacy, Yap et al. (2012) highlight the active role and agency of consumers in their privacy management: they do not just passively surrender to the actions of companies but, instead, desire to be sovereign, in charge and in control. Thus, in the bigger picture, the culture of surveillance and conceptions of privacy are socially constructed through the active agency of individuals, which suggests that they can also be challenged and reconstructed by the same individuals (Lyon, 2017).

The social construction of privacy

Public opinion and cultural narratives about privacy are built on the notions of surveillance capitalism and surveillance culture. Regardless of whether one fully buys into the idea of a helpless individual within the surveillance capitalist system, it would be foolish to ignore its impact on the discourses circulating on mass and popular media. We are constantly exposed to the inevitability narrative: technological development and its corollary, surveillance, are inevitable and the only way for societies to flourish. Even further, the way in which privacy is conceived by individuals and ascribed meaning is largely shaped by notions of ‘privacy is dead’ or that privacy stands in the way of great development and innovation (Cohen, 2013). Therefore, it is no wonder that feelings of helplessness surface (Andrejevic, 2014; Draper and Turow, 2019). On the other hand, more in line with the notion of a surveillance culture (Lyon, 2017), the cultural discourse highlights a sovereign consumer with core values such as autonomy and empowerment (Yap et al., 2012).

However, these two approaches are also intertwined, as the cultural stories influenced by surveillance capitalism become part of surveillance culture, which is created and maintained through the actions of individuals who both strengthen and oppose this culture and its narratives (Lyon, 2017). To look at individuals only as passive acceptors of the surveillance capitalist paradigm would ignore their agency and their varied responses, understandings and affects when it comes to surveillance. Therefore, it is important for the study of privacy to place further emphasis on individuals as active agents in creating and maintaining culture. Indeed, previous work has already recognized the ways in which individuals can, for instance, contest the dominant datafication narratives through alternative imaginaries (e.g. Kazansky and Milan, 2021; Kennedy et al., 2015; Lehtiniemi and Ruckenstein, 2019).

Still, to close the loop further, Watson and Lupton (2020) note that consumer agency takes place within a certain socio-cultural context, and as a result, individuals draw on their understanding and experiences of the environment in which they live. This also includes the more immediate social contexts within which individuals live their lives and within which these narratives are negotiated. Steeves (2009) asserts that privacy works as the line between the self and others that is negotiated between social actors in daily encounters; thus, it is constituted only through social interaction.

Nissenbaum (2010) highlights the contextual nature of privacy and conceptualizes a privacy theory based on ‘contextual integrity’. Here, the focus is on an appropriate flow of personal information, depending on the context, the type of information being shared and the social roles of the sender, subject and recipient. Consequently, a privacy violation occurs when information moves from a context where sharing it is acceptable to another where it is not. Marwick and boyd (2014) develop this idea even further in their discussion on ‘networked privacy’. They suggest that information norms and contexts are co-constructed by participants and always shifting, which means that individuals do not always have complete control or understanding of these contexts. Further, according to Watson and Lupton (2020), social relations and other consumers affect both how privacy is conceived and the actions taken in order to overcome privacy-related issues. Thus, problems related to privacy are seen first and foremost as social.

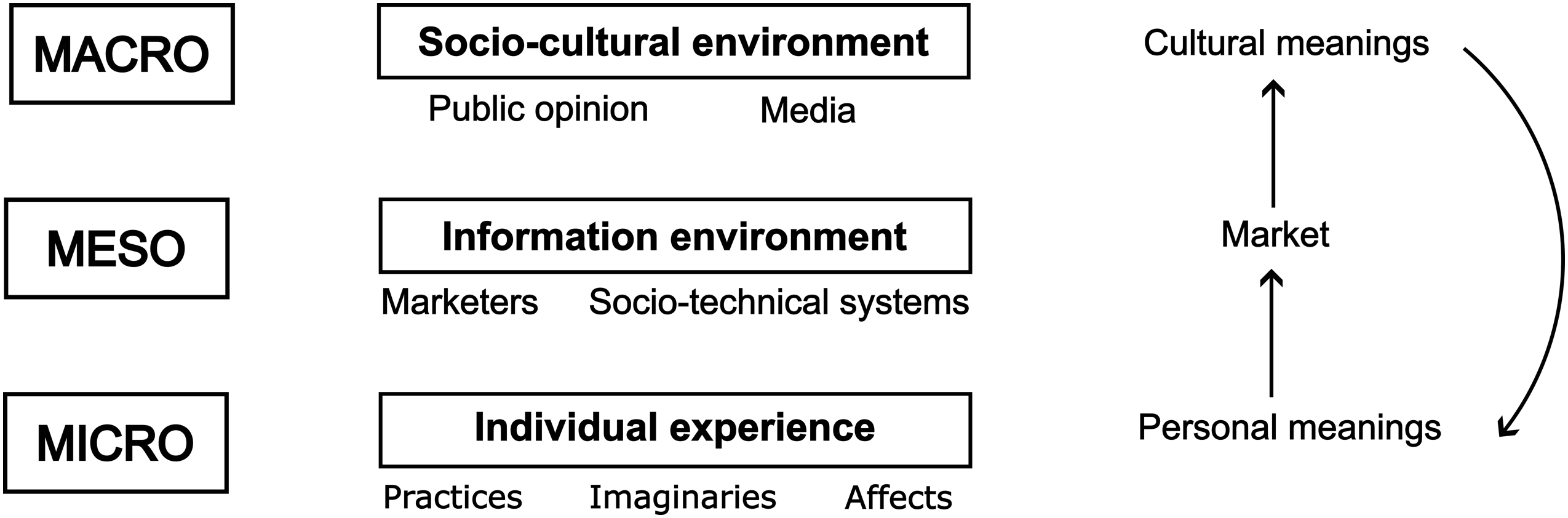

To summarize, even when consumers take action, they are not fully autonomous, as they cannot be separated from their socio-cultural setting, including both the immediate social environment and the larger cultural environment. The individual, social and cultural levels are forever intertwined in a dynamic three-way relationship in which none can be understood without the others. Moreover, the market creates another layer through which consumer agency takes place and personal and cultural meanings are renegotiated. This yields three levels of analysis through which privacy can be explored: the micro, meso and macro. These levels will now be used to summarize the perspectives discussed above and to structure a roadmap for future research.

Discussion

Because of the prevalence of concepts such as the privacy calculus and the privacy paradox in consumer research, privacy has been reduced to a one-dimensional concept that focuses on how individual behaviour can be predicted in specific contexts. To break away from this, the first step is to extend the level of analysis from the individual to include the micro, meso and macro levels. To move away from an exclusive focus on the individual and their privacy management practices and disclosure behaviour and, consequently, better understand privacy, we need to look at how individual experience, the market and the larger cultural context intertwine and overlap in a dynamic relationship.

On the micro level, the analysis focuses on the consumer lifeworld and individual experience. Consumers draw from the macro and meso levels as they make sense of privacy through their day-to-day encounters with the information environment and the larger cultural environment. On this level, cultural narratives and meanings are negotiated as part of everyday personal experience. This experience is constructed through the interaction of practices, imaginaries (Lyon, 2017) and affects (Watson and Lupton, 2020).

On the meso level, market systems and actors are at the heart of the analysis. Marketers and companies interested in individuals’ data, together with technologies and their affordances, create an information environment. This information environment can be seen as the market in which individuals use and engage with various systems and, while doing so, make decisions regarding their privacy and data.

Lastly, on the macro level, the focus is on society and culture as public opinion interacts with mass and popular media. Public opinion about privacy is formed through prevailing cultural narratives, understandings and meanings that are circulated, strengthened and reproduced through media. For their part, individuals both oppose and reinforce these narratives and build and preserve surveillance culture.

As Figure 1 illustrates, the three levels are interconnected, as personal meanings and conceptions are negotiated in the market, which provides the more immediate social and use context in which privacy takes shape. These conceptions support or contest the larger cultural meanings that eventually provide the answer to the question of ‘what is normal’ (Grace, 2021) when it comes to privacy. Again, at the level of individual experience, these cultural meanings are internalized and personalized in day-to-day life experiences.

The micro, meso and macro levels of analysis in privacy research.

Moving back and forth between these levels allows for an iterative analysis of cultural and individual meanings. We can capture the way in which consumers interpret and negotiate meanings within their personal experiences and how these interpretations in turn preserve and challenge consumer culture (Grace, 2021). Between these two levels, we can further consider the intervening role of markets. Through market actors and technologies, both personal and cultural meanings are reshaped as they move from one level to the other.

Avenues for future research

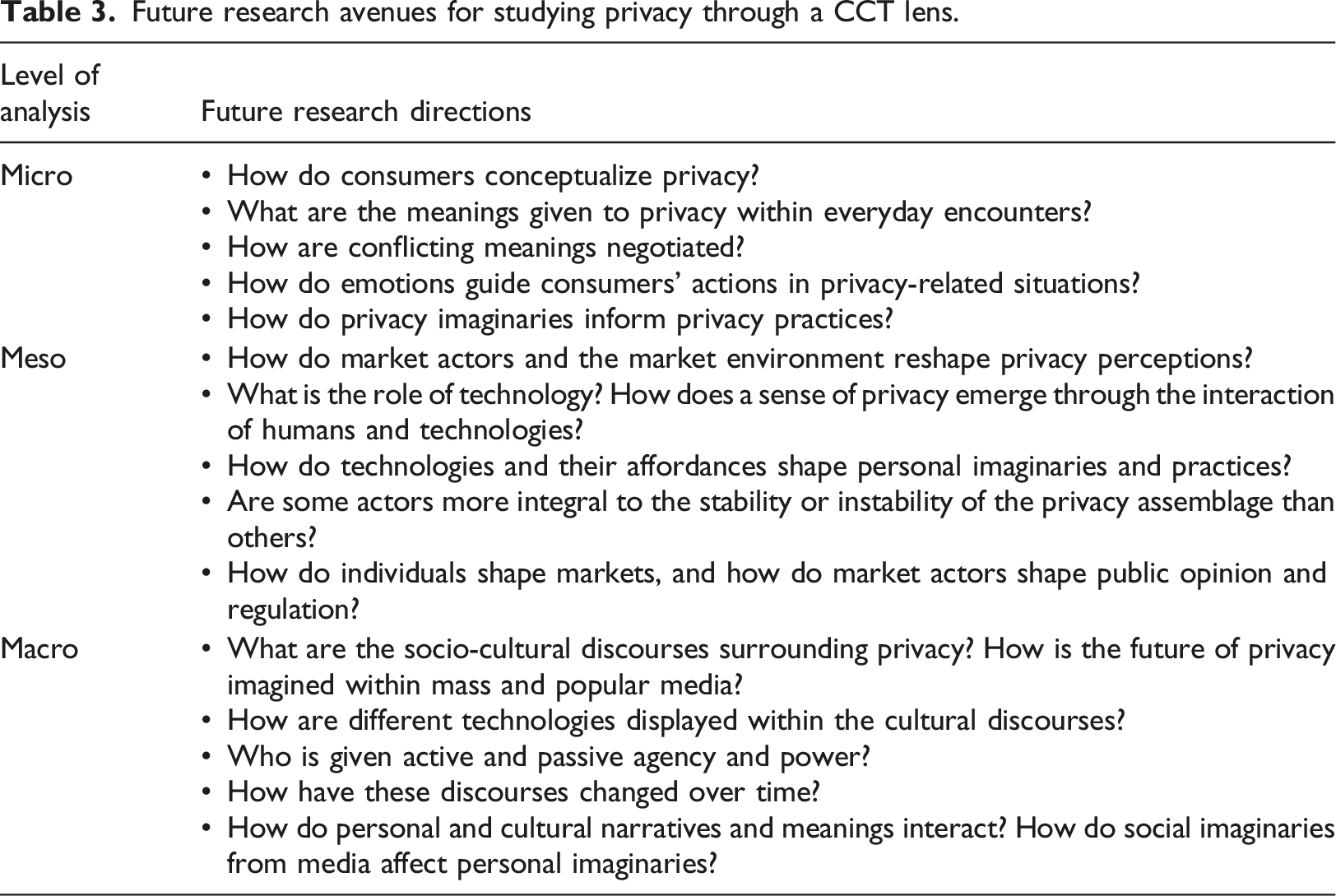

Future research avenues for studying privacy through a CCT lens.

First, a CCT perspective allows the exploration of the lived experiences of individuals and the way in which their sense of privacy takes shape within them. As studies to date have approached privacy only indirectly, through associated concepts (Stewart, 2017), a phenomenological lens on the micro level allows us to tap into the essence of privacy and ask questions relating to the meanings given to it in everyday experiences, the role of emotions and the ways in which privacy practices and imaginaries inform each other.

Second, a CCT approach provides the means to look beyond the individual to understand how personal experiences are embedded in larger socio-cultural systems on the macro level. In this way, we can raise the level of analysis to the socio-cultural context and approach privacy as a construct shaped and negotiated within social contexts and encounters. This level of analysis has been missing from privacy studies in consumer research and can pave the way for interesting research questions related to the socio-cultural discourses surrounding privacy, including the various actors involved in and steering these discourses, their agency and the way these narratives have changed over time.

Third, on the meso level and with the help of assemblage theory, we can begin untangling the various material and non-material components involved. Future research can try to recognize these components, delve into the relationships between them and explore whether some components are more integral to the stability of the assemblage than others. In this way, we can place emphasis on the intricate and ever-shifting nature of privacy and the way it emerges within the hybrid environment in which consumers live, thus challenging the view of privacy as a static construct (Watson and Lupton, 2020).

Lastly, as the levels are interconnected, researchers could also ask how cultural imaginaries are internalized within individual experiences or how market actors shape public opinion and regulation. Overall, a CCT approach to privacy provides the means to highlight privacy as strongly embedded in and mediated by socio-technical arrangements. This theme cuts across all the levels and redirects focus from human actors alone, such as consumers or firms, towards also acknowledging the role of technologies. The importance of specific technologies and the ways in which they interact with, affect and are affected by consumers offers interesting avenues for future research.

Conclusion

Privacy is and will continue to be an important and relevant topic of study in consumer research. However, the current view of consumer privacy is rather one-sided and does not fully account for the hybrid and data-embedded reality in which consumers live. Therefore, we need to reconsider the lens through which we approach privacy so that we can better tap into its essence. Applying a CCT perspective will allow us to capture the multi-layered and fluid nature of privacy, calling attention to both personal and cultural meanings as well as to the way in which a sense of privacy is embedded in socio-technical arrangements and market dynamics.

In this paper, I have highlighted three necessary shifts in research focus: from prediction to experience, causality to systems and outcome to process. This revised frame of reference is also necessary from a managerial standpoint, as marketers urgently need a deeper understanding of privacy. It has been suggested that firms should increasingly prioritize privacy and develop it as a competitive advantage (Casadesus-Masanell and Hervas-Drane, 2015; Martin and Murphy, 2017). Consumers need to be involved in the dialogue (Martin and Murphy, 2017) so that privacy practices can be aligned with their actual needs and preferences and not just with marketers’ conceptions of these needs and preferences. Developing privacy practices and communication in a consumer-centric way begins with discovering the core of the construct. Thus, when companies say ‘we care about your privacy’, they need to be genuinely aware of what the word means for consumers and how this meaning is embedded in the surrounding cultural context.

The aim of this paper was to discuss perspectives that could help us better understand privacy and its meanings in the lives of individuals. The insights gained from this inform a second, equally important question: how can we better understand privacy in society or, further, how can we better protect privacy? This question is beyond the scope of this article but relates to theories of digital ethics. Floridi (2019) maintains that ethics should inform regulation through themes such as transparency, fairness and non-discrimination. Building on this, important work has been conducted in relation to the notion of group privacy (Floridi, 2017). As Floridi (2017) explains, users of big data do not, in essence, care about individuals; instead, they care about which groups individuals belong to and connect with. Even if we are able to protect individuals and their information, groups of people can still be identified and targeted, creating a basis for discrimination. For this reason, more weight should be placed on the protection of group privacy. In general, to make progress in privacy protection, privacy should be seen not just in terms of individual value but also in terms of social value (Hull, 2015; Regan, 2015; Solove, 2008). Privacy is needed to maintain the social networks within which individuals can flourish. Therefore, a society in which privacy is present is better for everyone (Hull, 2015).

To borrow the metaphor of MacInnis (2011: 143), my aim in this paper was to ‘move the dial in the kaleidoscope to reveal a new image’ so that we could come to see privacy in a new light, with an appreciation for its diverse colours, shades and shapes. I hope that I have managed to draw attention to the many intriguing layers of privacy that are yet to be discovered and to inspire researchers to grab the kaleidoscope and move the dial.

Footnotes

Conflict of interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.