Abstract

This article examines the interaction between deaf and hearing interlocutors in order to demonstrate how understanding (and misunderstanding) can be expressed and inspected through the situated use of multimodal resources. In this communicative situation, participants have asymmetrical experiences of being deaf and being hearing, and ‘codified’ (either speech or sign-language) resources are little shared among participants. The multimodal analysis of an interactional sequence between a young deaf child, her deaf friend and her hearing mother demonstrates ways in which participants use semiotic resources to take, execute and give turns in the presence of sensory asymmetries. The organization of turn taking in this sequence provides insights into the ways in which understanding (or lack of it) can be demonstrated, monitored and co-constructed by participants throughout the interaction. The findings demonstrate that turns offer a useful point of analysis for the recognition and inspection of signs of understanding in the context of sensory asymmetries but there needs to be a qualitative orientation to assessing this. This contribution to the research on situated multimodal sign-making underlines the need for the development of multimodal frameworks that can account for, and effectively document, situated meaning-making beyond ‘codified/linguistic’ resources.

Introduction

In this article we examine interaction between deaf and hearing interlocutors to demonstrate how understanding (and misunderstanding) can be expressed and inspected through the situated use of multimodal resources. The context of deaf–hearing interaction that we examine presents a particular communicative situation where participants have asymmetrical experiences of being deaf and being hearing and where ‘codified’ (either speech or sign-language) resources are little shared among participants. After a review of the literature relevant to the analysis of turn organization, understanding and deaf–hearing interaction, our analysis of an excerpt of deaf–hearing interaction focuses on two aspects, namely:

The ways in which semiotic resources are assembled and exploited for the organization of turn taking and how sequences of communicative actions are built. From this we offer some insights into the interlocutors’ interactional knowledge, skills and communicative strategies, as well as a redefinition of turn as an uninterrupted series of communicative actions (with or without the presence of utterances).

The ways in which understanding is manifested through turn organization and the resulting unfolding of the action. Our conceptualization of understanding in this context encompasses (i) the understanding of the message (understanding what) and (ii) the understanding of what is required for meaning-making in this context (understanding how).

We examine face-to-face interaction and signs of understanding in the presence of sensory and communicative asymmetries, that is, where there are different experiences of being deaf/hearing and where speech and sign language are not readily accessible to all participants (Kusters, 2017). This study of interaction among deaf and hearing interlocutors makes an original contribution to the study of meaning-making within the field of multimodal interaction analysis (e.g. Norris, 2017) and multimodal Conversation Analysis (e.g. Mondada, 2012), where shared auditory and oral resources are more usually assumed. The analysis of deaf–hearing interaction through a multimodal framework also brings a fresh perspective to deaf education and deaf studies research that tends to focus on linguistic and sociolinguistic questions.

More widely, the methodological problems that we examine are significant for the development of ways of seeing, describing and analysing face-to-face interaction, the annotation and presentation of multimodal interactional data, and for the development of practical approaches and strategies for enhancing communication and mutual understanding in contexts where sharedness of semiotic resources cannot be assumed.

Turn organization: problematizing the definition of ‘turn’

In our analysis of turn organization, we describe the multimodal practices that interlocutors use to take, execute and offer turns. Because this interaction involves deaf and hearing participants who share little linguistic (either spoken or signed) repertoire, the notion of turn as defined by the use of linguistic resources and analysed at the level of ‘talk’, as well as that of ‘speaker’ and ‘listener’, are problematic (Goodwin, 1979, 1981; Sacks et al., 1974). We thus set out terms of reference for our analysis of turns within a multimodal framework that extends the focus on syntactic, prosodic and pragmatic resources to include gesture, gaze and bodily posture (Goodwin, 2000) and a more in-depth analysis of the multimodal practices involved in taking ‘turns-at-talk’ (Mondada, 2007: 197).

To embrace the importance of the deployment of multimodal resources in deaf–hearing turn-taking we suggest that ‘turns’ can be usefully equated with an uninterrupted series of communicative actions, performed both in simultaneity and in sequence by a given participant in an interaction. As the smallest meaning unit, that is, where something is communicated, actions are contingent on what has gone before and this is important for our analysis of understanding in this context. The analysis of chains of actions, or the unfolding of the actions, we argue, will provide insights into the understanding of what is meant and also of how meaning can be made given the resources available to interactants. Communicative actions are conceptually similar to Norris’s (2004) notion of lower-level actions. We prefer to avoid Norris’s concept, however, because she relates lower-level actions to higher-level ones in defining their function within the interaction. Given the nature of our data and the focus of our analysis (i.e. to examine how mutual understanding is achieved and co-constructed, rather than assuming understanding as a given and analysing shifts in focus of attention, as is done by Norris), we avoid making any inferences about possible relations between lower- and higher-level actions. In our analysis, a turn is defined by its boundaries, i.e. an interactant starting and stopping an uninterrupted series of actions directed towards (any of) the other interactants. While Conversation Analysis research has increasingly acknowledged that turn boundaries can be signalled by embodied resources (such as gaze shifts or body movements) and that turns themselves can be constituted by other semiotic resources along with speech (for a detailed review, see Mondada, 2014), the analysis of the interaction in the present study expands on the notion of turn, as speech (or sign language) can be totally absent and yet the actions performed are fully communicative; hence the distinction between ‘turn and sequences of actions’ (p. 140) is not tenable in our case.
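For readers who work with time-aligned annotations, the definition of a turn given above can be sketched as a simple data structure. The following is a minimal illustration only, not the procedure used in this study: the names `Action`, `Turn` and `group_into_turns` are hypothetical, the timings are invented for the example, and the sketch makes the simplifying assumption that a change of participant marks a turn boundary. It therefore does not model overlapping or competing turns, nor whether an action is directed towards another interactant.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass(frozen=True)
class Action:
    """One annotated communicative action (hypothetical record layout)."""
    participant: str  # e.g. "M", "A" or "P"
    resource: str     # e.g. "gesture", "gaze", "touch", "speech"
    start: float      # seconds from the start of the excerpt (invented values)
    end: float


@dataclass(frozen=True)
class Turn:
    """An uninterrupted series of actions by one participant."""
    participant: str
    start: float
    end: float
    actions: tuple


def group_into_turns(actions):
    """Group actions, ordered by onset, into maximal runs by one participant."""
    ordered = sorted(actions, key=lambda a: (a.start, a.end))
    turns = []
    for participant, run in groupby(ordered, key=lambda a: a.participant):
        run = tuple(run)
        # A turn starts with its first action and ends with its latest offset.
        turns.append(Turn(participant, run[0].start, max(a.end for a in run), run))
    return turns


# Invented example: no speech is required for a series of actions to count as a turn.
example = [
    Action("M", "gesture", 0.0, 4.0),
    Action("M", "touch", 4.0, 9.0),
    Action("M", "gesture", 4.0, 9.0),
    Action("M", "gaze", 9.0, 11.0),
    Action("P", "touch", 11.0, 12.0),
    Action("P", "gesture", 12.0, 13.0),
]
turns = group_into_turns(example)
for turn in turns:
    print(turn.participant, turn.start, turn.end, [a.resource for a in turn.actions])
```

In this sketch simultaneous actions (here, touch and gesture by the same participant) fall within a single turn, which mirrors the point that a turn may be executed both in simultaneity and in sequence.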

Our first aim is to document how turns reconceptualized in this way are taken, executed and given in this context of sensory and semiotic asymmetries: what essential conditions are required, and what shared resources are mobilized. This analysis of turn organization in a situation where speech and sign language are not readily available or accessible to all participants offers potentially novel insights into the research on turn organization as multimodally constituted through the situated use of resources for managing the interaction and their situated making for the expression, co-construction and negotiation of meaning.

Signs of Understanding: Recognition Through Situated (Inter)Action

Through an analysis of turn organization and the resulting unfolding of the sensorially and communicatively asymmetrical (inter)action we aim to identify ways in which understanding is expressed and assessed. Our take on understanding and how this is manifested draws on the work of Kress (2009), Bezemer and Kress (2016) and Mondada (2011) and the notion of understanding as embedded in the situated actions of the interlocutors and demonstrated through the contingency and relevance of these actions.

In building on this work, we examine ways of recognizing understanding in the context of deaf–hearing communication. Interactional analysis in this context usually focuses on the resources of speech and/or sign-language and writing, and we suggest that this only provides partial insights into the understanding of the interlocutors. In our analysis we therefore aim to discover the other ‘inaudible’ and ‘invisible’ ways in which understanding is demonstrated, thus broadening our recognition of the resourcefulness of deaf and hearing participants in interaction (Bezemer and Kress, 2016: 5).

For understanding to take place, there has to be an understanding of the meaning that is intended (the what) but also an understanding of what is required for meaning-making in this context (the how). We are thus investigating the understanding of ‘what’ and ‘how’, arguing that the latter dimension is crucial to this context and that both can be perceived in the analysis of the unfolding of the interlocutors’ actions. Working with Mondada’s (2011) framework, we analyse the sequence of actions between participants, achieved through gesture, gaze, facial expression, posture, as well as the use of objects and space, that demonstrate understanding, or not understanding.

Deaf–Hearing Interaction: Context and Research

Language development and communication

Childhood deafness impacts significantly on early interaction and language development, and therefore presents significant issues for understanding in all face-to-face interactional contexts (Marschark and Hauser, 2008; Peterson and Slaughter, 2006). The substantial body of research in this field demonstrates the importance of intervention with children and families at an early stage, when exposure to a natural signed or spoken language is crucial (Mayberry et al., 2011). Neonatal hearing screening, adopted in almost all industrialized countries, now ensures early identification and intervention, ideally before 6 months of age (Niparko et al., 2010). Sophisticated hearing technologies – and especially Cochlear Implants (CIs) – have improved deaf children’s access to auditory information, although these technologies do not ‘restore’ hearing or provide the detailed auditory input received by hearing children (Peterson et al., 2010).

The importance of cooperative early interaction that elicits rich communication between deaf infants and their caregivers is a strong theme in this research with much attention given to the differences between the communication strategies of deaf and hearing adults (Depowski et al., 2015; Loots and Devisé, 2003). Deaf caregivers intuitively use more touch and visual communication and strategies that are adapted to the visual channel (Bailes et al., 2009; Meadow-Orlans et al., 2004). Under these conditions, deaf children’s sign language development parallels spoken language development in terms of early babbling, articulation errors, vocabulary and grammatical development (Lederberg et al., 2013).

However, for the majority of deaf children, who are born to hearing parents with no previous experience of deafness, these conditions do not prevail (Mitchell and Karchmer, 2004). For most deaf children, interaction at home and at school is situated within contexts where spoken language is salient and where adjustments that acknowledge the importance of visual communication, although made, may not be intuitive or embedded in the established communicative culture (Loots and Devisé, 2003; Loots et al., 2005). These sensory asymmetries can be disruptive of sustained interactions among deaf–hearing dyads (Gale and Schick, 2008). Hearing parents thus benefit from support in developing multimodal (gesture and vocalization) communication strategies that are contingent on the communicative acts of the child (Roberts and Hampton, 2018). The imperative for parents and teachers to facilitate and document deaf children’s progress in language acquisition has motivated extensive early intervention programmes that focus on linguistic competence and skills (Yoshinaga-Itano, 2013).

In this and the wider literature on deaf children’s language development, embodied actions and gestures, and multimodal feedback among deaf–hearing interlocutors, are usually conceptualized as part of a developmental continuum towards language fluency (Volterra et al., 2017). Pertinent to this study, research on turn-taking in deaf–hearing interaction highlights the issues of establishing shared or joint attention among deaf and hearing interlocutors and specifically the role of eye gaze and touch within these contexts (Spencer, 1993; Swisher, 1992).

Attention has also been given to embodied forms of communication among deaf and hearing peers such as the use of gesture, gaze and touch to initiate interactions and take turns in play (Bobzien et al., 2013; Keating and Mirus, 2003). However, descriptions of interaction in these contexts tend to use the terms ‘multimodal communication’ or ‘multimodal channels’ to differentiate between sign and spoken language. The use of the term ‘mode’ is confusing here and in similar discussions (e.g. Allen and Anderson, 2010). A meta review of this work reveals a focus on communication ability and social skills rather than on the unfolding of interaction through the use of multimodal resources (Xie et al., 2014).

Multimodal analysis

The use of multimodal analysis has played some part in this and other areas of deaf–hearing interactional research. From a language development perspective the role of embodied semiotics in deaf children’s sign language development (such as pointing gestures) has been extensively researched since the seminal work on early sign language development (Caselli and Volterra, 1990). The analysis of ‘non-verbal’, ‘pre-linguistic’ or ‘pre-verbal’ skills in deaf children’s spoken language development is also well documented, often in relation to measuring the efficacy and affordances of hearing technologies (e.g. Tait et al., 2001).

Within the analysis of interpreted interaction, embodied resources such as eye gaze, nodding, pausing, waving and tapping are recognized as strategies for establishing a shared focus of attention and coordinating turn-taking (Berge, 2018; Coates and Sutton-Spence, 2001; Metzger et al., 2004; Napier, 2007; Van Herreweghe, 2002). Socio-linguistic studies of deaf identity and culture have tended to rely more on conversation analysis techniques that incorporate observations on gesture and gaze for analytical purposes, and to reveal linguistic and cultural experience and expression through interaction (Coates and Sutton-Spence, 2001).

Research into the multilingual or ‘translingual’ communicative practices that occur between deaf and hearing people has also encompassed a focus on multimodality (Kusters, 2017). Within this context, scholars are beginning to investigate the simultaneous communicative actions involved in deaf–hearing interaction such as the use of mouthing, eye gaze and body posture while signing (Vermeerbergen et al., 2007), the use of gesturing while speaking (Kusters, 2017), the sequential ‘chaining’ of modes (signing, mouthing, fingerspelling, pointing) to support text-related learning activities (Tapio, 2014) and the blended use of different features of sign and spoken language that serve to make visible the structure of English within a sign language utterance (Berent et al., 2012; Holmström and Schönström, 2016). In this developing body of work, the multimodal aspects of communication are recognized (Dahlberg and Bagga-Gupta, 2013; Lindahl, 2015; Holmström and Schönström, 2016; Snoddon, 2017) but the interpretative frameworks are primarily language-led.

In sum, extant studies go some way to documenting the semiotic resources employed in deaf–hearing interaction albeit within an overall focus on language use and language ability. Expanding from this, we attempt to show how multimodal analysis can be used to go beyond documentation/description of the forms used to provide an analysis of understanding, which would be immediately relevant to parents and educators.

Summary of research questions

Our overarching question is concerned with how understanding is accomplished in a communicative context where there are sensory and linguistic asymmetries. To address the question, our analysis has two sub-questions, namely:

How do participants use semiotic resources in the unfolding of the interaction, i.e. to take, execute and give turns?

How is understanding (or lack of it) demonstrated, monitored and co-constructed by participants throughout the interaction?

Data Collection and Analysis

The interactional data examined were collected as part of a series of case studies of multilingual deaf children and their families (Swanwick et al., 2016). Data collection involved video-recordings of deaf children interacting with their parents in a UK school, along with biographical information from the teachers, parents and children themselves about the participants’ language experience and use in different contexts.

The interactional scenario that we examine in the present work involves a young deaf girl (A), her hearing mother (M) and a school friend (P), who is also deaf, interacting together in a room of the two girls’ school. Observation was carried out by a hearing researcher who was well known to the mother and both girls in the educational context as a former teacher of the deaf. Prior to the observation, the researcher had explained to M her current role as a researcher with a university project about communication and outlined the aims of the project. This was communicated in writing as part of the consent process and through face-to-face communication with interpreter support. The venue of school rather than home (both options were given) for the observation was the choice of the mother.

On the day of the observation, the researcher explained again to M that she wanted to observe how the mother and her daughter communicated. She asked M to engage A in showing her some of the classroom games and activities. Also present at the other end of the room were a teaching assistant and two other pupils engaged in a different activity. One of the pupils (P) is good friends with A. The researcher observed and video-recorded a one-hour session during which she situated herself apart from the interaction and did not engage with the participants (although, as will be seen, the participants acknowledge her presence during the interaction).

As will be seen, the planned nature of this observation that takes place in school and the research agenda that is shared with M has a major influence on the direction that the interaction takes. This dynamic constitutes important contextual information; however, our analytical focus is on turn-taking and understanding; hence, rather than a ‘disturbing’ variable for the analysis findings, the presence of the researcher (referred to both by M and A in their turns) is fully part of the content of the interaction and the understanding that is being co-constructed and negotiated.

Participants

A is a 5-year-old girl whose family origins are Lithuanian; she and her family arrived in the UK less than a year before the observation. A has a bilateral, profound sensorineural hearing loss. She had hearing aids fitted at 9 months old and a year before filming (at 4 years old) had a cochlear implant fitted to the left side in Lithuania. Some post implantation complications were later resolved in the UK. Mother and A’s teachers reported that A is getting used to her implant and likes to wear it in combination with her hearing aid at home and at school. A started school in the UK less than a year before the observation, where she has been learning BSL and English.

M (A’s mother) speaks mainly Lithuanian (and occasionally Russian) at home with her husband and A’s siblings; to support communication, she also uses some English and British Sign Language (BSL), which she is learning (at the time of filming, M has a few signs and beginner English at one-word/vocabulary level).

A’s friend P is also deaf and is of Roma-Slovakian origin. She has a bilateral, severe hearing loss and wears two hearing aids. At home, P’s family speak Hungarian, Slovak and Roma. As reported by her teacher, P’s brothers and sisters sometimes also use English. P herself sometimes uses Hungarian and, when she speaks to her brothers and sisters at home, she uses English. Detailed records of P’s background and circumstances were not gathered as her involvement in the interaction was unplanned and the family subsequently left the UK. However, it is worth noting that, with her level of hearing loss and consistent use of hearing aids, there is potential for good access to spoken language depending on other contextual factors (such as the clarity of the interlocutor’s speech, the absence of environmental noise, and the child’s language knowledge, ability and motivation). For A, who has a profound hearing loss, this access will have been significantly compromised prior to cochlear implantation at the age of 4 years, that is, during the crucial years for language development.

Data transcription, sampling and analysis

A first transcription and analysis of the video-recorded session focused only on the English, Lithuanian and sign language that participants deployed, enabling a coarse analysis of the languages used (Co-author et al., 2016). Through this process, it became evident that the complexity of the communicative strategies involved in the interaction reached well beyond sign and spoken language use, and hence required a more fine-grained analysis and an annotation approach that could capture the simultaneous and multimodal features of these interactions, which are not only multilingual but chiefly situated and multimodal. We thus adopted a multimodal analysis and transcription framework for further analysis of these data, annotating the gestures, gaze, facial expression and body movements of each participant.
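To make concrete what capturing ‘simultaneous’ features can involve at the level of annotation, the sketch below stores each annotation as a time-aligned record on a named tier and looks for moments where one participant deploys two different resources at once. It is an illustrative assumption rather than a description of the transcription tool or scheme used here: the `Annotation` record, the tier names, the `simultaneous_pairs` helper and all timings are hypothetical, and the labels are chosen only to echo the kind of touch-plus-gesture combination discussed in the analysis.

```python
from collections import namedtuple

# Hypothetical record layout: one time-aligned annotation on one tier.
Annotation = namedtuple("Annotation", "participant tier start end label")


def simultaneous_pairs(annotations):
    """Return pairs of annotations by the same participant, on different
    tiers, whose time spans overlap."""
    pairs = []
    for i, a in enumerate(annotations):
        for b in annotations[i + 1:]:
            same_person = a.participant == b.participant
            different_tier = a.tier != b.tier
            # Two spans overlap when each starts before the other ends.
            overlap = a.start < b.end and b.start < a.end
            if same_person and different_tier and overlap:
                pairs.append((a, b))
    return pairs


# Invented timings, for illustration only.
annotations = [
    Annotation("M", "touch", 4.0, 9.0, "You"),
    Annotation("M", "gesture", 4.0, 9.0, "I+researcher"),
    Annotation("M", "gaze", 9.0, 11.0, "towards A"),
    Annotation("A", "gaze", 0.0, 11.0, "towards M"),
]
pairs = simultaneous_pairs(annotations)
for first, second in pairs:
    print(first.tier, first.label, "+", second.tier, second.label)
```

A language-only transcript would record none of these tiers, which is the limitation that motivated the move to a multimodal annotation framework.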

We present here the analysis of an excerpt from a one-hour video-recorded session of interaction. This excerpt (starting soon after the start of the video-recording) revolves around a disagreement that is managed and resolved by the interactants within a minute after its beginning. This excerpt has been selected because it portrays a moment of ‘crisis’ in the smooth unfolding of an interaction, where turn-taking and the mutual expression and monitoring of signs of understanding are crucial for managing the interaction and for resolving the disagreement.

A and her mother (M) have entered the room and sat down to play with some toys together. A’s friend (P) has followed and sits with them to join in. At the start of the excerpt presented, M explains to A that they are going to do some work together in the presence of the researcher, and that P will be farther away with another adult. The scenario unfolds as a disagreement with M’s proposed arrangement emerges. The interaction takes place at a table in a small school classroom prior to the start of a working session in school. There are resources in the room (books, displays of work, toys) in readiness for the working session.

In the excerpt, M accompanies her gesturing with speech in Lithuanian (she is the only participant who uses speech, except for one occasion in which P utters A’s name); in the transcription we provide (with Lithuanian glossed in English), we display spoken utterances in grey rather than black to indicate uncertainty as to what is actually heard by the two girls. A’s cochlear implant is fairly recent and we cannot assume that she can hear, let alone understand, her mother speaking (although she might rely on visual clues such as M’s mouth movements), while P, whose hearing is better developed than A’s, does not understand Lithuanian as she is not exposed to it. We will analyse each turn in sequence, focusing on the resources employed in taking, executing and giving turns (and commenting on spoken utterances when relevant) and on the emerging signs of understanding (or lack of it).

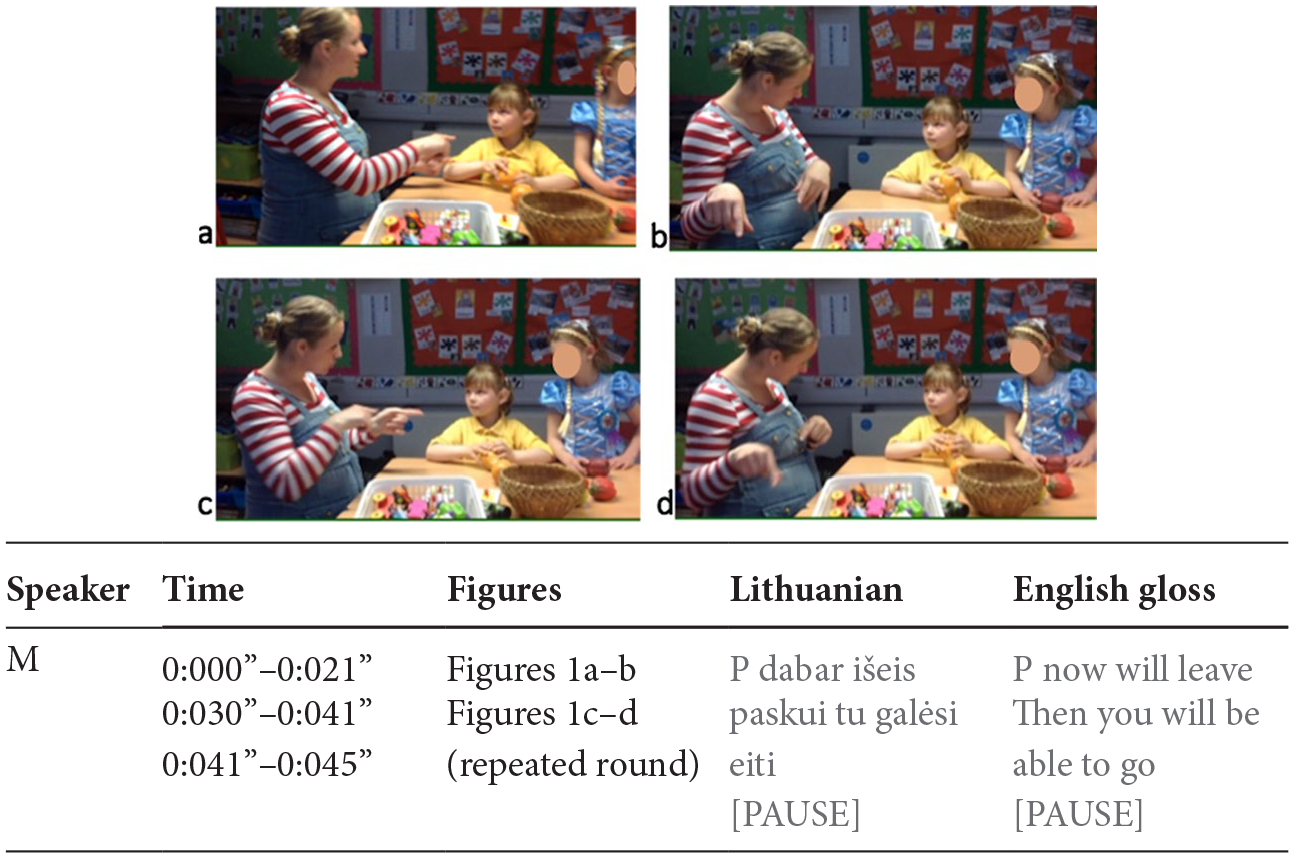

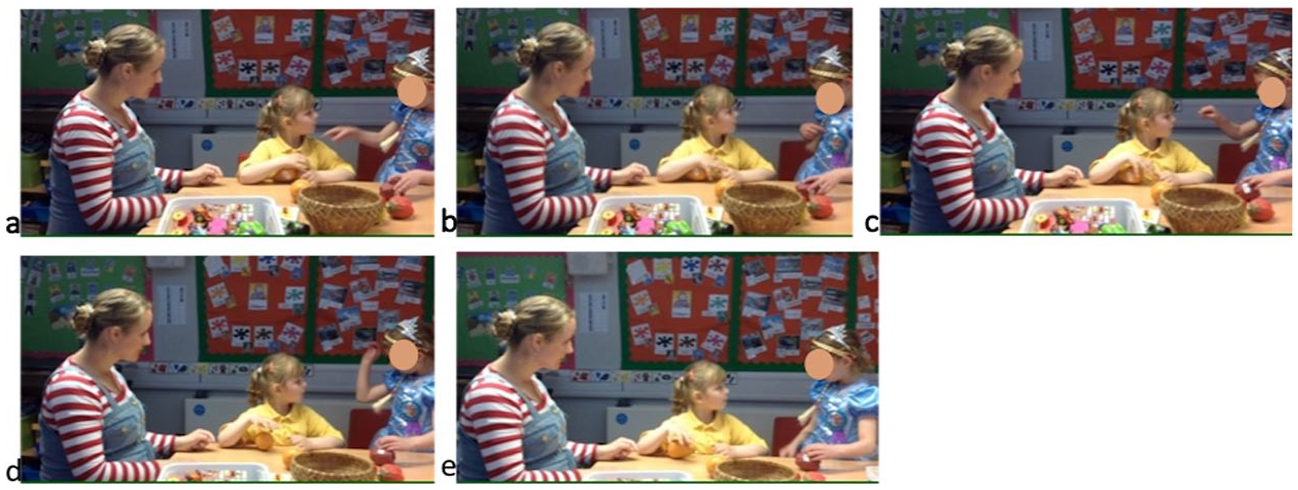

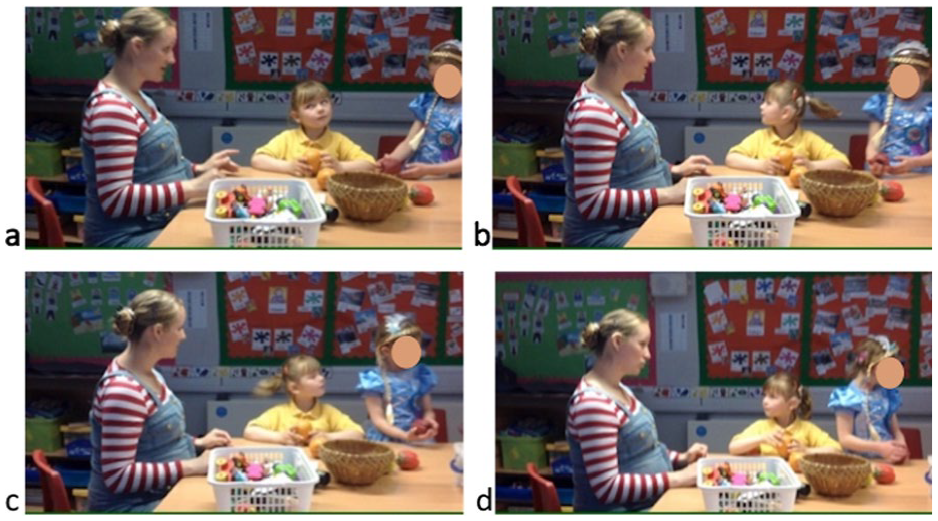

M executes her first turn addressing A through gaze; A from the start, and P immediately after, look at her. In a first round of gestures (Figure 1), M uses both hands to indicate A (Figure 1a), then a location close to herself (Figure 1b), then A again (Figure 1c) and the location again (Figure 1d). M’s speech says something different; while M gestures “A” and then “here [close to me]”, she refers to P in her speech instead. P (and possibly also A) may have heard her name being said, but we cannot make any further inferences about the two girls’ hearing/understanding of M’s speech, as P cannot understand Lithuanian and A’s CI is fairly recent.

Figure 1. M’s first round of gesture (repeated once) (0:00”–0:045”).¹

¹ As images can capture only one moment in time, while gesture, gaze, body movement and speech are continuous, each selected screenshot captures only one key moment of each gesture/gaze/body movement; the time frames indicate only beginnings and ends, while the alignment of figures with spoken time frames in the transcript is only indicative of the time span during which speech occurs in relation to the key moment represented in the figure.

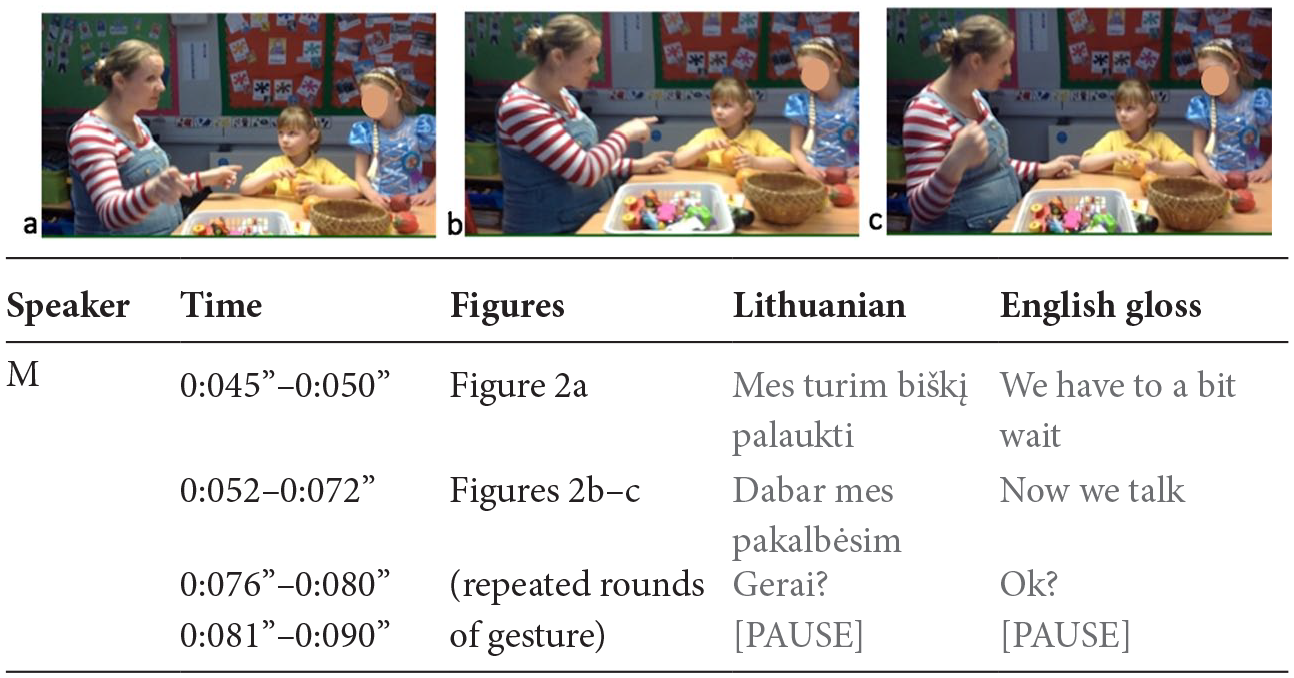

M then starts a second round of gestures (Figure 2), by indicating her daughter with her left hand and the researcher, positioned behind the camera, with her right hand (Figure 2a). At this point, she touches A’s arm with her left-hand index finger and keeps touching her, while moving her right hand, indicating A first (Figure 2b), then herself (Figure 2c), slightly moving the back of the hand towards the camera, in the direction of the researcher. While continuing to touch A’s arm with her left-hand finger, M repeats the pointing at A, herself and the researcher three times at a faster pace (for nearly 5 seconds), thus shaping a circle with her right arm that includes the three participants.

Figure 2. M’s second round of gesture (repeated three times at faster pace) (0:045”–0:09”).

In this second round, by producing a gesture with each hand, M uses the simultaneity afforded by the combination of touch (with one hand) and gesture (with the other) to produce a syncretic ‘you and researcher’ and then ‘you and I’. In the latter, by exploiting gesture’s affordances of spatial orientation and movement, moving her hand back and forth in the direction of the researcher, M can also include the researcher in the ‘you and I’ produced. Thus M combines modal resources that work through the senses available to her daughter (sight and touch) to produce simultaneous meaning (touch: ‘You’ & gesture: ‘I+researcher’), allowing her daughter to follow one hand visually while perceiving herself as included through touch. Note that in M’s speech (which, again, is not understood by P and cannot be assumed to be understood by A), the expressed actions (i.e. ‘wait’ and ‘talk’) are inflected in a generic first-person plural that does not disambiguate which participants are included (or excluded); only the gestures indicate this.

When repeating the pointing towards A, herself and the researcher the other two times, M also uses the continuity affordance of gesture to mean ‘together’, by producing a circle through arm movement and pointing to the participants included in the circle, while implicitly excluding the non-indicated participant (P). M uses the speed affordance of gesture to signal ‘repetition’ (by speeding up the second and third circling movements of the arm while pointing to the participants included in the circle). The repetition signals M’s attempt to make sure that the message is understood. Repetition appears to be a strategy used often throughout the interaction, not only by the adult (and hearing) participant, but also by A and P.

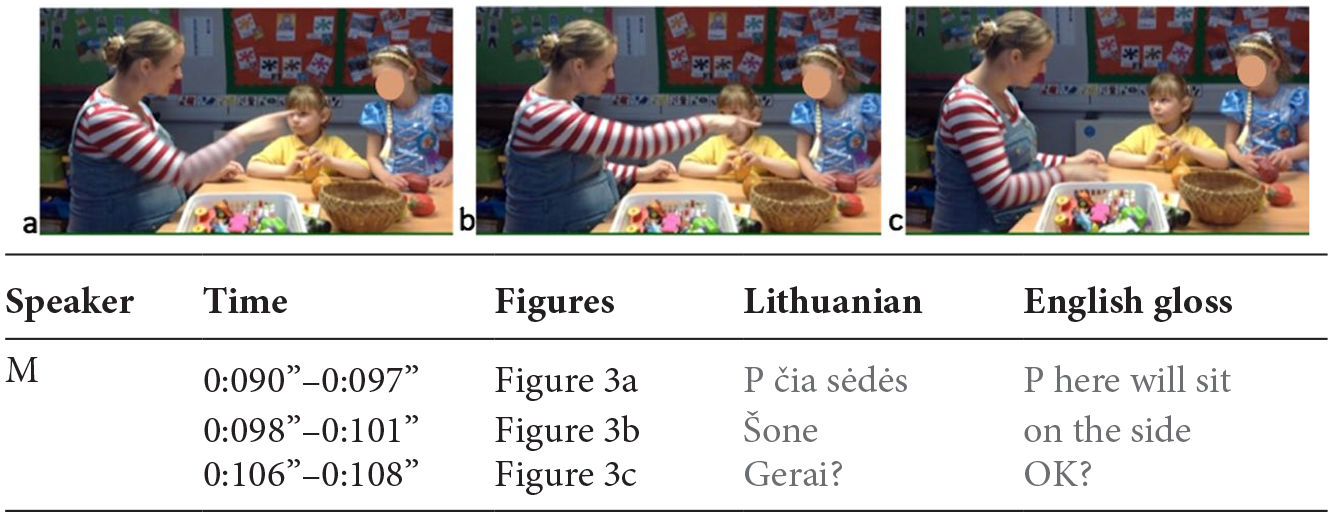

Then M starts a third round of gesture (Figure 3), by ‘de-touching’ her left finger from A’s arm while using her right arm to indicate P (Figure 3a) and a location farther away from A, towards P’s left side (Figure 3b), while shifting her gaze to look in the direction of the location, and tilting her head, seemingly to mitigate the imposition (or the possible face-threatening act) of excluding P. Here M uses the sequencing affordance of gesture and the negation of touch to indicate separation between A (with M and the researcher, as expressed in the second round) and P. M’s naming of P in speech, if heard by A and P, may serve to reinforce the meaning expressed by her pointing gesture towards P.

Figure 3. M’s third round of gesture and closing of turn (0:09”–0:11”).

Finally (Figure 3c), M signals her end of turn by putting her arms to rest, closing her mouth and lowering her head, while giving the turn to A by shifting her gaze back to A (from looking at the direction of her pointing gesture in Figure 3b). The use of putting the arms/hands in resting position is a resource used constantly by all interlocutors to signal the end of turn throughout the whole interaction, with the gaze (shift) towards one participant always signalling the selection of the addressee to whom the next turn is offered.

In M’s first turn, two aspects are significant to our questions:

M’s use of the affordances of embodiment to make meaning, combining gesture with one hand and touch with the other, to indicate the participants that are meant to be with A, and to separate (through negation of touch) the participant that is not meant to be with A. Thus she orchestrates simultaneity and sequencing of visual and tactile resources to adapt to A’s sensorial space;

M combines both repetition and reformulation; she repeats the location close to her in the first round (Figure 1) and then repeats the ‘A-researcher-myself’ participants three times in the second round (Figure 2). The second round is also a reformulation and elaboration of the meaning expressed in the first round, i.e. from ‘A-here’ only, to ‘A-researcher-myself’; in this, she offers multiple access possibilities to meaning, thus not assuming her interlocutors’ straightforward understanding at the first round of gesture.

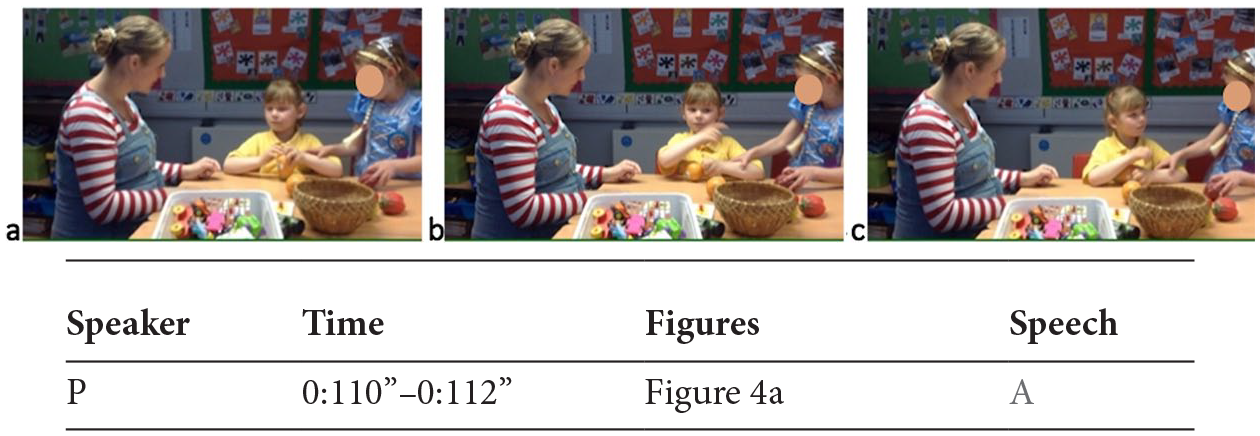

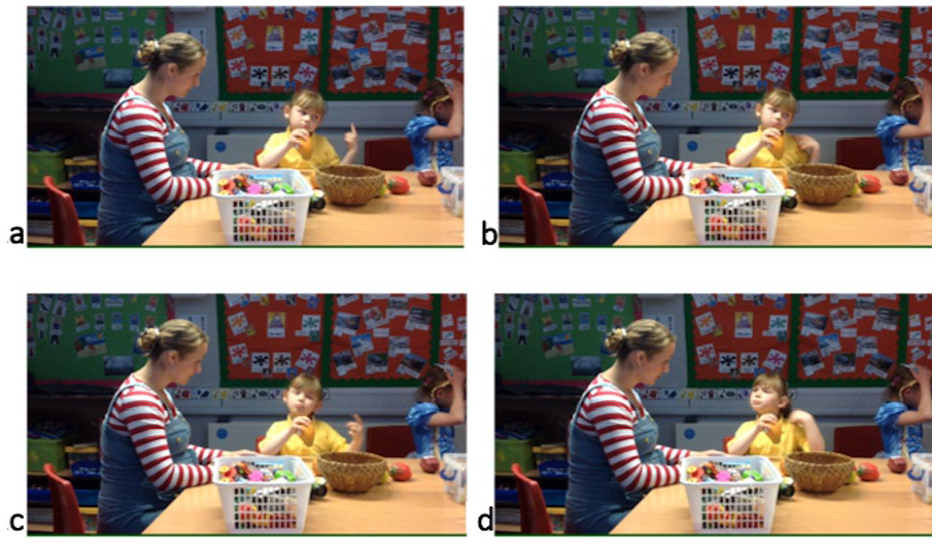

After M closes her turn, offering it to A, a negotiation of turns occurs (Figure 4). While A starts taking her turn by lifting her right hand (Figure 4a), P moves towards A, unseen by A, and, while uttering A’s name, touches A’s arm to attract A’s attention and take an (unoffered/self-initiated) turn, thus initiating a potential turn overlap with A, who has started indicating P with her right hand (Figure 4b). A does not immediately respond to P’s uttering her name (Figure 4a), hence we cannot determine the extent to which she has heard it. P’s calling for A’s attention through touch (by further pulling A’s arm towards herself) resolves the competition in turn-taking, with A turning towards P (Figure 4c), looking at her, and putting her arm to rest, thus giving up her turn and yielding it to P. At this point M also shifts her gaze from A to P; P has gained her turn through touch (by grasping and moving A’s arm towards herself), not only with A but also with M, who follows A’s shift in gaze towards P.

Figure 4. Competition of turns between A and P (0:11”–0:12”).

After gaining her turn, P executes it through gesture, while addressing A through gaze (Figure 5). Her right-hand gesture indicates first A (Figure 5a), then herself (Figure 5b), then A again (Figure 5c); then she signs ‘outside’ in BSL (Figure 5d), with the sign oriented in the direction of the door of the room and, beyond it, of the school playground outside the building. Here P draws on her and A’s shared knowledge of the orientation of the place and its surroundings to further specify the ‘outside’ BSL sign through the orientation of the hand-sign. Then she signals the end of her turn (Figure 5e) by putting her arm/hand to rest, lowering her head, and continuing to look at A, thus assigning the turn to her. P’s turn offers three kinds of reflections relevant to our questions:

P works with A’s sensory possibilities, by using touch to attract A’s attention and making sure she is in A’s visual space before starting to execute her turn. This shows awareness of the requirements for visual attention and of the resources that are apt to achieve it. This awareness, more immediately embodied in deaf participants, needs instead to be learned (or trained) by hearing participants;

P uses repetition (of A, in Figure 5c), like M in her first turn, thus again offering redundancy that may enhance chances of being understood.

P’s expressed meaning in her turn contrasts with the one expressed by M earlier. At this point it is however not clear whether P has understood M’s meaning and is opposing it by expressing a different option, or has misunderstood M and offers A her own interpretation of M’s turn. Considering that, unlike A, P has not perceived M’s touch/de-touch action that functioned to separate A from P (Figure 3a), it could also be that P has understood M as offering choice to A (as meaning ‘A, do you want to stay here with me and the researcher or go with P there?’). P addresses A rather than M, through her gaze; as a normal routine of showing disagreement (at least among adults), we would expect P to look at M while expressing a proposal that contrasts with hers (thus meaning ‘can’t we instead …?’). Beyond the possible hypotheses, what is significant is that, by simply proposing something different from M, P’s turn is not per se a sign of understanding of M’s first turn. Suspending judgment on P’s understanding until there are clear signs of it is important for the co-development of the interaction.

P’s execution and closing of turn (0:12”–0:13”).

Once P has closed her turn and offered it to A through gaze, A turns her head and gaze from P (Figure 6a) to M (Figure 6b), while widening her eyes when looking at M (Figure 6c) in a hopeful expression of request; this one sole action functions simultaneously to take the turn (offered by P), execute it, and give it to M, meaning something like ‘what do you think? Do you agree?’ A’s turn lends itself to two observations relevant to our questions:

A uses her resources extremely economically to maximum effectiveness. Although in the excerpt A has the shortest turns among the three participants, this does not mean that she is excluded or made powerless; quite the contrary, A is the main focus of attention and interlocutor, by being the most referred to through gesture and the most gazed at, by both M and P; A is the one whose agreement (either to M’s or P’s proposal) is looked for.

A’s turn has not shown signs of disagreement or understanding yet; her ‘hopeful/pleading’ gaze towards M seems now to indicate agreement with P’s suggestion (P1), although this is not yet a sign of her understanding of either M’s or P’s proposal.

A’s turn (0:13”–0:14”).

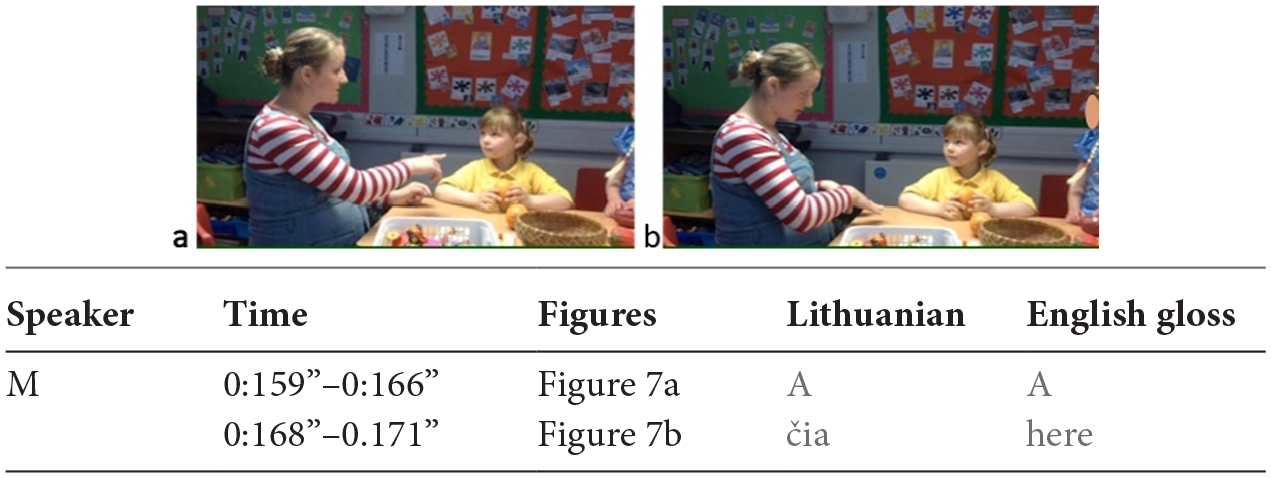

Looked at by both A and P, and thus offered a turn, M (Figure 7) looks at A and indicates her through gesture (Figure 7a), while naming her in speech; then she gestures a location on the table close to herself and A (and says ‘čia’ = ‘here’), while shifting her gaze to P (Figure 7b); while continuing to address P, she repeats the two gestures (indicating A and ‘here’) another time.

M’s first round of gesture (repeated twice) (0:15”–0:17”).

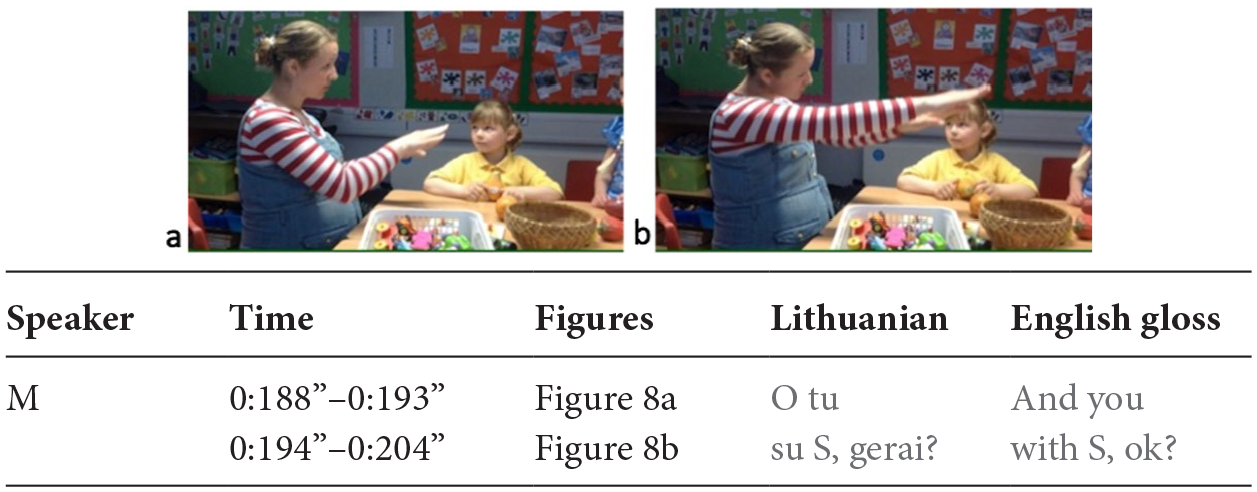

Then (Figure 8), in a second round of gesture, she indicates P (Figure 8a) and a location beyond P opposite M (Figure 8b), by stretching her arm, and mentioning S (the teaching assistant sitting beside P, not included in the video frame), while tilting her head to mitigate the imposition or possible face-threatening act of P’s exclusion.

M’s second round of gesture (0:18”–0:20”).

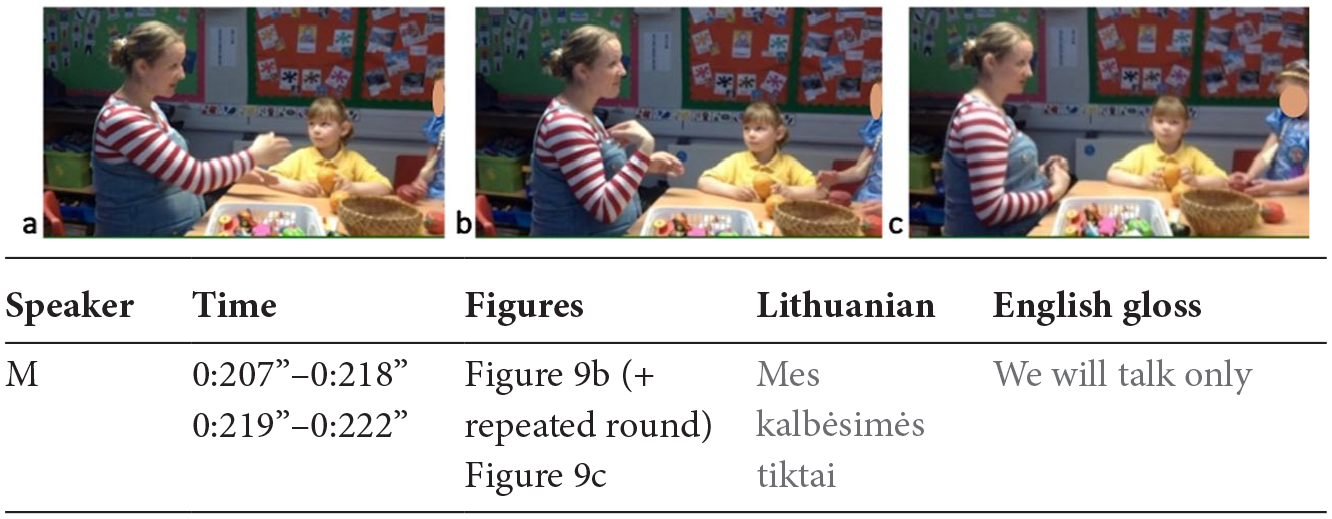

Finally (Figure 9), still looking at P, M gestures indicating A (Figure 9a) and herself (Figure 9b), repeating the two gestures another two times. P nods three times and shifts her gaze to A. M finishes her turn (Figure 9c) by putting her hands/arms to rest and shifts gaze between A and P, thus leaving it open to either one or the other to take the turn. In this second turn, M shows three aspects relevant to our questions:

She works against the affordances of gaze (which enables only one focus of attention/addressee at a time) by employing gaze shift to ensure both interlocutors’ attention while addressing one. She starts by looking at A and then shifts and keeps her gaze at P, signalling that she is addressing P and responding to her turn, while having some confidence that A is still watching;

She shows effort in making sure that the other participants understand, again through repetition and reformulation both within this second turn and of her first turn. While in her first turn, M’s gestures indicated ‘A here’ first (Figure 1), then ‘A+M+researcher here’ (Figure 2), vs ‘P there’ (Figure 3), in this second turn M indicates ‘A here’, ‘P there’, and finally ‘A+M’, thus simplifying the referred participants and creating opposition of locations between A and P; M’s second turn thus functions both as an explanation of her first turn and a reinforcement/re-statement of her first turn’s position.

Like P earlier, M also does not manifest any sign of disagreement with (nor understanding of) P’s proposal, by, e.g., shaking her head before re-iterating her own proposal; her addressing P through gaze (rather than A, whose turn preceded M’s) shows however that she is responding to P’s turn, thus providing elements for the others to interpret M’s as a differing/contrasting position.

P’s triple nodding while M finishes her turn signals her understanding; immediately after M closes her second turn, P signals also her agreement with and acceptance of M’s proposal by shifting her gaze and body away from the interaction (see further Figure 10c and 10d below). The combined nodding and moving away from the interaction is, we argue, the definite sign of understanding (and acceptance) of M’s position by P, and hence the first visible sign of understanding expressed by any of the participants so far.

M’s third round of gesture repeated twice (and P’s nodding, three times) and closing of turn (0:20”–0:22”).

A’s second turn: head shake repeated three times (and P’s turning away from the interaction) (0:22”–0:24”).

A takes her turn (Figure 10) by shaking her head vigorously, the first time looking at M, then the other two times with her eyes closed, reopening her eyes and gazing at M at the end of her last head shake (Figure 10d), thus giving her turn to M. Her head shakes express disagreement, although this is still not a clear sign of understanding of M’s meaning. Nevertheless, given the unfolding of the interaction with M’s first turn expressing a position, P’s first turn expressing a different one and M’s second turn reiterating/reformulating her first position, there are more elements for all interlocutors to have a clearer understanding of each other’s positioning.

The definite clear sign of A’s understanding occurs only 23” later in the interaction (Figure 11), after M has presented A with examples of activities (by grasping a series of toys and books from the box), when A shakes her head again and indicates P first with her left index finger (Figure 11a) then herself (Figure 11b) and by tilting her head towards P, meaning ‘A and P together’, repeating the (‘A+P’) gesture twice (Figure 11c and 11d) and shifting her gaze towards M with her mouth sealed in a contrasting expression (Figure 11d), thus showing not only her disagreement through her head shake first and mouth expression later, but also her understanding that M’s proposal is different.

A’s proposal (0:47”–0:48”).

The interaction proceeds with a series of other turns, with M attempting again to express the ‘A+M’ option, A shaking her head multiple times, then M using gaze and shaking her head in an inquisitive expression towards A (meaning ‘don’t you want to?’, accompanied with a spoken ‘Gerai?’ = ‘Ok?’), and A again indicating herself and P (similarly to Figure 11 but with A’s head and body positioning even closer to P). Then the disagreement resolves (0:59”–1:03”) when A stretches her right arm and produces a circle indicating all the participants (herself, M, researcher, and P), repeating the circle twice, and then spreading the fingers of her hand, indicating the number ‘5’ first and then ‘4’ (closing her thumb), with her arm stretched towards M and while always gazing at her, meaning ‘all of us 5, no: 4, together’. At this point, M nods multiple times (and says ‘Gerai’ = ‘Ok’) to express agreement. As anticipated earlier, although A is the participant with the fewest and shortest turns, she plays the decisive role in the negotiation.

Findings

Our overarching aim was to examine how understanding is accomplished through the mobilization of embodied resources in a communicative context where there are sensory and communicative asymmetries. Redefining ‘turn’ as a participant’s uninterrupted series of communicative actions (directed towards any of the other interactants), we focused on turn organization, hypothesizing that (1) these actions potentially provide evidence of meaning being successfully conveyed and that (2) the ways in which turns are executed offer ‘signs of understanding’ at different levels. We discuss the findings beginning first with the two foci/sub questions, before drawing conclusions in response to the overarching question.

Resources used

Different modal affordances are exploited in turn taking, execution and offering that mitigate the sensory and linguistic asymmetries among the interactants. These include several affordances of gesture, i.e. simultaneity of gestures through both hands to signal ‘togetherness’ (M), sequencing of gesture to separate (M), continuity of movement to include (and exclude) participants (M and A), speed increase to signal repetition (M and A), and combined hand orientation and movement to join (M) or locate (P).

Participants use a combination of modes for orchestration of multiple meanings; the simultaneous co-deployment of modes enables the expression of different functions, i.e. to refer and locate, to include and exclude (mainly through gesture), to address (through gaze), to communicate the type of speech act and stance (through face expression), to modulate politeness (through head movement) and to indicate more or less participation/involvement or disengagement (through body movement and proxemics).

Interlocutors show awareness of each other’s afforded sensory channels by using touch to trigger visual attention (P) and by combining touch and gesture to make combined meaning through tactile and visual channels (M). Both P and M employ communication strategies according to the modal and sensory channels available, i.e. again with P using touch to enter A’s visual space, and M establishing gaze contact with one interlocutor at the start of the turn to assure the latter’s attention and then shift the gaze to another while executing it, thus increasing the chances of having both interlocutors’ attention while addressing one of them.

Signs of understanding

Our analysis of the turn organization leads us to three conclusions about understanding and how it can be judged in this context. The first of these is that there is consistent evidence that the participants understand how meaning can be made in this context, that is, how to make themselves understood by interlocutors with different resources and what is needed for understanding among the participants to be achieved. Participants are sensitive to one another’s semiotic possibilities and sensory channels, and seem to understand how to use their resources to enhance their chances of being understood. We see this in the way that M repeats the same sequence of gestures in the same turn or when she reconfigures her gestures in multiple ways to get the meaning through. M also demonstrates her understanding of the sensory affordances of her daughter, drawing on her vision and touch perception to produce the simultaneous meaning (‘I+you’), which the daughter can follow. This understanding is also evident when P touches A’s arm to call her attention before starting to execute her turn, showing her awareness of the need to be within A’s visual space for communication.

The second is that understanding of intended meanings (understanding ‘what’) cannot be assumed either through nods and head shakes on their own, or through the expression of a congruent/contrasting meaning per se. Only the two combined enabled us to verify understanding. P’s nods and shift away from the interaction to play with a toy confirm both understanding and acceptance of M’s proposal; A’s later head shake and contrasting proposal confirm A’s understanding of (and disagreement with) M. Up until these combined actions are taken, however, we cannot argue that any single turn is a sign of understanding.

Our third point is that, even when there is no proof of understanding, through the organization of all the turns with each participant re-iterating and reformulating their meanings, the interaction does demonstrate that each participant knows ‘how to go on’. The turns demonstrate an understanding of the ‘next step’ in the exchange. For example, when A takes, executes and gives the turn to M through turning her head and gaze combined with a facial expression of request, there is no evidence that she has fully understood M’s proposal or P’s counterproposal. However, this is a sign that she has understood how the communication needs to progress for the issue to be resolved (i.e. the question has to be put back to M).

This level of understanding enables the co-development and the temporal unfolding of the interaction that takes the negotiation of meaning to a conclusion. Participants’ understanding of how to use semiotic resources that fulfil the others’ sensory possibilities and how to go about making meaning and managing the interaction is as crucial as not assuming the interlocutors’ straightforward understanding of what is being expressed.

Implications for Practice and Research

The significance of these insights for practice relates particularly to work with parents and teachers (but also possibly therapists and clinicians) and the different ways in which the development of communication and meaningful interaction can be supported among deaf and hearing adults and children.

The first point emerging from this work relates to the recognition of understanding and the need for practitioners and parents to be aware of the range of embodied resources (beyond linguistically-codified ones) that may be deployed by individuals in their communication. A multimodal frame ensures that evidence of understanding is not overlooked and does not rely exclusively upon analysis of linguistic outputs. In the education context, this widens the premise on which learning and understanding may be demonstrated and judged. Observing the communicative sensitivities and multimodal strategies that enable this interaction to cohere may also be instructive for hearing parents and practitioners seeking to develop reciprocal and meaningful communication, and promote opportunities for language development. Multimodal analysis at the level of turns can thus tell us something about engagement and understanding (at some level) that may be missed in a language-based analysis.

The second point that relates to assumptions of understanding is equally important for practice, and in particular for work with teachers in mainstream settings. Where the communicative sensitivity seen here may not be shared by all participants, there is a risk that understanding is assumed or interpreted because of a nod/head shake or a congruent/contrasting turn. The meaning of a turn and its contingency with the ongoing interaction need instead to be analysed in depth before claims of understanding are made.

Both insights could be valuably incorporated into training for practitioners or support intervention programmes for parents of deaf children. Insights on how participants show and manage understanding of ‘how’ and do not assume understanding of ‘that’ could be further explored and expanded for the requirements needed for the co-construction of situated understanding in multilingual contexts, where shared linguistic knowledge cannot be assumed (Blommaert and Rampton, 2016).

Methodologically, we suggest that turns do offer a useful point of analysis if redefined in terms of communicative actions rather than turns at talk. In this, our findings lead to two methodological reflections. Firstly, the situated use of embodied resources can fully execute turns rather than solely signalling turn-taking or focusing functions or complementing meaning expressed in spoken or signed utterances. If this is particularly manifest in deaf–hearing interactions such as the one analysed here, we think our findings invite a redefinition of turn (as actions, not necessarily involving utterances) that could offer novel insights in the analysis of all interactional contexts. Secondly, there needs to be a qualitative orientation to assessing turn organization/distribution in relation to reflections on engagement, agency and power. This is an important departure from research that has assumed a relationship between the power of participants in deaf–hearing interaction according to the number and length of turns (Mahon et al., 2003; Wood and Wood, 1997). Instead, we learn from this analysis that A’s shorter turns or lower numbers of turns do not index per se her exclusion or lesser power in the interaction. In fact, as the most gazed at (addressed) and referred to, she is central to the interaction and the final outcome is contingent on her responses and actions (as when a leader or authority figure needs to be convinced by others and then only utters the last word/decision).

Finally, the case examined here pushes the analysis of face-to-face interaction even further in the development of multimodal frameworks that can account for situated meaning-making beyond ‘codified/linguistic’ resources. No transcription of speech or sign-language could have documented the meanings produced by interactants; while our data offer further extremely rich insights, because of the space needed to describe the actions performed we had to limit ourselves to presenting the analysis of only a handful of turns out of a one-hour session of video-recorded interaction. We hope that increased interest in research on situated multimodal sign-making may also represent a push to adopt forms of academic dissemination that are more suitable (than static image and writing only) to the presentation of multimodal interactional data.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors and there is no conflict of interest.

Notes

Biographical Notes

ELISABETTA ADAMI is a University Academic Fellow in Multimodal Communication specialized in social semiotic multimodal analysis. She researches ways in which people use both verbal and nonverbal resources to make meaning, particularly in intercultural situations. Elisabetta’s work centres on sign- and meaning-making practices in place, in face-to-face interaction and in digital environments.

Address: School of Languages, Cultures and Societies, Centre for Translation Studies, University of Leeds, Woodhouse Lane, Leeds LS2 9JT, UK. [email:

RUTH SWANWICK is a Professor in Deaf Education working in childhood deafness, language and learning, inclusive education and teacher development. Her area of research expertise is deaf children’s multilingual language and learning, and the development of language pedagogies. Ruth’s work centres on deaf children’s diverse use of sign and spoken languages and the daily life interactions between deaf and hearing people.

Address: School of Education, University of Leeds, Hillary Place, Leeds, LS2 9JT, UK. [email: