Abstract

In recent years, the use of information technology to promote active learning in higher education has attracted great interest. Teachers are continuously challenged to identify new research-informed approaches and educational practices that support students in actively learning and applying their knowledge. The present study tests the effects on students’ learning outcomes of an ad hoc learning tool (QLearn) that integrates three active learning strategies previously validated empirically in face-to-face educational contexts. Using the QLearn software, students can generate questions, explain and develop answers, receive feedback from the teacher, and test their knowledge. Using a quasi-experimental design, we analyzed whether, in various course settings and instructional contexts, students who use QLearn as a support in their learning process demonstrate a different learning performance compared to students who learn the same content using their preferred learning strategies. The interventions were offered on a voluntary basis and involved participants from different fields (computer science, psychology) and different study levels (undergraduate and master’s level). The results showed that some groups of participants benefited significantly from the use of the QLearn platform. The outcomes of the present research advance our understanding of the efficiency of technology-sustained learning in educational contexts and offer a promising strategy for facilitating the active involvement of students in the learning process.

Introduction

Learning is preeminently a dynamic and active process. The scientific literature offers a wide variety of paradigms and theoretical models that aim to elucidate the mechanisms of learning. Most of them stress the importance of, and the need for, active involvement of the learner in the learning process in order to gain an in-depth understanding of the content studied. Studies in the field of cognitive and educational psychology (Dunlosky et al., 2013; Weinstein et al., 2018) show that there is a large diversity of empirically validated approaches that can be applied to support students’ deep learning. Although many of these have proven to be very promising in laboratory and controlled classroom settings, they are rarely disseminated and integrated into face-to-face or online teaching and learning contexts. Moreover, although technology offers huge opportunities for applying the results provided by the learning sciences, these results and their implications are not used to their full potential to inform the design of educational technologies (Casanova et al., 2017). In the present research, we aimed to develop and test, in an educational context, a computer-based learning tool (QLearn) that integrates three effective learning strategies empirically validated for traditional (on-site) learning environments.

Applying the science of learning for improving students’ active learning

The interest in investigating educational strategies for fostering the active involvement of learners with the support of digital technologies has increased significantly in recent years (Martin et al., 2020). More and more research aims to identify the best technologically-mediated practices that significantly increase cognitive engagement and, consequently, learner’s acquisition.

Based on the significant advances that the learning sciences have made in the last few years, our understanding of active teaching and learning principles has increased considerably. Active learning is a broad term “generally defined as any instructional method that engages students in the learning process” (Prince, 2004). Active learning pedagogy is well supported by empirical evidence from a number of disciplines, including the learning sciences, cognitive psychology, and educational psychology. Therefore, we drew on the empirical data and explanations offered by cognitive psychology in designing a computer-based tool for engaging students in active learning.

Indeed, we can now rely on solid evidence and specific recommendations on strategies that teachers and students can use to maximize learning efficiency and on how our knowledge about cognitive processes can be applied in education (Agarwal and Roediger, 2018; Dunlosky et al., 2013; Weinstein et al., 2018). In a representative review (Dunlosky et al., 2013), the benefits of 10 learning techniques were evaluated. The analysis aimed to establish the extent to which they can be generalized with regard to four categories of variables: learning conditions, student characteristics, materials, and criterion tasks. Based on this detailed analysis, the authors made a number of empirically supported recommendations on the usefulness of these strategies. Thus, techniques such as practice testing, distributed practice, elaborative interrogation, self-explanation, and interleaved practice are considered to be of high or moderate utility in various instructional contexts. In a relatively recent study, Weinstein et al. (2018) analyzed, in turn, six specific cognitive strategies with solid empirical validation and also offered a series of recommendations and warnings regarding their implementation in educational practice. These and similar studies (Berthold et al., 2007; Endres et al., 2017; Meier, 2017) demonstrate that there is a wide variety of empirically validated approaches that can be systematically applied to support classroom teaching and student learning.

Many of these intervention strategies have proven their effectiveness in controlled laboratory-based research and, recently, there has been an impetus for research on the use of these strategies in representative educational contexts (Agarwal and Roediger, 2018; Daniel, 2012; Daniel and Chew, 2013; Mayer, 2018, 2010; Rodriguez et al., 2021). In addition, investigating how students apply these strategies in online environments can help us optimize the ways we use technology to support active teaching and learning.

Effective learning techniques for improving students’ learning

Until now, little attention has been paid to exploring how teaching strategies developed and tested in constrained laboratory conditions function in educationally realistic contexts. When applied within complex classroom contexts, these recommended strategies may have a very different effect. They may interact with variables that are not controlled in the laboratory, for example with different content, with other strategies, or with different goals, and produce a new, different outcome. Therefore, to develop reliable pedagogical tools, it is necessary to design research in which promising strategies that demonstrate effects in laboratory conditions are then tested in relevant and complex classroom settings.

In recent years, data from cognitive research on perception, attention, and memory have offered valuable insights into how humans process and store information. Based on this extensive research on human cognition, two important principles regarding learning mechanisms can be inferred (Kosslyn, 2017): the deep processing of information and the construction of associations between types of knowledge. From a cognitive perspective, these are considered principles of active learning and are viewed as essential premises for facilitating efficient and robust learning (Kosslyn, 2017). According to the first principle, also known as the levels-of-processing framework (Craik and Lockhart, 1972), as we move from surface processing (physical and sensory characteristics of the stimulus) to the deepest level of processing (pattern recognition and extraction of meaning), retention increases and memory traces last longer. More exactly, the more intensely we process content, the better it is retained and the easier it is to retrieve. Similarly, the association principle holds that connecting new information or ideas with existing knowledge supports learning by helping us to mentally organize the material and by offering cues for later recalling it from memory. Information processed according to these principles may be retained and applied even if the learner does not intend to do so. These principles have considerable implications for instructional activity and can be operationalized in specific learning strategies applicable in real learning contexts. Moreover, their efficiency can be enhanced by the use of information technology, which provides increasingly viable opportunities for exploiting the results of research on the cognitive mechanisms of human learning.

Based on these principles and their educational implications, in the present study we explored the potential of several learning strategies to stimulate students’ cognitive engagement and to improve their learning performance in digital environments across a variety of content domains. To this end, we developed a learning tool (the QLearn platform) by integrating three learning strategies: student-generated questioning, elaborative interrogation, and practice testing. In order to facilitate students’ learning and to assess their performance in the different phases of our study, we opted for multiple-choice tests. Relevant studies indicate that multiple-choice tests can be a robust instrument for sustaining and assessing higher-order learning if they are well designed (Agarwal, 2019; Jensen et al., 2014; Scharf and Baldwin, 2007).

The originality of this platform consists in combining the three learning strategies for the first time, in anticipation of a cumulative effect of their formative benefits. These three techniques were selected following an initial survey of the science of learning literature, which indicated that they have high utility in face-to-face teaching and are supported by solid cognitive psychology research. The same literature also provided ideas and recommendations for their pedagogical implementation.

The student-generated questioning technique is a learning strategy that favors both in-depth processing and reflection on the material to be learned (Ebersbach et al., 2020), yet it is hardly practiced in higher education (Aflalo, 2021). In the process of generating questions, students actively focus on the content, analyze it, look for information that can be expressed in the form of a question, combine ideas, and generate the question. These processes facilitate the understanding and retention of content (Rosenshine et al., 1996). Moreover, the strategy may also require the identification of the answers to the generated questions, which in turn requires effortful, in-depth processing of the information. Numerous studies report that the use of this strategy consistently yields positive effects on understanding, updating, and problem solving (Rosenshine et al., 1996; Song, 2016). A series of investigations have also aimed to demonstrate the impact of the questioning strategy on learning in various instructional contexts. Thus, in a study conducted with students enrolled in a sociology course, the subjects in the questioning group performed significantly better on the final information recall test than their colleagues in the group who used the summary technique or the participants in the control group (King, 1992). In another study, students enrolled in a psychology course noticeably improved their exam performance by being engaged in a task of generating questions from course materials (Berry and Chew, 2008).

Recently, there have been studies aimed at capitalizing on the possibilities offered by digital technology to facilitate learning in various domains using the student-generated questioning technique (Mays et al., 2020; Sanchez-Elez et al., 2014). Applying the method in instructional contexts requires the teacher to provide consistent support and feedback to students so that they generate correct, accurate, and well-elaborated questions (Song, 2016). This process is demanding and time consuming (Ebersbach et al., 2020), and practicing it with the help of technology could diminish these shortcomings and offer a number of benefits regarding the frequency and the quality of student-generated questions.

A second educational strategy with a similar impact on learning is elaboration. Numerous studies consider elaboration an effective strategy for facilitating long-term knowledge retention (Dunlosky et al., 2013; Weinstein et al., 2018). It involves adding and connecting new information to existing knowledge and thus integrating it with other concepts in memory. Integration then facilitates the organization of new knowledge in the mind, which optimizes the updating of knowledge. Elaboration is an umbrella concept that includes various techniques. Of these, elaborative interrogation has solid empirical support. Elaborative interrogation implies questioning the studied materials and generating an explanation for why an explicitly stated fact or concept is true (Dunlosky et al., 2013). Different kinds of questions are used to prompt learners to generate explanations, but the majority of studies have used prompts following the general format “Why would this fact be true of this [X] and not some other [X].” The process of finding the answer to these questions supports learning. Studies indicate that, when the technique is used, it is important that learners check their answers against their materials or with the teacher, because poorly elaborated content could negatively impact learning (Clinton et al., 2016). One of the limitations of elaborative interrogation concerns its ability to facilitate comprehension. However, recent studies have demonstrated its usefulness for stimulating critical thinking beyond surface analysis of factual knowledge (Yang et al., 2016). At the same time, a series of studies have shown that elaborative interrogation is beneficial and supports learning both in face-to-face educational environments (Smith et al., 2016) and in online environments (Navratil and Kühl, 2019; Yang et al., 2016).

Practice testing is the third learning strategy examined in this research. It involves the recall of the to-be-learned material in low-stakes or no-stakes contexts, for formative purposes. Retrieving information is not just a way of showing that the information is known; it is also a way to consolidate and expand it (Roediger et al., 2021). Thus, there is an active updating of information that is much more beneficial for long-term retention than passively revising materials, for example by re-reading the content. This effect is known as the testing effect. Furthermore, numerous studies have indicated that practice testing a subset of information improves memory for related information even though this information was not tested (Chan, 2009, 2010). At the same time, some studies support the usefulness of practice testing for facilitating the application of knowledge in new contexts as well (Dirkx et al., 2014). Another benefit, supported by the promising results of relatively recent studies, is the reduction of test anxiety and of learning stress (Smith et al., 2016).

Even though the majority of studies on practice testing were laboratory studies, the relevance of testing effects for learning in representative educational contexts has also been demonstrated. Various studies that focused on the practice testing effect used tasks (Butler, 2010; Roediger et al., 2021), materials (McDaniel et al., 2012), and time intervals (Carpenter, 2009) with high educational relevance. At the same time, there is research that has evaluated and confirmed the impact of practice testing in educational contexts (McDaniel et al., 2012).

Based on the results of these investigations, we can conclude that the effects of practice testing on facilitating learning in face-to-face teaching contexts are robust. We can also identify promising evidence of its use in online environments. Thus, in a recent study, Thomas et al. (2018) reported a positive effect of practice testing on knowledge retention. Compared to other study opportunities that were offered to students in a neuropsychology course, testing knowledge through quizzes significantly improved retention in an online learning environment. The role and usefulness of various strategies in supporting and facilitating learning are thus empirically supported. The use of information technology can increase this effect through the many opportunities it offers to maximize the potential of each strategy. However, the use of technology must be guided by the principles developed in the science of learning if we want to build active, flexible, and relevant learning contexts for students.

Purposes of the study and research questions

The purpose of this study is to test the practical utility of learning strategies adapted to online teaching and learning. In order to achieve our goal, we selected three learning strategies that had previously proved effective in the context of face-to-face educational practice. The three learning strategies, which are derived from cognitive psychology research, formed the basis for the development of a learning platform (QLearn). The main purpose of this learning tool was to stimulate the active engagement of students in the in-depth processing of learning content. Subsequently, the platform was implemented in the teaching of three courses in different fields, and its utility in increasing students’ academic performance was tested in a quasi-experimental study. Academic performance was assessed on the basis of the scores obtained by participants on the post-test and the marks obtained in their final exam.

The research objectives we pursued in this study were:

To investigate the impact of using the QLearn learning platform on students’ academic performance. Specifically, we aimed to test whether students who use the QLearn learning platform achieve significantly better academic performance than those who study using their preferred learning strategies (regular learning strategies that students employ of their own choice).

To identify differences between the achievements of students who used the QLearn platform depending on various factors (level of study, field of study, amount of feedback received). In other words, we wanted to investigate whether we can validate potential predictors of change in student performance as a result of using the QLearn platform.

Method

Design and participants

In order to accomplish the research objectives, the intervention was conducted in a natural setting. Because random assignment is difficult in naturalistic conditions, we used a quasi-experimental design (unifactorial design with two modalities). The participants were 277 students, of whom 191 were female and 86 male. Their ages ranged from 19 to 27 (M = 20.29, SD = 1.38). They registered voluntarily, gave written informed consent, and were told that they could withdraw from the experiment at any time without any negative consequences. Participants were enrolled in three different required courses: Methods Advanced Programming (MAP), Computational Models for Embedded Systems (CMES), and Introduction to Psychology (PSIHO), from two different fields (Computer Sciences, Psychology) at the same university. Their distribution across the courses was as follows: Methods Advanced Programming 46.1%, Computational Models for Embedded Systems 23%, Introduction to Psychology 30.9%. Two courses (MAP, PSIHO) were taught at undergraduate level and one course (CMES) at master’s level.

Learning tool—QLearn platform

The main goal of the present investigation was to facilitate the deep engagement of students in their learning process. In order to achieve this aim, we designed an ad hoc computer-based learning tool, the QLearn platform. The development of the platform was based on the integration of three learning strategies that have solid empirical support from cognitive psychology research.

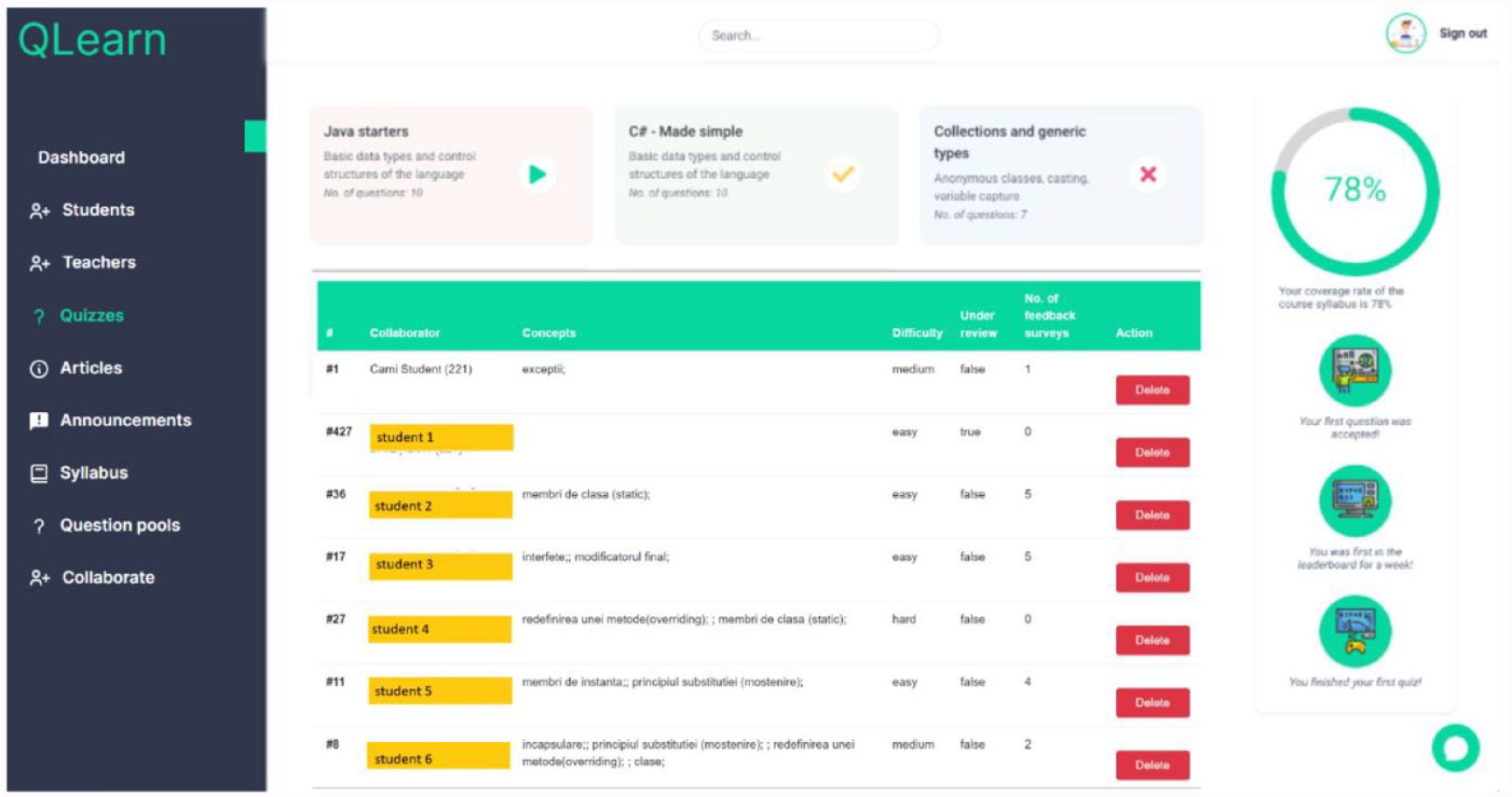

The QLearn platform provides instruments both for students and teachers to stimulate the cognitive engagement process by question generation, elaboration of answers and practice testing. A short overview of the platform architecture and its main functionalities is provided in Figure 1.

Figure 1. QLearn platform.

The main functionalities of the application include:

Adding questions: The core element of the application is the Question entity. A Question is associated with one or more categories. A category is a specific learning topic from the syllabus (e.g. collections, java, streams, etc.). It is used to describe questions so that a user can monitor the coverage rate of the learnt concepts. Each question category can have one of three levels of difficulty: easy, medium and difficult. Questions are added into the QLearn platform by students.

Review process: The proposed question is submitted by the student to be reviewed by the teacher. The student receives the feedback and improves the question according to the teacher’s recommendations. Once a question passes the review process, it is added to the question pool and is used to create quizzes in the practice test phase.

Building quizzes: A question pool is a container of generated questions. A quiz is a set of questions provided to the user. The quiz is generated by specifying the type of category and, for each category, the number of questions.

Assessment metrics: The platform aggregates a number of different metrics, such as the student’s coverage rate of concepts through practice testing, the number of reviews of each generated question (the number of rounds of feedback offered to each student to refine the questions), and the number and type of badges achieved by each student.
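The quiz-building and coverage functionalities described above can be illustrated with a minimal Python sketch. It is not the QLearn implementation: the class and function names (`Question`, `build_quiz`, `coverage_rate`), the field names, and the sample pool are assumptions introduced only for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """A reviewed question in the pool (field names are illustrative)."""
    text: str
    categories: frozenset  # syllabus topics, e.g. {"collections", "streams"}
    difficulty: str        # "easy", "medium" or "difficult"


def build_quiz(pool, per_category, rng=None):
    """Draw the requested number of questions for each category.

    per_category maps a category name to how many questions to draw.
    A question is never used twice within the same quiz.
    """
    rng = rng or random.Random()
    quiz = []
    for category, count in per_category.items():
        candidates = [q for q in pool
                      if category in q.categories and q not in quiz]
        if len(candidates) < count:
            raise ValueError(f"not enough questions for {category!r}")
        quiz.extend(rng.sample(candidates, count))
    return quiz


def coverage_rate(practiced, syllabus_categories):
    """Share of syllabus categories touched by the practiced questions."""
    covered = set()
    for q in practiced:
        covered |= q.categories
    return len(covered & set(syllabus_categories)) / len(syllabus_categories)


# Hypothetical pool; the category names follow the examples in the text.
pool = [
    Question("Q1", frozenset({"collections"}), "easy"),
    Question("Q2", frozenset({"collections", "streams"}), "medium"),
    Question("Q3", frozenset({"streams"}), "difficult"),
    Question("Q4", frozenset({"java"}), "easy"),
]
quiz = build_quiz(pool, {"collections": 1, "streams": 1}, random.Random(0))
print(len(quiz), coverage_rate(quiz, ["collections", "streams", "java"]))
```

Allowing a question to carry several categories mirrors the statement that a Question is associated with one or more categories; how the actual platform counts such a question toward coverage is not specified in the text.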

Instruments

The instruments used in the present study include: learning performance tests, learning materials, template for student-generated questioning.

Learning performance tests were developed for each of the three courses for the pretest (Quiz 1), post-test (Quiz 2), and final exam phases. The maximum score on each test was 10 points. Depending on the course subject, the pretest and post-test consisted of 10 or 15 multiple-choice questions. The final exam test contained considerably more questions (40). The learning performance tests were developed by the course teachers from coursebooks that students could access via the online platform used by the university. All tests were integrated into the regular teaching and learning activity. The assessment instruments were in multiple-choice format and comprised mixed questions: factual questions and higher-order questions. The questions were developed in accordance with the revised Bloom’s taxonomy. They were aligned with course learning outcomes and with course learning activities.

The template for the student-generated questioning task was designed to include all the components of student-generated questioning task: the question body, four answer alternatives, justification for the correct answer, the question’s level of difficulty, other concepts related to the question’s main idea (extracted from the learning materials). These components were embedded in the functionalities of the platform.
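As a rough illustration of how the template components could be checked before a question is submitted for review, the following Python sketch validates a draft. The names (`QuestionDraft`, `template_problems`) and the specific checks (distinct alternatives, at least one related concept) are assumptions, not a description of the platform's actual validation.

```python
from dataclasses import dataclass, field

DIFFICULTY_LEVELS = ("easy", "medium", "difficult")


@dataclass
class QuestionDraft:
    """One student submission; field names are illustrative assumptions."""
    body: str
    alternatives: list          # four answer alternatives
    correct_index: int          # position of the correct alternative
    justification: str          # why the correct answer is correct
    difficulty: str
    related_concepts: list = field(default_factory=list)


def template_problems(draft):
    """Return a list of human-readable problems; empty means complete."""
    problems = []
    if not draft.body.strip():
        problems.append("question body is empty")
    if len(draft.alternatives) != 4:
        problems.append("exactly four answer alternatives are required")
    elif len(set(draft.alternatives)) != 4:
        problems.append("answer alternatives must be distinct")
    if not 0 <= draft.correct_index < len(draft.alternatives):
        problems.append("correct answer must point to one of the alternatives")
    if not draft.justification.strip():
        problems.append("justification for the correct answer is missing")
    if draft.difficulty not in DIFFICULTY_LEVELS:
        problems.append("difficulty must be easy, medium or difficult")
    if not draft.related_concepts:
        problems.append("at least one related concept is required")
    return problems


# Hypothetical draft on a topic from the example categories.
draft = QuestionDraft(
    body="Which Java collection does not allow duplicate elements?",
    alternatives=["ArrayList", "LinkedList", "HashSet", "ArrayDeque"],
    correct_index=2,
    justification="A Set models a collection without duplicate elements.",
    difficulty="easy",
    related_concepts=["collections", "Set interface"],
)
print(template_problems(draft))
```

A check of this kind only confirms that the template is complete; judging whether the distractors are plausible or the justification is sound remains the teacher's task in the review process.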

Example questions were generated by teachers and were based on a format similar to the one used in the QLearn platform. The examples were categorized as (i) difficult question, (ii) medium difficulty question, and (iii) easy question and were presented to students as models. Students analyzed the teacher’s examples and used these in order to generate their own questions and to elaborate on (i.e. justify) the correct answer.

Procedure

A unifactorial design with two groups was implemented in a realistic educational context. The experimental group included students from three different courses who used the QLearn platform in their learning process. The control group included participants from the same disciplines and academic years as the experimental group who did not use the QLearn platform in their learning process.

The procedure consisted of four phases: study phase 1, Quiz 1 test, study phase 2, Quiz 2 test.

Study phase 1

In the first study phase all participants (experimental and control group) had to study specific topics from the course syllabus (unit A), in a certain period of time (3 weeks) after each topic had been taught weekly in regular online classes. All participants were involved in learning activities, studying the learning materials with their own preferred learning strategy, without the digital technological support.

Quiz 1 test

After the completion of the first study session, participants’ knowledge was tested with a multiple-choice test (Q1), with questions developed by the teachers.

Study phase 2

In the second study phase, all participants had to study other specific topics from their curriculum (unit B) after these topics had been taught in regular online classes. Participants from the control group studied the content to be learned using their own preferred learning strategies, in a way similar to study phase 1. In contrast, participants from the experimental group used the QLearn platform and had to perform various learning tasks according to the instructions embedded in the platform. Study phase 2 consisted of three core tasks:

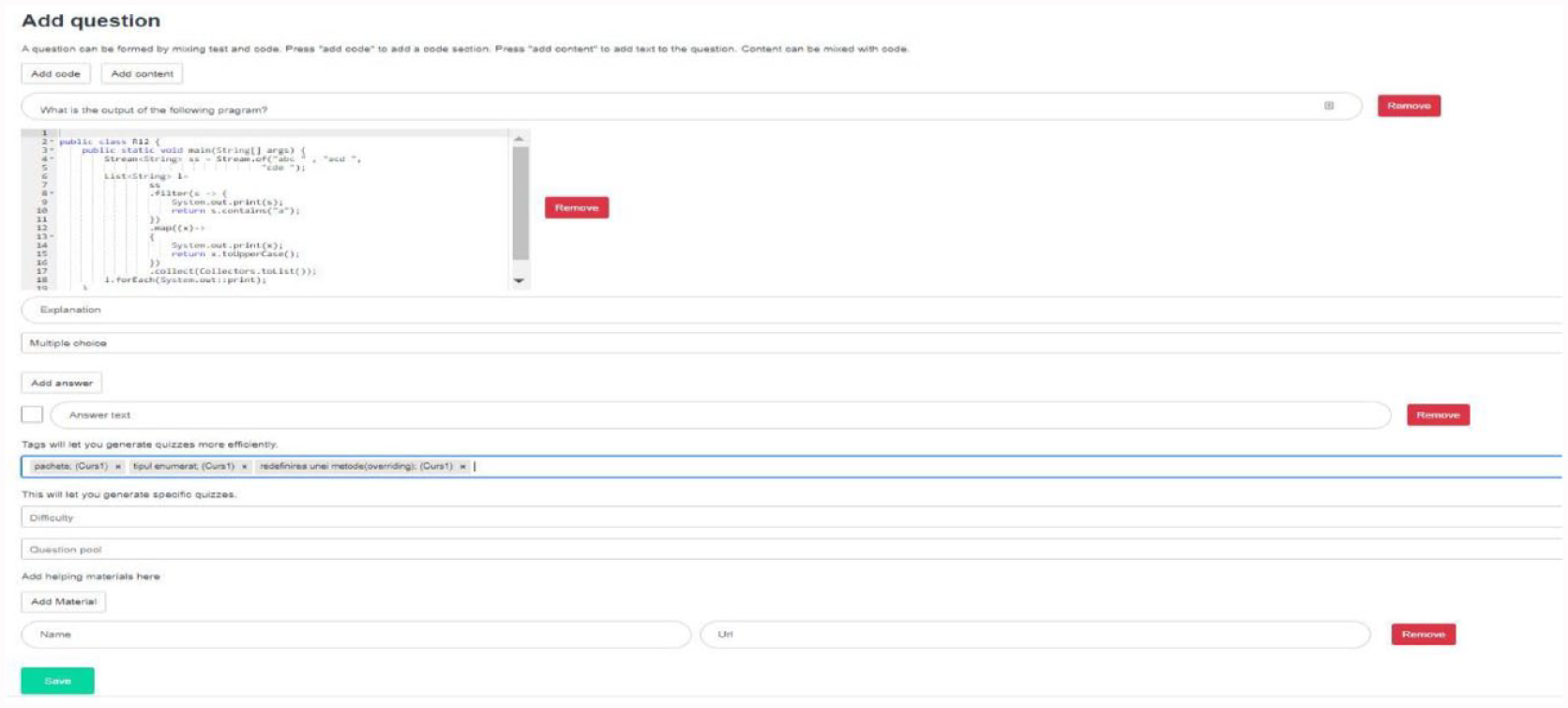

a. Student-generated questioning: each student had to formulate at least four multiple-choice questions based on the content to be learned, in accordance with the following instructions displayed on the platform (see Figure 2):

– Formulate the multiple-choice question
– Identify a set of four alternative answers (including one correct answer and three plausible distractors)
– Indicate and justify why the correct answer is the correct one
– Specify (from learning materials) the most important concepts which are related to the main concept/idea stated in the question
– Indicate the difficulty level of the generated question (easy, medium, difficult).

The difficulty level of the question was assessed according to the following criteria: questions related to definitions and simple facts were considered to be easy or low in conceptual difficulty; questions that required analysis, synthesis, or application of concepts were categorized as medium or high difficulty.

Before generating the questions in the QLearn platform, students took part in a training session. In this stage, participants were instructed by the teacher on how to complete each of these instructions (Formulate the question, Indicate the answer, Explain the correct answer, Identify related concepts, Indicate difficulty level).

b. Question review: creating questions was a challenging task. Students were supported in this process by teachers, who offered repeated feedback for improving the questions. Students had to integrate the feedback, refine the questions, and send the final form to the teachers. Again, this reviewing process was mediated by the QLearn platform.

c. Practice testing with generated questions: the final version of each generated question was included in a question pool on the QLearn platform. Different quizzes were generated by the QLearn platform according to several criteria—levels of difficulty, topics to be tested. Using the QLearn platform, the students had the possibility to practice self-testing and to receive feedback about their results.

Figure 2. QLearn: template for the student-generated questioning task.

Quiz 2 test

After the completion of the second study session, participants’ knowledge (experimental group and control group) was tested with a multiple-choice test (Q2) with questions generated by the teachers.

Final exam

At the end of the semester, all participants (experimental and control group) were tested with a multiple-choice test, in a formal final exam.

Results

In this study we analyzed the impact of using an ad hoc learning platform (QLearn) on students’ academic achievements. In total, 277 students enrolled in courses from different fields and levels of study participated in the study. Regarding the statistical analysis of the data, the averages and standard deviations were calculated for the descriptive component. In the case of inferential statistical analysis, statistical significance, variance analysis (ANOVA) and covariance analysis (ANCOVA) were used. Given that the samples involved in the study were small, which affects the statistical significance of the data, we decided to calculate and analyze the effect size. Correlations between different variables were also calculated in order to identify certain predictors of change.

Regarding the data analysis methods, firstly, we used parametric analyses, because the necessary assumptions of parametric analyses were met for our data. Secondly, given the relatively small groups, we decided to move the focus of our analyses from statistical significance to effect sizes. As far as the analysis of change was concerned, we used a two-way factorial ANOVA procedure, because we had two independent variables: the experimental condition and time. While time was actually a pseudo-factor, we mainly focused on the interaction effect between the two factors, which indicated whether the groups evolved differently from the pre-intervention to the post-intervention sequence. Also, when comparing groups that initially differed significantly on a relevant variable, that variable was controlled as a covariate in an ANCOVA procedure. When using correlations, even though we used the Pearson correlation coefficient, the focus of our analysis was likewise moved from statistical significance toward the magnitudes of the correlations.
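To make the role of the interaction effect concrete: with two groups measured at two time points, the group × time interaction F is equal to the squared pooled-variance t statistic computed on gain scores (post minus pre), with N − 2 error degrees of freedom. The Python sketch below illustrates this equivalence on invented scores. The function name, the data, and the choice of standardizing the difference in mean gains by the pooled standard deviation of the gains are assumptions; the text does not state how d was computed.

```python
from math import sqrt
from statistics import mean, variance


def interaction_from_gains(pre_exp, post_exp, pre_ctl, post_ctl):
    """Group x time interaction for a 2 x 2 pre/post design.

    Returns (F, df_error, d), where F is the squared pooled-variance
    t statistic on gain scores and d is the standardized difference in
    mean gains (one common effect-size convention, assumed here).
    """
    gains_exp = [post - pre for pre, post in zip(pre_exp, post_exp)]
    gains_ctl = [post - pre for pre, post in zip(pre_ctl, post_ctl)]
    n_exp, n_ctl = len(gains_exp), len(gains_ctl)
    df_error = n_exp + n_ctl - 2
    pooled_var = ((n_exp - 1) * variance(gains_exp)
                  + (n_ctl - 1) * variance(gains_ctl)) / df_error
    diff = mean(gains_exp) - mean(gains_ctl)
    t = diff / sqrt(pooled_var * (1 / n_exp + 1 / n_ctl))
    d = diff / sqrt(pooled_var)
    return t * t, df_error, d


# Invented scores on a 10-point scale, for illustration only.
f_value, df_error, d = interaction_from_gains(
    pre_exp=[6.0, 5.5, 7.0, 6.5, 5.0], post_exp=[7.0, 6.0, 8.0, 7.0, 6.5],
    pre_ctl=[6.0, 5.0, 7.5, 6.0, 5.5], post_ctl=[6.5, 5.0, 8.0, 6.5, 5.5],
)
print(f"F(1, {df_error}) = {f_value:.2f}, d = {d:.2f}")
```

With 277 participants, the error degrees of freedom under this formulation are 275, which is consistent with the F(1, 275) reported for the whole-sample interaction.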

Analysis of change

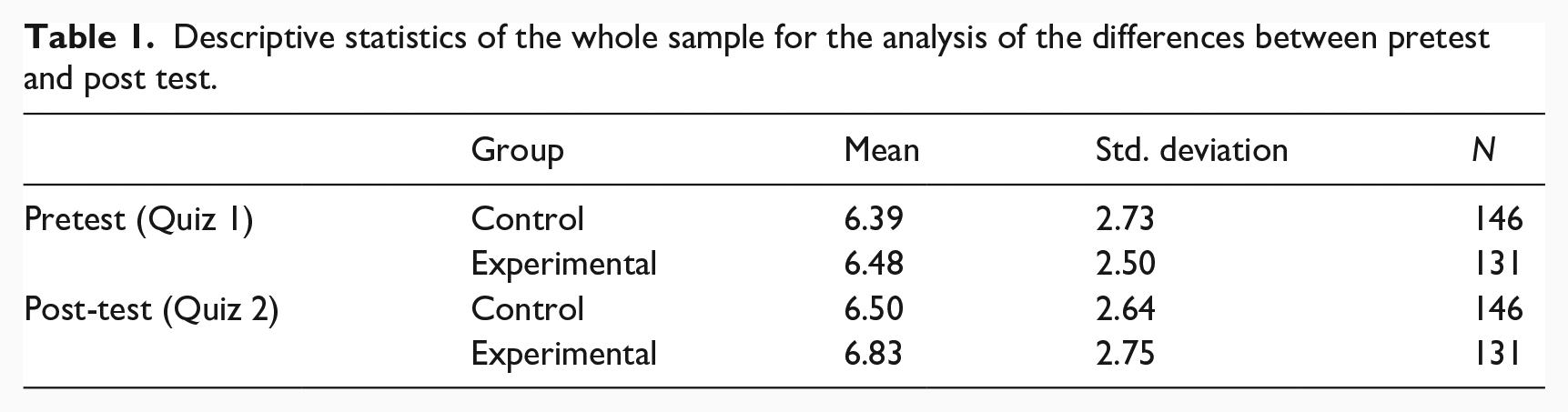

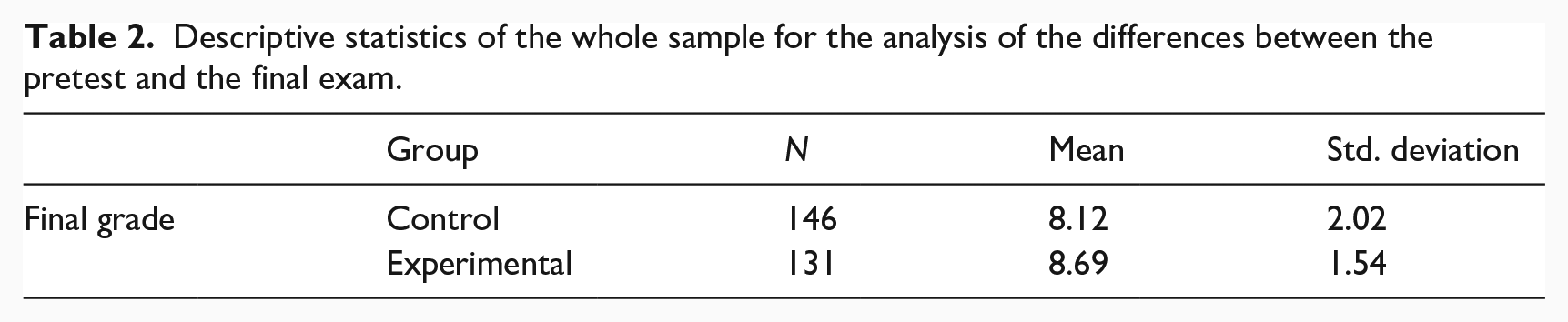

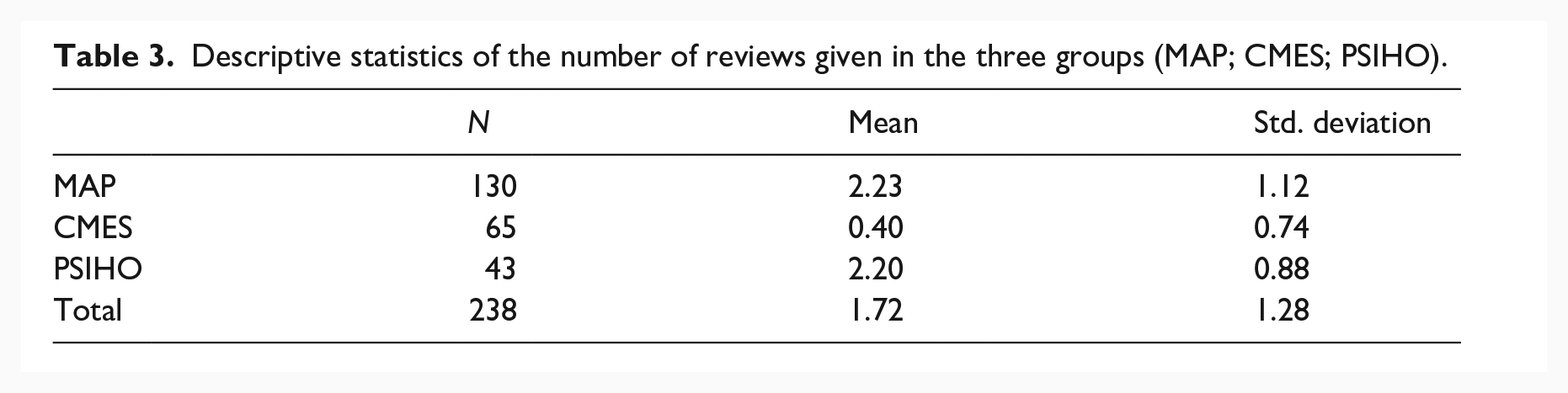

The learning progress made by the participants is illustrated in the changes between pretest and post-test, respectively pretest and the final exam. The descriptive statistical results of the whole sample regarding the change analysis are illustrated in Table 1 (pretest/post-test), Table 2 (final exam) and Table 3 (number of reviews).

Table 1. Descriptive statistics of the whole sample for the analysis of the differences between pretest and post-test.

Table 2. Descriptive statistics of the whole sample for the analysis of the differences between the pretest and the final exam.

Table 3. Descriptive statistics of the number of reviews given in the three groups (MAP; CMES; PSIHO).

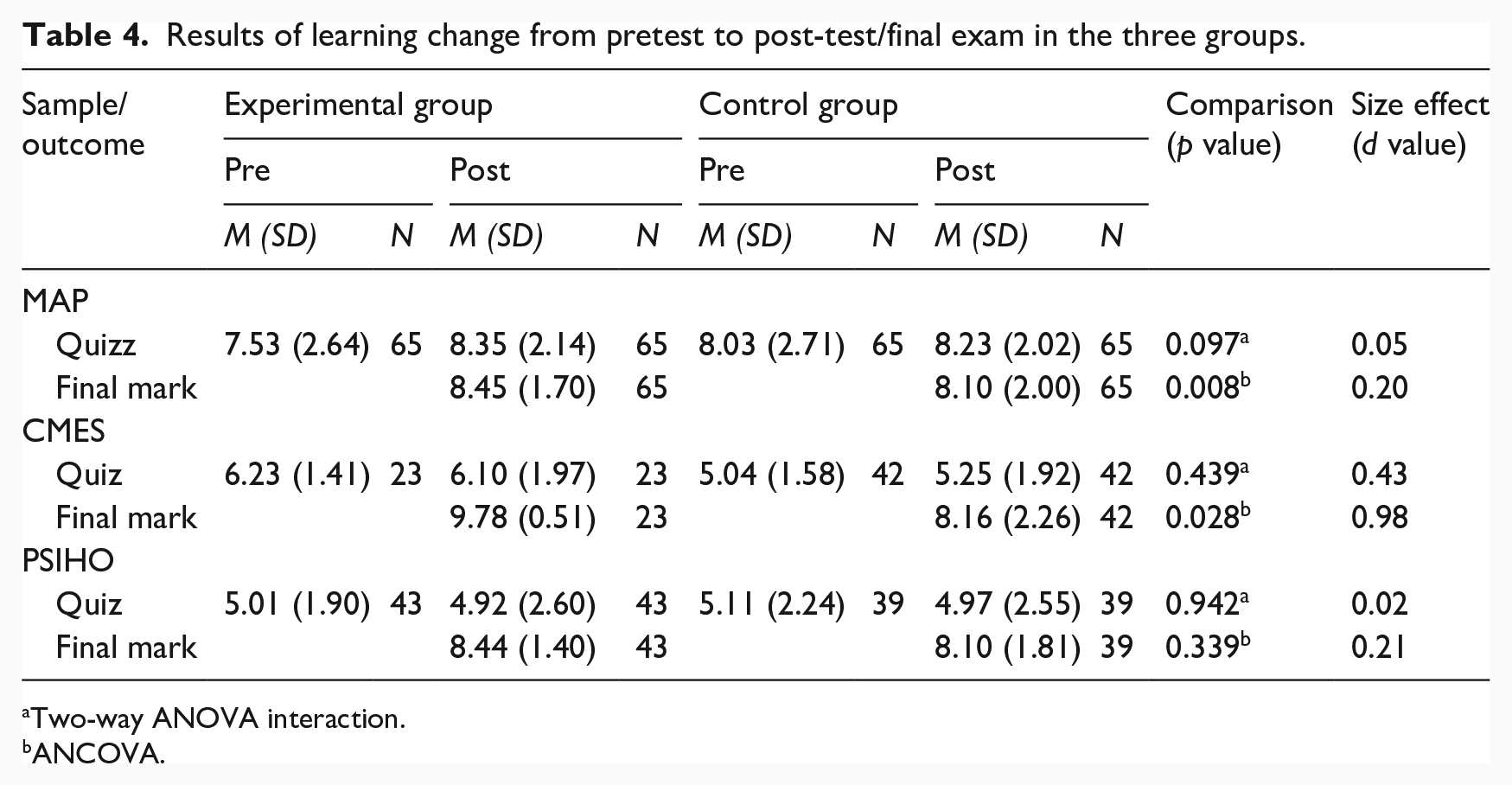

Regarding the change between pretest and post-test at the level of the whole sample, the results of the two-way analysis of variance (two-way ANOVA) indicated a trend toward improved performance, but this change was not statistically significant. The two groups evolved rather similarly, F interaction (1, 275) = 0.82, p = 0.365, effect size d = 0.12. In other words, taking into account the whole sample (control + experimental group), there was no significant improvement in performance: the non-significant interaction effect indicates that the two groups did not differ significantly in their evolution from pre-intervention to post-intervention. In order to analyze the change between pretest and post-test in more detail, we performed the two-way analysis for each of the three groups of students enrolled in Methods Advanced Programming (MAP), Computational Models for Embedded Systems (CMES), and Introduction to Psychology (PSIHO). The results obtained are summarized, for each of the three groups of participants, in Table 4. Among the students in the Methods Advanced Programming group, the two-way ANOVA indicated a tendency toward improved performance, but the interaction between the two factors was not statistically significant. In other words, the two groups (MAP control, MAP experimental) differed in their evolution from pre-intervention to post-intervention in the expected direction, but the differences were not significant. Similar results, as can be seen in Table 4, were obtained for the other two groups of students, enrolled in Computational Models for Embedded Systems and Introduction to Psychology, respectively.

Table 4. Results of learning change from pretest to post-test/final exam in the three groups (two-way ANOVA interaction; ANCOVA).

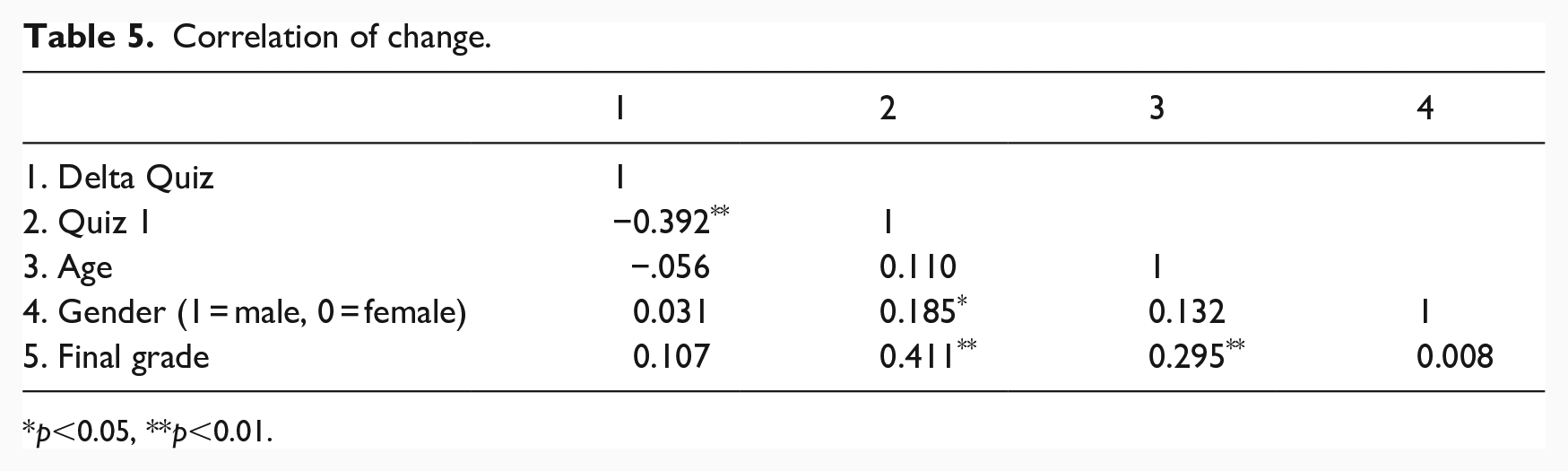

We also analyzed in detail, at the level of each of the three groups of students (Methods Advanced Programming-MAP, Computational Models for Embedded Systems-CMES and Introduction to Psychology-PSIHO), the effects of the intervention on the final exam grade. Because the initial level of knowledge (Quiz 1) is a significant correlate of the final grade (see Table 5), the grade differences between the control and experimental groups were analyzed with an ANCOVA model in which the initial level of knowledge was controlled as a covariate. For the whole sample, the analysis indicates that the mean score of the experimental group is significantly higher than that of the control group, F(1, 274) = 7.46, p = 0.007, effect size d = 0.31.
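The logic of this ANCOVA can be sketched as follows. The example implements the textbook one-way ANCOVA with a single covariate and a common regression slope: the outcome variance left after adjusting for the covariate is computed once for the pooled sample and once within groups, and the difference is attributed to the group effect. The function names and the toy Quiz 1 and exam scores are hypothetical, and the sketch is not the software or data used in the study.

```python
from statistics import mean


def sums_of_squares(x, y):
    """Return (SS_x, SS_y, SP_xy) computed around the means of x and y."""
    mx, my = mean(x), mean(y)
    ss_x = sum((xi - mx) ** 2 for xi in x)
    ss_y = sum((yi - my) ** 2 for yi in y)
    sp_xy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return ss_x, ss_y, sp_xy


def ancova_f(groups):
    """One-way ANCOVA with one covariate and a common slope.

    groups: list of (covariate_values, outcome_values) pairs, one per group.
    Returns (F, df_effect, df_error).
    """
    all_x = [x for xs, _ in groups for x in xs]
    all_y = [y for _, ys in groups for y in ys]

    # Outcome variation left after regressing on the covariate, ignoring groups.
    ss_x_t, ss_y_t, sp_t = sums_of_squares(all_x, all_y)
    adj_total = ss_y_t - sp_t ** 2 / ss_x_t

    # Same quantity within groups (pooled within-group regression).
    ss_x_w = ss_y_w = sp_w = 0.0
    for xs, ys in groups:
        ss_x, ss_y, sp = sums_of_squares(xs, ys)
        ss_x_w += ss_x
        ss_y_w += ss_y
        sp_w += sp
    adj_within = ss_y_w - sp_w ** 2 / ss_x_w

    df_effect = len(groups) - 1
    df_error = len(all_y) - len(groups) - 1  # N - k - 1 with one covariate
    f_stat = ((adj_total - adj_within) / df_effect) / (adj_within / df_error)
    return f_stat, df_effect, df_error


# Hypothetical toy data: (Quiz 1 scores, final exam grades) per group.
control = ([4, 5, 6, 7, 8, 5], [5, 6, 6, 8, 8, 6])
experimental = ([4, 6, 5, 7, 8, 6], [6, 8, 7, 9, 9, 7])

f_stat, df_effect, df_error = ancova_f([control, experimental])
print(f"F({df_effect}, {df_error}) = {f_stat:.2f}")
```

For two groups and one covariate the error degrees of freedom are N - 3, which matches the form F(1, N - 3) in which the ANCOVA results are reported.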

Table 5. Correlation of change.

*p < 0.05, **p < 0.01.

To analyze in more detail the change produced between pretest and post-test, we ran the ANCOVA separately for each of the three groups of students. The results are summarized in Table 4. For the students enrolled in the Methods Advanced Programming course, the analysis indicates that the mean grade of the experimental group is significantly higher than that of the control group, F (1, 127) = 7.29, p = 0.008. Significant results were also obtained for the students in the Computational Models for Embedded Systems group, where the mean grade of the experimental group is significantly higher than that of the control group, F (1, 62) = 5.08, p = 0.028. Only for the students enrolled in Introduction to Psychology did the ANCOVA indicate that the mean grade of the experimental group is not significantly different from that of the control group, F (1, 79) = 0.92, p = 0.339.

Another aspect of interest was an indicator taken from the platform’s metrics: the number of reviews (feedback items) given by the teacher per student. A comparison of the number of reviews across the three groups (see Table 3) by analysis of variance showed significant differences between these groups, F (2, 235) = 79.81, p < 0.001. Post-hoc analysis using the Scheffé test showed that the difference lies between Methods Advanced Programming and Computational Models for Embedded Systems (mean difference = 1.83, p < 0.001) and between Computational Models for Embedded Systems and Introduction to Psychology (mean difference = 1.80, p < 0.001). In other words, the Computational Models for Embedded Systems group received significantly less feedback than the other two groups.

Correlation of change

To identify and analyze potential correlates of change, we calculated for each participant the difference between the post-intervention and pre-intervention Quiz scores and tested the correlates of this change. The results are shown in Table 5. The highest correlation coefficient was found between the results of Quiz 1, administered after the first stage of study, and the results of the final exam. Its value indicates a moderate correlation between the two evaluations.

Data used to generate the analyses presented in the paper are accessible via a publicly available data repository (https://figshare.com/s/da9d7518a7080a920e84).

Discussions

In this study we investigated the impact, in various course settings and instructional contexts, of an ad hoc online learning platform on the academic performance of three different groups of students. Academic performance was assessed through a post-test and a final exam.

In the case of the post-test, the comparison of the experimental and control groups showed that using the QLearn platform did not increase the scores of the experimental group significantly more than those of the control group. Although the difference is not significant, a careful analysis of the results reveals a tendency for the experimental group to improve its performance from pretest to post-test. Because the samples involved in the study were relatively small, which limits statistical power, we also analyzed the effect size. The values of d indicate small effect sizes. In educational contexts, however, these values can be interpreted as encouraging. Various analyses in the field (Bakker et al., 2019; Cheung and Slavin, 2016; Coe, 2002; Kraft, 2020) show that effects classified as small according to Cohen’s classification are a common feature of educational interventions, especially those aimed at student achievement. Academic achievement is considered much harder to influence than other types of outcomes. One possible explanation is that many effective strategies are already used in educational contexts or that, given the complexity of the educational environment, different strategies are likely to be effective in different situations (Coe, 2002).

In the case of the final exam, the comparison of the scores obtained by the experimental group with those obtained by the control group showed that the use of the learning platform led to an increase in learning performance. These results confirm those of other studies that used similar learning strategies in various educational contexts. Those studies demonstrated the effectiveness of one or another of the three strategies on learning, both in face-to-face environments (Rodriguez et al., 2021) and in environments in which learning was supported by educational technology (Golanics and Nussbaum, 2007; Greving et al., 2020; Hardy et al., 2014; Sanchez-Elez et al., 2014).

Analyzing in detail the final exam results for each of the three groups of students, we can see differences in the impact of the platform on academic performance. Significant differences between the experimental group and the control group in final exam performance were obtained for two groups of students, both in Computer Science (the Methods Advanced Programming-MAP group and the Computational Models for Embedded Systems-CMES group). These results seem to confirm only partially other similar empirical studies that have shown the usefulness of the student-generated questioning technique integrated in a learning platform for various courses, regardless of the field of study (Hardy et al., 2014). Moreover, of the three groups of students investigated, the impact of using the QLearn platform (as illustrated by the effect size values) was greatest among the students who took the Computational Models for Embedded Systems course. Unlike the students enrolled in the Methods Advanced Programming and Introduction to Psychology (MAP, PSIHO) courses, who are undergraduates, the Computational Models for Embedded Systems group consists of master’s level students. The course runs with a small number of students, and their average age is higher than that of students enrolled in the other two courses. The results also indicate that the number of platform-mediated reviews per student-generated question decreases with student age. At the same time, the number of reviews offered to master’s level students is significantly lower than the number of reviews given to undergraduate students. Taken together, these results suggest that students enrolled in advanced courses may benefit more from the learning strategies integrated into the QLearn platform. At the master’s level, QLearn works more economically and efficiently: learning performance improves more, while less teacher support is needed. We have not found similar studies in the literature investigating the role that the level of studies plays in the utility of such a learning platform, but this is a direction that can be explored in future research.

Based on the analysis of the correlations between the post-test results and the final exam, we find that they correlate moderately. This supports the hypothesis that a common factor underlies both results and accounts for less than 25% of the variance of performance in the post-test and in the final exam. The rest of the variance is due to other, different factors that could have influenced the performance of the exam participants. For example, in the case of the final exam, given its higher stakes, students’ motivation to learn was probably much higher than in the case of the post-test. In the context of exam preparation, platform-based learning was intentionally complemented by other learning strategies, which could have led to a significant increase in learning performance at this testing stage. In the post-test stage, by contrast, the students learned as a by-product of solving the tasks generated by the platform, without intentionally deciding to learn those contents. Identifying all the variables that contributed to these differences between post-test and final exam performance requires further exploration.
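The link between a moderate correlation and the 25% figure follows from the coefficient of determination: the proportion of variance two measures share is the square of their Pearson correlation. The short sketch below illustrates this arithmetic. The r values are hypothetical examples of moderate correlations, not coefficients taken from Table 5.

```python
def shared_variance(r):
    """Proportion of variance shared by two measures with Pearson correlation r."""
    return r ** 2


# Hypothetical correlation values, chosen only to illustrate the arithmetic.
for r in (0.30, 0.40, 0.50):
    print(f"r = {r:.2f} -> shared variance = {shared_variance(r):.2f}")
```

Any correlation below 0.50 therefore corresponds to a shared variance below 25%, which leaves more than three quarters of the variance to other factors.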

In summary, the present study has several theoretical and practical implications. From a theoretical point of view, it deepens research on the effectiveness of learning strategies in various instructional contexts. Moreover, the study provides an opportunity for dissemination and integration in real-world educational settings of empirical findings from cognitive research on learning. Our research shows that even if the impact of these strategies is not as great as in laboratory conditions, their use in various course settings and instructional contexts can facilitate and optimize learning. In addition, the study draws attention to the importance of a rigorous empirical foundation that must underpin the design of online educational technologies. From a practical point of view, the study provided a methodology and a tool that can still be improved, the QLearn platform, to support the active learning process.

Conclusions

The present study tested, in an educational setting, three learning strategies with a solid empirical foundation derived from the latest research on the cognitive mechanisms of human learning. The three strategies were integrated into an online learning platform (QLearn) that allowed students to generate questions related to the course content, to elaborate on the correct answers, to receive feedback on the questions generated and to test their knowledge with the help of questions they had developed. The efficiency of the platform was evaluated in three different courses within the same university. Initial results showed that students who used the learning platform scored higher on the final exam. At the same time, the results of the students who participated in the experiment showed an upward trend, though not a statistically significant one, on the knowledge assessment test conducted at the end of the study. We also noticed that master’s level students achieved high academic performance while requiring less help from teachers to perform tasks on the platform. Overall, the methodology and tool proposed and tested in this study represent a promising mechanism for the active involvement of students in learning and the optimization of their academic performance. Our goal was not to compete with other similar learning management technologies/systems, available for free or marketed in educational contexts. Rather, we sought to provide students and teachers with a working instrument that could support and facilitate active learning. In addition, investigating how students apply these strategies in online environments can help optimize the way we use technology to support and streamline teaching and learning.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.