Abstract

This paper responds to calls from teacher-student feedback research for ways to improve student performance. In Study 1, we observe the relationships among student conscientiousness, midterm performance, feedback-seeking behaviors, and final semester grades. In Study 2, we test whether an active learning method helps students improve their grades regardless of individual differences. Specifically, we test whether a face-to-face instructor-student performance review at midterm improves performance by allowing students who would otherwise not seek additional feedback or clarity to discuss their performance fully. Structural equation modeling and mean difference tests are used to test empirical relationships between personality, behavior, and performance. Comparisons between groups that did and did not receive a midterm review support the hypothesis that interactive midterm performance reviews improve class grades. Regression analysis indicates that performance reviews improve grades even after controlling for individual differences. This active learning technique has both immediate and long-term benefits. In addition to grade improvement, midterm reviews allow students to experience how professional performance reviews are conducted and to receive and use feedback more effectively. The discussion offers simple advice on how midterm reviews can be conducted even in remote classes.

Feedback’s role in motivation and performance

Feedback plays a vital role in an individual’s performance and learning (Wang and Zhang, 2022). Research in higher education has sought to identify elements of feedback that inform and improve class performance. Seminal research in employee motivation indicates that developmental feedback is necessary to direct effort. Whether it is feedback from goal-setting (Locke and Latham, 1990, 2019) or feedback as a core job dimension (Hackman and Oldham, 1976; Oldham and Hackman, 2010), feedback should be individualized and adjusted depending on an individual’s ability and general motivation (London, 1997). Individuals need to know (1) how they are doing and then (2) evaluate the efforts that went into that status to determine (3) what behaviors should change or not change. In some jobs, feedback is provided by the nature of the work and an individual may not need validation or interpretation from a supervisor (e.g. mowing a lawn or cleaning a surface). In other jobs, an individual may need guidance in interpreting performance and creating development plans. Hattie and Timperley (2007) stated, “Feedback by itself may not have the power to initiate future action” (p. 82). They identified in their review of educational research that effective feedback addresses goals, progress toward the goal, and activities necessary to make better progress (p. 86). Higher education has had a long-standing reputation of delivering feedback—poor feedback (see Ferguson, 2011). In some cases, students engage in feedback-seeking to maximize their understanding so they may improve future performance.

Teacher-student feedback challenges

Feedback-seeking is defined as behavior in which individuals actively ask for and track information about their performance to see if they should maintain or change efforts (Ashford, 1986; Ashford et al., 2003; Ashford and Cummings, 1983). Poorer performers are, on occasion, more likely to both monitor and elicit feedback in hopes of improvement (Ashford, 1986). However, a study of the feedback-seeking behaviors of helicopter pilot trainees did not find consistent support for a negative correlation between current performance and seeking feedback (Fedor and Rensvold, 1992). Instead, the results suggested that pilot trainees were more likely to monitor and elicit feedback when they perceived low costs of asking for it and when they trusted the source of the feedback as credible. Thus, the authors asserted that attitudes and evaluation of the context may more consistently predict whether students monitor or seek feedback. Almost two decades later, research in feedback inquiry found not only that online gradebook monitoring is common among college students, but also that more online monitoring predicted end-of-term academic performance (Geddes, 2009). Geddes’ research also observed that women were more likely than men to monitor Blackboard (a course management system used in the study). Geddes called for future research to investigate how feedback-seeking strategies assist the learning process.

A review of 103 studies of teacher-student feedback challenges identified characteristics that make it difficult for students to use, track, or seek feedback (Jonsson, 2013). The findings isolated five main challenges for instructors (pp. 66–70): (1) Feedback needs to be useful; (2) Feedback should be specific, detailed, and individualized for each student; (3) Authoritative feedback (feedback that is not open for discussion) is not productive; (4) Students may lack strategies for use of feedback; (5) Students may lack understanding of academic terminology or jargon. Jonsson called for future research to investigate methods to improve feedback usefulness in higher education. Additionally, Jonsson encouraged instructors to include a discussion or “dialogic” model of feedback (p. 72). This advice was also given by Dysthe et al. (2011), who suggested that instructors need to take the role of dialog partner (engaging in a back-and-forth discussion of performance perception) rather than assuming their perception of performance is complete. An instructor’s perception of writing or other student output is only one perception, not the complete one. Moreover, the way students perceive or receive written feedback may not be the way the instructor intended. In fact, when students met face-to-face with tutors to discuss the feedback given, students reported an increase in feedback satisfaction and usefulness (Chalmers et al., 2018). Students need guidance on how to apply feedback to meet different learning and performance objectives and goals. Both short-term and long-term feedback loops can improve the use of feedback (Carless, 2019).

How can instructors encourage all students to discuss feedback face-to-face to improve outcomes, especially if students perceive costs for doing so or if their personality or performance is such that they simply do not engage in elicitation? This paper responds to these challenges in providing effective teacher-to-student feedback by investigating the relationship between individual differences, feedback-seeking, and performance. Moreover, we experiment with a suggested active learning method to ensure that all students have an opportunity to discuss feedback information and consider plans for improvement.

Study 1: Individual differences determine semester grades

Previous performance is related to future performance. Jensen and Barron (2014) found that midterm grades were related to final grades in a semester course (semesters typically last 16 weeks). Intra-individual motivation to learn and earn a certain grade is likely to be consistent across a semester. Also, although intellectual ability may change over a period of time (Neisser et al., 1996), we assume it to be relatively stable across a single 16-week period. Thus, grades throughout a semester-long class are likely to be steady, given that motivation and ability are roughly constant. It should follow, then, that grades earned by midterm will predict final semester grades.

However, students’ final grades are also likely to be a function of the amount, frequency, and quality of useful feedback received. As described above, Jonsson’s (2013) review identified how quality feedback that is individually directed can help students learn in college. It is likely that all students in a given class receive the same rate and type of feedback on different assessments. Thus, the students who seek additional feedback (e.g. requesting professor appointments) and monitor received feedback cues (like tracking grades) should receive a developmental benefit. Therefore, feedback-seeking behaviors should also predict final grades.

Next, the type of college student who seeks feedback on class performance is aware of what they want, the class grading structure, and the likely consequences of class achievement. These attributes are characteristics of a conscientious student who is reliable and dependable in many areas of life (Costa and McCrae, 1992). In fact, conscientiousness is the most generalizable predictor of managerial performance and predicts academic performance (Higgins et al., 2007). Notably, Geddes (2009) found that women monitored feedback more often than men. Since women tend to have slightly higher levels of conscientiousness, we believe this personality trait is a more precise predictor of feedback-seeking than gender biology or identity. Thus, we propose that student conscientiousness predicts final grade performance by way of feedback-seeking behaviors.

Method

Sample

Participants were enrolled in one of eight Principles of Management classes at a small private northwestern university in the United States. Each section was taught in either the spring or fall 16-week semester. A population of 318 students (earning a major or minor in business administration) was studied in this research, and all were taught by the same management professor using the same syllabus and learning assessments. All students participated in the in-class discussions and debrief regarding personality assessments and feedback-seeking behaviors as a part of course design (see Procedure). The sample used for testing hypotheses 1–5 consisted of the 280 students (88%) who provided complete measures for analysis.

Procedure

Personality assessments (Big Five Factors, Goldberg, 1992) were provided to students after midterm grades were submitted (week 8 = midterm grades submitted; week 9 = collection of Big 5). The professor reviewed class averages within class and shared statistical similarities with other current and previous sections of the class along with national averages and differences between gender. The professor reviewed research on the

Feedback-seeking assessments (adapted from Fedor and Rensvold, 1992) were distributed during the class period before an in-class discussion on managerial practices relating to performance appraisals and feedback (week 11). In the following class, the professor summarized Fedor and Rensvold’s research and had students discuss why their scores and class outcomes were or were not similar to findings from the study of helicopter pilot trainees. Students were given the option to voluntarily turn in their assessments for future analysis. They were assured that the professor would not look at their responses until after final grades were posted (as the assessments measured student trust in professor feedback and perceived costs of eliciting feedback from that particular instructor). Students handed in their assessments voluntarily when they left the classroom. Only a few students in each section did not turn in the feedback-seeking survey.

All sections used the same grading scale and similar learning assessments. By midterm, the graded work comprised two exams, a revise-and-resubmit writing assignment (with another option for resubmission before the end of the semester), class participation, and initial group project work. Grades and written feedback were continuously updated using Blackboard. Thus, students had access to instructor feedback on assessments well before the performance review.

Measures

Conscientiousness

Students responded to conscientiousness items from Goldberg’s (1992)

Grades

Feedback-seeking

Fedor and Rensvold’s (1992) measures were used for hypothesis testing. We adapted their measures of feedback eliciting, feedback monitoring, feedback costs, and external propensity—measured on five-point scales, where 1 = never; 5 = very often.

Analysis and results

To test our hypotheses, we combined all data from each section into one database to run correlations and models. We used SPSS and AMOS.

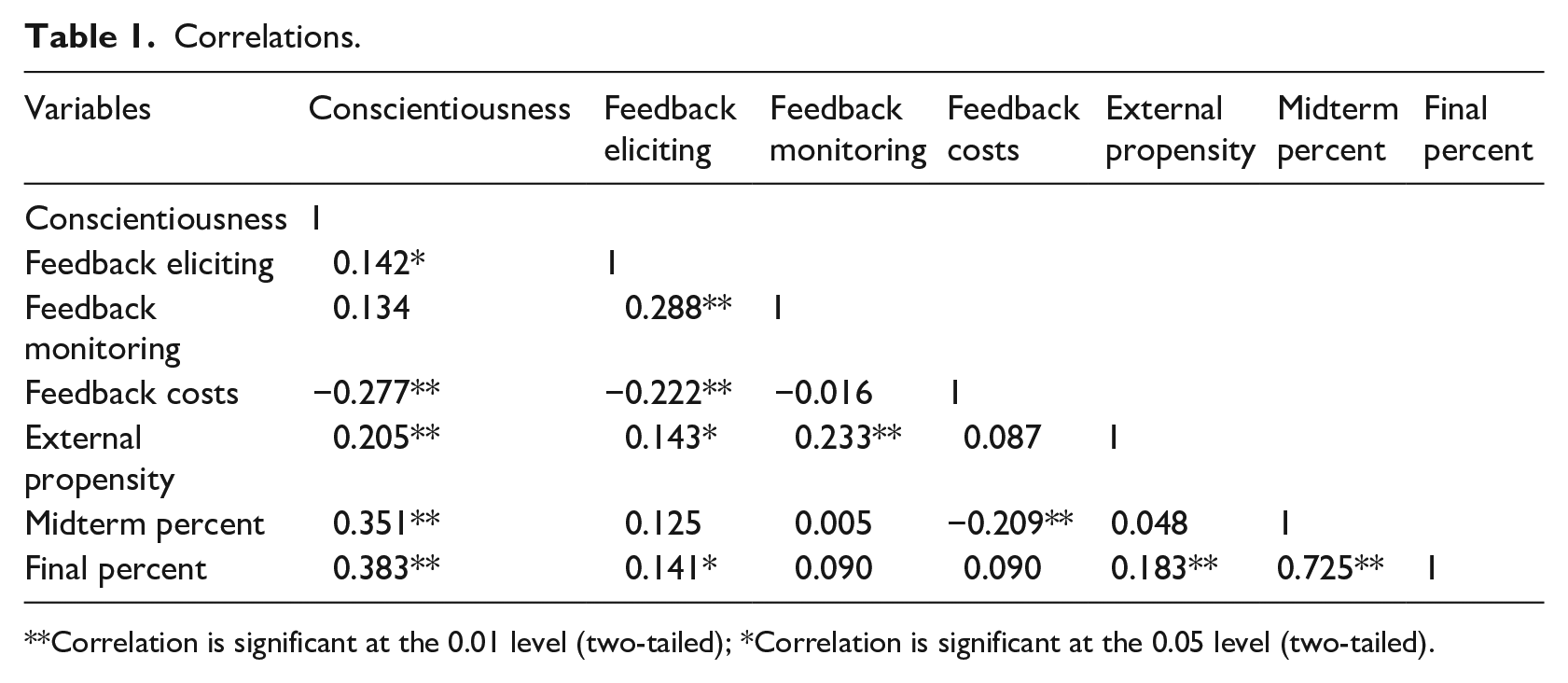

Table 1 shows the correlations between all variables. Students with higher grades at midterm were likely to earn higher grades on the final exam. Students higher in conscientiousness were more likely to have higher midterm grades and final grades and to engage in feedback-seeking behaviors. Using SPSS, we then tested our hypotheses using regression analysis, which showed a significant ANOVA model of feedback-seeking behaviors and the final grade (

Correlations.

**Correlation is significant at the 0.01 level (two-tailed); *Correlation is significant at the 0.05 level (two-tailed).

For structural equation modeling (SEM), we used a list-wise deletion method to determine which participants to eliminate based on incomplete data. All variables used in model testing were standardized. As described above in Measures, we first ran a confirmatory factor analysis to determine if the four feedback-seeking variables were separable. We used feedback-seeking as a second-order latent variable.

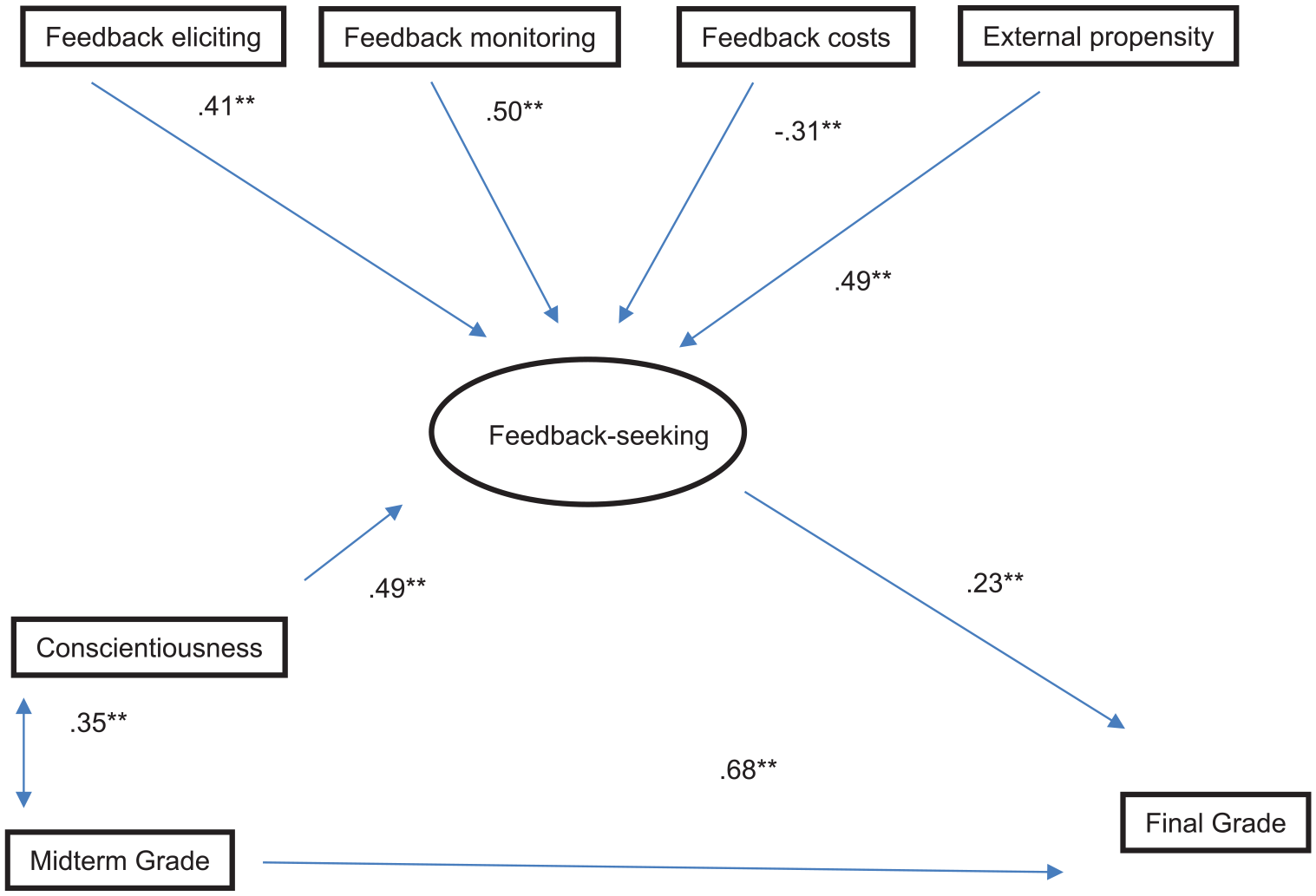

We ran the full SEM path model in AMOS (see Figure 1), which included a correlation between conscientiousness and midterm grade (see Table 1). The hypothesized path model achieved fit (Chi-square/df = 1.52, CFI = 0.95, RMSEA = 0.05). All paths were significant in the hypothesized direction, and we found support for the mediated path model (the significant correlation between conscientiousness and the final grade was no longer significant when the path through feedback-seeking was added to test mediation). Thus, hypotheses 1–5 were supported. Feedback-seeking mediated the relationship between conscientiousness and final grade, and the connection between midterm grade and feedback-seeking is explained through the correlation between conscientiousness and midterm grade (we also tested a model with a path from midterm grade to feedback-seeking, and no significant relationship existed when all variables were included in the path model).

Hypothesized path model.

Our first study replicated and extended previous tests of individual differences predicting performance and found that individuals who engage in feedback-seeking are more likely to see increases in grades from midterm to final. The next study added a performance review to test whether instructors can use feedback-giving best practices to allow all students to improve their performance.

Study 2: Benefits of midterm performance reviews

Study 1 showed that not all students will seek feedback to improve grades. Returning to the calls for research described above, we next sought to test an active learning method for ensuring that all students get the benefits of additional feedback: adding a midterm performance review. In employment settings, a performance review is a discussion of performance assessments with an opportunity to develop a future plan of action. Feedback is focused on potential improvements. In higher education, Hattie and Timperley (2007) used the term “feed forward” to describe when performance information is used to discuss improvements. As described earlier, management students learn about performance reviews as a management method. Educators can use performance reviews to meet the challenges of giving and receiving feedback.

Ensuring feedback is understood and useful

Ferguson (2011) gathered published research on college student perceptions of feedback and its usefulness. College classes in the United States often have different and multiple opportunities to assess knowledge and earn grades. As a result, students receive feedback on their understanding or efforts in the form of grades or marks on a variety of assignments. The most common type of feedback is written, but Ferguson (2011) found that written feedback that is developmental (instructs students to analyze or describe further or develop their thoughts more completely), or does not identify something specific to fix, is often ignored. Students do not understand exactly what to do. Both Jonsson’s (2013) and Ferguson’s (2011) separate literature reviews note that verbal or auditory feedback in addition to the written marks is more useful and likely to be interpreted as intended.

Bull Schaefer (2018) described an active learning method to give business students a mid-semester performance review, likened to a professional performance review, to allow students to receive feedback richly, allow for dialog, and ensure the creation of individualized development plans. In these performance reviews, students were required to meet with the instructor face-to-face, have a dialog about their perceptions of student and teacher performance, and make a plan to improve efforts and resources. Performance reviews have often been criticized as not being value-added in professional settings due to game-playing, politics, inconsistent measurement, etc. (Longenecker et al., 1987), and some corporations have decided to get rid of formal reviews (see Wilkie, 2015). However, in an academic setting, some of the constraints that make performance reviews ineffective (such as timing, measurement, and accountability) can be held constant. Thus, a midterm performance appraisal and review should allow students the opportunity to bring together different observations of performance to consider what is working and how performance or behaviors can improve through different or additional effort and instructional resources. This exercise allows students to practice feedback interpretation and dialog skills. Requiring all students to engage in the face-to-face dialog about performance ensured that all students heard developmental feedback (not just the good performers or conscientious individuals). The 2018 publication described the active learning method and spoke only to anecdotal findings. We test Bull Schaefer’s assumptions quantitatively, using the percentage change in grade from midterm to final as an indicator of how well students understood the feedback discussed in their performance review. Our Study 2 hypothesis tests whether a midterm performance review is a value-added method to improve student grades and understanding.

Method

Sample

To test hypothesis 6, 133 of the 280 students described in Study 1 were given a midterm performance review. Of these 133 students, 96 (72%) had completed the individual difference measures; one section that was given performance reviews was not given the personality or feedback-seeking surveys due to extenuating circumstances.

Procedure

The procedure for Study 2 was the same as for Study 1 until week 12 of the 16 weeks. In week 12, all students completed a 20-minute face-to-face performance review with the professor (as described in Bull Schaefer, 2018). Each student was asked to reflect on their grades and marks and turn in a self-assessment of their performance before the dialog. They also responded to questions regarding ways the professor could improve resources, etc. The self-assessment and responses guided the performance review discussion, which clarified grades and feedback and produced follow-up plans for improvement for both the student and the instructor.

Study 2 additional measures

Percent change

This dependent variable was computed by taking the difference between midterm and final divided by the midterm score and then multiplying by 100. We wanted to see if including a midterm performance review would lead to larger change between midterm and final grades. This percentage change was then standardized for an independent sample
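As a worked illustration of the percent-change measure, the following Python sketch computes the change relative to the midterm score and then converts a set of changes to z-scores. It assumes that the difference is taken as final minus midterm (so that improvement is positive) and that standardization uses the sample standard deviation; neither detail is stated in the text, and the function names and grade values are hypothetical.

```python
from statistics import mean, stdev


def percent_change(midterm: float, final: float) -> float:
    """Percent change from midterm to final grade, relative to the midterm.

    Assumption: the difference is final minus midterm, so a positive
    value means the grade improved.
    """
    if midterm == 0:
        raise ValueError("midterm score must be nonzero")
    return (final - midterm) / midterm * 100


def standardize(values: list[float]) -> list[float]:
    """Convert values to z-scores using the sample standard deviation (n - 1).

    Assumption: the sample (not population) standard deviation is used.
    """
    m = mean(values)
    s = stdev(values)
    if s == 0:
        raise ValueError("values have no variance; z-scores are undefined")
    return [(v - m) / s for v in values]


# Hypothetical (midterm, final) grade pairs, for illustration only.
grades = [(80.0, 88.0), (70.0, 70.0), (90.0, 85.5)]
changes = [percent_change(mid, fin) for mid, fin in grades]
z_scores = standardize(changes)
```

Dividing by the midterm score expresses the change relative to each student's own starting point, so the same point gain counts for more when the midterm grade was low.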

Analysis and results

The effect of a midterm performance review

To observe the effect of adding a formal midterm performance review, we calculated the average percent change between midterm and final grades for all sections. Then we performed an independent samples
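The logic of comparing sections with and without a review can be sketched in Python. The example below computes a pooled-variance (Student) independent samples t statistic for two groups of percent-change scores. The equal-variance assumption, the function name, and the example values are illustrative assumptions; they are not the study's data or its SPSS output.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t(group_a: list[float], group_b: list[float]) -> tuple[float, int]:
    """Return (t statistic, degrees of freedom) for two independent samples.

    Assumption: the two groups share a common variance (Student's t test).
    """
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two observations")
    df = n_a + n_b - 2
    # Pooled variance: the two sample variances weighted by their degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    std_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / std_error, df


# Hypothetical percent-change scores, for illustration only.
review_sections = [3.0, 4.0, 5.0]
no_review_sections = [1.0, 2.0, 3.0]
t_stat, dof = pooled_t(review_sections, no_review_sections)
```

A positive t statistic here would indicate a larger mean percent change in the first group; its significance is judged against the t distribution with the returned degrees of freedom.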

Finally, we ran a regression predicting percent change in grade from midterm to final. Independent variables included the standardized measures of conscientiousness and feedback-seeking behaviors from Study 1. Additionally, we controlled for gender and for whether the student received the performance review. Regression analysis reported a significant ANOVA model (
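To make the idea of "controlling for" other predictors concrete, the following Python sketch fits an ordinary least squares regression with an intercept by solving the normal equations. It is a minimal illustration under stated assumptions, not the SPSS procedure used in the study: the function name `ols`, the two predictors (a standardized trait score and a 0/1 review indicator), and the data are all hypothetical.

```python
def ols(predictors: list[list[float]], outcome: list[float]) -> list[float]:
    """Return OLS coefficients [intercept, b1, b2, ...].

    Solves the normal equations (X'X) b = X'y by Gauss-Jordan elimination
    with partial pivoting. Each row of `predictors` is one observation.
    """
    rows = [[1.0] + [float(x) for x in row] for row in predictors]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * y for r, y in zip(rows, outcome))]
        for i in range(k)
    ]
    for col in range(k):
        pivot = max(range(col, k), key=lambda idx: abs(aug[idx][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for idx in range(k):
            if idx != col:
                factor = aug[idx][col] / aug[col][col]
                aug[idx] = [a - factor * b for a, b in zip(aug[idx], aug[col])]
    return [aug[i][k] / aug[i][i] for i in range(k)]


# Hypothetical data: [standardized trait score, received review (0/1)].
X = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]]
y = [1.0, 3.0, 4.0, 6.0, 8.0]  # constructed as 1 + 2*trait + 3*review
intercept, b_trait, b_review = ols(X, y)
```

In this setup, the coefficient on the review indicator estimates the difference in the outcome associated with receiving a review while holding the other predictor fixed, which is the sense in which a regression controls for individual differences.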

Discussion

Feedback is needed to help students assess whether the direction, intensity, and persistence of their effort are achieving the performance goals set. College creates a context for constant feedback, but the usefulness of written feedback has been found to be inadequate (Ferguson, 2011; Jonsson, 2013). Previous research has suggested that students need auditory feedback in addition to written feedback, and instructors should discuss performance feedback with students to meet learning and developmental goals (Dysthe et al., 2011). If left to monitor their own feedback, not all students will use online grade tracking to improve their class performance (see Geddes, 2009). Fedor and Rensvold (1992) noted that some students may also not feel a connection to the instructor. Thus, those students who feel comfortable approaching an instructor will have a level of privilege. It would be troubling if future studies found that students who differ from the instructor in race, disability status, or another protected characteristic were at a disadvantage because instructors explained feedback only to those who were similar to them and felt comfortable asking.

Bull Schaefer (2018) proposed including a midterm performance review in business classes as a vehicle for feedback delivery that also teaches students elements of effective performance review design. Our study sought to extend and test the findings and assumptions from previous research using an academic undergraduate sample. We sought to observe whether this active learning method could ensure that all students receive developmental feedback and improve from midterm to final grades.

Our research first found support that personality and previous performance predicted final grades. We also found support that including the face-to-face performance review not only provided an active learning opportunity, as students experienced a performance review, but also ensured that everyone in the class benefited from feedback they may not have sought out otherwise. In sum, we found that the change from midterm to final grades was significantly larger in the sections that were required to have the face-to-face discussion and review.

Limitations

As mentioned in Bull Schaefer’s (2018) detailed description of how to design a midterm performance review, the main downside to requiring a midterm review is the time and emotional labor (skill related to displaying effective emotions) involved. This exercise may be impossible to complete with larger section sizes or in a quarter rather than a semester design. Consequently, some instructors who understand the experiential value and learning benefits of this active design may consider discussing a specific assignment instead of all evaluative components of the course. They could also hold the discussion by phone or video conference. However, there is a risk that an instructor could fall into habits of delivering authoritative feedback rather than engaging in a dialog. Asking open-ended questions such as “what do you or do you not understand, what resources would you need to improve, and how can I help you to meet your learning goals” can minimize one-directional performance reviews.

Next, we intentionally ran this study with one professor who was tenured (had job security and could withstand low teaching evaluations if the experiment failed) and taught multiple sections of the same course. Not all eight sections were taught in the same semester; two to three sections were taught each semester. This study’s control and design could be a strength, or it could create even more questions. Was the student experience really the same? How consistent was the instructor between and across sections? This experiential and active learning method is especially relevant in a Principles of Management course, since students are learning how to measure performance and give motivational feedback. However, replication in a literature or chemistry course may not have the same immediate professional application for students. Thus, more longitudinal research is needed to experiment with other feedback-giving strategies specific to disciplines.

Finally, students handed in their self-assessments voluntarily. This could cause some potential problems with the representativeness of the data. However, in this study the majority of students handed their assessments to the professor when they left the classroom. Because only a few students did not hand in their assessments, their absence is unlikely to have distorted the data substantially. Additionally, since variation in personality is studied within our research, we are confident that self-selection did not cause a problem in the current research.

Teaching in a virtual space

The data in this paper were collected before the COVID-19 pandemic. At the time of this submission, many universities had opted to or had been forced to teach completely virtually with no face-to-face contact, joining the several online-only programs offered across the world. Although this paper concerns face-to-face performance review meetings as a method to help all students improve, rather than allowing personality and previous performance to determine the amount of feedback-seeking behavior, instructors can use web conference platforms as the meeting space for reviews. During the spring semester of 2020, one of the authors used Zoom and Microsoft Teams to facilitate performance review simulations with MBA students with great success.

A video conference meeting is not as rich (communication-wise) as meeting face-to-face. However, having the virtual barrier may help reduce communication noise (like smell or discomfort). Additionally, some virtual platforms, like Zoom, include the option to transcribe the conversation for subtitles (which can be helpful for individuals needing hearing assistance), and meetings can be recorded for repeat review. Unfortunately, technical errors could be attributed to internal or relational causes rather than external ones. However, when the computer mediation works well, the virtual performance review may actually help reduce the anxiety that students may otherwise have when planning a visit to an office.

Conclusion

Students may not monitor their performance or elicit feedback due to perceived costs or personality. If instructors in higher education want students to improve their learning, our study found empirical support that requiring all students to engage in a performance-related dialog (a performance review) benefits student performance from midterm to final grades. It is no longer enough to simply “be available” for students during office hours. Instructors need to be more intentional about using active techniques to ensure their feedback leads to improvements.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.