Abstract

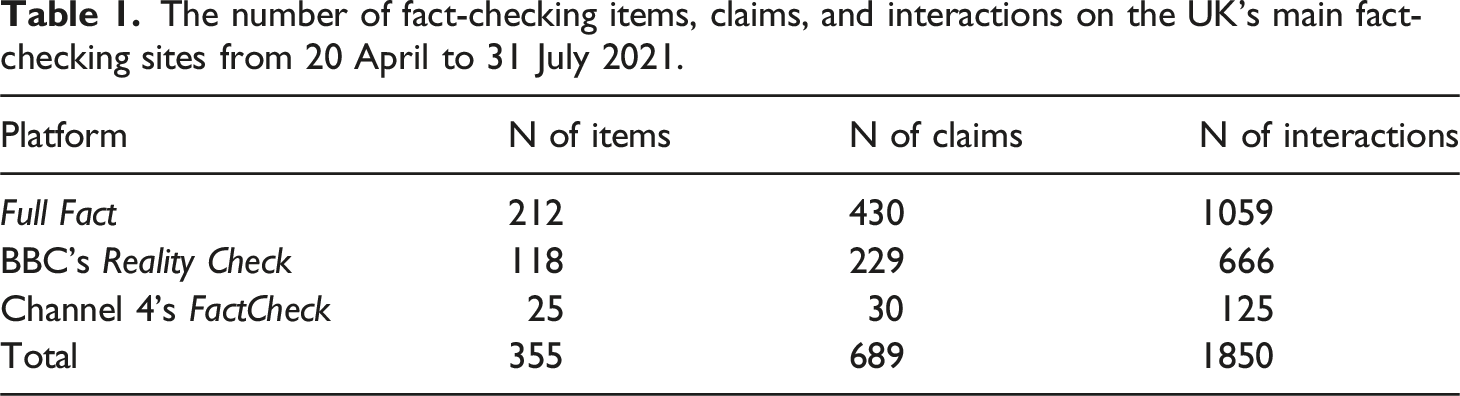

This article explores whether different media platforms across impartial news media supplied the same level of scrutiny in how they fact-checked political claims. While prior research has largely focused on independent fact-checking organisations, the fact-checking practices of legacy media have received comparatively little attention, particularly from a cross-platform perspective. The study develops new lines of inquiry into these practices, presenting one of the largest and most forensic cross-platform studies of fact-checking to date. It draws on a systematic content analysis of 355 items from fact-checking sites, including 689 claims and 1850 instances where journalists or sources interacted with them in 2021, and assesses how they were covered by a further 280 television news items. Our findings demonstrate that the selection of political claims, and the degree to which journalists and sources scrutinised them, varied across media platforms, with television news less inclined to report and analyse policy claims than dedicated fact-checking websites. Overall, we argue that the editorial boundaries of fact-checking are policed by journalists’ interpretations of impartiality, which differ across platforms (in television news or dedicated fact-checking websites) due to a range of editorial factors such as production constraints and news values.

Keywords

Introduction

The growth of dis/misinformation on social media and other informal networks of communication has attracted significant public and scholarly interest in recent years, with particular attention to fact-checking organisations worldwide (e.g., Graves 2016; Nieminen and Rapeli 2019; Vinhas and Bastos, 2023). However, despite the wider implementation of fact-checking by news organisations, the editorial practices of legacy media in countering dis/misinformation, and their approach to fact-checking journalism in particular, have received comparatively little empirical examination (Salaverría and Cardoso, 2023). This study aims to develop a forensic and systematic analysis of how political claims were dealt with by journalists across broadcast news bulletins and online fact-checking sites in the UK. This includes assessing whether different media platforms within the same public service media organisation supplied the same level of scrutiny in how they fact-checked political claims in online and television news coverage.

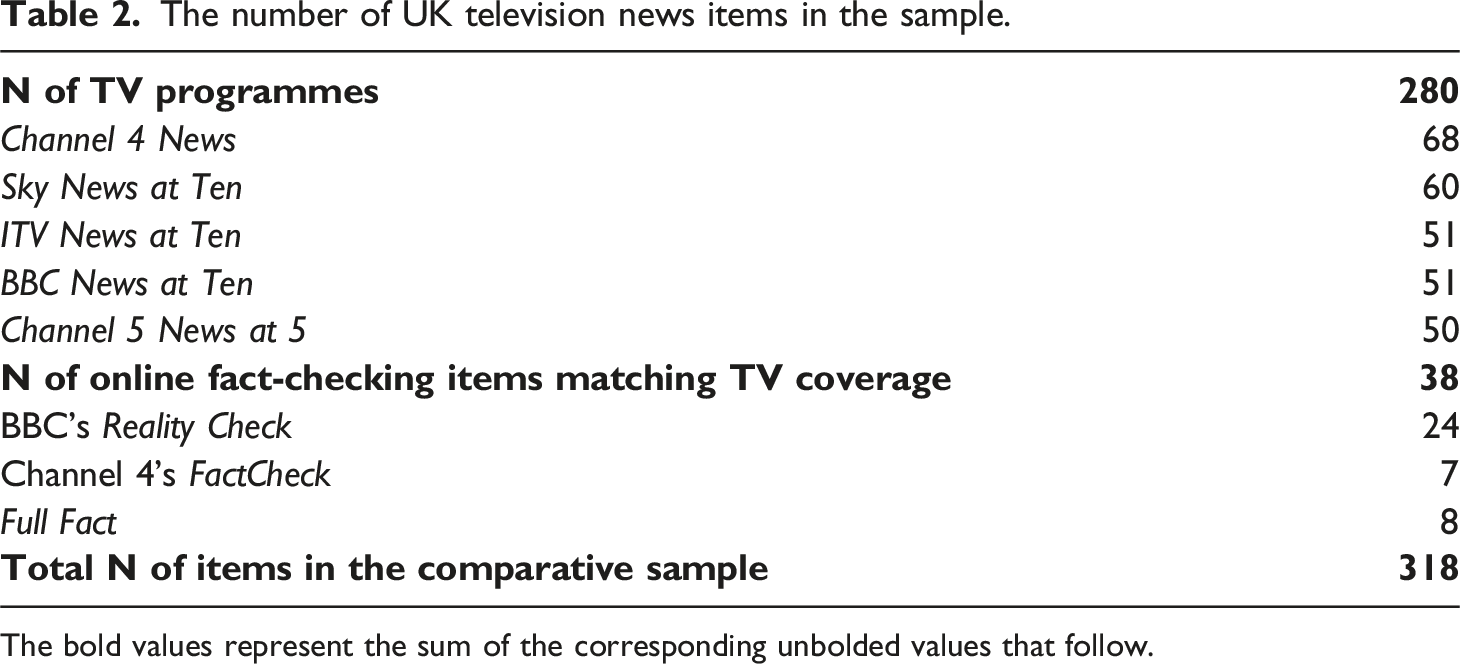

The UK’s public service media are regulated by guidelines, which require them to be impartial and accurate in their news output. Yet, to date, there has been limited academic attention paid to understanding how different standards of impartiality, coupled with the distinct editorial cultures and routines of platforms across online and broadcast, influence the selection of claims and the degree to which these are scrutinised. To advance a new agenda of studying the impartiality of news, this study carries out a comparative content analysis of 635 news items produced by dedicated fact-checking sites and television news. This includes examining 355 news items across the UK’s three main fact-checkers (BBC’s Reality Check, Channel 4’s FactCheck and Full Fact). It also includes evaluating 280 television news items from five UK broadcasters over the same period that matched the coverage of the fact-checking sites. In doing so, we can assess whether the same political claims were subject to the same degree of journalistic scrutiny across both television news and specialised fact-checking websites.

Fact-checking and journalism

Amidst concerns over declining levels of trust in professional journalism (e.g., Newman and Fletcher, 2017), fact-checking has been viewed as a central development in restoring public trust in journalism and enhancing the quality of public debate (Pingree et al., 2018). Celebrated as a ‘new democratic institution’, fact-checking has been viewed by some scholars as a revitalising force for traditional journalism in the new millennium by holding public figures accountable for the spread of false statements (Graves 2016: 6). Needless to say, journalists have always sought to verify facts and sources. But what characterises fact-checking initiatives is the focus on establishing the accuracy of claims typically made by public authorities while also giving prominence to the claims that are determined inaccurate rather than eliminating or correcting them ahead of the publication of a story (Amazeen 2019). While studies about fact-checking have grown over recent years, they have mostly discussed fact-checkers operating independently of mainstream journalism (e.g., Graves and Cherubini 2016; Moreno-Gil et al., 2022), reflecting an early research bias toward a U.S.-centric perspective. In recent years, more attention has been given to a wider range of contexts (e.g., Cazzamatta and Santos, 2023; López-Marcos and Vicente-Fernández, 2021; Vinhas and Bastos, 2023), yet the overall focus of studies on fact-checking remains on independent services. How fact-checking has been embedded or operationalised in mainstream news reporting—as in the case of UK broadcast media—is either under-researched or, in the UK, has largely focused on periods of election campaigns (e.g., Birks, 2019; Soo et al., 2023). 
At the same time, audience studies across different contexts indicate a demand among news media audiences for commitments to fact-checking (Kim et al., 2022), including an appreciation for factual reporting by mainstream media organisations (Newman and Fletcher, 2017) and a call for more rigorous scrutiny of claims by broadcasters (Cushion et al., 2021).

Despite struggling to maintain their relevance in high-choice media environments and adapt to the information needs of young audiences, public service media outlets remain trusted sources of information in many European countries (Newman et al., 2023). In the UK, public service media are generally still associated with attracting high levels of public trust (Ofcom, 2023). Whilst fact-checking has long been part and parcel of routine news reporting, UK public service broadcasters have, over recent decades, increased their commitments to tackle disinformation through the establishment of dedicated fact-checking websites. This aligns with broadcasters across various national contexts recognising the importance of ensuring the accuracy and reliability of information, countering misinformation, building audience trust, and upholding the credibility of public service media organisations as trusted sources (Rivera Otero et al., 2021).

BBC’s Reality Check (now known as BBC Verify) and Channel 4’s FactCheck are two of the main fact-checkers in the UK, alongside the independent fact-checking organisation Full Fact. Inspired by the US service FactCheck.org, Channel 4’s FactCheck was launched as a blog in 2005 and relaunched in 2010 with a strong focus on election coverage and as a feature of broadcast news output (Birks, 2019). The BBC introduced a dedicated Reality Check team in 2015 to cover the Brexit referendum and subsequently made it into a permanent feature. Since 2023, it has been subsumed into BBC Verify, a larger editorial division that produces journalism that challenges mis/disinformation. Full Fact is the UK’s largest independent fact-checker, launched in 2010 as a registered charity with trustees that include journalists and members of the main political parties (Graves and Cherubini 2016). Whilst other media companies such as Sky News and The Guardian have employed fact-checking during election campaigns, what distinguishes these three UK fact-checkers is their consistent fact-checking outside of election periods, as well as their overarching objective to be impartial. For BBC’s Reality Check and Channel 4’s FactCheck, their impartiality credentials stem from their role as public service broadcasters, whereas for Full Fact they stem from the independent status of the organisation.

Prompted by the surge of false or inaccurate information since the onset of the pandemic, UK broadcasters have intensified their efforts to combat dis/misinformation in recent years. Following the publication of a 2021 impartiality review, the BBC’s director general pledged that the public service broadcaster would put “even more focus on our reporting of misinformation and fact-checking” (BBC Media Centre, 2021). Despite these increased commitments towards fact-checking services, public service media has infrequently been the primary focus of research concerned with fact-checking. While interest in UK public service media regarding fact-checking and countering disinformation has significantly increased in recent years, especially during election campaigns (Soo et al., 2023) or with a focus on the pandemic (Cushion et al., 2021), routine times of reporting outside of campaign periods and the inclusion of television news output from a diverse array of news organisations for a comparative approach have largely remained outside the scope of empirical investigations.

Broadcast impartiality and online fact-checking: a cross-platform approach

Adhering to the professional norms of impartiality entails a commitment to deliver coverage that is free of bias and avoids favouring one side over the other, which can sometimes be perceived as conflicting with the practice of fact-checking. In a study looking at the reception of UK fact-checking, Birks (2019) found that users viewed fact-checking as subjective, seeing journalists’ verdicts of election claims as biased, a perception rooted in a broader distrust of mainstream media. As Graves notes, while fact-checking can often invite political criticism and draw reporters into partisan fights, it requires strict adherence to journalistic standards and methods (2016). At the same time, it is crucial to recognise the significant challenges fact-checkers face in navigating the complexities of political and institutional discourse characterised by dubious facts, antagonism and ambiguities.

In the UK, broadcasters must follow ‘due impartiality’ guidelines regulated by Ofcom. However, the way ‘due impartiality’ has been applied and regulated by broadcasters has raised concerns about a style of reporting which ensures a balance between opposing parties or viewpoints but not scrutiny of competing claims. In their study of sourcing patterns, Wahl-Jorgensen et al. (2017) register a persistence of the ‘paradigm of impartiality-as-balance’ across BBC news programming resulting in a limited range of views being reported and a focus on party-political conflict to the detriment of contextualised coverage of the most important and contentious issues. While on the one hand, the journalistic practice of ‘false equivalence’—or more informally known as “both-sideism”—might reflect trends of increasing confrontation and polarisation of political discourse (Post, 2019), on the other hand, critics of the notion across the scholarly and media industry arenas have held journalism responsible for not keeping up with these changes and ultimately failing their audiences (see Maitlis, 2022).

A further key challenge facing UK public service media within a high-choice and rapidly evolving media landscape is maintaining its core public service principles across a range of services and platforms including broadcast and online/digital. However, few studies have highlighted differences in fact-checking standards across platforms. Notably, Hughes et al.’s (2023) research, which focused exclusively on BBC output, shows that efforts to uphold due impartiality may be adjusted based on factors such as the domestic or international context of a story, the politician involved, and the specific platform producing the content. Furthermore, insights from production studies reveal limited integration between reporters engaged in routine news production and journalists focused on fact-checking (Soo et al., 2023), further underscoring the nuanced dynamics within the editorial process. This suggests the potential existence of varying editorial cultures and diverse interpretations of impartiality at the platform or programme level, deviating from the idea of a uniform organisational approach. Such variation extends across the output and platforms of a news organisation and intersects with time constraints and resource limitations that can affect the extent to which issues are subjected to fact-checking. Since audience surveys have increasingly revealed that people have diverse and fragmented media diets (Ofcom, 2023), it becomes crucial that more studies adopt a cross-media approach to assess media performance across the multiple services and platforms of media organisations. In particular, the examination of fact-checking practices across different platforms represents a scarcely explored area of investigation.

Despite recognition of the value of fact-checking by UK broadcasters, it remains a form of journalism mainly produced online and on social media platforms (Birks, 2019: 7). In the UK, broadcasters have experimented with integrating fact-checking features into television news packages, but this has not led to continual implementation. Initially, FactCheck was incorporated into the Channel 4 broadcast news output, including when relaunched in 2010, and BBC’s Reality Check fact-checking segments have appeared on high-reach outlets, although largely during election campaigns. Sky News also broadcast their Campaign Check segments ahead of the 2019 UK General Election. Despite experimental efforts to integrate broadcast with online fact-checking, the adoption of such practices has not become a sustained routine in television news bulletins (Soo et al., 2023). Furthermore, differing interpretations and applications of impartiality across online and broadcast platforms, along with distinct editorial cultures within various departments and challenges related to time constraints and resource limitations, create a complex landscape. This complexity might result in audiences being exposed to varying degrees of scrutiny applied to the same political claims across platforms within the same organisation, despite their policies explicitly stating or implying that the approach to impartial journalism should be consistent across all news output.

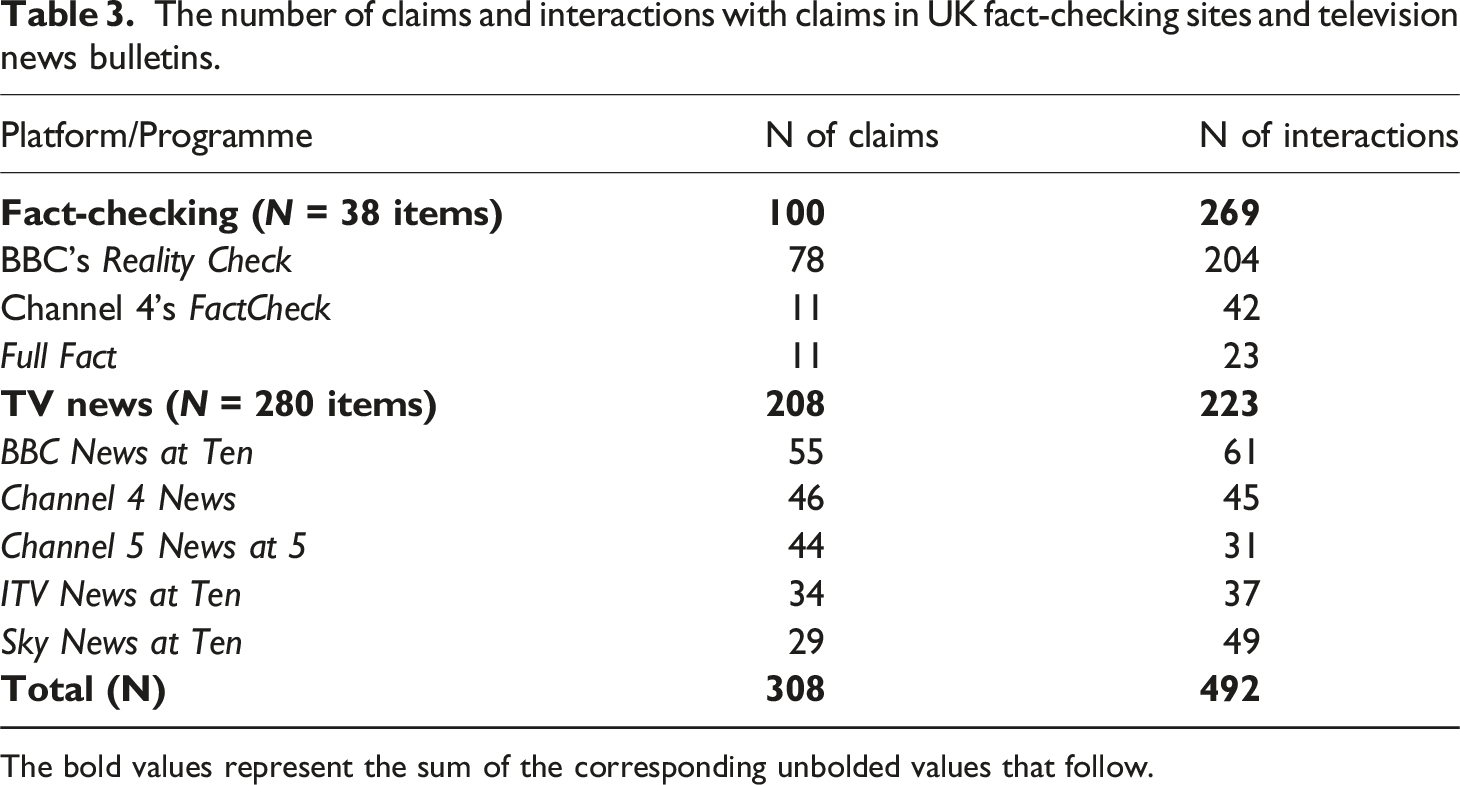

This study represents one of the first-ever forensic cross-platform analyses of fact-checking news. The aim is to assess whether different media platforms within a public service media organisation scrutinise political claims to the same degree. We carried out a systematic content analysis of UK specialist fact-checking websites (N = 355 items, N = 1850 interactions), followed by a comparative analysis of television news (N = 280 items) that matched the online sample in coverage. The analysis comparatively examined how and to what extent journalists or sources interacted with political claims (N = 492 interactions) across those news items presenting corresponding claims between online fact-checking and broadcast news items.

The study asks the following questions:

Method

To conduct the analysis, we compiled a sample of online fact-checking items from the UK’s main three fact-checking sites. We first analysed them independently to closely examine online fact-checking across these organisations. This sample also served as the foundation for our comparative content analysis between fact-checking and broadcast coverage.

Online fact-checking

The number of fact-checking items, claims, and interactions on the UK’s main fact-checking sites from 20 April to 31 July 2021.

Each news item published on the platforms within the sampled period (including weekends) was included for analysis. The online sample was first coded as a standalone content analysis of online fact-checking output and subsequently in comparison to broadcast output. The content analysis of the online sample drew upon a set of variables, including: the platform’s name; article type (fact-check, analysis, explainer, brief, video); pandemic focus; topic; geographical focus; outcome and decisiveness of the verdict. Additionally, within the sample of 355 fact-checking items, we examined all claims (N = 689) and interactions by sources or journalists with these claims (N = 1850), such as challenges or validations.

Constructing the comparative sample matching online fact-checking with TV news items

To establish a comparison between fact-checking and television news, a broadcast sample was constructed to identify how the same claims were dealt with on television news and fact-checking sites. To construct the broadcast sample, all stories reported by the online fact-checkers (BBC’s Reality Check, Channel 4’s FactCheck and Full Fact) within the sample period (N = 355) were searched for on Box of Broadcasts across BBC News at Ten and Channel 4 News bulletins on the same day and a week before and afterwards. Only television news items that matched the story reported in the fact-checking articles were included in the sample. We could then assess whether the claim was scrutinised differently across platforms.
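The matching window described above can be illustrated with a minimal sketch. It is not the procedure used in the study, which relied on searches of Box of Broadcasts and manual matching of stories. The `TvItem` record, the `within_window` and `candidate_matches` helpers, the story labels and the reading of "a week" as seven days either side of publication are all assumptions made for illustration, and the sample records are invented.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

# Assumption: "a week before and afterwards" is read as +/- 7 days, inclusive.
WINDOW = timedelta(days=7)


@dataclass(frozen=True)
class TvItem:
    """Hypothetical record for one television news item."""
    bulletin: str
    air_date: date
    story: str  # hypothetical story label assigned by a coder


def within_window(fact_check_date: date, air_date: date,
                  window: timedelta = WINDOW) -> bool:
    """True if the item aired within the window around the fact-check date."""
    return abs(air_date - fact_check_date) <= window


def candidate_matches(fact_check_date: date, story: str,
                      tv_items: List[TvItem]) -> List[TvItem]:
    """Keep only items on the same story that aired inside the window."""
    return [item for item in tv_items
            if item.story == story
            and within_window(fact_check_date, item.air_date)]


# Invented records, for illustration only.
tv_items = [
    TvItem("Bulletin A", date(2021, 5, 11), "story-x"),  # 1 day before
    TvItem("Bulletin B", date(2021, 5, 20), "story-x"),  # 8 days after
    TvItem("Bulletin A", date(2021, 5, 12), "story-y"),  # different story
]
matches = candidate_matches(date(2021, 5, 12), "story-x", tv_items)
print([m.bulletin for m in matches])
```

In this sketch only the first record survives, since the second falls outside the window and the third concerns a different story. Deciding whether two items report the same story remains a coder's judgement that a label comparison cannot replace.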

The number of UK television news items in the sample.

The bold values represent the sum of the corresponding unbolded values that follow.

The television sample also enabled us to examine claims across broadcasters with different Ofcom-regulated licence obligations in the provision of news while subject to the same legal requirements to be accurate and impartial. While the BBC is the main public service broadcaster, ITV, Channel 4 and Channel 5 are commercial public service broadcasters with contrasting licence agreements about their news provision. In contrast, Sky News is a commercial broadcaster with no public service obligations.

Assessing scrutiny of political claims across platforms

The content analysis across fact-checks and broadcasts drew on five main variables assessing each claim in the news items made by a source broadly concerning a policy or political issue. First, it recorded the source making the claim. Second, it classified the topic of the claim (it transpired that all the corresponding claims between online fact-checking and television news items were political claims, hence we refer to ‘political’ claims in the comparative analysis). Third, if the claim was subject to some degree of scrutiny, it asked whether the claim was scrutinised by a journalist or an external source. Fourth, it classified whether any claim was scrutinised via a direct or indirect quote or using numeric data or visuals. Fifth, it assessed the degree of scrutiny of the claim by either a journalist or a source. To code this crucial variable, we developed a detailed analysis of interactions with claims to assess the scrutiny of claims. We define ‘interactions’ as instances where either the internal journalist/reporter or an external source explicitly or partially/implicitly challenges a claim or explicitly or partially/implicitly validates the claim. Null interactions were also coded: these were instances where a claim was featured in the news item but neither challenged nor validated.
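The interaction categories defined above can be expressed as a small data structure, sketched here under stated assumptions. The class and field names (`Interaction`, `Actor`, `CodedInteraction`, `interaction_shares`) are hypothetical and do not come from the study's codebook, and the four records are invented to show how the share of null interactions could be computed.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Iterable, Optional


class Interaction(Enum):
    """The five interaction outcomes described in the text."""
    EXPLICIT_CHALLENGE = "explicit challenge"
    IMPLICIT_CHALLENGE = "partial/implicit challenge"
    EXPLICIT_VALIDATION = "explicit validation"
    IMPLICIT_VALIDATION = "partial/implicit validation"
    NULL = "null"  # claim featured but neither challenged nor validated


class Actor(Enum):
    """Who scrutinised the claim."""
    JOURNALIST = "journalist"
    EXTERNAL_SOURCE = "external source"


@dataclass(frozen=True)
class CodedInteraction:
    """Hypothetical coding record for one interaction with a claim."""
    claim_id: str
    interaction: Interaction
    actor: Optional[Actor] = None  # no actor for a null interaction


def interaction_shares(records: Iterable[CodedInteraction]) -> Dict[Interaction, float]:
    """Percentage of records falling into each interaction category."""
    records = list(records)
    if not records:
        return {}
    counts = Counter(r.interaction for r in records)
    return {kind: 100 * counts[kind] / len(records) for kind in Interaction}


# Invented records, for illustration only.
coded = [
    CodedInteraction("claim-1", Interaction.EXPLICIT_CHALLENGE, Actor.JOURNALIST),
    CodedInteraction("claim-1", Interaction.IMPLICIT_CHALLENGE, Actor.EXTERNAL_SOURCE),
    CodedInteraction("claim-2", Interaction.EXPLICIT_VALIDATION, Actor.EXTERNAL_SOURCE),
    CodedInteraction("claim-3", Interaction.NULL),
]
shares = interaction_shares(coded)
print(shares[Interaction.NULL])
```

Keeping the null category in the same enumeration as challenges and validations means unscrutinised claims count towards the denominator, which is what allows the proportion of null interactions to be compared across platforms.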

The number of claims and interactions with claims in UK fact-checking sites and television news bulletins.

The bold values represent the sum of the corresponding unbolded values that follow.

After re-coding approximately 10% of the entire sample, the variables achieved a high level of inter-coder reliability according to Cohen’s Kappa (see Appendix A and B).
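For readers unfamiliar with the statistic, Cohen’s Kappa compares the agreement observed between two coders with the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e). The sketch below is a generic textbook computation, not the study's own calculation (which is reported in the appendices). The function name and the two short label lists are invented for illustration.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(coder_a: Sequence[Hashable], coder_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two coders labelling the same units."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of units.")
    n = len(coder_a)
    # Observed agreement: share of units given the same label by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of each coder's marginal label proportions.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented labels, for illustration only.
first_coder = ["challenge", "challenge", "validation", "validation"]
second_coder = ["challenge", "challenge", "validation", "challenge"]
print(cohens_kappa(first_coder, second_coder))
```

Because kappa discounts agreement that would arise by chance, it is a stricter measure than simple percentage agreement, particularly when one category dominates the coding.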

Findings

Online fact-checking: Comparative insights

We found that coverage across the three platforms—BBC’s Reality Check, Channel 4’s FactCheck and Full Fact—was still significantly informed by COVID-19, with stories relating to the pandemic making up over half of the fact-checking items in the sample (58%, N = 206). However, major differences emerged across the three platforms concerning the selection of claims for fact-checking. While Full Fact predominantly fact-checked instances of disinformation circulating on social media, mostly about the pandemic (83%, N = 86), Reality Check and FactCheck focused on claims by political actors relating to a wide range of domestic policy issues including public health, Brexit, and the environment. Although disinformation claims lend themselves to a clear-cut verdict, the scrutiny of political claims did not always include sufficient content that opened up the opportunity for either journalists or sources to validate or challenge.

Furthermore, we found that the story or issue being covered determined the decisiveness of a fact-checking verdict. While 85.2% of Full Fact items had a clearly stated verdict which was distinctly signposted at the top of the article, BBC’s Reality Check much less frequently included a clear verdict (9.7%) on the claim at the centre of the story. This reinforces previous research on British fact-checking about the high number of unclear verdicts (Birks, 2019). While Full Fact largely published standard fact-checks with clearly stated verdicts (93.9%, 199), Reality Check relied on a wider range of formats beyond standard fact-checking (21.2%, 25) including ‘analysis’ pieces (22.9%, 27), explainers (36.4%, 43) and brief posts (19.5%, 23). This points to a more diverse interpretation of ‘fact-checking’ as observed in other European contexts (Graves and Cherubini, 2016) which includes different formats and genres beyond examining claims and delivering clear-cut verdicts.
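The format percentages reported for Reality Check can be reproduced from the counts given in the text, as the short check below shows. The total of 118 items is not stated in the text. It is derived here by summing the four reported counts, on the assumption that they cover all Reality Check items in the sample.

```python
# Counts of Reality Check items by format, as reported in the text.
reality_check_formats = {
    "standard fact-check": 25,
    "analysis": 27,
    "explainer": 43,
    "brief": 23,
}

# Assumption: the four formats account for every Reality Check item.
total_items = sum(reality_check_formats.values())

# Share of each format, rounded to one decimal place as in the text.
format_shares = {
    name: round(100 * count / total_items, 1)
    for name, count in reality_check_formats.items()
}

for name, share in format_shares.items():
    print(f"{name}: {share}%")
```

The rounded shares agree with the percentages quoted above (21.2%, 22.9%, 36.4% and 19.5%), which supports reading them as proportions of all Reality Check items rather than of the whole sample.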

We further examined the three fact-checking sites to understand whether they dealt with political claims differently. Specifically, we analysed the 689 claims, focusing on the type and degree of interaction with each claim, resulting in a total of 1850 interactions (see Table 1). As indicated previously, interactions refer to instances where either a journalist or a source drawn upon in a news item explicitly or partially/implicitly challenged or validated a politician’s claim. We found that 83% of Full Fact interactions with claims involved explicit challenges, a substantial contrast to Channel 4’s FactCheck (56%) and BBC’s Reality Check (40%). Across all three websites, claims were most frequently scrutinised by journalists themselves (who made up between 32% and 41.5% of the sources used to fact-check). For example, in a Full Fact piece fact-checking Health Secretary Matt Hancock’s claim about daily vaccination figures in Bolton, a verdict was conveyed through a decisive journalistic statement explicitly challenging the claim by referencing relevant data: “This is wrong. Official figures show that the highest number of people vaccinated in Bolton in a single day is 5465” (Full Fact, 28/05/2021). Reality Check had the highest number of implicit/partial challenges (33%), indicating a reluctance to select claims, sources and evidence that would deliver decisive and assertive verdicts. For example, in a BBC Reality Check piece on Prime Minister Boris Johnson’s claims about police numbers, the government’s claim of having “hired 9000 of the promised 20,000 additional officers by 2023” is scrutinised with added context implicitly challenging the claim by suggesting that “those new officers should be seen in the context of the reduction in officer numbers since the Conservatives came to power in 2010”. Yet, while contextual figures were provided, the overall analysis lacked an explicit challenge or verdict.

Political sources were used to fact-check claims more frequently on BBC’s Reality Check (12.5%) and Channel 4’s FactCheck (19.2%) compared to Full Fact (3.7%), the percentages being the proportion of interactions involving political sources out of all interactions for each platform. As an example, in a BBC Reality Check piece, Prime Minister Johnson’s assertion that the government acted “as fast as we could” to introduce quarantine hotels as “a very tough regime” was juxtaposed with a statement from the Labour Party about a lack of a “comprehensive” border system, which diverged slightly from the focus of Johnson’s claim. Overall, the analysis suggests that broadcasters fact-check by balancing party political claims, which follows long-established journalistic routines about source selection, such as prioritising official and elite sources (Yousuf, 2023). The content analysis of online fact-checking revealed significant variations concerning agendas, style and interpretations of UK fact-checking. This demonstrates that different editorial choices were made depending on whether the fact-checking service was an independent site (Full Fact) or a public service media outlet (BBC and Channel 4).

Fact-checking sites and broadcast media: a tale of two agendas?

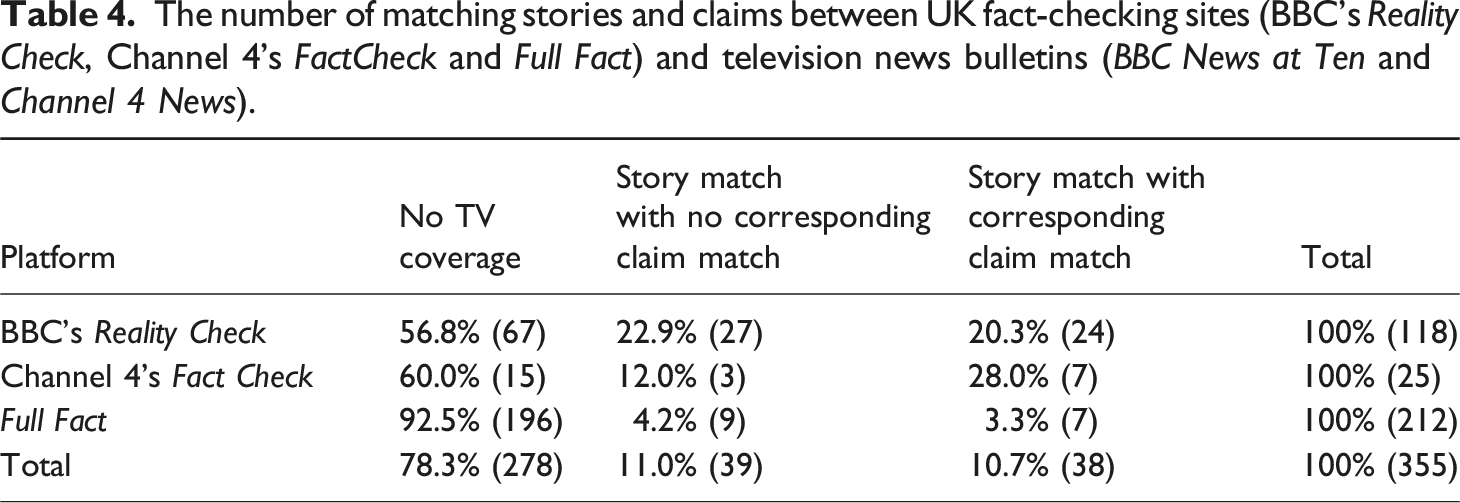

The number of matching stories and claims between UK fact-checking sites (BBC’s Reality Check, Channel 4’s FactCheck and Full Fact) and television news bulletins (BBC News at Ten and Channel 4 News).

As discussed earlier, Full Fact’s fact-checked claims tended not to be broadcast on TV given the different agenda favoured by the platform, which heavily focused on disinformation circulating on social media. However, our sample included seven Full Fact items which contained at least one claim scrutinised by at least one broadcaster. Table 4 shows the extent to which the stories and claims reported in the fact-checking sites were covered on BBC News at Ten and Channel 4 News. The aim was to identify whether stories and claims reported on a broadcaster’s fact-checking site were also reported across its flagship television news bulletins (BBC News at Ten and Channel 4 News). In doing so, it reveals the extent to which broadcasters use their dedicated fact-checking services across other media platforms.

We looked closely at those fact-checking items that matched stories covered by television news bulletins but without any of the specific fact-checked claims being reported on television news during the sample period (N = 39). BBC’s Reality Check had the highest percentage of stories (22.9%) which were covered on broadcast (BBC News at Ten), but the specific political claims being reported did not match. These findings suggest that, despite some degree of cross-platform agenda overlap, broadcasters do not routinely draw on the analytical output of their dedicated fact-checking services – even within their own organisation. Broadcast news tended to focus on the more sensational aspects of political process stories rather than on policy issues. For example, in coverage of Prime Minister’s Questions—a weekly political event at the House of Commons that attracts a lot of media attention—there were differences in how broadcasters and fact-checkers covered it. Television coverage focused on the political strife of the parliamentary exchanges at the expense of the policies being debated. A BBC Reality Check item on 28 April 2021 selected for fact-checking five policy claims by Boris Johnson on taxation, Brexit, vaccine rollout and housing. However, the BBC News at Ten’s bulletin from the same day did not report any of those policy claims and solely focused on the political controversy about whether the Prime Minister had used public money to refurbish his flat, fuelled by allegations from the leader of the opposition that he was lying. In short, the editorial values of television news appear at odds with the sensibilities of fact-checkers, centred on analysing and questioning political claims in forensic detail.

Varying degrees of scrutiny of political claims across platforms

As Table 4 showed, only 38 fact-checking items (10.7%) featured at least one claim that was reported in at least one television news item (either BBC News at Ten or Channel 4 News).

To expand the base for comparison between fact-checking and broadcast news output, we also examined five of the UK’s flagship television news bulletins. In addition to BBC News at Ten and Channel 4 News, this included analysing ITV News at Ten, Channel 5 News at 5 and Sky News at Ten. A total of 280 TV items within the same period across the five broadcasters were identified as potentially featuring matching claims with the 38 fact-checking items.

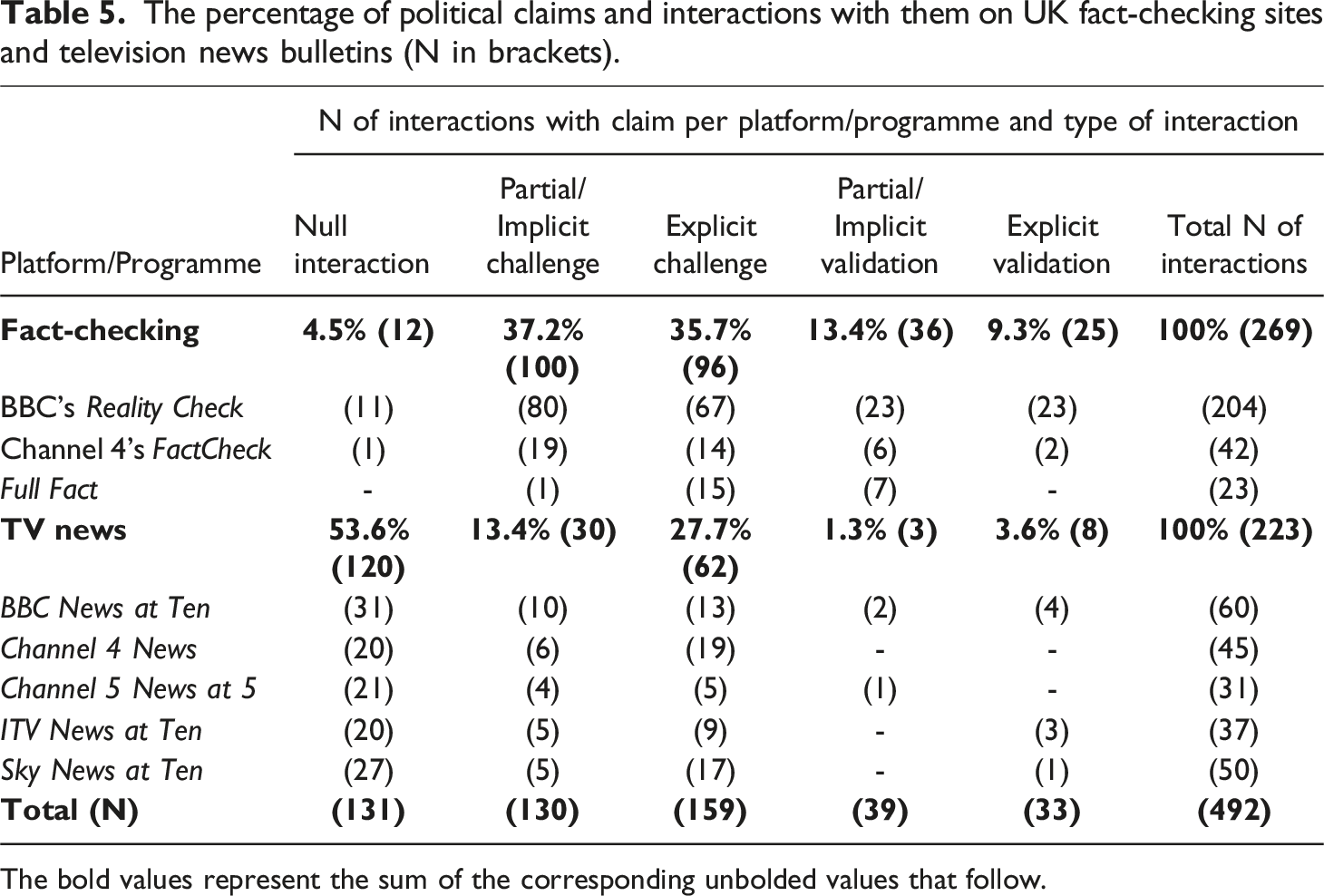

The percentage of political claims and interactions with them on UK fact-checking sites and television news bulletins (N in brackets).

The bold values represent the sum of the corresponding unbolded values that follow.

Overall, the scrutiny of claims was far more robust on fact-checking sites than on TV. For example, despite significant coverage of social care by BBC News at Ten, particularly in a comprehensive report on 11 May 2021, the scrutiny of the government’s record was limited. The dedicated reporter package only once implicitly challenged the government’s handling of social care, with the reporter inferring public “deep frustration that yet again [people] are being told they must wait for detailed plans for reform”. In contrast, BBC’s Reality Check scrutinised social care claims by both the Prime Minister and the leader of the opposition Keir Starmer more assertively, explicitly stating “successive Conservative governments failed to reform social care” with hard data used as supporting evidence.

The significant number of unscrutinised claims (or ‘null interactions’) on television news reflects the focus on providing competing sides to a political debate (see Table 5). In contrast to fact-checking sites, this often came at the expense of detailed and contextualised analysis of individual political claims. When policy debates were reported on broadcast news, such as in coverage of parliamentary exchanges, these tended to focus on the squabbling between the Prime Minister and the opposition politicians, often in edited formats of juxtaposed clips of debate highlights. For example, a story about the Queen’s Speech (which sets out the UK government’s agenda for the new Parliament) was reported on BBC’s Reality Check on 12 May 2021 and extensively by all broadcasters. Reality Check selected seven policy claims ranging across healthcare, social care and employment. Three claims were made by the Prime Minister, three by the leader of the opposition, and one by Conservative MP Gillian Keegan. These claims were fact-checked through a total of 22 interactions, including 16 challenges and six validations by a balanced mix of analyses by journalists or from sources used in the news item. However, all broadcasters, including the BBC News at Ten bulletin which could have drawn on the Reality Check service, largely focused on the ceremony of the Queen’s Speech itself rather than on the policy issues debated. The segment concerning the parliamentary debate opened with the leader of the opposition criticising the government’s inaction beyond promises. It included a juxtaposed clip of the Prime Minister promising a plan of employment relaunch followed by the claims of two members of other opposition parties (SNP and Plaid Cymru) advancing further attacks on the government’s plans (or lack thereof) on Brexit, devolution and social care.
In effect, this meant that while the government’s plan was balanced by the attacks of the opposition parties, no journalistic scrutiny was provided on any of the claims on the BBC News at Ten, despite being free to draw on the fact-checking journalism of their Reality Check team. While it can be argued that the bulletin was aired a day before the Reality Check was published, possibly allowing for extended fact-checking analysis, television news appears to prioritise a balance of political views over in-depth scrutiny of policy.

Without interviewing journalists making editorial judgements, we cannot establish why decisions about scrutinising political claims are different across fact-checking sites and television news reporting. Clearly, there are differences in fact-checking across platforms which arise from time constraints, affecting the depth of scrutiny and source selection, and potentially leading to an overemphasis on readily available political sources, as noted by Soo et al. (2023) in interviews with senior editors and journalists. However, TV news has the opportunity to broaden its sources beyond politicians, even within time constraints, to enhance the comprehensiveness, scrutiny and informative value of the reports. Furthermore, our analysis showed different editorial choices were made about the selection of claims even when the same story was covered by platforms within the same organisation. For example, a BBC Reality Check item published on 12 June 2021 analysed the government’s management of the pandemic by fact-checking five claims made by the Health Secretary and making 18 challenges (10 explicit and eight implicit) about the veracity of his statements. Only two of the five fact-checked claims featured on the BBC News at Ten bulletins (on care home discharges and lockdown extension), neither of which was analysed. Instead, the story reported across the different broadcasters revolved around the antagonism between different political personalities and spin doctors. While ITV News at Ten did not report on any claim that featured on the BBC’s Reality Check site, the other bulletins featured two of the five claims. Yet, the claims examined on television news received limited scrutiny and included a significant number of null interactions.

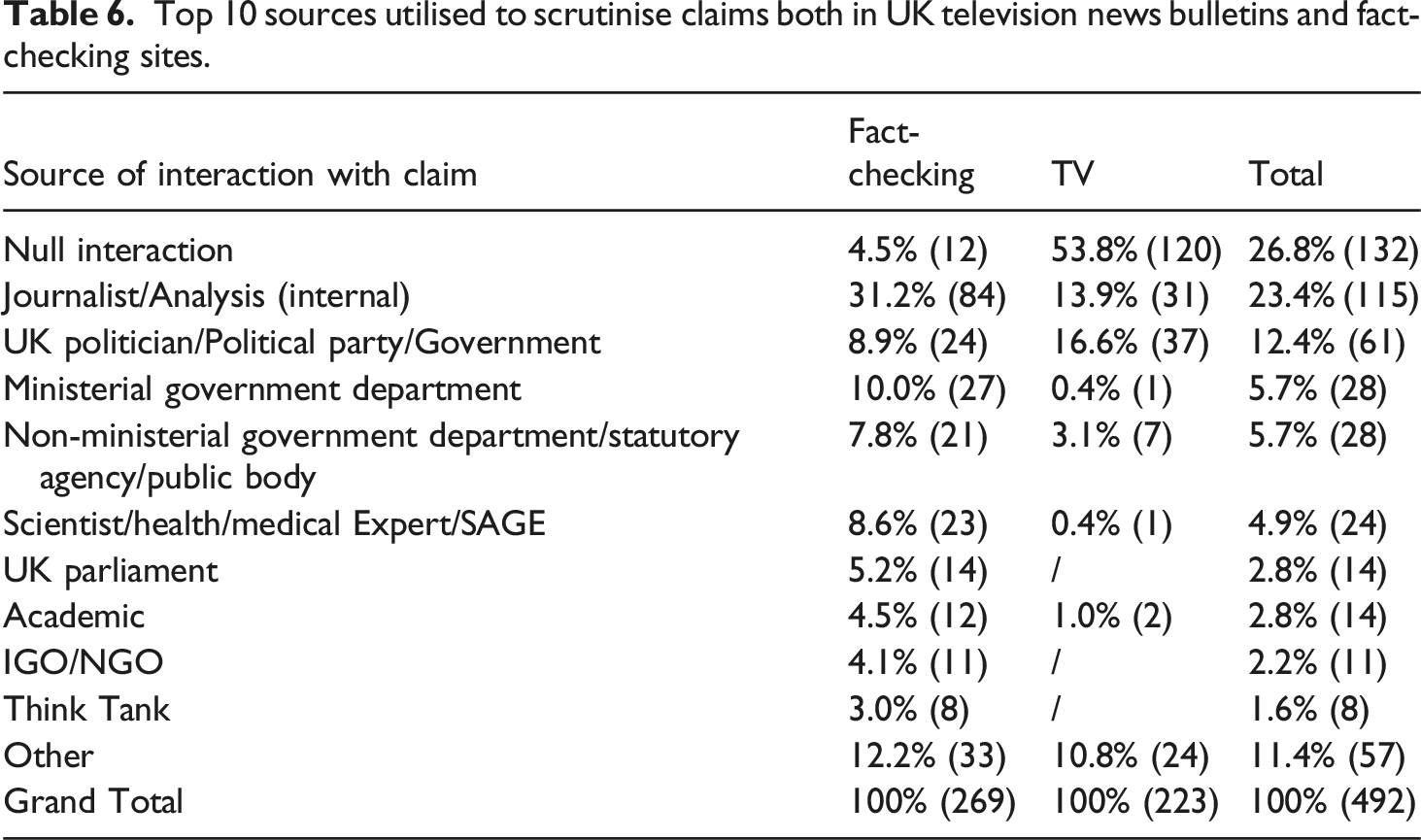

Top 10 sources utilised to scrutinise claims both in UK television news bulletins and fact-checking sites.

Overall, fact-checkers drew on a wider range of sources to scrutinise claims than television news bulletins, including non-ministerial government departments, scientific experts and academics, non-governmental organisations and think tanks. Furthermore, fact-checking sites challenged claims more in the form of hyperlinks, visual statistics, and numerical data than television news. But, above all, the most striking comparative finding was that over half of political claims featuring on television news (53.6%) did not get further scrutinised by either a journalist or a source, in contrast to just 4.5% of claims on fact-checking items.

Towards a cross-media approach to the study of fact-checking

This study developed a systematic analysis and conceptual understanding of how claims were scrutinised on television news and fact-checking sites. Above all, our findings demonstrated that there are different approaches to the selection and scrutiny of political claims across online fact-checking sites and television news bulletins. This means the editorial boundaries of fact-checking journalism are applied differently according to media platform, including within the same organisation. The reasons for the different levels of scrutiny between fact-checking and television reporting reflect a complex mix of editorial considerations, such as production constraints and time limitations. The influence of these factors is particularly striking in television news bulletins where relatively short news items construct a balance of competing party-political arguments, which then limits the time for journalists to assess the relative strengths of different claims and provide any journalistic scrutiny about the weight of evidence supporting them.

The evidence amassed in the study suggests that these production constraints and news values influence how journalistic impartiality is applied across fact-checking sites and television news bulletins. Television news is less likely to report policy claims and provide in-depth and contextualised analyses of the claims made by politicians than dedicated fact-checking sites. This highlights how journalistic impartiality can be interpreted differently, as Hughes et al. (2023) discovered when comparing BBC television news output and its fact-checking service during the 2019 UK general election and the 2020 US presidential election. Furthermore, our study showed that contrasting news values between broadcast and online fact-checking influenced the selection and analysis of claims. Whilst fact-checking sites largely selected policy claims and applied robust and informative scrutiny drawing on a wide range of sources, broadcasting favoured the more sensationalist side of stories by focusing on personality, controversy and conflict at the expense of more ‘fact-checkable’ claims referring to empirical facts (Birks, 2019).

In our view, the production constraints and news values of broadcast journalism do not mean that broadcasters cannot deal with dubious or false claims more robustly than at present. For example, even factoring in time constraints, there are plenty of information-rich sources beyond politicians that could help journalists interpret the veracity of competing political claims. We would further argue that the fact-checking resources of broadcasters, notably the BBC, could be used more effectively across its broadcast, online and social media platforms. The BBC’s Editorial Guidelines boldly state in Section 3.3.1 that its output has a commitment to “weigh, interpret and contextualise claims” (BBC, 2019). Yet, our study suggests that such a commitment is not pursued consistently across its online and broadcast platforms. Fact-checking appears more like a journalistic ‘specialism’ confined to digital provision rather than an integrated feature and routine practice of broadcasting. Practically speaking, it is harder to fact-check in live broadcasting when compared to online reporting (Mantzarlis, 2016). But digital fact-checking platforms offer opportunities to supplement broadcast news reporting, especially in resource-constrained environments, by providing avenues for thorough scrutiny of claims and counterclaims, should editors prioritise such an approach.

A growing body of scholarship has shown that, far from television viewers being turned off from the analysis of political statements (e.g., Hameleers et al., 2022; Newman and Fletcher 2017), there is a strong appetite for robust forms of journalistic scrutiny in broadcast news coverage (Cushion et al., 2021). Furthermore, our study has shown that broadcasting often lacks not only scrutiny of policy claims, particularly when compared to fact-checking analyses, but also a wide selection of policy-driven stories. The news values of television news appear to be influenced less by policy and more by a political narrative, with a focus on the day’s events and the personalities involved. This may be driven by editorial perceptions that television viewers favour entertaining over factual analysis of politics. Or it could represent a reluctance to challenge claims by senior politicians on prime-time television when it could be left to dedicated fact-checking sites even if they might not reach as wide an audience.

In summary, our study highlights the significance of a cross-platform approach to analysing fact-checking and journalistic scrutiny within public service media, a dimension often overlooked in existing research (Salaverría and Cardoso, 2023). We recommend that future studies further develop systematic comparative content analyses to examine potentially varying practices, styles, and standards of journalistic scrutiny and fact-checking across platforms and media organisations in various contexts. This approach has the potential to inform recommendations for news media organisations to uphold standards and enhance public understanding across their output.

Footnotes

Acknowledgements

The authors would like to thank Dr Anya Richards and Dr Luke Roach for their work as research assistants.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Arts and Humanities Research Council; AH/S012508/1.

Appendix A. Inter-coder reliability scores for individual variables – online fact-checking sample

| Variable No | Variable description | Level of agreement, with Cohen’s kappa (CK) in brackets |
| --- | --- | --- |
| 1 | Article type | 95% (0.92 CK) |
| 2 | Covid focus (yes/no) | 100% |
| 3 | Geographical focus | 100% |
| 5 | Topic category | 95% (0.94 CK) |
| 6 | Topic 2 category (for media-focused items) | 97.5% (0.95 CK) |
| 7 | Is there a political claim (yes/no) | 97.5% (0.96 CK) |
| 8 | Type of verdict/outcome | 95% (0.91 CK) |
| 9 | Verdict clearly stated? | 90% (0.82 CK) |
| 10 | Disinformation/fake news/conspiracy theory mentioned (yes/no) | 97.5% (0.92 CK) |
| 11 | Author of claim | 100% |
| 12 | Topic of claim | 96.6% (0.94 CK) |
| 13 | Source of interaction with claim | 96.9% (0.96 CK) |
| 14 | Extent of interaction | 98.6% (0.97 CK) |
| 15 | Type of interaction | 95.6% (0.94 CK) |
| 16 | Placement of interaction | 98.3% (0.96 CK) |

Appendix B. Inter-coder reliability scores for individual variables – television news sample

| Variable No | Variable description | Level of agreement, with Cohen’s kappa (CK) in brackets |
| --- | --- | --- |
| 1 | TV convention | 100% |
| 2 | Is there a political claim (yes/no) | 100% |
| 3 | Topic category | 100% |
| 4 | Fact-checking tool/feature | 97.7% (0.846 CK) |
| 5 | Disinformation/fake news/conspiracy theory mentioned (yes/no) | 97.7% (0.876 CK) |
| 6 | Author of claim | 100% |
| 7 | Topic of claim | 98% (0.976 CK) |
| 8 | Source of interaction with claim | 97% (0.965 CK) |
| 9 | Extent of interaction | 96% (0.941 CK) |
| 10 | Type of interaction | 98% (0.976 CK) |
| 11 | Placement of interaction | 99% (0.978 CK) |
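
For readers less familiar with the two reliability measures reported in Appendices A and B, the following is a minimal sketch of how percentage agreement and Cohen’s kappa are conventionally calculated for two coders. It is illustrative only: the appendices do not state which software or procedure produced the reported scores, and the function names and the ten example codes below are hypothetical, not drawn from the study’s data.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of items to which both coders assigned the same category."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of items.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)

    # Chance agreement: for each category, the product of the two coders'
    # marginal proportions, summed over all categories either coder used.
    counts_a = Counter(coder_a)
    counts_b = Counter(coder_b)
    categories = counts_a.keys() | counts_b.keys()
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)

    if p_expected == 1.0:
        # Both coders used a single identical category throughout; kappa is
        # mathematically undefined here. Returning 1.0 is an assumption of
        # this sketch, not a standard convention.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes for a yes/no variable such as "Is there a political claim".
coder_a = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
coder_b = ["yes", "yes", "no", "no", "yes", "no", "yes", "no", "no", "no"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"Agreement: {agreement:.1%}, Cohen's kappa: {kappa:.2f}")
```

Because kappa discounts the agreement two coders would reach by chance, it is lower than the raw percentage whenever chance agreement is above zero, which is why a variable can show very high percentage agreement alongside a noticeably lower kappa.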