Abstract

Scholarship has increasingly sought solutions for reversing broad declines in levels of trust in news in many countries. Some have advocated for news organizations to adopt strategies around transparency or audience engagement, but there is limited evidence about whether such strategies are effective, especially in the context of news consumption on digital platforms where audiences may be particularly likely to encounter news from sources previously unknown to them. In this paper, we use a bottom-up approach to understand how people evaluate the trustworthiness of online news. We inductively analyze interviews and focus groups with 232 people in four countries (Brazil, India, the United Kingdom, and the United States) to understand how they judge the trustworthiness of news when unfamiliar with the source. Drawing on prior credibility research, we identify three general categories of cues that are central to heuristic evaluations of news trustworthiness online when brands are unfamiliar: content, social, and platform cues. These cues varied minimally across countries, although larger differences were observed by platform. We discuss implications of these findings for scholarship and trust-building efforts.

Researchers and journalists have increasingly sought solutions to declining levels of trust in news in many countries (Newman et al., 2019); however, there is limited evidence about what strategies are effective, for whom, and under what circumstances. While journalism scholars often highlight the importance of editorial practices, transparency, and audience engagement (Wenzel, 2020; Zahay et al., 2021), such approaches may be disconnected from how people increasingly consume news online, which may involve fleeting encounters on platforms with often unfamiliar sources.

In this study, we use a bottom-up approach to better understand how audiences make judgments about the trustworthiness of news in everyday life. We draw on evidence from an iterative, inductive qualitative study involving 232 participants across three rounds of data collection in four countries (Brazil, India, the United Kingdom, and the United States). Insights from focus groups (first round) and in-depth interviews (second round) informed a series of “think aloud” interviews (third round) grounded in people’s concrete experiences using platforms. These latter interviews allowed us to go beyond retrospective elaboration and observe in real time how people formulate judgments about sources on three of the most used platforms for news: Facebook, Google, and WhatsApp. Our findings underscore the central role played by cues in how people evaluate what to trust, and we identify three types that are especially relevant when people are unfamiliar with the source: content, social, and platform cues. These cues were relatively consistent across countries but varied across platforms, reflecting how distinct features lead to differing cues, which in turn trigger varying heuristics.

Our study extends previous research on information credibility and trust in news by: (a) identifying salient cues and heuristics in the context of a platform-dominated media environment where many encounter news from unfamiliar sources; (b) doing so across different countries; and (c) doing so in an audience-centric manner. We show how many cues are disconnected from newsroom and editorial practices, such as transparency and engagement, which are often the focus of scholarship on trust in news. They include characteristics that may not seem substantively important to journalism, but which appear nonetheless relevant to audiences as they decide what to believe. This holds implications for news media seeking to build and sustain trust but also for others seeking to exploit misplaced trust, and it underlines the importance of qualitative audience research in scholarly work on trust in news.

Literature review

Why changing news habits matter for understanding trust

Trust in news has declined in many countries (Newman et al., 2022; Hanitzsch et al., 2017), spurring research into how to restore it. Scholars have focused especially on journalistic practices such as transparency (Koliska, 2022; Masullo et al., 2022; Peacock et al., 2020) and deepening engagement with audiences (Wenzel, 2020; Zahay et al., 2021). Yet, empirical evidence around what works is limited. Some previous studies suggest such approaches may have a minimal impact or none at all (e.g., Koliska, 2022; Masullo et al., 2022; Peacock et al., 2020).

One often highlighted challenge is that many trust-building strategies are anchored in normative ideas about journalism, which may be disconnected from much of the public’s experiences with news. Many have limited knowledge about or interest in individual news brands—or the procedures and practices underlying journalism—making them less responsive to such initiatives (Gibson et al., 2022; Toff et al., 2021). More importantly, these approaches risk overlooking the digital and often incidental context in which growing segments of the public consume news on platforms such as social media, messaging applications, and search engines (Newman et al., 2022). This means people encounter news they weren’t actively seeking but also that they are exposed to a relatively larger variety of sources (Fletcher and Nielsen, 2017; Strauß et al., 2020). Specific affordances associated with these platforms may trigger distinct strategies and behavioral responses (Sundar, 2008).

The abundance of information online means careful vetting and analysis of all information is often untenable (Metzger and Flanagin, 2013). While prior knowledge about journalism and news sources can conceivably facilitate the evaluation of news, this may not describe most people’s experiences on platforms. One report shows that between one-third and one-half of those surveyed across eight European countries said they were unfamiliar with or did not pay attention to news sources they encountered on social media (Pew Research Center, 2018). Furthermore, prior evidence indicates news use online is unequally distributed (Kalogeropoulos and Nielsen, 2017), with many expressing low interest in news and limited familiarity with even basic journalistic concepts. As such, those who encounter news online, but especially those with less interest and knowledge, will likely require other strategies.

Heuristic approaches to decision-making and credibility

Researchers from cognitive science and psychology have long shown people process information in ways that minimize cognitive demands. Dual-processing theories such as the Heuristic-Systematic Model posit that information is processed through pathways that only sometimes involve conscious evaluation (Chaiken et al., 1989; Chen et al., 1999), referred to as systematic processing. Heuristic processing, on the other hand, relies on “heuristics” or judgmental rules that require less mental effort (Chaiken, 1980; Chen et al., 1999). Such strategies allow people to make decisions more quickly, frugally, and/or accurately than using more cognitively demanding methods. As “mental shortcuts” (Gigerenzer and Gaissmaier, 2011), heuristics depend on cues that are readily available, which may be combined or nested (Todd, 1999). In practice, there is no dichotomy between heuristic and non-heuristic, as people can rely on more or less information when judging information (Gigerenzer and Gaissmaier, 2011: 455). Thus, whether a cue is employed heuristically versus systematically depends on how a person uses the information available.

Heuristics and trust

Communication researchers have previously adopted this theoretical framework to understand the importance of heuristics in credibility judgments (Metzger and Flanagin, 2013; Metzger et al., 2010)—for example, how website attributes and design may shape evaluations of information online (Flanagin and Metzger, 2007; Fogg et al., 2003). Such research has focused on how audiences cope with sociotechnical changes in the media environment, such as unprecedented information abundance (Metzger et al., 2010; Sundar, 2008). However, journalism scholarship has generally paid less attention to heuristics in how audiences judge the trustworthiness of news (important exceptions include Masullo et al., 2021; Peacock et al., 2020). In part, this may be because journalism research has increasingly shifted its attention away from credibility and toward trust. Credibility is typically understood as a narrower concept involving the properties attributed to information or sources, making them more or less believable, whereas conceptualizations of trust tend to be broader, emphasizing its relational character, positive orientation, and uncertainty (Engelke et al., 2019; Tsfati, 2003).

Here we are less concerned with the definitional distinctions between trust and credibility¹ and instead seek to bring research on heuristics into greater focus for journalism studies scholarship on trust in news. More specifically, we focus on aspects of the contemporary media environment shaped by digital platforms; most heuristics research precedes their rise in prominence. We also examine audiences across four countries, whereas most previous research derives from single-country studies, typically the US. Given that heuristics are learned capacities that originate in specific experiences and socio-cultural contexts, we cannot take for granted that they will be the same from one place to another (Schirrmeister et al., 2020). By comparing across countries, we can consider these divergent contexts.

The present study’s focus on trust cues arose inductively through an audience-centric approach rooted in attending to audiences’ everyday media practices and sensemaking. We see this as a contribution to calls for an “audience turn” in journalism studies, going beyond a focus on newsroom-centric practices and producer-oriented concerns of much past scholarship (Swart et al., 2022). We did not predetermine to focus on credibility heuristics or assessment strategies; rather, these objects of study were identified over the course of investigating a more general research question: (

Methods

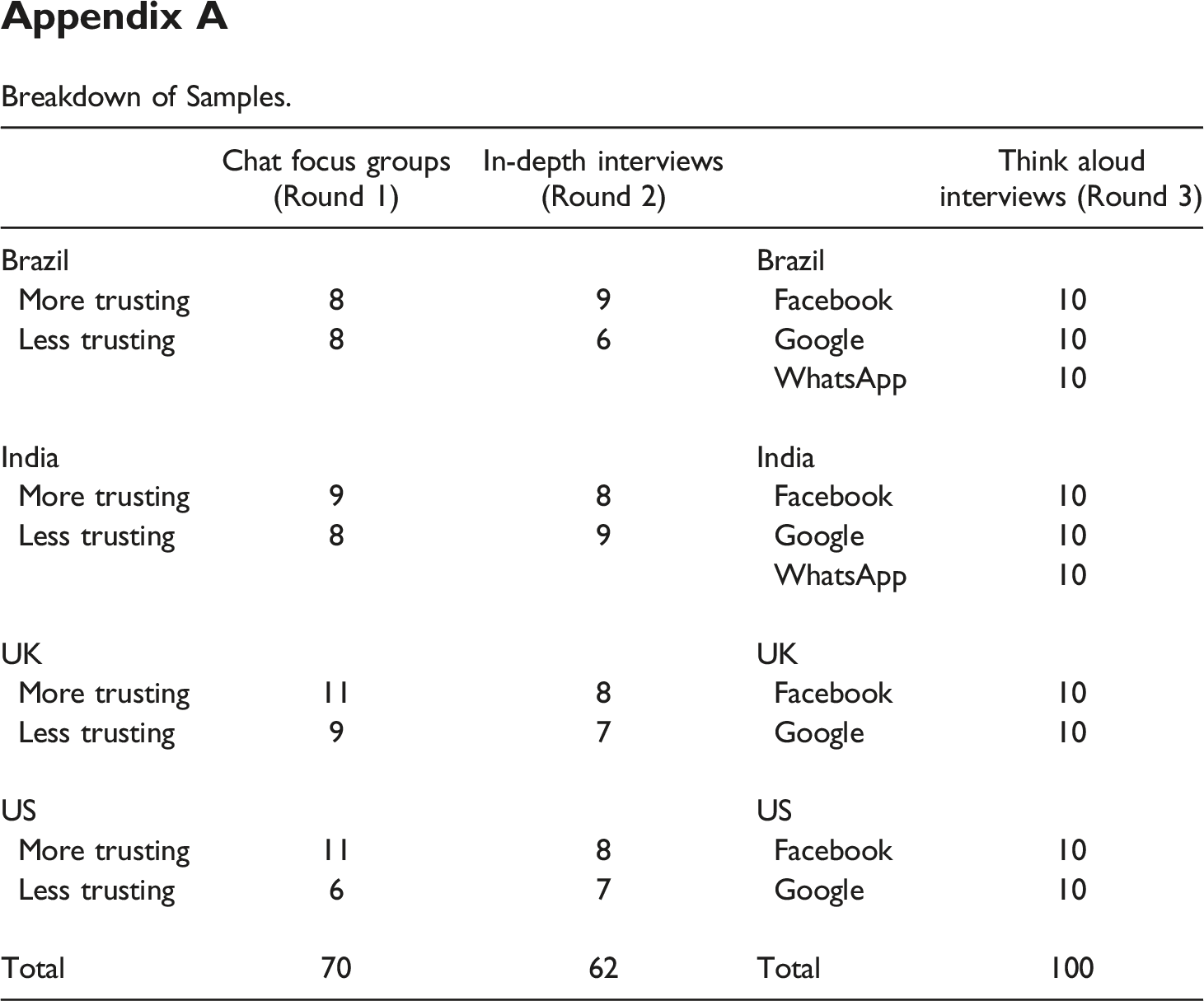

This study is part of a larger research project, allowing us to refine our research design over time, informed by intermediate findings. We draw on three rounds of qualitative data collected over more than a year (see samples in Appendix A) through chat-based focus groups (R1), in-depth interviews (R2), and “think aloud” interviews (R3) with 232 participants in four countries that differ in their sociocultural, political, and media systems²: Brazil, India, the UK, and the US. Differences in characteristics along these dimensions, ranging from polarization to media market structures, may play a role in shaping levels of trust in news (see Fawzi et al., 2021 for a review), which vary across the four countries. According to data from the Reuters Institute Digital News Report (DNR) (Newman et al., 2022), Brazil has above-average trust in news, while the UK and US have below-average levels, with trust in India in between. The countries also vary in their uptake of platforms generally and for news. The DNR showed that while Facebook was used widely in Brazil (67%), India (62%), the UK (62%), and the US (58%), rates were higher as a source for news in Brazil (40%) and India (43%) compared to the UK (19%) and US (28%). Likewise, fewer in the UK (24%) and US (36%) said they relied on search engines like Google to get news compared to Brazil (49%) and India (65%), two countries where the messaging app WhatsApp has also become an important information intermediary.³

Data collection and analysis

We conducted our first two rounds of data collection in early 2021 and included samples split between those more and less trusting of news (see appendices for details).⁴ We carried out eight chat-based focus groups (R1), using a proprietary online platform similar to common messaging services, each with six to 11 participants (N = 70). We then conducted in-depth interviews (R2) using a videoconferencing service with an additional 15–17 people per country (N = 62). During these two rounds of data collection, we asked participants about their media habits, attitudes towards journalism, experiences consuming news, and how they distinguished between trustworthy and untrustworthy news. This helped us understand how people conceived of trust and the context around their everyday engagement with news.

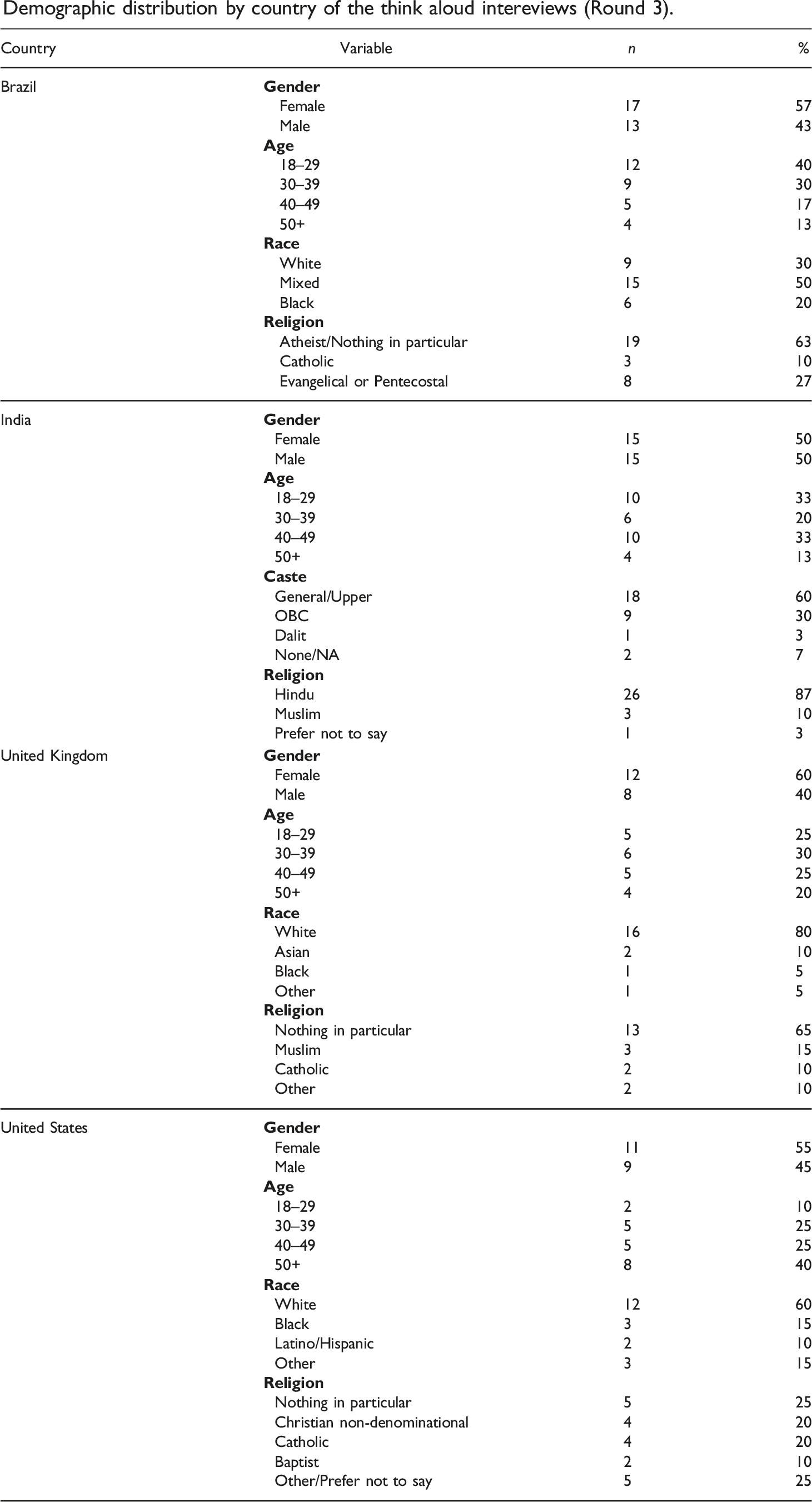

Because participants rarely highlighted journalistic practices or editorial processes and instead described paying attention to various cues, especially when judging sources they had limited knowledge about, for our third round of data collection, we focused on these cues. Between December 2021 and January 2022, we conducted 100 interviews (R3) with individuals generally untrusting of news—those who trusted a below-average number of news outlets in their countries—who were also less interested in politics, which we found to be a strong indicator for disengagement from news (Toff et al., 2021). We recruited participants who regularly used three platforms: Facebook (social media) and Google (search engine) in all four countries, and WhatsApp (messaging app) in Brazil and India. We conducted 10 interviews per platform per country, centering on one platform per participant. We sought variation across demographic characteristics such as gender, age, and race/caste (see Appendix C).
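
As a quick consistency check on the reported sample sizes (a worked tally added for illustration, not part of the original analysis), the third-round design yields ten platform-country cells of 10 interviews each, and the three rounds together account for all 232 participants. The symbols $N_{R1}$, $N_{R2}$, $N_{R3}$, and $N_{\text{total}}$ are labels introduced here, and the block assumes the `amsmath` package:

```latex
\begin{align*}
N_{R3} &= \underbrace{4 \times 10}_{\text{Facebook}}
        + \underbrace{4 \times 10}_{\text{Google}}
        + \underbrace{2 \times 10}_{\text{WhatsApp}} = 100, \\
N_{\text{total}} &= N_{R1} + N_{R2} + N_{R3} = 70 + 62 + 100 = 232.
\end{align*}
```

The WhatsApp term has only two countries because those interviews were conducted in Brazil and India alone.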

We held this last set of interviews (R3) over videoconferencing software using a “think aloud” interview technique (Eveland Jr. and Dunwoody, 2000; Ericsson and Simon, 1980), which sought to simultaneously provide insight into the kinds of things participants naturally encountered while using digital platforms and how they thought about the content they came across. After asking about general media use, we instructed participants to load one of the relevant platforms on their screens as they would in everyday life and describe what they saw as they navigated it. This enabled us to identify in real time what kinds of content participants encountered and what they paid attention to when judging trustworthiness, while also allowing us to move beyond abstract responses to real-life experiences, where we could probe into specific examples (more details in Appendix B).

Focus groups and interviews were conducted in English, except those in Brazil (Portuguese) and a small portion of those in India (Hindi). Focus groups lasted around 90 minutes and interviews between 40 and 60 minutes. Sessions were recorded, transcribed, and translated to English with participants assigned pseudonyms. In this article, we draw from all three rounds of data collection (R1, R2, and R3), but given our focus on cues, we disproportionately reference excerpts from R3, which focused most squarely on these matters.

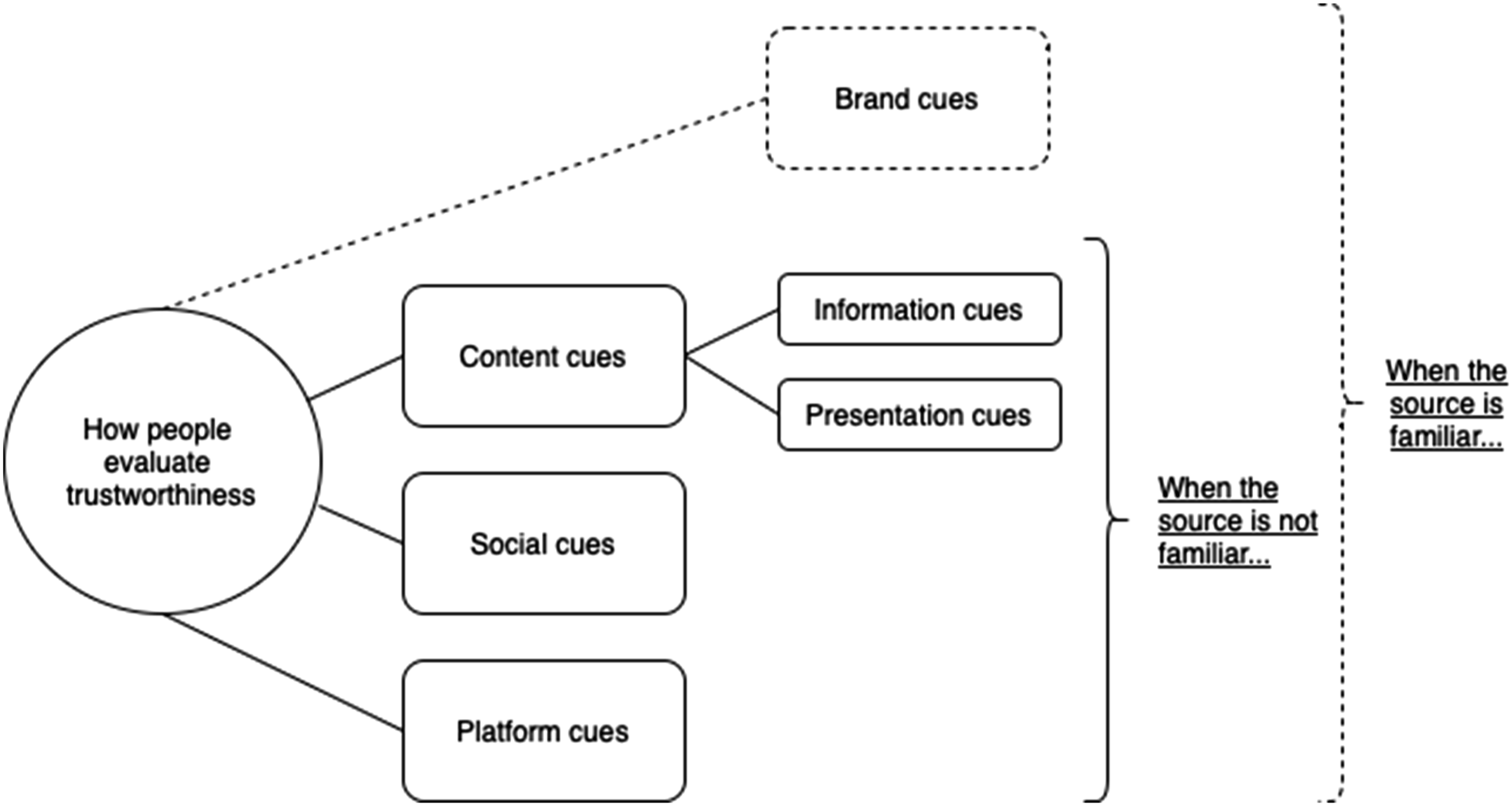

All transcripts were analyzed inductively by the research team for common themes (Braun and Clarke, 2006). First individually and then collectively, the authors identified an initial set of themes, corresponding to a variety of beliefs, factors, and strategies shaping people’s trust judgments. Following the third round of data collection, and considering previous literature on credibility assessment and heuristics, we refined our coding to focus on prominent “cues” underpinning in-the-moment judgments participants mentioned. We then organized them based on where they originated, resulting in four categories, summarized in Figure 1: brand, content, social, and platform cues.⁵

While participants often alluded to brand cues in instances when they noticed and were familiar with a source (i.e., brand), given our focus on trust judgments when people are unfamiliar with sources, we only elaborate on the latter three categories (as the dotted lines in Figure 1 signify).

Figure 1. Cues for trust heuristics online.

Findings

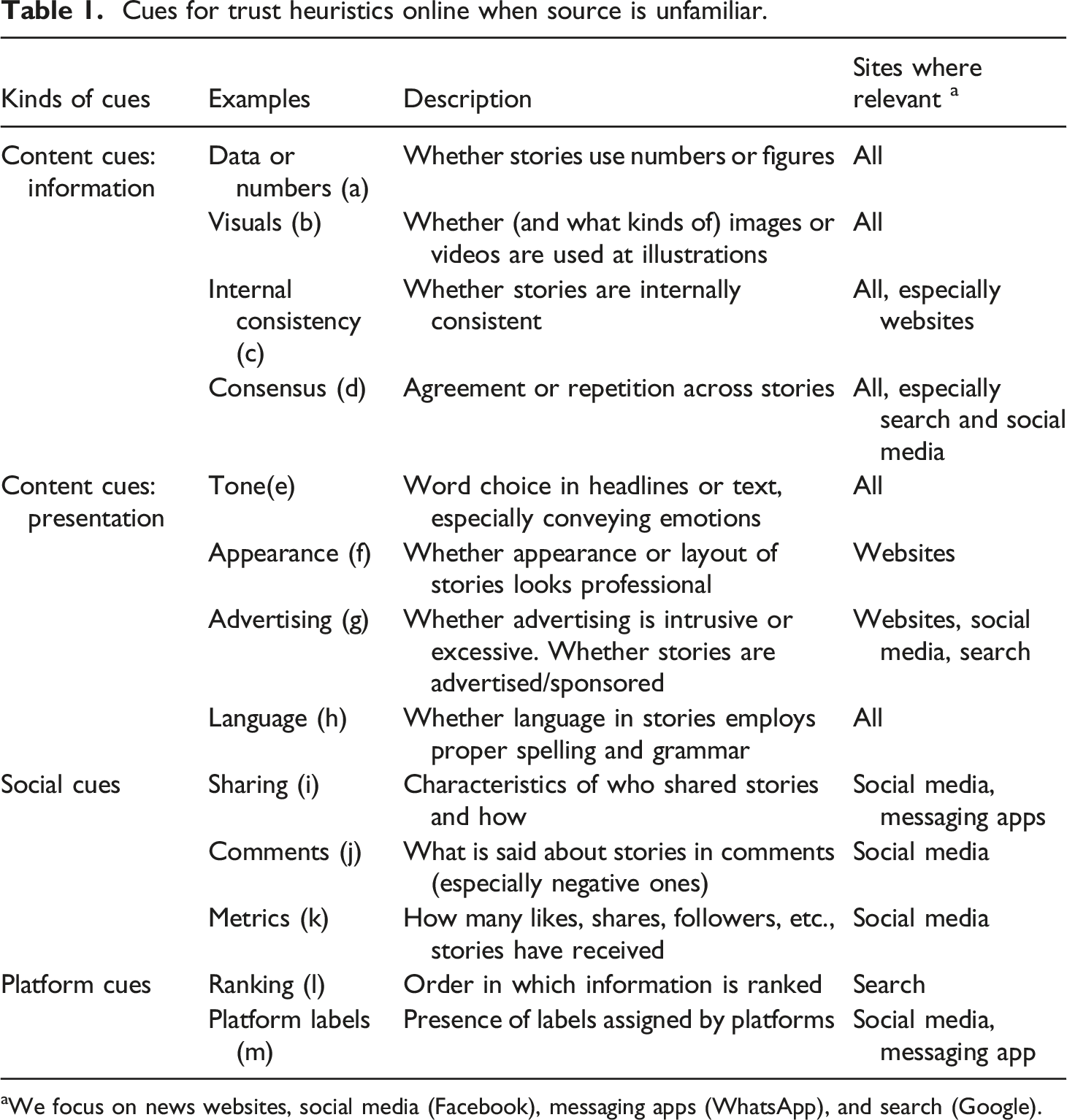

This section covers the three main types of cues we identified when participants were unfamiliar with or unaware of the source and therefore could not draw on brand cues. Content cues refer to information contained in news snippets on platforms or news sites, or to characteristics of how that information was presented; social cues refer to those prompted by digital traces of other people on platforms; and platform cues capture those pertaining to how platforms organized or labeled information or sources. We found remarkable consistency in the cues cited across all four countries, with variation mainly in degree. Differences were more apparent with respect to whether news was encountered via a news site, social media, messaging app, or search engine, reflecting the cues most readily available on each.

As we detail examples, we underscore that this is not a comprehensive list of cues. Rather, we aim to illustrate the varying ways people described using mental shortcuts when judging news online. While we explain each separately, in practice, people often drew on multiple cues depending on previous knowledge, context, and availability. We also link our findings to past research on credibility heuristics or assessments. Indeed, the boundary between credibility assessments and heuristics is sometimes blurry given that some cues can also be used for more cognitively effortful evaluations.

Content cues

We first explore the most salient content cues, starting with those focused on information in the news, followed by those centered on the presentation of news.

Cues centered on information

Interviewees often referred to specific aspects of the information conveyed in news content as indicators of whether the source itself could be trusted, highlighting characteristics such as the presence of (a) data or numbers, (b) images or video, the degree of (c) internal consistency within a story, or (d) consensus across outlets.

One of the most consistent examples of cues centered on information involved references to the presence of (a) data or numbers, in line with prior credibility research (see Slater and Rouner, 1997). Enzo (Brazil, R1), for example, noted he was more inclined to trust “when the news comes along with some survey/study.” Some discussed data as a basis on which reported claims could be substantiated and subjected to scrutiny. However, others used the presence of numbers or figures as a shorthand for quality, rather than engaging with the information analytically. For instance, Ruben (UK, R3) described his favorable impression of a search result on Google, noting, “That’s got lots of figures, so that seems reliable.” For participants like Ruben, simply seeing numbers on the screen invoked a greater sense of trust.

Second, many discussed the importance of (b) images and video as cues for judging news quality, especially in Brazil, the UK, and US. For some, visuals not only made content more eye-catching, but also instilled a greater sense of trust. Michelle (US, R3) explained, “things we can see because it’s live, I would trust that more than just read[ing] an article.” Similarly, Lucas (UK, R3) described his tendency to trust videos livestreamed on Facebook over news outlets because he viewed them as untampered evidence: “You know that famous saying, ‘A picture speaks a thousand words.’…it doesn’t matter what other people are telling you, you can take that at face value; this is with your own eyes.” Indeed, the feeling that they were witnessing news “with their own eyes” reduced the perceived uncertainty; as Sangeeta (India, R3) put it, “what we can see, we believe in that.”

Different kinds of images could lead to more or less trust. Martin (UK, R3) described the importance of a “good quality photo,” expressing skepticism about an outlet that used “a stock image” compared to others using “pictures of nurses and doctors and PPE in the hospital.” Similarly, Guilherme (Brazil, R3) expressed suspicion of one source that used an illustration rather than a photograph: “All of the others have an image of this fossil to show us how big this animal was. This is the only one that has an illustration…So, I would not click there.” News items with images less effective at conveying proof were deemed less trustworthy.

Third, participants described how perceived (c) internal consistency between various components of a story mattered for trust. Especially when clicking through to news sites, instances where “the entire report/article may have different details then the headline itself,” in Bharat’s (India, R1) words, aroused suspicion—what previous research has called the “expectancy violation” heuristic (Metzger et al., 2010). Luiza (Brazil, R3) noted, “I don’t like when they change the topics. You know, they draw attention towards one topic and when you start reading, it’s not that.” Antoine (UK, R2) also felt distrust when “you read a headline, and then you read the rest of the article, you’re like, ‘Hang on a minute, that’s not even what you’re saying in this headline.’” Inconsistency was thus equated with deception.

Fourth, participants in all countries emphasized the importance of (d) consensus across news articles, especially on social media or search, where they were more likely to encounter multiple versions of the same story. Artur (Brazil, R2) suggested that “when searching for information, if three websites report the same thing, then that information is true.” Similarly, Pamela (US, R1) expressed, “I think you can tell quickly if multiple sources are putting out the same information.” On the flipside, Manish (India, R3) was more inclined to distrust “if you see a huge variation…A and B is saying something different.” In this line of thinking, many also expressed skepticism about news they encountered for the first time. As Casimiro (Brazil, R3) said, “if it’s breaking news, something that I haven’t heard before, at first sight, I don’t trust.” These perceptions point to how audiences associate the repetition of information with a greater sense of trust, which can be shaped by how news is consumed online.

Cues centered on presentation

In addition to cues deriving from information contained in news, many participants alluded to mental shortcuts rooted in how such information was presented. Most typically, participants mentioned the (e) tone they perceived in headlines or text snippets, which they often rejected if found to be “too sensationalistic” (Renata, Brazil, R3), “using too much inflammatory statements” (Robbie, US, R3), or employing “doomsday headlines” (Natalie, UK, R3). Sangeeta (India, R3) described losing trust in a news brand after seeing stories that seemed hyped-up: “totally masala [dramatic] type of things they are sharing with us.” Perceived sensationalism was often used as a proxy for a news organization’s intentions, resembling the “persuasive intent” heuristic identified by Metzger and Flanagin (2013) and Fogg et al. (2003). The motivations behind news perceived as trying too hard to “grab your attention” (Prabha, India, R1) were automatically suspect. Candy (US, R3) was put off by headlines that were “intentionally incendiary” or “written to be very attention-grabbing.” Ruben (UK, R3) noticed words “designed to create emotion of one sort or the other; either scare, anger. The more you have of those, the less trustworthy it is.” As these quotes suggest, underpinning participants’ attention to sensational language was concern about hidden motives behind news (Mont’Alverne et al., 2023).

A second presentation cue concerned a news site’s (f) appearance, including its layout and design, although this tended to come up mostly when participants clicked through to articles. This echoes previous scholarship documenting credibility assessments based on website attributes and layout (Flanagin and Metzger, 2007; Fogg et al., 2003). This heuristic was mentioned in all countries but was less frequent among Indian interviewees. Following a Google search, Melissa (UK, R3) scrolled through news sites she was unfamiliar with but nonetheless believed were trustworthy: “I mean, they all look pretty trustworthy…quite genuine.” She was persuaded by one because of “the way it’s laid out,” especially because “there’s lots of writing and paragraphs and headlines, pictures.” Eduardo (Brazil, R3) also noted paying attention to “the text’s formatting” when evaluating a story.

The appearance of a news site was associated with the ease of using it (see also Wathen and Burkell, 2002). As Preeti (India, R3) explained, “as for trustworthiness, I feel the news should be crisp, and it should give you the message in brief and it should be easy to read.” Similarly, Gemma (UK, R2) noted being “very wary of what the website looks like. If there’s a lot of pop ups, and lots of different photos of different things and it’s quite a clogged website, I tend to not trust the news as much.” For these participants, a certain appearance and experience navigating a news site—what Billard and Moran (2022) describe as “genre expectations”—signified greater professionalism and legitimacy, fomenting trust.

A third presentation-based cue, closely related to a site’s appearance, focused on (g) advertising, a factor also shaping trust in Fogg et al.’s (2003) study on website credibility. Loud or intrusive ads typically led to less trust. João (Brazil, R3) described his preference for sites with “a clear visual aspect, with few ads, which leads to less visual pollution.” Hannah (UK, R1) also voiced her avoidance of “‘spammy’ websites with loads of pop-ups and adverts.” Analogous to this cue, sponsored news on platforms also raised suspicion, with participants paying attention to labels denoting whether posts themselves were ads. Describing a Google search result, Pranav (India, R3) explained he would “neglect first two websites because it’s written ‘Ad’, so it is promoted websites.” Robbie (US, R3) also expressed dislike of “ad-driven results” because “when anybody puts their dollars behind something, there’s a reason for it.” Thus, intentions behind advertising were frequently suspect, and news content associated with paid promotion drove greater distrust.

Fourth, a subset of participants in all countries alluded to the importance of (h) language, including correct grammar and spelling, as a cue for trust. This is in line with previous studies suggesting people rate stories with more grammatical errors as lower in credibility (Appelman and Schmierbach, 2017). As Rachel (UK, R2) explained, “When I open an article, and I see it’s full of errors and spelling mistakes and grammar mistakes, I know that it’s not something to be relied upon.” Renata (Brazil, R3) elaborated on this point, noting, “I usually try to pay attention if the person wrote correctly because I imagine that when someone is writing a headline, someone’s going to read, to revise. So, there shouldn’t be grammar mistakes.” Veronica (US, R3) added: “a trusted site is going to be very thorough, so you they’re not going to (…) post something that has all these mistakes grammatical errors.”

In India, respondents emphasized language more often. Kartik (India, R1) described his preference for videos from a local outlet because they “speak in fluent regional language; it feels good to hear.” Multiple Indian respondents also alluded to reading English language newspapers precisely for the sake of practicing English. Good language was required to fulfill said function. Pratibha (India, R2) noted, “[The] Hindu has good language, and it covers a lot of stories, everything is inside that newspaper.” Ultimately, proper spelling and language served as shorthand for journalistic rigor, making interviewees unforgiving of such mistakes.

Social cues

In addition to content-driven cues (a–h), participants described cues involving judgments of other people, which were particularly relevant on social media and messaging applications. These social cues could be specific to individuals they trusted to vet information, but also strangers whose critiques they found persuasive, or even indicators provided by platforms suggesting certain news items or sources were popular.

Consistent with Sterrett et al.’s (2019) study, people frequently described paying attention to (i) who shared a news item as a shortcut for judging its trustworthiness. More specifically, news coming from trusted acquaintances was deemed more trustworthy. Vidya (India, R3), a regular WhatsApp user, described the importance of noticing “the person from whom it is coming…When someone I trust posts some news, then I might not even crosscheck.” Similarly, Jerry (US, R3) explained his evaluation of news on Facebook: “Most of my friends, who I follow, whose opinions I trust, I let them…filter that stuff for me. (…) It’s not necessarily that I like the news articles with organizations themselves, it’s that I like my friends, whose opinions I trust.”

Antonia (Brazil, R3) described how specific WhatsApp groups were more trustworthy as news sources than others: “If it is a university group, like one of my course groups, I trust everything. But if it is something in my family group, then I have to do some more research…because they will share just anything.” As an extension of this, some noted that seeing that a friend followed a specific news source on social media contributed to their trust in it. Carmen (UK, R3) explained, “seeing if I have mutual friends that like or follow that group or news source, that often would indicate if it’s trustworthy or not, especially if it was friends who are very into politics.” As these quotes suggest, people often outsourced trust, with some describing hierarchies of individuals or groups who they believed were better or worse at judging news for them.

Second, some participants drew on (j) comments for determining what information to trust, or more commonly, what not to trust. This is consistent with previous work finding negative comments decrease message credibility (Boot et al., 2021; Waddell, 2017). Renata (Brazil, R3) discussed how she “would read the comments and see what people are talking about. And if they have the same perspective that I do, the headline is not reliable.” Russell (US, R2) described “looking at the comment section” and identifying whether “I see a lot of comments agreeing” or “completely opposing” the news. Similarly, Neha (India, R3) noted, “Foremost thing is, I take comments. In that, the people used to mention, ‘I have already gone through this website, and it is fake.’” She added, “I trust that most people don’t comment badly.” Like Neha, others tended to trust unknown individuals online who they viewed as less likely to have a suspect agenda over news organizations whose intentions they were generally suspicious of. As such, they were often willing to delegate information vetting to others they did not personally know when it came to flagging untrustworthy news.

Third, some relied on (k) platform metrics such as likes as indicators of news quality. Sunita (India, R3) justified her skeptical approach to a Facebook post, saying, “No [I do not trust it], because if I open the page, then only 19 people have liked it.” Douglas (Brazil, R3) used similar reasoning, explaining “that I see that there is a high number of views. I think that the more views they get there, the more trustworthy they are.” Jerry (US, R3) suggested that click metrics contributed to perceptions of trustworthiness because “if it’s already made to this point, it’s got to be legitimate to some degree.”⁶ Thus, some saw quantified platform metrics as proxies for trustworthiness (Waddell, 2017). However, other research has shown these tend to be seen as more reliable indicators by those with lower knowledge about news (Schulz et al., 2022).

Platform cues

While social cues were often enabled through features of social media platforms, a subset of participants described relying on cues provided by platforms themselves. These included how platforms made certain kinds of content more prominent or labeled information or sources. However, these cues were more often polysemic, leading to different interpretations from one person to another.

First, many highlighted the (l) ranking or order in which information was presented—specifically when using the Google search engine. Consistent with previous work, highly ranked results were seen as more trustworthy (e.g., Hargittai et al., 2010). For example, Sukumar (India, R3) explained his interpretation of Google search results, noting, “Definitely something on top is a priority.” Melissa (UK, R3) detailed the rationale behind this preference, explaining, “I probably click on the first result. That’s the whole SEO thing, search engine optimization, because that’s the top search result.” Rosemary (US, R3) was under the impression that Google search results were vetted by the platform: “I think it tries to give you the reputable sources first; the ones that have peer reviews by government sources, by experts in the field.”

While most viewed high rankings as a cue for trustworthiness, some offered different interpretations. Reviewing the results of a Google search, Barbara (Brazil, R3) explained that “as many people consume that specific news, the first pieces are those on top. I think that they’re manipulative.” She recalled one news source was “always ranking first, so that makes me mistrust that information.” Barbara viewed the privileging of certain sources as suspect, triggering a different mental shortcut—one inviting less trust.

Second, participants described using (m) platform labels to judge trustworthiness. Verification checks, used on many social media platforms, were, for most, signifiers of greater trustworthiness. As Abhijeet (India, R3) explained, “this source has a blue tick, which means it’s verified through Facebook (…) Blue tick means the source is authentic.” Similarly, Carmen (UK, R3) described a news item that came up in her newsfeed: “It seems trustworthy. It has the verification tick next to it, and … I have mutual friends that have liked the page.” Carmen nested two kinds of heuristics related to platform cues and social cues. Trust was thus associated with platform-granted authenticity—a source being who they say they are.

We asked WhatsApp users about perceptions of the label identifying messages as “forwarded many times.” Here, too, we found differences in the heuristics elicited. While some said these labels didn’t impact their trust, others said this label indicated messages needed to be approached with caution. In Aarti’s (India, R3) words, “Definitely, when something is forwarded so many times, it is either for promotional purposes or it is a propaganda…I will definitely trust it less.” However, others, such as Beatriz (Brazil, R3), viewed the label as signaling importance: “If people are getting a piece of information that’s been shared by many, it’s important.” Vidya (India, R3) trusted such information more, noting, “if it is forwarded so many times, then people must have seen something good in those messages.”

Even less ambiguous platform labels, such as those warning sources might be compromised or information might be incorrect, were read in different ways. For example, Gabrielle (UK, R2) said she used Twitter’s label warning about “fake news”: “It brings to my attention [that] what I’m reading I shouldn’t actually believe to be the truth.” However, others were skeptical of these labels. Lucas (UK, R3) described an experience with a source he often saw on Facebook: “I always used to trust that site. And then when it came up saying ‘Russian sponsored.’ I was, like, ‘Well, do I not trust them because they’re Russian, or do I not trust Facebook for saying it’s Russian?’” Differing orientations toward platform companies guided if and how platform-driven labels were used heuristically.

Discussion

Table. Cues for trust heuristics online when source is unfamiliar.

Table note a: We focus on news websites, social media (Facebook), messaging apps (WhatsApp), and search (Google).

Our study builds on previous work by psychologists and sociologists that identifies a range of heuristics and cues people could in principle rely on when judging information. We document the ones people say they rely on in practice when it comes to news online. Our focus on trust judgments on platforms specifically extends prior work on credibility heuristics, most of which was conducted before the rise of these technologies. Platforms are increasingly important to how people encounter news online, and they integrate unique affordances that meaningfully shape heuristics.

Our cross-national and cross-platform approach allows us to examine variation across both countries and digital technologies. We found relatively few differences across the four countries, and these were mainly a matter of degree (Indian participants, for example, more often mentioned language and were less likely to attend to the appearance of online news sites as salient cues). Instead, we found more substantive variation across platforms, which make particular cues more or less salient, resulting in different combinations of heuristics. Whereas images or the tone of headlines were important in all cases, for example, specific user metrics acquired prominence on social media, where such information is made plainly visible. This underscores how platform affordances may activate varying heuristics with regard to news. While some cues are necessarily used for heuristic judgments (e.g., content cues centered on presentation), others can overlap with more systematic processing (e.g., content cues centered on information). Whether a cue is used more systematically or heuristically depends on how individuals engage with it.

The importance of heuristics for trust documented here does not mean news organizations should abandon other efforts to build trust, many of which may improve their journalism and be met favorably by audiences attending to their brands’ reputations. However, for others, such approaches may be downstream or disconnected from how they use and experience news online. Reaching audiences—especially those most disengaged—may require meeting them where they are: attending to the cues most legible to them in the media environments they use. Further research is needed to understand how cues are processed over time, relate to one another, and are attended to by different audience segments. Furthermore, we focused on people’s relationship with news and how they encounter it—even as, more broadly, judgements of trustworthiness will also be influenced by other factors, including attacks on the media by prominent politicians.

As important as we believe focusing on “shortcuts to trust” may be for news organizations, it is also unlikely these factors alone will be enough for individual brands or the news media at large to increase trust levels where they have declined. As Nelson (2021) points out, audiences are never fully knowable, and initiatives aimed at improving relationships with audiences must consider the limitations of such endeavors. However, to the extent that news organizations are concerned with how individual news items they distribute on platforms are evaluated, especially by less familiar audiences in often fleeting encounters, our findings suggest attending to these heuristics is critical. These findings also have implications for how news organizations weigh the costs and benefits of engaging in differing online contexts, some that they have less control over (i.e., platforms) than others (i.e., their websites). Moreover, audience reliance on heuristics can create tensions for newsrooms, elevating seemingly surface-level indicators over qualities journalists may wish to accentuate about their own standards and practices. However, it is perhaps unreasonable to expect audiences to employ more cognitively demanding systematic processing when choosing what to trust, especially given the variety of ways people use platforms, much of which has little to do with news.

We remain agnostic about whether using shortcuts to trust is good or bad, but it is easy to see how such strategies can lead people to erroneous conclusions. One could wrongly assume a friend follows a source on Facebook because they trust it or vetted an article before sharing it. More concerning, heuristics can be weaponized—something bad-faith actors have long intuited (e.g., Sundar et al., 2021; Mena et al., 2020). Indeed, data can be fabricated, images manipulated, and websites designed to create an aura of legitimacy. While public assessments about the trustworthiness of news outlets tend to correlate with expert assessments (Schulz et al., 2020), these patterns may mask variation among those less familiar with brands. Trust is not always the same as trustworthiness. We all sometimes trust things we should not (or distrust things we could).

These findings should be interpreted in light of their limitations. Self-reported data is susceptible to biases, which can impact the heuristics participants discussed. Furthermore, people may not always be aware of or able to verbalize all heuristics they use. While our inductive design draws on interviews and focus groups with a considerable number of people, especially for a qualitative study, the think aloud portion ultimately relies on small samples per platform per country. We might have found more variation cross-nationally or across specific demographic subgroups with larger samples. That said, as stated at the outset, our aim was to describe the mental shortcuts people report using when judging news online grounded in their real-life experiences. The striking consistency in heuristics we found across such different countries, despite sample limitations, is noteworthy. We hope this study is generative for future researchers employing complementary methods, including experiments and surveys, better suited to capture differences in how heuristics are used and combined in distinct ways across countries or demographic groups.

Finally, relying on cues for trust does not mean other factors are unimportant. We found evidence of widespread skepticism among many participants (Mont’Alverne et al., 2023), often anchored in pervasive negative ideas about the news media, which are often the focus of other research on trust in news. These unfavorable views are a salient backdrop for how many experience news abundance online. Paying attention to heuristics can help inform future research around their relative importance compared to these other factors.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Meta Journalism Project (CTR00730).

Appendix

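Breakdown of Samples.

| Country | Group | Chat focus groups (Round 1) | In-depth interviews (Round 2) |
| --- | --- | --- | --- |
| Brazil | More trusting | 8 | 9 |
| Brazil | Less trusting | 8 | 6 |
| India | More trusting | 9 | 8 |
| India | Less trusting | 8 | 9 |
| UK | More trusting | 11 | 8 |
| UK | Less trusting | 9 | 7 |
| US | More trusting | 11 | 8 |
| US | Less trusting | 6 | 7 |
| Total | | 70 | 62 |

| Country | Platform | Think aloud interviews (Round 3) |
| --- | --- | --- |
| Brazil | Facebook | 10 |
| Brazil | Google | 10 |
| Brazil | WhatsApp | 10 |
| India | Facebook | 10 |
| India | Google | 10 |
| India | WhatsApp | 10 |
| UK | Facebook | 10 |
| UK | Google | 10 |
| US | Facebook | 10 |
| US | Google | 10 |
| Total | | 100 |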

Appendix B

For our first (chat-based focus groups) and second (in-depth interviews) rounds of data collection, we partnered with the research firm YouGov to recruit participants in all four countries. We screened for people who were both more and less trusting in news, using a screener survey question derived from Strömbäck et al. (2020). Respondents were asked to what extent they agreed with the statement, “Generally speaking, I can trust information from the news media in [applicable country.]” We also screened study participants to capture a variety of other characteristics, including age, partisanship, and forms of media use. The focus groups were divided by level of trust, such that people with lower trust were grouped together and those with higher trust were grouped together. That said, in practice, it was often difficult to distinguish between those belonging to one group or the other except in extreme cases.

Given our need to conduct interviews and focus groups online amid the COVID-19 pandemic, we focused specifically on people on the higher end of the socio-economic spectrum in each country: those with access to reliable internet connections, most often residing in major metro areas, and with average or above-average education. In practice, such people generally have the most advantaged access to information in their respective countries. YouGov facilitated the text-based focus groups on their own chat-based platform, using a guide we jointly put together. We observed these sessions. Meanwhile, the interviews were conducted by members of our research team.

For our third round of data collection based on think aloud interviews, we partnered with Inteligencia em Pesquisa e Consultoria (IPEC) in Brazil and Internet Research Bureau (IRB) in India, the UK, and the US to identify and recruit participants. In addition to seeking variation across demographic characteristics (see Appendix C), we focused on including participants with (a) below-average interest in politics relative to others in their country, (b) below-average trust in news, and (c) regular use of one of the three designated platforms (Facebook, Google, and WhatsApp). Both the questions used and the cut-offs for inclusion were based on findings of a prior study (Toff et al., 2021), where we had previously identified the characteristics of “generally untrusting” people in each of the countries. Due to differences in response patterns by country, the cut-offs for eligibility varied by country.

We measured political interest by asking “How interested, if at all, would you say you are in politics?” with responses measured on a five-point scale from “extremely interested” to “not at all interested.” We measured trust in news by asking participants: “Generally speaking, to what extent do you trust information from the following,” and offered 15 different news organizations in their countries separately enumerated. Those who said they “somewhat” or “completely” trusted a below-average number of news brands for their country were eligible. Here too the cut-off varied from one country to another. Lastly, we measured use of digital platforms with the question: “How often do you use the following for any purpose (i.e., for work/leisure, etc.)? This should include access from any device (desktop, laptop, tablet or mobile) and from any location (home, work, internet cafe or any other location).” We focused on Facebook and Google in all four countries and WhatsApp only in Brazil and India. Participants who said they used at least one of these platforms at least “2–3 days a week” were considered eligible.

While some participants often used more than one platform, each interview focused on a single one. Thus, while we asked participants about general media use early in the interview, when moving to the platform-specific section, where participants described and responded to what they saw on their screens while using a platform, we concentrated on only one per individual. During the practical portion of the interview, we spent some time understanding what content participants sought out or were presented with when using the platform as they would in their everyday lives, for example, while scrolling through their Facebook feeds, reading through group chats on WhatsApp, or running searches on Google about topics relevant to them or that they might typically look up. Whenever they narrated coming across items they considered to be news, participants were asked to discuss them in greater detail, including whether they could trust what they saw and what aspects they noticed and paid attention to. They were also invited to interact with each item as they normally would (e.g., clicking on a story or simply reading the snippet).

Our decision to ask participants to use their actual accounts or applications, rather than artificial simulations of these platforms, meant that the concrete pieces of information (including the format) and sources encountered varied from one person to another. Some came across more news than others, and while some chose to click through to news websites on occasion, others relied entirely on the information available in the snippets or previews as displayed on each of the platforms. Given that many did not organically encounter much news or any at all, toward the end of the interview we also asked some participants to do things they might not normally do, such as conduct Google searches for news-related topics specifically or navigate the Facebook news tab, in an effort to better understand their sense-making around news. (At the time of our interviews, this feature was available in the US and UK, and even there, most interviewees did not know the feature existed, much less used it with any regularity.)

Appendix C

Demographic distribution by country of the think aloud interviews (Round 3).

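| Country | Variable | n | % |
| --- | --- | --- | --- |
| Brazil | Female | 17 | 57 |
| Brazil | Male | 13 | 43 |
| Brazil | 18–29 | 12 | 40 |
| Brazil | 30–39 | 9 | 30 |
| Brazil | 40–49 | 5 | 17 |
| Brazil | 50+ | 4 | 13 |
| Brazil | White | 9 | 30 |
| Brazil | Mixed | 15 | 50 |
| Brazil | Black | 6 | 20 |
| Brazil | Atheist/Nothing in particular | 19 | 63 |
| Brazil | Catholic | 3 | 10 |
| Brazil | Evangelical or Pentecostal | 8 | 27 |
| India | Female | 15 | 50 |
| India | Male | 15 | 50 |
| India | 18–29 | 10 | 33 |
| India | 30–39 | 6 | 20 |
| India | 40–49 | 10 | 33 |
| India | 50+ | 4 | 13 |
| India | General/Upper | 18 | 60 |
| India | OBC | 9 | 30 |
| India | Dalit | 1 | 3 |
| India | None/NA | 2 | 7 |
| India | Hindu | 26 | 87 |
| India | Muslim | 3 | 10 |
| India | Prefer not to say | 1 | 3 |
| United Kingdom | Female | 12 | 60 |
| United Kingdom | Male | 8 | 40 |
| United Kingdom | 18–29 | 5 | 25 |
| United Kingdom | 30–39 | 6 | 30 |
| United Kingdom | 40–49 | 5 | 25 |
| United Kingdom | 50+ | 4 | 20 |
| United Kingdom | White | 16 | 80 |
| United Kingdom | Asian | 2 | 10 |
| United Kingdom | Black | 1 | 5 |
| United Kingdom | Other | 1 | 5 |
| United Kingdom | Nothing in particular | 13 | 65 |
| United Kingdom | Muslim | 3 | 15 |
| United Kingdom | Catholic | 2 | 10 |
| United Kingdom | Other | 2 | 10 |
| United States | Female | 11 | 55 |
| United States | Male | 9 | 45 |
| United States | 18–29 | 2 | 10 |
| United States | 30–39 | 5 | 25 |
| United States | 40–49 | 5 | 25 |
| United States | 50+ | 8 | 40 |
| United States | White | 12 | 60 |
| United States | Black | 3 | 15 |
| United States | Latino/Hispanic | 2 | 10 |
| United States | Other | 3 | 15 |
| United States | Nothing in particular | 5 | 25 |
| United States | Christian non-denominational | 4 | 20 |
| United States | Catholic | 4 | 20 |
| United States | Baptist | 2 | 10 |
| United States | Other/Prefer not to say | 5 | 25 |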