Abstract

Over the last 60 years, media outlets have been covering emerging technologies like artificial intelligence (AI) and automation. This country-level study aims to give a nuanced overview of how these technologies were covered in US newspapers. First, Latent Dirichlet allocation (LDA) topic modeling was conducted on articles on AI and automation in The New York Times and The Washington Post between 1985 and 2020. Second, an inductive manual framing analysis was conducted to distinguish the frames that both newspapers applied over time. Results from the topic modeling show that articles on AI and automation are most prominent within ‘Work’, ‘Art’, and ‘Education’. The manual framing analysis shows that the coverage has been more optimistic than pessimistic over time. However, when dystopian frames are considered, the results show that there has been more attention in the corpus to the ethical conundrums that AI and automation involve.

Introduction

Artificial Intelligence (AI) and automation have become ubiquitous concepts: they are included in strategic documents of governments, policies are drafted to regulate certain applications, and they are used to accelerate the digital transition. In a study by Diakopoulos (2020), both technologies are seen as the biggest disruption in the labor market and therefore have the ability to change these markets structurally. Moreover, AI and automation are often leitmotivs in popular culture, as several sci-fi films and books have explored the power and danger of these technologies (Fast and Horvitz, 2017). Partly due to technological breakthroughs in recent years like advanced machine learning algorithms, the gap between scientific reality and popular culture is narrowing and becoming more genuine, making both terms more prominent in the media and thus more central to public debate (Cave and Dihal, 2019; Chuan et al., 2019). Yet the portrayal of AI and automation has generated not only enthusiasm but also a great deal of concern.

To better understand the positive and negative portrayals of these technologies, this country-level study aims to examine news coverage over time and evaluate which frames news media use when they include AI and automation in their coverage. Indeed, both terms have been discussed in news reporting in roughly the same period, the 1930s and 1940s, which made them technological concepts that occurred in the same articles (Fast and Horvitz, 2017). In this study, AI technology (cause) is viewed as the necessary condition that enables a specific form of automation (result). In other words, smart software systems are seen here as the means, while the automation of processes, for example by software or an algorithm powered by AI technology, is the result.

Although there is a deep connection between the research and development of AI and automation, there has been little research on how these interconnected concepts have been framed and perceived over time. Due to the multifaceted nature of both concepts, it is crucial to map the positive and negative portrayals and to foster more nuance in the debate on these technologies, which is often described as a ‘war on AI’ or a ‘conflict of automation’ (Cave, 2019). The central concern of this paper is that both concepts are wrongly characterized and are met with oversimplified imaginaries that have the potential to influence the deployment of technologies that are affiliated with AI and automation.

Indeed, this study is embedded in similar theoretical and empirical work on how emerging technologies are perceived and framed (Cave, 2019). Earlier research on the framing of nanotechnology, for example, has shown that these characterizations are highly correlated with how the public makes sense of these technologies from news reporting (Cave and Dihal, 2019). Therefore, this study provides a more in-depth and nuanced discussion of the benefits and risks of AI and automation in news media in order to enable a critical assessment of the current and future (mis)use of both concepts.

Literature review

Artificial Intelligence

Decades after the publication of Turing’s (1950) study ‘Computing machinery and intelligence' and the Dartmouth conference where the term AI was introduced, binary mathematical models have resulted in AI expert systems that have widespread use in everyday life (Epstein et al., 2018; Feigenbaum, 2003). This widespread use has led to a multitude of conceptualizations and applications of the term Artificial Intelligence (AI) which is defined in this study as: “A collection of ideas, technologies that involve a computer system to perform tasks that would normally require human intelligence” (Brennen, 2018: p. 34).

This broadening of conceptualizations and applications shows that AI has evolved into a more multifaceted concept that includes a broad tent of technological processes. Partly due to this evolution, terms and technologies such as ‘algorithms’, ‘machine learning’, and ‘blockchain’ are included in AI, while these terms evolved with the ever-evolving technologies (for an overview of these technologies, see, e.g., Nilsson, 2009). For example, applications that are fueled by AI technology in 2022 can retrieve information, coordinate logistics, handle inventories, monitor tax applications, provide financial services, translate complex documents, write business reports, prepare legal briefings, and diagnose diseases (Chuan et al., 2019; Spyridou et al., 2013).

The multitude of applications around AI also has an impact on its perception, which scholars have often described as either rather utopian or dystopian (Fast and Horvitz, 2017). This study, therefore, seeks to identify whether these perceptions are consistent with how AI is discussed in news reporting. Our research builds on the theoretical and empirical studies that have evaluated the utopian or dystopian framing of (earlier) emerging technologies like televisions, telephones, and computers (Nilsson, 2009). In analogy with these technologies, we want to give a brief overview of the perception of AI from its introduction till the 2020s.

When Turing’s research was published in 1950, AI was regarded by him as a utopian application that would enable humans to improve their lives and even become immortal (Epstein et al., 2018). Nilsson’s (2009) research, which zooms in on these early days, speaks of an actual ‘AI boom’ because the novelty of the technology made researchers lyrical about the ‘infinite possibilities’ of AI.

This perception would normalize in the 1960s and 1970s, mainly due to a growing awareness that the technological applications had many limitations (e.g., errors in statistical models, limited computing power due to malfunctioning processors). This led to a more ‘down-to-earth’ attitude among researchers. During this period, a great deal of emphasis was placed on Boolean logic (true/false) within AI, which led to a growing lack of interest in AI and, in turn, to a partial drying up of funding for research into the technology (Nilsson, 2009). The subsequent period in the 1980s and early 1990s is known as the so-called ‘AI winter’, in which the more dystopian narrative prevailed (Fast and Horvitz, 2017).

In the mid-1990s, there was a renaissance that led to new research perspectives on AI, partly due to the rise of the Internet. As a result, building systems driven by real-world data became more feasible for a growing number of tasks (Haugeland et al., 1985). The technological advances of AI and the rise of the Internet also resulted in a cheaper way to develop those systems, allowing AI to process larger amounts of data for further analysis. These developments again led to a more utopian attitude towards AI (Nilsson, 2009).

Automation

As with AI, the applications in automation are very versatile. The process of automation was coined in 1936 by Delmer S. Harder, the Vice President of the Ford Company (Hitomi, 1994: p. 122). The term automation had existed for some time, but it was not used until the 1940s to refer to the process of transferring work items between machines in a production process, where human handling is limited (Veillette, 1959). Considering this process, automation is defined as "a technique, method or system for operating or controlling a process by highly automatic means, such as software, which minimizes human intervention" (Endsley, 1999: p. 462). In other words, automation has one main goal: to have machines perform tasks of a repetitive nature or, as Zuboff (2015: p. 77) puts it, "to take the robot out of the human". Several periods of intensive automation have taken place in recent decades, just as they took place in the manufacturing industries in the nineteenth and twentieth centuries (for an overview, see for example Rasmussen, 1982).

The definition of automation specifically points to the least possible human intervention, which, according to Nilsson (2009), has resulted in a greater fear among people of losing their jobs. This dystopian image has only increased, partly due to the current applications of AI technology (see Chuan et al., 2019). The current basis of dystopian alarmism, Acemoglu and Restrepo (2018) argue, can be traced back to the fear that AI will have more far-reaching effects on labor than previous waves of technological change and automation, i.e., the partial replacement of jobs and the creation of new tasks that AI systems must supervise. Since AI systems are often described and framed as 'the next big thing' that will transform workplaces, the perception of automation is closely related to that of AI (for a comprehensive overview, see Brynjolfsson and McAfee, 2011).

To get a more nuanced overview of the perception of both AI and automation, this study focuses on the different topics in news coverage in which AI and automation are discussed. Is the coverage within topics such as work more positive than, for example, within topics concerning health care? How do these topics relate to the image formation or the frames over time, and can we conclude that the coverage of AI and automation should be regarded as media hype? These topics are a prerequisite for bringing more nuance to the earlier research on the perception of AI and automation; by going one step further in the methodological framework, they should help to transcend the oversimplification of AI and automation. In the past, mere frames have been examined, without considering the topics in which they occurred (e.g., Chuan et al., 2019). To obtain a more nuanced overview of the topics or subjects of AI and automation, the following research question was formulated:

RQ1: Which topics can be distinguished from AI and automation in articles in US newspapers between 1985 and 2020?

Research on the framing of emerging technologies

Over the past two decades, framing research on emerging technologies has been conducted in both the frame-building (e.g., Nisbet et al., 2002; Weaver et al., 2009) and frame-setting processes (see e.g., Beck and Vowe, 1995; Kelly, 2009; Scheufele and Lewenstein, 2005; Schudson, 2001). This study builds on the theoretical and empirical results of a maturing field, namely the study of the perception of emerging technologies.

Beck and Vowe (1995) for example examined media perceptions of emerging multimedia (i.e., Internet and mobile telephony) and identified dominant patterns of argumentation that emerged such as “boosting human productivity through the help of technology” (p. 551). This study showed that the argumentation around emerging technologies is predominantly positive rather than negative. Scheufele and Lewenstein (2005) found that media frames about nanotechnology had a stronger influence on public opinion than purely factual information which points towards the relevancy of the distinction of existing frames in emerging technologies. Kelly (2009) evaluated the role of media discourse in the introduction of the microcomputer and concluded that if a new technological application is heralded as ‘revolutionary', this technology is presented within rhetoric that is incorporated into traditional values, roles, and practices such as ‘emancipation' and ‘human autonomy'. These microcomputers, the study concluded, were thus described as reliable tools or as a kind of extension of the human being that respected ‘our' freedom.

Despite the research on the framing of emerging technologies, there has been little empirical research into what topics AI and automation occurred within and what frames can be distinguished over time. Two studies by Fast and Horvitz (2017) and Chuan et al. (2019) have reviewed the framing of AI in media coverage in a rather limited way. Fast and Horvitz’s (2017) study examined The New York Times' coverage over the past two decades, focusing on how AI was covered in newspapers rather than distinguishing various frames. They concluded that the positive perceptions around AI are more prevalent (i.e., helping and automating daily life, gaining insight into certain databases through AI’s increased computing power), but that in recent years there has been more focus on the various negative consequences of AI (i.e., a bias of algorithms in racial profiling, automatic weapons based on AI). Thus, this study focused more on the positive and negative coverage of AI where they examined the discussion of AI in The New York Times in a broader way. The study by Chuan et al. (2019) analyzed more newspapers, but the study looked for frames within a limited number of articles (N = 300). The study concludes that the ethical frame has become more prevalent in the last decade. A closer examination of the articles in which ethics or moral issues were discussed as the main theme, shows that both positive and negative aspects of AI have emerged.

Chuan et al. (2019) point out that news media influence people’s attitudes towards emerging technology (see also Cave and Dihal, 2019). Therefore, a more in-depth and nuanced discussion of the risks and benefits of AI in news media is needed to enable a critical assessment of the use and misuse of AI. This study portrays a more nuanced image by first distinguishing the topics across time and, within those distinguished topics, looking for the frames that have appeared in recent decades – looking further than the utopian and dystopian dichotomy that is often raised in similar studies. By analyzing more than a thousand articles manually in this study, we want to go beyond the analyses of Fast and Horvitz (2017) and Chuan et al. (2019) by distinguishing all possible topics and frames over time. This brings us to the second research question:

RQ2: What frames can be distinguished between AI and automation in articles in US newspapers?

By examining the topics and frames over time, a more multifaceted overview of the topics and frames around AI and automation on the one hand, and for emerging technologies on the other, emerges. This more nuanced overview can create a reference to better explain why there are sometimes supernatural or ominous expectations associated with smart technologies and the processes they entail.

This research undertakes a framing study for two reasons. First, frames help to show what is going on with a certain problem or social issue. Facts do not stand alone but are given meaning by embedding them in a narrative. As Figoureux (2020) describes, the dynamic yet persistent nature of frames ensures that they play an important role in communication, especially when it comes to social issues that impact our daily lives. These frames change shape, but in the end, a limited number of frames keep recurring over time.

Second, frames also simplify reality. According to De Vreese (2005), the essence of framing is the sizing of information, i.e., enlarging or reducing certain elements of reality to make them salient. This oversimplification is also present in AI and automation, writes Brennen (2018), focusing on the two extreme poles of the frames around AI and automation: on one side, there are the utopian dreams of jobless futures and eternal life, and on the other side, there are the dystopian nightmares of robot uprisings and Orwellian apocalypse. Therefore, this study aims to provide a thorough longitudinal overview of how AI and automation have appeared in the various topics in The New York Times and The Washington Post.

Method

To distinguish the topics and frames over time, this study used a mixed-method approach: topic modeling in combination with a manual inductive framing analysis. For a detailed description of the topic modeling method, as well as a comparison with other existing methods (including supervised machine learning and manual content analysis), this study refers to the works of Rentier (2017, 2019). This method has previously produced valid research results using US newspaper articles (e.g., Walter and Ophir, 2021). Accordingly, a topic model was applied to articles on ‘automation’ and ‘artificial intelligence’ from The New York Times (NYT) and The Washington Post (WP). It is important to note that we have deliberately chosen ‘automation’ and ‘artificial intelligence’, as they encompass a wide variety of technological processes such as ‘algorithms’, ‘blockchain’, and ‘machine learning’. Some of the latter processes have not been in use for as long as the terms automation and AI, but they have been included in the analysis as well because most of the articles that were analyzed mentioned these more recent technological processes.

Topic modeling (LDA): Sample and analysis

Topic modeling is a semi-automatic, unsupervised method for the analysis of textual data in which a set of topics is extracted from a given corpus. As topics have their own characteristics, this study defines them as "statistical entities, collections of frequency distributions of words. It is a bag-of-words approach, which means that the narrative, the place in the text, and the syntax are not considered" (Wallach, 2006, p.32).
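The bag-of-words assumption in this definition can be illustrated with a minimal sketch. The function name `bag_of_words` and the two example sentences are hypothetical and are not taken from the study's corpus or code.

```python
from collections import Counter

def bag_of_words(text):
    """Reduce a text to word counts; word order and syntax are discarded."""
    return Counter(text.lower().split())

# Two hypothetical sentences with opposite meanings but identical words.
first = bag_of_words("robots replace workers")
second = bag_of_words("workers replace robots")

# Under a bag-of-words view the two texts are indistinguishable.
print(first == second)  # True
```

This is why the interpretation of topics in this kind of analysis relies on reading representative articles in addition to inspecting word lists.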

Two main rationales were considered for the initial selection of the sample. First, the selection of both newspapers was deliberate because The New York Times (NYT) and The Washington Post (WP) are the only news outlets for which all articles from the designated period have been digitized and made openly available to researchers via an API. Second, both newspapers are internationally known and have what Meraz (2009, p. 47) has called an ‘elite status’. The central role that The New York Times and The Washington Post play in intermedia agenda-setting in our modern societies, in combination with their being ‘newspapers of record’ that have been used in many studies of international news coverage, makes these news organizations highly eligible for a framing analysis (Golan, 2016).

For the initial selection of the sample, we used a search query in Python that looked for the keywords ‘artificial intelligence’ + ‘automation’. This query extracted 43,732 articles that were published between 1985 and 2020. A verification was then carried out via LexisNexis to see whether all articles were included in the selection. The articles on ‘automation’ and ‘artificial intelligence’ from before 1985 have not all been digitized, which is why this country-level study opted to analyze only the years for which a complete archive exists. A Python script was then used to break down the 43,732 articles into individual documents containing the full meta text and to download their body text.

After removing extremely short articles (<500 characters), the corpus was reduced to 33,732 articles. For the topic modeling procedure, duplicate articles caused by data processing errors were removed, resulting in 10,089 articles in the final sample. The articles were preprocessed according to common guidelines, including removing stop words, converting capitals to lower case, removing punctuation and numbers, and removing words that occurred in more than 99% of the documents or in less than 0.5% (in that order).
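The preprocessing steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the study's actual script: the function names, the tiny stop-word list, and the exact-match duplicate rule are all hypothetical, and only the thresholds (500 characters, 99%, 0.5%) come from the text.

```python
import re
import string
from collections import Counter

# Tiny illustrative stop-word list (an assumption); real pipelines use a fuller one.
STOP_WORDS = {"the", "a", "an", "and", "of", "in", "on", "to", "is", "are"}

def filter_corpus(articles, min_chars=500):
    """Drop articles shorter than min_chars, then exact duplicates (first copy kept)."""
    long_enough = [text for text in articles if len(text) >= min_chars]
    return list(dict.fromkeys(long_enough))

def tokenize(text):
    """Apply the token-level steps in the order given in the text."""
    words = text.split()
    # 1. Remove stop words (compared case-insensitively, ignoring edge punctuation).
    words = [w for w in words if w.strip(string.punctuation).lower() not in STOP_WORDS]
    # 2. Convert capitals to lower case.
    words = [w.lower() for w in words]
    # 3. Remove punctuation and numbers; tokens that become empty are dropped.
    words = [re.sub(r"[^a-z]", "", w) for w in words]
    return [w for w in words if w]

def prune_vocabulary(token_docs, max_share=0.99, min_share=0.005):
    """Remove terms found in more than max_share or fewer than min_share of documents."""
    n_docs = len(token_docs)
    doc_freq = Counter(term for doc in token_docs for term in set(doc))
    keep = {t for t, df in doc_freq.items() if min_share <= df / n_docs <= max_share}
    return [[t for t in doc if t in keep] for doc in token_docs]

print(tokenize("The Robots, in 2020, replaced 3 workers!"))
# ['robots', 'replaced', 'workers']
```

Document-frequency pruning of this kind removes both near-universal terms, which do not discriminate between topics, and very rare terms, which mostly add noise.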

Latent Dirichlet allocation (LDA) and Gibbs sampling (Blei et al., 2003; Jacobi et al., 2016) were used in this work, using the LDA packages for Python. To determine the number of topics (k), this study used perplexity scores for different candidate models, with k ranging from 10 to 220 in steps of 10. Afterwards, 10-fold cross-validation was used to obtain average perplexity scores over 10 iterations per model. As this is a computationally intensive process, tests were performed on a random sample of 20% of our corpus. The maximum of the second derivative of the change in perplexity score when moving from a model with k topics to one with k + 10 topics was used, as it marks the range of k where increasing k gives diminishing returns. Based on the results of this process, the optimal k-value of 10 topics was chosen for the model. To interpret and label topics, three types of information were examined: the words with the highest load on each topic, the words that were both prevalent and exclusive to each topic, and the full articles that were most representative of each topic.
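The selection rule based on the second derivative of the perplexity curve can be sketched as follows. This is a minimal illustration that assumes the per-fold perplexity scores have already been computed by whichever LDA package is used; the function names and all numbers are hypothetical and are not results from this study.

```python
from statistics import mean

def average_perplexity(fold_scores):
    """Average the held-out perplexity over the cross-validation folds."""
    return mean(fold_scores)

def second_differences(scores):
    """Discrete second derivative at each interior point of an evenly spaced series."""
    return [scores[i - 1] - 2 * scores[i] + scores[i + 1]
            for i in range(1, len(scores) - 1)]

def elbow_k(k_values, perplexities):
    """Return the interior k with the largest second difference.

    In this sketch the first and last candidates cannot be selected,
    because a central second difference is undefined at the endpoints.
    """
    diffs = second_differences(perplexities)
    best = max(range(len(diffs)), key=lambda i: diffs[i])
    return k_values[best + 1]

# Hypothetical per-fold perplexities, NOT values from the study.
fold_scores_by_k = {
    10: [2010.0, 1990.0],
    20: [1510.0, 1490.0],
    30: [1455.0, 1445.0],
    40: [1425.0, 1415.0],
    50: [1405.0, 1395.0],
}
k_values = sorted(fold_scores_by_k)
averaged = [average_perplexity(fold_scores_by_k[k]) for k in k_values]
print(elbow_k(k_values, averaged))  # 20
```

A large positive second difference marks the point where the perplexity curve flattens most sharply, so adding further topics beyond it yields little improvement in fit.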

Manual framing analysis: Sample and analysis

The frames were obtained by performing an inductive framing analysis (see Gamson & Modigliani, 1989). As with the topic modeling, the sample included articles on automation and artificial intelligence from 1 January 1985 to 31 December 2020, collected via the API of The New York Times (NYT) and The Washington Post (WP), and LexisNexis. This resulted in a sample of 1039 articles (every 10th article of the topic modeling corpus), which were all manually analyzed to distinguish the different frames in an inductive way.
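Drawing every 10th article from an ordered corpus is a form of systematic sampling, which can be sketched as follows. The function name `every_nth` and the list of article identifiers are hypothetical; how the study ordered its corpus before sampling is not stated and is not assumed here.

```python
def every_nth(items, n=10):
    """Return the n-th, 2n-th, 3n-th, ... element of an ordered list."""
    return items[n - 1::n]

# Hypothetical article identifiers 1..100, standing in for an ordered corpus.
corpus_ids = list(range(1, 101))
sample = every_nth(corpus_ids)
print(sample)  # [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]
```

Because the selection depends only on position, the resulting sample preserves the distribution of the corpus over whatever dimension it was ordered by, for example publication date.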

After the selection of the 1039 articles, the texts of the articles were read and analyzed thematically to look for the textual parts that dealt with automation and artificial intelligence. This involved looking at how the two terms were defined and whether they were presented as utopian or dystopian, followed by open association on the extract in question and the formulation of various keywords. Across the 1039 articles, 1937 fragments were assigned at least one code, indicating causes, consequences, solutions, moral evaluations, lexical choices, and the use of metaphorical language and catchphrases.

The thematic coding continued until the first patterns became clear. Through the codes and by comparing the different fragments, it was examined how the reasoning and framing devices were logically related, to arrive at the frame packages step by step. Because it was not clear at the beginning of the process which frames would be obtained, the articles read at the beginning of the process were analyzed more thoroughly. The textual fragments were then brought together via axial coding and, in a final step, the frames were clearly delineated. Within the manual framing analysis, 76 articles did not show any frame; these were most often articles that provided purely economic updates from companies mentioning the terms AI and automation.

Results

AI and automation from 1985 to 2020: Topic modeling

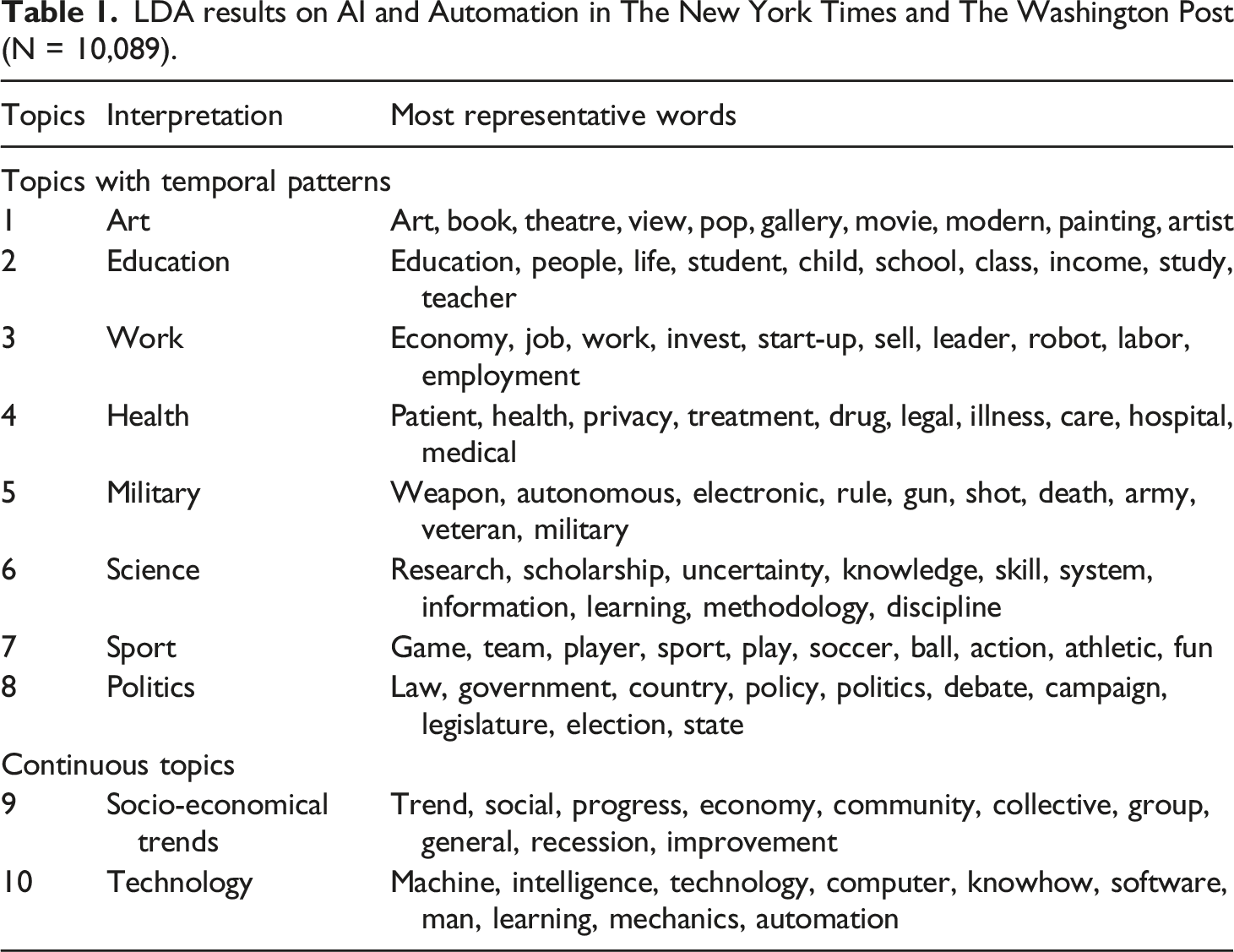

From the corpus of 10,089 articles, 10 dominant and useful topics emerged. Table 1 shows the topics that emerged from this analysis, including our interpretation and the 10 words that most strongly represent a topic. For our results, this country-level study uses the division proposed by Jacobi et al. (2016), namely: (A) topics that show a pattern in their use over time and thus fluctuate more and (B) topics that are more or less continuous. By using this division, it becomes clear which topics have a more continuous lifespan. This allows for substantive and insightful analysis of the articles on AI and automation and the topics that have appeared over the years.

Topics with temporal patterns

LDA results on AI and Automation in The New York Times and The Washington Post (N = 10,089).

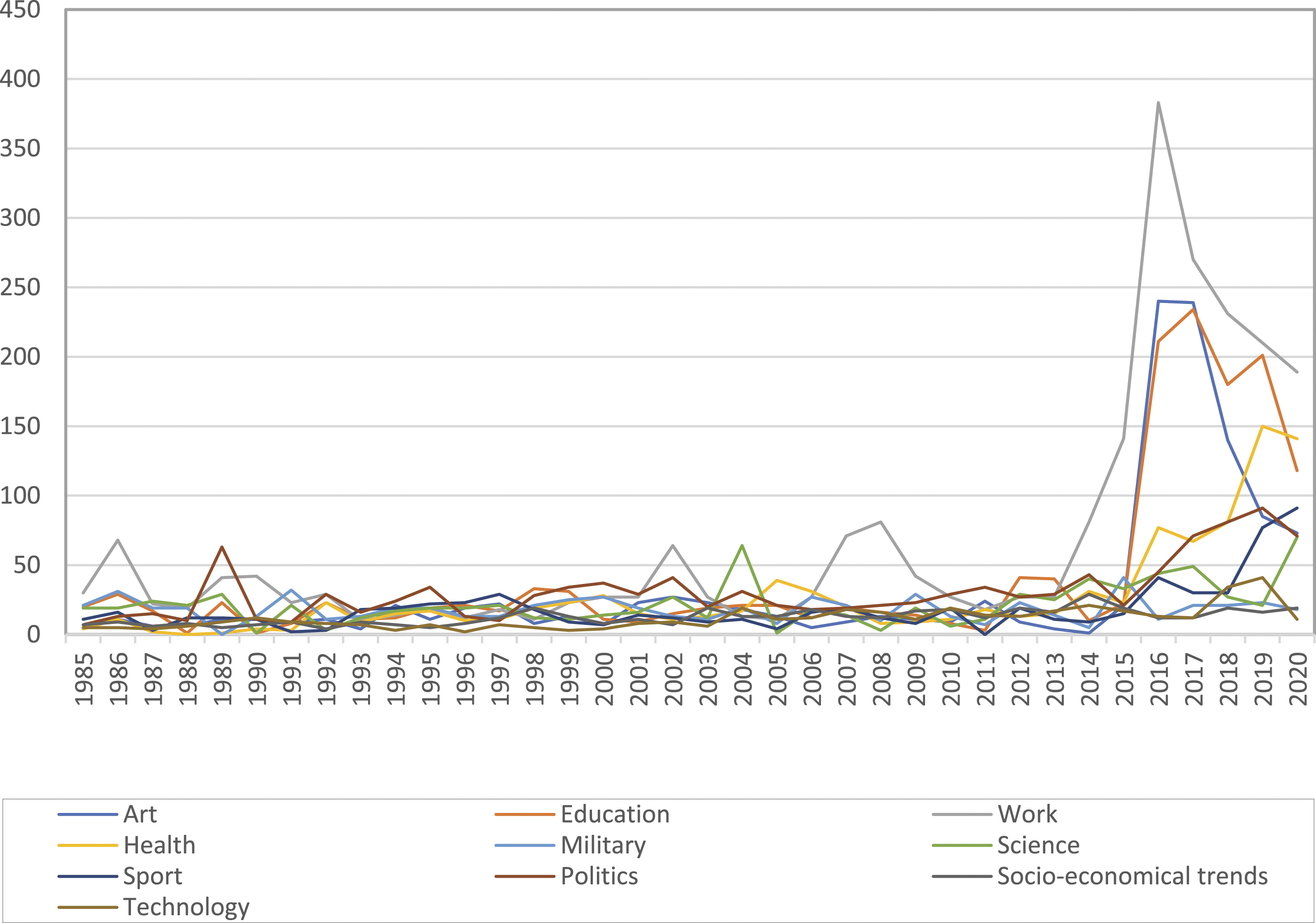

Firstly, several topics were found that show a strong temporal dimension. They are prominent in the news in some years or decades but are more latent in others. In general, the last 5 years (2015–2020) have seen significantly more news coverage around AI and automation. From the corpus of 10,089 articles, eight topics with temporal patterns are distinguished, namely: (1) Art, (2) Education, (3) Work, (4) Health, (5) Military, (6) Science, (7) Sport, (8) Politics.

News items on (1) ‘Art' deal with applications of AI and automation in the arts, such as books and films with words like "View", "Modern" and "Artist" that can be linked to artists using new technology or films that deal with AI. In addition, the book 1984 by George Orwell is regularly mentioned within this topic, often linked to the dominance of smart technology. In general, this topic does not appear often in the dataset, but articles on AI and automation have become more prominent in recent years, especially since 2015, as smart technology is increasingly being used in the arts. At the same time, this topic has experienced a decline since 2017, just like other topics such as ‘Education' and ‘Work'.

For the topic on (2) ‘Education’, an evolution similar to that of ‘Art’ is noticeable. A first increase can already be noted between 2012 and 2013, after which the topic reaches a peak in 2016 with 234 articles containing words like "People", "Teacher" and "Income". The analysis of the corpus shows that news coverage around ‘Education’ is more prominent when AI and automation have a strong digital component, such as digital and blended learning with the help of computers and later with the support of smart technology.

For the topic (3) ‘Work’, we conclude that this has been the most prominent topic over time. The peak of articles on ‘Work’ is reached in 2016, when 383 articles on AI and automation appeared. Throughout the 35 years in which the topic of work has been reported on, this theme is clearly present, often linked to the consequences for the employee. For example, there is often talk of job loss due to the deployment of smart technologies, often linked to the automation of various work packages.

The fourth topic, (4) ‘Health’, is about the broad applications of AI and automation in the medical world with words like "Patient", "Treatment" and "Care". This topic has become increasingly prominent since 2015. In contrast to the aforementioned topics, Health is a topic that is being written about more and more, reaching its peak around 2019. In 2020 a small decline is observed, with 141 articles compared to 150 articles in 2019. Here, very strong reference is made to the help that robots (which are often described as extremely precise) can offer in operations or in detecting certain diseases through data analysis.

In the topic (5) ‘Military’, the analysis shows that the articles mostly range from the deployment of ‘smart weapons’ to the use of new technology in armament. Words such as "Autonomous", "Death" and "Army" are frequent in the topic ‘Military’. Since 2014, there has been a slight increase in the number of articles on this topic due to an increased focus on the discussion around (semi-)automatic weapons.

If one focuses on the topic (6) ‘Science’, just as in ‘Military’, a clear trend can be seen with words like "Scholarship", "Uncertainty" and "Methodology". In the 1980s, AI and automation were very often referred to as applications and techniques that arose in university labs. Over the years, science has often been referred to, as Nilsson (2009) also writes, as a kind of messiah to further explore the technology around AI and automation. A peak is observed in 2020, when many articles were published on the use of science and new technology in the context of Covid-19 and the global pandemic.

The seventh topic, (7) ‘Sport', is the least dominant topic in the corpus, a theme where new ways of playing a sport are often discussed, for example by playing chess against a machine or an algorithm. Some prominent words that occur in this topic are: "Team", "Player" and "Athletic". In 2020, this topic reaches its peak, with 91 articles on AI and automation, mostly focused on smart algorithms that are being used in football and basketball.

The last temporal topic, (8) ‘Politics’, is clearly present throughout the corpus. In 1989, with the fall of the Berlin Wall, a peak can be observed because at that time the use of military technology in the Cold War was often mentioned. In 2019, there is also a peak, partly due to the various applications around deepfakes and the dangers of AI and automation in elections.

Continuous topics

Continuous topics are topics that show small fluctuations through time, but that do not show a clear trend. If the topic (9) ‘Socio-economic trends’ is considered, words like ‘Progress’ and ‘Improvement’ are being used, as well as ‘Recession’. Here, AI and automation are often placed in a broader context of social and economic developments such as social changes, a financial crisis, and the place of smart technology within them.

In topic (10) ‘Technology’, there is a continuous representation in the corpus, but there is also an increase in the number of articles from 2017 on. This is partly due to the larger number of articles published in the last 5 years. Within this topic, the emphasis is very often on "Intelligence", "Chip" and "Knowhow", with AI and automation often described from a purely technical perspective. Articles appear on the hardware and software that drive AI and automation. Figure 1 shows the change in occurrence over time for the ten topics that have been discussed, both the ones with temporal patterns and the ones that were continuous.

Occurrence of temporal and continuous topics (1985–2020) (N = 10,089).

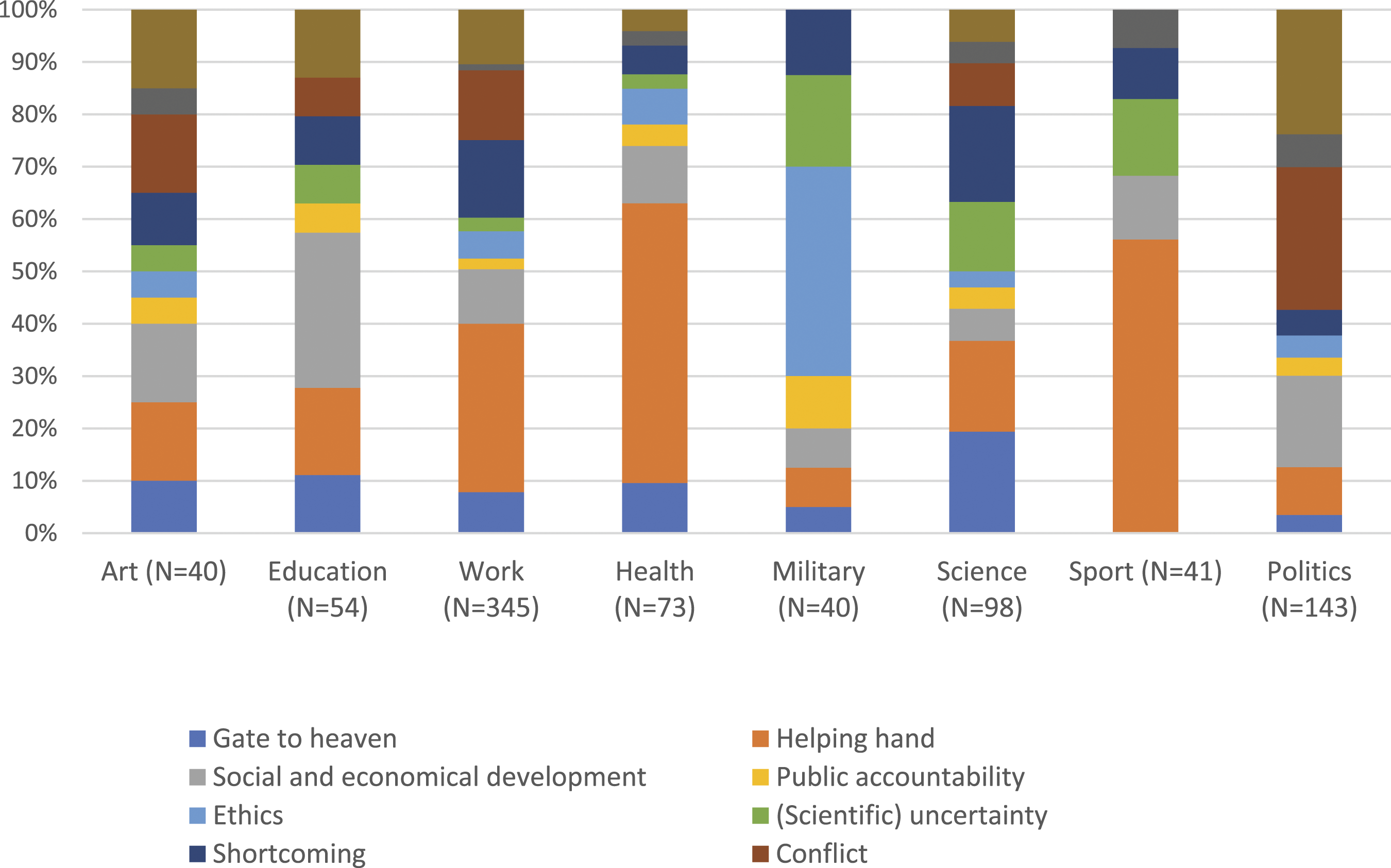

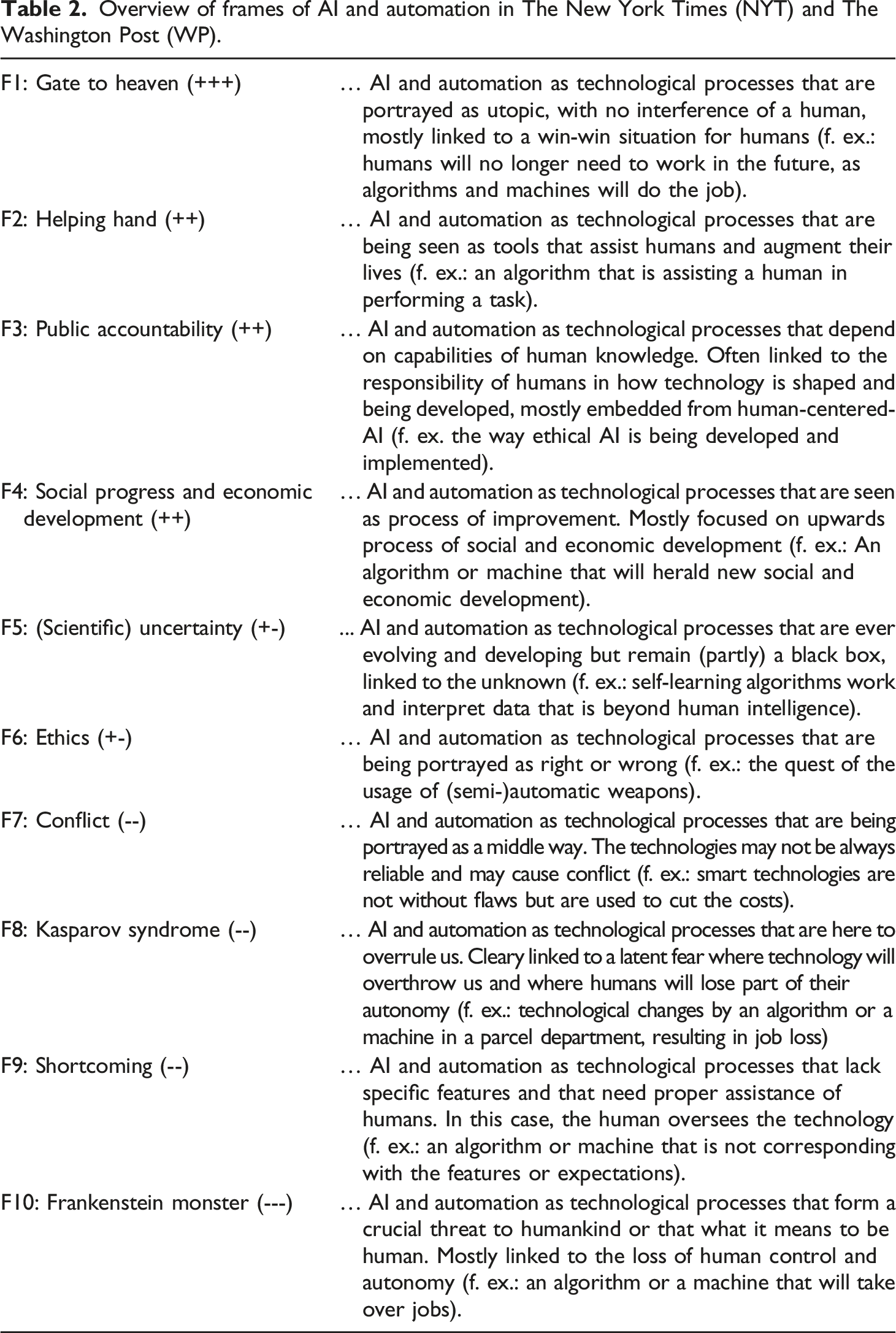

AI and automation from 1985 to 2020: frames

From the corpus of 1039 articles and the analysis of the body texts, 10 dominant and useful frames emerged (1985–2020). Figure 2 shows the frames that emerged from this analysis. The frames are divided into (A) problematizing frames; (B) rather neutral frames; and (C) positive frames. In addition, Table 2 shows the different frames on a scale from utopian to dystopian.

Total number of articles coded with each frame linked to topic modeling (LDA) (N = 1,039).

Overview of frames of AI and automation in The New York Times (NYT) and The Washington Post (WP).

Positive frames (Utopian)

The first positive, utopian frame is ‘Gate to heaven’, where AI and automation are presented as very utopian technologies that will improve us as human beings. The emphasis is often placed on technology as a panacea that will continue to improve, so that, for example, humankind no longer needs to work. Of all the topics, the frame ‘Gate to heaven’ is most often found under ‘Work’ and ‘Science’. Here, AI and automation are referred to as what Thomson describes in an article in The New York Times as “a holy grail that will collectively improve our standard of living” (Thomson, 2010).

The ‘Gate to heaven’ frame is the superlative of the ‘Helping hand’ frame, where AI and automation are portrayed as technologies that improve everyday life. This frame also includes a positive view of technology, but technology is seen much more as an extension or a tool that enables humans to perform tasks, and thus not as some kind of ‘holy grail’ as is the case with ‘Gate to heaven’. In other words, here AI and automation are seen as an extension of humans (Chase, 1985). This frame is particularly prominent in the topic ‘Sport’; more than half of the articles analyzed contained this frame.

The third positive frame is ‘Public accountability’, where AI and automation are seen as technological processes that depend on the capabilities of human knowledge and are therefore mostly embedded in the context of ‘human-centered AI’. Within this frame, technology is seen as something positive, and the responsibility for how AI and automation are crafted, developed, and implemented is assigned to humans. ‘Public accountability’ is a frame that is rather latent within the corpus and occurs within all topics except ‘Sport’.

The fourth and final positive frame is ‘Social and economic development’, where AI and automation are presented as technological processes that improve living standards and consequently result in social and economic progress. This frame is mostly put forward in the corpus when AI and automation are heralded as positive technological forces that “will create jobs or that will spur economies on a worldly scale” (Metz, 2016).

Neutral frames

The first frame that lies between utopia and dystopia is ‘Uncertainty’. This frame is often used to show AI and automation as technological processes that remain unclear and that are difficult to reveal to the outside world. For example, applications of AI such as algorithms, and the automation that results from them, are referred to as a “black box” (Hoagland, 2017) or an “enigma” (Goleman, 1997), that is, as something whose workings are not clear. This is also the case with more complex forms of AI and self-learning systems that are pre-programmed and do not always have a clear outcome. The frame ‘Uncertainty’ occurs especially within the topic ‘Science’, namely in 13 articles. Since 2015, this frame has become more prominent within this topic as technology becomes more advanced.

The second, more neutral frame is ‘Ethics’. Here AI and automation are seen as technological processes that are linked to a question of conscience. This frame is remarkably common within the topic ‘Military’, often in relation to the use of autonomous weapons. AI and automation are portrayed as a technology that can have right or wrong consequences; this can, it is said, cause technology to take control over human autonomy. This also means that new technologies could have negative consequences for humanity.

Problematizing frames (dystopian)

The different frames range from dystopian (AI and automation as very negative technological processes) to utopian (AI and automation as very positive technological processes).

The first problematizing frame is ‘Shortcoming’, where AI and automation are presented as technologies that clearly have one or more shortcomings. Here, it is important that humans support the technology and that it is controlled or supervised by humans. Within the topics ‘Work’ and ‘Science’, the frame ‘Shortcoming’ occurs frequently (51 times in ‘Work’ and 18 times in ‘Science’). When it comes to ‘Science’, reference is made to a machine or an algorithm that makes mistakes but is used anyway. Or as Rosse (1989) writes in an article in The Washington Post on machine translation: “The machines were unable to do more than translate words, not meaning. Results were often incomprehensible”. The technology can complete a task, but often only through clear instructions from a human, which is why AI and automation fall short to some extent.

The next problematizing frame is ‘Conflict’, where AI and automation are presented as technologies that result in a struggle. This frame is often linked to a ‘middle ground’ and in some cases to a ‘lender of last resort’. Metz (1995) writes in an article for The New York Times that “AI and automation is our only option and possibility, but we must also resist it”. Thus, these technologies are often referred to as an endpoint. Yet their use seems to have a negative connotation that results in conflict. In the sample of 1,039 articles, this frame is less prominent. For the topic ‘Work’, there are 46 articles in which the frame ‘Conflict’ occurs; in the topic ‘Politics’, there are 39. In these articles, AI and automation are mentioned as means to speed up or optimize processes, while there is not always ‘enthusiasm’ for this.

The third dystopian frame is ‘Kasparov Syndrome’, in which AI and automation are presented as technologies that will overrule or outclass humans in the future. Unlike the ‘Frankenstein’s Monster’ frame, in which control is already lost, ‘Kasparov Syndrome’ refers to the possible loss of control. Therefore, in this frame, AI and automation are presented as technologies that pose a constant, latent threat. They aim to imitate human intelligence, but often fall short, are too ‘singular’ (‘can only perform one task satisfactorily’), and will still allow humans to maintain their autonomy. This is why ‘Kasparov Syndrome’ is considered a less dystopian frame than ‘Frankenstein’s Monster’.

The last problematizing frame is ‘Frankenstein’s Monster’, which results in a portrayal of AI and automation as very dystopian. This frame depicts AI and automation as processes, as technologies that are constantly in motion and in need of change. They are presented with a clear focus on the potential danger to humanity. Connected to this is the ‘robot’ narrative, which describes how AI and automation have taken control at the expense of something: a job, a life, or our human freedom. Especially in the topic ‘Work’, a prominent presence of the frame ‘Frankenstein’s Monster’ is observed, as it refers to AI and automation taking over or even eliminating jobs.

Discussion and Conclusion

This study examined the topics and frames surrounding AI and automation that appeared in The New York Times (NYT) and The Washington Post (WP) from 1985 to 2020. The topics and frames distinguished in this country-level study provide a long-term overview of the debate on these technologies: ten topics on the one hand, and four problematizing (dystopian) frames, two neutral frames, and four de-problematizing (utopian) frames on the other. From 1985 onwards, a continuous presence of both technologies in the coverage of both news outlets is observed.

For the first research question, which aimed at distinguishing the different topics, it can be concluded that AI and automation emerge in eight temporal topics (topics that were at times more or less prominent) and two continuous topics (topics that had a more stable presence throughout time). However, from 2013 onwards, a strong increase is visible within ‘Work’, ‘Art’, and ‘Education’. The results reveal that AI, automation, and their applications have been referred to as a ‘media hype’ (Naudé, 2021), as coverage of algorithms, deep learning, and recommender systems has been more prominent than ever. This hype has been very visible, especially in 2016 and 2017, and one could argue that AI, automation, and their applications will remain prominent in news coverage as these technologies will result in both established and novel ethical, legislative, and economic challenges.

The second research question investigated which frames can be distinguished in the reporting of AI and automation in The New York Times (NYT) and The Washington Post (WP). The frames ‘Helping hand’, ‘Shortcoming’, and ‘Conflict’ were most prominent throughout the corpus. In the topics ‘Work’, ‘Health’, and ‘Sport’, the frame ‘Helping hand’ is prominent, which indicates a more positive approach to AI and automation. Within the topic ‘Politics’, more dystopian frames were found, with ‘Conflict’ and ‘Frankenstein’s Monster’ as the most prominent ones, for instance when attention is paid to the use of algorithms controlled by smart technology.

Keeping the research questions in mind, the distinction of topics and frames of AI and automation is a relevant addition to the field of framing emerging technologies, as it provides a more systematic and longitudinal overview of the rather utopian and dystopian portrayals these technologies have encountered. This study also responds to what Donk et al. (2012) urged in their research on the perception of nanotechnology, namely that there should be longitudinal research on the framing of technologies in media coverage, as these perceptions have the potential to influence public opinion.

With this study, we provide a valuable and more holistic overview of which topics and frames have been used to describe AI and automation over time. Overall, we can state that the findings of our study are largely in line with the results of the above-mentioned literature, in the sense that they uncovered similar patterns of news coverage of AI over time. Fast and Horvitz’s (2017) study researched the beliefs, interests, and sentiments regarding AI in an exploratory way by analyzing text corpora of news coverage of The New York Times. They found that, over a 30-year period, the coverage of AI has been more positive than negative. Our country-level study has come to a similar conclusion, namely that for both AI and automation, frames such as ‘Gate to heaven’ and ‘Helping hand’ have been more prominent over time than ‘Frankenstein’s Monster’ and ‘Shortcoming’. In addition, the findings of our study mirror the results of Chuan et al. (2019), who mapped the perceptions of AI in five U.S. newspapers from 2009 to 2018. One of their key conclusions was that when dystopian frames were described, they were met with greater specificity than utopian frames. We uncovered a comparable pattern when conducting the manual framing analysis, as more dystopian examples and metaphors were embedded in news coverage.

Apart from the similarities, the results of this country-level study also provide novel insights that build on the above-mentioned literature and enhance our understanding of the perceptions of AI and automation in news coverage. For the last 5 years of our analysis, namely 2015–2020, we have observed new trends and patterns that advance the findings of the existing literature in at least two ways. First, certain topics like ‘Science’ and ‘Sport’ became more prominent over the last years, emphasizing the opportunities of AI and automation (‘Helping hand’) and underlining moral dilemmas (‘Ethics’). Second, an overall nominal decline became visible within other topics like ‘Work’, ‘Education’, and ‘Art’, possibly revealing that media coverage of AI and automation might have passed its peak. All in all, we can state that our findings add to the current literature by researching a more extensive corpus and by incorporating a larger time frame.

In light of the results, we also want to come back to the general question of this study, namely to what extent the frames of AI and automation tilt towards utopia or dystopia. Overall, AI and automation were portrayed as more dystopian from 1985 until the end of the 1990s, but as the world entered the 21st century, the coverage became more utopian or even ‘benefit-driven’. All in all, the debate on AI and automation can be very utopian or dystopian and can therefore lead to confusion about the possibilities and impossibilities of these technologies. By looking at the different topics and frames longitudinally, however, a more nuanced portrayal has emerged in this study. In addition, the topics and frames that are prevalent in the news coverage influence how AI and automation have been, are, and will be deployed, adopted, and regulated (see also Marconi, 2020).

This study has limitations. First, this study only looked at the use of frames in the coverage of The New York Times and The Washington Post, and not at other popular or commercial newspaper titles in the US. During the deductive analysis, few intrinsic differences were found between the coverage of The New York Times and that of The Washington Post; both titles have been central in intermedia agenda-setting and are characterized as internationally influential. However, by including other titles in the corpus of a follow-up study, possible differences could be detected through comparative analysis. Second, it would be interesting to examine how these news frames evolve over a longer period of time in light of how AI and automation are hyped. This calls for more longitudinal quantitative research, which would deepen our understanding of how media contribute to discussions about emerging technologies.

Finally, it would be relevant to question whether rather utopian or dystopian frames correspond with the opportunities and challenges that lie ahead when it comes to AI and automation. One could argue that an over-representation of positive frames could invoke a naive interpretation of these emerging technologies, resulting in generous contentment or bitter disappointment. This study hopes to take a first step toward a better understanding and representation of the structure, topics, and frames over time, and thus to promote a more balanced discussion about the possibilities and impossibilities of AI and automation.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.