Abstract

The aim of this research is to identify AI imaginaries, the issues they raise, and how the AI industry tackles them. It does so by choosing an opportune moment to map the issue space by virtue of a controversy around the firing and rehiring of OpenAI CEO Sam Altman. The sites for the mapping are X/Twitter and LinkedIn, where users frantically post not only about Altman but also about all manner of AI promises and pitfalls. By employing techniques from controversy mapping and digital research methods, we locate contemporary AI imaginaries, assess their salience in a cross-platform perspective, and describe the stakes gleaned from the prominence of certain dominant imaginaries, such as longtermism, regulatory ambivalence, and techno-hagiography. We discuss the issues these imaginaries raise and the AI industry’s premediation and preclusion of them: the manners by which the AI industry strives to occupy the future and absorb the present.

Introduction: AI industry reaction to the Sam Altman controversy

A little less than a year after the release of ChatGPT, OpenAI, the company behind the groundbreaking chatbot, was in the spotlight again. But this time, it was not because of another model release. In November 2023, the company’s CEO, Sam Altman, was abruptly fired and then rehired by OpenAI in the span of 5 days, causing speculation across and beyond artificial intelligence (AI) enthusiasts and skeptics worldwide. The incident sparked extensive discussions in mainstream media outlets and an outpouring of posts across social media platforms.

Yet the controversy revealed more than views about Altman and his role at OpenAI; it mobilized and resurfaced broader arguments and issues around AI accountability, regulation, promises, and pitfalls. The controversy sparked questions such as “what AI is,” “where is it going,” and all other manners of thought and issues concerning AI. It could be said that this episode around Altman set the stage for a public clash about AI generally, and as such, offers a particularly opportune instance to undertake a controversy mapping.

This article aims to capture the discussions and clashes around Altman, OpenAI, and AI that took place in November 2023. It seeks to contribute to the study of AI as new media concentration (or “Big Tech” 1 ) and the development of the AI industry (Bode and Goodlad, 2023; Crawford, 2021; Kak and West, 2023) by examining how industry discusses itself as a key actor or leader. This is a story about Sam Altman, but it is also about a reflection by the AI industry, and about the issues arising from the main object of our study, AI imaginaries.

While drawing on the vocabulary of AI imaginaries and of an AI controversy stirred up by Altman’s firing and reinstatement (Latour, 2005), we also frame the following analysis around the notion of issuefication, or how something becomes an issue (Marres and Rogers, 2005). This article is thus an invitation to study imaginaries as occasions of issuefication (or the lack thereof). It centers on the following research questions: What kind of work do the AI industry’s imaginaries do? Which issues are raised by the imaginaries? And how does the AI industry tackle these issues?

We employ techniques from controversy mapping and digital research methods to surface the articulation of imaginaries around Altman’s firing and rehiring. We operationalize the research through a cross-platform analysis of X/Twitter and LinkedIn, which we treat as fertile grounds generating discursive data and traces. This allows us to capture how AI is imagined at a specific moment in a controversy when online commentators and editors are furiously writing posts and pressing their “send” buttons.

In our work with imaginaries, we propose the study of the issues they introduce, for we find it productive to consider what imaginaries are “doing.” The imaginaries we find (Longtermism, Regulatory Ambivalence, and Techno-Hagiography) are shaping the future by shaping the present; they are also raising issues that the AI industry is tackling through premediation and preclusion. As put forward by Grusin (2010), premediation is a media effect that operates on the premise that “the future has already happened by capturing the moment when the future emerges into the present” (p. 7). In our study, premediation similarly denotes the process of externalization by making the future emerge as if it had already happened in the present; it is how the AI industry (broadly speaking) pivots to future issues, overriding current critiques via deflation and belittlement. Preclusion is the process of internalization, meaning the AI industry recognizes that an issue exists, yet presents itself as best suited to resolve it, disempowering critiques by absorbing them. Our research is thus in line with Marres et al. (2024: 2), who argue that the AI industry and its spokespeople are openly talking about AI harms and risks as a way of disarming civil society’s critiques by adopting those very criticisms. Through the controversy mapping, we thereby demonstrate concrete instances of how the AI industry tackles AI issues through premediation and preclusion. AI emerges as an imminent yet volatile future technology: the AI industry’s envisioned, continuously materializing brainchild that is yet to transpire but also already exists in that single, retained future.

Conceptual framework: imaginaries and controversy mapping

Following the premise that AI is, in fact, “a sociotechnical phenomenon in formation” (Jobin and Katzenbach, 2023: 43), this article belongs to recent endeavors in mapping AI controversies that contribute to the making of AI (such as the Shaping AI report, 2023). Many investigations taking this angle analyze AI through “imaginaries” or “narratives” and turn to interviews (Hautala and Ahlqvist, 2022), publications and official documents (Cardon et al., 2018), especially on national or cross-national levels (Bareis and Katzenbach, 2022; Jasanoff and Kim, 2009; Wijermars and Makhortykh, 2022), or media coverage (Brennen et al., 2018; Chuan et al., 2019) as sites of AI formation and the performance of AI imaginaries. In this research, we operationalize AI imaginaries as issuefying, or raising issues about AI, influencing and being influenced by industry and non-industry actors across two leading yet markedly different social media platforms: LinkedIn and X/Twitter. By focusing on the issues raised by AI imaginaries found there, we bring attention to how AI is transformed into an urgent matter of concern for and by specific stakeholders, and how AI imaginaries are being utilized “in the wild.”

“Imaginary” as a concept has traveled across disciplines before being recently operationalized for the purposes of media and critical AI studies. The term denotes neighboring but varying notions, ranging from abstract cultural concepts and embodied cultural beliefs to performed normalizations, hopes, and fears. A comprehensive overview by Strauss (2006) succinctly summarizes the most influential meanders of “imaginaries” in the past decades: “for [Cornelius] Castoriadis, the imaginary is a culture’s ethos; for [Jacques] Lacan, it is a fantasy; for [Benedict] Anderson and [Charles] Taylor, it is a cultural model (i.e. a learned, widely shared implicit cognitive schema)” (p. 323). Building upon the notions of imaginaries proposed by Taylor and Anderson, Jasanoff and Kim (2013, 2015) speak of “sociotechnical” imaginaries, which are concerned with sociotechnical systems and are more aligned with the tradition of Science and Technology Studies. Sociotechnical imaginaries emerge as multiple and co-existing, and as assemblages of desired and feared visions which reflect values, politics, and power relations in regard to technological developments (Jasanoff and Kim, 2015: 5). Tracking such sociotechnical imaginaries is thus useful as it can make visible the moral, political, and discursive imperatives that drive people who create, use, and govern technologies such as AI (Jobin and Katzenbach, 2023).

While sociotechnical imaginary is a useful term to account for developments in the Altman controversy, it is not the only one. As Wyatt (2021) notes, other symbolic forms that use one thing to represent another, such as metaphors, are “available to all, are much more flexible and dynamic than sociotechnical imaginaries (. . .) and can capture fears as well as hopes and promises” (p. 410). Imaginaries could be said to be broader and more delimited vessels of meaning, consisting of collectively established stories and ways of desirable thinking and acting. Unlike emerging ideas, imaginaries are more settled. As Jasanoff (2015) argues, unlike narratives, imaginaries invite future-oriented, broader outlooks and are spread and recognizable across relevant actors; unlike master narratives, imaginaries are more malleable and invention-driven; unlike discourses, imaginaries are embedded and “associated with action and performance or with materialization through technology” (Jasanoff, 2015: 20). Imaginaries are fuzzy, and less stable than ideology; they often incorporate metaphors and narratives; and they open up a space for re-imagining.

AI imaginaries, like other sociotechnical imaginaries, emerge in complexity and multiplicity; they are contested, performed, reinforced, and modified by sociotechnical power dynamics materialized in technological and social structures as well as everyday practices (Bareis and Katzenbach, 2022; Kazansky and Milan, 2021). They function as prefabricated visions of technology’s place in the world, complete with spokespeople, examples of success and failures, and key texts and images that are frequently repeated and become somewhat recognized. They are thus (re)produced across various actors and places, such as stakeholder visions, media representations, and public perceptions (Richter et al., 2025). AI imaginaries are powerful (some more than others) in generating fear and hope, selling products, getting votes, and gathering funds. As Paris et al. (2023) point out, “imaginaries are a useful concept to think through the politics embedded within current Internet infrastructures, as they reflect and anticipate the desired futures of their creators” (pp. 4–5, following Adams et al., 2009; Mager and Katzenbach, 2021). The term “imaginaries” implies competing visions under development, where it is possible to trace the labor of their making. To observe the labor of making and performing AI imaginaries, we look at the Sam Altman controversy through the lens of controversy mapping. We propose to not only make visible the AI imaginaries that come to life in the Sam Altman controversy, but also to see AI imaginaries as active participants in it.

Controversy mapping, or controversy analysis, is a methodological approach for “the study of public disputes about science and technology, and the interaction between science, innovation, and society more broadly” (Marres and Moats, 2015: 2). Controversy mapping “follows the actors themselves” (Latour, 2005: 12); that is, the researcher seeks to intervene as little as possible, instead allowing the associations and utterances of actors to become central in locating interpretations and relevant issues. The process of making issues tangible is not merely theoretical or abstract; it occurs in real-world settings such as offices, newsrooms, board meetings, and (in our case) social media spaces.

Controversy mapping rests on a horizontal (de)structuring of influences among a variety of elements such as artifacts of communication, devices, and social and individual actors. As Bruno Latour and others have argued, there are certain moments in the course of technology development when it is especially feasible, for the purposes of critical study, to map controversies and, as this article also demonstrates, issuefication. Such controversy mappings bring attention to the complexity and interconnectivity of all artifacts involved, but also the “impact” which they have, develop, and lose over time and over the relations with which they come into contact (Venturini, 2010; Venturini and Munk, 2022). From this standpoint, a controversy can be identified (and mapped) when an industry and science are in palpable motion, not quite stabilized; the controversy gels through an accident or disaster, and more recently, when projects are released in the wild in beta, transforming users into participants in a living lab (Latour, 2005; Marres, 2018). Or as Latour (2005) put it: “[What] a minute before appeared fully automatic, autonomous, and devoid of human agents, are now made of crowds of frantically moving humans with heavy equipment” (p. 81). In Latour’s (2005) understanding, the stir caused by a major event is “the social” (p. 1), as it designates a temporally bounded set of actions and reactions that (re)assemble a state of affairs at a given moment.

Issuefication has been put forward as a means of considering the dynamics of public controversies, particularly how their stakes are formed (Marres, 2012). It originated as a notion that stood in contrast to seeing controversies as public debates, where actors have positions and are inside or outside by virtue of having a voice (Marres and Rogers, 2005). Rather, controversies become such as actors disagree about how to frame them as matters of concern (Latour, 2005). The concept was developed further as an “object-oriented” approach to studying controversies and how publics participate in them materially (Marres, 2016). Issuefication has also been described as how controversies reach criticality for the public, for example, through hybridization or formatting. Hybridization work reframes the issue, for instance, from one about Internet governance to one that is also about gender or justice; reformatting takes it from the realm of counter-summit and alternative media to celebrity concern (Rogers, 2005). It has also been employed to study the absence of certain matters of concern. For example, in the scientific literature on AI, it was found that the machine learning literature sees AI as solving problems outside of AI rather than having its own problems, such as racial bias (Munk et al., 2024).

Methodological framework: digital methods and platform affordances

We approach social media platforms as areas where issues and controversies are created and leave traces, making them valuable data sources. As such, our research is grounded in utilizing digital research methods to map controversies and their issues (Rogers, 2013; Venturini and Munk, 2022; Rogers et al., 2015; Venturini and Rogers, 2025). Digital methods are a toolkit of methods that aim to be of the medium, meaning these methods account for (and often repurpose) the affordances of digital objects.

Digital media offer an opportunity that the research methods take advantage of. As has been argued, “digital media can become the basis for a new generation of quali-quantitative research” (Venturini and Munk, 2022: 8). Along these lines, digital media platforms generate data and traces, allowing researchers mapping controversies to capture associations in the making. Here, we lean on the capacity of digital media to provide data and traces of timely, collective interactions, such as the outpouring of reactions during the Sam Altman controversy.

Web environments, such as social media platforms, do not constitute the only space for analyzing the Altman controversy or AI imaginaries in general. While the Internet is neither the whole world nor a reflection of it, these online spaces still constitute particular performative spaces where controversies, sociality, and issues are mobilized, redefined, and performed. We chose two platforms for our analysis (LinkedIn and X/Twitter) as each platform attracts industry professionals (and many others) to post their views, tag users, and otherwise engage through reposting, liking, and other such actions.

For over two decades, LinkedIn (with over a billion users 2 ) has been a leading platform for professional self-promotion (van Dijck, 2013a), where job seekers, recruiters, and professionals “do the networking” by engaging with and sharing relevant content (Baruffaldi et al., 2017; Davis et al., 2020). Networking on LinkedIn promises a straightforward reward for the users: career and reputation advancement by sharing the latest industry information and job opportunities. Through their engagements, users purposely leave behind a “digital footprint” that benefits and promotes a construction of their professional profile (Bridgstock, 2019; Cho and Lam, 2021). LinkedIn users also share content that reflects issues, narratives, and dominant framings of what is most desirable (or not) in a specific industry or role.

X/Twitter (with over 400 million users 3 ) is also two decades old. It has been an object and a source of research for years, transforming itself from a platform discredited for sharing mundane sandwich pictures to providing firsthand reporting and commentary on protests and events from around the globe (Bruns et al., 2013; Rogers, 2013). Despite the growing limitations in data linked to its representativeness (Blank, 2016) and the controversial acquisition of Twitter by Elon Musk, X/Twitter remains one of the main online, discursive spaces with specific, yet changing, politics and biases (Gillespie, 2010, 2014; van Dijck, 2013b; Rogers, 2024).

Rendering a platform as a space for imaginaries and their issues

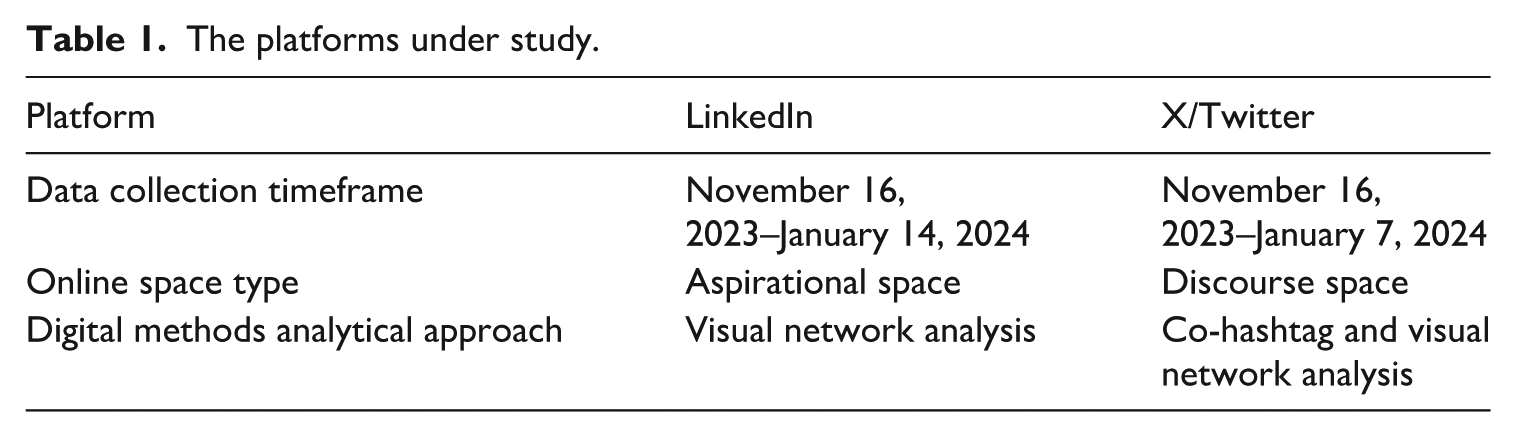

In digital research methods, the demarcation of a corpus and initial analysis are performed through computational methods and interpretation through closer readings. We deployed scraping to access relevant data on the Altman controversy as it unfolded on LinkedIn and X/Twitter. For each platform, a somewhat different analytical approach had to be taken to account for their data accessibility as well as the platform affordances (Table 1), which we detail below.

Table 1. The platforms under study.

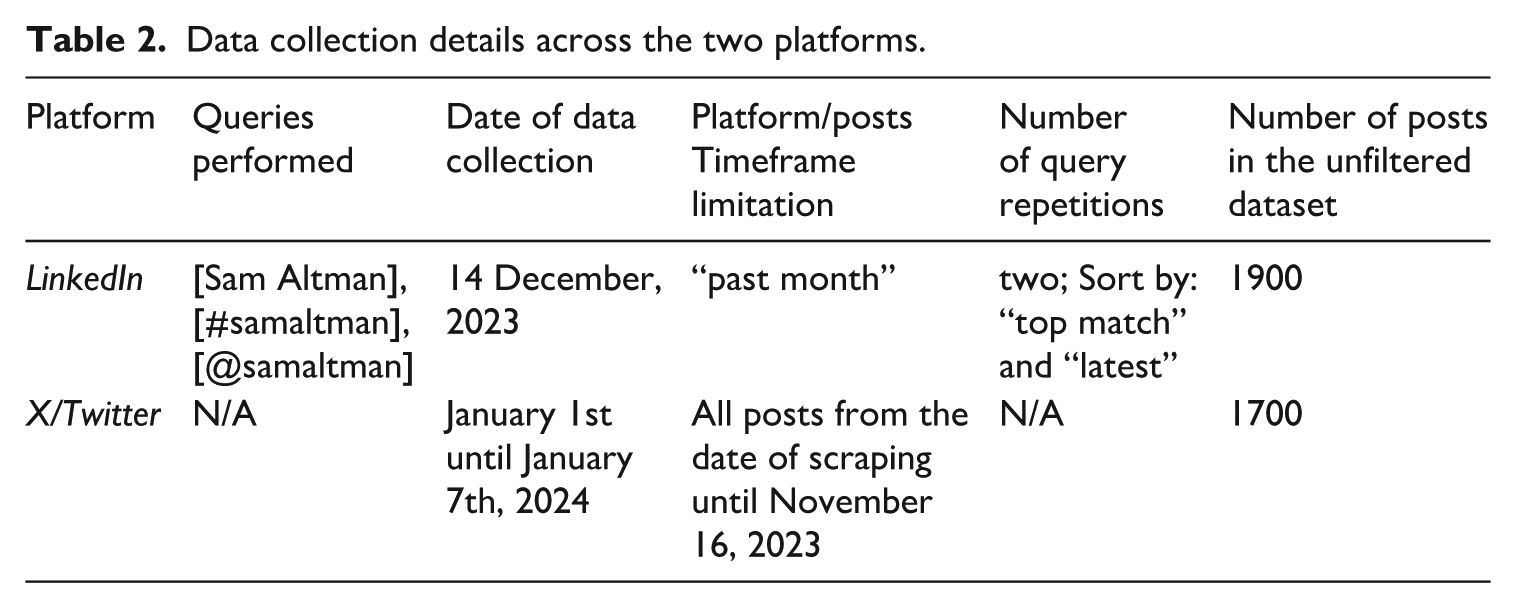

To perform data scraping, we used a clean research browser and set up new accounts for LinkedIn and X/Twitter to access the platforms’ content. We utilized an online name generator and had to choose several attributes to complete the account creation, such as zone choice (“Amsterdam, the Netherlands 1012 XT”) for LinkedIn and three interests to personalize “what do you want to see on X” (“technology,” “science,” and “business and finance”). Using relevant queries for both platforms (see Table 2), we scraped all the posts, including their metadata and media contents, with the browser extension Zeeschuimer. As an aid in the mapping endeavor, we turned to network graph rendering in Gephi, a network visualization and exploration software (Jacomy et al., 2014). By doing so, we were able to map posts into networks and examine relevant clusters. We used different nodes and edges to accommodate the platforms’ affordances: a co-hashtag network and a mention analysis for LinkedIn, and a retweet (or re-post) analysis for X/Twitter.

Table 2. Data collection details across the two platforms.

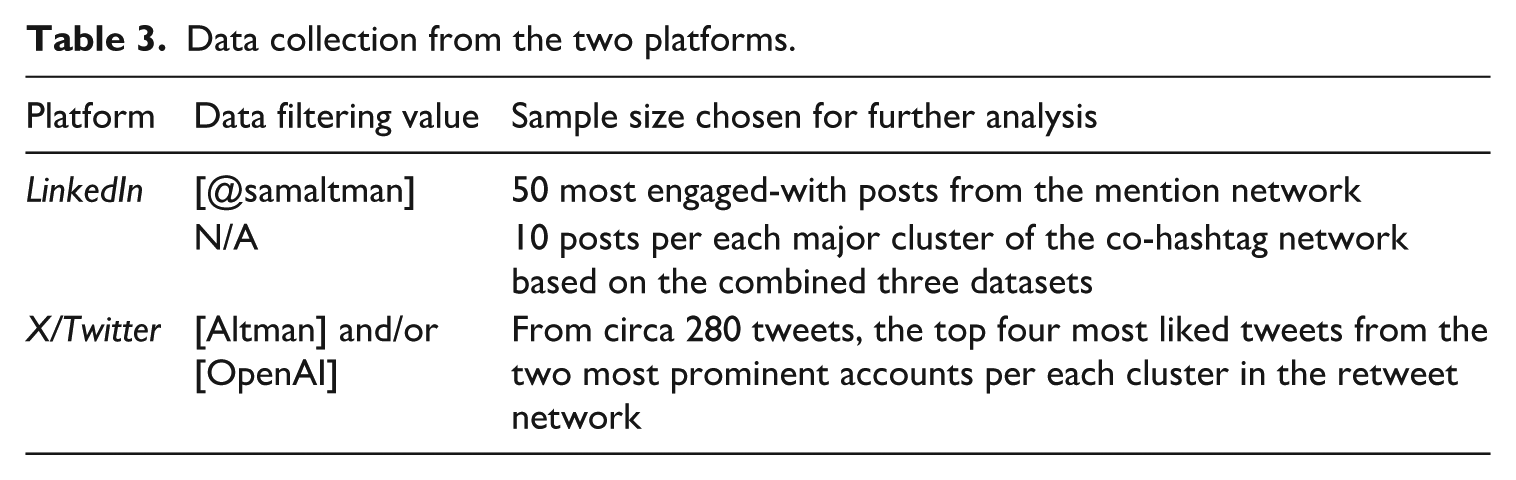

To identify the traces of imaginaries, we performed an inductive coding using a three-step analysis scheme. First, for each data sample chosen for qualitative analysis (see Table 3), we performed an ethnographic content analysis of posts focusing on keywords, hashtags, and text in each post. Second, we identified relevant keywords and phrases that stood in for emergent actors (e.g., Sam Altman, Big Tech, white men) and issues (e.g., AGI, racism, democratic processes). Third, we let the broader themes surface by introducing labels of emergent imaginaries that encompass repeated issues and actors across our mappings.

Table 3. Data collection from the two platforms.

We used LinkedIn’s Search function to perform a set of queries—[Sam Altman], [#samaltman], and [@samaltman]—roughly a month after the firing and rehiring of Altman took place. For each query, LinkedIn Search’s “sort by” option was first set to “top match” and, second, to “latest,” resulting in a total of three datasets.

We performed a co-hashtag analysis on the three combined datasets. For a qualitative analysis, a sample of 10 posts from each major cluster in the co-hashtag network was selected for close reading. We also performed a close reading of the 50 most engaged-with posts 4 for the mention network. We focused on the mention network qualitatively as mention-only posts reflect particularly well the LinkedIn vernacular as a space of networking and professional aspiration; users who mention others [@] aspire to engage with the mentioned users and be associated with them and their network.
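The logic of a co-hashtag network can be sketched in a few lines of Python. This is a minimal illustration and not the pipeline used in the study, which relied on scraped data and Gephi: two hashtags are linked whenever they appear in the same post, and the edge weight counts how many posts they share. The example posts, the function name `cohashtag_edges`, and the choice to lowercase hashtags are all assumptions made for illustration.

```python
import re
from collections import Counter
from itertools import combinations

HASHTAG_PATTERN = re.compile(r"#(\w+)")

def cohashtag_edges(posts):
    """Count how often each pair of hashtags appears in the same post."""
    edge_weights = Counter()
    for text in posts:
        # Lowercase and deduplicate so "#AI #ai" counts as one hashtag per post.
        tags = sorted({tag.lower() for tag in HASHTAG_PATTERN.findall(text)})
        # Every unordered pair of distinct hashtags in a post is one co-occurrence.
        for pair in combinations(tags, 2):
            edge_weights[pair] += 1
    return edge_weights

# Hypothetical posts, invented for illustration only (not from the study's data).
example_posts = [
    "Leadership lessons from the week #SamAltman #OpenAI #Leadership",
    "What does this mean for safety? #OpenAI #AGI #samaltman",
    "Hiring prompt engineers now #AI",
]

edges = cohashtag_edges(example_posts)
for (tag_a, tag_b), weight in sorted(edges.items()):
    # One "source,target,weight" row per edge.
    print(f"{tag_a},{tag_b},{weight}")
```

Rows of this shape (source, target, weight) are a common way to hand an edge list to a network visualization tool, where clusters of frequently co-occurring hashtags can then be inspected and sampled for close reading.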

X/Twitter

The major obstacle in working with X/Twitter data follows from the closure of the X/Twitter research API (application programming interface) in 2023 and the resulting shift to scraping to collect tweets (or posts, as they are now called). To scrape relevant posts, we utilized a dataset containing a list of relevant accounts, scraping all posts and their retweets (re-posts) from each account (see “Limitations” section for details). Once the dataset was completed, we uploaded it to the 4CAT Capture and Analysis Toolkit, an open-source research software (Peeters and Hagen, 2022: 572). In 4CAT, we used the “filter by value” processor to retain only posts containing relevant keywords. Treating X/Twitter as a discourse space, we generated a network graph organized by the most engaged-with posts per cluster according to retweet count. A high number of retweets can be an interesting signal in an analysis of actors and communities on Twitter (Schaefer and Van Es, 2017). To analyze retweets as a metric, we created a network graph where original authors (actors who posted a tweet) are the source, while retweeters (actors who retweeted the tweet) are the target.
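The two steps described above, keyword filtering and building a directed retweet network, can be approximated with a short Python sketch. It does not reproduce 4CAT’s “filter by value” processor or the study’s data; the keyword list, the record fields (`author`, `text`, `retweeted_by`), and the example posts are hypothetical and serve only to show the direction of the edges, from original author (source) to retweeter (target).

```python
from collections import Counter

# Hypothetical keyword list; the study's actual filter terms are not reproduced here.
KEYWORDS = ("altman", "openai")

def filter_by_keywords(posts, keywords=KEYWORDS):
    """Keep only posts whose text contains at least one keyword (case-insensitive)."""
    return [
        post for post in posts
        if any(keyword in post["text"].lower() for keyword in keywords)
    ]

def retweet_edges(posts):
    """Build weighted, directed edges from original author (source) to retweeter (target)."""
    edges = Counter()
    for post in posts:
        for retweeter in post["retweeted_by"]:
            edges[(post["author"], retweeter)] += 1
    return edges

# Invented, anonymized records for illustration; the field names are assumptions.
example_posts = [
    {"author": "user_a", "text": "OpenAI's board owes the public an explanation.",
     "retweeted_by": ["user_b", "user_c"]},
    {"author": "user_a", "text": "Altman is back. Nothing about governance has changed.",
     "retweeted_by": ["user_b"]},
    {"author": "user_d", "text": "Conference deadline extended by a week.",
     "retweeted_by": ["user_a"]},
]

relevant = filter_by_keywords(example_posts)
edges = retweet_edges(relevant)

# Retweets received per original author, a quantity that can be used to size nodes.
retweets_received = Counter()
for (source, _target), weight in edges.items():
    retweets_received[source] += weight
```

In this toy example the third post is dropped by the filter, so `user_d` never enters the network, while `user_a` becomes the most retweeted source. Summing edge weights per source in this way gives one possible basis for sizing nodes by retweet count.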

Limitations

In turning to digital research methods, one has to acknowledge the intrinsic obstacle in data access for research. In the case of both X/Twitter and LinkedIn, the API is practically not available to use for independent research purposes. Therefore, an alternative way of collecting data and metadata had to be applied: scraping. While scraping violates platforms’ terms of service, it is often the only way for researchers to collect and scrutinize platform data (other than using marketing data services). Furthermore, using digital methods invites making several choices in terms of research design and its computational execution. Given that content ordering and recommendation on each platform are not well understood, we face the limitation that the choices we make result in a data set that could be different given another set of starting points.

Another limitation is that, following the purchase of Twitter by Elon Musk, the introduction of a “daily tweet limit” of circa 400–600 posts a day reduced the extent of practical data collection. Only a few hundred posts can be accessed from a single account per day. The restriction, however, is glitchy; the number of displayed posts varies, and the limit can be bypassed by reloading the page at longer time intervals. We instead chose to scrape all posts from relevant X/Twitter accounts.

To locate relevant accounts, a list of AI organizations was compiled using the first 50 results of six Google queries: [AI research], [AI justice], [AI safety], [AI ethics], [AI risk], [AI harms]. The search was conducted in a research browser, with the language set to English and the IP location set to Italy. Only organic results were considered. Following qualitative coding, a list of 101 websites of AI-related organizations was compiled, which included industry websites, NGOs, government pages, and various international organizations. Using a dataset containing a list of AI organizations, we compiled a list of people by accessing the “people”/“team”/“about us”/etc. pages of each organization and locating three X/Twitter accounts per organization in the order in which they were listed on the page. One has to note the limitations of the above approach, including the English-language and Global North biases in the data, and some unevenness. For some organizations, no associated people had X/Twitter accounts, while for others, their accounts were private or had no tweets; this approach most often targeted CEOs and managerial positions.

To protect the privacy of users’ information in our data, we anonymized usernames and abstracted the content of posts and tweets by paraphrasing individual phrases and issues in our coding. This ethical consideration implies that our analysis is a step away from the actors’ language on the ground. The emergent imaginaries were not rigid and, as we saw in our data, their boundaries across issues, actors, and clusters are blurry. Our article does not present universality or completeness but rather a snapshot of resonating traces in a given digital space, time, and context.

We now turn to our findings concerning the kind of work the AI industry’s imaginaries do, the issues that are raised and how the AI industry tackles them.

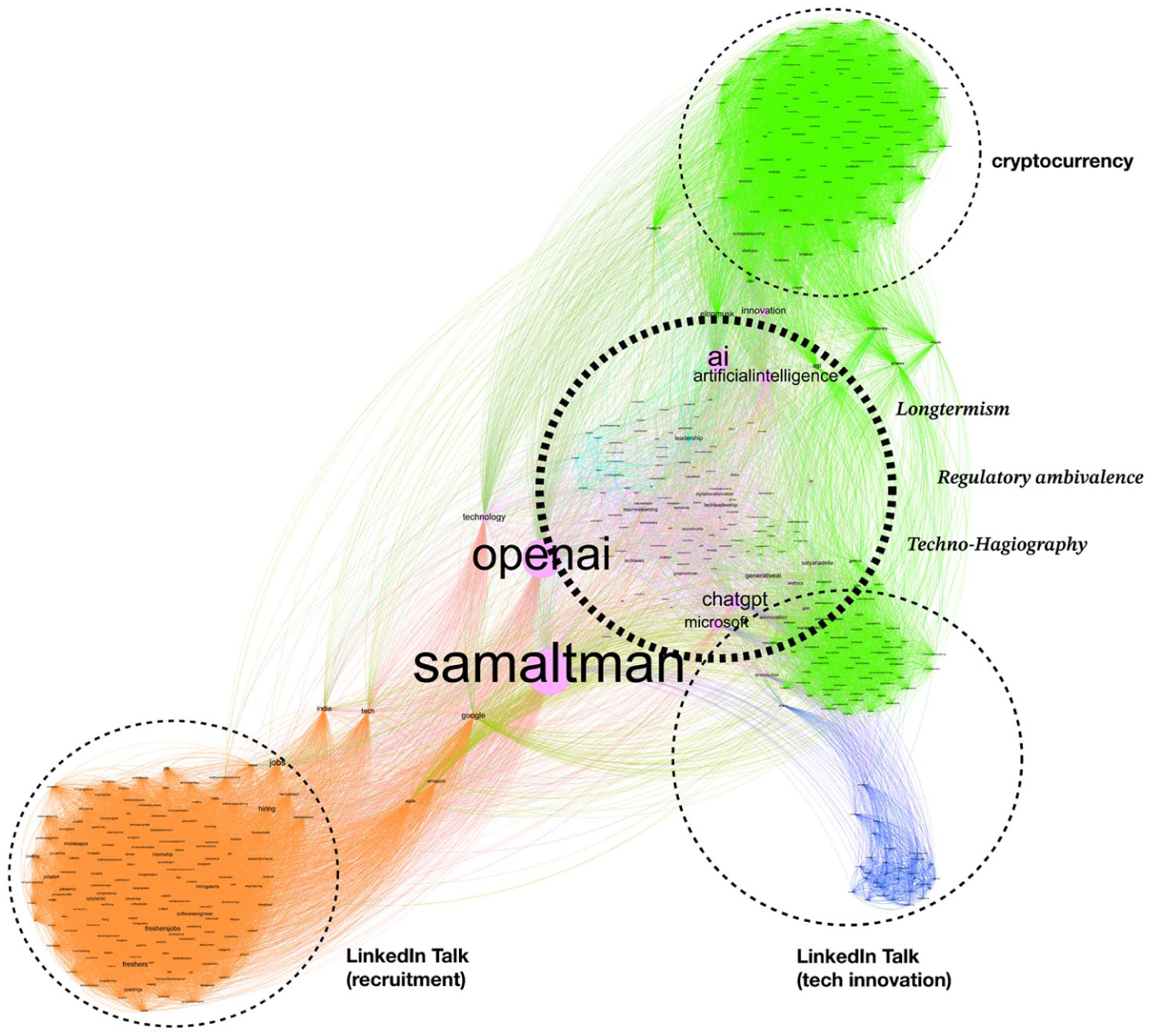

Results: issue premediation and preclusion on LinkedIn

On LinkedIn, the Altman controversy sparked many considerations about Altman’s contribution to AI development, particularly speculations around the so-called “Q*” algorithm that OpenAI was allegedly developing as a step toward Artificial General Intelligence (AGI). In commenting on Altman’s role as CEO, users discussed AGI, the creation of a self-conscious silicon entity that will surpass human intelligence. What should be done with AI, it is argued, is either to aim for “superalignment” (ensuring that AGI will be aligned with human values and ethics) or to prepare for human extinction. An imaginary we refer to as Longtermism emerges from the language contained in this set of posts; the term has already been used to describe a similar range of attitudes, fears, and visions toward artificial intelligence development (Ahmed et al., 2024; Kemper, 2024). We saw arguments pertaining to the imaginary of Longtermism also emerging in the X/Twitter sample. Through its emergence, we observe how the issues ascribed to this imaginary preclude others; what Longtermism centers upon makes other issues of concern secondary at best and keeps them out of the focus of AI-related discourse. However, the imaginary of Longtermism is closely entangled with two other imaginaries that emerge in this space, intertwined within one cluster (see Figure 1).

Figure 1. A co-hashtag analysis of the Altman controversy on LinkedIn data. The terms in italics signify emerging imaginaries from the cluster.

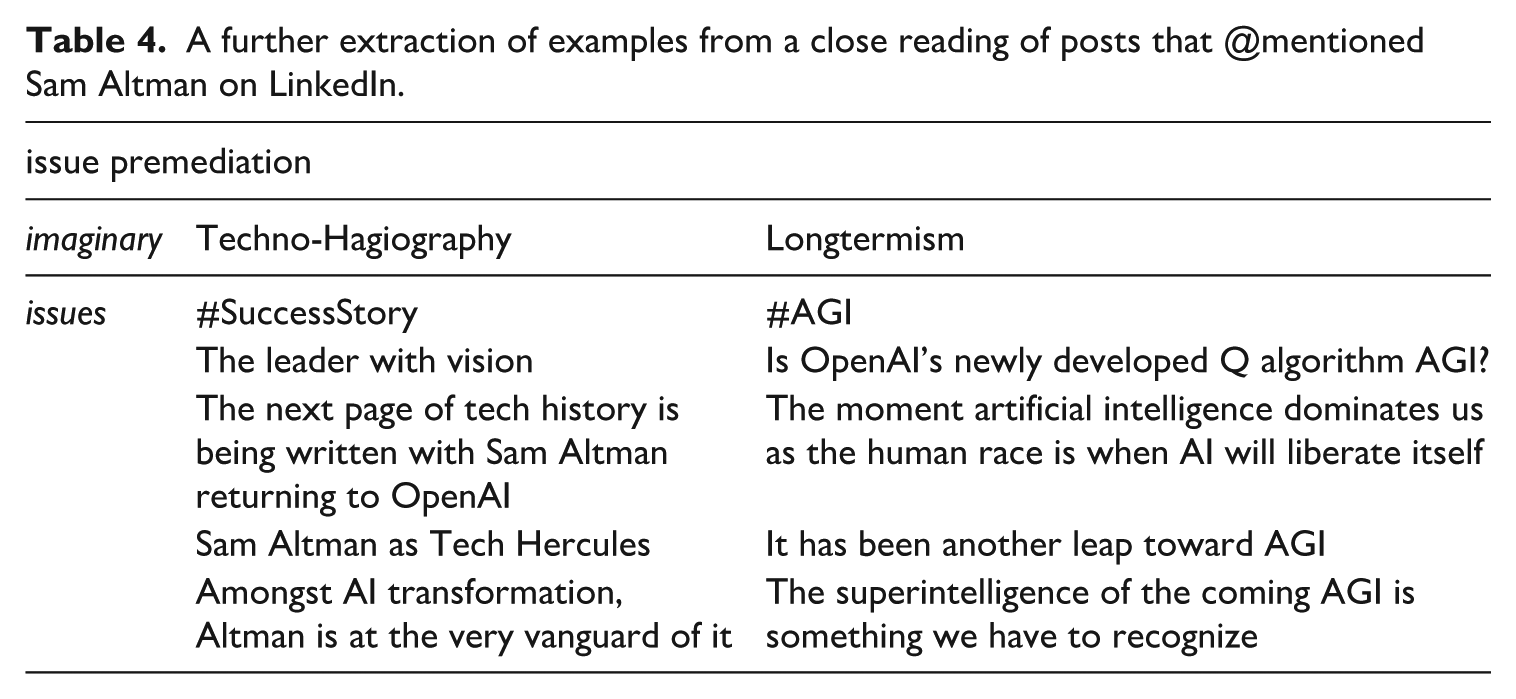

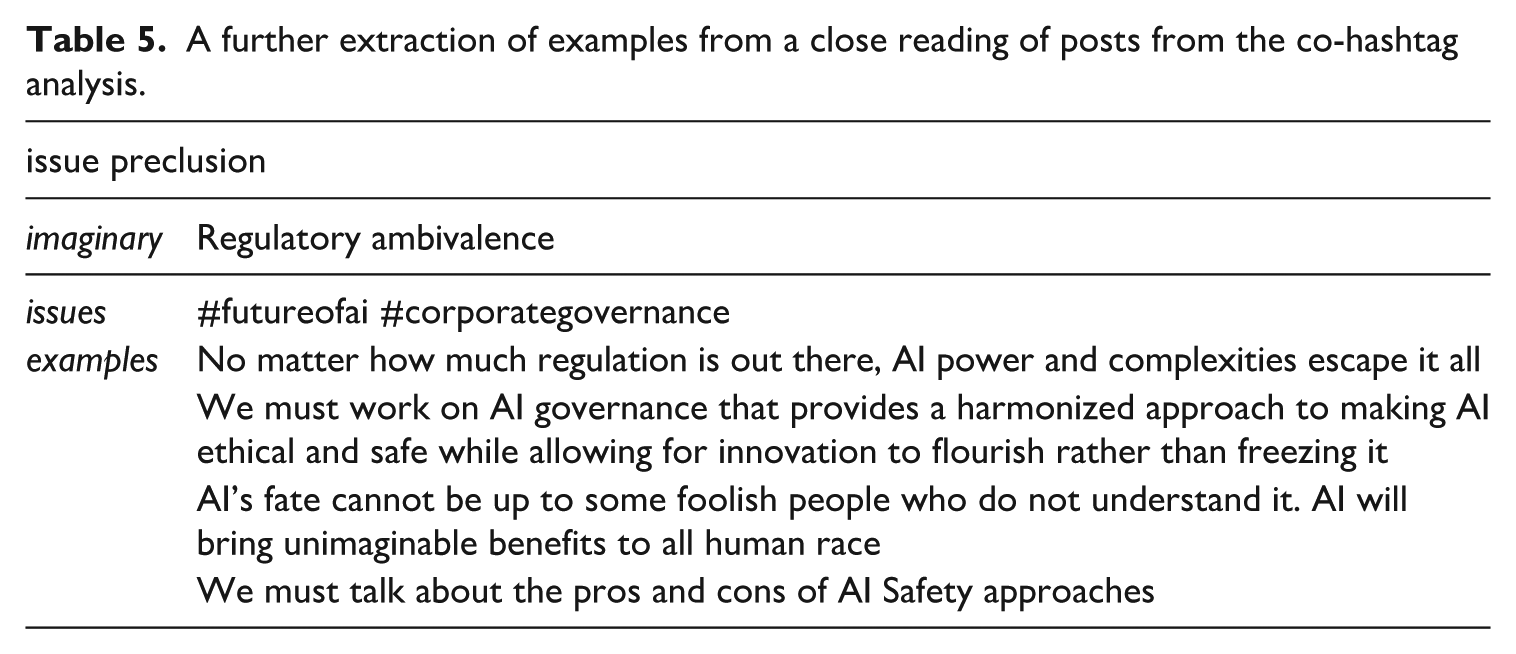

Within the same cluster, another emerging imaginary, which we refer to as Techno-Hagiography, is performed to mythologize AI spokespeople (Table 4). Across the posts we analyzed, Altman is described as a lone genius, a visionary who leads the whole AI revolution and hence should be empowered to continue his vision. Altman is a “hero” who returns victorious after being expelled from OpenAI; he stands as a role model for both the tech community and anyone who wishes to make a change and be a bold leader.

Table 4. A further extraction of examples from a close reading of posts that @mentioned Sam Altman on LinkedIn.

Myth telling has been connected to the development of technology, and the mythmaking of Altman into a lone genius who returns as a victorious hero follows such a pattern. The premediation of issues enacted by the emerging imaginary of Techno-Hagiography is accomplished by projecting the image of a hero onto Altman, who is apparently “making history” by returning to OpenAI as CEO. It is closely related to the perpetual mythologizing of a (male, white) individual, which in fact retells the story of seemingly unstoppable technological progress and human genius.

Issue preclusion is performed when issues of concern are acknowledged yet absorbed by the AI industry and aligned actors; in other words, the issues are relevant, but it is the AI industry itself that is best equipped to handle and resolve them. It is well illustrated by what emerges as an imaginary we refer to as Regulatory ambivalence (Table 5). The question of regulation is central to several voices we encountered on LinkedIn, as the Altman controversy raises questions of AI governance and safety in relation to the alleged power that Altman holds over not only OpenAI, but AI worldwide. Issue preclusion is enacted here in two ways: on the one hand, users criticize the incompetence of governmental actions in the face of new technology in need of regulation; on the other hand, users argue that the tech community should take on a stronger governance structure to avoid disruptions and conflicts such as the Altman controversy.

Table 5. A further extraction of examples from a close reading of posts from the co-hashtag analysis.

For the sake of completeness, we also note the strong presence of discourse spaces that are neither particularly relevant to the Altman controversy nor show strong signs of emerging imaginaries (Figure 1). We refer to these spaces as LinkedIn Talk (which we further subdivided into “tech innovation” and “recruitment”) and Cryptocurrency. Given that LinkedIn is a platform that provides an aspirational space focused on professional development and specialized work-oriented content, many expressions of the Altman controversy were, in fact, used as promotional opportunities and embodied in LinkedIn Talk. While some “linked” themselves to Altman as a role model, others saw an opportunity to promote their products (such as courses), companies, or skill profiles. A notable interest of the blockchain and crypto community in the Altman controversy was linked to Altman’s investment in cryptocurrencies. The development of AI, and thus the firing and rehiring of Altman, constitutes part of the landscape of crypto discussions, where AI is envisioned as aiding the further development of blockchain and cryptocurrency infrastructure.

X/Twitter: AI Industry’s monopolization of the future is not (yet) complete

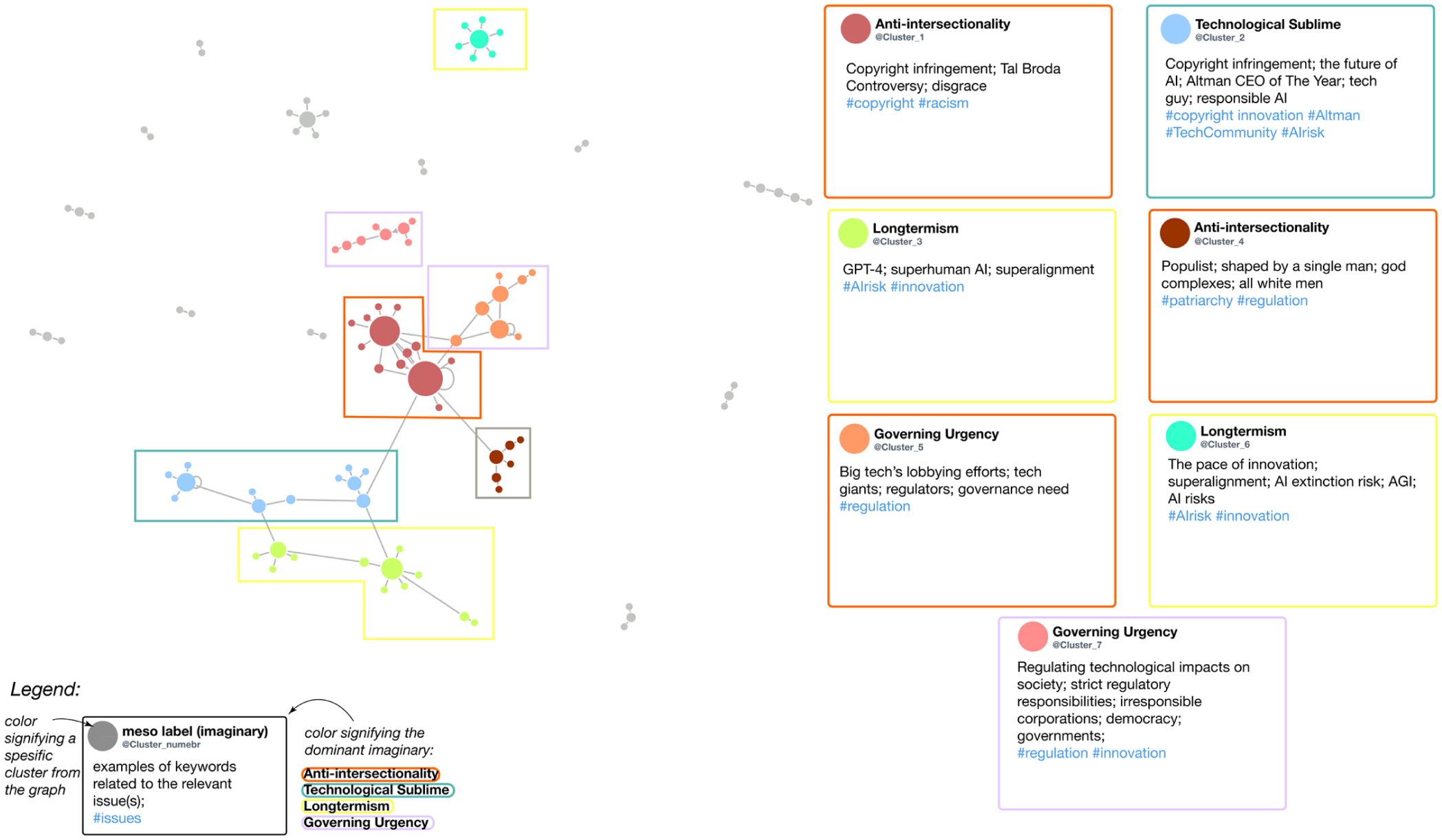

While we encounter similar clusters of AI imaginaries (and their related issues) across the two platforms, we also find more contrary stances on X/Twitter (see Figure 2). The X/Twitter sample indicates that the emerging AI imaginaries have issues that occupy a space beyond (and against) the AI industry’s issue preclusion and premediation. Rather than seeking to manage and prevent AI impacts in the future (from a Longtermist perspective), these issues focus on the problems, harms, and concerns around AI that are visible today. They stress the structural biases of the AI industry, such as the dominance of white men in positions of power and an anti-Palestinian bias.

Figure 2. A representation of the AI imaginaries and their issues on X/Twitter, as seen on the labeled Gephi retweet graph with paraphrased tweet (post) content. Each node represents a single X/Twitter account, and its size corresponds to the number of retweets.

The two emerging (counter-)imaginaries we refer to are Governing urgency and Intersectionality. What we refer to as the Governing urgency imaginary centers on the acute need for stronger regulatory initiatives taken up by governments as the only way to face the risks and damages that AI imposes. These are expressions of a strong distrust toward self-regulation of Big Tech and its lobbying; Big Tech’s promises of improvement are framed as futile. Intersectionality focuses on the lack of diversity and on the discrimination and racism that have been recurring issues in Big Tech, especially in managerial circles. Big Tech is predominantly white and male, projecting certain biases and worldviews as normative. The Tal Broda controversy, 5 which overlapped with Altman’s on X/Twitter, is also discussed as an example of racism that was left unaddressed by OpenAI management, showing a larger anti-Palestinian bias within Big Tech. Tal Broda is OpenAI’s Head of Research, whose tweets calling for the annihilation of Palestinians caused a great uproar, resulting in him deleting the tweets. Neither he nor OpenAI addressed the controversy, which led to further criticism. The men who oversee the powerful tech companies are accused of expressing “God complexes” as they are deeply out of touch.

We also note the presence of issues that recall the complexities of AI discourse, which are both technologically and legally difficult but pressing. Such complexities include the uncharted terrain of the mass copyright infringement that has been the foundation of AI models, but also the technological black boxing that makes it difficult even for tech insiders to comprehend and predict the performance and possibilities of AI in the near future. We relate these issues to an emerging imaginary of the Technological sublime (Nye, 1996), emblematic of the cognitive discrepancy between the billions of parameters that steer AI and human comprehension, as well as of the uncertainty and excitement about the pace of capability improvement.

We now discuss in more detail the implications of the imaginaries we have identified, and how the issues they raise are handled by the AI industry through premediation and preclusion.

Discussion: the AI industry’s responsibility turn?

Certain imaginaries, which emerged from issues enacted by actors voicing the hegemonic concerns attended to by the AI industry, partake in issue preclusion. What emerges as the Regulatory ambivalence imaginary on LinkedIn embodies several issues that facilitate the process of internalization, meaning the industry’s recognition that an issue exists while presenting itself (the tech sector, the industry) as the best fit to “deal with it,” disempowering outside critiques by absorbing them. As Bode and Goodlad (2023) note, the concentrated industry behind AI has an interest in shifting attention away from regulatory practices that could impact its research agendas and corporate power. A certain paradox emerges: the actors behind the AI industry express the need for regulation yet avoid practical responsibility or oversight that might reduce their influence. We thus observe a “responsibility turn” by Big Tech actors (Katzenbach, 2021: 3), where “the shape of this responsibility is by no means clear” (Katzenbach, 2021: 3). Issue preclusion is enacted, on the one hand, as a pivot to the incompetence of governmental action in the face of new technology in need of regulation and, on the other hand, as actors arguing that it is the tech community that should take on stronger governance structures to avoid disruptions and conflicts such as the Altman controversy.

The retelling of technological myths, including AI myths, is not only a domain of popular culture but also of scientific fields themselves. Here, LinkedIn provides a space for various professionals to engage directly in AI mythmaking through a particular retelling of the Altman controversy, which attunes to an emergent Techno-Hagiography imaginary. The practice of shaping AI myths by practitioners may be traced back to the early debates around AI research. As discussed by Natale and Ballatore (2020), as early as the 1960s, projecting more-than-human capacities onto early AI technologies and actively contributing to mythmaking was a part of scientific debates and publications. This insight also applies to what we refer to as the Longtermism imaginary, where it is the community of computer scientists, Big Tech CEOs, and thinkers who choose to perpetuate certain AI myths and visions. Natale and Ballatore (2020) even note that one of the more prevalent myths lasting from the 1960s onwards is that of shifting the discourse from the concrete facts of today to the realm of speculation, moving the discussion “forward from the horizon of the present to the horizon of the future” (p. 11). Issue premediation via Techno-Hagiography also expresses such a perspective, as it historicizes the contemporary moment of the Altman controversy and projects upon it its future significance.

The AI industry and aligned actors perform issue premediation by externalizing the issues of concern, such as the harms, risks, and shortcomings of AI technologies and the (Big Tech) industry behind them. Such issue premediation on both LinkedIn and X/Twitter is exemplified by the imaginary of Longtermism. Longtermism as an imaginary has been in the (re)making by a particular tech community over the past two decades. The pressing AGI threat perceived by technology practitioners can be traced to the early 2000s, the beginning of both a movement and a community uniting ideas of effective altruism (“EA”), existential risk (“x-risk”), and, indeed, longtermism. Often termed the “AI Safety” movement, it has since grown into a robust “epistemic community” that occupies itself with applications of EA, x-risk, and longtermism to contemporary AI developments (Ahmed et al., 2024: 1). According to the ideology of longtermism, the human species is “morally obligated both to work on realizing the techno-utopian world that AGI could bring about” (Gebru and Torres, 2024), a moral call that works directly in favor of the AI industry’s issue premediation. While focusing on the speculative threats of superintelligence, AI Safety and longtermism discourses stray from the present-day harms that AI systems already cause.

We did not find traces of issues and (counter) imaginaries on climate change and the environmental effects of AI, its impact on labor relations, or contextualizing issues by comparing AI to other technologies of the past or presenting future alternatives (e.g., slow AI 6 ). We attribute this invisibility to their lack of performance in the digital spaces within the context and timeframe of the snapshot of the Altman controversy, at least with a resonance that would have surfaced them in our analysis.

As with many sociotechnical controversies viewed through the lens of controversy mapping, the Altman controversy stirred up actors and surfaced tensions about the stakes of AI. Where nuclear power and climate change might draw out actors and issues or shift responsibility to citizens, 7 the AI industry “internalizes” them; the AI industry appropriates controversy to occupy “public concern” (Marres et al., 2024: 4). In our case, the Altman controversy gives Silicon Valley an opportunity to appropriate AI’s controversial issues, making certain of them more concerning than others. It does so through what we refer to as issue premediation and issue preclusion. AI controversies are thus increasingly used by Big Tech “as a valuation strategy”: an opportunity for leveraging marketing and innovation ends and building up public momentum for their products (Marres et al., 2024: 3, following Geiger et al., 2014).

Conclusion: how the AI industry occupies the future and absorbs the present

By collecting data on two social media platforms during the Sam Altman controversy, we were able to trace the ways in which AI as a technology becomes imagined and how these imaginaries raise issues that the AI industry tackles. Our methodological and conceptual contribution in mapping AI imaginaries, their issues, and the industry’s issue preclusion and premediation adds to the body of literature on both issue and controversy mapping as well as to the range of theoretical attempts to ground and operationalize imaginaries in the context of sociotechnical entanglements.

By following an invitation to consider Sam Altman’s firing and rehiring through a lens of controversy, one can look at the Altman controversy as an expression of broader tensions where technology, politics, and culture not only intertwine but often clash. We demonstrated how some of the emerging imaginaries that animated certain parts of the controversy around Altman have their roots in issues that predate the contemporary generative AI hype or even AI development. These relations become traceable once we discuss the issues inhering in the imaginaries, allowing us to better situate and contextualize contemporary narratives of AI technology.

While we have reassembled how Big Tech’s AI imaginaries may be stabilizing, the more normative question concerns its monopoly position. That position is not surprising but an inevitable outcome of the merger mania of Silicon Valley, in which big players like Meta, Google, Microsoft, Apple, and Amazon bought up sundry small technology companies and consolidated their media concentration. As the recent report by the AI Now Institute puts it, “there is no AI without Big Tech” (Kak and West, 2023): AI imaginaries are indispensably related to the concentration of infrastructure ownership (Kak and West, 2023: 6).

We demonstrated empirically how the AI industry’s preclusion and premediation of issues that arise from imaginaries allow the industry to occupy the future and absorb the present. As we found emerging (counter-)imaginaries to those of the AI industry across the X/Twitter data, we conclude that the AI industry’s monopolization of the future is not (yet) complete.

Footnotes

Acknowledgements

This paper is a further development of a research project “Mapping, Tracking, and (Re)Making of the Counter-Imaginaries of AI across the Web” conducted during the Digital Methods Initiative (DMI) Winter School 2024. Thanks to Natalia Sanchez-Querubin for the inspiring input in the initial brainstorming of this project. We are grateful for the precious feedback and fruitful discussion we received at the panel “AI Industry Expectations and Underperforming Imaginaries” at AoIR2024, where we presented an earlier version of this paper.

Author contributions

Natalia Stanusch wrote the bulk of the paper and undertook much of the empirical analysis. Richard Rogers wrote some of the theorizing and methodology.

Authors’ note

This paper is a further development of a paper presented at AoIR2024 titled “AI Imaginaries Have Issues: Mapping an AI Controversy with Social Media,” which was part of the panel “AI Industry Expectations and Underperforming Imaginaries”: Stanusch NB, Rogers R, Baym N, Liu C, Shaw R, Katzenbach C, . . . Zeng J (2025) AI Industry Expectations and Underperforming Imaginaries. AoIR Selected Papers of Internet Research. https://doi.org/10.5210/spir.v2024i0.14086. The initial grounding for this paper was laid out in a project “Mapping, Tracking, and (Re)Making of the Counter-Imaginaries of AI across the Web” conducted during the Digital Methods Initiative (DMI) Winter School 2024.


Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical approval and informed consent statements

The social media data from X/Twitter and LinkedIn do not include usernames, and the text of the posts has been paraphrased in order to maintain anonymity.