Abstract

Major digital platforms have long resisted fact-checking their users, even as public concern about misinformation has grown. We explore how they legitimated a change in this policy during the COVID-19 pandemic. Using blog posts by Meta, YouTube and Twitter, the study contributes to research on content moderation by US-based platforms as they expand their policies into contested areas. Building on theories of discursive legitimation, we examine the strategies platforms employ when presenting their actions in countering false and misleading health information. We show that the pandemic emerged as an important opportunity for platforms to narrate their legitimacy in society. Yet the newly adopted responsibility to curb health misinformation does not signal a reform towards more truthful platforms, but rather temporary exceptions whose future is left open. These findings foreshadow the reversals of misinformation policies in recent years and highlight the continued importance of external regulation.

Keywords

Introduction

The coronavirus disease (COVID-19) pandemic intensified calls for social media platforms to address the ‘information disorder’ (Wardle and Derakhshan, 2017) that had developed on their watch. As people around the world increasingly turned to digital platforms for health-related advice (see Lee et al., 2023), the unedited nature of these spaces (Bimber and Gil de Zúñiga, 2020) raised serious concerns about the reliability of the information available to their users. While social media giants including Meta, YouTube and Twitter (now X) had already faced worldwide criticism for their role in amplifying false narratives that erode faith in democratic institutions (e.g. Bowers and Zittrain, 2020), after the global outbreak of COVID-19 their (in)actions regarding unreliable health information were widely perceived to affect the outcome of a once-in-a-lifetime pandemic.

Digital platforms have repeatedly been pressed to redefine the parameters of acceptable speech, whether through regulatory pressure (Gorwa, 2019), negative press (Marchal et al., 2025) or public outcry (Ananny and Gillespie, 2016). These inflexion points raise the question of how digital platforms justify their expanding self-regulation, particularly when their new policies conflict with previously professed principles. False and misleading health claims are a prime example of such a tension. Even before the terms ‘fake news’ and ‘post-truth’ entered the public lexicon, researchers had observed that health misinformation, defined broadly as ‘information that is contrary to the epistemic consensus of the scientific community’ (Swire-Thompson and Lazer, 2020: 434), had proliferated across dominant social media, particularly during epidemics from Ebola to Zika (e.g. Bode and Vraga, 2015; Kata, 2012; Wang et al., 2019). Yet, just months before the outbreak of COVID-19, Facebook CEO Mark Zuckerberg reiterated the company’s position that ‘people should decide what is credible, not tech companies’. 1 For years, Meta’s industry peers have echoed a similar stance, presenting themselves as impartial intermediaries and supporters of maximalist free speech rather than publishers with editorial responsibilities (Gillespie, 2010; Napoli and Caplan, 2017; Scharlach et al., 2023).

This article explores how major digital platforms discursively legitimated their actions against health misinformation during the COVID-19 pandemic. Building on prior research on platforms’ society-facing discourses in policy documents (e.g. Chan et al., 2023; Cowls et al., 2024; Scharlach et al., 2023), we investigate the discourses these companies mobilize in their corporate communication, a topic that has received comparatively little attention, especially regarding health information (cf. Gillespie, 2010; Gillett et al., 2022; Lien et al., 2021). Through this analysis, we extend recent scholarship on how platforms understand misinformation (e.g. Lien et al., 2021) by exploring how these companies take responsibility for addressing and mitigating it. Drawing on blog posts by Meta, YouTube and Twitter (n = 118), we ask: what discursive strategies do these three major US-based platforms use in their public statements when presenting their actions in countering health misinformation during the COVID-19 pandemic? The study contributes to critical platform studies by showing how the major US-based platform companies strategically mobilized COVID-19 as a critical event to build their legitimacy and position themselves as central, powerful actors in society. Our findings also suggest that regulators should exercise caution before accepting this narrative as a substitute for legal obligations, as these same companies have demonstrated, in our data and beyond, that their commitment to newfound obligations to moderate health misinformation is weak and shallow.

Content-moderation remedies for health misinformation

Although the severity of digital misinformation remains a topic of debate (see Heikkilä, 2025), false and misleading information is widely believed to promote poor judgements and decisions that could escalate into society-wide troubles (e.g. Ecker et al., 2022). While such effects raise concerns across many domains, from politics to science, various stakeholders, including patients and their families (Spanos et al., 2021), intergovernmental agencies, 2 and civil society actors 3 have sounded the alarm about health misinformation, in particular, given its outsized risks to individual well-being and the collective efforts to prevent and mitigate public health emergencies (Gallotti et al., 2020; Kata, 2012; Wang et al., 2019). During the COVID-19 pandemic, such fears became known as the ‘(mis)infodemic’ (Gallotti et al., 2020). Despite the flaws of this metaphor (see Simon and Camargo, 2021), prior research suggests that digital platforms indeed enhance and enable the circulation of unreliable content by creating environments that allow misinformation to swiftly travel across social networks (Gallotti et al., 2020) and where users struggle to assess the veracity of the content they encounter (Bimber and Gil de Zúñiga, 2020; Pennycook et al., 2021).

As the demand for platforms to address health misinformation has intensified, the idea of moderating it has gained traction. Under the rubric of platform governance (Gorwa, 2019), content moderation is generally understood as the ‘process in which platforms shape information exchange and user activity through deciding and filtering what is appropriate according to policies, legal requirements, and cultural norms’ (Zeng and Kaye, 2022: 61). A growing body of research has approached this object of study from different perspectives, ranging from the legal and regulatory challenges involved in content moderation globally to the algorithmic systems required to scale up the detection of objectionable content and behaviours (e.g. Caplan, 2018; Gillespie, 2018; Gorwa et al., 2020). For example, critics have argued that platforms, despite their rhetoric of efficiency, tend to overblock legitimate political speech (e.g. Gorwa et al., 2020). Conversely, moderation practices have also been criticized as ineffective, either because they respond to public demands too late or because they neglect countries outside the platforms’ narrow business interests (De Gregorio and Stremlau, 2023).

Conventionally, content moderation has been understood as the process of identifying and removing objectionable online content, or ‘hard’ moderation (Grimmelmann, 2015). Yet, platforms also increasingly employ a variety of less intrusive content-moderation remedies (Goldman, 2021), ranging from disabling comments to restricting content visibility. Recent behavioural research has proposed leveraging some of these tools specifically to tackle false beliefs, for example, by featuring corrective information on platforms’ recommended content listings (Bode and Vraga, 2015), tagging disputed content with warning labels (Clayton et al., 2020) or prompting users to pause and consider the accuracy of information they are about to share (Pennycook et al., 2021).

Previous research suggests that platforms’ self-regulatory practices reflect internalized legal and moral obligations, concentrating moderation in contexts where there is little legal or ethical ambiguity, such as child pornography, copyright infringement and violent terrorism (Gillespie, 2018; Gillett et al., 2022; Gorwa et al., 2020). Historically, health misinformation and other forms of misleading speech have not elicited as clear a condemnation. Rather, efforts to curb online falsehoods have been regarded as antithetical to free speech, a particularly salient value among US-based platforms (Bimber and Gil de Zúñiga, 2020; Scharlach et al., 2023) as well as their national stakeholders, 4 whose continued support enables these companies to maintain a privileged legal status as ‘neutral’ intermediaries (Gillespie, 2018; Napoli and Caplan, 2017). Platforms’ approach to misinformation can thus be characterized by Ananny and Gillespie’s (2016) concept of ‘governance by shock’, according to which platforms concede to heightened public pressure only to implement barely perceptible policy adjustments. For example, in response to an outcry over a surge of ‘fake news’ during the 2016 U.S. presidential election, Facebook (now Meta) partnered with select fact-checking organizations to flag disputed claims on its behalf (Ananny, 2018) instead of implementing formal policies targeting factually inaccurate content. Similarly, facing both public and legislative pressure for peddling anti-vaccine misinformation during the measles epidemics of 2018 and 2019, YouTube 5 announced minor changes to its recommendation algorithms but expressly classified health misinformation as ‘borderline’ content that would not violate its community guidelines. 6

While considering their incentives to moderate misinformation, platforms also face the question of how to define it. As Krause et al. (2022) argue, even the academic literature is divided on the interpretation of the term, with a hodgepodge of typologies and classifications that typically downplay the difficulty of identifying ‘false’ claims even in highly evidentiary fields such as health and medicine. While most scholars would agree that misinformation is factually questionable, determining the veracity of a specific claim raises complex epistemological problems (Jack, 2019). For platforms, there is no clear consensus on how online misinformation and its governance should be operationalized, both in explaining their policies to external audiences and in developing internal instructions for human and machine moderators to detect it (Gillespie, 2018). This challenge is further complicated by the fact that misinformation comes in different forms, modes and degrees (Wardle and Derakhshan, 2017), and that some claims deemed misleading at one point are later verified as accurate (Swire-Thompson and Lazer, 2020).

Legitimating platform interventions

As discussed above, regulating misinformation is a challenging task with ambiguous goals. It thus emerges as a context in which platforms are compelled to explain and justify their actions, and in which they can readily highlight their role as societal mediators. After scandals such as Cambridge Analytica and the Facebook Files, platforms have become targets of accountability demands aiming to render their actions more answerable to public concerns (e.g. Gorwa, 2019), that is, to make them demonstrate their commitment to these issues by addressing them publicly.

Approaching platform reports and communication as demonstrations of their responsibility, we turn to theories of discursive legitimation. Discursive legitimation is a linguistic process through which actors aim to construct legitimacy and authority for their practices in a specific institutional order (Suchman, 1995; Van Leeuwen, 2007). Legitimation can thus be adopted as a discourse-analytical approach to unpack how justification for social practices is constructed in discourse (Van Leeuwen, 2007). In his seminal work, Van Leeuwen (2007) identifies four central legitimation strategies: authorization, rationalization, moral evaluation and mythopoesis. Authorization builds legitimation by referring to authority vested in tradition, custom or law, or in persons possessing institutional authority. Rationalization legitimates action by referring to the goals and uses of the action, thus highlighting its instrumental purposes. Moral evaluation builds legitimation by mobilizing discourses of moral values through desirable attributes, abstractions or comparisons. Mythopoesis conveys legitimation by telling narratives and connecting actions with real and mythical events. These categories have been extensively applied in empirical research to scrutinize, for example, speeches by political leaders (Reyes, 2011) or the discourses used to legitimate a unit shutdown in a multinational corporation (Vaara and Tienari, 2008).

Organizations seek legitimacy because it protects them from external pressures and questioning (Meyer and Rowan, 1977). A legitimate actor has more leeway to act. Therefore, organizations manage their legitimacy through various communicative strategies to gain, maintain and repair it (Suchman, 1995). As legitimacy claims are constructed in discourse, actors address the question ‘why’: why do something at all, and why do it in a chosen manner (Van Leeuwen, 2007). When seeking approval in their social and institutional environments, actors strategically mobilize specific discourses and positionings (Vaara and Tienari, 2008). Discourses of legitimation, thus, are not innocent; they deal with power relations among the actors involved and seek to maintain or reconstruct the discursive dynamics related to established social practices and institutions (Vaara and Tienari, 2008). Legitimation enables the institutionalization of actors, ideas or practices, a status that platform companies are eager to acquire for themselves and their services. Platforms operate in an intermediary position in which they need to strategically tailor their discourses to at least three stakeholders: end users, content producers and advertisers (Gillespie, 2010). As Gillespie (2010) argues, discursive positioning begins with the word ‘platform’, chosen to connote openness, neutrality and novelty. In their community guidelines, platforms strategically mobilize value discourses that serve both commercial and public interests and legitimize their platform governance choices (Chan et al., 2023; Scharlach et al., 2023), maintaining a delicate balance between the two (Ananny and Gillespie, 2016).

Among the many sources that have been examined to understand how platforms portray themselves and their responsibilities, this article focuses on platform blog posts, 7 a rarely studied corpus of public-facing documents used for relatively varied and flexible self-reporting. Corporate blogs, like community guidelines, serve as stages for important public performances that articulate platforms’ self-image, goals and values (Gillespie, 2010, 2018: 47; Scharlach et al., 2023) and offer glimpses into their content governance arrangements (Bossetta, 2020). While blogs arguably have many uses, they frequently advocate for distinctly social missions (see Tromble and McGregor, 2019), exemplified in titles like ‘The Four Rs of Responsibility’ (YouTube, 3 March 2019). 8 They are, of course, carefully crafted documents that reflect evolving corporate narratives rather than objective reality. Consequently, they are important precisely as discursive performances. Compared with static community guidelines and concise corporate reports, blog posts record in more fine-grained detail how policy rationales evolve, how interventions are purportedly carried out and what successes they might achieve. They are, in short, opportunities for platforms to explain how they perceive issues and to control the narrative they want to convey.

Data and method

Our data consist of public documents crawled from the corporate blogs of globally dominant social media platforms: Meta (about.fb.com/news, parent company of Facebook and Instagram), Twitter (blog.twitter.com, known as X since 2023) and YouTube (blog.youtube, a subsidiary of Alphabet). In terms of self-regulation practices, these industry leaders also closely resemble each other, partaking in many of the same voluntary governance arrangements (e.g. the EU’s Code of Conduct on Disinformation; Gorwa, 2019), sharing similar views on their editorial responsibilities (Gillespie, 2018; Napoli and Caplan, 2017; Scharlach et al., 2023) and enforcing governance decisions through industrial-scale content-moderation systems (Caplan, 2018; Gorwa et al., 2020).

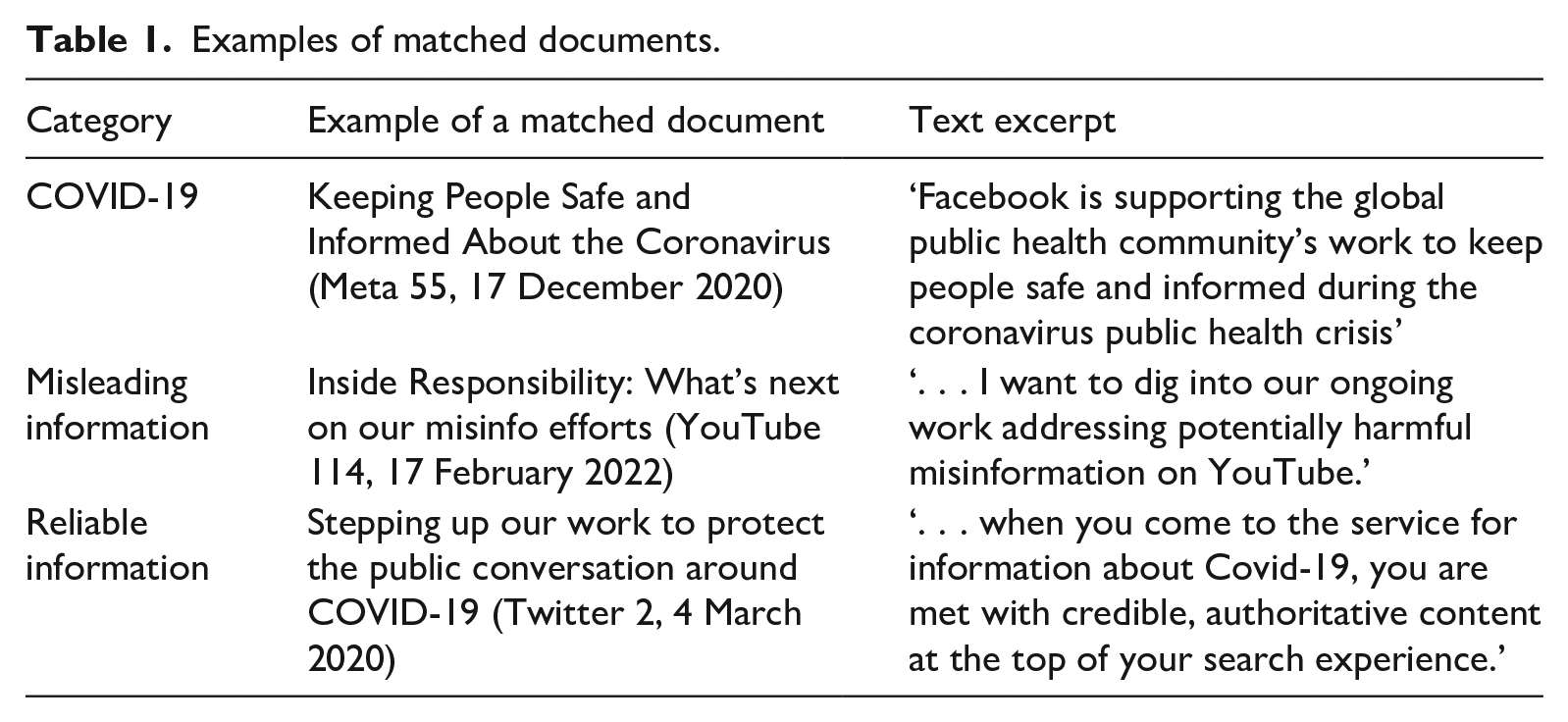

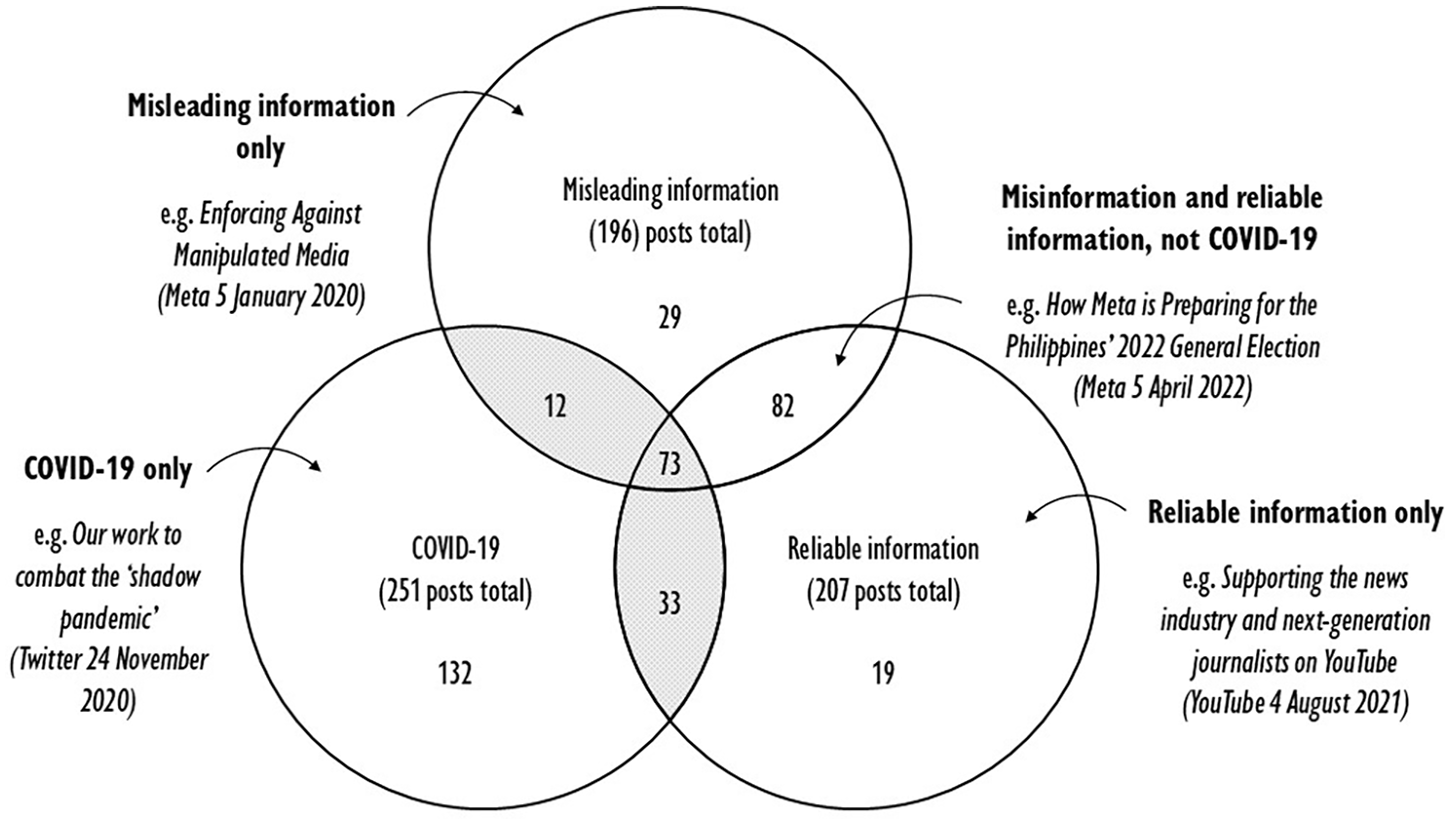

Using keyword queries, we identified posts that discuss problematic content within pandemic-related conversations and engaged in qualitative analysis to detect discursive justifications for platform interventions. Posts were scraped programmatically using Python scripts built on the Selenium framework. We focused our analysis on the English-language sites, given that English was the primary language in which the three US-based platforms communicated, and to ensure that we could perform the discourse analysis reliably. The corpus was filtered by date, keeping documents published during the first 32 months of the pandemic (1 January 2020 through 31 August 2022). Although COVID-19’s impacts on public health are ongoing, this timeframe captures some of the central developments in the life cycle of a global health emergency, including comprehensive social distancing and vaccination campaigns that were gradually phased out by mid-2022. 9 The remaining 1351 blog posts were searched using a comprehensive list of keywords based on terms such as ‘misinformation’, ‘covid’ and ‘reliable’ (see Supplemental Material) to locate data related to COVID-19, misleading information and, following Krishnan et al. (2021), reliable information (Table 1). The first author validated prospective matches, yielding 118 documents (67 Meta, 28 YouTube and 23 Twitter blog posts) that discussed COVID-19-related topics (e.g. mask mandates) and at least one of the information-quality categories (e.g. removing or amplifying content); these were selected for qualitative analysis (Figure 1). Individual posts are referenced throughout the analysis by company and a running index (1–118) sorted by ascending date.
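The date filter and keyword search described above can be sketched as follows. This is a minimal illustration in Python, assuming the posts have already been scraped into simple records; the `Post` type, the `KEYWORDS` groups and their terms, and the rule of requiring a COVID-19 term plus at least one information-quality term are hypothetical stand-ins of our own, not the study's scripts or its actual keyword list (which is given in the Supplemental Material). As in the study, matches are only candidates for manual validation.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Iterator


@dataclass
class Post:
    """A scraped blog post (hypothetical record type)."""
    platform: str
    published: date
    text: str


# Study window as reported in the text, inclusive on both ends.
START, END = date(2020, 1, 1), date(2022, 8, 31)

# Hypothetical keyword groups; NOT the study's list, which is in its Supplemental Material.
KEYWORDS = {
    "covid": ["covid", "coronavirus", "pandemic"],
    "misleading": ["misinformation", "false", "misleading"],
    "reliable": ["reliable", "authoritative", "credible"],
}


def in_window(post: Post) -> bool:
    """Keep only posts published inside the study window."""
    return START <= post.published <= END


def matched_groups(post: Post) -> set[str]:
    """Return the keyword groups with at least one term starting at a word boundary."""
    text = post.text.lower()
    return {
        group
        for group, terms in KEYWORDS.items()
        if any(re.search(r"\b" + re.escape(term), text) for term in terms)
    }


def candidates(posts: Iterable[Post]) -> Iterator[tuple[Post, set[str]]]:
    """Yield in-window posts that mention COVID-19 and at least one
    information-quality group; these still require manual validation."""
    for post in posts:
        if not in_window(post):
            continue
        groups = matched_groups(post)
        if "covid" in groups and groups & {"misleading", "reliable"}:
            yield post, groups
```

Keeping the matched groups alongside each candidate mirrors the overlapping categories reported in Figure 1, since a post can fall into several groups at once.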

Table 1. Examples of matched documents.

Figure 1. The number of blog posts matched in each overlapping category.

The first author was responsible for qualitatively coding the final corpus. The discourse analysis proceeded in stages, using ATLAS.ti software. The initial stage focused on text passages in which the platforms articulated their actions (moderation, commitments, policy making, etc.). These were then inspected for typical linguistic tropes of discursive legitimation (Van Leeuwen, 2007), such as authority figures, value systems and stated goals. For example, legitimation by rationalization was coded by identifying expressions of purpose (‘commitment’, ‘in order to’), moralization by evaluative adjectives (‘harmful’, ‘healthy’), and authorization by references to authoritative subject positions (‘experts’). Special attention was paid to the use of verbs (‘challenge’, ‘elevate’), along with their associated subject positionings. Next, an open coding approach was used to connect the identified passages to broader themes (‘transparency’, ‘responsibility’) and recurring lexicon (e.g. ‘keeping users safe and informed’). During this final stage, all authors met regularly in peer-debriefing sessions (Lincoln and Guba, 1985) to refine the code categories and to map them to specific legitimation strategies, distinct roles (e.g. providers of authoritative information) and tactics (e.g. comparing platform interventions with public health measures).
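To illustrate how the lexical cues named above map onto legitimation strategies, the following hypothetical sketch flags passages that contain example cues. It is not the study's procedure: coding was done manually in ATLAS.ti, and the cue lists in `CUES` are illustrative assumptions assembled from the examples in the text (plus a few obvious variants). Mythopoesis is omitted because narrative legitimation has no reliable word-level markers, and any flagged passage would still require interpretive reading in context.

```python
from __future__ import annotations

import re

# Hypothetical cue lexicon built from the examples in the text; NOT the study's codebook.
CUES = {
    "rationalization": ["commitment", "in order to"],
    "moral evaluation": ["harmful", "healthy", "dangerous"],
    "authorization": ["expert", "health authorit"],
}


def flag_passage(passage: str) -> dict[str, list[str]]:
    """Return, per strategy, the cues that occur at a word boundary in the passage.

    A hit only marks a candidate passage for manual coding; it does not
    establish that the passage actually performs that legitimation strategy.
    """
    text = passage.lower()
    hits: dict[str, list[str]] = {}
    for strategy, cues in CUES.items():
        found = [cue for cue in cues if re.search(r"\b" + re.escape(cue), text)]
        if found:
            hits[strategy] = found
    return hits
```

For instance, a sentence that removes ‘harmful’ claims ‘in order to’ protect users on the advice of ‘experts’ would be flagged for all three strategies at once, which is consistent with the text's observation that legitimation strategies often co-occur in the same passage.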

The qualitative analysis revealed that different companies employed strikingly similar discursive strategies, down to individual phrases (e.g. ‘connecting people to reliable information’, ‘providing context’). This observation is consistent with the existing literature that points to the close operational and aspirational alignment between Silicon Valley tech companies (Gillespie, 2018; Gorwa et al., 2020; Marchal et al., 2025; Napoli and Caplan, 2017; Scharlach et al., 2023). The analysis was therefore conducted on a unified corpus instead of comparing companies. Still, in reporting the findings, we highlight variations in tone and emphasis that set blogs apart. We begin the Findings section by briefly describing the content of our materials in general terms (what remedies platforms introduced and how they frame them) and proceed to report our findings regarding the four legitimation strategies platforms use to justify their interventions.

Findings

From the outset of the pandemic, interventions against COVID-19 misinformation were a prominent topic across all platform blogs. Rather than minimizing their role during a public health emergency, Meta, YouTube and Twitter demonstrated early on a strong commitment to ‘do their part’ in support of the crisis response. The materials conveyed a sense of urgency and concern, but also confidence in the companies’ ability to ‘step up’ their responsibility and meet the emerging challenges. Although the term ‘infodemic’ was not used explicitly, the companies framed their responsibility in terms of maintaining information quality. Like the virus itself, COVID-19 misinformation was cast as a medical threat whose spread could only be prevented with comprehensive countermeasures.

Following strikingly similar timelines and approaches, Meta, Twitter and YouTube announced explicit and global policies prohibiting at least some misinformation about COVID-19 and, later, about vaccines in general (see Krishnan et al., 2021). While all platforms acknowledged the complexity of defining misinformation, they settled on a relatively straightforward definition of ‘false or misleading claims’ (Twitter 6) that conflict with ‘reliable’ and ‘authoritative’ information from health authorities and other ‘trusted sources’ (YouTube 58). In practice, explicitly prohibited claims were grouped into epidemiologically inspired categories, such as ‘false claims about cures, treatments, the availability of essential services or the location and severity of the outbreak’ (Meta 10). With the help of expert input, platforms developed a two-tiered system in which some claims were prioritized as ‘harmful’ misinformation, while others, such as ‘conspiracy theories about the origin of the virus’ (Meta 10), elicited a less urgent response. In enforcing these rules, platforms drew from a broad toolkit in which legacy moderation practices (content removals, suspensions) were complemented with lower-consequence remedies such as warning labels and visibility reductions. Expanding their editorial oversight, they also announced new types of responses, such as educational check-ups for users who had interacted with removed misinformation (Meta), and engaged in other editorial actions, such as setting up dedicated information centres and drafting prompts aimed at ‘elevating credible sources’ rather than removing unacceptable behaviour.

Moral legitimation: moderation as a protection against harmful misinformation

The platforms regularly defended interventions against misinformation by embedding their ethical responsibilities into discursive strategies recognizable as moral legitimation (Van Leeuwen, 2007). Most notably, the data conveyed a concern for the safety of online users during a surge of dubious COVID-19 advice. This commitment is consistent with the basic normative principles of content moderation (e.g. Grimmelmann, 2015). In the spirit of the ‘community keeper’ role (Gillespie, 2018: 49), the companies portrayed themselves as the ultimate protectors of fragile online communities, but also justified their actions by drawing parallels to the work of public health authorities. That is, the companies went beyond merely reporting their enforcement of content policies; they morally abstracted their interventions as ‘protecting the public conversation’ (Twitter 2) and even ‘fighting against COVID-19’ (Meta 101):

This new feature provides yet another layer of protection by limiting the spread of viral misinformation or harmful content, and we believe it will help keep people safer online. (Meta 35, 3 September 2020)

The value of ‘safety’ was routinely contrasted with the threat of ‘harmful’ (Twitter 17) and ‘dangerous’ (YouTube 102) misinformation against which platforms needed to actively protect their users. Meta, in particular, proclaimed that it could not tolerate misleading COVID-19 claims because they pose ‘imminent physical harm’ (Meta 13). This utilitarian position was, however, undermined by the lack of a coherent rationale. While platforms repeatedly made sweeping causal claims about ‘harms’, none explained in detail how they believed exposure to dubious health information would lead to harmful behaviour. This lack of specificity suggests that the term is invoked not as a descriptive account but as a normative label that selectively draws attention to illegitimate speech (see Gillett et al., 2022). Moreover, portraying misleading claims as dangerous is convenient for several reasons. First, platforms have invested in moderation technologies aimed at quickly detecting offensive behaviours (Gillespie, 2018; Gorwa et al., 2020), not at fact-checking contentious claims. Second, qualifying misinformation as ‘harmful’ simplifies the logic for penalizing it. Similar to hate speech and other ‘abuses’ that cause distress (Grimmelmann, 2015), the removal of ‘dangerous’ misinformation can be prioritized without engaging with thorny ethical considerations. And while the platforms did not seem to think that all misinformation was ‘harmful’, the category proved to be broad and flexible:

(. . .) [T]o reduce the spread of misinformation, we’ll enforce our policies on harmful, false content including by removing claims like:

- Claims that the COVID-19 vaccines do not exist for children
- Claims that the COVID-19 vaccine for children is untested
- Claims that something other than a COVID-19 vaccine can vaccinate children against COVID-19

(. . .) (Meta 105, 29 October 2021)

While maintaining safety gave platforms a strong justification for sanctioning misinformation, it also put them in the precarious position of violating their overriding commitment to free expression. Although precedent shows that this commitment, too, can be bent (Scharlach et al., 2023), the platforms were adamant that they could ‘strike a sensible balance’ (YouTube 96) between suppressing misinformation and promoting ‘good faith public debate’ (Twitter 6). These assurances were a particularly salient part of YouTube’s justifications (YouTube 22, 76, 96, 97, 102 and 114):

At YouTube, we’ll continue to build on our work to reduce harmful misinformation across all our products and policies while allowing a diverse range of voices to thrive. (YouTube 114, 17 February 2022)

One way to reconcile the ‘tension between values of voice and safety’ (Meta 52) was to anchor moderation to the principle of justice. Interventions become legitimate, platforms argued, when penalties are distributed harshly and swiftly, but also fairly and proportionately. These considerations gave rise to a two-tier system. On the one hand, platforms were willing to punish egregious misinformation with a purposefully ‘aggressive’ (Meta 55) or ‘rigorous’ (YouTube 71) approach. On the other hand, remedies against merely ‘misleading’ content were less intrusive, such as warning labels and information panels. In such cases, platforms assumed the role of benevolent governors, not punishing but ‘educating the public’ (Twitter 68, YouTube 82) with the ‘context they need to make informed decisions’ (Meta 70).

Rationalization: moderation as an efficient and scalable process for controlling information quality

According to Van Leeuwen (2007: 101), instrumental rationalization legitimates practices by drawing attention to the purposes, means and ends that make them justified. In the case of COVID-19 content-moderation remedies, the first goal the platforms invoked was to ‘limit the spread of false information’ (Meta 52), thereby ensuring that as few people as possible would be exposed to it. This rationale was predicated upon a logic of efficiency, that is, achieving maximum impact with minimal effort. Rather than focusing on the supply of or demand for health misinformation, platforms assumed the role of administrators in charge of dependable ‘logistics of detection, review, and enforcement’ (Gillespie, 2018: 21) that could weed out policy violations ‘proactively’ (Twitter 57) and ‘quickly’ (Meta 34). In this role, platforms sought to ‘solve’ misinformation by ‘using systematic, rationalized procedures’ (Jack, 2019: 440) that identify, classify and remove the problem one piece of content at a time (see Wardle, 2023). This was evident in ubiquitous ‘success statements’, which reported recent moderation achievements by tallying interventions against content and accounts:

As a result, we’ve removed more than 12 million pieces of content about COVID-19 and vaccines. (Meta 72, 22 March 2021)

By operationalizing misleading speech primarily as a piece of content that human and machine moderators can easily process, the platforms stripped misinformation of its epistemic complexities, messy intentions and social functions. Ultimately, these rational goals justified a dramatic increase in fully automated content-moderation decisions (YouTube 5, Twitter 6, Meta 57), which, despite their scalability, were prone to overblocking legitimate speech by mistake:

Because responsibility is our top priority, we chose the latter – using technology to help with some of the work normally done by reviewers. . . . [W]e accepted a lower level of accuracy to make sure that we were removing as many pieces of violative content as possible. (YouTube 33, 25 August 2020)

The second main purpose of COVID-19 remedies was to keep platform users informed about crucial developments that could affect their well-being. Platforms often introduced this rationalization by describing how they had recently discovered that people use social media ‘to get accurate information’ (YouTube 14). Catering to this need, platforms were happy to redirect users to the type of content ‘found most valuable’ (Meta 107). The added benefit of this better service was that platforms allegedly helped people make ‘informed decisions’ (Meta 37) or ‘keep themselves and their families safe’ (YouTube 96):

When we ask people what kind of news they want to see on Facebook, they continually tell us they want information that is timely and credible. (Meta 24, 25 June 2020)

The aim to satisfy users’ informational needs had a distinctly individualistic and rational character. It invoked what Tromble and McGregor (2019: 325) call ‘naïve social engineering’, or platforms’ tendency to view complex social problems through the lens of user experience. In their discourse, platforms assumed that simply ‘connecting’ users to authoritative information automatically leads to rational decisions (see Munn, 2024), whereas misinformation distracted people from receiving authoritative emergency communications and therefore from acting in their best interest. It is noteworthy that companies that had previously downplayed ‘shadow banning’ social media users (Savolainen, 2022) now publicly announced that they had full control to dial content up or down in users’ feeds. As such, platforms emerged as vital amplifiers of health information that ‘elevate’, ‘surface’ and ‘raise’ certain sources while ‘reducing’, ‘demoting’ and ‘downranking’ objectionable claims:

On Instagram, in addition to surfacing authoritative results in Search, in the coming weeks we’re making it harder to find accounts in search that discourage people from getting vaccinated. (Meta 13, 16 April 2020)

Authorization: moderation approved by experts

As self-governing private entities, platform companies have broad powers to exercise moderation at their discretion (Gillespie, 2018; Gorwa, 2019; Napoli and Caplan, 2017). However, platforms also seek to strengthen their mandate by allowing external actors to partake in their decision-making (Van Leeuwen, 2007: 94). The primary authorities used to legitimize the moderation of health misinformation were medical experts. Since the outbreak of the pandemic, all three platforms announced partnerships with global and national health authorities. The blogs further suggested that these experts were authorized at all levels of content regulation, from initiating and co-authoring platform policies to auditing and refining their moderation approaches. Most importantly, outside entities were delegated considerable responsibility for identifying and determining what claims platforms would regard as (harmful) misinformation. Although these accounts could exaggerate the true influence of external advisors (see Ananny, 2018), it is noteworthy how integral experts supposedly were to the platforms’ overall approach: . . . we consider claims to be false or misleading if (1) they have been confirmed to be false by subject-matter experts, such as public health authorities. (Twitter 59, 13 January 2021) Dealing with COVID-19 misinformation our approach has been to rely as much as we can on health authorities, whether they’re national health authorities – like the NIH, the CDC back in the States or the World Health Organization – as they developed their approach to things like masks, or what type of cures are harmful versus not. (YouTube 118, 19 May 2022)

Although expert authorization featured prominently throughout the data, some ambiguity remained surrounding the identity of these experts and their selection. The term ‘expert’ was rarely attached to a specific organization, such as the CDC or the WHO, and appeared more often as a generic stand-in for some undisclosed group of public health actors. According to platforms’ representation, ‘expert consultations’ were also curiously univocal at a time when scientists and health practitioners had to navigate emerging and highly tentative medical evidence (see Krause et al., 2022). It also appears that the platforms sourced these advisors only from public health authorities, excluding other relevant stakeholders. For example, Meta reflexively dismissed the concerns of investigative journalists, watchdog groups and governmental actors over the company’s mishandling of COVID-19 misinformation (Meta 91, 93, 100). This myopic approach is exemplified by a debate between Meta and its Oversight Board, in which the company essentially overruled a multidisciplinary panel of experts in platform governance, policy design and civil rights law in favour of biomedical scientists with no background in these areas: There is one remaining recommendation [by the Oversight Board] that we disagree with and will not be taking action on since it relates to softening our enforcement of COVID-19 misinformation. In consultation with global health authorities, we continue to believe our approach of removing COVID-19 misinformation that might lead to imminent harm is the correct one during a global pandemic. (Meta 67, 25 February 2021)

Mythopoesis: moderation as a response to exceptional circumstances

Finally, the justification for COVID-19 misinformation interventions is related to the pandemic itself. Platforms argued that in such ‘temporary and unique circumstances’ (Meta 52), ‘a pivotal moment in history’ (YouTube 71) ‘surpassing any event we have seen’ (Twitter 59), it was justified to react with an exceptional approach, even if this meant reversing prior principles and creating new policies. The COVID-19 crisis represented, in other words, ‘a radical departure from the pattern of normal expectations’ (Turner, 1976: 381) that compelled companies to take ‘extraordinary steps’ (YouTube 33). This readjustment under pressure was regularly invoked when explaining new policies, features and partnerships. The exceptional circumstances were also used to excuse mistakes and errors that occurred when platforms had to solve problems on the go: We want to be clear: while we work to ensure our systems are consistent, they can sometimes lack the context that our teams bring, and this may result in us making mistakes . . . We appreciate your patience as we work to get it right (Twitter 6, 16 March 2020)

By invoking the crisis event, platforms’ justifications follow a narrative structure consistent with Van Leeuwen’s (2007) mythopoetic legitimation. Interventions against misinformation, according to Meta, had begun with a single triggering event when the WHO declared COVID-19 a global health emergency (e.g. Meta 10, 13, 55) and were updated to reflect epidemiological developments, campaigns and public health guidelines (e.g. ‘Supporting Vaccine Efforts on our Apps’, Meta 83). Notably, tying the legitimacy of these policies to the life cycle of a pandemic strongly suggested that they would become irrelevant once the public health crisis was contained. In practice, platforms created such an expectation by deliberately referring to ‘COVID-19 misinformation’, not ‘health misinformation’, even though their policies explicitly forbade medical claims that could apply to many infectious diseases (see Krishnan et al., 2021) and exempted untrue social commentary related to this particular pandemic: Meta is asking the Oversight Board for advice on whether measures to address dangerous COVID-19 misinformation, introduced in extraordinary circumstances at the onset of the pandemic, should remain in place as many, though not all, countries around the world seek to return to more normal life. (Meta 118, 26 July 2022).

To summarize, platforms portrayed the pandemic as an unexpected shock that necessitated an urgent response (Ananny and Gillespie, 2016). Yet, they failed to consider whether this ‘infodemic’ was less a singular event than the gradual culmination of their own cumulative organizational failures. By attributing health misinformation to an external ‘act of God’, the platforms could sidestep their role in creating the breeding grounds for such content in the first place. This refusal to acknowledge past failures is well illustrated by a series of blog posts from December 2020, when the companies for the first time instituted a comprehensive ban on ‘misleading vaccine information’ (Twitter 54), a problem identified a decade earlier (Kata, 2012). The ban was framed not as addressing an overlooked policy area, but specifically and narrowly as supporting ‘health leaders and public officials in their work to vaccinate billions of people against COVID-19’ (Meta 63).

Discussion

In this article, we examined discursive legitimation strategies adopted by three major US-based platform companies to mitigate health misinformation during the COVID-19 pandemic. Our study broadens the discussion on what scholars have identified as an exceptionally interventionist approach adopted by these platforms (Cotter et al., 2022; Krishnan et al., 2021). Given the implications of global, comprehensive policies that, for the first time, prohibited misinformation on dominant digital platforms that had only recently been characterized as the ‘unedited public sphere’ (Bimber and Gil de Zúñiga, 2020), we set out to explore how these companies assumed responsibility for addressing and mitigating health misinformation.

Approaching platforms’ corporate blog posts during COVID-19 as purposefully crafted discursive performances that invoke certain frames, roles and narratives over time, we have shown that platforms legitimate their newfound responsibilities in combating health misinformation with four distinct strategies that map onto Van Leeuwen’s (2007) analytical framework. Moral evaluation enables companies to adopt the role of community protectors with an ethical duty to block a broad category of ‘harmful’ misinformation, as well as that of benevolent governors making fair rulings that balance moderation with free speech commitments. Rationalization connects moderating misinformation to goals of efficiency and impact, also justifying the indiscriminate removal of legitimate speech. In this context, platforms presented themselves as administrators overseeing intricate enforcement arrangements, and as amplifiers of reliable information connecting people with the content they allegedly want and need. By invoking the authority of medical experts, social media companies effectively outsourced thorny epistemic questions regarding truth and accuracy, while assuming the role of supporting partners to the real crisis managers. Finally, platforms used mythopoetic legitimation to narrate their policymaking as a crisis response to a pandemic with a clear beginning, middle and projected end.

Our findings contribute to an ongoing discussion of the mechanisms that inform how platforms’ self-regulation evolves over time. Previous studies have shown that moderation policies can respond quickly to public shocks and scandals (Ananny and Gillespie, 2016; Bossetta, 2020). Our study extends this line of inquiry by showing that digital platforms also strategically mobilize specific elements of these shocks in their corporate discourse. Mobilizing such discourses is presumably a strategic choice for companies struggling in the middle of the ‘techlash’, an attempt to repair their legitimacy as societal actors (Suchman, 1995). In our data, the pandemic is depicted as stripped of its contentious and political nature, which is also strategically beneficial for platform companies accused of meddling with political communication. The four legitimation strategies together convey that platforms can ‘move fast and fix things’, that is, muster an independent and persuasive response to disruptive events. In line with legitimation through ‘constitutional metaphors’ (Cowls et al., 2024), our findings revealed language that depicts platform companies as serious governing bodies, adhering to self-imposed policies and expert consultations. This trend has continued during more recent crises, including platforms’ response to the Russian invasion of Ukraine (Pantti and Pohjonen, 2023). Notably, such language can also be read as a signal to national and international regulators.

Although Meta, YouTube and Twitter all used the COVID-19 pandemic as an opportunity to showcase their editorial duties, their ability to control content flows and their capacity to remove misinformation on a large scale, the policy implications of this narrative should be approached with caution. In particular, our findings contest the initial optimism that platforms’ recent actions would signal a shift towards more accurate or truthful information environments. Whereas research has highlighted that the propagation of online misinformation is strongly influenced by the structural incentives and disincentives embedded within social media spaces, our materials are conspicuously silent about any meaningful reform in this area. In contrast, the most enduring change attributed to the COVID-19 pandemic seems to be the surge of automated content moderation, which platforms defend on grounds of scalability, not accuracy or consistency. Moreover, while this study has not assessed whether platforms actually enforce their policies, it is becoming evident that there is a considerable gap between promising moderation and delivering it (Mündges and Park, 2024). The same caution applies to, for example, the disinformation reports that platforms are now required to publish under the Code of Practice on Disinformation in the European Union.

Our findings also contribute to a broader understanding of how platforms frame misinformation. In addition to being ‘highly nuanced and varied’ (Lien et al., 2021: 7), we show that platforms’ conceptualization of health misinformation is intertwined with strategic goals to legitimate their pre-existing content-moderation arrangements and to secure greater leeway as legitimate social actors. Specifically, this conceptualization rests on three convenient but questionable assumptions. First, platforms represent misinformation as inherently atomized content divorced from prevalent societal narratives and identities like race and gender, which play a central role in the dynamics of misinformation production and consumption (see Wardle, 2023). Second, platforms utilize a flexible conception of ‘online harms’ (Gillett et al., 2022) to frame misinformation as an inherently dangerous phenomenon with a vague yet imminent potential for real-world harms. Finally, platforms assume that even during unfolding crises, there exist indisputable facts that can conclusively determine whether content is true or false (cf. Krause et al., 2022).

Taken together, our findings suggest that platforms ultimately struggle to solidify their approach to misinformation beyond the most recent public health emergency. By framing their actions as a response to an unforeseen ‘act of God’ event, social media companies seem to purposefully distract attention from a well-documented history of prior platform-enabled ‘epistemic problems’ (Bimber and Gil de Zúñiga, 2020) with obvious ties to the ‘infodemic’, such as hosting thriving anti-vaccine communities (Kata, 2012). Similarly, the rules, partnerships and features platforms introduced during our study period are qualified by transitory and external circumstances. Unlike ‘coordinated inauthentic behaviour’, which comes with designated reporting obligations, teams and resources, the category of ‘COVID-19 misinformation’ does not trigger the organizational rearrangements that usually accompany crises of platforms’ public standing (Bossetta, 2020). Rather, platforms offer only a temporary solution to divisive epistemological questions, outsourcing them to opaque expert partnerships specific to the COVID-19 context. Instead of debating whether principles like truthfulness or accuracy have a place among core platform values, platforms constantly signal that their priorities and urgency in tackling health misinformation will not necessarily outlast the recent pandemic. Whereas justifications during the pandemic relied heavily on ‘real-world harms’ and ‘expert consensus’, it is difficult to imagine similar legitimation in other domains of misinformation, such as politics. As recent developments at Twitter/X and Meta have shown, many of the responsibilities highlighted in this article can be quickly revoked under new owners and circumstances.

Conclusions

In this study, we adopted a discourse analytic viewpoint to investigate the legitimation strategies platforms publicly employed when presenting their roles, actions, rationales and successes in correcting and disrupting health misinformation during the COVID-19 pandemic. This study contributes to ongoing debates at the nexus of digital platforms’ social roles and their capacity to tackle online harms (e.g. Gillett et al., 2022) by highlighting how the platforms position themselves as protectors of public conversation and central actors who uphold information dissemination, healthy online spaces and, ultimately, society. Our study shows how the COVID-19 pandemic appears to the platforms as a critical event, a shock to the status quo (Ananny and Gillespie, 2016) that the companies use to narrate their legitimacy and responsibility in society. However, contrary to previous research suggesting that platform companies react to unambiguous issues (Gillespie, 2018; Gillett et al., 2022; Gorwa et al., 2020), we have demonstrated how they can also strategically exploit issues to expand their policies into contested areas. In this regard, the dissemination of health misinformation during a global health crisis offered an opportune moment, characterized by relatively clear standards for truth and a global sense of urgency. Thus, instead of reacting with minimal and insufficient adjustments to existing policies (Ananny and Gillespie, 2016; Bossetta, 2020), platforms are also capable of leveraging shocks as preemptive, opportunistic governance mechanisms to reinforce their increasingly prominent infrastructural and institutional position. Indeed, the means and the discourse platforms adopted are closely related to their earlier actions regarding unwanted content.
Therefore, we maintain that instead of accepting a story that emphasizes the novel and the unexpected, critical platform scholars should pay close attention to the continuum of discourses corporations use to construct their position in society.

Our systematic data-collection strategy and focus on corporate blogs enabled us to illuminate the trajectory of responses and related discourses by three major US-based platforms during the first 2.5 years of the pandemic. This extends previous exploratory studies that focused on the early days of the pandemic (Cotter et al., 2022; Krishnan et al., 2021). However, the study is not without limitations. Most importantly, our materials represent a narrow slice of platforms’ misinformation-related actions and communications and do not cover those outside the recent public health crisis. We encourage future studies to address this gap with a more longitudinal design, also in contexts beyond health misinformation, such as elections and terrorism, as well as with a broader selection of platforms, including those based outside the US. As a related limitation, our analysis used only English-language materials. The platforms are global corporations whose actions have consequences worldwide, often pronouncedly in smaller countries and languages (Flew, 2021). Future research should explore the global discourses of the platform companies in more diverse linguistic and regional settings. It is also worth emphasizing that our study is limited to corporate truths and aspirations. While voluntary public statements can offer important insights into how platforms portray themselves, they should not be confused with the realities experienced by social media users or the employees of these corporations. Even when platforms use constative language, they profess actions and objectives that are difficult to verify independently. Unfortunately, the possibilities for researchers, policymakers and activists to critically scrutinize and challenge these reports are increasingly deteriorating.

Supplemental Material

sj-docx-1-nms-10.1177_14614448251365270 – Supplemental material for Reluctant arbiters of truth: Discursive legitimation of platform interventions against COVID-19 misinformation

Supplemental material, sj-docx-1-nms-10.1177_14614448251365270 for Reluctant arbiters of truth: Discursive legitimation of platform interventions against COVID-19 misinformation by Tuomas Heikkilä, Salla-Maaria Laaksonen and Matti Pohjonen in New Media & Society

Footnotes

Acknowledgements

We want to thank the three anonymous reviewers for their insightful recommendations. We are grateful to Iida Lähdekorpi for their excellent and expert assistance in collecting the research materials and to Mervi Pantti for commenting on earlier drafts. An earlier version of this manuscript was presented at the ECREA 2022 9th European Communication Conference.

Data availability statement

The dataset used in the current study may be available upon reasonable request.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partly supported by the Academy of Finland research project MAPS: Media platforms and social accountability (grant number 332751/2019), the Helsingin Sanomat Foundation project Unconventional Communicators in the Covid-19 Crisis, and the Finnish Cultural Foundation (Suomen Kulttuurirahasto) under grant 00220294.

Notes

Author biographies

References
