Abstract

Content moderation plays a pivotal role in structuring online speech, but the human labour and the everyday decision-making process in content moderation remain underexamined. Informed by in-depth interviews with 16 content moderators in India, in this research, we analyse the decision-making process of commercial content moderators through the concept of sensemaking. We argue that moderation decisions are made in the context of the industry’s plural policies and efficiency requirements. An interplay of four cognitive processes of pattern identification, subjective perceptions, shared knowledge, and process optimization influences the final judgement. Once sense is enacted in the decision-making process, the sensibilities are retained by the adept moderator for future moderation decisions. Visibilizing the labour process behind commercial content moderation, we argue that everyday moderation decisions unfold in a socio-technical and economic assemblage wherein decisions are decontextualised and plausibility driven rather than consistency driven.

Introduction

In October 2022, an Indian news website, The Wire, reported that Instagram had removed, as sexual content, a satirical story depicting a person worshipping an idol of the Chief Minister of Uttar Pradesh (an Indian province). According to The Wire’s two-part report, Meta (Instagram’s parent company) took down the post without review, based on reports from its cross-check (X-Check) accounts associated with a political leader from the ruling party (Newton and Schiffer, 2022). In response, Meta clarified that the post was ‘surfaced for review’ by their automated system and not by a user (Meta, 2022) and strategically remained mute on how the final decision to remove the content was made. After several debates on the accuracy of The Wire’s claims and an internal review, the news agency retracted the report. However, a central question remained unanswered: how was a post about a man praying to a political leader’s idol ‘surfaced for review’ as sexual activity and nudity (emphasis added) and then removed after the review? How did the reviewer decide to remove the content?

Generally, human labour in content moderation remained behind the screens until investigative journalists uncovered the practice of commercial content moderation on social media platforms. These moderators handle the daunting task of sifting through vast amounts of content with high accuracy demands and pressing timelines (Buni and Chemaly, 2016; Chen, 2014). Scholarly research has explored various aspects of content moderation. This includes understanding its taxonomy (Grimmelmann, 2015; Roberts, 2019), moderation ecosystem (Klonick, 2018), power asymmetries in the outsourcing practices (Ahmad and Krzywdzinski, 2022; Roberts, 2019) and the psycho-social impact of moderation work on labour (Roberts, 2019). However, the decision-making process employed by commercial content moderators remains underexamined. Some studies have provided glimpses into how moderators categorise content as clearly problematic or as grey areas (Stockinger et al., 2023) or become complicit in post-racist ideologies under employment pressures (Jereza, 2024). The cognitive processes underlying the perception of content as problematic and the choice of enforcement require academic attention. Focusing on these aspects, in this research, we ask: How do commercial content moderators decide whether content should stay or be removed from the platform? What is the decision-making process employed by the content moderators?

Given the complexity of content moderation on social media platforms, where diverse content and multiple platform policies intersect, we contend that decision-making operates in the equivocal questions of ‘what to moderate’ in the volumes of unfamiliar content, policies and processes, and ‘moderate to what extent’ such that the moderator meets the efficiency requirements. Utilising Weick et al.’s (2005) concept of sensemaking, that is, the act of comprehending unexpected circumstances and acting upon them based on that comprehension, we begin by understanding the equivocality in the content moderation process and then trace the decision-making journey through the stages of enactment, selection, and retention. We identify the different factors that contribute to decision-making in each of these stages to visualise the process. Overall, we argue that everyday moderation decisions unfold in a socio-technical and economic assemblage – an interwoven system of human, non-human (mainly technological) and economic factors. Our findings visibilize the labour process of commercial content moderators behind the production of monetisable and safe spaces for online communication and provide a primer for future research on moderation decision-making.

Content moderation (that is known)

Content moderation is the ‘detection of, assessment of, and interventions taken on content or behaviour deemed unacceptable by platforms or other information intermediaries, including the rules they impose, the human labour and technologies required, and the institutional mechanisms of adjudication, enforcement, and appeal that support it’ . . . (Gillespie et al., 2020: 2)

This definition suggests that the practice of moderation involves both human labour and technology. While algorithmic moderation enables moderation at scale, it is limited in contextual understanding (Duarte et al., 2017) and obscures the politics and biases behind decisions (Gorwa et al., 2020). Human labour is necessary to deal with the contextual complexities in decision-making (Caplan, 2018; Duarte et al., 2017; Gillespie, 2018) and the inherent biases of automation (Binns et al., 2017). Moderation practices are treated as trade secrets to prevent users from gaming the system (Gerrard, 2018; Roberts, 2018), and this invisibility can disproportionately impact marginalised users (Thach et al., 2022). Human reviewers enforce platform policies behind the scenes, maintaining the platform’s objective image (Roberts, 2018) and the feeling of frictionless communication (Carmi, 2019). Existing scholarship on human content moderation has examined its organisation, enforcement and decision-making. We trace and detail this scholarship in the following paragraphs.

Human engagement in moderation may be voluntary (by the platform’s active users) or organised for remuneration, referred to as commercial content moderation (Roberts, 2019). Voluntary moderation, independent of employment arrangements, enables moderators to set standards (Cai and Wohn, 2022), profile violators for enforcement severity (Cai and Wohn, 2021), and assume various decision-making roles (Seering et al., 2020). Commercial content moderation, the focus of this study, involves organised labour, often structured in a three-tier system (Klonick, 2018) and becomes complex when outsourced to other countries (Ahmad and Krzywdzinski, 2022). The globally integrated yet locally fragmented supply chain, concentrated in the Global South, fosters power asymmetries (Ahmad and Krzywdzinski, 2022) and exploitation through competition among outsourcing agencies (Roberts, 2019). The work demands moderators’ embodiment of the platform as its gatekeepers (Carmi, 2019) and affective labour to deal with content extremities (Roberts, 2019).

In terms of the enforcement of content moderation, human moderation may be triggered before (ex ante) or after (ex post) publication of content (Grimmelmann, 2015). Furthermore, ex post moderation can be either reactive (responding to user or automated reports) or proactive (Klonick, 2018). While proactive moderation traditionally focused on specific violations like terrorism or hate speech (Caplan, 2018; Klonick, 2018), recent research suggests that it may be triggered by ambient factors such as many negative comments, high engagement, or association with users viewing vulgar content (Li and Zhou, 2024). Once a violation is detected, enforcement strategies such as user exclusion or organising content by deleting, editing, and reducing the reach of content are chosen by moderators (Grimmelmann, 2015). Commercial content moderation is likely to follow an industrial approach, prioritising consistency in rules at the ‘expense of being localised or contextual’ (Caplan, 2018: 25), which distances the moderator from conversational cultures (Ruckenstein and Turunen, 2019) and implements policies that imbibe colonial and western values (Shahid and Vashistha, 2023).

Previous studies on the decision-making process of commercial content moderators have highlighted the expectation to make rapid decisions (within seconds) and meet productivity targets to ensure job security (Ahmad and Krzywdzinski, 2022; Chen, 2014; Roberts, 2019), which discipline moderators to be complicit in the platform’s post-racist ideologies (Jereza, 2024). Stockinger et al. (2023), in their examination of comment moderation practices in Austria and Germany, posited that high volumes of content compel moderators to prioritise and address clearly problematic cases first, while dealing with the grey cases subjectively. Overall, while the existing literature provides glimpses of the decision-making process and potential effects of such decision-making based on specific content violations, content type, and Western/US platform contexts (Gillespie et al., 2020), the cognitive mechanisms that underpin the perception of what constitutes problematic content remain insufficiently explored. Furthermore, the criteria for selecting one enforcement action over another are still unclear. Greater academic attention to these cognitive processes at the moderator level is essential to comprehend the actual practices of content moderation and develop improved state and self-regulatory strategies.

Sensemaking (for what is not known)

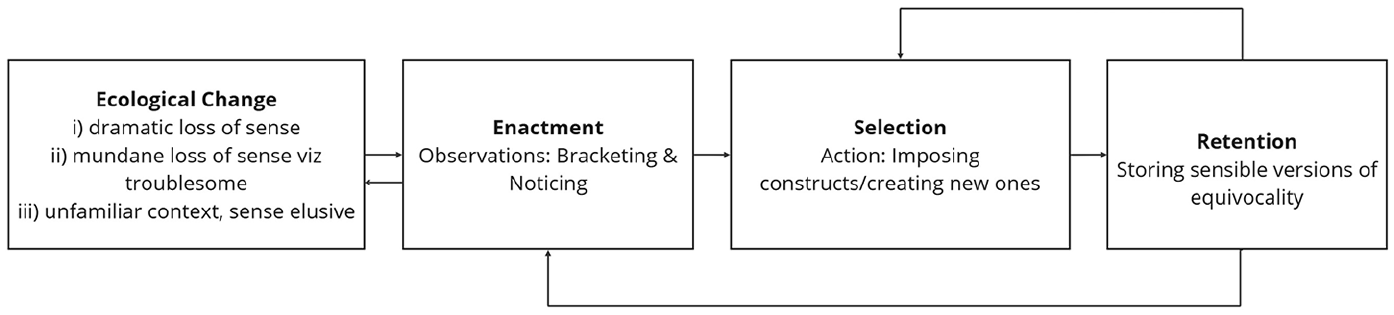

To examine the decision-making process that unfolds within the complexities of ambiguous content, personal beliefs, platform policies, and employment insecurities, we utilise Weick et al.’s (2005) concept of sensemaking. As moderators view diverse forms of content and gatekeep, bearing in mind the contextualities, the voluminous global and local platform policies and the efficiency requirements, we deem sensemaking to be useful because it provides a conceptual tool to examine an actor’s engagement with ambiguity. According to Weick et al. (2005), sensemaking unfolds within ecological changes through the stages of enactment, selection, and retention. Ecological changes, triggered by flux, chaos, or ambiguity, prompt individuals to reevaluate their understanding and make sense of it. These changes could involve a dramatic or mundane loss of sense, such as employees navigating organisational restructuring, Uber drivers making sense of algorithmic management, a nurse reacting to shifts in a patient’s condition or, in the context of this research, a moderator deciding on various types of content based on the voluminous platform policies and efficiency requirements.

Sensemaking begins with noticing and bracketing the ecological changes, that is, focusing on the unknown among other events surrounding the actor. The act of noticing and bracketing the unknown in the external environment is called enactment.

Sensemaking (adapted from Weick, 1979: 132).

As sensemaking enables an understanding of ‘how’ a certain choice is made, it can therefore help understand the decision-making process by commercial content moderators on social media platforms. Consequently, we deem it a valuable lens for our study. In existing scholarship, sensemaking has been used to appreciate both large organisational and individual/oral processes, such as organisational sensemaking in the implementation of Enterprise Resource Planning (Tan et al., 2020), employee sensemaking of clients’ cultural contexts in outsourcing firms (Su, 2015), or Uber drivers’ sense of the algorithms at an individual level (Möhlmann et al., 2023). In this study, we use sensemaking at the individual level to trace a moderator’s micro-level journeys to the choices they make regarding content. By systematically tracing moderators’ decision-making processes through the stages of enactment, selection, and retention, we identify the key factors influencing decision-making at each stage, capturing both the process-narrative and the underlying cognitive influences.

Methodology

We employed a qualitative method to understand the decision-making process of commercial content moderators as they decide whether content should stay or be removed from the platform. Focusing on the Indian context, a significant hub for outsourcing content moderation after the Philippines (Roberts, 2019), we collected data from commercial content moderators based in the cities of Delhi, Hyderabad, and Mumbai. The ethical approval to conduct this study was received from the institutional review board at the authors’ institution. Given this industry’s fragmented and obscure nature, experience in reviewing content for social media platforms in India as a commercial content moderator was the only inclusion criterion for this research. This openness allowed us to interview commercial content moderators who had joined the field between 2017 and 2023, with representation from global and local social media platforms.

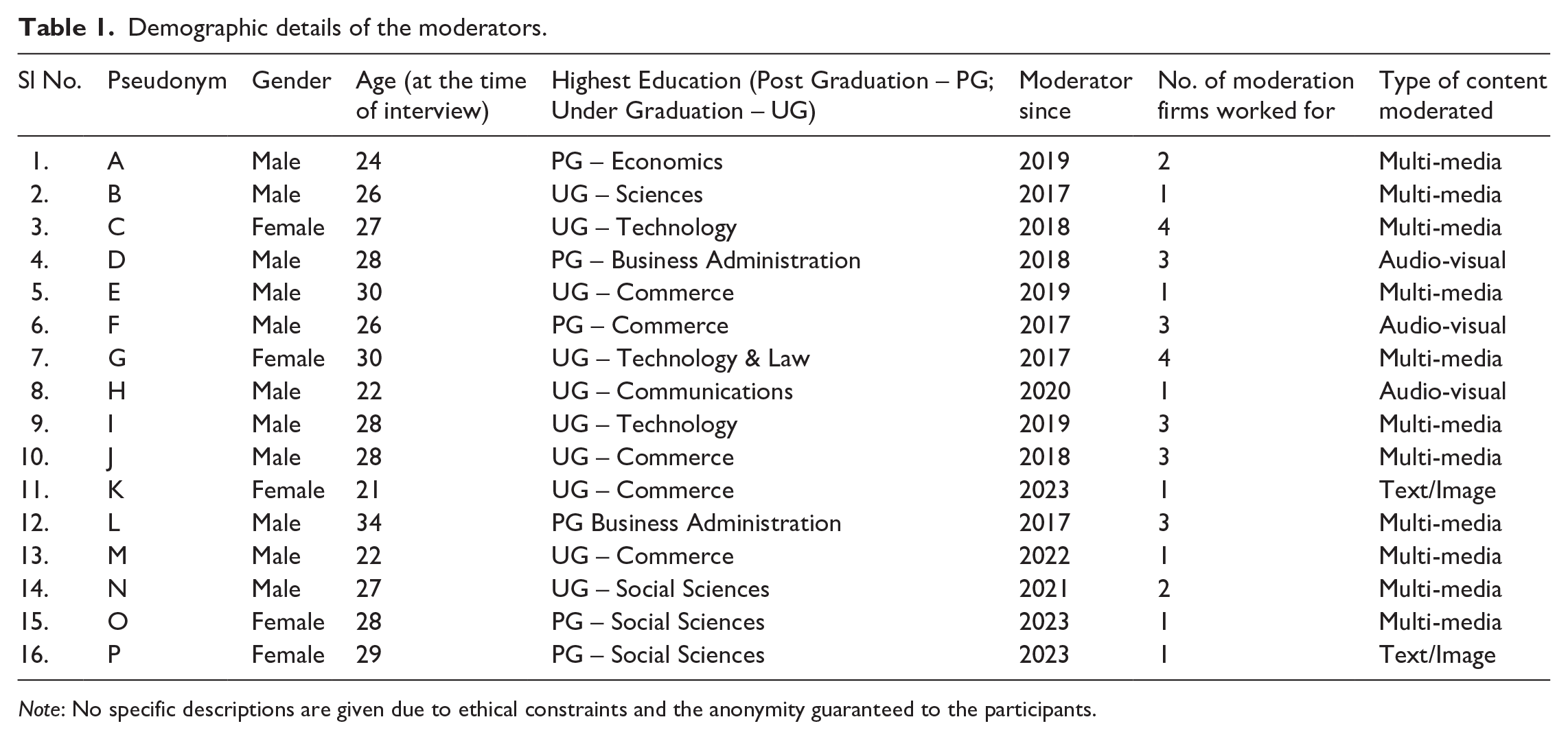

We used purposive snowball sampling due to its suitability in collecting data from hard-to-reach or sensitive populations (Biernacki and Waldorf, 1981). Initially, 78 commercial content moderators were contacted through a professional networking platform, with 11 agreeing to participate in interviews. An additional five moderators were interviewed through peer referrals. Thus, a total of 16 moderators (11 males and 5 females) were interviewed. Data saturation determined the sample size, indicating the point at which no new information was elicited from the interviews (Guest et al., 2006). Table 1 captures the details of the moderators interviewed.

Table 1. Demographic details of the moderators.

Note: No specific descriptions are given due to ethical constraints and the anonymity guaranteed to the participants.

We conducted in-depth semi-structured interviews with the participants between June 2023 and January 2024. All the interviews were conducted online through a video-calling platform. Moderators based in Delhi (researchers’ base location) were given the option to meet in-person for the interview, but they preferred virtual interaction. This preference likely stems from the nature of the job, where virtual interviews offer advantages of accessibility and anonymity, especially when discussing sensitive topics (Clarke and Braun, 2013). Of the 16 interviews, 13 were recorded with moderator consent, while three were documented through handwritten notes. The recorded interviews were transcribed verbatim (which included English, Hindi and Bengali languages), and the handwritten notes were reconstructed into a transcript for subsequent coding.

In addition, four moderators shared their moderation process through screensharing, providing valuable insights into decision-making. The process was documented through handwritten notes and reconstructed into transcripts later. The interviews lasted about 60 to 90 minutes, and the ones involving screensharing took place over multiple sessions. This led to a 96,076-word transcription spanning 184 pages. The dataset was complemented by internal rulebooks from three firms (one multi-national and two local) and training videos from two firms, all sourced from interviewed moderators under conditions of anonymity.

We employed theoretical thematic analysis (Braun and Clarke, 2006) and used Weick et al.’s (2005) sensemaking as a guiding concept. The choice of theoretical thematic analysis allowed us to analyse data without implicit epistemic or theoretical commitments while still incorporating relevant theoretical ideas (Clarke and Braun, 2013) and recognising our positionality in relation to the subject matter (Braun and Clarke, 2021). Our analysis proceeded through six steps: (1) familiarisation and transcription, (2) generating initial codes, (3) constructing candidate themes, (4) reviewing themes, (5) defining and naming themes, and (6) analysis. We familiarised ourselves with the data by listening to audio recordings, reading field notes, and transcribing over 4 weeks. Thereafter, we generated initial codes, which were a mix of semantic (data-driven) and latent (conceptual) codes. The transcriptions were manually coded in NVivo to manage codes and relevant excerpts easily. The codes were initially combined into four candidate themes and 13 subthemes. These candidate themes were reviewed to check whether the themes and coded extracts made a coherent narrative. Ultimately, four main themes and 10 subthemes were finalised and formed the basis of our analytical narrative illustrating the decision-making processes of commercial content moderators. While the analysis is underpinned by sensemaking, it is also shaped by critical scholarship on the content moderation industry’s exploitative and extractive nature (Ahmad and Krzywdzinski, 2022; Chen, 2014; Roberts, 2019).

Findings

The interviews with commercial content moderators provide critical insights into the moderation ecosystem. A frequent experience was their initial unawareness of job responsibilities until they began working on the production floor. Over time, they familiarised themselves with rulebooks and the diverse range of violative content. Another recurring concern was the industry’s high efficiency standards, directly linked to job security. Analysing the interview transcripts, we visualise the decision-making process of the content moderators through Weick et al.’s (2005) concept of sensemaking. We begin by understanding the moderation setting and the equivocalities therein. Subsequently, we trace the decision-making journey through the stages of enactment, selection and retention, and identify the different factors that contribute to decision-making in each of these stages to visualise the process.

Content moderation setting and its equivocalities

The content moderation ecosystem is undergirded by human labour and technological architecture, and the following is a brief description of the ecosystem as narrated by the commercial content moderators themselves. The human labour is either employed in-house or through an outsourced agency that hires people or further outsources it to freelancers. Moderators, irrespective of their employment type, are bound by non-disclosure agreements which, as per ‘Moderator A’, ‘are very strict policies’. The moderators reported their work to be organised in three levels: (1) agents who handle content at the frontline; (2) a supervisory level of Quality Analysts, Subject Matter Experts and/or Language Experts overseeing multiple agents or specific content types; (3) a management level consisting of a Team Lead who is responsible for communication with other teams and the clients. Technologically, an online portal, called the tool, organises the whole process by queuing the cases randomly or based on content type/violation type. Through the tool, the moderators may have access to case logs with details like post time, moderation duration, and decisions made. The tool may vary across the work hierarchy; for instance, ‘Moderator O’ stated, ‘DP [Display Picture] issues . . . have to be mentioned in a block sheet as we don’t have access to blocking users’, which would be taken care of by her reporting officer. In addition, the moderation process may also be supported by in-built and external applications. In-built tools for detecting sensitive text or speech are common, as is the use of Google Lens to ascertain plagiarism. Furthermore, firms may use activity tracking applications to monitor moderators’ presence on the tool during working hours and evaluate performance. Knowing all the technological tools used in moderation may be impossible, but the information received indicates their embeddedness in the moderation process.

It is in the above work hierarchy and technological architecture that a moderator decides whether content should stay on or be removed from the platform. The moderator grapples with the questions of ‘What to moderate?’ and ‘Moderate to what extent?’, which form the two fundamental equivocalities of content moderation. The former pertains to navigating unfamiliar platform policies, culturally diverse content, and new processes. The latter uncertainty, related to efficient performance, requires moderators to balance key performance indicators (KPIs), ensuring they neither spend excessive time on individual cases nor overdeliver on targets while making plausible decisions.

What to moderate (in the unfamiliar)?

When moderators join a project and begin their work, they encounter various unfamiliarities. We identify three types of unfamiliarity commonly encountered by moderators: (1) unfamiliarity with the detailed policies, (2) unfamiliarity with the culturally detached content, and (3) introduction of new moderation policies and processes. For freshers, these unfamiliarities are exacerbated as they are unaware of the job profile during recruitment. As ‘Moderator B’ shared,

I joined the firm in 2017 not as a content moderator, but as a service executive . . . unfortunately the project was ramped down . . . so they moved me into content moderation . . . sometimes you wouldn’t even know what you are doing, and you end up doing moderation.

Furthermore, the training and induction sessions do not fully prepare the moderators for what they might encounter and the possible enforcement actions. As ‘Moderator C’ recollected, ‘training vaguely explains what all happens, but once you start working on the content, you gain experience. That is when you really understand what happens [in moderation]’.

Unfamiliarity with the detailed policies

Social media platforms have detailed internal guidelines and multiple enforcement options that can raise the question of what to do with the queued content. For instance, ‘Moderator P’ showed that the guidelines for her text moderation project, apart from the general guidelines, had 390 sensitive words to be cautious of and 25 references that were strictly prohibited. Furthermore, a violation can have multiple subcategories or multiple enforcement options. As ‘Moderator B’ explained,

There are policies related to throat slashing [referring to terrorist videos]. If it is uploaded on a random channel . . . without any information, in that case, [the policy] is to remove from the platform . . . That very throat slashing video when slightly blurred and posted on a news channel’s profile would be labelled . . . [the policies and the moderation decision] depends on these smaller intricacies of who posts it.

Thus, the work requires moderators to be well-versed with numerous guidelines, develop an understanding of the multiple subcategories of one violation and be cognizant of the different enforcement options. The plurality of policies and enforcement options often becomes a source of unfamiliarity for moderators.

Unfamiliarity with culturally detached content

Moderators often deal with culturally detached content, that is, content that does not belong to their geographical and cultural context. For Indian moderators, this manifests in two ways: (1) moderating content from other regions such as the Middle East, Latin America or East Asia; (2) moderating content from other parts of India. Interviews with moderators reveal that many have moderated content from around the world. Having moderated content from East Asia, ‘Moderator F’ explained how enforcement policies could vary based on geographical and cultural contexts,

There was a Momo meme wherein a user’s face could be morphed [which] made it joker like [an inexact recollection of the 2019 “Momo” phenomenon, a fictional character that sparked panic amid unverified claims of inducing self-harm in children], while it was not banned in India, it was banned in other countries like Indonesia, Colombia and Philippines . . . because it led to suicides.

Furthermore, India being a linguistically and socio-economically diverse nation, content from one part of the country can be alien to a moderator from another part of the country. For instance, ‘Moderator P’, during her assignment as a text moderator, came across a text describing Pottu Thali [necklace indicating the marital status of women in Southern India]. In the said case, Pottu Thali was translated as a ‘plate’ worn by a woman to mark marital status. While the description seemed correct, she was not sure if the English translation, that is, plate, was factually correct due to her lack of familiarity with the vernacular language from Southern India. Thus, culturally detached content is a source of ambiguity for content moderators.

Introduction of new policies and processes

Given the ever-evolving and dynamic nature of online communication, content moderation firms need to respond to trends and alter their strategies from time to time. To do so, they introduce instructions that the moderator must keep up with. Sometimes, minor tweaks introduced by the firm in the process can also lead to problems. For instance, when ‘Moderator O’ was asked to moderate content as per user type (segregated by the platform), she expressed that her workflow got disrupted and she had to figure out a process to bring back the pace in moderation, ‘after they changed the Standard Operating Procedures, meeting targets became even more difficult’.

Moderate to what extent (Efficiently)?

While a moderator’s job is to assess and intervene in violative content, they are also expected to do it efficiently, that is, making appropriate decisions without spending too much time. A moderator’s efficiency is reflected in KPIs, which have two primary indicators: productivity and quality/accuracy targets. KPIs are pivotal to a moderator’s salary, bonus and job security. For instance, ‘Moderator N’ lost his job after he approved two ‘Not Safe For Watch’ pieces of content that were detected in the quality check.

Meeting the productivity targets, that is, the number of content items to be moderated per day, was a concern for the moderators. They needed to devise ways to take less time in decision-making. ‘Moderator A’ indicated how moderators learn judicious use of time with experience, ‘a moderator needs to view a lot of content to develop experience and understanding . . . as the moderator views content, they know which content to skip, tag, keep, delete’. Furthermore, they had to keep in mind not to moderate more than what was expected, as exceeding targets alerted the quality checkers. For instance, while sharing efficiency tips in her moderation project, ‘Moderator O’ shared, ‘. . . once I moderated 2500 audios and was noticed by the higher-ups’. The second KPI indicator, that is, quality/accuracy, is assessed by sampling some portion of the work done by the moderator and recording the number of mistakes in it. The share of mistakes in the total sample determines the accuracy percentage, as explained by one of the moderators. The percentage of accuracy may be graded, and quality targets are generally above 90 percent in this industry. Instances of low accuracy may require moderators to discuss and explain the incorrect decision made on a case-by-case basis. During interviews, we identified a lack of clarity among moderators regarding quality checks; for example, ‘Moderator M’ only fully understood quality checks after a colleague explained them.
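
As a hedged illustration of the accuracy calculation described by the moderators: the interviews do not give the exact formula, so this sketch assumes that accuracy is the complement of the sampled error rate. The figures used ($n = 200$ sampled cases, $m = 12$ recorded mistakes) are hypothetical and not drawn from the data.

$$
\text{accuracy} = \left(1 - \frac{m}{n}\right) \times 100 = \left(1 - \frac{12}{200}\right) \times 100 = 94\%
$$

Under these assumed numbers, the moderator would clear a 90 percent quality target, whereas 21 or more mistakes in the same sample would bring the score below 90 percent.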

Maintaining KPIs is like treading a tightrope, as moderators must make quick decisions to meet productivity targets while being able to explain those decisions and avoiding overachievement of targets. In the subsequent sections, we shall illustrate how these efficiency requirements impact the decision-making process and the moderators’ final decision.

Enacting upon the equivocalities

It is in this experience of unfamiliarity and pressure to moderate efficiently that content moderators operate and sensemaking is triggered. Sensemaking begins when a moderator brackets the unfamiliar content and policies. It unfolds through the practice of seeking practical cues to violative content, facilitated by the internal rulebooks and technological aids.

Seeking practical cues through rulebooks

Content policies governing social media platforms are principle-based and broad. For instance, an Indian social media platform’s policy prohibits impersonation in a misleading and deceiving manner with an exception for fan or satirical profiles of public figures. This may be easy to understand, but very difficult to implement for a moderator. To aid the moderators, platforms provide internal rulebooks that operationalise these principles into specific guidelines. For instance, in the rulebook, the impersonation policy translates into policies of removing a man using a woman’s display picture and de-ranking profiles that use celebrity/public figure images. When dealing with culturally detached content, additional documents explain regional terms, festivals, beliefs, and the like. These region-specific guidelines may complement the standard policies. Furthermore, moderators receive training to familiarise themselves with these rules, which can last from a day to several months, depending on their employment status (freelance or regular employee). Moreover, private work groups allow moderators to seek case-specific advice. During data analysis, a notable observation was ‘Moderator A’s’ explanation of the rulebook and its utility, ‘Let me show you one thing [screenshares the rulebook], one gets habituated with them after reading once’. This metaphorical assertion implies infrequent reference to the rulebook. Consequently, despite the presence of numerous documents, it became essential to investigate whether the rulebook serves as the primary tool for moderators in identifying and categorising violative content.

Seeking practical cues through technological aids

Technological aids play a crucial role in content moderation and policy assessment, providing essential cues for moderators. We identified four key aids: (1) content queues, (2) in-portal access to rules and policy changes, (3) self-monitoring features, and (4) internal and external applications. This is not an exhaustive list, as many more features may exist.

Some content moderation tools enhance efficiency by

We noted an over-dependence on automated tools during the interviews. ‘Moderator K’ highlighted that AI facilitates swift removal of sensitive content, stating, ‘AI is mostly used because without AI, moderation would become a time taking process, one will have to read everything and then correct’. This over-dependence has links to the target pressures that limit deeper evaluations. Thus, we contend that there is a greater dependence on technological aids than on extensive rulebooks to notice and bracket possible violations.

Selecting possible enforcements

Once the moderator notices and brackets the potential violative content using the rulebooks and technological aids, they must take action upon the content, a process called selection (Weick et al., 2005). In content moderation, this involves determining whether to allow or remove content, thereby enacting sense into the equivocalities of ‘what to moderate’ and ‘moderate to what extent’. This gatekeeping decision (selection) is shaped by cognitive processes of pattern identification, subjective perceptions, shared knowledge and process optimization. In the said processes, moderators must analyse the content and make retrospective deductions, influenced by their own experiences and those of their peers in the field.

Pattern identification

As moderators grapple with large volumes of content, they identify patterns in content and violations. This is purely based on cognitive processes and analysis at an individual level. For instance, ‘Moderator M’, while working on live streaming projects, identified repetitive policy violators, such as a streamer hosting multiple streams at the same time. In such a case, he would end the stream straight away without opening it, thereby saving time. Similarly, ‘Moderator K’ shared that working in text/image-based moderation and exposure to several cases has helped her develop a hunch for plagiarised images. She explained, ‘some images are such that one glance is enough to assess whether it is from the web or an original image’. In such cases, she would not spend time checking the image for plagiarism through Google Lens and would take a decision immediately. Moderators also discern patterns in efficiency assessments, influencing their enforcement choices. For instance, ‘Moderator N’ speculated that profile-blocking reports made on a separate sheet were not included in the counts and reduced reporting on that sheet to save time.

Subjective perceptions

Subjective perceptions of harm, comfort and user intention also influence the selection of enforcement actions. ‘Moderator A’ illustrated this subjectivity,

If in a video there is a child, a toddler, it is not conscious [unaware], may be private part is showing [sic], if the concern is about showing the beauty of the baby then it will remain on the platform.

Similarly, a training video shared by a moderator highlighted, ‘[describing an image of men applying colour on a woman] it was looking very weird [emphasis added]. . . So that image we removed . . . , if you feel it is uncomfortable to see you remove it’. Looking at the intention behind content, gauging whether it would cause inconvenience to the audience, or deciding if it is okay to see depends on how a video is perceived by the moderator, which may vary from person to person; that is, it is subjective. These subjective judgements allow moderators to decide quickly without referring to rulebooks.

Shared knowledge

Moderators also depend on the knowledge of their peers to select enforcement for content. This knowledge is shared via workgroups or individual interactions. For instance, when ‘Moderator P’ was unsure about the factuality of the translation of Pottu Thali [necklace indicating marital status of women in Southern India], she sought peer advice in the work group and was asked to approve it. Thus, her judgement of the factuality of the concerned text, against her hunch, was based on her peer group’s understanding and experience. Sometimes, one-on-one interactions with peers can lead to knowledge sharing. For instance, ‘Moderator M’ learnt from a colleague’s observations that accuracy reports are based on the cases of violative Display Pictures (DP). Since then, they became very careful while removing a profile on grounds of the DP and only removed the clearly violative cases, such as ones showing guns or personal information like phone numbers. In addition, moderators shared how client priorities shaped their judgements. For instance, ‘Moderator O’ was told by a reporting officer that the clients only cared about numbers, so she must focus on meeting the targets and not get bogged down by accuracy at that moment. One can assume that such an approach can lead to seepage of violative content onto the platform.

Process optimization

A moderator has multiple enforcement options, such as approving, removing, de-ranking, skipping, and blocking. The selection of one enforcement action inherently excludes others and is guided by process optimisation. Process optimisation is a function of the targets, the time pressures and the economics of the content moderation industry. It directly feeds into the question of ‘moderate to what extent?’ Most moderators are given daily targets, which creates

Retaining the sense made

Once a certain action is selected, the final stage of sensemaking involves retaining the enacted sense for future actions. In content moderation, the enacted sense is retained in the form of process hacks and diluted policies to be used in subsequent decision-making. We define process hacks as mental checklists to manage the moderation process and diluted policies as memory-based lists of policies used by the moderators for decision-making. We argue that diluted policies are a by-product of process hacks. It is important to note that the intricacies of the two strategies may vary based on platform, content type, policy changes, efficiency requirements and the moderation portal structuring the practice.

Process hacks

Moderators often retain the cognitive processes that help meet targets and avoid quality check alerts, discarding others. We call this form of retention a process hack, which consists of mental checklists or standard operating procedures at the moderator’s level to successfully manage the moderation work and job security. For instance, when ‘Moderator O’ was asked what tips she would give to a newcomer in her audio moderation project, her response revealed her process hack:

I would ask them to first check the audio uploader’s DP and remove it if there were violations. Initially, I made a separate list of violative DPs from the rulebook to be able to refer and memorize. I would also recommend not to put too much time listening to the audios . . . , as otherwise one would barely meet the 1500 target. [I] Would never recommend de-ranking content as it would take time. In all of this, one should also be careful not to moderate too much as once I moderated 2500 audios and was noticed by the higher-ups.

Diluted policies

When a moderator retains the enacted sense in the form of process hacks, it leads to diluted policies. This second form of retention is a memory-based list of policies that the moderators keep in mind while deciding on a piece of content. For instance, ‘Moderator O’, who moderated audio livestreams, memorised a list of violative DPs from the rulebook and used a simplified approach where she would approve audios after brief listening and remove them only if the DP was inappropriate. When asked what this list of inappropriate DPs contained, she listed ethically unambiguous categories such as ‘nudity, display of weapons, smoking/drinking or using illegal substances, sharing phone numbers, QR codes’. Given the volume of content moderators must process, the diluted policies help the moderator cope with the time and target pressures and the economics of the work. Technology facilitates this dilution by detecting and assessing content. When ‘Moderator P’ screenshared her freelance text moderation, we noted that technologically highlighted words were often removed without consideration of context, indicating a preference for technology’s judgement over personal judgement. This reliance on technology fosters a ‘technology knows better’ mindset, reducing the comprehensive rulebook to a list of prohibited text and speech fed into the detection system. We summarise the decision-making process in Figure 2.

Figure 2. A visual map of the commercial content moderator’s decision-making process.

Discussion

Summary of key findings

Zooming in on the decision-making process of commercial content moderators on social media platforms, using Weick et al.’s (2005) concept of sensemaking, we argue that the detection of violative content hinges on the moderator’s sense of ‘what to moderate’. It accommodates the platform’s ever-evolving (global/regional) policies and processes, and is crucially supported by the technological architecture, consisting of the moderation portal and internal/external automated detection tools. Once a violation is detected, the selection of enforcement, that is, the choice to approve or disapprove content, is influenced by four cognitive processes of pattern identification, subjective perceptions, shared knowledge, and process optimisation. These cognitive processes facilitate the achievement of efficiency requirements posed by the question of ‘moderate to what extent (efficiently)’. Once sense is enacted into the decision-making process, the adept moderator retains the sense in the form of process hacks and diluted guidelines that facilitate (hopefully) accurate decisions and ensure job security. Thus, addressing the question of how moderators decide whether to let content be or not be, we find that the decision is not solely based on platform policies but is influenced by a range of efficiency indicators such as targets, time taken per case, and quality audits that impinge upon a moderator’s salary and job security.

Theoretical contributions

Analysing a moderator’s decision-making process through the sensemaking stages, we find that the current

Here, it is worth noting the

Our analysis also contributes to the discourse on the industrial approach to moderation, which is characterised by large-scale moderation (Gillespie, 2018). This approach operationalises rules to ensure consistent decision-making across the global supply chain (Caplan, 2018). It is guided by the value of consistent decision-making. However, tracing the decision-making journey indicates that individual-level

We attribute the difference in policy and practice to the economic logic underpinning moderation decision-making. The economic logic renders it impractical for moderators to devote time to contextual decisions, compelling them to develop strategies that align with efficiency targets imposed by employers. While the low remuneration for this work has been documented (Chen, 2014; Roberts, 2019), and its role in perpetuating neo-racist ideologies of platforms has been noted (Jereza, 2024), our findings emphasise its central role in shaping moderation decisions. Therefore, we argue that

Overall, our research highlights the labour process of commercial content moderators behind monetizable and safe online communication platforms. While existing scholarship has largely explored how self-regulatory policies (Klonick, 2018) and their underlying values shape online discourse (Caplan, 2018; Gillespie, 2018; Roberts, 2018; Shahid and Vashistha, 2023), our analysis foregrounds how economic factors affecting moderators influence the gatekeeping of online content. Unlike volunteer moderation, which is unpaid and allows moderators to engage in community standard setting (Cai and Wohn, 2022), commercial moderation is shaped by economic imperatives, which differentiates its decision-making process. Moreover, unlike prior research that tends to be content-specific, event-driven, or U.S. platform centric (Gillespie et al., 2020), our study examines the routine, everyday practices of commercial content moderators in the Global South. In doing so, we extend existing understandings of decontextualised decision-making in industrial moderation systems (Caplan, 2018; Ruckenstein and Turunen, 2019) by tracing how such decisions materialise through the cognitive stages of sensemaking. We also surface the tensions between platform policies that stress consistency (Caplan, 2018) and moderators’ practice of making ‘plausible’ decisions that may not always align with prescribed consistency and may instead be geared towards job security. While our study offers reflections on moderation in the Global South, the absence of comparable empirical studies from Western contexts limits definitive conclusions about regional specificity. Nonetheless, two critical dynamics emerge: first, Indian moderators exhibit limited discretion in questioning technological tools, contrasting with the scepticism observed in European contexts (Stockinger et al., 2023). Second, while the centrality of economic considerations appears particularly acute in our context, similar productivity concerns affecting decisions have been documented in U.S. and Ireland-based moderation settings (Jereza, 2024) and in Europe, where workload impacts thorough judgement (Stockinger et al., 2023). Thus, while our study foregrounds how socio-economic constraints shape moderation in India, the current absence of cross-regional comparative research limits the ability to conclusively determine whether these dynamics are uniquely Global South-specific or indicative of broader, global patterns in commercial content moderation.

Practical implications, limitations of the study and future research directions

The overbearing influence of the economic factor in commercial content moderation decision-making has implications for both platform and state regulation. We emphasise the need for platforms to assess and redesign their technological infrastructure to support efficient moderation, including reducing manoeuvring time within the portal, integrating quick-access rulebooks, and implementing violation-specific content queues. For example, to reduce manoeuvring time, platforms could standardise the number of steps required for selecting different enforcement options to prevent moderators from choosing one action over another due to time constraints. These preliminary design recommendations are broad, as their applicability will vary depending on the specific features of the moderation interface, the nature of the content being moderated, and the range of available enforcement options. Beyond technology, addressing the implicit economic pressures on moderators, such as performance targets and employment conditions, is urgently needed to bridge the gap between consistency-driven platform policies and plausibility-driven moderation practice. However, this issue remains largely unexamined due to its conflict with platforms’ economic interests. By foregrounding this economic tension, we highlight the limitations of platform self-regulation and the necessity of state regulation to establish labour standards in content moderation. Like antitrust laws and intermediary liability, labour protections, such as social security and a mandated number of moderators proportional to content volume, can be considered a component of platform regulation.

Through this study, we provide a primer for understanding the routine decision-making process in content moderation and hope to inform future research and the development of nuanced solutions to the challenges of human moderation. While we outline a generalised decision-making process and how moderators arrive at strategies to ensure job security, we do not account for platform-specific differences. Although the overarching nature of the decision-making process and the retention of sensible strategies remain consistent across platforms, minor variations may arise due to platform type, content format, policy changes, efficiency requirements and the design of moderation portals. Future research could examine platform-specific moderation strategies and explore decision-making by in-house moderators to identify extra-organisational influences and assess how employment security shapes moderation practices. In addition, this study does not examine the interaction between human and algorithmic moderation; future research can examine this interaction to clarify their mutual influence.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval and informed consent statements

Approval to conduct the study was obtained from the Institutional Review Board of the University of Queensland, Australia.

Data availability statement

Data cannot be made available in a relevant data repository as there is no ethical clearance for the same from the Institutional Review Board of the University of Queensland, Australia.