Abstract

This article investigates the development and deployment process of QUIC, a new standard of the Internet Engineering Task Force (IETF) that is fostering momentous architectural change in the ways in which communication and the transport of data packets happen on the Internet. We present QUIC’s standardization process as an analytical site to capture recent evolutions in the balance of power between the so-called “Big Tech” actors, other actors of the Internet industry, and states. The article focuses on three key socio-technical controversies that have shaped its standardization process, and, in particular, explores Google’s capabilities in re-shaping the technical architecture of the Internet. This research contributes to unveiling how control over Internet traffic is “infrastructured” by the QUIC process, highlighting the place of the private sector in standardization processes; the processes of consolidation and concentration of the Internet around dominant actors that are influenced by the making of standards; and the discussions and controversies regarding specific technical aspects of a standard that reconfigure broader balances of power and decision-making in Internet governance.

Introduction

This article investigates the development and deployment process of QUIC, a new (as of early 2024), and—it has been argued—deeply transformative, 1 standard of the Internet Engineering Task Force (IETF). 2 QUIC is a transport-layer network protocol; in other words, it describes how two end points can establish a connection and communicate on the Internet. The Transmission Control Protocol (TCP), introduced in the 1970s as part of the core suite of protocols that subtend the Internet, has long been the ubiquitous solution for this fundamental transport function. Initially designed by Google, QUIC is proposed as an alternative to TCP and provides a new technical architecture for communicating (and encrypting) data through the network.

After Google’s first public presentation in 2013, a working group was launched at the IETF in 2016, leading to QUIC’s standardization 5 years later, in 2021. QUIC is now implemented and deployed by the largest Internet platforms (Meta, Google, Cloudflare, Alibaba, and Microsoft) and early-2024 estimates suggest that it accounts for almost half of the Internet traffic in Europe, Latin America, and the United States (Cisco, 2024).

In this research, we examine QUIC’s standardization process, understanding it as an analytical site to capture recent evolutions in the balance of power between “Big Tech,” other actors of the Internet industry, and states, contributing to reconfigurations in digital economy, standardization, and “digital sovereignty” (Pohle and Thiel, 2020). In the face of QUIC’s new encryption features, this process has indeed led to intense negotiations on what should be made “visible” to states and network operators out of global Internet traffic, signaling a reordering of their control over data flows (ten Oever, 2021).

QUIC is an emblematic case of how Internet standards participate in the processes of infrastructuring (Blok et al., 2016; Karasti and Blomberg, 2018; Musiani, 2022) of companies’ and states’ control over networks and communications; a process with direct implications for the Internet as a whole. To uncover what has been “infrastructured” by QUIC, this study focuses on the key controversies that have shaped its standardization process, related to encryption, state sovereignty and surveillance, and explores in this context Google’s capabilities in re-shaping the technical architecture of the Internet.

The literature on the governance “of” and “by” Internet infrastructures has demonstrated how Internet protocols and standards can be “crucial components in arrangements of power” (Musiani et al., 2016). In this article, we show how processes focused on QUIC have moved beyond the more strictly “technical” aspects of standardization to become a globalized site of controversy, at the forefront of the conflicts between various paradigms and visions shaping the Internet, while also generating new rearrangements of power in the IETF and beyond.

This article seeks to answer the following research question: what has been both enabled and constrained by the QUIC standardization process, and what are the implications of this for the future of the Internet? In addressing this question, we will complement and expand upon the two main studies that have unpacked earlier steps of this standardization process (Harcourt et al., 2020; ten Oever, 2021). As we will also emphasize, the adoption and the deployment of QUIC are also useful cases to test claims about recent shifts in global digital governance, including the so-called “return of the state” (Haggart et al., 2021).

The plan of the article is the following. After a brief section presenting our methodology and theoretical framework, the article first explores QUIC’s standardization process and unpacks Google’s capabilities in the IETF. Indeed, this process has been presented in previous literature as an initiative led by Google, which could hardly be opposed by other industry actors, and had to ultimately be “absorbed” by the IETF (Harcourt et al., 2020). Recent interviews with active participants in the QUIC working group of the IETF are useful to add further nuances to the understanding of this process. This article explores how Google was able to gain the support of network operators and state officials from the beginning of the process in the IETF, despite early concerns about QUIC’s potential impact on their (security) practices and systems. Exploring three major controversies that have shaped this 5-year process, we will look, for instance, at the resolution of what has been called the “spin-bit” debate, opposing Google on the one hand and network operators on the other hand, over the quantity of data and conditions under which the metadata of packets could be made visible to network operators.

The second part of the article studies this process as a practice of “infrastructuring” the future of the Internet, discussing in particular its implications for state control over Internet traffic. QUIC was formally adopted at a time when many countries in the world were flexing their regulatory and policy muscles to defend their “digital sovereignty” in Internet standard setting (Perarnaud and Rossi, 2023). Despite QUIC’s clear technical and economic implications, state actors were perceived as rather passive and silent during this standardization process. Yet, the scale of states’ control over networks was at the center of the debates, and regularly used as justification for both limiting and expanding the encryption of traffic. Findings from the research fieldwork will inform how some sovereignty-minded claims from states fed into this process and how Google (successfully) navigated these competing demands, during and after QUIC’s standardization process. The conclusions will also be an occasion to highlight the ways in which the QUIC case can contribute to advance the conceptualization of “infrastructuring” in relation to digital networks and dynamics of standardization and sovereignty.

Methodology and theoretical framework

This article draws on desk research, mobilizing secondary literature and public mailing lists of the IETF, as well as six research interviews conducted during an in-person IETF meeting in November 2023, 3 and eight online interviews with participants of the IETF’s QUIC working group. 4 In studying this process, we aimed to include a variety of standpoints having contributed to the QUIC standardization process, including institutional representatives, academics, civil society, and private sector. Informed by recent studies on gender and diversity at the IETF (Cath, 2021), considerable attention has also been paid to ensure participation from women experts. 5

Combining conceptualizations and methods from different disciplines, this project mirrors the “interdisciplinary nature of standard-setting” (Becker et al., 2024). In terms of conceptual lenses, one of the ambitions of this project is to reconcile studies in political science that look at how power is exerted in standard-setting processes with approaches derived from science and technology studies (STS) and in particular from infrastructure studies. These approaches are useful to highlight how standards are used to leverage power outside of such standardization processes (Galloway, 2006), and how standard-developing organizations and their outputs are not static entities defined once and for all or faits accomplis, but dynamic and evolving entities (Flyverbom, 2011).

Thus, this research draws on political science scholarship to study the decision-making process of the IETF and to explore how controversies about states’ digital sovereignty engage actors endowed with asymmetrical resources (Harcourt et al., 2020)—human, financial, technical. When investigating the power of the state in the IETF, it is important to signal that only a few governments, primarily from Western and Asian countries (Cath, 2021), are active in such standardization processes, at times with different, and even divergent, interests to defend. Though we will refer for the sake of clarity to “states” and “state actors,” we recognize that they are neither homogeneous nor unitary. 6 In our case, states in IETF standardization processes will be primarily embodied by technical experts belonging to domestic security agencies from Europe and the United States.

Our STS perspective juxtaposes this scholarship with a perspective embedded in the so-called “material turn” in Internet studies (Casemajor, 2015) that intends to highlight the “materialities, matters and materials” associated with networked and digital processes, addressing their socio-technical, industrial or infrastructural, as well as spatial and territorial, aspects. 7

In this regard, the study draws on the literature on the “turn to infrastructure” in Internet governance (Musiani et al., 2016), which defines Internet infrastructure not just as the physical space of data centers, cables, and routers, but also as the “set of open protocols that connect the networks and devices together” (ten Oever, 2020). By surfacing the “invisible work” of infrastructure (Star, 1999; Star and Ruhleder, 1994) and “the social forces, interests, or ideologies that went into making them” (Harvey et al., 2016), we will explore in particular to what extent such Internet infrastructures can be promoted and “coopted” for other motives than the technical functionalities they initially aim to fulfill (Musiani et al., 2016). Furthermore, this research draws on the concept of infrastructuring (Blok et al., 2016; Karasti and Blomberg, 2018) and applies it, as some nascent works are starting to do, to the study of Internet governance and digital sovereignty (Musiani, 2022). The shift from infrastructures to infrastructuring is useful both theoretically and methodologically, in that it presents infrastructures as processes, practices, and settings that are both expansive and open-ended, “even as our starting point as researchers is made of ‘spatially, temporally and organizationally circumscribed’ case-study infrastructures” (Musiani, 2022). In our particular case, we have deliberately chosen to retain the term of infrastructuring, instead of “infrastructuralization” for instance, to zoom in “from structure to processes” (Grön et al., 2023). Infrastructuring as a concept highlights dynamic and processual dimensions (Karasti and Blomberg, 2018) that are better suited to capture the ever-evolving “process” and relations rooted in the standardization and implementation of QUIC.

In doing so, our focus will be placed on specific technical “controversies” that shaped the standardization process and its outcome, the way in which these controversies were resolved (or stabilized) by the actors participating in the IETF standardization process, and their implications for the Internet as a whole. In line with the STS literature that has sought to analyze, describe and map socio-technical controversies (see Venturini and Munk, 2021), and the literature that has sought in recent years to apply this approach to the study of Internet governance (Musiani, 2020), we conceive the management and governance of the Internet as being about “exercising control over particular functions of it that provide certain actors with the power and opportunity to act to their advantage; on the other hand, there is very rarely a single way to implement these functions or a single actor capable of controlling them” (Musiani, 2020: 92), which makes the Internet controversial and contested, both a target and an instrument of governance, and an object of interest for manifold actors. Controversies are observed as mechanisms that unveil different versions, according to different actors, of the worlds where notions of governance take place (Ziewitz and Pentzold, 2014). Three key controversies—QUIC’s fall back, cryptographic model, and spin bit—were identified through qualitative analysis of the QUIC IETF mailing list 8 and semi-structured interviews with 14 experts representing companies, civil society organizations, and the IETF leadership, who were directly involved in this process between 2015 and 2021.

Standardizing QUIC from the “shoulders of a giant”

Before discussing what QUIC means for the Internet as a whole, this section explores the standardization process of QUIC in order to understand how it appeared on the IETF’s agenda and unpack the capabilities of its main initiator (Google) and early supporters.

A quick genealogy of QUIC: Google in the driving seat

A central actor in this standardization process, Google is often presented as the primary initiator in the phases of design and rolling out of QUIC (Harcourt et al., 2020; ten Oever, 2021). The company had designed an earlier version of this protocol in-house (known as G-QUIC) for its own services and servers. G-QUIC had been enabled by default on Google Chrome, and used by other services of Google such as YouTube for several years prior to its presentation to the IETF. But Google identified strong incentives to proceed to its generalization at the global level via the development of a standard in the IETF. Harcourt et al. (2020) argue that Google’s motivations to standardize QUIC were commercial, regulatory, and technical, in resolving the latency and “ossification” of TCP. The process of ossification consists in the decreasing flexibility of the network which results in the inability to deploy a new protocol or protocol extensions due to the unchangeable nature of infrastructure components that have come to rely on a particular feature of the current protocols (ten Oever, 2021).

Throughout the interviews carried out with IETF experts, QUIC was presented as the much-awaited answer from the Internet community to a few fundamental challenges that limited their ability to improve the functioning of the network. Some of these challenges were related to the limitations of TCP, evidenced by the notorious “head-of-line blocking” problem. Head-of-line blocking occurs when there is a queue of data packets waiting to be transmitted in the network, but the packet at the head of the queue cannot move forward due to congestion, blocking the rest of the data packets behind (Lee, 2014). This problem also stems from the proliferation of middleboxes throughout the network (ten Oever, 2021), which had the effect of blocking or hindering the deployment of any new protocols or functionalities to improve the overall network performance. Middleboxes refer to the intermediary devices and services on the network, such as firewalls, routers, and network management devices, that can either facilitate or constrain connections.
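To make the head-of-line blocking problem more concrete, the following minimal Python sketch contrasts a single ordered stream (as with TCP, where data is handed to the application strictly in order) with independently ordered streams (the approach QUIC takes). The function names, the two stream labels, and the packet numbering are illustrative assumptions, not drawn from any specification or from the interviews.

```python
def deliverable_single_stream(received, total):
    """One ordered stream (TCP-like): delivery stops at the first missing packet."""
    delivered = []
    for seq in range(total):
        if seq not in received:
            break  # every later packet waits behind the gap
        delivered.append(seq)
    return delivered


def deliverable_per_stream(received, streams):
    """Ordering enforced per stream only (QUIC-like): a gap stalls one stream."""
    result = {}
    for name, seqs in streams.items():
        ready = []
        for seq in seqs:
            if seq not in received:
                break  # only this stream is held back
            ready.append(seq)
        result[name] = ready
    return result


# Hypothetical scenario: six packets carry two interleaved responses,
# and packet 1 (belonging to the "image" stream) is lost in transit.
streams = {"page": [0, 2, 4], "image": [1, 3, 5]}
received = {0, 2, 3, 4, 5}

single = deliverable_single_stream(received, 6)
per_stream = deliverable_per_stream(received, streams)
print(single)      # [0]
print(per_stream)  # {'page': [0, 2, 4], 'image': []}
```

In this toy scenario, the loss of one packet holds back five packets on the single ordered stream, whereas with per-stream ordering only the stream that actually lost data has to wait for a retransmission.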

QUIC thus proposed a fundamental change, which has even been called a “revolution” by some of our respondents, after decades-long reliance on TCP, the main transport protocol over the Internet. The interviews underlined that the degree of sophistication of the QUIC proposal to the IETF had only been possible thanks to the mobilization of a set of resources, that only a few actors in the Internet industry had at the time, in terms of human resources, technical means, and strategic vision.

Indeed, this new proposal was the result of a massive investment by Google in hiring leading engineers in their respective fields (cryptography, transport, congestion control, . . .) in the 2000s, mirroring a brain-drain strategy that Cisco had used in earlier times:

Google decided after looking at Cisco that they would hire and fold in thinkers and they would tell nobody what they were doing. No research contracts, no open research, nothing. Google’s thoughts were entirely private, and Google’s brain trust was the best on the planet. They hired a squadron of scary people [. . .] These were the icons of my time. They all worked for Google. And of course, scary, smart people have scary thoughts, [. . .] and Google keep it a secret and then just quietly implemented it bit by bit by bit.

9

While Jim Roskind is known as the main leader at Google behind the early development of QUIC, respondents also referred to Adam Langley for the cryptographic part in QUIC, or Jana Iyengar, who had been specifically hired by Google for his work on “Minion” at the IETF, a project that intended to optimize TCP. In answering the head-of-line blocking problem of TCP, Minion is said to have greatly inspired the development process of QUIC. 10

QUIC’s early design phase benefited from the ability of Google to “A/B test” its protocol through its infrastructure for years, both from client and server sides. These technical experiments were primarily incentivized by the fact that the expected decrease of latency that would result from QUIC was identified as a highly profitable opportunity for Google, due to the observed effect of latency on websites’ revenues. 11 Also, QUIC aimed at giving less visibility to network operators over the data passing through their infrastructure, while giving more control to the end nodes, and in particular web browsers (a market where Google is also heavily dominant). While initially there had been internal discussions within the company about keeping this protocol proprietary, since it meant possessing more efficient networks than its competitors (a crucial asset in particular for Google’s cloud offer), a final decision was made to present it publicly and standardize it at the IETF, due to the positive externalities that the global diffusion of QUIC would generate for the company’s business model as a whole.

As illustrated by this decision, QUIC was considered as one of the vehicles for advancing Google’s ambition to re-design the Internet architecture to the benefit of end points and low latency. This was shown by other previous or simultaneous attempts, such as SPDY and HTTP2 (Peacock, 2020). Indeed, QUIC replicated, at a greater scale, another initiative of Google in the field of the web. In the late 2000s, Google had developed in-house a protocol for the web, called SPDY, which was also designed to reduce latency for HTTP. 12 After many experimentations and optimizations, Google came to the IETF with the ambition to standardize SPDY, a process that ultimately led to HTTP2. The success of this standardization approach led Google to replicate it in the context of QUIC. What was different with QUIC, however, is that other companies, including Meta, rapidly experimented with QUIC (or similar technical solutions), which thus led to a more participatory standardization process (from the perspective of the industry involved in the IETF) than HTTP2. 13

After having presented the short history and incentives leading QUIC to be on the agenda of the IETF, the next section will describe the main points of contention that emerged from its standardization.

Resolving controversies in the IETF

The analysis of the IETF’s QUIC mailing list, as well as a series of interviews with participants of the QUIC working group, led to the identification of three key controversies (presented below), that shaped the outcome of its standardization process.

Fallback option

The first controversy is related to the fallback from QUIC, that is, the backup option to which one would need to revert when a QUIC connection fails. Such a fallback was explored for cases in which network operators would not want to accept QUIC traffic for various technical and security reasons. As underlined earlier, the reason QUIC was supported by many actors at the IETF was precisely for its potential to circumvent the “ossification” of the network, in other words, to act upon the relative powerlessness of many companies in the face of network operators which would block or alter the performance of new protocols being deployed.

QUIC relies primarily on UDP, instead of TCP, to transport communications. Google’s early experiments had shown that several network operators did not let UDP-based connections flow across their network, and would thus hinder the deployment of QUIC at a broader scale. Engineers at Google thus designed a fallback option, for when a network operator would not accept QUIC connections, allowing it to revert to TCP. This fallback option was a technical solution to a concrete deployment challenge, 14 but it also played a significant role in guaranteeing a relative consensus during the first stages of the IETF standardization process.
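The logic of this fallback can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how Chrome or any other implementation behaves: the exception `QuicBlocked` and the two connection callables are hypothetical stand-ins for a QUIC handshake over UDP and a TLS-over-TCP handshake.

```python
class QuicBlocked(Exception):
    """Hypothetical error: no QUIC handshake could be completed over UDP."""


def connect_with_fallback(try_quic, try_tcp):
    """Attempt QUIC first; revert to TCP if the network does not carry it."""
    try:
        return ("quic", try_quic())
    except QuicBlocked:
        # The operator dropped or blocked the UDP flow: fall back to TCP,
        # where the transport headers are visible to the network again.
        return ("tcp", try_tcp())


# Hypothetical stand-ins for real handshakes, so the sketch runs offline.
def blocked_udp():
    raise QuicBlocked("UDP dropped by the network")


def open_udp():
    return "quic session"


def tls_over_tcp():
    return "tls-over-tcp session"


transport, session = connect_with_fallback(blocked_udp, tls_over_tcp)
print(transport, session)  # tcp tls-over-tcp session
```

The sketch makes visible why the fallback mattered politically as well as technically: the decision about which transport is ultimately used sits with whoever controls the path, since blocking UDP is enough to push the connection back onto TCP.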

Indeed, as soon as QUIC was presented at the IETF in 2015, network operators and other parts of the Internet industry identified that this protocol could be a potential challenge to their business model, while US agencies were also closely monitoring this proposal. 15 By encrypting more data than TCP-based connections, and giving almost no visibility to network operators as to what was going on through their infrastructures, QUIC was immediately perceived as a threat by private-sector and state actors alike.

As a result, a few industrial actors voiced their concerns, both in the mailing list and during an IETF BoF (for “birds of a feather”) meeting. 16 They rapidly proposed a modification to the QUIC proposal in order to ensure that, for security purposes, QUIC traffic could be analyzed and properly managed by network operators. The immediate answer from the proponents of QUIC, which was then repeated several times throughout the standardization process, was to say that tempering QUIC and its encryption capabilities was not necessary, given that the “fallback option” would already give visibility to the network operators which would not want to accept QUIC traffic. In other words, if network operators did not like what QUIC did, they could easily turn off QUIC by blocking UDP ports.

Early in the process, this fall back was presented as the deal-maker for the rest of the industry, a form of red line to ensure a sustained consensus around this initiative. As described by a respondent,

The baseline starting point for QUIC was that it was going to be over UDP, not TCP. It’s going to have fall back to TLS over TCP [. . .]. That was table stakes. You don’t get into the conversation without those table stakes.

17

Network firewall device vendors were particularly active in these early stages of the discussion. Their incentive was that they wanted to demonstrate to their clients that they could block QUIC, if needed. The proponents of QUIC became concerned about this effort, and according to one of them, they decided to propose a specification explaining “how to identify a wire image as QUIC,” 18 so as to facilitate the work for network operators and firewalls. 19 The specification would allow them to recognize a new flow having these properties and block QUIC, if they wanted. Several respondents who were part of the QUIC working group justified this approach explicitly, so that the network operators would not block the standardization process as a whole. 20

As a result, it can be argued that Google’s technical design pre-empted the opposition of network operators, which may have otherwise considered QUIC as a direct threat to their business model and their ability to manage networks, by allowing them to block QUIC connections. According to one of the respondents, also active in the QUIC working group, network operators, and possibly a few governmental agencies, also identified that the fallback option might open valuable opportunities for carrying out individualized blocking:

the network operator can force the network into this state where they learn more and they have more ability to do individualized blocking and they can do more that way. So QUIC isn’t as powerful as it says it is in the face of a network adversary who’s willing to incur [limited] performances.

21

While this fallback option was not contested as such, it played an important role in defusing the opposition of a part of the industry. Throughout the standardization, it was presented by one of the driving forces in the process as an “escape hatch,” which would allow QUIC proponents to dismiss concerns expressed by network operators and prevent any obstacle to the standardization.

Cryptographic model

A second controversy identified from observation of the mailing list and interviews relates to the cryptographic model used by QUIC to encrypt communications.

Indeed, the first proposal of Google included its own proprietary cryptographic model—that had been developed for the purpose of this project—while the rest of the IETF participants were rather doubtful and wanted to integrate TLS 1.3, 22 which was being standardized in parallel. The choice of TLS 1.3 prevailed after several discussions on the list and during the first meetings of the working group. This issue was the primary focus in the early stage of the process and the first to be resolved, allowing the group to move on. The development of the cryptographic model for QUIC was done in part through RFC 9001 23 of the IETF on how to use TLS to secure QUIC.

As mentioned earlier, Google had mobilized world-leading experts in the design of G-QUIC, including Adam Langley, a leading engineer at Google considered as one of the most renowned cryptography experts at the time. In collaboration with other teams, they had devised several optimizations to TLS 1.2 into this new proprietary protocol. Interestingly, some of these innovations were later integrated into TLS 1.3, illustrating also how some of the features of TLS 1.3 were designed in anticipation for QUIC. 24 Yet, this proprietary model was seen as insufficient for the Internet as a whole. According to an interviewee, experts “realised early on through private discussions with Google was that their proprietary protocol was working for their experiment, but it would not have the legs once you would go to the internet scale.” 25

Google had a number of meetings with the leadership of the IETF to see what it would take to have a new transport protocol within the IETF and what would and would not be included in the final specifications. For instance, the congestion control piece was deemed “separable,” after consideration as to whether it would be part of the core of what is known as QUIC now. This discussion also addressed the case of the cryptographic model, which was eventually integrated into the core of QUIC. The question was whether encryption would be a “mandatory” part of the standard or not.

During the standardization of HTTP2, 26 a process on which QUIC was drawing to a large extent, a decision had been made not to make encryption a mandatory piece of the standard. After the 2013 Snowden revelations, it became untenable to opt for a similar choice, and encryption was considered by most as a defining and needed feature in the case of QUIC. According to an interviewee working in the Internet industry:

the underlying attitude persistent in the group in the way we approached the problem of encryption was shaped by the pervasive monitoring attacks that have been revealed, and also the fact that the security model was much easier to reason about once you had encryption in there. If you have encryption all the time, many things would get simpler, such as all the denial of service mitigation. The security features we had rested on the idea that you had encryption. Once that decision was made, we embraced it all the way. The only thing that came up was about packet number encryption, in the context of the spin bit debate.

27

Several interviewees pointed to a meeting of the QUIC working group in January 2018 in Australia, presented by a respondent as a “pivotal moment in the design when we realised we could encrypt everything, literally everything.” This refers to encrypting also the “sequence numbers,” 28 which would be in clear for TCP—and led in part to the spin bit controversy described in the following section.

Spin bit

The controversy around the spin bit was related to the lack of visibility that network operators would suffer from, as a result of the encryption of communication sequence numbers. Debates over QUIC led to weighing the privacy needs of users against industry needs to monitor networks (Cath, 2021). Indeed, QUIC prevents network “observers” from having access to the protocol header field, which refers to the metadata of the communication. In order to address the challenges raised by encrypting this field, a mechanism was considered whereby a single bit of data would be periodically shared “unencrypted” in order to help operators measure latency and the overall performance of their network.
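How little a single bit reveals, and how it can nonetheless support latency measurement, can be illustrated with a short Python sketch. In the mechanism as eventually specified in RFC 9000, the bit changes value once per round trip when both endpoints participate, so an on-path observer watching one direction of a flow can approximate the round-trip time as the interval between two successive changes. The function name and the trace below are illustrative assumptions; real measurements would also have to cope with packet loss and reordering, which this sketch ignores.

```python
def rtt_samples_from_spin_bit(observations):
    """Estimate round-trip times from spin-bit transitions seen in one direction.

    `observations` is a list of (timestamp_ms, spin_bit) pairs in arrival order.
    Each change of the bit marks an "edge"; the time between two consecutive
    edges approximates one round trip.
    """
    samples = []
    last_edge = None
    previous_bit = None
    for timestamp, bit in observations:
        if previous_bit is not None and bit != previous_bit:
            if last_edge is not None:
                samples.append(timestamp - last_edge)
            last_edge = timestamp
        previous_bit = bit
    return samples


# Hypothetical trace: timestamps in milliseconds, bit flipping every 50 ms.
trace = [(0, 0), (10, 0), (50, 1), (60, 1), (100, 0), (110, 0), (150, 1)]
samples = rtt_samples_from_spin_bit(trace)
print(samples)  # [50, 50]
```

The observer learns a latency estimate and nothing else about the content or sequencing of the flow, which is why the spin bit could be framed both as a minimal concession to operators and as the last remaining window into QUIC traffic.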

Until the end of the standardization process, this issue remained on the agenda of the working group. There were two main questions at stake: the first being about whether the IETF should create such a specification at all to give some visibility to network operators thanks to one “spinning bit” that would provide them periodically with information in clear; and the second regarding whether this spin bit specification should be considered optional or mandatory in QUIC’s future implementations.

For one of the respondents, network operators used this issue to create a precedent, in anticipation for other standards to come. A respondent explained that the technical controversy here seemed secondary to them—it was all about “sending a message.” This feature eventually materialized in the final specification, but as an optional one. Indeed, Google does not use it in Chrome, nor does Mozilla Firefox—while Apple, Microsoft, and Broadcom had for instance flagged their interest during the standardization process. 29 The IETF specification states that the spin bit is optional for end points to participate in, and both endpoints need to agree to participate before the mechanism becomes useful.

The spin bit was perceived by some as:

the last chance to see one bit left. [But now the] spin bit is all over. It is sealed, never going to happen that way. It’s hermetically sealed. You can’t see inside QUIC. You’re not allowed to. Because if you can see you can play. And if you play, you’re playing in someone else’s territory and they’re basically saying not your space.

30

Indeed, it appears that the spin bit was part of a bigger debate in the IETF in relation to the power balance between “end points” (the equipment of the end users) and network operators. This is well illustrated by another IETF process called Plus, intended to be a framework that would allow the network operators to communicate with end points and express their interests in the type of treatment happening in the network, so that end points could react accordingly. Plus was also expected to give the possibility to end points to ask the network to do something in exchange. But this process “died in [a] very messy way” according to one respondent. 31 Several IETF experts attacked this process on the basis that it was building software on the endpoints, and this was not in the interest of end users. The intent was to give network operators some “meta” control, but this eventually failed.

In the wake of this failure, the Internet Architecture Board (IAB) of the IETF proposed in the late 2010s a number of RFCs on the topic: RFC 8558 on how an end point can communicate with the network and vice versa, and RFC 8546 about what is available to network operators when two end points use encryption. These RFCs reflected on principles around how end points and the global network should communicate with each other; ultimately, the spin bit could be considered as a new manifestation of those intentions to support network operators in the face of more encrypted communications.

Outcome of the process: a silent revolution, with Google at its core

Several interviewees used this case to highlight Google’s omnipotence within the IETF and the company’s power to determine what is and is not possible in its working groups. Some experts elaborated on the lobbying work they had to do before some of the IETF’s formal meetings, hoping to be greenlighted by Google in the QUIC working group so that their own ideas would eventually feed into the final specification.

From most interviews with IETF experts, there is a general recognition that the outcome of the standardization process is not exactly what Google initially came up with, signaling an evolution of the technical proposal, encompassing more concerns. Yet, a respondent explained that this does not suggest Google’s lack of influence:

Google wasn’t trying to keep control of the protocol. Google wanted a change in the industry. It isn’t Google’s QUIC; it’s the entire upper level content folks saying it’s time this industry get to know where the money is and where the control is. 32

The development of QUIC is a process by which Google discreetly but effectively mobilized other large players, which realized that such a shift in the transport layer would benefit their entire industry. 33

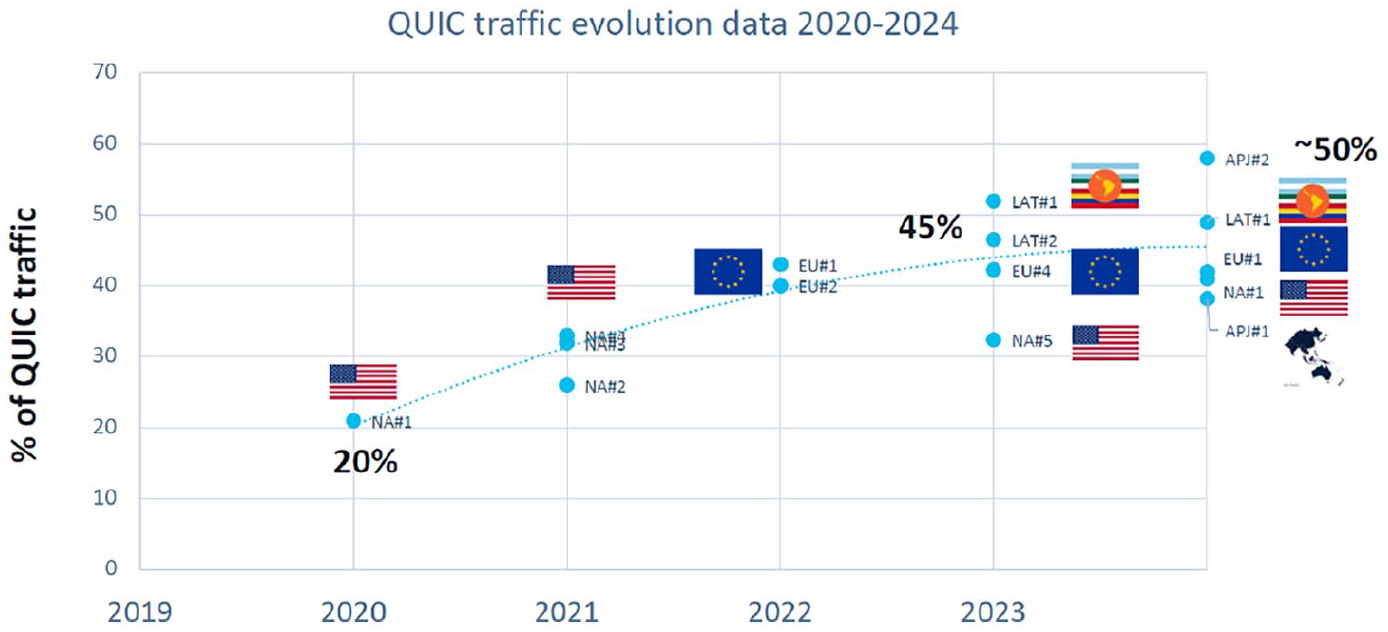

Indeed, key companies including Microsoft, Cloudflare, Ericsson, Akamai, and Facebook have deployed QUIC at scale since 2021 (IETF, 2021; Facebook, 2020). This growth led industry commentators to argue that QUIC will soon “eat the Internet” (Duke, 2021). A more recent account from Cisco (2024) indicates that Meta’s services (Instagram and Facebook) almost exclusively rely on QUIC, as does Google’s YouTube. In terms of flows, almost half of the traffic in Europe, the United States, Latin America, and the Asia Pacific region uses QUIC (as opposed to TCP), as illustrated in Figure 1.

Figure 1. QUIC traffic over time from 2020 to 2024.

Another interesting indication which may be drawn from this figure is that QUIC was a reality for the Internet, even prior to the finalization of its IETF specification. QUIC’s early deployment by Google already accounted for one-fifth of the Internet traffic in the United States. The process of standardization allowed it to scale and achieve a more global reach at a fast pace.

QUIC has, as of 2024, more than 20 implementations. 34

Google, Meta, Apple, Alibaba, Amazon, and Microsoft have developed their own implementations—which vary depending on their use cases and design choices—but are expected to share the core of the IETF specifications, to ensure interoperability at the level of UDP. According to a respondent, the vertical structure of most of these large companies means, however, that they have the possibility to diverge significantly in terms of their implementation, especially when they own the apps and the servers themselves. Indeed, a research participant stated that

it doesn’t actually matter what we do; and we may, or may not, conform to the idea. You can’t tell from the outside [. . .] I can do what I want. So how do you know that all these implementations follow the standard? And the answer is, they don’t. But there’s no enforcement mechanism anymore, because interoperability is really just interoperability at a different level. It’s UDP. 35

QUIC has now become a reality for the Internet, and appears—while, of course, facing a number of controversies, as analyzed in this article—to have operated a massive shift in the Internet industry with potentially long-lasting implications. The next section will explore what this means for the network as a whole and how to understand QUIC in the context of the various claims made by state actors around their digital sovereignty.

Infrastructuring network control in the shadow of states

QUIC was formally adopted at a time when many countries in the world, including in the EU, were advocating for their “digital sovereignty” in Internet standard-setting processes and beyond (Perarnaud and Rossi, 2023).

Yet, the analysis of QUIC’s formulation process, together with the explicit questions we put to our respondents, indicates that state actors were largely absent from these discussions. The issue of states’ control over networks was nonetheless at the center of the debates, and was regularly used as justification for both limiting and expanding encryption of the traffic.

States and QUIC: Google’s strategic pre-emption of digital sovereignty claims

Many of the innovative features of QUIC in comparison to TCP could be interpreted as a challenge to the ability of states (and national network operators) to analyze traffic and communications. According to a respondent, QUIC “doesn’t let the network operator inspect [. . .], and it is designed that way specifically to defeat certain kinds of state sovereignty.” 36

However, a careful reading of the QUIC mailing list does not suggest a significant involvement of state actors in this process. While US and UK agencies were seen as closely monitoring the standardization process, there was no clear hindrance from states to the development of QUIC. When we asked research participants about the absence of states from the mailing list despite the obvious impact of QUIC on their sovereignty, a few explained that Google had, in fact, pre-empted this debate from the very start of the process.

While QUIC proposes to encrypt all traffic, including the sequence numbers, Google had strategically proposed that if a network operator decides not to use QUIC (with UDP), it can use a default solution (the fallback presented earlier) offering better “visibility” of the network. In fact, this configuration offers even better visibility for such actors, due to a technical reason linked to the interaction between QUIC and TLS over TCP.

As we previously introduced, the proposed fallback was both a necessity for Google at the time, to ensure that all network operators and middleboxes would accept the traffic, and a strategic move to ensure limited opposition to QUIC’s deployment.

Several respondents who participated directly in the QUIC standardization process confirmed that the fallback offered them a crucial “escape hatch.” 37

One of them elaborated further by stating that

It actually became the escape hatch every time someone complained, we would say: ‘no, sorry, we don’t care. You can just block it on your network.’ And in reality, what everyone knew (and this is where it got a bit political, and it wasn’t said on the list) was that [. . .] in reality, what everyone knew was that QUIC worked so well that if, for example, an ISP started blocking QUIC in reality, their network wouldn’t work as well. 38

Control inversion: the indirect effect of QUIC’s global deployment for states

At first sight, this fallback option could indeed benefit network operators and thus states. It is, however, becoming increasingly difficult to use as QUIC rolls out across the network. In other words, it is much more difficult than before for a state to block QUIC (by blocking UDP) without a major impact on latency.

This too seems to have been anticipated by Google, but it was not part of the argument in the mailing list or in IETF meetings. In the words of a research respondent:

Now that QUIC is pretty widely deployed, it makes it much harder for the network operators to say we are going to block it now because now their network looks worse. It performs worse than the other network operators. 39

Research respondents suggested that as of early 2024, only a few ISPs blocked QUIC, and their actions remained marginal. QUIC is mostly blocked in the private networks of companies and schools, but much less so on the rest of the Internet. This can be explained by the real disincentive for states to slow the performance of their own networks.

However, the rapid and massive deployment of QUIC at the global level may further alter the ability of states to resort to this fallback. According to several interviewees, 40 the fact that QUIC is now fairly widely deployed grants even more power to its deployers. Indeed, informal discussions within these companies suggest that you could soon “imagine somebody removing the fall back. You could imagine a client that says I’m only willing to talk QUIC, or a service that only is willing to listen QUIC and not do TCP, TLS over TCP, and be willing to say failure is a hard failure.” 41 Another respondent added that, as media and streaming solutions are starting to be built for QUIC, it may “become too big to be an option for networks.”
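The stakes of removing the fallback can be illustrated with a short Python sketch of the client-side decision the respondents describe. It is a simplified model under stated assumptions, not the logic of any actual browser or service: the function `choose_transport`, its parameters, and the `HardFailure` exception are hypothetical names introduced for illustration, and a real client would probe the network rather than receive a boolean.

```python
# Illustrative sketch only; names and structure are hypothetical.
class HardFailure(Exception):
    """Raised when QUIC is unavailable and the client refuses to fall back."""


def choose_transport(udp_allowed: bool, allow_fallback: bool = True) -> str:
    """Pick the transport for a connection.

    udp_allowed: whether the network path lets QUIC's UDP traffic through.
    allow_fallback: whether the client accepts TLS over TCP instead.
    """
    if udp_allowed:
        return "QUIC over UDP"
    if allow_fallback:
        # The "escape hatch": a blocking network still gets a working connection.
        return "TLS over TCP"
    # A QUIC-only client: blocking UDP now breaks the service outright.
    raise HardFailure("QUIC is blocked and no fallback is permitted")


print(choose_transport(udp_allowed=True))
print(choose_transport(udp_allowed=False))
```

With the fallback in place, a network that blocks UDP merely degrades performance; with `allow_fallback=False`, the same block turns into a service outage that users are likely to attribute to the network rather than to the service.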

QUIC was accepted on the ground that network operators could resort to an alternative option if they disliked it; its wide deployment could now further invert the power balance to the benefit of QUIC’s deployers and may force states into a more active role in monitoring the Internet’s traffic. 42

This emphasizes how QUIC’s infrastructuring of new forms of network control cannot be dissociated from the specific contexts in which it is implemented and the various actors engaging with it. The built-in uncertainty as to the permanence of the fallback option indicates the dynamic and contextual nature of this process, which may also evolve as a function of the country- or region-specific relationships between public authorities, large technological companies, and Internet service providers.

“Google among the machines”—a new step in the evolution of the global Internet?

From a technical standpoint, QUIC is a fundamental evolution for the Internet’s core architecture. QUIC indeed brings transport and security to the application layer, 43 making most of the layered models that have historically represented the Internet 44 almost “irrelevant abstractions.” 45 This shift invites a discussion of its implications for the process of Internet consolidation.

During its standardization process, QUIC inevitably raised concerns of consolidation, in part due to the very choice of Google’s initial brand name for this protocol. Those concerns are still heavily debated by experts. 46

The literature review and research fieldwork nonetheless show that QUIC both makes invisible and centralizes most network operations, and thus structurally favors actors sitting at both end points of the traffic (ten Oever, 2021). According to an interviewee, “Google has been the fastest to realise” the potential of such a technical design “and has literally gone way out on a limb” 47 with QUIC. Similarly, IETF expert Huston (2024) publicly commented that QUIC is “pushing both network carriage and host platform into commodity roles in networking and allowing applications to effectively customize the way in which they want to deliver services and dominating the entire networked environment.” 48

According to another respondent, an academic researcher, the design of QUIC fosters a form of centralization of control by the destination network:

As the recipient of the QUIC’s ID connection packets, [. . .] it’s just the destination network that has control, which can have control over routing, but everything in the middle, the whole transit network [. . .] loses a little sovereignty at that level. [. . .] it’s clearly the destination networks, so everything that’s CDN, everything that’s Google, Microsoft and others, that is going to take control. 49

In a seemingly Darwinian process, 50 QUIC provides a case in which the content/application industry appears to have attempted to conclusively take over the “lower-layers” carriers industry, in a strategic and potentially irreversible way. 51 From a technical perspective, QUIC is said to be characterized by much more vertical integration than other protocols, in part as a result of the encryption piece. 52

In this process, the arguments of limited latency and stronger privacy were used simultaneously by QUIC proponents, underscoring how some of the primary actors that trade personal data for “free” services, such as Google and Meta, did not shy away from using privacy motives to blind network operators from their traffic. Civil society representatives were often met with this conundrum of supporting large companies for privacy purposes in the context of QUIC, noting that while “we are fighting for people’s rights to communicate over the Internet, in some cases, that ends up being people’s rights to communicate with Google.” 53

It transpires from these examples that QUIC appears particularly suited to networks and services with large resources and control over both clients and servers, which can implement it at scale. As underlined by a respondent: “If you want to manage QUIC connections and offer a QUIC interface, in other words, a better user service, you need to be powerful.” 54

This is further explained by an engineer working for Meta, when commenting on the overall speed of adoption of this protocol by the industry:

We are able to achieve such high numbers [close to 100% usage of QUIC by Meta] due to the fact that we control the client and server for the vast majority of our traffic and so we can more proactively use QUIC. For third party clients who use more cautious policies, the numbers are not as high. 55

To be deployed effectively and harness its latency gains optimally, QUIC indeed benefits structures with large server farms and control points on both ends of the network, which Internet giants typically possess. This is not the case for other companies that, if they cannot (or do not want to) outsource this part to those large providers, may not be able to transition to QUIC. The limited adoption by smaller actors (as of 2024) is also related to the lack of technical support and updates available to implement QUIC in many server environments, 56 as well as the limited awareness, or even appetite, within companies (both small and large) for a protocol with direct implications for the stability and security of their private networks. 57

Many actors will need to continue relying on TCP, but at the cost of being sub-optimal from a network perspective in comparison to the most well-known services such as YouTube. 58 This indicates that QUIC may not completely replace TCP in Internet traffic, as both will likely coexist in the medium term due to the mixed incentives of the great range of actors active on the Internet. Yet, this shows how QUIC also indirectly fuels a form of Internet consolidation and concentration, by limiting, via the Internet infrastructure itself, the capacity of smaller actors to compete with larger ones.

Conclusion

This article has analyzed the processes of development and deployment of QUIC, an IETF standard that is arguably fostering a momentous architectural change in the ways in which communication and data packets transport happens on the Internet. We have investigated the QUIC standardization process as a site of “infrastructuring” where different socio-technical dynamics emerge between various actors—the giants of “Big Tech,” notable technologists, other actors of the Internet industry, as well as nation states—the latter as crucial, yet often silenced, entities.

We have sought to demonstrate how the QUIC process “infrastructures” reconfigurations in power balances and the respective weight between those actors, with potentially long-term consequences for Internet governance. In doing so, we have paid special attention to three socio-technical controversies around particular aspects of the QUIC standardization processes, and their performative role in contributing to define “in practice” the implementation of particular visions of the network of networks and its openness, architecture, and affordances.

Ultimately, this research contributes to unveiling a number of insights related to the place of the private sector in standardization processes; the extent to which processes of consolidation and concentration of the Internet around the “big ones who get even bigger” are influenced by the making of standards; and the extent to which discussions and controversies on specific technical aspects of a standard actually reconfigure broader balances of power and decision-making for the present and near future of the Internet.

While infrastructures are generally considered as the domain of the invisible (Star, 1999), this research offers an interesting instance where the heterogeneous visibility offered by Internet infrastructures over communications becomes itself the main subject of contention. In doing so, this article thus partially answers previous calls in infrastructure studies, made for instance by Harvey and colleagues, to observe infrastructural systems from the perspective of the “patterns of visibility and invisibility to which they give rise” (Harvey et al., 2016). These insights ultimately speak back to the very concept of “infrastructuring” and suggest further ways in which the evolving and processual dimension that is at the core of the shift from “infrastructure” to “infrastructuring” can be traced back and made explicit by fieldwork, when this concept supports the analysis of the Internet and networked artifacts.

For scholars of digital sovereignty, and of how this multi-faceted and at times ambiguous concept gets embedded in infrastructures and standards, the QUIC case prompts further questions: can “digital sovereignty” of private actors exist, and have large technological companies, with QUIC, taken one more step toward their own digital sovereignty? To what extent are digital sovereignty claims becoming an explicit part of IETF discussions and, more generally, of standardization processes? As the literature on both “infrastructuring” and digital sovereignty keeps on growing, such questions will no doubt find an increasingly important place on the research agenda, and speak to the ways in which Internet governance evolves and will be studied in the years to come.

Footnotes

Appendix

Affiliation of the research respondents.

| Category | Affiliations |
| --- | --- |
| Private sector | Cisco, Cloudflare, dVide, Ericsson, Google, Mozilla |
| NGO/non-profit | Center for Democracy and Technology (CDT), American Civil Liberties Union (ACLU), FreeBSD |
| Technical organizations | AFNIC, APNIC, Internet Society |
| Academia | University of Mons, University of Amsterdam |

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was made possible with the support of the Brussels School of Governance (BSoG-VUB), the Research Foundation – Flanders (FWO, Belgium), the project DIGISOV funded by the French Agence Nationale de la Recherche, the Centre national de la recherche scientifique (CNRS, France) and the DNS Research Federation.