Abstract

This study investigates the association of socioeconomic status (SES) and digital and AI literacy with types of ChatGPT use by college students, with subsequent implications for academic self-efficacy and creativity, conditioned by trust. Analyses of a survey of U.S. college students (N = 947) show that SES has a greater association with AI literacy than with general digital literacy. Two dimensions of ChatGPT activities emerge: academic support and displacement. Structural equation modeling reveals that AI literacy is positively associated with both activity dimensions, while digital literacy is unexpectedly a negative contributor. Further, academic support is strongly linked to positive outcomes whereas academic displacement is negatively associated. Attitudinal trust in ChatGPT moderates the overall relationships. Our findings suggest that conventional digital inequality persists and evolves with generative AI, traditional digital literacy becomes insufficient in the age of AI, and trust in this new and opaque digital technology influences these relationships.

Chat Generative Pre-Trained Transformer (ChatGPT), the conversational AI released by OpenAI on November 30, 2022, rapidly accrued over 100 million active users within 2 months after its initial launch (Halaweh, 2023). In the context of college education, a recent survey showed that 30% of U.S. college students had used ChatGPT to complete written assignments; 60% of those reported using it for more than 50% of their written assignments (Intelligent, 2023). Some scholars assert that ChatGPT can reduce educational inequality by providing low-cost personalized tutoring services and learning assistance (Baidoo-Anu et al., 2023; Cotton et al., 2023; Kasneci et al., 2023; Sallam, 2023). Others are concerned that a digital divide in this domain may emerge (Fui-Hoon Nah et al., 2023; Mhlanga, 2023), as digital innovations often amplify underlying human and institutional forces, reinforcing existing inequalities (Toyama, 2011; Van Dijk, 2020). These inequities may occur in associations of SES with three levels of the digital divide: skills, use, and outcomes (Van Dijk, 2020; Van Deursen and Van Dijk, 2019). Further, trust in ChatGPT may influence these relationships. Applying the digital divide framework, this study explores how central influences of socioeconomic status and digital and AI literacy are associated with engagement in a wide range of reported ChatGPT uses/activities and in turn related to two important educational outcomes, academic self-efficacy and creativity.

ChatGPT controversy in college education

The ongoing controversy about ChatGPT focuses on its potential to both support and displace learning in educational settings. On the positive side, ChatGPT can enhance task efficiency (Halaweh, 2023; Sallam, 2023), encourage student engagement (Cotton et al., 2023; Kasneci et al., 2023), improve writing skills (Halaweh, 2023), facilitate information seeking (Arif et al., 2023), and supply personalized tutoring (Baidoo-Anu et al., 2023).

However, others emphasize that ChatGPT is inherently limited in content quality, accuracy, and validity (Baidoo-Anu et al., 2023; Sallam, 2023), which can mislead students. In addition, concerns abound regarding its potential for misuse and overreliance (Fui-Hoon Nah et al., 2023; Kasneci et al., 2023), thereby hindering learning outcomes.

Thus, a vital question emerges: does existing digital inequality persist in the context of ChatGPT use? Supporters champion ChatGPT because of its low-cost access: those who lack access to educational resources that require memberships (e.g. Coursera and Grammarly) or are unevenly available geographically can receive personalized assistance in a cost-efficient way (Baidoo-Anu et al., 2023; Haque et al., 2022; Kasneci et al., 2023). The user-friendly interface also makes it easy for students with lower levels of digital literacy to access information that would otherwise require more sophisticated knowledge and skills such as coding.

On the other hand, past research on the digital divide has highlighted inequality in access to, skills necessary for, and use of, information and communication technology and their associated outcomes (Van Dijk, 2020). In contrast to the utopian view of technology as an automatic force for democracy and equality (critiqued by Katz and Rice’s, 2002, syntopian perspective) and digital saturation (Norris, 2001), digital divide research suggests that technology can amplify underlying human and institutional intent and capacity; as such, it tends to exacerbate existing inequalities (Toyama, 2011; Van Dijk, 2020). As a key contributor, individuals’ socioeconomic status (SES), encompassing education, occupational prestige, and economic resources (Brooks et al., 2011; Duncan et al., 1972), often influences how individuals understand, use, and benefit from technology. Those with greater initial SES are more likely to have earlier and greater access (Katz and Rice, 2002), develop more knowledge and skills (Hargittai, 2002), engage in more information-based or capital-enhancing uses and activities (Hargittai, 2010; Hargittai and Hinnant, 2008; Helsper and Eynon, 2010), and reap more real-life outcomes and benefits than those with lower initial SES (e.g. making new friends, expressing political opinions; Van Deursen and Helsper, 2015), thus increasing social inequality. Accordingly, the use of ChatGPT can exacerbate fairness issues by putting non-users at a disadvantage (Cotton et al., 2023), ultimately reinforcing digital inequality (Fui-Hoon Nah et al., 2023; Mhlanga, 2023).

Owing to the contrasting perspectives in the digital divide literature, and the lack of research on ChatGPT and college students specifically, we hypothesize and test associations among SES, skills, use/activities, and outcomes in the context of ChatGPT use by college students.

Contributors to ChatGPT inequality

SES and literacy

Two forms of literacy emerge in the discussion of ChatGPT skills inequality: general digital literacy and AI literacy. The two constructs are related, as general digital literacy can provide a foundation for AI literacy (Olari and Romeike, 2021). But they are also distinct because users who are proficient at traditional digital skills may not possess the new set of knowledge and skills (e.g. awareness of algorithmic profiling and bias, and potential for inaccuracies) required by AI technology. Further, experience with AI-related technology does not guarantee proficiency with other digital platforms. Given this conceptual and practical divergence, we test how both types of literacy are associated with SES and ChatGPT use.

Digital literacy

Digital literacy refers to “the awareness, attitude and ability of individuals to appropriately use and interact with digital technology (tools) to easily and effectively access information in different formats (e.g. text, videos and images) in a digital environment” (Nikou et al., 2022: 373). Digital literacy focuses on users’ overarching abilities to utilize conventional digital applications such as email, websites, and social media (Van Deursen et al., 2016). Extant research consistently shows that individuals with higher SES are more likely to possess higher digital literacy (Hargittai, 2002, 2010; Hargittai and Hinnant, 2008; Helsper and Eynon, 2010; Van Deursen and Van Dijk, 2014), which in turn is associated with more perceived technology usefulness, less difficulty in use, and more professional development and lifelong learning (Kohnke, 2017; Mohammadyari and Singh, 2015).

AI literacy

AI literacy reflects the unique knowledge, skills, and understanding required to interact with AI technologies. The introduction of AI-based technology and applications creates new challenges for individuals (Carter et al., 2020). Definitions of AI literacy (e.g. Carter et al., 2020; Cetindamar et al., 2022; Laupichler et al., 2022; Long and Magerko, 2020; Ng et al., 2021) emphasize the need to take both a technical and a social perspective. People’s abilities to critically utilize and evaluate AI contribute to the successful appropriation of AI into individuals’ personal, social, and professional lives (Yi, 2021). AI literacy is expected to be influenced by SES based on prior research on the skills level of the digital divide. Druga et al. (2019) observed a significant difference in the ability to understand AI concepts between children from low- and moderate-income schools and those from high-income schools, because the latter had more experience coding and using advanced technologies. In an investigation of AI-mediated tools (Goldenthal et al., 2021), household income and device access were positively associated with the extent to which people were comfortable with AI.

SES and activities

SES is typically also associated with variations in users’ digital activities (Van Dijk, 2020). However, limited research has been conducted to examine how college students use ChatGPT for various activities and how SES is associated with such use. As noted above, although AI has the potential to provide students with personalized learning support as intelligent tutors and learning partners (Hwang et al., 2020), the capabilities of ChatGPT for content generation have raised concerns about its misuse (Fui-Hoon Nah et al., 2023).

In response, educators recommend managed use. For example, Halaweh (2023) encouraged reverse searching, through which students use search engines to find support for ChatGPT content, and Arif et al. (2023) commented that ChatGPT can be used to assist reviewing literature, structuring writing, and paraphrasing, but it should not be used to generate the overall framework or text. Table 1 summarizes common educational activities of ChatGPT discussed in the existing literature and how they have been considered as recommended (a tool used with critical thinking thus supporting learning) or not recommended (a tool used without critical thinking thus displacing learning).

Common ChatGPT activities in educational settings.

We assess such ChatGPT activities by asking students to report the frequency of their use and analyze those responses to identify possible activity dimensions.

Literacy and activities

The paradox between the “black box” nature of AI, as represented by autonomy, machine learning, and inscrutability (Berente et al., 2021), and its surface-level accessibility, as characterized by its user-friendly interface, natural interactions, and low cost, has led to debates on how lay people should prepare for the AI era. With the user-friendly format of chat-based interactions, people may indeed need only general digital literacy to navigate ChatGPT, without knowing the underlying AI mechanisms (Wang et al., 2022).

Conversely, others argue that AI-specific literacy is useful to appropriately understand and manage ChatGPT’s capabilities, acceptability, and outcomes (Fui-Hoon Nah et al., 2023; Mhlanga, 2023), necessary for effective applications and evaluations of AI (Ng et al., 2021). That knowledge and use are inseparable also corresponds with the underpinning logic of explainable AI, which attempts to provide the reasoning behind AI actions and decisions that can facilitate human trust in AI (Fui-Hoon Nah et al., 2023). With the large discrepancy between AI’s complexity and its usability, it is uncertain which form of literacy is more associated with ChatGPT’s activities.

Educational outcomes of ChatGPT use

We examine two learning outcomes of great scholarly interest in this debate: academic self-efficacy (ASE) and perceived academic creativity (PAC). ASE, defined as individuals’ beliefs in their abilities to attain educational goals, has been identified as a dependable indicator of academic success (Honicke and Broadbent, 2016). One way that ChatGPT use can mitigate educational inequality is by fostering a sense of empowerment and agency (i.e. increasing efficacy) through being able to obtain substantial related material in an organized form. However, delegating tasks to ChatGPT may instead make individuals feel less capable and engaged in learning (i.e. decreasing efficacy). PAC, as a combination of solution originality, novelty, and usefulness, is another indicator of academic achievement (Gajda et al., 2017). Because ChatGPT can generate ideas and present new information and viewpoints, it may serve as a starting point and elicit more creative thoughts; however, some scholars are concerned that ChatGPT may replace students’ creativity and originality.

The moderating role of trust

Finally, individual differences such as trust may be associated with the way people engage with AI technology and experience outcomes. Trust plays a pivotal role in digital literacy and use, as “an attitude of confident expectation in an online situation of risk that one’s vulnerabilities will not be exploited” (Corritore et al., 2003: 740). In the educational context, ChatGPT’s trustworthiness is primarily assessed based on information quality, with perceptions of anthropomorphic intelligence supporting user trust through interactivity and social intelligence (Sun et al., 2024). Extant studies mainly investigate the direct effects of trust, as seen in the Modality-Agency-Interactivity-Navigability model (Sundar, 2008) and technology acceptance research (Wu et al., 2011). For example, Tossell et al. (2024) found perceived trustworthiness of ChatGPT predicted students’ intent to rely upon it for future educational purposes. Likewise, Choudhury and Shamszare (2023) demonstrated that trust in ChatGPT predicted people’s intent to use and actual use of it. More subtly, trust can mediate between perceptions and prior experiences with, and use and evaluation of, an online technology (Corritore et al., 2003).

We extend this research by proposing trust as a qualifying condition for relationships with the uses and outcomes of ChatGPT. As trust signals willingness to rely on a technological device (Molina and Sundar, 2022), the associations of digital literacy and AI literacy with ChatGPT activities may be stronger among students who trust it more. Similarly, ChatGPT use may be more strongly associated with reported outcomes when the user trusts this inscrutable resource. Only one existing study on ChatGPT treated trust as a moderator, remarking that perceived enjoyment was related to positive attitudes toward and intentions to use ChatGPT only under the condition of high trust (Rahman et al., 2023), yet that study did not investigate ChatGPT use.

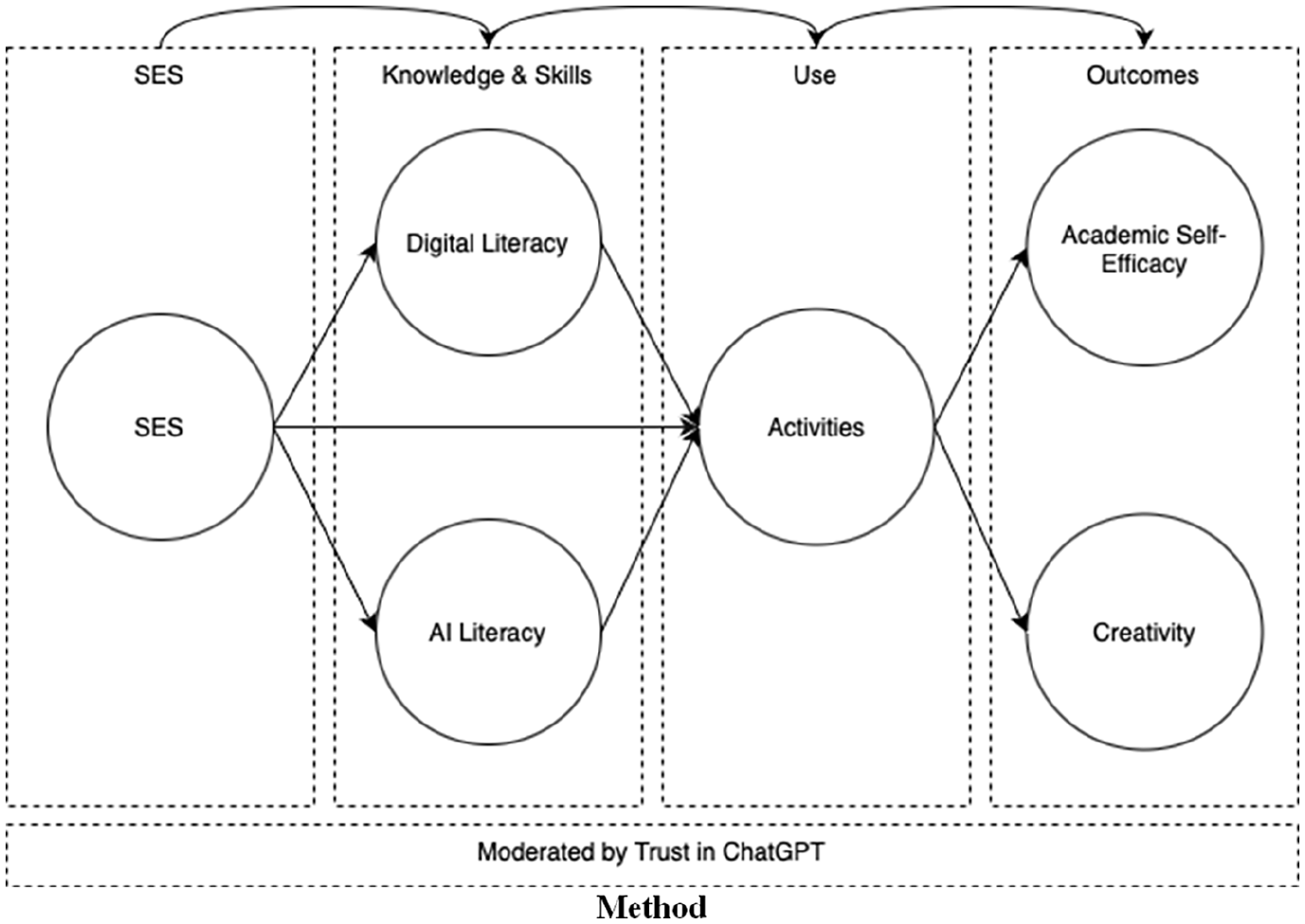

Figure 1 presents the conceptual model. Given the purpose of model testing, a large-scale quantitative survey was the most suitable methodological choice for examining multiple relationships within a large sample of relevant respondents. This approach aligns with the quantitative research tradition in the study of the digital divide (Hargittai and Hinnant, 2008; Katz and Rice, 2002; Van Dijk, 2020; Van Deursen and Van Dijk, 2014).

The conceptual model depicting potential inequalities in the context of ChatGPT.

Method

Procedure

All survey measures were pilot-tested with a sample of college students (N = 360) at one university from October 9 to November 14 to assess measurement validity and reliability (details available from the first author). Students received research credit for their participation. The pilot survey also provided two open-ended comment boxes and asked participants to fill in other schoolwork-related activities not listed; however, no other distinct activities emerged, indicating that the list from Table 1 offered good coverage. In the full study, the participants were informed of the general purpose of this study, provided online consent, and proceeded to the main questionnaire, which took approximately 9 minutes. Their participation was compensated by Qualtrics. Both studies received human subjects research authorization.

Participants

For the full study, we collected a purposive, non-representative national sample of U.S. college students by recruiting participants through Qualtrics panelist pools during November 2023. The sample consisted of 947 ChatGPT users, all adult U.S. college students (limited to 18 through 35 years old) enrolled at 4-year colleges/universities (excluding city/community colleges, online programs, and international exchange students).

Measures

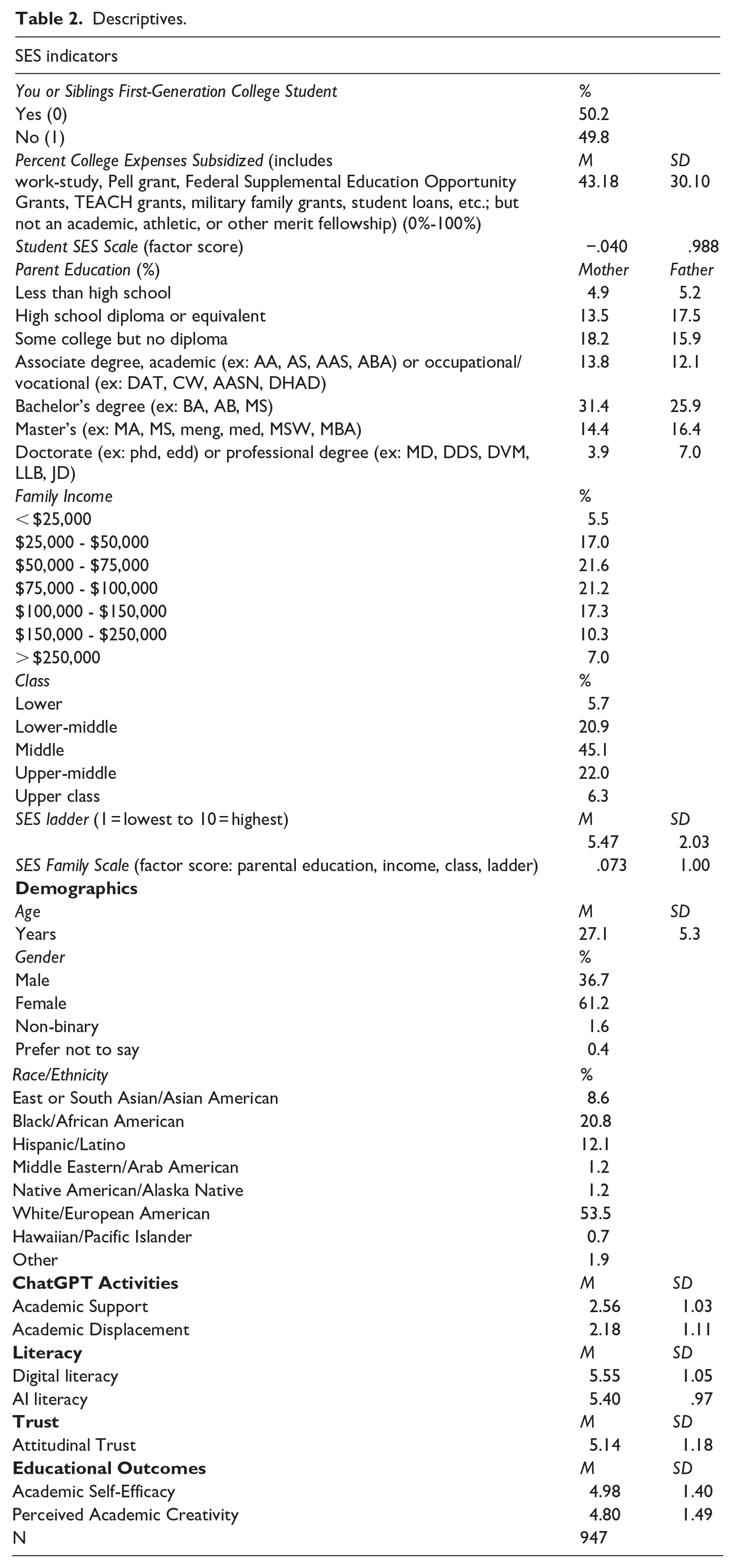

Table 2 provides sociodemographic information and scale descriptive statistics. Scale reliability was assessed using McDonald’s Omega generated with maximum likelihood, an approach that overcomes some shortcomings of Cronbach’s alpha (Hayes and Coutts, 2020).
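As an illustration of the reliability coefficient, the following is a minimal sketch of how omega can be computed from the standardized loadings of a one-factor model, assuming uncorrelated residuals. The loadings are hypothetical and are not taken from this study, and the function name `mcdonald_omega` is introduced here only for illustration.

```python
def mcdonald_omega(loadings):
    """Omega for a single-factor model with standardized loadings.

    Assumes uncorrelated residuals, so each item's residual variance
    is 1 - loading**2.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    common = sum(loadings) ** 2                      # variance due to the factor
    residual = sum(1.0 - l ** 2 for l in loadings)   # summed unique variances
    return common / (common + residual)


# Hypothetical loadings for a four-item scale (illustration only).
example_loadings = [0.80, 0.80, 0.80, 0.80]
omega = mcdonald_omega(example_loadings)
print(round(omega, 3))
```

Unlike Cronbach's alpha, this calculation does not require the items to load equally on the factor, which is one of the shortcomings it is meant to address.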

Descriptives.

Socioeconomic status

Based upon an extensive review of literature on SES measures (please see Supplemental Appendix A for a summary), and analysis of preliminary measures in the pilot study, SES was measured by 7 questions concerning first-generation college student status, percentage of student loans, parental education, estimated family income, perceived family social class, and socioeconomic ladder. We collapsed infrequent categories and recoded reversed items so that higher values on each item represented higher SES. Exploratory factor analysis (EFA) revealed two dimensions, with sufficient indicator loadings onto the respective dimensions (Howard, 2016). The first factor was family SES, containing 5 measures (mother education, father education, estimated family income, perceived family social class, and socioeconomic ladder; Omega = .72). The second factor was student SES (first-generation college student status, percentage of student loans; Spearman’s rho = .16, p < .001). Accordingly, two factor scores were generated (r = .19, p < .001). This indicates that students’ financial, social, and cultural resources within college can be relatively independent from their family SES, at least in the case of U.S. college students.
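The recoding step can be sketched as follows. This is only an illustration under stated assumptions: the five-point loan item and its response values are hypothetical, and the helper name `reverse_code` is ours rather than part of the study's materials.

```python
def reverse_code(value, scale_min, scale_max):
    """Flip an item so that the lowest response becomes the highest."""
    if not scale_min <= value <= scale_max:
        raise ValueError("value lies outside the response scale")
    return scale_min + scale_max - value


# Hypothetical item: share of costs covered by student loans, coded 1-5,
# where 5 means the largest share (and thus lower SES before recoding).
raw_loan_item = [1, 3, 5, 2]
recoded_loan_item = [reverse_code(v, 1, 5) for v in raw_loan_item]
print(recoded_loan_item)  # higher values now indicate higher SES
```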

Literacy: digital literacy and AI literacy

Ng’s (2012) digital literacy scale included 10 items representing three dimensions (technical, cognitive, and socio-emotional; Table 2, p. 1070) and has been used widely in educational contexts; Nikou et al. (2022) adapted it by dropping one item about collaboration. We further dropped one item about collaboration and included 8 seven-point Likert-type items that focus on individuals’ digital literacy (Omega = .90).

AI literacy was measured with 12 seven-point Likert-type items from the AI Literacy Scale developed by Wang et al. (2022), encompassing four subdimensions: awareness, usage, evaluation, and ethics. Although the scale is new, Celik’s (2023) study corroborated its reliability. We performed confirmatory factor analysis (CFA) based on the cutoff values (RMSEA ⩽ .06, SRMR ⩽ .08, and CFI ⩾ .95) proposed by Hu and Bentler (1999). Because the awareness and evaluation dimensions were highly correlated (Brown, 2015), r = .92, we removed the awareness dimension and attained an excellent model fit (RMSEA = .03, CFI = .99, SRMR = .02), with strong factor loadings (> .7). Because the second-order CFA model fitted well, AI literacy was examined on the second level (Omega = .89).
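The cutoff check described above can be expressed as a short sketch. The thresholds are those cited from Hu and Bentler (1999) and the fit values are those reported for the AI literacy CFA; the function name `check_fit` and the dictionary layout are assumptions made for illustration.

```python
# Cutoffs cited in the text: RMSEA <= .06, SRMR <= .08, CFI >= .95.
CUTOFFS = {"rmsea": 0.06, "srmr": 0.08, "cfi": 0.95}


def check_fit(rmsea, cfi, srmr):
    """Return, per index, whether the value meets its cutoff."""
    return {
        "rmsea": rmsea <= CUTOFFS["rmsea"],  # lower is better
        "srmr": srmr <= CUTOFFS["srmr"],     # lower is better
        "cfi": cfi >= CUTOFFS["cfi"],        # higher is better
    }


# Fit reported for the AI literacy CFA after removing the awareness dimension.
ai_literacy_fit = check_fit(rmsea=0.03, cfi=0.99, srmr=0.02)
print(ai_literacy_fit)
```

Reporting each index separately, rather than a single pass/fail flag, keeps visible which criterion a model misses when it does not meet all three.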

Use: ChatGPT academic activities

Participants indicated their frequency of using ChatGPT for the 12 schoolwork activities mentioned above on a 5-point scale (1 = Not at all; 5 = Every day). See Table 3 for activity frequencies.

ChatGPT activity frequencies.

Outcomes: academic self-efficacy and perceived academic creativity

ChatGPT users reported the extent to which they believed ChatGPT improved their ASE with 4 seven-point Likert-type items adapted from the General Academic Self-Efficacy Scale by Van Zyl et al. (2022) (Omega = .89, loadings > .75). Also, they reported the extent to which they believed ChatGPT enhanced their PAC, such as “I more often have new and innovative ideas in my schoolwork because I use ChatGPT,” with 4 seven-point Likert-type items adapted from positive items from the Self-Rated Creativity Scale by Tan and Ong (2019) (Omega = .91, loadings > .8).

Moderator: trust

Trust was measured with 4 seven-point Likert-type items from the attitudinal trust measure employed by Molina and Sundar (2022) regarding how much participants think ChatGPT is credible, reliable, accurate, and factual (Omega = .88, loadings > .8).

Demographics

For gender, respondents indicated whether they identified as male, female, or non-binary. As the percentage for the last category is small (< 1.6%), only male (0) and female (1) were included in the analysis. For racial-ethnic backgrounds, the survey provided seven common categories, along with “other.” After collapsing categories with small sizes (< 1.9%) into “other,” the sample consisted of White/European American (53.5%), Black/African American (20.8%), Hispanic/Latino (12.1%), and East or South Asian/Asian American (8.6%) (see Table 2).

Table 4 displays correlations among the main variables.

Pearson correlations.

p < .01, ** p < .05; two-tailed.

Analyses

Based on Osborne’s (2013) criterion that skewness and kurtosis with absolute values smaller than 1 should not raise concern, several variables demonstrated slight violations of the normality assumption (maximum skewness = −1.78, maximum kurtosis = −2.00). Therefore, we employed Maximum Likelihood Estimation with Robust Standard Errors (MLR; Satorra and Bentler, 1994) due to its robustness against non-normality. CFA suggested the measurement model provided a good fit, RMSEA = .04, CFI = .96, SRMR = .05. Structural equation modeling (SEM) was conducted using Mplus 8.10. For moderation, we conducted a split sample path analysis (low vs high trust) in consideration of the sample sizes and model complexity. Although the significance of moderation/interaction terms cannot be tested with this approach, it provides an overview of noticeable differences in path directionality and magnitude.
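The normality screen can be illustrated with a minimal sketch that computes moment-based skewness and excess kurtosis and compares their absolute values with the threshold of 1. Statistical packages often apply small-sample bias corrections that this sketch omits, so its values may differ slightly from software output; the data and function names are hypothetical.

```python
def _central_moment(values, order):
    """Population central moment of the given order."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** order for v in values) / len(values)


def skewness(values):
    m2 = _central_moment(values, 2)
    if m2 == 0:
        raise ValueError("skewness is undefined for constant data")
    return _central_moment(values, 3) / m2 ** 1.5


def excess_kurtosis(values):
    m2 = _central_moment(values, 2)
    if m2 == 0:
        raise ValueError("kurtosis is undefined for constant data")
    return _central_moment(values, 4) / m2 ** 2 - 3.0


def exceeds_threshold(values, threshold=1.0):
    """True when |skewness| or |excess kurtosis| reaches the threshold."""
    return (abs(skewness(values)) >= threshold
            or abs(excess_kurtosis(values)) >= threshold)


# Hypothetical item responses piled up at the low end of a 7-point scale.
responses = [1, 1, 1, 1, 7]
print(round(skewness(responses), 2), exceeds_threshold(responses))
```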

Results

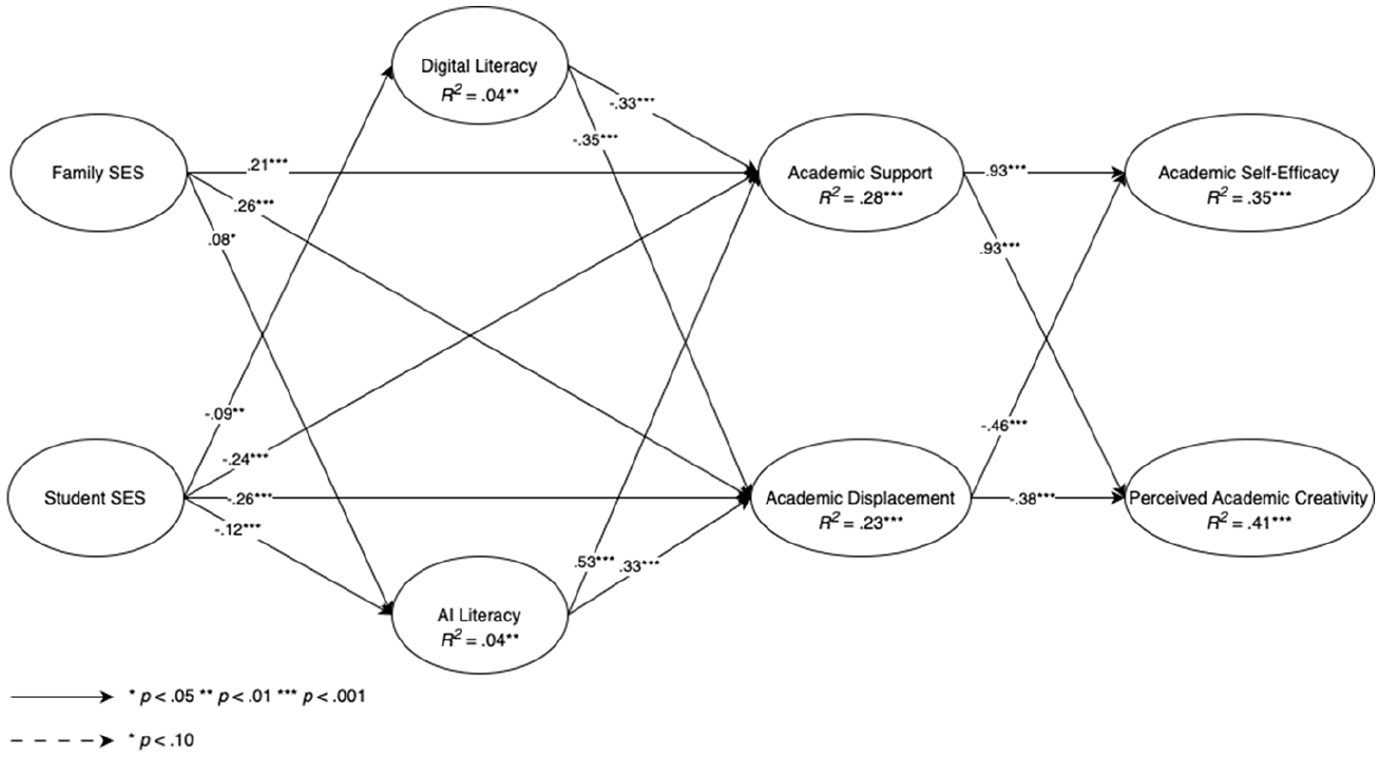

SEM indicated an adequate model fit, RMSEA = .04, CFI = .95, SRMR = .07 (Figure 2).

Significant SEM paths in the tested model.

SES with literacy and with activities

H1 and H2 predict SES to be positively associated with digital literacy and with AI literacy among U.S. college students who use ChatGPT. Contradicting H1, a significant negative path was found from student SES to digital literacy (β = −.08, p = .01). Supporting H2, a significant positive path was found from family SES to AI literacy (β = .08, p = .05), while the influence of student SES was negative (β = −.12, p = .01).

For RQ1a, CFA (RMSEA = .07, CFI = .97, SRMR = .03) confirmed two dimensions of ChatGPT use for educational activities revealed by the pilot study: academic support (#1-#8; Omega = .94), and academic displacement (#9-#12; Omega = .91). For RQ1b, family SES was positively related to use for academic support (β = .21, p < .001), with student SES negatively associated (β = −.24, p < .001). Similarly, family SES (β = .26, p < .001) and student SES (β = −.26, p < .001) were positively and negatively, respectively, associated with academic displacement.

Literacy with activities

RQ2 focuses on associations of both literacies with ChatGPT academic activities. Results show that digital literacy was negatively related to both support (β = −.33, p < .001) and displacement (β = −.35, p < .001) (RQ2a), and AI literacy was positively associated with academic support activities (β = .53, p < .001) and displacement (β = .33, p < .001) (RQ2b).

Activities with outcomes

RQ3 is concerned with the associations between ChatGPT academic activities and academic self-efficacy and academic creativity. Support (β = .93, p < .001) and displacement activities (β = −.46, p < .001) were significantly associated with ASE, and support (β = .93, p < .001) and displacement (β = −.38, p < .001) were significantly associated with PAC. For both outcomes, academic support activities had strong positive associations, while academic displacement ones had negative relationships.

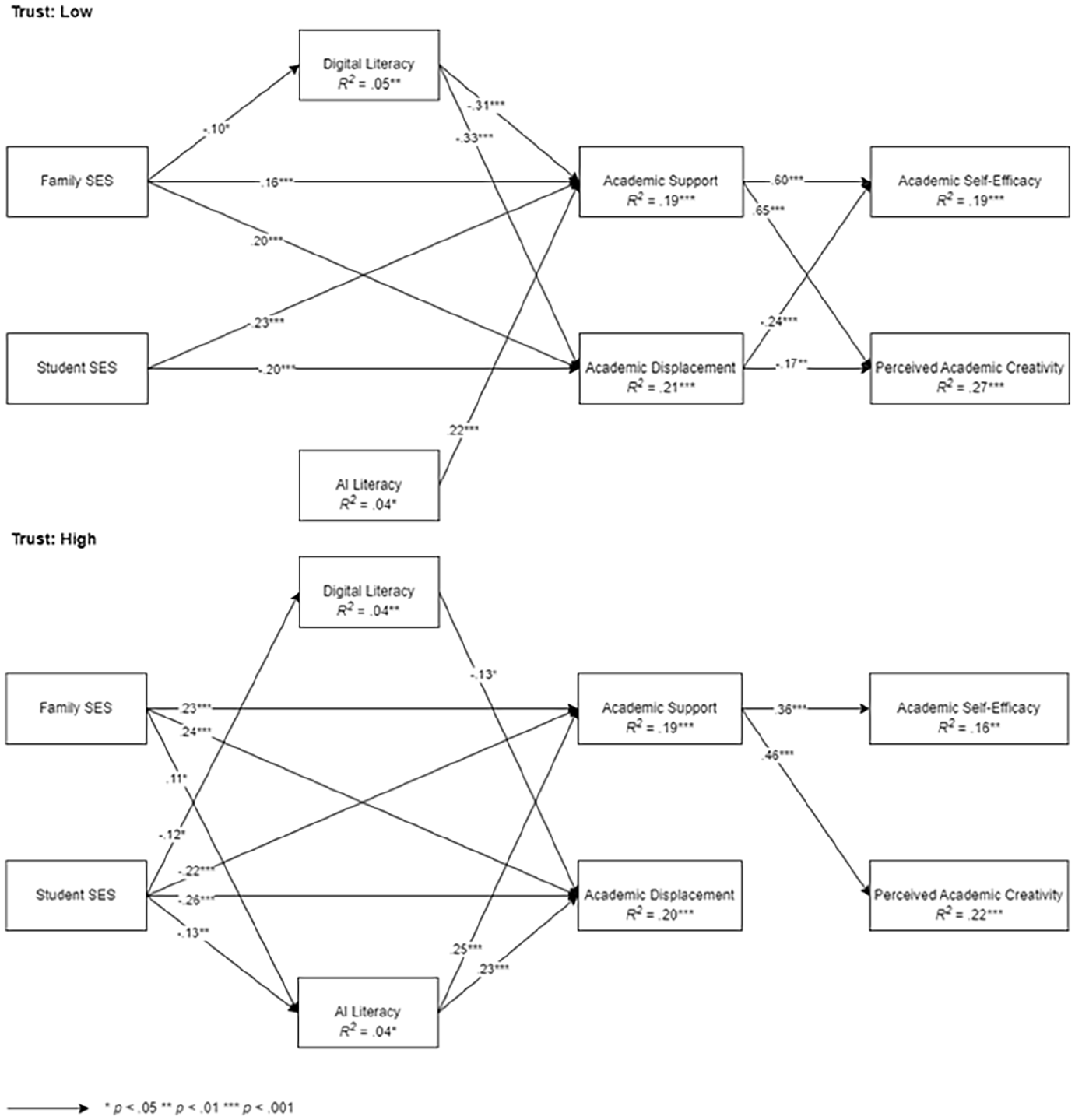

Split sample analysis of trust

Split sample path analysis revealed several important differences for RQ4 (see Figure 3). Noticeably, family SES was negatively associated with digital literacy when attitudinal trust was low (β = −.10, p = .03), while this path was non-significant when trust was high; and its association with AI literacy was non-significant with low trust (β = −.02, p = .73), as opposed to high (β = .11, p < .001). Student SES was negatively associated with digital literacy (β = −.12, p = .01) and AI literacy (β = −.13, p = .01) under high trust, and the associations were non-significant under low trust. Trust moderated the paths of literacy to activities: the negative effect of digital literacy on academic support (β = −.31, p < .001) under low trust was non-significant under high trust, and its association with displacement was weaker under high trust (β = −.13, p = .02) as opposed to low (β = −.33, p < .001). For AI literacy, its association with displacement (β = .23, p < .001) was only significant under high trust. Finally, trust moderated outcomes. Surprisingly, academic support had stronger associations with ASE (β = .60, p < .001) and creativity (β = .65, p < .001) under low trust than it did with ASE (β = .36, p < .001) and creativity (β = .46, p < .001) under high trust. Displacement was negatively associated with ASE (β = −.24, p < .001) and creativity (β = −.17, p = .007) only under the condition of low trust, and the associations became non-significant under high trust. Using ChatGPT for academic displacement activities, while having lower trust in it, clearly represents a negative experience.

Significant paths under low vs high trust.
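The logic of a split-sample comparison can be sketched in simplified form. This is not the Mplus path analysis used in the study: it assumes a median split on trust (the text does not state how the low and high groups were formed) and uses a single bivariate standardized slope, which equals Pearson's r, in place of a full path model. All data and names are hypothetical.

```python
from math import sqrt
from statistics import median


def pearson_r(x, y):
    """Pearson correlation; equals the standardized slope for one predictor."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def split_by_median(rows, key):
    """Assumed rule: at or below the median is 'low', above it is 'high'."""
    cut = median(r[key] for r in rows)
    low = [r for r in rows if r[key] <= cut]
    high = [r for r in rows if r[key] > cut]
    return low, high


def group_slope(rows, predictor, outcome):
    return pearson_r([r[predictor] for r in rows], [r[outcome] for r in rows])


# Hypothetical respondents (illustration only, not study data).
rows = [
    {"trust": 2, "support": 1, "ase": 2}, {"trust": 2, "support": 2, "ase": 4},
    {"trust": 3, "support": 3, "ase": 6}, {"trust": 3, "support": 4, "ase": 8},
    {"trust": 5, "support": 1, "ase": 5}, {"trust": 6, "support": 2, "ase": 4},
    {"trust": 6, "support": 3, "ase": 6}, {"trust": 7, "support": 4, "ase": 5},
]
low, high = split_by_median(rows, "trust")
low_slope = group_slope(low, "support", "ase")
high_slope = group_slope(high, "support", "ase")
print(round(low_slope, 2), round(high_slope, 2))
```

As noted in the Analyses section, comparing group-specific coefficients in this way shows differences in direction and magnitude but does not test whether the difference between groups is itself significant.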

Discussion

The present study explores how the digital divide may manifest in the era of advanced AI technology, with a specific focus on ChatGPT use by college students. We draw from digital divide research to suggest possible relationships among SES, digital and AI literacy, ChatGPT use for academic activities, and how those activities may be related to academic self-efficacy and creativity. The proposed model explained a good amount of variance in ChatGPT use and a greater amount in academic outcomes. Moreover, trust appeared to moderate these relationships. Table 5 summarizes the results.

Summary results for hypotheses and research questions.

ChatGPT activities

We identified two dimensions of ChatGPT activities, academic support and academic displacement, which align with educators’ recommendations of ChatGPT uses. The examination of activity outcomes suggests the positive associations of academic support with both academic self-efficacy (ASE) and perceived academic creativity (PAC). However, their negative relationships with academic displacement underscore the importance of how students use ChatGPT. The positive influence of support activities implies that the generated ideas or suggested sources may enhance users’ perception of their own ASE and creativity instead of degrading it, despite the delegation of some control to AI, which corresponds with the past finding that the introduction of ChatGPT improved the students’ critical, reflective, and creative thinking and that ChatGPT enhanced student confidence in research (Essel et al., 2024).

In contrast, displacement activities may reduce students’ confidence in their own abilities to achieve academic goals or generate innovative solutions, potentially impairing their learning motivation if they perceive AI as more competent than humans. This insight contributes to our understanding of the potential risks and disadvantages of using ChatGPT, especially since prior studies did not distinguish the influences of different uses on critical thinking (e.g. Essel et al., 2024) or have primarily focused on direct usability issues such as flawed content quality (e.g. Ajlouni et al., 2023). Without long-term data, we lack evidence on how academic displacement might affect learning outcomes. Yet it is conceivable that reduced engagement in academic reading and information searching could hinder the development of essential skills like attention span, information-seeking, and in-depth content comprehension. This could place students at a disadvantage in an information-driven society. These findings also echo the sociocultural perspective outlined by Wertsch (1991), through which ChatGPT can be viewed as a mediating tool between human cognition and action in learning that consequently influences how students approach problem-solving and impacts their cognitive outcomes.

SES

Our analysis identified two distinct components of SES for U.S. college students: family SES and student SES. The family-student SES distinction may be rooted in the individualistic tradition of American culture and societal practices of self-reliance in college education. Thus, the two aspects need to be analyzed and interpreted as separate dimensions. Indeed, the results revealed rather distinct associations with the two SES components. While the non-significant path from family SES to conventional digital literacy might suggest a decreasing digital divide among college students, we identify a positive association between family SES and AI literacy, which may indicate the persistence of SES-based inequality in this novel context. Potentially, the results demonstrate a reduced knowledge gap in general Internet skills owing to improved training and application, but the gap remains salient for more advanced technologies (e.g. AI), perhaps because the corresponding educational resources are scarcer and more privileged. Beyond literacy, family SES also plays an encouraging role in ChatGPT use regardless of activity type, given its positive associations with academic support and displacement activities.

In contrast, we observed negative relationships of student SES with both forms of literacy and both dimensions of activities. It is conceivable that those with lower student SES face more life challenges, starting their education with less social, financial, and cultural capital, especially as first-generation and loan-burdened students. These challenges may lead them to take on paid jobs, which could both encourage the development of digital skills for problem-solving and result in greater reliance on ChatGPT due to limited time and energy for academic pursuits.

Literacy

Unexpectedly, we observe negative associations between digital literacy and ChatGPT use: those possessing greater conventional digital skills are not more, but less, active users. A potential explanation might be that higher digital literacy may entail mastery of a greater range of digital technologies, so individuals might have used other tools serving the same purposes rather than ChatGPT. Alternatively, they may be more ingrained in their accustomed methods for completing academic tasks, hence being less inclined to use ChatGPT because of confidence deriving from their conventional advantages. In addition, more digitally literate individuals may be more cautious about AI-generated information as they may be more aware of possible risks.

Conversely, AI literacy positively contributed to ChatGPT use. Returning to the ongoing debate regarding whether AI-specific literacy is a necessity in the new era (e.g. Ng et al., 2021; Olari and Romeike, 2021; Wang et al., 2022), we find that AI literacy provided stronger explanatory power than conventional digital literacy. This finding provides empirical evidence for the conceptual distinction between digital and AI literacy in predicting AI user behavior and suggests a pressing need for AI-specific education and training. Such education will be essential for equipping students with the indispensable knowledge and skills to fully harness the positive potential of AI, despite its “black-box” nature. Furthermore, the positive associations between AI literacy and both academic support and displacement underscore that greater skills allow for more understanding and use of recommended and non-recommended activities. Therefore, there is a need for increased pedagogical efforts to educate students about the potential risks associated with some kinds of AI activities.

Trust

Associations between SES and literacy and activities were found to be more pronounced under high trust in ChatGPT. Individuals who distrust ChatGPT may be less motivated to learn and use it even when supporting resources are available. Alternatively, they might feel less confident in their abilities to master AI, viewing it as an unreliable and elusive technology. More interestingly, the analysis showed that individuals with higher digital or AI literacy were more conservative about ChatGPT use across activities under lower levels of trust. While we might expect that greater AI literacy would help guide students to avoid displacement activities, greater trust in ChatGPT seems to reduce those concerns. This also suggests that trusting students may be more educated about the benefits of AI and more inclined to use AI technology for academic support, whereas distrusting students may focus more on the limitations and hazards of AI.

Counterintuitively, we found that academic support activities were more strongly associated with the two academic outcomes under low rather than high trust. One possible explanation could be that users with low trust perceive more autonomy and less reliance in use and thus tend to attribute task success to themselves, receiving greater psychological gains from use. Meanwhile, the results revealed that only under low trust were displacement activities negatively associated with both outcomes. This suggests that individuals with less trust in ChatGPT ironically experienced stronger outcomes, both positive and negative, from both types of activities. That low-trust individuals perceived more harm from displacement activities resonates with the past digital divide finding that those who express more concerns also perceive more harm (Blank and Lutz, 2018). It is also possible that those with lower trust paid closer and more mindful attention to their uses and to the relationships of use to outcomes, rather than “simply” trusting that use would be unproblematic. Overall, our findings extend past research on trust and technology adoption to the novel context of ChatGPT use and build on previous ChatGPT research (Choudhury and Shamszare, 2023; Tossell et al., 2024) by testing the moderating role of trust.

Limitations and future research

Though the full sample was collected nationally, it is not representative of U.S. college students. Another limitation lies in the cross-sectional nature of this study. To establish causal relationships, future research could benefit from gathering longitudinal data as well as objective measures of ChatGPT use and outcomes to further investigate the implications of ChatGPT use for the learning outcomes of college students. In addition, we encourage researchers to explore ChatGPT inequality using qualitative and mixed-methods designs to further elucidate college students’ subjective perceptions of and attitudes toward ChatGPT use. Finally, though not hypothesized, we note results for gender and race/ethnicity divides in digital literacy and ChatGPT use, which should be explored by future research.

Conclusion

Our study underscores how SES-based digital inequalities may evolve in the novel context of ChatGPT. Household and individual SES levels exert distinct influences, with individual SES (i.e. student SES) proving to be more powerful in the college student context independent of household SES (i.e. family SES). College students with higher family SES tend to use ChatGPT more frequently, potentially due to greater cultural and material support for GenAI use. In contrast, those with higher student SES tend to use ChatGPT less, which may suggest that they rely on less scaffolded learning due to increased resourcefulness in their college life. Therefore, policymakers should consider the interplay between these factors when designing and providing external support to promote educational equality. Notably, our findings suggest that while digital literacy is negatively associated with both dimensions of ChatGPT activities, AI literacy is positively associated with them. This emphasizes the necessity for education programs focused specifically on AI literacy, in addition to general digital education, and on the potential risks associated with ChatGPT activities that may displace desired academic processes. The moderation test additionally indicated that lower trust in ChatGPT is associated with more cautious use and better outcomes, highlighting the need to manage student trust to prevent overreliance. To address the new challenges of the digital divide brought by AI, our considerations of educational AI should be not only technological but also pedagogical, ethical, social, cultural, and economic (Markauskaite et al., 2022). A more viable approach may involve careful promotion of AI-specific education, including justifiable bases for trust in ChatGPT, in both pre-college and college settings.

Supplemental Material

Supplemental material, sj-docx-1-nms-10.1177_14614448241301741 for College students’ literacy, ChatGPT activities, educational outcomes, and trust from a digital divide perspective by Ceciley (Xinyi) Zhang, Ronald E Rice and Laurent H Wang in New Media & Society

Footnotes

Authors’ note

All authors have agreed to the submission and that the article is not currently being considered for publication by any other print or electronic journal.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We gratefully acknowledge support for survey funding through the Arthur N. Rupe Endowed Professorship in the Social Effects of Mass Communication.

Supplemental material

Supplemental material for this article is available online.
