Abstract

The buzzword “smart borders” captures the latest instantiation of media technologies constituting state bordering. This article traces historical techniques of knowledge-production and decision-making at the border, in the case of Ellis Island immigration station, New York City (1892–1954). State bordering has long been enabled by media technologies, engulfed with imaginaries of neutral, unambiguous, efficient sorting between desired and undesired migrants—promises central to today’s “smart border” projects. Specifically, the use of “proxies” for decision-making is traced historically, for example, biometric or biographic data, collected as seemingly authentic and neutral stand-ins for the migrant. Techniques of selecting, storing, and correlating proxies through media technologies demonstrate how public health anxieties, eugenics, and scientific technocracy of the Progressive Era formed the context of proxies being entrusted to enable decision-making. This pre-digital history of automation reveals how the logics and politics of proxification endure in contemporary border regimes and automated media at large.

Introduction

A key area where automated and artificial intelligence (AI)-driven media technologies are developed and implemented under heavy investment is border control. Border regimes are constituted within the practices and technologies managing differential mobilities of bodies across bordered territories (Walia, 2021). As such, how today’s borders appear and are experienced is conditioned by an array of digital technologies producing, storing, circulating, and correlating information about migrants. Employing the latest technologies, borders are “technological testing grounds” (Molnar, 2020): exceptional spaces, where technologies are less regulated than for sedentary citizens. Such projects include transnational databases for fingerprints (e.g. Eurodac), blockchain synchronizing data across authorities, digital identity systems in humanitarian aid (Cheesman, 2022), voice biometrics to automatically determine asylum-seekers’ areas of origin (Pfeifer, 2023), or AI-driven avatar lie detectors (“iBorderCtrl”). These digital technologies, ranging from computational data processing and storage to more complex automation, machine-learning, and AI to assist and conduct decision-making, are usually subsumed under the buzzword of the “smart border.” Emerging from the US-Canadian “Smart Border Declaration” in 2001 (Cote-Boucher, 2002), the term has, alongside a wider societal “smartness mandate” (Halpern and Mitchell, 2023), evolved into an industry term and all-encompassing socio-technical imaginary that drives investments by foregrounding the role of media technologies in the fortification of border regimes.

However, as I argue through an archival case study of the Ellis Island immigration station in New York City (1892–1954), techniques and imaginaries around media technologies that conceptualize the border as “smart” can be traced historically. Instead of ascribing a fundamental shift in border regimes to digital technologies, I aim to enhance the critique of digitalized and automated borders by historicizing techniques of knowledge-production at the border, which enable projects of bordering through media technologies.

To do so, I make a two-fold argument. First, as an analysis of Ellis Island reveals, efforts to draw on media technologies to promise neutral, unambiguous, and efficient processing of border crossings predate the digital era. Second, juxtaposing histories of borders and media technologies shows how the presumed logics and politics of binary decision-making through the collection of seemingly neutral and authentic datapoints, and projects of prediction and correlation—which I conceptualize as a process of “proxification”—are shared by borders and automated media technologies more generally. As these older techniques of knowledge-production become reshaped in digital forms, I argue that borders are an important site at which automated media have been formed more widely, as techniques of filtering and sorting bodies and data permeate society and technology more generally. I approach borders as historically constituted out of the socio-material techniques of knowledge-production that make up a given border at a given time. These techniques, constituted out of social and cultural imaginaries and practices as well as material technologies, are historically contingent and situated, yet become rearticulated and rematerialized across time within different media technologies (cf. Leurs & Seuferling, 2022).

Borders mediate differential mobilities between those whose movement is facilitated and those who are constrained (cf. Mongia, 2018: 2), reproducing historical hierarchies of race, nationality, gender, class, sexuality, health, and ability. Within the media technologies, the political choices of which information is deemed necessary to control these differential mobilities become exposed. Dijstelbloem (2021) calls this the technopolitics of borders, where technologies and politics coproduce each other. Borders unravel a process of world-making, where “knowledge and ideas are realized and unfold through the development of technologies” (Dijstelbloem, 2021: 15). If one understands borders as inseparable from media technology, Dijstelbloem’s (2021) approach also works in reverse: instead of uncovering technopolitics of borders through studying its infrastructures, the border arguably reveals how politics and logics of mediated techniques of knowledge-production are historically molded within situated contexts of borders.

As I will argue in this article, studying the longue durée of such techniques at borders reveals important historical trajectories of automated and “smart” media technologies, where logics and politics of the border drive imagined functionalities of media technologies: the goal of clear-cut binary decision-making between inside and outside fuelling dreams of automation, algorithmic predictions, the selection of storable datapoints, and optimized, efficient, and neutral processing of information. Hence, assumptions of radical shifts coming with the digitalization and “smartification” of borders must be contextualized and relativized, by excavating the historical conditions under which techniques and imaginaries around media technologies for border control have been negotiated and endure.

Ellis Island immigration station (1892–1954) serves to trace datafied, automated, and algorithmic techniques of decision-making, enacted through media technologies. The concept of “proxies” (Chun, 2021; Mulvin, 2021) is used to further explain those techniques and conceptualize how media technologies were used to select and produce specific information, such as biometric or biographic data, to “stand in” and seemingly neutrally represent “the migrant.” Proxies, such as results of intelligence tests, or medical inspection—standing in for the assumed “aptitude” of a migrant—were selected, stored, and correlated, to enable the decision-making process, deemed as more neutral and unbiased than human inspectors. Thus, at Ellis Island, the situated negotiations of how proxies were legitimized to stand in and represent “the migrant” can be observed. While many of these techniques were flawed, malfunctioning or resisted and circumvented, for this article the case serves as a context to investigate the historical legitimization of automation and dreams of “smartness” around decision-making at the border, before media technologies existed that are commonly called “smart” today. Anachronistically asking how “smart” Ellis Island was, a history of bordering techniques around proxies shows how migrants, long before AI or blockchain, became broken down into datapoints enabling correlation and prediction in the inspection process. This exposes the political choices of how certain data about humans became regarded as true and objective, while complicit with a discriminatory, violent, and eugenicist project of border control. As many contemporary media technologies and predictive systems are driven by such techniques of knowledge-production (Chun, 2021; Hong, 2022), the history of borders is an important site of media history more generally, where techniques of data-driven sorting were imagined and enacted.

Historicizing borders and media technologies

The interrelations of borders with media technology have increasingly come under scrutiny. Chouliaraki and Georgiou (2022) conceptualize the “digital border” as the double articulation of excluding media discourses, operating hand-in-hand with technological infrastructures of border control in Europe. Locating mediation central to hostile digital mobility regimes, the “smart border” is studied through a focus on its media technologies: for example, the design of the EU’s Visa Information System (Glouftsios, 2021), uses of data-driven systems in migration agencies (Leese et al., 2022; Pfeifer, 2023; Scheel et al., 2019), border control, camps and surveillance (Molnar, 2020), or in humanitarianism (Cheesman, 2022; Marino, 2022).

In capturing this contemporary moment, the technological changes brought by digitalization have been conceptualized as moves to a “deep border” (Amoore, 2021), “big border” (Metcalfe and Dencik, 2019), or “data border” (Hall, 2017), dissecting the consequences of different digital technologies in controlling migration. Specifically, studies focusing on functionalities, imaginaries and uses of “smart” technologies have critically described transformations of migration control: for example, when “[f]orced migrants as embodied and experiencing humans become data categories in abstract space” (Witteborn, 2022: 169), or when “deep learning algorithms are reordering what the border means, how the boundaries of political community can be imagined” (Amoore, 2021: 2).

These critiques of digital borders are highly relevant, supporting the fight for “data justice” at the “big border” (Metcalfe and Dencik, 2019). However, it can be asked which aspects might be missed if the problematics and violences of technologized borders are rhetorically assigned to the emergence of digital technologies. Instead, a more systematic historicization of media technologies at the border can counter an overly presentist analysis, and rather trace where and when materialities, practices, and socio-technical imaginaries that, often aimlessly, entrust digital technologies to enhance border control took shape (Leurs & Seuferling, 2022; Leurs, 2023: 136–164). Halpern and Mitchell (2023) identify a largely unquestioned “smartness mandate” as the “promises about computation, complexity, integration, ecology, and crisis” (Halpern and Mitchell, 2023: 26) that digital technologies have come to embody. A historical perspective is necessary to question where such a “smartness mandate” and its legitimized techniques of knowledge-production have been assembled, in uses and imaginaries around older technologies. Thereby, a “techno-hype in migration research” (Tazzioli, 2023: 1) can be counteracted, through a less now-focused perspective.

I further build on historiographies of media and migration that have addressed how media technologies intersect with previous projects of bordering, population control, nation-building, surveillance, or identification. Important examples are Chaar-López’ (2019) study of sensing technologies at the US-Mexican border since the 1970s, showing how “cybernetics gave officials the conceptual apparatus to structure the border as an information system” (p. 497); Dalbello’s (2016) study of “reading and writing the migrant” at Ellis Island; Groebner’s (2007) tracing of methods of identification in early modern Europe, before photography, arguing that the emergence of papers “both document and transform their owner’s identity”; or Siegert’s (2006) emphasis of media materiality, tracing how in paper systems of 1500s Spain narrativization and inscription of migrants to the Americas created the modern subject. Furthermore, racializing technologies such as biometrics, fingerprinting, and photography, and their afterlives in contemporary digital systems, have been explored within projects of controlling and surveilling mobilities in contexts of settler colonialism, slavery, surveillance, and carcerality (Browne, 2015; Kaun and Stiernstedt, 2023; McKeown, 2008; Mirzoeff, 2021; Weitzberg, 2020).

This body of work signposts the genealogies of media technologies in producing racialized and gendered regimes of mobility control through surveillance and carcerality, and enforced identification. Specifically, it supports a co-producing relationship of media technologies and border regimes. While the history of media technologies is central for border control as a modern state practice, it is also changing historical, political, economic contexts that shape mobility regimes, and bring forth new techniques of knowledge-production and specific media technologies.

However, research on border digitalization often remains ahistorical in accounts of how not only concrete technologies, but the underlying techniques, logics, and epistemologies, that are sought to be enabled by the digital, have come to be developed. Similarly, historiographies of digitalization, automation, AI, or datafication, rarely locate trajectories of technological development and techniques of knowledge-production in concrete sites, such as borders. Thus, to resist, and undo logics and harms of technologies and borders, historical investigations are necessary of the ways borders have produced mediated techniques of knowledge-production, and vice versa, how such techniques have enabled bordering before the digital. As an initial step, this article studies one situated context, engulfed with media technologies, as a predecessor of today’s borders ridden by the “smartness mandate.”

Proxies and their politics

To further conceptualize how these mediated techniques in border control emerge across time, I draw on the concept of “proxies,” encompassing both a mediated technique of knowledge-production and a socio-technical imaginary of legitimization and entrustment in media technologies. Proxies devise “stand-ins” invested with the power to represent something or someone else. Mulvin (2021: 4) conceptualizes proxies as “the people, artifacts, places, and moments invested with the authority to represent the world.” To enable knowledge-production, he argues, proxies “function as the necessary forms of make-believe and surrogacy” (Mulvin, 2021: 4), where a surrounding cultural work of legitimization is necessary to constantly justify and make acceptable which proxies represent which aspects of perceived reality. In application to automated, machine-learning technologies, Chun (2021) discusses proxies as needed for any technology that is built on modeling and prediction, “to infer behavior [one is] interested in but cannot directly access” (Chun, 2021: 121). An optimally chosen proxy reliably represents the information one seeks to attain, and can perfectly “visualize what cannot be experienced” (Chun, 2021: 124). Tree rings, which stand in for histories of climate change, or zip-codes to infer class, race, and income to enable predictive policing, reveal similar logics of proxification: “[h]ighly correlated variables are [. . .] considered to be ‘proxies’ of each other: by tracking one variable, you can capture the other” (Chun, 2021: 47). Proxification then refers to the “culturally conditioned practice of consistently using some things to stand in for the world” (Mulvin, 2021: 5), that is, a historically contingent, socio-cultural process of stabilizing specific proxies for specific external realities over time, a process inherently political and power-ridden.

Predicting climate change versus predicting crime encapsulates highly different politics and contexts of proxy selection. So does bordering. Which proxies get selected at the border, and how they are justified to stand in for migrants, reveals the politics of historically contingent bordering practices, enabled by media technology. Borders discriminate based on proxies, but, as noted by Achiume (2021: 333), “it is also a core function of modern borders to discriminate in the normatively prejudicial sense—they allocate fundamental human rights differentially on the basis of race, gender, class, national origin, sexual orientation, and disability status, among others.” Attention to proxies at the border, such as choosing biometrics or details from migrants’ biographies to stand in for inferences about potential “dangers” to the nation, or for the right to asylum, reveals the logics and politics that characterize knowledge-production at the border, and how these techniques historically emerge and change. Proxies thus coproduce and legitimize differential allocation of rights and mobilities, entangled with and co-producing intersecting axes of gender, race, class, sexuality, ability and more.

A core purpose of proxies is standardization, consistent recognition, and correlation—features that lie in the interest of both borders and media technologies more widely. Andrejevic (2020) argues that automated media do away with complexity, narrativity, and an interest in understanding, in lieu of “systematically fragment[ing] and standardiz[ing] the components of expression and evaluation” (p. 5). Similarly, Manovich (2001) identifies reductive representation within something else (i.e. in proxies), modularity, and subsequent automation as key features of “new media,” where narrativity is replaced by datapoints that can be combined in any way. This characteristic of automated technologies also underpins Chun’s (2021) investigation of discrimination in datafication, where she argues that authenticity and recognition are key techniques of automation, as the technology relies on unambiguous difference and clear-cut binaries (everybody must be their most authentic self to be perfectly recognized and grouped by technology). In the case of border control, efficiently chosen proxies are seen as able to create a mediated version of the migrant that, constituted out of manifold stand-ins, is more recognizable—more real, true, and authentic—to the border than the migrant themself. Proxies legitimate make-believe surrogate stand-ins that make the migrant hyperreal.

At the border, proxified techniques of knowledge-production have material consequences: “The extent to which a refugee body can be datafied determines the degree to which that body can move, integrate, and beg for public recognition” (Marino, 2022: 5544). The longing to create the ideal, infallible, and definitely non-fake representation of the migrant, by way of proxy, highlights the politics in selecting and optimizing proxies that underlie the automated correlation and recognition. Which aspects of the migrant are seen as authentic, and how are these turned into datapoints? Which politics drive proxy selection, for example, anxieties about public health, terrorism, or labor markets?

Deconstructing these proxies allows for exposing power: “The power to determine proxies [. . .] is nothing less than the power to determine the grounds of difference” (Mulvin, 2021: 33). The wish for determining unambiguous difference lies at the core of bordering as a state practice, as well as of automated media. While the border relies on proxies, other media technologies that rely on sorting and authentic recognition as their epistemological logic are likewise bound to proxification. Proxies at borders make visible the locus of politics, the lines chosen to distinguish between migrant and citizen, foreign and native, inside and outside, a politics that is tied to borders as a project of nationalist exclusion and racial capitalism (Walia, 2021; Weitzberg, 2020).

How smart was Ellis Island?

The following case study of techniques and media technologies at Ellis Island follows three strands: (1) the selection, (2) the storing, and (3) the correlation of proxies. Emerging from a thematic analysis of archival documents, I see these techniques as dimensions of the proxification of border control. I analyze archival material to reconstruct uses and imaginaries around media technology, and how decisions between immigration, detention and deportation were made. Files were analyzed from the New York Public Library, the National Archives (Washington D.C.), the New York Historical Society, and Ellis Island’s archives (Bob Hope Memorial Library), identified through keyword catalog searches and archivists’ recommendations.

Techniques of selecting proxies

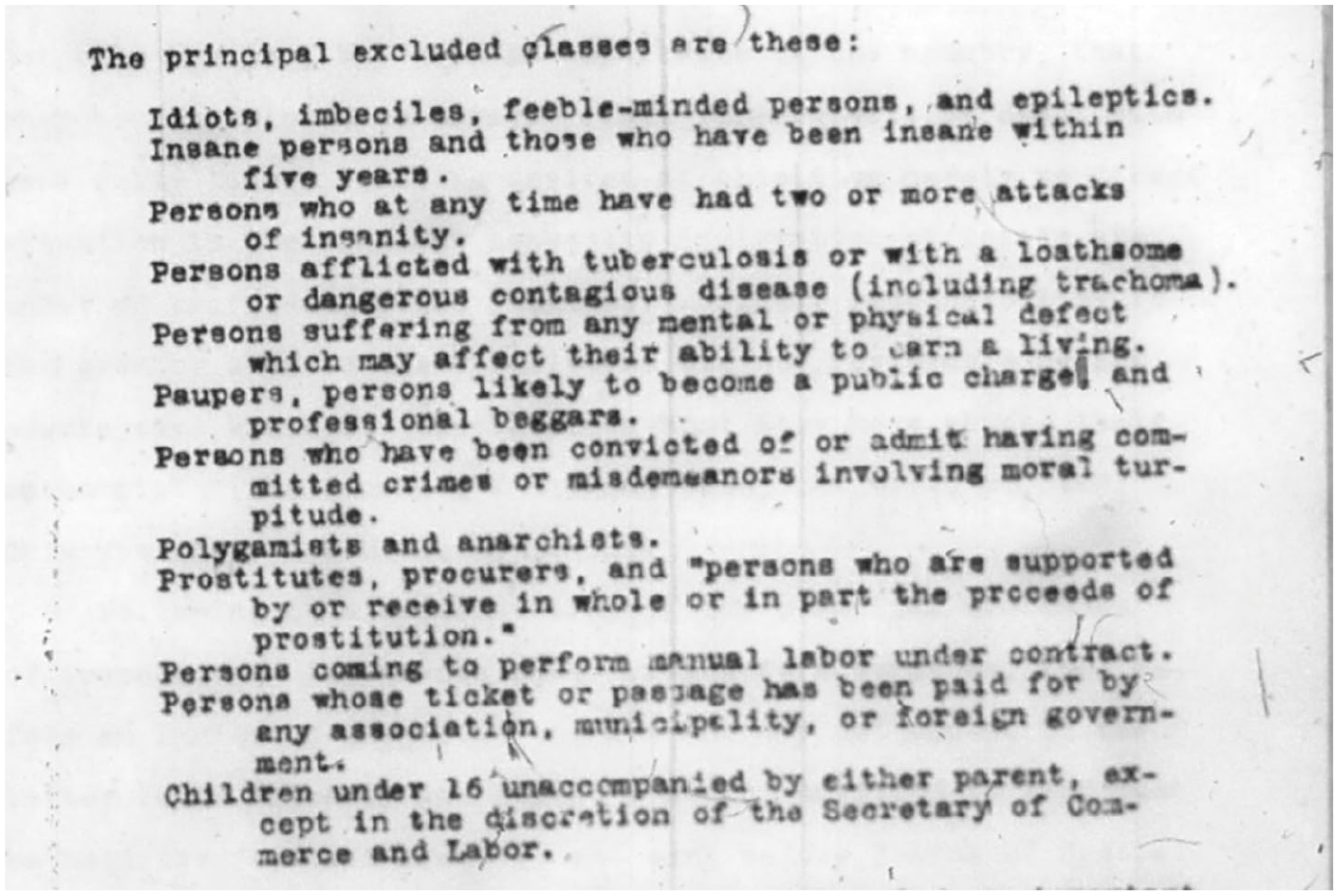

The immigration station at Ellis Island first opened its doors in 1892. New York City had long been the main entry point for immigrants from Europe to the United States, yet federal legislation began to restrict immigration only in the 1870s. The introduction of categories immigrants had to fulfill created the need for an inspection station. Figure 1 shows the list of “excluded classes” from 1903, giving chilling insights into the eugenicist, ableist, classist, gendered and racialized grounds of exclusion, and the time’s moral panics, that drove the subsequent techniques of knowledge-production.

Figure 1. Part of report on Ellis Island (William Williams Papers, NYPL).

In response to more arrivals from Europe and increasingly complex categories, the city picked Ellis Island, across the water from the old Castle Clinton landing station, as the place for a new immigration station. Until the immigration stop in 1924, around three quarters of all migrants entered the United States through the station.

After a wooden building burned down, the now famous brick hall was opened in 1897. This architecture is an important infrastructure for the migrant inspection process, and for the selection and visualization of respective proxies (see Sánchez Arsuaga [2020] for an analysis of Ellis Island’s architecture). First, the hall responded to anxieties around contagious diseases brought by migrants, through an air circulation system, that replaced the air every 15 minutes. Second, structuring the station as one big hall, all migrants crammed together, made visible the “border spectacle” (De Genova, 2013): a performance to the national public of controlling border movements. A balcony provided space for journalists, politicians and the public to gaze at the migrant-processing machinery, visualizing if the border was “full” or “empty” (Pegler-Gordon, 2009: 110).

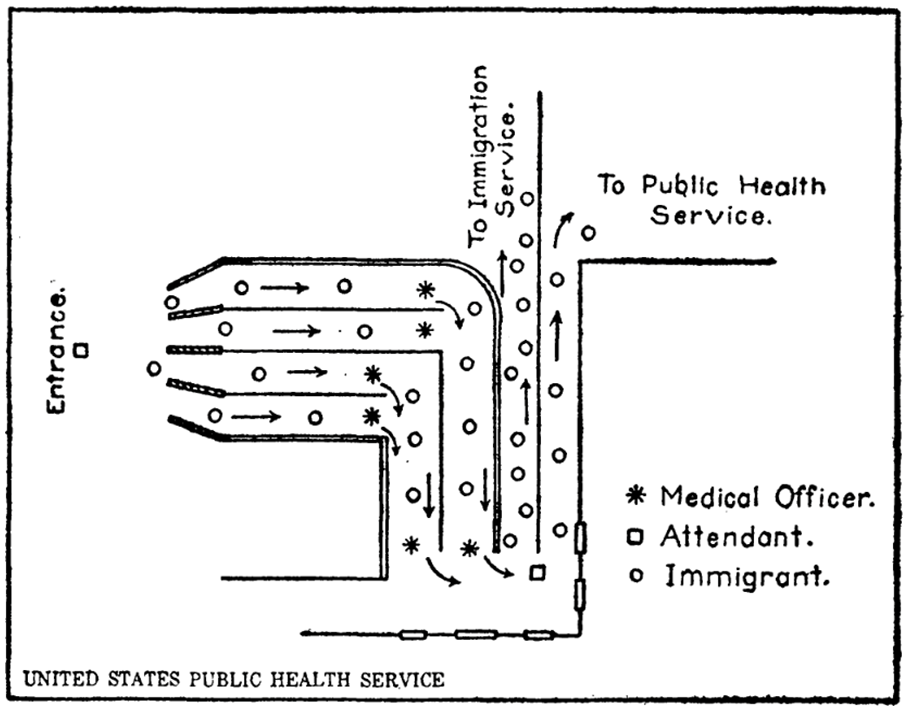

Third, the inspection process was structured architecturally, breaking down border crossing into consecutive stages. The building enabled techniques of visualization for the proxies for decision-making, desired by the inspectors (and by immigration law). One of the main anxieties structuring inspections was an ableist, racist and classist understanding of “public health”: contagious diseases, other general health conditions, and mental conditions and “intelligence,” but also socially and morally undesired “traits” such as poverty, political views (anarchism), or prostitution. Any such “diagnosis” could lead to rejection. Migrants were meant to flow through a chain of inspection stages aimed to visualize potential conditions. As migrants walked up a flight of stairs, doctors stood on the sides, diagnosing those out of breath with a potential heart condition (Yans-McLaughlin, 1997). Subsequently, immigrants were sluiced through a system of fenced corridors, checkpoints, and pens, breaking the inspection process apart—each stop extracting different information that served as a proxy. As the drawing indicates (Figure 2), corridors were used to expose all sides of the migrant’s silhouette to medical inspectors. One specifically brutal inspection was for the eye-infection trachoma, where a buttonhook was repurposed to invert migrants’ eyelids. In case of a positive diagnosis, inspectors marked the migrants’ clothes with chalk codes to communicate that information to inspectors further along.

Figure 2. Diagram of inspection process, in article about mental examination at Ellis Island (Mullan, 1917: 734).

Sequencing the inspection process was described as significant progress by the New York Times (1900):

[T]he interior arrangements are what, after all, make the station a model of completeness. Every detail of the exacting and confusing service [. . .] were considered in perfecting the interior plans. The transportation, examining, medical, inquiry, and various other departments of the service being assigned quarters that, while they are practically separate in every detail, yet are so arranged as to follow one after the other, according to its proper place.

The technique of breaking down the inspection into discrete steps can be seen to serve a function of taming multiple possibilities and complexities of interpretation. Delegating the assessment, for instance of “ability to earn a living,” to a selection of proxies systematized the decision, and seemingly made it unquestionable. Hong (2022: 4), discussing today’s predictive policing technologies, calls this a “grammar which effectively reduces any human condition into discrete empirical states.” Proxies produce correlatable datapoints which make any theory behind their choice redundant, he argues: “Once frozen into such ossified forms, it only remains to demonstrate some statistical relation between the two artificially stable objects (‘face’ and ‘criminality’) to complete the equation” (Hong, 2022: 4).

These datapoints ranged from physical and medical examination to biographical details and social categories (such as profession, finances, marriage status, race/ethnicity). Categories from the list of “excluded classes” (Figure 1), such as “pauperism,” “ability to earn a living,” but also “likelihood to become a public charge” (“L.P.C.”), or “idiots, imbecile, feeble-minded” were specifically hard to assess. Inspectors therefore attempted to access them via proxies, which enabled inferences about what could not be seen. The racist, classist, and ableist violence driving this technique of knowledge-production became specifically evident in the use of intelligence tests, and the underlying epistemology of eugenics. Ellis Island was a laboratory for biologists and psychologists to develop eugenicist methods. The assumption that poverty or intelligence are genetically conditioned health issues that can be scientifically detected, predicted, and avoided (i.e. “bred out”) undergirded inspection at Ellis Island. In 1913, eugenicist Henry Goddard tested a translated Binet test at the station, eager to find a standardized method to detect “feeble-minded” individuals, to circumvent bias by inspectors. In his results, he found that 40% of Jews, Italians and Hungarians qualified as “feeble-minded.”

Are these results reasonable? Doubtless the thought in every reader’s mind [. . .] it is impossible that half of such a group of immigrants could be feeble-minded, but we know that it is never wise to discard a scientific result because of apparent absurdity. [. . .] We can only arrive at the truth by fairly and conscientiously analyzing the data. (Goddard, 1917: 266)

Goddard’s conviction of the technology’s neutrality and scientific-ness had real-life consequences for thousands: during the ensuing year, deportations based on alleged “feeble-mindedness” doubled. For the authorities, the standardized tests provided a seemingly neutral, (pseudo)science-supported media technology, proxifying the assessment and standardizing decisions. In fact, the inherently racist and classist assumptions of eugenics rely on essentializing proxies themselves: the performance in tasks becomes a proxy for “intelligence,” which, in turn, becomes a proxy for genetic predispositions, and social characteristics, such as poverty or the ability to sustain oneself. The strong willingness to trust the results of the intelligence tests demonstrates the force of successfully legitimized proxies, which remain unquestioned but have material consequences.

As evident from the intelligence tests, race and ethnicity were central categories in the inspection, seen as useful datapoints to be correlated with other information and enable hierarchical sorting. Race and ethnicity were filed from 1899 to 1931, driven by medical officer Victor Safford, author of the Dictionary of Races used at Ellis Island and other stations. A wide range of categories across nationality, language, and ethnicity were used to racially differentiate the mainly European migrants, for example, distinguishing South and North Italians, while lumping together Jews from different countries as Hebrew. This bureaucratic-technological racialization, described by Simone Browne (2015) as “epidermalization” by way of media technologies (digital or pre-digital), inscribed hierarchical social categories onto skin and other physical features, making them legible—and thus usable as proxies, to be correlated with other information, such as medical information, literacy, or intelligence tests.

Other technologies for assessment were standardized interview guides, to collect biographical information deemed necessary to assess certain criteria. The complexities of finding the best techniques of detection are exemplified by a short-lived experiment of placing women inspectors at Ellis Island in 1903. Concerned with trafficking of sex workers (“prostitutes” were an excluded category, Figure 1), the Woman’s Christian Temperance Union convinced President Theodore Roosevelt to hire female inspectors, arguing that only women could detect the “immoral” among young women traveling alone, able to “perceiv[e] feminine distress, confusion, and vulnerability” (Pliley, 2013: 101). This diversification of inspectors ended after 3 months, due to male inspectors’ opposition to women colleagues. While this example illuminates how gender structured the roles of inspectors and inspected (Pliley, 2013), it underlines the socially and culturally situated quest for techniques that could make visible the most authentic and failsafe information about immigrants, in this case not by replacing inspectors with media technologies, but by using female inspectors for the proxy recognition work.

The quest for the most truthful representation of the migrant underlies the selection of proxies. Deep mistrust in the migrant, a hostile and xenophobic conviction of being lied to or bribed, and fears of inconsistent assessment drove authorities to outsource inspections to the information that proxies mediate. This echoes a logic discernible in contemporary predictive systems, also built on “a pre-existing fantasy that criminalises the targets of prediction. The worker is presumed to be, by default, a potential thief” (Hong, 2022: 8), as Hong notes about automated surveillance in warehouses. Assigning higher legitimacy to a proxified data-driven system is an enactment of power on the side of the one employing the technology (i.e. border guards), while curtailing the power of those about whom data is collected (i.e. the migrant). The hostile assumption of dishonesty and untrustworthiness on the migrant’s part, as also addressed in Weitzberg’s (2020: 32) study of biometric registration technologies in colonial Kenya, legitimizes the truth-claiming capacity of racialized, gendered, medicalized, and class-based proxies, over the migrant’s own voice: “[the technology] served to normalize [. . .] the untrustworthiness of black self-presentation.”

Such techniques disconnect migrants from biographical narrativity and complexity, in lieu of singular datapoints that synecdochally represent the entirety of the migrant, seemingly more authentically. The intelligence test, after all, knows better how intelligent you are than you do yourself. Andrejevic (2020) describes how automated media dis-embed decision-making processes from the forms of social life and interaction they rely upon. Understanding becomes replaced by operations, where systems “rely on correlational patterns rather than causal narratives” (p. 38). A contemporary example of this logic is speech biometrics, where an AI uses voice samples of asylum-seekers to determine areas of origin (Pfeifer, 2023). The narrative content of what the person is saying is made redundant by a technology analyzing sound types, which is treated as a truer representation of the person’s story than the person can tell themself. As Mulvin (2021: 5) remarks, the cultural work of legitimizing proxies speaks to a “theatrical enactment of objectivity,” necessary to justify why something else has the authority to be more meaningful than the real thing.

Ultimately, outsourcing the assessment to media technologies was seen to relieve inspectors of a rising workload, and promised neutral and consistent decisions, unaffected by bribery (several cases occurred during the early years). While from a critical border studies perspective outsourcing by proxy is reductionist, violent, and discriminatory, from the inspectors’ point of view inspection primarily meant a huge amount of work: Correctly and promptly to “inspect” an immigrant is an art which not all of the officials known as immigrant inspectors are masters. [. . .] To inspect means to view

It is thus hardly surprising that media technologies were entrusted with alleviating the border guard’s workload, under the promise of neutrally producing information to stand in as proxies for the decisions taken.

Techniques of storing proxies

As seen in the practice of chalk-marking migrants’ clothes with codes for diagnoses, the breaking down of the inspection makes organized flows of information necessary. A range of technologies enabled the storage of information. These systems were driven by imaginaries of optimization, standardization, and effectivization. These buzzwords not only resonate with contemporary automation discourses, but also with the context of the Progressive Era, as the period of ca. 1880–1920 is referred to in US-American history. In reaction to industrialization, urbanization, and corruption, public administration in the United States embraced a technocratic spirit toward efficiency, optimization, and scientific methods to fight perceived social ills, such as waste, poverty, and bad working conditions, or, as seen above in attempted identification of sex workers, issues of “morality.” This movement incorporated reforms in administration and the handling of information, such as the filing cabinet (Robertson, 2021), and a development of computational media technologies (Geoghegan, 2023).

Ellis Island saw several reforms to effectivize and optimize data flows across the island. Besides photography (see Pegler-Gordon, 2009, for an extensive study of race-making and photography at Ellis Island), the authorities built a streamlined record of card indexes, datafying and making legible the migrant: A card index is now kept in which the names of all aliens arriving at New York are arranged alphabetically according to their several nationalities. [. . .] The work of the Special Inquiry Boards is tabulated every month and shows the numbers held and deported by each board together with the reasons and much other interesting information. Most of the blanks formerly used have been discarded and superseded by new ones of a more concise nature and better calculated to source the desired information, and many useless blanks and records have been discontinued. 2

In a “Statistical Division,” 55 employees prepared data on migrants: “The principal facts given on the ships’ manifests are recorded on cards (through electrical punching machines) and forwarded to Washington for tabulation.” 3 The result was an increasingly complex database kept on all migrants, including all datapoints produced during inspection (biographical and biometric information, medical inspection, etc.): predecessors of contemporary border databases, for example, those of USCIS (U.S. Citizenship and Immigration Services), the EU’s Visa Information System, or Eurodac.

A file from 1915 reveals a detailed plan for an index system that “will take on efficiency hitherto unknown,” remedying “inharmonious conditions” with an “ultimate plan.” 4 This plan envisioned a thought-out data flow from the moment of deboarding. Ellis Island’s databases were among the largest to exist at that point in US history: “[T]here are nearly TWICE as many cards, and more than THREE times as many independent and unrelated entries in the 12-year-old index of arrivals at Ellis Island, as [in the] New York Public Library.” 5

However, this circumstance did not lead to complexity reduction in inspection. Seeing the index as a “machine” 6 to be optimized, the filing system saw reforms of streamlining and standardization. The plan of 1915 introduced numerical codes for migrants, derived from the ship’s manifest (effectively starting datafication on European shores): To illustrate: All records in the case of John Jones, whose name appears on line 2 of page 6 of the manifest of a ship whose general number is 12015, will be numbered 12015-6-2, which will be the permanent identification of the passenger in all subsequent records. 7
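Read in present-day terms, the numbering scheme quoted above resembles a composite key: ship number, manifest page, and manifest line are joined into one identifier under which all later records are filed. The following is only a minimal sketch of that reading; the class name, function name, and record labels are hypothetical illustrations and do not appear in the archival source.

```python
from typing import NamedTuple


class PassengerId(NamedTuple):
    """Hypothetical composite identifier taken from a manifest position."""
    ship_number: int  # general number of the ship, e.g. 12015
    page: int         # manifest page, e.g. 6
    line: int         # line on that page, e.g. 2

    def __str__(self) -> str:
        # Render the identifier in the hyphenated form quoted in the plan.
        return f"{self.ship_number}-{self.page}-{self.line}"


def parse_passenger_id(text: str) -> PassengerId:
    """Split a string such as '12015-6-2' back into its three parts."""
    ship, page, line = (int(part) for part in text.split("-"))
    return PassengerId(ship, page, line)


# Hypothetical filing cabinet: every record is stored under the identifier.
files = {}
jones = PassengerId(12015, 6, 2)
files.setdefault(jones, []).append("manifest entry")
files.setdefault(parse_passenger_id("12015-6-2"), []).append("inspection record")
```

In this sketch both records end up under the same key, which is the sense in which files could be "kept together." The key is derived entirely from the manifest and contains nothing the person says about themselves.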

This method promised a standardized and efficient (less paper) data flow through the island, as files referring to an individual could be kept together: “[A]lmost the entire records of the Station immediately can be made to take on a definite and descriptive numerical identification which will cause them to gravitate into orderly arrangement in the files.” 8 This “gravitation” of data into the correct files was promised to happen in real time: The Information Division will thus have a complete list of all arriving aliens as rapidly as they are examined, and with the explanatory data as to whether or not they were detained. A copy would be sent immediately to the New York side and serve to satisfy a large number of inquiries at the Gate, and obviate much unnecessary congestion at the Island. 9

Another document shows the respective telegraph codes, such as “REHUL” standing for “feeble-minded.” 10 Central to optimized data storage is encoding: to produce referability, and reduction, and enable better circulation. Reduced to datapoints, the code-filled database creates a horizontal assemblage of datapoints that are equal and non-sequential. Data can be extracted, combined, and correlated in different ways from the database (Manovich, 2001). The “smart border” has no time and space for self-representation. Instead, smoothly stored datapoints on all aspects of the migrant, according to this imaginary, supposedly relieve the inspector of the impossible decision about a person’s life trajectory, by maximally reducing complexity, filtering out the noise—and reducing necessary shelf space.

Moreover, optimizing data storage also enabled scalability. The systems at Ellis Island promised to handle larger data amounts, while reducing the necessary human labor and material infrastructures: these additional subdivisions will result in a saving of practically a half-year’s work of one clerk in the matter of making the index [. . .] the index eventually will be a machine of nearly 300,000 parts, and if past performances are repeated, it will [. . .] absorb as many as a million names per year. 11

The encoded elements, running on a standardized infrastructure of data flows, could allegedly be scaled up because the technology relieves humans of specific work steps—a promise recognizable from contemporary AI and automation discourses—including the caveat, posed before this promise: “many essential conveniences in equipment are now lacking, but when eventually set up and properly adjusted [. . .] the index will take on an efficiency hitherto unknown.” 12 If only done right in the future, it will work.

While none of these techniques qualifies in today’s sense as computationally automated, they are characterized by imaginaries and techniques of datafication that undergird automated media: scalability, complexity-reducing and shareable codes, and a promise of optimization and efficiency. Studying “smart” cities, Powell (2021: 5) describes “optimization [as] an ideal that transforms the arrangement of technological and social resources [. . .], laying down technology-driven assumptions about how social life should unfold.” Ellis Island demonstrates how technologies, such as indexes and filing systems, helped to rearrange the wider assumptions about social life, that is, the bordered state, and how it is to be enacted. The binary logic of the border (in vs out) merged with the spirit of optimization: making this decision as smooth and unambiguous as possible through techniques of datafication. Encoded, reductive, essentialist proxies become naturalized as those datapoints that enable the optimized decision-making desired by the border.

Techniques of correlating proxies

Third, proxification at the border includes operations of processing data. Beyond databases, automated media technologies promise to re-value datapoints with new meaning, in the best case all by themselves. The “machinery” of Ellis Island produced huge amounts of data. Different types of proxies produced different datapoints: (1) binary, yes versus no (or 0 vs 1) (e.g. infected with trachoma or not), (2) scale/hierarchies (e.g. financial means, “intelligence,” race), or (3) likelihood (e.g. “likelihood to become a public charge”).

However, producing reliable data for the third category turned out to be difficult. Archival files give insight into attempts to remedy this problem: ways of observing correlations between datapoints used for predictions, arguably predecessors of algorithms, predictive modeling, and machine-learning. Beyond selecting and storing proxified datapoints on migrants, Ellis Island tried to correlate and predict identities. Commissioner William Williams documented the following change in Ellis Island’s record system: A full and special record is now kept of those applying for relief and deportation as paupers, or sent here for such purposes subsequent to the landing. Since July 1, 1902, about 1,100 aliens belonging to these classes have applied for relief [. . .] and of these it was possible to deport about one-fourth. [. . .] It is hoped that a study of the history of these charity cases will result in assisting the Ellis Island officials materially in determining from actual experience who is and who is not likely to become a public charge. 13

This technological reform incorporates the idea of analyzing characteristics of immigrants who became a “public charge,” and, based on pattern recognition within a database, being able to learn and predict which future immigrants might fall into that category. The record archive becomes the basis for attempting to correlate identities with “likelihood to become a public charge.” While it remains unclear to what extent this project was implemented and what results it yielded, Williams’ mode of thinking rings familiar: the operational steps of grouping datapoints, identifying proximities and correlations, and thus predicting probabilities for the future underlie all automated, machine-learning, algorithmic digital technologies that draw on models. The selected proxies, and the data they produce, such as intelligence tests, become variables that can be correlated to make predictions about the future based on a historical database.

Scholars such as Chun (2021) or McQuillan (2021) have critically traced this technique of knowledge-production (correlation as a statistical method) to eugenics, and its erroneous and racist assumptions of innate correlations, for example, of linking genetics with intelligence, behavior, or poverty. It is thus hardly surprising to see these histories entangled at Ellis Island. This technique is crucially based on proxification: “Highly correlated variables are thus considered to be ‘proxies’ of each other: by tracking one variable, you can capture the other. Correlations are most often used to uncover latent or hidden variables” (Chun, 2021: 47). If processed in the correct ways, according to this ideology, proxies can reveal invisible patterns and produce truer and more authentic information than before. Furthermore, correlation-based probabilities enable predictions: tomorrow’s migrants can be inspected even more “correctly” than yesterday’s, by turning them into a self-learning, inferential dataset. Making the assessment of an immigration claim dependent on datapoints of others in the past is as problematic and flawed a practice at Ellis Island in the early 1900s as it is in a contemporary border authority’s AI-driven systems.

Conclusion

The 1924 Immigration Act reduced Ellis Island’s activities through drastic quotas for European migrants. In 1954, the station closed, and in 1990 today’s museum opened (plans in the interim had included a shopping mall and a nuclear power plant). While the island has lost its importance for bordering the United States, I traced its historical relevance for what today is called the “smart border.” Iconic in US history, the island held a key position in the institutional framework of US migration control stretching across many sites, and was part of a transnational network of border infrastructures reaching to emigration stations at European harbors. Its relevance for envisioning and implementing mediated techniques of bordering within the Global North more widely is thus presumably high.

Efforts at unambiguous, neutral, and efficient filtering of migrants shaped media technologies through proxification: datapoints were extracted from migrants and, in their combination, seen as legitimate to reduce complexities and “stand in” for the migrant, erasing allegedly inauthentic self-representation. These techniques comprised selecting the most authentic proxies, storing them in optimized, encoded ways, and processing and correlating them to achieve decisions. Understanding these observations at Ellis Island as historical predecessors of today’s projects of automated, data-driven borders (and any other data-driven sorting system) highlights the continuity and afterlives of dreams of efficiency and seeming neutrality in administering borders, projected onto media technologies and proxies, and their complicity with racist, classist, sexist, and ableist projects of nation-building.

Situated histories, such as Ellis Island, expose how logics, politics, imaginaries and practices of bordering affect the shaping of media technologies, and vice versa: how logics of media technologies and proxification affect how politics of bordering become realized. Hence, borders are anything but marginal in the technological development of societies. They deserve critical attention as laboratories for how automated and other new media technologies were and are imagined and developed. Ellis Island demonstrates how imaginaries and practices of automating information management were in fact tested out and shaped within the operation of border control, heavily relying on the racist and classist pseudo-science of eugenics, ridden by ableist public health anxieties, and shaped by the Progressive Era’s technocracy. Many such techniques of knowledge-production linger until today, as proxies centrally enable automated, machine-learning, and predictive systems at borders and beyond (Chun, 2021; Geoghegan, 2023). Digital systems more widely rely on techniques that seek to smoothly filter between seemingly clear-cut binaries, controlling borders between inside and outside. This history of automation exposes borders, being binary modes of decision-making run by proxies, as epitomes of automated media in general: through mediated forms of seeing and recognizing the migrant, borders violently control mobilities by differentiating “same” from “different”—or, as Commissioner Williams wrote, of filtering out “the riff-raff of Europe” 14 from the desirable immigrants. Borders historically are technologies that seek to realize the dream of the clear signal: 0 or 1, in or out—similar to other predictive, machine-learning systems, where logics of noise-free filtering enable automated, data-driven predictions. 
Situating the longer historical trajectories of these techniques of knowledge-production at the border exposes how the quest for filtering between seemingly stable binaries is always produced and legitimized through proxies that are ridden by social, cultural, and political contingencies, and are in fact fundamentally unstable, not as natural as promised, and inseparable from racialized, gendered, and class-based axes of exclusion.

How can this historiography advance the critique of “smart” borders? While under a “smartness mandate” (Halpern and Mitchell, 2023) the latest technologies are employed to smoothen all complexities of a given operation, Ellis Island shows that this socio-technical imaginary is not inherent to the digital. As immigration criteria became more complex, Ellis Island never lobbied for abolishment or simplification of the border regime. Instead, to ease the workload, and accomplish the impossible task of efficient, unambiguous decision-making, remedies were seen in technologized, streamlined, and complex administrative systems, fostering a drive to more proxification and mediation. The delegation of the border’s impossible task to proxified techniques of knowledge-production has been so fundamentally naturalized that it seems unimaginable to reduce or abolish the delegated stand-ins for bordering. Thus, a first step must be to denaturalize and delegitimize proxies more radically, so that they can no longer stand in for aspects of migrants’ complex identities as humans. This can happen by continuously re-evaluating which proxies are chosen, how, and for which inferences, following a call for “mindful filtering” (Marino, 2022), or by more radically undoing and rejecting proxification built on reductionist epistemological grounds. Yet, it is important to note that unmaking these techniques of the border is caught within a tension of benign and malign proxies: ensuring that claims to asylum are protected, while rejecting proxies as markers of hierarchical difference in general.

Therefore, it is necessary to investigate the historical contexts in which techniques of knowledge-production at the border have been conceived and materialized in media technologies, and how they continue to shape digital technologies. Understanding historical trajectories of automated media technologies, and their entanglement with borders, can unsettle the “smartness mandate” (Halpern and Mitchell, 2023) and its notions of progress and techno-solutionism, by pointing at continuities, contingencies and alternatives. The border has never been smart nor dumb, but enacted by necropolitical, exclusionary, racist and classist techniques of filtering and differentiation relying on the legitimization of proxies. While this article focused on the naturalization work of these techniques on the authorities’ side, technologies hardly remain without resistance or obfuscation, which expose the contradictions, misidentifications, and flaws of proxies and their truth claims, for example, through migrant organizations publicly critiquing procedures at Ellis Island, or through subversive practices of lying, bribing, or document forging by migrants. Foregrounding such resistances can be a further important element in delegitimizing proxies.

Today and historically, the fortification of borders does not reduce migration, and the promised efficiency and humaneness remain on the side of the border authorities, and not of the migrant. Following Hong (2022), “smart” technologies make things more predictable for those employing the technology, while life becomes less predictable for those whom technology is employed upon. As the grounds for which proxies stand in for which decision remain opaque, “smart” technologies at the border make migration an increasingly unpredictable, non-transparent, and dangerous undertaking. This has lethal consequences: Chambers et al. (2021) find that the deployment of “smart” technologies at the US-Mexican border has resulted in border deaths more than doubling, as migrants choose more dangerous routes. Unsettling the truth claims of such technologies, and exposing their futility, rooted in longer historical continuities of bordering techniques, should inform critiques of the “smart border” and projects of regulation. Therefore, it is necessary to understand how mediated techniques of filtering, rejecting and eliminating have historically been normalized, and have, beyond specific technologies, digital or not, become taken-for-granted techniques of bordering that stubbornly resist change.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.