Abstract

This article brings frameworks from literary and cultural studies and methods from network science to bear on a central topic in political communication research: polarization. Recent studies have called into question the argument that digital “echo chambers” exacerbate polarization by preventing members from encountering a diversity of information and opinions. Using Gab, a far-right social media platform, as a case study, we offer further evidence that even members of highly polarized publics do engage in “cross-cutting.” However, we develop a distinct concept of hate-sharing, or sharing content for the purpose of disagreeing with or denigrating it. We show that hate-sharing is common on Gab. Moreover, it is associated with stronger community structure than other kinds of sharing and appears to confer substantially greater influence on those who engage in it. We interpret these findings as evidence that social networks incentivize the production of networked outrage—where “hating on” linked content merges with hate.

In recent years, experts have identified polarization as a key threat to democracy and digital technologies as key drivers of polarization (Lorenz-Spreen et al., 2023). Many have argued that search engines and social networking sites drive polarization, as well as related harms like “problematic information” (Jack, 2017) and “radicalization” (Marwick et al., 2022), by creating “echo chambers” (Sunstein, 2001) and “filter bubbles” (Pariser, 2011) that prevent those inside them from encountering a diversity of opinions. But numerous studies have now challenged this account, offering evidence that even members of highly polarized online communities engage in “cross-cutting” (Mutz, 2002), sharing links from sources on “the other side” (Bright et al., 2020; Dowling, 2024; Haller and Holt, 2019; Juarez Miro and Toff, 2022).

This article builds on these findings empirically, methodologically, and conceptually. Drawing on theories developed in literary and cultural studies, we define hate-sharing, a distinctive kind of cross-cutting that involves linking content for the express purpose of disagreeing with or denigrating it. Expanding on past theorizations of taste and the performativity of affect, we hypothesize that hate-sharing takes place within polarized networked publics (boyd, 2010) and involves distinctive interaction and community formation dynamics. We then assess the prevalence of hate-sharing on Gab, the far-right social networking site, and analyze the dynamics that surround it.

Having developed a mixed quantitative-qualitative method for accurately identifying examples of hate-sharing at scale, we show that Gab users regularly engage in it. Indeed, hate-shares account for over 10% of all news sharing captured in our data. Moreover, by using methods from network science, we demonstrate that hate-sharing is associated with measurably different community formation and social interaction dynamics than either affirmative “like-sharing” or link-sharing in general, and thus constitutes a distinctive form of the circulation and attention that define publics (Warner, 2002). Specifically, we found that hate-sharing was associated with stronger community structure and that users who hate-shared, or directly commented upon or quoted another user’s hate-share, were significantly more influential than their like-sharing counterparts. Using one standard measure of influence, most users who hate-shared were nearly four times more influential than those who did not.

While our data cannot prove causation, our findings do suggest strong motivations for hate-sharing: the opportunity to create community and gain status within an atomized and isolating environment. We discuss the methodological and practical implications of these findings, as well as their significance for how we conceptualize polarized networked publics. We conclude by reflecting on avenues for future research.

Literature review

Polarization, disinformation, and cross-cutting

Since the turn of the millennium, numerous social scientists have documented and analyzed increasing political polarization in the United States (Druckman et al., 2021) and beyond (Gidron et al., 2020). “Affective polarization,” or the tendency of partisans to dislike and distrust one another rather than simply disagree on specific policy positions, has become a major research topic (Iyengar et al., 2019).

Within this literature, numerous studies focus on the role of media industries, technologies, and genres in driving polarization. One influential argument holds that media “echo chambers” prevent citizens from encountering the diverse array of information and opinions that are needed to sustain a liberal public sphere and, thus, liberal democracy itself (Sunstein, 2001). Scholars have explored the emergence of newly polarized and polarizing broadcast media genres, particularly liberal comedy (Baym, 2010) and conservative “outrage” (Berry and Sobieraj, 2013; Jamieson and Cappella, 2010 [2008]). Recognizing the convergence of regulatory, technological, and economic changes that made this kind of programming possible, studies analyze its prevalence in both psychological (Young, 2020 [2019]) and cultural (Peck, 2019) terms.

Influential early research on digital communications technologies argued that “participatory” media (Jenkins, 2006) would provide salutary alternatives to increasingly commercialized public discourse. However, a more pessimistic argument soon emerged, that digital media produced many of the same anti-democratic effects that had previously been attributed to broadcast echo chambers. According to this view, search engines and social networking sites produced “filter bubbles” that narrowed the range of information users encountered and encouraged them to engage in certain predictable responses (Pariser, 2011).

As numerous critics within and beyond the academy started to reevaluate earlier claims about the democratic potentials of Web 2.0 (Tarnoff and Weigel, 2018; Hwang, 2017), scholars began to observe that digital technologies and practices often regarded as democratic and democratizing had a “bleak flipside.” In addition to facilitating surveillance and filtering users and information for the purposes of advertising, they enabled forms of “dark participation” (Quandt, 2018: 36) such as “networked harassment” (Marwick, 2021; Marwick and Caplan, 2018) and “participatory disinformation” (Starbird et al., 2023). Contrary to a long tradition in media and cultural studies that had celebrated user agency as inherently emancipatory, Alice Marwick and Will Partin (2022) argued that participation should be regarded as “normatively neutral.”

However, as disinformation became a central topic of political communications research (Freelon and Wells, 2020), critical scholars also began to contest the value of this and other organizing concepts, including “conspiracy theory” (Bratich, 2020) and “radicalization” (Marwick et al., 2022). Alice Marwick (2018) argued that dominant journalistic and scholarly narratives about “fake news” perpetuated a discredited model of “media effects,” while Reece Peck (2023) pointed out that their “info-centric” emphasis discounted the role of style in shaping political belief. Daniel Kreiss and Shannon McGregor (2023) further contended that framing threats to democracy today in terms of “polarization” obscured the more salient issues of inequality and white supremacy.

While re-evaluating “disinformation” and “polarization,” scholars have called the concept of “echo chambers” into question, too. Numerous critics have objected that popular and academic discourse about the echo chamber effect is “overstated” (Dubois and Blank, 2018) and may “obscure the real problem” (Bruns, 2019) or even constitute an echo chamber itself (Guess et al., 2018). Others argue that the echo chamber metaphor overestimates the power of digital technology to dictate beliefs (Bruns, 2019) and underestimates the significance of other factors (Geiß et al., 2021). Among the many studies that critically interrogate the figure of the echo chamber, several were particularly relevant to us. Those were studies demonstrating that cross-cutting does in fact take place even in highly polarized online spaces.

Many of the debates sketched above share common theoretical stakes. These concern causation, or the power of media technologies to determine social phenomena. Both optimistic and pessimistic popular narratives about technology tend to fetishize it as acting upon society. Critiques of these narratives stress that technology and society are situated in and constituted through one another; neither is, or can act upon the other as, a thing. Such a perspective raises questions about what causes echo chambers to emerge, as well as what effect they have on individuals “inside” them and on society at large. Early accounts identified “filtering” (Sunstein, 2004) or “selective exposure” (Garrett, 2009), whereby people seek out media that support their views, as key mechanisms. However, several recent studies of highly polarized online publics directly contradict this hypothesis.

Through an analysis of the official Facebook pages of the far-right extremist PEGIDA movement in Germany and Austria, Haller and Holt (2019) show that these pages make reference to traditional media outlets almost as often as alternative, right-wing media. Bright et al. (2020) collected trace data from Stormfront, the White nationalist forum, revealing that members engaged with cross-cutting content frequently and repeatedly. Indeed, they did so more often and for longer periods of time than they engaged with non-crosscutting content.

Similarly, Clara Juarez Miro and Benjamin Toff (2022) have demonstrated that far-right users of the Spanish message board ForoCoches engage deeply with cross-cutting news. Melissa-Ellen Dowling (2024) conducted an analysis of posts by right-wing users of Gab and Telegram in Australia and found that they, too, shared news from an ideologically diverse range of sources. However, these users typically juxtaposed mainstream news sources with right-wing ones. All four studies revealed that users shared mainstream news in order to cite it as evidence for extremist views, particularly regarding race, ethnicity, and immigration.

It is clear that members of these highly polarized publics do, in fact, regularly encounter cross-cutting content, contrary to what theories of filtering or selective exposure would predict. But, rather than encouraging normatively positive deliberative exchanges, such cross-cutting can serve starkly illiberal ends, including advancing avowedly anti-democratic and white supremacist political programs. In addition to the technologically determinist argument that search and social media algorithms cause individuals to become radicalized, this finding contradicts what we might call the textually determinist assumption that a user who shares a link must be persuaded by the content that it links to. If critiques of technological determinism call upon us to recognize the mutual imbrication of technology and society, a critique of textual determinism requires us to investigate how the meaning of various forms of online amplification comes to be constituted through the relations of senders, messages, and recipients. That is, through context.

Cross-cutting contexts and affects

The fields of literary and cultural studies have long taken interest in how acts of reception give rise to meaning (e.g. Gadamer, 2013 [1960]) and spectators co-produce texts by “decoding” them. Stuart Hall’s (2007 [1973]) argument that audiences sometimes realized meanings at odds with the (apparent) intentions of the creators who “encoded” television programs helped inspire an entire subfield of research on “virtuous badness” (Mao and Walkowitz, 2006). This includes “good” (interesting, or politically progressive) readings of “bad” (low cultural, or politically regressive) objects (e.g. Berlant, 2008; Radway, 2009 [1984]; Schor, 1995) and vice versa (Emre, 2018 [2017]; Warner, 2004). Priyasha Mukhopadhyay (2017) has argued that books can have “vibrant intellectual lives” without being read at all.

Over two decades ago, Jonathan Gray (2003) proposed that scholars of fandom needed to extend their attention to “non-fans” and “anti-fans” in order to understand the nature of media textuality and the role that audiences played in constituting it. Subsequent work elaborated Gray’s insight by investigating how anti-fans produced identity and community in contradistinction to content that they performatively rejected through acts of “hate-reading,” “hate-watching,” ironic viewing and sharing, and so on (Click, 2019). Scholars have characterized the aesthetics of the outrage genre in terms of its focus on “unveiling enemies” (Berry and Sobieraj, 2013: 47). Like outrage, anti-fandom reframes the messages that it receives or reprises. The key difference is who reframes: although anti-fans may produce content, they first identify as consumers.

Past empirical scholarship on social media has described practices that politicians use to contextualize news items online as “interpretative linking.” Enli and Skogerbø (2013), who coined this term, note that in their data, the act of “add[ing] an interpretation, correction, or comment contesting the mass media versions of their public images” often constituted a strategy for “protesting against journalistic interpretations” and adding “personalization.” The posts that Haller and Holt (2019), Juarez Miro and Toff (2022), and Dowling (2024) cite in their discourse analyses meet the definition of “interpretative linking” established by Enli and Skogerbø, but the former authors do not use this concept. Instead of emphasizing interpretation, correction, or comment on the content of mainstream news stories, these authors highlight how users stake out a position in relation to them.

Haller and Holt (2019) describe links to mainstream news on PEGIDA Facebook pages as serving an “‘I told you so!’ function.” Juarez Miro and Toff (2022) observe that Vox supporters who shared liberal or left-leaning news were “using them to spur discussion, support their arguments, and promote their own positions.” Dowling (2024) describes cross-cutting links in her dataset as “pointing to” and “reifying” right-wing views of reality. These are phatic and deictic speech acts. Beyond conveying additional information, such acts express emotion and establish context, orienting their speakers and audience in social space.

Rather than interpretative linking, we therefore conceptualize hate-sharing as a form of affective linking. Affective, because it expresses, and generates, feelings about linked content, through the act of juxtaposing it with new content and/or placing it before a new audience. Linking, not only because it involves URL shares, but because it binds users for whom the presentation of linked content evokes feelings. As we conceive it, affective and interpretative linking are not opposing or mutually exclusive concepts; rather, the second represents a subset of the first. Building on existing accounts of outrage programming and anti-fans, we hypothesize that there exists a phenomenon of networked outrage and that hate-sharing plays a key role in producing it. We further expect that cross-cutting links in polarized online spaces often constitute “hate-shares” and serve the function of creating an identity and audience by defining against.

If users who crosscut for the purposes of hate-sharing give new meaning to the content that they thus recontextualize, we anticipate that the performativity of this gesture plays an important role in shaping networked publics, too. We do not use “performativity” in the colloquial sense, meaning insincerity or disingenuousness. Rather, we intend the sense in which critical social theorists have taken up the term from philosophers of language. Performance does not, or does not only, describe reality. Rather, it changes, or upholds, realities. For Judith Butler (2011), for instance, gender is “real . . . to the extent that it is performed” (p. 278).

In his now canonical work on taste, the sociologist Pierre Bourdieu (2013: 42) not only demonstrated the sociological significance of aesthetic distinctions; he also reported that aesthetic aversions were the strongest predictors of class membership. Alongside Bourdieu’s ideas about “cultural capital” (Guillory, 2023), scholars in literary and cultural studies have embraced affect theory as a framework for interpreting how performances of distaste, dislike, or “disidentification” (Muñoz, 1999) can produce new identities and communities.

In her influential work on the cultural politics of emotion, the feminist theorist Sara Ahmed (2014 [2004]) describes affects as “economic.” By this, she means that affects are not stable in themselves, much less, stable properties of individuals or groups. Rather, just as goods gain their value through the process of circulation and exchange, feelings take on value as they move among bodies, objects, and signs. Per Ahmed, speech acts performatively create feelings, by mobilizing signifiers that have accumulated differential charges through their histories of being used.

The media scholar André Brock (2020) draws on theorists of antiblackness, as well as on Ahmed, to develop an account of “libidinal economy” online. Broadly, this framework holds that psychological and emotional intensities, rather than rational material interests, drive racism. Brock adds an emphasis on the role of digital technologies in mediating libidinal exchange. For instance, he argues that in addition to facilitating the spread of explicitly racist discourse, social media enact “weak-tie racism” through recommendation algorithms, which encourage white users to interact with videos that spectacularize antiblack violence (Brock, 2020: 155–60).

Zizi Papacharissi (2015) argues, against a long line of argument that disparages the epistemological and normative value of feelings in favor of reason, that “affective publics” can drive positive change. But Ahmed and Brock’s readings suggest that affects, like participation, are “normatively neutral.” Among them, hate plays a special role. Ahmed argues that hate produces boundaries between the self and others. In hate, Ahmed (2014 [2004]) writes, “bodies surface by ‘feeling’ the presence of others as the cause of injury or as a form of intrusion” (p. 48). On this account, cross-cutting driven by hate-sharing would not remedy polarization. On the contrary, it would be a precondition of polarization. A polarized public would come into being through repeated contact with content that its members experienced as an injury or intrusion. That is, through content that evoked their hate.

Affect theory would predict, in other words, precisely what Bright et al. (2020) observed: that within a networked public like Stormfront, far from moderating extremism, cross-cutting views serve as a “unifying presence” (p. 1). Bright et al. (2020) ground their interpretation in cognitive dissonance theory and its fundamental postulate that “the individual strives towards consistency” (p. 4). Affect theory, by contrast, emphasizes trans-personal and collective dimensions of emotional and social life. The methods used by Haller and Holt (2019), Bright et al. (2020), Juarez Miro and Toff (2022), and Dowling (2024) also focus primarily on individuals. All four studies employ a combination of quantitative analysis of engagement metrics and discourse analysis of posts. These methods can and do yield many interesting insights. But they cannot tell us about the relationships that practices of cross-cutting, hate-sharing, or any other kind of affective linking might sponsor.

To understand community formation and interaction dynamics we had to turn to a different set of methods, drawn from network analysis. Having decided to use Gab as our test site, we approached it with the following research questions:

RQ1. Does hate-sharing take place on Gab?

RQ2. If so, is this practice associated with measurably different interaction and community formation dynamics than other kinds of link-sharing?

RQ3. Finally, if hate-sharing is associated with distinct dynamics, can engagement metrics and/or the content of posts help explain them?

Methodology

Research site

Launched in 2017 with the goal of attracting users who believed that the dominant social media platforms were biased against them, Gab reproduces many of those platforms’ features. It allows users to post “gabs” of up to 3000 characters that other users can “like,” “comment” on (reply to), “repost,” or “quote.” Like Facebook, Gab also allows users to join groups. Its interface has evolved over time. At present, it most closely resembles Twitter/X, with a scrolling vertical timeline in the center of the page, a navigation panel displayed on the left and trending content on the right. The major difference is that Gab imposes almost no community standards or content moderation on its users.

Quantitative analyses have found that Gab attracts predominantly white and male “Alt-Right” supporters and conspiracy theorists, who gather there to discuss news media that cater to their outlook (Lima et al., 2018; Zannettou et al., 2018). Such analyses also suggest that “superparticipants” (Graham and Wright, 2014) shape discourse on Gab to an even greater extent than their counterparts on other platforms (Zhou et al., 2019). Qualitative scholars have identified Gab as a key site for the “Alt-Tech” movement (Donovan et al., 2019), which aims to provide infrastructure to right-wing movements as dominant tech firms increase moderation. Content analyses confirm that Gab hosts an eclectic right-wing user base united by a sense of “sociotechnical victimology” (Jasser et al., 2023) or fears of persecution (Peucker and Fisher, 2023).

Our choice of this site entailed some limitations. Gab has a small, homogeneous user base compared to Facebook or Twitter/X. Nonetheless, the strong ideological homophily and availability of public data offered a promising opportunity to explore our research questions.

Collecting data

We used the following two data sources: (1) Public Gab posts and (2) a list of media outlets classified according to their political leanings by AllSides, a company that publishes bias ratings of US news sources on a spectrum from left to right. To produce these ratings, AllSides combines a number of methods, including editorial reviews by a multi-partisan panel, general surveys, independent reviews, third-party research, and community feedback (Bovet and Makse, 2019).

We downloaded a set of 7,954,426 publicly available posts made between 23 December 2017 and 6 May 2018. Of these, 1,820,782 included URLs. From these, we extracted a subset that included URLs from media outlets that AllSides had classified. To identify instances of cross-cutting, we collected posts containing URLs that included the domain name of any media outlet classified as “left” or “lean-left.” For the purposes of symmetry, we also collected posts containing URLs that included the domain name of any outlet classified “right” or “lean-right.” We hypothesized that Gab users sharing links from left and left-leaning news sources would tend to do so from an adversarial perspective, while those sharing links from right and right-leaning sources would tend to approve of them. Our research is in line with widely accepted principles regarding the ethical use of big data, in that we only analyzed public data available to anyone who wishes to view it, and that we only presented anonymized and/or aggregate results.
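The domain-matching step described above can be illustrated with a minimal sketch. Everything named here is an assumption for illustration: the `BIAS_BY_DOMAIN` table holds placeholder domains rather than actual AllSides ratings, and the sketch matches the exact host name, whereas the procedure described above checks whether a URL includes an outlet's domain name.

```python
from urllib.parse import urlparse

# Hypothetical excerpt of a domain-level bias table; these are placeholder
# domains, not real AllSides ratings.
BIAS_BY_DOMAIN = {
    "left-outlet.example": "left",
    "leanleft-outlet.example": "lean-left",
    "right-outlet.example": "right",
    "leanright-outlet.example": "lean-right",
}

CROSS_CUTTING = {"left", "lean-left"}   # expected to be shared adversarially
LIKE_MINDED = {"right", "lean-right"}   # expected to be shared approvingly


def domain_of(url):
    """Return the lower-cased host of a URL without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def candidate_label(url):
    """Label a URL as a candidate hate-share, a candidate like-share, or None."""
    rating = BIAS_BY_DOMAIN.get(domain_of(url))
    if rating in CROSS_CUTTING:
        return "hate-share candidate"
    if rating in LIKE_MINDED:
        return "like-share candidate"
    return None  # outlet not rated, or rated outside the four categories


print(candidate_label("https://www.left-outlet.example/story"))
print(candidate_label("https://right-outlet.example/opinion"))
```

Labels produced this way are only candidates: whether a post actually denigrates or endorses the linked story still has to be validated by human coding.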

Validating our data

To address our research questions, we had to establish that posts containing cross-cutting links on Gab did in fact constitute “hate-sharing.” We began the validation process by creating subsets of the 100 most-liked posts that contained URLs from outlets that AllSides had classified as left, lean-left, right, or lean-right and had also received at least one reply, so that we could use the discussion therein to better assess the accuracy of our method. Two coders independently reviewed these subsets. After an initial review, we held a meeting at which we established shared definitions for “hate-shares” and “like-shares” (see Supplementary Material Appendix 1).

Through this process, we observed that most posts in the left and left-leaning subsets that did not meet our definition of hate-shares contained URLs from a small number of outlets: The Intercept, Mashable, SplinterNews, BoingBoing, and Gizmodo. Moreover, we found that the linked stories addressed a small handful of topics. Most common was critical reporting on major US tech firms. Also common were stories criticizing US military intervention in the Middle East and/or Israel.

The fact that Gab users consistently “liked” stories from these outlets suggests that the beliefs of the community constituted through Gab diverge from classifications used by expert institutions like AllSides. It accords with past analyses of discourse on Gab and may reflect the limitations of relying on domain-level data more generally. However, our goal was not to analyze the politics of Gab, or the merit of AllSides’ classification, for their own sakes. Rather, it was to develop a reliable method of identifying examples of hate-sharing and like-sharing at scale. Therefore, we simply excluded posts containing URLs from the five outlets in the left and lean-left sets that Gab users consistently praised, and produced a new validation sample.

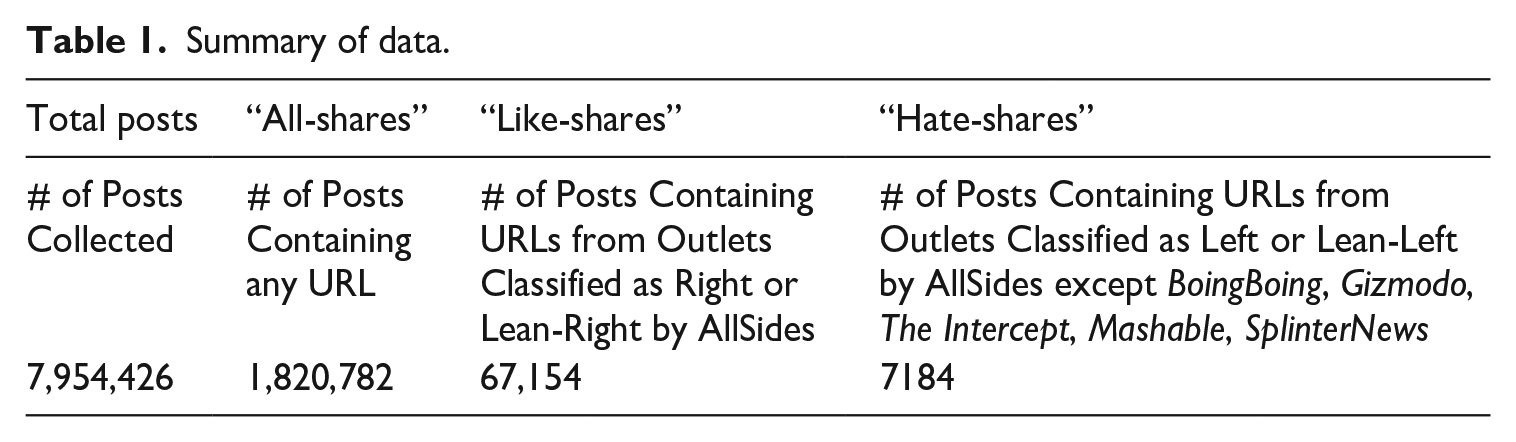

This time, we combined posts containing URLs from “left” and “lean-left” AllSides sources into one subset of “Hate Shares,” and those containing URLs from “right” and “lean-right” sources into a second subset of “Like Shares.” Without the problematic “left” and “lean-left” domains, this yielded 67,154 “Like Shares” and 7184 “Hate Shares” (Table 1). To determine accuracy, we coded a larger sample of the most-liked posts in each group that had at least one reply: 251 “hate-shares” and 244 “like-shares.” Together, these constituted a 16% sample of all hate- and like-shares with at least one reply, or a 0.7% sample of all hate/like shares.

Table 1. Summary of data.

Through this process, we achieved an average accuracy of 90% on like-shares and 87% on hate-shares (89% overall), with perfect intercoder reliability for like-shares and more-than-sufficient reliability for hate-shares (Cohen’s Kappa: 0.81, percent agreement: 0.96, Brennan-Prediger Coefficient: 0.91, Krippendorff’s Alpha: 0.81). Although these accuracy scores were not perfect, the fact that they were very similar for hate- and like-shares suggested that inaccuracies constituted a kind of “noise” that would cancel itself out across our comparative analyses.
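For readers unfamiliar with the reliability statistics reported above, the following sketch shows how percent agreement, Cohen's kappa, and the Brennan-Prediger coefficient are computed from two coders' labels. The label lists are invented toy data, not the coding from this study, and the function names are illustrative.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of items to which both coders assigned the same label."""
    return sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Agreement corrected for chance, using each coder's own label rates."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    labels = set(coder_a) | set(coder_b)
    p_expected = sum(counts_a[k] * counts_b[k] for k in labels) / (n * n)
    if p_expected == 1:  # both coders used a single identical label
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


def brennan_prediger(coder_a, coder_b, num_categories):
    """Agreement corrected for chance, assuming uniform chance of 1/q."""
    chance = 1 / num_categories
    return (percent_agreement(coder_a, coder_b) - chance) / (1 - chance)


# Toy data: ten posts coded as hate-share ("H") or other ("O").
coder_a = ["H"] * 8 + ["O"] * 2
coder_b = ["H"] * 7 + ["O"] * 3

print(percent_agreement(coder_a, coder_b))          # 0.9
print(round(cohens_kappa(coder_a, coder_b), 3))      # 0.737
print(round(brennan_prediger(coder_a, coder_b, 2), 3))  # 0.8
```

The two chance-corrected coefficients differ because kappa estimates chance agreement from the coders' observed label frequencies, while Brennan-Prediger assumes every category is equally likely.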

Network construction and operationalization

To understand how users came to be connected through hate-sharing and like-sharing, and whether the connections created through these practices differed from one another and from connections created by sharing in general, we constructed and analyzed three different interaction networks. These were as follows: (1) a Hate-Shares Network, representing connections formed around hate-shares, (2) a Like-Shares Network, representing connections formed around like-shares, and (3) an All-Shares Network, representing connections formed around all URL shares. Our data included the total numbers of likes, comments, and reposts for each post that it contained, along with information about the users who posted them. In addition, it contained information regarding which users quoted or commented on which other users’ posts (although not on which users liked or reposted other users’ posts).

In each network, the nodes were Gab users, and we drew a directed edge from node A to node B if A commented on or quoted B’s post. Whether B’s post was a hate-share, like-share, or “all-share” (which includes hate shares, like shares, and all other URL shares) determined the network (1–3) in which that connection was drawn. Based on hypotheses formed during our literature review, we decided to focus on network measures that could give us insight into the degrees of (1) connectedness, (2) modularity, and (3) centralization in each of these networks. That is, we examined the extent to which users (1) were connected to one another and (2) formed subgroups or communities, as well as the extent to which (3) different types of interactions were associated with influence.
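The edge rule described above can be sketched as follows. The record format `(acting_user, original_poster, share_type)` and the user names are hypothetical; the sketch only illustrates how one set of comment and quote records yields three networks, with repeated interactions accumulating as directed edge weights.

```python
from collections import Counter

# Hypothetical records: who commented on or quoted whose post, and how the
# original post was classified ("hate", "like", or "other" URL share).
interactions = [
    ("u1", "u2", "hate"),
    ("u3", "u2", "hate"),
    ("u1", "u2", "hate"),   # a repeated interaction raises the edge weight
    ("u2", "u4", "like"),
    ("u5", "u4", "other"),
]


def build_network(records, share_types):
    """Return weighted directed edges as {(source, target): interaction count}."""
    edges = Counter()
    for actor, poster, share_type in records:
        if share_type in share_types:
            edges[(actor, poster)] += 1  # edge points from commenter to poster
    return dict(edges)


hate_net = build_network(interactions, {"hate"})
like_net = build_network(interactions, {"like"})
all_net = build_network(interactions, {"hate", "like", "other"})

print(hate_net)  # {('u1', 'u2'): 2, ('u3', 'u2'): 1}
```

Because the all-shares network admits every share type, it contains the other two networks as subsets of its edges.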

Following a standard practice, we focused on each network’s largest connected component (LCC), the largest subgraph in which all nodes are connected. For the hate-sharing network, the LCC included 84% (2456/2937) of all nodes and 93% (3402/3667) of all edges. For the like-sharing network, it included 86% (3137/3637) and 95% (5012/5277), respectively. And for the all-share network, it included 97% (14,606/15,095) and nearly 100% (59,610/59,874). All the measurements reported below were taken on these network LCCs.
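A minimal sketch of LCC extraction is given below. It assumes that connectivity is assessed while ignoring edge direction (a weakly connected component); the text does not state which notion of connectivity was used, so this is an illustrative assumption, and the toy edge list is invented.

```python
from collections import defaultdict, deque


def largest_connected_component(edges):
    """Return the node set of the largest weakly connected component."""
    neighbors = defaultdict(set)
    for source, target in edges:
        neighbors[source].add(target)
        neighbors[target].add(source)  # direction is ignored for connectivity

    seen, best = set(), set()
    for start in neighbors:
        if start in seen:
            continue
        # Breadth-first search collects every node reachable from `start`.
        component, queue = {start}, deque([start])
        while queue:
            node = queue.popleft()
            for nxt in neighbors[node]:
                if nxt not in component:
                    component.add(nxt)
                    queue.append(nxt)
        seen |= component
        if len(component) > len(best):
            best = component
    return best


# Toy directed edge list with two separate components.
edges = [("a", "b"), ("b", "c"), ("d", "e")]
lcc_nodes = largest_connected_component(edges)
lcc_edges = [(s, t) for s, t in edges if s in lcc_nodes and t in lcc_nodes]

print(sorted(lcc_nodes))  # ['a', 'b', 'c']
print(lcc_edges)          # [('a', 'b'), ('b', 'c')]
```

The coverage figures reported above are simply the ratio of LCC nodes (or edges) to all nodes (or edges), for example 2456/2937 for the hate-sharing network.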

Additional quantitative and qualitative analyses

To gain further insight into what kinds of interactions were taking place within the networks that we constructed, we counted each kind of engagement metric available for each post (likes, comments, and reposts) and used linear regression to analyze and compare engagement distributions. We also conducted a qualitative discourse analysis on a subset of “hated” and “liked” content. To create that subset, we identified the 50 most-shared URLs in both the “Hate-Share” and “Like-Share” datasets (100 URLs total). Then, we collected up to 50 posts containing each of these URLs, as well as the comments they received. Because hate-shares are less prevalent than like-shares overall, these two samples differed in size. There were more than 50 shares of each of the top 50 “liked” URLs, so that sample consisted of 2500 posts, plus comments. However, only 5 of the top 50 “hated” URLs were shared at least 50 times; ultimately, that sample consisted of only 1125 posts, plus comments. Nonetheless, by analyzing these two samples, we were able to develop a strong sense of common themes of both hate-sharing and like-sharing. We then used an inductive method, reading the posts and taking note of themes they discussed and patterns of language that they used to do so.

Results

Hate-sharing (RQ1)

Our data clearly answered our first research question: Gab users do engage in hate-sharing. Hate-shares constituted over 10% of all the posts in our data that contained links to media outlets classified by AllSides. Moreover, more users were engaged in hate-sharing than the percentage of hate-shares out of all URL shares in the data would predict. In total, 6.43% of everyone who shared any URL engaged in hate-sharing, even though hate-shares only comprised 0.39% of URL shares. We note that like-shares (according to our definition) constituted 90% of all-shared URLs classified by AllSides, and 3.69% of all-shared URLs, in general. Furthermore, 16.8% of users who shared any URL engaged in like-sharing. These numbers show that like-sharing is more prevalent than hate-sharing. Therefore, our point is not that hate-sharing trumps like-sharing, but that it is present and should not be ignored or forgotten, particularly because it could play a key role in contributing to polarization.
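As a quick arithmetic check, the shares of all URL posts quoted above can be recomputed from the counts reported in the methodology (1,820,782 posts with URLs, 67,154 like-shares, and 7184 hate-shares). The variable and function names are illustrative only.

```python
# Counts as reported in the data collection and validation sections.
url_posts = 1_820_782
like_shares = 67_154
hate_shares = 7_184


def pct(part, whole):
    """Express `part` as a percentage of `whole`."""
    return 100 * part / whole


hate_of_urls = pct(hate_shares, url_posts)
like_of_urls = pct(like_shares, url_posts)

print(round(hate_of_urls, 2))  # 0.39
print(round(like_of_urls, 2))  # 3.69
```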

Interaction and community dynamics (RQ2)

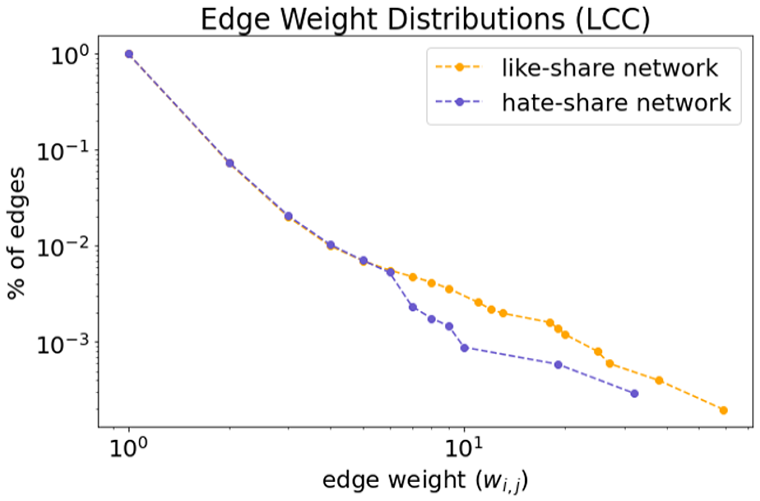

We used network analyses to determine how hate-sharing connected Gab users and whether the connections formed through this practice were distinctive. In general, we found that Gab users were only loosely connected. We calculated both the density and edge-weight distributions of our three interaction networks. Density represents the proportion of all possible relationships in the network that are, in fact, present. Using the formula

where E = the total number of edges in the network and N = the number of nodes, we found that all three networks had low density. However, both the Like-Sharing and the Hate-Sharing Networks were significantly denser than the All-Sharing Network, and the Hate-Sharing Network was slightly denser than the one formed by Like-Sharing. (Density for All-Sharing ≈ 0.00056; Like-Sharing ≈ 0.00102, and Hate-Sharing ≈ 0.00113.)
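As a minimal sketch of the density calculation described above, the following assumes the standard graph-theoretic definitions: E / (N(N − 1)) for a directed network and 2E / (N(N − 1)) for an undirected one. Which variant applies to the interaction networks here is an assumption, as are the function name `density` and the toy numbers.

```python
def density(num_edges: int, num_nodes: int, directed: bool = True) -> float:
    """Proportion of possible edges that are present (self-loops excluded)."""
    if num_nodes < 2:
        return 0.0
    possible = num_nodes * (num_nodes - 1)  # ordered pairs of distinct nodes
    if not directed:
        possible //= 2  # unordered pairs
    return num_edges / possible


# Hypothetical toy network: 5 users connected by 4 interaction edges.
print(density(4, 5, directed=True))   # 4 of 20 possible directed edges
print(density(4, 5, directed=False))  # 4 of 10 possible undirected edges
```

Because the denominator grows roughly with the square of the number of users, large sparse networks such as these yield very small density values.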

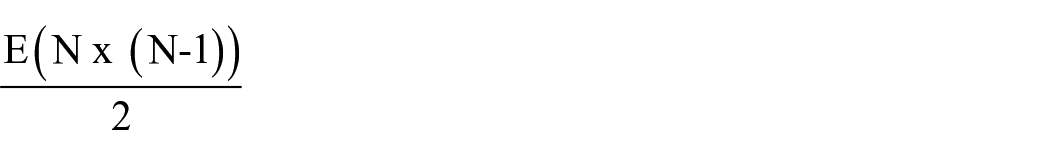

In our networks, edge-weights represented the number of interactions between any two nodes or users—that is, the number of times that either user had commented on or quoted the other user’s posts. (Recall: We did not have interaction data regarding likes.) Whereas density measures the proportion of possible relations that are present among all the nodes in a network, edge-weight distributions reflect the number of times that any two users interacted with each other. Our edge-weight distributions (Figure 1) revealed long tails for both the Hate-Sharing and Like-Sharing Networks. But the Hate-Share network had a noticeably smaller proportion of highly weighted edges. This means that a smaller proportion of users in the Hate-Share Network interacted repeatedly.

Figure 1. The complementary cumulative distribution functions (CCDFs) for the edge-weight distributions in the like-share network’s LCC (orange) and the hate-share network’s LCC (purple). Here, the weight of an edge represents the total number of interactions between the connected users. The plot should be read as follows: a proportion y of edges have a total weight of at least x. We see that the like-share network has a proportionally larger number of edges with especially large weights (>7).
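The reading of a CCDF given in the caption can be illustrated with a short sketch. The function name `ccdf` and the edge weights below are hypothetical and are not drawn from the Gab data.

```python
from collections import Counter


def ccdf(values):
    """Return sorted (x, y) pairs, where y is the fraction of values >= x."""
    total = len(values)
    counts = Counter(values)
    remaining = total  # number of values that are >= the current x
    points = []
    for x in sorted(counts):
        points.append((x, remaining / total))
        remaining -= counts[x]
    return points


# Hypothetical edge weights: most pairs interact once, a few interact often.
weights = [1, 1, 1, 1, 2, 2, 3, 8]
for x, y in ccdf(weights):
    print(f"{y:.3f} of edges have weight >= {x}")
```

A network with a larger share of heavily weighted edges would show a curve that stays higher toward the right-hand side of such a plot.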

When we examined community structure, further differences began to emerge. To compare the structure of communities formed through hate-sharing and like-sharing, we compared the modularity of the two networks, or the strength of their division into subgroups. Using two different partitioning techniques, we found that the Hate-Sharing Network had higher modularity than the Like-Sharing Network. Using modularity maximization (Clauset et al., 2004), we found that the Hate-Sharing Network had a modularity score of 0.75, and the Like-Sharing Network had a modularity score of 0.66; using label propagation (Cordasco and Gargano, 2010), the Hate-Sharing Network had a score of 0.56, and the Like-Sharing Network had a score of 0.49. To put this another way, under both methods the modularity of the Hate-Sharing Network was around 14% higher than that of the Like-Sharing Network, suggesting that the subcultures or communities formed by hate-sharing were correspondingly stronger.
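The following sketch shows how a modularity score can be computed for a given partition and how the relative difference of roughly 14% follows from the reported scores. It assumes an unweighted, undirected graph and the standard Newman formulation of modularity; it does not reproduce the partitioning algorithms themselves, and the toy graph and the function names `modularity` and `relative_gain` are hypothetical.

```python
def modularity(edges, communities):
    """Newman modularity Q for an undirected, unweighted graph and a partition."""
    m = len(edges)
    label = {node: i for i, group in enumerate(communities) for node in group}
    internal = [0] * len(communities)  # edges with both ends inside a community
    degree = [0] * len(communities)    # summed degree of each community
    for u, v in edges:
        degree[label[u]] += 1
        degree[label[v]] += 1
        if label[u] == label[v]:
            internal[label[u]] += 1
    return sum(
        internal[c] / m - (degree[c] / (2 * m)) ** 2
        for c in range(len(communities))
    )


def relative_gain(a, b):
    """How much larger a is than b, as a fraction of b."""
    return (a - b) / b


# Hypothetical toy graph: two triangles joined by a single bridging edge.
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]
print(modularity(edges, [{"a", "b", "c"}, {"d", "e", "f"}]))

# Relative differences between the modularity scores reported above.
print(round(relative_gain(0.75, 0.66) * 100, 1))  # modularity maximization
print(round(relative_gain(0.56, 0.49) * 100, 1))  # label propagation
```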

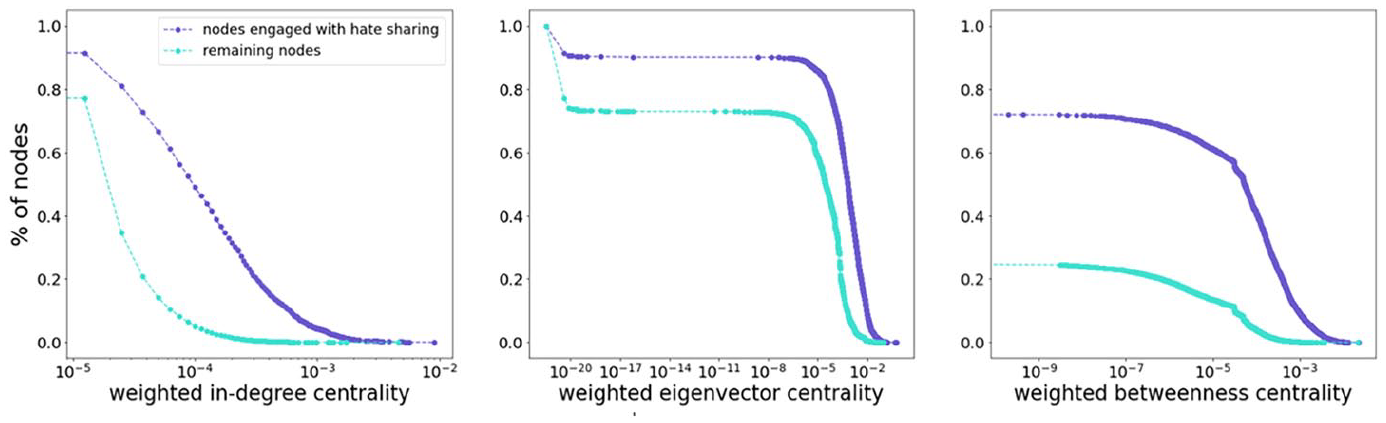

Our most striking finding concerned influence. To measure the relationship between hate-sharing and influence, we calculated several kinds of centrality scores for all nodes in the LCC of the All-Sharing Network. Network scientists have recognized centrality as a proxy for influence or importance across many kinds of networks (Das et al., 2020). For our purposes, weighted in-degree centrality, eigenvector centrality, and betweenness centrality seemed particularly important. In the first case, a high score would indicate a high degree of attention and engagement being given to a user (i.e. many comments on, and/or quotes of, their posts). In the second, a high score would indicate that the user was proximate to other highly influential users (i.e. whose posts received many comments and/or quotes). In the third, a high score would suggest that the user has some control over how information flows in the network and is positioned to act as a bridge or gatekeeper.

We calculated these scores for all nodes in the LCC of the All-Share network and then compared the distribution of scores for users who had appeared in the full hate-share network (20%) versus those who had not (80%). The former group scored significantly higher on all centrality measures than did their non-hate-sharing counterparts. Figure 2 displays these distributions. It shows that users who appeared in the Hate-Share Network received more attention overall and were closer to one another than users who did not. They also exercised much more control over the flow of information: the vast majority of users who appeared in the Hate-Share network had betweenness centrality scores nearly four times higher than those who did not.

Figure 2. CCDFs of the centrality distributions for nodes in the all-share network LCC that (a) have engaged with hate-sharing (i.e. are present in the hate-share network; purple) and (b) have not (turquoise). In each case, we see higher centrality overall among users who have engaged with hate-sharing.
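The group comparison described above can be sketched for the simplest of the three measures, weighted in-degree centrality. The edge direction (from the commenting or quoting user to the author of the post), the user names, and the weights are all assumptions made for illustration; eigenvector and betweenness centrality are not shown.

```python
from collections import defaultdict
from statistics import median


def weighted_in_degree(edges):
    """Sum incoming edge weights per user.

    edges: iterable of (source, target, weight), where source commented on
    or quoted target's posts `weight` times (an assumed convention).
    """
    score = defaultdict(float)
    for source, target, weight in edges:
        score[target] += weight
        score[source] += 0.0  # make sure users with no incoming edges appear
    return dict(score)


# Hypothetical interaction edges and a hypothetical set of hate-sharers.
edges = [("u1", "hs1", 3), ("u2", "hs1", 2), ("hs1", "u3", 1),
         ("u3", "hs2", 4), ("u1", "u2", 1)]
hate_sharers = {"hs1", "hs2"}

scores = weighted_in_degree(edges)
in_group = [s for user, s in scores.items() if user in hate_sharers]
out_group = [s for user, s in scores.items() if user not in hate_sharers]
print(median(in_group), median(out_group))
```

In practice the two groups would be compared across their full distributions, as in the CCDFs of Figure 2, rather than by a single summary statistic.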

Further analysis of engagement metrics and discourse

We were curious whether an analysis of engagement metrics and of the discourse that took place within and around hate- and like-shares would help explain the differences that we have just described. Most URL shares on Gab attract little engagement: fewer than 1% of the URL shares we collected received any likes, comments, or reposts at all. However, hate-shares received more replies than like-shares. And proportionally, more hate-shares than like-shares received very large numbers of likes (100 or more).

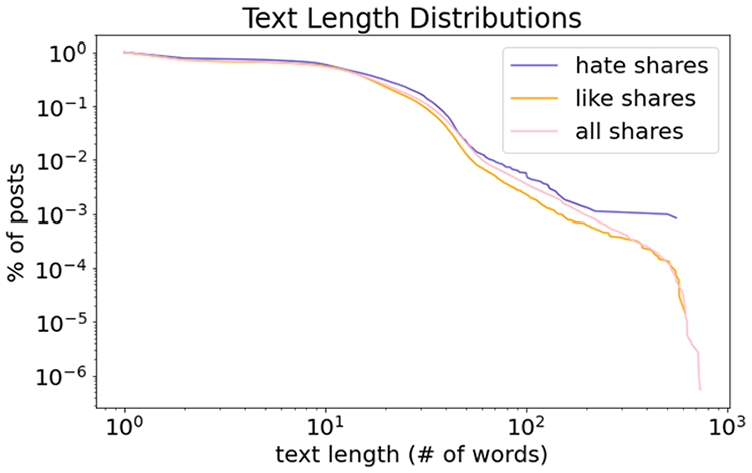

By analyzing the distributions of text lengths, we found that hate-shares were longer than like-shares and other URL shares—and that a small percentage was significantly longer. The average and median text lengths for hate-shares were 16 and 12 words, while they were 13 and 11 for like-shares and 14 and 11 for all-shares. Furthermore, consulting the CCDF shown in Figure 3, we saw that hate-shares have a much higher proportion of especially long posts (300+ words) compared to like-shares and all-shares. The average length of comments was greater, too. The average and median text lengths for comments were 22 and 18 words for hate-shares and 20 and 15 for both like-shares and all-shares. Strikingly, comments tended to be longer than original posts across all kinds of URL-sharing.

Figure 3. The complementary cumulative distribution functions (CCDFs) for the text length distributions for like-shares (orange), hate-shares (purple), and all-shares (pink). The plot should be read as follows: y% of posts have a length of at least x. We see that the distribution for hate-shares has a fatter tail—that is, the hate-shares have a higher proportion of long posts.
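The text-length statistics reported above can be sketched as follows, assuming that length is measured in whitespace-separated words (the tokenization actually used is not specified). The function names and the sample posts are hypothetical placeholders.

```python
from statistics import mean, median


def word_lengths(posts):
    """Length of each post in whitespace-separated words (an assumed tokenization)."""
    return [len(post.split()) for post in posts]


def share_at_least(lengths, threshold):
    """Fraction of posts whose length is at least `threshold` words."""
    return sum(1 for n in lengths if n >= threshold) / len(lengths)


# Hypothetical placeholder posts, not taken from the dataset.
posts = ["headline only", "a slightly longer remark about the story", "link"]
lengths = word_lengths(posts)
print(mean(lengths), median(lengths), share_at_least(lengths, 5))
```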

It stands to reason that a user might amplify content that they agreed with by liking or reposting it without further qualification. It also makes sense that, if a user posted content that they disliked to an audience that they anticipated would also dislike it, they would explain why they were doing so at greater length. Finally, it seems logical that members of that user’s audience, if they bothered to pay attention to content that they were inclined to dislike, would articulate their analyses and criticism in quotes and comments, rather than simply reposting. By conducting a qualitative discourse analysis of a subset of like-shares and hate-shares and the comments on them, we were able to expand our interpretation beyond these intuitions.

The most like-shared URLs were, for the most part, (1) from a small set of sources, (2) about topics likely to outrage a Gab user, and (3) already framed as outrageous. Of the top 50, 25 came from the Daily Caller and 7 from the New York Post. Only three other media outlets had links shared more than once: PJ Media (five shares), Federalist (five), and Gateway Pundit (two). In terms of subject matter, 16 of the top 50 most shared stories were about political corruption—of the FBI and the “deep state” (seven stories), of the Clinton family (five stories), and of other Democrats (four stories). Ten were about political bias at Big Tech firms and their practices of content moderation and surveillance. Five were about culture war topics (such as “toxic masculinity”); three were about guns, and three were about antifa. Only two were positive stories praising Donald Trump. The fact that only two positive stories about Trump appeared among the top 50 most like-shared reflects the prevalence of outraged frames in Gab users’ preferred media outlets.

Both original posts and the comments on those posts frequently reiterated the headlines and framing of the linked article. Of the first 50 shares of the most-shared URL, 39 consisted only of the headline and/or link, sometimes with hashtags added (e.g. #speakfreely, #QAnon #GreatAwakening #WethePeople #MAGA). Comments also tended to affirm the framing of the story being shared. For instance, comments on posts about a story on the suspension of Gateway Pundit from Facebook read, “The congress should investigate with the Justice Department.” “Patriots rise and stand united against this BS.” “Yes, on YouTube and Twitter also.” Disagreements generally concerned strategy rather than substance. For instance, another user insisted, “We have to DEMAND the @FCC revoke ALL of their protections by Section 230 of the Comm. Decency Act, which will allow us to SUE them for ANY content . . . Unless they remove their NWO Globalist / Anti-Trump Bias!” The next, “Congress is dead. They will no longer do their jobs. They no longer respond to shit.”

Contrary to our initial expectations, almost none of the most hate-shared URLs came from sources that AllSides had classified as “left.” Of the top 50 most shared URLs, 19 came from the Associated Press, 12 came from ABC News, and 7 came from Time; 4 each came from The Week, New York Magazine, and all other sources combined. AP, ABC, Time, and The Week all receive ratings of “lean-left” from AllSides; of outlets that appeared in the most hate-shared URLs set, only New York Magazine earned a rating of “left.” Nor did most of the stories that they linked to express explicitly left or left-leaning opinions. Rather, the overwhelming majority were news stories, reported in a neutral or “objective” journalistic style.

The most hate-shared set included multiple stories about two topics that had been absent from the most like-shared set. The first was immigration and/or race and ethnicity. Eight of the top 50 stories were about either immigration policy or specific immigrants or descendants of immigrants to the United States. (This echoes Dowling’s (2023) analysis of cross-cutting by Australian Gab and Telegram users.) Another eight of the top 50 focused on international news, a topic that did not appear anywhere in the most like-shared URLs.

We repeatedly encountered instances of users sharing news stories that were not overtly or primarily about immigration, ethnicity, and race and using their posts and comments to foreground those subjects. The posts containing these stories, and the comments on them, frequently did two things. First, they criticized the original reporting, dismissing its objective style as a cover for elite interests. Second, and relatedly, they offered racist and xenophobic interpretations of the reported events.

Given their hateful content, we will refrain from reproducing many of these posts, but want to cite two representative examples. One December 2017 story from ABC News ran with the headline: “Pennsylvania Cop Shooting Suspect’s ‘A Chicken, Not a Terrorist’: Ex-Brother-in-law.” The story, shared 44 times, did not mention its subject’s name or the fact that he had immigrated to the United States from Egypt, for several paragraphs. Gab users, however, foregrounded these facts and accused ABC of deliberately obscuring them: Post: Pennsylvania man targets police officers in string of shootings; one injured, suspect killed #Muslims #Terrorists #Police #Shooting #Pennsylvania #ObamaLegacy #Immigration #Refugees #Migrants Comment: Muslim! Surprise! Post: fake news does not want you thinking terrorist “Pennsylvania cop shooting suspect’s ‘a chicken not a terrorist’” this is how they do it . . . constant propaganda . . . imagine if killary was in

Another ABC story that ran on 2 January 2018 was shared 11 times: “Emotional parents speak out after missing UPenn student found dead from suspected homicide.” Although the original reporting did not mention the family’s background, users picked up on their last name, Bernstein, to reframe the coverage in antisemitic and racist terms: Post: Homicides of Jews make international news, while daily homicides of US whites who are victims of blacks are mentioned in 10 second spots on your local television. Comment: If mentioned at all . . . Post: I think suicide is more likely. “Blaze Bernstein?” I’d kill myself with that name. Not to mention, can you imagine how much he was made fun of in middle school as they learned about how Jews were killed?

International stories inspired similar acts of reframing. Users repeatedly took objectively reported news about military conflict in the Middle East as occasions to declare their opposition to US intervention on behalf of elite, allegedly Jewish economic interests. Comments often expressed conflicting attitudes toward Israel, alternating between antisemitism, Islamophobia, and admiration for Israeli military strength.

Our analyses made amply clear that users engaged in and with hate-sharing were not earnestly considering information or arguments from “the other side.” But neither were they thoroughly rebutting such content. Instead, they were taking objective news and using affordances like sharing, quoting, and hashtags to create an “outraged” frame around it. This process sometimes inspired play, as when users referred to dominant platforms as “F***book,” “Twit,” and “Goolag.” And it sometimes involved contestation—as when users debated whether an Israeli airstrike was part of an insidious globalist plot or constituted an admirable ethno-nationalist response to terrorism. But it did little to mitigate prejudice. Indeed, to the extent that the hate-shares we examined did expand the scope of discourse, they did so by providing new occasions to express intolerance and even call for violence. When hate-sharing, or commenting on hate-shares, users expressed more overtly racist, antisemitic, and xenophobic views than they did when engaging with “liked” content.

Discussion

Our data clearly answered our first research question: hate-sharing is common on Gab, accounting for over 10% of all the news sharing captured in our sample. Our validation process also affirmed the importance of running a qualitative “check” rather than making assumptions about how members of a networked public are using links to make meaning. If we had assumed that all cross-cutting from “left” or “lean-left” sources constituted hate-shares, we would have greatly exaggerated the prevalence of this practice. While this was not our primary focus, we would also have missed an important fact about Gab users: Their views of major technology firms, the US military, and Israel overlap with views expressed in sources that AllSides, an expert institution, classified as “left” or “left-leaning.” Our findings underscore the importance of combining qualitative and quantitative methods capable of capturing context, especially when analyzing emergent political formations.

Our network analyses showed that users who share URLs on Gab are only loosely connected to one another, and that the connections among those who hate-share are especially loose. Moreover, users are unlikely to interact repeatedly through hate-sharing. However, hate-sharing is associated with stronger community structure; it creates a network with more tightly bonded subgroups than do like-sharing or URL-sharing in general. Most strikingly, users who engage in hate-sharing are significantly more influential than those who do not. Indeed, by one of our measures, the vast majority of users who hate-share are nearly four times more influential than their counterparts. A picture begins to emerge. Hate-sharing is a practice that bonds communities without binding particular individuals to each other; indeed, the strength of relationships between individual users may vary inversely with the strength of subgroups.

Our analysis of engagement metrics and of discourse helps clarify why. The engagement metrics and text length distributions that we analyzed show that hate-sharing inspires discussion, in the form of more and longer replies and quotes, to a greater extent than direct amplification, such as likes or reposts without commentary. Our discourse analysis provided a compelling explanation for this. Users who hate-share, or comment on or quote hate shares, are not simply reiterating the information in the linked source. Instead, they are producing—and sometimes debating and competing over—new interpretations. These new frames often resemble the frames already present in users’ preferred news sources. And they frequently serve as a pretext for intensified expressions of prejudice and violence. Users implicitly or explicitly justify prejudice and violence as reactions to the alleged deceptions of mainstream news organizations.

This, in turn, suggests an explanation for why hate-sharing might be associated with the community formation and interaction dynamics that we observed. The vast majority of posts on Gab attract little to no engagement. But, when a hate-share does succeed at attracting engagement, it typically attracts more than a like-share would. Recall: Hate-shares got more replies than like-shares and a larger proportion of hate-shares than like-shares received 100 or more “likes”; moreover, hate-shares tend to inspire users to comment on and quote them at greater length. These facts help clarify why hate-sharing would convey influence, particularly influence over flows of information. On Gab, a successful hate-share creates an occasion for users to gather, recognize their common enemy, and collectively determine how to extend the interpretations of reality that they prefer onto new terrain. The ritual gives rise to a feeling that is familiar to Gab users from their preferred media sources: outrage.

Conclusion

By taking up affect theory as a framework and network analysis as a method, we have been able to see something that previous accounts of cross-cutting in polarized spaces have not yet captured. When echo chambers resonate with opposing views (Bright et al., 2020), individual members are not simply reducing their own cognitive dissonance. They are making bids to gain attention and status, particularly the kind of status associated with controlling information flows and, thus, setting the scene for members of the group to define its beliefs. In this way, a networked public like Gab incentivizes users to perform hate. These findings suggest that social networking sites shape polarization not only, and perhaps not even primarily, by filtering out dissonant perspectives, but rather by structuring sociability. We further show that “cross-cutting,” as a normative concept, may have limited value for describing online polarization. As we discovered in our validation process, between one-third and one-half of all apparently cross-cutting link-shares were, in fact, performances of “hate.”

Our findings resonate with a great deal of canonical scholarship on racism, fascism, and media. In the United States, the idea that industrial and technological modernity gave rise to highly atomized societies, and the anxiety that such societies would turn to authoritarian strongmen, were foundational for the emergence of media and communications studies as academic disciplines. The first generation of researchers engaged in “propaganda analysis” focused on how fascists and demagogues used mass media to appeal to audiences who had lost traditional forms of community, family, and religious beliefs (Bauer and Nadler, 2021; Turner, 2013). Critical scholars of that generation argued that, by identifying with strongmen and their cruelty to outsiders, mass audiences took pleasure in their own domination (Horkheimer et al., 2002 [1947]). Mass media facilitated sado-masochistic identification through vertically-integrated industrial organization and distinctive aesthetic properties–such as the ability of the radio broadcast or film image to simultaneously spectacularize the leader and bring his likeness “close up” (Adorno, 1938; Benjamin, 2018 [1936]; Löwenthal and Guterman, 2021 [1949]). Today, critical scholars face the vexing question of why individuals using very different media, characterized by different forms of organization and aesthetic properties, still tend to gravitate toward performances of hate.

Further research is needed to determine how far our findings might generalize in several directions. Cross-platform research using a similar methodology would enable us to determine whether users of sites with different user bases and affordances than Gab engage in hate-sharing at similar rates and with similar effects on community formation and interaction dynamics. Such research would enable us to better understand at least two things: whether the findings here reflect something specific about Gab users and whether they reflect something about specific Gab features, like the ability to reply or quote on a chronological feed.

There is a long tradition in political psychology that holds that liberals and conservatives have distinct psychological tendencies, and associates the latter with negative affects (Bauer, 2023). But there is also evidence that individuals across the political spectrum enjoy sharing information about public figures they dislike (Rathje et al., 2021). If we analyzed a “left” or “left-leaning” public, would we find that they hate-share at similar rates? How about a social network with a more diverse user base? Do anti-fans who hate-share ostensibly non-political content come to be connected in the same ways as partisans who hate-share news? It would also be illuminating to learn whether hate-sharing takes place and drives similar dynamics on Reddit, for instance, which is organized into forums and where users upvote rather than repost—or on TikTok, where users express admiration or disdain through split-screen duets. Such research could begin to yield insight into the “forms of the affects” (Brinkema, 2014) emerging from contemporary social media. In addition to investigating other demographics and other platforms, future research could explore other kinds of affective linking—of sharing that expresses emotions that have their own cultural and political utility, such as joy or shock or “cringe.”

Like past complications and critiques of the “echo chamber” metaphor, our findings have practical implications. Many experts have proposed introducing content from opposing sources into polarized online spaces, as a means of decreasing intolerance (e.g. Bessi et al., 2016; Sunstein, 2018; Zollo et al., 2017); our case follows others that complicate this picture (Heatherly et al., 2017). Our findings have methodological implications that extend beyond studies of polarization, too. Quantitative social scientists have frequently used URL-sharing as a signal of influence, agreement, and/or social proximity (e.g. Cinelli et al., 2021; Garimella et al., 2018; Peterson et al., 2021). But if social media users share content in order to profess and elicit negative emotions about it, we cannot necessarily take sharing as evidence of influence, agreement, or proximity.

If sharing the same URL indicates that two or more members of a network have something in common, that something may not necessarily be affinity. This raises difficult questions about the ontology and epistemology of publics. The theorist Michael Warner (2002) famously defined publics as being “constituted through mere attention” (p. 60); their democratic promise is to create collectivities defined by reason or preference rather than inherited status or rank. But there are many kinds of attention and the ways that technologies and institutions condition them vary. What kinds of subjects and publics arise through performative rejection is urgent to understand, as crises of democracy worldwide arise from, and give rise to, new forms of hate.

Supplemental Material

Supplemental material, sj-docx-1-nms-10.1177_14614448241245349 for Hate-sharing: A case study of its prevalence and impact on Gab by Moira Weigel and Adina Gitomer in New Media & Society

Footnotes

Acknowledgements

The authors would like to thank Claire Coffman for her invaluable help coding samples and Alan Mislove and Christo Wilson for their advice on securely storing data, as well as Brooke Foucault-Welles, Brian Friedberg, Matt Goerzen, Alice Marwick, Will Partin, and Ben Tarnoff for offering feedback at various stages of the research and writing process. We are also grateful to the two anonymous peer reviewers whose suggestions greatly strengthened our final text.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
