Abstract

The diffusion of algorithms, robots, and artificial intelligence has sparked public debates about opportunities, risks, and responsibility for addressing problems and developing solutions. Since the media both cover and shape sociotechnical imaginaries, this study investigates Austrian media discourses on responsibility in two domains of automation: social media algorithms and social companions. Relevant articles were identified using a machine learning approach and then examined in a manual comparative content analysis. The findings indicate that media coverage of social media algorithms tends to be more critical than coverage of social companions. In the debate about social media algorithms, journalists are the most common speakers raising responsibility issues, and they primarily attribute responsibility to Internet platform providers. Conversely, responsibility for social robotics is predominantly articulated by experts, who consider it a responsibility shared by society, economy, and research. Furthermore, the media present different perspectives on the agency and responsibility of social media algorithms and social robots themselves.

Keywords

Introduction

The economy and society are experiencing a significant shift toward automation, marked by the increasing deployment of automation technologies. These technologies include

The issues of

In constellations of uncertainty regarding de facto responsibility, it is crucial to consider how responsibility is discussed and

The present analysis aims to explore how responsibility is portrayed and thus constructed in the media’s coverage of selected automation issues.

For our empirical analysis, we will concentrate on two topics that are currently receiving significant attention in both academia and the media:

The use of algorithms on social media platforms;

The use of robotics as social companions for social purposes.

The two areas were selected for in-depth analysis because of their topicality and practical relevance, their many controversially discussed benefits and risks, and the high level of attention they receive. In addition, the academic discourses on social media and social companions show similarities but also different thematic focuses and perspectives, which may also be reflected in attributions of responsibility.

Social media algorithms, for example, are the subject of heated debates about risks and questions of responsibility, as well as about the power of Internet platforms. This is evident in national and international efforts to hold digital platforms more accountable, such as with the European Union’s Digital Services Act (DSA).

Social robots are increasingly used in socially sensitive interaction contexts, including intimate human–robot relationships, such as in nursing, that affect social interaction in societies (e.g., Seibt et al., 2014). In this sensitive context, the literature on machine, robot, and AI ethics has grown sharply, and it also raises questions about the responsibility status of robots, such as whether and to what extent robots themselves should and can assume responsibility (see, for example, Coeckelbergh, 2020; Danaher, 2019; Loh, 2019; Matthias, 2004; Waelbers, 2009).

The analysis allows us to compare and contrast the media portrayal of social media algorithms, which operate rather opaquely and invisibly in the background (Pasquale, 2015), with the depiction of social companions, whose operations are more visible and observable to users. Technologies are frequently anthropomorphized (Darling, 2012); that is, human characteristics are attributed to them, including the ability to make judgments, act, and assume responsibility. Comparing social media algorithms and social companions allows us to identify similarities and differences in the extent to which technologies are portrayed as autonomous “actors,” the extent to which technologies themselves are held accountable for their “actions,” and the extent to which other actors are referred to as subjects of responsibility.

Loh’s (2017, 2019) theory of

Within this analysis, we first use a machine learning procedure to detect relevant news articles in Austrian media. Second, we examine each responsibility statement identified in the media coverage to ascertain the assigning actor (speaker), the assigned party (subject of responsibility), and the specific issues and challenges (object of responsibility) being addressed. In doing so, we aim to trace which actor groups (as assigners and as subjects of responsibility) and which objects are made visible in the media discourse on responsibility.
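The statement-level coding logic described here (speaker, subject, object) can be pictured as a simple record type, with one article contributing one or more coding units. The following Python sketch is illustrative only: the class name `ResponsibilityReference`, its field names, and the example category labels are hypothetical and are not taken from the study's codebook.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponsibilityReference:
    """One coding unit: a single responsibility statement in an article (hypothetical schema)."""
    article_id: str
    field: str    # application field, e.g. "social_media" or "social_companions"
    speaker: str  # assigning actor: who attributes responsibility
    subject: str  # assigned party: who is held responsible
    obj: str      # object of responsibility: for what (named obj to avoid shadowing `object`)


def who_holds_whom(refs):
    """Count (speaker, subject) pairs, i.e. 'who holds whom responsible'."""
    return Counter((r.speaker, r.subject) for r in refs)


# Toy data for illustration only; not the study's data.
refs = [
    ResponsibilityReference("a1", "social_media", "journalists", "platforms", "disinformation"),
    ResponsibilityReference("a1", "social_media", "journalists", "platforms", "data protection"),
    ResponsibilityReference("a2", "social_companions", "experts", "society", "social isolation"),
]

print(who_holds_whom(refs).most_common(1))  # [(('journalists', 'platforms'), 2)]
```

Keeping each statement as its own record, rather than one record per article, is what allows a single article to yield several responsibility references.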

Problematizations: tonality, risks, and objects of responsibility

Questions of responsibility arise in relation to technologies, concerning the causes of problems and the development of solutions. The way social media algorithms and social companions are portrayed and problematized in the media is relevant in this context. The tone of reporting, whether positive and optimistic or negative and critical, can shape perceptions of these technologies. Furthermore, the coverage of problems and risks, and the identification of challenges and responsibilities are crucial factors. The state of research on these questions can be summarized as follows.

Research examines

We therefore ask:

And we propose the hypothesis:

Closely linked to the tone of reporting on technologies is the coverage of automation

The discussion of risks is connected to the question of

In the fields of social media and social companions, there are many objects for which questions of responsibility arise. On the one hand, these objects relate to

Empirical analyses of the coverage of responsibility in technology reporting are rare. Brantner and Saurwein (2021) analyzed the extent of responsibility references in Austrian reporting on automation and showed that 13.7% of all articles about automation also address responsibility issues. However, their study does not specify which particular problems and challenges of automation are most frequently presented as objects of responsibility.

We therefore ask and propose:

Attribution of responsibility

Speakers

The portrayal of responsibility objects leads to the question of which actors address this responsibility in the media and to which actors it is assigned, that is, who appears as the responsibility subject. Content analyses therefore record media attention to both the actors who appear as speakers in the media and the actors who are the subject of reporting. Analyses of actors generally operate under the assumption that increased

The

Some analyses of media coverage of robotics, AI, and algorithms examine the visibility of actors (Brennen et al., 2018; Fischer and Puschmann, 2021; Sun et al., 2020). Sun et al. (2020) show that US coverage of AI is dominated by business actors, especially the “tech giants” (Google-Alphabet, Meta, etc.), while comparatively little attention is paid to scientific actors, political actors, and voices from civil society. Brennen et al. (2018) also observe a strong industry influence on the media discourse on AI in the United Kingdom, evidenced by the dominance of industry issues and industry-related sources: nearly 60% of all articles relate to industry products, initiatives, or announcements, whereas civil society and advocacy groups are mentioned least often. Fischer and Puschmann (2021: 22) likewise observe a lack of actor diversity in German media coverage of AI and algorithms, which is dominated by economic/industry actors, followed by scientists, while political and civil society voices are hardly heard. A slightly different constellation of speakers was found in an analysis of the responsibility discourse on automation in Austria (Brantner and Saurwein, 2021). Economic/industry actors (32%) and scientists (31%) are on an equal footing, and journalism contributes one-fifth (21%) of all responsibility attributions, while other groups are less represented. Although civil society groups rarely appear as spokespersons (9%), they still have more of a voice than political actors (5%), while people in their roles as users and citizens hardly ever get the opportunity to express their views (1%). Overall, the state of research shows that economic actors dominate the general coverage of automation topics, while scientific actors receive similar attention when responsibility claims are covered. How these patterns differ across specific application areas has not yet been explored. In this article, we therefore investigate:

Subjects of responsibility

Central actors in responsibility constellations are those persons or organizations who are responsible and who are referred to as

Subjects of responsibility are individual or collective actors that can be held accountable for their actions through means such as sanctions or requests for justification of their actions (Loh, 2019; Loh and Loh, 2017). In the context of automation, the actors involved range from manufacturers, developers, and designers who create technologies, to companies and private individuals who use them, to industry, political, and administrative stakeholders (Saurwein, 2019).

As automation advances, machines increasingly take over activities, which requires reconsidering questions of responsibility. This creates tensions between the performance of morally relevant activities by increasingly autonomous robots, the ideal of assigning responsibilities to particular subjects as fully as possible, and the challenge of avoiding large-scale responsibility gaps. In addition to individual and collective actors, responsibility is sometimes also assigned to technologies. In the scholarly discourse on Internet platforms, for example, the focus is on the influence of algorithms on decision-making processes and on online risks. In this context, research often points out that in sociotechnical constellations risks result from the interaction of technical and social factors (Saurwein and Spencer-Smith, 2021: 228) and that there are human subjects of responsibility behind algorithmic decisions. At the same time, developments in AI and machine learning lead to considerations of the active role of algorithmic systems and the possibility that technologies themselves have agency (Amoore, 2020: 12; Burr et al., 2018; Diakopoulos, 2015). However, little attention has been paid to the question of whether social media algorithms, even if they act autonomously to a certain extent, can serve as subjects of responsibility at all.

In the academic discussion of social companions, the social aspects of human–robot relationships play a central role. With regard to subjects of responsibility, the different views on this issue can be roughly grouped into two perspectives. The first emphasizes the responsibility of humans, partly combined with an explicit and necessary demarcation from machines (Sparrow and Sparrow, 2006). This view strengthens the position of humans as responsible subjects for ethical, social, economic, and legal risks in human–machine interaction. In the second perspective, scholars argue that increasing social interaction with robots also raises questions about the moral and legal status of the machines. Studies on the human–machine relationship conclude that humans do humanize robots by transferring anthropomorphic ideas to them (Darling, 2012). Considerations of the autonomy, freedom, and agency of robots lead to questions about their possible morality and ability to assume responsibility (Floridi and Sanders, 2004; Gunkel, 2018; Wallach and Allen, 2009). Here, for instance, concepts of shared or extended responsibility are discussed (Hanson, 2009; Simmler, 2019), which encompass the responsibilities of both the machines and the humans involved. Concerning media coverage, the question arises as to whom responsibility is assigned in media discourse and whether the controversial perspectives on the “responsibility of technologies” are also taken up in the media discussion on social media algorithms and social robotics.

In media studies, the attribution of responsibility (Iyengar, 1996) has been studied for socially controversial topics such as European politics (Gerhards et al., 2007) and climate change (Post et al., 2019). However, research on the attribution of responsibility in the news media for rapidly evolving technologies such as automation, robotics, AI, and algorithms remains scarce. One exception is a study by Suárez-Gonzalo et al. (2019), which examined the media coverage of Microsoft’s chatbot Tay. The bot generated controversy in 2016 due to its racist and anti-Semitic statements on Twitter. The researchers found that three-quarters of the articles attempted to identify the culprits for the bot’s inappropriate behavior. Twitter users were blamed in 40% of the cases, whereas Microsoft was held responsible less frequently (17%); 14% pointed to flaws in the machine learning code, and 18% interpreted the malfunction as a result of interactions between humans and the software. The chatbot itself was predominantly portrayed as a victim. Suárez-Gonzalo et al. (2019) concluded that the media discourse reinforced the idea that the failures primarily originated from the behavior of Twitter users, neglecting to address the varying responsibilities of designers, users, and platform operators. Another analysis (Brantner and Saurwein, 2021) examined responsibility attribution in the media coverage of automation in Austria. The findings revealed that industry stakeholders accounted for the largest portion of responsibility subjects (45%), followed by politics (23%) and society (15%). Science and education actors are assigned responsibility as often as users (7% each). Whether the patterns of responsibility attribution in the general automation discourse resemble or differ from those concerning social media algorithms and social companions remains to be examined. Based on these observations and the preceding discussion, we pose the following questions and hypotheses:

Methods

The analysis is based on a final sample of 327 articles (193 about social media algorithms and 134 about social companions) published between 2000 and 2018. The data source for the media content analysis is the Austrian Media Corpus (AMC), in which print sources account for 80.8% of the corpus. It comprises articles from the main Austrian news media since 1991, including national and regional daily newspapers, periodicals, and transcripts of selected news broadcasts. The AMC was gradually expanded in the 1990s through the inclusion of additional media. For 2018, the most recent year studied, 361 million articles are included.

Step 1: coverage of automation

In the first step, we defined keywords that describe the thematization of automation and relevant connected technologies. We used the German expressions for “automation*,” “algorithm*,” “robot*,” and “artificial* intelligence” as lemma search terms to create a thematic sub-corpus within the AMC (AMC-S) that includes media reports on automation from 1990 to 2018. The AMC-S sub-corpus contains a total of 45,034 articles and serves as the data basis for further examination.
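The keyword step can be approximated as a wildcard match over lemmatized article text. This is a minimal Python sketch, assuming each article is available as a list of lemmas; the German patterns below are hypothetical stand-ins, since the section gives only the English glosses of the search terms, and the AMC's own query interface is not reproduced here.

```python
from fnmatch import fnmatchcase

# Hypothetical German lemma patterns standing in for the study's search terms.
# Each pattern is a tuple of wildcard tokens that must match consecutive lemmas.
PATTERNS = [
    ("automati*",),                 # e.g. Automatisierung, Automation
    ("algorithm*",),                # e.g. Algorithmus
    ("roboter*",),                  # e.g. Roboter, Roboterarm
    ("künstlich*", "intelligenz"),  # künstliche Intelligenz (two-lemma phrase)
]


def matches(lemmas, pattern):
    """True if the wildcard pattern matches any run of consecutive lemmas (case-insensitive)."""
    lowered = [lemma.lower() for lemma in lemmas]
    n = len(pattern)
    for i in range(len(lowered) - n + 1):
        if all(fnmatchcase(tok, pat) for tok, pat in zip(lowered[i:i + n], pattern)):
            return True
    return False


def build_subcorpus(articles):
    """Keep the IDs of articles whose lemmas match at least one search pattern."""
    return [
        art_id
        for art_id, lemmas in articles.items()
        if any(matches(lemmas, p) for p in PATTERNS)
    ]


# Toy lemmatized articles (invented for illustration)
example_articles = {
    "a1": ["der", "Roboter", "helfen", "in", "der", "Pflege"],
    "a2": ["künstlich", "Intelligenz", "verändern", "der", "Arbeit"],
    "a3": ["der", "Wetter", "sein", "gut"],
}
print(build_subcorpus(example_articles))  # ['a1', 'a2']
```

Matching on lemmas rather than surface forms means inflected variants (e.g., plural or case endings) are captured by a single pattern.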

We then developed a coding scheme for quantitative content analysis (Neuendorf, 2017)

Step 2: in-depth analysis of the responsibility discourse

The data basis for the content analysis of the responsibility discourse is formed by those news articles in the sub-corpus (AMC-S) that deal with the two selected topics: social media algorithms and social companions. For article selection, we developed a

First, we identified those news items in the AMC-S sub-corpus that deal with social media algorithms or social companions. For this purpose, we used articles that, according to the content analysis in step 1, addressed the two topics (

Creation of a sub-corpus from AMC-S based on the extracted keywords;

Training of a text classifier using the spaCy ML library (the 1,176 articles from the manual content analysis were used as the training set);

Application of the model to the AMC-S sub-corpus;

Subsequent manual evaluation (correction or confirmation) by a human coder;

Iterative improvement of the model by repetition of evaluation-training cycles.
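The steps above form a human-in-the-loop training cycle. The study used spaCy; to keep the example self-contained, this sketch swaps in a small multinomial naive Bayes classifier written from scratch, and the `human_label` callback is a hypothetical stand-in for the manual coder. The batch size, number of rounds, and all function names are assumptions for illustration.

```python
import math
from collections import Counter


def train_nb(docs, labels):
    """Fit a multinomial naive Bayes model on tokenized docs with 0/1 relevance labels."""
    vocab = {w for d in docs for w in d}
    priors, word_counts, totals = {}, {}, {}
    for c in sorted(set(labels)):
        class_docs = [d for d, y in zip(docs, labels) if y == c]
        priors[c] = math.log(len(class_docs) / len(docs))
        word_counts[c] = Counter(w for d in class_docs for w in d)
        totals[c] = sum(word_counts[c].values())
    return vocab, priors, word_counts, totals


def predict(model, doc):
    """Return the label with the highest log-posterior, using add-one (Laplace) smoothing."""
    vocab, priors, word_counts, totals = model
    v = len(vocab)

    def log_posterior(c):
        return priors[c] + sum(
            math.log((word_counts[c][w] + 1) / (totals[c] + v)) for w in doc if w in vocab
        )

    return max(priors, key=log_posterior)


def iterative_training(docs, labels, pool, human_label, rounds=3, batch=2):
    """Repeat: train, apply to the unlabeled pool, have a human check a batch, retrain."""
    docs, labels, pool = list(docs), list(labels), list(pool)
    for _ in range(rounds):
        if not pool:
            break
        model = train_nb(docs, labels)
        # Articles the model flags as relevant go to the human coder first;
        # if none are flagged, a batch is checked anyway to catch false negatives.
        flagged = [d for d in pool if predict(model, d) == 1][:batch] or pool[:batch]
        for d in flagged:
            docs.append(d)
            labels.append(human_label(d))  # human coder confirms or corrects the label
            pool.remove(d)
    return train_nb(docs, labels)


# Toy example with hypothetical tokens and a rule-based stand-in for the human coder
seed_docs = [["algorithmus", "facebook", "verantwortung"], ["roboter", "pflege", "einsamkeit"],
             ["wetter", "regen"], ["fussball", "tor"]]
seed_labels = [1, 1, 0, 0]
unlabeled = [["algorithmus", "twitter", "verantwortung"], ["roboter", "begleiter"], ["regen", "sturm"]]
model = iterative_training(seed_docs, seed_labels, unlabeled, lambda d: 0 if "regen" in d else 1)
print(predict(model, ["algorithmus", "verantwortung"]))  # 1
```

Each round enlarges the training set with human-verified labels, which is what lets the classifier's quality improve from one evaluation-training cycle to the next.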

In addition to adapting the data set, we tested various combinations of technical parameters in the machine learning process. Through these interlinked processes, the quality of the text classifier was continuously improved until it reached a sufficient F-score (> 50%).
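The stopping criterion can be made concrete. The text does not state which F-score variant was used; the sketch below assumes the common F1 score for the positive ("relevant") class, computed from human-checked labels and classifier predictions, and the toy labels are invented.

```python
def f_score(y_true, y_pred, beta=1.0):
    """F-beta score for the positive class (label 1); beta=1 gives the usual F1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


THRESHOLD = 0.5  # stopping criterion stated in the text: F-score > 50%

# Toy human-checked labels vs. classifier predictions (illustrative only)
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
score = f_score(y_true, y_pred)
print(round(score, 3), score > THRESHOLD)  # 0.75 True
```

F1 balances precision (how many selected articles are truly relevant) against recall (how many relevant articles are found), which suits a selection task where both false positives and misses are costly.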

For the selection of the articles for the in-depth analysis, the classifiers were trained with regard to the following requirements and inclusion criteria:

The articles (1) should deal with social media algorithms or social companions as the main topic, and (2) should contain “responsibility references,” because the in-depth analysis centers on the media’s attribution of responsibility.

The text classifier generated and optimized in this way was then applied to AMC-S to create a sample for further content analysis of the responsibility references. For each application area, the classifier selected the 270 articles published between 2000 and 2018 that had the highest predicted probability of meeting the inclusion criteria. The manual content analysis was carried out by two human coders.
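The selection rule amounts to a top-k cut on predicted probabilities within the study period, applied separately to each application area. In this minimal Python sketch, the function name and the `(article_id, year, probability)` tuple layout are hypothetical; only k = 270 and the 2000 to 2018 window come from the text, and the scores are invented.

```python
import heapq


def select_top_k(scored_articles, k=270, start_year=2000, end_year=2018):
    """Return the k articles with the highest predicted probability inside the year window.

    scored_articles: iterable of (article_id, year, probability) tuples for one application field.
    """
    in_window = [a for a in scored_articles if start_year <= a[1] <= end_year]
    return heapq.nlargest(k, in_window, key=lambda a: a[2])


# Toy scores; in the study, k = 270 per field gave 2 x 270 = 540 candidate articles.
toy_scores = [("a1", 1999, 0.99), ("a2", 2005, 0.80), ("a3", 2010, 0.95), ("a4", 2018, 0.40)]
print([a[0] for a in select_top_k(toy_scores, k=2)])  # ['a3', 'a2']
```

Because the cut is by rank rather than by a fixed probability threshold, some selected articles can still be false positives, which is why the manual coding step screens them again.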

The

Responsibility was coded at the statement level; that is, every responsibility reference constituted a coding unit. For this purpose, the categories for different responsibility relata


Out of the 540 articles identified by the classifier, 213 were excluded as false positives because they did not discuss social media algorithms or social companions as a main topic or did not contain responsibility references.

The sample consists of 327 fully coded articles, 193 focusing on social media algorithms and 134 on social companions. In total, the articles contain 932 responsibility references: 575 in articles about social media algorithms and 357 in articles about social companions. To establish intercoder reliability, two coders (third author, graduate assistant) double-coded 60 articles (
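The reliability coefficient itself is cut off in this version of the text. As an illustration only, the sketch below computes Cohen's kappa for two coders on one nominal category; the study may well have used a different coefficient (for example, Krippendorff's alpha), and the example codes are invented.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders assigning nominal codes to the same units."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same, non-empty set of units")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of both coders' marginal proportions, summed over categories
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used one identical category throughout
    return (observed - expected) / (1 - expected)


a = ["platform", "platform", "politics", "users", "platform", "politics"]
b = ["platform", "politics", "politics", "users", "platform", "politics"]
print(round(cohens_kappa(a, b), 3))  # 0.739
```

Unlike raw percent agreement (here 5 of 6 units), kappa discounts the agreement two coders would reach by chance given how often each uses each category.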

Results

This section presents findings on the

Tonality (RQ1)

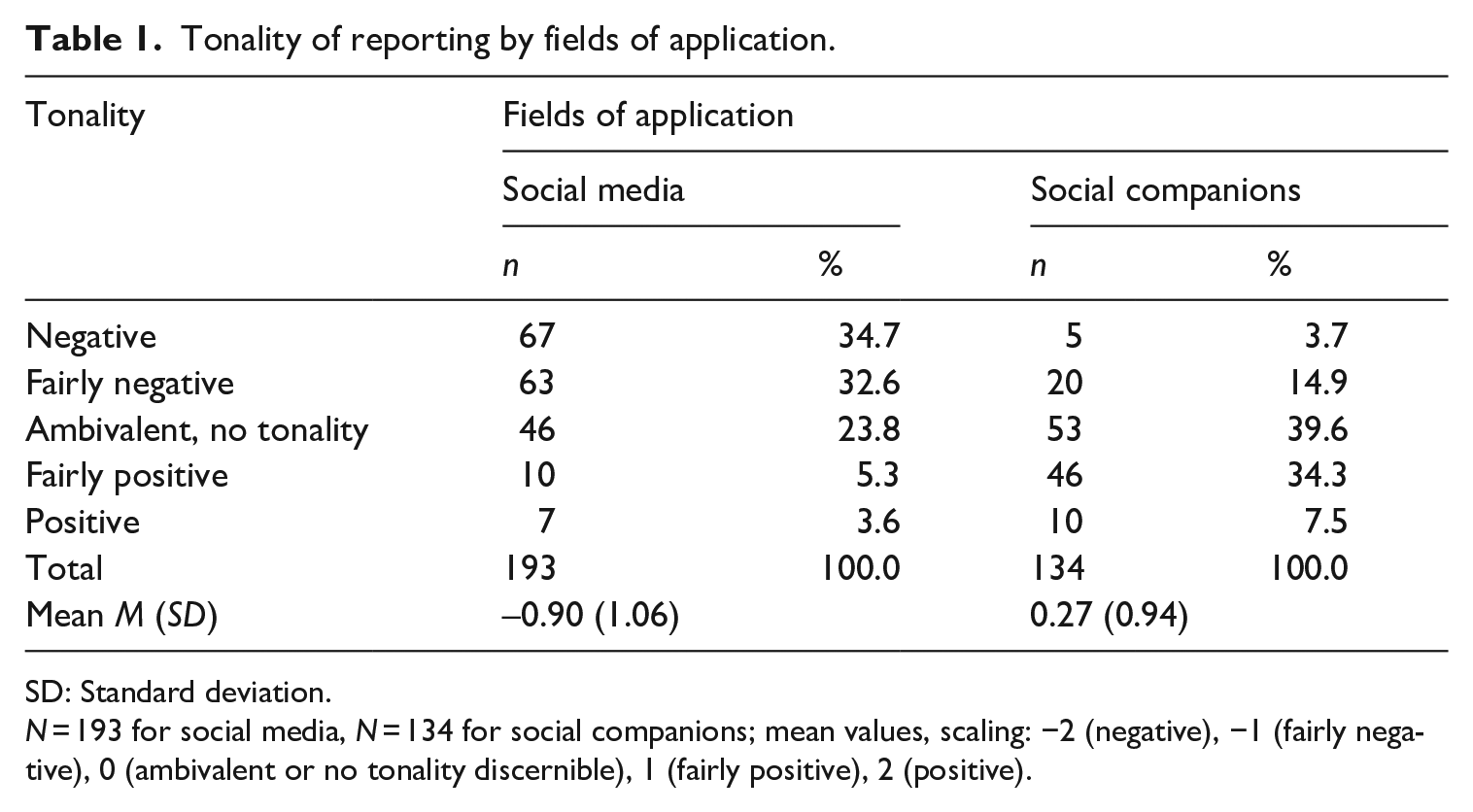

Previous research indicates that coverage of automation is predominantly characterized by a positive tone, with variations observed among different automation technologies, such as AI, robotics, and algorithms. These variations are also apparent when comparing the coverage of social media algorithms to that of social companions (see Table 1).

Tonality of reporting by fields of application.

SD: Standard deviation.
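Table 1 summarizes tonality by its mean and standard deviation per field. The coding scale is not recoverable from this version of the text, so the sketch below assumes a hypothetical five-point scale from −2 (very negative) to +2 (very positive) and uses invented scores; it only illustrates how such a summary is computed.

```python
from statistics import mean, stdev


def tonality_summary(scores_by_field):
    """Mean and sample standard deviation of article tonality per application field."""
    return {
        field: (round(float(mean(scores)), 2), round(stdev(scores), 2))
        for field, scores in scores_by_field.items()
    }


# Hypothetical tonality codes on an assumed -2 (very negative) to +2 (very positive) scale
toy = {
    "social_media_algorithms": [-2, -1, -1, 0, 1],
    "social_companions": [0, 1, 1, 2, 1],
}
print(tonality_summary(toy))
```

`statistics.stdev` uses the sample (n − 1) formula; whether Table 1 reports sample or population SD is not stated here.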

While the tonality of articles about social media algorithms is, on average, fairly negative (

Responsibility objects (RQ2)

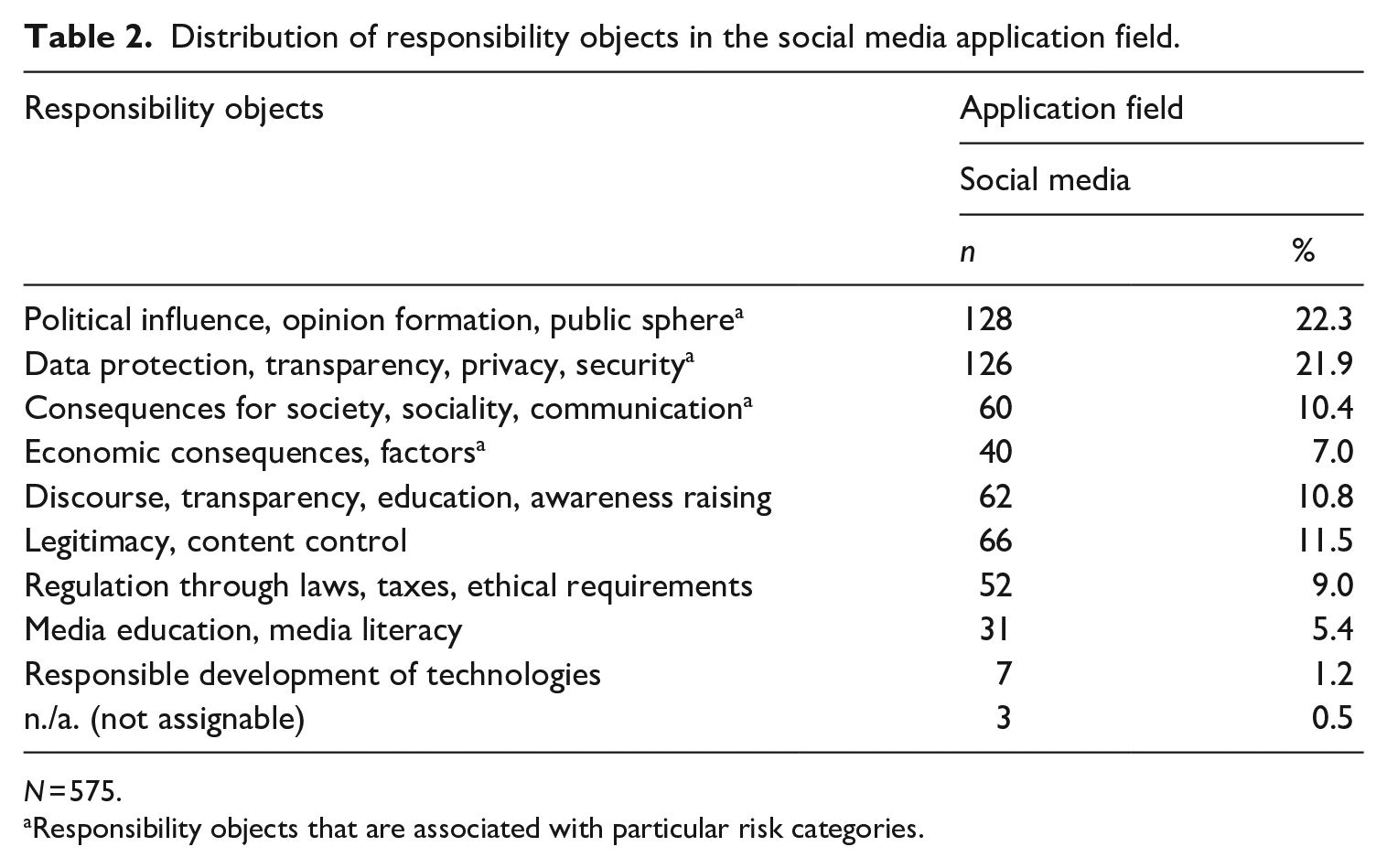

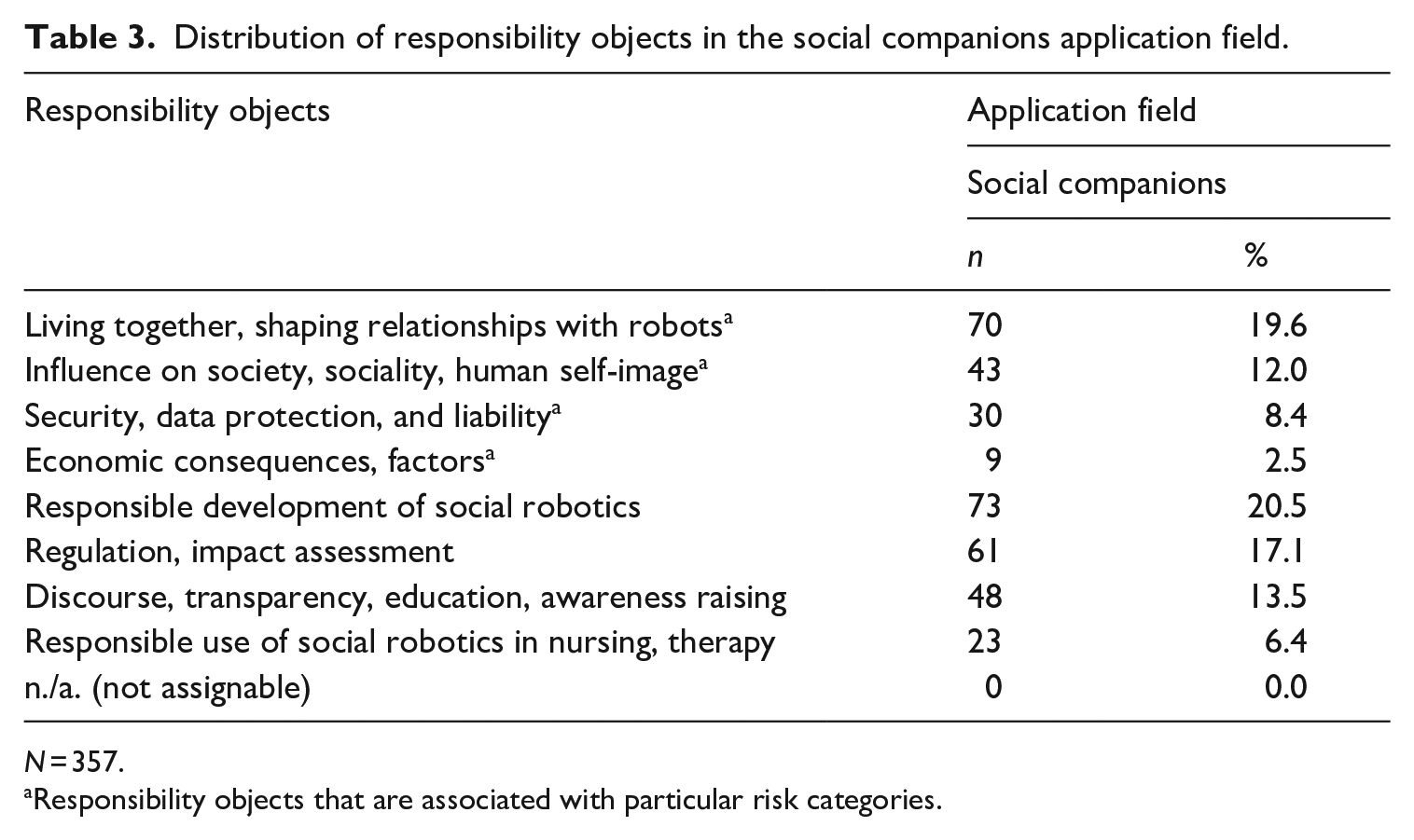

What challenges and opportunities for action are represented in the reporting on social media algorithms and social companions as objects of responsibility? To what extent are these responsibility objects associated with risk categories, and do differences exist between the fields of application? Tables 2 and 3 provide an overview of the coverage of various responsibility objects within the media discourse on social media algorithms and social companions.

Distribution of responsibility objects in the social media application field.

Responsibility objects that are associated with particular risk categories.

Distribution of responsibility objects in the social companions application field.

Responsibility objects that are associated with particular risk categories.

The results of the content analysis show that in both areas the thematization of responsibility objects is strongly focused on

Reporting also encompasses references to

Finally, there are remarkable differences in the demand for

Assignment of responsibility by speakers (RQ3)

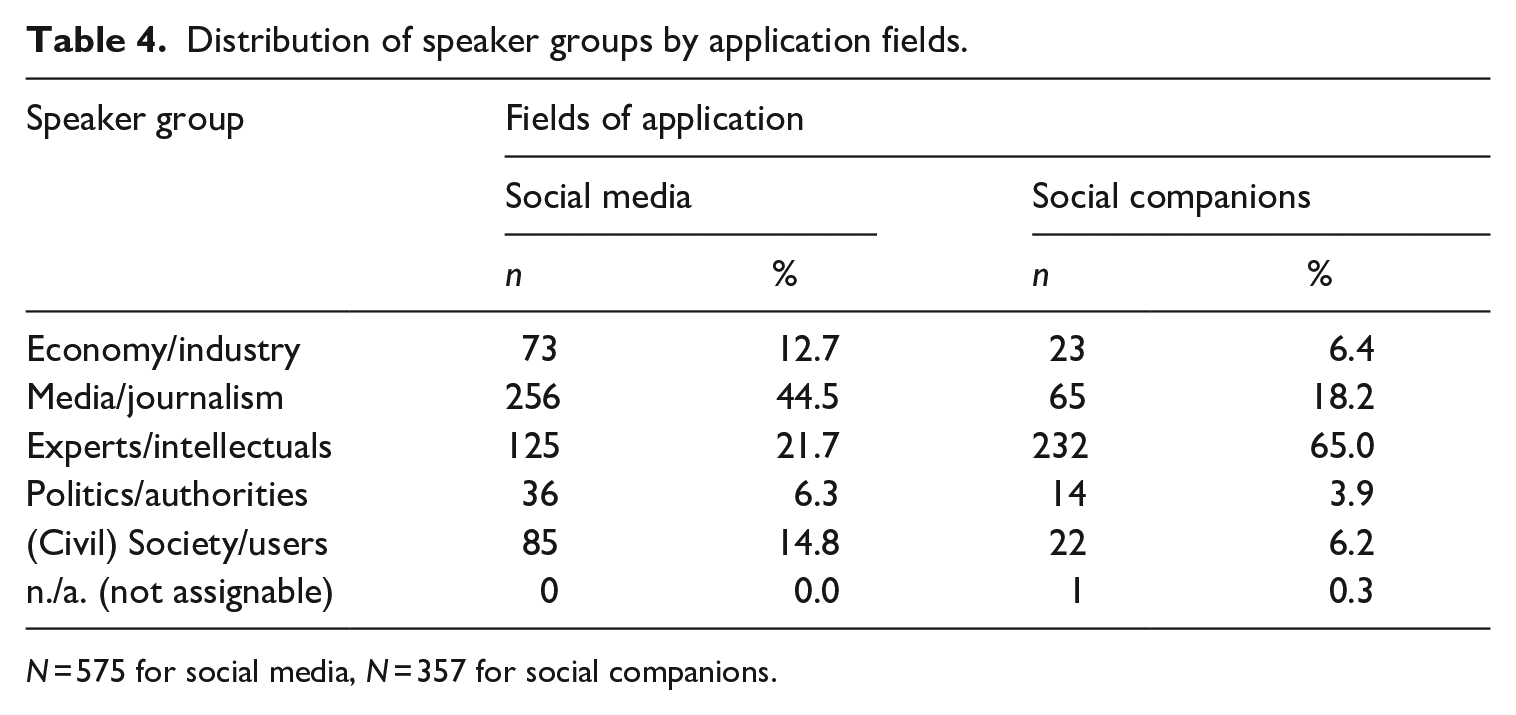

In media contexts, the question arises as to which actors gain visibility for their demands and which actors are assigned responsibility (Who holds whom responsible for what?). Current research indicates that in reporting on automation, artificial intelligence, and robotics, it is primarily economic/industry actors who have a voice and shape the public discourse. In addition, science and experts gain attention in responsibility discourses, while critical civil society interest groups receive little attention. The analysis of the constellations of speakers in the responsibility discourses on social media and social companions only partly confirms these observations and paints a more nuanced picture (Table 4).

Distribution of speaker groups by application fields.

Contrary to our expectations (H3), economy/industry actors play only a minor role as speakers in the responsibility discourses on social media algorithms and social companions. They account for only 6.4% (social companions) and 12.7% (social media) of the speakers. Hence, the responsibility discourses on social media and social companions differ markedly from the general discourse on automation, in which 32% of all responsibility assignments are made by economy/industry actors (Brantner and Saurwein, 2021).

In line with the hypothesis (H3), however, experts and intellectuals have a say in both discourses, albeit to different extents. With 21.7%, they rank second but do not particularly stand out in the coverage of social media algorithms. When it comes to social companions, the responsibility discourse is strongly dominated by experts (65%). Hence, in the responsibility discourse on social companions, the media primarily offer experts and intellectuals a forum for statements, criticism, and the assignment of responsibility.

When it comes to social media algorithms, news media and journalists (44.5%) predominantly act as speakers themselves, asking questions, voicing criticism, and assigning responsibility to actors or calling them to account. In reporting on social companions, the media and journalists appear significantly less often as speakers (18.2%).

Civil society actors such as individual users and public interest groups, who often contribute a critical perspective, do not play a central role among the speakers in either field of application; however, they are more strongly represented in the discourse on social media (14.8%) than in the coverage of social companions (6.2%) or of automation in general (10.2%; see Brantner and Saurwein, 2021). Thus, the debate lacks critical voices that represent the perspectives of users and civil society. Moreover, the relatively positive tone of reporting on social companions (see Table 1) coincides with the absence of critical civil society institutions from the media discourse.

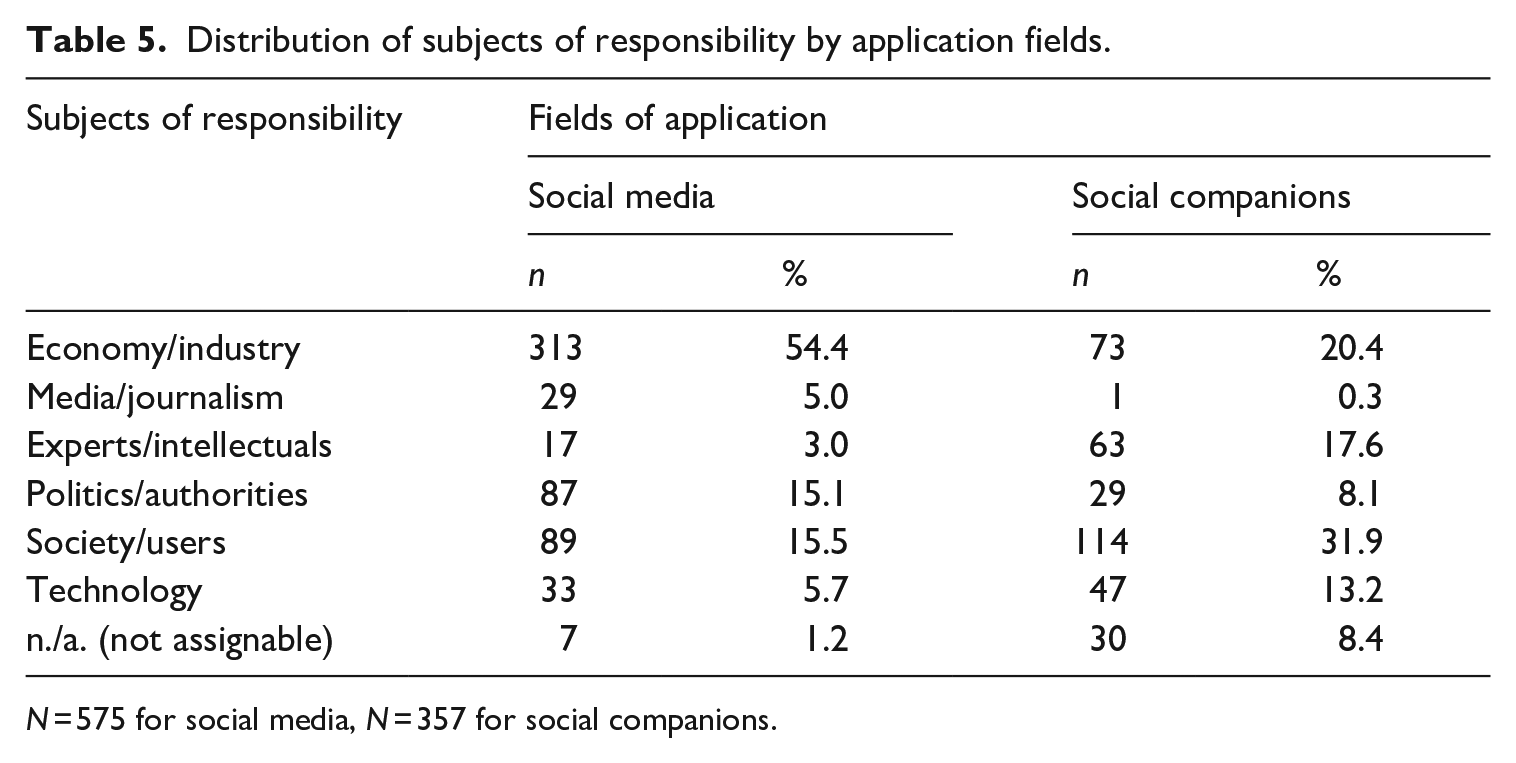

Subjects of responsibility (responsible stakeholders) (RQ4)

Central actors in responsibility constellations are those persons or organizations that bear responsibility and serve as

Distribution of subjects of responsibility by application fields.

The assumed dominance of industry actors as subjects of responsibility is confirmed for the coverage of social media algorithms: economic actors, and in particular the platform providers, are at the center of the responsibility discourse (54.4%). Responsibility is also assigned, albeit to a considerably lower extent, to politics and public authorities (15.1%) and to society at large and users (15.5%).

In the case of social companions, the attribution of responsibility is less concentrated on economic actors (20.4%) and more diverse in terms of responsibility subjects. Particularly striking in the coverage of social companions is the strong attribution of responsibility to social entities (society at large or individual users; 31.9%), which goes hand in hand with the problematization of the risks social robotics poses to sociality and interpersonal relations. A remarkably high proportion of responsibility attributions is also directed at scientific institutions, experts, and intellectuals (17.6%). This suggests that many technical, social, and ethical questions in the field of social robotics remain open.
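Tables 4 and 5 report, for each application field, the share of coded responsibility references that falls to each actor group (column percentages within a field). A minimal sketch of that computation follows; the group labels and toy codes are invented and do not reproduce the study's data.

```python
from collections import Counter, defaultdict


def column_percentages(references):
    """Share (%) of each actor group among all references within each application field.

    references: iterable of (field, actor_group) pairs, one per coded responsibility reference.
    """
    counts = defaultdict(Counter)
    for field, group in references:
        counts[field][group] += 1
    table = {}
    for field, groups in counts.items():
        total = sum(groups.values())
        table[field] = {g: round(100 * n / total, 1) for g, n in groups.most_common()}
    return table


toy_refs = [
    ("social_media", "economy/industry"), ("social_media", "economy/industry"),
    ("social_media", "politics"), ("social_media", "society/users"),
    ("social_companions", "society/users"), ("social_companions", "science/experts"),
    ("social_companions", "technology"),
]
print(column_percentages(toy_refs))
```

Percentaging within each field, rather than over all references, keeps the two fields comparable even though they contain different numbers of references.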

Technologies as responsibility subjects (RQ5)

Controversial topics in the scientific discourse on AI and robotics include the social and moral status of artificial agents and their ability to act and to bear responsibility for their actions. We therefore explore to what extent and how technologies are portrayed as responsible actors in the media (RQ5).

Table 5 shows that in the coverage of social media algorithms, technologies were held responsible in 5.7% of all responsibility references. In line with H5, technologies are more often assigned responsibility (13.2%) in the coverage of social companions.

Supplementary qualitative content analysis shed light on the contexts and forms of the thematization of responsibility for technology: Coverage of responsibility of social media algorithms is connected to reporting about the influence of algorithms on our perception of the world (e.g., filter bubbles), manipulation of users, and the influence of technology on political and social developments. In this context,

In the reporting on social companions, on the other hand, responsibility attributions are prospective and normative, stating how robots ought to be and act in the future. These references consider the responsibility of robots in private and professional human–machine relationships and the characteristics and capabilities robots should or should not possess to integrate harmoniously into relationships and society (emotionality, intelligence, rationality, moral capacity, judgment, autonomy, etc.). Furthermore, an attribution of responsibility to machines in the media often occurs through references to Asimov’s laws of robotics, which impose rules of behavior on robots to ensure safety in human–machine constellations. Finally, robot responsibility is also addressed in a legal context. This involves the legal status of machines, for example, as legal subjects with specific rights, the protection of technologies (e.g., robot protection laws), and liability issues (e.g., the electronic person).

Summary and conclusion

The economy and society are undergoing far-reaching changes that are shaped by a strong trend toward automation. In the course of automation, new, mixed sociotechnical constellations are emerging in which responsibilities have not yet been conclusively clarified. In this context of uncertainty, media reporting on robotics and artificial intelligence shapes the sociotechnical imaginaries of automation technologies in general (Jasanoff and Kim, 2015) and contributes to the social construction of risks and responsibility in particular. This study therefore explored how responsibility is discussed in the Austrian news media’s coverage of social media algorithms and social companions. Its focus addresses a gap in existing research: previous studies have primarily examined media reporting on particular automation technologies without specifically addressing responsibility issues, whereas existing analyses of responsibility attribution in media discourses have largely centered on other controversial topics (e.g., Gerhards et al., 2007; Iyengar, 1996; Post et al., 2019) rather than on automation-related matters.

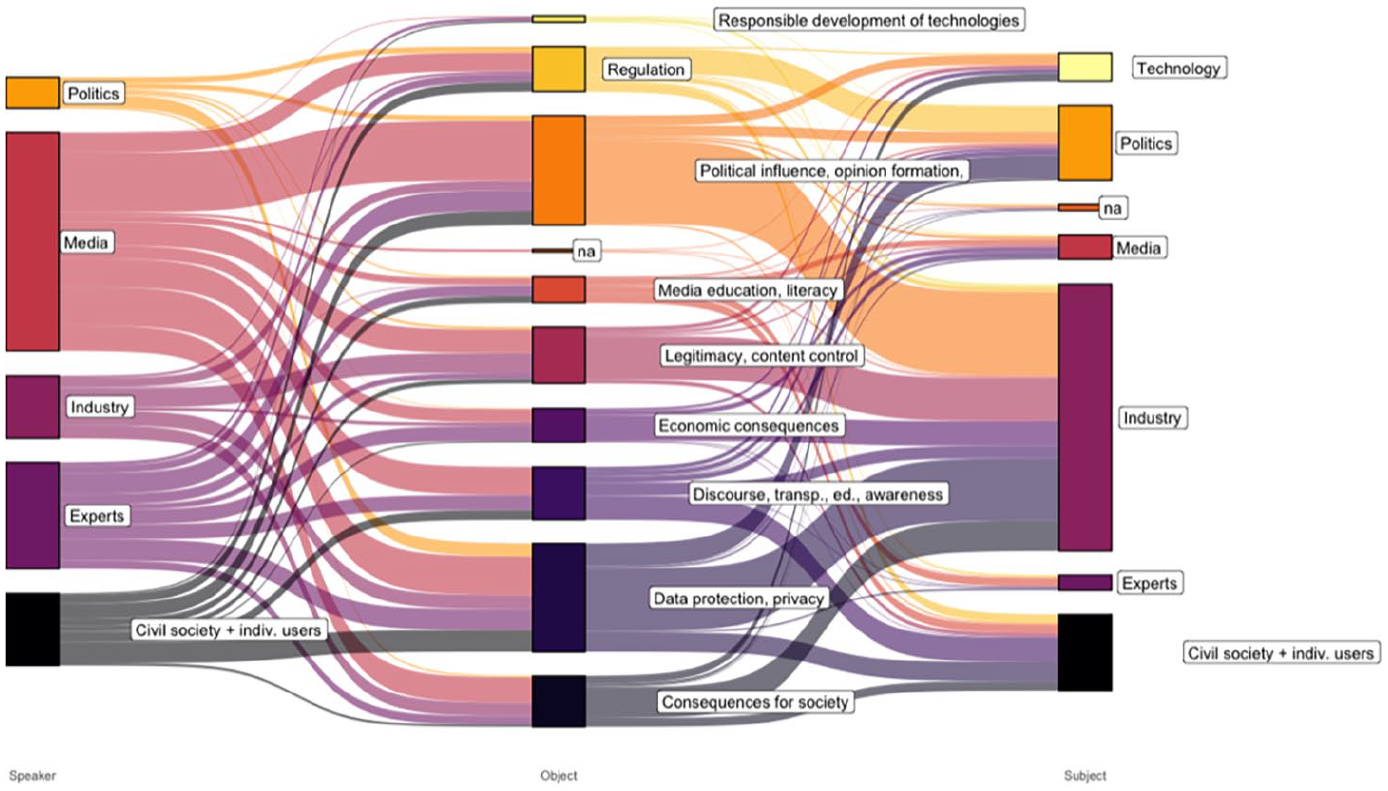

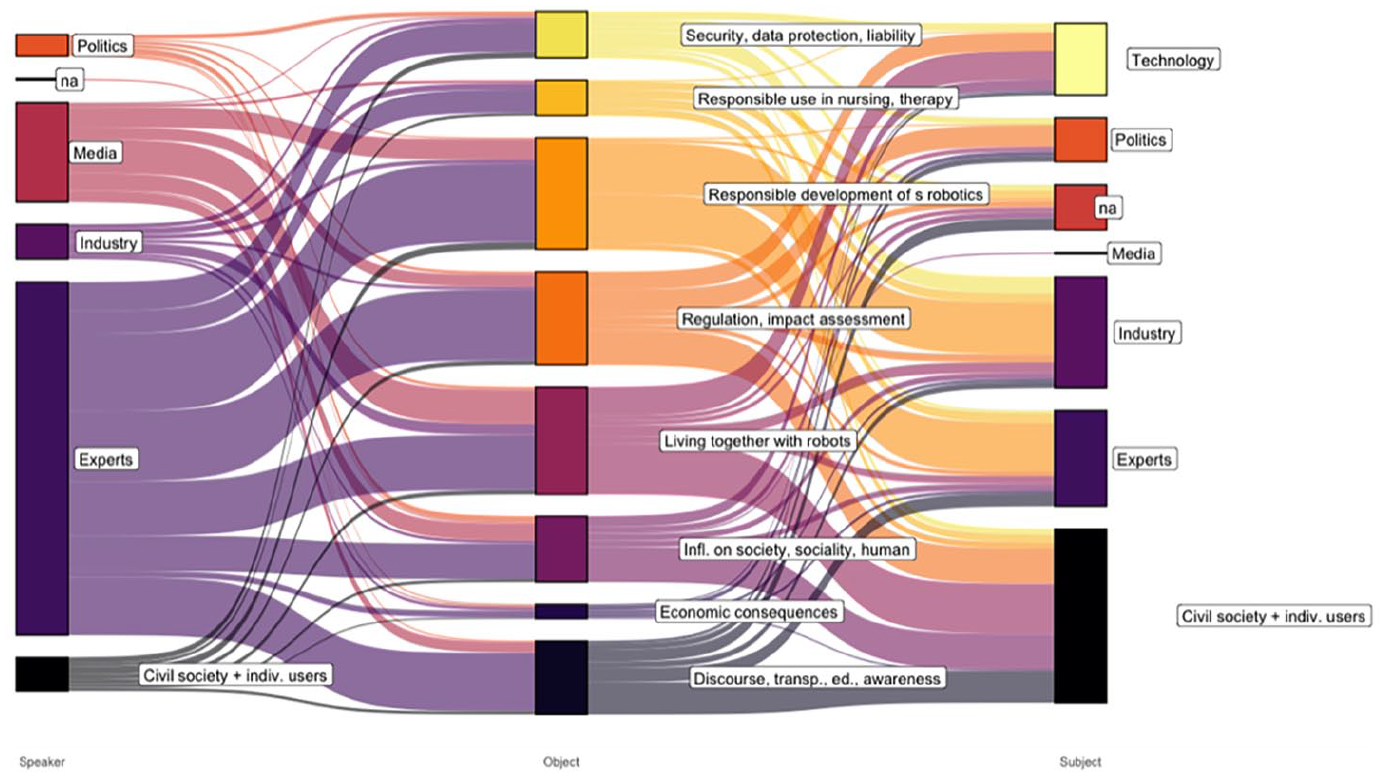

The present investigation centers on the question: “Who holds whom responsible for what?” The few available studies on speakers and responsibility subjects in the media coverage of automation indicate that actors from the economy/industry dominate the debates (Brennen et al., 2018; Fischer and Puschmann, 2021; Sun et al., 2020). The analysis of responsibility discourses on social media algorithms and social companions provides more in-depth insights into discourse patterns and shows remarkable differences (see Figures 1 and 2).

Pattern of the responsibility attributions in reporting on social media algorithms.

Pattern of the responsibility attributions in reporting on social companions.

In summary, challenges linked to social companions are mainly portrayed as social issues and challenges for technology development. They are articulated mainly by experts and presented as a responsibility for society, economy, and research alike. Conversely, regarding social media algorithms, responsibility discourse focuses on concerns regarding the public sphere, opinion formation, and data protection. Journalists play a key role in expressing these concerns, and responsibility is largely assigned to Internet platforms.

The study prompts further considerations regarding the constellation of speakers involved and the portrayal of responsible subjects, and it allows us to draw valuable conclusions for (tech) journalism and future research.

Journalists’ high share in assigning responsibility in the debate about social media algorithms testifies to their control function of holding the actors involved accountable (Sarcinelli, 2011). In contrast, when it comes to the responsibility of social companions, the media primarily provide a forum for discourse (Habermas, 1989) for scientists and experts. The findings suggest that journalists may perceive themselves as more qualified to speak on social media algorithms than on social companions. This could be because social media is more closely intertwined with the daily lives of journalists, who are themselves users of social media. Algorithms, both in social media and in journalism, have a significant impact on the media and on journalists’ working routines (Diakopoulos, 2019; Shin et al., 2022; Simon, 2022). This is due to factors such as the growing integration of algorithms into newsrooms, the role of social media as a distribution platform for traditional media, and the challenges that social media poses to traditional economic models of journalism through increased competition for advertising revenue and user attention.

The role of technologies, particularly as subjects of responsibility, calls for closer attention and comprehensive, in-depth reflection and analysis. As technology becomes increasingly autonomous, questions arise regarding its agency and capacity to bear responsibility for its operations and their consequences (Floridi and Sanders, 2004; Gunkel, 2018; Hanson, 2009; Simmler, 2019; Wallach and Allen, 2009). In the coverage of responsibility, there are both similarities and clear differences between social media and social companions. In both areas, technologies are explicitly assigned an active role (e.g., “algorithms determine our perception of the world”; “robots increase the danger of social isolation”), but this occurs more than twice as frequently for social robotics as for social media algorithms. In the case of social media, responsibility is typically assigned in the sense that technology is portrayed as the cause of problematic phenomena. However, this attribution of responsibility rarely involves conceptualizing algorithms as subjects that can be held accountable for their actions. In contrast, the media portrayal of social companions explicitly addresses the responsibility status of robots and the possibility of holding them accountable. One explanation for this difference is that robots, unlike social media algorithms, are more prone to anthropomorphization, which fosters a stronger belief in, and imagination of, their potential to exert agency and bear responsibility. In any event, the question of whether robots should be considered (at least to some extent) as subjects of responsibility, and what properties, rights, and duties should be associated with them, is now discussed not only among experts but also in the news media.

The ever-increasing autonomy of technologies has resulted in a considerable number of assertions that directly assign risks and responsibility to technologies themselves. This, in turn, carries the risk of neglecting other potential subjects of responsibility such as users, programmers, operators, regulators, and more, in discussions surrounding risks and responsibilities. Consequently, tech journalism, in order to fulfill its control function and adequately inform the public, must be mindful of the origins and causes of risks, which often arise from the interplay between various actors and technologies within sociotechnical assemblages. These encompass the design and usage of technologies, commercial interests, social practices, and the surrounding context. In-depth scientific media content analyses are, therefore, necessary to assess whether media reporting on automation effectively captures these constellations of actors and technologies, their interactions, and shared responsibility. To that end, future work would benefit from hybrid approaches combining computational and manual content analysis (Zamith and Lewis, 2015). Thorough qualitative and quantitative manual analyses are essential to delve deeper into responsibility discourses, surpassing the limitations of automated topic modeling and surface-level analysis of sentiments toward emerging technologies.

To our knowledge, this study is the first investigation into the attribution of responsibility in media reporting on selected automation technologies. However, it is confined to reporting about social media algorithms and social companions in Austrian legacy media from 2000 to 2018. Future research should broaden its scope to encompass different media types and newer developments in the responsibility discourse, particularly in reporting on (generative) AI. Furthermore, it is crucial to consider the influence of cultural and societal contexts on responsibility narratives by exploring variations in responsibility discourses across countries. In addition, the implications of responsibility attribution in media reporting for policy-making and governance of automation technologies require careful consideration.

Footnotes

Acknowledgements

This research was conducted as part of a project on “Media reporting on algorithms, robotics and artificial intelligence: Representation of risks and responsibility in the automation debate (MARA).” The project was hosted by the Institute of Comparative Media and Communication Research (CMC) of the Austrian Academy of Sciences and carried out in collaboration with the Austrian Center of Digital Humanities and Cultural Heritage of the Austrian Academy of Sciences and the Institute of Philosophy of the University of Vienna. We would like to thank our project collaborators Janina Loh, Christian Bauer, Stefan Resch, Sabine Laszakovits, and Matej Durco.

Authors’ note

All authors have agreed to the submission and the article is not currently being considered for publication by any other print or electronic journal.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Austrian Academy of Sciences’ go!digital Next Generation program for excellent research in digital humanities.