Abstract

In the early days of the COVID-19 pandemic, excitement broke out around the potential for drones to generate aerial solutions to devilish pandemic problems. But despite the hype, pandemic drones largely failed to take to the sky, and certainly not at the scale initially imagined. This article pursues the failure of the pandemic drone to materialise, showing how it nevertheless functioned as a locus of experimentation for remote logics and processes. As such, we shift focus away from what the pandemic drone is to if and where it – or its logics – can be found. To learn from the pandemic drone, we turn to three trajectories of failure: failure as experiment, failure as imaginary and failure as glitch. With particular attention to specific case studies, we show how failure enables drone logics and processes to migrate across various socio-technical forms, sites and applications of automated decision-making responses to the pandemic.

In the early days of the COVID-19 pandemic, excitement broke out around the potential for drones to generate aerial solutions to devilish pandemic problems. China used drones to deliver medical supplies, disinfect villages and even provide lighting for the rapid, around-the-clock construction of a COVID-19 hospital in Wuhan. Globally, police departments announced that drones equipped with loudspeakers would be used to monitor and enforce social distancing. Proposals for drones that used remote sensors, pose estimation and machine learning to autonomously detect fever temperatures, coughing and social distance burst across the mediascape. Despite the hype, pandemic drones largely failed to take to the sky and certainly not at the scale imagined in the early days of the pandemic.

This article pursues the failure of the pandemic drone to materialise. We argue that while largely failing to materialise as techno-solution, the pandemic drone nevertheless functioned as a locus of experimentation for remote logics and processes. As such, we shift focus away from what the pandemic drone is (Martins et al., 2021) to if and where it – or its logics – can be found. Turning to the sites of the experiment, imaginary and glitch, through which the drone and its underpinning logics are at once located, accessible and (re)produced, we demonstrate that pandemic drone ‘failure’ rendered visible a distinct politics that raises questions of the role of failure in automated solutionism more widely. While drones may be largely absent from most skies (for now), they remain highly anticipated technologies. Examining both experiments with and speculative imaginaries (Jackman, 2022a) of the pandemic drone is thus a useful and pertinent task, not least because its various failures to launch nonetheless mark departure points for dispersed drone logics and processes that are no longer aerial but nonetheless pervasive. In recognition of the multiplicity of experiments with and iterations of the pandemic drone, we also mobilise ‘glitch’ thinking as a framework through which to attend to the ‘generative’ ways in which digital systems and devices can be creatively ‘interrupted’ (Leszczynski and Elwood, 2022: 361).

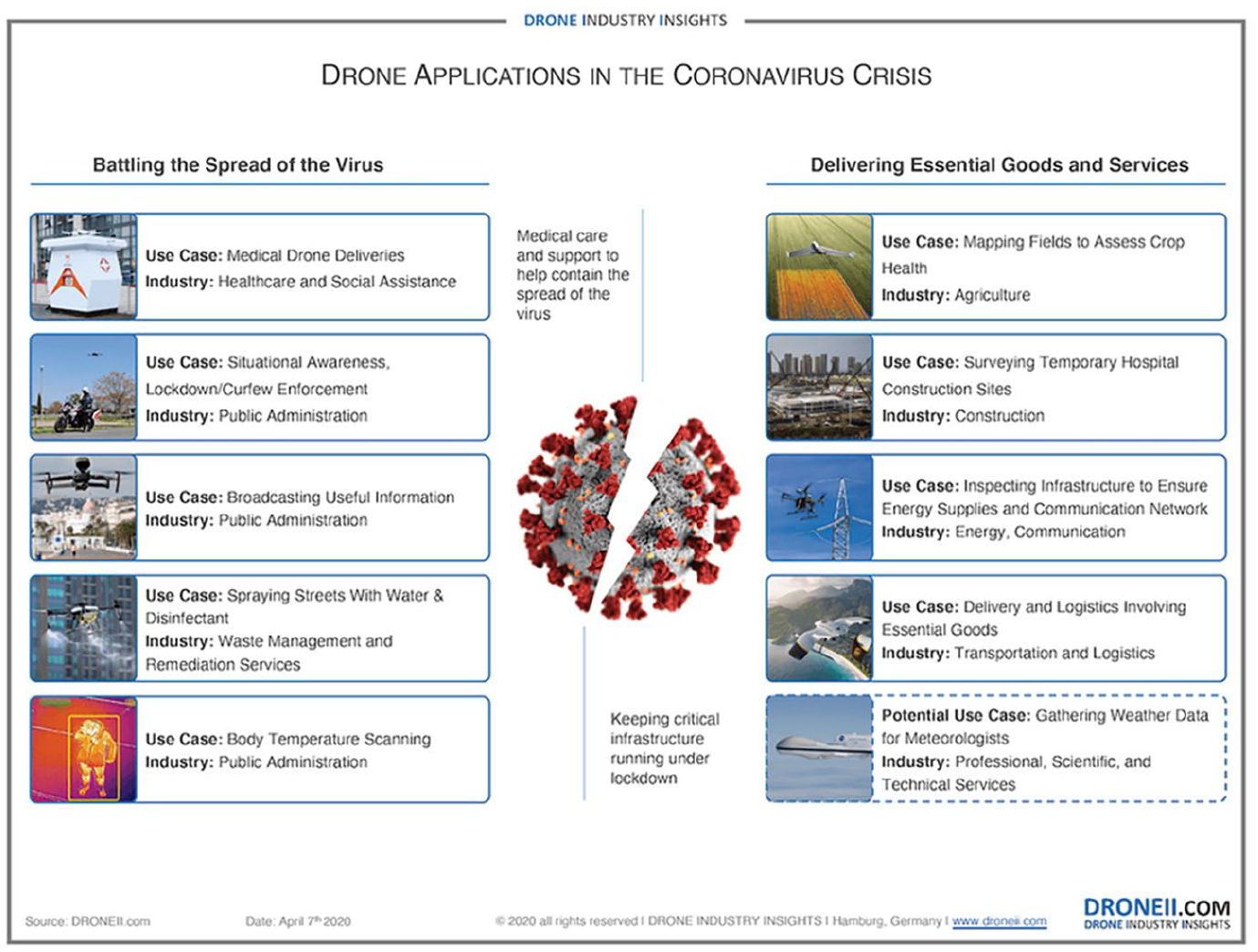

Within its tragic production of ‘new global geographies of death ranging from cellular to global scales’ (Maddrell, 2020: 107), COVID-19’s targeting of shared spaces and embodied interactions has spurred a boom in digital and robotic systems (Taylor, 2020). Even as the virus acted to ‘slow down and halt everyday mobilities’, so too was another ‘transport mode mobilised’ and accelerated: the pandemic drone (Hildebrand and Sodero, 2021: 148). As a platform premised on enabling action at a distance, drones seemed ideally suited to a context ‘marked by the persistence of social distance’ and in which proximity itself was a threat (Martins et al., 2021: 603, 605). While constituted by an increasingly diverse ecosystem of platforms, drone technology blurs ‘boundaries between military and civilian or battle-ground and home front’ (Kaplan and Miller, 2019: 419). Pandemic drones promised to rebrand ‘remoteness’ as that which is ‘economically synonymous with lifesaving’ (Taylor, 2020: n.p). As a form of ‘inorganic body’ that can come together with the ‘organism’ of the coronavirus, the pandemic drone at once appealed and responded to calls for remoteness that ‘limit contact’ between human bodies (Sumartojo and Lugli, 2021: 7, 11). As depicted in Figure 1, this has involved both the reworking of existing drone applications and the development of potentially novel approaches to tackle pandemic challenges (We Robotics, 2020).

Figure 1. Drone applications in the coronavirus crisis.

Established drone applications and imaginations have been reworked in the context of the pandemic, such as in the drone delivery of COVID-19 tests, samples and personal protective equipment in a range of locations globally (Martins et al., 2021). Concurrently, a second group of pandemic drone applications emerged, comprising novel propositions directly responding to the specific challenges of the pandemic (Figure 1). From spraying disinfectant to the monitoring and gathering of temperature data, drones were envisioned as both data capture and communication actors and agile delivery and response agents. More frequently, the pandemic saw the drone deployed in the ‘policing of the public’ (Hildebrand and Sodero, 2021: 149) via the enforcement of social distancing using drone-mounted cameras and loudspeakers. In one case, a video of a police drone hovering over and ‘scolding an elderly woman for not wearing a mask’ went viral, prompting concerns that the pandemic’s extension of the so-called ‘good drone’ was instead emerging as a ‘disaster in the making’ (Jumbert and Sandvik, 2017; Richardson, 2020). Yet despite this media presence and several pilot initiatives, pandemic drones never took flight at scales initially imagined.

Examining pandemic drone propositions, scholars have urged attention to the ‘politics of the vertical’ encompassing data collection and logics of automation of particular kinds (Hildebrand and Sodero, 2021; Kaplan, 2020; Martins et al., 2021: 605) and the ways these ‘automation possibilities’ variously ‘restructure’ and reshape everyday life (Macrorie et al., 2021: 198; Mintrom et al., 2021). This article is situated in the unruly space of ongoing implementations, actual experimentations and imagined and projected use cases. Rather than continuing to ask what the pandemic drone is, we instead consider how it has failed to materialise in the ways imagined by both critics and cheerleaders. To do this, we turn to three sites and trajectories of failure: failure as experiment, failure as imaginary and failure as glitch. Drawing inspiration from geographical thinking conceptualising ‘digitality as at once multivalent’ and comprised of both ‘material’ technologies, ‘aesthetic’ imaginaries, legitimating discourses and ‘logics through which digital systems transduce particular socio-spatial configurations’ (Leszczynski and Elwood, 2022: 363; Ash et al., 2018), we turn to the sites of experiment, imaginary and glitch as those through which the drone and its underpinning logics are at once located, accessible and (re)produced. With particular attention to the much-reported case of Draganfly’s pandemic drone, we demonstrate both how failure enables drone logics and processes to migrate across various socio-technical forms, sites and applications and that pandemic drone failure itself is multiple. We then interrogate the implications of (failed) pandemic drones in relation to the theme of this special issue on automated decision-making and the pandemic. Given the drone’s violent legacies, we argue that, even in its seeming failure, the pandemic drone’s material and speculative manifestations should promote pause.

Forms of failure: mapping pandemic drone ‘failures’

Research on the emergence of new technologies tends to focus on how successful or pervasive technologies come to be, but failure can also be instructive (Latour, 1996; Perriam, 2023; Sadowski, 2021). In the context of complex systems, accident (Perrow, 1984) and failure (Dekker, 2011) are inescapable even as efforts are made to mitigate their effects (Dörner, 1997). Failure reveals much about technological systems, whether stock markets and other digital infrastructures (Appadurai and Alexander, 2020), the functionality (Raji et al., 2022) and knowledge claims of algorithms (Amoore, 2019) or the errors and injuries produced by failed and failing biometric systems (Lisle, 2018; Magnet, 2011). Attending to failure in autonomous vehicle accidents, for example, complicates narratives of ‘automation’s efficacy’ and diversifies our ‘understandings of the sites and operation of power in automated systems’ (Bissell, 2018: 57). Simply put, failure is an endemic feature of technology (Sadowski, 2021) that can be produced and performed via digital and social media platforms (Perriam, 2023). Drones are no exception. In her genealogy of the US military drone as a project ‘built on failures’, Chandler (2020: 15) explores the multiple ways in which ‘drone networks’ both ‘come together but also fail to cohere’, unpacking the ‘politics that emerge through these relations’. Turning to failures of ‘crashing, sinking, exploding and sputtering’, Chandler (2020: 36) reminds us that moments of failure were, and remain, an important part of drone technology.

We approach the pandemic drone with a similarly generative view of failure, one that recognises the limitations of ‘success’ and ‘failure’ as categories (Latour, 1996; Lipartito, 2003) but pursues instead how failure is manifested, performed, repurposed and mobilised in efforts to apply drone ‘solutions’ to pandemic ‘problems’. Drone technologies work differently in different contexts (e.g. humanitarian or conservation applications), which in turn means that drone power is also heterogeneously dependent on specific interactions with people, environments and other sociotechnical systems (Fish and Richardson, 2022). Drone failures thus need to be understood in context and in line with the multiple ways in which power is manifested to understand the uneven role that militarism plays across pandemic drone failure. Reassessing the pandemic drone through the lens of failure reveals a range of often hidden or eschewed actions and relations. In thinking with failure, we consider the drone not solely ‘in flight’ but instead as a remote sensor, acting with autonomy – emplacing it within the wider context of drone logic (Andrejevic, 2015, 2016). In doing so, different visions, versions and (in)actions are rendered visible, highlighting the value of alternative and multiple vocabularies of automated drone failure.

Failure as experiment: translating drone technics to automated pandemic responses

In April 2020, Westport Police Department (2020), in Connecticut, USA, announced it was testing a ‘state of the art technology that may help combat the spread of Coronavirus’. In partnership with the Canadian drone company Draganfly, the ‘flatten the curve program’ would involve two drone-deploying phases, the first to ‘monitor social distancing’ and the second to ‘detect COVID-19 symptoms’ (Draganfly, 2020). Through its partnership with the University of South Australia (UniSA) and the Australian Defence Force (ADF), Draganfly proposed a technically complex system using remote biometric sensors to detect heart rate, coughing, sneezing and temperature, as well as identifying social distancing perimeters and violations. While phase 1 was initiated in Westport, the pilot project failed to make it to phase 2. As Draganfly (2020) noted in a statement, the trial ‘did not go over well with Westport residents’ who expressed concerns around privacy, prompting the Police Department to halt the trial.

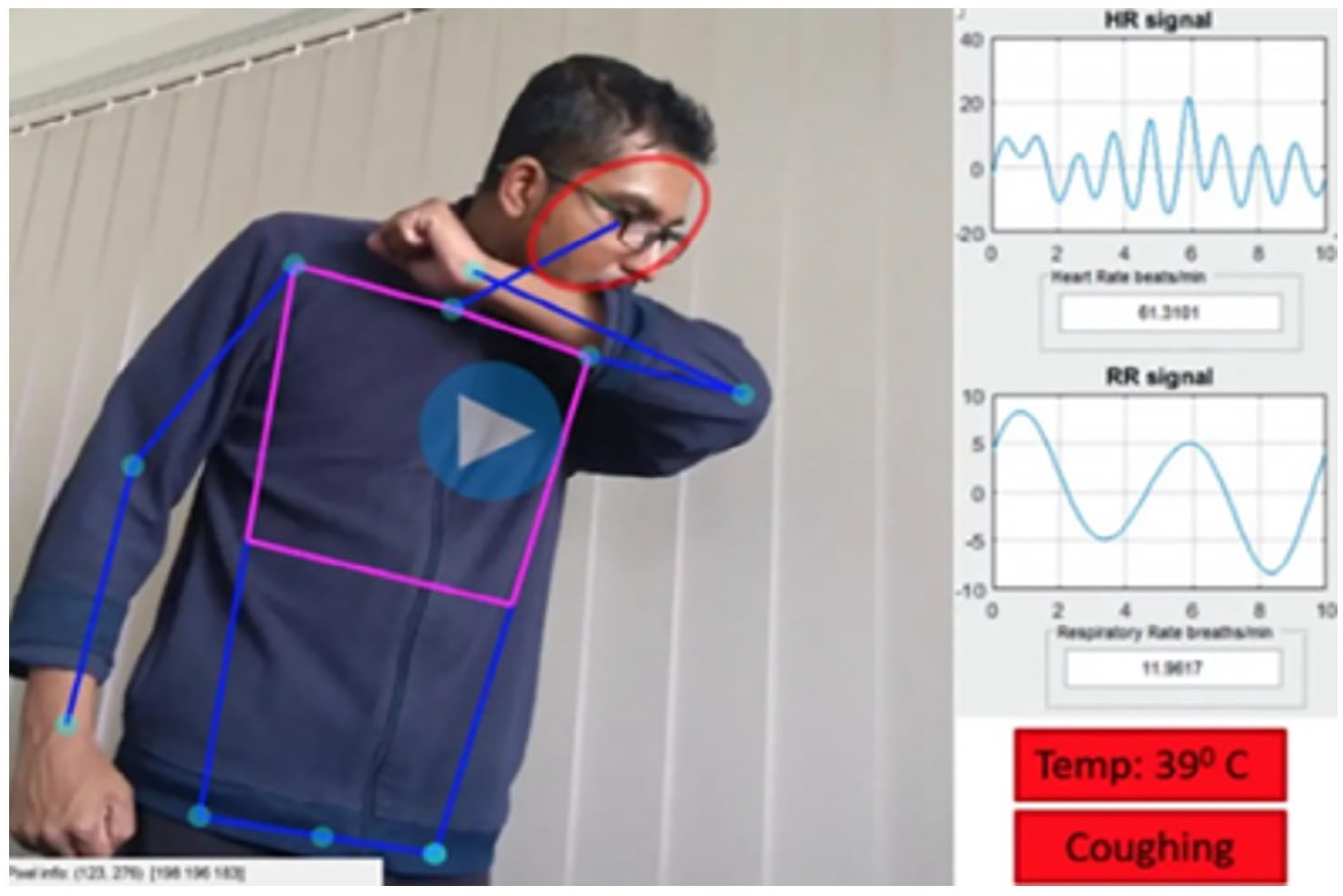

One of the oldest civic drone companies, Draganfly has been in operation since 1998 and the remote sensing technologies developed by UniSA’s Professor Javaan Chahl and team originate in prior collaborations with the Defence Science and Technology Research Group (DSTG), the innovation and industry partnership arm of the ADF. Entering the pandemic, Draganfly CEO Cameron Chell quickly dismissed both the equipping of drones with loudspeakers and the use of thermal cameras for direct reads on body temperature, stating that they ‘needed to provide more value than typical use cases’ (Protalinski, 2020). Instead, ‘Vital Intelligence’ technology developed by Chahl and DSTG worked differently, embedding machine learning analysis of visual imagery into a package that could be deployed on Draganfly drones. First conceived for use in war zones and disaster relief (Al-Naji et al., 2019), the experimental technology uses machine vision analysis of minute variations in thermal camera results to measure heart rate and, from there, internal temperature.

According to Chahl and Chell (University of South Australia, 2021), privacy concerns could be readily mitigated via encryption, non-recording of imagery and other measures. Both also claim that the system was never designed to record individualised public health data or diagnose COVID-19, but rather to provide wide area-focused public health information, such as flagging concentrations of people with elevated temperatures or high rates of coughing. Similarly, Chahl claims facial recognition had been eschewed in favour of pose estimation techniques more suited to tracking bodies in crowded places. Yet in their attempt to reckon simultaneously with people as individuals and populations, pandemic drones echoed their military counterparts, which enable ‘visibility precisely so that it can be made productive within existing regimes of power’ (Parks, 2014: 2519). Thus, pandemic drones risked exacerbating or amplifying surveillance and security measures that already disproportionately target vulnerable people (Jackman and Brickell, 2022).

Despite claims of imminent alternative pilot projects (Protalinski, 2020), we could find no record of Draganfly’s pandemic drone taking to the air in further public trials. While this may attest to reticence in the wake of Westport, the technical challenges likely played a considerable role too. Draganfly and UniSA’s video imagery always showed idealised test conditions: known bodies seen close up and in simple, uncluttered environments. Dealing with crowded spaces full of mobile bodies, such as beaches, parks, train stations and stadiums articulated as primary use cases (Protalinski, 2020), presents the kind of complex challenges that are well-known stumbling blocks in attempts to move automated decision-making ‘solutions’ from controlled settings to the messy, unpredictable wild of social life (Star, 2002). This kind of system failure – what we might understand as techno-social error – is all too familiar to contemporary cultures of technological solutionism.

But the curious thing about the pandemic drone failing to take flight is that its technics – and more importantly its logics – were readily translated to other contexts. Within a year, the ‘Vital Intelligence’ machine vision system had been decoupled from the aerial motility of the drone, amplified with breath rate and oxygen saturation detection and built into Smart Vital Kiosks for ‘point of entry screening’ at universities, hospitals, schools and industrial workplaces (Spence, 2021). First installed at Alabama State University in late 2020, the kiosks enact familiar logics: autonomous monitoring of individuals and groups for biometric indicators of COVID-19, with the aim of (re)shaping social behaviour. Here, though, the technology is decoupled from the formal institution of the police and instead introduced as a surveillant policing function within the built environment. If ‘drone logic’ is constituted by the way ‘logics of remote sensing, networking, distributed ubiquity, mobility, and automation coalesce’ (Andrejevic, 2015: 9), then it is identifiable in pandemic drones and Smart Vital Kiosks alike. Tethered to the ground, the kiosk still shapes human mobility: it targets movement through screening points, refiguring the student or worker into emergent threats to public health. Error and risk introduced by the instability and contingency of the mobile aerial platform are significantly reduced, such that the grounding of the platform becomes a selling point.

Despite the seeming disparity between static kiosks and motile aerial vehicles, the migration of Draganfly’s pandemic drone across contexts echoes what Andrejevic (2016: 26) calls the ‘dedifferentiation’ of drone technologies, in which uses blur, crossing back and forth between material forms and civilian and military applications. As Sumartojo and Lugli (2021: 13) highlight in their analysis of pandemic robotics, the ‘unfinishedness of technology offers opportunities not only for improvisation as a valuable mode of technology development and use, but also for imagining what might come next’. In other words, we can approach the drone and its underpinning logics as ‘malleable’ (Jackman, 2019), embracing sites of failure as much as function in the exploration of the drone’s elasticity as it is refashioned and reimagined across multiple contexts and applications. Thus, mapping the pandemic drone’s ‘failure’ requires us to consider not only where failure takes place and the effects of environmental context but also how the unfinished quality of certain pandemic techno-solutions allows them to migrate in search of sites of possible implementation and potential profit. Failure is neither final nor simply a stage in product development, but rather part of the experimental process of finding viable use cases and exploiting their value.

Failure to materialise: deconstructing imaginaries of the pandemic drone

While it remains important to examine the functioning drone, there is analytical value in attending to the drone as it is imagined. In recognition that our worlds are produced in part through the struggle over speculative futures, this section foregrounds (pandemic) drone imaginaries that blur categories of ‘real’ technology and the ‘world of imagination’ and ‘success’ and ‘failure’ alike (Latour, 1996: 68). In doing so, it shifts attention to the pandemic drone’s failure to materialise through the alternative lens of the pandemic drone imagination. In approaching failure otherwise, it argues that such imaginations at once actively and productively promote drone promise and hype while failing to materially realise such visions.

In robotic and techno-futures, ‘automation exists as much in the imagination as it does in practice’ (Kinsley, 2019: n.p), with imaginaries constituting an ‘important part of the assemblage of robotic technologies’ (Sumartojo et al., 2021: 99). As scholars have shown, imaginaries of technological and urban futures at once articulate promises (Richardson, 2018) and act to (re)produce relations and ‘hierarchies’ (Melhuish et al., 2016: 225). As such, it remains important to interrogate both the ‘fantasies’ underpinning the advent of the ‘good drone’ (Jumbert and Sandvik, 2017: 5) and the ‘power relationships’ represented and entangled therein (Jackman and Brickell, 2022: 165). So too have scholars demonstrated the importance of attending to moments where techno-imaginaries fail and are undone. In an exegesis of the autonomous vehicle through the lens of the accident, Bissell (2018: 57) argues that, in moments of failing to perform, ‘overlooked interruptive material agencies’ are revealed. Moments of failure can thus be understood as ‘heuristic devices’ to ‘excavate’ (Graham, 2010: 1,3) the drone’s political economies.

Furthermore, failure is multi-faceted: at once ‘thwarted, stalled’ and ‘failing to deliver on its promises’ (Temenos and Lauermann, 2020: 1110, 1113). Drones can materially fail (crashing and sputtering) but also fail to exist at all. Here, Latour’s (1996) quasi-fiction tale of ‘Aramis’, a public transportation system traced from its inception to dissolution, is instructive. Taking the ‘technological object’ of the transportation system as its ‘central character’, Latour (1996: vii, ix) offers an ‘autopsy of failure’. Through the lens of diverse human and nonhuman actants, Latour (1996: 79) highlights the agential capacities of that which fails to ‘impose themselves so as to become objects’.

Returning to Draganfly, public outrage around privacy was accompanied by the research team stating that further work was required to ‘mature’ the technology ‘into real world applications’ (Khanam et al., 2019: 1). For example, when pressed about the testing of its pandemic drone ‘with a variety of skin tones’, Draganfly’s CEO replied that this raised some ‘challenges’ (Protalinski, 2020: n.p). He continued that, while you can ‘get a very meaningful population sample’, the technology may fail to function ‘because of lighting, because [the test subject] has a hoodie on, or [a] certain type of skin tone’ (Protalinski, 2020: n.p). Like Aramis, Draganfly’s pandemic drone can thus be understood as a techno-blueprint imagination of a system that has not fully materialised, but the constitutive parts of which nonetheless remain agential. After all, given the wider context of discrimination in and through artificial intelligence (AI)-enabled technologies, it remains clear that even failed attempts at dronified airspace reveal it as a ‘contested realm’ through which power is ‘exercised in highly unequal ways’ (Klauser, 2022: 56). As Latour (1996: 68) notes, ‘definitions of reality, feasibility, efficiency, profitability’ and value differ for diverse actors and communities. As such, the ‘political implications of automation’ are both ‘complex and contingent’ (Bissell, 2018: 59). Further attention is therefore needed to the ‘assumptions, biases’ and ‘values encoded’ (Walker et al., 2021: 206) in sociotechnical imaginations that present and ‘prescribe’ potential and failed pandemic drone ‘futures’, those acting to serve and protect some and to ‘exclude’ and marginalise others (Jasanoff and Kim, 2009: 120). While imaginaries are thus associated with performative power (Bissell et al., 2020), so too is the pandemic drone’s ‘failure’ productive: it has ‘generative effects’ (Temenos and Lauermann, 2020: 1113).
In short, while the pandemic drone failed to materialise, imaginaries of its potential remained both lively and contested.

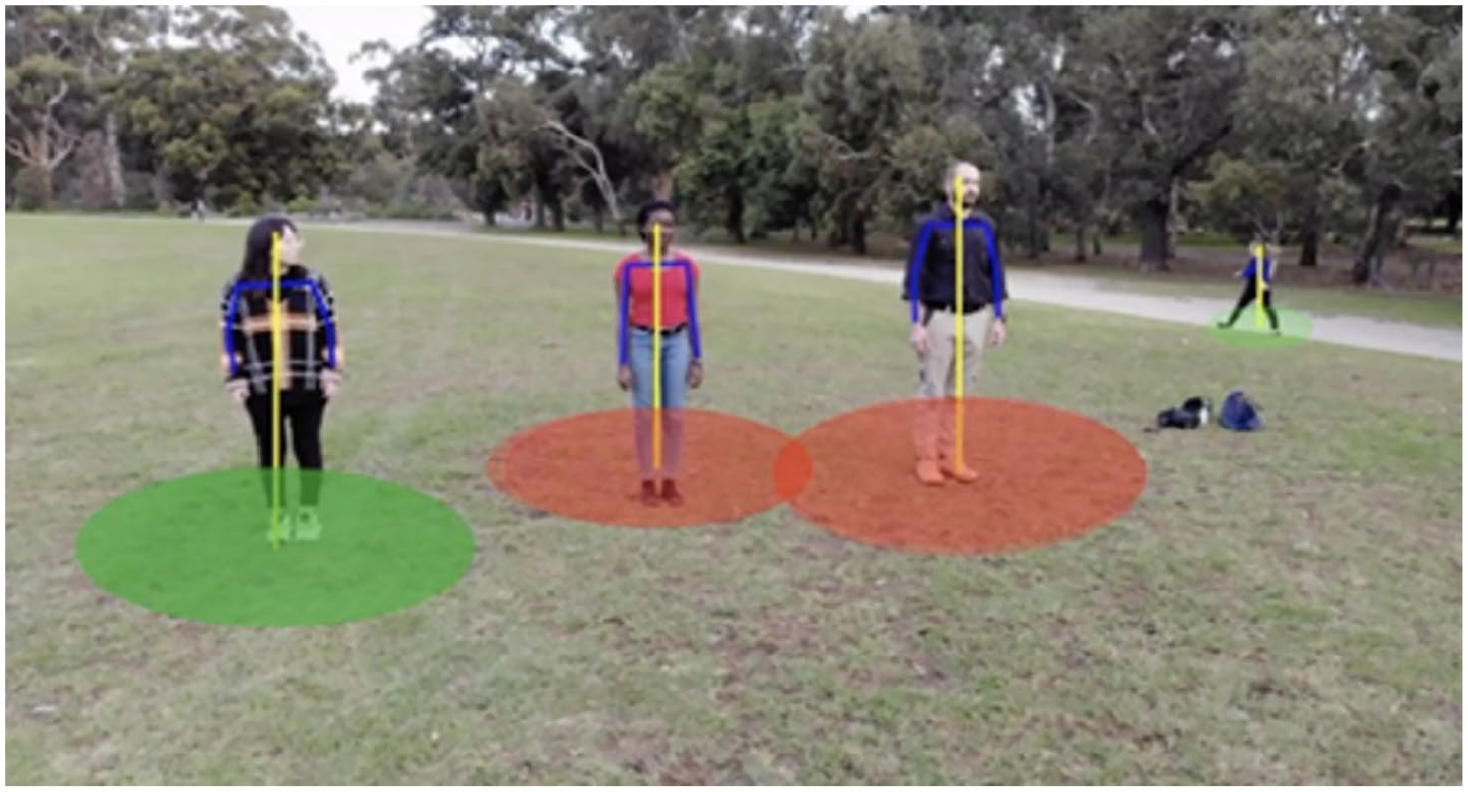

While typified through the case of Draganfly’s pandemic drone, such concerns can also be found elsewhere. In California, drone company FD1 appeared on local news stations to promote their pandemic drone designed to ‘fight against COVID-19’ by gathering ‘real-time information’ about ‘crowd size, social distancing’ and mask-wearing adherence (Aarons, 2020: n.p; ABC 10 News, 2020). With the assistance of AI, the drone and accompanying software ascertain the ‘distance between people’ and ‘detect which people are wearing masks, and which people aren’t’, showing ‘green dots’ on the screen for those complying and red dots for those who are not (Aarons, 2020: n.p). While touted as a ‘tool’ to enable pandemic-busting ‘AI for good’ at sites from ‘parks, beaches, schools, shopping centers, or anywhere else people gather’ (Aarons, 2020: n.p), the company’s owner nonetheless lamented that in spite of reaching out to a number of ‘cities and schools’ their pandemic drones remain ‘grounded’ due to ‘budget and training concerns’ (ABC 10 News, 2020: n.p). In addition to demonstrating the drone’s elasticity, the FD1 pandemic drone’s failure to materialise also raises critical questions about its potential use. Which kinds of social practices, communities of gathering or ‘worlds’ might such technology ‘make possible and foreclose’ (Walker and Winders, 2021: 164)? To what extent might darker skin tones flummox its systems or produce racialised outcomes (Buolamwini and Gebru, 2018)? How might the preemptive conceptualising of people as potential threats to public health refigure shared spaces?

Scholars have highlighted that this kind of ‘drone capitalism’ is at once ‘increasingly entangled in daily life, impinging on bodies’ (Richardson, 2018: 79) and not yet present, but rather poised to reshape everyday lives in uneven ways (Jackman and Brickell, 2022). Here, the FD1 example resonates with the idea that, while the number of ‘lofty predictions about how drones would help humanity survive the pandemic’ grew, many such propositions failed to ‘actually come true’ (Greenwood, 2020: n.p). Yet so too does the example remind us that even in their proposition and failed materialisation, drone imaginaries remain productive, acting to ‘perform’ in their tacit legitimation of wider drone markets (Crampton, 2016: 141). In this vein, alongside noting the pandemic’s ‘exertion of pressure to fast-track’ drone experimentation, so too can we understand the pandemic drone’s (failed) materialisation as influencing the drone’s ‘public acceptance’ more widely (Chen et al., 2020; Martins et al., 2021: 608). After all, the pandemic drone (whether in flight or as it is proposed and imagined) has material outcomes that exceed itself. Whether or not it fails (to materialise), the pandemic drone acts to shift understandings and agendas of drone futures more widely.

Failure as glitch: thinking failure otherwise

We have identified the ways drones have been conceived as a techno-solution to pandemic constraints, whereby pre-existing drone imaginaries and use cases have accelerated. These propositions have ushered in critiques that point to the consequences of such applications: for instance, as increasingly adaptive, scalable and accessible to numerous actors and entities, pandemic drones emerge as a site of production for new forms of militarism (Kaplan, 2020) and mobility (Hildebrand and Sodero, 2021) that extend automated decision-making as a pandemic solution. Following from the work of Crandall (2011) and Chandler (2020) on the generative potential of drone failure – its capacity to invite new ways of operating or to reveal alternative lineages of the drone itself – this section engages with wider thinking borne of attention to the digital to propose that failure can be thought of otherwise than anticipated. Drawing upon ‘glitch’ thinking, we demonstrate that failure can expose the playful, inventive and resistant forms of the pandemic drone. Here we see how a normative notion of failure obscures the dynamic, discordant and multifaceted ways that drones have been adopted in response to pandemic constraints.

This approach complicates a prescriptive notion of failure/success in the context of the deployment of drones during the pandemic. Diverging from the ‘material assemblages and discursive frameworks’ (Leszczynski, 2020: 190) that shape the idea of the ‘pandemic drone’ as a techno-solution, we can turn to the drone’s various manifestations, those which can undermine dominant state and military-leaning accounts of what drones do (Jackman and Brickell, 2022: 159). In parallel to the pandemic drones discussed thus far, drones have also emerged as a means of resisting constraints, for increasing social connection and engagement and for engaging in playful and novel responses to the isolating dynamics of the pandemic. Drone affordances have, for example, enabled entertainment and play: in South Australia, someone shared glasses of scotch with their neighbour via drone delivery (Yahoo, 2020); stranded in Sydney on a cruise ship, a couple fabricated a story telling how, during their isolation period aboard the ship, they had arranged for a wine delivery via drone (AFP Australia, 2020); and in Brooklyn, a photographer used their drone to ask someone dancing on a rooftop for a date (Fleming, 2020).

Here we can valuably turn to work at the intersection of drone studies and feminist geopolitics, which at once sheds light on ‘the growing range of non-state actors mobilising, experiencing, and subject to the drone’ (Jackman and Brickell, 2022: 157), while arguing that to do so enables the thinking of failure ‘otherwise’ (Leszczynski, 2020: 201). By moving beyond a singular notion of failure/success to demonstrate how ‘failure’ can also point to ‘variance’ and ‘unintended outcomes’ (Nunes, 2011: 3), such literature works to consider failure as a positive qualifier, reinforcing the notion that multiple dynamics of failure-success operate under the purview of the ‘pandemic drone’. In thinking failure otherwise, scholars have centrally mobilised the notion of the ‘glitch’ to explore the implications of failure as a mode of subversive, creative and playful engagement with technology (see Leszczynski, 2020; Lynch, 2022; Nunes, 2011; Russell, 2012). ‘Glitch’ thinking seeks to both name and foreground the ‘small-scale disorientations’, temporary malfunctions, errors and miscalculations that inform our everyday relations with technology and which have the potential to create ‘new horizons onto other vital paradigms and politics of digitality’ (Lynch, 2022: 1, 2). The ‘glitch’ is thus configured as an ‘epistemological approach’ (Lynch, 2022: 1) that invites us to pay attention to the offerings of such deviations for our understanding of technologies and their function(s). While the word ‘glitch’ commonly implies ‘error’ or computational ‘bug’, in conceptualising digitality, Russell (2012) reframes it as an opportunity for ‘erratum or correction to a system’ (Leszczynski, 2020: 191; see also Leszczynski and Elwood, 2022).
By theorising in ‘the minor’, it is argued that we can make space for both the everyday ways in which people mundanely, hopefully and creatively intervene in and comprise (Leszczynski, 2020: 191) pandemic droning and the ways in which these practices challenge normative conceptions of identity and ‘the status quo’ (Russell, 2020: 20). Footage of a person in Cyprus walking their dog via drone – an act subsequently inspiring others to take on the practice (ABC7, 2020; NXD ShutterBox, 2020) – is but one example of playful pandemic droning that reminds us that play at once ushers in ‘ways to be otherwise’ (Woodyer, 2012: 313) and renders visible alternative narrations and experiences of failure.

Furthermore, rethinking pandemic drones through the ‘glitch’ also brings into focus the ways that the drone has produced diverse perceptions of the pandemic itself. In addition to the pandemic drone’s attempts to manage the constraints of the pandemic documented in the first two sections, the drone was also used differently during the pandemic: to make accessible and visually document what may otherwise have been inaccessible due to lockdowns. For example, as Kaplan (2023, forthcoming) notes, drone imagery played a key role in ‘documenting the shock of the first waves of social change as the virus spread around the globe’. Aerial photography of cities otherwise inaccessible revealed deserted city streets (BBC, 2020a), yet the failure to capture those occupying these seemingly ‘empty’ spaces – the homeless, essential workers and the unemployed – as Zimmermann and Kaplan (2020: n.p) note, ‘masks the continuities of late capitalism’s inequalities’. Furthermore, images of mass grave and cremation sites from New York to India revealed flaws in public health systems in the face of the outbreak while simultaneously warning viewers of the consequences of breaching public health codes (BBC, 2020b; CNN, 2020). Drones produced what Kim (2021: n.p) calls ‘pandemic media’, a phenomenon referring to how media produced in times of disaster reform the mediascape through distinct documentation practices.

In so far as they deviate from popular conceptions of the pandemic drone as an automatic solution, these examples show how ‘failure, glitch, and miscommunication’ can be expressions of ‘creative openings and lines of flight that allow for a reconceptualisation of what can (or cannot) be realised within existing social and cultural practices’ (Russell, 2012: 3). The concept of the ‘glitch’ accords with what Bradley and Cerella (2019: n.p) refer to as ‘everyday droning’, whereby quotidian experiences and expressions of the drone recast centralised accounts of what drones can do. Akin to Irani and Silberman’s (2013: 611) work critically analysing Amazon’s Mechanical Turk (a crowdsourcing marketplace for remote human labour) in which the authors draw attention to the ‘Turkopticon’, an ‘activist system that allows workers to publicize and evaluate their relationships with employers’, so too can we afford attention and agency to the drone user as ‘drone subject’, a civilian (non-state) actor who ‘can exploit UAVs for her own ends’ (Bradley and Cerella, 2019: n.p), thus transforming the way in which the technology itself is mobilised. While, from flower delivery to tours of cities in lockdown (Jaffe, 2020), the multitude of expressions of the pandemic drone are not necessarily (or always) intended as acts of resistance, they at once demonstrate a diverse range of actions, inclinations and intentions and show how the disparate and errant entities and identities that manage the pandemic drone and its various functions and failures can subvert (inadvertently, even) corporate and state-driven logics. As Leszczynski and Elwood (2022: 371) assert, thinking with the ‘glitch’ thus facilitates a critique of automation ‘simultaneity’ attentive to ‘how techno-capitalism secures itself in cities through idiosyncracy as a distraction (glitch trick), and [to] how it is always-already being interrupted’.
While this theme is unpacked further in the next section, this section highlights that the notion of failure is tethered to context, and, as these instances reveal, understanding failure also requires we account for the diverse and multiple conditions in which, or for whom, failure occurs or applies.

Drone failure in context: automation, logics and imaginations

While a complex and contested term, automation can be understood as the ‘institution of some kind of self-organization in machines and facilities’ that spans a ‘continuum’ of applications and capacities inclusive of ‘varying degrees of human non-intervention’ (Lin, 2022: 467). Premised, promised and imagined as an efficient and cheap replacement for human labour (Bissell and Del Casino, 2017), in practice, automation often entails the displacement, concealment, transformation or control of human labour, leading to charges that AI and automation are better understood as myths (Munn, 2022; Sadowski, 2018). Work interrogating the significance of automation (material or imagined, real or fake) understands it as fundamentally productive, enabling and enacting ‘new socio-spatial relations’ and ‘new human/nonhuman relations’ alike (Bissell and Del Casino, 2017: 436). Critical analysis of automation thus demands attention to space, sociality, labour and power, as well as to technology itself. This includes attention to failure however it arises: as a failure of imagination, as a failure to materialise, or otherwise.

In tracing their ‘failures’, we have argued that pandemic drones are emblematic of the force of ‘drone logic’ (Andrejevic, 2016). For Andrejevic, the ‘figure of the drone’ is better understood as an ‘avatar’ representative of a wider situation of ‘(quasi-)automated data collection, processing and response’ (Andrejevic, 2016: 21). Drone logic ‘extends the reach and scope of the senses’, acts to ‘saturate the time/spaces monitored’ and enables diverse forms of automated capture and response (Andrejevic, 2016: 23). In highlighting the ‘portability’ of ‘military-inflected’ drone logic from the battlefield to the sphere of everyday life, Andrejevic (2015: 206; 2016: 29) argues that drone logic is significant because of the ‘forms of knowledge’ its capture enables and its promotion of ‘the seemingly inevitable logic of algorithmic decision-making’. Distinct from other forms of automated response, pandemic drone logics act to combine automated decision-making with always-on capacities and remote sensing and processing. Such logics align with the techno-solutionism expressed in the early days of the pandemic. As an autonomous and mobile remote imaging technology, these logics imply that the surveillance of the publics and reinforcing of social distancing measures would no longer be within the remit of individual responsibility, but a function of the drone writ large. Even once the verticality of the aerial drone is abandoned and reimagined in favour and format of the stationary kiosk or other such application, the hierarchical force of verticality remains: drone logics retain the god’s eye claim to authority of the aerial view even as they disperse elsewhere. In other words, the techno-power of the drone’s capture lies not in its altitude, but in its hierarchical mediation – categorisation and subjugation – of the populations in its midst (Parks, 2016). 
While feminist scholars have critiqued understandings of the ‘aerial view as a given, neutral and all-seeing’ (Williams, 2011: 384), in its iteration ‘for good’, the domestic drone (and the products of its failure) remains both bound to, and active in the potential ‘detoxification’ of, violent military overwatch (Jumbert and Sandvik, 2017).

Such observations are significant because if we focus on prominent but narrow instances of (failed) pandemic droning, we risk absenting consideration of the wider sets of drone logics, relations and risks they entail. We can return to the example of Draganfly’s pandemic drone to reconsider what approaching the failed pandemic drone as automation logic can reveal. Here, Draganfly’s visualisations (Figures 2 to 4) help reveal the dynamics of the automated decision-making software embedded within the proposed pandemic drone. As Jackman (2022a: 3) writes in ‘re-approaching the more-than-military drone’ through its visualisation in ‘patents and speculative design’, visualisations remain under-examined as sites of normalisation and imagination, ‘domains’ in and through which drones are imagined, ‘made’ and ‘normalised’ (Jackman, 2022b) and those which remain active in shaping ‘the way we think, see and dream’ (Mitchell in Bissell and Fuller, 2017: 2487). Such work recognises the visual as at once ‘performative’ in its speculation and imagination of potential (techno-)futures (Kinsley, 2010: 2271) and encompassing of a ‘politics of anticipation’ therein (Bissell and Del Casino, 2017: 439). After all, in its expression of ‘possible futures’, the ‘visioning’ in promotional visualisations works at once to ‘condition’ understandings while seeking to garner support and build ‘confidence’ and markets alike (Kinsley, 2019: n.p; Wigley and Rose, 2020: 158). In other words, such promotional visualisations are productive forms of ‘storytelling’ that play an important ‘role in the evolution of automation’ (Bissell, 2021: 369).

Figures 2 to 4. Draganfly (2020) pandemic drone (https://draganfly.com/news/can-a-pandemic-drone-help-stop-the-spread-of-covid-19/) (permission granted).

In Draganfly’s promotional imagery, we can disaggregate its automated sensor systems into component elements, for which there are notable (and militarised) antecedents for each of the techniques, capacities and relations pictured. Thermal imaging (Figure 2) has been a key function of military and civic drones, made possible by the forward-looking infrared sensors equipped to identify warm and cool objects, whether human or animal bodies, engines, structures or environmental features. Caught in the drone’s crosshairs, the depiction of the drone’s subject (target) in this imagery (Figure 2) resonates with militarised relations between the drone (assemblage) and the bodies in its midst, refigured as sites of calculation, devoid of context and lifeworld (see Parks, 2014, 2016). As a form of automated biometric tracking, human pose estimation entails the abstraction of the body into a simple wireframe set of features and movements. Illustrated in Figures 3 and 4, Draganfly technology sought to apply human pose estimation to identify whether people were standing (Figure 3) or coughing (Figure 4). Draganfly’s proposed application marrying thermal imaging and pose estimation sought to at once combine and amplify these techniques. Thermal imaging would not only help differentiate bodies from environments but also assign specific temperature ranges that could be correlated with pose analysis to identify potentially infected individuals.

These visualisations speak to an underlying push to leverage automation to combine, ‘accelerate’ (Chen et al., 2020) and intensify drone logics in response to the distinct social and biological challenges of the pandemic. Just as the military drone enacts a biopolitical logic, the pandemic drone is imagined and visualised as an automated decision-maker that reckons with both biology and sociality as a problem for life-saving governance: proximity to others, the nature of gestures and the internal state of bodies. In less comprehensive and integrated incarnations, such as in the use of loudspeaker-equipped drones to enforce social distancing, the motility and mobility of the drone itself are paramount. What makes Draganfly’s pandemic drone instructive is that its aeriality is secondary to its logics. Here it is important to emplace Draganfly’s pandemic drone within the context of the robotification of the ‘spaces, politics and subjects of security’ more widely (Del Casino et al., 2020: 607). The automated logics of the pandemic drone are ‘woven into’ its visualisation (Figures 2 to 4) (Del Casino et al., 2020: 611), and in its depiction of techno-worlds and social relations, we see the capacity of the image to anticipate and ‘elevate some imagined futures above others’ (Valdez et al., 2018: 3387). Following Jeremy Crampton’s (2016: 141) call to emplace the ‘drone assemblage’ within the wider context of ‘algorithmic governance’, we can focus attention away from ‘drones as objects’ and rather on ‘what effects they achieve through the creation of new forms of subjectivity’.

In the initial conception, the Draganfly pandemic drone was proposed to fly in uncontrolled environments with varied lighting, whereas, in its reimagined kiosk iteration, the technology worked more effectively ‘in a controlled environment’ (Protalinski, 2020: n.p). While it is maintained that the pandemic drone was never meant to ‘identify people’ but rather to ‘measure the health of a population’, those with darker skin tones arguably remain excluded from what is described as ‘a meaningful population sample’ (Chell in Protalinski, 2020: n.p), a claim reinforcing existing biases within AI more widely. As the potential inequalities embedded are laid bare, we are thus reminded of the importance of understanding the uneven ways by which someone or something comes to be apprehended as ‘anomaly’ or ‘insecurity scenario’, and of recognising that even in failure, such ‘experiments of automation’ are productive and do ‘particular kinds of political work’ (Dowling and McGuirk, 2022: 422). In other words, even as Draganfly’s pandemic drone failed, it at once tapped into and spawned other legacies. From mobilising military drone logics of targeting, to dispersing pandemic drone logics into a still kiosk operating under ‘controlled’ conditions, even in their alleged failure, pandemic drone logics live on.

Conclusion

Throughout, we have sought to pull attention from the what of the pandemic drone to the how and where. In so doing, we charted three trajectories of drone failure: failure as experiment, failure as imaginary and failure as glitch. These trajectories are not necessarily separate from one another, but rather resonate and intersect at various points. We showed how failure variously acted, enabling drone logics and processes to migrate across diverse socio-technical forms, sites and applications while interrogating the implications of failed pandemic drone automated decision-making therein. As an automated decision-maker, the drone’s navigational and mobile classification capacities exhibit ‘intelligence’ that can thus be understood as ‘multiple, partial, and situated in and in-between spaces, bodies, objects, and technologies’ (Lynch and Del Casino, 2020: 382). While it is important to understand the drone’s ‘autonomy’ as ‘conditional’ – that is, as shaped by and through its contexts of operation and the ‘human-machine relations’ therein (Sumartojo and Lugli, 2021: 8) – the propositions of the pandemic drone nonetheless raise important questions of the ‘sites’ and actors of ‘responsibility in decision-making’ (Bissell, 2018: 59). As our discussion of the ‘glitch’ noted, the ‘reshaping and shifting of power relations’ both enacts and results in forms of ‘domination and resistance’ alike (Del Casino et al., 2020: 606).

In turning to sites and stories of pandemic drone failure as experiment, imagination and glitch, we have underscored failure’s performative and productive capacities. We can see and access failure across multiple sites and sources – as it is envisioned, as it unfolds and even as it stalls in prototypes and trials, and in its manifestations and legacies in new products and projects. In unpacking automated pandemic drone logics, we have highlighted them as at once punctuated with questions of visibility, control and subjugation and as morphing, translating and manifesting in wider applications elsewhere. Reassessing the pandemic drone through the lens of failure is vital in light of the inherent malleability and portability of its logics. While exploring the ‘what’ of the pandemic drone is instructive, focusing only on prominent but narrow instances of pandemic drones risks absenting wider sets of drone logics and the risks they entail – especially to individuals and communities already marginalised by COVID-19 mitigation and management. Heeding the teachings of the ‘failure’ of pandemic drones suggests that tracking the apparent failures of automated responses to the pandemic of all kinds warrants ongoing attention.

Footnotes

Authors’ note

Michael Richardson is now affiliated to UNSW Sydney, Australia.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Economic and Social Research Council funded ‘Diversifying Drone Stories’ project (ES/W001977/1) and the Australian Research Council Centre of Excellence for Automated Decision-Making and Society (CE200100005).