Abstract

Machine vision is one of the most consequential applications of artificial intelligence (AI) in contemporary society. This article analyzes how companies that produce machine vision technology articulate what it means for machines to “see.” Through a thematic analysis of more than 200 corporately produced documents, we examine the companies’ product offerings and identify three discursive techniques that entwine basic explanations of emerging technology with the ideologies of AI producers: dismantling sight into technical action, expanding the parameters of sight, and seeing through data. These recurring corporate narratives organize perceptions of automation, educating outsiders on how to value computational outcomes and support them through rearranging the real-world conditions of labor. We argue that the social power of machine vision is not only in how it detects objects, but also in how it arbitrates what work is visible in visions of the industry’s future.

Introduction

The mechanisms of machine vision—image capture, object recognition, and robotic automation—underpin some of the most impactful and contested technological developments in contemporary society. Facial recognition powers surveillance systems that inform law enforcement and predictive policing, at the risk of discrimination and oppression (Introna and Wood, 2004). Scanners installed on drones could be used to locate inventory in e-commerce warehouses, organizing the activities of workers and rendering them susceptible to algorithmic management (Delfanti and Frey, 2021). Object detection trained on large-scale datasets dismisses the personal, contextual, and political aspects of images during classification, thereby perpetuating societal inequalities and reinforcing extant, dominant worldviews (Crawford, 2021).

For more than 60 years, computer scientists have endeavored to advance the field of artificial intelligence (AI) far enough to bestow machines with “sight”—the ability to detect and process visual information. Yet, it is clear that a machine’s ability to see is not purely computational. This article approaches machine vision from a sociotechnical perspective, inquiring into the discourses of the technology’s producers. Those who design and promote AI-enabled technologies play a significant role in shaping both how technology is used and, ultimately, its social consequences (Bailey and Barley, 2020). Their visions act as primary agents that organize public conceptions of emerging technology (Mager and Katzenbach, 2021). Through their strategic presentations of facts and reasons, corporate discourses produce ideological frameworks that structure understandings of what it means for machines to see and articulate them into a unity called machine vision.

In this article, we analyze a dataset of 222 public statements produced by seven corporations to describe their product offerings and their companies’ mission. Complex AI systems are difficult to understand, even for experts. How they operate and the organizational cultures that produce them are often deliberately obscured (Pasquale, 2015; Gillespie, 2022). One way of understanding opaque technological systems is through “scavenging” from publicly available sources of information, like corporate websites (Seaver, 2017: 6). These sources act as an “interface space” in which industry insiders attempt to determine how their technologies are known through communication with the public (Ortner, 2010: 219). Similar techniques developed in the political economy of communication “listen in” (Corrigan, 2018: 2757) on industry conversations through analyzing materials, such as trade publications, as manifestations of insiders’ interests and worldviews (Carter and Egliston, 2023; Wilken et al., 2019).

We leverage the duality of the term vision—as both a way of seeing the present and anticipating the future—to clarify the mechanisms by which imaginaries participate in the co-production of material and ideological realities. While the notion of sociotechnical imaginaries as initially developed by Jasanoff and Kim (2009) does not specifically account for technologized labor, in her subsequent work, Jasanoff (2015b) notes it is through “deployments of labor and capital . . . [that] imaginaries get embedded in the concrete artifacts of industrial civilization” (p. 327). Critical technology scholars have advocated for examining the “manufacturing of hype and promise” (Elish and boyd, 2018: 58) in order to understand how communication about a technology frames public conceptions about what a technology is and does. This article uses sociotechnical imaginaries as a sensitizing lens to explain how corporate narratives participate in what Bareis and Katzenbach (2022) term “talking AI into being” (p. 863).

We argue that the way machine vision technologies are talked about—how they are conceptualized and justified by the companies that produce them—is inseparable from the way they operate. Contributing to a tradition of material-discursive inquiry (Orlikowski and Scott, 2015), we demonstrate that machine vision is not an external, technical reality; it is configured through communication and practice. Corporately produced explanations and descriptions of new machines are entwined with statements that motivate their adoption, external investment, and societal acceptance. From an expert vantage point, understandings of machine vision are evidenced by corporately produced case studies and solidified by powerful institutional coalitions. Their promises of reduced labor costs and superior data-driven workflows invite investors and employers to implement technologies for generating speculative returns. By grounding our inquiry in the context of work, our findings advance a critical approach to sociotechnical imaginaries by illustrating the material consequences of corporate discourse—in this case, the reconfiguration of labor.

Literature

Under the category of AI, machine vision can be defined as automated technology associated with the visual senses. In his book

As noted by Shoshana Zuboff (2015), pervasive computer mediation has transformed nearly all aspects of the world by making them visible, knowable, and shareable. From a political economy perspective, the visual aspects of digital media are analyzed as an extension of capitalism, serving as one means of data extraction for the demands of monitoring or monetization (Beer, 2018; Couldry and Mejias, 2019; Turow and Couldry, 2018). For example, Zuboff (2015) observes that facial recognition (along with other data-gathering moves) is a substantial investment for companies that results in a “distributed and largely uncontested new expression of power” (p. 75). Sadowski (2019) exemplifies this approach in his theorization of visual data as a form of capital. He argues the cameras and sensors installed in urban environments unevenly consolidate knowledge to such a degree that it becomes a tool of social control and oppression. Another strand of scholarship foregrounds the possibilities of algorithmic control on social media, addressing how software processes intersect with issues of visibility (Bucher, 2012; Jacobsen, 2021). If we understand the recognition process as powerful in the form of surveillance (Zuboff, 2015), knowledge production (Sadowski, 2019), and visibility arbitration (Jacobsen, 2021), the question remains: what does it mean to say that a machine can see and how are these latent meanings articulated into a unity called machine vision?

Machine vision as a sociotechnical imaginary

To reveal the constructedness of machine vision, we find it helpful to turn to the concept of sociotechnical imaginary—particularly as it relates to questions of technology and shared ways of knowing. The notion of sociotechnical imaginaries emerged in recognition of the inextricable “coproduction” of technoscientific imagination and social orders (Jasanoff, 2015b: 332). According to Jasanoff (2015a), a sociotechnical imaginary is defined as “collectively held, institutionally stabilized, and publicly performed visions of desirable futures, animated by . . . advances in science and technology” (p. 4). It is often corporate actors—in addition to the public institutions that were the original focus of imaginaries scholarship—that operate powerful imaginaries in their articulations of technological futures (see Egliston and Carter, 2022; Haupt, 2021; Hockenhull and Cohn, 2021; Sadowski and Bendor, 2019).

As noted by Mager and Katzenbach (2021), imaginaries are increasingly commodified by companies in the making of digital technology and its rhetoric to propel “new socio-economic orders that comfortably accommodate their business interests and commercial products” (p. 227). In her ethnographic analysis of interventions in automation, Annette Markham (2021) observes corporate visions strengthen the dominant frames of technological inevitability. She notes people’s difficulties in “imagining futures in ways that do not reproduce current ideological trends or cede control and power to external, mostly corporate, stakeholders” (p. 384). The emphasis on corporate imaginative power echoes current advocacy for more attention to the production of ideology in studies of AI within organizations (Bailey and Barley, 2020). As Bailey and Barley (2020) argue, the implications of intelligent technology are “not always the product of ongoing action and interpretation at the point where people are using technologies. Rather, those who design and promulgate technologies have visions of what work is and what it should be like” (p. 2). Nagy and Turner (2019) add a layer of timeliness to these directives, advocating for inquiries into how a technology becomes “the medium of the moment” as discourses are still unfolding (p. 536). In analytical terms, it becomes urgent and essential for us to ask about the material consequences of corporate visions and how they exert power.

Machine vision, labor, and rationalization

In this article we ask: how do companies articulate their visions of a machine’s ability to “see”? And, in what ways do these articulations rationalize the labor practices that will emerge as a result of their introduction? We answer these questions through a case study of cutting-edge technologies intended for installation at large-scale material recovery facilities (MRFs) that sort and process recyclable materials from waste streams. MRFs are leveraging machine vision in an attempt to automate and optimize the most fundamental aspect of their business: the identification, classification, and selection that are the basis of sorting. We argue that machine vision functions not only as a mechanism to discover the value of recyclable objects but also as an ideological mechanism—assigning value to actors within the sorting system. Beyond the technical functioning of machine vision, these technologies are also constituted by

Methods

To better understand the imaginary of machine vision articulated by the companies that produce sorting machines for MRFs, we conducted a thematic analysis of public-facing materials available on their company websites. The organizations selected for this study provide a case study for examining how corporations at the forefront of innovation within a specific industry frame the opportunities and challenges associated with emerging AI technologies. The materials we analyzed include texts (product descriptions, mission statements), graphics (logos, schematics, renderings), and transcribed videos (marketing demos, webinars with industry experts). The content on company websites is directly produced and controlled by technology creators, representing what Jasanoff (2015a) calls “the languages of power.” She observes that these official discourses provide fertile ground for investigating “how actors with authority to shape the public imagination construct stories of progress in their programmatic statements” (p. 25). Websites offer a useful data archive of content intended to frame how interested consumers see and understand new technologies.

Empirical setting

We investigate recycling sorting technology as demonstrative of a larger category of AI-powered “smart” machines intended to automate material labor. This follows a research project that included field research at an MRF in the United States to understand how AI technologies were being adopted and adapted in essential workplaces during the Covid-19 pandemic. In the recycling sorting industry, machine vision technologies use camera sensors and physical mechanisms to separate various objects on conveyor belts. These objects are discarded by consumers as waste and enter the facility as a jumbled, varied mass that must be separated by type in order to be baled and resold in global recycling markets. MRFs have long used human labor and various forms of automation—some relatively simple and some more mechanically complex—to aid in the sorting process. However, advances in AI have created a new class of intelligent machines that combine digital software and robotics. More recently, the exposure dangers and social distancing directives of the Covid-19 pandemic have fueled enthusiasm and investment in automated sorting machines (Corkery and Gelles, 2020).

The empirical data for our analysis were collected from public-facing, English-language materials available on the websites of the North American and European-based companies that sponsored the 2022 MRF Operations Forums. Though we initially investigated all 13 companies that sponsored the forum, we found that as of July 2022, only 7 explicitly presented themselves as advancing recycling sorting through the use of AI in their product offerings: AMP Robotics, Waste Robotics, Bulk Handling Systems (BHS), Machinex, TOMRA, CP Group, and AMCS. Despite differing positions, they are united in the ultimate goal of automating the sorting process to improve material recovery (see Supplemental Appendix 1).

Data collection and analysis

We followed Braun and Clarke’s (2006) steps for data collection and reduction. For each company website, we first immersed ourselves in the content through repeated reading, to familiarize ourselves with the depth and breadth of their self-description. We then moved from “data corpus” (a term that describes all the data gathered for a particular project) to data set by considering the relevance of data items to our research questions (p. 79). Keyword searches (AI, robotics, etc.) were conducted to locate data that may be buried in archives. Our final data set constitutes 222 documents.

Thematic analysis

Thematic analysis is a qualitative method widely used for “identifying, analyzing and reporting patterns within data” (Braun and Clarke, 2006: 79). It is a well-suited and established method for application within sociotechnical imaginaries (ten Oever, 2021) that has been used to investigate the argumentative structure of corporately produced content (Egliston and Carter, 2022; Haupt, 2021; Liao and Iliadis, 2021; Sadowski and Bendor, 2019). Using an interpretivist approach to latent meaning, we conducted a thematic analysis to identify the underlying assumptions and conceptualizations that shape the surface of corporate self-presentations.

Our initial codes were generated by developing common sense categories drawn from the Five W questions (who, what, where, when, and why). Developing these basic understandings is especially necessary for research on emerging technologies, whose functionality and use contexts are not widely known. Coding categories were developed through an iterative process that supplemented, refined, and modified the initial coding scheme. We synthesized emerging categories through thematic mapping. Some potential themes were eliminated as anomalous, whereas others were combined to reach an overarching theme that included enough coded segments to support patterns in alignment with our research questions.

Findings

The seven companies we analyzed commonly exhibited complementary descriptions, claims, and rationales for installing AI-powered vision systems and the robots that act upon this information. We label these three discursive techniques: dismantling sight into technical action, expanding the parameters of sight, and seeing through data. We theorize these articulations collectively as the imaginary of machine vision.

Dismantling sight into technical action

The imaginary of machine vision is built upon a dialectic that is at the very core of AI. First,

Making sense of sorting mechanically

Corporate representations of recycling sorting produce a mechanical understanding of the sorting process. Waste sorting work is dissected into a series of physical actions—such as pick and place—that can be triggered and arbitrated by sensors. In this set of symbolic tasks, few human workers are considered necessary to manage the hundred tons of material hoisted onto main feed conveyor belts through the MRF daily. Instead, mechanical apparatuses are the new agents that select and collect waste according to their characteristics such as color and composition.

For almost two decades, companies like TOMRA have produced optic sorters that recognize materials using lasers to detect their composition and move them using blasts of air. Optical sorting like this is a common part of MRFs, and the technology is described as “fairly basic” by industry publications (Flower, 2015). This mechanism, while potentially reducing the number of employees in facilities, does not currently operate without a substantial amount of associated human labor in the form of quality control. Realizing claims of “fully automating” processes (30) or “100% autonomous” systems (188) is contingent upon technologies that mimic human intelligence. In the visions of manufacturers, machine vision technology “is more than just a robotic sorter” and will soon act as “the active brain” of MRFs that control equipment and provide real-time data (

Advances in machine vision are leveraged in corporate discourses, as companies compete to position themselves as industry leaders and their products as uniquely effective and innovative. CP Group boldly billed their optical sorter in all capital letters: “BEST-IN-CLASS,” underlining that its sensor was tested to outperform competitors (54). Self-presented as the apex of deep learning algorithms, TOMRA claimed to be “the first in the world” to apply them to distinguishing wood-based materials (43). Touting its cutting-edge capacity for data training, AMP Robotics advertised that it “has created the largest known real-world dataset of recyclable materials for machine learning” (93). This arms race of sensors, algorithms, and data suggests that rather than contesting each other’s epistemic production of techno-solutions, these manufacturers performed a shared belief in materializing automation thoroughly through higher pixel resolution and optimized visual intelligence.

Imitating human attributes

Concurrent with their arguments for removing human workers, machine vision companies reconstructed humanness at the site of labor. The definition of AI relies on an anthropomorphism that reproduces aspects of human intelligence (Guzman and Lewis, 2020). AI’s indebtedness to this metaphor is an operational frame that not only brings humans and nonhumans into a relation of similarity, but also dehumanizes those who perform that work (Rhee, 2018).

Just as the recurring metaphor of “world brain” is used to describe the Internet as a repository of knowledge (Wyatt, 2021), vision systems are portrayed as the “eyes” of sorting machines. In explaining their product to consumers, AMP directly equates machine and human vision: “this vision system is . . . just like you and I would if we were looking at the materials and the sorting facilities, so it’s using the same kind of perception and logic as a human being” (5). Like biological eyes to the brain, machine vision is described as an input pathway for continuously capturing megabits of data to be made understandable by computers. The logo of Max-AI® provides an evocative illustration of the combination: a geometric eye, in which the streaked colors of the iris are composed of dots and lines in a printed circuit board (Figure 1). Drawing a likeness between machine vision and natural mechanisms of sight grants AI familiarity, making it understandable by equating it with existing systems in terms of function. These machines approximate the same labor they are designed to replace.

Figure 1. Screenshot of the logo of BHS machine vision system on July 17th, 2022 (190).

As Wyatt (2021) argues, metaphors don’t simply describe abstract technologies; they are a normative means for powerful social actors to define reality through everyday language. The metaphors of eyes and visual understanding serve to humanize the non-human and to characterize machine intelligence as having an enhanced human sensory ability enabled by larger, integrated systems of data acquisition, pattern recognition, and self-training. While the move is subtle, for industry insiders the meaning is clear: because AI technologies can see like people, they can achieve a vision of complete automation that has evaded automated technologies up to this point.

Better than human

The metaphor of sight makes it possible to compare the robot’s computing ability with the visual intelligence of humans in pursuit of productivity. Among most manufacturers, there is a strong sense that artificial sight is not created to augment human eyes in existing processes of sorting, but to build ways of seeing that exceed natural eyes and make them unnecessary. AMP Robotics made this point through improved time-efficiency, citing that conveyor belts could be run at an additional 100 feet per minute when quality control work was performed by robots rather than human sorters (67).

Yet, these claims of robots bettering human abilities are still founded on anthropomorphic metaphors that ultimately dehumanize workers. BHS provides perhaps the starkest example of this. “I AM MAX,” their website’s landing page states in first-person voice: “I don’t get sick. I don’t need breaks, lunches, or days off. I work harder, longer, and better than anyone else. I’m more accurate and more efficient than anyone could be” (207). In this sense, illness or human limits are not viewed through the lens of physical health, but as incompetence that can be overcome by machines.

Expanding the parameters of sight

AI-powered sorting technologies operate on the premise that robots should prioritize collecting certain materials over others. Here, machine vision moves from mere identification to selective action based on pre-programmed determinations of value. Corporate discourse also acts as a structure for action. It organizes perceptions of labor, educating outsiders on how to value the computational outcomes of sight and support them through rearranging the real-world conditions of sorting work.

Value through recognition

Haraway (1988) writes, “vision requires instruments of vision . . . Instruments of vision mediate standpoints” (p. 586). Recognizing that machine vision is both the power of seeing and the instrument of that power prompts a need for an inquiry into how technology—both materially and discursively—arbitrates what is seen. The algorithms that power AI technologies synthesize massive bodies of data into actionable outputs. In

Automated sorting machines first classify objects based on their material composition. The visual dashboard of the AMP Vision™, for example, offers a high-definition video stream of material categorization. The objects that move across the screen are quickly isolated with color-coded rectangles and labeled: corrogated_cardboard, metals, fiber_paper, and so on. AMP Robotics’ website for their vision system states that their machines are informed by a data set that contains the “50-billion items” seen by their systems annually (111). Machine vision technologies digitize this vast array of images and process them using neural network algorithms that recognize patterns. The similarity between these digital profiles and physical objects is the basis of recognition.

While the software that powers these machines seeks to classify all the material it encounters, the robots are programmed to retrieve only material that has resale value. Recycling sorting is, at the basic level, a process of separation. Paper is separate from plastic, and both are separated from organic material (like food waste) that can’t be sold and reused. Material recovery is founded on programmed rules about what materials to prioritize—reifying the value system of existing markets that buy and sell recycled materials. Machinex stated, “The type of material a robot is programmed to pick . . . is best determined based on the market prices” (130).

Of course, the classification and selection process isn’t unique to machines. Human sorters are also trained to select materials with market value. However, advanced machine vision technologies tout the ability to capture a previously unmonetized layer of objects. AMP Robotics builds upon the claims of bettering existing technologies, with achievements in “speed and precision” that “open the door to other categories of packaging that have been historically challenging to identify” (101). Here, AI expands the parameters of what can and should be captured in sorting processes. In doing so, vision systems play an active role in defining the regime of recognition through redefining the parameters of vision. Recognition is small, recognition is specific (87). The imaginary of machine vision introduces and then perpetuates a level of operationality that can’t be achieved through current labor practices. Thus, company messaging about AI’s ability to see shapes the conditions of visibility and the conditions of work.

Building the visual field

Many machine manufacturers highlight a reduction of labor cost as a measure of success for their installation of robotic sorting lines (8; 55; 66). For instance, a full-screen illustration on the Waste Robotics website starkly presents a potent visualization of labor displacement. The justification for new equipment investment is made by directly contrasting capital expenditures with the prevailing costs associated with human labor (Figure 2). In order to support their rationale for delegating labor to robots, the companies emphasize the burdensome nature of recycling sorting work. In a case study by AMP Robotics, their vision system customer attests, “Sorting jobs are difficult and often dangerous, and [our facility] prefers the use of a robot, where possible, to a manual sorter” (107). Waste Robotics seconds the perspective: “The work was inhuman and difficult to fulfill since it exposed people to dull, dirty, and dangerous work” (15). It is true that recycling jobs expose humans to harmful waste and dangerous machines (Joseph, 2016). However, emphasis on the dirty and dangerous nature of manual sorting work masks the organizational choices that result in the physical insecurity and economic vulnerability of workers (Chen, 2015). Corporate representations of manual work wield ideological force that tangles workplace conditions with the financial benefits of labor reduction.

Figure 2. Screenshot of Waste Robotics website on July 19th, 2022 (18).

Between complete automation and manual labor lies a possibility of intermediate configurations. For example, in a trade press article, both AMP Robotics and BHS discuss the application of robots for quality control (Smalley, 2020). Traditionally performed by humans, quality control work corrects the mistakes of machines—in this case removing items that should have been filtered out in the optical sorting process. Company executives evidence the abilities of robots to perform this task through referencing a reduction in headcount (e.g. from three sorters to one). While supporting their claim of cost savings, the single remaining sorter—and the existence of quality control in general—is also a subtle acknowledgment of the necessity of human intervention and a necessarily different arrangement than that of total automation. On the BHS website, one of their quality control robots is presented as a “co-bot” (or collaborative robot) that is “designed to work safely alongside people” (201). The quality control co-bot can be integrated into existing processes and infrastructure and is capable of occupying the same space as workers. Because it doesn’t require extensive retrofitting, it is more a technology of replacement than a data-driven reimagining. BHS’ efforts to achieve a low-end foothold in the market reveal both the limits of the technology and discourse.

Machine manufacturers justify AI creation as freeing up humans to do more sophisticated tasks and perform different, less grueling duties. As a client of AMP Robotics argues, “[Our company] does not view a robot as a replacement for a person; rather, a robot is replacing a ‘job’ or a task. In this way, the technology has helped create higher-value positions within the plant” (107). This statement relies upon a perspective in which it is both possible

Optimistic popular discourses on the future of work frame automation as a shifter of jobs, rather than a mere replacer (Kelly, 2012). Corporate visions in which workers move into “higher value” positions gloss over the reality of displacement when automation is introduced. Rather than moving upward, workers who once performed a task are often moved outward, into roles organized around machines that don’t necessarily offer higher pay or better working conditions (Irani, 2019). When automation transfers tasks from humans to machines, new problems always emerge (Gray and Suri, 2019). Though machine manufacturers are striving for total automation, we meet these claims with skepticism. Currently, AI technologies deployed in recycling sorting facilities require a great deal of human labor in the form of quality control and maintenance (Fox et al., 2023). Framing the complex scenarios of labor as simple, causally connected narratives serves to legitimize displacement—while failing to acknowledge that automation, as it currently stands, still requires the presence of human workers. Thus, the social power of machine vision consists not only in how it detects objects, but also in how it arbitrates what work is visible in visions of the industry’s future.

Seeing through data

The speculative value of AI vision technologies is articulated through discourses of future potential and future uncertainty. Our thematic analysis finds that, in these corporate visions, AI technology is not just framed as a source of greater profit for the facilities that monetize sorted objects. Rather, the data created by AI—what we call “datafied seeing”—becomes the central asset. We argue that the value of machine vision lies not only in the monetization of sorted products but also in the transformation of labor and material waste into data, through “real-time” monitoring. This largely speculative vision of how future MRFs could work takes on a persuasive, normative quality that seeks to organize decisions about labor and drive deeper investment in technology.

Monitoring in real time

AI-powered technologies produce data that enable managers to track sorting processes in ways that would be almost impossible for facilities that use hand-sorting or mechanical (non-“smart”) sorting machines. Presently, data are collected in many MRFs manually throughout inbound material inspection, frontline workforce, and outbound logistics (140). In an industry webinar, BHS notes that manual data collection is, at best, retrospective. These evaluations—characterized by BHS as “Hey, did I do good? Hey did I do bad?”—lack the instant indicators offered by robotic sorting systems that can be recalibrated (or even recalibrate themselves) in the moment (9). The complex systems of traditional sorting machines that “do not communicate with each other” leave facility managers to implement data insights, but a constant flow of ever-changing material means that once executed they may no longer be applicable (143). In company discourses, manual processes of monitoring streams are problematized to rationalize the necessity for an instant, continuous, and remote view of sorting behaviors through cameras and sensors that capture visual data.

Monitoring is a hallmark of production processes under capitalism, facilitating managerial control over worker productivity and time efficiency at labor sites (Zuboff, 1988). In corporate visions of the current recycling industry, this monitoring activity is challenged and overwhelmed by scale. As BHS describes, facilities “are larger, more complex, and more automated than ever before” (182). Manufacturers figure the problem as old-fashioned, cumbersome, non-digital forms of labor tracking that create “revenue leakage” and amount to “a series of speed bumps” (143).

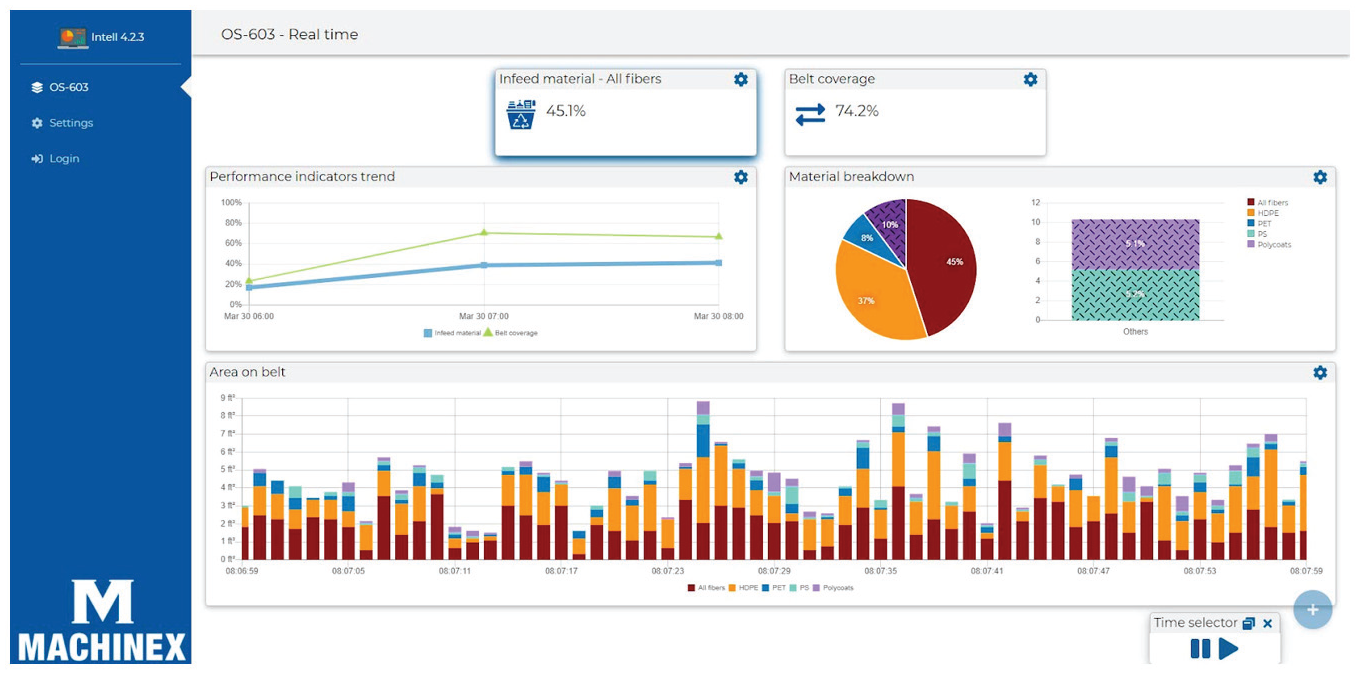

Alternatively, AI offers data in “real-time.” This new temporality of vision is made possible through continuous tracking of the entire recycling process—potentially beginning the moment materials arrive at the facility, with high-resolution cameras capturing images of the contents of individual containers as they are emptied into sorting machines (140). One of the greatest challenges facing MRFs is the enormous variability of waste streams. With instantaneous data metrics and visualized dashboards, facility managers have access to important information for ever-changing updates

Screenshot of the Vision Intell dashboard connected to MACH Vision on July 18th, 2022 (127).

Machine vision dashboards play a productive role in representing the material and symbolic accomplishments of data work. Sensors and cameras capture images of the real world that are fed into automated systems that respond in real time; metrics and visualizations bring this relation between objects and images into a clearer view. Datafication, in much of the existing literature, is often treated as the perpetual cycle of turning

Anticipating the future

Company descriptions of high-tech MRFs—with fully integrated smart technologies that can observe every part of the sorting process—are still largely speculative. While brand-new, investment-firm funded facilities do exist, municipal MRFs are often updated in retrofits where automated machines are added piecemeal. A single AI-powered sorter acts as an initial buy-in for facilities. Optimal performance of the machines requires more data. More data are produced by installing more machines. This formula validates the practices it influences by proposing conditions that facilities must meet to harness the technology’s potential.

Data are of value to facility managers, who can use them to inform organizational decision-making. They are also of value to machine manufacturers, who use them to improve self-learning software in neural network systems. Manufacturers’ business goals must perpetuate an ever-expanding logic of data accumulation to push the limits of AI. The CEO of AMP Robotics states it plainly: “anything that people have trouble distinguishing, the system will have trouble distinguishing, and those require a lot of data to overcome” (Pransky, 2020: 321). The ability of AI to surpass human capabilities (the key value proposition of their product) is reliant on the accumulation of data and thus on spurring implementation.

Machine vision technologies participate in what media theorists van Doorn and Badger (2020) term “dual value production” (p. 1476). In this case, an AI sorting machine installed in an MRF not only generates value through automatically sorting waste but also generates data about waste streams and sorting processes. These data may be just as valuable to the facility as the sorting activity performed by machines. AMP Robotics’ product ventures make this value clear: “We’re branching out into . . . deploying our vision system

The circular logic of anticipatory value functions as a pivotal conduit for greater implementation of AI systems; yet, at the same time, it buries “the last mile” human sorters who feed materials to intelligent machines under layers of digital denial. As Lily Irani (2016) argues, corporate articulations that hide the necessity of on-the-ground workers perform an important financial function. She observes that technology companies require large initial investments to develop software, but then can easily (and cheaply) scale in a way that is impossible for companies that require large amounts of labor. By focusing on the data produced by machine vision technologies, manufacturers offer a secondary digital product to facilities and make themselves attractive to venture capital.

The value of vision in machines is produced through a future-based configuration, in which technology and data are an investment for potential returns. The risk of becoming obsolete, inscribed through the imagined industry revolution, propels organizational decision-makers to participate in this future. For instance, many manufacturing companies emphasize the ability to adapt to “changing markets, emerging consumer trends, and new legislation” (138). “Future-proofing” became a buzzword among these companies to demonstrate that machine vision will allow facilities to align technology, people, and processes to meet the challenges of uncertainty (20; 118; 196) and “deliver system success” (165). Case studies of business success and future forecasting serve as a disciplinary technique, encouraging organizations to endlessly recalibrate their behavior to keep up with industry standards. Narratives of innovation often operate through what Lee Vinsel and Andrew Russell (2020) term “a dialect of perpetual worry” (p. 11). The idea that companies will be left behind as the industry inevitably advances plays upon fear, which can be alleviated by adopting technology, even when that technology is unproven or not yet fully effective. Imperatives for industrial innovation come to serve as their own justification for implementation (Pfotenhauer and Jasanoff, 2017).

Discussion: seeing as knowing? The imaginary of machine vision

The imaginary and power

The corporate visions that construct technology discourses exercise ideological power in the way they structure the realm of epistemology and codify a privileged point of view. Through testimonials from various experts and the wielding of scientific evidence, machine vision is attested to and verified by authoritative adopters (Cunningham, 2020). Defining what counts as knowledge, these discursive patterns construct the dominant frames that guide and regulate behavior. The anticipatory logics embedded in the imaginary are a motivating force that impels subjects to

Corporate visions gain traction and strength through “sustained acts of coalition building,” which give rise to a collectively held imaginary (Jasanoff, 2015a: 4). The imaginative power of machine vision is morally substantiated and normatively grounded in shared, industry-wide discourse. This discourse concurrently encodes human labor as unstable while tactically conforming to the needs of other powerful institutions (buyers, investors, and employers) for control over uncertainties in the future of work. At the convening of the MRF Operations Forum, the sponsoring companies (including BHS, Waste Robotics, AMP Robotics, and Machinex) all shared an ultimate goal of realizing a “fully automated system” (Smalley, 2019). Here we see how the machine vision imaginary is assembled: circulating from insiders to profit-driven sectors, drawing in more participants, and delivering a fantasy of fully automated labor, producing more data to adapt to uncertainties, more intelligence to increase predictability, and more automation to centralize managerial control.

The politics of seeing

All classification algorithms are sorting technologies. This sorting function explains why much of the existing, critical literature on algorithmic recognition analyzes the technology in terms of power. When technologies sort people, whether through facial identification (Introna and Wood, 2004) or automated decision-making in state systems (Eubanks, 2018), their social consequences are clear. But what about technologies that sort material? Our study of machine vision mechanisms reveals that AI-powered technology is always social, always human. Metaphors of eyes and visual understanding are used to replicate the human sensorial abilities needed for continuous labor. These technological descriptions are juxtaposed with metrics of achievement that position existing workers on the lowest rungs of the labor hierarchy. Even in the aspirational isolation of total automation, machine vision is not a natural and objective seer.

Machine vision needs to be read as much more than the cameras and images that enable computational sight. Once an image is captured by cameras, it is AI that defines what is in the field of view. It binds desirable measurements to artifacts and informs robotic action. Certainly, vision begins with the eye, but recognition is a cognitive process in which objects are given meaning. Corporate discourses perform a similar, definitional function. They attempt to bind their articulations to implementation. They invite observers to see scenes of work from the perspective of machines. For the manufacturers in this study, machine vision technology acts as an optical lens both literally and figuratively, figuring a picture of a foreseeable future that shares a likeness with the existing, yet more optimized, world. As Anne Balsamo (2011) argues, much of technology’s transformative power is in the form of reproduction. Technology materializes the cultural perspectives of designers and, in doing so, may replicate a set of social relations.

If “seeing without knowing” is the limitation of looking inside an algorithmic system (Ananny and Crawford, 2018: 973), our research demonstrates the necessity for inquiring into lines of sight: where we see technology from, and what is elicited from a point of view. When understanding is constrained by the public facts and reasoning of industry insiders, it becomes clear that the answers to these questions are not purely technical but always deeply political and economic. Informed by corporate self-presentation, we come to know technology through the dominant frames of insider knowledge that comfortably accommodate their interests. The industry constantly and intentionally invites the public to (in the words of AMP Robotics’ slogan) “See it, Know it” as they do.

From algorithmic arbitration to vision diffraction

Machine vision seemingly communicates through image rather than language, but our case study demonstrates that machine vision is highly articulate. It communicates when speaking in a first-person voice as an ideal employee who never gets sick and tired of their job, or when delivering what it sees as a transparent reporter via digital dashboards and real-time analytics. Machine vision provokes an ironic answer to the question “who is speaking for machines,” for all those non-human, intelligent artifacts made to seem like they function independently, because machines have no voice of “their own.” There are only manufactured alignments of facts and reasons that, through various justifications, give power to those who design technologies with particular ways of seeing, knowing, and acting. The machine speaks only for the interests of its creators and adopters, not for those whom machines displace.

Visions are not just a matter of anchoring meaning to the machine’s ability to see but also about closing off the possibilities of seeing from elsewhere. Jasanoff (2015a) warns of the normalizing, even hegemonic, power of imaginaries. She writes, “Other ways of seeing and reasoning—ways that would make injustice palpable—may not enter anyone’s imagination . . . and hence may never give rise to organized criticism or opposition . . . that could hold power accountable” (p. 14). Indeed, the labor conditions of work surrounding AI are one of the most pressing contemporary concerns for technology ethics (Williams et al., 2022). Through continually strengthening and constraining the frames of everyday discourse in conjunction with business, AI entrenches the existing worship of productivity and the dominant logic of profit.

Importantly, the imaginary is not simply about AI changing human practices, or about humans making use of AI, but about the ideological control of external corporate power that attempts to influence organizational decision-making and structure industries through selling technologies that are not yet fully realized. For critical studies of technology, it implies recognizing communication and the corporate constructedness of public facts as one of the central spaces in which AI is shaped—and the necessity of continuing the work of diffracting articulations of machine vision.

Supplemental Material

sj-docx-1-nms-10.1177_14614448231176765 – Supplemental material for Machine visions: A corporate imaginary of artificial sight

Supplemental material, sj-docx-1-nms-10.1177_14614448231176765 for Machine visions: A corporate imaginary of artificial sight by Wei-Jie Hsiao and Samantha Shorey in New Media & Society

Footnotes

Acknowledgements

The authors thank Joshua B. Barbour and the anonymous reviewers for their feedback on prior drafts, which connected us to broader scholarly conversations in the field. We thank our collaborators on

Correction (July 2023):

The article has been updated to correct minor textual errors since its original publication.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the U.S. National Science Foundation grant #2037261.

Supplemental material

Supplemental material for this article is available online.
