Abstract

In this article, we explore the experiences of commercial content creators who have their content (videos, photos, and images) misused. This article reports on a mixed-methods study consisting of 16 interviews with content creators, nine interviews with practitioners who support sex workers and seven interviews with adult service website (ASW) operators. These qualitative data are supported by 221 responses to a survey from the content creators’ community. We describe how content creators experience blackmail, threats of exposure and recording without knowledge, stalking, harassment, doxing, ‘deepfakes’ and impersonation. We conclude that the online sex work environment may not be as safe as previous research has demonstrated, and that commercial content creators are often ignored by governance and platforms following sex workers’ complaints regarding their content being misused. We reflect on the forthcoming UK Online Safety Bill as compared to the US Stop Enabling Sex Traffickers Act/Fight Online Sex Trafficking Act (SESTA/FOSTA) laws, which have had implications across the globe for digital sex workers. We offer solutions for ASWs to act more responsibly.

Introduction

While the law and research evidence have addressed intimate image abuse since 2015, we uncover an extension of this victimisation among commercial content creators who sell sexualised images and content online. The broader landscape of sexual content and platform politics (Tiidenberg and Van Der Nagel, 2020) is important when reflecting on why commercial content creators are ignored as legitimate workers. Sexual content on websites has received high levels of attention from regulators, children’s safety advocates, feminist lobbyists and researchers. Yet those who practise sexual entrepreneurship are often subject to scrutinising behaviour and restrictive boundaries (Bleakley, 2014), increasing their vulnerability. It is this complex landscape of the victimhood of commercial content creators that is explored here. In this article, we use the term ‘commercial content creator’ to refer to digital sex workers who create sexualised content sold for commercial gain. We use the term ‘Adult Service Websites’ (ASWs) to refer to platforms which are either solely for the advertisement and provision of sexual services or platforms which are forums where sex advertising takes place. The partner in this research project, the Revenge Porn Helpline (RPH), operated by South West Grid for Learning, was established in 2015 alongside the introduction of the offence criminalising the act of sharing private sexual content without consent with intent to cause distress.

Background: pay-to-view content, a pandemic and the rise of ‘intimate image abuse’

Digital technologies have had significant impacts on the nature, organisation and characteristics of sex markets worldwide (Sanders et al., 2018). Indirect sexual services have risen as a dominant form of sex market (Jones, 2016, 2020a). Moreover, various forms of digitally facilitated crimes exist because of technologies (Henry et al., 2023; McGlynn et al., 2017). Paradoxically, while the Internet provides safety, an increasing number of violations are regularly experienced (Campbell et al., 2018). The shift towards advertising, negotiating and providing sexual services online has gained momentum over recent years as new forms of sex markets develop, to the point that sexual service spaces are fluid and transient (Cunningham et al., 2018). One such space includes subscription payment sites where customers pay to follow or view content uploaded by content providers. Furthermore, the COVID-19 pandemic and the resulting government restrictions had a notable impact worldwide, including pausing normal work patterns, the mobility of citizens and social interactions (see Brooks-Gordon et al., 2021; Brouwers and Herrmann, 2020; Murphy and Hackett, 2020; Shareck et al., 2021). This backdrop of both technological change and governments’ pandemic responses meant that subscription sites grew exponentially (BBC News, 2021; Safaee, 2021; Sanchez, 2022), expanding the nature of sexual labour and work (Bernstein, 2019). The media quickly promoted the ‘success’ of subscription platforms for content providers (see BBC News, 2021; Jones, 2020b). Yet, it was clear on the ground that support for victims of misuse remained limited where the context was commercial as opposed to the private misuse of images.

Platform politics: how legislation shapes sexual morality

Platforms are not abstract hardware but are understood through their workings within cultural and political values and actions (Ihlebæk and Sundet, 2021). Meanwhile, sex work is policed by governments and primarily regulated by these digital platforms, which govern online sex work and sexual behaviour, stigmatise sex work and shape the ways we respond to crimes against sex workers (Stegeman, 2021; Stjernfelt and Lauritzen, 2020). Chib et al. (2022) refer to ‘subverted agency’ to understand how digital technologies can be personally empowering for sex workers, while reinforcing normative regimes that marginalise sex workers online. Thus, global online platforms have the power to transform institutional politics (Ihlebæk and Sundet, 2021). Platform studies provide an overall framework for addressing changing power dynamics on a socio-cultural and political level (Gillespie, 2018; Van Dijck et al., 2018).

It is the bigger platforms that hold the power and impact policy changes, while the smaller platforms, ASWs included, must deal with the changing landscape. Researchers report how platforms have disrupted advertising, impacted national media economies while regulating advertising, content creation and platform services (Lobato, 2019; Ots and Krumsvik, 2016). Consequently, content creators struggle to maintain identity and ownership over their content, when working in an environment in which platform power threatens trust and ownership of user data (Ihlebæk and Sundet, 2021).

Legislation in England and Wales currently limits the definition of a ‘private’ image to ‘something not ordinarily seen in public’ (Criminal Justice and Courts Act, 2015). If sexual content is shared or sold publicly, or made publicly accessible, the image is no longer private and falls outside the remit of the RPH. The term ‘intimate image abuse’ captures the range of crimes and issues the Helpline can support, including private sexual content shared or threatened to be shared without consent, and intimate content recorded or taken without knowledge and consent. The Helpline supports anyone over 18 and UK-based who has experienced intimate image abuse. In 2021, the Helpline received 4406 reported cases and worked to remove 27,000 images non-consensually shared online, achieving a 90% removal rate. The COVID-19 pandemic saw a rise in image abuse cases across the Helpline, including new and complex behaviours, such as the trend of commercial content creators having content leaked or shared beyond the original host platform. Sexual content already consensually shared online to platforms would not fall under the legal definition of ‘private’ sexual content and hence would not fall within the remit of the RPH. Cases received by the Helpline from sex workers and commercial content creators grew 142% from 2019 to 2021, showing a growing need and demand for advice and support.

Many have questioned how platforms can be governed and regulated to prevent the negative consequences of platform power. We must also question to what extent platforms are responsible for content that is published on their sites, when that content is misused (Cammaerts and Mansell, 2020; Gillespie, 2018; Van Dijck et al., 2018). While platforms engage in algorithmic and human moderation strategies, increasingly, political powers are intervening in their regulation (Mansell, 2021). In the United Kingdom, upcoming legislative reform entitled ‘The Online Safety Bill’ is underpinned by an understanding that the criminal law applies to online activity in the same way as to offline activity. The Bill highlights how misuse of the Internet can cause long-term harms to users. The scope of the Online Safety Bill (2022) includes duties of care on user-to-user services, illegal content duties and user empowerment duties. The harms contained in the Bill include revenge porn and sexual exploitation (Department for Digital, Culture, Media & Sport [DCMS], 2022). The Bill addresses these harms by requiring online services to take a proactive approach to managing risk of harm, and to ensure that risks to the safety of users are considered as part of product and service design (Ofcom, 2022). However, these points are discussed in the legislation in the context of platform economic power and authority, and not in relation to the content and rights of platform users.

Methods

This research project was conducted in partnership with the RPH to inform how they can improve support and access to appropriate information and services for those who work within the online adult entertainment industry, in particular content creators. This community is often socially excluded from mainstream services due to the stigmatised nature of their role (Blunt and Stardust, 2021). Ensuring content creators are at the forefront of policy and service developments is crucial to decrease the chance of marginalisation and ‘othering’ (Campbell, 2016; O’Neill, 1994). Consequently, a paid advisory group was set up with members of the sex industry community to ensure the project was feasible and appropriate. Below we describe the qualitative interviews with content creators, practitioners supporting sex workers and operators of ASWs, and the survey with the content creation community.

Recruitment and sample

The project lead, the researcher from UoL and the practitioner from RPH all had established working relationships with online ASWs, peer-led organisations and sex workers, and are members of UK forums and practitioner groups working on safety and rights for sex workers. Thus, recruitment and dissemination of the survey were completed within these existing networks. The interview participants were recruited to the survey voluntarily by advertising it via professional organisations and activist groups in the sex work community as well as via key ASWs focusing on UK sex markets. In this article, we chose not to identify the platforms we worked with to protect their anonymity as agreed in the consent process for this and future studies. This is important given the ongoing relationships with hard-to-reach ASWs for research, impact and policy-related activities. While there is no intention to protect the platforms which are not taking responsibility for harms on their websites, there is an ethical responsibility to ensure continued engagement with ASWs to collectively work towards safer online sex work environments and sex workers’ rights. We see the value in critiquing specific ASWs, but we wish to do this directly through a knowledge exchange and dissemination process with the platforms to initiate change. There is an important point to be made about not jeopardising sensitive relationships with gatekeepers who can turn off accessibility to hidden communities.

Survey

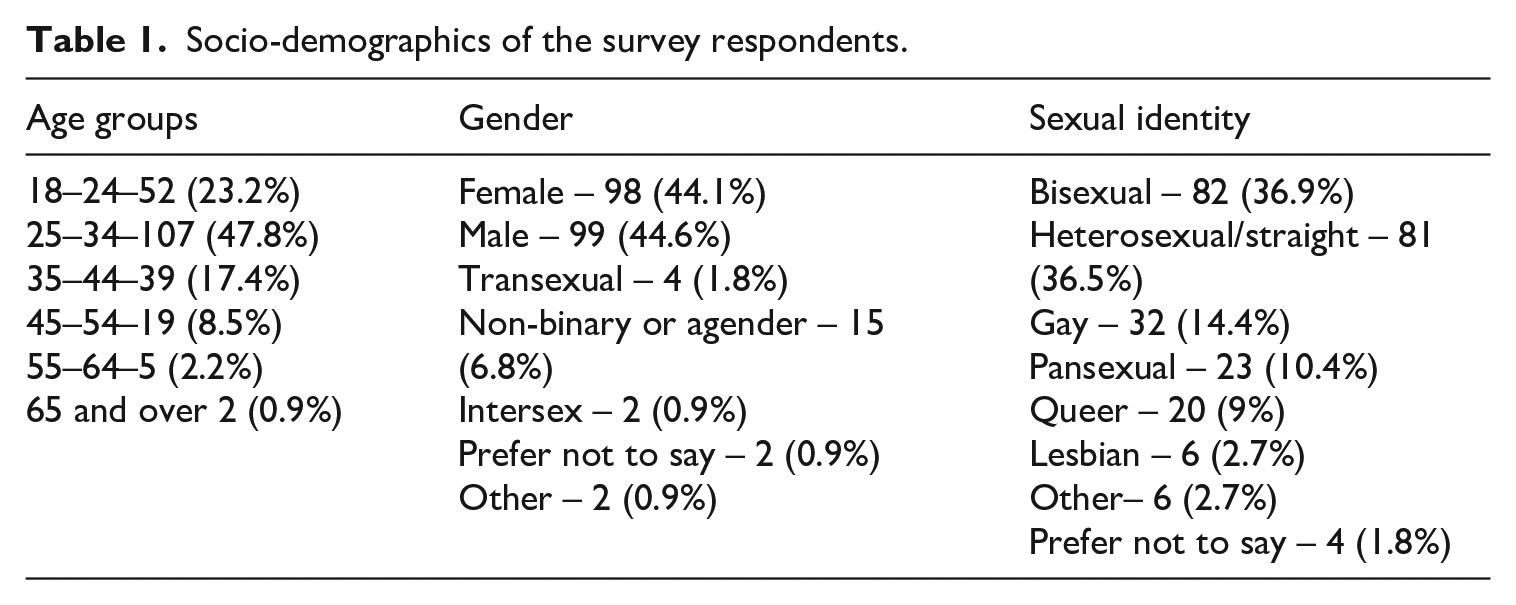

A total of 221 participants answered the survey, which was designed to capture specific information to better inform the RPH, in addition to core demographic information about participants (see Table 1). The survey ran for 11 weeks from mid-June 2021 to September 2021. It was shared across various social media sites, through information pages on online platforms and across industry forums.

Table 1. Socio-demographics of the survey respondents.

The majority of respondents identified as British (63.8%, n = 140), followed by White (22.2%, n = 49), with other ethnicities also represented in smaller numbers.

The survey questions were organised by themes such as type of content created and where shared; safety knowledge; experiences of harm; platform customer services experiences and how this could be improved. Areas such as harms which included threats to out, content shared without permission, blackmail, harassment and doxing were probed to allow the participants to share who perpetrated the crime, and their ability to report. Where safety advice/support was sought was also a core theme. Respondents were also asked what they thought was needed to improve services and their safety. The survey allowed for contact details to be shared by respondents who wanted to be further involved through a paid interview (£25 Amazon voucher).

The interviews

We conducted 16 semi-structured interviews with online content creators, 15 via Zoom and one by email. There was a mix of Internet use for either solely delivering a service such as web-camming, adult content creation (n = 9), or for both service delivery and advertising sexual services to be delivered physically (n = 7). Ages ranged from 22 to 50, with a range of duration of involvement from a year to a decade or more. The interviews asked non-intrusive questions about their experiences while working online, what they felt was missing from platforms they worked on and opinions on improvements. Safeguarding from harm was a priority, and the semi-structured nature of the interview allowed for expansion of the baseline questions and provided participants a safe space to share their views.

Practitioner interviews were conducted with nine individuals from organisations that offered services/support to those involved in the adult industry, three of whom were from peer-led organisations. We also interviewed a police officer who is a full-time Sex Work Liaison Officer (SWLO). Participants were asked about what services/support they offered to people within the sex industry, history of online harms reports, their level of knowledge regarding the online industry and content creation, and where they felt more knowledge was needed.

Seven interviews were conducted with staff from six ASW platforms, including three large international platforms utilised frequently by the UK sex market, hosting sexual services via a subscription and/or tipping process. Other platforms included a small peer-led UK platform, relatively new to the sex economy; a large European platform which focused on selling sexual content via a streaming service; and a US peer-led adult performer service, which offered advocacy and legal advice to adult film performers. We asked about their platform operation, member support processes, protection protocols from online harms, platform improvement plans and any knowledge gaps. Our project built in a feedback loop so information from participants will be anonymously fed back to the ASWs to assist the improvement of their sites and support. The qualitative data were analysed using thematic analysis (Maguire and Delahunt, 2017). Five main themes were identified: incidents of online image abuse and its impact, reporting to ASWs and their response (including other reporting routes), motivations for involvement, current report/support routes and how safety for online content creators can be improved. In this article, we report on the first two.

Findings

Although the study’s aim was to explore the concept of ‘image-based sexual abuse’ (McGlynn and Rackley, 2017: 1) experienced by adult content creators and how they feel responses to such crimes could be improved, participants also shared a more personal narrative of their daily lives, sharing why they were involved in this rapidly evolving platform-based sex work. To understand participant experiences, we adopted Jones’ (2016: 228) ‘polymorphous paradigm’, recognising the fluidity of experiences, both the good and the bad, the pleasure and the danger. Therefore, we share participants’ experiences of online image abuse and how they negotiated the impact and aftermath, their motivations for involvement with erotic labour, the benefits to them, and what they feel adult sites need to change to develop accountability, acceptance and improve safety measures.

Misuse of content in the commercial sexualised context and its impact

Although creating and selling sexual services online can carry fewer risks than face-to-face sexual services (Cunningham et al., 2018; Jones, 2020a), the rise of the Internet as a commercial platform for erotic labour has seen an increase in certain harms. One of these is ‘Image-Based Sexual Abuse’, which sits along a continuum of sexual or sexualised abusive behaviours (McGlynn et al., 2017). Previously, this discussion has largely centred around the sharing of private images resulting in abuse rather than commercial adult content. This relatively new form of risk has not been considered beyond activist/support organisations such as the RPH and National Ugly Mugs (NUM, 2021). As often seen within the digital abuse context (McGlynn et al., 2017), interview participants reported multiple experiences of online harms, expressing frustration regarding potential loss of revenue and control of their content. There was a strong feeling of being vulnerable to potential online abuse by unknown perpetrators:

You can feel very vulnerable because you’re just putting yourself out there . . . It’s the abuse of it, if you haven’t got thick skin and if you’re really shy. (Sharon, 30s, part-time cammer)

With the digital expansion of sex markets, and the minimal levels of online anonymity afforded to users, the ability to take illicit screenshots or recordings of creators’ content and post them online anonymously is increasingly commonplace (Sanchez, 2022; Stroud, 2014). Some participants describe how they fell victim to a platform rather than individuals stealing their content:

So there are three websites, of which I’m aware, that have hijacked my pictures and my phone number and I use the word ‘hijack’ some people say they ‘scrape’ our profiles from places where they’ve uploaded them . . . without our consent. . . ‘The way that I’ve been finding out about those websites is by having punters call me and tell me that they’ve seen me on those websites’. (Poppy, 30s, works online and full-service face-to-face).

Unscrupulous copying of images by platforms was suspected to be much more common than originally expected and non-consensual sharing was not only about rogue behaviour from purchasers.

These videos are not videos I’ve made, they have somebody recording me whilst I’m in these private shows and I don’t know whether it’s [adult platform] themselves or whether it’s just some bloke with a camera. (Sophie, 40s, part-time cammer and sells content online)

This anonymity of digital perpetrators was evidenced within our survey, with 65.2% of respondents reporting that the crimes against them were perpetrated by strangers. For others, like Carly, the experience was closer to home: she was ‘outed’ to her family and friends on a social media platform by her partner’s ex, experiencing the stigma and ‘whorephobia’ that Weitzer (2018) describes as ‘omnipresent in sexual commerce’. Carly lost her mainstream employment because the videos were also sent to her employers:

I get a phone call from a friend back home saying, ‘[name] these videos are getting sent around everybody back home. You’re in every group chat, everyone’s talking about it’, and I’m talking thousands of people, not just a couple of people, it was bad. . .They [employers] got sent the videos, so they then didn’t speak to me for a few weeks . . . they ostracised me . . . they tried to basically make it out like I was going to ruin their business, so they wanted nothing to do with me. (Carly, 20s, part-time cammer and commercial content creator)

This risk to people’s livelihoods is ever-present especially for those who blend their sex work with mainstream ‘square’ employment (Bowen, 2021). Mark described how his office colleagues used his online work against him:

They took it upon themselves one day to catfish me on Grindr. They pretended to be a man, they made a Grindr account and looked me up. They got me to share my photos. They asked if I had a platform that I posted any of my content to. They sent me lots of photos back of this man that turned out not be true obviously, it was them pretending. So they got some of my explicit photos in return, which they then took into the office and shared around the entire department, and showed them to all of my colleagues, showed them to my bosses, showed them to my line manager, my line manager’s line manager, and the head of my department. (Mark, 20s, works part-time online, commercial content creator)

Such ‘othering’ of people within the sex industry, often expressed through verbal abuse and intimidation to ‘put them in their place’ (Campbell, 2014: 58) has been researched in the context of marginalised communities and hate crime (Armstrong, 2019; Campbell, 2014; Campbell and Sanders, 2021). We have established the stigma street-based sex workers experience (Armstrong, 2019) but these targeted behaviours have moved online and are acutely felt by individuals with a profound impact on the victims’ lives:

I think I suffered PTSD from it. That month, because it was two weeks of it all going on back home and then two weeks in succession of it all going on up here, and my whole life just kind of flipped. And it really shook me to the core. (Carly, 20s, part-time cammer and commercial content creator)

This exposure of their status as sex workers can have catastrophic effects on their daily lives, while perpetrators are allowed to remain anonymous, holding all the power but facing no consequences. For Sophie, this reached beyond her own personal consequences due to the dual roles she lived and how her ‘outing’ could have ramifications for her mainstream employment. For Carly, it emphasised how people working in the sex industry are treated by wider society, as the perpetrator in her case was given more consideration by police because of how a potential court case could impact the perpetrator’s employment:

I get a phone call at 11.30 in the middle of the night, I was fast asleep, by a police officer I’d never spoken to before. ‘Are you sure you want to go ahead with this?’. . . And he was like, ‘But the thing is, you’re going to ruin her [the perpetrator’s] life’. And he was laying it on, and I was half asleep, it was in the middle of the night. What a time to ring somebody. So, he kind of pressured me into dropping it, I felt. So, I dropped it. (Carly, 20s, part-time cammer and commercial content creator)

Compartmentalising victims of crime as blameworthy or deserving is discussed within victimology discourse (Black et al., 2019), and is something sex workers have historically had to fight against (see Bowen et al., 2021). This new era of digital crime and its inadequate social justice response (Bond and Tyrrell, 2021; McGlynn, Johnson and Rackley, 2021; Zeniou, 2021) presents similar difficulties regarding culpability for survivors, but particularly for those in the sex industry. This is highlighted by Claire in response to the omission of sex workers from the UK Online Safety Bill:

We never really fitted in exactly with the other instances or forms of image abuse. It’s still seen as different somehow, because like what you said, it’s seen as a public image, and that maybe even it’s a copyright infringement or something like that. But it’s always been an uncomfortable fit trying to put sex workers into the narrative around image abuse. You’re not seen as victims in the same way, even though the potential consequences for you can be more dire than would be for other people. (Claire, 20s, works both online and full-service face to face)

Eikren and Ingram-Waters (2021) discuss the blame that victims of revenge porn receive due to the perception of bringing it on themselves and getting ‘what they deserve’ because of sexual agency and empowerment. Although such blame is often discussed within feminist discourse on female victimisation, Mark’s experience highlights that it is not restricted by gender:

People were telling me [I] brought this upon [myself] because if you didn’t want this to happen you shouldn’t have ever put anything up on the internet in the first place. You were asking for it. You deserve this kind of thing going on. You deserve to lose your job because blah-blah-blah. The more people started to pile that on, the more I did for a moment start to listen to them and I started to agree with them. (Mark, 20s, works part-time online, commercial content creator)

Interviewees commented on the constant threats of outing from strangers, work colleagues and customers, who used their anonymity to send verbal slurs and who assumed that those working in the sex industry would never report to the police. While reporting harms to the police is rare for sex workers (Bowen et al., 2021), our study explored how content creators used website platforms as a possible avenue of assistance and rebuttal when their content was misused.

Reporting misuses to ASWs

This section explores the responses participants received when reporting experiences of commercial image misuse to ASWs. Interviewees discussed the complexity of trying to have their non-consensually shared content removed, the lack of accountability from sites and the feeling of disempowerment due to the protracted process and dismissive nature of responses: ‘It’s this feeling of David and Goliath, but Goliath isn’t really listening, and David has to just guess what Goliath wants’ (Johnny, 30s, works full-time online, commercial content creator).

Although many survey respondents identified that they were able to report content misuse to the relevant platform (40.7%, n = 68), responses were slow, creating additional barriers and delays: ‘the machine takes a long time to respond, and sometimes it’s literally been months’ (Johnny). Inconsistent approaches and a perceived lack of understanding and accountability by platforms were overriding findings:

So I kept, again, requesting them to remove my phone number and my pictures, I was doing that two or three times a week, this is after they said they rejected the DMCA (see below), they kept ignoring me and then eventually, they once again told me to complete a DMCA and they said that they would say the same thing to any lawyer that I might instruct to pursue the matter on my behalf! (Poppy, 30s, works online and full-service face-to-face)

When discussed with ASW operators, they claimed to have empathy towards their members’ situations especially from staff with lived experience of sexual labour: ‘These are big battles and of course I understand as a creator the emotional and directly financial damage of piracy’ (Platform 1, DMCA team). Despite this apparent understanding of the situation most interviewees found the whole process challenging, having to ‘Jump through hoops, but then the hoops are changed’ (Rich, 20s, works part-time online, commercial content creator). Johnny discussed his exasperation when attempting to start the process of removal of misused images using the recommended process of a DMCA (Digital Millennium Copyright Act) notice (RPH, 2022):

Once or twice I’ve found stuff on Tube sites, and I’ve gone to the DCM, whatever it’s called, the ‘take-down’. It does take time, and one of the things that is difficult is they have several steps that you have to jump through to prove that it’s yours. . . . some of the steps . . . They feel bureaucratic and difficult. Instead of just being able to say, ‘This is me, this is my site, go here, see where it should be’, you have to then upload a load of stuff. (Johnny, 30s, works full-time online, commercial content creator)

Although there were cases with positive outcomes, this was not a uniform response, with the majority reporting sluggish procedures with no clear route to specified help but rather a generalised ‘one-size-fits-all’ service. This lack of individualised response has long been highlighted by researchers and sex workers (Cunningham et al., 2018; Nelson et al., 2022; Sanders et al., 2018). This generalisation is something Rich reflects on, in addition to the anxiety of where his information was ending up after sending it into what he felt was an unknown ether:

I found it incredibly difficult to find anything, only a few support categories to choose from and my request was quite specific? It shouldn’t be this hard to get an email address, that was all I wanted but filling out those forms and never receiving a response, I don’t know where it’s going? (Rich, 20s, works online part-time, commercial content creator)

This was reiterated by Sam, who also highlights the generic nature of the responses he received to his support queries:

‘The wait times are often upwards of two weeks on both [Platform 2] and [Platform 1], and they’ll tend to respond with unhelpful, generic information that I already found by searching for it’. . . ‘We see straight through their copy/pasted responses to our tickets and how fed up they must be. We realise they go through thousands of tickets per day, but as with every support channel it’s important to maintain a relationship with us and not snub our concerns away without at least reading them and addressing them properly’. (Sam, 20s, works online part-time, commercial content creator)

Participants considered such relationships with the platforms as problematic and felt dismissed by ASWs who are making major profits by taking high percentages from providers:

I don’t think they give a f**k outside of where their money comes from. They’ve got the same inbuilt misogyny as the police and everybody else has . . . I don’t think they value sex workers. (Marie, 40s, works both online and full-service face to face)

Such challenging processes are leaving commercial content creators feeling increasingly isolated and de-platformed (Blunt and Stardust, 2021). Furthermore, participants highlighted an increase in surveillance of their accounts by platforms alongside inconsistent rules which often led to their accounts being questioned, suspended or completely shut down (Grant, 2021):

there was one email that they said, ‘We’ve had multiple reports of you using this word’. This was [Platform 1]. And the way you read it is that multiple people are complaining and reporting you. And I suddenly realised that’s not what it is. They’ve done a text search, and it’s come up as bumping this number of times I’ve used this word or that word and they contact me saying, ‘You’ve done this, and you mustn’t do this, and you might be chucked off’. (Johnny, 30s, works full-time online, commercial content creator).

This issue of increased scrutiny or ‘algorithmic profiling’ which can effectively wipe out members’ accounts is disproportionately affecting those who work online due to its inaccurate contextualising of sexual content. The constant surveillance is resulting in commercial content creators feeling like collateral damage (Blunt and Stardust, 2021; Myers West, 2018). Johnny reflects on how this has led to rule changes, yet the creators are the last to know:

It’s just that, ‘You know what? We’ll use you, we’ll use your content, we’ll use your bodies, and then throw you away when we’re done with you . . .’ It’s just that feeling of, ‘You know what, we’re going to ignore you totally, completely keep you in the cold, use you for as long as we can squeeze as much out of you, and then just drop you’. And that feeling is very nasty. (Johnny, 30s, works full-time online, commercial content creator).

This ever-changing regulation through localised terms and conditions of platforms (Stegeman, 2021) and inconsistent marketing parameters of sexualised services is seen as further discrimination against an already marginalised community and unreflective of the broad range of virtual sexual services that are now demanded by, and offered to, an increasingly digitalised society (Cunningham et al., 2018; Cowen and Colosi, 2020). Participants explained how such inconsistent terms and conditions led to not knowing what they could create or what was banned. This algorithmic surveillance was an additional risk of further control of their content by the platform as well as the possible misuses from customers and strangers due to perceived copyright restrictions from the sites themselves. Poppy discusses her anxiety about approaching the site she worked on for help due to the threat of losing her account and feeling unclear about procedures:

Once out of the blue, [Platform 3] actually closed my profile down, at that point I wasn’t blogging so it wasn’t anything to do with that, they did restore it quite rapidly but what I heard when I first went on [Platform 3] is that if they find that your image is on other websites, they might close down your profile with them as well, so that’s another reason why I haven’t raised the matter of the hijacking of my profile by other websites. (Poppy, 30s, works online and full-service face-to-face)

This question of content ownership by the tech giants who act as gatekeepers over their members’ digital content, including regulation and control (Cowen and Colosi, 2020; Passonen, 2019), is seen as another barrier to creators’ inclusion as recognised entrepreneurs. Sam reflects on this lack of autonomy and the impact on his ability to rely on his online work as a main income stream due to the precarious nature of the working conditions:

Unlike physical, tangible forms of art, online content isn’t exactly owned by us, but rather we rent a corner of some larger website and they take care of all the administration. This means they can shut us down and kill our income for whatever reason at a whim, and it makes having a reliance on it for income inconsistent at best. (Sam, 20s, works online part-time, commercial content creator)

This complex nexus that exists between platform, creator and subscriber with the latter considered as often taking precedence by ASWs only exacerbates the ‘online othering’, disposability of workers and lack of control that is evidenced within the wider sex industry (Blunt and Stardust, 2021; Harmer and Lumsden, 2019; Sanders, 2016). Rich discusses how he decided to move platforms due to his content being stolen, but rather than being able to decide when and how this was done, he felt the platform gave more consideration to the impact on his subscribers:

‘[Platform 1] they won’t let you delete your account if anyone is still subscribed’. . . ‘I feel when you are creating that type of content you are putting yourself in a fairly vulnerable feeling situation and it should be within the rights of the creator to be able to have the content removed whenever because it’s my content’. . . ‘they seem to give more weight to subscribers than content creators, but [platform 1] can remove a video whenever they feel like it of yours if they feel it goes against one of their rules’. (Rich, 20s, works online part-time, commercial content creator)

Despite this, Rich considered himself privileged due to having an alternative income and recognised how his fellow content creators were vulnerable. Several interviewees pointed to the UK criminalised framework of governance as a structural issue that compounds their discrimination from tech companies:

I think legitimisation of it [the industry] would be so beneficial to everyone and I’m fortunate because I have another job but if I was having to rely on just content creation for my income and then facing any of the things we’ve been talking about I could see how that would very quickly becoming that was very stressful as you’d need to continue working to pay the bills but having to deal with how difficult it is to get help with anything at all!! (Rich, 20s, works online part-time, commercial content creator)

Participants felt that ASWs needed to be more accountable for their safety and protection and felt that robust, effective support routes were lacking alongside the need to recognise sex work as legitimate labour (Rand, 2019). This was reflected in their feelings of disposability by platforms and society due to the availability of eager replacements (Rand, 2019; Stardust, 2020). To explore the role of ASWs in regulating online content, we now turn to the opinions of ASWs and what they feel their services offer commercial content creators.

ASWs: their role in prevention and protection

The larger ASW operators we spoke to protested vehemently when questioned regarding their perceived lack of accountability about image abuse occurring via their platforms, viewing themselves as easy targets for criticism over the smaller more hidden sites:

I can tell you categorically that it’s simply not the case. A lot of the information in the press is obviously blown out of all proportion. Trust and safety is an issue, and sharing content illegally, and it’s not the sites like us . . . We’re available, you’ve got us on a call, we’re scrutinised by everyone because we’re a well-known brand. But it’s the sites that aren’t that you can’t find where they’re hosted, you don’t know who runs them, you could never speak to them . . . They tend to be the biggest issue. (Management Team – Platform 2, large international ASW)

It’s like a silent service for us, because a lot of people want to complain about the piracy, but they don’t actually know what we’re doing proactively to help to protect their content. (Staff Member – Platform 1, large international ASW)

Such censure is increasingly seen in sensationalised media reports which criticise how ASWs are run and managed, considering performers as a commodity to their ever-increasing empire, leaving content creators feeling like collateral damage in the capitalist machine (Stardust et al., 2020). Participating ASWs refuted this, explaining how they ensure members are protected with support centres, DMCA takedown services, fingerprinting and watermarking content to ensure tracking, ‘hashing’ technology to block content being uploaded and layers of security that should deter perpetrators from content misuse.

However, they spoke of the difficulty filtering the non-consensual transgressions due to the sheer volume of traffic and how individual appeals for help might be overlooked against more obvious contraventions:

Every day is a battle that’s not going to be won. The incoming is greater than the outgoing. So my job really is to balance the response time of complaints, and focus our proactive efforts on hitting the biggest players, the most prolific target websites . . ., ‘it’s whack a mole’. . . ‘the piracy networks’, the community of bad players who are actively leaking, crowdfunding their efforts to pirate whole archives of content creators . . . in an organised fashion. (Staff Member – Platform 1, large international ASW)

Smaller sites report being able to offer a successful, more personalised service to their members:

‘So my cousin was on [one of the big platforms] and was really dissatisfied with the customer service, how the website operated, it was super slow and they ripped you off massively with conversion rates even though they’re a UK based company everything’s in dollars. They would kick people off with no warning, wouldn’t reply to emails’ . . . ‘That’s probably one of the biggest things that people do say about us is that we actually care . . . [owner] the main thing she wanted to do was make sure there was actual people who understand and able to reply to the emails’. (Staff Member – Platform 6, small independent ASW)

This more personalised customer service was seen as crucial by creators. This was accepted by the management team of a large international ASW, who recognised there was room for improvement but spoke of the need to exercise caution:

It’s a bit of a difficult one, most of our Support Team [are from overseas] and to be honest with you they do come across like they’re bloody robots sometimes, their responses are quite automated, and it is a human being that you’re talking to, but they do have quite a kind of scripted response . . . So it’s a fair comment and it’s something that we do need to work on. (Management Team – Platform 1, large international ASW)

Another area of improvement highlighted was the verification process for creators and subscribers, which some interviewees felt would help prevent image abuse:

All content uploaded must have consent, model releases and ID’s, no content should be able to be uploaded online without all this info, Joe public should not be able to upload stolen content without providing their ID and address also, which should be handed over to the police if they upload content they don’t own. No one would upload revenge porn if they had to do it under their real name. (Poppy, 30s, works online and full-service face-to-face)

One of the larger sites reported already starting a similar process to this alongside additional safety procedures with positive results:

We’ve had a lot of positive feedback from our Trusted Flagger programme since we launched it. Although it’s hardly ever used because the content’s just not there for people to take down in all honesty. Especially since when we launched the ID requirement for uploaders. It’s a huge deterrent for anyone uploading anything that shouldn’t be there. (Management Team – Platform 2, large international ASW)

It was recognised by all that inconsistent terms and conditions were a problem with a need for a simplified version, or common language. This research was seen as beneficial to identify where improvements were needed and also to hear customer feedback:

any advice you can give us on how we could potentially do things better is always taken on-board . . . We only want to help content creators, it can only be a good thing. (Management Team – Platform 2, large international ASW)

Although, as shown, platforms stated that they had robust systems in place to ensure the protection of their members, the lack of appropriate responses that commercial content creators felt they were offered following abuse resulted in them looking further afield to mainstream support with no guarantee of an appropriate, informed response.

Discussion: the side-lining of commercial content creators

In our conclusion, we want to address three key issues which these findings point to. First, the safer environment of online sex markets is now being threatened by the lack of attention to how commercial content creators are affected by image abuse. Second, there appears to be a disjuncture between the regulation of sexual content and further outlawing of image abuse yet a distinct lack of attention to commercial content creators. Third, we suggest some practical ways in which ASWs can begin to act more responsibly towards the generators of content, from whom platforms make abundant profits.

The limits of safety in the online sex work world

Online spaces have traditionally been heralded as spaces for the liberation of sexual identities, sexual exploration and practices (McNair, 2013). Initially with minimal checks, the adult sex entertainment economy has thrived globally and become economically mainstreamed and increasingly socially acceptable as norms around sexuality become more liberal in the West (Brents and Sanders, 2010). The shift to online sex work, pre-pandemic, was extensive and since 2020 the online sex work world has become the dominant place where sexual services are both advertised/negotiated and also purchased through streaming and live webcam services. The research evidence stacks heavily in favour of online sex work creating a safer working environment either for checking prospective clients or working entirely remotely (Campbell et al., 2019). However, this is becoming less defensible. Our findings demonstrate that many online content creators have their images misused with limited come-back or protection from either the website platforms or the law. The current approach to both regulation and legal frameworks around the governance of platforms seeks less to offer redress to individuals than to hold the social media giants accountable for their content. This effectively leaves the commercial content creators out in the cold as they are not protected by any party.

The landscape of platform governance is key to placing these current findings in the context of broader global processes which explain how and why sexual content is regulated. As Gorwa (2019) notes, our understanding of how platform governance is being constructed from a range of actors from government, users, businesses, advertisers and other political actors is only just emerging. Where sexual content is concerned, there is a complex play between liberal attitudes around free speech and capitalist principles of entrepreneurialism with sexual politics which homes in on sex as risk. There appears to be a significant contradiction in how commercial content is treated within the broader sexual politics of online content. On one hand regulation is increasing, with greater scrutiny on what is acceptable sexual content. Following the lead of US policy, which is influencing global trends, at the time of writing the Online Safety Bill is proceeding through the UK parliament with the aim of making social media and website platforms responsible for content so that adults and children are protected from online harms. At a final stage, there has been a clause (16 of section 7 priority offences) which intends to introduce the offence of ‘inciting or controlling prostitution for gain’ charged at website platforms. This would have the effect of making platforms responsible for promoting harmful content which has been poorly defined thus far.

Thus, when it comes to content creation, Fight Online Sex Trafficking Act–Stop Enabling Sex Traffickers Act (FOSTA–SESTA) plays a role in its regulation on a global scale. FOSTA–SESTA tackled the ‘facilitation’ of sex work by platforms by outlawing sex work advertisements. Thus, if platforms host advertisements for sex workers’ services they can be prosecuted (Blunt et al., 2020; Campbell et al., 2019). This strict regulation of sexually explicit content led to the de-platforming and proactive shadow-banning of sex workers online, as sex workers reported losing access to accounts, advertising platforms, payment processes and support networks (Blunt et al., 2020; Blunt and Wolf, 2020; Jones, 2020a). These consequences demonstrate how content creators are at the whim of political and platform powers (Ihlebæk and Sundet, 2021). There are concerns from sex worker organisations such as the English Collective of Prostitutes (ECP, 2022) who are fearful that the US version of FOSTA–SESTA is being introduced via the backdoor and that advertising sexual services is being construed as a ‘harm’. The offence, if passed, could ultimately criminalise sexual advertising online.

On the other hand, there are further attempts to use legal frameworks to protect victims of image abuse. Announced in November 2022 (Ministry of Justice, 2022), the misuse of private images via Information and Communication Technologies (ICTs) is soon to become a crime in the United Kingdom (along with ‘downblousing’ and ‘deepfakes’ where sexual images are altered to appear like someone else). The move to outlawing ‘deepfakes’ suggests the seriousness of both the harm non-consensual sharing causes and the reach of the government to send a clear message (largely to male perpetrators) that this behaviour is unacceptable and will not be tolerated. Yet, commercial content creation is not addressed.

Why are commercial content creators ignored?

While the impact of image abuse is noted for the general population (McGlynn et al., 2021), the damaging nature of non-consensual sharing of commercial content is rarely highlighted. Moreover, platform politics is dictated by cultural norms about sex and sexual identity (Ruberg, 2021). The sexual content creator works within a system that supposedly encompasses a broad range of prosocial attitudes that relate to sexual behaviours. Yet despite this, content creators’ actions are not viewed as self-care or as autonomous work but are subject to moderation and regulation (Stegeman, 2021).

This is underpinned by the purposeful lack of acknowledgement that sex work is part of many online platforms. Stegeman (2021: 1) notes that large webcam platforms: ‘for legal and financial reasons, reject the idea of camming as sexually explicit or as (sex) work’. Thus, biases against sexuality and sex work underlie most regulatory policies. The moderation of particular types of sexual content shapes what sexual identities are visible and normalised (Stegeman, 2021). The dominant online platforms thus hold the power to moderate what is (and is not) legitimate sexual content (Ihlebæk and Sundet, 2021; Ruberg, 2021). Thus, content creators are often ousted, marginalised and shadow-banned across platforms, limiting sexual expression, autonomy and their labour rights.

This points to a broader fear from website platform operators of the implications from laws such as FOSTA–SESTA. The fear of breaching legal frameworks has meant that strict regulation of sexual content has harmed sex workers. Since the changes, many ASWs continue to downplay the fact that certain types of content creation are legitimate work (Stegeman, 2021). Underlying motivations are largely to avoid prosecution and to appeal to payment processors who discriminate against sex workers (Blunt and Wolf, 2020). Furthermore, framing workers as independent contractors in the gig economy allows platforms to waive labour laws and responsibilities (Graham et al., 2017). Thus, platforms hold no responsibility to sex workers, but reinforce sexual norms and biases while presenting an environment in which the abuse of sex workers is legitimate (Stegeman, 2021). Despite this, ASWs continue to profit from sex work while undermining and avoiding true representation and regulation of sex work as work. Thus, too, are violations of their content and privacy beholden to the platform’s regulations of ownership and liability. The US legal framework and approach to sex work advertising has led the global trend of confronting commercial sex in a way that is opposite to the general trends of free speech on social media. Yet at the same time many UK- and European-focused ASWs are flourishing, by enabling sexualised content to proliferate but providing little to no protections for workers.

Acting responsibly: how can ASWs improve their service to content providers?

This period in which regulation of the online sex industry is taking place is optimal to think about how website platforms operate, to whom they are accountable and how sexual labourers can be protected. The monopoly of the industry by the larger, more well-known populous sites (Ihlebæk and Sundet, 2021; Rand, 2019) is problematic for both the survival of smaller, more ethically driven platforms and for the erotic entrepreneur to feel considered (Sanchez, 2022). Monopolisation is problematic for individuals and there is limited good practice from platforms in terms of responsibilities to reduce the misuse of content, or where it happens, support in the removal and implications for individuals.

The governance of platforms is contested between regulation, platform self-governance and user self-regulation (Gorwa, 2019). Platform studies provide an overall framework for addressing changing power dynamics on a societal, cultural and political level, and perhaps hold the answer for increasing responsibility of ASWs (Ihlebæk and Sundet, 2021). Meanwhile, redressing the balance between organisations that are well established and benefit from the current political and economic landscape and latterly, those with less privilege who have little influence over the landscape is required (Ihlebæk and Sundet, 2021). In the case of this article, the context through which we must understand these roles relates to who sets the guidelines for content creation and ownership above the smaller stakeholders who, including content creators, feel the effects of a market which may not necessarily regulate appropriately (Sundet et al., 2020).

Chib et al. (2022) suggest that to transform unequal socio-structural conditions and attitudes, we must attend to contextual intersections of marginalisation and provide a framework for inclusion. Sex workers face vulnerability due in large part to structural marginalisation. Importantly, those who work via platforms, and ultimately make profit for them, are less inclined to reach out to canvass how to improve platforms because they are concerned about the negative consequences of speaking out. Digital sex workers often have their accounts suspended, finances frozen, content wiped (see Stegeman, 2021). Content creators have much to lose from challenging platforms especially when the end goal is usually to make cash quickly. The value of a ‘bottom up’ approach (Strohmayer et al., 2017), whereby potential service users are consulted regarding their needs prior to service development, is twofold: to ensure relevance, but significantly to highlight sex workers’ rights as human rights, and the right to work and live safely (Strohmayer et al., 2017). Our research was driven by such principles in partnership with the RPH who identified the need for a more tailored service amid the rise of commercial content creators and the proliferation of harms they could potentially face (NUM, 2021). The evidence from this project has informed the development of a new service at the RPH and tailored information for this hidden group of sexual labourers who are a growing economy of young adults working in sexual content creation.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author(s) received funding from an anonymous source for this research.