Abstract

Online targeted advertising leverages an information asymmetry between the advertiser and the recipient. Policymakers in the European Union and the United States aim to decrease this asymmetry by requiring transparency information alongside political advertisements, in the hope of activating citizens’ persuasion knowledge. However, the proposed regulations all present different directions with regard to the required content of transparency information. Consequently, not all proposed interventions will be (equally) effective. Moreover, there is a chance that transparency information has additional consequences, such as increasing privacy concerns or decreasing advertising effectiveness. Using an online experiment (N = 1331), this study addresses these challenges and finds that two regulatory interventions (the Digital Services Act, DSA, and the Honest Ads Act, HAA) increase persuasion knowledge, while the chance of raising privacy concerns or lowering advertisement effectiveness is present but slim. Results suggest transparency information interventions have some promise, but at the same time underline the limitations of user-facing transparency interventions.

Political campaigns rely increasingly on online advertisements to communicate with citizens. Where traditional advertisements are the same for everyone and are communicated to a broad audience, online advertisements are often tailored and targeted to specific segments of society, on the basis of data collection and analysis (Zuiderveen Borgesius et al., 2018). This produces an information asymmetry in which advertisers target citizens on the basis of personal data, while the targeted citizen is unaware of the data on which the targeting is based (if they are aware of being targeted at all; Dobber et al., 2018). In other words, “those holding the data know a lot about individuals while people don’t know what the data practitioners know about them” (Tufekci, 2014: 2).

The information asymmetry is thought to be problematic for citizens, because the asymmetry limits citizens’ ability to evaluate political advertising, which negatively affects citizens’ capacity to take informed and autonomous decisions (Helberger et al., 2021; Susser et al., 2019; Zuiderveen Borgesius et al., 2018). This asymmetry was reflected in the Cambridge Analytica case, when data of millions of unwitting Facebook users were collected and used to classify their personality traits (Confessore, 2018). Cambridge Analytica then claimed to have used this information to send people political advertisements tailored to their personality. This covert effort to persuade people has been labeled manipulative (Susser et al., 2019), and can be seen as a turning point that amplified calls for more transparency and measures to safeguard public values around the products and operations of big tech companies such as Facebook (Brown, 2020; Van Dijck et al., 2018).

Lawmakers aim to decrease the information asymmetry by proposing regulations to ensure the inclusion of transparency information alongside online political advertisements (e.g. Digital Services Act, 2020; European General Data Protection Regulation, 2016; US Honest Ads Act, 2019). Transparency information in this study means any user-facing information about the advertiser and/or the targeting criteria used by the advertiser that accompanies online political advertisements. Providing advertising transparency should ensure that the “recipients understand how information is prioritized for them” (Digital Services Act, 2020: 33). In other words, providing citizens with transparency information is expected to increase citizens’ persuasion knowledge.

According to the persuasion knowledge model (Friestad and Wright, 1994), people “develop personal knowledge about the tactics used in (. . .) persuasion attempts” (p. 1). Persuasion knowledge helps people “identify how, when, and why marketers try to influence them” (Friestad and Wright, 1994: 1). People use their knowledge of persuasive techniques to make more informed decisions. Realizing that a (political) advertiser is trying to persuade them would enable the recipient to resist that persuasion attempt (Amazeen and Wojdynski, 2019; Fransen et al., 2015; Friestad and Wright, 1994).

Providing citizens with transparency information alongside persuasive online political advertisements appears an increasingly popular intervention to improve the knowledge position of the citizen vis-a-vis the advertiser and advertising platform. However, there are three challenges associated with current transparency information interventions. First, empirical studies suggest that information that accompanies advertisements (e.g. in the form of labels that indicate that users are exposed to an ad) often goes unnoticed (Boerman et al., 2017; Kruikemeier et al., 2016). And when people do notice such information, the information is not always comprehensible (Wojdynski and Evans, 2016). In other words, not all “transparency information” can be expected to be equally effective. Yet, while current regulatory proposals in the United States and the European Union (EU) all propose transparency information to be shown alongside political advertisements, these proposals all present different directions with regard to the desired content of transparency information (e.g. Digital Services Act, 2020; French Law LOI No. 2018-1202, 2018; Honest Ads Act, 2019; Irish Online Advertising and Social Media (Transparency) Bill, 2017). Consequently, these different proposals are unlikely to be equally effective in providing citizens with information that will help them evaluate online political advertisements. Unfortunately, as of yet, we have only few insights into the effectiveness of current regulatory interventions with regard to online political advertisements. Some studies have focused on information about the advertising sources in a US context. For instance, Binford et al. (2021) tested the disclosures designed by Facebook and found that people do look at advertiser-level source sponsorship disclosures, but that these disclosures do not increase people’s ability to understand who is the source of the advertisement. However, there are very few studies that have experimentally tested the transparency information as set out by actual regulations.

Second, we have little understanding of why certain interventions are “more effective” because the mechanism through which these interventions might work is currently poorly understood. Third, because we do not understand the mechanism through which the interventions might work, we cannot discount potential backlash effects. For instance, providing transparency information alongside political advertisements could not only activate persuasion knowledge but also induce privacy concerns, because citizens learn how their personal information is being used to influence them. In turn, activated persuasion knowledge and activated privacy concerns could (negatively) impact advertisements’ effects on political attitudes or behavioral intentions.

Seeing that regulatory proposals in the EU and the United States increasingly look at transparency information to make individuals more aware of political advertisements, but also recognizing that transparency information interventions come with challenges, this study sets out to test the mechanism and effectiveness of different operationalizations for transparency information, as provided by the following laws, bills, or regulatory proposals: US Honest Ads Act (2019), EU Digital Services Act (2020), Irish Online Advertising and Social Media (Transparency) Bill (2017) and French Law LOI No. 2018-1202 (2018) (see Online Appendix D for relevant provisions). Deploying an experimental design (N = 1331), this study seeks to answer the following question: To what extent do different types of transparency information differentially affect political attitudes and behavioral intentions, via persuasion knowledge and privacy concerns?

Theoretical framework

Online targeted advertising differs from traditional advertising in three important ways. First, personal data (and analysis) is the basis of online targeting. Using large amounts of personal data, advertisers can segment a large and heterogeneous audience into several smaller and more homogeneous audiences. Second, targeted ads are tailored to the receiver. This means that ads are not only shown to relatively small segments but also that ads differ between segments. Third, targeted advertising is opaque. Where a newspaper advertisement is visible to anyone, a targeted ad is in principle visible only to the targeted audience (see Zuiderveen Borgesius et al., 2018). Opacity also applies to the “meta-data” of the ad. A targeted citizen does not know how many people get shown an ad, how many versions of that ad exist, on the basis of which (demographic) criteria the ad was targeted, and on the basis of what personal data the ad delivery algorithm matched an ad with the specific citizen (Fathaigh et al., 2021; Tufekci, 2014). There have been efforts to make online advertising more transparent. In 2018, for example, social platforms launched advertisement libraries as a response to concerns about “the lack of transparency and accountability” in online advertising (Leerssen et al., 2021: 1). However, these ad libraries come with substantial pitfalls regarding the information offered, accessibility, and completeness of data (Leerssen et al., 2019).

Together, these three characteristics of targeted advertising contribute to an information asymmetry between the advertiser (and ad platform) on one hand, and citizens on the other hand (see Tufekci, 2014). Lawmakers and scholars are concerned about this information asymmetry because of two main harms that follow from the asymmetry: undue influence (such as manipulation and deception) and privacy violations. We will specify these adverse effects below.

Harms of the information asymmetry might be countered by persuasion knowledge

Undue influence is any type of influence that follows directly from leveraging the information asymmetry between sender and receiver. In other words, an advertiser unduly influences a citizen when the advertiser covertly uses prior insights about the audience to make the advertisement more effective. For instance, Zarouali et al. (2022) showed how (covertly) targeting people on the basis of their personality increased the effectiveness of the political advertisement. The information about people’s personality was covertly collected and leveraged to influence people. In this experiment, people saw a political ad, but were unaware that (a) information about their personality was collected and processed, and (b) that the advertisement was tailored to them personally on the basis of the data collected earlier. Thus, Zarouali et al. (2022) illustrate how targeted ads can have an undue influence on citizens, by creating and leveraging an information asymmetry between advertiser and citizen.

Lawmakers propose to counter the information asymmetry and its adverse effects by providing transparency information (DSA, 2020; GDPR, 2016; see also Dommett, 2020), meant to increase persuasion knowledge. Indeed, the British Information Commissioner’s Office argued that people should be able to understand “how and why they might be targeted by a campaign” (ICO, 2018: 41; see Dommett, 2020). This aim is strongly in line with the consequence of having persuasion knowledge, as stated by Friestad and Wright (1994: 1): “[persuasion knowledge helps people] identify how, when, and why marketers try to influence them.”

Activated persuasion knowledge is understood to help citizens “recognize, analyze, interpret, evaluate, and remember persuasion attempts and to select and execute coping tactics believed to be effective and appropriate” (Friestad and Wright, 1994: 3). Providing transparency information can activate persuasion knowledge by making people aware of the persuasion attempt, and by providing information about the persuasion technique. Crucially, the persuasion knowledge model suggests an information asymmetry between the recipient and advertiser, by recognizing that the advertiser holds knowledge about the target audience, while the target audience does not (Friestad and Wright, 1994: 2). Current regulatory attempts to provide transparency information alongside political advertisements extend the persuasion knowledge model by providing the target audience with information about audience characteristics. This way, citizens learn about a persuasion technique: tailoring advertisements to personal characteristics. In other words, the targeted audience would learn that ads are not generic and randomly distributed but tailored and targeted to them and people who share characteristics.

The persuasion knowledge literature shows that activated persuasion knowledge helps citizens cope with the persuasion attempt by activating their defenses (Boerman et al., 2012; Friestad and Wright, 1994). These defenses are thought to help the citizen in evaluating the source, content, and goals of an online political advertisement. Often, increased persuasion knowledge leads to resistance, which is associated with negative evaluations (Eisend and Tarrahi, 2022).

The persuasion knowledge model (Friestad and Wright, 1994) is predominantly used in the field of commercial communication. Moreover, in that literature persuasion knowledge is typically studied as a mediator of the effect of a sponsorship disclosure rather than of ad transparency information, which is the focus of this study. Beckert et al. (2021) found that persuasion knowledge significantly mediates the effect of an advertisement sponsorship disclosure on anger. Van Reijmersdal et al. (2016) showed that a sponsorship disclosure activated persuasion knowledge, which led to resistance (counterarguing and negative affect). Boerman et al. (2017) found that the effect of a sponsorship disclosure is conditional upon the source. Only when the sponsored post was disseminated by a celebrity did the disclosure activate persuasion knowledge, which then reduced people’s intention to engage in electronic word of mouth (eWOM).

A meta-analysis by Eisend and Tarrahi (2022) showed that activating persuasion knowledge, in general, leads to negative coping reactions and to “less favorable evaluations, intentions, and behavior” (p. 14). Moreover, successfully activating persuasion knowledge negatively affects attitudes toward the brand, and decreases behavioral intentions. Overall, it becomes clear that persuasion knowledge helps consumers resist persuasive efforts.

The persuasion knowledge model has been scarcely used in the field of political communication. Kruikemeier, Sezgin, and Boerman (2016) found that the effect of exposure to a political advertisement alongside a sponsored label on eWOM is mediated through persuasion knowledge. A more recent experimental study by Binder et al. (2022) did investigate the transparency information of targeted political ads. More specifically, Binder et al. (2022) studied the extent to which variation in granularity degrees of transparency information (i.e. whether people were informed that they were targeted on the basis of demographic data, or on data about their preferences and wishes) affected party evaluations through targeting knowledge. Binder et al. (2022) found that these variations did not significantly activate targeting knowledge, and the variations did not significantly affect the evaluation of the political party that sponsored the ad.

Because Binder et al. (2022) are the first to provide rigorous and valuable insight into the (lack of) merit of transparency information to activate knowledge, some elements of transparency information are necessarily left unexplored. For one, Binder et al. (2022) focused on the transparency information provided by Facebook. This information is limited in comparison with the transparency information that (proposed) regulations would provide. For instance, there was no detailed information about the source of the advertisement. The political party that sponsored it was mentioned, but some proposed regulations want an explicit mention of the address of the sponsor (Irish bill), or the entity that paid for the ad. Moreover, the transparency information about the targeting criteria was unspecific. People saw that they were shown an ad, for example, because “[the political party] is trying to reach females aged 18 to 30, who are interested in family and whose primary location is in Austria” (p. 7, Online Appendix A). Citizens could be offered more elaborate (e.g. also including the amount spent and the number of people reached) and more specific transparency information. In addition to this, Binder et al. (2022) focused specifically on targeting knowledge rather than persuasion knowledge as a whole.

The effect of persuasion knowledge is not always negative. Isaac and Grayson (2017) found that when people regard a persuasion tactic as credible, they are more likely to evaluate the source and the offered product favorably. However, this favorable effect does not occur when the consumer judges the persuasion tactic unfavorably. The persuasive tactic in this current study is political (micro)targeting. This tactic is perceived rather negatively by the general public in the United States (Turow et al., 2012) and in Europe (Dobber et al., 2018). As such, it can be expected that activated persuasion knowledge in response to exposure to information about a (micro)targeted advertisement will lead to negative evaluations.

Privacy concerns as a second mediator

Privacy is “the condition in which others are deprived of access to you” (Reiman, 1995: 30). Privacy offers citizens a private space, enabling them to “reflect on and entertain beliefs, and to experiment with them” (Reiman, 1995: 42). Targeted advertisements threaten that private space because they are both the consequence and the source of the collection and processing of personal data. Targeted ads are based on data collection and analysis, but also a source of data collection and analysis because exposure to such ads offers insights into personal preferences. For example, someone who watches a video about raising the minimum wage in its entirety is more interested in this issue than someone who ignores the advertisement.

Transparency is also a critical element in the protection of privacy, or rather data protection under the GDPR (2016), which is the cornerstone of European data protection regulation. Under the GDPR, transparency and “informed consent” are among the most relevant legitimate grounds for data processing in the context of targeting (Art. 6 GDPR; European Data Protection Board, 2021). In the academic debate, the “informed consent” paradigm has been criticized on various grounds, including, first, that “consent” is often not obtained in compliance with the GDPR (e.g. Kollnig et al., 2021; Nguyen et al., 2022); second, that certain situations, such as online targeted advertising allocated through real-time bidding, are so complex that information obligations cannot remedy the fact that citizens are often unaware that their right to data protection is being threatened (Belgian, 2022; Veale et al., 2022); and third, that the existing transparency provisions are not sufficiently grounded in empirical insights into how users process transparency notices (Wulf and Seizov, 2022).

When citizens realize that the ads they see “are based on their private information, they might feel that their anonymity, and thus an essential part of their privacy, has been threatened” (Trepte, 2021: 161). Anonymity is an important element of the social media privacy model because it affords users some degree of perceptive control (Trepte, 2021). However, exposure to transparency information confronts people not only with the fact that their privacy has been threatened, but also by whom, how, and on the basis of what kind of personal data. Seeing transparency information confronts people with the fact that specific third parties have access to them personally (see Reiman, 1995). Therefore, we expect that exposure to transparency information increases privacy concerns, and that privacy concerns subsequently decrease political attitudes and behavioral intentions. More specifically, an increase in privacy concerns is expected to negatively impact attitudes. Dobber et al. (2018) have shown that privacy concerns lead to negative attitudes toward political microtargeting. As such, we expect that an increase in privacy concerns negatively affects people’s attitudes toward the targeted advertisement.

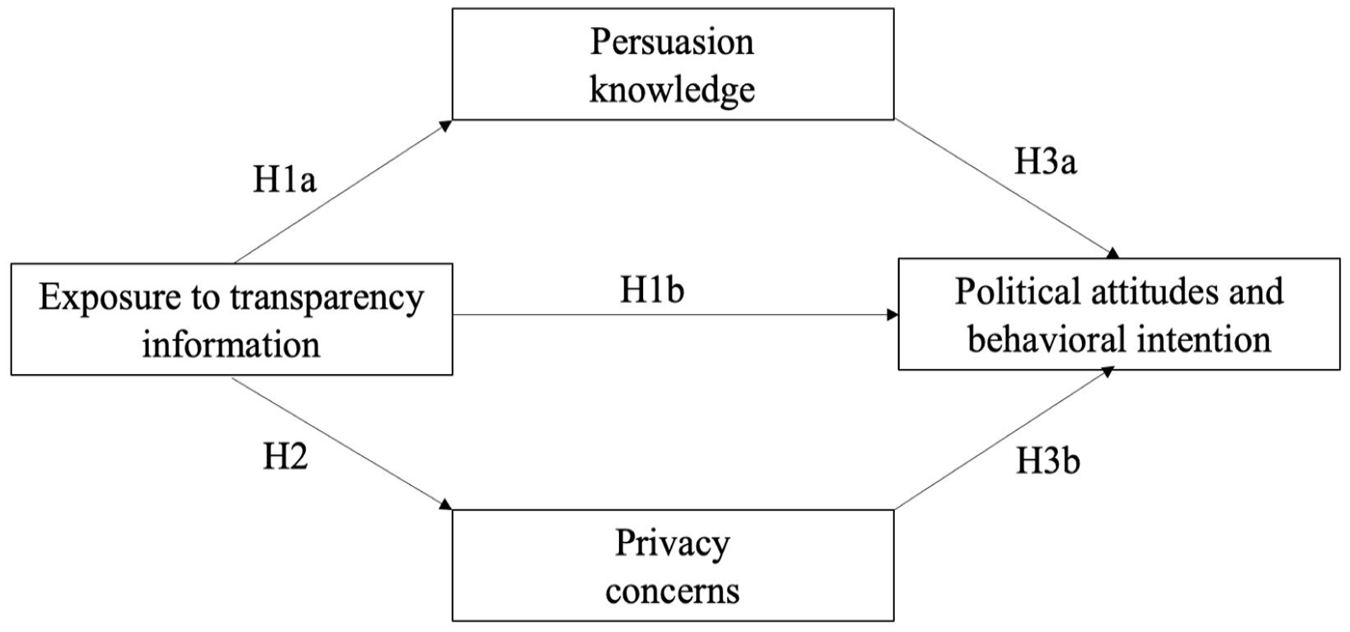

In sum, we propose that exposure to transparency information first has the effect desired by lawmakers: it increases persuasion knowledge. But we propose a parallel mediation model that also acknowledges potential additional effects: an increase in privacy concerns and ultimately, via persuasion knowledge and privacy concerns, reduced advertisement effectiveness as measured in political attitudes and behavioral intentions (see Figure 1):

H1a. Exposure to transparency information (vs no transparency information) increases persuasion knowledge.

H1b. Exposure to transparency information decreases political attitudes (issue, source, and advertisement) and behavioral intentions.

H2. Exposure to transparency information (vs no transparency information) increases privacy concerns.

H3. The negative effect of exposure to transparency information on people’s attitudes toward the targeted advertisement is mediated by persuasion knowledge and privacy concerns, such that exposure to transparency information increases persuasion knowledge and privacy concerns, and an increase in (a) persuasion knowledge and (b) privacy concerns decreases people’s political attitudes and behavioral intentions.

Figure 1. Proposed parallel mediation model.
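The hypothesized structure can also be written as a set of linear equations. The notation is conventional mediation notation and is an assumption introduced here for illustration, not taken from the original analysis: X is the binary exposure indicator, M_1 is persuasion knowledge, M_2 is privacy concerns, Y is an outcome such as attitude toward the advertisement, and a, b, and c' label the paths.

```latex
\begin{aligned}
M_1 &= i_1 + a_1 X + e_1 \\
M_2 &= i_2 + a_2 X + e_2 \\
Y   &= i_3 + c' X + b_1 M_1 + b_2 M_2 + e_3 \\
c   &= c' + a_1 b_1 + a_2 b_2
\end{aligned}
```

In these terms, H1a corresponds to a_1 > 0, H2 to a_2 > 0, H1b to a negative total effect c, and H3 to negative indirect effects a_1 b_1 and a_2 b_2 (that is, b_1 < 0 and b_2 < 0).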

Method

Kantar Lightspeed recruited 1416 participants in the Netherlands in October 2019; participants received a small payment for their participation. In line with our preregistration, we removed people who did not watch more than 35 seconds of the stimulus material, after which 1331 participants remained. On average, the sample was 44 years old (SD = 13.73); 53% of the sample identified as women and 47% identified as men.

Design

This experiment consisted of nine treatment conditions and one control condition. Participants were randomly placed into one of those conditions.

Stimuli

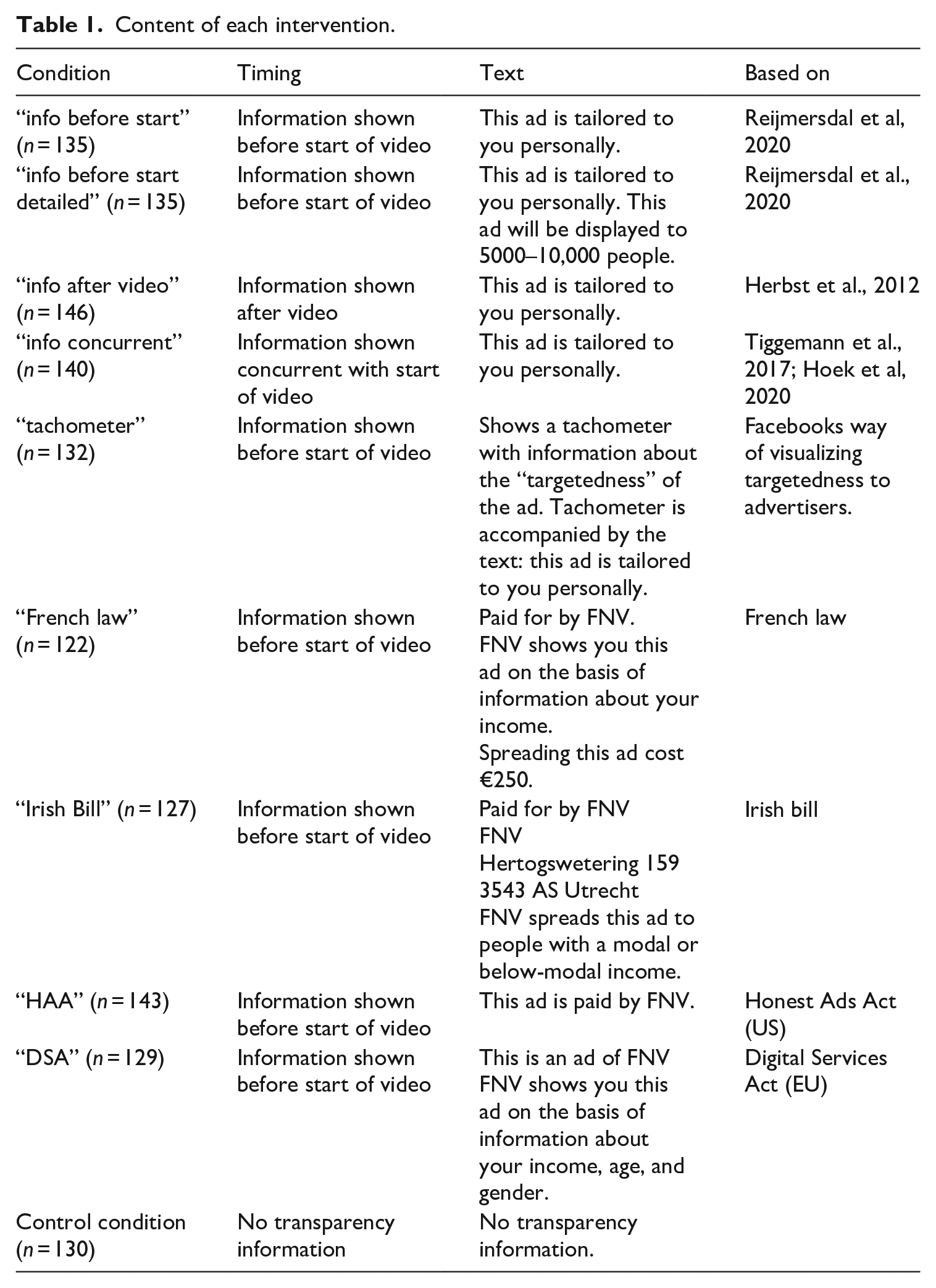

Participants saw a 46-second political video advertisement about increasing the minimum wage in the Netherlands to €14. This political issue ad was sponsored by Dutch union FNV, but references to FNV were removed from the video. People in the control condition saw only this video. Participants in the experimental conditions were additionally exposed to one of nine variations of transparency information (see Online Appendix A). Table 1 outlines the specific interventions.

Table 1. Content of each intervention.

Mediators

Persuasion knowledge was measured using the following items from Boerman and Kruikemeier (2016; 7-point scale, 7 is the highest): The video feels like an advertisement; The video promotes union FNV; Union FNV has paid for the video; The video is an advertisement; The video is intended to make you like union FNV; The video is intended to influence you; The video is intended to influence your opinion about the minimum wage in the Netherlands.

Together, these 7 items formed one scale (Eigenvalue = 2.38, Cronbach’s alpha = .77, M = 4.96; SD = 1.17).
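As a rough illustration of the reliability statistic reported for each scale, the sketch below computes Cronbach's alpha from a respondents-by-items matrix. The function name `cronbach_alpha` and the simulated responses are assumptions made for this example; they are not the study's data or code.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for a 2-D array (rows = respondents, columns = items)."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                                # number of items in the scale
    item_variances = items.var(axis=0, ddof=1)        # sample variance of each item
    total_variance = items.sum(axis=1).var(ddof=1)    # variance of the summed score
    return (k / (k - 1)) * (1 - item_variances.sum() / total_variance)


# Simulated responses (NOT the study's data): 7 items driven by one latent trait.
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 1))
responses = latent + rng.normal(scale=1.0, size=(500, 7))

alpha = cronbach_alpha(responses)
print(f"Cronbach's alpha = {alpha:.2f}")
```

Alpha rises with the number of items and with the average inter-item correlation, which is why long scales such as the 11-item source attitude measure can reach very high values.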

Privacy concerns were measured using the following items (7-point scale, 7 indicates the most concerned; derived from Dobber et al., 2018: 9): “I am worried that my personal data (such as my online surf and search behavior, name, and location) will be abused by others.” “When I am online, I get the feeling that others keep track of where I click and what websites I visit.” “I am afraid that the personal data I share is not being stored securely.” “I worry that my personal data on the internet will be passed on to other companies.” “I worry that my personal data on the internet are seen by people I do not know.” These 5 items formed one scale (Eigenvalue = 3.49, Cronbach’s alpha = .92, M = 4.76; SD = 1.39).

Dependent variables

Source attitude was measured using the following items, all on 7-point scales (7 is more positive, see Ohanian, 1990): Based on this video, I think the source of this video is . . . trustworthy, experienced, honest, knowledgeable, authentic, sincere, competent, has expertise on the matter, capable, credible, appealing. These 11 items formed one scale (Eigenvalue = 8.53; Cronbach’s alpha = .97; M = 5.08; SD = 1.31).

Issue attitude was measured with the following items, all on 7-point scales (7 is more positive): The minimum wage in the Netherlands needs to be increased; Do you think it is important to increase the minimum wage in the Netherlands? Both items correlated strongly (r = .87; M = 5.39; SD = 1.45).

Ad attitude was measured with the following items, all on 7-point scales (Boerman and Kruikemeier, 2016; 7 is more positive): You have watched a YouTube video. What do you think of this video? Do not like/Like; Negative/Positive; Bad/Good. These 3 items formed one scale (Eigenvalue = 2.19; Cronbach’s alpha = .90; M = 4.82; SD = 1.42).

Behavioral intentions were measured with the following 8 items, all measured on a 7-point scale (see Boerman and Kruikemeier, 2016; 7 is likeliest): How likely is it that you are going to like the YouTube video; share the YouTube video; save the YouTube video; subscribe to FNV’s YouTube channel; visit FNV’s website; talk about FNV with friends, family and coworkers; search for more information about FNV; search for more information about minimum wages in the Netherlands? These items formed one scale (Eigenvalue = 5.57; Cronbach’s alpha = .97; M = 2.91; SD = 1.70).

Checks

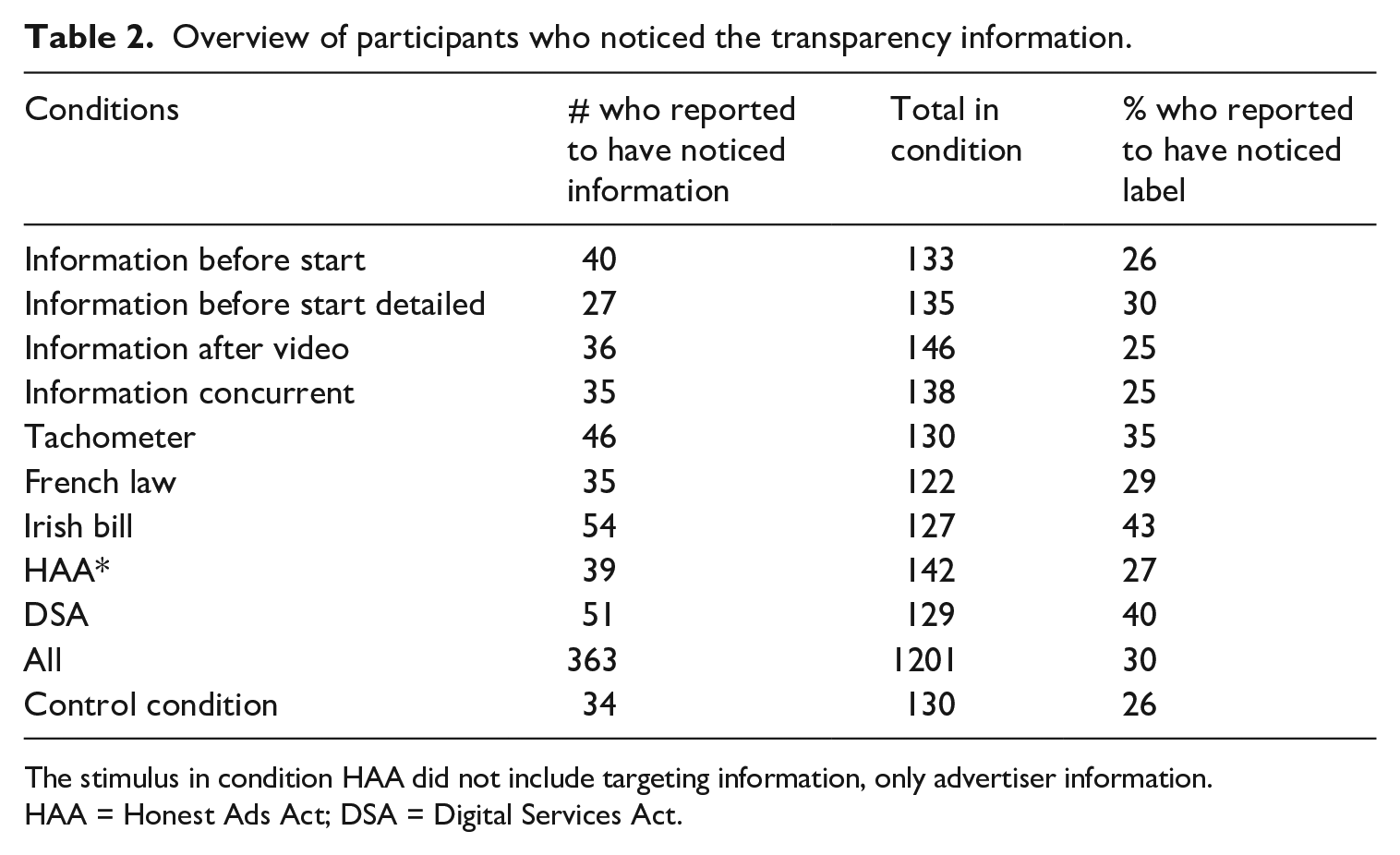

We asked participants whether they had seen information about the targeting of the audience (yes/no). Table 2 shows per condition how many participants noticed the stimulus. The participants who did not remember seeing the stimulus are included in our analyses, because not remembering correctly does not automatically mean not seeing the stimulus or not being affected by it. Including those who claimed to have not noticed the stimuli provided us with a more accurate estimation of the real-world impact of the stimuli. In a real-world setting, many people will likely not notice or remember transparency information when provided alongside an advertisement (Evans et al., 2017). Including only those participants who have noticed, therefore, would likely lead to an overestimation of the real-world effectiveness. Moreover, seeing that 34 people in the control condition claimed to have seen the transparency information, it is questionable to what extent people correctly remember exposure. Nevertheless, in Online Appendix B, we report the analyses on the smaller group of participants who claimed to have seen the stimulus and the entire control group (N = 397).

Table 2. Overview of participants who noticed the transparency information.

The stimulus in condition HAA did not include targeting information, only advertiser information.

HAA = Honest Ads Act; DSA = Digital Services Act.

Ethical approval was granted by the ethics board of the University of Amsterdam (approval number 2020-PCJ-12764).

Changes compared with preregistration

There were some changes with regard to the preregistration. The hypotheses have been streamlined, brought down from three hypotheses and five research questions in the preregistration, to three hypotheses in the current article. We also changed the emphasis from direct effects on political attitudes in the preregistration, to persuasion knowledge in the current article after we learned from conversations with EU-level policymakers that raising persuasion knowledge was a core goal of the policymakers.

Results

Randomization checks

We conducted a series of randomization checks regarding age, gender, and political interest. We conclude that randomization was successful (see Online Appendix C).

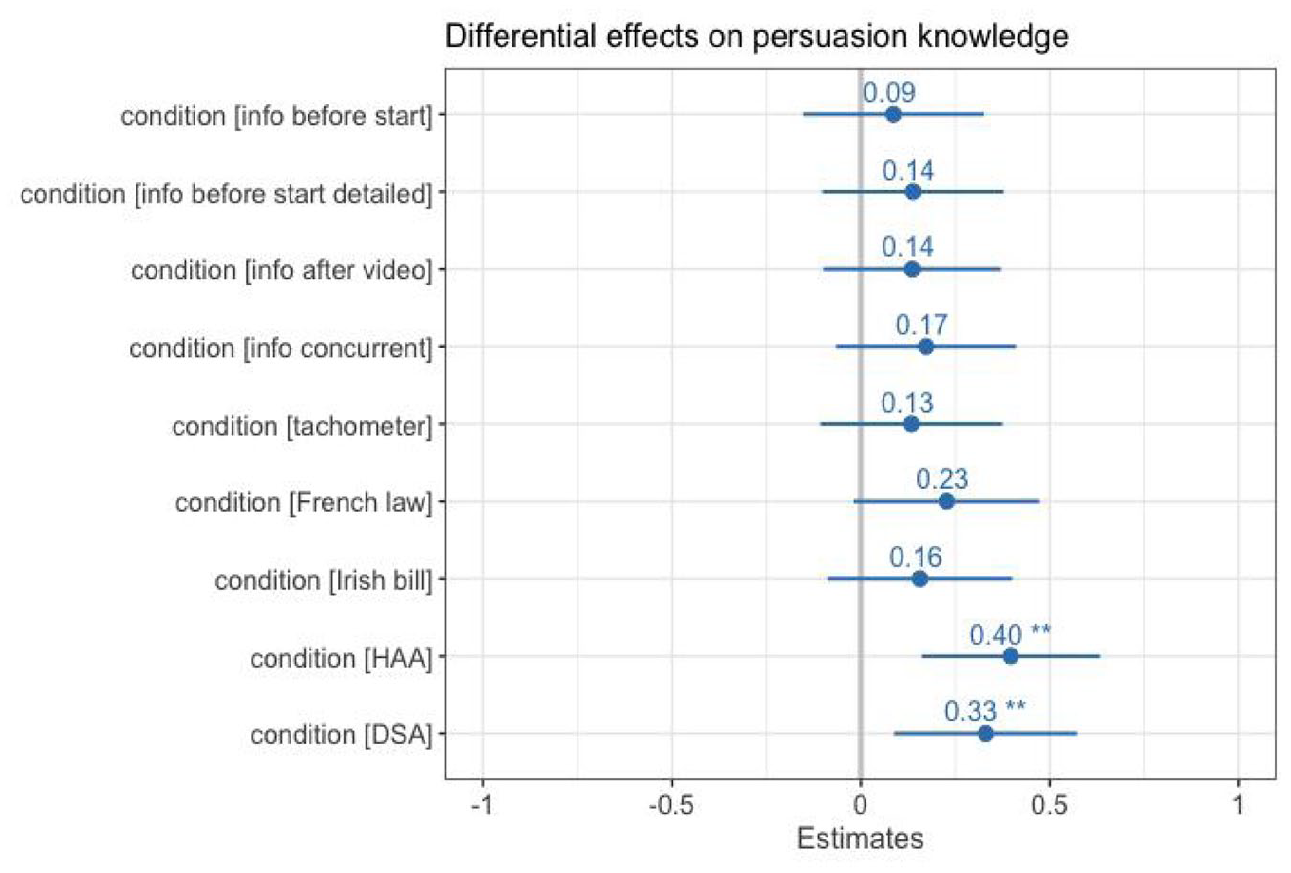

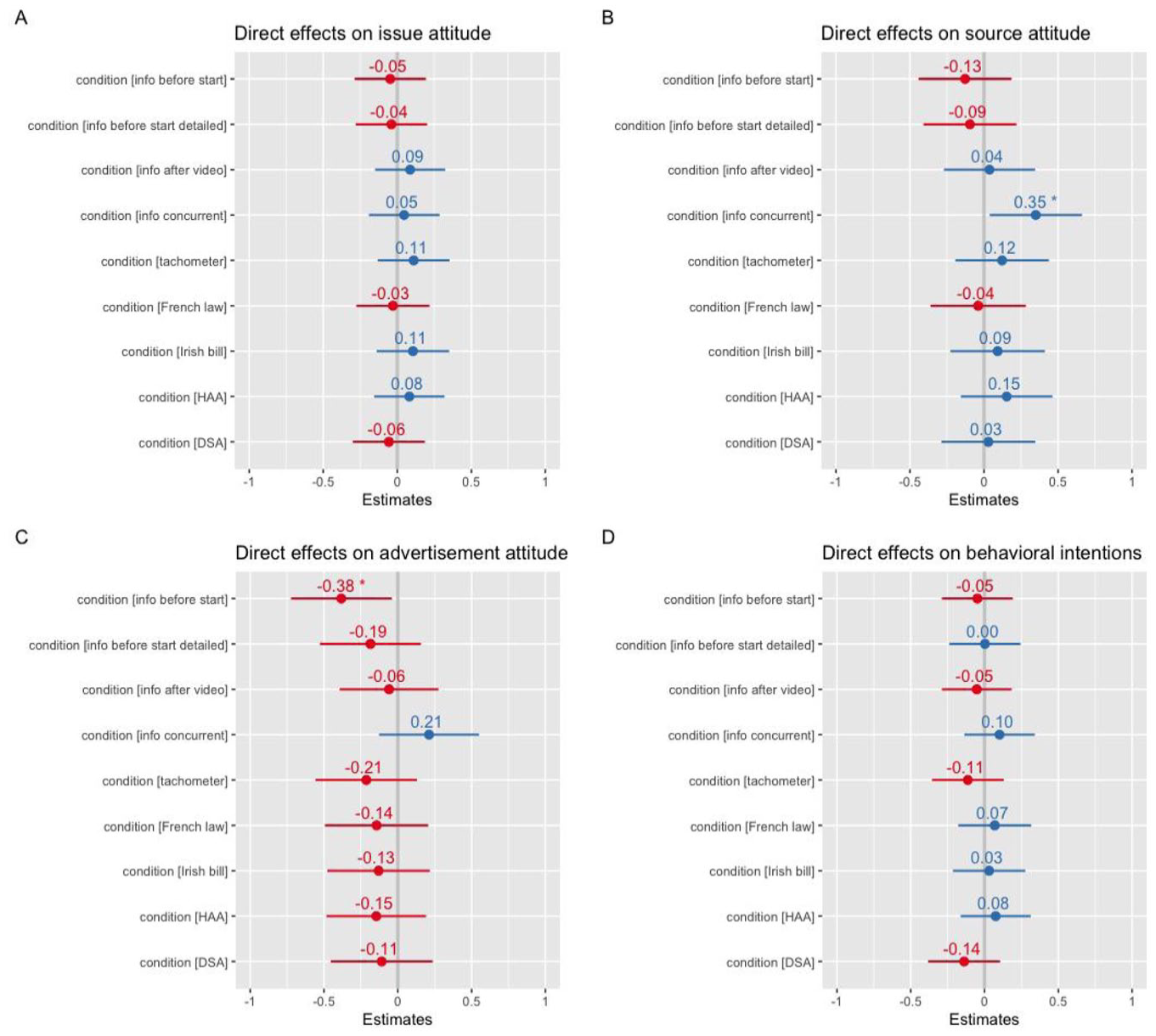

Persuasion knowledge

H1a expected that exposure to transparency information would increase persuasion knowledge. A linear OLS regression analysis showed significant positive effects of the Honest Ads Act condition (B = .40, t = 3.29, p = .001, 95% CI = [.16, .63]) and the Digital Services Act condition (B = .33, t = 2.67, p = .01, 95% CI = [.09, .57]). The French law condition was borderline significant (B = .23, t = 1.81, p = .07, 95% CI = [−.02, .47]); see Figure 2. This means that H1a is partly supported: only exposure to conditions HAA and DSA significantly increased citizens’ persuasion knowledge. This finding is largely robust. When analyzing the direct effects of exposure to transparency information on only those who remember seeing the information (the average treatment effect on the treated, ATT; see Online Appendix B), we find that the DSA condition is significant (p = .04) and the HAA condition is borderline significant (p = .06).

Figure 2. Effects on persuasion knowledge per condition.
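The regression reported above compares each treatment condition with the control group. A minimal sketch of such a dummy-coded OLS model follows, assuming the control condition is the reference category. The helper `dummy_ols`, the three-condition subset, and the simulated scores are illustrative assumptions, not the study's data or code, and the interval uses a large-sample normal approximation rather than the exact t distribution.

```python
import numpy as np


def dummy_ols(y, labels, reference):
    """OLS of y on condition dummies, with `reference` as the omitted category.

    Returns {term: (B, SE, t, ci_low, ci_high)}. The confidence interval is a
    normal approximation (B +/- 1.96 * SE), adequate only for large samples.
    """
    y = np.asarray(y, dtype=float)
    labels = np.asarray(labels)
    levels = [c for c in dict.fromkeys(labels.tolist()) if c != reference]
    columns = [np.ones(len(y))] + [(labels == c).astype(float) for c in levels]
    X = np.column_stack(columns)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (len(y) - X.shape[1])     # residual variance
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return {
        name: (b, s, b / s, b - 1.96 * s, b + 1.96 * s)
        for name, b, s in zip(["intercept"] + levels, beta, se)
    }


# Simulated scores (NOT the study's data); the condition shifts are invented.
rng = np.random.default_rng(1)
labels = np.repeat(["control", "HAA", "DSA"], 130)
shift = {"control": 0.0, "HAA": 0.4, "DSA": 0.3}
y = 5.0 + np.array([shift[c] for c in labels.tolist()])
y = y + rng.normal(scale=1.2, size=labels.size)

results = dummy_ols(y, labels, reference="control")
for term, (b, se, t, lo, hi) in results.items():
    print(f"{term:>9}: B = {b:.2f}, t = {t:.2f}, 95% CI = [{lo:.2f}, {hi:.2f}]")
```

With this coding, each B is the difference between that condition's mean and the control mean, which is how the coefficients in this section can be read.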

H1b expected a negative direct effect of exposure to transparency information on political attitudes (issue, ad, source) as well as behavioral intentions. A linear ordinary least squares (OLS) regression analysis showed no significant direct effect of any of the conditions on issue attitude (see Figure 3). As Figure 3 shows, a linear regression analysis did reveal a significant direct effect on source credibility of the condition that showed an information message concurrent with the start of the advertisement (B = .35, t = 2.31, p = .02, 95% CI = [.08, 1.01]). This effect is not negative, but positive. Exposure to transparency information concurrent with the start of the video increases rather than decreases participants’ attitudes toward the source of the video. Another linear regression analysis revealed a significant direct effect on attitude toward the advertisement of exposure to the condition that showed simple information before the video: B = −.38, t = −2.20, p = .03, 95% CI = [−.72, −.04]. This means that there is little support for H1b: only one condition significantly affected the dependent variables in the expected direction, and only for attitude toward the advertisement. Similarly, when analyzing the data of only the participants who remembered seeing the information (see Online Appendix B), we find no direct effects on issue attitudes. However, we also find no significant direct effects on source attitude. Focusing on advertisement attitude, we see that the condition information before start is not significant, but the tachometer condition (B = −1.03; p = .001) and the Irish bill condition (B = −.75; p = .002) are. Moreover, together with the DSA condition (B = −.93; p = .02), the tachometer condition also negatively affects behavioral intentions for the ATT (B = −1.20; p = .004).

Figure 3. Direct effects on political attitudes and behavioral intentions.

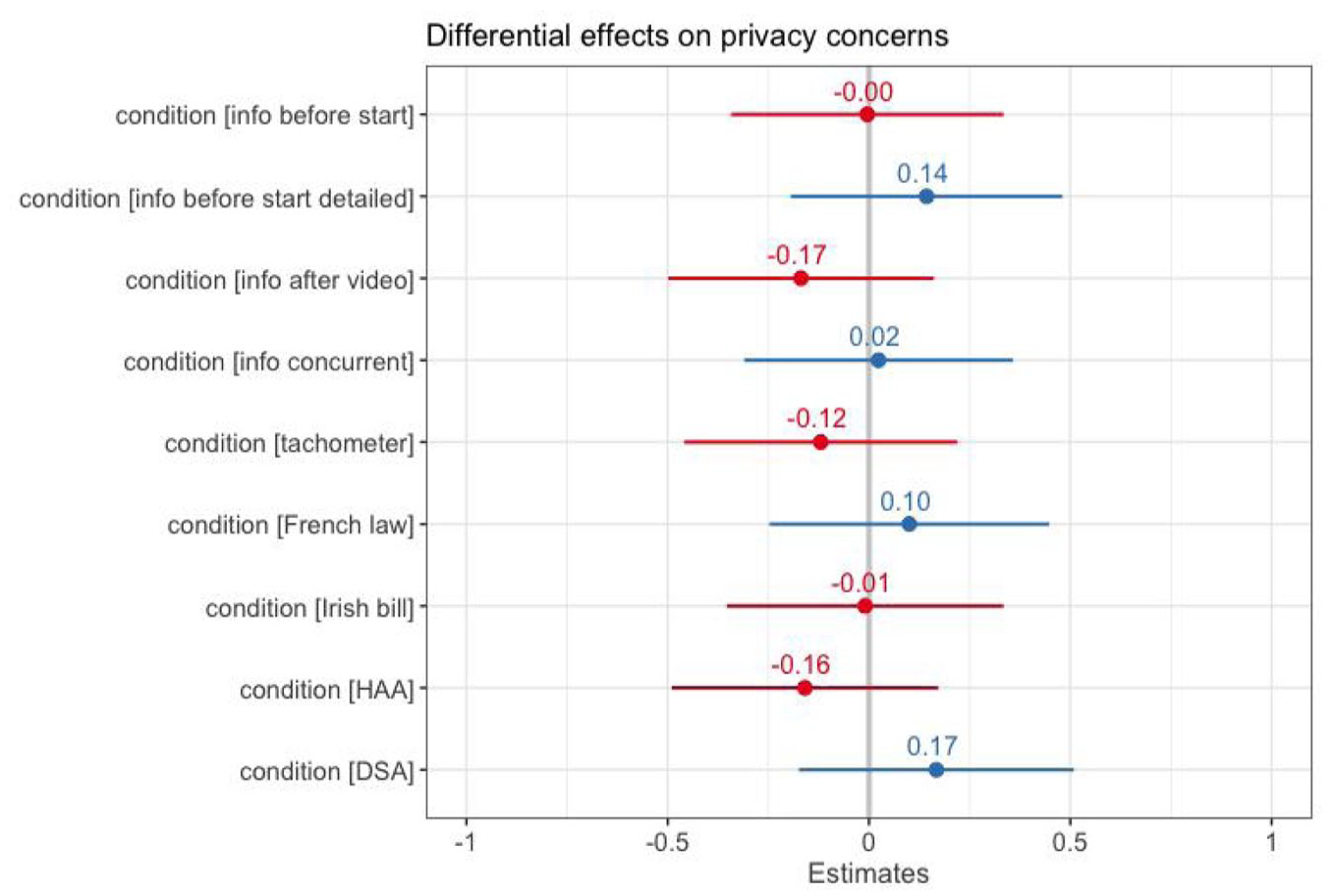

How (variations in) ad transparency information affects privacy concerns

H2 expected that exposure to transparency information would increase people’s privacy concerns. To test H2, we ran a linear OLS regression analysis. We find that none of the transparency information variations significantly affects privacy concerns (see Figure 4). This means that H2 is not supported. Exposure to transparency information does not affect privacy concerns. This is in line with the analysis for the ATT: exposure to transparency information did not directly affect the privacy concerns of those people who remember seeing the information (Online Appendix B).

Figure 4. Differential effects on privacy concerns.

Parallel mediation via persuasion knowledge and privacy concerns

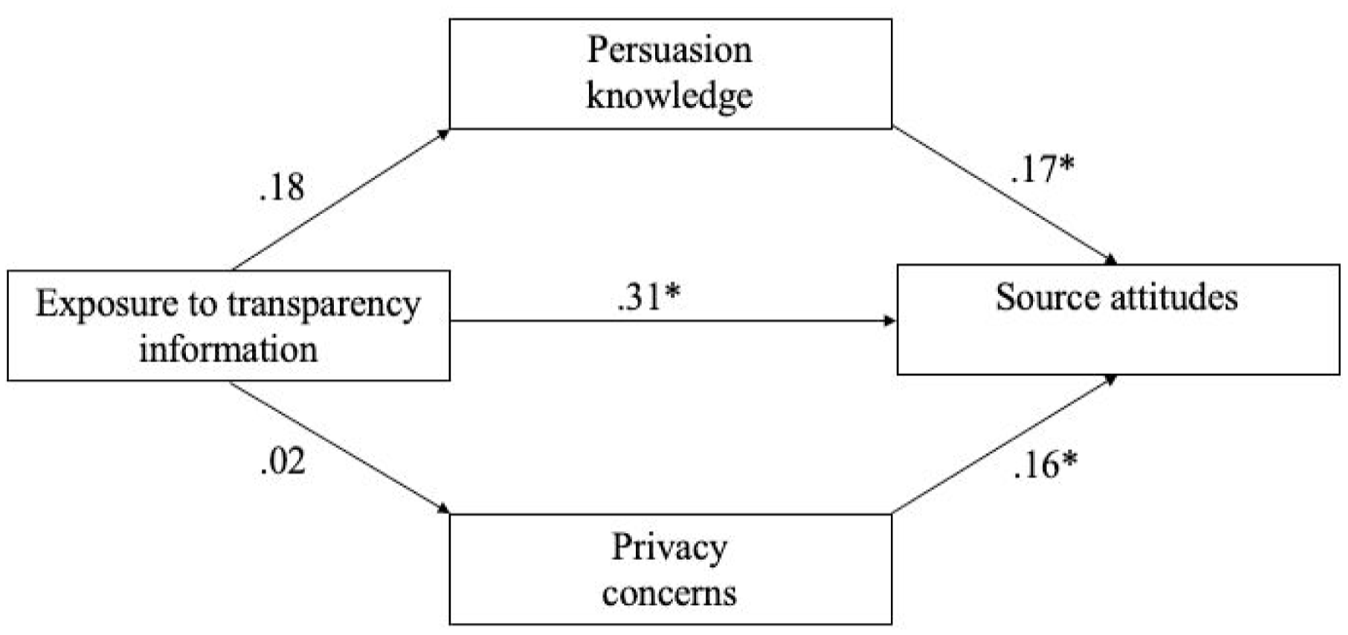

We also expected a parallel mediation to occur (H3). We only found direct effects for the condition that showed an information message concurrent with the start of the advertisement on source attitude, and for the condition that showed basic information before the start of the video on advertisement attitude. Thus, we only ran mediation analyses for these two relationships. Focusing first on the effect of exposure to the transparency information in the concurrent-with-start condition on attitude toward the source, we hypothesized that this direct effect is mediated by persuasion knowledge and by privacy concerns. Exposure to transparency information is expected to increase both mediator variables, and the increase in both mediator variables is, in turn, expected to decrease source attitude (see Figure 5).

Parallel mediation model source attitude.

We estimated a parallel mediation model using structural equation modeling (SEM; N = 270; bootstraps = 5000). The exogenous variable, condition, was binary: the experimental condition (concurrent information) was coded 1 and the control group 0. There was one degree of freedom and the model fit was poor: χ2 = .000; CFI = .60. The model yielded no meaningful mediation effects (see Figure 5). Exposure to the transparency information shown concurrent with the start of the video significantly affects source attitude neither via persuasion knowledge (indirect effect = .03, Z = 1.04, p = .30) nor via privacy concerns (indirect effect = .004, Z = .13, p = .90). The direct effect, in line with the regression analysis reported above, is .34, Z = 2.43, p = .02. The total effect is .31, Z = 2.20, p = .03.
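The logic of a parallel mediation model with two mediators can be sketched as follows. This is a simplified stand-in for the SEM estimation described above, not a reproduction of it: it uses separate OLS regressions and a percentile bootstrap on synthetic data, and all names (`ols`, `parallel_mediation`, `bootstrap_ci`) and data-generating values are hypothetical.

```python
import random


def ols(columns, y):
    """OLS coefficients [intercept, b1, ...] from the normal equations."""
    rows = [[1.0, *vals] for vals in zip(*columns)]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    a = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(k):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * p for v, p in zip(a[r], a[col])]
    return [a[i][k] / a[i][i] for i in range(k)]


def parallel_mediation(x, m1, m2, y):
    """Indirect (a*b), direct, and total effects for two parallel mediators."""
    a1 = ols([x], m1)[1]            # condition -> mediator 1
    a2 = ols([x], m2)[1]            # condition -> mediator 2
    _, direct, b1, b2 = ols([x, m1, m2], y)
    total = ols([x], y)[1]          # condition -> outcome, mediators omitted
    return {"ind_m1": a1 * b1, "ind_m2": a2 * b2, "direct": direct, "total": total}


def bootstrap_ci(x, m1, m2, y, key, draws=1000, seed=0):
    """Percentile 95% interval for one effect, resampling participants."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(draws):
        idx = [rng.randrange(n) for _ in range(n)]
        sample = ([col[i] for i in idx] for col in (x, m1, m2, y))
        estimates.append(parallel_mediation(*sample)[key])
    estimates.sort()
    return estimates[int(0.025 * draws)], estimates[int(0.975 * draws) - 1]


# Synthetic data for illustration only: x is the 0/1 condition dummy.
rng = random.Random(42)
x = [i % 2 for i in range(200)]
m1 = [0.5 * xi + rng.gauss(0, 1) for xi in x]   # e.g. persuasion knowledge
m2 = [0.1 * xi + rng.gauss(0, 1) for xi in x]   # e.g. privacy concerns
y = [0.3 * xi - 0.2 * p + 0.1 * q + rng.gauss(0, 1) for xi, p, q in zip(x, m1, m2)]

effects = parallel_mediation(x, m1, m2, y)
print(effects)
print(bootstrap_ci(x, m1, m2, y, "ind_m1", draws=300))
```

In this regression-based sketch, an indirect effect is treated as not significant when its bootstrap interval includes zero. The SEM estimates reported in the text come from a different estimation routine, so the sketch only illustrates the structure of the test.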

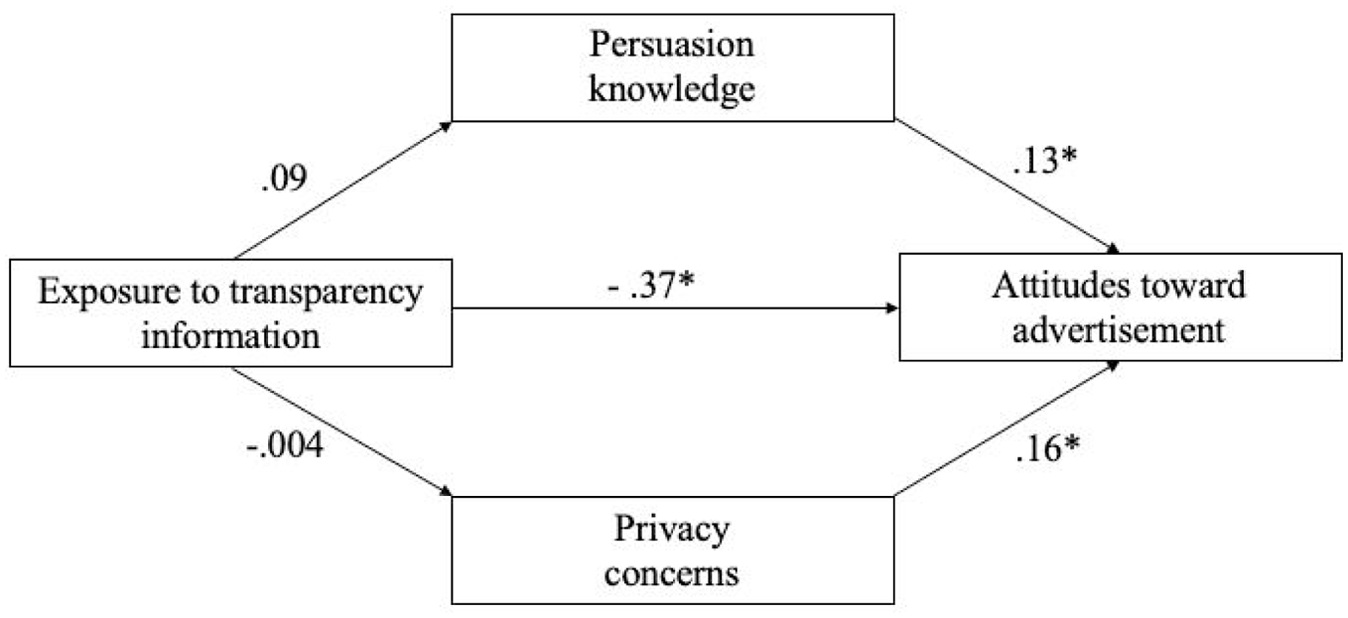

Focusing on the mechanism behind the relationship between exposure to the condition that showed basic information before the start of the video and attitude toward the advertisement, we estimated another parallel mediation model using SEM (N = 265; bootstraps = 5000). As in the previous model, the exogenous variable, condition, was binary: the experimental condition (basic information before the start of the video) was coded 1 and the control group 0. The model fit was reasonable at best: χ2 = .07; CFI = .81; df = 1. Again, we find no meaningful mediation effects (see Figure 6). Exposure to the basic transparency information shown before the start of the video significantly affects attitude toward the advertisement neither via persuasion knowledge (indirect effect = .01, Z = .55, p = .58) nor via privacy concerns (indirect effect = −.001, Z = −.02, p = .98). The direct effect, in line with the regression analysis reported above, is −.37, Z = −2.17, p = .03. The total effect is −.36, Z = −2.07, p = .04. We conclude that H3 is not supported. This means that persuasion knowledge and privacy concerns do not mediate the effect of exposure to transparency information on source attitude (and issue attitude and attitude toward the advertisement).

Parallel mediation model attitude toward advertisement.

Discussion

In this study, we set out to test the extent to which different transparency information disclosures activate persuasion knowledge and privacy concerns. We also examined how persuasion knowledge and privacy concerns could affect political attitudes and behavioral intentions. We proposed a parallel mediation mechanism, with persuasion knowledge and privacy concerns as mediators. We found no support for the proposed mechanism. Moreover, although we tested nine different variations of transparency information disclosures, exposure to only one variation (i.e. basic transparency information shown concurrent with the start of the video advertisement) had a significant direct effect on people’s attitude toward the source of the political advertisement, and only one (i.e. basic transparency information shown before the start of the video advertisement) had a direct effect on people’s attitudes toward the advertisement (but no direct effects on people’s attitudes toward the issue or on behavioral intentions). Contrary to expectations, the direct effect we found on source attitudes was not negative but positive. It could be that the issue advertisement we used as stimulus material was not salient or controversial enough, and that people might respond differently to transparency information accompanying (a) more controversial issues, and (b) ads from political parties rather than unions (in other words, “less trustworthy” information; see also Campbell and Kirmani, 2000; Isaac and Grayson, 2017).

When we look at transparency information disclosures from the perspective of European lawmakers, the purpose is not to directly affect citizen attitudes or evaluations but to induce persuasion knowledge (see Dommett, 2020; DSA, 2020). Our findings reveal that transparency information disclosures can successfully increase persuasion knowledge. More specifically, while regulatory interventions are not always evidence-based, we find that the transparency information disclosures that are based on actual law (proposals) are most effective (i.e. HAA, DSA). In other words, the current proposed regulatory interventions seem effective in achieving a desired outcome: increasing persuasion knowledge. While this study does not find evidence for subsequent mediation, the persuasion knowledge literature shows that increased persuasion knowledge can bolster citizens’ defenses against undue influence (Amazeen and Wojdynski, 2019; Boerman et al., 2017; Eisend and Tarrahi, 2022; Kruikemeier et al., 2016; Tutaj and Van Reijmersdal, 2012).

In comparison to Binder et al. (2022), who looked specifically at three levels of information about the granularity of targeting and found that these variations did not increase “targeting knowledge,” this current study tests more diverse and elaborate transparency information (e.g. information about the source of the ad, the money spent, the number of people reached). Moreover, the current study used a black-background, white text stimulus, while Binder et al. (2022) used a more unobtrusive design in line with the house style of Facebook. This current study mimicked a YouTube interface, but Binder et al. (2022) mimicked a Facebook interface. Finally, Binder et al. (2022) specifically meant to activate targeting knowledge, and this study focused on the more general persuasion knowledge.

This current study tested regulatory approaches to transparency and thus sometimes used targeting information alongside more general advertising information to activate persuasion knowledge. For example, the US Honest Ads Act condition only mentioned that “this ad is paid by FNV,” but disclosed no information about targeting parameters. This intervention thus provided “agent knowledge,” but not “target knowledge” (Friestad and Wright, 1994). The DSA condition, by contrast, also mentioned targeting information: “This is an ad of FNV. FNV shows you this ad on the basis of information about your income, age and gender.” The French and Irish conditions also included information about targeting parameters, and so did the other conditions. But only the DSA and the HAA conditions affected persuasion knowledge, which suggests that it matters what specific information is in the transparency information (congruent with Wojdynski and Evans, 2016).

This study is limited in that it cannot explain why certain interventions do increase persuasion knowledge, and others do not. Future research could focus more systematically on the differences in effectiveness of variations in transparency information. In a similar vein, and in line with Dommett (2020), it remains unclear when lawmakers consider a transparency intervention effective. Should the intervention activate general persuasion knowledge, or more specific targeting knowledge (Binder et al., 2022)? Is the intervention still considered effective if it not only increases persuasion knowledge, but also affects advertisement effectiveness?

The findings of this current study and the findings of Binder et al. (2022) suggest that general persuasion knowledge might be easier to activate than specific targeting knowledge, potentially because targeting is an ill-understood phenomenon (Binder et al., 2022; Dobber et al., 2018) that is, therefore, challenging to activate. Future research should attempt to measure how much people already know before exposure and what people have actually learned about targeted advertising after exposure to transparency information.

This study envisioned privacy concerns as a mediator, but found no evidence for this, potentially because privacy concerns are a more stable characteristic that is more fitting as a moderator. Alternatively, privacy concerns may have been unaffected because the targeting information went unnoticed by many people. This would suggest that the design and the location of the information are paramount (see also Wojdynski and Evans, 2016). However, analysis of the effect of exposure to transparency information on privacy concerns for only those who remembered noticing the information also yielded no significant effects (see Online Appendix B). As such, the findings suggest that policymakers do not need to be concerned with inducing privacy concerns as a side effect. In a similar vein, our findings provide only limited evidence for other side effects, such as a decrease in attitudes toward the advertisement and an increase in attitudes toward the source, and only for very specific conditions.

The evidence for potential side effects on political attitudes and behavioral intentions is limited because one can ask questions about the robustness of this study’s findings about the interventions’ direct effects on political attitudes and behavioral intentions. The findings about the effects of exposure to transparency information and persuasion knowledge are robust. But the analysis of data for only those people who remember seeing the transparency information shows that the direct effects on source attitude, and advertisement attitude for the larger group, do not hold for the smaller ATT group. In fact, for this smaller ATT group, it is the tachometer that negatively affects people’s advertisement attitudes and behavioral intentions. This discrepancy suggests that we should not overinterpret the potential for side effects of transparency information on political attitudes and behavioral intentions. In addition to this, the discrepancy suggests that attentive viewers are affected by different interventions than non-attentive viewers. In reality, a large share of viewers does not notice or does not remember noticing transparency information. In this study, only 30% of the participants remembered noticing the stimulus.

Policymakers might be concerned with this low number. The 30% found in this study is on the lower end in comparison with the literature on sponsorship disclosures. Kruikemeier et al. (2016) found that 68% recalled seeing a sponsored label on a Facebook ad. Boerman and Kruikemeier (2016) found that 20% noticed sponsored labels that accompany tweets. Evans et al. (2017) found that 33.3% remembered seeing “sponsored” in an Instagram post’s hashtag, while almost 62% remembered seeing “paid ad” in the same setting. Wojdynski and Evans (2016) found in an eye-tracking study that it matters where the disclosure is displayed on the screen: 90% of people paid attention to the disclosure when the disclosure was displayed in the middle, while 40% of people in the top condition, and 60% of people in the bottom condition looked at the disclosure. Taken together, an important drawback of information disclosures is that many people do not see them or remember them.

As such, the findings from this study underscore the limitations of citizen-centered interventions. Citizens are easily overburdened in an already information-rich digital environment. Adding to the cognitive load with detailed transparency information might be too demanding for (many) citizens. Already, many citizens seem “blind” to information disclosures (see “banner blindness”; Benway, 1998). Repeated exposure to such information might only increase banner blindness. It might be better to focus on supply-side interventions to decrease the information asymmetry. Academics and policymakers are concerned with the information asymmetry between “those holding the data” and individual citizens (e.g. Dommett, 2020; Helberger et al., 2021; ICO, 2018; Susser et al., 2019; Tufekci, 2014; Zuiderveen Borgesius et al., 2018). The findings of this study warn against over-reliance on transparency interventions and emphasize the importance of more attention to the form in which the information is provided.

Supplemental Material

sj-docx-1-nms-10.1177_14614448231157640 – Supplemental material for Shielding citizens? Understanding the impact of political advertisement transparency information

Supplemental material, sj-docx-1-nms-10.1177_14614448231157640 for Shielding citizens? Understanding the impact of political advertisement transparency information by Tom Dobber, Sanne Kruikemeier, Natali Helberger and Ellen Goodman in New Media & Society

Footnotes

Correction (April 2023):

The Funding section of this article has been updated since its original publication.

Funding

The author(s) received financial support for the research of this article, from the Knight Foundation Fund of the US and from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (grant agreement No 949754).

Supplemental material

Supplemental material for this article is available online.
