Abstract

This article reorients research on agentic engagements with algorithms from the perspective of autonomy. We separate two horizons of algorithmic relations – the instrumental and the intimate – and analyse how they shape different dimensions of autonomous agency. Against the instrumental horizon, algorithmic systems are technical procedures ordering social life at a distance and using rules that can only partly be known. Autonomy is activated as reflective and informed choice and the ability to enact one’s goals and values amid technological constraints. Meanwhile, the intimate horizon highlights affective aspects of autonomy in relation to algorithmic systems as they creep ever closer to our minds and bodies. Here, quests for autonomy arise from disturbance and comfort in a position of vulnerability. We argue that the dimensions of autonomy guide us towards issues of specific ethical and political importance, given that autonomy is never merely a theoretical concern, but also a public value.

Introduction

The concept of autonomy has come to dominate contemporary notions of self-determination and free will (Taylor, 1989). It has traditionally connoted independence and underpinned arguments for the moral and political values of freedom (Mokrosinska, 2018). At the core of autonomous agency is the ability to make and enact choices that correspond with one’s reflectively constituted self. The underlying reasons, values and motives of one’s behaviours and decisions ‘should be one’s “own” in some relevant sense’ (Mackenzie, 2014a: 17). In the midst of current technological developments, however, it is often not clear whether and how behaviour is one’s own. Algorithmic systems, governed by formal yet often opaque rules, make decisions that matter in terms of lived lives, such as what kind of information shapes our worldview, who is recommended to us as a potential partner, how many work gigs we can get as platform labourers and whether we can get credit and at what rate.

The way technologies press against notions of self-directed action suggests that holding on to a clearly bounded notion of self-determination is becoming increasingly difficult (Schüll, 2012; Sharon, 2017). Schüll (2012), for instance, describes how machine gamblers in Las Vegas forget themselves while the experience of flow carries them forward; as they play, they are also ‘played by the machine’. Similarly, personal experiences with recommender systems and self-tracking devices illustrate how one can overlook oneself when relying on the guidance of devices and services (Karakayali et al., 2018; Schwennesen, 2019). A fine line can separate independent action that takes advantage of tracking of sleep or diet to optimize wellbeing and what turns out to be disturbingly controlling, underlining the importance of specific, here-and-now contexts when assessing autonomous agency.

These and similar examples urge us to explore how autonomy is at stake when people engage with algorithmic systems. We adopt Seaver’s (2019b: 419) definition of algorithmic systems as ‘dynamic arrangements of people and code’, emphasizing that it is not the algorithm but the overall system that has sociocultural effects. Relations, on the other hand, can be defined as algorithmic when relationships to self, others or society more broadly become mediated by algorithmic technologies. We suggest that previous research on human–algorithm relations grapples with tensions around autonomy but typically does not make them explicit. People share private space and confidential information with algorithmic systems and use them as aids in the construction and cultivation of self (Karakayali et al., 2018). They may engage in active self-work to align themselves with digital infrastructures and the perceived normativities they enforce (Cotter, 2019; Savolainen et al., 2022). In these processes, moral, personal and bodily boundaries – central from the perspective of autonomy – become crossed, negotiated and re-established.

We argue that paying close empirical attention to moments of alignment and friction with algorithmic systems can be enriched by distinguishing dimensions of autonomy in human–algorithm relations. We demonstrate that everyday practices are not merely subject to algorithmic logics; rather, people actively respond to data and algorithms, ranging from actual technical operations to their imagined effects, in their quests for autonomy (Bucher, 2018; Kennedy, 2018; Lupton, 2019). Autonomy is often considered an entity that a person can ‘have’ and others can control or manipulate, but this narrow view of autonomy is insufficient for examining our myriad relations to algorithmic systems (Owens and Cribb, 2019; Tanninen et al., in press). People may, for instance, willingly outsource some tasks and operations to such systems and endorse certain requirements – like ceding control over their data for algorithmic processing – when they trust the technology to have been designed competently and with their best interests in mind (Steedman et al., 2020). The relational nature of autonomous agency drives us to think about autonomy more reflexively, for which we need conceptual resources that can help explore how dimensions of autonomous action play out in algorithmic relations. To advance the current debate, then, we take a closer look at how autonomy is activated in practice through design choices, resistance and intimate engagements with technologies. Supporting this approach, Hayles (2017) suggests that in relation to algorithmic systems, autonomy is deeply rooted in everyday decision-making practices:

While traditional ethical inquiries focus on the individual human considered as a subject possessing free will, such perspectives are inadequate to deal with technical devices that operate autonomously, as well as with complex human-technical assemblages in which cognition and decision-making powers are distributed throughout the system. (p. 4)

Below, we first develop our approach to autonomy as a lens on human agency and move on to analysing dimensions of autonomy in light of two horizons of algorithmic relations: the instrumental and the intimate. The discussion foregrounds how both horizons are shaped by feedback loops that characterize algorithmic systems. We argue that different facets of autonomy offer conceptual signposting for making sense of forms of agency in relation to algorithmic systems. Acknowledging dimensions of autonomy can enable us to live better with algorithmic systems because they can make us more aware of and reflective about precisely how algorithms and autonomy are intertwined. Dimensions of autonomy contain elements of societal critique, as they pin down what troubles and presses against agency in the everyday. Knowing what harms lie in algorithmic systems and how we might be harming ourselves by using them paves the way for attempts to disconnect from their most damaging aspects.

Our discussion emphasizes that algorithmic relations ‘become’ in and through a dynamic interplay between algorithmic technologies and people, who contribute to them through their actions and data traces (Bishop, 2019; Bucher, 2018). The figure of the feedback loop teases out tensions of autonomy in the algorithmic age, most notably because it points to the difficulties of locating agency in hybrid, distributed and mutually reinforcing systems. Both human and machine can begin to self-correct and modify in response to the other’s signals and stimuli, as if acting in unison. In many ways, we are the algorithm, and the algorithm is us.

Autonomy in light of the instrumental and the intimate

Relational notions of autonomy, outlined in practice-based understandings of value (Helgesson and Muniesa, 2013) and feminist ethics (Mackenzie, 2014a; Mackenzie and Stoljar, 2000; Westlund, 2009), help answer the question of exactly what algorithmic technologies are doing to us when we use them, trust them and become ourselves with their guidance. When defined in this way, autonomy sensitizes us to the less stable and more ambivalent aspects of algorithmic relations that are crucial for thinking about how we live – and want to live – with algorithmic systems. To outline how such systems give rise to questions of agency, we propose two interrelated but analytically separable horizons of human–algorithm relations where autonomy is at stake: the instrumental and the intimate. We arrived at this distinction in human–algorithm relations – and the dimensions that elaborate on what is at stake with this distinction in terms of autonomous agency – by bringing the literature on people’s agentic engagements with algorithms into dialogue with notions of autonomy. The two horizons are offered as framing devices; windows onto algorithmic developments that enable us to observe the human–algorithm relations from different angles and at different moments.

The instrumental horizon defines algorithmic systems as socio-technical structures that order social life at a distance and according to formal rules. In terms of autonomy, the instrumental horizon is at play when people encounter systems’ attempts at efficient and rule-based management of social action. Instrumental reason connotes a technical, formal and impersonal orientation to the world, originating in science and technology but creeping into other cultural realms. Algorithmic technologies introduce forms of design-based control and automated rules, making decisions about and for us in ways that have practical impact on our lives. They are concerned with making contextual and messy social lives more governable through quantification, classification and the establishment of standardized procedures. Algorithms – the term carries a similar meaning to ‘recipe, process, method, technique, procedure, routine’ (Knuth, 1997: 4) – then appear as ‘the latest instantiation of the modern tension between ad hoc human sociality and procedural systemization’ (Gillespie, 2016: 27).

However, algorithmic feedback loops are not implemented merely to govern and order social action. In addition to ‘harder’ aims of control, a ‘softer’ aim is to build more intimate human–machine relations (Ruckenstein and Granroth, 2020). Scholars have pointed out how digital surveillance and algorithmic technologies are used to seek increasingly personalized and emotionally based connections. Fourcade and Healy (2017) describe how data-driven developments shape consumer relationships and illustrate how private spaces, personal practices and confidential information become transparent to algorithmic processes:

The old classifier was outside, looking in. The firm tried to guess what you liked based on some general information, and often failed. The new classifier is inside, looking around. It knows a lot about what you have done in the past. Increasingly, the market sees you from within, measuring your body and emotional states, and watching as you move around your house, the office, or the mall. (p. 23)

With these intimacy-seeking undercurrents, companies aim to become participants in people’s lives and promote reciprocity. Here, company actions depart from the instrumental horizon and support and blend with a horizon we call ‘intimate’, which refers to what is closely held, private and selectively shared with trusted others (Yousef, 2013); in this context, the intimate horizon relates to those qualities in algorithmic encounters.

Organized under these two horizons, we present four dimensions of autonomy that the literature on people’s agentic engagements with algorithms suggests as a response to instrumental and intimate tendencies. Our account takes on the dual task of outlining both how the developments in human–algorithm relations press against notions of autonomous agency and what kind of room they leave for its rehearsal. Autonomous agency appears to be co-shaped in relation to algorithmic systems by their limiting and enabling features. Specifically, then, in our account, autonomy appears as a situational achievement, constituted by reflective, adjustive and protective behaviours vis-à-vis algorithms and their imagined effects.

Dimensions of autonomy

Research in the interdisciplinary field of digital media, sociology and anthropology has gradually moved towards empirical explorations that study how algorithmic systems become experienced and responded to in daily life. Based on our prior knowledge of the field, we identified key social scientific texts that have been crucial in guiding the scholarly discussion along these lines (Bishop, 2019; Bucher, 2017, 2018; Kennedy, 2018; Lomborg and Kapsch, 2019; Lupton, 2019; Schüll, 2012; Seaver, 2017, 2018) and traced their citations to amass a library of sources. After familiarizing ourselves with the literature, we asked what its recurring findings suggest in terms of autonomous agency. At this stage, we also sought additional theoretical approaches and empirical research on autonomy. By cross-fertilizing these literatures, we developed an understanding of autonomy that is relevant to daily life and speaks to actual engagements with technologies. Notably, much of the research that appears relevant to how algorithmic systems threaten or enhance autonomous action explores the field of digital media. This suggested to us that online services and platforms have become important for probing autonomy in the algorithmic age; people enthusiastically use digital services but are also aware of how they might threaten their notions of free will and independent action.

While our work is grounded in and enters into dialogue with the research on everyday agency in relation to algorithmic systems, we emphasize that our aim is not to offer a systematic literature review. Research in this area is still emerging and consists largely of qualitative approaches that speak to various interdisciplinary aims and different disciplinary traditions rather than a fixed research perspective. Instead of a review, we aim to elaborate on the concept of autonomy and to reframe and recontextualize the empirical findings with it. In so doing, we offer conceptual guidance and spaces within which to explore contemporary developments in human–machine relations in a more open-ended manner – for instance, without a fixed starting or end point in power or resistance.

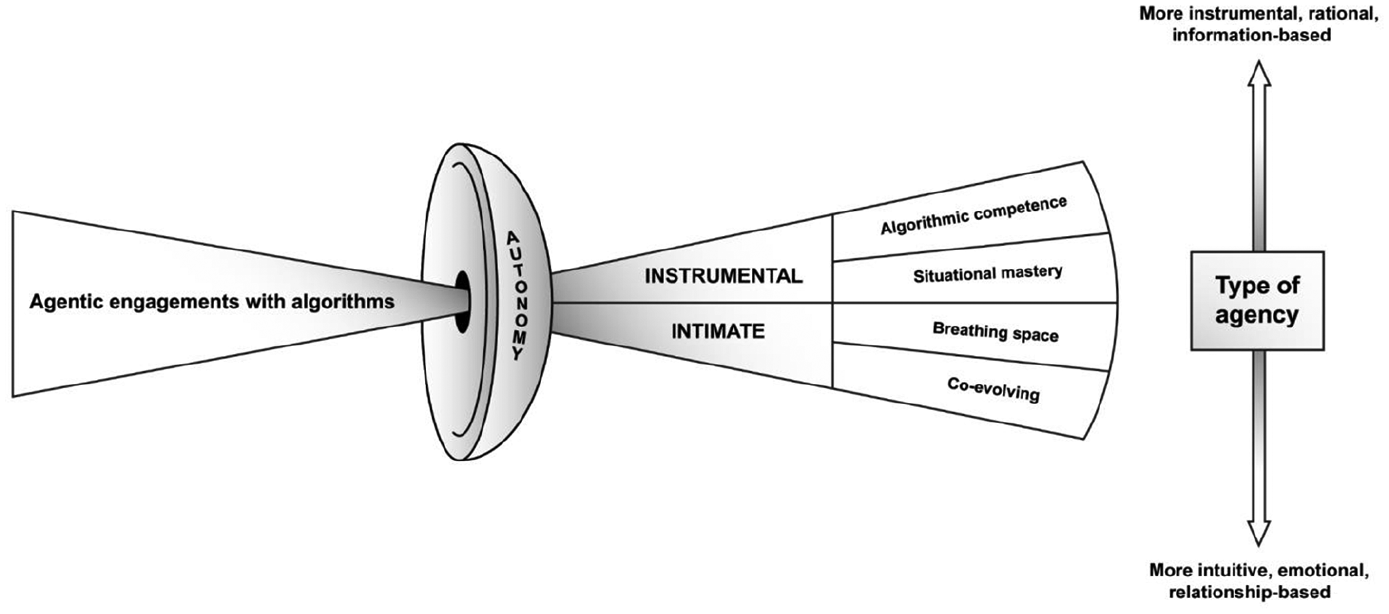

After rounds of writing and discussions with each other and external readers, we settled on four dimensions of autonomy in relation to algorithmic systems: autonomy as algorithmic competence, situational mastery, breathing space and co-evolving (Figure 1). These different dimensions are activated by particular instances in the human–algorithm relation. Our approach relies on acknowledging that while the instrumental and intimate horizons push against self-determination, each is also associated with distinct behaviours among people striving to enhance or maintain their sense of autonomy. In the instrumental horizon, calculative, system-oriented agency is emphasized, while the intimate horizon resonates with affective negotiations and circumstantial acts of protecting personal space. By arguing that such situational, reflexive and adjustive behaviours are in fact constitutive of autonomy in analytically separable ways, we highlight that autonomy is never fixed or possessable; rather, it is a multidimensional relation that calls for actively making distinctions between the self and the world.

Figure 1. Autonomy as a lens to human agency in relation to algorithmic systems.

The instrumental horizon – reason and mastery

The instrumental horizon aids in foregrounding algorithmic technologies as distinction and decision-making systems that are employed to promote efficient ordering of ever-new domains of social action, perpetuating a logic of legibility, automated management and ‘programmed coordination’ (Bratton, 2015: 41). For instance, to create potential matches, the dating app Tinder’s algorithmic system ‘adjusts who you see every time your profile is Liked or Noped’ (Iovine, 2021), while Facebook has a friend-sorting algorithm that ranks contacts based on their closeness to users (Constine, 2018).

The instrumental horizon evokes questions of autonomy that can be traced back to algorithms as ‘the decision-making parts of code’ (Beer, 2017: 5). Algorithmic systems’ technical agency precedes and operates alongside human agency, making ‘myriad of small and large decisions with concrete effects’ (Rieder, 2020: 256; see also Hayles, 2017). From this perspective, we are able to capture specific aspects of algorithmic relations that trouble notions of autonomy as reasoned, reflective and informed choice and as practical mastery – in other words, the ability to not just decide but to enact one’s decisions, reasons and values amid technological constraints.

Below, we look at how the aforementioned features of algorithms promote system-oriented, information-seeking and calculative behaviours. We highlight how autonomy is activated in relation to the structuring of future choice and action by algorithmic feedback loops. More specifically, we argue for the notion of autonomy as the capacity to reflect on our choices and actions in a critical, informed and rational way (Beauchamp and Childress, 2012; Friedman, 2003; Meyers, 1989). This requires users to develop novel skills and capabilities to understand and act on algorithmic operations. As we demonstrate below, because the outcomes of algorithms’ complex calculative steps link directly back to user experience and lived lives, such a system-oriented attitude is becoming increasingly critical in and for autonomy quests.

Autonomy as algorithmic competence

People’s capacity to do, maintain and negotiate autonomous agency in relation to algorithmic systems increasingly hinges on their algorithmic competence, such as the capacity to identify algorithms at work and to sufficiently understand and critically reflect on algorithmic logics (Dogruel, 2021; Gran et al., 2021). Information access on social media serves as an example: if we are not aware of social media algorithms or knowledgeable enough about how they operate, we may not think of how our current and future options are silently being shaped by them. This problem is even more pressing because algorithmic feedback loops mean that ‘users are performatively involved in shaping their own conditions of information access’ (Gran et al., 2021). In other words, the decisions we make today are constrained and enabled by data about prior decisions made by ourselves and others.

Research suggests that people might be unaware of or uncertain about the decision-making functions of algorithmic systems. Over half of 40 US citizens interviewed by Eslami et al. (2015) reported being unaware that Facebook algorithmically organized their news feeds. As the interviewees learned about this curation and were shown how their feed would look unadulterated, some had epiphanies about former sequences of events and relationships. For example, one woman had assumed that she had not seen a Facebook friend’s posts because the friend was hiding them from her, saying: ‘I always assumed that I wasn’t really that close to that person’. After learning about algorithmic functions, however, she realized her mistake. Here, we begin to see the power of algorithmic technologies in covertly influencing the interpretations that guide how we navigate the world and construct our identities. A more recent German interview study found that while all 30 respondents knew that algorithms are widely used in digital services, their practical awareness of them was contextual, depending, for instance, on the area of application. Moreover, they tended to downplay the potential influence of algorithms on their decision-making (Dogruel et al., 2020). Meanwhile, a representative study carried out in Norway (Gran et al., 2021), a wealthy and highly digitalized Nordic country, found that 60% of respondents reported having little to no awareness of algorithms in digital media. As in other studies, sociodemographic factors such as lower education and higher age correlated with being unaware (Cotter and Reisdorf, 2020).

In light of these studies, worries about the lack of independence and awareness of the subject’s decision-making in the algorithmic age do not seem unfounded (Beer, 2009; Berry, 2014: 11; Slavin, 2011). As Beer (2017) puts it, ‘there is a sense of a need to explore how algorithms make choices or how they provide information that informs and shapes choice’ (p. 5). From this perspective, algorithmic systems constitute a kind of ‘unconscious’ that influences deliberation, interpretation and action beyond the self-awareness of subjects and collectives (Beer, 2009). These concerns suggest that algorithmic systems put pressure on notions of autonomy as reflective and informed processes of self-definition, decision-making and evaluation of options (cf. Meyers, 1989). Such processes require introspection, but if part of the cognitive processing that precedes choice is outsourced to algorithmic systems and feedback loops (Hayles, 2017), how can one endorse a preference, choice or interpretation as one’s own? With algorithmic systems, there seems to be a structural opposition to transparency and accountability for subjective deliberation.

Algorithmic competence offers a much-needed pushback mechanism: it aims to make visible aspects of the background processing carried out by algorithmic systems. If we are unable to reflect on and critically assess algorithmic systems’ decision-making rules, notions of autonomy as informed and self-aware deliberation cannot be sustained. Researchers have thus unsurprisingly called for policies to improve people’s algorithm literacy and awareness (Dogruel, 2021; Gran et al., 2021). In most cases, these calls centre on awareness and knowledge about the different types of algorithms and their functions (Dogruel, 2021). Critical reflection comes across as an effort to build on this technical proficiency. However, qualitative studies that highlight the various forms algorithmic expertise can take argue that algorithm-related knowledge rarely aims at technical precision and focuses instead on practical value and normative evaluation, and even takes on speculative forms like ‘gossip’ and folklore (Bishop, 2019; Bucher, 2018; Lomborg and Kapsch, 2019; Ruckenstein, 2021). Broadening algorithmic competence seems highly relevant in the face of increasingly complex machine learning-based systems whose decision-making may not be wholly explainable with the original model – putting pressure on ‘human-scale reasoning and styles of semantic interpretation’ (Rieder, 2020: 246). Critical awareness of the social relations that undergird algorithmic systems is likely to be more attainable than precise technical understanding. It promotes self-aware agency by creating distance between the user and the technology, and opening up time and space for reflecting on, interrogating and evaluating the assumptions, motivations and potential biases that proprietary algorithms encode as our background cognizers (Lomborg and Kapsch, 2019).

Autonomy as situational mastery

The second identified dimension of autonomy begins with the notion that capacities for technical understanding or critical reflection have little importance if people cannot exercise them. For example, one might have the algorithmic competence to critically evaluate Facebook’s data practices and eventually wish to boycott the company. Yet, this does not mean that one would be protected from Facebook’s data harvesting, as the platform is known to have collected and processed data even regarding people who never signed up for Facebook (Singer, 2018). Autonomous agency thus concerns more than inner reflection and deliberation. It requires enactment: the ability to navigate the opportunities and constraints of one’s environment in ways that support self-chosen goals and values.

From this perspective, autonomy appears as situational mastery that relies on skills and creativity to make use of or appropriate the affordances suggested by technologies. Affordance refers to action possibilities opened by technological artefacts (Faraj and Azad, 2012). Notably, affordances are about not only the technology but also how technologies are experimented with and what is expected of them. Users of algorithmic technologies may probe their decision-making, engaging in a kind of mundane reverse engineering by ‘examining what data is fed into an algorithm and what output is produced’ (Kitchin, 2017: 19). For instance, an Instagram influencer studied by Cotter (2019) noted that ‘since Instagram doesn’t disclose all the specific details for their algorithm, it’s up to users to A/B test what works’ (p. 903).

Against this backdrop, empirical research suggests that situational mastery develops when people explore technologies and their specific features. Algorithms transform from background infrastructure to a consciously exploited affordance, materializing simultaneously as techniques of power and as artefacts that allow us to do things. At least two types of approaches can be identified in current research that supports autonomy as situational mastery. First, to increase their algorithmic rank, people may start to act in line with the rules they perceive algorithms to encode. For instance, believing that high engagement is a precondition for getting to Instagram’s Explore page, users start to produce content that they expect will gain recognition. The interactive system aids in visibility pursuits, as users receive constant feedback of what ‘works’ on the platform (Savolainen et al., 2022). Second, people may seek to ‘manipulate the rankings data without addressing the underlying condition that is the target of measurement’ (Sauder and Espeland, 2009: 76). Such practices are often referred to as gaming, which opens up the possibility of ‘mobilising algorithms toward ends that were not originally intended’ (Velkova and Kaun, 2019).

Game-playing is indeed a common frame in the literature on user agency in relation to algorithmic systems, calling attention to how their rule-based nature can prove situationally empowering (Chan and Humphreys, 2018; Cotter, 2019; Haapoja et al., 2020; Kear, 2017). Studies offer examples of situational mastery: by inferring the broader logic and general rules of the algorithmic ‘game’, users start to play along (Haapoja et al., 2020). They may associate game-playing with positive meanings such as ‘innovation, the ability to problem solve, independent achievement, and accumulating capital’ (Cotter, 2019: 906). However, in terms of autonomy as self-endorsed action, game-playing is a curious notion, as the game creates a ‘distance between the individual and the role they play’, allowing ‘for the performance of behaviours that might otherwise feel cruel, alien or meaningless’ (Kear, 2017: 354). For an increasingly large number of people, playing algorithms becomes a question of self-protection or survival, as, for example, in the case of platform labourers who are at the mercy of the algorithmic logic of the platform that assigns their work (Chan and Humphreys, 2018).

Although mastery in ‘playing’ algorithms might enhance the sense of being the cause of observable and even desired effects in the world, any control achieved can only ever be situational and opportunistic. Platform companies share an interest in dominating technological affordances. Therefore, their engineers are constantly tracing and disabling unintended and ‘manipulative’ uses of algorithms (Petre et al., 2019). Getting algorithmic systems to promote a broader spectrum of values that matter in terms of individual and collective lives would require proactive efforts by companies and collective deliberation regarding both the values for which algorithmic systems optimize and the aims for which their use should be disabled. However, the instrumental horizon continues to develop largely outside of public view and discussion, meaning that algorithmic power cannot easily be spoken back to. As a result, in the algorithmic everyday, autonomy needs to be constantly negotiated and re-negotiated in relation to technologies that rank, steer and act on behaviour.

The intimate horizon – affects and attachments

In the instrumental horizon, practices that enhance a sense of autonomy are shaped by knowing and being able to learn and act on algorithmic operations and feedback loops. The deliberative, critical and executive qualities of autonomy are emphasized. However, to think of autonomous agency as culminating in rational, goal-oriented and information-based behaviour ultimately frames both personal autonomy and algorithmic developments in a limiting way. In addition to the more informed aspects of autonomy, research has called attention to the crucial role of attachments, vulnerability and emotional agency in and for autonomous personhood (Govier, 1993; Helm, 1996; Mackenzie, 2014b; Nedelsky, 1989). These aspects of autonomy are best understood as activated by a related but analytically separable development in human–algorithm relations, where the aim is not to control at a distance but to cultivate an increasingly close and emotion-based relationship with the consumer through the use of personalization-enabling algorithmic techniques (Ruckenstein and Granroth, 2020). As a result, in the everyday, algorithms are not responded to merely as cold, technical procedures but give rise to affective engagements.

Taking our cue from research findings that detail emotional friction and alignment with algorithmic technologies, we demonstrate how forming and breaking down intimacy can act as access points to the more affective and interdependent aspects of human autonomy. First, we look at how algorithmic technologies and the assumptions about behaviour and subjectivity they encode may override and devalue our sense of ‘epistemic and normative authority’ with regard to self-definitions and practical commitments (Mackenzie, 2014a: 36). Such boundary crossings violate an implicit normative order in the everyday to respect and recognize one another as separate and autonomous beings. The reason why we are so protective of personal boundaries and self-descriptions – when we feel they are being threatened – may be that we realize their fundamental fragility. Our vulnerability and openness to the influence of others is ‘an ontological condition of our embodied humanity’ (Mackenzie, 2014b: 34), and can also be harnessed in support of quests for autonomy. In the closing section of the analysis, we argue that this explains why seemingly invasive practices, such as behavioural modification and gathering personal data, can also be experienced as empowering when people feel that algorithmic technologies care for them or otherwise assist them in their self-projects. Intimacies are violated but also generated and enhanced by algorithmic systems, which may complicate attempts at their critical use.

Autonomy as breathing space

In discussing feelings triggered by algorithms, Lomborg and Kapsch (2019: 9) describe Rieke, a 54-year-old woman who experienced targeted advertisements as ‘assaults’ on her identity: ‘There were all such old-person advertisements, stuff like “your retirement savings” because it obviously branded me as being old [. . .] I was very offended’. As in this case, recognizable responses to algorithmic technologies often have to do with their inability to respect us as self-authoring persons and to recognize who we want to be. The push against self-chosen action mobilizes a quest for autonomy as breathing space, which we see as the space needed to foster goals, reasons and self-definitions. The algorithmic developments that make breathing space necessary are motivated by mainstream epistemic resources whose influence on contemporary digital culture is difficult to overstate. Developers and engineers in digital advertising, recommender systems, insurance and digital health draw largely from behavioural psychology and behavioural economics when designing algorithmic products (Tanninen et al., 2021). Behavioural approaches are focused on human aspects that are quantitatively measurable, observable and manipulable. Stark (2018), for example, argues that ‘psychometrics’ – which seek to apply ‘calculability to subjectivity’ by measuring personality and emotion through behavioural cues – are now a built-in feature of our very digital infrastructures. Relatedly, Seaver (2019a) describes how recommendation system developers speak of ‘hooking’ and ‘addicting’ people as their goal, often by exploiting psychological vulnerabilities (see also Schüll, 2012). In engineers’ worlds of reference and through their designs, users become configured as prey to be captured and held for as long as possible (Seaver, 2019a).

In practice, behaviourist design principles mean that algorithmic systems expose users to different kinds of nudges, quantifying and adapting to their response. The social media platform TikTok provides an illuminating example of machine learning-powered platform architecture. TikTok’s artificial intelligence (AI) tests the effectiveness of different signals – such as watch time (Smith, 2021) – in predicting a user’s return to the platform, recommending future content on the basis of the signals with the greatest predictive power (Waters, 2022). Technologists imagine algorithmic systems that track pupil movements, facial expressions and body postures to enable more effective, on-the-spot nudging (Murphy, 2022). The ability to process large volumes of highly detailed data to test and anticipate behaviour tightens the feedback loop’s grip by further specifying and defining proclivities. As a result, algorithmic systems may even begin to corrode our sense of epistemic authority over our actions, choices and commitments. As put by an interviewee in a study on the use of AI in health behaviour change, the new intimacy of algorithmic systems triggers unpleasant feelings of uncertainty about ‘who is the one deciding’ (Tanninen et al., in press).

The negative emotions and evaluations that algorithmic feedback gives rise to by interfering with notions of self-determination engender reactive and protective behaviours and actions. People may seek to counteract the informational feedback loops that algorithms introduce into their lives: they may strive to ‘click consciously’ (Bucher, 2018: 115) or feed the system ‘incorrect’ information in order to ensure diverse information access and avoid being surveilled, ‘hooked’ or algorithmically subjectified. One of Bucher’s (2018) interviewees states that ‘privacy online does not really exist. So, why not just confuse those who are actually looking at your intimate information? That way, it misleads them’ (p. 112). While it is almost impossible to leave behind erroneous data traces so consistently as to undermine personalization logics, we find examples like these important, because they highlight how the space of autonomy cannot be wholly undone by algorithmic invasions but is held onto as the location from which they can be reflected on and critiqued.

Designers might speak aspirationally about technologies that could ‘give us what we want, before we know we want it’, but such hopes appear dubious in light of notions of autonomous agency that treat critical self-reflection on the formation of one’s desires as constitutive of them. Relying on signals of past actions, behavioural approaches to algorithmic infrastructures are ignorant of people’s ongoing introspection and efforts, such as how we feel or think about our actions and habits and might strive to change them. By not taking such reflections into account, algorithmic systems fail to treat the interests of users ‘as fundamentally separate from . . . corporate interests’ (Rubel et al., 2020). The notion of autonomy as breathing space offers a powerful means for critiquing behaviourist epistemologies, precisely because it makes a distinction between traceable behaviour and action that is or would be endorsed by the self, which is not a given entity amenable to calculation and prediction but is in a state of self-reflexive becoming. It is precisely these reflexive qualities – not manifest behaviours – that define subjectivity.

Autonomy as co-evolving

Whereas the quest for autonomy as breathing space is triggered by the way algorithmic technologies press against the intimate sphere, autonomy is also felt as enhanced by the personal nature of algorithmic systems. Successful personalization generates pleasurable feelings of being ‘seen’ and ‘recognised’ by the algorithmic system (Ruckenstein and Granroth, 2020). Here, the intimate horizon directs attention to sharing personal space and confidential information with algorithmic systems. Such sharing means both exposing oneself and becoming exposed to the other – in this case, the algorithmic other – and eventually cultivating a relationship of growing closeness. When people’s aims and self-understandings align with algorithmic systems and feedback, fears of corporate surveillance and algorithmic control move to the background. Other considerations, like convenience and care, become emphasized, and everyday activities come off as enjoyable and active self-exploration aided by algorithmic feedback loops. For instance, focusing on experiences with the music recommendation site last.fm, Karakayali et al. (2018: 3) argue that by objectifying taste in music and offering tools for revising and modifying it, algorithms become ‘intimate experts’ that accompany people ‘in their self-care practices’. This experience of autonomy is enhanced by willingly giving up control of an aspect of one’s life to calculative systems that bring forth and enable care. People even relate to algorithmic intimacies as a developing relationship; to become closer, they must keep ‘their end of the bargain’ (Siles et al., 2020). Among Costa Rican Spotify users, this takes the form of constant feedback giving, such as ‘liking’ songs, ‘following’ artists and establishing listening patterns that the platform easily recognizes. Here, what counts is not how the system appears today but one’s trust in it: an anticipated movement or trajectory towards increasing closeness and familiarity. Algorithmic intimacies are consciously and systematically pursued and fostered in human–machine interaction.

Given algorithms’ rule-based nature and generalizing thrust, upholding algorithmic intimacy requires weighing algorithmic feedback against other ways of orienting oneself in the world. Gregory and Maldonado (2020) study food couriers working on Deliveroo and find that cyclists negotiate the ‘optimized’ routes suggested by the algorithm based on sensations of discomfort, safety and familiarity. Instead of the contextual and tacit being overridden by the rule-based algorithmic suggestions, cyclists continuously balance and work them out in practice. Schwennesen (2019) provides another example by tracing the development and implementation of a physical rehabilitation application in which sensor data on bodily movement is ‘translated and transformed into immediate digital feedback, with the aim of guiding and motivating the patient during training’ (p. 180). She finds that users who give epistemic authority to the algorithm, overlooking bodily signals, could end up enduring severe pain and physical setbacks instead of progress, causing the algorithmic care arrangement to break down. Negotiating digital feedback in relation to one’s bodily sensations allowed the algorithmic system to better achieve its aims and the patient to uphold a sense of competence and embodied self-knowledge. Algorithmic logics cannot render these contextual, affective forms of knowledge obsolete, because algorithms’ performance, to some extent, depends on them: they supplement the algorithmic assemblage with what the machine lacks.

These examples point towards processes of co-evolving: a collaboration where action and intentionality are produced in the interactions between human and machine (Kristensen and Ruckenstein, 2018). The quest for autonomy is an integral part of co-evolving: people act and become themselves with the help of and in response to algorithmic feedback and recommendation. When successful, co-evolving works to assist agentic capabilities and create a sense of being in a close, personal relationship with the technical system, that ‘we’ are in this together. However, the sense of intimacy that algorithmic personalization promotes is always on the brink of breaking down and must continuously be balanced (Kristensen and Ruckenstein, 2018). Autonomy as co-evolving is made unstable by the discrepancy between the affective human and the fundamentally instrumental and indifferent machine. Frequent failures in personalization remind users that while algorithmic processes may give the impression of intimate familiarity and support, technologies can never ‘know’ or care for a person in their singular individuality, only as a unique composite of correlational features.

Balancing autonomy in relation to algorithmic systems

We have argued that the notion of autonomy is a particularly valuable lens for understanding agency in relation to algorithmic systems because it places specific demands on agency; for instance, autonomy emphasizes the useful but easily overlooked distinction between action and self-endorsed action. From this perspective, autonomy provides conceptual guidance for assessing the quality of self-directedness. With algorithmic decision-making, choices become anticipated by and interlinked with the behaviour of other agents, machine learning models, big data sets and the interactions between them: the boundary between self and others on which traditional notions of autonomy rely has become increasingly muddled. If we define autonomy too narrowly, we risk neglecting the fact that people exercise autonomous agency amid conditions of design-based control and infrastructural pressures, as well as excluding, a priori, any discussion of what might be worth preserving in human–machine interdependencies. Thus, inspired by earlier research findings, we have argued for a multidimensional view of autonomy as an aspect of relationships that is actively worked on and negotiated. When defined this way, we can hold on to the value of autonomy while recognizing the contextual and situational nature of agentic engagements with algorithmic systems.

We have distinguished two horizons of algorithmic developments where autonomy is at stake. Instrumentally, algorithmic systems and practices are used to order information and manage social cooperation at a distance, according to rules that can only partly be known. Intimately, the same systems and practices cultivate increasingly close human–machine relations. The two horizons are of course interrelated and their aims mutually reinforcing: the more intimately the system ‘knows’ the proclivities of each user, the better it can optimize social coordination that serves instrumental goals, as by matching advertisers with the right customer profiles. Thus, the distinction between the instrumental and intimate should not be viewed as objectively existing. Rather, the horizons work as framing devices that enable us to reflect on algorithmic relations that trouble the value of autonomy on different fronts simultaneously.

In tracing what the intimate and instrumental horizons mean in terms of autonomy, we have concentrated on the four dimensions of autonomy that we identified with the aid of research on everyday responses to algorithmic systems: autonomy as algorithmic competence, situational mastery, breathing space and co-evolving. These dimensions are not meant to be exhaustive but are offered as a guide for future research: their aim is to make agency in algorithmic relations visible by highlighting how notions of autonomy are challenged, supported, destabilized or revitalized. Through the first two dimensions, activated by the instrumental aims of algorithmic systems, autonomy appears as the capacity to reason about one’s decision-making in the context of automated systems of social ordering and as the ability to enact one’s decisions, reasons and values amid technological constraints and possibilities. Of course, the instrumental nature of algorithmic technologies may in some cases enable more reflective and reasoned – and thus more autonomous – behaviour by representing, organizing and shaping individuals and their habits as objects of improvement (Kristensen, 2022). This is especially true of certain forms of self-tracking: regarding eating and exercise, for instance, where one previously acted upon impulses, wearable and biometric technologies may promote more deliberate, information-based and goal-oriented behaviour. Meanwhile, the experiences of autonomy activated by the intimate horizon focus on autonomy as arising from disturbance and comfort in a position of vulnerability. Autonomy becomes featured as freely given or collaborative self-knowledge, for the sake of participating in the system in ways consistent with one’s own reflexive identity. All four dimensions of autonomy are simultaneously present in current algorithmic relations, suggesting that we will continue to grapple with pressures against our self-determination and with desires to live well in intimate relations with (the aid of) our algorithmic companions.

Ways forward

The four dimensions of autonomy and the examples that illustrate them support critical engagement with algorithmic systems by pinning down everyday tensions and violations. Ultimately, through such knowledge, we can begin to offer more precise epistemological resources to the building and regulation of algorithmic infrastructures (Draper and Turow, 2019). The conceptual and ethical distinction between action and self-endorsed action that engagements with autonomy aid in highlighting suggests that to improve algorithmic relations, we need an approach to harm that is able to reach beyond objective, quantifiable risks (Swierstra, 2015; Rubel et al., 2020). The intimate horizon is especially important in pointing out how cultivating autonomous agency in the algorithmic age cannot be seen as merely an individual responsibility: autonomy is not only about how we regard ourselves but also about how social and technological others recognize and respect us. This concerns more than how we make ourselves; it involves how we become ourselves with the help of trusted companions. The lens of autonomy underlines the fact that the failure by algorithmic systems to respect people as their own persons with inner lives, reasons and self-definitions is a wrongdoing in itself, though an ethical one, posing a constant threat to public culture. Notably, this threat is not exclusively motivated by the abstract question about the place of the human subject amid machines with superior capacities for processing high-dimensional information; rather, it is very much a practical and political issue, having to do with how individual subjects and collectives are excluded from the deliberation regarding how data about them is processed and put to use.

The focus on specific features of autonomy is especially important as the breadth of the instrumental and intimate horizons becomes ever clearer: organizations increasingly treat the everyday as consisting of arrays of numerically defined practices that can be iteratively examined and acted on. The question regarding algorithmic systems and artificial intelligence is no longer whether we should do away with them: algorithmic systems and machine learning are here to stay. Therefore, we should also focus our research efforts on how to better live with algorithmic systems so that we can steer them to account for the positive qualities that make us distinctly human – like our ability to reflect upon our choices and doings and our desires for self-determination, meaningful participation and information that concerns our lives and futures. This requires approaches that start from neither power nor resistance but take a more open-ended and situational perspective to assess technologies’ positive, negative and ambivalent features in relation to values that matter in terms of individual and collective lives. We argue that carefully assessing the different dimensions of autonomy, which provides both analytical and normative rigour, is one way to go about this. While autonomy is a more limiting term than agency, it helps in pointing out precisely what it is about algorithmic technologies that troubles agency. This approach means that explorations of autonomy can guide us towards tensions that have specific ethical and political importance, given that autonomy is never merely a theoretical concern but also a public value.

Footnotes

Acknowledgements

We thank the ADM Nordic network for supporting our work and Stine Lomborg in particular for excellent comments on how to revise our approach. Warm thanks to Reviewer 1 for engaging with our work with productive structural advice and a critical eye for detail, and to Reviewer 3 for offering useful advice for the final writing round.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Academy of Finland (grant number 332993).