Abstract

The algorithmic automation of media processes has produced machines that perform in roles that were previously occupied by human beings. Recent research has probed various theoretical approaches to the agency and ethical responsibility of machines and algorithms. However, there is no theoretical consensus concerning many key issues. Rather than setting out with fixed conceptions, this research calls for a closer look at the considerations and attitudes that motivate actual attributions of agency and responsibility. The empirical context of this study is legacy media where the introduction of automation, together with topical considerations of journalistic ethics and responsibility, has given rise to substantial reflection on received conceptions and practices. The results show a continuing resistance to attributions of agency and responsibility to machines. Three lines of thinking that motivate this stance are distinguished, and their potential shortcomings and theoretical implications are considered.

Introduction

Machines are active parts of many decision-making processes in our daily and interpersonal as well as civic and political realms. The use of algorithms in media processes has attracted considerable attention, in particular with social media, where algorithms are deployed to display and recommend content. According to recent proposals, the media have become ‘algorithmic’ or are undergoing an ‘algorithmic turn’ (Bucher, 2018; Napoli, 2014): the infrastructures and institutions of communication increasingly depend on such computational processes. Algorithms are considered more than just computer programmes carrying a set of instructions. Montal and Reich (2016) argue that algorithms are no longer procedures for calculations consisting of finite sets of rules, or ‘precise recipes’, but series of computational actions performed concurrently to decision-making at different levels of uncertainty (cf. Latar and Nordfors, 2009). In online media, machines act in roles that previously were reserved for human agents. Algorithms appear to make decisions not only on the contents we encounter but also on the ways in which our reactions to those contents further shape the media content that is provided to ourselves and others. Consequently, research has begun to consider the transformation that algorithms may bring about in agency within media (Klinger and Svensson, 2018; Lewis and Westlund, 2014).

Within the past couple of years, automated journalism has become a buzzword in both the media industry and media research. The potential of automating previously human-driven processes has been seen as challenging our understanding of journalism as a human task (cf. Napoli, 2014; Van Dalen, 2012). Research has broached various potential transformations of agency in newsrooms, such as human-agent teaming, the challenges that algorithms pose for journalistic authorship, and even the possibility of ‘algorithmic journalism’ (Dörr and Hollnbuchner, 2016; Lindén, 2017b). Several studies on automation in newsrooms have drawn from actor-network theory (ANT), which calls for an end to privileging ‘the human’ in describing social and material activity in research (Bucher, 2016; Lewis and Westlund, 2014; Primo and Zago, 2015; Wu et al., 2018). However, these theoretical approaches and suggestions are not descriptions of the actual social practices of attributing agency to machines. Remarks made in recent studies suggest that journalists do not attribute agency or responsibility to machines or algorithmic operations (cf. Milosavljević and Vobič, 2019a; Wu et al., 2018).

This research contributes to the currently developing discussion on the agency of algorithms and machines in media as well as to the research on automation in journalism. The empirical context is legacy media where algorithms and machines are introduced to perform tasks and roles that have previously been reserved for human beings. This setting provides the opportunity to explore how the agency of machines is articulated when automation is met by long-standing traditions, standards and professional ethics. The data of this research are drawn from interviews conducted with key actors in central Finnish legacy media with considerable experience with the introduction of automated processes. The concentration on leading Finnish media is motivated by some recent developments. In late 2019, the Finnish Council for Mass Media passed what appears to be globally the first ethical statement concerning the use of algorithms and automation in journalistic contexts. Within the industry, the statement and the processes leading up to it had resulted in increased attention to the use of automation and its consequences. The Finnish case thus represents the forefront of these developments and the state of the art in thinking about automation in journalism.

This article begins with an exploration of theoretical approaches to automation and machines in journalism, as well as the more general question of the agency and responsibility of machines. Recent studies offer some initial clues to why people in general, and people working in newsrooms in particular, might resist attributing agency to machines or algorithms. However, research has produced various perspectives on the agency of machines in media, and there is no theoretical consensus on algorithmic agency or responsibility. Rather than beginning with a fixed concept of agency that can be compared with machines at different levels of technical sophistication, it is argued that we must take a closer look at the attributions of agency and responsibility and the considerations and attitudes that motivate them. This is the task of the study detailed in the following sections that describe its empirical background and review the main results in terms of three main approaches to automation and agency in legacy media. In a final section, the results of the study are discussed in light of the theoretical approaches examined, and proposals for further theoretical advances are outlined.

Journalistic agency in transformation?

In recent research, the adoption and consequences of automation in journalism have been approached as technologically driven changes in processes of news production (Lindén, 2017a), as institutionally managed innovation processes (Milosavljević and Vobič, 2019b), and as a part of a broader transformation of journalism such as ‘computational journalism’ or a ‘quantitative turn’ (Anderson, 2012; Coddington, 2014). 1 It has been proposed that the prospects of automation pose an unprecedented challenge to our understanding of journalism as a human task (cf. Napoli, 2014; Van Dalen, 2012), and to received conceptions of journalism as a ‘human’ occupation, including the ‘ethnocentric’ attribution of authorship of journalism to human beings (Montal and Reich, 2016; cf. Reich and Boudana, 2013). Research has begun to seek perspectives from which to articulate the agency and responsibility of machines in journalism. It has been proposed that automation may follow the principles of human-agent teaming, with the human staying ‘in command’ by being informed of and by monitoring the behaviour of automated ‘agents’ (Lindén, 2017b). This approach implies that machines have at least some agency in a kind of collaboration with human journalists.

Some visions of automated journalism go further. Dörr and Hollnbuchner (2016) propose that in automated newswriting – which they call algorithmic journalism – human, moral agency is delegated to algorithms. Algorithmic journalism leads to ‘algorithmic agents’, and the complexity of the relationship and collaboration of these agents with humans leads to ethical challenges. Dörr and Hollnbuchner (2016) argue that journalistic ethics could be ‘embedded within code’ with journalists and technologists producing algorithms that fit with individual and organizational ethical standards. It has even been argued that machines may improve on humans. ‘Algorithmic objectivity’ refers to the idea that machines could be devoid of subjective biases and cognitive shortcomings typical of human beings (cf. Carlson, 2014; Carlson, 2017; Milosavljević and Vobič, 2019a). These approaches are reflected in discussions in the philosophy of technology, where there is considerable interest in the possibility of developing artificial moral agents: machines that take ethical considerations into account by being capable of a form of ethical reasoning (Moor, 2006; Wallach and Allen, 2009).

Others have further argued that media research is too concentrated on journalism as a profession, and that the role of technology must be brought into the foreground. Such views are often inspired by the methodological perspective provided by actor-network theory (ANT) that attempts to examine the machinery of news production and media as practice, as opposed to focusing on the product – journalistic content – or the economic and political structures of its production (Hemmingway, 2008: 25–27). It has been proposed that the ‘news network’ includes the dynamic relationships between different actors in the production, distribution and use of news (cf. Anderson, 2012; Domingo et al., 2015; Lewis and Westlund, 2014; Wu et al., 2018). The increasing interest in applying ANT in theorizing about journalistic processes is largely due to its approach to technology. ANT enables viewing technologies as actors – or actants, as they are sometimes called following Latour (2005). Automated processes and algorithms are not mere intermediaries or tools for human action, but actors that transform and alter the processes and meanings of journalism (Primo and Zago, 2015; Wu et al., 2018). Indeed, from the point of view of ANT, ‘any thing that does modify a state of affairs by making a difference is an actor’ (Latour, 2005: 71; cf. Primo and Zago, 2015; Wu et al., 2018). 2

These approaches within the research on automation in journalism are in different ways open to the attribution of agency and even ethical responsibility to current or prospective machines and algorithms. However, remarks made by participants in previous studies suggest that attributions of agency or responsibility to machines and algorithms in journalistic contexts are rare. Based on interviews with editors, Milosavljević and Vobič (2019a) claim that human beings are still considered to be ‘the dominant agents in news production and its ongoing re-invention’ (p. 16). Wu et al. (2018) set out from the ANT perspective where technologies are viewed as actants. Nevertheless, the journalist participants described newsroom processes as controlled by human beings, and one participant even noted that possible errors are always human ones, making clear that responsibility is assigned to human journalists.

Even when faced with a machine performing complicated and seemingly goal-oriented tasks that previously were reserved for human beings, journalists, other professionals and audiences may resist attributing agency to machines. Recent research on journalism provides some clues as to why journalists, in particular, might do so. Resistance to the introduction of automation in journalism has been connected with concerns for occupational implications of automation, including the displacement of journalists (Carlson, 2014; Lindén, 2017b; Montal and Reich, 2016), challenges for journalistic professionalism (Bucher, 2016; Carlson, 2014; Milosavljević and Vobič, 2019a) and risks involved in the promotion of financial profit as opposed to journalistic values and quality (Splichal and Dahlgren, 2016). One study notes that claims about the secondary status of machines and automation are a ‘discursive strategy’ that journalists deploy to maintain the distinctiveness of journalistic professionalism (Bucher, 2016). The introduction of automation has also been resisted on the grounds that machines cannot act in accordance with journalistic ethics (cf. Culver, 2016; Milosavljević and Vobič, 2019a; Van Dalen, 2012). In her extensive study developing ANT as a methodological approach to the study of the newsroom, Emma Hemmingway provides an example of how ANT can also be used to draw out disparities between human agency that is self-reflexive and intentional, and the agency of non-human actors (Hemmingway, 2008: 180–185).

These considerations are connected to debates in the philosophy of technology that also offer further and more general reasons for resisting the attribution of agency to machines. A central concept in these debates is autonomy, which is often considered the prerequisite of (‘real’) agency. In one sense of the word, a highly advanced newsbot is autonomous: it operates without the constant or concurrent decision-making of human beings. However, in another sense, a newsbot is not autonomous: it has been programmed by humans (cf. Himma, 2008). It easily appears that the true agents in these processes are the human beings whose decisions and intentions the machine is designed to carry out (Klinger and Svensson, 2018). For the same reason, we may easily resist attributing ethical responsibility to machines. In recent years, substantial concerns have been raised about human possibilities of implementing accountability to algorithms (cf. Ananny and Crawford, 2016; Diakopoulos and Koliska, 2016). This has led to proposals of ‘distribution’ of ethical responsibility or accountability across both human and non-human agents (e.g. Floridi, 2016; Floridi and Sanders, 2004). However, it has again been argued that machines lack the relevant autonomy and freedom from external and human decision-making (Johnson, 2006).

Freedom and autonomy, however, are notoriously difficult topics. A principled difference between human beings and machines in their terms is difficult to articulate. Any human action or decision can be described as determined by previous actions, choices and external factors. Beginning with P. F. Strawson’s (1962) influential account of moral responsibility, many philosophers have argued that moral responsibility is a matter of (human) practices of attribution of moral praise and blame. Largely sidestepping the issue of freedom, Strawson stressed the affective component of such attributions. Extending this approach to the agency and responsibility of machines and algorithms, we can view these attributions as ultimately up to our social practices. When faced with technological developments, different views and attitudes towards machines as well as their potential may either contribute to such attributions or make them appear dubious. Moreover, these practices are hardly immutable. As novel technologies are introduced, and our thinking and attitudes develop, new practices of attributing agency as well as moral praise and blame may emerge.

Research has provided different and often incompatible perspectives on the agency of machines in media. In general, there is no theoretical consensus on whether and when machines and algorithms count as agents, or as ethically responsible. Rather than beginning with a fixed concept of agency that can be compared with machines at different levels of technical sophistication, this research – inspired by Strawson’s insight – attempts to take a closer look at the actual attributions of agency and responsibility, and to uncover the considerations and attitudes that motivate such attributions. This is the task of the study detailed in the following sections: it sets out to find out how the agency and responsibility of machines and algorithms are articulated in the context of legacy media where algorithms and machines have been introduced to perform tasks and take over roles that have previously been reserved for human beings.

Engaging with the newsroom

The study is based on interviews with individuals with considerable insight into the contemporary development, future and implications of automation in legacy media where algorithmic means have been and currently are introduced and the consequences of automation are experienced and reflected upon. The seven interviews were conducted in person between September and November 2019 with each interview taking between 60 and 150 minutes. The interviews took place at the offices of the participants, and many of the participants also showcased their working environments. The interviews were audio recorded, and key portions were transcribed and translated. The discussions were semi-structured: a number of themes for discussion were taken up with the interviewer asking spontaneous follow-up questions. The participants were asked to describe their views of the current state and future of automation and its role in journalism. They were also asked about the key challenges, including ethical issues, that automation may bring about. These questions enabled tracking the ways in which agency and responsibility were attributed. Different examples of automation that the participants provided, and the distinctions they made between different types of automation, were grouped and distinguished as a background for the rest of the results. The responses were then grouped in accordance with how they related to or answered the main research question. Recurring themes and concepts were labelled, especially if they were connected to differing views of the agency of machines or to the perceived changes in agency due to technology. In this way, several such themes were detected within the responses of individual participants. These labels were finally connected as patterns or broader approaches to the transformation of agency. Quotations were selected to present these approaches.

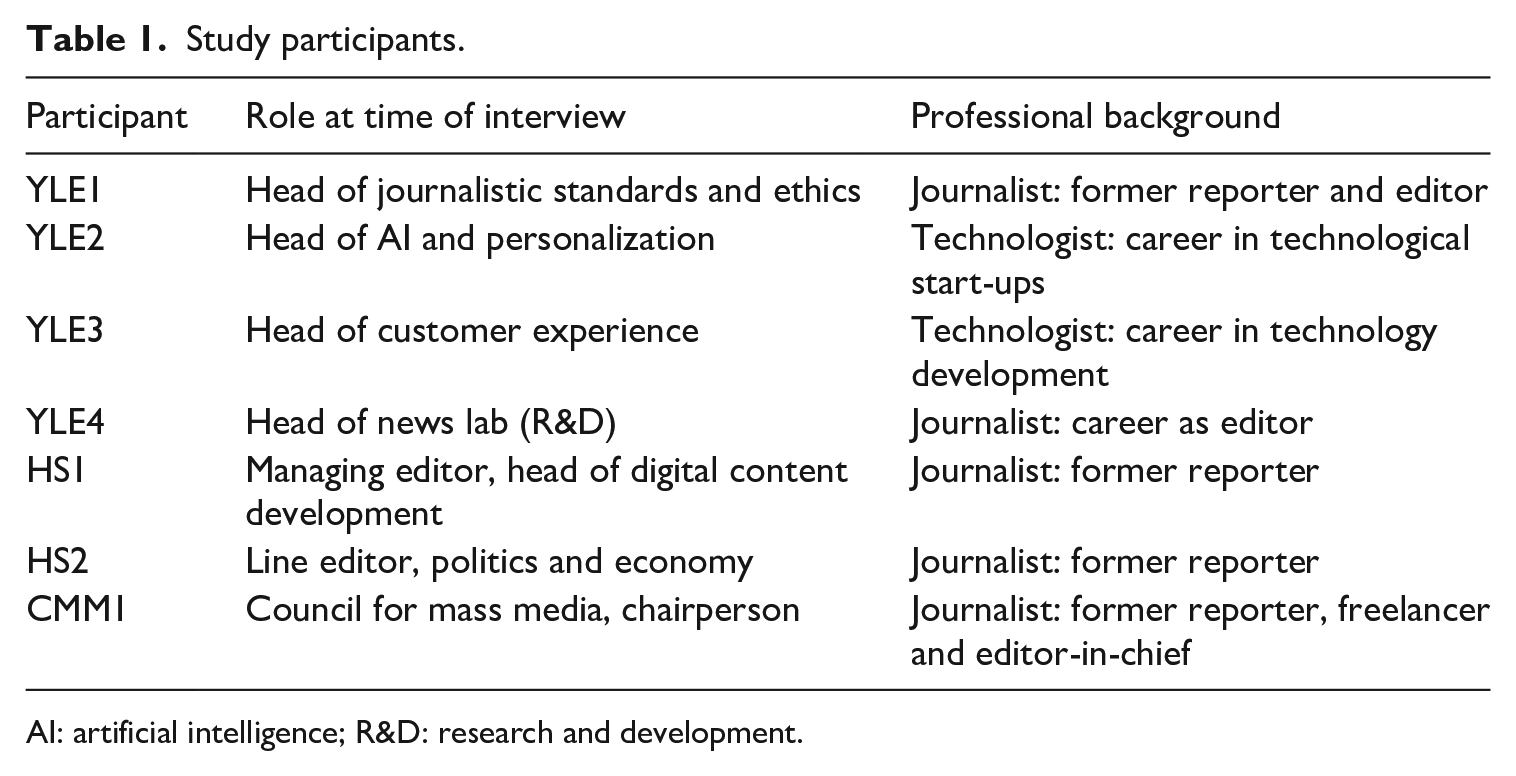

The participants were selected by identifying key actors in two major media outlets based on their position in journalistic processes and the development of automation, and on the recommendations of those interviewed by way of snowball sampling (see Table 1). Four participants have a journalistic background and currently act in supervisory roles in news production that involve the introduction of automation. Two others can be classified as ‘technologists’ working on the technical development of the production and distribution of journalistic content. Following recent calls to draw attention to technologists in the study of journalism (Lewis and Westlund, 2014), it was thought that the inclusion of participants working in the newsroom without a journalistic background would provide a broader perspective. The technologist participants had experience in media development also outside legacy media, including in smaller start-ups, and closely followed international developments in the field. These six participants represent two leading media outlets in Finland, the public broadcast service Yleisradio (YLE) and the largest national daily newspaper Helsingin Sanomat (HS). One further participant was the Chair of the Council for Mass Media in Finland at the time of the interview. The number of interviews is limited, and the study did not include extended observation of the participants. However, the participants are leading experts with an excellent grasp of the topic; during the course of the study, it became clear that additional participants would be unlikely to add further information relevant to the research questions.

Table 1. Study participants.

AI: artificial intelligence; R&D: research and development.

The concentration on Finnish media and these two media in particular deserves further elaboration. Finland is arguably at the forefront of the adoption of journalistic automation in Europe. During the time that this study was conducted, there was also considerable interest in the ethical and societal implications of news automation and algorithms within the country. This was part of a larger public discussion concerning the use of algorithms and its potential problems that continues to the present moment. 3 However, increased attention to such issues was also due to the discussions leading to the passing of what appears to be globally the first ethical statement concerning the use of algorithms and automation in journalistic contexts by the Finnish Council for Mass Media in late 2019. 4 The coverage of self-regulation is exceptionally high in the country: nearly all journalistic media have signed the basic agreement of the Council, subscribing to its professional code of ethics and empowering the Council to address any complaints concerning them.

The Council’s statement eventually had two main theses. 5 First, it maintains that the use of news automation, as well as the personalization or targeting of content, is always a journalistic decision that must be made on journalistic grounds. Newsrooms and media must have a sufficient understanding of automation to keep its operation under the control of the editorial office and the responsible editors. Second, the statement asserts that the public has a right to know about the automated recommendation or targeting of content, and the media are obligated to disclose that journalistic content has been automatically generated or published. The statement includes recommendations concerning implementing transparency, in particular, marking news automation and personalization. Because of the process leading to the statement by the Council, the representatives of leading Finnish media had reflected on the use of automation and the changes it brings about in agency and responsibility. 6 This study concentrated on two major media outlets that have the longest experience in and the most extensive plans concerning the development of news automation and algorithms in the country.

Three approaches to automation and agency

The following sections describe the results of this study. The next section details the scope and uses of automation in journalism – present and future – as described by the participants. The three sections that follow outline three main approaches to the transformation of media agency based on the participants’ accounts, including comparisons with observations made in recent research.

Uses of automation

All of the participants were prepared to articulate their views of automation and its prospects. They used several different words to refer to current and prospective technologies of automation and journalism, in particular automation and algorithms, and in some cases, artificial intelligence or AI. In addition, the word ‘script’ was used to refer to simple computer codes performing newsroom tasks. When discussing automation or algorithms in their working environments or in journalism more generally, none of the participants identified automation solely with content production. Indeed, machine-written news was often described as being of less interest than other developments: ‘There is too much talk about robot journalism. In the big picture of the development, the main factor is the enhancement of processes and automating routine work’ (HS2).

In previous studies, newsworkers understood automation in journalism as extending beyond creation of content to various technological advances assisting and helping journalists in their work (Bucher, 2016; Wu et al., 2018; cf. Sirén-Heikel et al., 2019). The findings of this study confirm this view. However, the technologist participants also supplied a typology of automation involved in journalism. One is the automated or nearly automated production of news items and stories. The main current examples of such automated content were automatically produced news items covering sports results, the stock markets and election results. The second is content distribution, especially the personalization of user experience and recommendation of content based on audience behaviour. A typical example of such recommendation is the targeting of online news based on previous news items consumed and the location of the user. Finally, the participants discussed a number of ‘aids’ for journalists and others working in newsrooms that help in both the production of content and the analysis of operations. One participant described this split succinctly: ‘We have content dissemination methods, for example a recommendation system. We have a content creation system, which includes algorithmic methods. And we have methods of analysing content and our operations. In each of these, algorithmic methods can be used’ (YLE2).

According to another participant, the distinction between production and distribution, on one hand, and aids and tools, on the other hand, can be understood as based on the contrast between ‘customer experience and working experience enhancing systems’ (YLE3). The former types of systems are relatively visible to the audiences. The latter type includes a variety of newsroom tools, such as methods of analysis of content and audiences, and aids for newsroom workers: ‘There is choosing images. Sending announcements. Ad network. A classic one is [. . .] archival work. Automatic moderation of comments. Speech to text, no need for people to write subtitles’ (YLE3). Another participant listed further uses: Detecting gender and age in content, recommending content tags for reporters [. . .], categorizing comments for comment moderation [. . .] voting advice applications, all kinds of things as well as specific journalistic projects such as chatbots where machine learning is being used. (HS1)

From the perspective of the audience, the most visible part of automation in journalism may be machine-written news and the algorithmic recommendations of content. However, both journalists and technologists view automation in journalism as a wider phenomenon: machines automate and ‘help with’ background processes in the newsroom.

‘The new typewriter’: machines as tools

A first approach to the introduction of automation in the newsroom was evident when discussing automation in the context of the development of journalism. The participants – both journalists and technologists – seldom described machines or algorithms as agents or actors, but rather as simple tools or devices. Even when a machine was described as acting, it was usually acting to ‘help’ and to ‘make things easier’ for a human agent. Parallel remarks have been made in previous studies. The senior figures and experts working in media organizations interviewed by Montal and Reich (2016) all thought that a human agent is to be identified as the author of an algorithm-generated news item (even if, in some cases, an institution was ascribed authorship). The editors interviewed by Milosavljević and Vobič (2019a) described algorithms as ‘helping’ in their decision-making processes. Similarly, Bucher (2016) found that journalists working in different Nordic countries often perceived computational processes as technical support and providing assistance to human beings.

However, the interviews shed considerable light on the reasoning behind this view. The participants tended to describe automation and algorithms as another step in the history of the introduction of new technologies in journalism, such as typewriters and computers. When asked about the transformations of agency due to automation, one participant put this approach succinctly: ‘I see this change as similar to the change from pens to typewriters and from typewriters to computers. We’re developing a more efficient typewriter. I do not see a major change in agency [. . .]’ (HS1).

Drawing from the continuum of developments of technical devices to aid journalism, another participant described the future role of automation in this key: To us it could be a similar relief in working as, previously, reporters used typewriters to write stories [. . .]. Then came computers and the story can be transferred to a program directly. These have all been tools to help work processes. [Automation] is a similar thing, nothing else. (YLE4)

Some participants even noted that some of the developments of automation might not be of particular journalistic interest. One participant compared some aspects of automation to the development of new printing ink – something that alters the processes of production, but is not a journalistic concern. The participants – both journalists and technologists – did not expect major changes of agency resulting from automation. Several participants explicitly suspected that automation would never exceed the status of a device, even with the development of artificial intelligence. Discussing the potential of algorithms and artificial intelligence in journalism, one participant noted, ‘For example, when we use algorithms in content production, the starting point is that it takes work “off” the reporters. [. . .] I do not see how AI could become more than a helping factor’ (YLE2). Notably, the participants often described machines as helping an individual reporter in their work. The acting agent is the journalist, who makes the decisions and is responsible for the choices made, although aided by devices and tools.

‘We’ in control: automation in journalistic supervision

A second approach was evident when the participants described machines presently occupied with tasks that used to be performed by human beings. It also appeared to be the most prevalent perspective on automation and algorithms. Especially when considering news automation and the algorithmic recommendation of content, the participants viewed automation as complementary to the actions of human beings. One participant also described experimentation with human–machine ‘teams’ with a human journalist ‘pairing’ with a machine that processes data from public sources such as the voting records of the national parliament. However, although machines were viewed as operating without concurrent human decision-making, they were not considered autonomous. The participants underlined that automation acts under the control of human beings by way of both design and supervision. They often emphasized that they also should do so: A human being has accepted the operation of an algorithm after verification of its operation. That’s when a responsible decision is made. And then the operation is observed. [. . .] If something is broken, we fix it. It’s our responsibility. It’s our promise of service. (YLE2)

In discussing the way algorithms are designed and controlled, the participants shifted from describing the way automation can help in newsroom processes to underlining the demands of the journalistic profession and professionalism. As one participant suggested, the creation and production of algorithms can itself be considered journalism conducted by human beings: ‘Producing an algorithm is, as it were, journalism. It is to consider what the algorithm is and what it produces [. . .]’ (HS2). Human control over automation and algorithms was frequently motivated by ethical considerations. Many of the participants referred to the view of the Council for Mass Media that automation is part of journalistic decision-making. One participant imagined a possible complaint to the Council based on content produced by a news bot or algorithm: If it is a question of yielding journalistic decision-making to outside the editorship, then we would respond that we have estimated that we can use this tool for this purpose, we have analyzed the algorithm to respond to the need, we have not handed over journalistic decision-making to anyone else. (HS1)

The situation was compared to earlier developments in the expansion of the scope of the notion of ‘journalism’: ‘It is quite similar to when we had simple text, and it was then realized that pictures and graphic design also belong to journalism’ (CMM1). Many of the participants emphasized the need for human beings to have a good grasp of the machines and algorithms in use: ‘Lack of understanding can also be considered as handing over the control of journalistic decision-making’ (CMM1).

Accordingly, none of the participants described automation and algorithms as ethically responsible. Rather, the ethical aspects of decision-making were left to prospective human choices, design, and retrospective surveillance. One participant made this point as though it required no further argument: ‘Decision-making must stay in the hands of people. An algorithm cannot take responsibility. You can get all kinds of explanations from it, but not justifications’ (YLE2). Few of the participants raised the possibility of developing algorithms to detect and address ethical issues. Even when considering the possibility of ‘ethical robots’, they argued that this would not result in the machine bearing ethical responsibility: ‘We cannot teach a robot to be an ethical agent. We can teach it ethics, but not being an ethical agent’ (YLE1). The participants also reflected on the challenges that learning machines may introduce to retaining human control: For example, a bot is internal to the newsroom as long as it works in accordance with the rules given to it and in newsroom control. That’s when it’s still within the journalistic decision-making of the newsrooms. But when we talk about machine learning, that’s where the border can be crossed. (YLE1)

At least here, retaining newsroom control and human responsibility was found to be more important than the potentially speedy development of algorithmic automation.

In discussing control over machines, a meaningful shift occurred in the way the participants described agency and ethical responsibility. The newsroom as a whole was assigned agency: the participants described the agent responsible for automation as ‘us’. Moreover, this collective agent was taken to include those in the newsroom who are not reporters or editors. Discussing agency and responsibility, one participant considered the broadening of the collective newsroom in this way: ‘I try to bring together journalists, service designers, data scientists – dramaturgs, if needed [. . .] We try to fade the lines between journalists and others. [. . .] Future newsrooms are multidisciplinary teams’ (YLE2). In describing machines under human control, agency and responsibility were attributed to the ‘we’ of the whole of the newsroom, including people who do not work in traditional journalistic roles. This suggests that automation may lead to the adoption of a more collective view of agency and responsibility.

‘Human to human’: the demands of the audience

A third approach to automation displayed by the participants highlights the distinctiveness of human beings as opposed to machines. The participants listed abilities that in their view would make human beings irreplaceable in journalistic contexts. This approach was prominent when discussing the future of automation: the participants consistently argued for the continuing need for human beings in media operations. Both journalist and technologist participants listed several features as distinctive of human beings. Many participants noted that machines cannot understand or create meanings: It is nowhere close that there would be an autonomous system which can see that these are the topics, these are the questions that we should be asking. [. . .] It is a human ability to see meaning in the world. (YLE2)

The same point was made concerning the distinctively human ‘abilities’ of experiencing emotions and empathy: Journalism is always human to human. That’s why emotions and empathy are related. When we discuss content in general, feelings and empathy can be simulated. But I think that journalism is made interesting by people having produced it. [. . .] Why would I give any time to a machine? (YLE4)

Another human feature was creativity. Machines and algorithms were argued to have limited ability to create diversity and variety, and to be prone to produce ‘more of the same’. This was taken to imply the need for diversity also within the human organizations producing media content: We need diversity, in the humans working here, but also in content. So that we don’t lose what is human in it. [. . .] [W]ith machines, we might end up with making content of this same kind, or acquiring content of that same kind. (YLE3)

The introduction of automation appears to have resulted in considerations of the different ways in which humans are distinct from machines, as well as of the advantages of human beings over machines. However, the ‘human’ abilities and features that the participants noted are not the same as the goals of good journalism as commonly described by representatives of the profession. For example, the participants did not argue that automation suffers from insurmountable issues with factual accuracy, integrity and timeliness, often considered journalistic ‘virtues’. Notably, when discussing the prospective developments of automation, the participants turned to a consideration of the needs and wishes of the audience or consumers. These demands were the fundamental reason for the continuing need for the human: journalism has to be by human beings to ‘speak’ to other human beings.

Participants in previous studies have expressed some similar considerations. It has been suggested that automation pushes journalists to reconsider their ‘selling points’, strengths and special abilities that may differ from the features that journalists describe as the ideals and skills defining the journalistic profession, such as factuality, objectivity and speed (Van Dalen, 2012). Participants in other studies have argued that journalists must focus on strengthening ‘foundational skills’ in journalism, including their understanding of the wants of the readers (Wu et al., 2018). Journalist participants have mentioned having instincts or ‘intuition’ as a distinctly human ability (Bucher, 2016), and one of the participants in another study worried that automated creation makes pieces of journalistic content too similar to one another, diminishing their relevance for the audience (Milosavljević and Vobič, 2019b).

Discussion

Recent media research has proposed a multitude of perspectives on the agency of machines, ranging from proposals that flatten the distinctions between human and non-human agents, such as ANT (Hemmingway, 2008; Primo and Zago, 2015; Wu et al., 2018), or hold the door open for algorithmic agents (Dörr and Hollnbuchner, 2016), to views that reject the idea of algorithms and machines as agents because of their dependence on human design and intentions (Klinger and Svensson, 2018). There is no theoretical consensus on the agency and ethical responsibility of machines and algorithms. The likely reason for this is that agency is part of our normative vocabulary. Even when separated from ethical responsibility, agency indicates where the faults and successes lie. When connected with ethical responsibility, it invites intricate – perhaps intractable – questions of freedom. This research drew motivation from the philosophical insight of P. F. Strawson’s (1962) view of moral responsibility as central to moral agency. Instead of attempting to arrive at a fixed concept of agency that can be compared with machines at different levels of technical sophistication, it aimed to look at the social practices of attributing both agency and responsibility and their potential change in the current conditions.

The introduction of automation in newsrooms provides an opportunity to explore how the agency and responsibility of machines are articulated and understood in a context where machines act in roles that previously were reserved for human agents, potentially challenging received conceptions and practices. Recent studies have detected some resistance to attributing agency to algorithms and machines on the part of newsroom workers (Milosavljević and Vobič, 2019a, 2019b; Wu et al., 2018); the findings of this research confirm these observations. They also shed considerable light on the thinking and reasoning behind this resistance. The overall notion that unites all three approaches to the agency of machines and algorithms evidenced by the participants is that machines and algorithms do not make decisions. Even if machines and algorithms appear to act in roles previously reserved for human beings, they do not participate in the decision-making processes of the newsroom. Accordingly, praise and blame for successes and failures belong squarely to the human being.

Considered from the point of view of the human individual working in the newsroom, automation and machines are easily reduced to tools that simply do not decide. This approach is motivated by historical considerations of the introduction of new technologies in the newsroom, with which the current shift towards automation was perceived as continuous. Few of us would ascribe agency to a pen or a typewriter – why would we do so to a piece of code? However, this perspective may well understate the relevant discontinuities between earlier and current developments. Automated processes operate without concurrent human control; at the very least they occupy a position in the decision-making of the newsroom that typewriters or computer text editors do not enjoy.

In turn, according to the most prevalent approach, machines and algorithms should not decide. Existing algorithms and automations were described as operating without constant human input, but under the control of human beings to whom praise and blame may be ascribed. This approach can be connected with concerns over journalistic professionalism and ethics (Van Dalen, 2012), as well as the notion of human journalists staying ‘in command’ (Lindén, 2017b), likely amplified by the statement from the Council for Mass Media that underlined the need for retaining editorial control. The statement appears to codify a common stance concerning journalistic control and human responsibility that requires little further justification. Algorithms were described as unable to engage in (real) ethical reasoning or assume ethical responsibility. Notably, this approach exposed a considerable shift towards a view of agency and responsibility as distributed, not between humans and machines, but among human beings in the newsroom. This view incurs some obvious vulnerabilities. It is not clear how such control can be retained – in particular, if responsibility is distributed to a collective (human) agent. Who is making the decisions, if the ‘we’ controlling the machine turns out to be an illusion?

Finally, the prospective developments of automation were discussed in terms of the limitations of algorithms and machines in contrast with human beings, such as a lack of emotional abilities, of creativity, and of the capacity to understand and create meaning. As a previous study suggests (Van Dalen, 2012), these human strengths appear to diverge from the traditional goals or values of journalism. Notably, however, this approach was connected with views about the expected demands and desires of the audience or the consumer. Machines cannot decide, because journalism is ultimately ‘human to human’. Doubts about such confidence, however, can easily be raised. Some of the latest research suggests that, at least in some cases, people prefer to rely on algorithmic advice rather than advice from people – a phenomenon recently called ‘algorithmic appreciation’ (Logg et al., 2019). Indeed, it has even been proposed that the logic of some media contexts forces human beings to act more like machines and less like human beings (Ruckenstein and Turunen, 2019). As things stand, we may well expect that human beings make the most important decisions concerning the content of journalistic media. However, there are few guarantees that this will continue – especially if we discover that a machine makes the timelier, more accurate or more interesting choices.

In addition to these results, the limitations of this study point towards interesting avenues for further inquiry. In the first place, the observation of actual practices and even experimental settings could reveal new ways in which the introduction of automation changes both attributions of agency to machines and the human agency operative in the newsroom. Even more crucially, a newsroom, even if at the pinnacle of these developments, provides a highly specialized setting. Although the participants represent the state of the art of thinking on these issues, and their number included technologists without a distinctively journalistic background, they were all professionals in managing algorithms. Their resolve to keep these technologies under the control of human choice and agency may sound soothing – in particular, at a time when the ‘algorithmization’ and ‘datafication’ of our society are blamed for many of its current ills, including political polarization, spread of disinformation and injustice in societal and political decision-making (Noble, 2018; Steensen, 2018). However, the future of these developments does not rest solely in the hands of those working in the confines of the newsrooms of legacy media, but is influenced by external factors, including competition from media businesses and institutions that may have a differing view of the role of human beings and machines, as well as by potential changes in the expectations concerning journalism and its role in society.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research has been financially supported by the C. V. Akerlund Media Foundation and the Helsingin Sanomat Foundation.