Abstract

Sympathy sockpuppets are false online identities used to extract care work from others. While online community infiltration for nefarious purposes is a well-documented phenomenon, people may also join online communities using deceptive personas (“sockpuppet” accounts) for non-nefarious reasons, such as to gain sympathy or cultivate a sense of belonging in a group. Compared with scamming and trolling, this more subtle form of online deception is poorly understood, and its impacts on individuals and communities have not yet been fully articulated. This knowledge gap leaves communities without guidance when managing the impacts of sympathy sockpuppetry. We interviewed people who had been members of online communities that discovered sympathy sockpuppets in their midst, in order to characterize the phenomenon of sympathy sockpuppetry and to provide guidance for other individuals and communities that encounter similar forms of online deception.

Keywords

Background

Online communities are dynamic networks of people who congregate on the Internet, and members belong as a result of their active participation and conformity to shared norms (Stommel and Koole, 2010). Communities can be formal or informal, but members often have common interests, needs, or goals. Online communities are part of people’s social lives and have mixed impacts on their well-being, but supportive online interactions have been associated with positive affect and a sense of community (Oh et al., 2014), especially for members of marginalized populations like LGBTQ+ youth (Ybarra et al., 2015) or people with chronic illnesses (Costello, 2017). Contrary to claims that online relationships are less meaningful or “real” than those formed offline, online forums can facilitate the development and maintenance of trusted relationships (Griggs et al., 2020; Henderson and Gilding, 2004), particularly for those with high levels of digital literacy or involvement (Frederick and Zhang, 2021; Yau and Reich, 2020). In sum, relationships formed in online communities can be as important to people as the relationships they form offline, or even more so.

Deception is a normal communicative process, occurring both offline and online (Buller and Burgoon, 1996). Offline, it is typically detected when people pick up on inadvertent social cues, such as hesitations or facial movements. Online, many people both admit to dishonesty and expect that others commonly lie (Drouin et al., 2016). Although deception is common, research has tended to pathologize online deception as “antisocial” behavior (Kumar et al., 2017), measuring it alongside Machiavellianism, psychopathy, and/or addiction (March et al., 2017). In online health communities, it has been called “Munchausen by Internet” (Feldman, 2000).

Online deception takes many forms, including sockpuppets and trolling. The term “sockpuppet” (taken from hand puppets made from socks) is commonly used to refer to false online identities or accounts operated by real people for a variety of reasons, whereas “trolling” (taken from “trawling,” a form of fishing in which one slowly drags a lure to attract a bite) refers to intentionally baiting or provoking people online. Dominant techniques that sockpuppets use include identity concealment, facilitated by acts of omission like using the wrong name; category deception, or giving the impression that one belongs to a defined group; and impersonation, or pretending to be a different, known person (Donath, 1999). Some online deception is nefarious; for example, catfishing involves intentionally misrepresenting oneself to potential romantic partners (Mosley et al., 2020), and malevolent trolling is designed to harm individuals and sow discord in a community (Shachaf and Hara, 2010).

However, even within categories often associated with malice, researchers have identified a variety of motivations, ideologies, psychological drivers, and impacts on communities (Sanfilippo et al., 2017). “Trolling-light,” also referred to as soft trolling or light-hearted trolling, for example, uses humor for entertainment rather than with malicious intent, and may be perceived as such both by the wider community and by those engaging in it (Sanfilippo et al., 2017, 2018). Similarly, we believe that sockpuppetry can be used with a variety of intentions and motivations, and can have a variety of impacts on communities. We define sympathy sockpuppetry as a form of “soft deception” in which the goal is to extract care work and sympathy for the deceiver. Unlike more nefarious forms of online deception, sympathy sockpuppetry does not have material gain, catfishing, or trolling as its primary objective.

To date, research in online deception has concentrated mostly on exploitative deception, while subtle forms of deception like sympathy sockpuppetry are understudied. Furthermore, existing studies focus on individual motivations and goals of deceivers, rather than on the impact that deception has on its targets or online communities’ responses. Research on identifying online deception focuses on algorithmic detection using cues like message length and lexical complexity, rather than on a qualitative understanding of how people detect and respond to it (Zhou and Sung, 2008). Published case reports of sympathy sockpuppet discovery indicate a need for further study (Joinson and Dietz-Uhler, 2002) to better understand its impact on communities. We therefore undertook an exploratory study to characterize the phenomenon of sympathy sockpuppets in online communities, processes of discovery and response to such deception, and the impacts of sympathy sockpuppets on individuals and online communities.

Theoretical framework

This study was initiated with an open, exploratory approach, and no a priori theoretical framework was selected beyond a general influence of constructivist grounded theory (Charmaz, 2014) on our approach, research questions, and analytic plan. During the preliminary analysis, we noted resonances with interpersonal deception theory (IDT), as well as the feminist construct of care work, which we then integrated into our analysis.

Interpersonal deception in online communities

A recent critical review demonstrates that most research on online deception in interpersonal relationships is concentrated in three domains: dyadic deception over SMS/text; dyadic deception on online dating sites; and deception of groups of followers on social networking sites, where many of the deceiver’s connections know them personally (Toma et al., 2019). The technological affordances and constraints of the platforms where deceivers operate are often the focus of studies (Cross, 2019). Immediacy, social norms, and platform affordances shape online deception: its likelihood, the type of deception that might occur, and its discovery (Tsikerdekis and Zeadally, 2014). We know little about how people determine whether they are being deceived online, particularly in online communities where most users do not have personal connections with one another offline; nor is much known about how these communities respond when they discover that deception is occurring. As such, researchers have called for theorizing that goes beyond understanding the features of online platforms that aid or hinder deception, instead addressing how these features interact with social factors that are not technologically dependent (Toma et al., 2019).

We therefore situate our analysis in IDT, a robust framework that addresses the communicative and social factors of interpersonal deception (Buller and Burgoon, 1996). While the theory was originally developed for in-person dyadic interactions, it has since been applied more widely, and its underlying principles do not depend on these conditions (Utz, 2005). IDT positions deception as goal-oriented, intentional, and strategic (Hancock et al., 2007). The theory defines three basic types of deception, in order from least to most deceptive: equivocation, or avoiding the truth; concealment, or omitting information; and falsification, or lying (Burgoon et al., 1994). Throughout the course of an interaction, people may engage in multiple types of deception. Common goals of deception include self-serving motivations, like entertainment or acquiring and maintaining power over others; relational motivations, including relationship maintenance; and identity motivations, like increasing social desirability (Buller and Burgoon, 1996). According to IDT, deception demands that people interact strategically and requires cognitive work from both deceivers and receivers, or targets of deception. Deceivers perform cognitive work to maintain their deception and their intent to deceive. Receivers also engage in strategic behaviors to determine the deceiver’s credibility. However, people have a truth bias and assume others are honest, which hinders detection of deception (Burgoon et al., 1994). Detection often begins as a suspicion that receivers attempt to conceal while they probe strategically, for example by asking questions, to gauge whether they are being deceived. Detection is affected by the type of deception, the probing strategies used, the immediacy and frequency of contact, familiarity, and social norms (Buller and Burgoon, 1996).

While a growing body of literature applies IDT specifically to questions of online deception, these studies typically focus on online dating, a context in which deception is most commonly dyadic. In this context, deceivers often misrepresent their physical attributes, marital status, or careers (e.g. McNelis, 2013; Peng, 2020). As in face-to-face interactions, deception on dating sites is difficult for receivers to detect, and its detection relies on linguistic cues, such as message length, language concreteness, and conciseness, rather than nonverbal cues (Toma and Hancock, 2012).

In addition, in the offline settings for which IDT was originally developed, a few studies have applied the theory to situations with one deceiver and multiple receivers. Because deception is a complex and interactive social process, its detection in groups is more complex and relies on social dynamics and interactive patterns within the group (Marett and George, 2004). Studies that address group deception using IDT are typically quantitative; are situated in workplace or organizational settings, where people know one another and have clearly defined roles and shared goals; and take place in laboratories, where people communicate either in person or by phone rather than in an online community (Dunbar et al., 2015). These studies find that work tasks are more difficult to accomplish when deception takes place, and that perceived deception lowers trust and raises suspicion in the group (Dunbar et al., 2021; Fuller et al., 2011). Finally, IDT is underdeveloped in its articulation of what happens after people discover deception. There is therefore an opportunity to extend the theory to questions of how mediated deception unfolds in groups that are voluntary and more amorphous in structure, like online communities, and what the effects of that deception are on the community.

Care work

A key concept that informed this analysis was that of “care work” (alternatively written as “carework”), a term that encompasses both paid and unpaid labor that involves providing care to others. The concept of care work can be considered an expansion of the Marxist feminist concept of reproductive labor and the related exploitation of women’s work: for example, the manner in which capitalist economies rely on unpaid mothering and homemaking to support and produce workers. England et al. (2002) define occupational care work as labor that “provid[es] a service to people that helps develop their capabilities” (p. 383), and Huang (2016) defines it as “any face-to-face, often hands-on, service that contributes to the physical, emotional, psychological, and cognitive maintenance and development of the individuals receiving the care” (p. 1). Increasingly, globalized economies of care and the increasingly virtual nature of social life and interpersonal relationships challenge the conceptualization of such labor as physically hands-on, as caregiving may be transnational (e.g. international surrogacy) or virtual (e.g. online counseling and social support).

In contemporary Western societies, care work is both glorified as altruistic and devalued as the domain of women and racialized people (England, 2005). Caring work in the paid labor market (e.g. teaching, counseling, nursing) tends to be feminized, racialized, and lower paid than other professions requiring comparable education or training (England, 2005; England et al., 2002; Mathieu, 2016). However, care work is done in informal settings (e.g. at home, in one’s community) as well as in paid labor markets, particularly when carried out by family or friends (Huang, 2016). As our analysis of the sympathy sockpuppet interview data progressed, it became evident to us that this phenomenon could be considered one that extracts unpaid care work from community members under deceptive pretenses; thus, we incorporated the construct of care work, along with related concerns regarding the feminization and valuation of caring labor, into our analysis and understanding of sympathy sockpuppetry.

Purpose

The objective of this analysis was to characterize the experience of sympathy sockpuppet discovery within online communities. Our specific research questions were the following:

Research question 1. How are sympathy sockpuppets discovered by community members?

Research question 2. How do individuals and communities respond to the revelation of a sympathy sockpuppet in their midst?

Research question 3. What recommendations can be made to communities that might encounter similar situations in the future?

In addition, an emergent aim of this analysis was to test and expand theory, building on underdeveloped aspects of IDT by (a) explicitly extending the framework to community-based, collaborative experiences of computer-mediated deception and (b) analyzing how people respond to the discovery of deception.

Methods

This exploratory study took a qualitative approach, exploring the phenomenon of sympathy sockpuppets through interviews with individuals who had experienced the discovery of a sympathy sockpuppet in an online community. Our approach was influenced by constructivist grounded theory (Charmaz, 2014), focusing on social processes that encompass both affective and cognitive dimensions, and on testing and extending an existing framework (IDT).

Data collection and analysis

Participants were recruited in August 2019 via public posts on the researchers’ Facebook and Twitter feeds, which linked to study information and an online consent form. Consenting individuals were emailed to confirm eligibility and schedule telephone interviews. Interviews were split between the two authors and audio recorded, and participants reviewed their transcripts for privacy and accuracy issues. The semi-structured interview guide was developed around the three study research questions, exploring participants’ experiences with sympathy sockpuppet discovery, individual and community level responses, and their recommendations to others who discover sympathy sockpuppets.

Analysis of transcribed interviews involved an iterative process of conventional (inductive) qualitative thematic analysis (Hsieh and Shannon, 2005) influenced by constructivist grounded theory (Charmaz, 2014). Both authors separately conducted preliminary (“line”) coding, which was granular and emphasized gerunds to understand social processes at work. We discussed emergent themes, after which the first author conducted focused coding to develop these themes and the model of sympathy sockpuppet discovery and response in conversation with the second author. During discussion of this focused round of coding, we determined that our developing model of sympathy sockpuppet discovery and response resonated strongly with IDT, at which point we introduced the theory into our final analysis as a point of comparison with our findings. Final, theoretical-level coding was tested and solidified through discussion and diagramming, resulting in the social process findings detailed below. Study procedures were approved by the institutional ethics review boards of the University of Massachusetts Amherst and Rutgers University. Participants were assigned pseudonyms to protect their privacy.

Findings

Based on in-depth interviews with seven participants, we identified five different types of sympathy sockpuppets, ranging from fairly innocent category deception to extensive identity falsification to manipulate other community members. The process of discovery and response typically involved indirect suspicions leading to confirmation, often by photographic evidence. Following discovery, people typically brought this information to high-status community members in order to investigate, inform the community, and potentially levy consequences against the deceiver. Although sympathy sockpuppets did not gain materially from their deception, individuals and communities nonetheless experienced losses due to betrayal of trust and the extent of the emotional labor they had performed for sympathy sockpuppets. In addition, individuals and communities experienced a “loss of innocence” that carried lasting implications, testing individual coping and community resilience. Participants suggested that communities should strive to clarify norms around privacy and sharing, and should consider options other than banning sockpuppets who are more puzzling than abusive.

Participants and communities

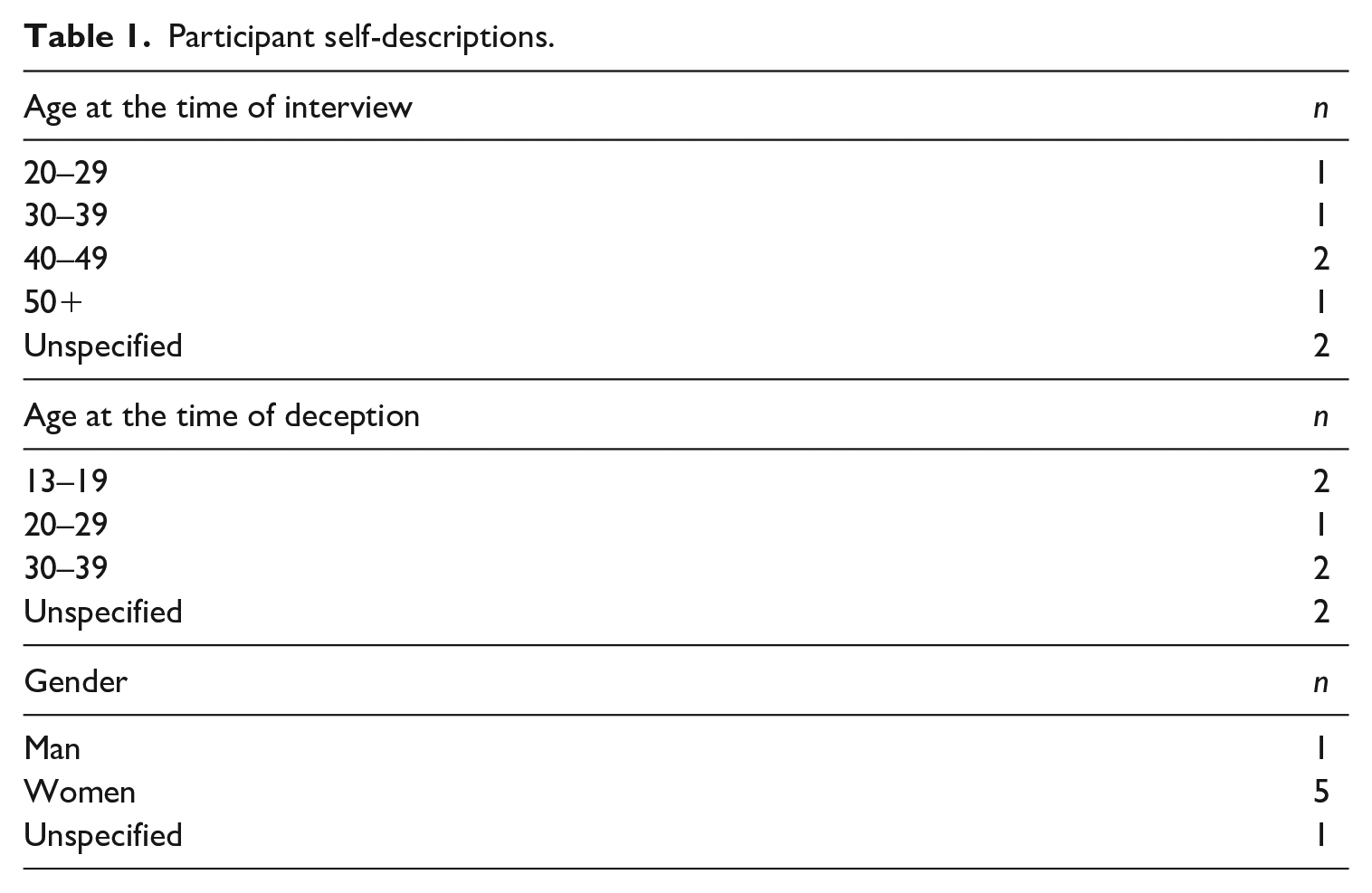

We interviewed seven individuals (Table 1). These experiences involved at least six deceptive individuals (two in the same community) portraying at least seven sympathy sockpuppet accounts (one individual operated two sockpuppets). Interviews ranged from 44 to 84 minutes, with a mean length of 66 minutes (median, 72). While we did not systematically collect demographic data from participants, five participants referred to themselves in interviews as women, one as a man, and one was not specific about their gender. Participant ages at the time of interview ranged from their 20s to 50s; two were minors when the deception occurred.

Table 1. Participant self-descriptions.

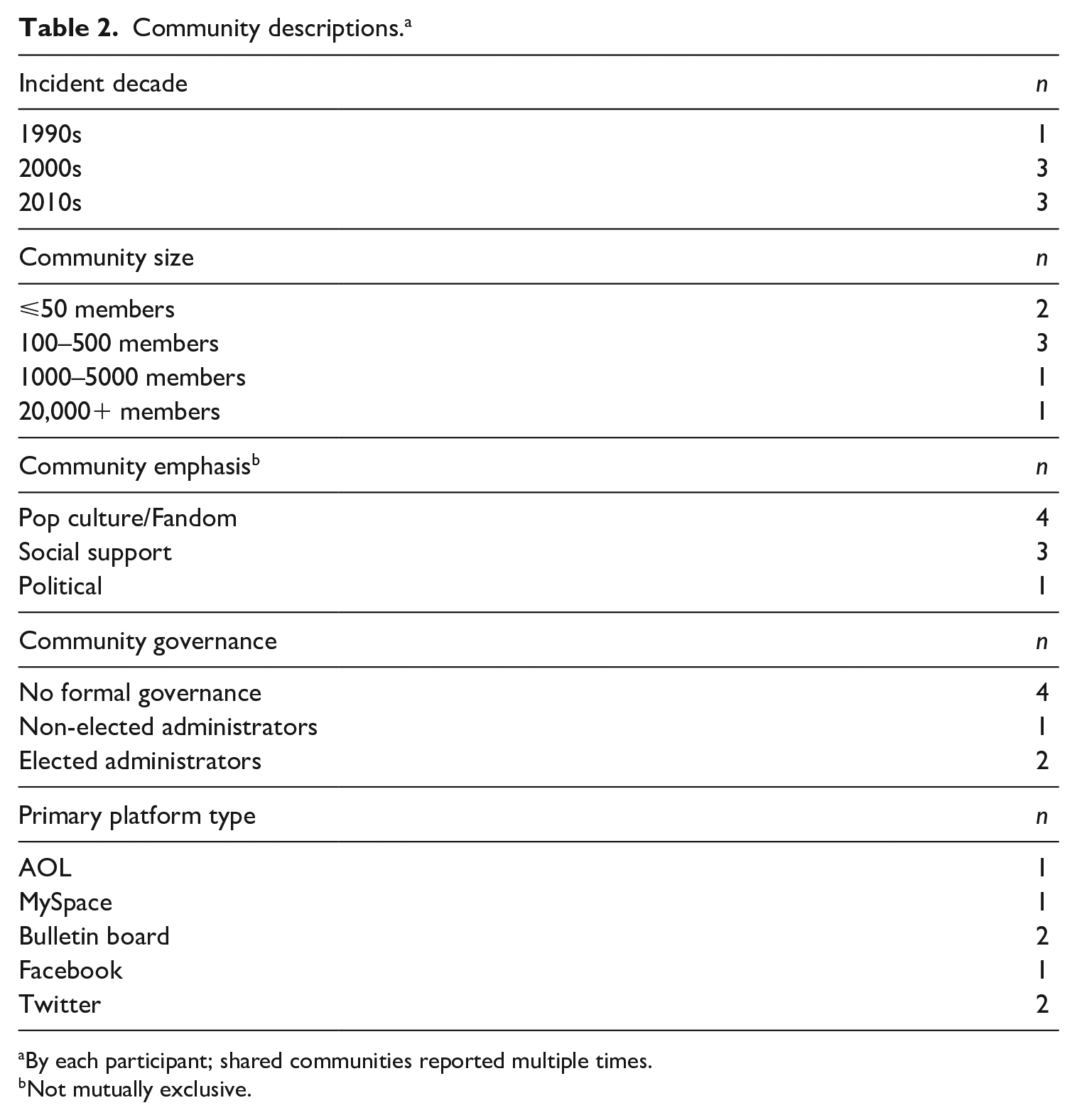

Our seven participants described experiences in five communities (Table 2). The communities where incidents took place ranged in size from 10 to 30,000 members and were hosted on platforms including AOL groups, bulletin boards, Facebook groups, and Twitter. Most groups had a multi-platform element, often originating on one website and spilling over onto other platforms. Lasting online and offline friendships began in many of these groups. The most recent sympathy sockpuppet incidents described by participants took place 2 years before the interview, and the oldest approximately 20 years before.

Table 2. Community descriptions.ᵃ

ᵃ By each participant; shared communities reported multiple times.

Not mutually exclusive.

Describing sympathy sockpuppets

Participants described their online communities as important to their social lives. Susan called her community “a total lifeline,” explaining that “they were at the time pretty much my only support community, especially with regard to parenting.” These communities often provided support that was unavailable locally or face-to-face. In many cases, participants reported that relationships begun in these communities led to lasting in-person friendships in which they vacationed together and attended each other’s significant life events, such as weddings. Within these communities, sympathy sockpuppets posed as friendly peers or acted as charismatic leaders, extracting emotional labor from community members. Michelle described the sympathy sockpuppet in her community as “everybody’s friend.” These interactions, however, could take on a more sinister aspect, especially when viewed retrospectively, when sympathy sockpuppets were, as Cristina explained, “really just emotionally strip-mining people” by asking for support and eliciting disclosures from other members.

Types of care work provided by participants and other community members for sympathy sockpuppets included online posts of sympathy or support; reading, listening, and providing feedback on their (supposed) life situations; advice on topics ranging from fashion to relationships; seeking and providing information on serious topics ranging from tax filing to medical concerns; and routinely providing telephone or online company in lonely times. As Abigail described, “Later on, everybody said, ‘She was constantly calling people . . . She was always wanting to talk.’” As sympathy sockpuppets tended to portray stories of misfortune, the support community members provided them on topics such as sexual assault, bereavement, and serious illness could be emotionally taxing and sometimes involved disclosure of members’ personal traumatic experiences as an act of relating or validation.

In interviews, we invited participants to share their experiences with “‘fake’ community members who do not seem to have any intention to troll, scam, or spy, but have joined the community under a false identity for emotional reasons.” Sockpuppets described by participants had both commonalities and variations. All engaged in falsification to create their sockpuppet identities; most also concealed information and equivocated to avoid the truth. Study participants speculated on the sockpuppets’ motivations, frequently echoing Rebecca’s comment that “why” is “the enduring baffling question [. . ..] There’s probably a certain amount of loneliness there [. . ..] And there was something about the community that was desirable.” While sympathy sockpuppets did not ask for money, some accepted gifts sent to false addresses or created convoluted excuses for not accepting material assistance from others. Some of the less-involved examples of sockpuppetry involved falsification as minor as category deception (e.g. misrepresenting one’s age or gender) to gain access to an online community that might not otherwise have been open to them, while others were extensive and involved years of groundwork building virtual lives for entirely fictional characters who had their own social media profiles on multiple websites and fictional romantic partners with their own online “lives.”

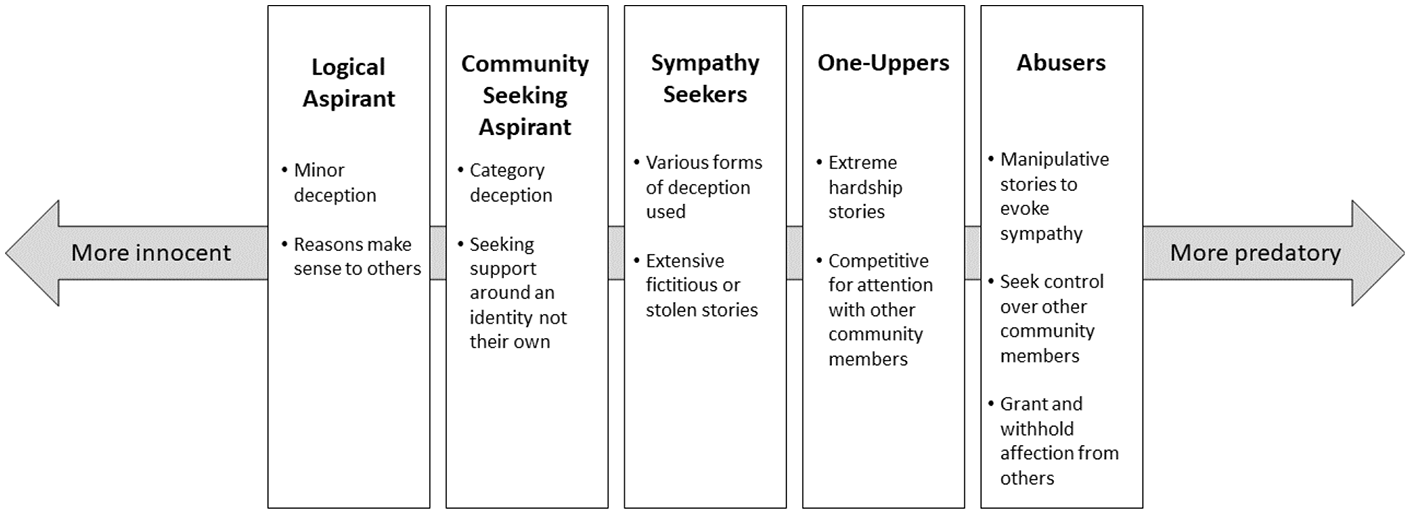

Based on participants’ descriptions of sympathy sockpuppets, we suggest that there may be a spectrum of sympathy sockpuppetry, ranging from the most innocent deception to the most predatory, which we illustrate in Figure 1. On one end of the spectrum are logical aspirants, to whom study participants clearly attributed non-nefarious motivations. Logical aspirants engaged in relatively minor category deception for reasons that were not difficult to understand, like a high school student pretending to be an older university student to access an adults-only community. Community-seeking aspirants also engaged in category deception, but in ways that were less logically clear and more focused on seeking care and sympathy around an identity they did not possess, like a single woman without children pretending to be a married mother of twins to join a mothering community. Around the middle of the spectrum, we heard about sympathy-seekers, who engaged in various types of deception, including category deception, impersonation, and outright fabrication, and shared extensive fictitious or stolen stories of hardship to elicit emotional support.

Figure 1. A spectrum of sympathy sockpuppets.

Continuing down the spectrum are two types of sympathy sockpuppets that were more deliberately harmful, although they still stopped short of seeking material gain or engaging in other activities that would place them in existing categories of nefarious online deception. One-uppers were competitive sympathy-seekers, who seemed motivated to match and exceed the hardship stories of others in the community. A fixation specifically on “one-upping” a particular community member in a competition for community attention suggested a more deliberately harmful deception, rather than general sympathy-seeking. Finally, at the most nefarious end of the spectrum were abusers, who sought to control other members of the group. These sockpuppets still sought sympathy through their own narratives, but also granted or withheld affection or favor to maintain control of the community or a subgroup therein.

The social process of sympathy sockpuppet discovery and response

Discovery was typically triggered by directly suspicious incidents, in which sympathy sockpuppets shared contradictory information. Commonly, other community members had underlying suspicions that either led them to be alert for such contradictions or that had previously been brushed aside but served as additional evidence when viewed in retrospect. Because many communities have built cultures of mutual support, confronting a deceptive member without evidence was often taboo; therefore, individuals or teams (formal governance groups or informal friend groups) tended to embark upon an investigation to confirm their suspicions before accusing another community member. Once deception was confirmed, communities commonly banned the perpetrators, but meaningful closure was elusive. Near-term impacts such as anger and loss of trust were ubiquitous, though some faded over time. Communities struggled in the aftermath of these incidents. Sometimes they dissolved; in other cases, the “survivors” of these incidents strengthened their bonds with one another, often through shared humor.

“It just didn’t add up”: suspicions and discovery

Sympathy sockpuppets were commonly the subject of low-level suspicions by community members prior to official discovery. In some cases, underlying suspicions accumulated until their weight merited investigation. Other times, it was only in retrospect that members pinpointed things that seemed “off” to them in the past. Underlying suspicions tended to be aroused by inconsistent photographs, an unexplained inability to be contacted outside of the community (e.g. by phone or mail), multiple unusual situations, or unrealistic circumstances. For example, Susan, who long held suspicions about someone masquerading as a mother of several young children, noted that “the amount of time that she had to talk to other people, and the fact that there were never any kids in the background” seemed strange. Sometimes a sockpuppet’s behavior was unexplainable; as Brandon described, “We started realizing that [Sockpuppet’s] behavior was really erratic and crazy but without substantiation behind it of what was actually going on.”

In all but one instance, a clearly suspicious incident gave rise to active exploration that led to confirming the deception. Direct suspicions were raised by patently contradictory information, like timeline inconsistencies, accidental use of the wrong name, and, frequently, photographic evidence that was inconsistent with a sockpuppet’s story. In one example, Michelle described how she confirmed her suspicions through an online photo search. The sockpuppet had shared a photo that was ostensibly of a medical document from a recent appointment. Michelle “put it through a reverse image search, and it came up as . . . not her photo. The picture was years old. It was some Reddit thing.” Another story involved a sympathy sockpuppet couple, both of whom were fictional and controlled by the same person. After one of the pair died, leaving the other widowed, suspicions were raised because the widow sockpuppet allegedly worked at a small, well-known institution. A community member mentioned her to an offline friend who also worked there, and the friend was confused: they knew no one matching the sockpuppet’s description.

There was one notable “negative case” that contradicted the patterns observed in the other six narratives of sockpuppet discovery. The sockpuppet in Hayley’s community spontaneously confessed to her deception: “She eventually told people like, no she’s not [living the life she said she was].” As far as Hayley could tell, no one had underlying suspicions or had confronted this sockpuppet, and the photos she shared were truly her own.

“Hello, grieving widow, you are a fake”: investigation and community notification

While some directly suspicious incidents were confirmatory in themselves, individuals or groups often embarked upon a period of investigation to confirm wrongdoing and/or to document its extent. As Rebecca described, “nobody wants to just pop up [. . .] and be like, ‘Hello, grieving widow, you are a fake.’” Upon discovery of evidence of deception, a community member presented this evidence to other members, often formal or informal leaders, for confirmation and to plan how to inform the larger community. In these data, there were four ways that this shift to greater community awareness played out: community investigation, dismissal of allegations, the accuser leaving, and sockpuppet confession.

In community investigations, a small investigative group assembled documentation to confirm the deception. They then revealed some or all of this to the whole community. For example, once Susan had evidence of a directly suspicious contradiction, she presented it to the community’s formal leadership:

I took it to somebody who I know had also had a close connected relationship with [the sockpuppet], talked to her frequently, and was also one of the administrators for the board at the time, basically saying, “Look, I’m really hoping that I’m just missing something and that this isn’t true, but it really looks like this person is not who she’s saying she is.”

The member-elected administrative team investigated until they had sufficient evidence of sockpuppetry and then shared it with the entire community. In another community, Cristina was approached by a member with evidence of a sockpuppet. Unlike Susan’s community, Cristina’s did not have a formal governance structure, and she felt that her fellow community member chose her in part because of her credibility and long-standing involvement with the group. She had met several members offline and said, “I am unassailably who I say I am. I have been in the community for so long and so many people have met me that nobody could doubt that I existed.” Cristina assembled an ad hoc investigative team, including the original discoverer of the deception and other community members with complementary strengths, who worked together in shared online documents. This team conducted an extensive multi-platform investigation to document the details and extent of the deception, culminating in an information release synchronized across multiple platforms to notify the community.

In some cases, however, the discoverer of a sympathy sockpuppet in an online community was dismissed when they brought allegations to others, either due to disbelief or because the people they approached did not think it was important to investigate. Abigail, for example, described noticing “inconsistencies [. . .] that got me real suspicious.” However, when she brought her concerns to others in the community, their response was, “No. No, no, no. [Sockpuppet’s] fine and everything.” Michelle also had her concerns dismissed by others, recounting, “I told a couple of people that I knew. I was like, ‘That [photo]’s not hers’. And they were like, ‘Oh, you’re being paranoid. [. . .] Don’t worry about it. It’s not like she’s doing anything wrong’.” In one of these cases, the sympathy sockpuppet was eventually investigated and exposed; in the other, the discoverer left the community because she no longer felt it was a safe place.

Less commonly, participants described leaving a community without raising concerns about the sockpuppet, despite having direct evidence like inconsistent photographs. Brandon chose to leave his online community because he did not think they would confront the sockpuppet. As he described it, “This person had so much control over everybody that there was—no one was about to call them out and be like, ‘You’re not who you say you are.’” Finally, as described in the previous section on suspicion and discovery, we heard about one case in which the sockpuppet spontaneously confessed their deception and came clean to the community. In this case, there was no need for investigation.

“Loss of innocence”: reaction and repercussions

In cases where the whole community was informed of the presence of a sympathy sockpuppet, a range of sanctions was levied against the perpetrator; however, it was rare for a community or individual to achieve meaningful closure with the person behind the sockpuppet account(s). Although sympathy sockpuppets generally avoid scamming community members out of material gifts, the emotional labor they consumed and the trust they betrayed were nonetheless commonly perceived by individuals and communities as serious harms. The personal and community impacts of sympathy sockpuppets were both immediate and long-lasting.

Consequences for sockpuppets

Consequences for the sockpuppet were shaped by technological affordances of the platform(s), community norms, and leadership, including whether this was seen as an actionable community issue. In one community with a formal governance structure, the sympathy sockpuppet was “outed” by administrators in a community-wide announcement, and the perpetrator was banned. In another community without formal governance, the ad hoc investigative team revealed the deception to their community and also managed to have the sockpuppet’s accounts removed from multiple online platforms for terms of service violations. Alternatively, in the case of a community whose administrators seemed unconcerned about deception, the discoverer left after unsuccessfully confronting the sockpuppet herself. In the case of an abuser sockpuppet, other group members also left rather than confronting the sockpuppet. Finally, a young logical aspirant spontaneously confessed their deception in a community that lacked technical capabilities to ban users. At the suggestion of informal community leaders, the group moved on from the incident without sanctions.

Multiple community members attempted to confront sympathy sockpuppets after learning about the deception, although this was rarely satisfying. In Cristina’s community, several people confronted the individual behind the sympathy sockpuppets, but she felt that these efforts did not provide closure. Describing how he responded when community members confronted him, Cristina said:

(H)e was really upset. So, he blames mental illness. And to one person he was autistic, to another he’s schizophrenic, to another his real name is [Name] and he’s super depressed. And as much as I don’t want to be insensitive to the struggles of people with mental illness, I don’t really believe him. But how would I even believe him?

Some sympathy sockpuppets did apologize after being found out, including the one who spontaneously confessed, although participants were hesitant to trust these apologies. In the absence of whole-community consensus about the sockpuppet’s wrongdoing, individual attempts at confrontation were even less satisfying. Abigail’s sockpuppet denied the deception, and Michelle was blocked on social media by the sockpuppet in response. And in the case of an abusive sockpuppet, participants who left a community without alerting others to the deception remained haunted by a lack of closure and lingering fears that the sockpuppet could still be out there, online, watching.

Impacts on individuals

Study participants spoke of the profound impact these experiences had on themselves and on other people in their communities. “Loss of innocence,” a phrase used by multiple participants, was a prominent theme. Participants described reexamining past interactions and rewriting their memories after discovery. Hayley described a feeling of betrayal that “I’d known this person for a year, and I didn’t really know anything about her,” and Cristina detailed the persistent need to overwrite her mental scripts about the sockpuppets in her community:

I knew [Sockpuppet] and [Sockpuppet Wife] and even to this day knowing she never existed it’s still hard to not think of them as two people, like I even say, “Them.” They are [Sockpuppet] and [Sockpuppet Wife]. There were two separate people, but no, it was just this one guy.

In addition to sadness and loss, participants described feeling anger and betrayal in the aftermath. This was sometimes compounded by the perception that online deception might not be taken as seriously as offline betrayals. For example, Rebecca told us:

I was really angry about it for a while. There is this real concept, kind of—not wanting vengeance, but I wanted to know who this person really was. And I wanted to know why the hell they did this. And I wanted them to know that they really hurt people. I wanted that.

Over time, Rebecca’s anger became less acute. While she still felt “baffled” regarding the sockpuppet’s motivation, years later, “it’s become more of like, ‘Listen to this crazy story’.”

The degree of possible closure varied for participants, although no one seemed to feel entirely resolved about all aspects of the deception, no matter how far in the past the incidents occurred. Even in cases where the deception was recognized and an attempt to impose consequences was made, participants did not achieve satisfying resolutions because they did not understand the sockpuppet’s motivations and had limited ability to confront the sockpuppet or receive a sincere apology. Some participants reported that a degree of closure was achieved as time passed, but this was due more to their own growth and shift in perspective than to anyone else’s response, including that of the sockpuppets themselves. Only one participant felt they had achieved closure from the incident. Their sockpuppet was a logical aspirant engaging in minor category deception for a year; they were young (and therefore considered less accountable) at the time of deception, and the community decided to forgive the sockpuppet and move on, rather than banning or imposing sanctions on them. This incident also happened over 10 years ago, although the passage of time is clearly not the determining factor, as other participants described incidents that occurred equally long ago with no prospect of closure.

Community-wide impacts and responses

When describing community-wide impacts, participants also commonly referenced a loss of trust or innocence and increased circumspection among members. Some communities lost members or waned altogether, while in others new policies or norms were instituted, including systems of identity verification. As Cristina put it, “The biggest problem that we faced in the immediate aftermath of letting all this information out was everybody being like, ‘Oh my God. Any of us could be catfished. Anybody could be faking who they are.’” Those who had been the closest to sympathy sockpuppets were among the most likely to leave the communities or social media entirely. Most communities underwent noticeable shifts in membership following discovery, with some dwindling in activity. However, participants noted that other concurrent changes made it impossible to ascribe these drops in activity to the sockpuppet incidents alone. For example, shifts away from bulletin board forums and LiveJournal to Facebook groups were mentioned as other potential causes for membership drops.

Following a sympathy sockpuppet incident, some communities changed or introduced policies to improve community security. These policy changes were not universally perceived as positive. Abigail, for example, described her community’s efforts to implement new rules to make the community safer, which she perceived as backfiring:

They started to get a little bit more rules. So it was a little bit more rule-heavy to try to protect themselves, but then it just seemed different. The site wasn’t as open and caring [with] a few rules. Now, it was distrustful [with] a lot of rules.

While Abigail’s community had formal governance procedures, people in administrative positions had no particular expertise in Internet security or community moderation; to some extent, “No one knew what they were doing.” Commonly, communities began formal or informal practices attempting to verify members’ identities. Some built “webs of accountability” to map which members were connected to others offline, some questioned or investigated new members, and one community instituted a policy that any new member had to be known by an existing member. Cristina described a community that “kind of went in a more ransom note direction where they would hold up pieces of paper with the date and their handles and stuff,” noting that “It was kind of hilarious. It was done with a sense of humor, but it was definitely like, ‘These are stress reactions to some really bad news’.”

Multiple participants noted that community members used humor as a coping mechanism and a resilience behavior. Brandon described going through the sockpuppet’s posts with another friend from the community and finding “mistakes” (inconsistencies), saying, “it kind of became a running joke. We were like, ‘Oh, no way. There it is. That’s the proof’.” Similarly, Rebecca said that the joking started shortly after the community was informed about the sympathy sockpuppet:

I think it was as soon as people sort of got over the shock of it. I mean, especially something that ridiculous, half of us went, “So I guess we’ve got to laugh at this.” Right? I don’t know exactly when the first joke occurred. But I would say it was probably like a week or two. It was pretty fast, probably even sooner than that. And it was just sort of—I mean, it’s like basically, making fun of the absurdity of the persona [and] it was a little bit making fun of ourselves that we didn’t see it.

In the aftermath, community members bonded and reaffirmed their connections using humor. Cristina was initially concerned that her community would start “tearing each other apart with suspicion,” but that instead “we really did close ranks and support each other and turn inward, and kind of realize this is a thing that happened to all of us.” While it took her community a while to regain their sense of “fun and freewheeling-ness,” Cristina concluded that now, “I do think we’re closer and I think our community is stronger.” Even one participant who left a community that did not address the sockpuppetry said that their sole remaining friendship from that community was strengthened because “It was almost as if we were survivors together.”

Recommendations and advice

We asked participants what advice they would offer to people and communities who are going through this process. The overwhelming message to individuals was, in the words of Cristina, “Document everything and trust the people you can trust.” Participants also encouraged people to reach out to others in their community or elsewhere who have been through such experiences, particularly because people who do not form friendships online may not understand that these relationships are real and their dissolution painful. In fact, participants said that being part of this research was itself validating, as it helped them realize that their experience was not an isolated incident but was instead part of a larger phenomenon. Participants encouraged people to try to move through the period of feeling betrayed and “second-guessing anything anybody ever tells you,” in order to regain trust, and not to let the sockpuppet “wreck” the community. For those whose allegations of deception are not believed, participants suggested the best route is to “stay strong to what you believe” and that “If everybody is buying this person and you’re not, get out of there. Just get out.”

Participants agreed that “the reality is that there’s only so much you can do” to ensure truthfulness online. However, they thought that it would be helpful for communities to provide guidelines for sharing personal information, and reminders that people may be deceptive online, especially in groups that include minors. Communities should determine how important identity verification is to their mission and members and act accordingly, recognizing that more identity verification would mean more safety, but possibly less diversity and openness to new members. Participants urged investigative teams to work together when responding to allegations of deception, with no “loose cannons” who might tip off the sockpuppet before the investigation was done. In determining consequences, some urged consideration of communication and reconciliation efforts, rather than banning people, which leaves little opportunity for closure.

Discussion

Online deception is not a binary of nefarious or innocuous behaviors. There exists an intermediate space of non-nefarious deception that is hurtful to individuals and harmful to communities. Sympathy sockpuppetry has real and lasting impacts on people, who rarely have support for this kind of injury from outside of the community in which the deception occurred. Although the harm comes largely from the revelation of a caring relationship as exploitative and one-sided rather than part of an implicit reciprocal community compact, these harms occur whether or not the community is explicitly support-oriented. Raising awareness and providing support for people and their communities as they experience the process of discovering, confirming, and responding to a sympathy sockpuppet could minimize these feelings of loss and isolation. Sockpuppets who are competitive, abusive, or continue to deceive may need to be removed from communities for the safety of other members. However, in more innocuous cases, providing closure (e.g. through processes of community reparations and acceptance) may allow people and their communities to sensemake in the wake of these confusing incidents.

IDT and sympathy sockpuppets

IDT was developed to explain how deception functions within in-person, dyadic interactions; as such, the theory is most often applied in that context. Studies that apply IDT to online deception typically focus on dyadic online dating interactions, while those that apply IDT to communal settings are situated in laboratory contexts with clear roles and goals for participants, rather than in naturalistic settings (e.g. Dunbar et al., 2021; Peng, 2020). The present study extends this prior work by describing the collective experiences of deception in online communities. We build on IDT in several ways: we qualitatively identify and describe the investigation phase of detection, describe the importance of visual evidence in confirming suspicions, and articulate how individual suspicions may lead to collaborative probing strategies, extending our understanding of how receivers confirm their suspicions. We also explicate the complex social dynamics that are involved in uncovering and recovering from deception in voluntary, communal settings online.

Because online deception was collectively experienced in this investigation, participants did not often engage directly in the dyadic probing strategies currently described by IDT in face-to-face and online dating contexts (Buller and Burgoon, 1996; Peng, 2020). Instead, the suspicion was often shared privately with other members of the community. When others were interested in pursuing this suspicion, people with high social standing in the community, both formal (moderators) and informal (verified longtime members), were often asked to investigate. This suggests that social standing is a factor in whether suspicions are pursued and that communities rely on well-trusted members to help manage the aftermath of discovery. Rather than engage in typical probing strategies like asking interview-like questions of the deceiver, participants collectively developed and used agentic investigation strategies to pursue and confirm their suspicions, including evidence-gathering and triangulation (Greyson, 2018). Photographs and other forms of visual evidence played a large role in confirming deception. Aside from Hayley, whose sockpuppet spontaneously confessed their category deception, all participants described the importance of photographic evidence in the investigation phase. In many cases, photographs provided the most definitive proof that deception was taking place, either because the photographs were found elsewhere online or because their details contradicted the deceiver’s narrative. The large role of photographic evidence in the investigation phase may relate to the heavy reliance on visual evidence (e.g. facial expressions) in detection of face-to-face deception. This finding also extends our understanding of how cues function in interpersonal deception online, beyond linguistic cues like message length and comprehensiveness (Toma and Hancock, 2012).

One consistent feature of these experiences was that participants felt “emotionally strip-mined” by their deceivers. While IDT posits that both receivers and deceivers expend effort to maintain or uncover deception, we also found that a great deal of labor was required of our participants before the deception was even discovered. Another contribution to IDT, therefore, is that the work detectors perform is not necessarily limited to uncovering deception: detectors in our study also took on excess emotional work on the sockpuppet’s behalf. A central principle of IDT is that deception is a goal-oriented behavior; sockpuppets in this study benefited from the emotional labor performed by the community before they were discovered.

Implications for research and practice

Moderators, administrators, and informal leaders of online communities may benefit from some of these findings. IDT posits that deception is goal-oriented, but in the cases of sympathy sockpuppets, most people are left guessing at their motives. It appears that apologies, explanations, and achieving a degree of closure may help repair the individual and community betrayals. Communities in this study that had policies about deception often focused on punishment and consequences in those policies, rather than on restoring or repairing the community, which may have been more helpful to community member closure and maintaining the community. Therefore, community policies might consider aiming for more restorative justice-oriented approaches when appropriate.

The variety of community platforms and range of time over which these seven incidents took place provided a glimpse into the ways the evolution of technology and online social spaces did and did not affect experiences with sympathy sockpuppets. While improved technological sophistication and more complex affordances are often positioned as improving safety and security of online spaces, we did not find this to be the case in this context. Neither deeply felt injury nor community resilience nor a sense of closure appeared to be associated with particular technological affordances. Therefore, rather than relying on technology to verify member identities, it may be best for communities to be explicit about the importance of honesty and privacy, and to remind members to be as cautious as community content merits.

This exploratory study raised some questions for future research on the subject of sympathy sockpuppets. Two topics in particular arose as potential themes in these data, although we did not have sufficient data to draw conclusions about either. The first of these is whether certain types of communities, like those that are feminine-coded or explicitly organized around mutual support, may be most susceptible to sympathy sockpuppetry. It stands to reason that those seeking to extract emotional labor might target communities that are primed to offer support. Given that care work is largely performed by women or others in feminine-coded roles (e.g. feminized professions, gay men), it may be that sympathy sockpuppetry is an extension of age-old patriarchal exploitation of uncompensated care work.

A related question is whether there are gender dynamics to certain subtypes of sympathy sockpuppetry. For example, some sockpuppets in this study were female-presenting sockpuppets run by men offline, who were sometimes perceived by participants as “mining for girl code” in their interactions with women in the community (e.g. by eliciting fashion or relationship advice, or eliciting sexual or gendered stories). Users may engage in online cross-gender presentation for a variety of reasons, ranging from catfishing to freeing oneself from gender role constraints (e.g. for men, permitting expression of emotions, and for women, freedom from harassment) (Suler, 1999; Todd, 2012). Online spaces may also be used by some transgender people as safe places to present one’s authentic gender (Wagner et al., 2016). We did not have sufficient data to draw conclusions about this possible phenomenon, but future research might examine such interactions, which may share some commonalities with deceptive practices of ethnicity-related sympathy sockpuppetry (Bromwich and Marcus, 2020), online “race shifting” (Flaherty, 2020a, 2020b), and practices of “distorted” or “aspirational descent” (Leroux, 2019, 2020).

Finally, while participants were pessimistic about the likelihood of sympathy sockpuppets participating in research honestly, we nonetheless believe this approach merits exploration. Studies of other types of online deception have recruited perpetrators to shed light on motivations for (Cook et al., 2018) and practices involved in (Cruz et al., 2018) trolling and, to a lesser extent, catfishing (Simmons and Lee, 2020). Even if interviewing the users behind sympathy sockpuppet accounts fails to satisfactorily answer the pressing question on the minds of most participants in the current study—Why did they do it?—it is possible that other useful information about deceptive practices without nefarious intent would emerge.

Strengths and limitations

This study, like all empirical research, has both strengths and limitations. This was the first known study to systematically explore experiences of individuals in a variety of communities with sympathy sockpuppets. The theoretical basis for this investigation and analysis is strong, the interview guide was rigorous and in-depth, and the data were rich given that they were collected via individual telephone interviews. The researchers came from an insider-outsider positionality, each with over 20 years of independent involvement in various online communities similar to those described by participants, which helped us take into account differences in online communities over time and across platforms. In addition, there was diversity in platform(s), social media era, sockpuppet type, and participant ages and roles in their communities. This allowed us to test some emergent themes more fully than is common with such a small number of participants.

Limitations include the fact that this was a small study, in which all data were retrospectively recounted by participants, sometimes about incidents that occurred over a decade ago. Notably, however, in this small sample we did not detect a clear association between detail of recall and time passed since the events being described; rather, more emotionally disruptive events appeared to be remembered in more detail by participants than ones perceived as minor or innocent deceptions. We also have limited demographic data on participants, so we cannot draw conclusions about the representativeness of the sample. Nonetheless, we believe the characterization of sympathy sockpuppets, model of social processes involved in discovery and response, and recommendations for individuals and communities going through this are likely transferable to other communities and instances of non-nefarious online deception, and we hope that future research will more fully test these propositions.

Conclusion

Sympathy sockpuppets employ deceptive personas in online communities, seemingly without nefarious intent, yet they do real harm to individuals and communities. These deceptions range from fairly innocuous to outright abusive. The more extensive deceptions, which extract the greatest emotional labor and care work from other community members, appear most harmful. Inconsistencies, often noticed in photographs, typically lead to discovery of a sympathy sockpuppet by a community member, who then turns to someone with high status in their community for verification and action. Communities commonly experience a “loss of innocence” following discovery, and they rarely achieve meaningful closure. Some dissolve in the aftermath, while others strengthen bonds among “survivors” through humor and a reaffirmation of mutual trust. While not all online communities have clear boundaries and formal policies or governance, those that do should consider policies and procedures to clearly communicate community values, facilitate communication, and offer opportunities for closure following disruptive incidents like the discovery of a sympathy sockpuppet.

Footnotes

Acknowledgements

The authors thank the participants for sharing their stories with us.

Data availability statement

The data underlying this article cannot be shared publicly for the privacy of individuals who participated in the study and the privacy of other members of their communities.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported in part by the Elfreda A. Chatman Research Award of the Association for Information Science & Technology’s Special Interest Group on Information Needs, Seeking, and Use.