Abstract

The use of artificial intelligence-based algorithms for the curation of news content by social media platforms like Facebook and Twitter has upended the gatekeeping role long held by traditional news outlets. This has caused some US policymakers to argue that platforms are skewing news diets against them, and such claims are beginning to take hold among some voters. In a nationally representative survey experiment, we explore whether traditional models of media bias perceptions extend to beliefs about algorithmic news bias. We find that partisan cues effectively shape individuals’ attitudes about algorithmic news bias but have asymmetrical effects. Specifically, whereas in-group directional partisan cues stimulate bias perceptions for members of both parties, Democrats, but not Republicans, also respond to out-group cues. We conclude with a discussion about the implications for the formation of attitudes about new technologies and the potential for polarization.

Social media platforms are facing increased criticism from political elites who claim that their curation of news is biased. During the 2016 presidential campaign, both Hillary Clinton and Donald Trump advanced claims of media bias, with Clinton referencing unbalanced criticism and Trump calling many prominent news outlets “fiction” (Easley, 2016). These claims come at a time of heightened partisanship in American politics and growing concern over the power that large technology companies (often referred to as “Big Tech”) have, through the evolving digital media environment, over what appears in public discourse.

Such claims typically allege partisan bias, and they increasingly target algorithmically curated news in online news feeds. Conservative politicians in particular claim that social media unfairly censors conservative news, reflecting partisan bias against Republicans. For example, Republican US Senator Ted Cruz claimed that Facebook intentionally discriminated against “conservatives, Christians, whites, and other groups dear to his heart” (Oremus, 2018). More recently, Rep. Jim Jordan (R-OH) accused tech companies of censoring conservative outlets such as Breitbart News, as well as former President Trump’s Twitter account (Rodrigo, 2020). Senator Josh Hawley (R-MO) has been particularly aggressive on this issue, going as far as writing a book (The Tyranny of Big Tech; Hawley, 2021) that details his view of anti-conservative bias and censorship by tech companies. Democrats have also expressed concerns about bias online, particularly with regard to misinformation (Castronuovo, 2021).

This broad concern among Republicans and some Democrats has led to several US Congressional hearings with Big Tech leaders on censorship, bias, and misinformation, although little has come of them (Fischer, 2021). The public also believes this censorship is occurring. Nearly three-quarters of Americans (73%) say they think it is somewhat or very likely that social media sites censor political viewpoints, with Republicans (90%) far more likely than Democrats (59%) to say so (Pew Research Center, 2020). This recent shift of media bias charges toward tech companies reflects changes in the digital media environment, particularly the introduction of algorithmic news curation.

“Algorithmic curation” refers to the use of user-preference data, through artificial intelligence (AI), to provide customized news feeds on commonly used social media platforms like Facebook or Twitter, as well as on news aggregators like Google News and Apple News (Bandy and Diakopoulos, 2020; Rader and Gray, 2015; Thorson and Wells, 2016). AI is an expansive technology that takes various forms depending on its application in fields including psychology, medicine, engineering, and economics, among others (Stone et al., 2016). In this article, we focus on AI specifically as it is used in news curation algorithms. We keep in mind that different platforms draw on different user-preference data and algorithms, which can shape media logics (Klinger and Svensson, 2018). These algorithms have essentially become gatekeepers of the news people see online. There are large bodies of research on perceptions of partisan media bias, and algorithmic curation is growing in significance in modern discourse. Even so, little research has examined the partisan dimensions of perceptions that algorithmically curated news is biased. To address this gap, we conducted an experiment that examines whether the factors that explain bias perceptions in traditional media, specifically partisan cues, also shape the formation of algorithmic news bias perceptions.

Within the media bias literature, researchers consistently find that cues from partisan figures influence public perceptions of media bias. Perceptions of media bias are often formed and reinforced by partisan cues delivered by partisan news outlets and political elites that proclaim instances of bias against their own party. The effect of partisan cues on perceptions of media bias has been widely studied through examination of the hostile media effect (HME), in particular, which posits that partisans perceive neutral coverage of issues as biased against their own beliefs due to feelings of animosity toward the opposing party (Vallone et al., 1985).

Our research builds upon this literature by testing the effects of partisan cues on the formation of algorithmic news bias perceptions. We also discuss potential implications for the formation of AI attitudes more generally and for polarization. We extend media bias research by exploring whether the patterns that drive perceptions of media bias also produce perceptions of algorithmic news bias. To do so, we designed a 2 by 2 experiment, embedded in a nationally representative survey of US adults, in which Mike Pence or Joe Biden alleges that AI biases the news we see. We examine how these cues influence perceptions of algorithmic news bias depending on two factors. The first is whether the accusation of bias is directional (partisan) or non-directional. The second is which of the two politicians makes it. We draw on Heider’s (1978) balance theory and the HME to theorize how such cues are likely to influence partisans’ attitude formation. On that basis, we explore how participants perceive algorithmic news bias when cues do or do not align with their partisan identity. Our findings illustrate patterns of both in-group and out-group cueing, though the latter is evident only among Democrats.

Understanding algorithmic news bias through partisan identity and hostile media effect

AI developments have fundamentally changed the media environment, particularly through customized news feeds, curated by algorithms drawing on digital trace data (Vogel, 2020). This development challenges the role of media organizations as news gatekeepers. Algorithms add a new barrier between what news users see on their news feeds and the information news organizations decide to report (Bozdag, 2013; Nechushtai and Lewis, 2019). This new gatekeeping role of algorithmic news curation raises questions about how perceptions of media bias might be evolving with the digital environment. In this study, we examine Americans’ perceptions of algorithmic news bias and test how partisan cues shape those perceptions.

Hostile media effect

Perceptions of media bias are not new. In general, such perceptions—especially among partisans—can occur through several pathways, one being the hostile media effect. As previously introduced, the HME captures the situation in which partisans perceive neutral coverage of issues as biased against their own beliefs and in favor of the opposing party (Vallone et al., 1985). In a review of HME literature, Perloff (2015) discusses group identity as a moderating influence on the HME such that in-group identity accentuates perceptions of media bias based on how the media portrays in-groups or opposing out-groups. For this study, we explore how partisan identity, made salient by in-group and out-group cues, influences perceptions of algorithmic news bias.

Although not strictly confined to partisanship, previous HME research has consistently found that various partisan variables predict perceptions of media bias (e.g. Eveland and Shah, 2003; Gunther et al., 2012; Ho et al., 2011; Stroud et al., 2014). The relationship between the HME and partisanship has been extensively researched with regard to social identity theory (which we elaborate on below), exploring how partisan identity influences perceptions of media bias and attitudes toward the out-group (e.g. Matheson and Dursun, 2001; Reid, 2012; Schulz et al., 2020). We also discuss how partisan cues can influence partisans’ attitudes about media (e.g. Arceneaux, 2008; Westerwick et al., 2017) and their media use (Yeo et al., 2015), resulting in potentially polarizing effects (e.g. Iyengar et al., 2012; Nicholson, 2012).

Partisan identity

Partisan identity can play a powerful role in shaping how individuals form opinions about issues in general, both by providing relevant mental “shortcuts” or heuristics for people to reach conclusions quickly on an issue and by shaping their more systematic, slower reasoning process (Chaiken, 1980; Eagly and Chaiken, 1993; Price and Tewksbury, 1997). In the highly polarized political climate in the United States, party cues activate individuals’ partisan identity heuristic, which can help them quickly assess unfamiliar issues.

Partisan identity is a particularly strong heuristic in part because of how it manifests as a group identity that is both widespread and made salient by frequent messaging from media and political leaders signaling expectations of group attitudes. Partisan identity’s effects on individual attitudes and behaviors can be understood under the larger umbrella of social identity theory (Greene, 1999, 2002, 2004; Mason, 2018b). Social identity theory explains how people also use shared group identity as a heuristic to make sense of new information in a way that defends their values (Converse, 1964; Tajfel, 1974; Tajfel et al., 1979; Turner, 1999). Perceived similarity in values can lead to someone identifying with a group as a whole. How that individual perceives the values of the in-group they belong to can then, in turn, shape their own values (Forsyth and Burnette, 2010). The result can be that, for many topics, instead of thoroughly evaluating information on the issue themselves, individuals adopt the attitude of the group they identify with (Stroud et al., 2014).

Pushing this a step further, partisans might also think that in-group members then have the same values and attitudes that they (or the party) have, which illustrates the power of partisan cues (Druckman et al., 2010; Leeper and Slothuus, 2014). For example, if a politician from someone’s own party makes a statement, they might rely on partisan identity to assume the politician is credible and voices the same attitudes that they personally would (or should) have (Arceneaux, 2008). The individual then could use what the politician said to form their own opinion, usually aligning with, or, in more extreme cases, taking the exact position as the politician (e.g. Crowe, 2020). Stronger partisan identities can also exacerbate a sense of partisan hostility toward the opposing party (e.g. Mason, 2018a; Miller and Conover, 2015).

Partisan identity is relevant to the formation of attitudes about algorithmic news bias for several reasons. First, partisan identity can be a strong heuristic for forming opinions and attitudes about issues surrounded by uncertainty. These include technology issues that overlap with political and policy questions, such as nanotechnology (e.g. Yeo et al., 2015). Weak or unformed attitudes about issues that people pay little attention to may be especially sensitive to the influence of partisan identity. As noted, people may default to the views or opinions of their party rather than forming attitudes themselves (e.g. Arceneaux, 2008; Druckman et al., 2013). Second, as shown by research on the HME, partisanship shapes perceptions of media bias more broadly. It is possible that partisanship will similarly shape perceptions of algorithmic news bias.

Parallels between media bias and algorithmic news bias

Perceptions of media bias, as explained by the HME and partisan cues, exist across partisan lines in the United States. Critiques of the media, however, have traditionally been more prevalent in conservative commentary. As noted earlier, conservative media commentators and Republican leaders are known for claiming that the media is liberal and biased against them (Easley, 2016). Former President Trump’s commentary, especially on Twitter, is a prime example of Republican leadership reinforcing perceptions of media bias. He referred to the “liberal media,” claimed “fake news,” and argued that algorithmic search engines were biased against him. In 2019 alone, Trump posted 88 tweets attacking news media while live-tweeting in response to Fox News (Gertz, 2020). Studies further indicate that Republicans tend to perceive greater media bias overall than Democrats do. For example, belief in liberal media bias shapes conservatives’ views of media credibility (Eveland and Shah, 2003). However, such cues could also influence views among Democrats. They might become less likely to believe that such bias exists at all, or skeptical that it exists against conservative news sources specifically.

Claims of media bias have found a new target in the algorithmically curated news of Facebook and other news curation platforms. Recent reports of Republican leaders claiming that Facebook is biased against conservative news content (e.g. Allyn, 2020) rest on a specific perception: that Facebook’s curation algorithms prioritize liberal content and mask conservative content. There have also been concerns over the past decade that algorithmic news curation exacerbates partisan polarization by creating “echo chambers” and “filter bubbles” (see Pariser, 2011; Sunstein, 2007). These are said to lead to “audience homophily” (Dvir-Gvirsman, 2016), reduce exposure to counter-attitudinal information, and, in some cases, foster negative feelings toward people with opposing views (Settle, 2018). Evidence of the extent to which such filter bubbles actually exist is limited (Bandy and Diakopoulos, 2020; Nechushtai and Lewis, 2019; Zuiderveen Borgesius et al., 2016). Nevertheless, concern over the potential effects of algorithmic curation appears to be relatively prevalent. For example, a 2016 survey of over 53,000 people in 26 countries found that more than half of respondents believed personalized news would cause them to miss viewpoints and important information in their news feeds (Thurman, 2019).

Although partisans believe the media is biased along partisan lines, research has found little evidence of such bias. Recent examinations of ideological bias in media indicate that although journalists tend to be liberal, their reporting does not show a liberal slant. Neither does news organizations’ selection of stories to cover (Hassell et al., 2020). Similarly, a 2000 meta-analysis of 59 studies of media bias in presidential election coverage found little evidence of bias (D’Alessio and Allen, 2000). One study found no evidence of systematic media bias. It did find, however, that news coverage about media bias contained far more claims of a liberal slant than of a conservative slant (Watts et al., 1999).

Research on algorithmic news bias is less developed than the literature on traditional media bias, but several human and technical factors have been examined as possible sources of bias in algorithmic news curation. A recent study of Google Top Stories found an ideological skew in impressions (defined as links within Top Stories). Left-leaning or liberal outlets accounted for 62.4% of impressions, compared with 11.3% for right-leaning or conservative outlets (Trielli and Diakopoulos, 2019). The authors cautioned, however, that this finding is more likely due to “a greater availability of news material on the left” (p. 12) than to any particular bias of the algorithm itself. A similar audit of Apple News compared human-curated and algorithmically curated news and found differences between the two. These differences were mainly that the algorithm curated “softer” news than the “hard” news selected by human editors, rather than a partisan slant (Bandy and Diakopoulos, 2020). Research on potential bias in algorithmic news curation is further complicated because curation algorithms are typically a “black box” (Barberá, 2020). They also reflect complex mixtures of human choices, such as the original algorithm design and platform users’ inputs, with adaptations made by the algorithm itself. These examples illustrate how little is yet established about the extent of algorithmic news bias.

The limited evidence of algorithmic news bias thus parallels the lack of evidence for claims of traditional news bias. The topic of algorithmic news bias is nevertheless starting to attract greater attention in public discourse, as political elites adapt claims of traditional news bias to the digital environment. The implications of such messaging from partisan elites are not yet clear. Our study may help illuminate its likely effects on the formation of attitudes about algorithmic news bias.

Lack of awareness about AI provides an opportunity for influence, or polarization

Despite concern about bias in algorithmic news curation, evidence suggests that most people do not have a firm understanding of what algorithmic news curation is, making them particularly susceptible to partisan cues. Results from focus groups indicate that many do not know which media outlets use news personalization and a substantial proportion cannot differentiate personalized news from commercial advertisement targeting (Monzer et al., 2020). Other research indicates that although individuals are skeptical of algorithm-curated news and think it filters news in a way that restricts what they see (Rader and Gray, 2015), they do not understand news curation as a concept (Eslami et al., 2015) or how this filtering process works (Fletcher and Nielsen, 2019). Polls further demonstrate that people do not have a clear understanding of exactly what roles algorithms play in shaping what news people see. A 2018 Pew survey estimated that about half of the American public (53%) does not understand how algorithms operate in Facebook news feeds (Pew Research Center, 2018). At the same time, nearly three-quarters of Americans believe intentional bias exists in what they see on social media, thinking “it is very (37%) or somewhat (36%) likely that social media sites intentionally censor political viewpoints that they find objectionable” (Pew Research Center, 2020).

Public perceptions about the relatively new issue of algorithmic news curation are likely just beginning to form. This opens up the opportunity for the HME and partisan cueing to occur in ways that could exacerbate partisan polarization. Previous research has shown that when partisan identity is salient, cues from out-group political elites can result in individuals having heightened negative views of the out-group, while reinforcing their own partisan beliefs, which can heighten polarized attitudes about issues (e.g. Iyengar and Westwood, 2015; Jennings, 2019; Mason, 2018a). In addition, recent polling data provide evidence of political polarization in Americans’ perceptions of political bias in news, with nearly half (49%) of Americans perceiving “a great deal” of political bias in news, but Republicans (72%) perceiving more bias than Democrats (28%; Gallup-Knight, 2020). The findings of one study examining hostile media perceptions of vaccines suggested that “partisans will see unfavorably slanted content as even more polarized (and perhaps polarizing) than it actually is” (Gunther et al., 2012: 451).

Examining how partisan cues shape perceptions of algorithmic news bias

Traditional news selection and algorithmic news curation are similar in some respects. There are also reasons why we might not expect perceptions of bias in algorithmic news curation to be polarized along partisan lines. The algorithms used for news curation disseminate news based on user preferences and other factors that differ by platform. The data these algorithms draw on are not specifically designed to reflect partisan biases. Some even consider features of algorithmically curated platforms, such as user control and the cross-cutting exposure social media can provide, a potential antidote to partisan news bias (for a review of social media and partisan polarization, see Barberá, 2020).

Given the lack of understanding of algorithmic news curation and how it fits in the larger context of discussions on media and news bias, we assume that the factors influencing perceptions of media bias (particularly partisanship in the United States) might also influence perceptions of algorithmic news bias. To see if this is the case, we used a survey-embedded experiment to examine the effects of partisan cues on Americans’ attitude formation of algorithmic news bias. Previous research on the hostile media effect helps explain how perceptions of media bias form based on partisan cues. We couple this with a focus on the structure provided by balance theory to explore how individuals reason through information on partisan algorithmic bias, depending on the partisan cues attached to the information and the partisan identity of the individuals receiving the information.

Heider’s (1946) balance model describes how attitudes form in the face of cues from others. According to Heider’s balance model, there are three elements that influence how someone forms attitudes in relation to another person: the perceiver, the other person, and the impersonal entity. In our study, the perceiver is represented by the participant taking the survey, the other person is represented by a politician (either Mike Pence or Joe Biden), and the impersonal entity is belief in algorithmic news bias. These three elements have either positive or negative relationships with each other. A “balanced” model represents a situation where the attitudes of these three elements of the triad fit logically together, as opposed to an imbalanced model in which the attitudes between the elements in the triad do not fit together.

A balanced model can be achieved in multiple ways. In our study, one balanced triad is a participant who is politically aligned with the politician in their condition and agrees with the politician’s statement that AI biases the news we see. Likewise, a participant who is neither politically aligned with the politician nor in agreement with his claims about algorithmic news bias represents a balanced triad. By contrast, one imbalanced triad is a participant who is politically aligned with the politician but disagrees with his statement on algorithmic news bias. Another is a participant who is not politically aligned with the politician but agrees with the statement. Imbalanced triads are generally unstable. They tend to shift toward balanced states over time as the perceiver reconciles the incompatibility of their attitudes in relation to the other person (Eagly and Chaiken, 1993; Shaffer, 1981).
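The sign logic of Heider's triad can be made concrete with a short sketch. It assumes a common convention: each of the three relations is coded +1 or -1, and a triad is balanced when the product of the signs is positive. The function name and the coding of this study's conditions are illustrative only and are not part of the original analysis.

```python
def is_balanced(perceiver_other: int, other_entity: int, perceiver_entity: int) -> bool:
    """Return True if a Heider perceiver-other-entity triad is balanced.

    Each relation is coded +1 (positive) or -1 (negative). Under the
    multiplicative rule, the triad is balanced when the product of the three
    signs is positive (all three positive, or exactly two negative).
    """
    for sign in (perceiver_other, other_entity, perceiver_entity):
        if sign not in (1, -1):
            raise ValueError("each relation must be coded +1 or -1")
    return perceiver_other * other_entity * perceiver_entity > 0


# Hypothetical coding for this study's design:
#   perceiver_other  = +1 if the participant shares the politician's party, else -1
#   other_entity     = +1 because the politician claims AI biases the news
#   perceiver_entity = +1 if the participant agrees that AI biases the news, else -1
print(is_balanced(+1, +1, +1))  # co-partisan who agrees -> True
print(is_balanced(-1, +1, -1))  # out-partisan who disagrees -> True
print(is_balanced(+1, +1, -1))  # co-partisan who disagrees -> False
print(is_balanced(-1, +1, +1))  # out-partisan who agrees -> False
```

The four calls correspond, in order, to the two balanced and two imbalanced cases described above.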

Balance theory is particularly useful in understanding the effect of partisan cues on individuals’ attitude formation about a new issue (impersonal entity) because it explains how attitudes of another person can be influential, depending on how the individual views that other person. In this study, we combine this theoretical model with the effects of partisan identity on attitude formation to compare how individuals respond to partisan cues from political leaders in their party and in the opposing party. We can thus hypothesize that the formation or adjustment of partisans’ attitudes about algorithmic news bias will align with the perceived stance the in-group party leaders hold on this issue. In other words, we expect both Republicans and Democrats to form or adapt their views of algorithmic news bias in accordance with the views of their party or party leaders. In addition, this framework makes clear the possibility that partisans may even react in the opposite direction to claims of bias from out-group members. For example, Democrats might be less likely to believe that algorithmic news bias is an issue when a Republican makes the claim than they otherwise would be without exposure to a partisan cue. Given the previous research and the current state of media bias rhetoric in conservative media, we test the following hypotheses:

H1. Republicans will have higher baseline perceptions of algorithmic news bias than do Democrats.

H2. Individuals exposed to partisan cues will perceive more algorithmic news bias than individuals not exposed.

H3a. When exposed to claims about bias attributed to Pence, perceptions of algorithmic news bias will increase among Republicans and decrease among Democrats.

H3b. When exposed to claims about bias attributed to Biden, perceptions of algorithmic news bias will increase among Democrats and decrease among Republicans.

H4. Individuals exposed to partisan cues that specify a political party will perceive more algorithmic news bias compared to individuals exposed to partisan cues with non-directional bias.

Methods

To test our hypotheses, we designed an experiment with four partisan cue manipulations. The study was based on a 2 (AI-based bias claim: directional partisan bias accusation vs non-directional bias accusation) by 2 (slant of source: Mike Pence vs Joe Biden) online experiment embedded in a nationally representative survey of US adults’ perceptions of AI, conducted by YouGov from February to March 2020. The final sample was 2700 US adults, with a completion rate of 41.3% (American Association for Public Opinion Research (AAPOR), 2016). About 450 participants were randomly assigned to each of the four treatment groups, and about 900 to the control group. The analyses apply weights from YouGov based on a sampling frame to ensure sociodemographic representativeness for gender, age, race, and education.

Participants in the four treatment groups were exposed to a modified AP News article on algorithmic news bias, featuring a quoted tweet from either a Republican politician (Pence) or a Democratic politician (Biden). Pence and Biden were chosen as the representatives for each party because they are relatively similar in terms of gender, race, age group, position, and public familiarity at the time, reducing the potential influence of factors other than partisan identity on the experimental effects. While we acknowledge there may be other politicians with greater similarities, we wanted the elite cues to come from unambiguous partisan sources that would be widely recognizable among participants. The content of the quotes differed slightly by treatment group. For the non-directional bias accusation group stimulus, the politician (either Pence or Biden) claims that “Clearly #BigTech slants the news we see . . . These AI-based algorithms are creating an uneven playing field in American politics!” For the directional bias accusation treatment groups, the politician claims the news we see is slanted against their party. All other aspects of the article were identical. Examples of the stimuli that respondents saw can be seen in Appendix A of the Supplemental material. The control group received a fictitious article of comparable length about fertilizer, with identical format and a tweet from the Soil Association.

Before the experiment, participants answered questions about their knowledge of and attitudes toward AI; these items were pretested and used to check randomization. Following the experiment, they answered a series of questions measuring perceptions of algorithmic news bias, both in general and against specific political parties. Respondents were given a broad definition of AI that they could review whenever AI appeared in the survey. AI is a complex technology whose capabilities vary by application. We nonetheless chose a general definition of AI, for two reasons: to maximize respondent comprehension, and because the survey as a whole examines perceptions of AI broadly, across many applications.

Measures

Perceptions of algorithmic news bias were measured by asking how much respondents agreed or disagreed with the following statements (1 = strongly disagree, 7 = strongly agree): (a) Most news online is distorted by AI-based algorithms, (b) News delivered through AI-based algorithms is harmful for democracy, and (c) AI-based algorithms deliver news that tends to be slanted against my views. The items were averaged to create an index (M = 4.5, SD = 1.1, Cronbach’s α = .78). Perception of algorithmic news bias against Republicans was measured on the same 7-point scale. Respondents rated their agreement that AI-based algorithms deliver news that tends to be biased against Republicans (M = 4.2, SD = 1.6). Perception of algorithmic news bias against Democrats was measured the same way, using agreement that AI-based algorithms deliver news that tends to be biased against Democrats (M = 3.5, SD = 1.6).
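The index construction and its reliability statistic can be sketched in a few lines of Python. This is a minimal illustration that assumes a simple respondents-by-items list of 1-7 ratings. The ratings are invented and the helper functions are hypothetical, not the authors' code. Cronbach's α uses its standard formula.

```python
from statistics import mean, variance


def composite_index(responses):
    """Average each respondent's item ratings into a single index score."""
    return [mean(row) for row in responses]


def cronbach_alpha(responses):
    """Cronbach's alpha for a respondents-by-items table of ratings.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores),
    where k is the number of items. Sample variances are used throughout;
    because every term is rescaled by the same factor, the ratio matches
    the population-variance version.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    items = list(zip(*responses))  # transpose: one tuple of ratings per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical 1-7 ratings on items (a), (b), and (c) for five respondents.
ratings = [
    [5, 4, 5],
    [3, 3, 2],
    [6, 5, 6],
    [4, 4, 3],
    [2, 3, 2],
]
print(composite_index(ratings))
print(round(cronbach_alpha(ratings), 2))
```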

Partisan identity was measured by first asking respondents whether they identify as a Democrat, a Republican, an Independent, other, or not sure. Those who selected “Independent, other, or not sure,” were asked about their leanings. We created a 5-point scale (1 = Democrat, 2 = Leaning Democrat, 3 = Independent, 4 = Leaning Republican, 5 = Republican; M = 2.8, SD = 1.7). Partisan identification was used as a continuous variable for analysis.
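The recoding of the two party items into the 5-point scale can be sketched as follows. This assumes the party item and the follow-up leaning item are stored as text labels. The labels and the function name are hypothetical stand-ins for the survey's actual wording and data.

```python
from typing import Optional


def partisan_scale(party: str, leaning: Optional[str] = None) -> int:
    """Map party identification (plus a follow-up leaning) onto a 1-5 scale.

    1 = Democrat, 2 = Leaning Democrat, 3 = Independent,
    4 = Leaning Republican, 5 = Republican.
    Response labels are hypothetical stand-ins for the survey wording.
    """
    if party == "Democrat":
        return 1
    if party == "Republican":
        return 5
    # "Independent", "Other", or "Not sure": fall back on the leaning item.
    if leaning == "Democrat":
        return 2
    if leaning == "Republican":
        return 4
    return 3  # no leaning toward either party


print(partisan_scale("Independent", leaning="Republican"))
```

The resulting integer is then treated as a continuous predictor, as described above.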

Analysis

Our analysis used hierarchical ordinary least squares (OLS) regression models to test how each treatment interacts with party identity. Preliminary tests confirmed that random assignment was effective: there were no significant differences among the conditions on key variables such as gender, race, partisan identity, or knowledge about AI. Given this, our models included no controls for individual-level differences other than partisan identity. We expected partisan identity to be associated with perceived algorithmic news bias and to moderate the effect of the experimental conditions on it. All independent variables were entered in blocks based on the assumed causal order (Cohen et al., 2003), as follows: (a) party identity, (b) experimental conditions (with the control group as the reference group), and (c) interactions between partisan identity and each experimental condition. We set our alpha level at α ⩽ .01 to reduce the risk of Type I errors, and because our large sample makes even small effects statistically significant.
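The block-entry procedure can be sketched with NumPy. This minimal illustration runs on simulated data, not the study's, and makes three simplifying assumptions. It ignores the YouGov weights. It reports cumulative R² at each block rather than the standardized coefficients shown in Table 1. Its function names are hypothetical.

```python
import numpy as np


def r_squared(X, y):
    """R^2 from an OLS fit of y on X (an intercept column is added)."""
    design = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ beta
    centered = y - y.mean()
    return 1.0 - (residuals @ residuals) / (centered @ centered)


def hierarchical_r2(party_id, condition, y, n_treatments=4):
    """Enter predictors in three blocks and return cumulative R^2 per block.

    condition: 0 = control (reference group), 1..n_treatments = treatments.
    Block 1: party identity; Block 2: treatment dummies;
    Block 3: party identity x treatment interactions.
    """
    dummies = np.column_stack(
        [(condition == g).astype(float) for g in range(1, n_treatments + 1)]
    )
    interactions = dummies * party_id[:, None]
    blocks = [party_id[:, None], dummies, interactions]
    X = np.empty((len(y), 0))
    cumulative = []
    for block in blocks:
        X = np.column_stack([X, block])  # add this block to earlier ones
        cumulative.append(r_squared(X, y))
    return cumulative


# Simulated (not study) data: treatment 1 steepens the partisan slope.
rng = np.random.default_rng(0)
n = 2000
party_id = rng.integers(1, 6, size=n).astype(float)  # 1-5 scale
condition = rng.integers(0, 5, size=n)  # 0 = control, 1-4 = treatments
y = 4.0 + 0.3 * party_id + 0.8 * (condition == 1) * (party_id - 3) + rng.normal(0, 1, n)

for label, r2 in zip(["Block 1", "Block 2", "Block 3"], hierarchical_r2(party_id, condition, y)):
    print(label, round(r2, 3))
```

Comparing successive R² values shows how much variance each block adds. In this simulation, the Block 3 increment comes from the single treatment whose effect was built to depend on party identity.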

Results

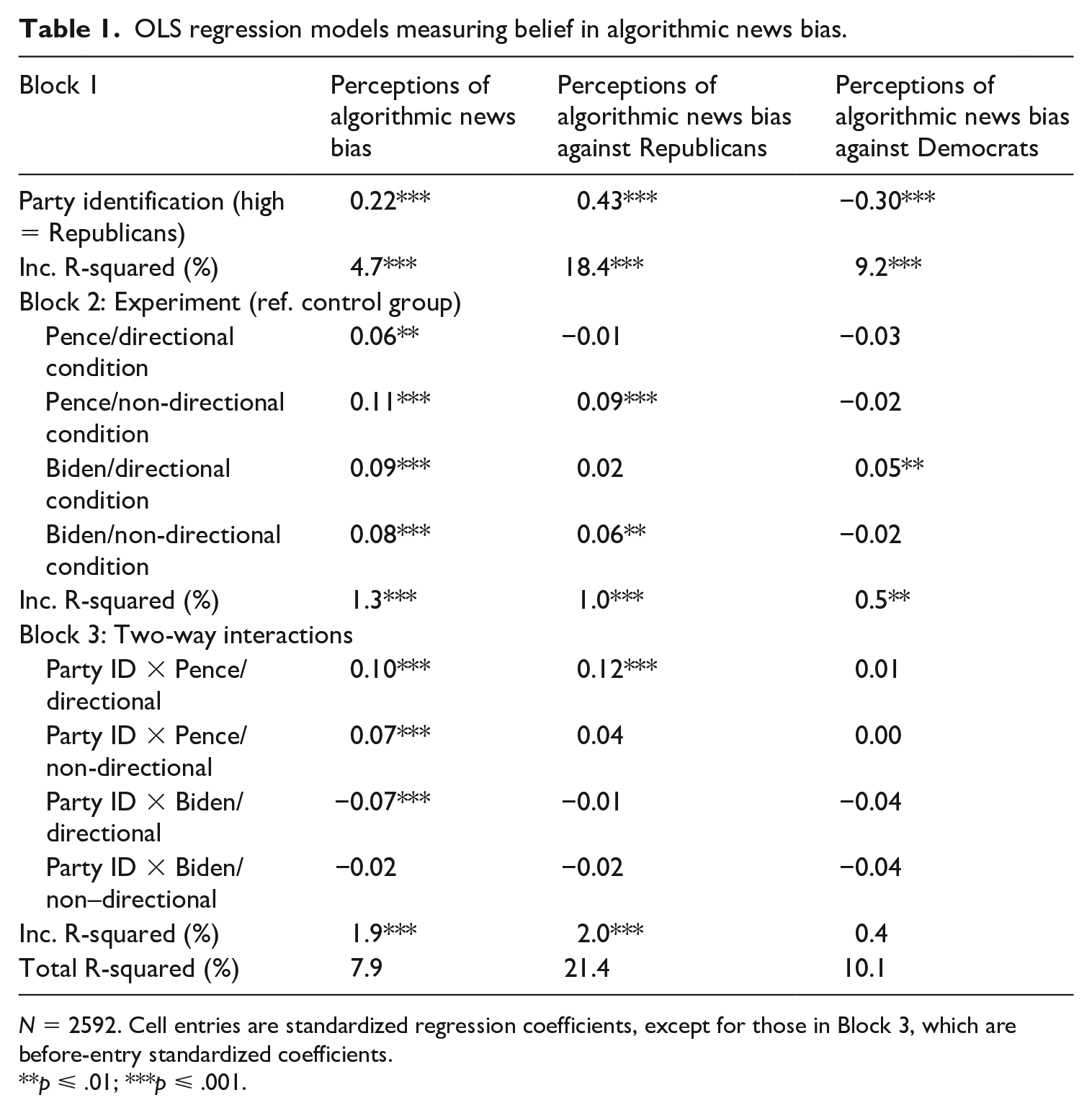

Table 1 presents the coefficients of regression models that predict algorithmic news bias. Across experimental conditions, we found that the more individuals identified with the Republican Party, the greater their perceptions of algorithmic news bias (β = .22, p ⩽ .001), supporting H1.

Table 1. OLS regression models predicting belief in algorithmic news bias.

N = 2592. Cell entries are standardized regression coefficients, except for those in Block 3, which are before-entry standardized coefficients.

** p ⩽ .01; *** p ⩽ .001.

Republicans and Democrats show opposite reactions to the same source

Compared to the control group, respondents in all four treatment groups (i.e. Pence/directional bias: β = .06, p ⩽ .01; Pence/non-directional bias: β = .11, p ⩽ .001; Biden/directional bias: β = .09, p ⩽ .001; Biden/non-directional bias: β = .08, p ⩽ .001) had higher perceptions of algorithmic news bias in general, supporting H2.

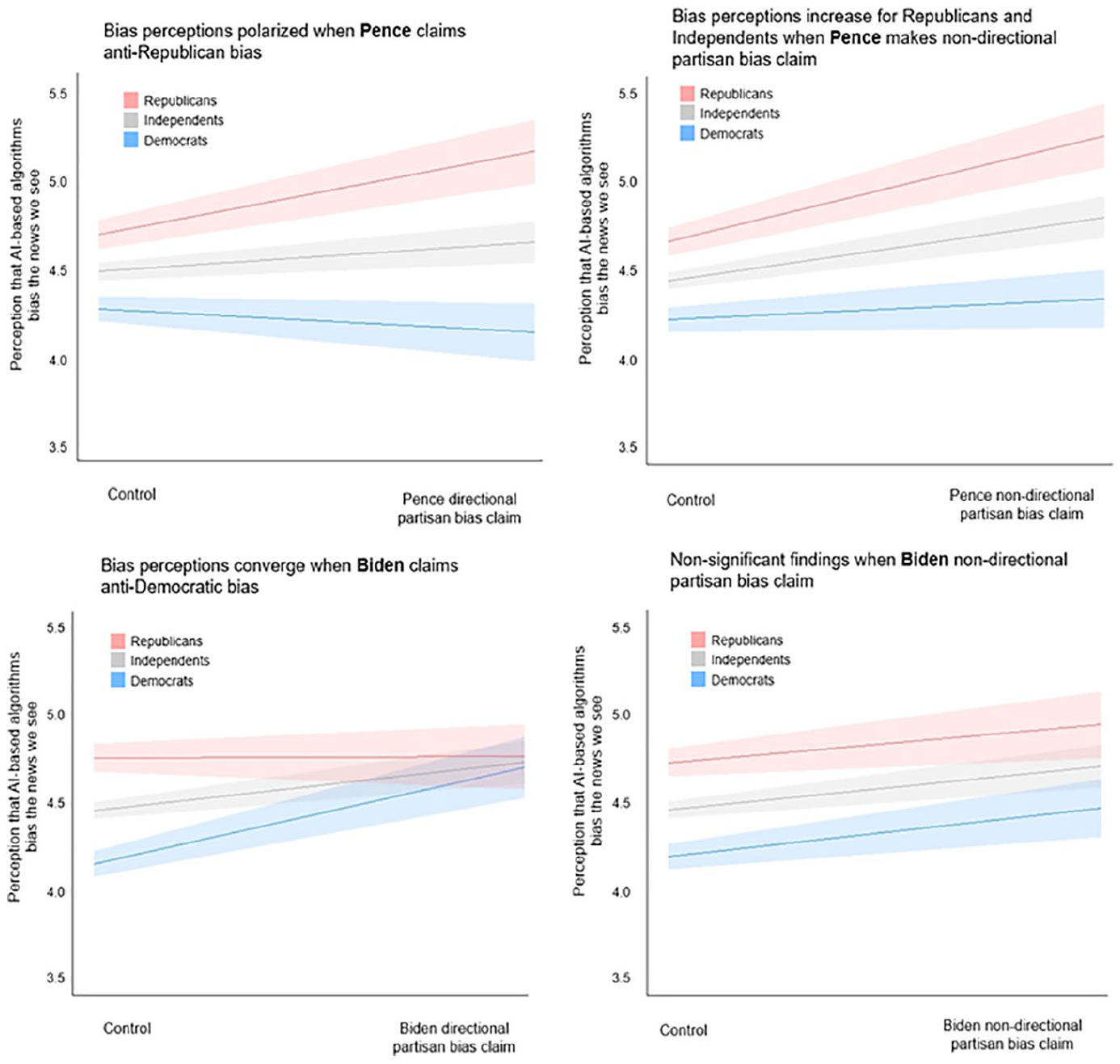

In the two Pence conditions (Pence/directional bias: β = .10, p ⩽ .001; Pence/non-directional bias: β = .07, p ⩽ .001), Republicans significantly amplified their perceptions of algorithmic news bias, while Democrats’ perceptions either decreased (Pence/directional bias) or remained the same (Pence/non-directional bias), supporting H3a. Because Republicans already perceived a higher level of algorithmic news bias at baseline, the Pence conditions further polarized partisan views of algorithmic news bias, widening the gap between Democrats and Republicans by 0.60 on a 7-point scale in the directional bias condition (see Figure 1). However, only one of the Biden conditions (Biden/directional bias: β = −.07, p ⩽ .001) showed the same in-group and out-group partisan effect: Democrats’ perceptions of algorithmic news bias increased while Republicans’ perceptions did not change, partially supporting H3b. As a result of this interaction, algorithmic news bias perceptions looked similar for Republicans and Democrats after respondents received Biden’s message that the AI-curated news we see is biased against Democrats (see Figure 1). In this condition, only Democrats showed an attitudinal change when exposed to a claim of bias against their own party. Because there was no significant interaction effect in the Biden/non-directional bias condition (β = −.02, n.s.), H4 is supported for the Biden conditions. The results only partially support H4, however, because in both Pence treatment groups Republicans’ perceptions of bias increased by a similar amount relative to the control group.
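Why a positive interaction widens the partisan gap and a negative one narrows it can be shown with a short derivation. This is a sketch under stated assumptions: party identity (written PID) is treated as a continuous score that is higher for Republicans, T_k is the dummy for treatment k, p_R and p_D are hypothetical Republican and Democratic scores, and b denotes unstandardized coefficients rather than the standardized β values reported above.

```latex
\begin{aligned}
\hat{y} &= b_0 + b_1\,\mathrm{PID} + b_{2k}\,T_k + b_{3k}\,(\mathrm{PID}\times T_k)\\
\Delta_{\text{control}} &= \hat{y}(p_R, T_k{=}0) - \hat{y}(p_D, T_k{=}0) = b_1\,(p_R - p_D)\\
\Delta_{k} &= \hat{y}(p_R, T_k{=}1) - \hat{y}(p_D, T_k{=}1) = (b_1 + b_{3k})\,(p_R - p_D)\\
\Delta_{k} - \Delta_{\text{control}} &= b_{3k}\,(p_R - p_D)
\end{aligned}
```

Since p_R − p_D is positive under this coding, a positive interaction coefficient (as in the Pence conditions) enlarges the Republican–Democrat gap relative to control, while a negative one (as in the Biden/directional bias condition) shrinks it.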

Figure 1. Results from the four experimental conditions comparing perceptions that AI-based algorithms bias the news we see by participants that identify as Republicans (including leaners), Independents, and Democrats (including leaners).

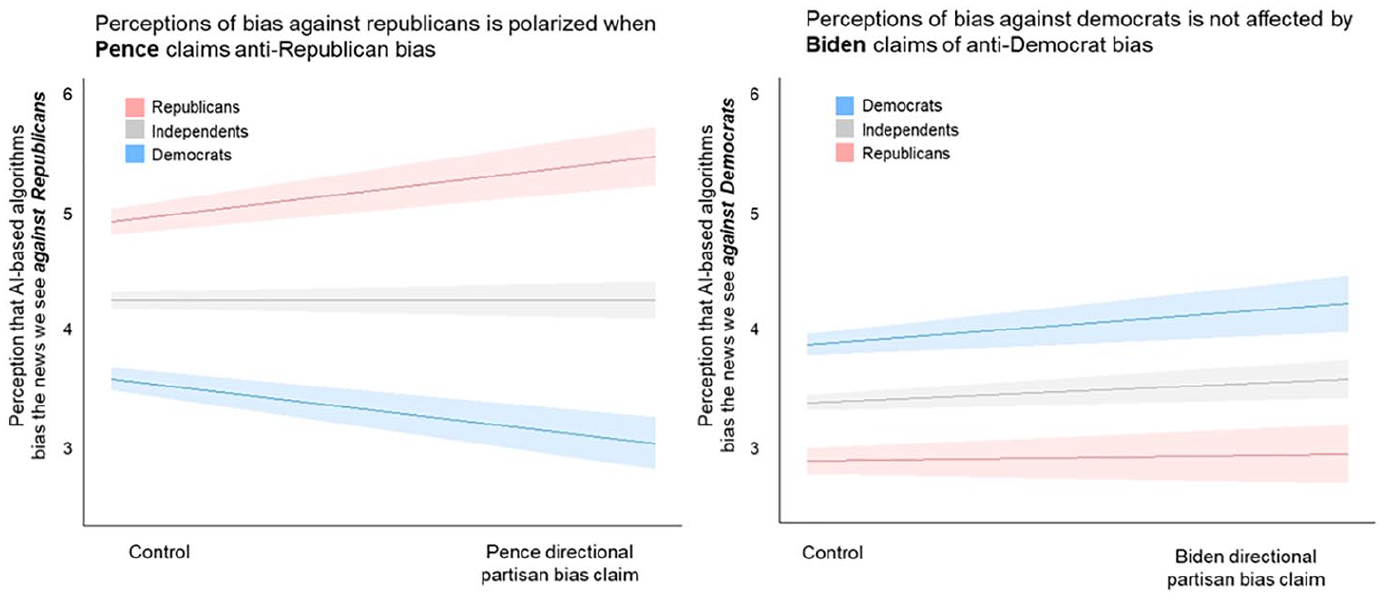

To deepen our understanding of partisan differences and the potential for polarization, we examined the effects of the manipulations on perceptions of algorithmic news bias against Republicans and against Democrats separately. Not surprisingly, and consistent with identity protection, the more strongly participants identified with the Republican Party, the more likely they were to agree that algorithmic news is biased against Republicans (β = .43, p ⩽ .001) and the less likely they were to agree that it is biased against Democrats (β = −.31, p ⩽ .001). Compared to the control group, respondents in the Pence/non-directional bias (β = .09, p ⩽ .001) and Biden/non-directional bias (β = .06, p ⩽ .01) treatment groups had significantly higher perceptions of algorithmic news bias against Republicans. It is possible that Republicans and Democrats held opposite attitudes toward the two partisan arguments, which canceled each other out in the group means.

Regarding the interactions between each treatment and party identification, our results showed one significant interaction, for the Pence/directional bias group. When exposed to the Pence/directional bias stimulus (β = .12, p ⩽ .001), Republicans increased their perceptions of algorithmic news bias against Republicans, and Democrats’ perceptions decreased. The partisan gap in perceptions of algorithmic news bias against Republicans (see Figure 2) was wider than the gap in non-directional bias perceptions (see Figure 1). In addition, the effect of the conditions with directional bias was slightly stronger for Democrats than for Republicans. However, no comparable effects were found when participants were asked whether AI biases the news we see against Democrats (β = −.04, n.s.; see Figure 2).

Figure 2. Results from the two directional partisan bias experimental conditions comparing perceptions that AI-based algorithms bias the news we see against the Republicans and Democrats, respectively, by participants that identify as Republicans (including leaners), Independents, and Democrats (including leaners).

Discussion

This experiment gives insight into how partisan cues can shape perceptions of media bias as they extend to a new, evolving, and increasingly prevalent technology in today’s media environment—algorithmic news curation. Our findings extend those of previous experiments examining the impact of partisan cues on attitude change and formation (e.g. Arceneaux, 2008; Baum and Groeling, 2009; Druckman et al., 2013; Rahn, 1993) to the context of perceptions of bias in algorithmic news curation. In doing so, our findings suggest notable differences in attitude formation for algorithmic versus traditional media bias perceptions. Before discussing the implications in more detail, we consider a number of limitations to our design and data.

Specifically, the conditions under which we test attitude formation about algorithmic news bias were constructed for the study, including the fictitious AP article. The experiment therefore more closely resembles a news article than the content people might encounter in their social media feeds. In addition, while our study shows evidence that party cues affect belief in algorithmic news bias, the mixture and extent of cues from party leaders will ultimately shape how public discourse and opinion play out. Furthermore, we focus on partisan identity as the driving cue and factor, but partisan identity is only one way in which individuals form attitudes about new issues. Other factors may also shape people’s views of the technology, and these remain to be explored. Differences we saw in the strength of the partisan cues from Biden and Pence might also be due to differences in how ideologically extreme respondents perceived each of them to be. Analyses using items from our survey that captured perceptions of Pence’s and Biden’s ideological extremity reveal that respondents saw Pence as more extremely conservative (placing him at 5.9 on a 7-point liberal-conservative scale) than they saw Biden as liberal (2.9 on the same scale). Our experiment might have shown different effects with partisan cues that were equally ideologically extreme. Finally, respondents may have assumed that when the partisan actors in the stimuli stress bias in their claims, they mean bias in favor of the opposing party, and may have viewed the stimuli through a partisan lens regardless of how strong (directional) or weak (non-directional) the claims are.

A central implication of our findings is that party cues can shape emerging attitudes about algorithmic news bias, largely when those cues align with one’s own party identity. This illustrates the intertwined dynamic between partisanship and attitudes toward new and emerging technologies. Consistent with previous research showing that partisan cues encourage hostile media perceptions (Weeks et al., 2019), we found that Republicans in the Pence conditions had higher belief in algorithmic news bias after exposure to the stimulus, as did Democrats in the Biden conditions. Our findings further contribute to this body of research by showing differences in how Democrats and Republicans react to stimuli that include cues from out-group partisan elites. Specifically, Republicans’ responses were less affected by out-group (Biden) treatments than Democrats’ responses were by out-group (Pence) treatments; Democrats were significantly influenced by party cues in both directions. This suggests that Democrats were more sensitive than Republicans to partisan cues in forming attitudes about algorithmic news bias.

In addition, our results indicate that Republicans not only had more stable views but also higher baseline perceptions of algorithmic news bias (i.e. controlling for stimulus exposure). This difference is likely due to the issue of media bias being communicated more often by Republican Party elites and conservative news, and therefore being more salient among Republicans. In his review of the hostile media effect (HME) literature, Perloff (2015) outlines four processes proposed in that literature as mediators, one of them being “prior beliefs about media bias,” in which negative perceptions of the media shape opinions of media coverage on particular issues. While Perloff (2015) notes that research in this area has been “met with mixed success” (p. 711), prior beliefs about media bias as a mediator might explain the ceiling-like effect we see among Republican respondents. Because claims that the media have a liberal bias are consistently made by Republican leaders and in conservative news, belief in such bias may be more established and therefore more stable among Republicans, regardless of which party is highlighted as affected. By contrast, Democrats may not have preconceived beliefs about media bias and therefore might be more susceptible to party cues.

Another important implication of our findings is that while there is potential for perceptions of algorithmic news curation to be biased along partisan lines, the issue of algorithmic news bias itself is still largely not polarized. There are only minor partisan differences in baseline views of algorithmic news bias among respondents in the control condition, with only an 11-percentage-point difference between Republicans (51%) and Democrats (40%) who “somewhat agree,” “agree,” or “strongly agree” that AI-based algorithms bias the news we see. Although the question wording is slightly different, recent polling on perceived news bias shows a 44-percentage-point difference between Republicans (72%) and Democrats (28%) in the perception that there is “a great deal” or “a fair amount” of political bias in news (Gallup-Knight, 2020).
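The agreement shares above collapse a multi-category agreement item into a single "agree" percentage and then compare parties in percentage points. The sketch below shows that arithmetic under an assumption not stated in this section: that the item is a 7-point scale coded 1 (strongly disagree) to 7 (strongly agree), so that codes 5 to 7 correspond to "somewhat agree," "agree," and "strongly agree." The function names and the toy responses are hypothetical and are not the study's data.

```python
# Assumed coding of a 7-point agreement item (an assumption, not the survey codebook):
# 1 = strongly disagree ... 4 = neither ... 7 = strongly agree.
AGREE_CODES = {5, 6, 7}  # "somewhat agree", "agree", "strongly agree"


def share_agree(responses):
    """Percentage of responses falling in the top three agreement categories."""
    if not responses:
        raise ValueError("responses must be non-empty")
    n_agree = sum(1 for r in responses if r in AGREE_CODES)
    return 100.0 * n_agree / len(responses)


def partisan_gap(rep_responses, dem_responses):
    """Republican minus Democratic agreement, in percentage points."""
    return share_agree(rep_responses) - share_agree(dem_responses)


# Hypothetical toy responses, not the study's data.
rep = [7, 6, 5, 4, 3]
dem = [5, 4, 3, 2, 1]
print(share_agree(rep), share_agree(dem), partisan_gap(rep, dem))  # 60.0 20.0 40.0
```

Applied to the shares reported above, the same subtraction gives 51 − 40 = 11 points for algorithmic news bias and 72 − 28 = 44 points in the Gallup-Knight polling.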

Our findings, however, illustrate a substantial potential for future partisan polarization of this issue as it develops. For instance, for both Democrats and Republicans, perceptions of algorithmic news bias were amplified when the accusations were made by leaders of one’s own party. However, as noted earlier, we also find that Democrats’ perceptions of algorithmic news bias were more sensitive to out-group (Pence) accusations of bias than were those of Republicans, creating an additional potential pathway for polarization. This indicates that the polarizing effect might differ across parties, and it contrasts somewhat with explanations of biased attitude formation based on the hostile media effect, in which partisans’ perceptions of bias are similarly affected by disdain for the opposing party. Our findings are consistent with balance theory, however, which holds that balanced states involve individuals forming attitudes consistent with in-group party leaders or discrepant with out-group party leaders. This is especially likely when, as our results indicate, most Americans do not yet have solidified, highly partisan, or polarized beliefs about algorithmic news bias.

Even though public perceptions of algorithmic news bias are largely not yet polarized, our findings point to the danger posed by political leaders’ increasing claims of partisan bias in algorithmic news curation. Our results show that Pence’s claims of partisan bias in algorithmic news curation are polarizing: Republicans’ perceptions of algorithmic news bias become elevated while Democrats’ perceptions of bias are reduced. On the flip side, Democratic accusations of algorithmic news bias appear to narrow the gap in bias perceptions between partisans (Figure 1). However, it would be misleading to conclude that such claims by the Democratic Party signal any consensus among partisans. Individuals who identify with opposing parties are highly likely to disagree on the direction of news bias.

As a result, claims of algorithmic news bias by both parties could fuel polarization of the debate surrounding algorithmic news curation, making it hard to talk about these issues outside of narrow partisan concerns. Consequently, heightened partisanship related to online news bias can overshadow effective public engagement and deliberation about whether and to what extent there is, in fact, bias in algorithmic news curation, and if so, what should be done about it.

Conclusion

What we already know about partisan perceptions of media bias could extend to shape perceptions of bias associated with algorithmic news curation, and potentially influence attitudes toward AI more broadly. Despite its widespread use, algorithmic news curation is a developing technology that is not well understood by or particularly salient to broader publics in the United States. At the same time, algorithmic transparency has proven complicated and has been argued to be an ineffective way to approach the consequences of algorithms as sociotechnical systems, with scholars calling for the development of “ethics of algorithmic accountability” (Ananny and Crawford, 2018). Our findings contribute to the growing call for research on the relationship between algorithms and humans, to examine the broader societal impacts and implications of new AI applications, like algorithmic news curation, and how we decide to communicate and collectively make decisions about such technologies (e.g. Napoli, 2015; Thurman et al., 2019). Our choice to specifically consider perceptions of bias in algorithmic news curation adds further complexity and depth to existing research by applying known concepts and phenomena in political communication and media studies. Specifically, we advance literature about algorithmic news curation by providing some clarity on how perceptions about this new technology can be formed, and are likely to be formed, given the context of media bias perceptions and the current state of partisan politics.

Our findings highlight the sensitivity of new issues such as algorithmic news bias to partisan cues, which could lead to the polarization of this issue. This last point is critical, as partisan polarization of new science and technology issues can have detrimental effects on understanding and decision-making concerning these issues. We are only beginning to discuss the implications of algorithms in shaping our news environments and political discourse, let alone the implications posed by rapidly developing AI applications across a wide range of industries, and there is great need and potential to discuss and decide collectively what role these technologies should play in our society. As growing Congressional interest in tech companies shows, there is reason to believe this can be a topic that generates bipartisan concern over the power and possibilities, for better and worse, of AI and information technologies. Currently, concern from US legislators that Big Tech is censoring the news we see is often partisan, with Republicans frequently claiming anti-conservative censorship. However, concerns are not aligned across party lines. Democrats recently introduced legislation to prohibit discrimination by online algorithms on the basis of race, gender, age, ability, and other protected classes, as well as calling for algorithmic transparency (Zakrzewski and Schaffer, 2021). These differences may exacerbate partisanship around these issues, with potential consequences for perceptions of and support for AI beyond news curation. Thus, how we decide to pay attention to and discuss scientific developments, as experts, policymakers, or society as a whole, will determine whether we see further partisan polarization or constructive critique of new technologies and their potential societal implications, regardless of party identity.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448211034159 – Supplemental material for Polarized platforms? How partisanship shapes perceptions of “algorithmic news bias”

Supplemental material, sj-pdf-1-nms-10.1177_14614448211034159 for Polarized platforms? How partisanship shapes perceptions of “algorithmic news bias” by Mikhaila N. Calice, Luye Bao, Isabelle Freiling, Emily Howell, Michael A. Xenos, Shiyu Yang, Dominique Brossard, Todd P. Newman and Dietram A. Scheufele in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: Data collections were – in part – supported by funds from the University of Wisconsin and the Wisconsin Alumni Research Foundation.


References
