Introduction

Video capsule endoscopy (VCE) is a non-invasive endoscopic procedure for visualizing and assessing the gastrointestinal (GI) system.1 This innovative procedure makes it possible to explore regions of the GI system that are inaccessible or difficult to reach with other endoscopic techniques, such as colonoscopy and gastroscopy. In particular, VCE plays an essential role in diagnosing small-bowel diseases and disorders, including obscure GI bleeding, Crohn’s disease, small-bowel tumors and polyposis syndromes.2–5

A pill-sized, camera-equipped capsule is swallowed by the subject and records more than 50,000 images as it passes through the GI tract. The images are either transmitted to an external recording device worn on a belt around the patient’s waist or stored in onboard memory and downloaded after the capsule is retrieved following excretion.6 The images are then converted into a video file, which is reviewed by a gastroenterologist. Capsule endoscopy (CE) videos typically last approximately 4 to 5 h, and reviewing them requires at least 45 to 50 min but can take several hours, depending on the gastroenterologist’s experience and the number of clinically significant findings.7–9 Certain small-bowel abnormalities are obvious, appear in multiple frames and are easily detected, whereas others are more elusive and require experience and careful evaluation to be noticed. Notably, the miss rate for small-bowel lesions has been estimated to be as high as 10%.10
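As a quick sanity check on these figures (an illustration, not an analysis from the study), the sketch below computes the average capture rate implied by 50,000 images over a 4 to 5 h recording, assuming images are captured uniformly, and the viewing rate a reader would need to see every image within 45 min.

```python
# Back-of-the-envelope arithmetic using the figures quoted above:
# ~50,000 images, a 4-5 h recording, and a review of at least 45 min.
# Assumes images are spread uniformly over time (an illustrative simplification).
IMAGES = 50_000

def frames_per_second(n_images: int, minutes: float) -> float:
    """Average number of images per second over a duration given in minutes."""
    return n_images / (minutes * 60)

# Average capture rate implied by a 4 h and a 5 h recording.
capture_4h = frames_per_second(IMAGES, 4 * 60)
capture_5h = frames_per_second(IMAGES, 5 * 60)

# Viewing rate needed to review every image within 45 min.
review_45min = frames_per_second(IMAGES, 45)

print(f"capture: {capture_5h:.2f}-{capture_4h:.2f} images/s")  # capture: 2.78-3.47 images/s
print(f"review in 45 min: {review_45min:.1f} images/s")        # review in 45 min: 18.5 images/s
```

A reader finishing in 45 min therefore has to absorb roughly 18 images per second, which puts the reading burden described above in concrete terms.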

Artificial intelligence (AI) is revolutionizing medical imaging by enhancing diagnostic yield, reducing human error, and improving patient outcomes. Its applications range from computer-aided analysis in radiology11 to image-guided surgery12 and precision medicine.13 Its capabilities include diagnosing, predicting and prognosing diseases and personalizing treatment plans, making it a transformative “tool” across various medical specialties.14–18 In VCE, AI could automate parts of the review process through automatic detection, localization and classification of potential abnormalities and anatomical landmarks, thus increasing diagnostic yield, significantly reducing review time and making reader experience a less crucial factor. Although several AI solutions have been proposed in the literature,19,20 the main factor for translating them into clinical practice is their validation through prospective or retrospective multi-reader multi-case (MRMC) studies.21 These studies compare current clinical practice with and without AI; hence, they assess the performance of the end-user when using AI, not that of the AI alone. Moreover, such a study approach brings into the spotlight the symbiotic relationship between the gastroenterologist and AI, as well as the challenges of this relationship.22

This work presents a software platform designed solely to support MRMC clinical studies that measure the impact of AI in VCE. Although such platforms have been used in the few relevant studies in the literature,23,24 the supporting software has not been documented. The only closely related work presents a commercial cloud-based VCE reading system.25 In that study, all seven readers, who were gastroenterologists, rated the system as easy to access and use, indicating high usability. Beyond presenting our design and implementation decisions, this work also evaluates the usability of the proposed software, revealing important insights into end-users’ expectations and preferences.

Methods

Web platform

The capsule endoscopy video reading (CEVR) platform is designed to support MRMC clinical studies that investigate the impact of AI on reading capsule endoscopy videos. Its system specifications are divided into two main categories. The first category comprises the specifications that originate from the requirements of the study design, namely (1) remote access to the capsule endoscopy videos, (2) a data model that includes a “reading” entity capturing the participants’ interactions with the video, (3) ad hoc support of AI functionalities, and (4) monitoring of the participants’ involvement.

The second category of specifications concerns the user experience (UX). Participants should have an experience similar to routine capsule endoscopy video reading with existing commercial software applications. Nonetheless, the objective is not to replicate the existing solutions but to implement a user interface (UI) with the minimum viable functionalities needed to provide a familiar environment. The UI/UX-related specifications were derived through a hybrid user research approach that combined interviews and co-design sessions with expert gastroenterologists. They consist of (1) viewing capsule endoscopy videos with an extended set of playback capabilities, (2) manual annotation of suspicious findings, (3) AI that automatically detects and localizes suspicious findings and presents them to the reader in a user-friendly manner, and (4) manipulation of existing suspicious findings, both manually annotated and AI-extracted, by adding comments and indicating clinical significance.

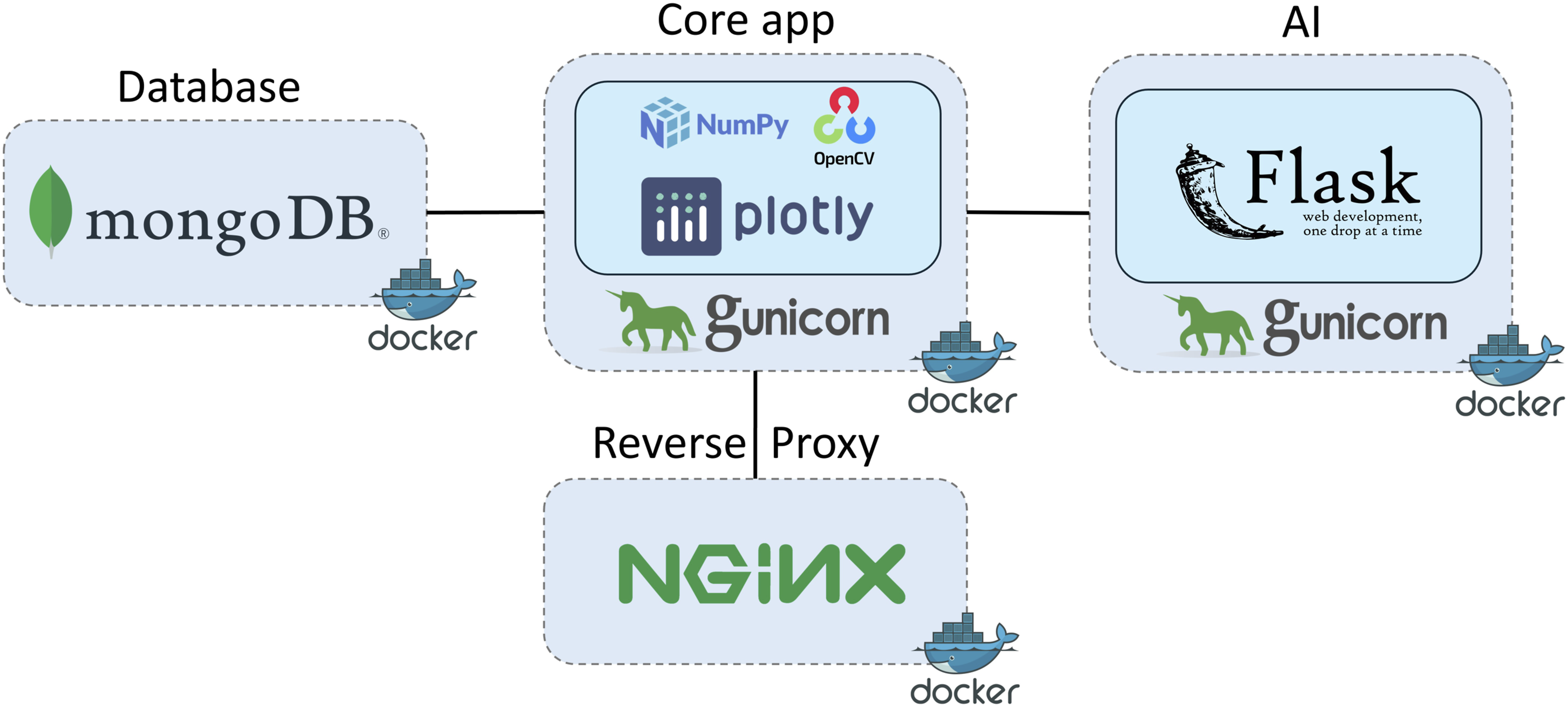

To satisfy the system specifications, the client–server architecture model was selected; that is, the CEVR platform is a web application. Figure 1 shows the main components of the CEVR platform and the respective technology stack. The front-end of the CEVR platform is implemented using Plotly–Dash,26 a low-code framework for rapidly building web applications in Python. Plotly–Dash, together with OpenCV27 and NumPy,28 two widely used libraries for computer vision and array processing, forms the core component of the CEVR platform. The AI pipeline is accessible via a RESTful API implemented using Flask,29 a well-known Python web application framework. Both the core and the AI components are served by Gunicorn HTTP servers. The data model is built using MongoDB,30 a NoSQL database that is popular for its scalability and flexibility. The entry point to the platform is a reverse proxy server built with NGINX.31 A reverse proxy intercepts requests from clients and forwards them to the appropriate component; deploying one is a best practice in web development for offloading tasks such as load balancing, caching and content filtering from the other components. All components are packaged and deployed using the Docker ecosystem.32 Specifically, every component is “dockerized” into a container, and all containers are combined into one software entity using the Docker Compose tool.

Figure 1. Main components and technology stack of the capsule endoscopy video reading platform.
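The reverse proxy's job of forwarding each request to the right component can be illustrated with a small longest-prefix router. This is a minimal sketch, not the platform's NGINX configuration: the path prefixes (`/api/ai/`, `/`) and upstream addresses (`ai-service:5000`, `core-app:8050`) are hypothetical placeholders, and the longest-prefix rule is only similar in spirit to how NGINX chooses among prefix `location` blocks.

```python
# Minimal sketch of prefix-based request routing, as a reverse proxy performs it.
# The prefixes and upstream addresses are hypothetical placeholders, not the
# CEVR platform's actual NGINX configuration.
ROUTES = {
    "/api/ai/": "http://ai-service:5000",  # Flask AI pipeline behind Gunicorn
    "/": "http://core-app:8050",           # Plotly-Dash core app behind Gunicorn
}

def resolve_upstream(path: str, routes: dict[str, str] = ROUTES) -> str:
    """Return the upstream URL for a request path using longest-prefix matching."""
    matches = [prefix for prefix in routes if path.startswith(prefix)]
    if not matches:
        raise LookupError(f"no route for {path!r}")
    best = max(matches, key=len)  # the most specific prefix wins
    # Forward the original path unchanged to the selected upstream.
    return routes[best] + path

print(resolve_upstream("/api/ai/detect"))  # http://ai-service:5000/api/ai/detect
print(resolve_upstream("/readings/42"))    # http://core-app:8050/readings/42
```

Keeping a catch-all `/` route means every request reaches some component, while more specific prefixes peel off traffic such as AI inference calls.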

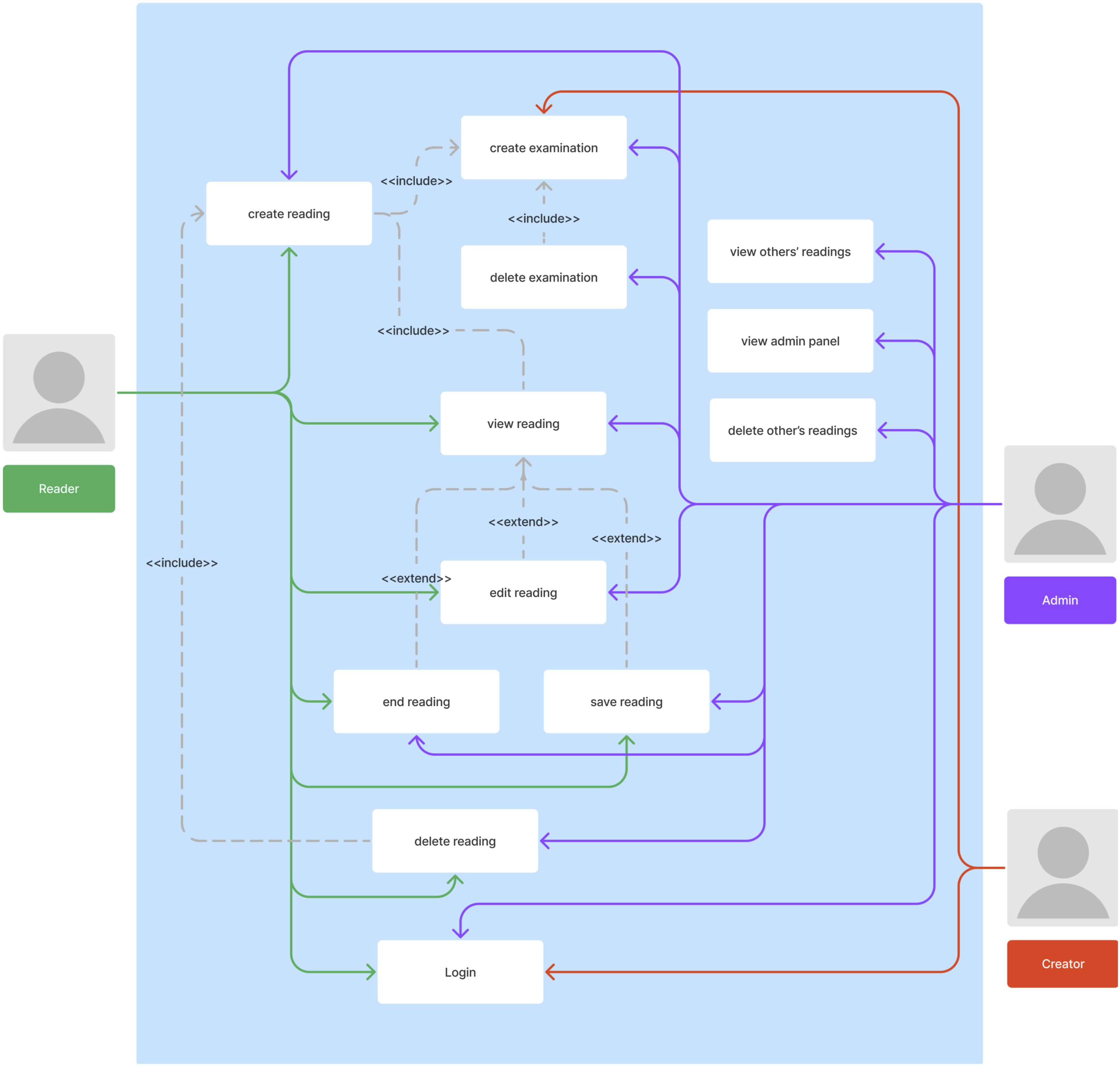

The core application supports three user roles: the Creator, the Reader and the Administrator. The Creator can add new examinations to the platform but cannot view, create or edit readings. The Reader can open examinations and view, create and edit readings. The Administrator can create examinations and readings, view and edit all readings in the system, and monitor users’ activity through the administrator panel. The three user roles and their respective activities are illustrated in the use case diagram of the CEVR platform in Figure 2.

Figure 2. Use case diagram of the capsule endoscopy video reading platform.
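The role descriptions above amount to a small permission table. The sketch below transcribes them into Python as one possible way to check them server-side; the action identifiers (`create_examination`, `monitor_activity`, etc.) are hypothetical names, and because the text does not say whether Readers are restricted to their own readings, scope (own versus all readings) is not modelled.

```python
from enum import Enum

class Role(Enum):
    CREATOR = "creator"
    READER = "reader"
    ADMINISTRATOR = "administrator"

# Permissions transcribed from the role descriptions in the text.
# Action names are hypothetical identifiers; reading scope (own vs. all)
# is not modelled because the text only states it for the Administrator.
PERMISSIONS: dict[Role, frozenset[str]] = {
    Role.CREATOR: frozenset({"create_examination"}),
    Role.READER: frozenset({"open_examination", "view_reading",
                            "create_reading", "edit_reading"}),
    Role.ADMINISTRATOR: frozenset({"create_examination", "create_reading",
                                   "view_reading", "edit_reading",
                                   "monitor_activity"}),
}

def is_allowed(role: Role, action: str) -> bool:
    """Return True if the given role may perform the given action."""
    return action in PERMISSIONS[role]

print(is_allowed(Role.CREATOR, "view_reading"))  # False
print(is_allowed(Role.READER, "edit_reading"))   # True
```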

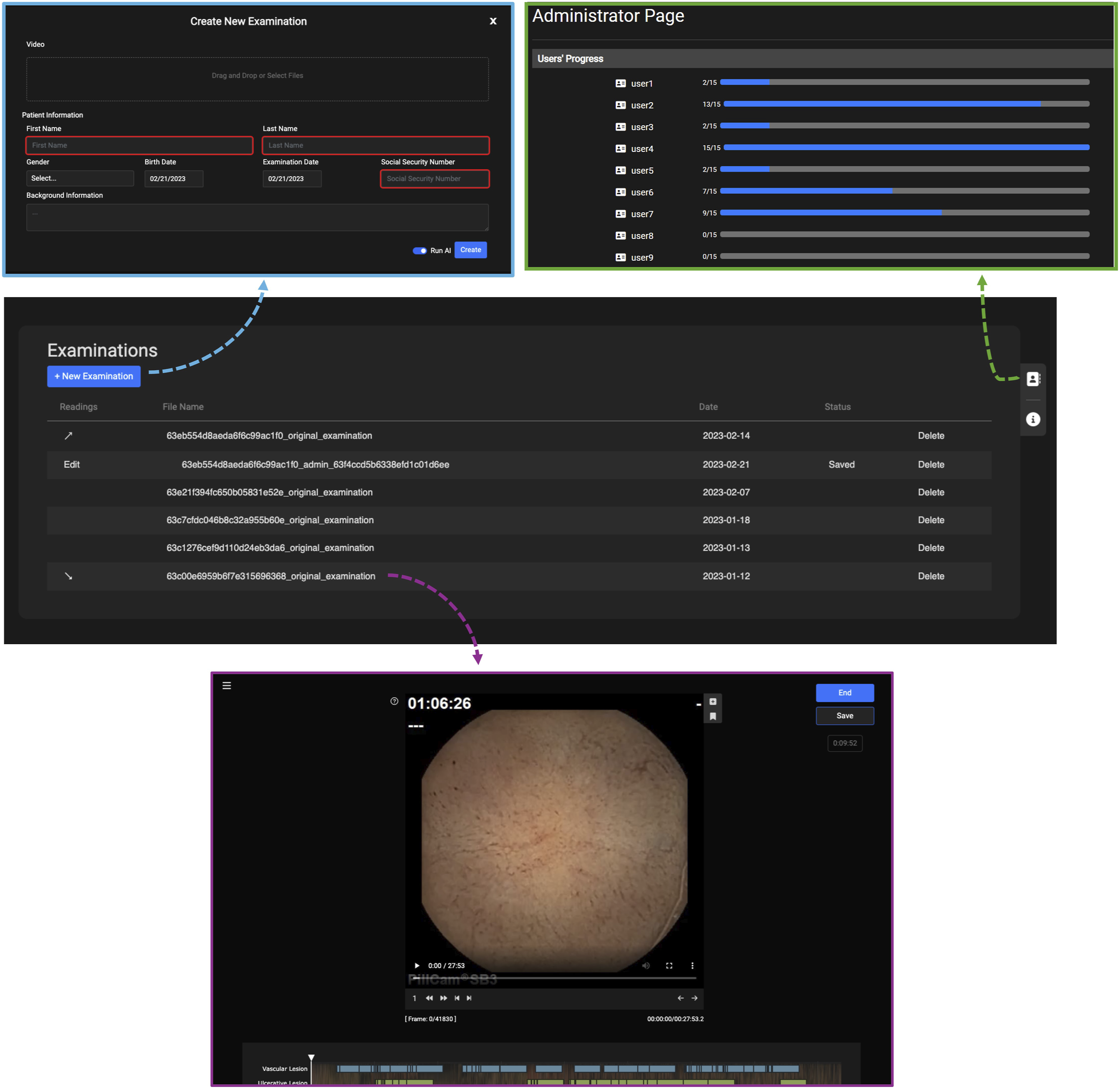

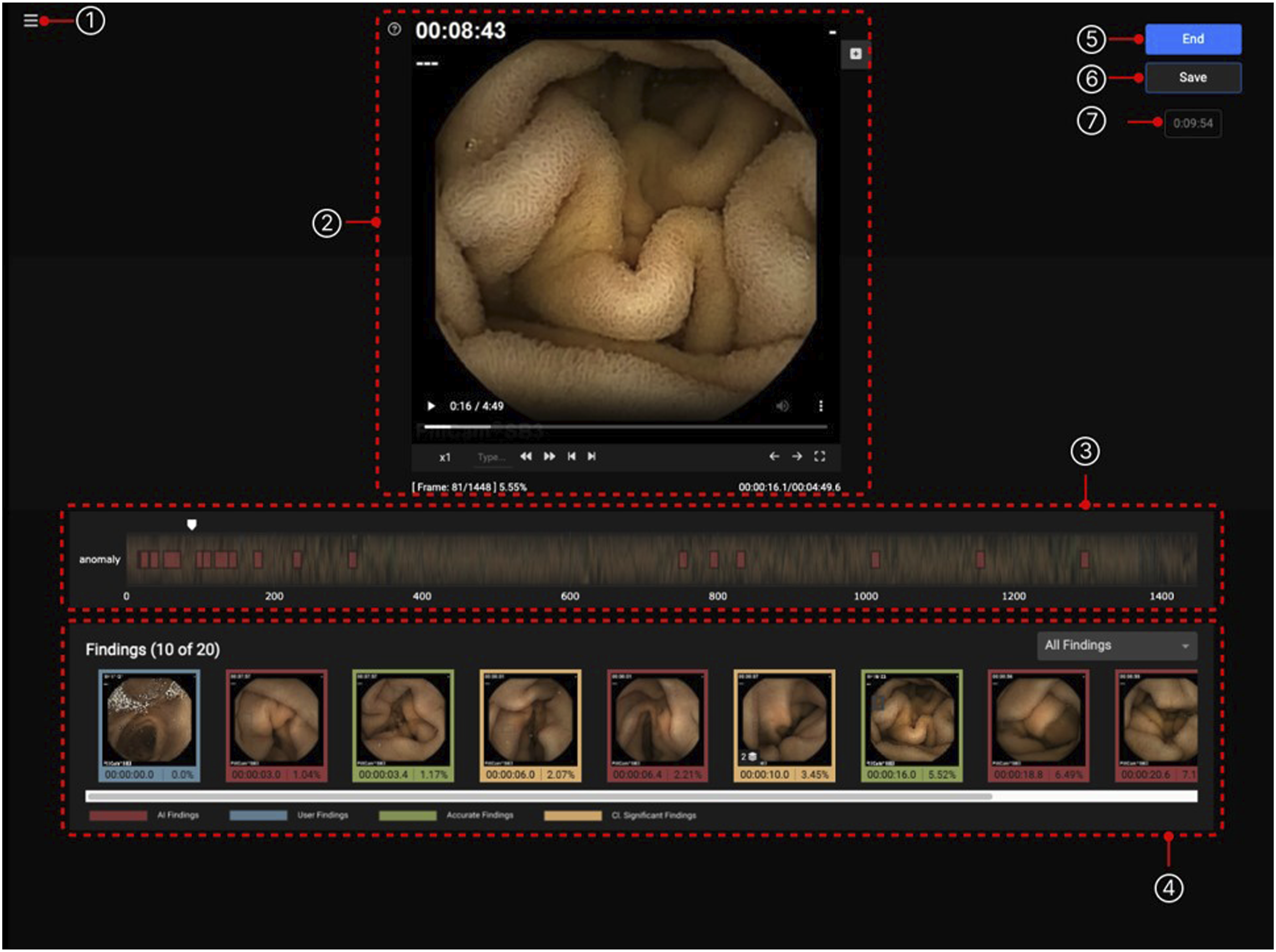

Regarding the UI, end-users can access three distinct pages depending on their role: the examinations list page, the reading session page, and the administrator panel page. As shown in Figure 3, the examinations list is the homepage of the platform, from which users, according to their role, can create a new examination via a popup window, access the examinations and readings in the system, or access the administrator panel. Clicking an examination or a reading takes the user to the reading session page, shown in more detail in Figure 4. There, the user can view the capsule video and all findings derived from the AI model, create a new finding or landmark by clicking the respective buttons, view each finding, add comments, and save the state of the reading. The administrator panel allows monitoring of the study participants’ progress; specifically, the administrator can view the number of readings each user has completed. Authentication and authorization are implemented using built-in functionalities of Plotly–Dash.

Figure 3. The main pages of the CEVR platform. The examination list (center) is the homepage from which users, according to their role, can create a new examination (above left), start and continue readings (below), and monitor participants’ progress (above right).

Figure 4. The reading session page, consisting of (1) a patient panel with medical background information, (2) a video player, (3) a CE video and findings timeline, (4) a findings panel where both manual and AI findings are presented, (5–6) two buttons to finalize and temporarily save a reading, and (7) a counter of the reading’s duration.
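The administrator panel's progress view can be sketched as a count of completed readings per reader. This sketch assumes, hypothetically, that a reading counts as completed once it has been finalized (as opposed to only temporarily saved); the `ReadingStatus` record and its fields are illustrative, not the platform's schema.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class ReadingStatus:
    """Hypothetical minimal record used for progress monitoring."""
    reader: str
    examination_id: str
    finalized: bool  # True after "finalize"; False after a temporary save

def completed_per_reader(readings: list[ReadingStatus]) -> Counter:
    """Count finalized readings per reader, as an administrator panel might show."""
    return Counter(r.reader for r in readings if r.finalized)

readings = [
    ReadingStatus("reader_a", "exam_01", True),
    ReadingStatus("reader_a", "exam_02", False),  # only temporarily saved
    ReadingStatus("reader_b", "exam_01", True),
    ReadingStatus("reader_b", "exam_02", True),
]
print(dict(completed_per_reader(readings)))  # {'reader_a': 1, 'reader_b': 2}
```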

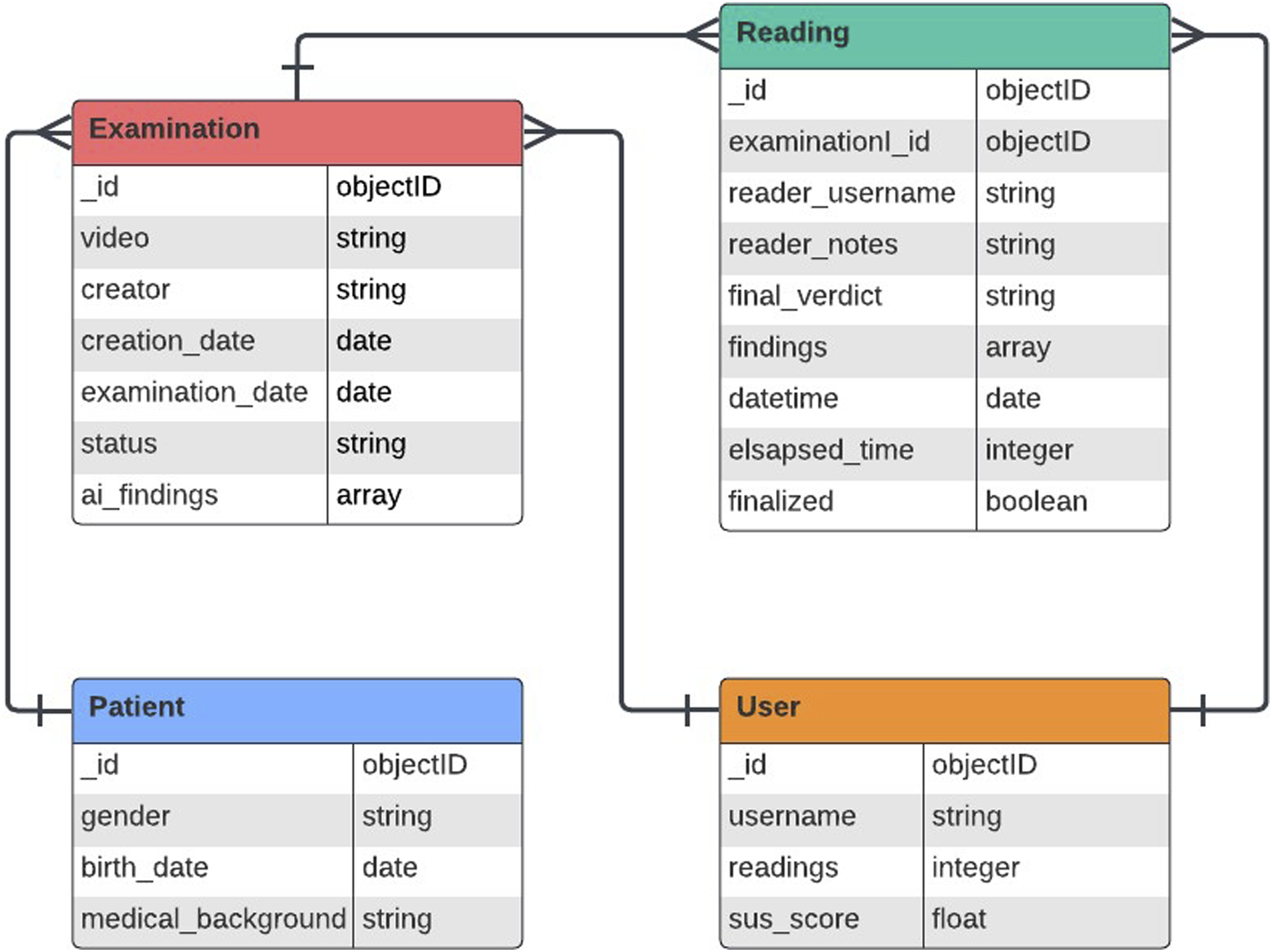

Regarding the data model, the database contains metadata describing four entities: the Examination, the Reading, the User, and the Patient. Participants’ personal details, such as first name, surname, phone number and email address, are kept in a separate, secured database. The content of each data entity and their relations are shown in Figure 5.

Figure 5. Data structure of the capsule endoscopy video reading platform.
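A document-oriented version of the four entities can be sketched with Python dataclasses. The actual fields are given only in Figure 5, so every field below is an illustrative assumption, drawn where possible from the specifications in the text: findings that are either manual or AI-extracted, carry comments and a clinical-significance flag, and readings that can be finalized or temporarily saved and have a duration counter. The `with_ai` flag and `video_uri` field are hypothetical.

```python
from dataclasses import asdict, dataclass, field
from typing import Literal, Optional

@dataclass
class Finding:
    """A suspicious finding on a single video frame (illustrative fields)."""
    frame_index: int
    source: Literal["manual", "ai"]                   # manually annotated or AI-extracted
    bbox: Optional[tuple[int, int, int, int]] = None  # (x1, y1, x2, y2), if localized
    comment: str = ""
    clinically_significant: Optional[bool] = None     # set by the reader

@dataclass
class Patient:
    patient_id: str                # pseudonymous identifier
    medical_background: str = ""   # shown in the reading session's patient panel

@dataclass
class User:
    user_id: str                   # names, phones and emails live in a separate database
    role: Literal["creator", "reader", "administrator"]

@dataclass
class Examination:
    examination_id: str
    patient_id: str                # reference to Patient
    video_uri: str                 # hypothetical location of the CE video

@dataclass
class Reading:
    reading_id: str
    examination_id: str            # reference to Examination
    user_id: str                   # reference to User (the reader)
    with_ai: bool = False          # hypothetical flag for the with/without-AI arm
    findings: list[Finding] = field(default_factory=list)
    finalized: bool = False        # False while only temporarily saved
    duration_s: float = 0.0        # reading-duration counter, in seconds

reading = Reading("r1", "exam_01", "u7", with_ai=True)
reading.findings.append(Finding(frame_index=1234, source="ai", bbox=(10, 20, 110, 140)))
doc = asdict(reading)  # nested plain dict, the shape a document database stores
```

Embedding findings inside each reading keeps one reader's full interaction with a video in a single document, which matches the text's description of the “reading” entity.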

AI model

The CEVR platform is designed to allow the deployment of any AI model that can be served through the Flask framework. Nonetheless, for the purposes of the usability study, a state-of-the-art AI model was developed and deployed on the platform. Specifically, the RetinaNet architecture was adopted, a one-stage object detection model designed to address the problem of class imbalance in object detection tasks.33 The model relies on the Focal Loss function, which focuses training on hard examples, making it highly effective for detecting objects across a wide range of scales and for dealing with imbalanced datasets. The Kvasir-Capsule dataset34 was used, which consists of 47,238 labelled CE images split into two predefined subsets for training and testing. The approach followed was to train two RetinaNet models and combine their outputs: the first model was trained only on whitish anomalies (lymphangiectasia, erosion, ulcer), whereas the second was trained on reddish ones (angiectasia, erythema, fresh blood). The performance metric, namely the area under the receiver operating characteristic curve (AUROC), was observed to improve when these classes were targeted separately. In terms of implementation, RetinaNet with a ResNet50 backbone and weights pretrained on the Microsoft COCO dataset35 was trained with the Adam optimizer, a learning rate of 1e-5, and a batch size of 8. Data augmentation through rotation, cropping, vertical/horizontal flipping, and contrast and brightness adjustment of the input frames was applied during training. The final output is the union of the bounding boxes predicted by the two models, each filtered by its own score threshold. The optimal threshold for each model is defined as the one that maximizes the geometric mean of sensitivity and specificity. Evaluated on the testing split, the final pipeline achieved an accuracy of 0.89, a sensitivity of 0.85, and a specificity of 0.89.
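The two post-processing steps described above, choosing each model's score threshold by maximizing the geometric mean of sensitivity and specificity and then taking the union of the filtered boxes, can be sketched as follows. This is a sketch under stated assumptions: the `(x1, y1, x2, y2, score)` box layout and the frame-level score (the highest box confidence in a frame) are hypothetical, since the text does not specify how frame-level scores were derived.

```python
import math

# Hypothetical box layout: (x1, y1, x2, y2, score).
Box = tuple[float, float, float, float, float]

def frame_score(boxes: list[Box]) -> float:
    """Frame-level score, assumed here to be the highest box confidence."""
    return max((b[4] for b in boxes), default=0.0)

def gmean_threshold(scores: list[float], labels: list[int]) -> float:
    """Return the threshold maximizing sqrt(sensitivity * specificity).

    scores: frame-level scores; labels: 1 = abnormal frame, 0 = normal frame.
    A frame is predicted abnormal when score >= threshold; candidate thresholds
    are the observed scores (a simple O(n^2) scan, adequate for a sketch).
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    if positives == 0 or negatives == 0:
        raise ValueError("need both abnormal and normal frames")
    best_t, best_g = 0.0, -1.0
    for t in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        tn = sum(1 for s, y in zip(scores, labels) if s < t and y == 0)
        g = math.sqrt((tp / positives) * (tn / negatives))
        if g > best_g:  # strict comparison: ties keep the lowest threshold
            best_t, best_g = t, g
    return best_t

def combine_detections(whitish: list[Box], reddish: list[Box],
                       t_whitish: float, t_reddish: float) -> list[Box]:
    """Union of both models' boxes, each model filtered by its own threshold."""
    return ([b for b in whitish if b[4] >= t_whitish]
            + [b for b in reddish if b[4] >= t_reddish])

# Illustrative usage with made-up validation scores (not the study's data).
t = gmean_threshold([0.9, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2], [1, 1, 0, 1, 0, 0, 0])
merged = combine_detections(
    whitish=[(0, 0, 10, 10, 0.9), (5, 5, 20, 20, 0.3)],
    reddish=[(1, 1, 8, 8, 0.6)],
    t_whitish=0.5, t_reddish=0.5,
)
```

With this toy data the selected threshold is 0.5 (sensitivity 1.0, specificity 0.75). Since the text describes a plain union, no cross-model non-maximum suppression is applied, so overlapping whitish and reddish boxes are both kept.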

Study design

After the development of the system, a pilot study was conducted to evaluate the usability of the platform. Gastroenterologists were invited to participate by the university hospital where the study was conducted, and nine of them accepted the invitation and were recruited to use and test the CEVR platform. All participants were provided with a 10-page manual and no further instructions or assistance. During the study, the nine participants accessed the platform remotely and examined 15 CE cases at their own pace. After completing the set of 15 CE cases, each participant filled in a System Usability Scale (SUS)36 questionnaire, with answers on a 5-point Likert scale ranging from 1 = Completely disagree to 5 = Completely agree. Along with the SUS questions, participants were asked to indicate their experience in CE reading by stating whether they had viewed more or fewer than 50 CE cases in their career before participating in the study. Those who had viewed more than 50 CE cases were considered highly experienced (HE), in contrast to the rest, who were characterized as less experienced (LE). The threshold of 50 cases exceeds the minimum of 20 complete supervised readings that the American Society for Gastrointestinal Endoscopy recommends for achieving competency,37,38 so HE readers can be considered well beyond that baseline. The 15 CE examinations used as cases in the study were collected from subjects referred for CE in the routine clinical practice of the university hospital where the study was conducted. Informed consent was obtained from all study participants and subjects.
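For reference, the standard SUS scoring rule converts the ten 5-point Likert responses into a single 0 to 100 score. The text cites SUS but does not spell out the scoring, so the sketch below assumes the standard formulation: odd-numbered (positively worded) items contribute the response minus 1, even-numbered (negatively worded) items contribute 5 minus the response, and the sum is multiplied by 2.5.

```python
def sus_score(responses: list[int]) -> float:
    """Standard SUS score (0-100) from ten Likert responses (1-5), item 1 first.

    Odd-numbered items are positively worded and contribute (response - 1);
    even-numbered items are negatively worded and contribute (5 - response).
    The summed contributions (0-40) are scaled by 2.5.
    """
    if len(responses) != 10 or not all(1 <= r <= 5 for r in responses):
        raise ValueError("SUS expects ten responses, each between 1 and 5")
    total = sum((r - 1) if item % 2 == 1 else (5 - r)
                for item, r in enumerate(responses, start=1))
    return total * 2.5

# Hypothetical questionnaire, for illustration only.
print(sus_score([4, 2, 4, 2, 5, 1, 4, 2, 4, 3]))  # 77.5
```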

Results

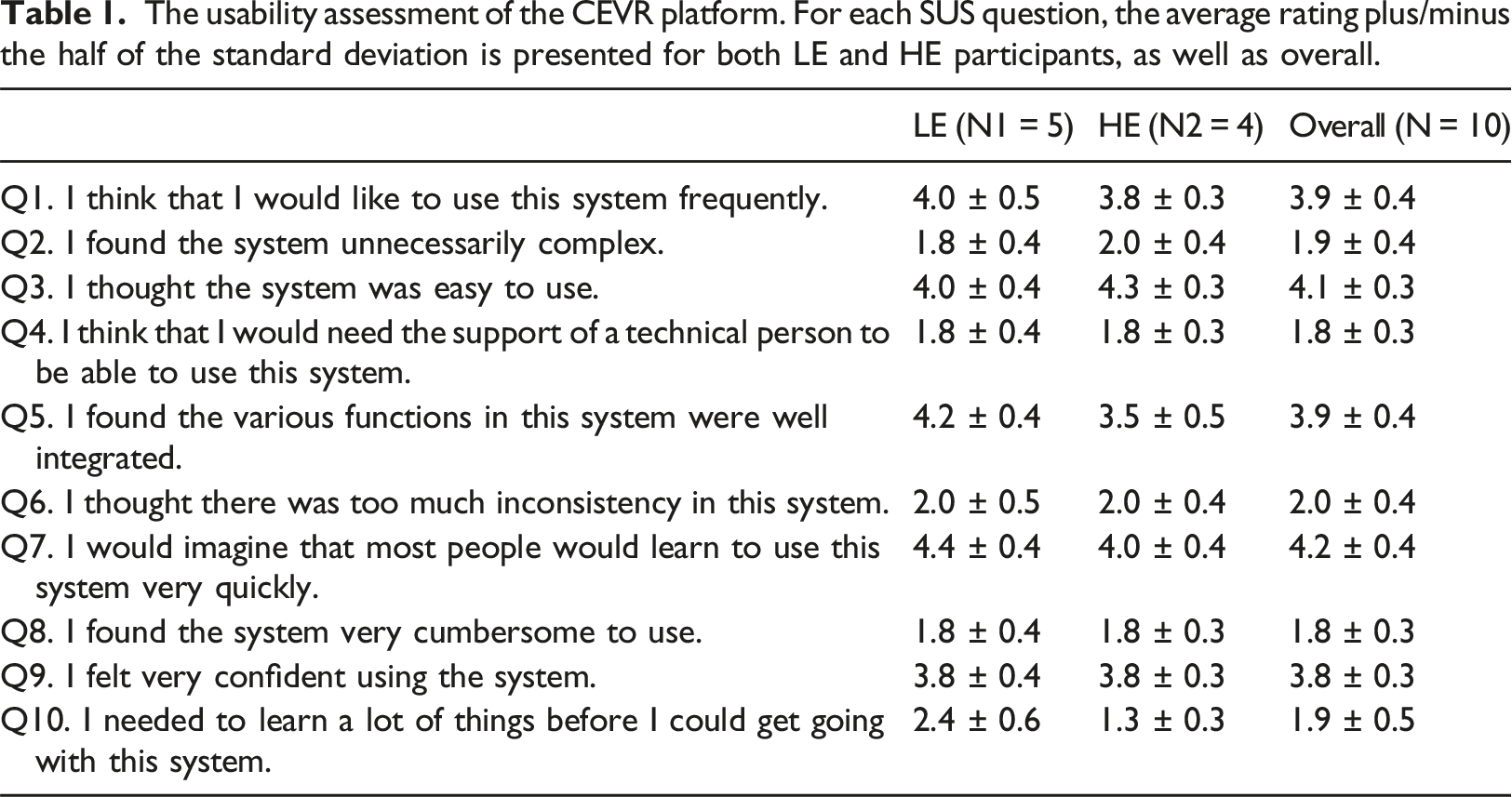

Nine gastroenterologists participated in the study, four HE and five LE. All participants completed the SUS questionnaire, yielding an overall average SUS score of 76.4 (±7.2)%, so the usability of the CEVR platform can be characterized as “Good” and “Acceptable” based on the rating scale proposed by Bangor et al.39 Values in parentheses denote half the standard deviation. The average SUS score per experience class is 75.8 (±4.1)% for HE and 76.7 (±8.4)% for LE.

The usability assessment of the CEVR platform. For each SUS question, the average rating plus/minus half the standard deviation is presented for LE and HE participants, as well as overall.
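The results report each mean together with half its standard deviation, a less common convention. The sketch below shows how such per-group summaries could be produced; it assumes the sample standard deviation (`statistics.stdev`), since the text does not say whether the sample or population SD was used, and the scores in it are hypothetical placeholders rather than the study's data.

```python
from statistics import mean, stdev

def summarize(scores: list[float]) -> str:
    """Format scores as 'mean (±half SD)', the convention used in the results.

    Uses the sample standard deviation; statistics.pstdev would give the
    population SD instead, as the text does not specify which was used.
    """
    return f"{mean(scores):.1f} (±{stdev(scores) / 2:.1f})"

# Hypothetical per-participant SUS scores, NOT the study's data.
he = [72.5, 77.5, 80.0, 70.0]
le = [65.0, 80.0, 85.0, 72.5, 82.5]
for name, group in (("HE", he), ("LE", le), ("overall", he + le)):
    print(name, summarize(group))
```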

Discussion

The advent of modern AI techniques opens new horizons in computer-aided diagnosis. In gastroenterology, AI can have a significant impact on small-bowel VCE by increasing diagnostic yield and decreasing time to diagnosis, since reading and interpreting a CE video is a challenging, time-consuming and tedious task.9 Although several AI solutions have been proposed in the literature19,20 and generative AI-based ones40,41 are anticipated in the near future, their successful translation into clinical practice requires MRMC studies that investigate whether CE readers actually benefit from integrating AI into the standard procedure.

This work presents a software platform designed and developed to support MRMC CE video reading studies, in which participants review sets of CE videos with and without AI functionalities. The main design decision was to adopt a client–server architecture to allow remote access to the study activities, so that gastroenterologists from multiple locations and clinical sites can participate. Furthermore, such studies are expensive to conduct because they require a substantial amount of readers’ time for video reading and interpretation,21 so it is imperative that the design process yield a familiar and engaging UI/UX. For this purpose, a series of workshops with expert gastroenterologists took place to extract the main UI/UX components. The overall usability of the platform was then evaluated in a study in which participants reviewed a set of CE videos and afterwards completed the SUS questionnaire.

The overall SUS results indicate that the developed web platform has good and acceptable usability for both experience classes. These results suggest that the platform effectively addresses the core challenges, although there is modest room for improvement. In other words, the proposed platform is a minimum viable product (MVP) that may suffice for small-scale MRMC studies and can serve as a basis for building an extended, more usable version to support larger MRMC studies. Inspection of the individual questions shows consensus on most of them, but disagreement regarding the successful integration of the various functionalities (Q5) and the need for prior knowledge before starting to work with the platform (Q10). On the one hand, the HE gastroenterologists were less satisfied with the integration of the various functionalities than their LE counterparts. This is to some extent expected, since the former may be more discerning about smooth and seamless operation, which suggests that dedicated co-creation sessions with HE specialists only are needed to thoroughly capture operational discrepancies. On the other hand, the LE gastroenterologists reported a greater need to learn before using the platform than the HE ones. This is likely explained by the knowledge gap and the different levels of familiarity with commercial CE software between the two experience groups, and it suggests that a training session should take place before such an MRMC study to ensure baseline familiarity with the platform.

Additionally, comparing our usability outcomes with those reported for the commercial cloud-based VCE reading system of Costigan et al.25 suggests that our web platform offers usability comparable to that of a commercial system developed by the market leader. Although the two works use different usability measures, an informal comparison is possible: in our work, usability is characterized as “Good” and “Acceptable”, with a SUS score of 76.4% calculated from the responses of 9 readers, whereas the compared system was rated “very easy” to access and use by 7 readers who answered a user-reported outcomes questionnaire.

One limitation of the proposed system is that, although we can monitor how many readings each participant has completed, there is no mechanism to quantify the participants’ commitment to the study. For instance, if participants review the videos differently from real cases, e.g., intermittently and/or in their spare time, then real reading conditions are not reproduced; hence, the actual impact of the AI might not be revealed.

Finally, future work may include developing additional features that increase the usability of the platform, as well as extending its scope to the wider field of gastrointestinal endoscopy, including procedures such as colonoscopy, colon capsule endoscopy, and gastroscopy. Improving usability requires further user research focusing on the needs of highly experienced gastroenterologists, together with comprehensive training modules for less experienced ones; in particular, a training session prior to the study would help ensure a smooth onboarding process. Moreover, the success of future VCE MRMC studies strongly depends on monitoring participants’ commitment and engagement; hence, implementing software monitoring and telemetry infrastructure42 is highly recommended, while taking possible data privacy implications into consideration. For deployment in large-scale studies, a series of actions should be taken to ensure robustness and reliability, namely increasing the computational and memory resources of the virtual machines that host the platform and incorporating additional software components, such as a container orchestration system (e.g., Kubernetes) and a content delivery network. Extending the scope of the platform mainly requires corresponding modifications to the data structure: more data entities would need to be represented in the database, increasing the number of tables and relationships, so a best practice would be to organize the data according to an existing standard, for example, HL7 FHIR43,44 or OMOP.45
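As one possible illustration of the telemetry-based monitoring recommended above, and of the limitation that reading commitment is currently not quantified, the sketch below splits a reading's wall-clock span into active time and idle breaks from timestamps of UI events. Everything here is hypothetical: the event types, the 300 s idle threshold, and the output fields are illustrative choices, not part of the CEVR platform.

```python
def engagement_summary(event_times: list[float],
                       idle_threshold_s: float = 300.0) -> dict[str, float]:
    """Split a reading's wall-clock span into active time and idle breaks.

    event_times: timestamps in seconds of UI events logged during one reading
    (e.g., play, pause, seek, annotate). Gaps between consecutive events that
    exceed idle_threshold_s are treated as interruptions and excluded from
    active time. The 300 s default is an arbitrary, hypothetical choice.
    """
    times = sorted(event_times)
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    breaks = [g for g in gaps if g > idle_threshold_s]
    return {
        "span_s": times[-1] - times[0] if times else 0.0,
        "active_s": sum(g for g in gaps if g <= idle_threshold_s),
        "idle_s": sum(breaks),
        "interruptions": len(breaks),
    }

# A reading with one long pause between t = 120 s and t = 2000 s.
summary = engagement_summary([0, 60, 120, 2000, 2060])
print(summary)  # {'span_s': 2060, 'active_s': 180, 'idle_s': 1880, 'interruptions': 1}
```

Because a reader can watch the video for minutes without clicking, a practical version would also need periodic playback heartbeat events; otherwise uninterrupted viewing would be misclassified as idle time.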

Conclusion

The translation of AI solutions into the clinical practice of VCE requires the successful conduct of MRMC clinical studies. This work presented a software platform designed to support such studies. The platform was designed as a web application that supports remote access, enabling multi-center studies. Moreover, special emphasis was given to UI/UX elements to provide a familiar and engaging environment. Finally, the usability of the design and implementation decisions was assessed in a pilot study. The results showed good and acceptable usability and provided insights into the expectations and preferences of gastroenterologists with different levels of experience.

Supplemental Material

Supplemental Material - A web-based platform for studying the impact of artificial intelligence in video capsule endoscopy

Supplemental Material for A web-based platform for studying the impact of artificial intelligence in video capsule endoscopy by Georgios Apostolidis, Antigoni Kakouri, Ioannis Dimaridis, Eleni Vasileiou, Ioannis Gerasimou, Vasileios Charisis, Stelios Hadjidimitriou, Nikolaos Lazaridis, Georgios Germanidis and Leontios Hadjileontiadis in Health Informatics Journal.

Footnotes

Acknowledgements

The authors would like to thank all gastroenterologists who participated in the study.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is part of the HosmartAI—“Hospital Smart development based on AI” project that is funded by the European Union’s Horizon 2020 Research and Innovation Programme (grant agreement ID 101016834).

Ethical statement


References
