Abstract

Although mobile mental health apps have the unique potential to increase access to care, evidence shows that engagement is low unless apps are coupled with coaching. However, most coaching protocols are limited in their scalability. This study assesses how human support and guidance from a Digital Navigator (DN), a scalable coach, can affect mental health app engagement and effectiveness for anxiety and depressive symptoms. It aims to separate components of coaching, specifically personalized recommendations versus general support, to inform the scalability of coaching models for mental health apps. A total of 156 participants were split into DN Guide and DN Support groups for the 6-week study. Both groups used the mindLAMP app for the duration of the study and had equal time with the DN, but only the Guide group received personalized app recommendations. The Guide group completed significantly more activities than the Support group. 34% (49/139) of all participants saw a 25% decrease in PHQ-9 scores and 38% (53/141) saw a 25% decrease in GAD-7 scores. These findings show that mental health apps, especially when supported by DNs, can reduce depression and anxiety symptoms, suggesting a feasible path for large-scale deployment.

Introduction

Mental health outcomes for nearly all populations around the world continue to decline, with youth especially impacted. Rates of depression and anxiety have only increased in light of COVID-19 and related economic challenges. The resulting need for more mental health services has accelerated interest in telehealth, especially smartphone apps that are capable of offering scalable and accessible care. However, clinical experience with smartphone apps has been disappointing as many mental health apps have suffered from low rates of uptake and engagement, 1 precluding any clinical benefit. This study explores the challenge of engagement by investigating which model of coaching can support mental health app use and by assessing the feasibility of training and deploying Digital Navigators. A Digital Navigator is a member of the care team that utilizes their specific expertise in digital health to assist patients with technical support, troubleshooting, app customization to improve engagement, and clinical integration.

Scalable and accessible coaching models are crucial for realizing the potential of mental health apps. With rates of engagement with non-human supported mental health apps often approaching zero percent after just 2 weeks, there has been a recent focus on how human support can assist. 2 Given that the potential of smartphone apps in increasing access to care rests in their scalability, it is critical that coaching models be equally scalable. A recent review of coaching to support mental health apps by our team found that much of the training for coaches is not shared or that coaches themselves are advanced mental health professionals (e.g., graduate students or licensed psychologists). 3 A related 2022 review found significant heterogeneity in how coaches were deployed to support mental health technologies and was also unable to report on the impact of coaching on outcomes. 4 Unless details on training are shared so that it is scalable, or programs are made accessible to non-professionals, coaching to support mental health apps is unlikely to help realize the full potential of mobile mental health to transform access to care.

To strive for scalable coaching, our team has developed a coaching model to train a new or existing member of the care team called a Digital Navigator. An individual’s care team may be comprised of, but is not limited to, physicians, clinicians, social workers, specialists, and administrators. We have established a 10-h Digital Navigator curriculum to train such individuals or new members to take on this innovative role. The training covers five core themes: (1) basic smartphone functions, (2) basic smartphone troubleshooting, (3) selecting and setting up smartphone apps, (4) sharing feedback around data and app use with patients, and (5) supporting engagement through motivational techniques. 5 This training is scalable in nature for several reasons. First, the training can be done hybrid (5 h in-person and 5 h online), which allows those who cannot commit to a full day of training to still be able to receive certification. Additionally, the curriculum can be tailored to fit the needs of the community and team receiving the training. Since the training is broken into core themes, any aspects that do not pertain to those receiving the training can be easily omitted. Lastly, the curriculum is publicly available for other groups to adapt and lead. The curriculum has since been adopted by outside groups in 2022 and won a digital health equity award from the National Digital Inclusion Alliance. While we have employed the role in our own clinic to help support the integration of mobile health into routine clinical care, 6 to date we have not formally assessed its role in supporting app use outside of clinical care.

Thus, in this study, we aimed to assess how support from a trained Digital Navigator could enable people to engage with a mental health app to reduce symptoms of anxiety and depression. We utilized the mindLAMP app that our team has developed and shares as open-source software. MindLAMP features a suite of CBT-related exercises or interventions that we have deployed in prior studies. However, we have found that engagement with these interventions has been limited previously 7 and therefore sought to assess how human support through the Digital Navigator may increase it.

In planning this research, we understood that engagement with the app itself is not an ideal metric to assess the impact of the Digital Navigator. This is because prior research has shown that while some degree of engagement is necessary for any app to be effective, the relationship between engagement and outcomes is not simple.8–11 One potential new metric developed by our team called the Digital Working Alliance Inventory (D-WAI) seeks to assess the alliance between a person and the app (i.e., not the human). 12 Prior research with the D-WAI suggests that it may be a valuable marker for assessing app engagement and impact 13 and, thus, we chose to employ it in this study as an exploratory endpoint.

Finally, in deploying Digital Navigators, we aimed to separate components of coaching to inform scalability. Specifically, we investigated the impact of using all skills acquired from the training versus a limited set. The objective was to understand whether sharing feedback around data/app use and supporting engagement through motivational techniques are necessary for individuals to engage with and benefit from the app. Feedback refers to discussion of the CBT exercises utilized by the individual with the Digital Navigator to assist in tailoring and selecting upcoming activities. We hypothesized that the group receiving only core support from the Digital Navigators (i.e., no personalized app recommendations or notifications), rather than guidance, would have lower overall engagement and less symptomatic improvement with the app. As a pilot and feasibility study, we sought to assess whether either form of Digital Navigator support would improve outcomes around depression or anxiety and to provide the data necessary to power a clinical trial.

Methods

Participants

This study included individuals experiencing anxiety and other forms of mental illness who were recruited online (researchmatch.org) between July 2021 and February 2022. Participants were eligible if they were 18 years or older, owned a smartphone compatible with the study app, and had a minimum score of 5 (mild anxiety) on the Generalized Anxiety Disorder-7 (GAD-7) 14 survey. Participants were excluded if they scored below 5 on the GAD-7 questionnaire or were unable to provide informed consent. There were no exclusions for scores on other metrics, including depression scales such as the Patient Health Questionnaire-9 (PHQ-9), as we sought to assess the impact of this program on people with anxiety. Any PHQ-9 score indicating a risk of suicide was discussed with the study PI and followed up with a risk assessment of the participant.

Procedures

Measures

The 6-week study involved participants meeting virtually with a Digital Navigator at the beginning of the study, at week 2, and at week 6. After each of these three study visits, participants completed a set of REDCap surveys including the Patient Health Questionnaire-9 (PHQ-9), 15 Generalized Anxiety Disorder-7 (GAD-7), Pittsburgh Sleep Quality Index (PSQI), 16 Perceived Stress Scale (PSS-10), 17 the Behavior and Symptom Identification Scale (BASIS), 18 the Social Interaction Anxiety Scale (SIAS), 19 the UCLA Loneliness Scale, 20 the Flourishing Scale, 21 the System Usability Scale (SUS), 22 and the D-WAI. 12 The D-WAI is used to assess engagement as it is designed to measure the “alliance” between the user and the app. All measures were self-reported by participants. The PSQI, PSS-10, BASIS, and SIAS were not analyzed in this study but were included because they are common metrics in other mindLAMP studies, which will enable future analysis.

Digital navigators

Research assistants with mental health experience through their role served as Digital Navigators and underwent training per our published protocol. 5 The training was completed in person at Beth Israel Deaconess Medical Center. All participants provided written informed consent.

Participants were placed into the Digital Navigator Guide versus Digital Navigator Support conditions in a 3 to 1 ratio. This ratio was chosen based on prior research showing that app use without guidance leads to minimal engagement. 1 Our primary interest was the Guide condition, but we wanted to gather pilot data on the Support condition in the hope that it would be a more scalable version of the Digital Navigator. Both groups had equal access and time, approximately 20 min per visit, with the Digital Navigator, but during study visits the Guide group received personalized recommendations and support around customizing mindLAMP to their needs. For example, a Digital Navigator Guide may help a participant explore their response to a CBT exercise from the prior week and suggest a related exercise to help build on those gains. Digital Navigators in the Guide group would inquire about the individual’s impression of that week’s activity, how it impacted their mental health if at all, and whether they would like to build on the skills they learned or would prefer to try new ones. In the Support group, a Digital Navigator would check on how the participant was using the app, but not offer suggestions or recommendations for future use.

mindLAMP

Both groups had full access to mindLAMP, with modules including Thought Patterns (beginner and advanced), Mindfulness (beginner and advanced), Journaling, Distraction Games, Gratitude Journaling, Behavioral Activation, and Strengths. The Digital Navigator Guide group received notifications from the app directing them to complete Daily and Weekly Surveys, as well as weekly module activities co-selected with and tailored by their Digital Navigator Guide. However, the Digital Navigator Support group received generic notifications to remind them to engage with the app. Both groups also had access to their data through the ‘portal’ tab in mindLAMP, but only the Digital Navigator Guide group utilized this data to inform future module selection.

Compensation in both groups was the same. Participants were mailed a reloadable debit card. After each visit, participants received $25 for a total of $75 received upon completion of the study. This compensation was paid regardless of app engagement.

Analysis

First, we evaluated engagement. In this study, we used both the number of activities completed and D-WAI scores as measures of engagement with the app. Despite its weaknesses, the number of activities completed remains one of the most utilized metrics to report on engagement. 8 Two-tailed independent t-tests were performed to compare the activity counts between the Digital Navigator Guide and Digital Navigator Support groups using the scipy.stats module. 23 In addition to total activity counts, we also compared the number of Weekly Surveys, Daily Surveys, and other activities.
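As an illustration of the comparison described above, the following minimal sketch runs a two-tailed independent t-test with `scipy.stats.ttest_ind`. The activity counts are hypothetical placeholders rather than study data, the helper name `compare_activity_counts` is introduced only for this example, and the equal-variance setting is an assumption, since the variance treatment is not specified in the text.

```python
from scipy import stats

# Hypothetical per-participant activity counts (illustrative only, not study data).
guide_counts = [120, 95, 140, 80, 110, 130, 60, 105]
support_counts = [40, 55, 20, 70, 35, 50]


def compare_activity_counts(guide, support):
    """Two-tailed independent-samples t-test on activity counts."""
    # ttest_ind is two-sided by default.
    # equal_var=True (Student's t-test) is an assumption made for this sketch.
    result = stats.ttest_ind(guide, support, equal_var=True)
    return result.statistic, result.pvalue


t_stat, p_value = compare_activity_counts(guide_counts, support_counts)
print(f"t = {t_stat:.2f}, p = {p_value:.4f}")
```

The same helper could be applied separately to the Weekly Survey, Daily Survey, and other-activity counts.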

Second, we focused on anxiety and depression improvement as an outcome. We used a threshold of 25% symptom improvement. As many studies of coached technology platforms classify any clinical improvement as an outcome, 24,25 we also report on this. Participants with initial scores of less than 5 on the GAD-7 were excluded from the GAD-7 analysis, and participants with initial PHQ-9 scores of less than 5 were excluded from the PHQ-9 analysis, as there was no initial anxiety or depression against which improvement could be measured. We also compared the change in PHQ-9 and GAD-7 scores from week 0 to week 6 between the Digital Navigator Guide and Digital Navigator Support groups.
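The improvement rules above can be sketched as a small classifier. This is an illustrative reading of the stated criteria, not the study's analysis code: the function names and example scores are hypothetical, and treating a decrease of exactly 25% as meeting the threshold is an assumption.

```python
def percent_change(baseline, final):
    """Percent change from baseline; negative values indicate improvement."""
    return (final - baseline) / baseline * 100.0


def classify_improvement(baseline, final, min_baseline=5, threshold_pct=25.0):
    """Return improvement flags, or None when the baseline score is below 5."""
    if baseline < min_baseline:
        return None  # excluded: no initial symptoms against which to measure change
    change = percent_change(baseline, final)
    return {
        # Assumption: a decrease of exactly 25% counts as meeting the threshold.
        "improved_25": change <= -threshold_pct,
        "any_improvement": change < 0,
    }


# Hypothetical (week 0, week 6) score pairs, illustrative only.
scores = [(12, 8), (10, 9), (4, 2), (8, 8)]
results = [classify_improvement(week0, week6) for week0, week6 in scores]
included = [r for r in results if r is not None]
n_improved_25 = sum(r["improved_25"] for r in included)
print(f"{n_improved_25}/{len(included)} improved by at least 25%")
```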

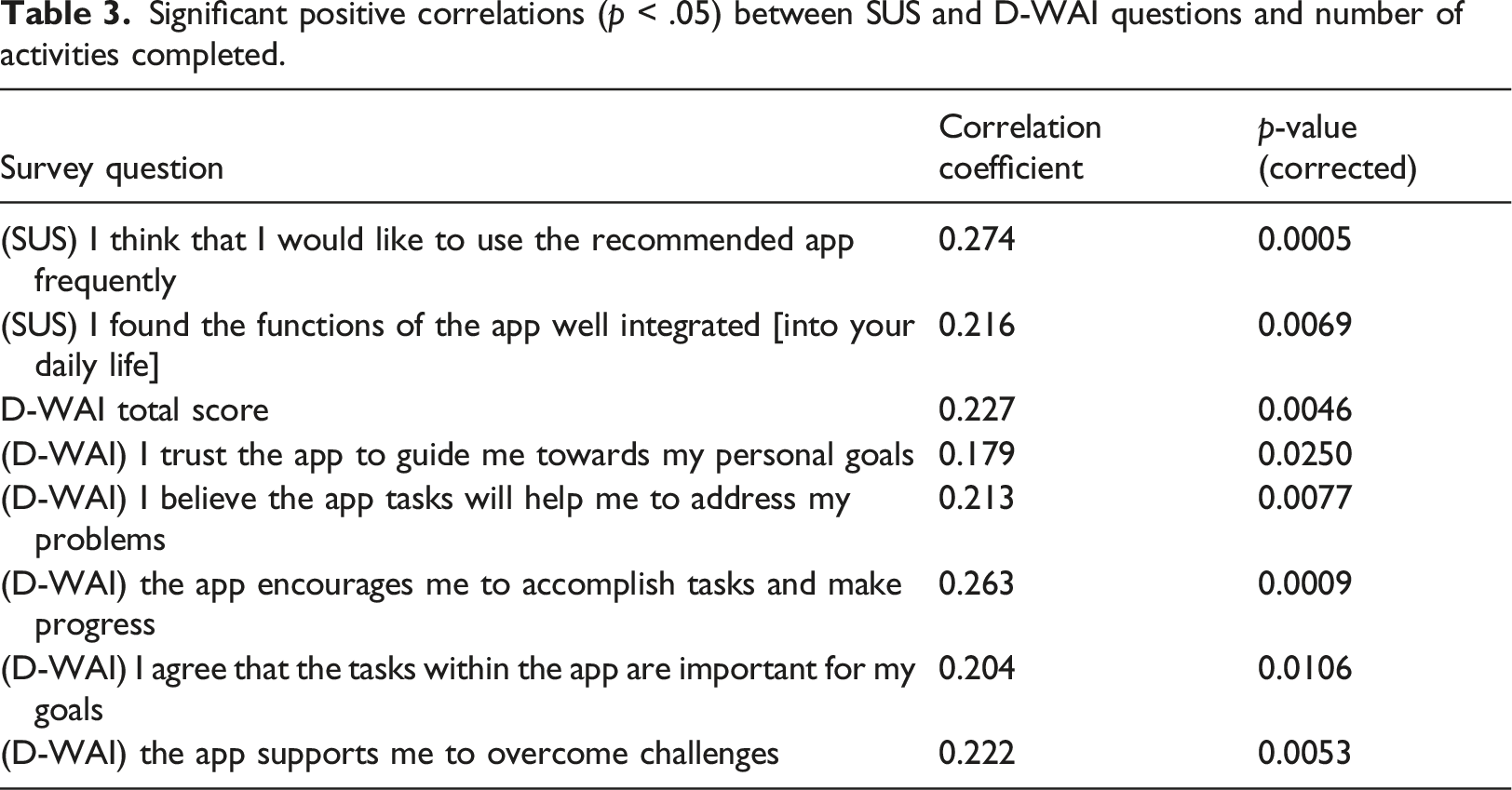

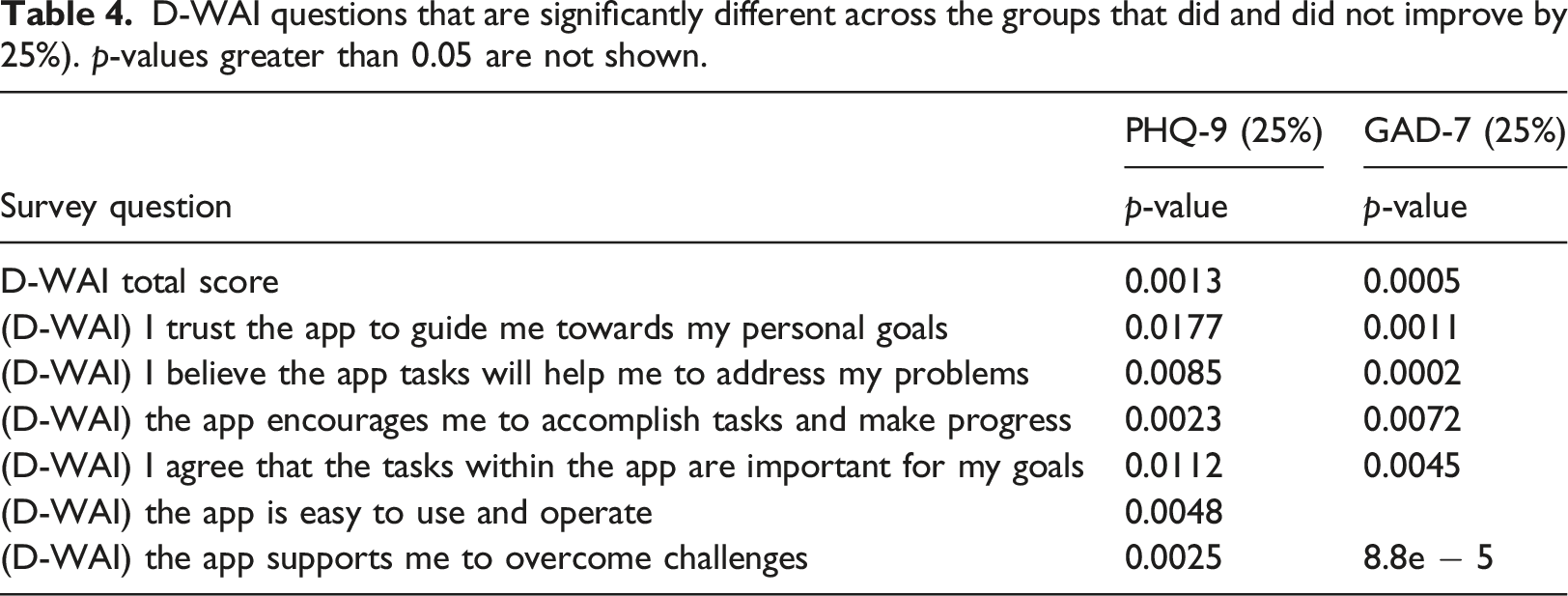

Third, we wanted to investigate the relationship between engagement, alliance, and improvement. Thus, we looked at the correlations between the average SUS and D-WAI question scores and activity counts. The Hochberg method was used to correct the p-values for multiple comparisons using the multipletests function from the statsmodels.stats.multitest module in Python. 26 Two-tailed independent t-tests were used to compare average D-WAI scores for participants who did or did not improve on PHQ-9 and GAD-7. For both PHQ-9 and GAD-7, as above, improvement was defined as a 25% decrease in total score from week 0 to week 6.
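To make the multiple-comparison correction concrete, the sketch below computes Hochberg step-up adjusted p-values in plain Python. It is not the study's code: the analysis above used statsmodels, where the corresponding option is, to our understanding, `multipletests(pvals, method="simes-hochberg")`. The function name `hochberg_adjust` and the example p-values are hypothetical.

```python
def hochberg_adjust(pvalues):
    """Hochberg step-up adjusted p-values, returned in the input order."""
    m = len(pvalues)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0  # adjusted p-values are capped at 1
    # Walk from the largest p-value down to the smallest.
    for rank in range(m - 1, -1, -1):
        idx = order[rank]
        # The k-th smallest p-value (k = rank + 1) is multiplied by (m - k + 1).
        candidate = (m - rank) * pvalues[idx]
        running_min = min(running_min, candidate)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical raw p-values from four correlation tests (illustrative only).
raw_pvalues = [0.01, 0.04, 0.03, 0.20]
adjusted_pvalues = hochberg_adjust(raw_pvalues)
significant = [p < 0.05 for p in adjusted_pvalues]
print(adjusted_pvalues, significant)
```

In this toy example only the smallest raw p-value remains below .05 after correction.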

Results

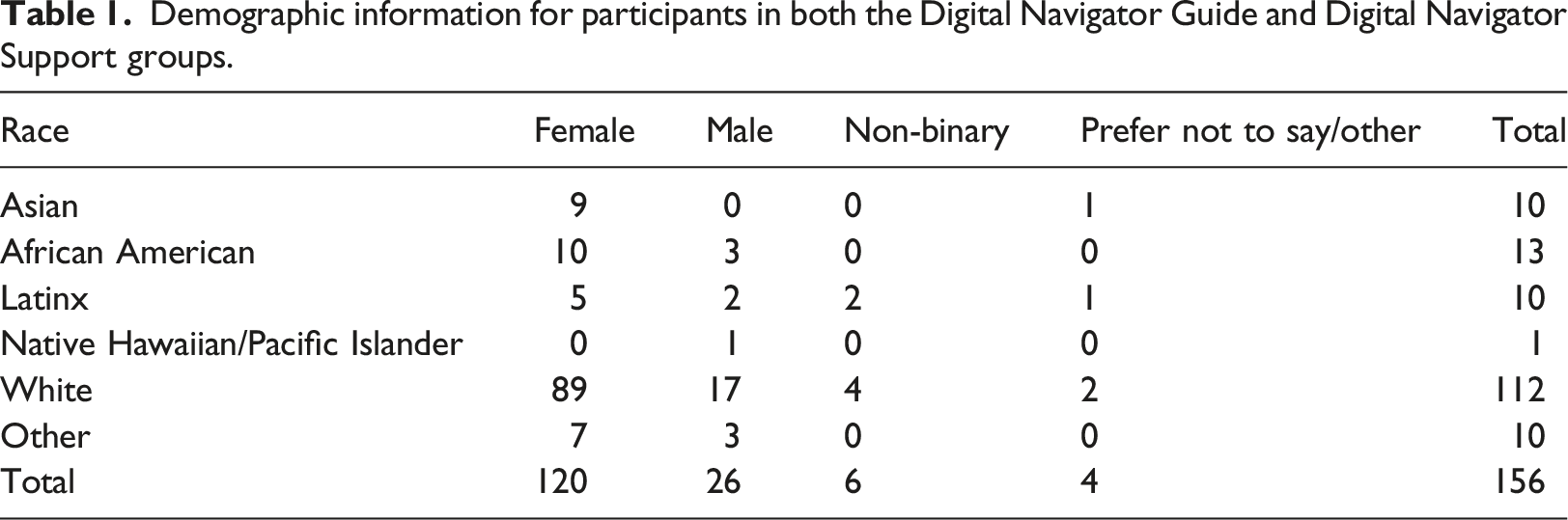

Demographic information for participants in both the Digital Navigator Guide and Digital Navigator Support groups.

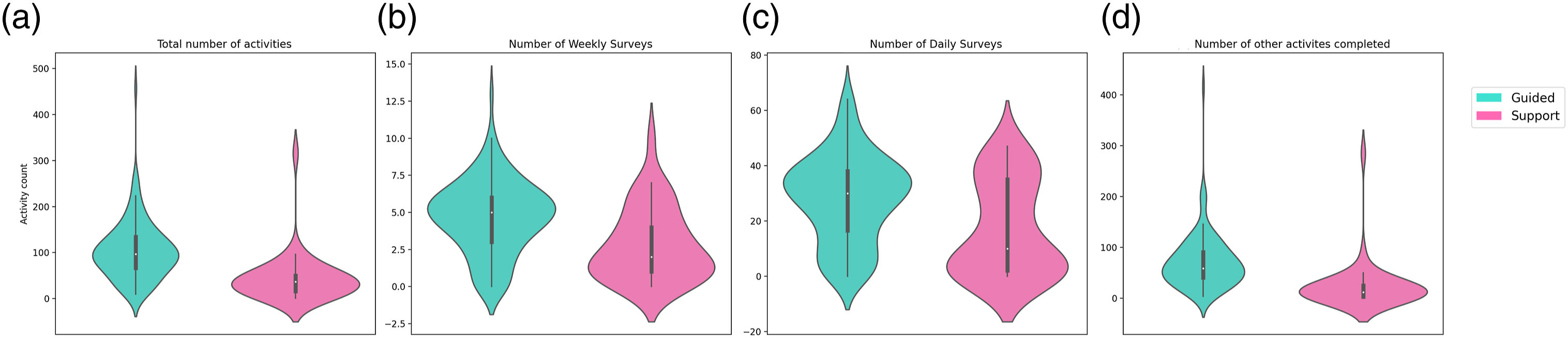

An analysis of engagement between groups revealed that the Digital Navigator Guide group completed more module activities overall than the Digital Navigator Support group (p < .001), as shown in Figure 1. However, the Support group completed significantly more activities in their first week than the Guided group (p < .001, Guide: 103.8 ± 63.4; Support: 43.5 ± 52.7). The activities were broken down into three subsets: Weekly Survey, Daily Survey, and other activities which consisted of module-related activities. Module-related activities included Thought Patterns (beginner and advanced), Mindfulness (beginner and advanced), Journaling, Distraction Games, Gratitude Journaling, Behavioral Activation, and Strengths. In all three subsets, the Digital Navigator Guide group completed more activities (p < .001). The D-WAI scores were not different between the Guide and Support groups (p = .30, Guide: 22.1

Comparison between number of activities completed by the Digital Navigator Guide versus the Digital Navigator Support group. (a) Total number of activities. (b) Number of weekly surveys. (c) Number of daily surveys. (d) Number of other activities completed.

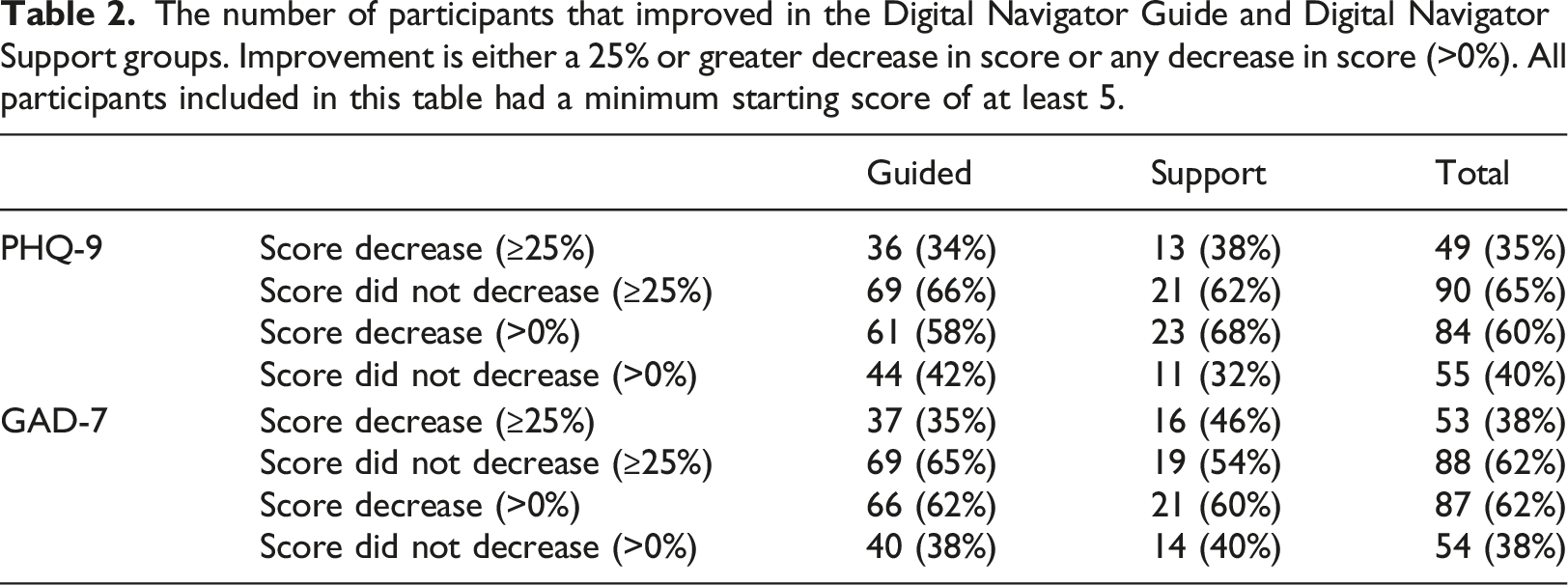

The number of participants that improved in the Digital Navigator Guide and Digital Navigator Support groups. Improvement is either a 25% or greater decrease in score or any decrease in score (>0%). All participants included in this table had a minimum starting score of at least 5.

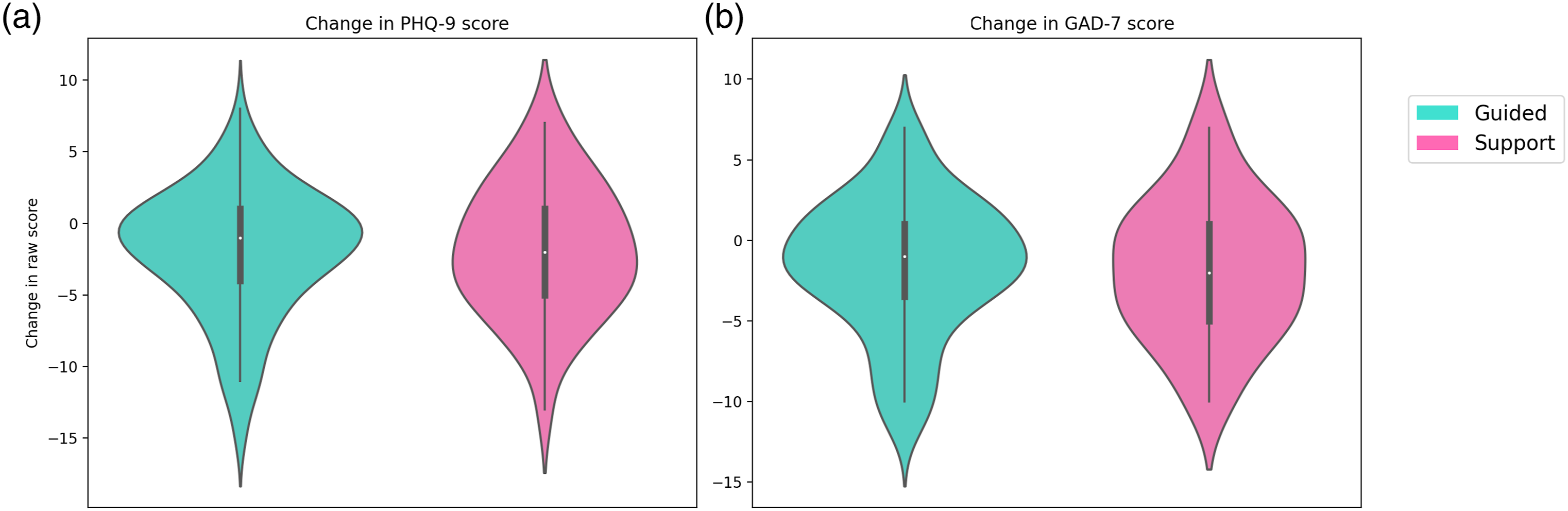

Raw score difference across the duration of the study comparing the Digital Navigator Guide and Digital Navigator Support groups. Negative numbers indicate improvement. (a) Change in PHQ-9 score. (b) Change in GAD-7 score.

Significant positive correlations (p < .05) between SUS and D-WAI questions and number of activities completed.

D-WAI questions that are significantly different across the groups that did and did not improve by 25%. p-values greater than 0.05 are not shown.

Discussion

This study investigated the impact of a 6-week mobile mental health app intervention, mindLAMP, in conjunction with a coach known as a Digital Navigator, on mood and anxiety symptoms. By exploring the impact of human support on outcomes, we found that both the Guide and Support groups benefited from the study, with approximately one third of all participants having a 25% reduction in anxiety and depression scores. Given the scalable nature of this intervention, any improvement in symptoms is notable, as the potential to deploy it at a population level exists today. While the Guide group completed more activities, both groups reported high and similar working alliance scores with the app. This suggests a possible mechanism of action for the interaction between the app and Digital Navigators for future exploration.

An interesting finding concerning participant app engagement was that Support group participants appeared to binge on, or over-engage in, activities in the first week. Binging on the app refers to using many or all of the app offerings in excess within a short period of time. This early excess usage may lead to a drop in app engagement over time, perhaps because of the e-attainment effect or boredom with the app.27–29 This resulted in higher app engagement in the first week compared to that of the Guide group. Despite this, the Guide group ended up completing more activities overall, suggesting that the extra guidance facilitated not only increased app engagement but also more consistent app use over time. A potential contributor to the increased engagement in the Guide group is the presence of personalized notifications reminding participants to complete the activities assigned by the Digital Navigator. Furthermore, participants in the Guide group reviewed their data with their Digital Navigator and received personalized activity recommendations. Both may have contributed to the steadier level of engagement seen in the Guide group.

As there was no difference in depression and anxiety outcomes between the two groups, the results seem to indicate that binging did not have a straightforward effect on clinical outcomes. However, our study was not designed to elucidate the relationship between binging and other app engagement patterns on outcomes, so further investigation must be done.

Our analysis of changes in depression and anxiety symptoms on a weekly basis revealed that participant response to modules was heterogeneous, see appendix A. One possible explanation, not well captured in the data but often shared with Digital Navigators, is the confounding role of life events outside the study. For example, one participant mentioned that three family members passed away unexpectedly between week 2 and week 6 of the study. While she found the modules helpful, her depression and anxiety scores both rose given her situation. The use of the app for the prevention of worsening symptoms versus direct improvement represents another area for future exploration.

There were no overall differences in D-WAI scores between the Digital Navigator Guide group and the Digital Navigator Support group, and the scores suggest that a strong alliance was formed in both groups. Because alliance was similar across groups, it likely did not drive any between-group differences in outcomes. Our results across both groups suggest that the D-WAI was more strongly correlated with activity completion than the SUS. One interpretation is that most participants found the app usable overall and thus the SUS did not capture unique information from participants. Other studies have shown that the D-WAI is associated with app engagement and our results add further support. 13 These results also suggest an interesting theory regarding engagement: the relationship between app use and clinical outcomes may be moderated or even mediated by alliance. While our results suggest a possible mediator, our study was not designed to investigate this effect. However, such a theory would explain the many prior studies that have not found a direct relationship between engagement and outcomes.30,31

The lack of differences between the Guide and Support conditions suggests that the guided help, data review, and module assignment from the Digital Navigator did not impact outcomes around depression or anxiety. The success of the Support condition is important as it highlights how a simplified version of the role may result in nearly equivalent clinical benefits for patients. This has the potential to make the Digital Navigator curriculum more scalable than before, because it suggests that the training can be simplified, which would reduce the time needed to train Navigators and ultimately make offering the entire program more scalable. While Digital Navigators are of course not as scalable as self-help apps, the near-zero percent engagement with these self-help apps 1 underscores the importance of this and related roles. Future studies assessing alliance with both coaches and apps can help better separate the therapeutic role of each in helping apps be more effective.

The limitations in our work also suggest areas for future research. Given that any interaction with a Digital Navigator or coach may potentially offer therapeutic benefits, adding a control group with no human support would help in understanding our results. Our participants were recruited online and not assessed in person, though this limitation is a growing reality as patients seek care online during the COVID-19 pandemic. Several participants in the study had at least mild anxiety at screening (score of 5-9 on the GAD-7 screening survey), but by the time they were contacted, scheduled, consented, and enrolled, their GAD-7 scores at the initial visit were below 5. Thus, these participants had less room for improvement from the start to the end of the study. Additionally, participants in the two groups were unmatched, so future studies should aim to match participants to control for confounding variables. While study payments were the same across both groups, it is possible that these payments affected outcomes if they changed anxiety and mood. As a pilot study, we did not capture follow-up data on the longer-term impact of this program. These results, however, may be used to power a clinical trial and explore the questions we were unable to address here.

Conclusion

There is an ongoing need for accessible mental health care, especially due to the adverse impact the COVID-19 pandemic has had on mental health. Our results around using an already widely used and open-source app, coupled with a scalable coaching model, present a ready-to-deploy and actionable means to offer immediate and scalable support today. While we have discussed mental health applications, there is ongoing research in related coaching models to support health and wellness across the world. 32 Future research efforts through clinical trials will continue to refine the optimal role of coaching but should not preclude using smartphone apps to provide services to patients today.

Footnotes

Acknowledgements

This work was supported by a grant from the Argosy Foundation. The authors would like to thank Jenny Melcher, Tanvi Lakhtakia, Luke Scheuer, Ryan Hays, Ryan D’Mello, Ashley Meyer, and Suraj Patel for hosting visits for study participants.

Authors’ note

All authors have read and approved of the submitted manuscript.

Author contributions

JT was involved in the conceptualization, design, and writing of the manuscript. EC, SC, and DC were involved in the design, data collection and curation, analysis, and writing.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: JT has had research support from Otsuka in the past 3 years. JT has also co-founded a mental health app company called Precision Mental Wellness.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Argosy Foundation.

Ethical Statement

Appendix