Abstract

In recent years, a wide range of generic and domain-specific maturity models have been developed to improve organizational design and learning in healthcare organizations. While many of these studies describe methods for measuring dedicated aspects of a healthcare organization’s “maturity,” little evidence exists on how to effectively implement and deploy these models in practice. This article therefore delineates the challenges encountered during the design and implementation of three maturity models for distinct improvement areas in hospitals. On the one hand, this study’s findings may serve as a basis for refining existing approaches to maturity model design. On the other hand, they may facilitate further research on domain-specific organizational design with maturity models.

Introduction

Maturity models (MMs) are recognized tools for the stepwise and systematic development and/or improvement of skills, processes, structures or general conditions of an organization. The concept has its roots in software engineering, where it proved helpful in guiding and monitoring the maturity of software development practices. The Capability Maturity Model (CMM), BOOTSTRAP and the international standard ISO/IEC 15504, commonly referred to as SPICE (Software Process Improvement and Capability Determination), are but a few examples of largely successful MMs.1,2 Besides generic areas of application, a growing number of MMs are designed for examining domain-specific processes or technologies. A brief review of the literature showed that a variety of healthcare-specific models exist, covering a wide range of improvement areas such as software quality, 3 software security, 4 risk management, 5 health supply management 6 or hospital collaboration. 7

Common to practically all these MMs is the notion of a staged representation of an actual state in relation to a potentially achievable goal state, together with a description of the steps required to achieve this goal. The major aim is to identify gaps between actual and desired states and to demonstrate an evolutionary path for improving from the actual to the desired state. In specifying this imagined improvement path, most MMs apply a top-down approach: they first fix a number of maturity stages or levels and then substantiate them with characteristics (typically in the form of specific assessment items) that support the initial assumptions about the maturity distribution. This has drawn strong criticism, since many MMs appear quite arbitrary. The recent literature has addressed this problem by proposing sophisticated data-driven (bottom-up) techniques and algorithms.8–10
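To make the notion of a gap between actual and desired states more concrete, the following Python sketch computes per-dimension gaps for a staged model and lists the intermediate levels on the path to the target. It is an illustration under assumptions, not part of any of the models discussed in this article: the `DimensionAssessment` record, the integer level scale and the functions `maturity_gaps` and `improvement_path` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DimensionAssessment:
    """Hypothetical record for one dimension of a staged maturity model."""
    dimension: str
    actual_level: int  # current stage, e.g. on a 1-5 scale
    target_level: int  # desired stage agreed by the organization


def maturity_gaps(assessments):
    """Return (dimension, gap) pairs for dimensions below target, largest gap first."""
    gaps = [
        (a.dimension, a.target_level - a.actual_level)
        for a in assessments
        if a.target_level > a.actual_level
    ]
    return sorted(gaps, key=lambda g: g[1], reverse=True)


def improvement_path(assessment):
    """Stages to pass through, one level at a time, from actual to target (empty if reached)."""
    return list(range(assessment.actual_level + 1, assessment.target_level + 1))
```

Sorting by gap size is only one simple way of prioritizing improvement areas; a real MM might also weigh dimensions by their strategic relevance.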

While current research is occupied with specifying ever more rigorous approaches for developing MMs, the effective implementation of MMs in complex organizational environments such as hospitals has been largely disregarded. 11 The aim of this article is therefore to identify and designate major challenges and risks in implementing MMs in the daily practice of healthcare organizations. We thus strive to answer the following research question: What are major challenges and mistakes when implementing MMs as a means of improving healthcare practice?

Since MM implementation and development mutually influence each other, we will not only reflect on how to effectively integrate MMs in healthcare organizations but also put forward some considerations on how to adapt the design of MMs in order to foster their broader proliferation. The remainder of the article is organized as follows: the next section discusses the research approach of this study. This is followed by a report on findings drawn from three projects aimed at developing healthcare-specific MMs. We conclude with recommendations for future research.

Research approach

Our research follows the assumption that “value-free data cannot be obtained, since the enquirer uses his or her preconceptions in order to guide the process of enquiry, and furthermore the researcher interacts with the human subjects of the enquiry, changing the perceptions of both parties.” 12 Aware of the subjectivity inherent in our research, we base the findings reported in this article on three projects that we conducted between 2008 and 2012 in distinct areas of health informatics and health information management. The first project aimed at developing an MM for measuring the information technology (IT) capability of hospitals. For this, we designed a method and developed a corresponding tool named H-BIT that supports hospitals in assessing mismatches between the capabilities of their IT facilities and their imminent and future strategic needs. 13 In the second project, we developed an MM named HSRM 3 that can be used as a reference for measuring the effectiveness and reliability of a hospital’s supply management procedures. 6 The third project focused on the intra- and inter-organizational collaboration of hospitals. For this, we designed an MM (HCMM) that measures different aspects of collaborative behavior in hospitals. 7

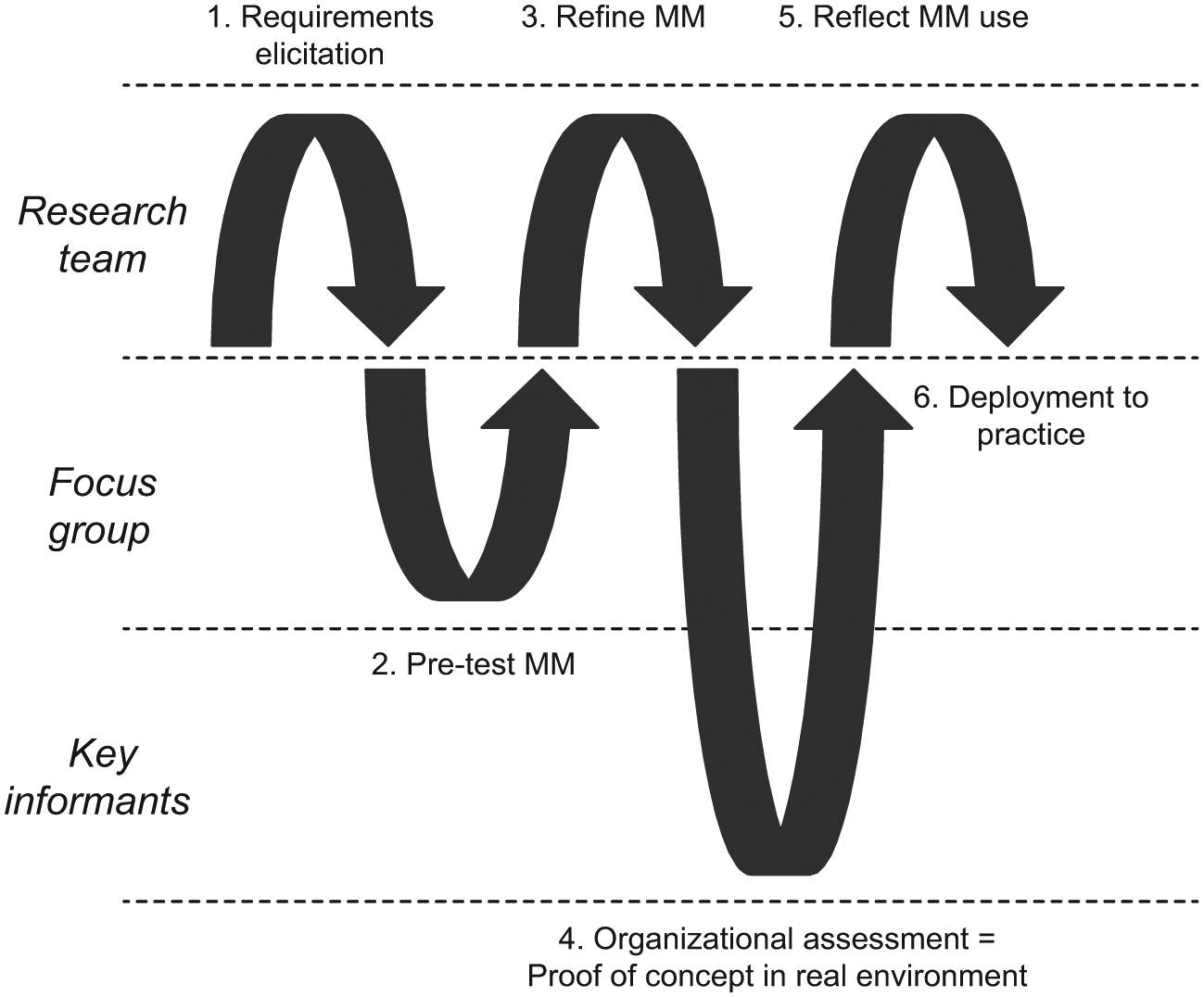

In all three projects, we followed the notion of action design research (ADR). ADR is used for “generating prescriptive design knowledge through building and evaluating ensemble IT artifacts in an organizational setting.” 14 In doing so, a research team faces two kinds of assignments: (1) addressing a problem situation encountered in a specific organizational setting by intervening and evaluating and (2) constructing and evaluating an IT artifact that addresses the class of problems typified by the encountered situation. Research applying ADR follows a generic schema or interaction pattern, as illustrated in Figure 1.

Figure 1. Adapted action design research approach. 14

After a first phase of requirements elicitation (mainly desk research), we used focus group discussions to pre-test a first version of each new MM. These discussions served as the basis for refining the MM and obtaining more specific feedback for the software design. The initial (physical) prototype allowed us to conduct organizational assessments in several hospitals. This in turn helped us to identify the key challenges and mistakes reported in the next section. With each MM, we conducted 20–50 assessments with different health professionals in Austrian, Swiss and German hospitals during the reported time period.

Findings from 5 years of research on MMs in hospital settings

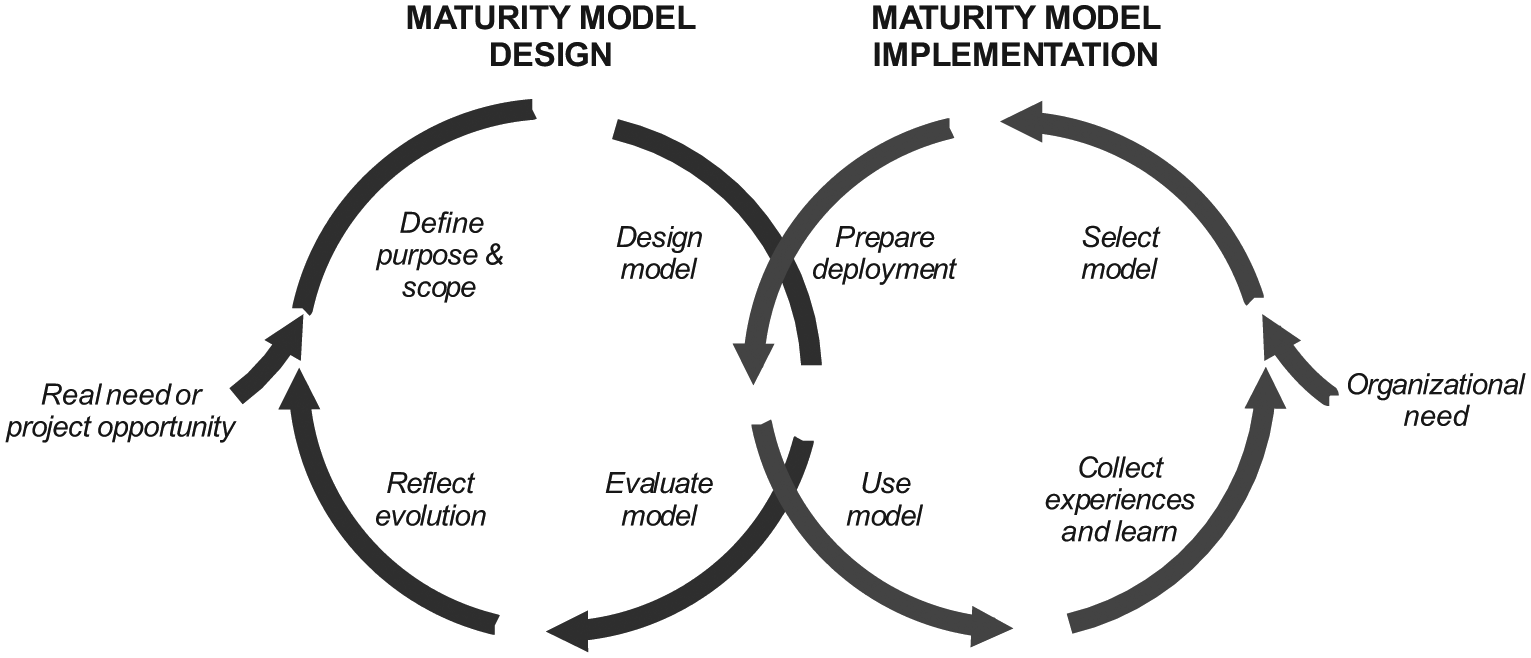

In this section, we present the findings gained from 5 years of research on MMs and their translation to the healthcare context. Since the design and implementation of MMs are interconnected (Figure 2), we present and discuss our findings along the interlinked design and implementation cycles described by Mettler. 8

Figure 2. Duality of maturity model design and implementation. 8

Design challenges

The implementation success of an MM is directly linked to the way the model has been developed and tested. 15 Therefore, we first take a closer look at the necessary steps and the challenges that might occur during the development of the model.

Need- or opportunity-driven approach

Each measurement process starts with the questions of “what” should be measured and “why.” Therefore, one of the first challenges is to decide when it is fruitful to use a measurement tool such as an MM. Researchers such as Mettler 11 therefore propose starting the process by answering questions regarding the “novelty” of the phenomenon and the “innovation” that a new measurement model could create. Organizations should thus clarify whether the phenomenon under investigation is a new (emerging) one or an already known (mature) problem. In the latter case, it is very probable that model-based solutions already exist. This in turn informs the question of the model’s “innovation,” that is, whether a new model has to be developed or whether an existing model can be modified or applied directly to the phenomenon under investigation.

This decision is certainly important in any organizational context. In hospitals, however, it is usually more difficult than in other types of organization, which are mostly managed centrally on the basis of an overall strategy. In hospitals, a common strategy is missing, the aims of the different clinics can diverge strongly, and there is not just one key decision-maker but, in the worst case, as many as there are clinics. Taking this and the tight resource situation into account, it becomes even more important to involve leaders from both administration and clinical management in the decision and development processes from the very beginning. This reduces later rejection of a prospective measurement solution and avoids wasting resources on misguided developments.

Purpose and scope definition

Once the need has been established, the “focus” and the “purpose” of the model have to be defined. As already mentioned, it is fundamental for success to clarify which stakeholder(s) the model should address. It is therefore necessary to think about the targeted audience and its particularities before designing a model. 15 In the context of hospitals, this could be management personnel, physicians, nursing staff or members of the support staff such as IT personnel, to name just a few. The model may, however, also take a broader perspective and be developed for practitioners from several professional groups. As each of these groups has its own language, finding a shared one, the “Esperanto” of MMs, so to speak, is a major challenge.

We found that health professionals sometimes did not understand questions correctly without further explanation, and that it was hard to find a shared language when crossing professional borders. Nurses, for example, had a different concept of medical service quality than doctors or members of the management. This had a non-negligible influence on, for example, answers to the question of whether the quality of medical services influences the willingness of departments to cooperate. In general, the different professional groups approached maturity-related questions in strongly differing ways and from different perspectives. It is therefore of utmost importance to substantiate questions or measurement items with concrete examples from practice.

We therefore believe that a shared understanding is fundamental for actionable results, both in assessing maturity and in defining maturity itself. The language used in questionnaires or interviews has to be as unequivocal as possible and supported by anecdotal examples from daily practice. The more heterogeneous the audience, the more difficult and time-consuming it is to find a common language over a number of evaluation cycles with practitioners.

Besides the challenge of “language,” developers of MMs also have to define the observation “depth” and “breadth” of their model. To improve understandability and conserve resources, it is helpful to decide whether the model is designed to represent phenomena at the individual (e.g. work routines, educational background), group (e.g. professions, age groups), departmental (e.g. clinics, specialties), organizational (e.g. locations) or even inter-organizational level. Furthermore, to improve feasibility and reduce comprehension difficulties, it should be determined whether the model is to be generic or specific. The decision on these two aspects, of course, depends on the actual purpose of the measurement model on the one hand and influences the “language” of the model on the other. Again, it is therefore crucial to have a clear idea of the overall purpose of the model and to involve the right stakeholders in the development process in order to ensure subsequent acceptance.

Model design

After the need has been elicited and both audience and scope have been set, the model itself has to be designed. One of the first and, at the same time, biggest challenges in this context is to develop a suitable concept of maturity. Three different concepts of maturity exist: the process-focused, the object- or technology-focused, and the people-focused concept. 11 The process-focused concept measures to what extent a specific process is defined, managed, measured, controlled and effective. 2 In other words, it measures how the efficiency or effectiveness of the current process relates to a possible ideal process (target process). 17 Examples include the stepwise implementation and/or refinement of integrated care processes, the adaptation of surgical procedures or paving the way for a new health information system. The object-focused concept assesses to what extent a product, a machine or anything alike reaches a defined level of satisfaction. An example would be evaluating to what extent a newly integrated health information system improves patient enrollment or discharge, both crucial bottlenecks for effective and efficient care pathways. Finally, in the people-focused concept, abilities are at the center of interest, and it is measured to what extent individual skills are suitable to achieve or support certain organizational goals. 18 Staying with the same example, this concept would measure what skill level the medical personnel need to use the new health information system effectively.

The different concepts also show that the aim of improvement is multi-faceted and may vary, for example, between cost, quality, efficiency or other objectives. In addition to specifying the objective, or “optimization function,” developers also have to decide how one or several objectives are influenced by changes along the maturity path. This is very challenging, as the underlying cause-and-effect relationships have to be understood first.

Another question concerns the model base: on what do we base the improvement path? On theoretical knowledge, practical–empirical experience or a combination of both? And how do we present the model base? Is it a text document (e.g. a questionnaire), a software product or, again, a combination of both?

Model evaluation

Another challenging task is to prove that the MM measures the “right things” in the “right manner.” For the evaluation of an MM, this means that both its theoretical validity and reliability and its usefulness and practical relevance need to be evaluated. According to Kuechler and Vaishnavi, 19 the evaluation process involves frequent iterations between development (with health professionals) and evaluation (by health professionals) rather than a linear, procedural approach. It is hence hard to identify a clear start of the evaluation within the development process. Nevertheless, the evaluation should follow some rules to guarantee rigor. Developers should thus consider the “object” (what should be evaluated), the “time” (when should it be evaluated) and the “way” (how should it be evaluated) in order to validate the model in a plausible and comprehensible way. In terms of “what,” developers have to decide whether the model itself, its development path or both should be evaluated. Given the recurring criticism of the validity of MMs, it has been suggested to evaluate both the path and the product, thus opening both to scientific evaluation and discussion. 11 Considering the duality and iterative character of the evaluation process, it is clear that this process can be time-consuming.
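One way to examine the reliability of questionnaire-based assessment items is to check their internal consistency. The sketch below computes Cronbach's alpha, a widely used internal-consistency coefficient, for a set of items answered by several respondents. This article does not state which reliability measures were applied in our projects, so both the choice of coefficient and the data layout (one row per respondent, one column per item) are assumptions.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)),
    using sample variances throughout.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    items = list(zip(*responses))  # transpose: one tuple of scores per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - item_var_sum / total_var)
```

A high alpha only indicates that items move together; it says nothing about whether they measure the “right things,” which still requires the practical evaluation with health professionals described above.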

Continuous improvement

The MM has to be maintained, and further development will be needed, given that some model elements will become obsolete, new constructs will emerge and assumptions about the different levels of maturity will be affirmed or refuted. 20 Therefore, even at an early stage, it is important to reflect on how to handle alterations in model design and deployment.

To ensure a certain degree of sustainability, the model should be developed further over time. One challenge is therefore to decide whether the structure of the model, its function or both should be developed further. It is also important to decide whether the model should be reviewed at regular intervals and, even more important, who is responsible for deciding that the model no longer fits certain requirements. In addition, stipulating which stakeholders, such as health managers, clinicians, nurses or IT specialists, will be involved in the renewal process can help to increase acceptance of the model.

Implementation challenges

Although numerous challenges can be identified during the building phase, several process models and approaches already exist that guide the development process in order to guarantee, or at least facilitate, rigor. For the implementation and use of MMs, however, the situation is different: the knowledge base on how to use and implement MMs in practice is small. We will therefore identify possible challenges and necessary decisions in the implementation of MMs and relate them to the corresponding steps of designing such a model.

Organizational needs

As in the design phase, the first decision to be made during implementation concerns when the use of an MM seems reasonable in an organization. MMs are primarily characterized by their ability to assess the subject under observation (e.g. objects, processes or skills) in terms of its current and a possible target state. An MM thereby includes several maturity levels and describes an anticipated, desired or typical development path. These maturity levels are defined by characteristics and characteristic values. 20 These models therefore have a dynamic character and are well suited for the gradual and evolutionary improvement of situations and conditions.
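A staged reading of levels “defined by characteristics and characteristic values” can be sketched as follows: an organization reaches a level only if it meets the required values of that level and of all lower levels. The three-level `MODEL`, its characteristic names and the 0–4 value scale are hypothetical placeholders chosen for illustration, not taken from H-BIT, HSRM 3 or HCMM.

```python
# Hypothetical staged model: for each level, the minimum value (0-4 scale)
# that each characteristic must reach. Levels are assumed to be numbered 1..n.
MODEL = {
    1: {"processes_documented": 1},
    2: {"processes_documented": 2, "kpis_defined": 1},
    3: {"processes_documented": 3, "kpis_defined": 2, "kpis_reviewed": 2},
}


def current_level(model, observed):
    """Return the highest level whose requirements, and those of all lower levels, are met.

    Characteristics missing from `observed` count as 0. Returns 0 if even
    level 1 is not reached.
    """
    achieved = 0
    for level in sorted(model):
        required = model[level]
        if all(observed.get(name, 0) >= value for name, value in required.items()):
            achieved = level
        else:
            break  # staged logic: a gap at a lower level blocks all higher levels
    return achieved
```

Because the loop stops at the first unmet level, high values on higher-level characteristics cannot compensate for a gap at a lower level, which mirrors the stepwise logic of staged MMs.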

The decision for or against the use of MMs should therefore be based on the character of the situation to be improved. If gradual improvement seems useful in the targeted context, adequate access to evaluating partners (knowledge base) exists and sufficient financial resources are available, the implementation and use of an MM appear promising. For a useful application of an MM, however, it is crucial that the state of maturity can be conceptualized clearly enough to ultimately rate it. The final question is, “Do we really need a model that guides our improvement activities?”

Most of the hospitals performing assessments with one of our MMs were driven by the desire to improve organizational learning. On the one hand, MMs were seen as excellent instruments for critically reflecting on the capabilities of their own organization. On the other hand, they allowed health professionals to compare dedicated aspects with a peer group outside their own hospital. While we had expected economic drivers to have a major influence on the decision whether or not to perform an assessment, the possibility of inside-out and outside-in learning was actually the main reason for adopting MMs in hospitals.

Model selection

As mentioned earlier, hospitals are characterized by a high degree of organizational complexity and specific linguistic features. 21 This means that models, whether newly developed or reused from other domains, need to be strongly tailored to the hospital context. Since adapting an existing model is usually less time- and resource-consuming than developing a new one, it should be the preferred approach in the context at hand. In addition, an already established model is very likely to have been tested several times and thus to meet the criteria of rigor in terms of its development and functionality.

However, in order to decide whether to develop a new model or adapt an existing one, implementers need an overview of the existing models that might be suitable for adaptation, in order to save resources and avoid deficiencies in rigor.2,18 The search for an adaptable model is very time-consuming and only promising if the context and intended use of the MM are sufficiently known. Since there is, to date, no common model base or classification system for MMs, finding the right model for a given task is challenging. 8 A transparent and comprehensible search process therefore underpins the later credibility of the MM that is eventually used.

In addition to a rigorous search process, implementation success also depends heavily on the rationale for using the MM in the first place. It usually makes little sense to use an MM for one-off assessments or doubtful certificates. Rather, in view of the effort and costs associated with implementing MMs, hospitals should consider using these models for medium- or long-term change processes (e.g. integrating continuous improvement processes). This, however, again requires a clear picture of the changes that need to be measured and controlled on the basis of MMs.

Model deployment

Managers are often confronted with workforce resistance. This applies especially to healthcare organizations, as they are often not yet used to performance measurement. In order to gain acceptance and improve standardization of the model, 15 we suggest a two-phase deployment approach.

In a first step, the model should be tested with people who are directly involved in the development and testing process, for example the group of people responsible for collecting the data needed for the assessments. This initial development group therefore has great influence on the appropriateness of the model and on the awareness the model might reach in the organization during development or adaptation. An important decision in this phase is which practitioners should serve as information suppliers in order to build a suitable tool. These collaborators should be actively selected according to their knowledge of the phenomenon that should be measured and improved. A survey on a voluntary basis, by contrast, can cause key informant bias, because the practitioners who volunteer are usually those who already support the introduction of a model, which can distort judgments of the model’s appropriateness. Key informant bias is a well-known phenomenon in political science and describes a systematic measurement error caused by differences between the subjective perception and the actual value of the object or phenomenon under investigation. Key causes of informant bias are differences in information and valuation depending on functional areas, roles and hierarchy levels. 22 These can lead to significant differences in how different interviewees assess the same facts and therefore directly affect the results of a maturity assessment.
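Differences in valuation across roles can be made visible before results are aggregated. The sketch below groups item scores by respondent role and flags items whose role-group means diverge by more than a threshold, echoing the differing notions of service quality among nurses, doctors and managers that we observed. The `(role, item, score)` data format, the default threshold of 1.0 scale points and the function name `role_divergence` are hypothetical choices for illustration.

```python
from collections import defaultdict
from statistics import mean


def role_divergence(ratings, threshold=1.0):
    """Flag items whose mean scores differ across respondent roles.

    `ratings` is an iterable of (role, item, score) tuples. Returns a dict
    mapping each flagged item to the spread (max minus min) of its role means.
    Items answered by only one role are never flagged.
    """
    by_item = defaultdict(lambda: defaultdict(list))
    for role, item, score in ratings:
        by_item[item][role].append(score)

    flagged = {}
    for item, roles in by_item.items():
        role_means = [mean(scores) for scores in roles.values()]
        spread = max(role_means) - min(role_means)
        if len(role_means) > 1 and spread > threshold:
            flagged[item] = spread
    return flagged
```

Flagged items are candidates for rewording or for additional practice examples rather than proof of bias; with small groups, role means can also diverge by chance.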

In a second step, the model should be tested with practitioners who are independent of the development and testing process in order to improve the model further. 15 Besides these initiatives to increase generalizability, another important decision concerns the question of “who should perform the assessment?” Depending on the situation, self-assessments, third-party assisted interviews or interviews by external professionals and/or consultants are possible options. 11 During our field research with MMs in hospitals, we repeatedly noted that self-assessments were prone to errors due to misunderstandings and that interviews by external consultants were refused. Third-party assisted interviews therefore often proved to be the best practice.

Model use

MMs are tools for the evolutionary improvement of objects, skills or conditions. Regular assessments with these models are therefore a critical requirement for guiding changes over time. Unfortunately, most work on MMs addresses neither how to routinize maturity assessments over time nor how to adapt and develop the model over time (in the assessment process). As a result, maturity assessments are often seen as single, unrelated events. However, as MMs guide evolutionary development activities, one of the most important aspects is to address time and change appropriately. Hence, another challenge is how MM assessments and further developments can be anchored in organizational and personal routines, processes and culture. In addition, responsibility has to be assigned to one or more representatives of the organization in order to guarantee periodic and regular use.
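Treating assessments as a series rather than as single events implies keeping a dated history of results. The following sketch, under assumed data structures, computes level changes per dimension between consecutive assessment rounds and the date on which the next round falls due. The history format, the one-year default interval and the function names `level_changes` and `next_assessment_due` are hypothetical; the appropriate assessment cycle depends on the organization.

```python
from datetime import date, timedelta


def level_changes(history):
    """Level deltas per dimension between consecutive assessment rounds.

    `history` is a list of (assessment_date, {dimension: level}) pairs in any
    order. Rounds are sorted chronologically; a dimension contributes a delta
    only for pairs of consecutive rounds in which it was assessed both times.
    """
    rounds = sorted(history, key=lambda entry: entry[0])
    changes = {}
    for (_, prev), (_, curr) in zip(rounds, rounds[1:]):
        for dim in curr.keys() & prev.keys():
            changes.setdefault(dim, []).append(curr[dim] - prev[dim])
    return changes


def next_assessment_due(history, interval=timedelta(days=365)):
    """Date of the next round: the latest assessment date plus the chosen interval."""
    last = max(assessment_date for assessment_date, _ in history)
    return last + interval
```

Keeping such a history centrally, rather than per department, fits the recommendation below to anchor responsibility at top management level.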

In hospitals, responsibility for MM use and development should therefore be located at the top management level in order to ensure sustainable use across professional borders. Furthermore, as the development and use of MMs are resource-intensive, organizations should strive to develop models that can be used for more than one purpose or at least be easily adapted to new domains. This reduces the costs of such models and can therefore help to improve acceptance and foster sustainability. 15

Facilitating long-lasting organizational learning

Organizations only benefit from the findings of maturity assessments if the results are collected centrally and structured improvement initiatives are derived from them periodically. Given the organizational structure of hospitals, it is therefore most promising to collect data at management level. This matters especially when cross-sectional assessments are carried out that could reveal detailed improvement potential. Another reason for locating responsibility at top management level is the high resource intensity of maturity assessments. Since budget responsibility for organizational improvements in hospitals is always located at the top of the organization, resources for assessments, improvements or changes can only be released by management. Furthermore, high staff turnover at department level would hamper the sustainable development of the tool if responsibility were located there. However, depending on how an assessment is performed and how the model is instantiated, close cooperation between the IT department and hospital management is of utmost importance.

Conclusion

Not least because of the increasing need for process orientation in hospitals, the development and use of MMs for path-oriented improvements have become more and more common in recent years. MMs have become an established and major instrument for guiding organizational change and learning initiatives.

The fast-growing number of models cannot obscure the fact that there are only a few studies on the efficacy of the change efforts they initiate. The need for implementation support in general, and for hospitals in particular, is therefore very high.21,23 We found that the extant literature strongly emphasizes the development of MMs and the perspective of their developers. In practice, however, these developers are frequently not, or only indirectly, involved in the actual deployment of an MM in a hospital setting.

Besides the challenges from a design perspective, we also wanted to describe the challenges faced by implementers of MMs, such as hospital managers or health policy makers. In doing so, we have tried to move the discussion beyond design methodologies.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.