Abstract

Drawing on social exchange and anthropomorphism theory, this research examines the role of virtual conversational assistants (VCAs) as frontline employees. Specifically, we investigate the effects of AI-derived features, such as anthropomorphism, in building Human-Machine relationships. Adopting a qualitative interpretivist approach, we conducted 31 semi-structured interviews with global users of Siri, Alexa and Google Assistant. Our findings suggest anthropomorphism is an important factor in understanding the development of trust within Human-Machine interactions. More specifically, a humanised voice, interactive communication capability and cognitive features evoke a sense of social presence that may positively or negatively impact user trust. We propose that the interplay between a user’s perceptions of the bright and dark sides of interacting with an AI-empowered anthropomorphised machine determines categories of trust and subsequent customer engagement behaviours with this embedded form of organisational frontline.

Introduction

Firms such as Patron Tequila, Ocado, PayPal, Johnnie Walker and Uber are increasingly using virtual conversational assistants (VCAs) such as Siri, Alexa and Google Assistant in the role of frontline employees. These devices interact with customers through activities such as receiving and responding to commands and customising service offerings and products based on order history and preferences. These interactions are human-like in manner and enable the VCA to continually learn and adapt. As such, the nature of customer interactions at the organisational frontline is changing through the digitalisation and virtualisation of service encounters (Marinova et al., 2017). However, technological developments such as deep machine learning also raise issues of uncertainty and trustworthiness (Letheren et al., 2020), as individuals may be uncomfortable communicating with machines and doubt their capability to fulfil their service roles and meet expectations (Pitardi & Marriott, 2021).

Additionally, the inability of customers to understand artificial intelligence algorithms creates perceptions of privacy risk and uncertainty, affecting customer trust and willingness to engage with VCAs (Blut et al., 2021; McLean & Osei-Frimpong, 2019). Hence, while the benefits of applying VCAs in service encounters are apparent (Wirtz et al., 2018), there may be unintended consequences for businesses that revolve around issues of trust and engagement. To mitigate these, firms are investing in virtual assistants imbued with human characteristics, leading customers to attribute human features to them (i.e. anthropomorphism). This research focuses on the effects of different anthropomorphic features, including auditory (voice-based interactions and attributes), cognitive intelligence (related to machine learning and capabilities to do sophisticated tasks) and mannerism (conveying human manners through verbal acknowledgement) on engagement and trust. Cognitive and behavioural capabilities of VCAs differentiate them from other technologies. Hence, theoretical frameworks and models such as the Technology Acceptance Model and the Unified Theory of Acceptance and Use of Technology may not suffice to explain human interactions with emerging anthropomorphised technologies, as these models and theories have focused only on the delivery of utilitarian benefits such as perceived ease of use and usefulness (Fan et al., 2017; Hsieh & Lee, 2021; Ramirez-Correa et al., 2019). However, AI that displays anthropomorphic features has revolutionised the way humans perceive and interact with VCAs to the extent that users may form deep connections with machines (Pitardi & Marriott, 2021). These natural language processing characteristics may have socio-emotional implications for the trust and acceptance of such technologies and the nature of customer engagement in forming and developing relationships.

Adopting a generic qualitative approach (Dodgson, 2017), this research draws on anthropomorphism and social exchange theory to explore the nature of human-machine interactions where VCAs are being used as the organisational interface with customers. In doing so, this paper contributes to the anthropomorphism literature through the identification of the role of humanlike behavioural characteristics (i.e. mannerisms) in humanising machines (i.e. anthropomorphism), and to the human-machine relationship literature by identifying the mechanisms through which anthropomorphism influences customer trust and engagement through social presence.

Theoretical background

Organisational frontline and anthropomorphism

Organisational frontline research refers to ‘the study of interactions and interfaces at the point of contact between an organisation and its customers that promote, facilitate or enable value creation and exchange’ (Singh et al., 2017, p. 4). While the daily interactions of most humans continue to be with other humans, communicating with VCAs that act and behave like a human is becoming more prevalent (De Visser et al., 2016). VCAs display cognitive and behavioural capabilities to the extent that a human interacting with such a machine may attribute human-like characteristics to it. Indeed, Chérif and Lemoine (2019) suggest that anthropomorphic features may evoke positive feelings towards machines. From an organisational perspective, understanding how such feelings affect Human-Machine relationships and engagement levels is crucial to maintaining and enhancing positive customer outcomes.

Consumer engagement and anthropomorphised technology

Firms may classify frequent interactions between customers and frontline employees as customer engagement. Such engagement is viewed positively insofar as it empowers customers’ psychological, physical and/or emotional investment in a service/product, brand or organisation (Hollebeek, 2011; Vivek et al., 2012). More recently, Yuksel et al. (2016) interpret engagement as a form of communicative interaction between the focal firm and their customers. Hence, customer engagement may be viewed as consisting of cognitive, emotional, behavioural and social characteristics built on communicative interaction (Heinonen, 2018; Vivek et al., 2012). The cognitive and affective factors of customer engagement encompass the customer’s feelings and experiences while the behavioural and social factors are related to gaining the current and potential customer’s participation in the exchange condition (Vivek et al., 2012).

Letheren et al.’s (2019) study of household engagement with technology identified three key levels: passive, interactive and proactive. At a passive level, engagement is cognitive; at an interactive level, engagement is behavioural; and at a proactive level, engagement is affective. Schweitzer et al.’s (2019) focus on bilateral voice-based interactions suggests that consumers build relationships with anthropomorphised VCAs by assigning the role of servant, partner or master. Other studies identify message contingency (Moriuchi, 2021), perceived user control (Mariani et al., 2023) and perceived competence to communicate bilaterally with users in a thoughtful way as being pertinent to determining the nature of human-VCA interaction (Schuetzler et al., 2020).

However, AI’s increasing ability to encompass high-level thinking, intuition and creative problem-solving (Huang & Rust, 2018) raises questions as to whether focusing on purely bilateral voice-based interactions is adequate when considering the level and nature of human-machine engagement (Pitardi & Marriott, 2021; Schweitzer et al., 2019). How do advanced forms of AI-derived anthropomorphic features, such as cognitive intelligence and the display of human-like behaviours, influence human interactions with these technologies? How do AI-derived anthropomorphic features affect Human-Machine relationship formation and the evocation of associated positive and negative affection?

Bright and dark sides of AI technologies

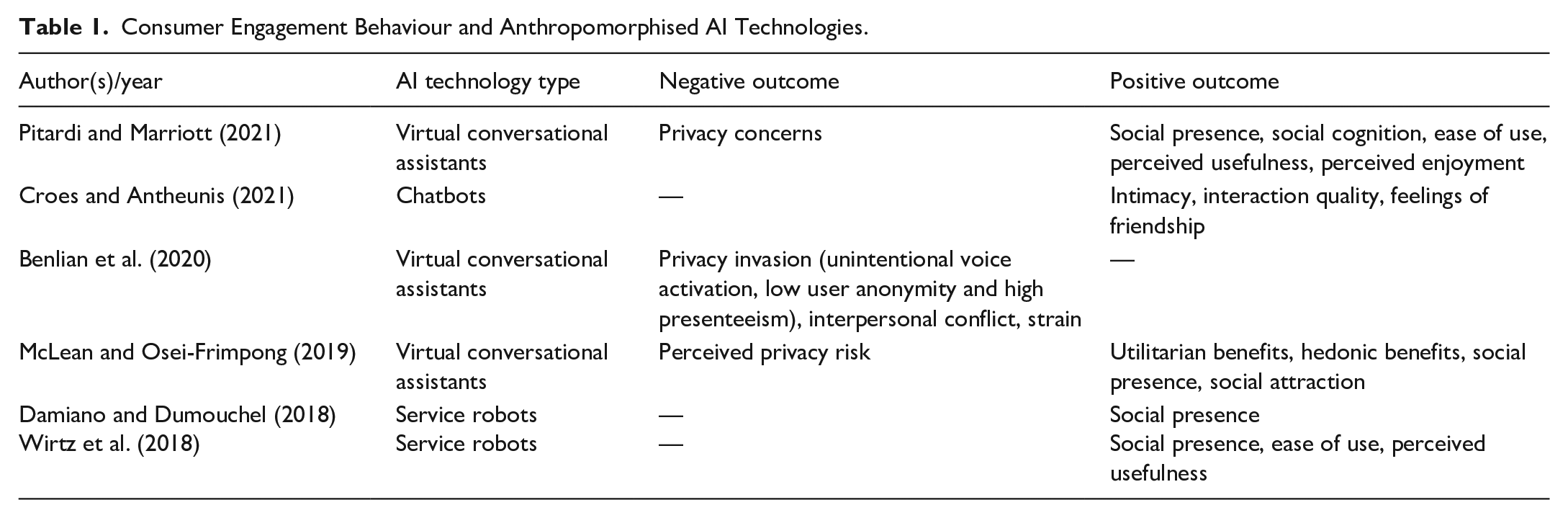

Anthropomorphised AI technologies have frequently evoked both positive and negative outcomes among users, with accompanying notions of both a bright and a dark side to their applications (Liang & Lee, 2017; see Table 1 for a summary of these).

Table 1. Consumer Engagement Behaviour and Anthropomorphised AI Technologies.

As Table 1 illustrates, many ‘bright sides’ to anthropomorphised AI and the positive advantages it can offer humans have already been identified within the literature (e.g. healthcare support; Letheren et al., 2020). However, there are still significant areas of ambiguity within this context that require further investigation (Blut et al., 2021; Mori et al., 2012). For example, AI may evoke feelings of vulnerability among consumers (Jobin et al., 2019) around issues of data security, privacy or general misuse of data (Mani & Chouk, 2019). Related to this, smart home assistants could be perceived as passive listeners with associated consequences for trust (Pitardi & Marriott, 2021).

From a positive perspective, augmenting AI with anthropomorphic features such as auditory capabilities may evoke social presence insofar as a user may sense they are interacting with a human (Kim et al., 2013). In doing so, users may become more inclined to have social interactions with a machine. Even without a human-like appearance, voice-anthropomorphised machines can evoke a high level of social presence within their users (Damiano & Dumouchel, 2018).

A sense of social presence helps mitigate the potential of an interaction with a machine being perceived as impersonal insofar as the more human-like a machine, the more intense the sensation of social presence and the higher the levels of user satisfaction (Chérif & Lemoine, 2019). Additionally, voice recognition can enhance ease of use for users, leading to further engagement. Generally, anthropomorphic features make connecting to technology less inhibitive through the creation of unique interaction methods (Pfeuffer et al., 2019). AI developers incorporate anthropomorphic design in machines to engender a feeling of familiarity. This facilitates a natural and personal connection with a machine, making engagement less inhibited (Pfeuffer et al., 2019). Indeed, ‘intellectualised anthropomorphism’ (i.e. designing an entity with human-like features) may improve technology acceptance and user competence during interaction (Epley et al., 2007; Salles et al., 2020). Accordingly, understanding the impact of anthropomorphic features in motivating individuals to interact with AI-based frontline employees is important. However, previous research regarding the impact of anthropomorphism on customer intentions to use service robots is ambiguous or even contradictory (Blut et al., 2021). Whilst some research proposes that service robots imbued with anthropomorphic features enhance human-robot engagement through social interaction (Novak & Hoffman, 2019), other research suggests perceived anthropomorphism may create a sense of eeriness, annoyance and discomfort in humans (Mende et al., 2019; Schweitzer et al., 2019). Consequently, Van Doorn et al. (2017) call for further research to clarify how anthropomorphism affects customer outcomes such as engagement, satisfaction and loyalty within service contexts. This leads us to our first research question:

RQ1: How do anthropomorphic features of AI-based organisational frontlines affect customer engagement in service encounters?

Trust in the AI-based organisational frontline

Trust is a complex multi-dimensional construct with differing disciplinary interpretations and perspectives (Chan & Yao, 2018; Ekman et al., 2018). Within the digital realm, trust has historically been interpreted as ‘confidence in a trustee to gather, store and utilize the trustor’s digital information in a way that benefits and protects expectations to whom the information pertains’ (Alsheikh et al., 2019). However, the increased use of anthropomorphic AI raises significant ethical issues related to the evocation of trust and perceptions of risk associated with the gathering, storage and use of digital information. The anthropomorphic design features of VCAs (e.g. voice-based interaction) may create privacy dilemmas for users. For example, unintentional voice activation may arouse concerns regarding a VCA provider’s encroachment upon user privacy (Kowalczuk, 2018; Wueest, 2017) and result in distrust whilst having a correspondingly detrimental impact on the Human-Machine relationship (Benlian et al., 2020). In contrast, anthropomorphic design features may heighten perceptions of competence among users, resulting in users believing machines are more capable of delivering a service than their human counterparts (Blut et al., 2021). Extrapolating this further, Human-Machine voice-based interactions with VCAs that mirror Human-Human interactions may result in increased perceptions of proficiency and potentially trust (Chérif & Lemoine, 2019). The inconsistency of these findings highlights the necessity for further research into the effect of anthropomorphic design features on trust. To address this, we propose our second research question:

RQ2: How do anthropomorphic features of AI-based organisational frontlines affect customer trust in service encounters?

Methodology

Research design

Given the experiential nature of the research questions and the lack of empirically based research in this area (Rafaeli et al., 2017), a qualitative approach was deemed appropriate. Initially, an inductive approach was adopted that corresponded with the research objectives of identifying and understanding customers’ behaviour and their experiences of interacting with AI-based frontline employees that display human characteristics. This was followed by an abductive approach to analyse the research data through systematic combining (Dubois & Gadde, 2002). Data were collected through semi-structured interviews in anticipation that this would provide deep contextual insights whilst reflecting individuals’ behaviours and attitudes towards AI-based frontline employees.

Data collection

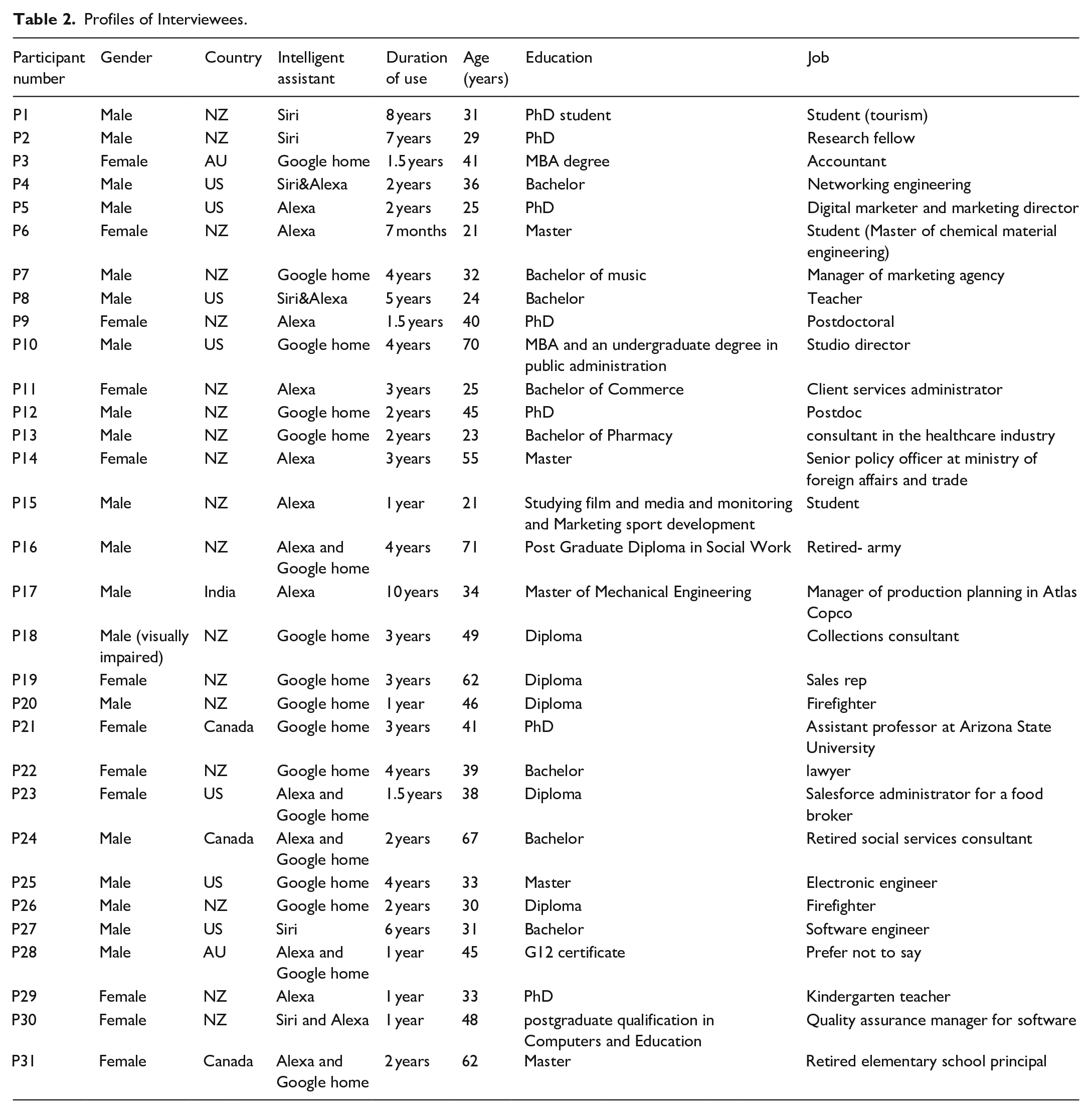

We conducted semi-structured in-depth interviews with 31 global users of Siri, Alexa and Google Assistant that focused on their experiences of using VCAs. Interviews lasted between 35 and 95 minutes and were recorded and transcribed using Zoom teleconferencing software.

Interviews were conducted between March and May 2020. This period was during the COVID-19 pandemic, when many individuals were spending significantly longer periods at home than would normally be expected. Users of Siri, Alexa and Google Assistant were selected because, at the time of data collection, they were considered to be the most advanced VCAs available and were familiar and accessible to the wider global population. Additionally, we argue that the behaviours of Siri, Alexa and Google Assistant users are transferable to service delivery situations in general where organisations deploy conversational agents. This is because they mirror human frontline employee interactions insofar as they ‘answer questions, follow a conversation, and help users to accomplish a task’ (Ki et al., 2020, p. 1).

The research employed a sequential sampling method (Neuman, 2011) whereby participants were chosen according to age (i.e. over 18 years old) and experience (i.e. having a minimum of 6 months’ experience of using a VCA). Interviewee profiles are outlined in Table 2. Participants were recruited through postings on Siri, Alexa and Google Assistant users’ Facebook group pages and subsequently through snowballing. Questions focused on participants’ interactions with their VCA and accompanying service experiences.

Table 2. Profiles of Interviewees.

Data analysis

Data analysis was conducted using Braun and Clarke’s (2006) thematic content analysis approach with the aid of NVivo 12 (Brandão, 2014). More specifically, a process of data familiarisation was initially conducted where transcribed (verbatim transcription) data were read and reread and initial annotated notes were made. Subsequently, primary codes were created prior to these being grouped into relevant themes (e.g. engagement, AI and trust). As part of this process, first-order codes were created inductively without consideration of theoretical perspectives (Miles et al., 2018). Themes were reviewed to ensure relevance with coded extracts and to enhance the details of each theme. As part of this process, the researchers moved back and forth between data and theory to merge initial codes into second-order codes and combine themes. This ensured the researchers stayed ‘close’ to participants’ experiences and meanings (Gioia et al., 2013). Each theme was carefully defined and labelled. Finally, all themes were refined and finalised. Please refer to the coding schema presented in Supplemental Appendix 1 for further details.

Findings

Findings suggest the anthropomorphic features of VCAs may generate utilitarian, hedonic and social benefits for their users.

Utilitarian benefits of interaction

Informants identified rational utilitarian reasons to engage with their VCAs. A consistent theme was how the initial convenience of interacting with their VCA, enabled through its anthropomorphised voice interface, would lead to increased levels of interaction. In this regard, one informant discussed the advantages of their interaction with Alexa being entirely voice activated:

When I talked with Siri, she answered the question and I finish . . . and then I need to again press the button and ask her another question. But Alexa . . . most of the time . . . for answering those questions she will ask me “Does that work for you?” You know . . . that’s very good for me. So I can ask another question. So it’s very convenient (P9).

Informants also commented on the role of VCAs in simplifying interactions with complex technologies, for instance, the application of VCAs to control security systems. Informants highlighted how managing such systems without VCAs could be difficult for individuals who were not particularly tech-savvy. P4 illustrates this with reference to her sister:

My sister is not really technologically inclined. She has a lot of trouble even just texting on a phone . . . I wanted to give her a security system. But because of her not being technologically inclined . . . the Echo Dot system allows her to just use her voice to turn the alarm on and off both through her phone and while in the house and it saves her from having to physically interact with the electronic touchpad to do all those things. So, in my mind, I was able to get her something that made her and my nieces and nephew safer while still keeping within her limitations of interacting with technology (P4).

Informants would frequently refer to their VCA’s functionality, quality of information, accessibility and its perceived skills and knowledge. Informants used VCAs for a range of reasons, from controlling smart home devices (lights, curtains and security systems) to information seeking. From a service encounter perspective, functionality is dependent on the context in which the VCA is used. For example, within a financial context, it may cover payment of bills or management of a home loan. Nearly all informants commented on their VCA’s 24/7 availability and how they were not constrained by any form of opening hours. This was perceived as a significant advantage over human frontline employees. A number of informants also highlighted how their day would begin early by using smart home device services such as requesting the latest news headlines or information about the day’s weather as well as other practical applications.

Now I start my day where it [the VCA] tells me about the news and the weather . . . we use a shopping list function as well and sometimes open up the full-body stretch [for exercise]. They would have got to six minutes and stretching instructions directed at your body that’s quite handy and yeah . . . I also use it a lot more now for when I’m cooking in the kitchen doing the conversions from imperial system to metric (P11).

Users were frequently impressed at what they perceived to be the breadth and depth of the skills and knowledge that their VCA possessed. Such skills and knowledge encompassed facts (e.g. geography and history), storytelling (e.g. Siri can read bedtime stories) or even the ability to tell jokes. The quality of information that VCAs were able to deliver in terms of specificity, accuracy, currency and timeliness was also highlighted by users.

It makes me feel current in terms of information. So, it makes me feel informed. Because she [their VCA] just didn’t tell me other things . . . she told me exactly what I asked her, that’s the difference between listening to the news on the TV, which tells you all kinds of things. So, what you really don’t need to hear or want to hear, she tells you exactly what you’re asking for (P24).

As the number of interactions increased, so too would confidence in VCA responses. This, coupled with the anthropomorphised nature of the VCA’s interaction, would often lead users to adopt a more relaxed interaction style. For example, one informant described how the humanised female voice of their VCA gave the illusion of talking with a ‘friendly lady’. The emergence of other behavioural human aspects (e.g. mannerisms) could lead to higher levels of humanisation and further heighten levels of confidence in the VCA.

An emerging theme was the feeling of empowerment experienced by some informants when, for instance, a VCA enabled them to multi-task. Informants could request information from their VCA while driving, cooking or reading a book. In one case, an elderly and disabled informant explained how their VCA could help fulfil daily tasks and chores without having to depend on family members or helpers thus empowering them to be more independent than would otherwise be the case.

I purchased her [their VCA] because I was going to hospital and because I live in my own house, I wanted to be able to do things without too much movement. Following the purchase Alexa . . . So I use Georgia (Google Assistant) to turn my lights on in different rooms, my electric jug in the dining room, my dehumidifier . . . I use it for the heater in the hall. She is very helpful (P16-cancer patient).

Hedonic benefits of interaction

Informants described a number of direct and indirect means by which the anthropomorphic features of VCAs could evoke hedonism. Indirectly, during visits to friends or family, a VCA could become the focus of the gathering and lead to light-hearted interactions between the VCA and others through asking it amusing or inappropriate questions. Individuals who did not already have a VCA would frequently purchase one after such interactions.

I have a couple of friends who have actually bought one (VCA) after speaking to it in my house (P11).

VCAs may be perceived as possessing a sense of humour by some participants. A sense of humour is a fundamental human attribute that culminates in particular behaviours that anthropomorphised VCAs were capable of displaying. This could lead to what informants labelled ‘fun’ or ‘entertaining’ interactions similar to those they might experience with human frontline employees during a service encounter. However, in contrast to a service encounter with a human, VCAs may be directly asked to tell the user a joke. At another level, one participant described how they were able to safely tease their VCA without the potential conflictual consequences of teasing a human:

One day, just feeling a little goofy, I decided to ask Alexa if she knew Siri and Alexa’s response was “Only by reputation!” . . . And I thought, whoever did the programming certainly was able to build a sense of humour? I find that there is humour built into it and I do enjoy that when you dig into some of the layers of it (P31).

This suggests that establishing a rapport during a service encounter with an AI-enabled frontline employee is possible, with consequences for social and emotional connectivity.

The social benefits of customer interaction

As well as the voice-auditory attributes, the interaction style and mannerisms that VCAs displayed also had a positive impact on the nature of interactions. Users appreciated selecting their preferred gender (i.e. male or female), voice (e.g. that of a celebrity) and even accent (e.g. Irish). This would give the feeling they were interacting with someone ‘familiar’ or ‘friendly’. Moreover, many informants complimented their VCAs on their manners (e.g. answering ‘No problem’ in response to a ‘thanks’). The data also illustrated how these anthropomorphic features enabled users to attribute concrete rather than abstract characteristics to their VCA. This may be ‘a friendly person’ or, in some cases, a friend, family member or even a ‘beautiful lady’. Many informants alluded to the notion of social presence when describing these interactions, which they experienced as akin to Human-Human rather than Human-Machine interactions.

It feels like there is someone there . . . a person who can talk to me and someone I can talk to . . . in my home (P17).

Cognitive intelligence (e.g. learning power and decision-making) is another anthropomorphic feature that results in building a sense of social presence. Cognitive intelligence provides VCAs with deep machine learning capabilities such as learning informants’ behaviours from ongoing interactions and increasingly mirroring these. This, in turn, evoked a sense of connection between the user and their VCA. A number of informants commented how this was likely intensified through there being an abnormally high frequency of interactions with their VCA precipitated by extended periods of lockdown during the COVID-19 pandemic.

I think every person who lives alone should have an Alexa because if you’re lonely, it creates a person interface to speak to. Definitely, I considered it as a friend (P30).

The perceived costs of anthropomorphic features

Interactions with VCAs could induce reticence, apprehension and even anxiety among some users. These concerns primarily focused on issues of privacy risk and uncertainty and could adversely influence the manner in which users interacted with their VCAs. Of particular concern was that VCAs could listen in on personal conversations that users would have with other humans, raising questions over how such data could be stored and used.

I think it’s a product of their [Amazon] constant monitoring of her (Alexa) and of us rather. I think it’s clearly the company [Amazon] that is [doing the] programming. The cleverness speaks to the darker side of the thing . . . which is the notion that we know that all the conversations that we’re having are being potentially listened to . . . possibly recorded (P24).

Phrases such as ‘being overheard’ and ‘spied on’ were commonly used by informants, with some believing their data was being ‘captured’ and sold on to a third party (e.g. government, CIA). How high participants perceived this risk to be, relative to the benefits they perceived when interacting with their VCA, influenced the adoption of one of three potential behaviours. Consumers interacting with their VCA at a high level both behaviourally and psychologically are classified as highly engaged (Brodie et al., 2011). Consumers whose interactions were at a high level behaviourally but not psychologically are classified as shallowly engaged (Chen et al., 2019; Pansari & Kumar, 2017). Finally, consumers interacting at a low level both behaviourally and psychologically are classified as disengaged/non-engaged (Brodie et al., 2011; Kumar & Pansari, 2016).

Highly engaged, shallowly engaged and disengaged users

Informants who decided to continue using their VCA had generally resigned themselves to the fact that using such technology equated to losing their privacy and interpreted this as an acceptable trade-off in order to receive the utilitarian, hedonic and social benefits proffered by the VCA. Some justified this by explaining that they ‘did not do anything special’ or participate in activities that they needed to be worried about if disclosed to third parties.

A number of informants also commented that they believed recorded data was in binary format (zeros and ones) and was only used by firms to improve VCA functionality or for marketing purposes.

I accept the fact that there’s so much data that Google can’t do anything with that really doesn’t impact me. They’re collecting so much data, what are they are going to do with it [all]? I mean, it’s just numbers for them and so it’s like an accumulation of stuff that they’re looking for . . . I don’t care if it starts showing me tracking boots! (P10).

Informants who decided to restrict their usage elaborated on how the notion of being continually listened to and monitored made them feel uncomfortable. This would result in a conscious decision to decrease their interactions with their VCA, and they would only use it for specific purposes (i.e. value-focused consumers). In such instances, VCAs were usually disabled and only enabled when they required a service (e.g. asking for information or controlling smart home devices).

So I’m not saying that Siri, as a person, or as a spy is listening but it means that she is listening and she’s gathering information . . . they can use it [the information gathered] for whatever reason that we are not aware of and it’s very scary. So, for me, I have limited my interactions with Siri a lot. For instance, when I know that Siri is around, I am a bit more cautious about what to say (P1).

Some informants were distrustful of specific functionalities of their VCA. For example, one informant was reluctant to use their VCA for video calls due to the possibility of such calls being monitored.

We’ll say “Hey, let’s call our friend Bill” and we call him a couple of times, using Alexa. And then I wasn’t really sure that I really love the idea of having our phone calls being monitored basically. So, we stopped doing that and just called differently (P31).

Most informants were particularly reticent about disclosing financial information to their VCAs and would attempt to provide only the minimum amount of information required for a particular task. As P13 explains:

The Google Home has access to my [bank] balance and can purchase stuff for you. That area of things, the financial management and wealth management that the virtual assistants can provide . . . that is an area that I’m still not 100% comfortable with. It’s still for me one of those . . . I need to do it myself even though I can do it through voice. If I’m making a purchase, especially something that costs a lot of money or it’s linked up to my credit card number, that sort of privacy is still very important to me but the general kind of setting in the background privacy doesn’t bother me (P13).

However, when the vulnerability of interacting with the VCA was perceived as very high, relative to the potential benefits, informants ceased to interact with it:

I started getting uncomfortable at the idea of having something that’s listening to me all the time in the living room. Even though I know that my phone’s listening to me anyway. It was just like that extra thing that I didn’t need that was listening to me. So, I ended up stopping using it. When you sign over these permissions you don’t know who’s getting it, you don’t know who it’s being sold to and I would like to believe that it’s all just to improve the informant experience for us, but I don’t believe that’s true (P21).

The socio-emotional outcomes of using AI-enabled anthropomorphic VCAs

There was evidence to suggest that the cognitive intelligence and deep machine learning capabilities of VCAs could lead to a sense of connectivity between the user and their VCA. However, an unanticipated outcome for some informants was the strength of that connectivity. Terms such as ‘companion’, ‘friend’ and ‘family member’ were frequently used to describe the nature of their interaction with their VCA. This connectivity evolved over time, largely driven by the development of trust enabled through the anthropomorphic features of the VCA. Initially, the nature of this trust primarily focused on the reliability of their VCA to fulfil utilitarian tasks in a competent and accurate way.

If you want to know the capital of somewhere or how many eggs do I use in like custard or whatever . . . generally, what I’m looking for I can find pretty quick (P19).

Humans are more fallible when it comes to anything like if I ordered string cheese of a certain brand. Alexa is going to order that exact one that I put the first time because of the code. It’s going to line up. Now, if I’m on a phone with an Instacart or in any type of delivery service and I say, I want this kind of string cheese and I want it from this brand. Well, this brand has multiple types of the same string cheese and so then, Did you want this quantity? Did you want this type of cheese? Did you want this, this, and this? Whereas, because I already put it in Alexa, Alexa is going to order the exact same thing. But when you’re trying to talk to a human, human has a margin of error (P5).

Some informants even considered their VCAs to be a more credible source of information than humans. Participants explained that this was because their VCA was linked to Google, which was perceived as a reliable source. Participants also highlighted that, depending on the nature of their question, their VCA would link its answer to a specific source such as a book or newspaper.

Usually, when you ask a question like that, it will always refer to a credible source before saying this book bla bla bla . . . says this. So, I think it also has this inbuilt, kind of thing to make sure that you can believe it . . . you can depend on that information that it’s giving (P12).

As interactions with their VCA increased, the continual updating of learning algorithms (and associated improvement of levels of cognitive intelligence) allowed their VCAs to adapt and customise their interaction style to resonate more with the user (e.g. understanding user accents etc.). This, in turn, would increase trust in the ability of their VCA to conduct tasks.

I’ll say that there was a while where we probably weren’t using it for a whole lot more than to just play music but yeah, I definitely would use it a lot more now . . . I feel like it’s gotten a lot better as well . . . I remember probably a year or two years ago I asked it questions . . . and I remember it not being able to tell me, and that kind of annoyed me and then I sort of didn’t ask any questions for a while and then a year or so ago, I asked it again and it knew. Yes, definitely, improving all the time, so we’re using it more and more (P11).

In the development of human-to-human relationships, credibility is established over a period of time. However, VCAs were able to shortcut this process by providing accurate information from what are perceived to be dependable sources.

I guess we rely on the Google Home assistant to be accurate because it is a machine. So, my perception of it was that whatever you ask, she can return a search result for it is more grounded by facts and more grounded by a rigorous approach (P13).

Up to this point, the development of the H-VCA relationship has largely been based on calculative trust, focusing on the utilitarian benefits derived from interacting with their VCA. For the purposes of this research, we consider calculative trust to be based upon economic value and rational decisions regarding the associated future benefits and costs (Poppo et al., 2016; Susarla et al., 2020). Whilst these benefits were largely anticipated by users, what was not anticipated was the extent to which VCAs would become embedded in their daily routines and lifestyle. VCAs were accepted ‘into their homes’ and viewed as ‘a member of the family’. As a ‘benevolent friend’ or ‘family member’, the VCA was perceived as caring and as acting only in their best interests. As such, it was assumed that the VCA would not provide misleading or inaccurate information.

I’ve tended to trust implicitly, the quality and authenticity of the information. . . maybe beyond what I should (P24).

When the relationship with their VCA was perceived as that of one with a ‘trusted friend’, a ‘blanket trust’ would develop. Blanket or complete trust is identified as high-level trust where a person does not anticipate any negative consequences from the other party (Habibov et al., 2019; Tuang & Stringer, 2008; Welch, 2006). As a result, informants would take at ‘face value (i.e. nominal value (0&1))’ what the service provider stated in their privacy policy. Whilst fully aware of the risks and accompanying uncertainty, respondents accepted this vulnerability as a consequence of their relationship with their VCA. At this point, there was little or no concern about disclosing information about their personal lives to their VCA.

It doesn’t bother me and the fact that there are two iPhones in my house that anytime could be doing the same thing for all we know. I know. . . if it is recording, I like to think it is not. I believe the privacy statements that say it doesn’t. I have no reason not to believe them (P7).

Users were able to rationalise this in terms of a reciprocal relationship. As their sense of connection and engagement with their VCA increased, enhanced through learning algorithms and the resonating interaction styles adopted by the VCA, so did the benefits derived from these interactions. As P8 states: ‘the fear factor is very minimal compared to the amount of joy I get out of it’. It was accepted that the quid pro quo for these benefits was the requirement to forfeit, to varying degrees, their perceived privacy. However, some informants were surprised at the extent to which they were prepared to do this.

Compared to the first day when I set my Alexa up, it needed so much information and I thought no! . . . it is not very secure. I don’t want to give her or give the app my information, bank account, and this kind of thing. And I didn’t! At first, I thought okay, maybe it’s like a spy. It is designed to spy on people’s life. Still, I’m thinking the same, but I don’t care. I said, okay, it’s a beautiful technology. I don’t care and I’m not an important person. So, who wants to spy on my life. I don’t have any kind of secret so that’s all right, but it is more fun for me. So why not and I think Alexa opened a window to new technology. I love it and I use it (P9).

In this case, AI-derived anthropomorphic features enhance the user’s experience by increasing perceived value relative to perceived costs, potentially contributing to both calculative and blanket trust.

Finally, some informants recognised their own lack of expertise and technical knowledge regarding the operations of the VCA. They highlighted how, from their perspective, the firms operating VCAs do not present clear and concise privacy policies. However, this was mitigated by the nature of their interaction with their VCA and the development of a ‘blind trust’ in it. Blind trust is defined as ‘an emotional state (different than mere cognitive myopia) by which a market agent willingly or unconsciously accepts to make themself completely vulnerable to the intentions or actions of another market agent, without any of the possible consequences, positive or negative, of this action by reducing the level of perceived prediction to or near zero’ (Mesly, 2015, p. 23). Hence, whilst the trustor is aware of the presence of risk, they accept it completely without paying attention to its consequences. When probed about this, some informants were themselves surprised by the level of trust they were prepared to place in their VCA for the sake of the ongoing development of the relationship and its associated benefits.

Discussion and conclusion

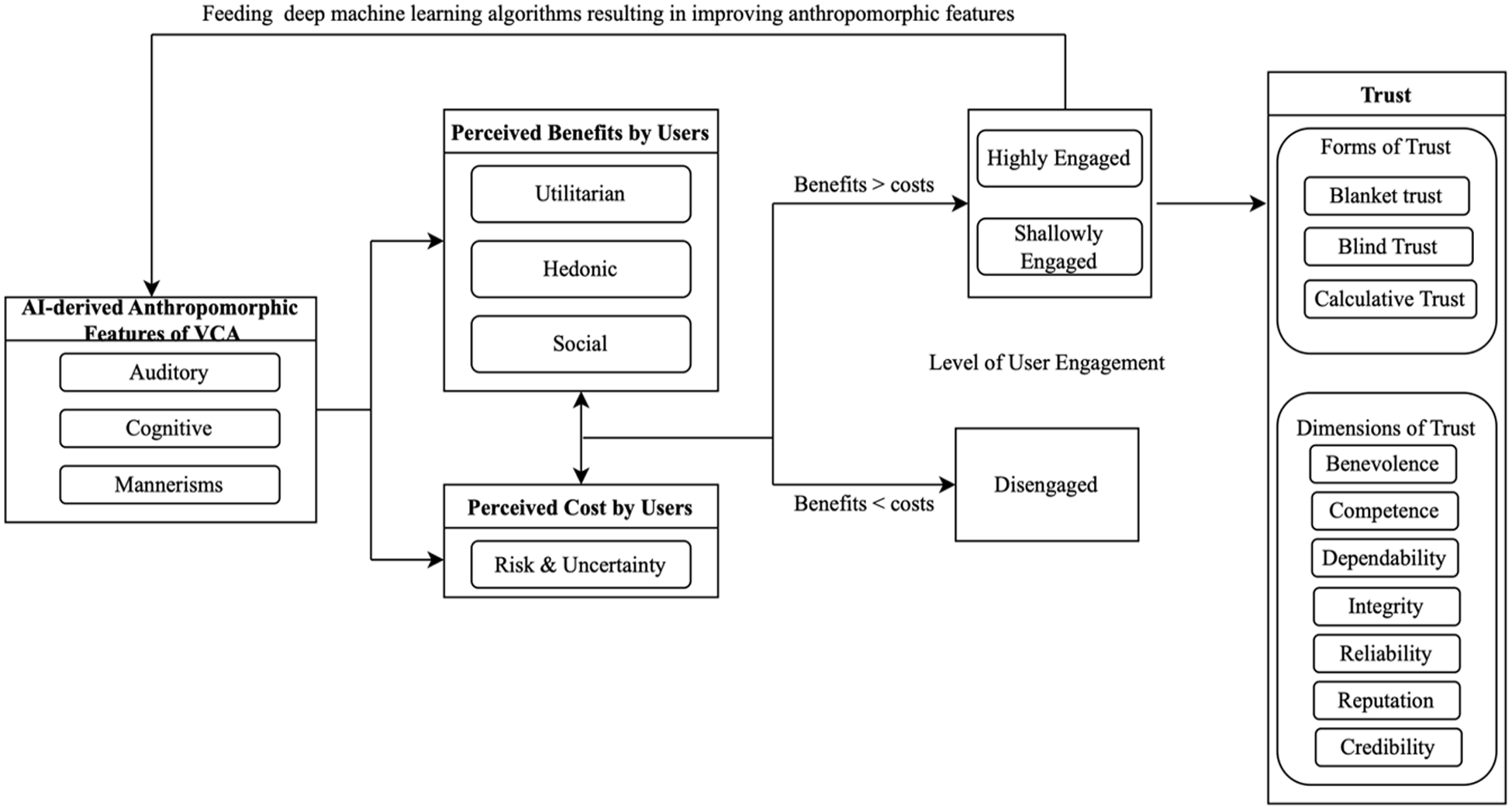

This research examined the role of anthropomorphic features in consumers’ engagement with and trust in VCAs, with reference to the interplay of AI-derived benefits and costs. The intensity of this interplay appears to be influenced by perceived levels of reciprocity (i.e. positive, negative and generalised) and the levels of privacy risk and uncertainty necessary to optimise potential benefits (see Figure 1). For some users, privacy risks and uncertainty are acceptable in exchange for perceived benefits. These users are prepared to surrender control of their privacy in order to maximise their expected benefits almost from the start of the relationship. Perceptions of competence and reliability in the fulfilment of activities instilled a sense of confidence in users when interacting with their VCAs, demonstrating a high degree of trust. Reflective of Harwood and Garry’s (2017) findings, trust is an indicator of confidence when the service system is technically complex and there is imperfect knowledge and understanding about the service system. Our findings also suggest that anthropomorphising VCAs evokes higher levels of confidence and propensity to trust insofar as VCAs perceived to be human-like are deemed more competent than a mechanical machine (Blut et al., 2021).

Figure 1. Conceptual model for engagement and trust development between humans and anthropomorphised VCAs.

The cognitive capabilities of VCAs appear to have moved users from a calculative-based trust approach towards a position of blanket trust as improvements in service personalisation resulted in the users’ perception of higher benefits relative to costs. Blois (2003) argues that blanket trust is unidimensional in nature and is therefore seldom possible because of the multidimensional nature of trust as a construct. However, based on their increasing experience of interacting with their VCA, users were prepared to incrementally disclose more information in return for increased benefits. Based on this acceptance of increasing levels of vulnerability, users calculated the costs of maintaining and increasing the benefits derived from their relationship. However, as the relationship developed, this calculative form of trust would increasingly diminish to be replaced by blanket trust where users were less concerned about the risks of disclosing information concerning an increasingly wide and varied range of interactions and activities.

That said, users who felt the VCA was listening to their conversations limited or even ceased interactions, as they perceived that the costs to their privacy and the uncertainty associated with disclosing personal information surpassed the expected benefits. Such behaviours support Liu et al.’s (2017) research, which outlines how, in online service encounters, distrust enhances a customer’s negative emotions and fear towards a service provider and decreases their intention to use that service provider.

Chan and Yao (2018) identify how distrust within human-human relationships may be mitigated by a sense of social attachment. However, a number of participants in this study remained distrustful of their VCAs and never developed social attachment with them. As a result, they stopped interactions, effectively terminating the relationship. This is somewhat surprising as these users had no qualms about continuing to use personal computers despite many of the attributes and functions of PCs (i.e. algorithm based) being identical. The key differentiator between the two products appears to be the VCA’s anthropomorphic features that can evoke discomfort and a sensation of the presence of a social entity that is listening. This confirms Novak and Hoffman’s (2019) research insofar as social presence may increase engagement but simultaneously increase the risk of discomfort.

Hence, the effects of a humanised voice, interactive communication capability and cognitive features evoke a sense of social presence that may positively or negatively impact trust. In our data, higher levels of auditory and cognitive anthropomorphism, coupled with increased levels of interaction, generally elevated perceived levels of VCA competence whilst mitigating uncertainty and privacy risks. VCAs’ capability to learn and develop interaction styles that resonate with the individual user may result in increased familiarity and accompanying trust that potentially mitigates any privacy concerns. This is consistent with Letheren et al.’s (2019) study on human-VCA trust formation and the accompanying lowering of perceived user risk relative to the benefits.

An emerging finding identified in this research was how anthropomorphic features may trigger users’ emotional attachment to VCAs, which could lead to a perception that VCAs are benevolent in a similar way to that of a friend or family member. This challenges Schweitzer et al.’s (2019) findings that suggest a lack of emotional attachment in human-object relationships. They attribute this to a reluctance among users to build emotional relationships with objects that may compromise their sense of control over the object. When users feel they are in the role of ‘master’, utilitarian benefits will foster the development of trust. In contrast to this, our research findings suggest that a VCA’s perceived social presence may contribute to the formation of socio-emotional relationships. A VCA’s perceived benevolence based on social benefits (e.g. social presence and social interaction) is deeper than a VCA’s perceived benevolence based on purely utilitarian benefits.

This research comprises two key contributions. First, it contributes to the anthropomorphism literature by demonstrating how anthropomorphic features may prompt users to attribute human mannerisms to intelligent assistants. Previous literature in this field has tended to focus on the attribution of behavioural aspects, emotional states and mental states (Awad et al., 2018; Minsky, 2007). Secondly, it contributes to the human-machine relationship literature by identifying how social presence evoked by intuitive/thinking AI anthropomorphic features can have both positive and negative consequences on trust and engagement but also has the potential to lead to socio-emotional relationships.

Consequently, incorporating VCAs at the organisational frontline may have both advantages and disadvantages from an organisational perspective. VCAs can help managers develop their organisational frontlines remotely during periods of crisis (e.g. COVID-19), or at times when face-to-face interactions are limited (e.g. outside of business hours). Moreover, the intangible nature of services often causes customers to rely on their interactions with frontline service employees as proxies to judge the quality of a service (Hennig-Thurau, 2004). This research demonstrates the ability of the anthropomorphic features of VCAs to develop user trust at the organisational frontline. This has implications for the way such trust may be leveraged from a commercial perspective (e.g. in the recommendation of products or services).

Finally, organisations that incorporate VCAs have two options: (1) custom-built intelligent assistants and (2) outsourced intelligent assistants. Custom-built intelligent assistants require significant investment over time to capture and process data so as to learn customer behaviours. This can result in customer dissatisfaction during the early stages of learning. The second option, renting an interface from companies such as Microsoft or Amazon, carries the inherent risk of a third party using the organisation’s information. It also increases privacy risks and uncertainty for customers (e.g. being heard and recorded by a third party). However, organisations can mitigate these risks by clarifying in their privacy policy why and where they record and save customers’ information.

Limitations and future research directions

The generalisability of this research is limited by the qualitative nature of its data insofar as findings cannot be used for statistical inference to a population. Additionally, the rapid rate of AI development is such that innovative features are continually being added to VCAs, limiting the potential for replication of this study. However, this does create significant opportunities for future research, as ongoing developments will continually influence customer perceptions of their interaction with VCAs, making research in this arena a work in progress.

Our research illustrates the formation of three levels of trust (i.e. blanket trust, blind trust and calculative trust). However, the precise factors that influence the formation of each trust level still require investigation. Finally, whilst this research identified how high levels of perceived privacy risk and uncertainty could result in user disengagement, further research should investigate other factors that may result in customer disengagement with AI-based organisational frontlines.

Supplemental Material

Supplemental material, sj-docx-1-anz-10.1177_14413582231181140 for The Effects of Anthropomorphised Virtual Conversational Assistants on Consumer Engagement and Trust During Service Encounters by Arezoo Fakhimi, Tony Garry and Sergio Biggemann in Australasian Marketing Journal

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
