Abstract

This paper offers a counter-map of automation in social services decision-making in Australia. It aims to amplify alternative discourses that are often obscured by power inequalities and disadvantage. Redden (2018a, 2018b) has used counter-mapping to frame an analysis of big data in government in Canada, contrasting with ‘dominant outward facing government discourses about big data applications’ to focus on how data practices are both socially shaped and shaping. This paper reports on a counter-mapping project undertaken in Australia using a mixed methods approach incorporating document analysis, interviews and web scraping to amplify divergent discourses about automated decision-making. It demonstrates that when the focus of analysis moves beyond dominant discourses of neoliberal efficiency, cost cutting, accuracy and industriousness, alternative discourses of service users’ experiences of automated decision-making as oppressive, harmful, punitive and inhuman(e) can be located.

Introduction

Transparency and accountability are fundamental for the legitimacy of political and administrative systems (Bovens, 2009; Shkabatur, 2012). However, the prolific use of automated technologies in government social services provision renders opaque many processes that previously relied on paper trails and clear assignment of responsibility (Henman, 2022; Sleep & Tranter, 2017). There have been calls to map the use of automated technologies in government, to ensure those responsible for decisions are accountable. The NSW Ombudsman (2021) called for an audit of automated decision-making (ADM) technologies used in NSW state and local governments. Similarly, the Australian Research Council (ARC) Automated Decision-Making and Society Centre of Excellence (ADM + S CoE) has spent its first few years gathering a baseline dataset of ADM systems in Australia and globally in various mapping projects, including one that I conducted: Mapping ADM in Australian Social Services (Sleep et al., 2022). In that project ADM systems were shown to be widespread in Australian social services delivery, including in social security and employment services, as well as child and family, disability, homelessness, and domestic and family violence sectors. The focus was, however, on ‘official’ accounts of the technologies, taking publicly available documents at face value without significant critique of their underlying aims or motivations.

Leaving the articulation of automated technologies used in social services delivery to government and implementing agencies means their aims and experiences dominate discussions about the use of the new technologies in service delivery. However, the experiences and perspectives of service users, who are rarely included in decisions to employ these technologies, may be very different. Service users are seldom allowed to opt out of using the technology, and they often, in exchange for receiving services, need to accept administrative procedures and surveillance that they would prefer to avoid (Sleep, 2022). In response to this dynamic, this paper asks: how do service users experience automation in social services delivery?

To answer this question, an alternative type of mapping is needed, one that consciously charts beyond the usual ‘official’ discourse. To achieve this, the paper borrows from critical geography's counter-mapping tradition to develop a deliberately alternative picture of the use of automation in social services delivery that honours the perspectives of service users. The aim of counter-mapping is not just to provide an alternative viewpoint to official narratives, but to provide one that challenges dominant discourses and seeks to upset them. Here I draw from Kidd's (2019, p. 955) knowledge-centric approach to counter-mapping as ‘a techne to create knowledge about the world, denounce dominant representations, shed light on discrimination and injustice, and establish alternative and social categories’.

First, the paper will explain why, when mapping ADM in Australian social services, an approach that accommodates differentials of power, such as counter-mapping, is needed. Second, counter-mapping is outlined within its origin in critical cartography and its potential for critical data studies is highlighted. Third, the counter-mapping method adopted in this paper is outlined. Finally, a counter-map of ADM in Australian social services is presented to reveal that service users experience ADM in social services as punitive, extractive, excluding and (in)human(e).

However, before a critical counter-map of ADM in Australian social services delivery can commence, some core definitions need to be clarified. This paper adopts the definition of ADM developed by AlgorithmWatch (Chiusi et al., 2020; Spielkamp, 2019) for its European Union (EU)-based mapping projects. ADM is a deliberately broad term designed to include all technological innovations involving automated technologies, including artificial intelligence (AI), machine learning (ML) and generative technologies like ChatGPT. This broad definition is preferred because it is flexible enough to include new technologies in the fast-moving sphere of social services delivery. According to Chiusi et al. (2020), automated technologies are: design procedures [that] gather data … [and then] design algorithms to

analyse the data,

interpret the results of this analysis based on a human-defined interpretation model,

act automatically based on the interpretation as determined in a human-defined decision-making model.

This paper broadens this definition further following Spielkamp (2019), focusing on various automated systems in a social political decision-making context: a socio-technological framework that encompasses a decision-making model, an algorithm that translates this model into computable code, the data this code uses as an input—either to ‘learn’ from it or to analyse it by applying the model—and the entire political and economic environment surrounding its use. (Spielkamp, 2019, p. 9)

This definition reflects the embeddedness of ADM in political, economic, social and cultural systems. The broader term ADM, rather than specific terms like AI or ML, is essential in the context of Australian social services delivery to account for the realities of how these technologies are used, as in the case of Robodebt. Robodebt (the Online Compliance Intervention) aimed to automate and streamline the identification and notification of social security debts by using a simple income averaging algorithm, and sending automated letters to service users whose reported income did not match the algorithm's calculation. However, the algorithm was flawed and did not account for variations like inconsistent working hours over time (Whiteford, 2021). Hundreds of thousands of false debt recovery notices were sent to economically and socially vulnerable social security service users. Robodebt did not use AI but used a basic calculation that averaged income. It was a simple technology, but its effect on Australian policy and the political landscape has been substantial, as has its effect on service users, for whom it led to significant distress, including deaths attributed to the scheme, homelessness and entrenched poverty (Commonwealth of Australia, 2023). Thus, it is not so much the level of technological sophistication of a digital tool but rather the way it is used that determines its effects.
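To illustrate why income averaging can misfire for people with inconsistent working hours, a minimal sketch follows. It is an illustration only: the income test is hypothetical and heavily simplified (the payment amount, free area and taper rate are invented and are not the actual social security rules), and the function names are assumptions rather than anything drawn from the scheme's implementation.

```python
from statistics import mean

# Hypothetical, simplified income test (NOT the real social security rules):
# the payment falls by 50 cents for every dollar earned above a free area.
FULL_PAYMENT = 500.0
FREE_AREA = 100.0
TAPER = 0.5


def entitlement(fortnightly_income):
    """Payment for one fortnight under the hypothetical income test."""
    reduction = max(0.0, fortnightly_income - FREE_AREA) * TAPER
    return max(0.0, FULL_PAYMENT - reduction)


def apparent_debt(actual_income, on_payment, use_averaging):
    """Sum of (amount paid - recalculated entitlement) over fortnights on payment."""
    averaged = mean(actual_income)  # annual income spread evenly over all fortnights
    debt = 0.0
    for income, claiming in zip(actual_income, on_payment):
        if not claiming:
            continue
        paid = entitlement(income)  # paid correctly on income actually earned
        assumed = averaged if use_averaging else income
        debt += max(0.0, paid - entitlement(assumed))
    return debt


# 26 fortnights: out of work and on payment for 13, then full-time work off payment.
actual_income = [0.0] * 13 + [2000.0] * 13
on_payment = [True] * 13 + [False] * 13

print(apparent_debt(actual_income, on_payment, use_averaging=False))  # 0.0
print(apparent_debt(actual_income, on_payment, use_averaging=True))   # 5850.0
```

In this invented scenario the person was paid correctly in every fortnight, yet spreading their annual income evenly across the year makes it appear that they earned 1000 per fortnight while on payment, which generates a debt that does not exist.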

The social services sector is defined broadly to include organisations that aim to improve the well-being of individuals, families and communities (Carson & Kerr, 2017, p. 1). In Australia, these services are delivered through a collection of Commonwealth, state and territory departments and agencies, as well as community and private organisations (Department of Social Services, 2021). Complex contractual relationships between government, community services and private providers permeate the sector in what Powell (2019) called a mixed economy of welfare, Ramia (2002) criticised as ‘new contractualism’ in welfare, and Goodwin and Phillips (2015) identified as a marketisation of human services.

Mapping ADM in social services

By reviewing existing studies, this section explains why an approach that accommodates differentials of power, such as counter-mapping, is needed when mapping ADM in Australian social services. Mapping ADM systems in social services is challenged by the opacity of government decision-making and the secrecy that often shrouds the commercial-in-confidence nature of the implementation of digital technologies in administrative decision-making. Pasquale (2015) uses the metaphor of a ‘black box’ to highlight the mystery that surrounds these technological processes, as well as the magnitude and complexity of the data being collected and hidden.

Stats New Zealand (2018) and Bosco et al. (2014) both dealt with the amorphous nature of AI-augmented decision-making through detailed questionnaires comprising open-ended questions administered to key bureaucrats. Stats New Zealand (2018, p. 10) incorporated 17 agencies in their study, including the Ministry of Police and the Ministry of Health, and found AI and ADMs used in services that worked with children and young people, economy and employment, safety and security, and health. They (Stats NZ, 2018, pp. 8–9) focused on assessing uses according to principles for ‘the safe and effective use of data and analytics’ where the tools are ‘the engine of better government service delivery’ with, for example, improved ‘efficiencies’. Similarly, Bosco et al. (2014) sent a detailed questionnaire to each of the Data Protection Authorities in 28 EU countries about the use of facial recognition technology in their jurisdiction. Bosco et al. (2014) focused on transparency and understandability, particularly in relation to privacy and the right to informational self-determination. Finally, the author (Sleep et al., 2022) mapped ADMs used in Australian social services delivery using document analysis of publicly available materials in a case study research design. Sleep et al. (2022) focused on ‘official’ discourses through accounts provided by agencies, as well as public debates about the ADM-assisted services. While widespread use of ADM was identified across the sector, with 28 ADM case studies described, these systems were interpreted at face value, without a critical or counter account.

However, there are important nuances that need to be considered when working in social services that were only touched on by Sleep et al. (2022). These require more explicit focus if the lived realities of the impact of ADM on social services users are to be understood. Eubanks (2018, p. 10), in her book Automating Inequality, investigated ‘the impacts of high-tech sorting and monitoring systems on poor and working-class people in America’. She demonstrated that while the use of automation has increased in the provision of social services in the United States, the punitive and oppressive practices by decision makers continue in a form of ‘electronic poor house’ where citizens are red-flagged and sorted according to race and socio-economic status. Similarly, Carney (2019, 2020, 2023) argued that in Australia, social security recipients have been historically treated as economic scapegoats and punished for needing to access services. He pointed out that the administrative carelessness revealed through the Robodebt fiasco, the settled class action and, most recently, the Royal Commission into the Robodebt Scheme reflected power differentials between decision makers and service users. Both these accounts highlight that automated technologies develop and work within pre-existing policy frameworks, embodying massively unequal power relationships. In mapping ADM systems in the social services in Australia, there needs to be awareness that social services typically relate to people in situations of disadvantage and consciousness of the concomitant power differentials. This power-aware approach contrasts with the more neutral or upbeat accounts given by government of automation in the social services. This paper is my response to this initial mapping (Sleep et al., 2022), endeavouring to animate and articulate alternative discourses and experiences of ADM in social services delivery.

Counter-mapping: From critical cartography to critical data studies

The act of mapping is to render a preferred representation of the world, and through this process constitute future relationships between people, culture, space and resources (Halder & Michel, 2019). Counter-mapping originated in critical cartography and harnesses the political nature of mapping into an act of resistance. It refers to efforts to map back (Halder & Michel, 2019), to map ‘against dominant power structures, to further seemingly progressive goals’ (Hodgson & Schroeder, 2002, p. 79). According to Harris and Hazen (2005, p. 115), counter-mapping is ‘any effort that fundamentally questions the assumptions or biases of cartographic conventions, that challenges predominant power effects of mapping, or that engages in mapping in ways that upset power relations’.

The term counter-mapping was introduced by Peluso (1995) in her article ‘Whose woods are these? Counter-mapping forest territories in Kalimantan, Indonesia’. Peluso explicated two different ways of mapping the forest. The first was imbued with historical colonial aims and used the language of forestry management. The second was an alternative method which was used by local landowners in response to two decades of intrusive industrial exploitation of custodial forests. This method appropriates ‘the state's techniques and manner of representation to bolster the legitimacy of “customary” claims to resources in an “alternative” or “counter” mapping movement’ (1995, p. 384), demonstrating how counter-mapping is an act of resistance for local landowners.

Successive examples of counter-mapping, using participatory or grass-roots approaches to mapping, include studies in Australia (Thomas & Ross, 2018), Canada (Barbosa & Burns, 2021), the United Kingdom (Firth, 2014) and the United States (Campos-Delgado, 2018). Many counter-mapping exercises use participatory methods, aiming at ‘mapping with’ (Wilmott, 2019, p. 43) rather than ‘mapping on or over’. The maps become a vehicle for animating alternative voices and alternative claims over space, culture and history. The form that a counter-map takes varies depending on who does the mapping. Many counter-maps are represented visually as a chart; others are presented as art or paintings. Still others are presented as story (written narrative or recorded spoken words), or expressed as music in the form of song.

The critical vein that animates the urgency of critical cartography in counter-mapping (Halder & Michel, 2019) also runs through critical data studies. For example, in her Atlas of AI, Crawford (2021, p. 113) elaborates the statement that ‘data is the new oil’, and explores the social, economic and environmental impact of the expanding use of AI technologies. She designed a detailed visual atlas, or map, displaying the impact of AI technologies, running counter to conventional discourses of regulation and inevitability. Echoing the resistance at the core of counter-mapping in critical cartography, Crawford explicates her practice as an act of resistance to the extractive nature of AI and the data, resources and people that feed it.

As Iliadis and Russo (2016, p. 1) explain, ‘data are a form of power’ (see also Beniger, 1989; Bowker et al., 2010; Haraway, 1991; Henman, 2021; Jones, 2016; Zuboff, 1988). The focus of critical data studies is the ‘sociotechnical “data assemblages” that make up big data’ (Iliadis & Russo, 2016, p. 3; see also Kitchin, 2014; Kitchin & Lauriault, 2014). Counter-mapping in critical data studies offers the possibility of harnessing alternative accounts of data technologies in their social and political contexts and agitating for change. It aims to disrupt, animating narratives about these technologies that diverge from and challenge official accounts. For example, Richardson (2019) in AI Now produced a ‘shadow report of the New York City Automated Decision System Task Force’ as an alternative account of automation in decision-making in New York, which aims to improve governmental transparency to support community advocacy. Similarly, Redden (2018a, 2018b) amplified divergent discourses about big data used in government in Canada. To map current ADM is to map future imaginings of automated technologies and systems, and a counter-map harnesses this to disrupt current power relations and render the world fairer and more just.

Counter-mapping's origins in critical cartography and its critically focused amplification of alternative voices continue into mapping work in critical data studies. It is to this trajectory of critical data studies that this paper contributes. It adds to the literature on ADM in social services, especially work that aims to map this landscape, by providing a deliberately critical account of the datafication of Australian social services. It also aims to extend current counter-mapping work into the digital, and into the socio-technical context of ADM in social services.

Method

This project aimed to map back automation used in social services delivery. It employed a counter-mapping method which utilised a mixed methods approach incorporating 28 case studies of ADM systems using document analysis, analysis of the #NotMyDebt site, and interviews with eight people with experience of the ADM systems. The case studies were part of a broader related study that aimed to map the use of ADM in social services delivery in Australia (Sleep et al., 2022). The material for the counter-mapping project was compiled over an 18-month period between April 2021 and September 2022.

Selection of case studies

A list of case studies included in the project is presented in Figure 1. A purposive sampling strategy was used to select ADM systems. While this strategy did not provide a comprehensive and exhaustive account of every ADM system used in Australian social services delivery, this was not an aim of the study. Rather, the aim was to obtain qualitative counter-descriptions and narratives of the use of ADM in this sector. Only those ADM systems that had sufficient information available to build a case study were included. First, ADM systems were identified by interviewees, as well as through the expert knowledge of the author and colleagues and internet searches of the websites of government agencies, advocacy and professional associations, specialist news outlets, and academic publications databases. Once a possible ADM case study was identified, a broad array of documents was collected and collated in a snowballing manner. These ranged from government agency annual reports and government websites to government tenders and technology company websites. Particular emphasis was placed on investigating Australia's social security, child and family services, disability services, housing services and domestic violence services.

Figure 1. ADM case studies included in the project.

The criteria for inclusion of an ADM as a case study were broad. If the identified system fit the general definition of an ADM system and was, or was planned to be, used in social services broadly defined, it could be included in the study. In general, if there was no automated technology or the technology was not part of a social services decision-making process that affected people, it was excluded. An exception to these criteria was the disability sector, where the disbanded NDIS Individual Assessment Instruments and the National Disability Data Asset (NDDA) were both included despite not being automated systems. Individual Assessment Instruments were included because they involved important debates about assisted decision-making in the NDIS. Similarly, the NDDA was included because it is a potentially important stepping-stone towards developing capacity for automation in the sector. ADM systems were excluded from the study when little documentary information could be located about them.

The Robodebt case study was particularly rich and included the #NotMyDebt website, which required distinct analysis. The website was initiated with the express aim to amplify the voices of those impacted by the scheme and included a portal where individuals could anonymously record their story, in their voice, about their social security debt experience. All the stories available on 22 March 2021 were downloaded into an Excel spreadsheet using a ‘Sitesucker’ app. A total of 1247 stories were downloaded, and all were included in the study. Information collected for each story was organised under the headings: title, amount of debt, payment type, whether the debt had been appealed, and the service user's experience of and reflections about the debt. Entries ranged from a few words to several paragraphs, with the majority 3–4 sentences long.

Recruitment for interviews

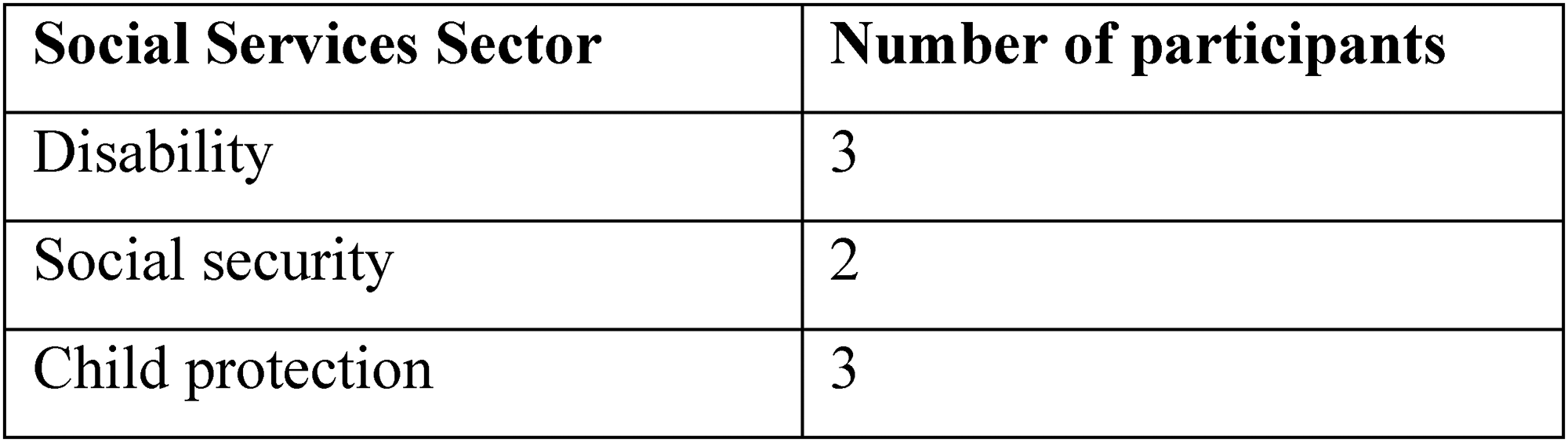

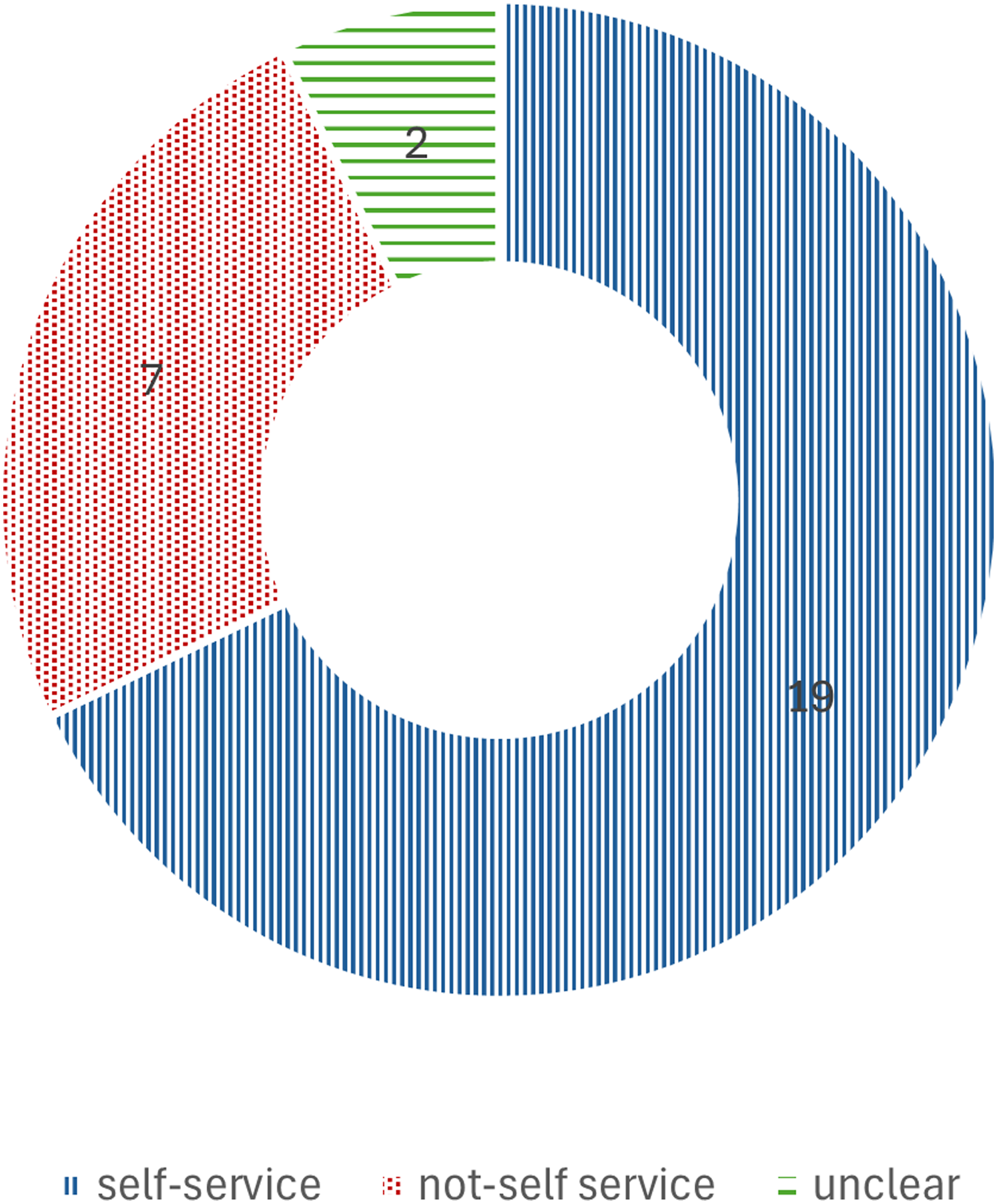

Eight semi-structured interviews with selected participants who have experienced automated decisions were conducted. A purposive sampling approach was used, with potential participants being selected who have experience as clients of various aspects of automation in decision-making in the social services. All participants had experience as a service user, while some were also decision makers or product designers. The sectors of social services recruited from are presented in Figure 2. Of the eight interviewees who participated in the research for this study, three were in the disability sector, two in social security and three in child protection.

Figure 2. Interview participants' primary social services sector.

Initial participants were invited from a list of contacts already compiled by the researcher, drawing from their current network. Participants were invited to suggest further potential participants in a snowballing approach. Invitations were sent via email with a participant information sheet attached.

The interviews were conducted via Zoom, as participants were geographically dispersed and COVID-19 pandemic restrictions inhibited travel and face-to-face meetings for much of the research period. Interviews were between 1 and 2 hours in duration and were recorded via Zoom and auto-transcribed. Pseudonyms have been used for interviewees throughout this paper.

Ethics

The project was conducted with approval from the University of Queensland Human Research Ethics Committee (2021/HE001091), and as part of a post-doctoral research role in the Australian Research Council Automated Decision-Making and Society Centre of Excellence, based in the School of Social Science, University of Queensland.

Analysis

The material collected was analysed in two steps. First, case studies of included ADM systems were drafted, which incorporated exactly what the ADM was, where it was occurring, who it impacted and how this was experienced. Using case studies of ADM systems facilitated analysis in their socio-political context (Yin, 2014). Case studies were compared and contrasted, for example, according to the ‘official’ purpose of the system and whether the system was self-service. This analysis is presented in the report ‘Mapping ADM in Australian Social Services’ (Sleep et al., 2022). Second, the case studies, #NotMyDebt stories and the interview transcripts were collated and analysed using thematic analysis (Hsieh & Shannon, 2005), drawing out themes based on the material and also informed by the Australian and international literature on automation in social services and welfare delivery in a predominantly deductive process. To amplify the voices of those who experience being the target of an ADM in social services delivery, in alignment with the aims of a counter-mapping approach, the voices of service users were prioritised in this analysis, mostly through the interviews, as well as through experiential accounts such as the 1247 stories on the #NotMyDebt site. These themes were represented in a visual map drawn by the researchers. Given the prevalence of the information focused on Robodebt, much of the material used to build the map reflected service users’ experiences of Robodebt. While other ADM systems are also included in the study, it is important to acknowledge that in many ways, at the time of the study, to counter-map ADM in social services was to map in the spectre of Robodebt.

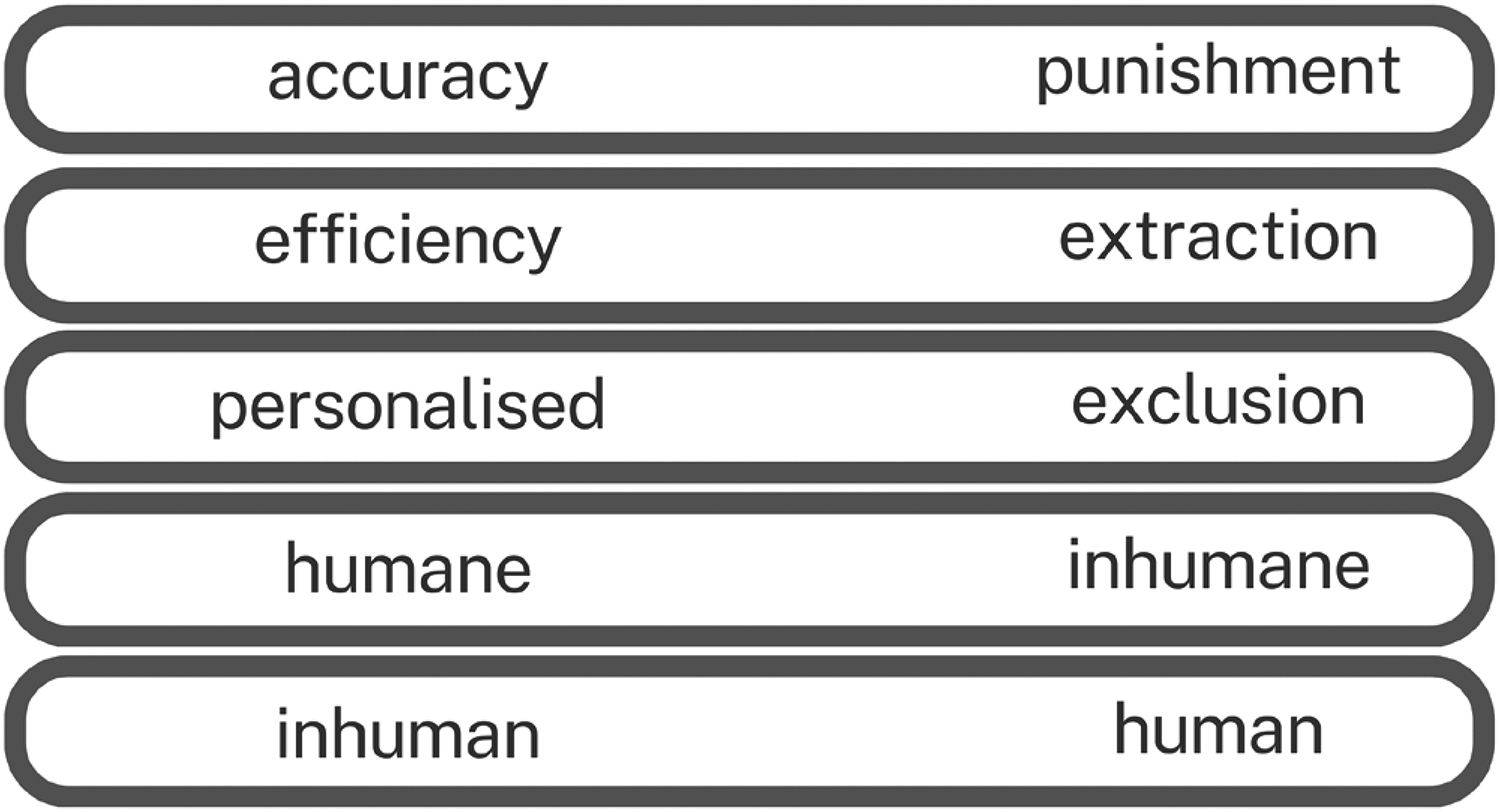

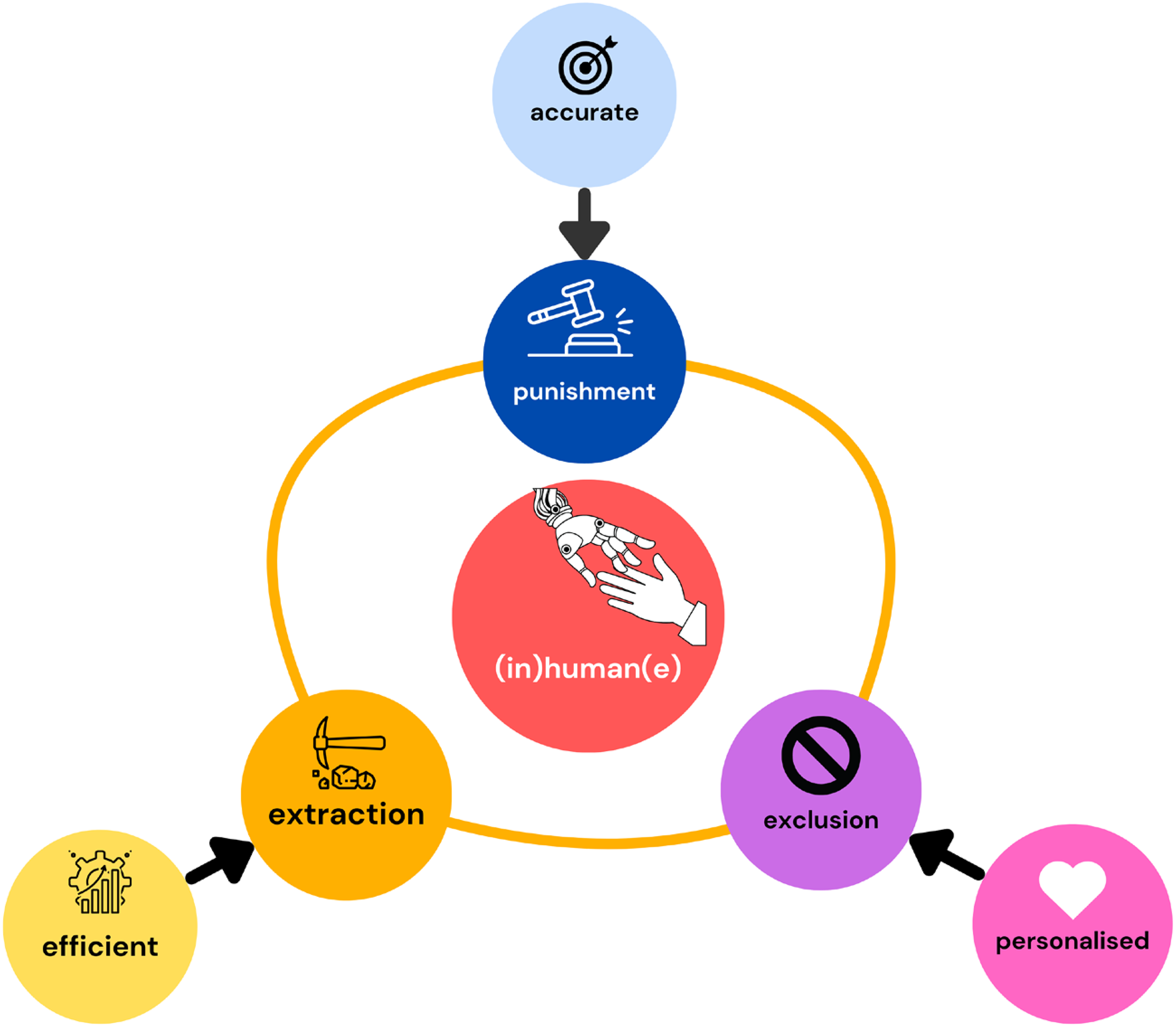

This map was drawn in three main stages. The first stage was to list the key themes identified in the study. These were: accuracy, efficiency, personalisation, extraction, punishment, exclusion, human, inhuman, inhumane, humane. Second, it became clear that the themes could be coupled into opposing dyads that generally referred to a similar process but from a different vantage point (see Figure 3). For example, from an ‘official’ perspective, ADM allowed greater accuracy; however, this accuracy connected into an extensive welfare compliance infrastructure. To many service users, the lived reality of this infrastructure was expressed as feeling like punishment, especially by those who shared their Robodebt story on the #NotMyDebt site. Hence, the terms accuracy and punishment were coupled as effectively opposing concepts. Efficiency from the point of view of ‘official’ discourses means reducing bureaucratic workloads by automating many of the menial tasks and assisting with data analysis. From the perspective of some service users, this was experienced as increased administrative burden as new tasks and skills are required of them, so efficiency and extraction have been coupled together. Personalisation, a widely discussed benefit of ADM systems, has been coupled with exclusion – because the most common purpose of ADM systems included in the case studies was to identify individuals who are eligible for service. From the perspective of service users, this may not be experienced as personalised service, but as being excluded from services should they be denied access based on the information the system has collected about them.

Figure 3. Grouped themes.

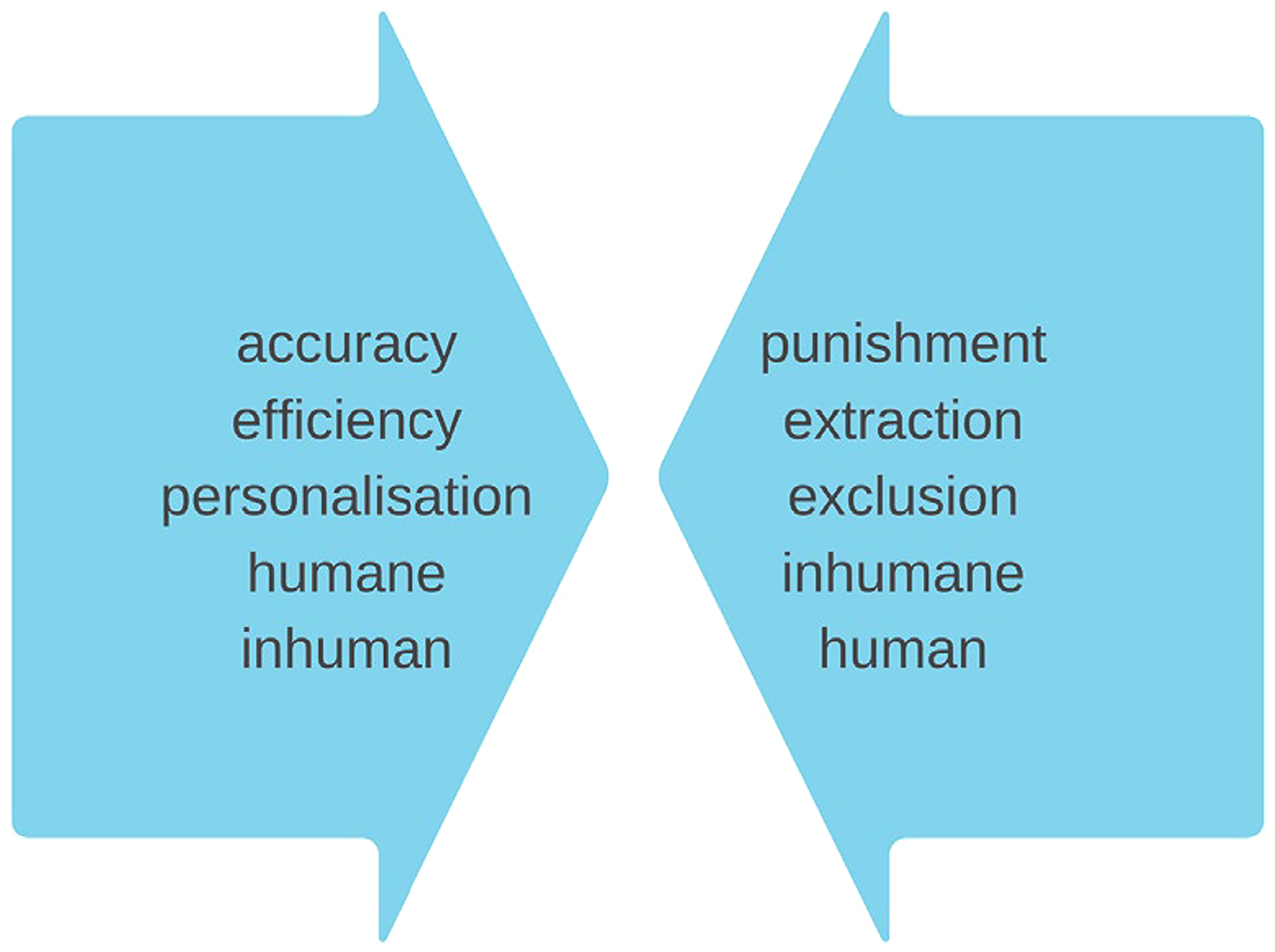

Third, in an important step towards developing the counter-map, it became clear that these coupled concepts each had a power directionality. Pressure appeared to be applied to service users from the theme that related to the ‘official’ discourse, indicating a type of power vector. Accuracy put pressure on service users that could feel like punishment. Personalisation put pressure on service users that was experienced as exclusion. Efficiency put pressure on service users that could feel like extraction. When service users expressed their experiences, this could be seen as an act of resistance, of pushing back on the dominant discourses of welfare and technology. In addition, services that were described as humane were experienced as inhumane by service users. Further, while the technologies promise decision-making without humans (inhuman), all systems included in the study involved a human at some stage of the decision-making process (human). This directionality is presented in Figure 4.

Figure 4. Directionality of main thematic categories.

It is important to acknowledge that the experiences of service users were neither uniform nor identical. For example, the experiences of social security recipients during Robodebt were widely expressed as punishment. In contrast, people dealing with disability services were more focused on being excluded and not being treated with human dignity. Nevertheless, the counter-map shows some of the general themes and accounts found in the study. In the same way that a map of a coastline rarely shows details like shade trees and rocky foreshores, in the interest of rendering the environment in simplified form, so too does the counter-map model the experiences of service users in the landscape of ADM in social services delivery. This modelling provides a purposeful counter to official accounts of efficiency, accuracy and personalisation in the digitisation of Australian social services delivery.

Findings: Counter-mapping of ADM in Australian social services

Figure 5 represents a counter-map of ADM in social services delivery in Australia derived from the case studies, including analysis of the #NotMyDebt site and interviews. On the outer rim of the map are key ideals and aspirations for automation in social services that are expressed by organisations and product developers: the hope for more accurate, efficient and personalised service delivery and administration of welfare resources. The arrows indicate that these ‘official’ aspirations are experienced as pressures and stresses on service users. This shows that among social services users and their advocates, ADM in social services was discussed as punitive, extractive, excluding and inhuman(e). In general, those impacted by ADM were less likely to focus on a future imagined efficient, accurate and consistent decision-making process, and more likely to focus on the impact of the decision-making in their day-to-day lives.

Figure 5. Counter-map of ADM in Australian social services delivery.

The expectation that the system was not designed around service users’ needs and safety, but was instead heavily fallible, was a strong theme, particularly for social security recipients. Almost every #NotMyDebt entry expressed frustration about receiving an incorrect social security debt through Robodebt, and phrases like ‘I have lost trust in the government/system/Centrelink’ and ‘I have no trust in government’ were common. Tracy, for example, expressed the disappointment and distrust she felt dealing with an incorrect social security debt (Robodebt): [They] chose to settle my case and wipe the debt in its entirety. The damage however, was already done. I spent over a year of my life fighting this debt … makes me feel so sad that, in a country such as Australia, our citizens, those who need our support the most, can be treated so poorly. (Tracy, #NotMyDebt 2022b)

Although Tracy's debt was erased in its entirety, she spent a year fighting it, and felt the damage she had incurred was ‘already done’, implying that the issue was not just the money she did not have to return but the trust she had lost in Australia's social services system – that those who need ‘support the most’ can be treated so poorly. At the centre of the map (Figure 5) is both the individual service user and the automation itself, as simultaneously human/inhuman and humane/inhumane. Human/inhuman reminds us that all ADM systems make decisions about actual people's lives, even if they appear to not involve humans in the decision-making process through lack of decision-making discretion and facelessness (personal and administrative). Humane/inhumane represents that service users generally engaged with social services to receive aid, encouraged by the promise of welfare services to treat all humanely and with dignity; however, many recalled inhumane treatment, particularly those who experienced Robodebt. The key themes from the perspective of service users and their advocates – punitive, extractive, excluding, (in)human(e) – will be unpacked further.

Accuracy/punishment

Improved accuracy emerged as a key ‘official’ reason for introducing ADM into social services delivery. Terms such as ‘more accurate’, ‘consistent’, ‘objective’ and ‘targeted’ permeated the publicly available documents on ADM in social services. The promise of crunching large amounts of data to reduce errors and unfair biases and discretion in deciding who receives services and resources is expressed in the publicly available documents as an alluring capability of digitalisation. For example, an ‘official’ aim of Online Employment Services including the Targeted Compliance Framework is ‘accurately assessing job seekers to determine their individual needs and strengths for finding work [so it] could support the future employment services model to work efficiently and effectively. This allows for targeted and tailored services’ (Australian Government, 2018, p. 11).

Accuracy is inextricably connected to welfare compliance. An important part of targeting services is ensuring the intended people receive them, and, in Australian social security, recovering overpaid money in the form of debt recovery. However, Robodebt has shown that accuracy is not guaranteed and that service users are least able to absorb the hardships that result from errors. A key theme that emerged in the interviews and #NotMyDebt site was that many social services users felt targeted by punitive compliance mechanisms. Of the 1247 #NotMyDebt entries included in the study, most discussed being made to ‘feel like a criminal’, with over 35% (443) explicitly mentioning one or more of the words ‘punish’, ‘punitive’, ‘accuse’, ‘crim’, ‘criminal’ and/or ‘target’. Sally provided a vivid account of this experience. Sally was a Robodebt survivor who was ascribed a historical debt using old records but new automated processes to investigate abnormalities in the data. She became aware of the debt when her tax return was ‘confiscated’ in 2019, after which on further investigation she discovered that she had a social security debt from four years earlier when she received Austudy. She reflected on the #NotMyDebt site about the punitive and stressful nature of this experience: This has been an incredibly stressful event that I have no control over. It has left me shaken and in tears multiple times. Now I am reflecting it could have been a better option to be homeless and not on welfare while I was studying to avoid this debt. This is NOT human services this is punishing the lowest income earners with debt. How can someone incur a debt over five thousand dollars and not be aware or notified. (#NotMyDebt, 2021)
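The keyword tally reported above (443 of 1247 entries) can be illustrated with a minimal sketch. It assumes each scraped entry is held as a plain string and that matching is a case-insensitive substring search; the function names and the sample entries are hypothetical and do not reproduce the study's actual procedure or data.

```python
# Hypothetical sketch: count entries that mention at least one search term.
# The terms are those listed in the text; substring matching is an assumption.
TERMS = ["punish", "punitive", "accuse", "crim", "criminal", "target"]


def count_matching_entries(entries, terms=TERMS):
    """Return how many entries contain at least one term (case-insensitive)."""
    lowered_terms = [term.lower() for term in terms]
    return sum(
        1
        for entry in entries
        if any(term in entry.lower() for term in lowered_terms)
    )


def share(count, total):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Invented sample entries, for illustration only.
sample = [
    "I was made to feel like a criminal.",
    "The letter arrived with no explanation.",
    "They TARGET the people least able to pay.",
]
matches = count_matching_entries(sample)

# Share implied by the figures reported in the text (443 of 1247 entries).
reported_share = share(443, 1247)
```

Because stems such as ‘crim’ also match longer words such as ‘criminal’, each entry is counted once regardless of how many terms it contains.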

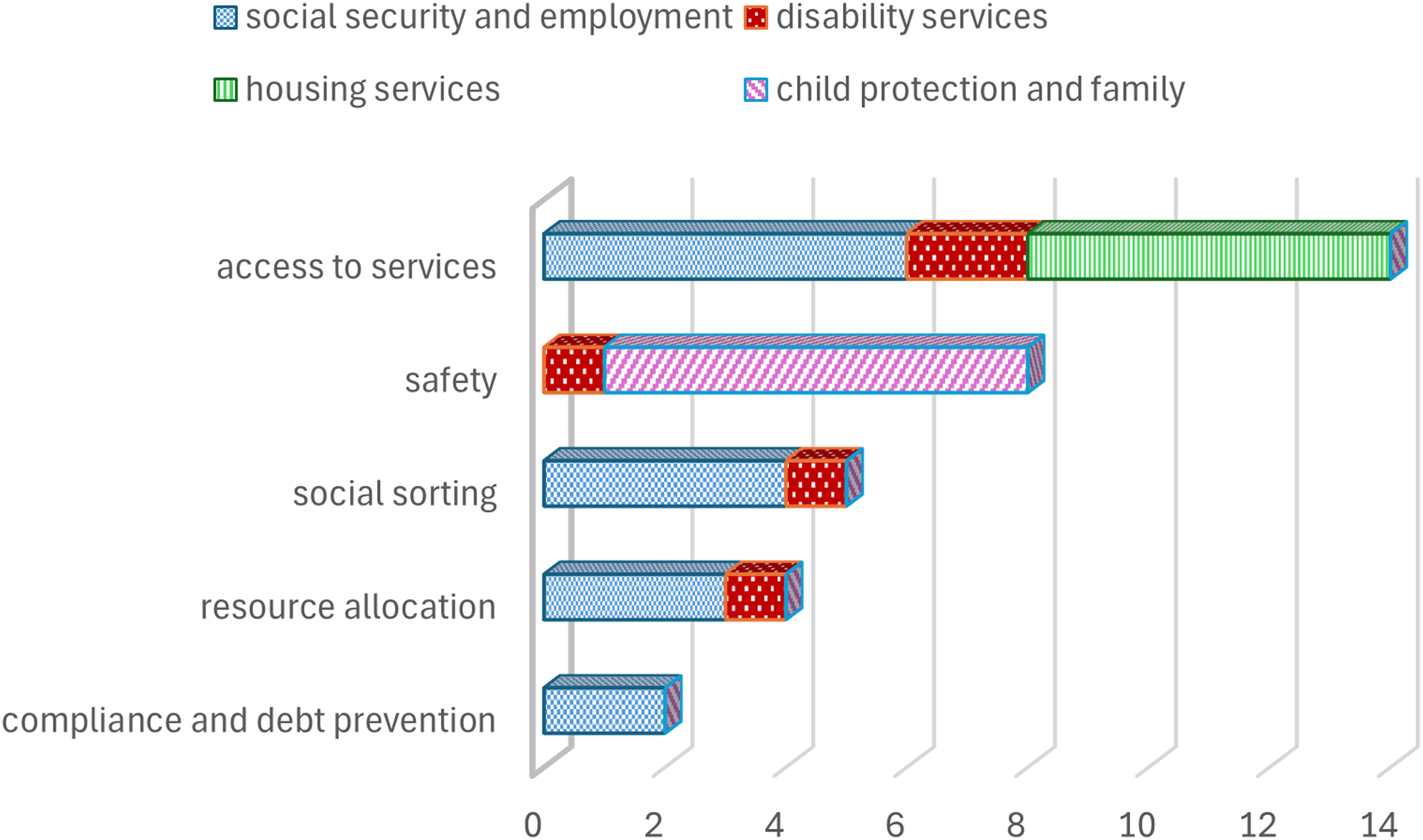

Sally did not feel her safety was a priority in Robodebt, or in the social security service delivery in general. This is reflected when the publicly stated or ‘official’ purposes of ADM systems used in social services delivery are compared through a critical lens. Five ‘official’ purposes were identified among the case studies: access to services, safety, social sorting (organising of people into different categories based on available data, for example by race or sexual orientation), resource allocation, and compliance and debt prevention. It should be noted that these are the publicly stated aims of the systems, not the actual outcomes. The frequency of each ADM system purpose is displayed in Figure 6 according to social services sector. It should also be noted that ADMs may have multiple purposes. The most common purpose was access to services; this included portals for self-service by service users as well as identification of eligibility. Echoing Sally's experience of ADM in social security, all the compliance and debt prevention ADM systems included in the study were in the social security and employment sector (2), as well as the majority of the ADM systems that were purposed for social sorting (4 out of 5) and resource allocation (3 out of 4). At the same time, no ADMs used in the social security and employment sector explicitly stated safety as an aim (see Figure 6). It seems safety is not a purpose for Australian social security ADM application, but identifying eligibility, social sorting, resource allocation and debt and compliance are.

Frequency of ADM system purpose according to social services sector (N = 28).

This does not mean that the experience of social security and employment services as punitive is new, or caused by the adoption of ADM technologies in service delivery. Many writers have chronicled the experience of social services delivery as punitive, from the 19th-century Poor Laws to the present (Headworth, 2021; Super, 2021; Trattner, 2007). However, what is new is the link between this punitive manifestation and the technological promise of efficiency, especially in welfare compliance.

Personalisation/exclusion

The personalisation of individual and digital system interactions has become commonplace, and the ‘Netflixification’ (customer-focused recommender system) of social services delivery that wraps around a person at each stage of their life course is an enticing prospect for system and service design. For example, the Government Digital Experience Platform (GOVDXP) is intended to be the Commonwealth government's one-stop-shop for online services, replacing the previous myGov platform (Hendry, 2018; Thodey, 2022, p. 4). The system aims to learn from users’ prior interactions with government services to provide them with suggestions for new services and next steps, a feature akin to Netflix's recommendation engine (Hendry, 2020). By centring services around life events, such as finding a new job, having a baby, or experiencing a natural disaster, the government strives to offer ‘simple, smart and personalised services across portfolios and jurisdictions’ (Digital Transformation Agency, 2020).

However, as services get more complex, requiring more sophisticated and expensive technology and better connectivity to access, this personalisation can be experienced as a barrier by those who do not have the means, skills or bandwidth to engage digitally. Exclusion was a common theme that emerged in the interviews and case studies. For example, in the interviews, social security advocate John explained how limited digital literacy hindered numerous clients from claiming payment because they could not work with the automated online claims system effectively. The system did not have the capacity to recognise when an applicant was having trouble navigating it, and instead simply rejected the incomplete claim: but this is the problem with these automated decisions, is that the computer is, you know, it's going through this algorithm where you need to complete all these things to get to the next step, but if you're unable to complete that step, that's you done, there's no other option. Yeah. So not only, so it's not that the most vulnerable people are being rejected, it's just that they're not being able to get on the system at all. Absolutely excluding. (John, interviewee 4)

However, even when service users have access to the resources needed to engage with the digital, ADM systems that are explicitly designed to collect and analyse data with the aim of deciding who is eligible for service effectively exclude. Among the case studies of ADMs included in this study, 26 out of 28 stated their official aim to be deciding who to include or exclude from access to services either directly (15), or indirectly through social sorting, including assessing job seekers’ needs through the Job Seeker Snapshot (7), resource allocation (4) and compliance and debt prevention (2) (see Figure 6). That social services decision-making is attempting to discern between who is ‘deserving’ and ‘undeserving’ of resources is consistent with the observations of scholars of the welfare state who have long been critical of this distinction (see for example McDonald & Marston, 2005; Mendes, 2017; Piven & Cloward, 2012; Watts, 1997). Nevertheless, that this is the explicit aim of almost all automated systems included in this study should raise concern. ADMs change the nature of this debate by making many of the bureaucratic rules around eligibility opaque in consequential ways. Exclusion has always been a part of the organisation of welfare services; the projected value of ADMs has been to make this process more ‘objective’. However, ADMs can produce exclusion of their own kind, especially for people who lack digital infrastructure or literacy.

Efficient/extraction

The promise of improved efficiency and value for money that ADM systems can bring is seductive for social services agencies that struggle to resource demand. Terms like ‘faster’, ‘cost-saving’ and ‘sustainable’ permeated the publicly available documents and the ‘face value’ descriptions of ADMs in the case studies. For example, Robodebt was part of an ‘integrated package of compliance and process improvement initiative[s] including improved automation and targeted strategies for fraud prevention in areas of high risk’ in an attempt to make the social security system more fiscally sustainable and repair the budget (Department of Treasury, 2015, p. 116). One way that services achieve this is by decreasing the administrative workload of social services workers through automating many of the menial jobs or providing tools that can aid in large database analysis to assist with decisions. However, this can sometimes mean the service user is required to undertake the menial task rather than the social services worker in the form of self-service.

The extractive nature of many of the ADM systems was a key theme that emerged in the case studies. Of the 28 ADM systems included in this study, 19 (68%) involved a form of digital self-service, while 7 did not and 2 were unclear (Figure 7). This pattern indicates a use of digital technology to shift social services in Australia towards user self-service, and in doing so raises the possibility of extracting the labour of service users to play roles that were previously undertaken by administrators.

ADM systems that involved self-service compared to those that did not involve self-service.
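As a quick check on the arithmetic behind these counts, the proportions can be recomputed from the figures reported in the text (19 self-service, 7 not, 2 unclear, out of 28 systems). This is a minimal sketch; the dictionary keys are hypothetical labels, not the study's own coding categories.

```python
# Counts as reported in the text; the key names are hypothetical labels.
counts = {"self_service": 19, "no_self_service": 7, "unclear": 2}

# The three categories should account for every system in the study.
total = sum(counts.values())

# Each category as a percentage of all systems, to one decimal place.
shares = {
    label: round(100 * count / total, 1)
    for label, count in counts.items()
}

for label, pct in shares.items():
    print(f"{label}: {counts[label]} of {total} ({pct}%)")
```

On these counts, roughly two-thirds of the systems involved some form of self-service and a quarter did not.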

While paper-based welfare systems were also extractive in the sense that service users were required to fill in forms and hand them to agencies, there are distinct differences in the way ADMs extract labour from service users. For example, the myGov app is used by Services Australia to communicate directly and personally, in real time, with social security recipients via their smartphones, asking them to update their details, including relationship status, employment, income, job search and training activities, by inputting them directly into the system via the provided portal. This was work previously performed by social security officers during an in-person interaction with the service user. The administrator (ideally) checked the form was complete and guided the service user to provide the required information in the correct format. Once the service officer was satisfied that the application was adequate, they then took a copy of the application and documents and added them to the service user’s file. For many of the ADMs in the study, service users rely on the system to let them know if their applications are complete, and they must convert the required documents into the correct digital format and upload them to the system. This is an administrative burden (Madsen et al., 2022) that has been moved to the service user. This is similar to what Casey (2021) has identified as a move towards digital self-service in employment services in Australia, as part of a broader shift to ‘system-level bureaucracy’ (Bovens & Zouridis, 2002, p. 174) where service users are responsible for monitoring and inputting data into online systems. The ADM systems effectively commanded service users to undertake labour which was previously the responsibility of departmental officers (Eubanks, 2018).

Bob, who supported two children to access services through the NDIS, spoke about the time it took to self-manage, which involved: claiming receipts, uploading documents or uploading receipts, just constantly checking the plan, responding to messages and all those sorts of things because everything has to be through online portals now particularly when it comes to myGov sorts of platforms [where] you get the messages through your phone. (Bob, interviewee 5)

(In)human(e)

(In)human(e) is at the centre of the counter-map for two reasons. First, it represents that it is individual humans and their families who are the intended recipients of the services. Second, it shows how power flows through the other key themes: accuracy/punishment, efficiency/extraction and personalisation/exclusion. Humans are the centre of the system. There is a deliberate play on words in the term (in)human(e). Even with fully digitised services, where there is no human engaging in the service delivery, there is a human being in the system who is being provided (or not provided) service and the values and objectives embedded in these systems are themselves human values and objectives. Simultaneously, as the systems increasingly have less human involvement in their administration (inhuman), they are still expected to be humane – to help rather than cause further harm. At the same time, interviewees with disability felt that they were not seen, that they were invisible to data-driven automated systems because their needs were so unique – rendering them feeling like they were perceived as not human and the services were experienced as harmful and inhumane. In addition, throughout the #NotMyDebt accounts, the words ‘inhumane’, ‘inhuman’, ‘not human’ and ‘the department of inhuman/e’ services recurred; the term (in)human(e) is a portmanteau of these sentiments reflecting the collective feelings of the service users’ experiences.

A key part of the (in)human(e) portmanteau is that the ADM systems do not see the complexity of human experiences, and this can lead to inhumane outcomes. An important theme in the semi-structured interviews was that the ADM systems collect a lot of information and then do something with it that impacts a person, but that the ADMs cannot ‘know’ the person. Jennifer, an advocate and user of disability services, expressed the difficulty automated systems had engaging with the sheer diversity of human needs in the disability sector on a day-to-day basis: a survey that you fill in and depending on what answers you give, will then make a decision based off what boxes you ticked and then that can, I guess, affect the results of the decisions that get made on your behalf without having an, even, conversation with you in person about did, um, did the box that you ticked actually reflect you. Within the disability context the nuances around function and functioning as, we know we have our good days and our bad days. Without that nuance some people could have decisions made for them on their behalf that don't reflect their actual needs. (Jennifer, interviewee 8)

In this quotation Jennifer expressed frustration about not easily fitting into predefined categories. When the services she and her clients could access were allocated according to the boxes ticked, this meant that there was a gap between the administrative categories and the individual's actual needs. Translating an individual's actual needs into bureaucratic categories has always been a challenge. ADMs cannot deal with the complexities of day-to-day life; welfare bureaucracies also are not designed to deal with such complexities. However, the human interaction between the client and street-level administrator co-completing a form to accurately represent the needs of the service user was diminished in many ADMs included in the study, meaning service users were required to complete the form without assistance directly in the digital interface.

Administrative systems are designed to simplify. It could be argued that critique that stops at simplification as a problem does injustice to the complexities of scale in delivering any welfare service. As Annemarie Mol reflected: The point of asking what is being counted is not to argue that counting is doomed to do injustice to the complexity of life. This is certain. The point, instead, is to discover how and in what ways. For in that process something is foregrounded and something else turned into unimportant detail. Some changes are made irrelevant whereas others are celebrated as improvements or mourned as detrimental. (Mol, 2002, p. 235)

A further tension in the (in)human(e) matrix relates to the ‘human in the loop’ in the systems included in the case studies. Almost all (27 out of 28) ADM systems included in the study operated with humans involved in some way. Even if the technology employed was fully automated – like the abandoned chatbot NADIA, which aimed to simulate real human video interaction to guide people with disability through the NDIS – for the technology to work as a decision-making system there needed to be some human involvement. The system requires service users to address questions to the chatbot in order to work. Without a human asking NADIA questions, no response or decision-making can occur.

Gillingham (Gillingham, 2019; Gillingham & Humphreys, 2010) argued that the inclusion of humans does not ensure increased sensitivity or the incorporation of expert, experienced professional discretion. In participant observation of the use of digitally assisted decision-making by child protection workers, he observed that practitioners were more likely to accept a computer-generated decision than to query it or use their professional discretion to make a different decision. To decide differently to the computer would mean taking full responsibility for the decision. In child and family services, where an incorrect decision can mean the death of a child, this is a difficult burden for a single worker to carry. Often, Gillingham notes, the output from the automation was blindly accepted and led to intervention, even when a human was incorporated into the decision-making system. In these circumstances, from the perspective of a service user or a person who has come into contact with child protection services, even when a human is included in the automation-assisted decision, it can effectively be an ‘other than’ human, an inhuman decision. In the context of child protection, where children can be removed from their family and placed into out-of-home care, to remove human decision makers from this process is also inhumane and risks removing important human empathy and oversight.

Weitzberg et al. (2021, p. 1) argue that polarising debates around the use of technology in the aid and service delivery sector are harmful to nuanced and empirically focused debates about the real lived experience of service users. While neither techno-apologetic nor technophobic, this paper acknowledges the complexities of surveillance and other automated technologies ‘as harm and as care’ (Armstrong, 1995, referenced in Weitzberg et al., 2021, p. 1), placing the application of automated technologies within an oppressive and harmful neoliberal policy environment that aims to change individuals rather than help them where they are at. The counter-map developed in this paper deliberately locates itself as critical of these apparatuses, and hopes to pave some small steps towards alternative thinking about how to provide welfare to populations respectfully and as a human right. In a world that is increasingly becoming digitised and data-driven, it is important to keep working towards alternative futures where power, disadvantage and difference are not experienced as exclusionary by those who need care and support. Thinking about the use of automated technologies from the position of service users is an important step towards imagining alternative digital futures.

Conclusion

This paper conducted a counter-mapping of ADM in Australian social services to articulate an alternative account that animates power differentials and disrupts the politics of technological imaginaries. Major themes that emerged from the counter-mapping were that ADMs were experienced by social services users as punitive, extractive, excluding and (in)human(e), which conflicts with the ‘official’ discourses of ADM in social services as accurate, efficient and personalised. A counter-map was drawn with the portmanteau (in)human(e) at the centre. It demonstrated how the service users at the centre of the systems experienced accuracy as punishment. It also showed how service users experienced efficiency as extraction when their labour was harnessed to increase the speed and cost-effectiveness of digital administrative systems. In addition, it showed how personalisation was excluding when complex systems shut out those who struggled with digital literacy, blocking their access to vital social services. Further, the counter-map showed how, when ADM systems are unable to recognise the needs of people with a disability, they were experienced as inhuman and inhumane even when humans were included in the decision-making matrix.

Acknowledgements

Analysis and retrieval of the #NotMyDebt site data was assisted by Brooke Ann Coco and Dr Lutfun Nahar Lata.

Funding

The author completed this research as part of a Postdoctoral Research Fellowship with the ARC Automated Decision-Making and Society Centre of Excellence based at the University of Queensland.

Author biography

Lyndal Sleep is Lecturer in the Queensland Centre for Domestic and Family Violence Research based at Central Queensland University, and an Affiliate of the ARC Automated Decision-Making and Society Centre of Excellence (ADM + S CoE). Lyndal's research focuses on qualitative investigation of moments of technological change in social services delivery, focusing on the experiences of services users with the aim to advocate for more respectful, empowering and safe social services delivery. In her previous role as Research Fellow in the ADM + S CoE, Lyndal mapped ADM systems in use in the social services in Australia and the Asia Pacific, as well as developed innovative methods to investigate the experiences of ADM for vulnerable services users. She has also been developing new frameworks for understanding the interconnections between ADM, administrative structures and domestic violence. She is passionate about creating a future with more respectful and safe social services delivery. Lyndal's academic background spans science, technology and society studies, sociology, social work and law. Post-PhD she has been Chief Investigator in a number of research projects, including the NSW Ombudsman funded Mapping Automated Decision-Making Tools in Administrative Decision-Making, Notre Dame-IBM Technology Ethics Lab funded Trauma-informed AI: Developing and Testing a Practical AI Audit Framework for Use in Social Service, and ANROWS funded project Domestic Violence, Social Security Law and the Couple Rule.