Abstract

Proponents of digital transformation in welfare provision argue that digital technologies can take over tedious tasks and free resources to provide better care for those in need. Digital technologies, however, are often developed in line with a logic of control and dispositions around surveillance and efficiency which challenge careful engagements. In this conceptual article, we explore emerging tensions in digital welfare arrangements and propose an analytical framework to illuminate interrelations between care and control in values, infrastructures, and work related to the provision of welfare services. Illustrating the application of this framework with three empirical vignettes, we discuss how digital welfare technologies shape relations between state care and control. Considering theories of care in relation to the digital welfare state, we give a nuanced perspective on the contingencies of the digital transformation and add to the literature concerned with social justice by attending to everyday lived experiences in-between control and care.

Introduction

This article follows an observation that national digital policies for social service delivery in Europe are increasingly legitimised through promises of care and flourishing. For example, a digital strategy of the German federal government (Federal Government of Germany, 2022, p. 4) states that ‘harnessing the potential of digitalization [will] improve the cohesion of our society, promote public welfare and increase the performance of industry, the scientific and research communities and government’. The strategy proceeds to state the potential of a digital transformation ‘for better education and more equal opportunities’, to ‘ensure self-determination and social inclusion’ of people with disabilities and contribute ‘to better and more efficient [health]care’. Scholars have also pointed out the role of care in different domains of welfare service provision (Craig, 2020; Kaun & Forsman, 2022; Knezevic, 2020; Power et al., 2022). With this article, we contribute to such literature by exploring the increasingly digital welfare provision from the conceptual lens of care (Puig de la Bellacasa, 2017).

In the global North, where we conduct our studies, welfare technologies use digital data in decisions on citizenship; welfare benefit eligibility assessment, calculation, and payments; fraud detection; or risk scoring (Alston, 2019; Busemeyer et al., 2022). We understand this digital transformation as a comprehensive institutional change taking place alongside the introduction of digital technologies and respective practices and processes into the organisational structures of public administrations and their interactions with citizens and welfare organisations (Mergel et al., 2019). By accumulating data about citizens, state administrations are enabled not only to provide personalised welfare services and the respective care for citizens, but also to exert control over individuals and their position in the welfare system. The current focus in the literature is on the digital transformation of the welfare state, with scholars attending to processes of automation, practices of control, and possible harms (Dencik & Kaun, 2020; Jansen, 2021; Redden et al., 2020).

Digital transformation, as such, seems to favour a logic of control and dispositions around efficiency and effectiveness which, more often than not, challenge careful engagements. Attempts through such control to protect welfare systems from possible fraud, for example, were shown to have harmful outcomes often rooted in intersectional discrimination (e.g. Allhutter et al., 2020; Eubanks, 2017). The tensions between logics of care and logics of control become apparent, for example, in the competing obligations to care for individuals or the system. It is argued that the state's caring for an unfraudulent welfare system is in the interest of all, while it can simultaneously inflict harms on those individuals that it is meant to care for. At the same time, through increasing digitalisation, carers and beneficiaries of social services can also be supported, for example by releasing them from tedious tasks, freeing resources to provide care, creating visibility, and directing attention to certain hardships.

Both logics of care and (paternalistic) control coexist in welfare provision (Held, 2018; Khader, 2011). We argue in this article that digital technologies afford a transformation of the welfare state that privileges logics of control over logics of care and turns care into potentially harmful control practice. It is this relation between ‘caring’ for the public good through digital technologies and the modes of control these technologies afford that we discuss in this conceptual article. Following the Care Collective (2020, p. 5), we understand government care as ‘a social capacity and activity involving the nurturing of all that is necessary for the welfare and flourishing of life’. By juxtaposing the caring promises and practices of state control exerted through the digital welfare system, we shed light on some of the ambivalences of care in digital welfare, illuminating contingencies and conflicts in public care provision. We also add to the literature concerned with social justice (Dencik et al., 2022; Knezevic, 2020; Taylor, 2017) by shifting attention from big-scale ethics of digital technologies to the everyday lived experiences in-between state control and care.

To do that, we first review the concept of care and sketch out how practices of control materialise in the socio-technical arrangements of the digital welfare state. Drawing on each of the authors’ broader empirical research projects conducted in Germany (see Zakharova & Jarke, 2022) and Sweden (Kaun & Löfgren, forthcoming), we illustrate the tensions in the distribution of care and control in socio-technical arrangements of welfare through three empirical vignettes addressing different parts of the population and different types of digital welfare services: demographic ageing, affordable housing, and education. We are not so much interested in the differences between the individual welfare state systems or domains, but in how digital welfare states operate in a tension between care and control. Subsequently, we propose a three-level analytical framework for careful engagement with digital welfare including (1) values, (2) digital infrastructures, and (3) data work required for functioning digital welfare. We contribute to the field by integrating theories of care into digital welfare and conceptualising these in relation to state control.

The concept of care

The concept and ethics of care have a long tradition in feminist and sociological thought (Williams, 2018). Developed to draw attention to the gendered, unpaid, and often invisible physical and particularly domestic labour of women, this concept has been applied as a political and ethical instrument for shifting social relations (Sevenhuijsen, 1998; Tronto & Fisher, 1990). Intersectional perspectives further shed light on the multiple ways in which care is not only gendered, but is also provided and received according to other, overlapping socio-demographic and socio-economic categories (Fitts & Soldatic, 2022; Lynch et al., 2021). In this context, care can be understood as an embodied, affective, and often reciprocal practice of recognition of and tending to the needs of others. The recognition of otherness and heterogeneity of lived experiences, needs, and motivations is central to such – often unacknowledged and tedious – practices of care. At the same time, care is neither innocent nor free of power dynamics, but a particular way of configuring relations between caregivers and care-receivers. In this relational perspective, care ‘starts in the middle of things’ (Tronto, 2016, p. 4) and is directed at repair, maintenance, and continuation of various relations making the world as good to live in as possible (Tronto & Fisher, 1990). Closely related works on digital and data justice also concerned with issues of power, in/visibility, and public good are, in turn, attuned to the politics, structures, and accountabilities of digital and automated systems, often on a global scale (Dencik et al., 2022; Taylor, 2017).

The aspiration to create a ‘good’ world defines care not only as a practice, but also as a normative, ethico-political obligation (Puig de la Bellacasa, 2017). As an obligation, care follows certain teleo-affective dispositions, guiding how exactly practices of caring should be conducted and to whom they should be directed. These dispositions, which can conflict with one another, configure how care is provided and present a productive analytical lens for attending not only to the good but also to the ‘dark sides’ (Martin et al., 2015) of care. Furthermore, as Power et al. (2022, pp. 1173–4, original emphasis) argue, ‘[t]he motives and effects of care are not necessarily benevolent or beneficial. Put another way, care practices are not always caring’. Across empirical domains, examples of careless or even harmful implications of care abound. Banerjee and colleagues (2022) and Duclos and Criado (2020) conceptualise how, under the guise of care, reactionary politics and practices can be reinforced. Emejulu and Bassel (2018) illustrate paternalistic care and discuss how the withdrawal of state care and shifting of responsibility towards individuals in favour of self-determination and self-sufficiency does not support but rather hampers the achievement of these qualities. Taking practices and obligations to care as a point of departure in the middle of things, we can follow different kinds of careful or careless relations.

Many scholars following a similar relational approach to care point out the continuous shifts of care in society as various practices, forms, and spaces of care are transforming over time (Fine, 2005; Jupp, 2022; Power & Hall, 2018; Sevenhuijsen, 2003). In the sociological tradition such shifts concern the subjectivation of individuals, particularly those belonging to marginalised groups (Emejulu & Bassel, 2018); state, communal, and local organising of care provision (Power & Bergan, 2019); the practice and labour of care in various societal settings (Craig, 2020; Fitts & Soldatic, 2022; Wilding & Baldassar, 2018); and policies in the provision of social services (Sevenhuijsen, 2003). The informal and shadow care infrastructures and practices are discussed as complementing, substituting, opposing, and fixing the state care provision in local, communal networks (Bowlby & McKie, 2019; Power et al., 2022).

While many of the earlier conceptualisations of care focused on human relations, more recent research addresses the role of non-humans (Puig de la Bellacasa, 2017) and particularly technologies (Criado & Rodríguez-Giralt, 2016; Kaun & Forsman, 2022; Zakharova & Jarke, 2022) or infrastructures (Power et al., 2022) in the configuration of care relations. From this standpoint, care is seen as distributed across socio-technical arrangements composed of both humans and non-humans. Acknowledging this distribution of care allows us to accommodate both the current digital transformations of society and the situated, local character of practices of care which rely on and cover various instances of human and non-human others.

Digital welfare states and technologies of control and care

The welfare state is based on the obligation of the state to provide support and assistance to citizens with specific needs and risks that emerge in a market-based society (Dencik & Kaun, 2020). It dates back to the last quarter of the 19th century as a response to the deep societal, economic, and political transformations such as the continuing industrialisation and the rise of capitalism, urbanisation, and population growth (Castles et al., 2010). To meet the emerging hardships public policies were developed to implement new support structures. The responses of states (in particular in the global North) differed in many ways, resulting in a ‘remarkable diversity’ of welfare systems, for example with respect to timing, policy goals, or the precise forms of institutional solutions (Castles et al., 2010).

To organise the operations of these emerging welfare systems, national and international policies increasingly required implementation of standards and metrics in various domains such as health care and ageing (Katz, 1996), social housing (Kersbergen & Vis, 2013), and education (Hartong, 2015). This standardisation of public services, enhanced through but by far pre-dating processes of digital transformation, often results in the bureaucratisation of care: professional judgement and specialised, often tacit knowledge of care workers on site – such as social, health, or educational professionals – gives way to uniform, measurable characteristics of what ‘good’ care should look like according to national and international policies. Earlier research described such bureaucratic transformation – government ‘programs’ and ‘schemes’ – in terms of growing state control (Rose, 2000), while more recent studies into the histories of digital transformation illustrate how the design of digital technologies follows similar logics of standardised classification and sorting (see Eubanks, 2017 for US and Ruppert & Scheel, 2021 for European examples).

Over the past 15 years, the welfare states have begun to profoundly transform through the introduction of digital technologies and infrastructures, often presented as measures to increase efficiency in public administration and increasingly considered as a governance reform (Yeung, 2018). Earlier research has addressed questions of digitalisation and welfare primarily as a challenge of how to meet new needs in highly digitised states and changing employment markets with digital automation (Busemeyer et al., 2022). This approach is complicated when the welfare state administration itself increasingly encompasses a plethora of different digital processes and technologies (Bullock, 2019).

Many theoretical traditions were developed to conceptualise the relations between the state, technologies, and practices of control. According to Rose (2000, p. 323), these studies ‘have charted the assembly of complex and hybrid technologies of government, linking together forms of judgement, modes of perception, practices of calculation, types of authority, architectural forms, machinery and all manner of technical devices with the aspiration of producing certain outcomes in terms of the conduct of the governed – the technologies that we have come to know as the social insurance system, the schooling system, the penal system and so forth’.

Reviewing work on such technologies and control by Deleuze, Foucault, and others, Rose (2000) suggested viewing state control through practices of inclusion into and exclusion from certain socio-technical arrangements and practices. Digital technologies as such have been shown to often prioritise control and surveillance over human flourishing and well-being (Eubanks, 2017). In the digital welfare state, the accumulation and use of digital data serve the goals of state control (Dencik & Kaun, 2020). However, different groups are implicated in diverse ways by the digital welfare state.

This ambivalent relationship between public care provision directed at the public good and the control mechanisms of the state is central to the socio-technical arrangements of digital welfare. While state actors such as public administrations and data teams have a somewhat critical view on their role in configuring government service provision, recent research shows that they are still guided by top-down and paternalistic views on vulnerable citizens in particular and society at large (Broomfield & Reutter, 2022). Therefore, as digital technologies co-configure the welfare system, the way they are designed and used becomes important. By providing whoever uses technologies with ‘perceptual cues that can be performed with’ them (Costanza-Chock, 2020a, p. 8), digital systems’ design nudges people towards performing certain actions and avoiding others, hence materialising the logic of control.

Such logics and respective values of control can be found in government policies and regulations, imaginaries about public actors, technologies and infrastructures of the state, and public institutions’ practices in dealing with digitalisation and automation of state services (Kaun, 2022; Prasad, 2022; Redden et al., 2020; Reutter, 2022). The vague definitions, lack of consistency in defining certain infrastructures, algorithms, and data, as well as often restricted access to technologies and datasets further hinder careful engagements with the socio-technical arrangements of digital welfare. In multiple instances when government data are being opened for public scrutiny, the practical challenges of fulfilling caring promises via technologies of state control become visible (Poirier, 2021). For example, it becomes visible that users perform invisible integrational work adjusting technologies for current needs, fostering technology adoption, and further distribution as they alter, appropriate, and give them new purposes and functions.

Three vignettes: Digital welfare provision between care and control

To illustrate our conceptual approach and highlight the tensions between state care and control in digital welfare, we provide three empirical vignettes from three research projects conducted by the individual authors of this article. Each of the three vignettes features different groups of citizens, different vulnerabilities, and welfare infrastructures in welfare systems in Germany and Sweden: demographic ageing, social housing, and education. We draw on this variety following our initial observation that digital transformation in many welfare domains is motivated and justified through the promise of care for the populace. Illustrating how this motivation works out empirically we are not so much interested in the differences between welfare systems and domains as we are in the tensions between care and control rendered visible through our analysis.

The first vignette is reported by Juliane. It draws on workshops with German health care providers and explores how presumably caring digital welfare technologies to support ageing populations take control over the lives of older adults. The workshops were part of a 12-month government-funded research project in one of Germany's federal states to explore the benefits, risks, non/use and appropriation of Ambient Assisted Living (AAL) technologies in care homes and residential housing. In the second vignette, Anne reports findings from a research project that aims to explore communal welfare infrastructures that are increasingly integrated with digital and so-called smart technology (see Kaun & Löfgren, forthcoming). The vignette describes digital infrastructures of residential housing in a Swedish town. Similar to the first vignette, it shows how an infrastructure for control is introduced through a narrative of care for citizens’ safety and well-being. The findings are based on field observations and interviews with representatives from the public housing company, the start-up company, and a tech incubator that facilitated the collaboration. The third vignette, reported by Irina and Juliane, is based on the findings from the research project DATAFIED (see Zakharova & Jarke, 2022), which explores what role digital data and associated data work play in configuring ‘good’ education in Germany. In the vignette, we draw on over 30 interviews with school personnel who perform data care work maintaining datasets required for the organisation of schooling but who lack control over how these datasets are designed.

Vignette 1: Demographic ageing and the digital welfare state

Demographic ageing has been described as one of the key challenges of the 21st century (United Nations, 2020). In particular, a rising demand for long-term care is articulated as a burden and threat to our welfare systems (Calvaresi et al., 2017). Welfare organisations increasingly delegate (parts of) their care obligation to digital technologies such as AAL, smart home devices and smart health applications (Bischof & Jarke, 2021; Cozza et al., 2019). Such digital welfare gerontechnologies are presented as solutions to ‘increasing the efficiency and productivity of used resources in the ageing societies’ (Calvaresi et al., 2017, p. 240); they promise to support independent living and everyday activities, promote mobility and healthier lifestyles, ensure safety, and support caregivers (Dunne et al., 2021; Jovanovic et al., 2022). The following quote from a representative of a US-based predictive analytics health care application demonstrates the narrative around the values of digital welfare technologies as a response to demographic ageing: ‘So the shift in paradigm is to go more from the reactive senior care model which is what we do today […] into a proactive model […]. To do this, it requires lots of observation and today we are using human caregivers for all of that observation. [However, the] number of caregivers is not sufficient to take care of the aging population. It really brings us to that conundrum of how do we get beyond human observation. As you all know […] there is no such thing as 24-by-7 continuous human observation, it's just not scalable. But human observation also tends to be subjective. So it's unreliable, inefficient and oftentimes it's expensive’ (Valencell, 2019).

Through the ‘delegation’ of care obligations and care work to digital welfare technologies, such technologies increasingly become part of the care arrangements of welfare states. To do so, such systems monitor the homes and bodies of older people, ultimately increasing control over their lives and diminishing their privacy, autonomy, and agency.

The tension between control and care becomes visible when considering how such technologies reconfigure how, by whom, and for what purpose care is provided and received.

The narrative about providing better care through digital welfare technologies exemplified in the quote above neglects (and hence invisibilises) the work of embedding and maintaining such technologies in existing welfare care arrangements and making them work. In a series of workshops that Juliane conducted with 30 German social and health care providers about their experiences with AAL technologies, many pointed out how their care work suddenly had to extend to caring for digital technologies in order to maintain welfare services.

In particular, they stressed three points. First, more often than not, it is not older adults who decide to install digital welfare technologies in their homes but professional care providers or next of kin. There is a general suspicion and/or refusal of such technologies from many older adults. Participants argued that people who are trusted need to use their ‘leap of faith’ (workshop participant) to convince older adults to try/use/trust such technologies to become part of welfare care arrangements. Second, even if digital welfare technologies move into the homes of older adults, a lot of additional ‘care work’ for the technologies is required. During the workshop, a health care provider addressed this as long-term support ‘so that older adults do not have to fear that they are buying something and are left alone with it’ (workshop participant). Third, in many instances, so-called ‘digital ambassadors’ or ‘digital guides’ are seen as crucial for facilitating the relation-building between welfare technologies, older adults, and their everyday infrastructures: ‘The acceptance of such technologies by older people is not a problem, but rather existing infrastructures and financial aspects’ (workshop participant). This also points to the intersectional aspects of using and non-using gerontechnologies. For example, well-educated older men were reported to have much higher levels of digital literacy and access to AAL technologies than migrant older women. In addition, many commercial welfare technologies target those older populations who are understood to drive the ‘silver economy’ and have the economic means to self-determine which welfare technologies they require (see also Cozza et al., 2019). Overall, such welfare technologies reduce the care work of caregivers to mere observation that is controlling the lives of older adults, rather than appreciating, for example, the emotional and affective labour involved in long-term care work. Care in a digital welfare state then becomes mainly framed as a matter of effectively controlling the lives of older people.

Vignette 2: Infrastructures of security and surveillance in social housing constituting care

One important pillar of welfare provision has been access to affordable housing (Meagher & Szebehely, 2013). Consequently, public and social housing has had a historically strong link to the emergence of the welfare state. In the Nordic welfare system for example, the aim to provide affordable and modern housing for all guided the housing policies and subsidies for public housing from the mid-1930s onwards. The following example draws on experiences from the Swedish welfare system as an illustration of how public and affordable housing is increasingly intertwined with digital infrastructures often motivated by providing better conditions for care provision.

Since the 1990s, municipal housing companies have become increasingly commercialised. The public housing companies are now run according to commercial principles, and the possibilities for value transfers to the municipalities have been widened. In this context of increased commercialisation, the introduction of digital infrastructures in the social housing sector is often motivated by, first, efficiency and, second, security and safety arguments. To explore the connection between digital infrastructures, public safety, and social housing, Anne conducted a case study of smart sensors that have been installed in several common areas of social housing facilities. This includes a residential housing block and a garage where residents pass by daily. The sensors register data on movement patterns, sounds, and smells in common areas such as hallways. They can also register the number of mobile phones used nearby. The simple box looks similar to a smoke detector and attracts little attention from residents.

Initially, the sensor collected data for about two weeks. After this initial data gathering, Collactivate, the start-up company developing the sensor, and the public housing company identified events that would be defined as deviating from ‘normal’ behaviour patterns, for example the congregation of people and activity in common areas late at night. Based on the definition of so-called ‘thresholds for deviances’, a number of measures have been set up. At an initial stage, the box might activate blinking lights or an alarm sound to disturb people. In a next step, security, or the so-called safety-group, would be alerted and sent to the area. If the public housing company repeatedly notices deviations from what has been defined as the usual movement patterns for the same area, they will increase the measures and systematically document the identified problem through, for example, additional surveillance. One representative from the public housing company confirmed that the measures put in place will always start with the least expensive one, for example the blinking light or disturbing sounds.

The systematically registered sensor data are made available to the housing company through a dashboard that highlights hotspot areas. However, the visualisations and datasets are used not only for direct, partly automated safety measures, such as blinking lights or security patrols, but also feed into broader networks, for example, a collaboration with local police and the municipality. The hotspots identified through the datapoints produced with the help of the sensors inform decisions on resources and prioritisations in local police work and the safety work of the municipalities. The involvement of the residents in the introduction process of the digital monitoring infrastructure was low. Initially, it was not clear whether residents should be informed about the smart sensors at all. In the end, they received a note from the housing company informing them about the pilot project.

In this case, the tension between care and control lies in the double aim of providing safe housing while the means to achieve this goal are exclusively focused on monitoring and controlling deviant behaviour. It comes as no surprise that the sensor technology was piloted in residential housing blocks that are primarily homes to populations with a migrant background and that are situated in so-called vulnerable areas. The sensor project highlights that vulnerable populations often emerge as testing grounds for new technologies relating to the intersection of class-based racial and citizenship dynamics (Benjamin, 2019). Understandably, these areas emerge as an excellent context for sensors that supposedly help to efficiently fight vandalism, which in turn – following ideas of the broken windows theory (Shapiro, 2018) – potentially leads to more serious crime.

Even if the housing company and municipality are aware of underlying logics of exclusion and vulnerability, they see the collection of data and automated response to deviant behaviour as an efficient way to contribute to safety in public areas, even if only in the short term. While the interviews hint at long-term investments, including in social work and increased resources for these vulnerable areas, these monitoring technologies are considered an important starting point, for example to systematically evaluate public safety threats. However, instead of long-term care arrangements being implemented, they are postponed as the introduction of the sensors takes priority.

Vignette 3: Maintaining data as new educational care work

Public education is among the core societal domains concerned with the future development of the state as it performs an important function in educating citizens by introducing them to the culture, values, and operations of a society. This function, alongside the protection of the rights of children, leads to high demands on control and care, which coexist at multiple levels of education, from individual classrooms to the organisation of schooling to educational policies.

With the ongoing introduction of digital technologies into the educational domain, new actors and new rationalities become relevant (Williamson, 2022). Following the overall narratives of a digital educational transformation, educational technologies aim at increasing effectiveness and efficiency to facilitate more personalised learning. Hence, to justify the digital transformation of education, promises of care are made. Among them are promises of more individualised teaching and learning, more time for teachers to engage with individual students, and early prediction of possible educational risks. The digital technologies provided by commercial actors such as Microsoft or Google, and design teams incorporated into the public administration of education, become intermediaries between school actors and policy-makers. Irina and Juliane studied such transformative processes in German K-12 schools (see Zakharova & Jarke, 2022 for more detail). We observed that maintaining datasets becomes an essential part of personnel's care for their school as the datasets represent the schools to controlling agencies, (future) schoolchildren's parents, and broader publics. Many control practices become directed at overseeing educational data. Technologies producing such data – learning management systems (LMSs) – however, require a lot of additional, partially unaccounted for, and not fully recognised maintenance work. LMSs rely on both manual input of data about the student body (from names and addresses to grades and assessments) and the automated logging of behavioural data within such systems. Other, informal systems are usually prohibited, even though each school has its own additional tools to manage data before submitting it to an LMS. School personnel using the LMS respond to the data requirements they receive through the systems, having little control over the output.

Elsewhere we have shown how, in German schools, secretaries carry out much of the infrastructural and data work (Zakharova & Jarke, 2022). Only marginally considered as users of such systems and, hence, usually not included in the decision-making about their design, secretaries do most of the manual data input. They spend days at the beginning of each school year manually updating data about students (e.g. address, parents'/guardians' status required for disclosing a child's personal information), correcting ‘wrong’ automated data entries (e.g. class assignment based on age rather than study track record), creating workarounds when certain data required by the systems is missing from the students’ files (e.g. birthdays or birthplaces of refugee students, their previous study records), or eliciting such missing data by personally seeking out students and their guardians. By inputting data into the systems, secretaries make sure that every student is accounted for – assigned to a class, eligible for any relevant social services, etc.

Remarkably, this work, while being an essential part of secretaries’ jobs, often does not appear in job descriptions or advertisements, and would probably warrant higher wages than the often part-time working women (as they were in our case) receive. The predominantly male and highly educated members of the federal state's data team, by contrast, belong to the more highly valued and rewarded professional group of data scientists or software designers. Beyond this recognition, their work often remains opaque to school actors, hindering bottom-up control or questioning of the system design and the data it requires.

Developing workarounds whenever they lack control to adjust the system to the actual needs of school organisation, the secretaries and other school actors we spoke to often wonder how certain aspects of the design of educational technologies came to be. In relation to this question, technology designers incorporated into ministries of education report mediating between schools’ and political requirements for such systems. Schools, responsible for the education and well-being of their students, are only partially included in such negotiations.

The care and maintenance tasks are not the only reason for the misalignment between the gendered and rather low-paid work of secretaries and the decision-making practices of software designers. Attending to this misalignment through the lens of care, however, allows researchers to question the division between highly valued design tasks and invisible everyday data care work.

Three tensions between care and control in digital welfare states: values, infrastructures, and work

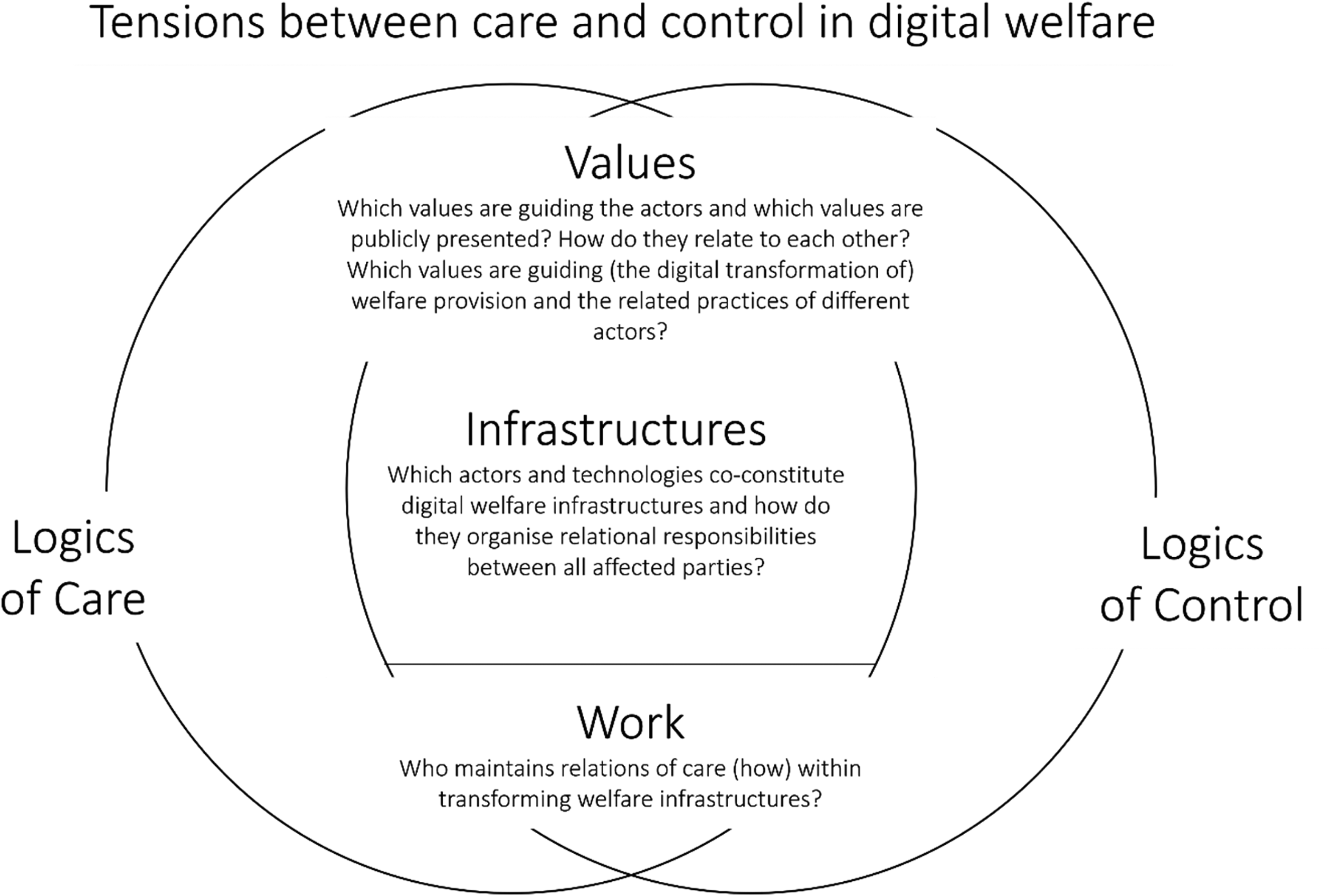

In the following, we propose an analytical framework (see Figure 1) for making sense of the tensions between care and control that can be observed in our three empirical vignettes of digital welfare. This framework combines three interrelated layers of how these tensions come to be and play out empirically: the values governing public care provision, the infrastructures for organising care work, and the infrastructural as well as data work to configure and maintain care arrangements. We argue that if there is a tension on any one of these levels, this tension affects all levels of the framework, regardless of whether these analytical levels empirically comprise different actors, hierarchies, organisations, and technologies. We demonstrate how the design and maintenance of digital welfare infrastructures connect actors within distributed arrangements of public service provision and mediate care and control according to situated practices of service delivery.

Figure 1. A framework for analysing tensions between care and control in processes of digital transformation.

Welfare state systems have fundamentally been based on the balance between providing care – to different degrees depending on the specific welfare system – and control. This balance is currently being shifted by data-driven services and forms of surveillance that have led to the emergence of digital welfare states and become an integral part of them. By design, digital technologies prioritise control and surveillance rather than care. The logic of control is increasingly expressed through safety and surveillance initiatives such as the ones explored in this article. This refers to the paternalistic tendencies of many care relations that aim to enforce or prevent certain behaviour: for example, promoting what are considered healthy lifestyles by punishing or more subtly nudging citizens (Kersbergen & Vis, 2013). The care practices within the welfare state range from providing support for fundamental needs to supporting citizens in their flourishing and good life (Jakobsson et al., 2022; Lomborg, 2020). In contrast to the logic of control, care can also be characterised as more open-ended and as something that requires interaction as well as negotiation between different actors. Providing care also necessitates the contextualisation and situatedness of needs as well as desires (Ruckenstein & Turunen, 2019).

Caring values

The first perspective addresses the kinds of values guiding the introduction and provision of digital welfare services in relation to the values used to justify digital transformation. This is prominent in all vignettes, where digital welfare technologies promise to make current practices more efficient, scalable, objective – hence better – while at the same time normatively positioning the digital care provision above values such as older adults’, residents’, and school personnel's privacy, autonomy, and agency. All three vignettes illustrate how presumably caring values justify control along intersecting lines, visible to different degrees, of age (Vignette 1), migration background (Vignette 2), and gendered work (Vignette 3).

This has two implications in the socio-technical arrangements of digital welfare. First, by shifting responsibilities to individual people, state care provision can take a patronising turn, placing individuals in the position of having to prove why they need certain care or why some systems they require for flourishing need to also be cared for and, therefore, require additional resources for maintenance.

The second implication stems from the design of technologies of digital welfare. Research has repeatedly shown that design for, and values of, individualisation are common for digital technologies (Bigo et al., 2019; Lake, 2017). While such technologies advertise personalisation, their design, in contrast, advances standardisation of individual cases and experiences. By design, no technological solution can accommodate the infinity of exceptions to the standard. Rather, technologies materialise certain ‘norms’ identified and inscribed in their design (Costanza-Chock, 2020b). This is particularly true for technologies used by public actors that are required to serve heterogeneous groups of citizens. Such core values of care as recognition of otherness and its intersectionality become written out in the technology design in favour of standardised solutions. From that perspective, state care aiming to support individual citizens promotes gathering more data about already marginalised groups, making them more vulnerable to mechanisms of state control.

Infrastructures of care

The second framework level considers how digital technologies organise practices of care and control. From this perspective, infrastructures are relational: they define relationships and distribute practices between and across various public and private, communal, as well as technological actors. They often stay invisible and require maintenance work to function (Star & Ruhleder, 1996). Digital infrastructures have also been described as modular (composed of multiple individual systems) and scalable, often transgressing one societal domain or practice (Plantin et al., 2018). As such, infrastructures can be understood as political materialisations of careful or careless values, setting teleo-affective dispositions and values which guide practices and define the power relations between various actors. For example, our vignettes 2 and 3 show how digital welfare infrastructures define which individuals will be recognised as ‘good’ housing residents or as pivotal members of school administration, or where and for what purpose to allocate resources – for example, for policing the neighbourhood or improving quality of living. At the same time, as these infrastructures are adapted by their users or beneficiaries, they become mediators of sometimes diverging values and practices. School secretaries, for instance, create informal infrastructures and adapt existing ones to ‘fix’ the failures of state care according to their own perspective on what needs to be cared for and how.

Care work and data work

The third perspective draws on the early conceptualisations of (domestic) care work in addressing the invisibility and precarity of work required from various actors involved in care provision and reception in welfare states. It shifts the focus onto workers within the public administrations and other publicly funded or publicly relevant institutions like healthcare-related facilities (Stevens & Beaulieu, 2023), schools (Zakharova & Jarke, 2022), and libraries (Kaun & Forsman, 2022), or the (non-)citizens (Habich-Sobiegalla & Kostka, 2022) who grapple with the necessity to attend to digital data in their care practices. Besides their traditional work, these actors are expected to perform tasks supporting the digital transformation of care provision, as Vignette 3 illustrates. Similarly, people affected by the digital transformation of welfare provision also need to care for the infrastructures of care service provision in order to receive these services, as is the case with older adults in Vignette 1. Whether this care work remains unrewarded and/or unacknowledged, leaving those in need of care with a double responsibility to care for themselves and the technologies, oftentimes depends on their age, gender, migration background, place of residence, or work roles.

Further, the care work of embedding digital technologies into existing relations between the state and its citizens, communities, civil society, media, and commercial actors often remains invisible and is implicitly allocated to the groups affected by these (new) technologies. Vignettes 1 and 3 illustrate how some older people and school actors lack their own resources – literacies, capabilities, monetary compensation – to perform such integrational care work. Vignette 2 illustrates, in contrast, how technological infrastructures configure the data outputs, while the residents would be required to adjust their behaviours in order to produce data representing a ‘good’ neighbourhood. In sum, while digital infrastructures of the welfare state serve to facilitate and control certain values, behaviours, and social orders, they leave little leeway for the tedious, complex, invisible work needed to integrate the technological systems into the existing socio-technical arrangements.

It is this data care work that ascribes to data the meanings and values compliant with institutional goals, turning these data into an informationally, politically, and economically valuable and usable resource (Jarke et al., 2022; Stevens & Beaulieu, 2023). Besides the data collection, cleaning, and dataset preparation required to conduct any kind of data analysis, data care work includes interpretation, meaning-making and negotiation; repairing and fixing ‘broken’, incomplete, or ‘wrong’ datasets; and translating the meanings of data and datasets as they move across digital infrastructures and actors following their own, often conflicting perspectives and values. Often, such data care work only implicitly engages with digital data and datasets. Rather, it is about upholding relationships between organisational, institutional, individual, communal, and public actors who are brought together through digital welfare technologies (Pink et al., 2018). Simply put, much of the care work cannot be translated into data outputs to be accounted for.

Conclusion

In this article, we bring the feminist concept and ethics of care together with sociological literature exploring state functions and services to explicate how the use of digital technologies reconfigures the welfare system. To analyse the arising tensions between state control and care, we proposed a three-dimensional analytical framework directed at different constitutive elements of the socio-technical arrangements of the digital welfare state: values, digital infrastructures, and data work. Following Power and colleagues (2022), we add to the literature suggesting the care lens to consider the shadows between care and control and to take what occupies these shadows as a starting point of research engagements.

From that standpoint, welfare technologies achieve their caring values of convenience, better health or education, security and safety, or reduced workload differently for different groups of people. In foregrounding one such presumably caring value, however, they fail to live up to the intersecting and lived realities of people of different genders, ages, migration backgrounds, geographies, and professions. As digital infrastructures prioritise control and standardisation over openness, it is challenging to embrace the open-ended outcomes of care in digital welfare. Such care ‘cannot be completed in the measurable time that both bureaucratization and commodification require’ (Lynch et al., 2021, p. 54) and might not be amenable to digitalisation processes. For example, much of the care work described in our empirical vignettes and the subsequent discussion is not only invisible to the state actors but also difficult to quantify and datafy so as to be accounted for or rewarded.

Furthermore, intersectionally marginalised groups, such as older adults lacking financial resources and digital literacy skills, or residents of social housing in ‘vulnerable’ city districts, already experience more control and data extraction about their lives. Any further datafication of their care work in order to make it more visible and acknowledged would not necessarily benefit them and might instead stabilise their marginalised and vulnerable positions. This points to a broad gap in the currently predominant practices of introducing and implementing digital transformation. Many resources are deployed to develop, maintain, and support digital infrastructures while the – literal – relational work that these infrastructures are doing is neglected. It remains an open question what alternative modes, attuned to the situatedness of care, can be developed. We also echo researchers and activists who argue for more community-led, independent, participatory projects to develop collective solutions to the lack of (appropriate) care. The overview provided in this article may serve as an analytical tool for researchers and practitioners in public administrations who wish to follow the call of the Care Collective ‘to put care in the very centre of life’ (The Care Collective et al., 2020, p. 5).

Acknowledgements

The research reported in section ‘Vignette 1: Demographic ageing and the digital welfare state’ was conducted by Juliane Jarke while she was affiliated with the Institute for Information Management Bremen (ifib). The research reported in section ‘Vignette 2: Infrastructures of security and surveillance in social housing constituting care’ was conducted by Anne Kaun and partially funded by the Baltic Sea Foundation (Grant S2-20-0007). The research reported in section ‘Vignette 3: Maintaining data as new educational care work’ was conducted by Irina Zakharova and Juliane Jarke while they were affiliated with the Institute for Information Management Bremen (ifib) and funded by the German Federal Ministry of Education and Research (BMBF, research project DATAFIED, funding number 01JD1803A). The authors thank the special issue editors and the anonymous reviewers for the insightful and helpful comments on the earlier versions of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research by Anne Kaun was partially funded by the Baltic Sea Foundation (Grant S2-20-0007). Research by Irina Zakharova and Juliane Jarke was funded by the German Federal Ministry of Education and Research (BMBF, research project DATAFIED, funding number 01JD1803A).

Author Biographies

Irina Zakharova is a postdoctoral researcher at the Leibniz University Hannover. Her research is in the fields of Data Studies and digital and feminist Science and Technology Studies. Irina is interested in data practices in public welfare services provision, datafication of public education, and academic knowledge production in the datafied world.

Anne Kaun is Professor of Media and Communication Studies at Södertörn University in Stockholm. In several projects, she is currently exploring algorithmic automation in welfare provision as well as the history of digitalization in Sweden. In 2023, she published the book Prison Media together with Fredrik Stiernstedt with MIT Press.

Juliane Jarke is Professor of Digital Societies at the University of Graz. She works at the intersection of Critical Data Studies, digital STS and Participatory Design Research with a focus on the public sector, education and on ageing populations. Her latest book is the edited volume “Algorithmic Regimes: Methods, Interactions, Politics” (2024, Amsterdam University Press).