Abstract

In the social security sector, both in the Netherlands and abroad, the irresponsible use of algorithmic profiling technologies to combat misallocation of social security benefits has contributed to instances of discrimination. While a developing legal framework (made up of fundamental rights, the GDPR, and the recently adopted AI Act) requires risks of discrimination to be identified and mitigated, it offers limited guidance on how to implement these obligations. Social security agencies seeking to address the systemic risk of discrimination in algorithmic profiling are frustrated by the associated sociotechnical complexity, scientific uncertainty, and socio-legal ambiguity. This study examines how two Dutch social security agencies address discrimination risks in algorithmic profiling, using van Asselt and Renn's principles of systemic risk governance (communication and inclusion, integration, and reflection) as a theoretical framework. The research draws on case studies involving document analysis and interviews. The study highlights the importance of socially robust risk governance structures that encompass both simpler rule-based selection systems and trained algorithmic systems, that include scientific and client perspectives, and that draw on the experiences of other agencies.

Introduction

The Netherlands has repeatedly seen cases in which citizens have been discriminated against by social security agencies using algorithmic profiling systems. In this paper, algorithmic profiling is used as an umbrella term to refer broadly to the design, implementation, and use of algorithmically embedded risk profiles within prevention and enforcement-oriented risk selection processes. This encompasses both simpler, rule-based algorithms and more advanced algorithmic systems trained using artificial intelligence techniques such as machine learning. These systems are employed to identify and select citizens from databases where there is a potentially higher risk of social security benefit misallocation—including errors, misuse, abuse, or, in some instances, fraud (Autoriteit Persoonsgegevens, 2024; European Union Agency for Fundamental Rights, 2018b: 19–22; Human Rights College, 2025; Al Khatib et al., 2024).

Instances of discrimination include the application of the ‘System Risk Indication’ in so-called ‘problematic neighborhoods’ (SyRI, 2020), the discriminatory mislabeling of alleged fraudsters by the Dutch Tax Authority (Amnesty International, 2021; Autoriteit Persoonsgegevens, 2021), the use of a biased machine learning algorithm for supervising welfare fraud in Rotterdam (Constantaras et al., 2023), and, most recently, the deployment of an indirectly discriminatory system to detect fraud in student benefits (Autoriteit Persoonsgegevens, 2024). In light of these scandals, citizens are more aware and more distrustful of the government's use of algorithmic profiling technologies (KPMG, 2023), and governmental agencies are wrestling with how to address discrimination risks (Digitale Overheid, 2024; Rensen, 2024).

Under fundamental rights legislation, the rights to non-discrimination and equal treatment establish substantive legal principles safeguarding against discrimination. 1 The more specialized General Data Protection Regulation 2 (hereafter GDPR) and the recently adopted AI Act 3 introduce provisions obligating organizations using algorithmic profiling technologies to identify and mitigate risks to fundamental rights, including discrimination. Risks to fundamental rights posed by technological advancements are not only used to justify regulation; within the GDPR and AI Act they are also treated as an object of regulation, requiring accountability and action from organizations to address these risks (De Gregorio and Dunn, 2022; Gstrein, 2022; Kusche, 2024; Yeung and Ranchordás, 2024). 4

Mitigating the risk of discrimination in algorithmic profiling is complex and knowledge-intensive, as discrimination in this context can be regarded as a ‘systemic risk’—a recognized risk problem within the field of risk governance (Renn et al., 2011; Van Asselt and Renn, 2011; Yeung and Ranchordás, 2024: 66). Systemic risks are risk problems that cannot easily be measured in terms of probabilities or quantifiable effects due to high degrees of complexity, uncertainty, and ambiguity (Renn et al., 2011). In particular, discrimination in algorithmic profiling can be characterized as a systemic risk due to the sociotechnical complexity of algorithmic profiling systems, scientific uncertainty in identifying and mitigating risks to fundamental rights, and socio-legal ambiguity associated with a broad regulatory framework and divergent views on the identification, mitigation, and acceptance of risks. Risk governance authors van Asselt and Renn posit that a lack of clarity and knowledge exists about how to effectively address systemic risks (Klinke and Renn, 2012: 276; Renn and Klinke, 2016: 245–246).

In light of a developing legal landscape requiring organizations to address discrimination risks in algorithmic profiling, it becomes relevant to consider how organizations can effectively address these risks in this context. The established notion of systemic risk governance, consisting of the identification, assessment, and management of systemic risks in risk-related decision-making, could offer relevant insights (IRGC, 2018; Van Asselt and Renn, 2011). In particular, van Asselt and Renn propose three principles for systemic risk governance (communication and inclusion, integration, and reflection), which should be seriously considered in developing responsible and socially robust systemic risk governance strategies (Van Asselt and Renn, 2011).

Against this backdrop, the following research question is considered: How do public sector social security agencies operationalize their European legal obligations to address discrimination risks in algorithmic profiling? To answer this question, empirical legal methods are used, involving case studies in two public sector social security agencies using algorithmic profiling technologies and addressing potential discrimination risks. Their supervisory and enforcement competences, including information collection and processing, are grounded in the Work and Income Implementation Structure Act (Wet SUWI), which grants broad discretion regarding the implementation of this legislation. 5 Within this discretion, both agencies employ algorithmic profiling systems to identify welfare recipients where there is a higher risk of misallocation of welfare benefits. To this end, Agency 1 uses rule-based risk selection systems, while Agency 2 additionally uses more complex trained models. Likewise, both agencies, in light of a developing legal framework consisting of fundamental rights legislation, the GDPR, and, where later applicable, the AI Act, are identifying and mitigating risks of discrimination.

The study aims to generate insights into how organizations (can) fulfill their legal obligation to tackle the systemic risk of discrimination in algorithmic profiling. The principles of systemic risk governance are used as a framework to describe and analyze how these agencies tackle discrimination (Van Asselt and Renn, 2011). Both agencies studied are evolving in their approaches to tackling misallocation of social security benefits and in how they address risks of discrimination. This study thus provides insights into these organizations’ approaches and analyzes them from a broader academic perspective, thereby contributing to the theoretical foundation of risk governance in this context. It is worth noting that while the appropriateness of algorithmic profiling can and should be subject to public debate, this study is limited to examining the governance of the use of these technologies.

After this introduction, we discuss the contextual and theoretical underpinning of the study. The following section introduces the methodology used, which is based on the principles of systemic risk governance. Then, using the risk governance principles as a descriptive lens, we present the findings on how the two agencies address discrimination. Finally, the two approaches are discussed and reflected on in light of the systemic risk governance principles and literature.

Context and theoretical background: moving from risk regulation to risk governance

Regulating discrimination risks in algorithmic profiling

The legal basis upon which the agencies studied operate, the Wet SUWI, does not specify safeguards against discrimination. Thus, agencies are tasked with interpreting and implementing legal obligations aimed at safeguarding against discrimination, stemming from the European Convention on Human Rights and the GDPR, while also anticipating requirements that will be applicable under the AI Act. 6 Furthermore, when these agencies act within the scope of EU law, such as when applying the GDPR or later the AI Act, they are also bound by the Charter of Fundamental Rights of the European Union. 7

Under fundamental rights legislation, the right to non-discrimination entails the substantive prohibition of discrimination based on protected grounds such as race or ethnicity, while equal treatment requires any differential treatment to be reasonable and fair (Borgesius, 2020; Eder, 2021; Gerards and Borgesius, 2022; Tilburg University, 2021). 8 The rights to privacy and data protection regulate the collection and use of data to prevent discriminatory outcomes in algorithmic profiling (Ligue des droits humains, 2022; 9 Roberts, 2015: 2127; The Hague District Court, 2020 10). 11 Additionally, the right to an effective remedy aims to ensure that individuals can challenge and seek correction for discriminatory decisions made by organizations employing algorithmic profiling systems (O'Sullivan, 2024). 12 These rights impose both ex-post protections and ex-ante obligations, requiring organizations to address risks of discrimination when designing and deploying algorithmic systems (Borgesius, 2020; Tilburg University, 2021).

More specific to the context of algorithmic profiling, the GDPR and AI Act introduce complementary safeguards requiring agencies using these technologies to identify and mitigate risks of discrimination. The GDPR applies more broadly to the automated processing of personal data, including profiling, which refers to processing personal data to evaluate personal aspects such as one's behavior. 13 This can include, for example, the use of both simple rule-based and complex trained risk profiling systems to select groups where there is a risk of misallocation of benefits (Autoriteit Persoonsgegevens, 2024).

In relation to such systems, the GDPR requires, for example, that processing is lawful and fair, that data such as race and ethnicity are not processed, and that there is meaningful human intervention (Article 29 Working Party, 2017a). 14 Importantly, where data processing poses a high risk to the fundamental rights of citizens, as in the case of algorithmic profiling, Data Protection Impact Assessments (DPIAs) are prescribed to identify and mitigate risks to the privacy and data protection of data subjects, including discrimination (Article 29 Working Party, 2017b). 15

Similarly, the recently adopted AI Act, once applicable, will require organizations to manage risks across the life cycle of high-risk AI systems, including governance practices, assessments of risks to fundamental rights, and the management of such risks. 16 While the AI Act provides a broad definition of AI systems, it remains unclear exactly when risk profiling systems fall within the scope of the regulation (European Commission, 2025; Hacker, 2023; Sousa e Silva, 2024). 17 Thus, while these legal frameworks seek to impose obligations to address discrimination risks associated with technology such as algorithmic profiling, they offer limited guidance on how agencies should operationalize these requirements in practice.

Challenges in addressing the systemic risk of discrimination

Addressing the systemic risk of discrimination in algorithmic profiling is, however, shrouded in complexity, uncertainty, and ambiguity (Van Asselt and Renn, 2011). Risks are complex when they are multicausal, which makes it difficult to attribute discriminatory outcomes to specific factors (Van Asselt and Renn, 2011: 436–437). Discriminatory outcomes are multicausal as they can be traced back to choices and unmitigated social and technical risks in the life cycle of such systems, including their design, implementation, and use (Haitsma, 2023; Marabelli et al., 2021). These include the use of biased training data, directly or indirectly discriminatory predictive criteria, biases in the manual review of selected cases, insufficient monitoring and evaluation mechanisms, and opacity toward external parties including citizens (Amnesty International, 2021; Constantaras et al., 2023; DDPA, 2024; Haitsma, 2023; Mehrabi et al., 2021; SyRI, 2020).

Uncertainty arises when there is limited scientific knowledge on how to assess and manage risks and potential negative outcomes (Klinke and Renn, 2012: 276). In the context of algorithmic profiling, it is well-established that discrimination is a recurring and structural phenomenon, as demonstrated by numerous incidents that clash with the right to non-discrimination, both within and outside of the Netherlands (Amnesty International, 2021; Bouwmeester, 2023; Chowdhury, 2024; Constantaras et al., 2023; NOS, 2024; Pelgrim, 2025; SyRI, 2020). However, interdisciplinary research into the causality of these discriminatory outcomes is still in its infancy (Algorithm Audit, 2024a; Dankloff et al., 2024; Haitsma, 2023; Marabelli et al., 2021). While methodologies for addressing discrimination in algorithmic profiling are being developed, further research is still needed to examine their utility and effectiveness (Boonstra et al., 2024; Franzke et al., 2021; Meuwese et al., 2024; Tilburg University, 2021; Vale et al., 2022; Vetter et al., 2023).

Finally, risks are ambiguous when views differ on how to identify, appraise, manage, and justify risks and potential adverse effects (Renn et al., 2011; Van Asselt and Renn, 2011: 437). The legal framework for algorithmic discrimination is broad, key concepts such as fairness, bias, and discrimination have multiple and often ambiguous definitions, case law is limited, and the academic field is still developing; together, these factors create socio-legal ambiguity (Gerards and Xenidis, 2021: 28, 107–117; Al Khatib et al., 2024; Mehrabi et al., 2022; Meuwese et al., 2024; Zuiderveen Borgesius, 2023). In particular, views in society and within organizations may differ regarding when the use of algorithmic profiling technologies is appropriate, if and when it is justified to use certain predictive indicators, to what extent bias and overrepresentation of specific groups are acceptable, or how to facilitate meaningful transparency or explainability (Amnesty International, 2024; Loi et al., 2020; Meuwese et al., 2024; Tweede Kamer der Staten-Generaal, 2024). This sociotechnical complexity, scientific uncertainty, and socio-legal ambiguity leave organizations on an uneasy footing regarding how to effectively meet their legal obligations to address discrimination.

From risk regulation to risk governance

Synthesizing decades of interdisciplinary research and global efforts directed towards risk governance, authors van Asselt and Renn developed the principles of systemic risk governance to inform responses to systemic risk. The principles of communication and inclusion, integration, and reflection stress the co-production of knowledge to govern risks, with the robustness of this approach being demonstrated by the wide variety of contexts in which it is applied (Van Asselt and Renn, 2011). These include environmental and climate risk, urban planning, supply chain risk, disaster management, public health, and, more recently, data governance (Ahlqvist et al., 2020; Taebi et al., 2020; Di Guilio, 2023; Hanssen et al., 2018; McHugh et al., 2021; Micheli et al., 2020; Renn, 2015; Renn and Klinke, 2016).

Regarding communication and inclusion, communication between the variety of actors included in risk governance is at the core of effective risk governance processes. This involves ensuring the exchange of knowledge, experience, concerns, and perspectives between relevant parties, which include governmental actors, scientific experts, and affected parties or citizen interest groups (Van Asselt and Renn, 2011: 440). Inclusion stipulates that organizations must consider which actors to include, the mechanism for their inclusion, and the scope or mandate of their role in addressing systemic risks (Renn et al., 2011: 246). Mechanisms can, for example, include expert panels, citizen advisory committees, and impact assessments (Renn, 2015: 14; Renn et al., 2011: 246).

Secondly, the integration principle refers to establishing how the resulting knowledge is combined and used to identify risks and address those risks, having due regard for potential consequences, controllability, tolerability, and acceptance (Van Asselt and Renn, 2011: 441–443).

Lastly, according to the reflection principle, organizations must, together with a variety of actors, consistently and intentionally reflect on their approach to addressing the systemic risk in question, to avoid mistreating it as a simple risk (Van Asselt and Renn, 2011: 442–443). These principles aim to promote social learning and co-production of knowledge among parties, thereby fostering legitimacy and trust, and socially robust risk governance solutions based on collective input (Renn, 2015: 14; Renn and Klinke, 2016: 254; Renn et al., 2011: 246).

Methodology

This research is based on two case studies conducted in public sector social security agencies in the Netherlands between September and December 2024. The agencies were selected because they operate on the same legal bases while providing different social security benefits, use algorithmic profiling in combatting misallocation of benefits, and are proactively operationalizing and responding to their developing European legal obligations by addressing potential risks of discrimination. In order to gain access to the agencies and carry out the research, approval was needed from the University of Groningen and from both agencies.

The research considers how organizations fulfill and (can) operationalize their European legal obligations to address discrimination in algorithmic profiling. Based on qualitative data and insights, the study considers and analyzes whether and how this legal framework is stimulating risk governance in the agencies concerned. An analysis of how different national and supranational legal frameworks are interpreted and applied in specific risk-related decisions in the identified risk governance structures is outside the scope of this paper.

The principles of systemic risk governance are applied to two agencies, to understand how they approach and address the systemic risk of discrimination. As such, the principles of communication and inclusion, integration, and reflection are used as a lens to explore and describe their risk governance approaches. Examining naturally emerging similarities and differences between the two case studies provided insights into how public sector social security agencies address—or can address—risks of discrimination.

Mixed methods were used to collect data during these case studies. These primarily included internal document analysis and semi-structured interviews with relevant actors within the agencies, but also, where permitted, attendance at meetings and the recording of observations. The data were collected based on informed consent, the results were presented to the agencies for feedback, and both agencies reviewed the publication to ensure factual accuracy. Furthermore, both organizations asked to be pseudonymized and are thus referred to as “Agency 1” and “Agency 2”.

The data collected were used to understand the profiling process and how these organizations approach the governing of risks of discrimination. The internal document analysis included organizational policy documents, process descriptions, impact assessments, and relevant reports. Additionally, the author was allowed in some instances to attend meetings and take notes, which, after being reviewed by the organization, could be used as data.

During the study, 31 participants were interviewed. Participants were selected based on their roles and their involvement in the prevention and enforcement process, as well as the risk governance process. They included section managers and heads of departments; legal and policy advisors; privacy, ethics, information security, and external relations officers; data analysts; and risk researchers investigating potential phenomena of misallocation. In the interviews, questions were asked about algorithmic profiling processes and the governance of risks of discrimination. The interviews were transcribed, and subsequently analyzed using the risk governance principles, focusing on the overarching risk governance landscape, risk mitigation assessments, the actors involved and their input, and mechanisms for reflection.

Results

This section first briefly examines the algorithmic profiling process and the systems used for prevention and enforcement in these agencies. Secondly, the focus is placed on how the agencies approach and address risks of discrimination in algorithmic profiling, beginning with a brief look at their overarching governance landscape, before examining their risk governance approaches through the lens of the risk governance principles.

Algorithmic profiling in Agency 1 and Agency 2

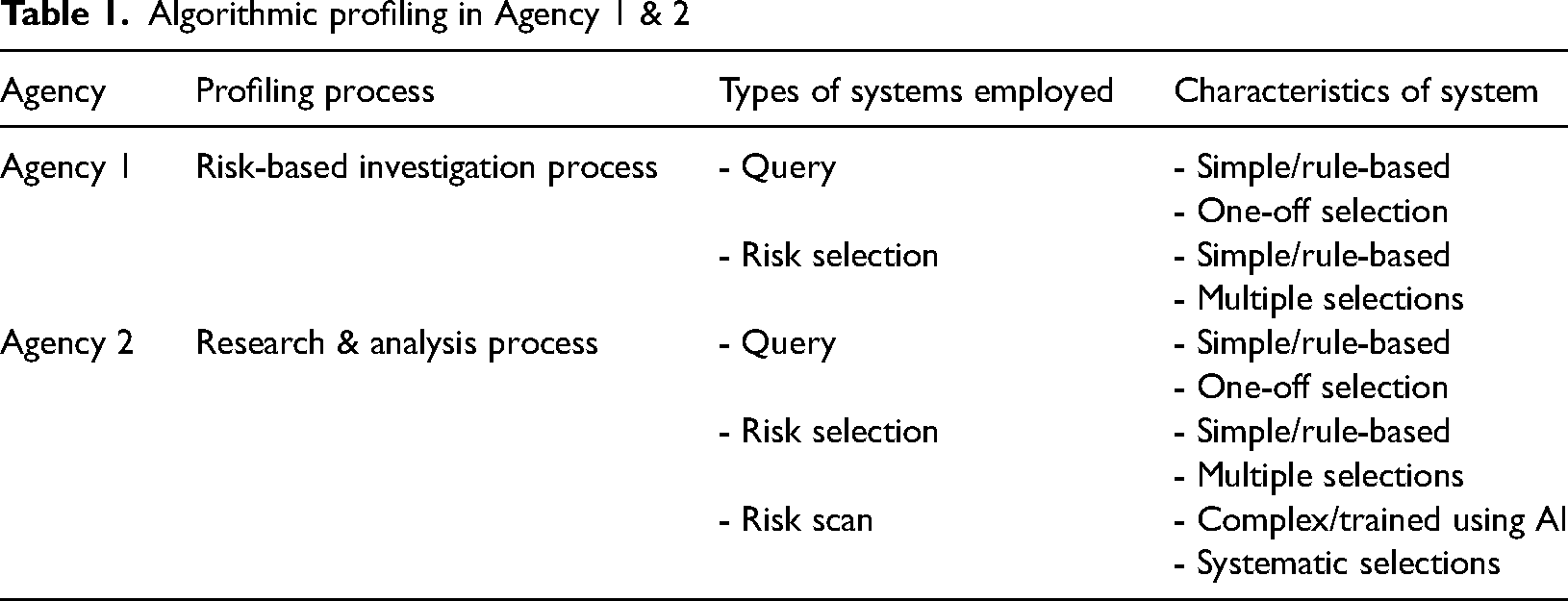

While their processes and types of systems differ, both agencies conduct risk-based investigations using risk profiles to combat potential misallocation of social security benefits. Regarding the prompting of a risk-based investigation, in Agency 1 risk profiles are created according to the risk-based investigation process, which is laid down in a process description (Risk-based investigations process description, 2024). This process begins with an internal or external signal (such as insights from prevention and enforcement agents or a fraud scandal in the media) indicating a phenomenon of misallocation. The body responsible for risk-based investigations in the prevention and enforcement department receives this signal, investigates it, and, if warranted, can take measures to mitigate the risk.

In Agency 2, during the Integral Fraud Risk Analysis process (hereafter referred to as IFRA), legal provisions enforced by the agency are examined to determine if there is a risk of misallocation. These risks are further investigated according to the “Research and Analysis Process” (hereafter R&A Process) laid down in the “Research and Analysis Protocol” (hereafter R&A Protocol) (R&A Protocol, 2024). The R&A process is carried out by multidisciplinary “Research and Analysis teams” (hereafter R&A teams) in the prevention and enforcement department, consisting of risk researchers, data analysts, risk mitigation advisors, enforcement agents, and sometimes a behavioral scientist. The goal of the R&A Process is to clarify the nature and scope of a risk of misallocation and investigate the population in order to inform the potential mitigation of the risk.

Query. In Agency 1, if warranted and legally and ethically justified, a simple rule-based risk profile, consisting of selection criteria, is developed and applied to select a population corresponding to the identified phenomenon. This profile enables a one-time selection, called a “query”, of a population in a database that fits the risk profile for investigation. Likewise, in Agency 2, if during the R&A process behavioral research, process research, and data analysis provide insufficient insight into a risk, client investigations may be used. In this case, a risk profile in the form of a simple rule-based “query” is created and applied to select a sample of clients matching the potential risk phenomenon. If the selection itself provides insufficient insights into the risk, then the cases are investigated, clients are spoken to, and, where warranted, clients are subject to an enforcement decision.

Risk selection. In Agency 1, if the query-based investigation proves effective and other mitigating measures prove inadequate, the same risk profile may be applied more than once to a larger population, to further tackle the misallocation. This requires all steps, assessments, and approvals prescribed in the “risk-based investigation process description” to be followed again. Similarly, in Agency 2, following the R&A process and if necessary and proportionate, algorithmic systems with a more repressive character may be used. In particular, if a high rate of misallocation is found and preventive measures prove inadequate, the query can be further developed into a recurring risk selection used to select and mitigate the risk within a larger population. Employees involved in building these systems explained that risk selections focus on “singular relationships because someone is selected based on if they fulfill all criteria (within a profile)”. While these selections typically consist of fewer variables, a data analyst clarified that they can be made up “of quite a few lines of code to select the correct population”.

Trained models. Finally, the agencies studied have either experimented with developing trained models or are actually using them. Previously, Agency 1 was developing a machine learning system for risk assessments to detect and predict non-compliance with benefit conditions. However, respondents stated that, due to increased societal attention to the risks associated with AI and the evolving regulatory landscape stemming from the AI Act, this development is currently on hold, as the agency would like to exercise caution.

Agency 2, on the other hand, uses risk scans. Risk scans are models trained using machine learning techniques, which use more variables corresponding to a phenomenon to make selections. These models use combinations of weighted variables to process data provided by clients, resulting in a score relevant to making selections. A data analyst explained that these systems are also more systematic, in that every month thousands of clients go through the system, which also brings the risk that mistakes and biases can have more far-reaching consequences (Table 1).
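The technical difference between a rule-based risk selection and a trained risk scan, as described by respondents, can be illustrated with a minimal sketch. It is illustrative only and does not reproduce either agency's systems: the indicator names, thresholds, and weights are hypothetical, and in a trained model the weights would be learned from data rather than set by hand as they are here.

```python
from typing import Callable, Dict, List

# Hypothetical client records; the indicator names are placeholders and
# do not correspond to any variable used by the agencies studied.
Client = Dict[str, float]

clients: List[Client] = [
    {"id": 1, "indicator_a": 14, "indicator_b": 3},
    {"id": 2, "indicator_a": 2, "indicator_b": 0},
    {"id": 3, "indicator_a": 20, "indicator_b": 1},
]


def rule_based_selection(
    population: List[Client], criteria: List[Callable[[Client], bool]]
) -> List[Client]:
    """Select only clients who fulfill ALL criteria in the profile."""
    return [c for c in population if all(rule(c) for rule in criteria)]


def weighted_score(client: Client, weights: Dict[str, float]) -> float:
    """Combine weighted variables into a single score."""
    return sum(w * client.get(name, 0.0) for name, w in weights.items())


def score_based_selection(
    population: List[Client], weights: Dict[str, float], threshold: float
) -> List[Client]:
    """Select clients whose combined score reaches the threshold."""
    return [c for c in population if weighted_score(c, weights) >= threshold]


# Hypothetical rule-based profile: both criteria must hold.
criteria = [
    lambda c: c["indicator_a"] > 12,
    lambda c: c["indicator_b"] >= 2,
]

# Hypothetical weights, hand-set here purely for illustration.
weights = {"indicator_a": 0.05, "indicator_b": 0.3}

rule_selected = [c["id"] for c in rule_based_selection(clients, criteria)]
score_selected = [c["id"] for c in score_based_selection(clients, weights, 1.0)]
```

In this sketch, the rule-based profile selects only the client who meets every criterion, whereas the score-based approach can also select a client who fails one criterion but scores highly on another. This is one reason why the variables and weights of trained models warrant separate scrutiny for indirect discrimination.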

Table 1. Algorithmic profiling in Agency 1 and Agency 2

Systemic risk governance in Agency 1 and Agency 2

Overarching risk governance landscape. The risk governance approaches in both agencies are embedded in algorithm policies, process descriptions and protocols, and assessment methodologies, which together facilitate the identification and mitigation of risks of discrimination. Regarding algorithm policies, Agency 1 has an overarching algorithm policy that is currently in the later stages of development (Algorithm Policy, 2025). This document lays down policy principles aimed at providing further norms for the responsible development, application, and use of algorithmic systems within the organization. To ensure the application of these principles, overarching roles and responsibilities relevant to the governance of algorithmic systems are established. These include figures and bodies such as the privacy officer and data protection officer, the agency's ethics center, an external ethics committee, and a working group on algorithms. In Agency 2 an algorithm policy is also in place, called the Model Risk Management Policy (hereafter MRM policy). This policy seeks to ensure the legally and ethically responsible development of models that are explainable and auditable. It delineates the life cycle of models, assigns roles and responsibilities along “three lines of defense” including internal and external actors, such as the Client Council, and prescribes assessments (MRM Policy, 2024).

In both agencies, there is currently uncertainty about how rule-based systems—queries and risk selections—fit within their respective algorithm policies. Agency 1’s algorithm policy was drafted with a focus primarily on trained algorithms. According to the section manager, it was only this year, with increased attention to simpler risk profiles, that it became “clear to us that risk profiles should also fall under the policy”. Thus, “the idea is that simpler risk profiles should fall under the algorithm policy, but we still need to figure out how”.

Likewise, in Agency 2 the MRM policy was designed with their risk scan systems in mind, with these systems constituting a “model” and thus falling within the scope of the policy. Accordingly, the MRM policy applies to risk scan systems and prescribes a Data Protection Impact Assessment (DPIA) and Fundamental Rights and Algorithms Impact Assessment (hereafter FRAIA) to assess risks of discrimination associated with these systems. However, risk selection systems technically fall outside the scope of the definition of a model, and the policy. As a result, the DPIA currently serves as the main assessment for risks of discrimination associated with risk selection systems. Several respondents indicated that the agency tries to apply elements of the MRM policy to new and revised risk selections, but is in the process of creating a policy tailored to these systems.

Given the lack of clarity on how simpler risk profiles fit within the algorithm policy, process descriptions and risk-based investigation protocols act as the most relevant guiding documents, formalizing processes and prescribing assessment points. In Agency 1, while not a formal policy, the “Risk-based investigation process description” reflects key trade-offs and policy choices and is strictly followed. The document is particularly relevant to discrimination, as it formalizes points for ethical and legal assessments during risk-based investigations. In Agency 2, for client research using simpler queries, the R&A protocol describes the methodology for the desired execution of the R&A process and prescribes points for assessments. This protocol prescribes the “ethical deliberation” and the DPIA as mechanisms for identifying risks of discrimination.

The systemic risk governance principles applied

Communication and inclusion

Both agencies use a variety of assessments to bring together stakeholders when identifying and mitigating the risks of discrimination. Both agencies use DPIAs and ethical assessments, and Agency 2, in the case of risk scans, uses the FRAIA.

DPIA. Firstly, in Agency 1, an overarching DPIA and privacy quick scans are important for addressing risks of discrimination. The privacy officers, together with the chief information security officer and members of the prevention and enforcement department, came together to conduct a DPIA for the main prevention and enforcement processes of the agency. This is complemented by privacy quick scans, where the prevention and enforcement department collaborates with the privacy officer to assess whether the existing DPIA covers a new data processing operation, or if additional risks, including discrimination, require a new DPIA.

Agency 2 conducts multiple DPIAs. The number and type of actors involved and the length of the assessments increase progressively from queries to risk selections to risk scans (DPIA Query, 2024; DPIA Risk Selection, 2024; DPIA Risk Scan, 2023). Prior to using a query, which assumes a one-time selection, a DPIA will be conducted. For risk selection systems, a DPIA is also conducted, as here multiple selections using a risk profile are assumed. DPIAs for these types of systems are conducted by members of the R&A teams together with privacy and security officers, and legal advisers. In the case of a risk scan, a DPIA will be conducted, since it involves a systematic selection of individuals based on the collection and processing of large amounts of data. For these systems, more actors participate in the assessment, including additional representatives from consultancy firms and the legal affairs department (DPIA Risk Scan, 2023). All DPIAs in Agency 2 result in a report that will ultimately be submitted to the data protection officer for approval.

Ethical assessment. Secondly, both agencies make use of ethical assessments. In Agency 1 an ethical assessment is used to bring together parties to identify and mitigate risks of discrimination related to each risk profile. The agency is still contemplating who will structurally participate in the ethical assessments in the future, and how the various actors’ roles within the assessment will take shape. The only assessment conducted so far included the section manager, a data analyst, a risk researcher, a member of the agency’s ethics research center, and an external relations representative (Ethical Assessment, 2024). Complementing the ethical assessment, a legal assessment is interwoven throughout the risk-based investigation process and ethical assessment, which includes the department of legal affairs with in-house lawyers.

In Agency 2, before any client investigation is conducted via the use of a query, an “ethical deliberation” takes place. Even though R&A teams may have sufficient grounds to opt for investigating clients using a query, the team must consider whether they should investigate clients. While this instrument is still being developed, it was explained that it should serve as a point of deliberation, a chance to discuss potential adverse impacts for the clients of Agency 2, including possible discriminatory impacts. In light of the interdisciplinary nature of R&A teams, this is a mechanism by which various parties pool expertise to reach a decision. So far, the ethical deliberation has been carried out once, without assigned roles, and the method for carrying it out is still in development (Memo Ethical Deliberation, 2024). In the future, the R&A team is interested in experimenting with involving the Client Council in the ethical deliberation, to enhance the transparency and explainability of client research.

FRAIA. Finally, unique to Agency 2's risk scan systems, the MRM policy additionally prescribes the externally developed FRAIA methodology as a mechanism for identifying and mitigating risks of discrimination. The FRAIA considers ethical aspects and risks to a broad range of fundamental rights, including the right to non-discrimination and equal treatment (Gerards et al., 2022). The assessment fosters interdisciplinary dialogue regarding responsible design, implementation, and use of algorithmic systems. A range of actors are involved in the various parts of the assessment: interest groups, clients, organizational actors such as data scientists and domain experts, legal advisors, and ethics consultants.

Integration

DPIA. In Agency 1, privacy officers, the chief information security officer, and prevention and enforcement employees delivered input for the overarching DPIA. This entailed finding commonalities by conducting impact assessments to distill a list of common risks and mitigation measures, including those relevant to discrimination. Regarding discrimination, within the assessment it is established that prevention and enforcement processes should be ethical and objective, and trade-offs must be motivated and documented. Other parts of the organization, such as the ethics center and legal affairs department, are involved in ensuring that a balance is struck between enforcement and privacy.

In a quick scan, the aim is to establish if a separate DPIA is warranted and to ensure a balance between privacy and prevention and enforcement. The privacy officer also asks questions regarding discrimination, for example whether protected data categories “such as nationality and age” are necessary. The privacy officer further shared that collecting such data for risk profiling may “meet the needs of enforcement” but may not meet “the legal requirements that the privacy officer oversees, for example, because the profile is not objective, or the selected population could be smaller and more diverse”.

In Agency 2, regardless of the type of system, the same aspects are assessed in the DPIAs (DPIA Query, 2024; DPIA Risk Scan, 2023; DPIA Risk Selection, 2024). In the DPIAs in Agency 2, prevention and enforcement actors typically have the role of writing up the DPIA, having consulted other actors, providing feedback, and giving the final advice and approval. Privacy and security officers, along with legal advisers, play an instrumental role by providing feedback on the drafted reports, concerning privacy and broader legal aspects of the risk profile. Final approval is given by the data protection officer.

Firstly, the assessment describes the processing, including the data processed, the goal and type of processing, and whether automated decision-making and profiling are used. Secondly, it considers the legality of processing, including the legal basis, special categories of data, the necessity of the processing, transparency and rights of data subjects. Subsequently, the risks for data subjects are identified, ranked on likelihood and impact, and mitigating measures are established. Regarding direct discrimination, characteristics such as nationality, orientation, age, and gender cannot be collected and processed in selections. Indirect discrimination is also discussed, including risks of bias, stigmatization, and irresponsible profiling.
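By way of illustration, the ranking of risks on likelihood and impact could be sketched as follows. This is a hypothetical sketch and not the agency's method: the DPIAs studied do not specify their scales, so the 1 to 5 ordinal scales, the multiplication of likelihood by impact, the `Risk` and `rank_risks` names, and all example values are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One identified risk for data subjects (hypothetical structure)."""
    name: str
    likelihood: int  # assumed ordinal scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed ordinal scale: 1 (minor) to 5 (severe)

    @property
    def score(self) -> int:
        # Assumption: a simple likelihood x impact product, as used in many
        # generic risk matrices; the source does not state a formula.
        return self.likelihood * self.impact


def rank_risks(risks):
    """Return risks ordered from highest to lowest score (ties by name)."""
    return sorted(risks, key=lambda r: (-r.score, r.name))


# Illustrative values only; they are not taken from any agency document.
example_risks = [
    Risk("irresponsible profiling", likelihood=2, impact=3),
    Risk("bias in selection criteria", likelihood=3, impact=4),
    Risk("stigmatization of selected clients", likelihood=2, impact=5),
]

ranked = rank_risks(example_risks)
for risk in ranked:
    print(f"{risk.score:>2}  {risk.name}")
```

A ranking of this kind only orders the risks for discussion; which mitigating measures follow remains a matter for the actors involved in the assessment.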

Managers in Agency 2 stated that the agency prioritizes privacy, which results in many thorough and lengthy DPIAs being carried out, with constant dialogue between the actors involved. While participants in prevention and enforcement recognize its importance, it was described as time-consuming and occasionally repetitive, and was sometimes perceived as excessively cautious. Furthermore, it was noted that identifying discrimination is not always easy. While direct discrimination is a relatively objective criterion, indirect discrimination is a more open category that was noted to be difficult to detect without data for bias testing. A head of the department noted that it seems that “what is not discrimination today, could be discrimination tomorrow”.

Ethical assessment. In Agency 1, the ethical assessment focuses on aspects such as public values affected, data quality, biases, direct and indirect discrimination, human intervention, and effective remedies, with the actors collectively answering the questions in the assessment (Ethical Assessment, 2024). The section manager explained that data analysts and risk researchers play a key role, as they are in the first instance “tasked with identifying risks and drafting mitigation measures”. This is due to their quantitative and qualitative knowledge related to the risk-based investigation and relevant population.

Data analysts deliver input concerning data and data quality, and conduct a bias assessment using the data they have to identify biases in the risk population with regard to nationality, being born abroad, having lived abroad, gender, and age. A data analyst noted that “there will always be bias” and it is still not certain “when bias is unacceptable”, meaning that the actors challenge each other in this, and the management team may have to take the final decision in light of this uncertainty. Furthermore, the agency can only test for bias based on the data it has; for categories such as ethnicity, for which it holds no data and cannot collect them, bias therefore cannot be assessed.
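To make the notion of a bias assessment more concrete, the following minimal sketch compares selection rates between groups in a risk population. The agency's actual test is not described in the material studied, so the method (a descriptive comparison of selection rates), the function names, the `born_abroad` field, and the synthetic records are all assumptions. In line with the analyst's remark, the sketch deliberately sets no threshold for when a difference is unacceptable.

```python
from collections import Counter


def selection_rates(records, attribute):
    """Return {group: share of that group's records that were selected}."""
    totals = Counter()
    selected = Counter()
    for record in records:
        group = record[attribute]
        totals[group] += 1
        if record["selected"]:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratios(rates, reference_group):
    """Express each group's selection rate relative to a reference group."""
    reference = rates[reference_group]
    if reference == 0:
        raise ValueError("reference group has a selection rate of zero")
    return {group: rate / reference for group, rate in rates.items()}


# Synthetic records for illustration only (not agency data).
records = (
    [{"born_abroad": False, "selected": True}] * 10
    + [{"born_abroad": False, "selected": False}] * 90
    + [{"born_abroad": True, "selected": True}] * 10
    + [{"born_abroad": True, "selected": False}] * 40
)

rates = selection_rates(records, "born_abroad")
ratios = rate_ratios(rates, reference_group=False)
print(rates)
print(ratios)
```

A check of this kind can only be run for attributes the agency actually holds, which is why it offers nothing for categories such as ethnicity.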

Risk researchers likewise play a key role in the ethical assessment, delivering input regarding the selected population and the means of investigation. Challenges highlighted by risk researchers are that ethics can be “quite vague” as there is often not a clear “right or wrong”. Regarding bias and discrimination, a risk researcher notes that even with a “clean selection” overrepresentation can still occur, which “always leads to discussions”, and underscores the importance of clearly justifying the selection criteria used in a profile.

The perspective of the ethics center is important to the assessment, as they ensure that ethical issues that need to be discussed are put on the table, and decisions in the gray areas of the law are well-reasoned. The agency’s ethics center was involved in developing and piloting the assessment, but in the future their input will likely be limited to being a sparring partner and formally reviewing the assessment.

In the legal assessment, the lawyers consider legal risks, which can include direct and indirect discrimination related to risk profiles and their criteria (Ethical Assessment, 2024). Where a risk of discrimination is identified, for example in the ethical assessment, a lawyer considers “if there is discrimination, if it passes the legal test, if it can be justified, and how best to shape the investigation”. When assessing indirect discrimination, a lawyer explains that “you try to create a scenario for yourself regarding the outcome of applying certain selection criteria, and you think about whether the resulting differentiation is permissible and justifiable”. In the context of indirect discrimination, “you never know what you will run into, so you need to keep an open mind”. Finally, as part of this legal assessment, the legal affairs department must approve the ethical assessment.

In the ethical deliberation in Agency 2, the actors involved discuss risks and potential (adverse) impacts on the citizen, and the outcome of this discussion is documented in a memo (Memo Ethical Deliberation, 2024; R&A Protocol, 2024). A manager explained that the ethical deliberation stimulates broader questions such as “whether it is appropriate to use this selection, is there a chance of unequal treatment, and is the risk phenomenon big enough” to justify a certain selection. According to the R&A protocol, a member of the R&A team is in the lead, with risk researchers, data analysts, risk mitigation advisors and management each being assigned a role as responsible, consulted, informed, or accountable.

FRAIA. Finally, in Agency 2, a FRAIA is carried out for risk scan systems (Gerards et al., 2022). The first part evaluates the necessity and purpose of the risk scan, public values (e.g. equality), the legal basis, and stakeholder roles. Input is provided by organizational actors, domain experts, citizens, and legal and privacy professionals. The second part examines the algorithm type, data quality, biases, security, accuracy, and transparency, with input from domain experts, data and algorithm professionals, and ethics officers. It also assesses whether certain indicators should be used. The third part addresses human intervention, algorithm effects, communication, and evaluation, incorporating input from the project leader, data professionals, ethics officers and citizens with regard to effects and communication. The final part identifies risks to fundamental rights, such as non-discrimination and equal treatment, and proposes mitigation measures. The project lead, domain expert, and legal advisor assess the risks, provide justifications, and develop mitigation measures. Based on the FRAIAs conducted, the head of Risk Management and Intelligence explained that while valuable, it is a complex, time-consuming instrument, containing questions which overlap with those asked in other assessments or parts of the organization.

Reflection

Reflection on assessments. With regard to reflection, both agencies noted that they reflected on the overarching assessment landscape and methodology chosen, and periodically evaluated compliance with the assessments conducted. Previously in Agency 1, DPIAs were conducted for each new data processing operation, which made it difficult to effectively oversee the management of risks. The prevention and enforcement department likewise found it difficult to demonstrate compliance with DPIAs. This spurred the transition to a DPIA for the main prevention and enforcement processes, with the addition of quick scans, which was noted by a privacy officer to enable more effective periodic evaluations of compliance with mitigation measures. The organization noted the value of using a privacy maturity model to improve the agency’s privacy and data protection impact assessment landscape. Furthermore, in 2025 the ethical assessment methodology will be reconsidered, to work out how best to integrate it into Agency 1's processes. Moreover, the format of the ethical assessment will be evaluated every three years.

Likewise, in Agency 2, periodic evaluations are conducted of the implementation of, and compliance with, DPIAs and FRAIAs. The data protection officer, the head of the privacy team, and the head of the section concerned with risk-based investigations did however note difficulties in overseeing management of risks in DPIAs, citing the high volume of assessments being conducted. Currently, the agency is considering how the DPIA landscape can be further streamlined. Likewise, the R&A protocol was recently revisited and revised to ensure the R&A process is privacy-proof, and adjustments to the ethical deliberation are currently being tried out. Specific to risk scans, the MRM policy and prescribed governance structure, such as the various “lines of defense”, are also periodically evaluated. The head of the section stated that discussion of the FRAIA was frustrated by the fact that it is an external instrument and methodology.

Reflections through internal and external engagement. Both agencies noted that they reflected more broadly on their risk-based investigation processes and governance structures by engaging with internal and external incidents, other organizations, and clients. In Agency 1, “external and internal evaluations” and official scandal reports, including parliamentary inquiries, are used to reflect on their processes and governance. Furthermore, the department of strategy and external affairs tracks developments outside the agency and relays them within the organization, including incidents in the news, relevant developments and best practices, and questions from the media, the director, the relevant ministry, or the House of Representatives. The agency also, on an ad hoc basis, exchanges knowledge about profiling processes and risk governance with other agencies.

Likewise, within Agency 2, one participant noted that reflection is stimulated by incidents occurring both inside and outside the organization, leading to reconsideration and a better “fundament for conducting risk-based investigations” and for “preventing incidents”. Additionally, and similarly to the other agency studied, Agency 2, on an ad hoc basis, exchanges knowledge about profiling and risk governance with other agencies.

Agency 1 also engages its clients in ad hoc client panels and in the agency’s Client Council to discuss algorithmic profiling. A recent client panel discussed the role of AI in the agency’s prevention and enforcement activities, including the fact that clients of the agency prefer to be informed about the use of AI. The organization’s Client Council, an advisory body made up of clients of the agency, was also consulted regarding risk-based investigations, to see, as a head of the department explained, “what kind of questions do we get—is it more about why we do things, or why are we not doing more?”. Prior to the recent contact, the Client Council was last consulted several years ago, and a manager stated about the recent consultation that “it was more about bringing information, than receiving information”. The agency aims to increase client engagement in the future, although challenges remain regarding knowledge disparities and how best to involve them.

In Agency 2, similarly, the Client Council plays a role in reflecting on risk scan systems, ensuring that the agency can explain its actions and rationale to the individuals it supervises. However, participants noted challenges in involving citizens in these processes, primarily due to knowledge disparities and the complexity of the technology. At present, clients are not consulted regarding queries and risk selection systems, although plans exist to include them in the ethical deliberation regarding the appropriateness of client investigations. A coordinator from the privacy team noted that consulting clients is sometimes experienced as challenging and burdensome by the prevention and enforcement department, but could help to better identify risks for clients.

Discussion: addressing discrimination in algorithmic profiling

In the absence of sector-specific regulation, the combination of fundamental rights, the GDPR, and the anticipation of the soon-applicable AI Act provides the obligation to mitigate discrimination risks in data-driven prevention and enforcement. Fundamental rights establish overarching rights and obligations requiring agencies to prevent discrimination ex ante in their prevention and enforcement practices. The GDPR and, where later applicable, the AI Act then explicitly require agencies to identify and mitigate risks of discrimination in the context of algorithmic profiling. Persistent national scandals in which the use of algorithmic profiling technologies has contributed to clashes with legal safeguards against discrimination have highlighted the urgency and necessity of addressing these risks (Autoriteit Persoonsgegevens, 2021, 2024; SyRI, 2020).

However, the European regulatory framework lacks the necessary specificity to provide an adequate structure for risk governance and risk-related decision-making (Gerards and Xenidis, 2021; Kusche, 2024; Wachter et al., 2021; Zuiderveen Borgesius, 2023). Both agencies are responding to these legal obligations and persistent scandals by taking proactive and intentional steps to establish structures for interdisciplinary risk governance and decision-making. In doing so, these agencies are confronted with the sociotechnical complexity, scientific uncertainty, and socio-legal ambiguity that frustrate risk governance as they operationalize their legal obligations.

To provide some structure for any systemic risk governance, the principles of systemic risk governance—communication and inclusion, integration, and reflection—are proposed to help overcome knowledge deficits and develop responsible and socially robust systemic risk governance strategies (Van Asselt and Renn, 2011). This paper applies the systemic risk governance principles to explore how these agencies are responding to the regulatory call to action and are structuring their risk governance strategies, in order to examine naturally emerging similarities, differences, and recommendations.

Overarching governance landscape

The risk governance approaches of both agencies are embedded in algorithm policies, process descriptions and protocols, and assessment methodologies. This landscape varies depending on the type of system used. For more complex trained models, both organizations have algorithm policies—either established or in development—that outline principles for responsible development and assign roles and responsibilities to internal and external actors, such as external ethics committees and client councils. However, the relationship between simpler rule-based systems—including queries and risk selections—and these algorithm policies remains unclear, as these systems currently technically fall outside the scope of these policies. The applicability of the policies affects the applicable principles, the actors involved and their responsibilities, and the prescribed assessment methodologies. Currently, both organizations are exploring ways either to integrate simpler rule-based systems into existing algorithm policies or to develop additional policies tailored to these systems.

Communication and inclusion

Regarding communication and inclusion, the findings indicate that assessments play a crucial role in responding to the regulatory call to action, by bringing actors together to identify and mitigate risks of discrimination in profiling systems. In particular, DPIAs and quick scans, ethical assessments, and the FRAIA were identified as offering differing but complementary lenses to consider discrimination. Both agencies make choices regarding the assessment methodologies employed, the structure and frequency of assessments, and the actors involved. Interestingly, only assessments of trained systems currently include both internal and external actors, whereas rule-based system assessments engage only internal actors.

The combination of assessments and actors highlights the symbioses between fundamental rights, data protection legislation, ethics, and interdisciplinary dialogue in identifying and mitigating risks of discrimination and justifying risk-related decisions. However, for simpler systems, this dialogue within assessments is currently limited to internal actors, with the underlying rationale for this not being explicit. For these simpler systems, the question then arises whether external perspectives should be included in assessments of these profiles and what their role could be, or if it is sufficient to include them in reflective discussions regarding responsible risk profiling. Here, assessment methodologies such as the FRAIA and the example of Agency 2 potentially involving clients in the ethical deliberation could prove insightful regarding the value of their inclusion and their role.

Integration

Regarding integration, actors collectively discuss and answer questions in assessments relevant to discrimination. Typically, for simpler systems, prevention and enforcement actors initially play a significant role in both organizations in identifying risks and mitigation measures, with other actors playing consulted/supporting, reviewing, or approving roles. The exact roles of the actors and the relevant process descriptions, protocols, and embedded assessment methodologies are, however, currently still somewhat fluid. For trained systems, by contrast, the roles and responsibilities of various actors in assessments are established in algorithm policies and in the various sections of the FRAIA that dictate the expertise and roles needed to answer specific questions. This difference in the maturity of roles and assessments between types of system is noteworthy, and seems to reflect the recent public and political focus on trained systems.

During assessments, ambiguity was noted regarding when certain selection criteria in risk profiles can lead to indirect discrimination. Participants reported that this issue is further complicated by the fact that bias tests are not always feasible, owing to legal limitations on the data available for bias testing. Even when such tests can be conducted, uncertainty remains about when bias should be considered unacceptable. This struggle highlights the socio-legal ambiguity of discrimination in algorithmic profiling and the need for complementary lenses in assessments and interdisciplinary dialogue to support well-reasoned decisions on risk profiles.

Reflection

Finally, reflection on risk governance was seen first in periodic evaluations of assessment methodologies, landscapes, and compliance with mitigation measures. Both agencies emphasized the need to experiment with assessment methodologies and to later reflect on their content and integration into organizational processes and policies, based on practical experiences. They also stressed the need to regularly review whether the measures documented in individual assessments are being followed in practice, in order to maintain oversight of compliance. In addition, the agencies noted the value of evaluating the overall assessment landscape and whether it serves its intended purpose, as, for example, a high volume of assessments for each profile, though well intended, can increase the workload and complicate risk oversight, ultimately hindering compliance.

Furthermore, the agencies cited engagement with incidents, other organizations, and clients as stimulating broader reflection. Engaging with incidents proved useful for building a better foundation for risk-based investigations and risk governance. Likewise, engaging with other organizations was noted to be helpful in this regard. However, currently both agencies only engage with other organizations on an ad hoc basis, thereby limiting the structural incorporation of risk governance knowledge stemming from the unique experiences of other organizations addressing similar challenges. Finally, the agencies’ involvement of clients was cited as stimulating reflection; however, this involvement currently focuses primarily on trained systems rather than simpler, rule-based systems. Since risks of discrimination can also arise through the use of these simpler systems, the concerns, experiences and values of potentially affected clients could prove useful.

Conclusion

This study examined how two Dutch public sector social security agencies are operationalizing their developing legal obligations to address the discrimination risks in algorithmic profiling systems, encompassing simpler rule-based risk profiling systems and more complex trained models. Fundamental rights, as well as complementary obligations in the GDPR and the later applicable AI Act, require agencies using algorithmic profiling technologies to address risks of discrimination, the urgency of which has been reinforced by recurring scandals.

However, this developing regulatory framework leaves considerable leeway regarding how to address the risk of discrimination. Despite the lack of specificity in the legal framework and the systemic nature of discrimination, both organizations are responding to an evolving legal landscape and recurring scandals by developing structures to address these risks. This demonstrates that the regulatory framework can, and in some instances does, stimulate the governance of risks of discrimination. However, in light of recurring scandals in the Netherlands, it is clear that these legal obligations are no guarantee that all agencies take action to establish (effective) risk governance strategies.

The principles of systemic risk governance (communication and inclusion, integration, and reflection) are proposed as basic principles to apply when developing socially robust systemic risk governance strategies. The application of the systemic risk governance principles has proved valuable by enabling a structured examination of the risk governance strategies adopted by the two public sector social security agencies studied. The broad nature of the principles and the systemic nature of discrimination in algorithmic profiling make it difficult to evaluate the effectiveness of risk governance or to determine how to improve risk governance strategies. Nevertheless, application of the risk governance principles and the risk governance literature gave insight into naturally emerging similarities and differences, while also highlighting elements currently absent and the rationale for their inclusion. These findings may thus be of value to other organizations and researchers engaged with the governance of discrimination in algorithmic profiling.

The findings reveal that both agencies studied are intentionally and proactively developing risk governance structures to address the systemic risk of discrimination and encourage an organizational culture of risk awareness and governance. This is evidenced by a landscape of algorithm policies, process descriptions and protocols, the use of assessments to involve relevant actors and take risk-related decisions, and mechanisms for reflecting on risk governance. The application of the principles likewise enabled several insights and potential areas for consideration regarding the governance of discrimination in algorithmic profiling.

Firstly, the study showed that the two agencies examined adopt different approaches to managing discrimination risks depending on the type of system in question. However, in light of the recognized risk of discrimination in simpler risk profiling systems, and the legal obligation to mitigate it, it is unclear why these systems should warrant a less socially robust governance approach. The exclusion of external scientific and governmental actors, as well as client councils, from the governance landscape of these systems seems to create vulnerabilities: these perspectives are essential to ensuring that organizational and scientific knowledge, along with the concerns and values of those directly affected, are adequately incorporated when navigating the sociotechnical complexity, scientific uncertainty, and socio-legal ambiguity associated with discrimination in algorithmic profiling.

Secondly, the broad nature of the legal framework requires social security agencies to interpret legal norms, creating a risk of siloed and potentially fragmented approaches to addressing discrimination when using algorithmic profiling systems. To continue developing mature risk governance in the social security sector, mechanisms for structural cross-agency learning could be beneficial. Given the unique approaches each agency takes to address the same risks, it could prove useful for the agencies to learn from each other's risk governance approaches, choices, struggles, and successes.

Building on the aforementioned insights, it is worth considering if it would be beneficial for the Dutch legislator to require reporting on risk governance for both rule-based and complex algorithmic profiling systems within Wet SUWI. Reporting could include relevant policies, protocols and process descriptions, assessment methodologies, and reflection mechanisms such as periodic evaluations of compliance, policies, and assessment systems. Such reporting obligations could promote transparent and mature risk governance by ensuring risk governance for both types of systems, facilitating cross-agency learning and providing external parties—such as citizens, supervisory authorities, and courts—with greater insights into the responsible use of algorithmic profiling systems.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.