Abstract

Recent digital welfare malpractices have shown how rule of law systems may fail to protect citizens against abuses of power by social security administrations. Despite growing attention to the problems of the digital welfare state, the macro-level of the rule of law and ‘checks on government’ has remained largely overlooked. This article examines how the effectiveness of rule of law control mechanisms is put under pressure in the welfare state, particularly in the context of (semi-)automated systems of surveillance and enforcement. It explains how the convergence of automation and welfare conditionality jeopardises the safeguarding function of legislation, parliamentary and external scrutiny, and administrative adjudication. The first part brings together the literature on automated decision-making, welfare conditionality and the rule of law, and the second part explores these perspectives in a comparative analysis of Robodebt (Australia) and the childcare benefits scandal (the Netherlands). The main findings are threefold. First, rule of law breakdowns in the digital welfare state stem primarily from excessive welfare conditionality (intensified criteria and obligations, intrusive surveillance and rigid enforcement), rather than automation as such. Second, the politico-ideological appeal of budgetary savings and tough enforcement drives executive branches to disregard constitutionally prescribed controls. Third, conventional control mechanisms fail to curb or counteract this threat due to various institutional weaknesses, from the ex ante stage of legislation to the ex post stage of scrutiny and judicial review. The article concludes by reflecting on the importance of stronger legal safeguards and control mechanisms, to improve the protection of citizens across welfare states.

Introduction

There has been increasing research into the digital welfare state in recent years, with special attention paid to the risks of surveillance technologies and automated decisions in the enforcement of social security benefits (Alston, 2019; Van Toorn et al., 2024). This has been paired with, and is in part motivated by, malpractices such as Robodebt (Australia) (Whiteford, 2021), MiDAS (USA) (Ranchordás, 2022) and the childcare benefits scandal (the Netherlands) (Bouwmeester, 2023). 1 These cases have shown how data-driven social security surveillance and enforcement can result in false allegations of fraud, excessive targeting of vulnerable minorities, disregard for proportionality and empathy (Ranchordás, 2022), and fundamental rights violations (Alston, 2019).

These developments have also exposed another, higher-level issue. Rule of law systems, and the control mechanisms presumed to function within them, failed to shield people from excessive harm and illegitimate uses of power by social security administrations. This was especially apparent in the cases of Robodebt in Australia and the childcare benefits scandal in the Netherlands, both of which displayed critical defects in the legislative process, parliamentary scrutiny and judicial review (Brinkman and Vonk, 2022; Carney, 2019). These ‘control deficits’ (Haitsma and Bouwmeester, 2023) resonate with broader concerns about the weak transparency, controllability and accountability of digital welfare systems and inhibitions on scrutiny and judicial review (Cobbe, 2019; Rachovitsa and Johann, 2022; Van Zoonen, 2020).

Existing research still provides only limited insights into the risk factors for the rule of law, and for the functioning of checks and balances in digital welfare systems. There has been an increasing focus on the disruptive effects of automated decision-making in the rise of ‘algorithmic administrative states’ (Goossens, 2023), aggravating the imbalance in the trias politica in favour of already dominant executive branches (Passchier, 2021). Other studies have identified the challenges of keeping automated decision-making in check across different phases (Huggins, 2021; Lord Sales, 2020; Suksi, 2023), with specific attention paid to the ex ante stage of legislative design and procedure (Zalnieriute et al., 2019) and the ex post stage of parliamentary scrutiny and judicial review (e.g. Cobbe, 2019; Goossens et al., 2021). But automation is generally approached from a broad angle, with no more than anecdotal reference to social security as a domain of special significance. And while social security law and policy studies have analysed the micro level of policy design and state-citizen relationships (Carney, 2020; Henman, 2022; Lee-Archer, 2023), the macro level of the rule of law system has remained largely absent from this strand of literature. Consequently, we lack important insights into the rule of law risks surrounding digital welfare systems, let alone into ways to strengthen constitutional systems to effectively curtail and counteract these risks.

This article examines how the functioning of rule of law control mechanisms is put under pressure in the digital welfare state, more specifically in the context of data-driven, (semi-)automated systems of surveillance and enforcement. It brings together the still rather isolated literature streams on social security, the rule of law and automation, and explores these theoretical perspectives through a comparative analysis of the Australian Robodebt scandal and the Dutch childcare benefits scandal. It thereby contributes to a new, integrated understanding of the rule of law and digital welfare, informing ongoing governmental and academic discussions on the improvement of checks and balances across welfare states.

The following section explains how the combined trends of welfare conditionality (intensified criteria and obligations, intrusive surveillance and rigid enforcement) and automation have raised the need for rule of law safeguards and control of government. The article then systematically outlines how, paradoxically, these combined trends carry the potential to undermine the safeguarding function of legislation, external scrutiny and administrative adjudication. The theoretical framework is then used to explore the background of control deficits, from the legislative process to judicial review, in Robodebt and the childcare benefits scandal. These empirical perspectives are then considered further in a comparative discussion, paving the way for the final conclusions.

Enforcement regimes in the welfare state: trends and rule of law risks

Across welfare states, social security claimants have become increasingly at risk of ending up harmed by welfare bureaucracies. This is far from a coincidence, as two cross-border trends have contributed to this outcome: (a) the intensification of enforcement regimes under the rise of welfare conditionality; and (b) the automation of surveillance and enforcement practices.

Intensified enforcement regimes under welfare conditionality

While governments have always been concerned with combatting benefit fraud and limiting overpayment of benefits (Kiely and Swirak, 2022), the intensity of these activities has increased significantly since the 1990s (Fenger and Simonse, 2024). 2 This aligns with a broader trend of growing concern over benefit misuse in social security politics and public opinion since the mid-1980s, with politicians across the political spectrum intensifying their commitment to stricter rules and tougher sanctions (Vonk, 2014).

Many welfare states have shown such increased commitment throughout the ‘chain of enforcement’ (Klosse and Vonk, 2022: 362), spanning the full process of monitoring, fraud detection, and administering of recovery payments and sanctions. 3

The result has often been an all-out stricter ‘enforcement style’ in which (inadvertent) mistakes by applicants, such as a failure to supply income details on time, are increasingly punished as fraud, regardless of whether they stem from bad faith or a lower degree of culpability (Vonk, 2014). A variety of concepts have been coined to refer to this broader trend, ranging from the rise of the ‘punitive welfare state’ (Larkin, 2007), to ‘repressive welfare states’ (Vonk, 2014) and the ‘surveillance welfare state’ (Fenger and Simonse, 2024). 4

In recent years, the concept of ‘welfare conditionality’ has become particularly widely used. The term is understood here as a discourse and reform strategy (Fletcher, 2020) or new ‘welfare rights paradigm’ (Carney, 2011: 241), which has fundamentally altered the nature of social security through three dynamics of change (Adler, 2016; Gantchev, 2019; Vonk, 2014; Watts and Fitzpatrick, 2018):

(a) intensified conditions to qualify for income support (eligibility criteria) and stricter obligations for claimants;
(b) increasingly intrusive surveillance, in terms of both data collection for eligibility assessment and continuous monitoring of compliance with claimant obligations;
(c) increasingly rigid enforcement decisions, relating to debt recoveries, termination or reduction of benefits, and additional monetary fines.

This article will not delve into the risks of harm for social security claimants which have emerged due to the rise of the welfare conditionality paradigm (see, for example, Dwyer, 2019; Fletcher, 2020; Rossel et al., 2023). The main concern here is that these developments have resulted in a greater need for legislative and judicial checks to ‘soften the sharp edges’ (cf. Gantchev, 2020: 270) of strict social security laws and repressive enforcement practices (Vonk, 2014). As will be explained later, these checks are far from guaranteed in the modern welfare state. First, however, attention turns to automation as a coinciding development that aggravates the vulnerabilities and risks of harm induced by welfare conditionality.

The digital welfare state: conditionality and automation combined

Like many other domains of public administration (Roehl, 2023), social security decision-making and service delivery have experienced substantial automation in modern welfare states. Digital technologies are used throughout the chain of enforcement (Klosse and Vonk, 2022: 362), including identity verification and eligibility checks, calculation of benefit amount and payment, fraud detection (e.g. algorithmic profiling systems, Haitsma, 2023) and debt recoveries and sanctions (Alston, 2019). This article refers to ‘automated decision-making (ADM)’, alongside ‘automated decisions’ and ‘ADM systems’, to broadly capture the spectrum of automation (Zalnieriute et al., 2019), from simple (rule-based) algorithms to complex and predictive (machine learning) systems that are used to either assist or fully replace human administrative decision-making (Cobbe, 2019; Hildebrandt, 2018; Wolswinkel, 2022).

Since the 1960s, automation has facilitated expanded benefit provision, reduction of errors and alleviation of administrative burdens for both citizens and implementing authorities (Henman, 2022), and it can moreover be used to reduce non-take-up, by tracking and contacting people missing out on income support (Alston, 2019). In recent years, however, the long-overlooked harms of automation in social security have been at the forefront of debate. Poorly designed systems have been shown to result in discriminatory targeting, to lack basic procedural fairness and government accountability, and to significantly impair access to justice (Alston, 2019; Carney, 2020), often disproportionately harming the most vulnerable members of society and deepening pre-existing inequalities and prejudices (Alston, 2019; Eubanks, 2018). Data-driven anti-fraud policies form an especially risky legal frontier or ‘grey zone’ in which long-established constitutional principles are prone to violations, especially when AI techniques and risk scores are used to single out suspicious cases (Damen, 2023: 534). More generally, the shift from street-level to screen- and system-level bureaucracy (Zouridis et al., 2020) has weakened the capacity to ‘bend the rules’ to ensure proportionate rule enforcement (Van Lancker and Van Hoyweghen, 2021), diminishing administrative empathy and increasing the risk that vulnerable citizens may be left behind in unfair and incomprehensible bureaucratic procedures (Ranchordás, 2022; Spijkstra, 2024).

While still far from completely understood, there is a growing consciousness of the linkages between ADM systems and welfare conditionality. On the one hand, rigorous data collection and welfare surveillance enable governments to attain the political objective of increasingly conditional benefit provision (Van Toorn et al., 2024; Watts and Fitzpatrick, 2018). On the other, the combination of the two trends can be viewed as the prime source of problems for social security claimants. Automation and conditionality are ‘double-edged’ in the sense that they can both advance and contradict the goals of social security; when combined, however, the risk of harm for citizens is compounded, rendering income support more ‘virtual’ than real and social security administration more ‘virtual’ than human (Carney, 2024: 33). Effective safeguards and controls are crucial to curtail and offset these compounded risks, both ex ante, through rule of law standards and social rights protection in legislation, and ex post, through judicial review and external scrutiny. The following section will explain how both automation and conditionality, paradoxically, compromise the functioning of these control mechanisms.

Rule-of-law control mechanisms in a situation of automation and conditionality

Before going on to explore the rule of law system-level risks of welfare conditionality and automation, some conceptual clarification and delineation is necessary. The main focus is on the classical control mechanisms or ‘guardian institutions’ in place to monitor compliance with human rights and rule of law principles (Bedner, 2010; Venice Commission, 2011), 5 with the underlying aim of shielding citizens from abuses of power. 6 These comprise the legislative process (design and procedure) as the core means of ex ante control – approached from a broad perspective, with attention paid to both legislative drafting and the role of parliament in ex ante legislative oversight (cf. Pelizzo and Stapenhurst, 2004) – and judicial review and ex post parliamentary scrutiny (or ‘post-legislative scrutiny’, De Vrieze and Norton, 2020) as ex post control mechanisms. 7 That said, the analysis is at times extended to a broader view of ex post scrutiny – including oversight bodies, such as Ombudsmen, that assist parliamentary scrutiny – and to the whole process of administrative adjudication (including internal review and administrative appeals procedures, Asimow, 2015). The following sections identify the key risk factors that automation and welfare conditionality pose to the functioning of these control mechanisms, before further discussing these compounded risks.

Ex ante: legislative design and procedure

With regard to the legislative process, a first set of challenges relate to the inherent difficulty of legally embedding ADM systems. ADM places the fundamental traditional dichotomy between norm-setting and rule application (individual decisions) under pressure, as rule-making competences are drawn (further) into the executive branch and away from legislative control (Goossens et al., 2021). The implicit underlying rationales of developers often remain under the radar, and many systems are developed outside the scope of legislative oversight, threatening the ‘input legitimacy’ of government algorithms (Grimmelikhuijsen and Meijer, 2022). Second, traditional rule of law principles are difficult to properly operationalise in anticipation of automated decisions. Besides principles such as fair treatment and due process, this is especially true of proportionality, as its purpose is to facilitate appropriate rule exceptions at the legislative ex ante stage, while proportionality-review by courts during the ex post stage continues to be strongly shaped by choices in the legislative process (Enqvist and Naarttijärvi, 2023).

Besides these legal issues, there are barriers of a practical nature. Automation places higher demands on the technical expertise of parliamentarians, even though this expertise and the political will to scrutinise ADM systems critically tend to be limited (Passchier, 2021). This was, for example, apparent with the introduction of the SyRI-legislation in the Netherlands; 8 there was little parliamentary consideration of potential fundamental rights violations, despite early warning calls from the Advisory Division of the Council of State and the Data Protection Authority (Rachovitsa and Johann, 2022). In light of these issues, some have argued for more extensive ex ante ‘rule of law controls’ of ADM, such as obligatory checks 9 and audits by advisory or watchdog institutions (Bovens et al., 2018).

When it comes to welfare conditionality and the legislative process, the story is rather different. Welfare conditionality essentially comes down to a highly deliberate attempt by legislatures to tighten legal norms and rule enforcement, to bring about a (neoliberal) shift in state-citizen relationships, towards austerity objectives and increased social control (Kiely and Swirak, 2022). Such a shift more or less inherently erodes social rights and rule of law safeguards, particularly with respect to the right to social security and the principle of proportionality. This has been especially apparent in the intensity of benefit sanctions, which have in many countries come to form a ‘troubled marriage’ with the right to a subsistence minimum (Gantchev, 2020) and, more broadly, minimum standards of social citizenship (Fitzpatrick et al., 2019). A similar degressive trend has been observed in relation to pervasive welfare surveillance. While some countries (e.g. Germany) are characterised by relatively strong (constitutional and statutory) legal safeguards, others (e.g. the Netherlands and the UK) have ‘practically unlimited powers’ for bureaucracies in welfare surveillance, with the task of constraining these powers largely delegated to the executive branch (Gantchev, 2019: 19).

Ex post: parliamentary and external scrutiny

In the domain of ex post scrutiny, some aspects discussed above are of equal relevance. ADM systems tend to be not only developed but also continuously adapted outside the scope of democratic oversight (Goossens et al., 2021; Grimmelikhuijsen and Meijer, 2022). While control could be achieved through parliamentary and public initiatives (e.g. freedom of information requests), these may be impeded by a lack of technical expertise or political will (Passchier, 2021). But the core concern shared in the literature is the opacity of ADM systems, as an inhibition to scrutiny. This ‘black box problem’ is mainly relevant for complex machine learning algorithms, for which it may be practically impossible to explain in human terms how the system works (Ebers and Trasberg, 2023: 23). But rule-based algorithms are prone to weak transparency too, be that due either to limited efforts to make underlying decision rules publicly available or to deliberate government secrecy (Carney, 2020). The Dutch SyRI case is a prime example as it was developed and kept running in an ‘institutional void’ outside the scope of democratic oversight, lacking transparency to the public and without quality checks in light of privacy safeguards (Van Zoonen, 2020). In response to the different drivers of opacity (black box problem, intentional opacity and technical illiteracy, see more extensively Cobbe et al. 2021), various mechanisms are proposed to enhance ‘algorithmic accountability’ (Busuioc, 2021), such as specialised parliamentary committees (Moulds, 2021) or supervisory authorities that can fundamentally intrude into the complex sphere of ADM practices (Gontarz, 2023: 156).

Shifting attention to welfare conditionality, the fundamental risk is rooted in the sphere of politics. Besides its legal and policy-oriented characteristics, welfare conditionality constitutes a political paradigm representing ideological concerns over deservingness and state-citizen relationships. Strict conditions, pervasive surveillance and rigid enforcement have emerged under the influence of an ideological shift away from guaranteed rights, to a ‘new economic philosophy’ of conditional welfare provision and neoliberal concepts of self-responsibility (Matteucci and Halliday, 2017: 12). 10 Moreover, benefit fraud is an especially politicised subject of debate that sparks outrage and generates calls for tough enforcement from parties across the political spectrum (Vonk, 2014; Wilcock, 2017). Recent research has shown that left-wing parties too – generally more in favour of ‘lax’ approaches to benefit provision and enforcement – have embraced increasingly stringent approaches, in fear of bleeding votes to (centre) right-wing parties (Rossel et al., 2023). This indicates that the critical parliamentary and wider public scrutiny of strict social security laws and policies may fall short for various reasons, be that due to strategic electoral considerations or to broader ideological concerns.

Ex post: administrative adjudication

There are also important challenges in the domain of administrative adjudication. First, the legal literature on ADM stresses that automation can hinder citizens’ access to both judicial and administrative procedures. With effective use of legal remedies being highly dependent on transparency (Cobbe, 2019), the often limited explanation and justification of automated decisions hampers citizens in their navigation of review, appeals and judicial procedures (Ebers and Trasberg, 2023). Once again the Dutch SyRI case can be highlighted as a malpractice, being ‘shrouded in secrecy’ and thereby denying citizens their right to review and redress (Rachovitsa and Johann, 2022). Others have emphasised that the opacity and complexity of ADM systems can overburden litigants in their efforts to gather evidence and demonstrate the faulty nature of automated decisions (Tomlinson, 2019). Second, it can be challenging for courts to judge ADM practices appropriately. This includes the assessment of whether decisions are properly justified (Zalnieriute et al., 2019) and the need for rule exceptions to safeguard proportionality (Enqvist and Naarttijärvi, 2023), for which traditional legal norms may be difficult to operationalise. And for the domain of welfare fraud detection specifically, it has for example been argued that the right to transparency in the GDPR ‘fails to set meaningful limits to the deployment of data-driven technologies’, despite high risks of human rights violations (Damen, 2023: 541). Furthermore, the judicial competence of administrative courts and tribunals is often limited to individual administrative decisions, rather than consideration of the underlying ADM system and decision rules that produce them (Gontarz, 2023). 
This can be especially problematic in social security, where mass decisions are often taken on the basis of ‘automated chain decisions’ that lack clearly stated decision rules (Van Eck, 2018), rendering it difficult for judges to make an informed assessment of a decision's legality and appropriateness (Goossens, 2023).

We will now examine the relationship between welfare conditionality and (access to) administrative adjudication. To begin with, welfare conditionality tends to impair citizens’ access to justice through a combination of: (a) increasingly strict and complex rules; and (b) high demands on citizens’ self-responsibility, to comply with claimant obligations (Sigafoos and Organ, 2021; Watts and Fitzpatrick, 2018). In addition, the grievances that arise under welfare conditionality regimes are often complicated and full of procedural pitfalls. Research in the UK has shown that this places unreasonably high demands on benefit recipients’ often already low legal awareness, stamina and ‘gritty determination’, resulting in high attrition rates in legal remedy procedures (Ahluwalia and Tomlinson, 2018: 230). Similarly, research into conditional cash transfer programs (CCTs) in Latin America demonstrates that welfare conditionality reforms go hand in hand with a weakening of procedural protections and appeal arrangements (Pérez-Muñoz, 2023). These issues heighten the need for legal aid services, yet many countries (such as the Netherlands and the UK, see respectively Dutch Legal Aid Board, 2021; Sigafoos and Organ, 2021) went through substantial budget cuts to legal aid in the early 2010s. Last but not least, significant tensions exist between welfare conditionality and judicial review. Courts, as final arbiters of the rule of law system, have become increasingly important, to ensure that enforcement practices comply with rule of law standards and strike a ‘just balance’ between rights and obligations (Vonk, 2014). 11 However, welfare conditionality has faced remarkably few serious legal challenges across (European) welfare states (Adler and Terum, 2017).
In the UK, for example, the increasing severity of sanctions has been scarcely subjected to judicial review, despite clear avenues to ‘[curb] the excesses of the benefit sanctions regime’ and respond to structural ‘Rule of Law concerns’ (Ahluwalia and Tomlinson, 2018: 229–230). Existing research does not suggest a conclusive reason for this scarcity of judicial interventions, but the answer may lie in the traditional constitutional culture regarding the judiciary's positioning towards the legislative branch. Courts often display a deferential attitude towards parliamentary (statutory) legislation, deeming social rights a matter for the democratically chosen legislature (King, 2012). This attitude has, for example, been observed in the Netherlands, where courts have displayed a relatively deferential attitude towards increasingly severe benefit sanctions, at least when compared to the critical adjudication of benefit sanctions by the German Bundesverfassungsgericht based on the constitutional right to a minimum subsistence (Existenzminimum) (Vonk, 2019). 12

Compounded risks for the rule of law system

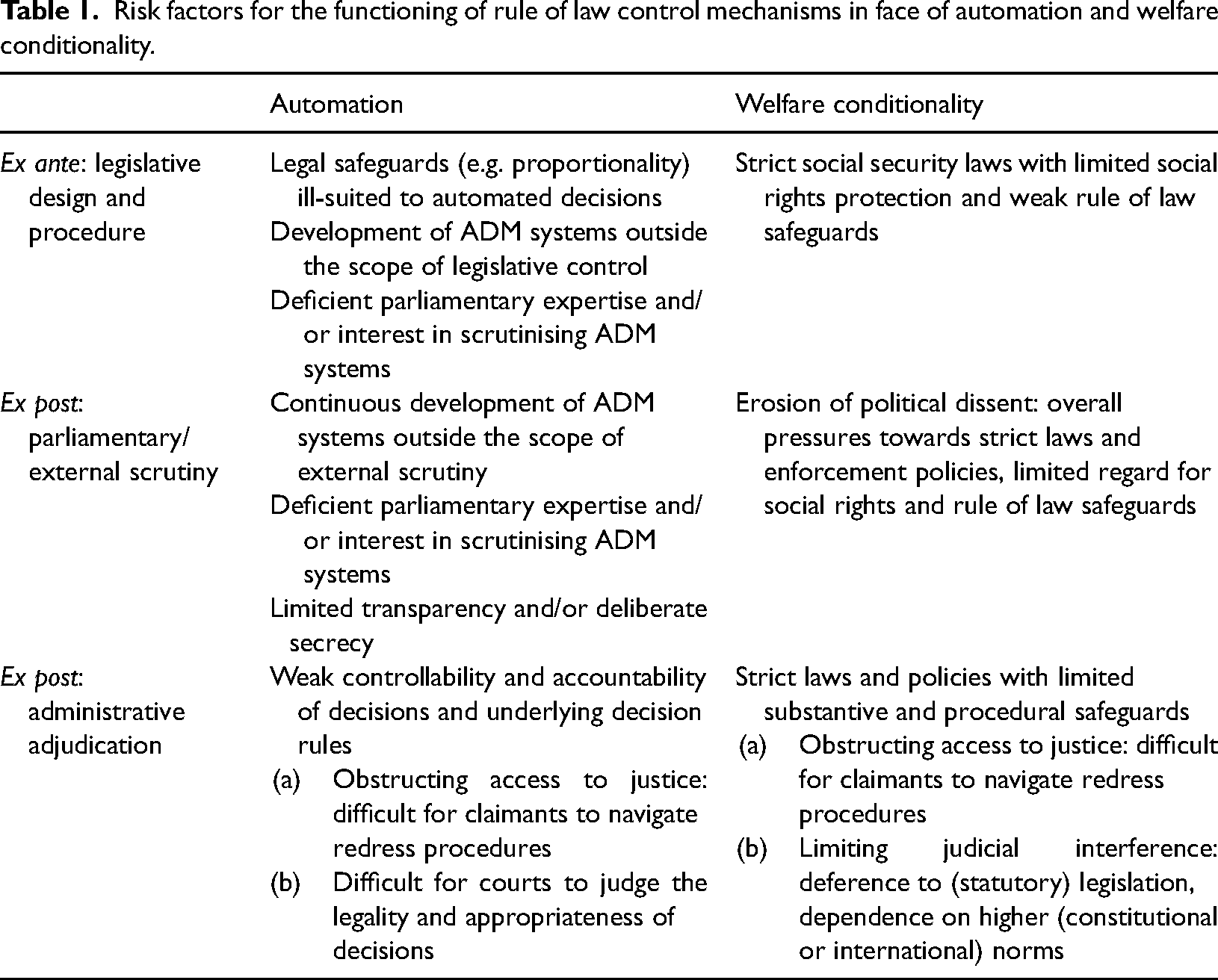

By recontextualising automation and welfare conditionality on the macro level of the rule of law system, their disruptive impact on the system of checks and balances becomes evident. The above findings first of all support the existing assumption that ADM forms a challenge to constitutional principles and rule of law controls (Goossens, 2023). Second, the same can be said for the undermining effects of welfare conditionality, which through its three dynamics of change (intensified conditions and obligations, intrusive surveillance and rigid enforcement) and political backdrop inherently compromises the protective function of legislative safeguards, ex post scrutiny and administrative adjudication. Welfare conditionality and automation can thus be viewed as distinct trends with their own set of challenges throughout the ‘chain’ of rule of law control mechanisms, summarised in Table 1 below.

Table 1. Risk factors for the functioning of rule of law control mechanisms in the face of automation and welfare conditionality.

This conceptual distinction was useful for reviewing the literature and classifying risk factors, but in the reality of digital welfare states these risks are of course interwoven. This means that in the legislative process, the safeguarding potential of legislative design and procedure is substantively diminished by both the rigidity of social security laws and the procedural challenges associated with the prior regulation of ADM systems (e.g. with regard to meaningful parliamentary influence). Post-implementation scrutiny could offset these deficits, but this becomes unlikely when faced with the overwhelming political pressures in favour of welfare conditionality, the often limited political interest in meticulous scrutiny of ADM systems and, even where these barriers are overcome, a lack of transparency inhibiting external control. Finally, administrative and judicial review procedures are far from guaranteed solutions. Beneficiaries need to work their way through the complex rules and redress procedures typical of welfare conditionality, automation obscures the underlying rationale of decisions, and on top of that, legal aid services have become less and less available across welfare states. And if cases do reach the courts, the much-needed critical review is hindered by the limited controllability of automated decisions, and by the courts’ inclination towards restraint in their judgment of social security decisions that flow from democratically legitimised legislation, hindering judicial interventions even in cases of disproportionate outcomes and violations of rule of law principles.

The above comprises only a first, general understanding of the disruptive impact of welfare conditionality and automation on the control mechanisms of the rule of law system. A more detailed understanding of the interplay between these factors, and especially their relative importance in terms of disruptive effects, is given in the following part, which draws on empirical insights from Australia and the Netherlands.

Cases: two rule of law system failures in the digital welfare state

Previous studies have already highlighted the commonalities between Robodebt and the childcare benefits scandal, as typical digital welfare malpractices (e.g. Fenger and Simonse, 2024; Van Krieken, 2024), similar policy fiascos (Bouwmeester, 2023; Whiteford, 2021) and, most importantly, ‘rule of law system failures’ with comparable breakdowns in checks and balances (Bouwmeester, 2023). At the same time, these cases illustrate the variety of ADM systems in social security and the associated risks, with Robodebt involving a relatively simple rule-based algorithm, and the childcare benefits scandal entailing a more complex predictive risk-profiling system. The following sections provide a synopsis of each case, 13 followed by an in-depth discussion of the (causal) background of control deficits, from the legislative process to judicial review.

Robodebt

In May 2015, the Australian government introduced a system of monitoring and welfare debt recovery entitled ‘Online Compliance Intervention’ (OCI). It was rolled out for a variety of means-tested benefit schemes, as part of a 2015–2016 budget measure called ‘Strengthening the integrity of welfare payments’. It involved the use of a simple algorithm, soon dubbed ‘Robodebt’ by the media (Whiteford, 2021), to calculate and automatically impose social security debts, by detecting discrepancies between the earnings declared by benefit recipients to executive agency Centrelink and tax records held by the Taxation Office. The main problem was that the algorithm ‘averaged’ earnings over the period of a full year. This went against the legally prescribed fortnightly calculation of earnings (in the Social Security Act of 1991), and wrongly assumed that beneficiaries with fluctuating earnings (the majority of benefit recipients, Whiteford, 2023) had received a stable income throughout the relevant year. Centrelink raised more than 450,000 unlawful ‘robodebts’ between 2015 and 2019. The system disproportionately harmed already vulnerable populations and caused widespread financial destitution, aggravating physical and mental health problems, and was a factor in multiple suicides (Ng et al., 2020). After a ‘crescendo of criticism’ (Royal Commission, 2023: 153), the system was brought to an end in May 2020. The Royal Commission investigating the scheme found that around 794,000 unlawful debts had been raised against approximately 526,000 benefit recipients, with a total of 1.751 billion AUD to be written off (Royal Commission, 2023: 402).
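The distorting effect of yearly income-averaging on people with fluctuating earnings can be illustrated with a small arithmetic sketch. It does not reproduce the actual OCI algorithm or the rates in the Social Security Act of 1991: the base rate, income-free area and taper rate below are hypothetical values chosen for readability, as are the function names. The sketch only shows the mechanism, namely that spreading annual income evenly over 26 fortnights can generate an alleged debt for someone who was paid correctly in every fortnight.

```python
FORTNIGHTS_PER_YEAR = 26


def entitlement(earnings, base_rate=500.0, free_area=100.0, taper=0.5):
    """Benefit payable for one fortnight under a simple income test.

    All three parameters are hypothetical illustration values,
    not actual Australian social security rates.
    """
    reduction = max(0.0, earnings - free_area) * taper
    return max(0.0, base_rate - reduction)


def alleged_debt(payments, assumed_earnings):
    """Sum of apparent overpayments, given the earnings assumed per fortnight."""
    return sum(
        max(0.0, paid - entitlement(earned))
        for paid, earned in zip(payments, assumed_earnings)
    )


# A claimant who worked for half the year and had no earnings for the rest.
actual_earnings = [2000.0] * 13 + [0.0] * 13

# Payments made on the basis of correctly declared fortnightly earnings.
payments = [entitlement(e) for e in actual_earnings]

# Fortnightly assessment: compare payments with actual earnings per fortnight.
debt_fortnightly = alleged_debt(payments, actual_earnings)

# Averaging: spread the annual total evenly across all fortnights.
average = sum(actual_earnings) / FORTNIGHTS_PER_YEAR
debt_averaged = alleged_debt(payments, [average] * FORTNIGHTS_PER_YEAR)

print(f"Debt under fortnightly assessment: {debt_fortnightly:.2f}")
print(f"Debt under annual averaging:       {debt_averaged:.2f}")
```

Under these assumed figures the fortnightly assessment finds no overpayment, whereas averaging attributes earnings of 1,000 per fortnight to the months without work and so produces an alleged debt of 5,850, which the claimant would then have to disprove.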

Legislative design and procedure. The core issue with Robodebt was its substantively unlawful nature. Besides the practice of yearly ‘income-averaging’, in violation of the Social Security Act of 1991, it caused an unlawful shift in the onus of proof towards citizens – effectively obliging them to ‘disprove’ alleged debts (Carney, 2019). Moreover, in 2015 the proposal bypassed constitutionally required ex ante control by the legislature, which was essentially cancelled by staff and senior officers in the Department of Human Services (DHS). Legal advisers in the Department of Social Services (DSS) had noted that the system did not comply with the Social Security Act and therefore could not be implemented without new authorising legislation, but the DHS left these notes out of its final policy proposal – causing the Cabinet to be misled about the probable unlawfulness of the proposal (Royal Commission, 2023: 88–89, 532).

It remains difficult to fully reconstruct why Robodebt was implemented, 14 but the Royal Commission emphasised one main underlying cause of its rushed establishment: its nature as a cost-saving measure in a context of heavily politicised welfare politics. It was, in spite of everything, deemed desirable ‘because of the dual advantages of supposed savings – the misconceived notion that unreviewed discrepancies between ATO [Australian Taxation Office] and DHS income data represented mountains of gold – and its neat alignment with the political rhetoric of the day about the social security system and the need for “integrity” in welfare payments’ [emphasis added] (Royal Commission, 2023: 655). As emphasised before by Carney (2019: 6), compliance with the rule of law and procedural requirements was trumped by: (a) the political imperative of achieving quick fiscal gains for the government budget; and (b) ‘the ideological comfort of its political appeal to “tough on welfare” constituencies’.

Parliamentary and external scrutiny. Soon after implementation of Robodebt, there were increasing warning signals of its flaws and the harm it could cause. Concerns were raised by frontline staff, but the signals either did not make their way up, or were prevented from reaching senior executives and the ministers responsible. Moreover, constant misrepresentation of facts prevented scrutiny of the scheme (Royal Commission, 2023: 658), together with a lack of due diligence by the monitoring bodies. A Senate committee and the Commonwealth Ombudsman pointed to potential violations of legal principles, but neither recognised the fundamental illegality of income-averaging and the shift in the burden of proof (Carney, 2019). This led the Royal Commission to conclude that ‘good faith cannot be assumed’ and to argue the need for intensified freedom of information provisions and stronger information competences for the Commonwealth Ombudsman (Royal Commission, 2023: 582, 657).

Moreover, the undermining of external scrutiny and disregard for warning signals have been attributed to structural deficiencies in the administrative culture of the Australian Public Service (APS). Senior executives were overly responsive to their ministers, which incentivised a culture that inhibited ‘frank and fearless advice’ at the lower administrative echelons as well as the politico-administrative top tier (Podger, 2023). Consequently, the political drive to ‘ensure welfare integrity’ through cost savings remained dominant, despite multiple public servants raising concerns from early on (Royal Commission, 2023: 107).

Administrative adjudication. Robodebt was eventually terminated under pressure from two applications brought to the Federal Court by victims, represented by Victoria Legal Aid (Royal Commission, 2023: 288, 298). 15 But it primarily represents a long-term breakdown of access to justice and judicial review (Maxwell, 2021). Few victims found their way to the first stage of administrative redress; only 1% of debt collections (about 7400) were subject to a formal internal review and even fewer (1482) reached the first tier of the Administrative Appeals Tribunal (AAT) (Maxwell, 2021: 106). Several factors contributed to this low rate. Victims found it difficult to ascertain what had gone wrong, due to the structural obscurity of the debt-collection method (Ng et al., 2020). Requests for internal review were not followed up, recipients were hindered from exercising their right to tribunal review, and the documents requested (e.g. income data from employers) could often not be supplied on time (Royal Commission, 2023: 329). Many citizens were overwhelmed by complex administrative burdens, making it time-consuming or practically impossible to prove the system wrong (Ng et al., 2020). Others were unaware of their right to review, fearful of jeopardising future entitlements by complaining, or paralysed by feelings of powerlessness against the government (Maxwell, 2021).

For a long time, the administrative appeals procedure did not bring Robodebt's structural illegalities to an end. The core problem was not in the nature of the AAT decisions, but in how government officials dealt with the tribunal's rulings. As early as March 2017, an AAT decision suggested that the entire system was unlawful. Eventually, 424 AAT decisions concluded that debts were incorrectly calculated, versus just 114 accurate debt recoveries (Royal Commission, 2023: 556). But DHS had no procedures in place to disseminate the flaws pointed out in tribunal decisions, and warnings were largely ignored. Another problem was that the non-publication of first tier AAT decisions stood in the way of public scrutiny, inhibiting wider community understanding of the scheme (Royal Commission, 2023: 553–554). Finally, in violation of litigant protocols, DHS did not seek a second tier AAT review when overturned at the first tier (Royal Commission, 2023: 561–563), seemingly deliberately, to avoid acknowledging the system's fundamental flaws (‘administrative non-acquiescence’, Carney, 2019: 6). This ‘legal game-playing’ was enabled by the administrative culture, which discouraged civil servants from voicing dissent to higher-level executives, and by the populist anti-welfare culture that kept the government unreceptive, despite growing public discontent (Henman, 2025: 9–12).

Childcare benefits scandal

The childcare benefits scandal revolved around the Dutch scheme of means-tested childcare benefits for working parents, established by the 2004 Childcare Act (Wet Kinderopvang) and administered by the benefits department of the Tax Administration. By 2010, there were growing concerns about the system's vulnerability to fraud and about the scale of expenditure, which resulted in an intensified political focus on fraud detection and recoveries of overpayments (Parlementaire Enquête Fraudebeleid en Dienstverlening [PEFD], 2024). These concerns were reflected in a strict enforcement regime, based on intrusive surveillance and a ‘zero tolerance approach’ to cancelled payments and full recoveries of advance payments (Parlementaire Ondervragingscommissie Kinderopvangtoeslag [POK], 2020: 47, 96). In August 2017, it became clear that this uncompromising approach was resulting in long-term financial insecurity for several hundred families (Nationale Ombudsman, 2017). In November 2019, it came to light that the Tax Administration had disproportionately targeted parents with a second nationality through an ‘institutionally prejudiced’ algorithmic profiling strategy, based on a fraud database and predictive risk selection model (Adviescommissie Uitvoering Toeslagen, 2020). Between 2012 and 2018, at least 26,000 benefit recipients were wrongly treated as deliberate fraudsters, with a disproportionate impact on people with a migration background (71% of all victims) and single parent families (around 50%) (CBS, 2022). 16

Legislative design and procedure. The Dutch legislature was blamed for enacting harsh, ‘draconian’ legislation that was unable to take account of individual circumstances (Van den Brink and Ortlep, 2021: 365). At the same time, the legal provisions concerning both eligibility assessment and recoveries of advance payments did in fact allow the Tax Administration and courts to account for proportionality in individual cases (Damen, 2021; Marseille, 2020). There is nevertheless general agreement that the legislation laid the basis for the misery experienced by the victimised parents. It did so by combining a system of advance payments and definitive calculations with a strict formulation of the law and lack of safety valves, thereby encumbering benefit recipients with large financial risks (PEFD, 2024: 135). This happened despite warnings from the Advisory Division of the Council of State that a hardship clause would be necessary to remedy emerging injustices (POK, 2020: 34).

Besides this, the development of algorithmic profiling practices took place fully outside the scope of ex ante parliamentary control. The registration system and risk selection model were developed and kept running ‘in the shadows of the rule of law system’ (Çapkurt and Schuurmans, 2021: 607). Contrary to the heavily critiqued SyRI system – which was at least established in legislation – this system was developed with a complete absence of external controls, regardless of its tensions with and, as eventually established, violations of the right to privacy and non-discrimination (PEFD, 2024: 57).

Parliamentary and external scrutiny. There was a structural lack of transparency from the Tax Administration and in the communication of cabinet ministers to parliament, which hindered external scrutiny by members of parliament, government watchdogs and the public (POK, 2020). The opacity of the algorithmic profiling system was an issue of special significance. It was to a certain extent driven by the technical nature of the algorithm; indicators were constantly amended to ‘improve’ the selection of presumed fraudsters. But more importantly, the fraud detection regime was kept away from external scrutiny by ‘organisational opacity’ (Haitsma, 2023: 72) and an outright misrepresentation of facts. The Tax Administration provided inaccurate information when faced with investigations by the Ombudsman and the Data Protection Authority, and the House of Representatives (Second Chamber) was for a long time given incomplete information on the use of second nationality as a risk indicator (RTL Nieuws, 2021). This opacity was sustained by risk aversion and fear among civil servants of being ‘slapped on the wrist’ and facing adverse consequences from their superiors (Haitsma, 2023: 72). The insufficient transparency of the surveillance system thus had little to do with inherent technical complexities; rather than a black box, it was an ‘unknown box’ kept away from external scrutiny (Çapkurt and Schuurmans, 2021: 607).

For a long time, despite growing indications of the severe financial hardship caused by the Tax Administration's recovery practice, only a few members of parliament took action (Venice Commission, 2021). Members of the House of Representatives continuously fuelled a tunnel vision on fraud prevention and an overheated political demand for harsh enforcement (POK, 2020: 7); this attitude was strengthened from 2011 onwards in the wake of the economic crisis (PEFD, 2024: 402) and especially after the Bulgarian fraud affair that came to light in April 2013. 17 The political pressure eventually drove the development of a ‘business case’ in which the Ministry of Finance aimed to make fraud prevention pay for itself, creating perverse incentives for the ‘fraud hunt’, blown out of all proportion (PEFD, 2024: 362). The interplay between parliament and the media was highlighted as an underlying issue. Each brought out the worst in the other, acting as catalysts in causing benefit provision to become ‘synonymous with fraud’, especially in the case of recipients with an immigration background (PEFD, 2024: 63).

Administrative adjudication. The scandal displayed various shortcomings in the system of administrative adjudication. First, the administrative appeals procedure within the Tax Administration lacked due diligence and fair play (Nationale Ombudsman, 2017). Unintentional errors were routinely processed as fraud or gross negligence (POK, 2020: 25), appeals were incompletely processed and substantial delays were commonplace, with the supposed six-week processing time in some cases increasing to 18 months (generating long-term legal uncertainty, PEFD, 2024: 54, 218). 18 Files were incomplete and appeal decisions took the form of standard recitals that lacked a proper justification, which made it difficult for claimants to understand how they should begin to lodge an appeal (Schuurmans, 2021). Moreover, the Tax Administration's decisions were difficult to understand without legal expertise, in part due to their nature as ‘automated chain decisions’ (Van Eck, 2018) with no clearly stated decision rules (Werkgroep Reflectie Toeslagenaffaire Rechtbanken, 2021: 63). These problems increased the need for legal aid services, but there were structural weaknesses in the Dutch legal aid system due to unrealistic notions of citizens’ self-reliance, exclusionary income thresholds and the fragmented landscape of legal services (WODC, 2023). Presumably, this exclusion of recipients from subsidised legal counselling was a strong factor in the relatively high number of revoked judicial review applications (Schuurmans, 2021). The parliamentary inquiry committee of 2024 pointed to decades-long budget cuts in the subsidisation of legal aid as a problem factor in the background (PEFD, 2024: 207–208).

Victims encountered another obstacle when they reached the courts. Until its ‘reversal judgments’ of October 2019, 19 the Administrative Jurisdiction Division of the Council of State (the highest court responsible) applied an unnecessarily rigid interpretation of the law and systematically endorsed the Tax Administration's callous ‘all or nothing approach’ (Brenninkmeijer, 2021). This structural endorsement of the rigid enforcement approach, lacking any sense of proportionality, was emphasised as the core problem in the scandal, and as a ‘rule of law crisis’ (Besselink, 2021). While many underlying drivers contributed to this long-term absence of a countervailing balance, the cultural tradition of judges acting in deference to parliament (with respect to formal acts of legislation) played a key role in the persistently rigid interpretation of the law (Schuurmans, 2021; Venice Commission, 2021: 21). More fundamentally, some argue that the Council of State failed to fulfil its constitutional responsibility to act as a counterbalance to the legislature's insufficient normative awareness of fundamental social rights (Brinkman and Vonk, 2022).

Discussion

The above analysis of Robodebt and the childcare benefits scandal illustrates a variety of control deficits, from the ex ante stage of legislation to the ex post stage of scrutiny and adjudication. Of course, there are some differences in the nature of these ‘rule of law breakdowns’. The main storyline of Robodebt is that of an executive branch manipulating external checks from start to finish, while the childcare benefits scandal has been perceived markedly more as a collective failure, with all three branches of government complicit. But viewed together, the two cases provide valuable insights into the ways in which rule of law protections and control mechanisms fail to shield benefit recipients from the harm done by unlawful and repressive systems of surveillance and enforcement.

Some issues clearly resonate with the risk factors of ADM distilled from the literature. In both scandals, algorithmic tools were developed and kept running in an ‘institutional void’ (Van Zoonen, 2020) outside the scope of legislative procedure and external scrutiny, with limited transparency and almost no disclosure of their inner workings. Moreover, both cases are indicative of the disruptive impact of ADM on access to justice, making it difficult for citizens to form an understanding of how they should begin to challenge decisions, and of the substantive challenge for courts and tribunals to judge the legality and fairness of (semi-)automated decisions that lack a clear motivation and statement of decision rules.

But much more than this, the cases are indicative of the potential of welfare conditionality to undermine the rule of law. Both scandals emerged from a similar legislative backdrop of strict eligibility criteria and claimant conditions, and a policy context of repressive rule enforcement (Fenger and Simonse, 2024; Wilcock, 2017). It was only because of this that the use of automation had such detrimental effects. This is especially apparent in the demise of administrative adjudication in both countries, in Australia mainly driven by the overburdening of citizens with information obligations, and in the Netherlands coming down to a toxic combination of weak proportionality safeguards and overly deferential judicial review. There is also an important connection to legal aid services. Despite all obstacles, Robodebt was eventually brought down, in large part due to test cases brought to review with the help of legal aid bodies. And the scope and severity of the childcare benefits scandal would not have been the same were it not for the inaccessibility of the legal aid system and the decades-long budget cuts to the subsidisation of social (pro bono) lawyers (PEFD, 2024: 207).

This is still far from the full story. The two cases represent a much more direct undermining of rule of law systems. Control deficits in both cases were above all driven by two interwoven political pressures: (a) the ideological appeal of harsh enforcement (acting ‘tough on welfare’); and (b) the instrumental appeal of anti-fraud policies and debt recoveries as a means towards budgetary savings. In Robodebt, these dual pressures were the fundamental drivers of disregard for proper legislative procedure (Carney, 2019: 6; Royal Commission, 2023: 655), ‘wilful ignorance’ (Van Krieken, 2024) and legal game-playing (Carney, 2019; Henman, 2025), to sweep critical tribunal decisions under the carpet. The Dutch government's lack of transparency (PEFD, 2024) resonates with the secrecy surrounding Robodebt, but the childcare benefits scandal most of all demonstrates how these dual political pressures can have a wholly different detrimental effect: the almost complete demise of opposing parliamentary forces. This demise was driven by the interaction between the media and politicians (PEFD, 2024: 63), which created a path dependency towards increasingly repressive enforcement – reversed only when it was already too late. Finally, while not the focus of this contribution, we should note that these political pressures were able to do so much damage because they were combined with a weak administrative culture. Both scandals were shaped by a combination of excessive loyalty of senior executives to their political leaders (Bekker, 2020; Henman, 2025), disapproval of dissent in implementing authorities, and structurally weak information flows (POK, 2020; Royal Commission, 2023).

Thus, the collapse of rule of law controls in these cases should be understood mainly as the result of disruptive trends in social security politics, law and policy. Automation played only a minor part in this bigger play. This aligns with the general conclusions that Robodebt was not a ‘techno-failure’ but a ‘socio-political governance failure’ (Henman, 2025) and a breakdown of rule of law institutions (Carney, 2019), and that the childcare benefits scandal emerged from a societal culture of distrust and fixation on benefit fraud (PEFD, 2024), and a ‘rule of law culture’ (Van Ommeren, 2022) not fit to curb or counterbalance this overwhelming force.

Conclusion

This article asked how rule of law control mechanisms are put under pressure in the digital welfare state, with the main focus on (semi-)automated systems of surveillance and enforcement. The first part of the answer is that the two trends ingrained in the rise of digital welfare states, welfare conditionality and automation, each give rise to their own set of risk factors, from the ex ante stage of legislation to the ex post stage of scrutiny and adjudication. Just as the risks of harm for citizens are compounded when the systematic features of welfare conditionality (intensified criteria and obligations, intrusive surveillance and rigid enforcement) are combined with automation (Carney, 2024), the convergence of these two trends weakens the efficacy of control mechanisms throughout the rule of law system.

The Dutch childcare benefits scandal and Robodebt serve as cautionary tales, illustrating how both relatively simple and complex algorithms can be surrounded by breakdowns of rule of law safeguards and control mechanisms. But most of all, they show that the fundamental cause of such rule of law breakdowns lies in welfare conditionality and the broader political backdrop of social security reforms. The dual appeal of tough enforcement and budgetary savings can be so strong that it leads politicians, senior civil servants and social security administrations to, more or less deliberately, undermine the rule of law and constitutionally prescribed controls. As is apparent in the cases studied, this can form a toxic combination, with insufficient thoroughness of scrutiny bodies (Robodebt), the erosion of critical parliamentary scrutiny, excessive judicial deference (childcare benefits scandal), and structurally weak access to justice (both cases).

These conclusions carry an important lesson for future research on the intersection of social security, automation and the rule of law. Research into the legal challenges of ADM remains valuable, be that with a focus on constitutional law, administrative justice or human rights. But when such studies predominantly focus on social security or narrowly related domains of the digital welfare state (e.g. employment support or asylum), they should critically consider the disruptive impact of underlying political, legal and policy-oriented trends, rather than solely paying attention to the general risks linked to automation. This would contribute to a better balance between the already rapidly growing literature on ADM and the still limited attention paid to the rule of law implications of substantive trends in social security.

Finally, if not already on their agendas, governments should develop strategies to ensure that their rule of law systems are actually capable of protecting citizens against digital welfare state abuses. How exactly this is to be achieved is still an open question. But the twofold dynamic of rule of law breakdowns in the cases studied here suggests that changes in the executive branch of government – towards a more humane treatment of citizens combined with a more open administrative culture – should go hand in hand with stronger control powers in the judicial branch, legislative branch and the landscape of watchdog institutions. The stark observation that digital welfare dystopia can only be held off through ‘a genuine commitment to designing the digital welfare state not as a Trojan Horse for neoliberal hostility towards welfare and regulation but as a way to ensure a decent standard of living for everyone in society’ (Alston, 2019: 10) should be interpreted broadly, not only in relation to policy design but also with an eye to the macro level of rule of law controls. Different constitutional systems, each with distinct accountability systems and redress arrangements, should prioritise different paths of reform, for example depending on the relative weight traditionally attributed to ex ante norm-setting versus ex post judicial review (Asimow, 2015). Future research should work towards tangible recommendations for such reforms and consider their suitability to different types of welfare states and constitutional systems. This may be the only way to ensure that cases such as Robodebt and the childcare benefits scandal remain dystopian traumas of the past.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.