Abstract

Background

Dementia disorders affect millions of people globally and are characterized by memory loss, communication difficulties, and motor function decline. Accurate and early detection of dementia is crucial for effective management and treatment. Gait analysis offers a non-invasive method for dementia detection by identifying subtle changes in walking patterns that often precede cognitive symptoms.

Objective

This study aims to evaluate the clinical utility of video-based gait analysis using the Timed Up and Go (TUG) test under single and dual-task conditions (TUGdt) for distinguishing individuals with dementia disorders from healthy controls (HCs).

Method

The study implemented three machine learning models: Support Vector Machine (SVM), Logistic Regression (LR), and Random Forest (RF), to discriminate between persons with dementia and HCs. The dataset consists of a cohort of 64 people with dementia (47 with Alzheimer's disease) and 67 HCs. The participants performed the TUG test as a single and dual-task (TUGdt). In the TUGdt, participants performed the TUG test while simultaneously completing an additional cognitive task (i.e., animal naming (TUGdt-NA) or reciting months in reverse order (TUGdt-MB)).

Results

The results showed that dual-task classification outperformed single-task classification. The SVM algorithm achieved the highest accuracy in the TUGdt-NA task (accuracy of 87% ± 5.1% and recall of 86.6% ± 3.2%) using 5-fold cross-validation, and an accuracy of 85.5% and recall of 89.5% using Leave-One-Out Cross-Validation (LOOCV) in the TUGdt-MB task.

Conclusions

In summary, video-based gait features effectively distinguish people with dementia from HCs, particularly under dual-tasking, offering cost-effective, automated, and non-invasive pre-screening to complement clinical assessments.

Introduction

Dementia is a collective term for a range of neurodegenerative disorders that predominantly affect elderly people. It is caused by various diseases and injuries that impact the brain. It is a leading cause of disability and dependence among older adults worldwide. 1 Every year, nearly 10 million new cases of dementia are diagnosed globally. 2 Timely diagnosis could prevent the disease from developing into a severe condition or advanced stages. 3 There are different ways to diagnose dementia, such as cognitive tests and brain imaging; however, most types of tests are labor-intensive, time-consuming, and cause discomfort to the people undertaking these tests.

Motor impairment is one of the early signs of dementia, and changes in gait patterns (the way of walking) have emerged as a potential early marker for the disease. 4 Gait analysis plays a crucial role in the medical domain, offering insights into an individual's health status, functional abilities, and disease progression. 5

Alterations in specific gait parameters could serve as early signs of dementia and aid in its diagnosis. Key indicators include reduced stride length, slow gait velocity, and increased gait variability. 6 These spatiotemporal gait characteristics are useful in distinguishing individuals with dementia from those without cognitive impairment. 7 In addition to spatiotemporal gait measures, joint-level kinematics (e.g., hip and knee angles) capture lower-limb mobility and coordination and differ in individuals with cognitive impairment, with dual-tasking further amplifying these changes. 8–10

The Timed Up and Go (TUG) test is a widely used clinical assessment that measures mobility, balance, and functional ability by timing individuals as they stand up from a chair with armrests, walk forward 3 m, turn around, and return to a sitting position. 11 Furthermore, dual-task tests, such as the Timed Up and Go dual-task test (TUGdt), in which the TUG is combined with verbal tasks (such as naming animals or reciting months in reverse order), have been shown to be effective in discriminating between individuals with dementia, mild cognitive impairment (MCI), subjective cognitive impairment (SCI), and healthy controls (HCs), underscoring their value in clinical assessments. 12

In recent years, advancements in computer vision and artificial intelligence have enabled more accessible, cost-effective, and detailed gait analysis. Among these technologies, video-based pose estimation presents a promising avenue for enhancing the accuracy of gait analysis. This technique uses algorithms to detect and track the movement of body parts’ keypoints, such as knees and ankles, from which gait characteristics can be extracted and analyzed. 13 Specifically, pose estimation technology offers a non-invasive alternative to analyze human motion. This allows for an automated and unrestricted gait analysis in various settings. 14

Recent studies have utilized the Microsoft Kinect V2 sensor to capture body movement for automated gait analysis in cognitive impairment.15–18 These studies have employed Kinect to examine gait features under both single and dual-task conditions in older adults, to distinguish between people with cognitive impairments and HCs. The use of Kinect in this context highlights its potential as a low-cost, markerless motion capture tool for clinical research. A few studies have started to explore the use of video-based gait analysis combined with pose estimation techniques as a simpler alternative. For instance, Löfgren et al. 19 demonstrated that step parameters extracted from video during dual-task TUG tests could differentiate between cognitive groups, particularly when using step length. However, our recent systematic review highlighted that the use of machine learning on video-derived gait data for dementia detection remains underexplored. 20 These findings underscore the need for scalable, low-cost, and non-invasive methods for cognitive assessment, particularly those that can operate using standard video input without specialized sensors.

To address the limitations of traditional methods, this study investigates the potential of video-based gait analysis using pose estimation to automate the discrimination of people with dementia from HCs. It aims to develop an automated movement analysis system that can accurately discriminate between people with dementia disorders and HCs. This research focuses on providing a complementary, cost-effective, non-invasive, and simple method for dementia assessment.

First, the relevant gait parameters from the Uppsala–Dalarna Dementia and Gait project (UDDGait™)21,22 using TUG tests performed under single and dual-task conditions (animal naming or reciting months in reverse) are extracted. Second, the features are analyzed with machine learning algorithms to discriminate between groups and identify the most informative gait parameters. Finally, the discriminative performance of single-task and dual-task TUG features is compared.

Methods

This section describes the participants and study setting, the proposed pose estimation approach, the extraction of gait features, preprocessing, and the machine learning methods used for classification.

Participants and setting

UDDGait™ is a longitudinal study in which data were collected at two specialist memory assessment clinics (Uppsala University Hospital from April 2015 to February 2017 and Falu Hospital from June 2015 to June 2016) in Sweden. The HCs were recruited through advertisements and flyers between May 2017 and March 2019. Data collection for all participants was conducted by a qualified physiotherapist. 21 Dementia diagnoses were established through comprehensive clinical assessments conducted at the specialist memory clinics. Diagnoses were made by geriatricians based on established clinical criteria, including results from the Mini-Mental State Examination (MMSE), 23 the Clock Drawing Test, 24 the Verbal Fluency Test (animal naming), 25 and the Trail Making Tests A and B, 26 alongside assessments of motor function and balance. 27 Additional screening for depression was conducted using a short version of the Geriatric Depression Scale. 28 Participants were categorized as having dementia, MCI, or SCI. These classifications formed the basis for distinguishing between healthy individuals and those with cognitive decline in the present analysis.

The exclusion criteria for this study included: inability to walk three meters back and forth, difficulty rising from a seated position, reliance on a walking aid indoors, recent hospitalization within the past two weeks, or the need for an interpreter to communicate in Swedish.

The study was approved by the Regional Ethical Review Board in Uppsala and the Swedish Ethical Review Authority (dnr. 2023-01670-01). Informed consent was obtained from all study participants at the time of enrollment. The TUG tests were captured using two synchronized video cameras mounted on tripods. One camera was positioned 2 meters in front of the turning line to provide a front view, while the other was set 4 meters to the side of the walking line to capture a side view recorded at 30 frames per second with a 1920 × 1080 resolution. The videos recorded by the side camera were used for pose estimation and feature extraction in this study.

Timed up-and-go single and dual-task tests

The dataset comprises video recordings of participants performing the TUG under single-task and dual-task (TUGdt) conditions. The TUG is a test mainly used to assess mobility. 11 For the TUG, participants begin from a sitting position, stand up from a chair, walk 3 meters, turn around, walk back to the starting point, and sit down again. In the TUGdt, participants perform the same physical tasks as in the TUG, but they are simultaneously engaged in cognitive tasks that include naming animals and reciting the months of the year in reverse order. Each participant performed one TUG and two TUGdt tests: in the first TUGdt, participants recited the months in reverse order, and in the second, they named animals while walking.

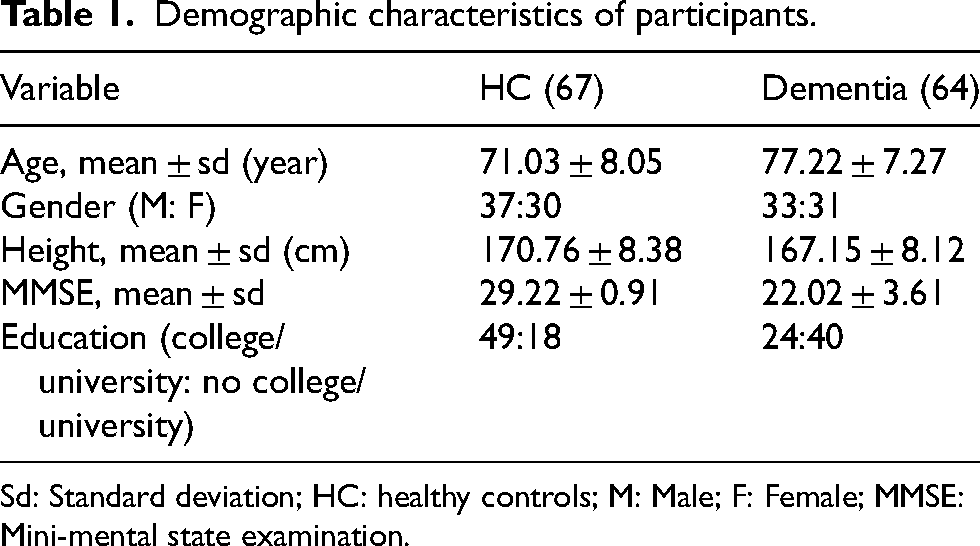

The dataset consists of recordings of 67 HCs (201 videos) and 64 people with dementia (192 videos), of whom 47 were diagnosed with Alzheimer's disease (AD) and 17 with unspecified dementia. Among the AD cases, 39 were diagnosed as having late-onset AD, 6 as having unspecified AD, and 2 as having early-onset AD. The demographic characteristics of the participants are presented in Table 1.

Demographic characteristics of participants.

SD: Standard deviation; HC: healthy controls; M: Male; F: Female; MMSE: Mini-mental state examination.

Proposed method

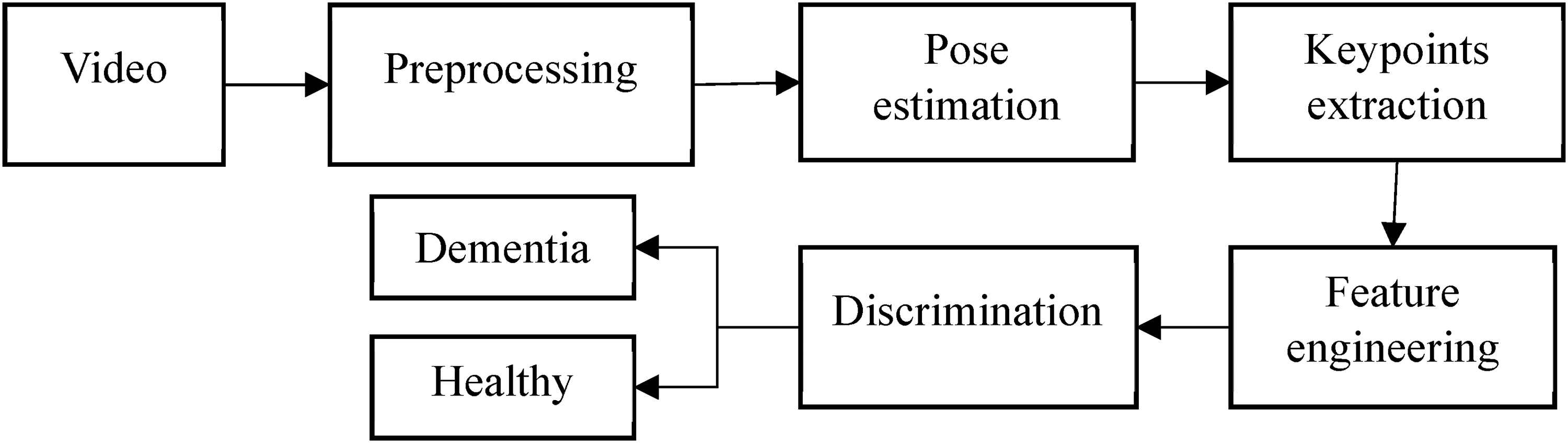

In this section, the method used for pose estimation, feature extraction, and classification is presented. The process includes segmenting the videos to retain only the TUG single-task and dual-task portions of the recording, applying pose estimation to the segmented videos, preprocessing the resulting keypoints and engineering features from them, and then discriminating between HCs and those with dementia. The study workflow is shown in Figure 1.

Overview of the methodology from data acquisition to discrimination between dementia and healthy individuals.

Pose estimation

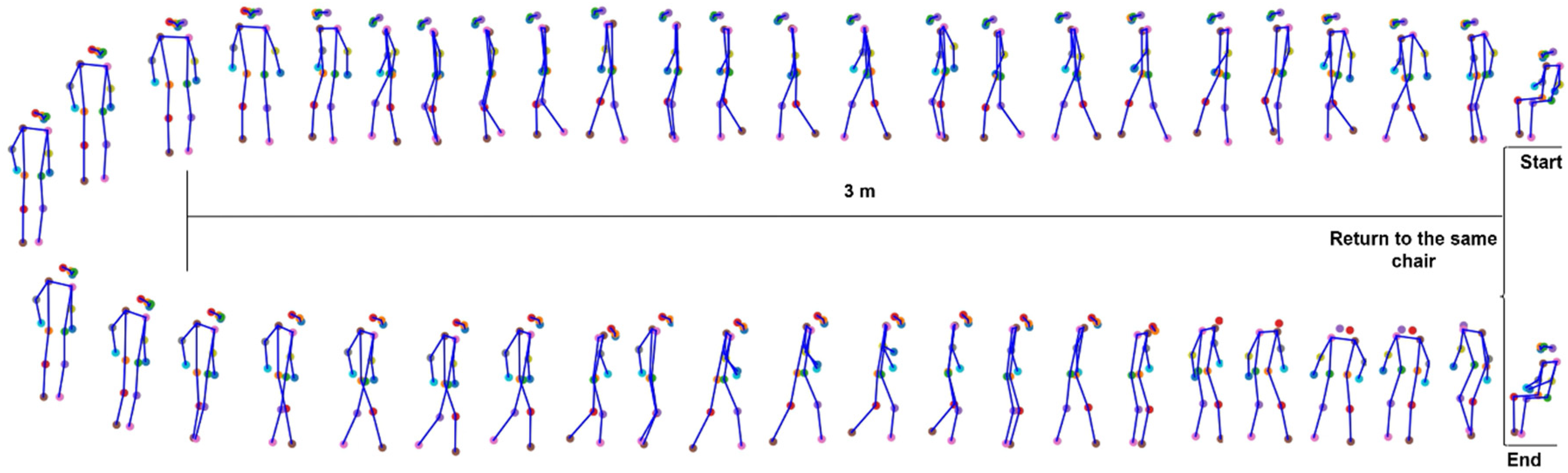

Pose estimation in computer vision involves detecting the positions and orientations of an individual's body parts from images or videos. This is achieved by identifying several keypoints on the human body, such as joints or facial landmarks. In this study, pose estimation using YOLOv8 is performed to identify 17 keypoints for tracking the participant's movement, with three values per keypoint (x, y, visibility).

The keypoints are: Nose, Left Eye, Right Eye, Left Ear, Right Ear, Left Shoulder, Right Shoulder, Left Elbow, Right Elbow, Left Wrist, Right Wrist, Left Hip, Right Hip, Left Knee, Right Knee, Left Ankle, and Right Ankle. Figure 2 depicts the TUG test sequence, created using video analysis with pose estimation. The figure shows the keypoints of an individual performing the test, starting from sitting, transitioning to standing, walking, turning, walking back, and sitting again on the chair.

Visualization of the TUG test.

YOLOv8 is a state-of-the-art pre-trained pose model that has been trained on the COCO dataset. 29 The visibility score indicates how visible each keypoint is in the image, with a value ranging from 0 to 1, where 1 represents the highest visibility. The mean visibility score of the YOLOv8 pose model for the dataset in this study is 0.77.

The YOLOv8 pose model was employed to detect and localize skeletal landmarks in the video frames, providing keypoints essential for feature engineering. Extracting joint positions enables movement analysis by deriving relevant features from these keypoints. These features are then utilized in machine learning models for classification.

Preprocessing

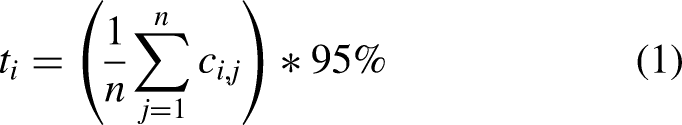

Following pose estimation, the extracted keypoints were preprocessed to ensure temporal consistency and reliability before feature extraction. The predicted joint positions were qualitatively verified to align with the corresponding visibility scores. Based on these scores, a confidence threshold for each joint was defined, calculated as (mean confidence − 0.05). 30 For joints identified as outliers, their positions are corrected by interpolating from neighboring frames with confidence values exceeding the threshold. The confidence threshold value $t_i$ is calculated as in Eq. 1:

$$t_i = \bar{c}_i - 0.05 \quad (1)$$

where $\bar{c}_i$ is the mean confidence score of joint $i$.
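The outlier correction described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `interpolate_low_confidence`, the use of linear interpolation, and the per-coordinate processing are assumptions made for the example.

```python
import numpy as np

def interpolate_low_confidence(coords, conf, margin=0.05):
    """Replace low-confidence samples of one joint coordinate by interpolation.

    coords: per-frame x (or y) positions of a single joint.
    conf:   per-frame confidence (visibility) scores of the same joint.
    margin: offset subtracted from the mean confidence (0.05 in the text).
    Linear interpolation is an assumption; the text only says "interpolating".
    """
    coords = np.asarray(coords, dtype=float)
    conf = np.asarray(conf, dtype=float)

    threshold = conf.mean() - margin       # t_i = mean confidence - 0.05
    reliable = conf > threshold            # frames trusted as-is
    frames = np.arange(len(coords))

    fixed = coords.copy()
    # Fill unreliable frames from the nearest reliable neighbours.
    fixed[~reliable] = np.interp(frames[~reliable], frames[reliable], coords[reliable])
    return fixed, threshold

# Toy example: frame 2 has an implausible position and a low score.
fixed, threshold = interpolate_low_confidence(
    coords=[0.0, 1.0, 50.0, 3.0, 4.0],
    conf=[0.9, 0.9, 0.2, 0.9, 0.9],
)
```

Because the threshold sits below the mean confidence, at least one frame always counts as reliable, so the interpolation has anchor points to work from.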

Feature extraction

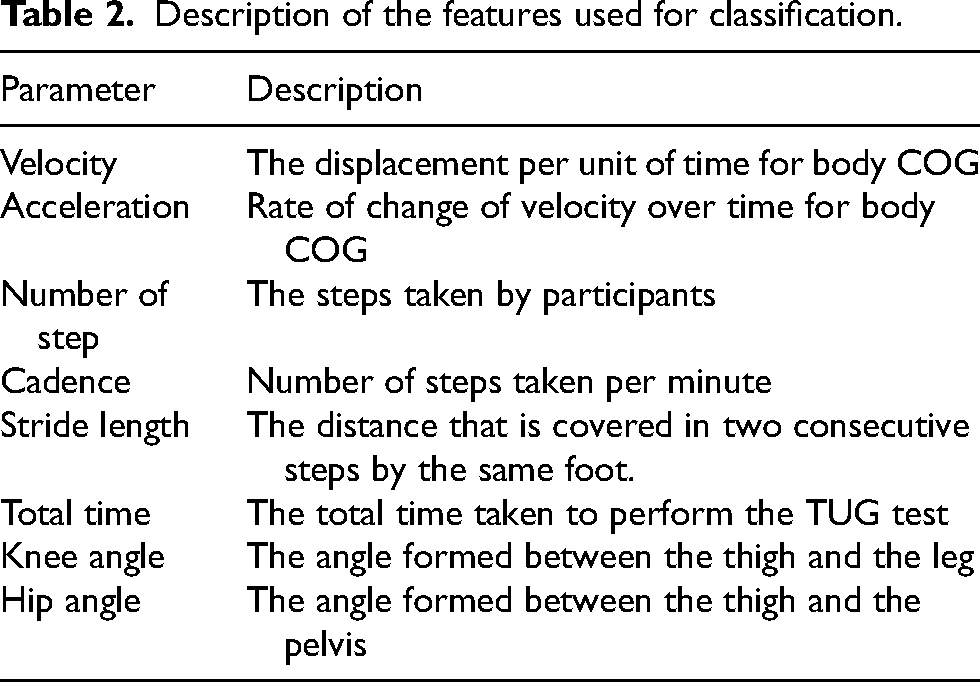

This study extracted several movement features from the pose estimation data to predict dementia. Our previous study 20 identified that slower gait speed, shortened stride length, and increased gait variability differentiate individuals with dementia from HCs. Building on these findings, the current study includes additional movement features (i.e., cadence, total test time, acceleration, knee, and hip angles) to provide a more comprehensive assessment of movement characteristics. Cadence and acceleration features can further reveal gait stability and motor control challenges. Furthermore, knee and hip angles offer insights into joint mobility. These features are derived from the coordinates of key joints obtained through pose estimation algorithms. The detailed analysis of these movement features allows for identifying potential abnormalities and variations associated with the early stages of dementia. The description of these features is presented in Table 2. All the features are normalized with respect to the height of the individual under consideration. This is performed by dividing each value for every feature on a frame basis by the corresponding height for each participant provided within the dataset. The starting point of the features is when the instruction is given by the clinician to start the test and stops when the person returns to the same starting sitting position.

Description of the features used for classification.

Stride length

Stride length represents the distance covered in two consecutive steps by the same foot. Shortened stride length is a common symptom in people with dementia, indicating impaired coordination and balance. 31 The stride length is calculated by taking the ankle keypoints obtained through pose estimation. Smoothing with a moving average window is applied to reduce the noise. Stride length is calculated by detecting peaks in the smoothed vertical positions of the left and right ankles, representing the points where each foot reaches its highest position during a step. The stride length is then determined by measuring the horizontal distances between consecutive peaks for each ankle and converting these distances into meters using a scaling factor.

To derive the scaling factor corresponding to real-world measurements, the study first calculates the Euclidean distance between two points (x1, y1) and (x2, y2) identified in one frame. This distance is the pixel-based measurement between the two points. To translate this pixel distance into a real-world scale, the known real-world distance of 3 meters is used: it is the measured distance between the two physical locations corresponding to the points in the frame (the distance from the chair to the turning point, which can be seen in Figure 1). The resulting scaling factor, the ratio of the real-world distance to the pixel distance, is used to convert pixel measurements into meters.
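As a brief worked example of this conversion, the sketch below computes a meters-per-pixel factor from two reference points. The function name and the pixel coordinates are hypothetical; only the 3-meter reference distance comes from the text.

```python
import math

def pixels_to_meters_scale(p1, p2, real_distance_m=3.0):
    """Return meters per pixel given two image points a known distance apart."""
    pixel_distance = math.dist(p1, p2)  # Euclidean distance in pixels
    if pixel_distance == 0:
        raise ValueError("reference points must be distinct")
    return real_distance_m / pixel_distance

# Hypothetical chair and turning-line positions in a 1920 x 1080 frame.
scale = pixels_to_meters_scale((100, 800), (1000, 800))
stride_m = 180 * scale  # a 180-pixel stride converted to meters
```

With these example points, 900 pixels correspond to 3 meters, so a 180-pixel stride maps to 0.6 meters.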

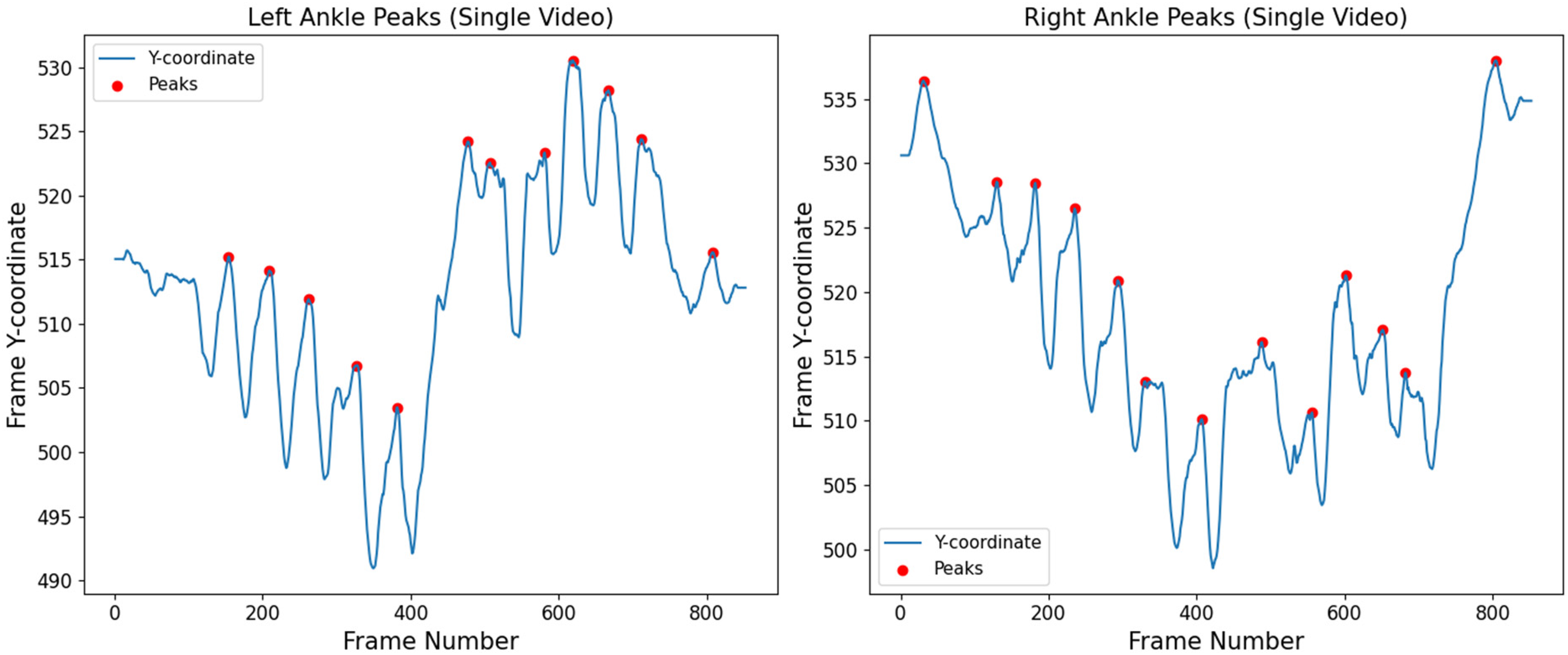

The number of steps in a video is calculated by detecting peaks in the smoothed y-coordinates of the left and right ankle keypoints. An example of peak identification for one participant is shown in Figure 3. The plot presents the Y-coordinate positions of the left and right ankles across frames, with peaks indicating the highest point of the ankles during the TUG test.

Peaks identification of the left and right ankle for one participant performing the TUG test.
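The peak-based step and stride computation can be illustrated with a short sketch. It assumes SciPy's `find_peaks` as the peak detector, a 5-frame moving average, and a minimum gap of 10 frames between peaks; none of these settings are stated in the text. It also assumes the vertical signal is oriented so that a larger value means a higher ankle (raw image y-coordinates grow downward and would need to be negated first).

```python
import numpy as np
from scipy.signal import find_peaks

def moving_average(signal, window=5):
    """Smooth a 1-D signal with a simple moving-average window."""
    kernel = np.ones(window) / window
    return np.convolve(signal, kernel, mode="same")

def stride_lengths(ankle_x, ankle_height, scale_m_per_px, window=5, min_gap=10):
    """Detect ankle-lift peaks and measure horizontal distance between them.

    ankle_x:      per-frame horizontal ankle position in pixels.
    ankle_height: per-frame vertical ankle signal, larger = higher.
    Returns the peak frame indices and the stride lengths in meters.
    """
    smooth = moving_average(np.asarray(ankle_height, dtype=float), window)
    peaks, _ = find_peaks(smooth, distance=min_gap)
    strides_px = np.abs(np.diff(np.asarray(ankle_x, dtype=float)[peaks]))
    return peaks, strides_px * scale_m_per_px

# Synthetic ankle trajectory: one lift every 30 frames, steady forward motion.
t = np.arange(120)
height = np.cos(2 * np.pi * (t - 10) / 30)
x = 10.0 * t
peaks, strides = stride_lengths(x, height, scale_m_per_px=0.01)
step_count = len(peaks)
```

The number of detected peaks gives the step count for that ankle, and the spacing between consecutive peaks gives one stride length per gait cycle.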

Velocity

Velocity is the rate of change of displacement with respect to time. It gives insights into the overall pace of movement. A decreased walking speed is often observed in individuals with dementia, reflecting reduced physical capability.

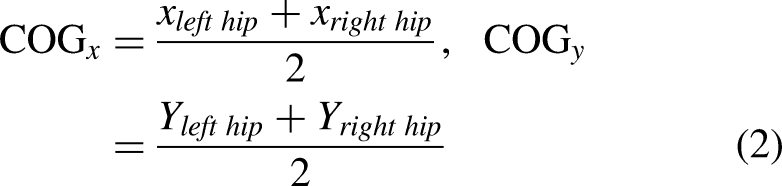

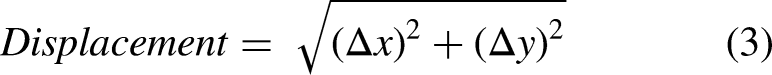

The velocity is obtained based on the body's Center of Gravity (COG). The coordinates of the left and right hips are averaged to find the body COG for each frame. The formula for calculating the COG for each frame is given in Eq. 2. The parameters in Eq. 2 include $Y_{\text{left hip}}$ and $Y_{\text{right hip}}$, which are the Y-coordinates of the left and right hip, respectively, along with $X_{\text{left hip}}$ and $X_{\text{right hip}}$, which are the X-coordinates of the left and right hip. These coordinates are used to determine the center of gravity for the hips.

After calculating the COG, the displacement is computed. The displacement between consecutive frames is calculated as the Euclidean distance between the COG positions in two consecutive frames, as given in Eq. 3:

$$d_t = \sqrt{(x_{t+1} - x_t)^2 + (y_{t+1} - y_t)^2} \quad (3)$$

where $(x_t, y_t)$ is the COG position in frame $t$.

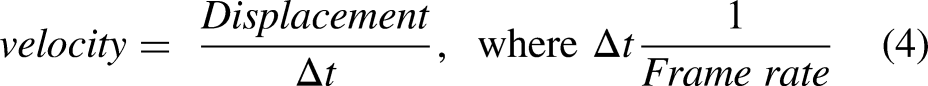

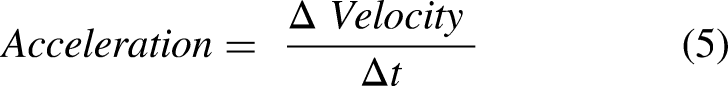

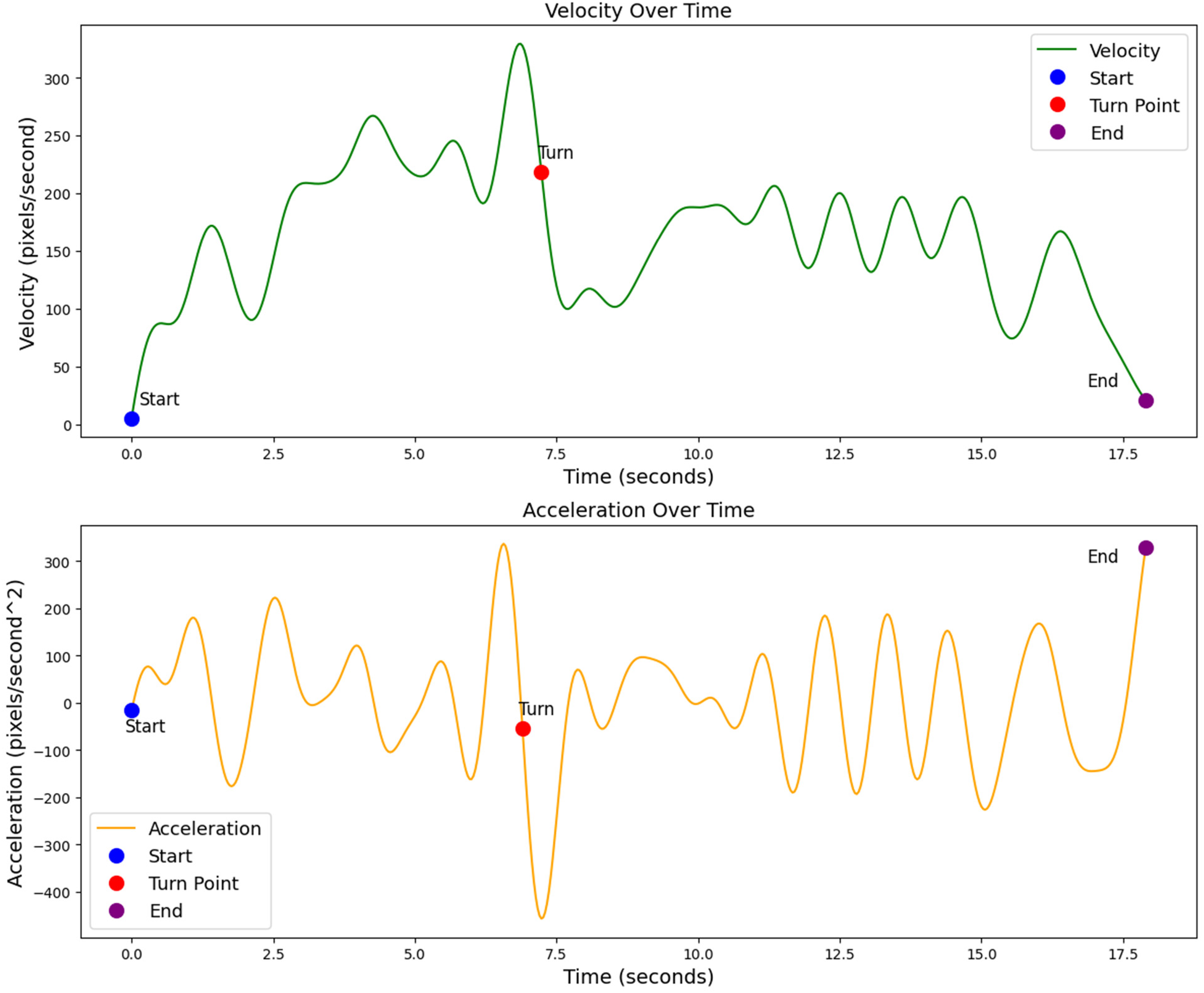

Velocity is then calculated by dividing the displacement by the time interval between consecutive frames.
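The chain from hip keypoints to velocity can be sketched in a few lines. This is an illustrative example only: the function name and array layout are assumptions, and the time interval is taken as 1/30 s from the 30 frames-per-second recording rate reported earlier.

```python
import numpy as np

def cog_velocity(left_hip, right_hip, fps=30.0):
    """Compute COG, frame-to-frame displacement, and velocity.

    left_hip, right_hip: arrays of shape (n_frames, 2) holding (x, y) per frame.
    fps: frame rate; the time interval between frames is 1 / fps.
    """
    left_hip = np.asarray(left_hip, dtype=float)
    right_hip = np.asarray(right_hip, dtype=float)

    cog = (left_hip + right_hip) / 2.0                            # hip midpoint
    displacement = np.linalg.norm(np.diff(cog, axis=0), axis=1)   # Euclidean distance
    velocity = displacement * fps                                 # displacement / (1 / fps)
    return cog, displacement, velocity

# Three frames of toy hip coordinates (pixels).
left = [[0, 0], [3, 4], [6, 8]]
right = [[0, 2], [3, 6], [6, 10]]
cog, displacement, velocity = cog_velocity(left, right)
```

The result is in pixels per second; multiplying by a meters-per-pixel scaling factor would convert it to meters per second.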

Acceleration

Acceleration is the change in velocity with respect to time. The formula for acceleration is given in Eq. 5. The velocity and acceleration are shown in Figure 4. The figure shows the velocity and acceleration for one individual performing the TUG test, with marked points indicating the start, turn, and end of the movement. A 4th-order low-pass Butterworth filter with a cutoff frequency of 0.06 was applied to smooth the signals and reduce high-frequency noise.

Velocity and acceleration for a single video indicating the start, turn, and end of the TUG test.
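A minimal sketch of the smoothing and differentiation step is shown below. Several details are assumptions not stated in the text: the 0.06 cutoff is interpreted as a fraction of the Nyquist frequency (SciPy's default convention), the filter is applied forward and backward with `filtfilt` to avoid phase lag, and the velocity is filtered before differencing.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def smooth_and_differentiate(velocity, fps=30.0, order=4, cutoff=0.06):
    """Low-pass filter a velocity signal, then difference it to get acceleration.

    cutoff is a normalized frequency in (0, 1), where 1 is the Nyquist frequency.
    The signal must be longer than the filter's padding length (15 samples here).
    """
    b, a = butter(order, cutoff, btype="low")
    smooth_v = filtfilt(b, a, np.asarray(velocity, dtype=float))
    acceleration = np.diff(smooth_v) * fps  # change in velocity / (1 / fps)
    return smooth_v, acceleration

# A constant-speed signal should pass through unchanged with zero acceleration.
constant_v = np.full(200, 2.0)
smooth_v, acceleration = smooth_and_differentiate(constant_v)
```

If the cutoff in the study were instead expressed in hertz, `butter` would need the sampling rate passed through its `fs` argument.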

Knee and hip angles

Changes in knee and hip angles during walking can indicate a reduced range of motion, which can be a sign of motor impairment. The knee angle is given by the positions of three key landmarks: the hip, knee, and ankle. The process involves determining the vectors formed by these points and using the dot product to calculate the angle between them. This method provides an accurate knee angle measurement, which is crucial for assessing movement patterns. The formula for knee and hip angle calculation is given in Eq. 6. If vector $\mathbf{a}$ is the vector from the hip to the knee and vector $\mathbf{b}$ is the vector from the knee to the ankle, then

$$\cos\theta = \frac{\mathbf{a} \cdot \mathbf{b}}{\lVert \mathbf{a} \rVert \, \lVert \mathbf{b} \rVert}$$

The angle at the knee is then given by

$$\theta = \arccos\left(\frac{\mathbf{a} \cdot \mathbf{b}}{\lVert \mathbf{a} \rVert \, \lVert \mathbf{b} \rVert}\right) \quad (6)$$

The hip angles were calculated by the same method as the knee angles. However, the positions of the landmarks for hip calculation are the shoulder, hip, and knee.
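The dot-product angle calculation can be sketched as below. The function name is hypothetical, and the clipping step is an added numerical safeguard rather than part of the described method. With vectors defined from hip to knee and from knee to ankle, a fully straight leg yields an angle of 0 radians.

```python
import numpy as np

def joint_angle(proximal, joint, distal):
    """Angle in radians between vectors proximal->joint and joint->distal.

    Knee angle: proximal = hip, joint = knee, distal = ankle.
    Hip angle:  proximal = shoulder, joint = hip, distal = knee.
    """
    a = np.asarray(joint, dtype=float) - np.asarray(proximal, dtype=float)
    b = np.asarray(distal, dtype=float) - np.asarray(joint, dtype=float)
    cos_theta = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    # Clip guards against rounding pushing the cosine slightly outside [-1, 1].
    return float(np.arccos(np.clip(cos_theta, -1.0, 1.0)))

straight_leg = joint_angle((0, 0), (0, 1), (0, 2))
right_angle_bend = joint_angle((0, 0), (0, 1), (1, 1))
```

The same function covers both joints; only the three landmarks passed in change.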

Feature correlation

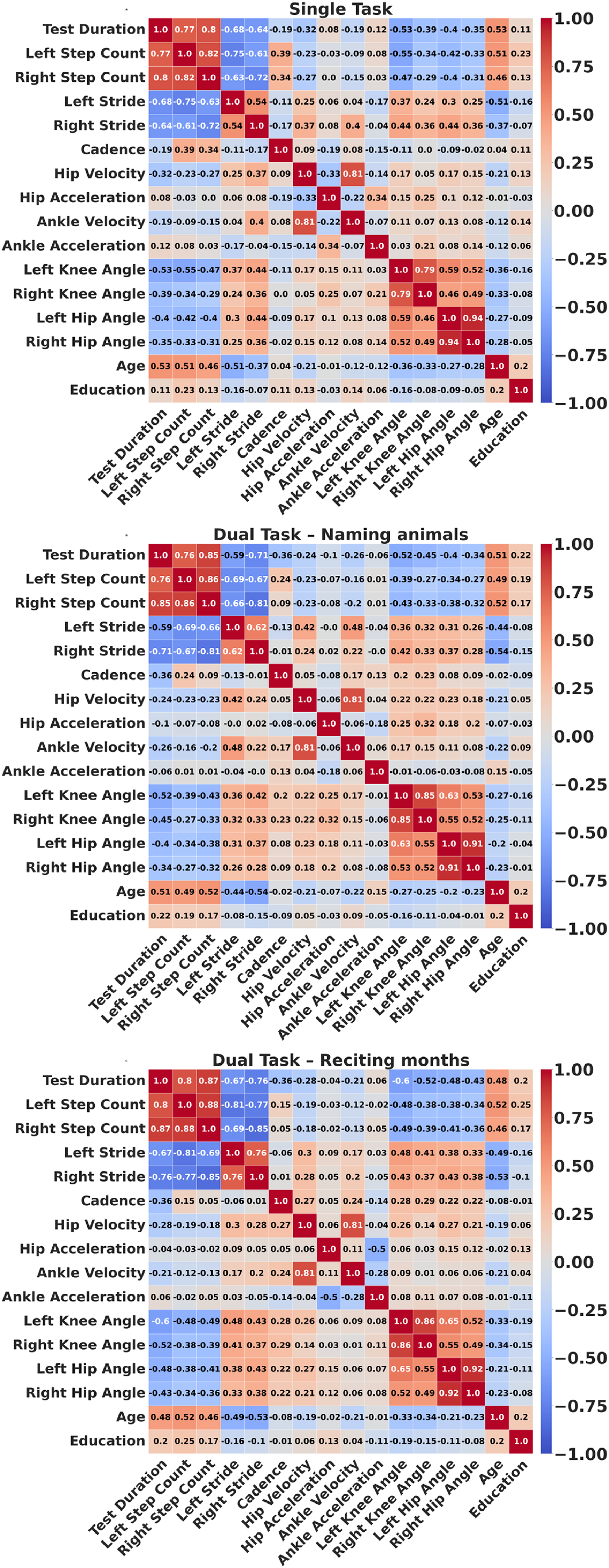

Figure 5 presents the correlation heatmaps of all features under the three TUG conditions: single-task, dual-task (naming animals and reciting months). In the single-task condition, the strongest correlations were observed between left and right step counts, left and right hip angles, and hip and ankle velocity. The two dual-task conditions exhibited similar correlation patterns. In the TUGdt naming animals and reciting months conditions, high correlations were found between left and right step counts, left and right knee angles, left and right hip angles, and between hip and ankle velocity. To address multicollinearity, strongly correlated feature pairs were averaged to form composite variables before modeling.

Correlation heatmaps of gait features across the three TUG conditions: single task, TUGdt (TUGdt-NA and TUGdt-MB).
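The composite-variable step can be illustrated with a small sketch. The 0.8 correlation threshold, the function names, and the toy values are assumptions for the example; the text does not state the cutoff used to call a pair strongly correlated.

```python
import numpy as np

def highly_correlated_pairs(X, names, threshold=0.8):
    """List feature pairs whose absolute Pearson correlation meets the threshold."""
    corr = np.corrcoef(X, rowvar=False)
    pairs = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            if abs(corr[i, j]) >= threshold:
                pairs.append((names[i], names[j], float(corr[i, j])))
    return pairs

def merge_pair(X, names, a, b, new_name):
    """Replace two feature columns with their average as one composite column."""
    ia, ib = names.index(a), names.index(b)
    composite = (X[:, ia] + X[:, ib]) / 2.0
    keep = [k for k in range(len(names)) if k not in (ia, ib)]
    X_new = np.column_stack([X[:, keep], composite])
    return X_new, [names[k] for k in keep] + [new_name]

# Toy feature table: left steps, right steps, test duration.
names = ["steps_left", "steps_right", "duration"]
X = np.array([[10, 11, 5], [12, 12, 3], [14, 15, 6], [16, 16, 2]], dtype=float)

pairs = highly_correlated_pairs(X, names)
X_new, new_names = merge_pair(X, names, "steps_left", "steps_right", "steps_avg")
```

Averaging keeps the shared information of a left/right pair in a single variable, which is how the averaged step count feature reported later could be formed.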

Adjusting features

The study evaluated age, sex, and education as potential confounders between HCs and participants with dementia. Age and education differed significantly between groups (p < 0.001 for both), whereas there was no statistically significant difference for sex. As a result, age and education were included as covariates in all machine learning models; sex was excluded given the absence of a significant difference between the groups.

Machine learning models

The study employed three supervised machine learning algorithms (RF, SVM, and LR) to differentiate between HCs and individuals with dementia. Machine learning models such as SVM and LR typically need standardized inputs, so the study applied z-score standardization (mean = 0, SD = 1) to all features. This scaling ensures that all features are on a common scale and improves numerical stability. No missing data were detected in the extracted features. All models were evaluated with both 5-fold cross-validation and LOOCV to ensure robustness and mitigate the risk of overfitting. In LOOCV, each iteration holds out all observations from a single individual as the test set, while the remaining data are used for training. This ensures that no data from the same individual appears in both the training and test sets. Hyperparameter tuning was conducted to optimize the performance of each model. The performance of these algorithms was then evaluated using classification metrics such as accuracy, precision, recall, and F1-score. These metrics offer a comprehensive evaluation of each model's performance.

Each participant performed three tasks: a TUG single task and two TUGdt (animal naming and reciting months). Therefore, the data were separated based on the task type. Machine learning models were then applied separately to classify the single-task, dual-task animal naming, and dual-task reciting months, comparing HCs and those with dementia within each task.

Random forest

The RF is an ensemble model used for both classification and regression tasks. It was selected due to its robustness and effectiveness in handling classification tasks with complex and non-linear relationships. In the single task, the RF was configured with 50 trees, a maximum depth of 3, a minimum of 10 samples per leaf node, and a minimum of 10 samples required to split an internal node. In the animal naming task, the configuration was 180 trees, a maximum depth of 10, a minimum of 1 sample per leaf node, and a minimum of 10 samples required to split an internal node. For reciting months backwards, the study used 180 trees, a maximum depth of 10, a minimum of 1 sample per leaf node, and a minimum of 2 samples required to split an internal node. The random state (the seed that controls the randomization in the procedure) was set to 42 for all tasks for reproducibility.

Support vector machines

SVM is an effective algorithm for handling high-dimensional input data. It can find an optimal separating hyperplane for classification problems. The SVM classifier is configured with a Radial Basis Function (RBF) kernel. The regularization parameter C, which balances the trade-off between maximizing the margin and minimizing classification errors, is set to 0.7 for the single task and for the dual-task reciting months backward, and to 7 for the dual-task animal naming. The gamma parameter is set to “scale”, which automatically adjusts according to the number of features. The features are standardized to a mean of 0 and a standard deviation of 1, which is crucial for SVM performance.
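The evaluation setup can be sketched as follows, assuming scikit-learn (the text does not name the library). The data are synthetic stand-ins generated with `make_classification`, not the study data, and the stratified, shuffled 5-fold split is an assumption. Placing the scaler inside the pipeline means the z-score parameters are re-estimated on each training fold, so no test-fold information leaks into standardization.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score, recall_score
from sklearn.model_selection import (LeaveOneOut, StratifiedKFold,
                                     cross_val_predict, cross_validate)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic placeholder for one task's feature table (131 rows, one per participant).
X, y = make_classification(n_samples=131, n_features=12, n_informative=5,
                           random_state=42)

# z-score standardization followed by an RBF-kernel SVM (C = 0.7, gamma = "scale").
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=0.7, gamma="scale"))

# 5-fold cross-validation: per-fold accuracy and recall.
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)
cv_scores = cross_validate(model, X, y, cv=kfold, scoring=("accuracy", "recall"))
fold_accuracy = cv_scores["test_accuracy"]
fold_recall = cv_scores["test_recall"]

# LOOCV: each row is held out once; metrics are computed on pooled predictions.
loo_pred = cross_val_predict(model, X, y, cv=LeaveOneOut())
loo_accuracy = accuracy_score(y, loo_pred)
loo_recall = recall_score(y, loo_pred)
```

Because each task is modeled separately with one row per participant, leaving out one row is equivalent to leaving out one individual. If several rows per person were pooled, a grouped splitter such as `LeaveOneGroupOut` would be needed to keep that guarantee.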

Logistic regression

LR is a simple algorithm and well-suited for binary classification tasks. LR can model the probability of an outcome based on one or more predictor variables. The LR model was configured with a maximum of 1000 iterations and a random state of 42 to ensure the reproducibility of results.

Results

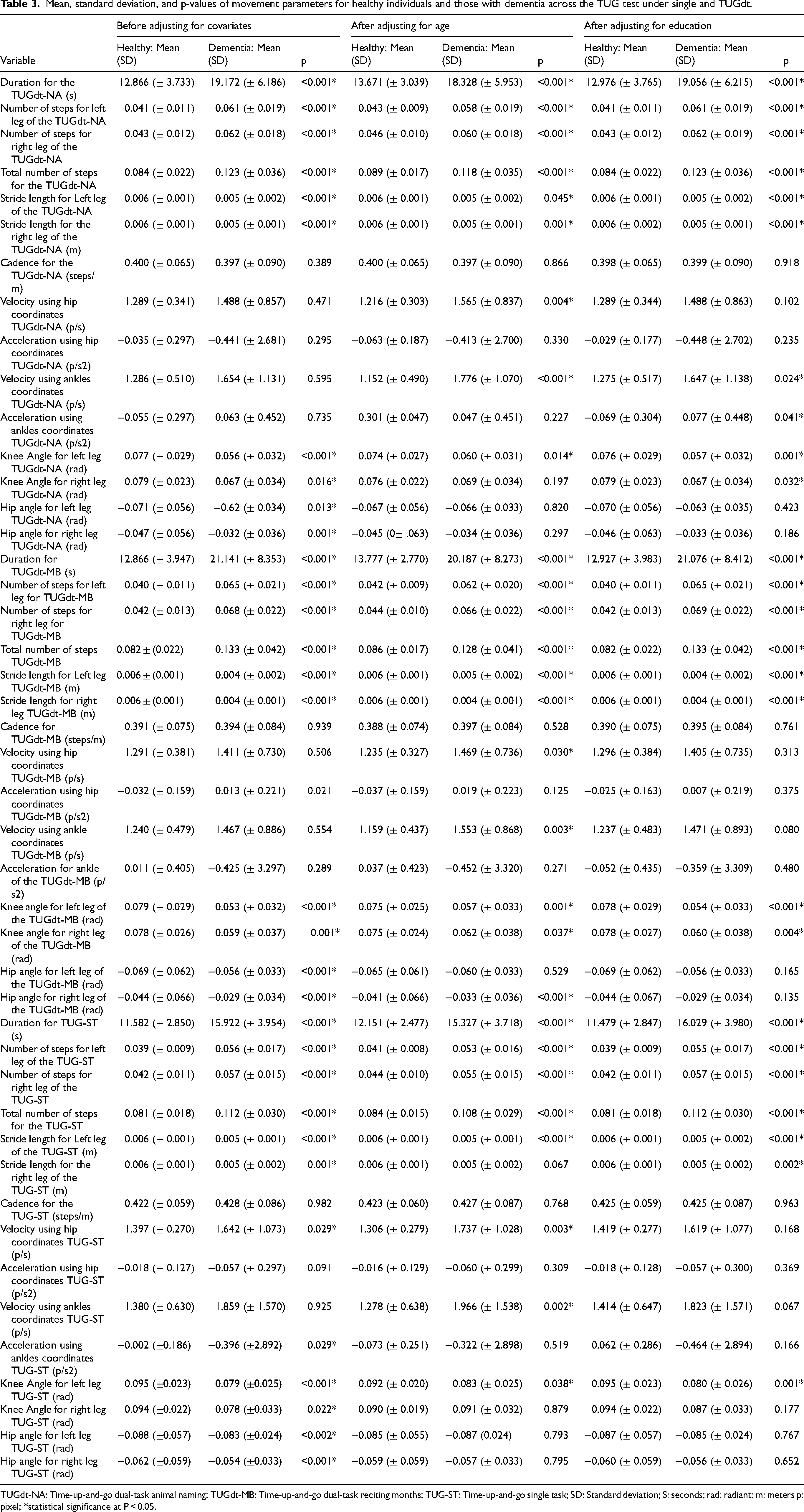

The study analyzed videos from 64 individuals with dementia and 67 HCs performing the TUG under single-task and two dual-task conditions (TUGdt-NA and TUGdt-MB). Pose estimation for body movement was implemented using YOLOv8, and 14 movement features were derived per participant. Table 3 reports the group means, standard deviations, and p-values from the statistical tests of these features before and after adjusting for age. The adjustment for age aimed to reduce confounding effects and give a clearer picture of the differences between the two groups. The TUG single-task test revealed significant differences in various features between HCs and people with dementia, particularly in test duration, step counts, stride lengths, and gait velocities. The test duration and the number of steps were significantly higher in the dementia group during the single task. In the TUGdt, similar trends were observed with larger differences. The dementia group took longer to complete the test and took more steps. Moreover, stride length was shorter in people with dementia than in the healthy group, which suggests that people with dementia compensate by taking more steps. One observation in this study is that the TUG tests provide features such as the knee and hip angles that demonstrate statistical significance (Table 3) and, to the best of our knowledge, have not been examined in video-based TUG discrimination in the literature.15–18 After adjusting for age, 9 features were statistically significant during TUGdt-NA, 11 features during TUGdt-MB, and 8 features in the single task. The Shapiro-Wilk test was used to check the distribution of each feature. If the distribution was normal, a t-test was conducted; otherwise, a Mann-Whitney U test was applied. Analysis of Covariance (ANCOVA) was conducted for each feature to adjust for age and education.
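The test-selection rule (normality check, then a t-test or a Mann-Whitney U test) can be sketched with SciPy. The function name, the 0.05 normality cutoff, checking normality separately in each group, and the default equal-variance t-test are assumptions for illustration; the ANCOVA adjustment is not shown.

```python
import numpy as np
from scipy import stats

def compare_groups(hc_values, dementia_values, alpha=0.05):
    """Pick a t-test or Mann-Whitney U test based on Shapiro-Wilk normality.

    Returns the name of the test that was used and its two-sided p-value.
    """
    hc = np.asarray(hc_values, dtype=float)
    dem = np.asarray(dementia_values, dtype=float)

    # Treat the feature as normal only if neither group rejects normality.
    normal = (stats.shapiro(hc).pvalue > alpha
              and stats.shapiro(dem).pvalue > alpha)
    if normal:
        return "t-test", float(stats.ttest_ind(hc, dem).pvalue)
    result = stats.mannwhitneyu(hc, dem, alternative="two-sided")
    return "mann-whitney", float(result.pvalue)

# Strongly right-skewed toy data, so the non-parametric branch is taken.
skewed = np.arange(1, 41, dtype=float) ** 4
test_name, p_value = compare_groups(skewed, skewed * 3)
```

Running this once per feature and per task would produce one unadjusted p-value per cell of a table like Table 3.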

Mean, standard deviation, and p-values of movement parameters for healthy individuals and those with dementia across the TUG test under single and TUGdt.

TUGdt-NA: Timed up and go dual-task animal naming; TUGdt-MB: Timed up and go dual-task reciting months; TUG-ST: Timed up and go single task; SD: Standard deviation; S: seconds; rad: radians; m: meters; p: pixels; *statistical significance at P < 0.05.

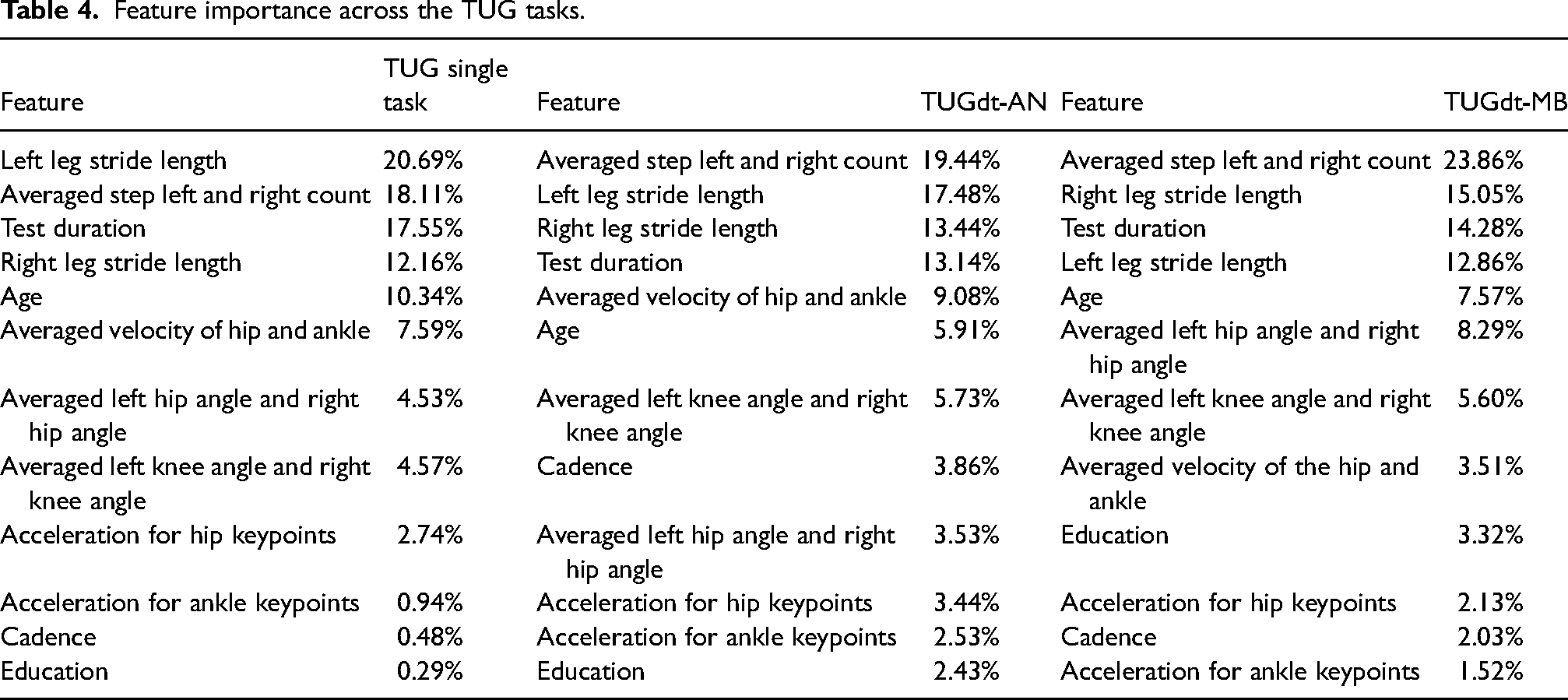

The feature importance evaluation using a Random Forest model with 5-fold cross-validation was implemented to identify the most informative predictors for each TUG condition. For the TUG single task, the most significant features include left leg stride length (20.69%), averaged step count (left and right) (18.11%), test duration (17.55%), and right leg stride length (12.16%). For the TUGdt-NA task, the most important features are the averaged step count (left and right) (19.44%), left leg stride length (17.48%), right leg stride length (13.44%), and test duration (13.14%). In the TUGdt-MB task, the top contributing features are averaged step count (left and right) (23.86%), right leg stride length (15.05%), test duration (14.28%), and left leg stride length (12.86%). Table 4 shows the full feature-importance rankings for the TUGdt-MB, TUGdt-NA, and TUG single task.

Feature importance across the TUG tasks.

The features mentioned in Table 4 were utilized in three different machine learning classifiers: RF, LR, and SVM to automate the differentiating process between HCs and people with dementia across the three TUG tasks. Fine-tuning of the models was carried out using grid search to attain the highest performance for these models.

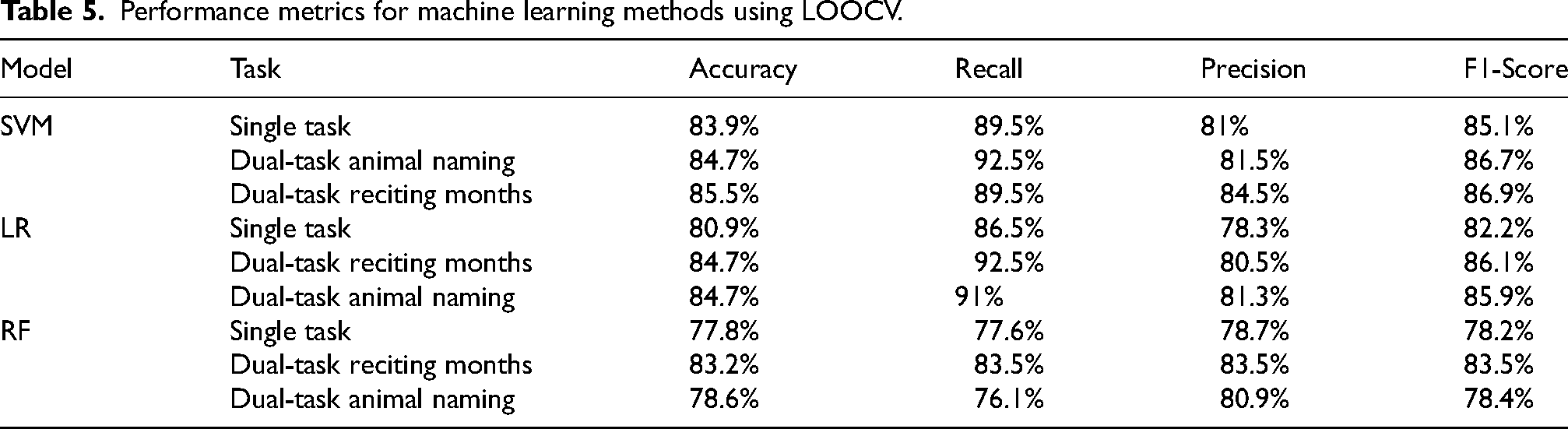

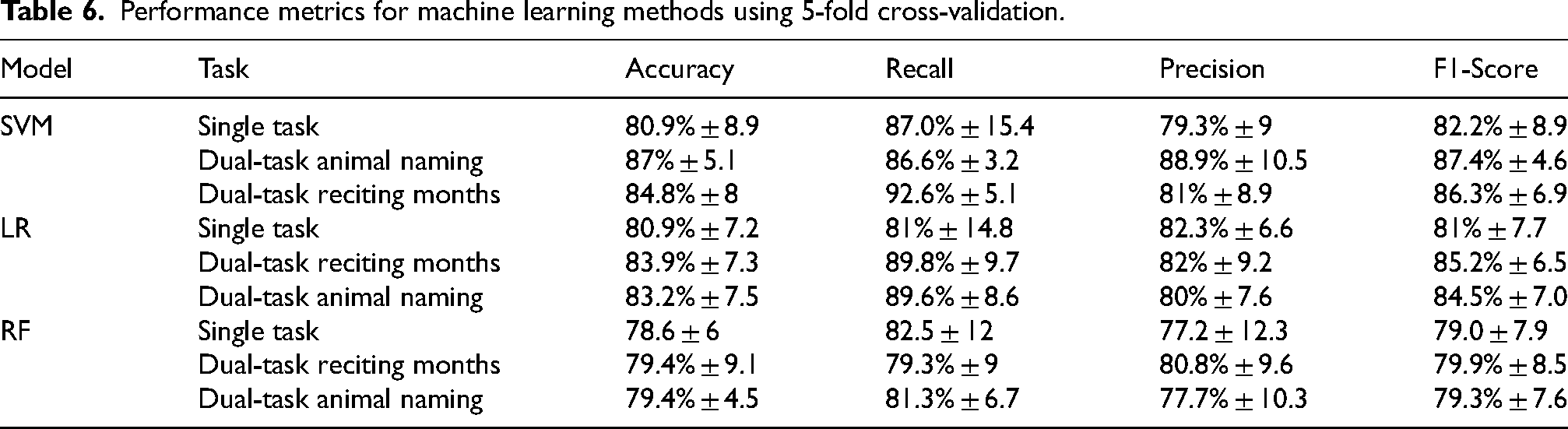

The performance of the models is shown in Tables 5 and 6. Table 5 shows the results using LOOCV, and Table 6 shows the results using 5-fold cross-validation. The machine learning algorithms showed the highest performance in the TUGdt. The SVM classifier demonstrated the highest performance in the TUGdt classification, with an accuracy of 87% ± 5.1%, a recall of 86.6% ± 3.2%, a precision of 88.9% ± 10.5%, and an F1 score of 87.4% ± 4.6% in the animal naming task. The results show that TUGdt tests consistently achieved higher performance than single-task tests, indicating that the dual-task approach generally enhances the ability of these classifiers to distinguish people with dementia from HCs.

Performance metrics for machine learning methods using LOOCV.

Performance metrics for machine learning methods using 5-fold cross-validation.

The results show that the SVM model achieved the highest recall rates for both TUGdt conditions: 92.6% ± 5.1% for TUGdt-MB with 5-fold cross-validation and 92.5% for TUGdt-NA with LOOCV. In comparison, LR reached a recall rate of 92.5% in the TUGdt-MB with LOOCV. High recall across these models indicates that most dementia cases are correctly identified.

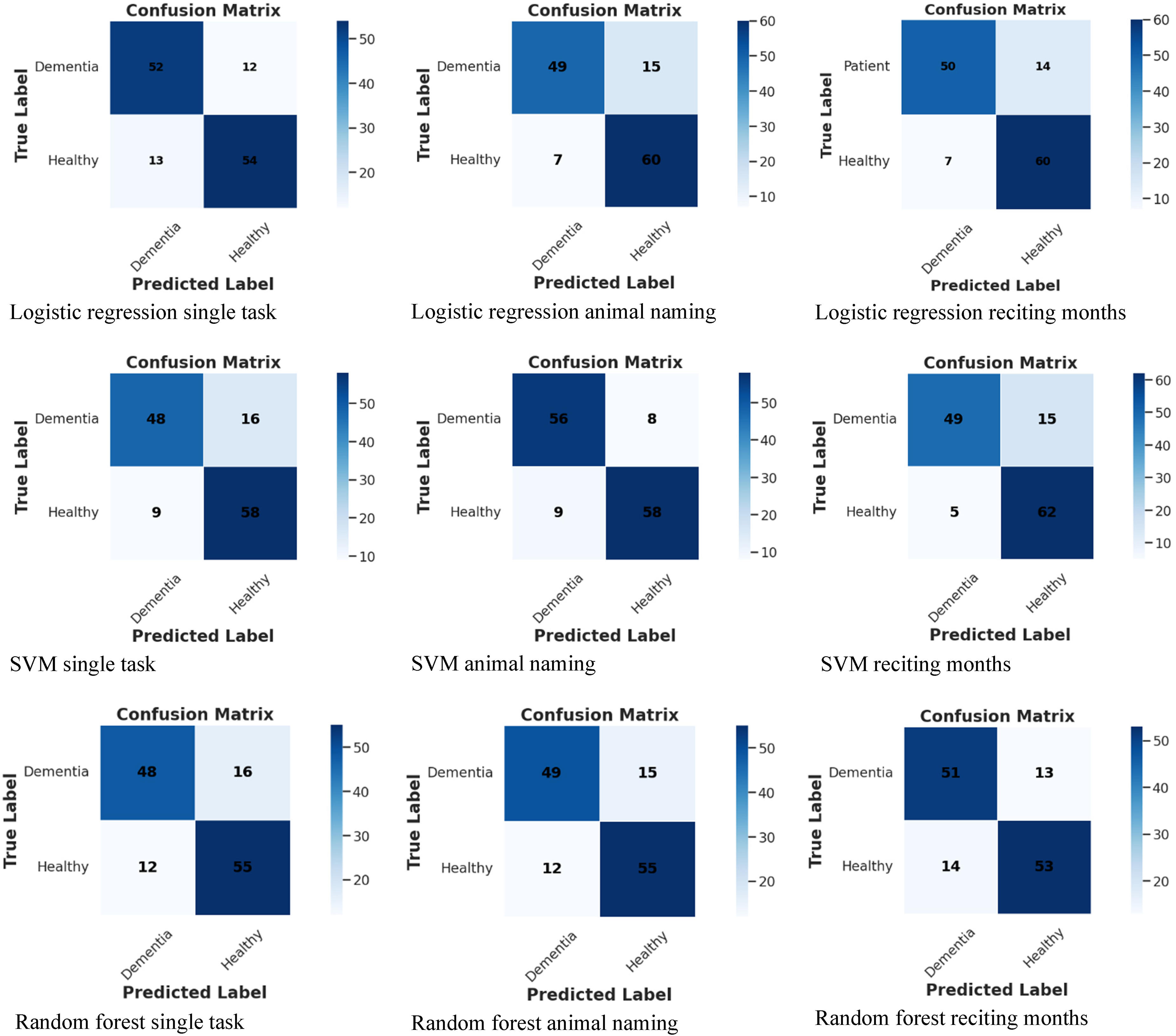

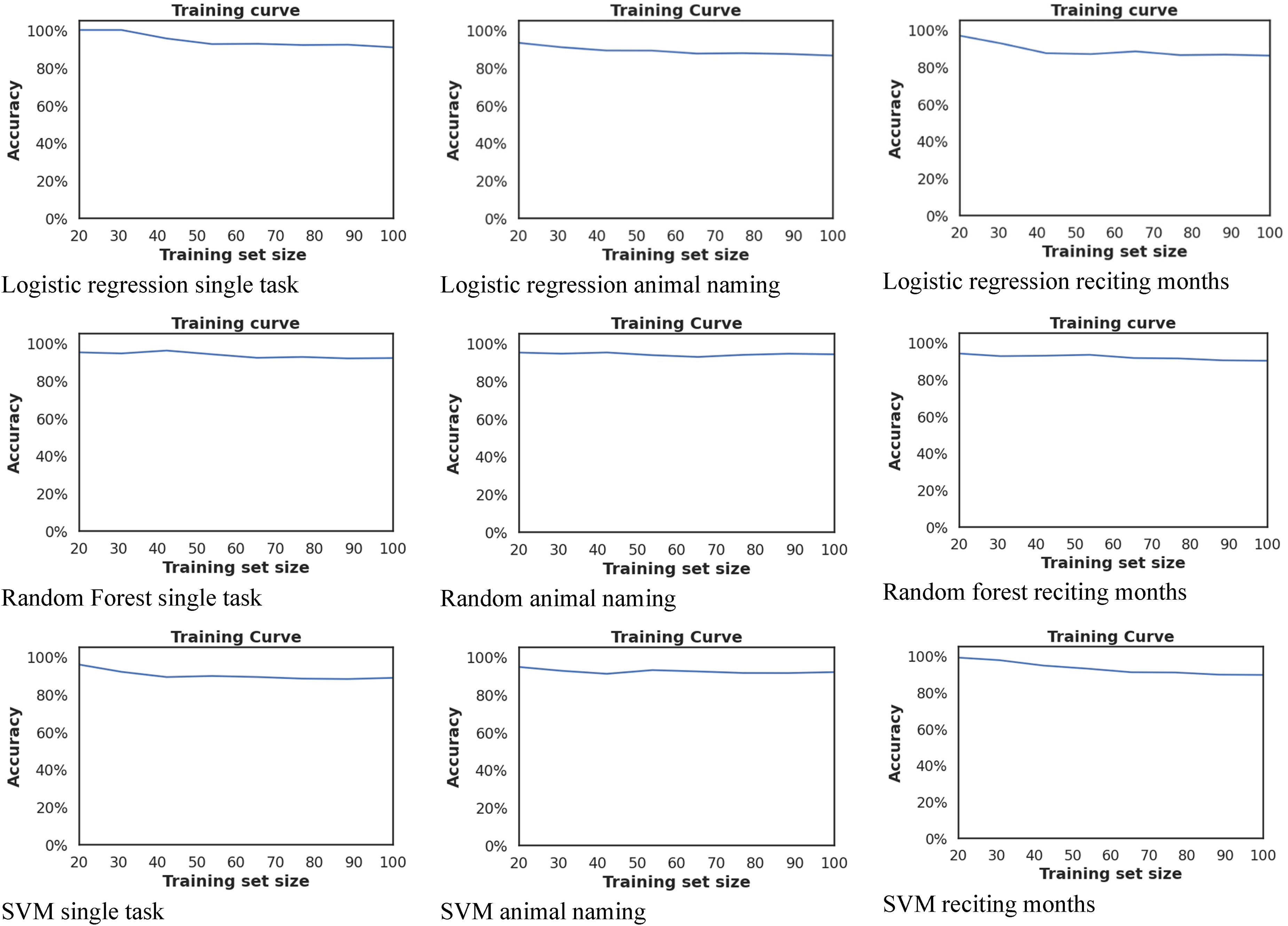

Figure 6 presents the confusion matrices from 5-fold cross-validation for the three classifiers (LR, SVM, RF) across the TUG conditions (single task, TUGdt-NA, TUGdt-MB). In all panels, the diagonal dominates, indicating that both people with dementia and HCs are generally well identified. Moreover, Figure 7 presents the training curves from 5-fold cross-validation for the three classifiers (LR, SVM, RF) across the TUG conditions (single task, TUGdt-NA, TUGdt-MB). The y-axis represents the accuracy on the training set. In all panels, the training curve starts high and then flattens as the training set grows, indicating an early plateau and stable generalization.

Confusion matrices across the three tasks (Single task, TUGdt-NA, and TUGdt-MB) for the three models.

Training curves across the three tasks (Single task, TUGdt-NA, and TUGdt-MB) for the three models.

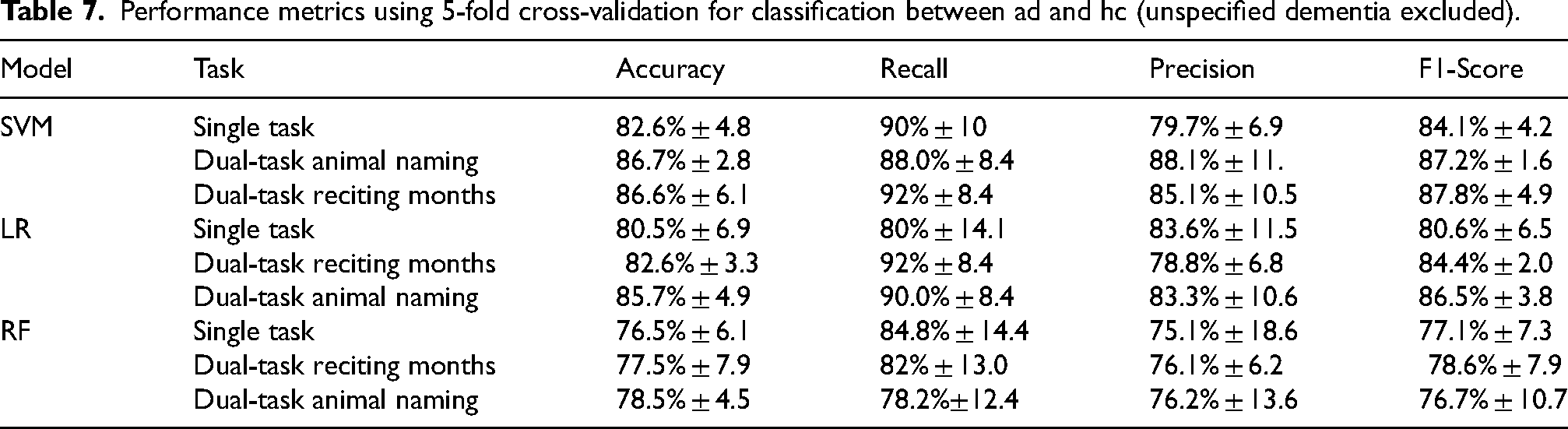

Table 7 reports 5-fold cross-validation performance when restricting the dementia group to AD only. It shows the same overall pattern as the full sample: the TUGdt outperforms the single task. SVM achieved the highest overall performance (TUGdt-NA: 86.7 ± 2.8 accuracy) and the highest recall on TUGdt-MB (92 ± 8.4). In summary, the AD-only analysis confirms that the TUGdt-NA yields the best discrimination, and the results remain highly similar after excluding unspecified dementia.

Performance metrics using 5-fold cross-validation for classification between AD and HCs (unspecified dementia excluded).

Discussion

The study aims to perform motion analysis for discriminating between HCs and people with dementia using the TUG under single-task and dual-task (TUGdt) conditions. It also compares the TUG single-task performance with the TUGdt performance. This is done by applying machine learning and YOLOv8 pose estimation to video data. Moreover, this study investigates the most important features contributing to the discrimination between the groups. Data from 64 individuals with dementia and 67 HCs were analyzed as they performed the TUG test under both single and TUGdt conditions.

This study derived multiple movement features from the pose-estimation outputs, and these features differed between the groups. People with dementia had longer TUG durations, took more steps, and had shorter stride lengths across all tasks (all p < 0.001 after age adjustment; Table 3). Hip and ankle velocities were higher in the dementia group, and these differences remained significant across all three tasks. These statistical findings align with the Random Forest rankings (Table 4): duration, averaged step count (left and right), and stride length were the top contributors in the single task, TUGdt-NA, and TUGdt-MB. They also align with prior evidence, including a systematic review, 20 showing that individuals with dementia typically exhibit slower gait speed, shorter stride length, and greater gait variability compared with HCs. Building on this established evidence, the present study additionally incorporated cadence, acceleration, and joint-level kinematic features (knee and hip angles) to provide a more comprehensive assessment of movement characteristics. In the current results, these kinematic measures contributed to group discrimination, supporting the notion that cadence and acceleration capture aspects of gait stability and motor control, while knee and hip kinematics reflect joint mobility and coordination. Previous studies have similarly reported joint-level kinematic alterations in cognitive impairment, with dual-tasking amplifying these effects. For example, in MCI, altered knee angles, greater peak knee extension, and reduced knee angle at heel strike have been observed during level walking, 8 and knee peak extension under a story-recall dual-task has been shown to discriminate individuals with MCI from those with normal cognition. 9 Moreover, spatiotemporal and kinematic differences are also observed in AD and bvFTD, with lower-limb kinematics (thigh/knee/ankle) often more informative than spatiotemporal parameters alone and cognitive dual-tasking producing marked deterioration. 10 Together, these findings support the use of joint angles as complementary markers alongside other spatiotemporal measures.
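To illustrate how a joint-level feature such as the knee angle can be obtained from 2D pose keypoints, the following minimal sketch computes the angle at the knee from hip, knee, and ankle coordinates in a single frame. The function name `joint_angle` and the pixel coordinates are hypothetical and chosen for illustration only; this is not necessarily how the features in this study were computed.

```python
import math

def joint_angle(a, b, c):
    """Angle in degrees at vertex b formed by the 2D points a-b-c."""
    # Vectors pointing from the joint (b) to the two neighbouring keypoints.
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("coincident keypoints: angle is undefined")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))

# Hypothetical (x, y) pixel coordinates from one frame of a side-view video.
hip, knee, ankle = (320.0, 240.0), (330.0, 340.0), (325.0, 440.0)
knee_angle = joint_angle(hip, knee, ankle)
print(f"knee angle: {knee_angle:.1f} degrees")
```

Applied frame by frame, such an angle yields a time series from which summary values (for example, peak extension) could be derived. A fully extended leg gives an angle close to 180 degrees under this convention.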

The results show that classification under the TUGdt conditions outperformed the single task under both LOOCV and 5-fold cross-validation, which highlights the importance of task selection in dementia detection. Among the algorithms, the SVM performed best overall. These findings are in line with prior research showing that dual-tasking magnifies cognitive–motor interference and increases separation between groups compared with single-task gait. 12
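The two validation schemes report performance differently: 5-fold cross-validation yields one accuracy per fold (hence a mean ± SD), whereas LOOCV yields a single pooled accuracy over all held-out individuals. The sketch below illustrates this on synthetic data. Everything in it is an assumption made for illustration: the TUG durations are randomly generated rather than taken from the study, the folds are not stratified, and a simple threshold classifier stands in for the SVM, LR, and RF models.

```python
import random
import statistics

def kfold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and split them into k test folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_threshold(xs, ys):
    """Stand-in classifier: threshold at the midpoint of the class means."""
    m0 = statistics.mean(x for x, y in zip(xs, ys) if y == 0)
    m1 = statistics.mean(x for x, y in zip(xs, ys) if y == 1)
    return (m0 + m1) / 2, m1 >= m0

def predict(model, x):
    threshold, positive_above = model
    return int((x > threshold) == positive_above)

def cross_validate(xs, ys, folds):
    """Train on all samples outside each fold; return per-fold accuracy."""
    accuracies = []
    for test in folds:
        held_out = set(test)
        train = [i for i in range(len(xs)) if i not in held_out]
        model = fit_threshold([xs[i] for i in train], [ys[i] for i in train])
        correct = sum(predict(model, xs[i]) == ys[i] for i in test)
        accuracies.append(correct / len(test))
    return accuracies

# Synthetic TUG durations in seconds (invented values, NOT study data);
# only the group sizes (67 HCs, 64 people with dementia) mirror the study.
rng = random.Random(42)
durations = [rng.gauss(10, 2) for _ in range(67)] + [rng.gauss(16, 4) for _ in range(64)]
labels = [0] * 67 + [1] * 64
n = len(durations)

# 5-fold CV: five accuracies, summarised as mean and standard deviation.
fold_accs = cross_validate(durations, labels, kfold_indices(n, 5))
kfold_mean = statistics.mean(fold_accs)
kfold_sd = statistics.stdev(fold_accs)

# LOOCV: each fold holds out one person, so each entry is 0.0 or 1.0.
loo_hits = cross_validate(durations, labels, [[i] for i in range(n)])
loocv_acc = statistics.mean(loo_hits)

print(f"5-fold accuracy: {kfold_mean:.3f} (SD {kfold_sd:.3f})")
print(f"LOOCV accuracy:  {loocv_acc:.3f}")
```

Because each LOOCV fold contains a single person, a per-fold standard deviation is not informative there, which is consistent with reporting a single accuracy value for LOOCV and a mean ± SD for 5-fold cross-validation.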

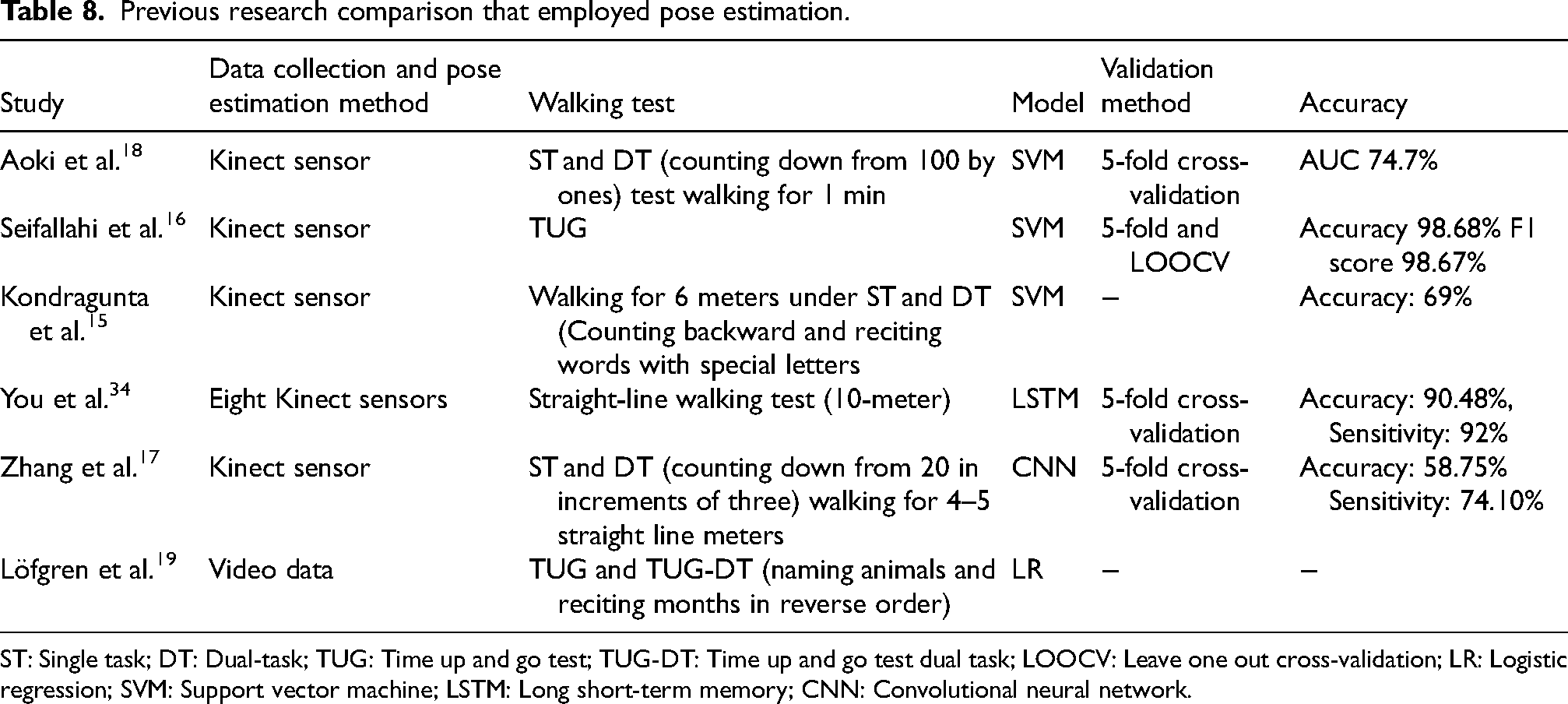

Compared with the literature that used data collected through Kinect sensors,15–18 this study employed pose estimation using YOLOv8 on data collected through a simple camera, providing a more accessible and cost-effective alternative. Sensor-based methods are also effective32,33; however, they are invasive and may burden the person undertaking the test. Table 8 provides an overview of previous research on dementia detection through gait analysis using non-invasive methods.

Table 8. Comparison with previous research that employed pose estimation.

ST: Single task; DT: Dual task; TUG: Timed Up and Go test; TUG-DT: Timed Up and Go test dual task; LOOCV: Leave-one-out cross-validation; LR: Logistic regression; SVM: Support vector machine; LSTM: Long short-term memory; CNN: Convolutional neural network.

Similar to the findings of Aoki et al., 18 this study showed that the TUGdt conditions achieved higher performance than the single task. Löfgren et al. 19 employed LR to discriminate between cognitively impaired individuals and HCs using gait parameters derived from the TUG and analyzed through pose estimation. In contrast, this study compared several machine learning algorithms (RF, SVM, and LR) and employed cross-validation to assess the robustness and generalizability of the results. Moreover, knee and hip angle features were extracted in this study, which have not been explored in previous research using video-based data. To the best of our knowledge, this is the first study that used video data to automate movement analysis for discriminating those with dementia from HCs using pose estimation and machine learning.

The proposed method provides an alternative to GAITRite, which is widely used in clinical gait assessment. GAITRite requires dedicated space, involves substantial cost, and is mainly used in specialized clinics. In contrast, single-camera video is cost-effective and non-invasive, requiring only a standard video camera, which makes it easier than GAITRite to deploy in clinical and non-specialized settings. Video-based gait analysis with modern pose estimation has been validated against GAITRite with strong agreement, supporting a practical and scalable approach for dementia assessment. 32

The increasing number of people with dementia, along with the complexity and high cost of current diagnostic methods, highlights the importance of developing simpler and less expensive methods. This study explores an automated approach for distinguishing those with dementia from HCs using movement analysis from video data. To our knowledge, this is among the first studies to investigate the application of machine learning for this purpose.

A single-camera, video-based TUG (single and dual-task) provides an objective, non-invasive method to assess gait and mobility associated with dementia. This study offers a simple and cost-effective method to discriminate between people with dementia and HCs from TUG tests performed as a single task or as a dual task (TUGdt), using data from a single side-view camera. The method could be further developed as a screening tool for dementia in memory clinics or primary care. However, further testing is needed to establish its validity as a screening method, particularly in populations with MCI or subjective cognitive impairment (SCI), since the current study only included individuals diagnosed with dementia.

This research shows the promise of computer vision and machine learning as a non-invasive means of using health data to help discriminate people with dementia from HCs. The method explored in this study has the potential to provide a useful, automated, and simple way of identifying dementia disorders and could serve as a complementary tool to support clinical assessments and accurate diagnosis. By automating the analysis, these techniques may help improve accessibility to dementia assessment.

A limitation of this study is that pose estimation models are affected by variations in video quality. Poor-quality videos might impact the accuracy of the pose model and introduce errors in pose detection. In addition, environmental and capture conditions, such as poor lighting and background clutter, can reduce keypoint reliability. The study acknowledges that combining AD and unspecified dementia introduces diagnostic heterogeneity, as these entities may reflect different neuropathological processes and gait phenotypes. To address this, we re-ran all analyses after excluding unspecified dementia (i.e., AD versus HC only), using identical preprocessing, features, and cross-validation; results were highly similar, and the main conclusions were unchanged. Moreover, the dataset used in the study is relatively small; a larger and more diverse dataset could help to improve the robustness of the models.

Conclusion

The study utilized computer vision through pose estimation techniques applied to video data to extract various movement features, which enabled a detailed movement analysis based on video data alone. The results indicate a promising capability for non-invasive discrimination of those with dementia from HCs, particularly in dual-task performance. Three algorithms, namely RF, SVM, and LR, were utilized to differentiate individuals with and without dementia. Among the algorithms tested, the SVM achieved the highest classification accuracy for the dual task of animal naming (accuracy of 87% and recall of 86.6% using 5-fold cross-validation), followed by reciting months backwards (accuracy of 85.5% and recall of 89.5% using LOOCV). These results highlight the potential of using computer vision and machine learning models to support dementia screening.

Future work should expand the dataset to include different stages of cognitive impairment (MCI, SCI). Additionally, extracting a wider range of features, including parameters derived from whole-body movements, could provide a more comprehensive understanding of movement dynamics in dementia disorders. Furthermore, employing multimodal fusion, such as integrating features from speech and movement, could improve the accuracy and robustness of the machine learning models.

Footnotes

Acknowledgements

The authors appreciate grants from the Swedish Research Council (2017-1259) that provided support for the collection and management of the dataset within the Uppsala-Dalarna Dementia and Gait Project (UDDGait, principal investigator AC Åberg) used for the current study.

Ethical considerations

The study was approved by the Regional Ethical Review Board in Uppsala and the Swedish Ethical Review Authority (dnr. 2023-01670-01).

Consent to participate

Informed consent was obtained from all study participants at the time of enrollment.

Consent for publication

Not applicable

Author contribution(s)

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The original (UDDGait) data set material analyzed during the current study is not publicly available due to its content of sensitive personal data. The datasets generated may be available from the corresponding author of the current study, Mustafa Al-Hammadi, upon reasonable request, after ethical considerations.