Abstract

Despite the potential benefits of remote cognitive assessment for dementia, it is not appropriate for all clinical encounters. Our aim was to develop guidance on determining a patient's suitability for comprehensive remote cognitive diagnostic assessment for dementia. A multidisciplinary expert workgroup was convened under the auspices of the Canadian Consortium on Neurodegeneration in Aging. We applied the Delphi method to determine ‘red flags’ for remote cognitive assessment of dementia. This resulted in 14 red flags that met the predetermined consensus criteria. We then developed a novel clinical decision-making infographic that integrated these findings to support multidisciplinary clinicians in determining a patient's readiness to undergo comprehensive remote cognitive assessment.

Introduction

Dementia is critically linked to aging and currently affects 55 million people globally, a figure that is expected to increase to 78 million by 2030. 1 A timely diagnosis of dementia is essential for patient care, future planning and access to services. 2 Further, therapeutic strategies are dependent on correctly identifying patients at early stages of neurodegenerative disease. A comprehensive cognitive and behavioral evaluation is required to determine a diagnosis, and it involves an encounter between a healthcare professional and the patient, with essential collateral input from the caregiver. 3

Our goal was to develop decision-making guidance to support clinicians who engage in remote dementia diagnostic assessment in order to identify situations in which remote cognitive and behavioral assessment should be avoided. The Delphi method was employed to establish multidisciplinary expert consensus within a workgroup convened under the auspices of the Canadian Consortium on Neurodegeneration in Aging (CCNA).4–6 The Delphi method is an iterative process to systematically establish group consensus while mitigating potential bias. 4 We aimed to identify features about the patient, caregiver, clinician, or context that would indicate the need to shift to an in-person encounter. Next, we engaged with a CCNA knowledge translation specialist to design a novel clinical decision-making tool. This infographic and tool aimed to provide urgently needed guidance to clinicians who conduct remote diagnostic assessments of people living with cognitive impairment in order to enhance diagnostic validity.

Telemedicine is defined by the Centers for Medicare & Medicaid Services as the “two-way, real time interactive communication between the patient and the physician or practitioner at [a] distant site”. 7 The COVID-19 pandemic led to a dramatic rise in the use of telemedicine in the context of dementia assessment, necessitating the rapid development of virtual diagnostic care frameworks.1,8–11 Telemedicine in the context of remote cognitive diagnostic assessment holds the potential to enhance accessibility among individuals located in rural settings or among those with limited mobility who may not otherwise have access to specialist care. 12 However, remote cognitive assessment also has important limitations that may widen existing healthcare disparities. These limitations need to be carefully considered in the implementation of remote cognitive assessment. 13 It is, therefore, important to identify appropriate contexts for comprehensive remote cognitive assessment, and situations when remote assessment should be avoided. The current study aimed to address these critical knowledge gaps and to develop clinical decision-making guidance based on the contextual factors, or red flags, that render a comprehensive remote cognitive assessment inappropriate at the outset of a clinical encounter, and warrant a switch to in-person assessment.14–16

Methods

Study design

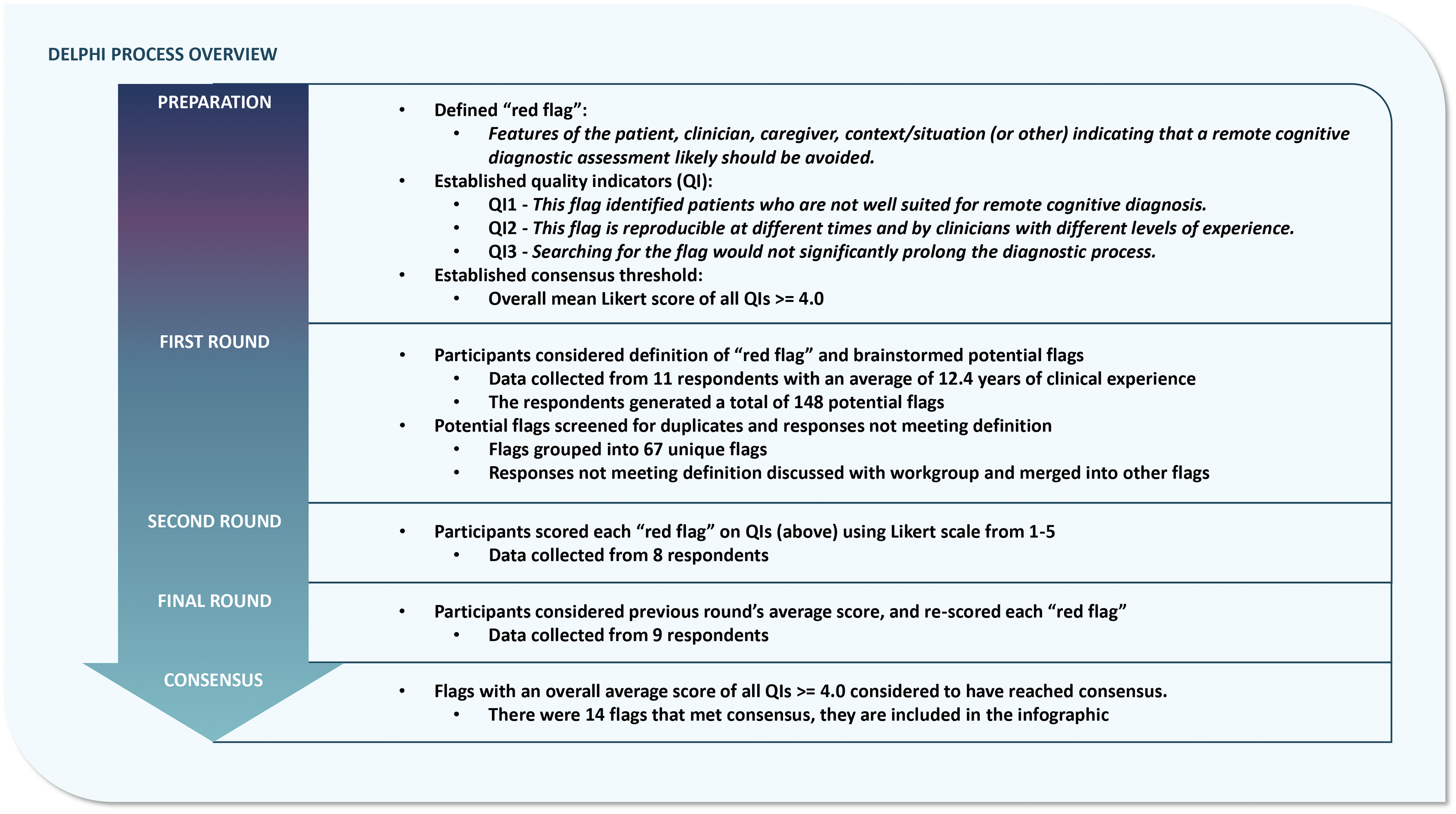

A workgroup was convened under the auspices of the CCNA and tasked with advancing guidance for remote cognitive assessment and diagnosis in dementia care. The workgroup was composed of a national panel of multidisciplinary experts including behavioral neurologists, neuropsychiatrists, neuropsychologists, geriatricians, and family medicine specialists with expertise in the diagnostic assessment, remote assessment, and care of older persons with dementia. Multidisciplinary expertise was included to ensure that diverse perspectives were reflected in the final clinical decision-making guidance. The Delphi method was applied to achieve consensus among the workgroup. This method is particularly useful in the medical field, where evidence may be conflicting or insufficient.4,5 The advantages of this method include minimizing eminence bias, the ability to reach consensus asynchronously among a group situated across different locations, and allowing individuals to consider the group opinion when formulating their own opinion. In a three-round process, the experts first proposed potential ‘red flags’ for remote cognitive diagnostic assessment of dementia, before undergoing two further rounds of evaluation and re-evaluation of these potential red flags (Figure 1). This study took place between January 19, 2023, and September 20, 2023. Data collection for each round was performed using web-based surveys (Microsoft Forms). Survey responses were anonymous and weighted equally. A description of the project was shared with the McGill University Research Ethics officer in advance of beginning the study, and it was concluded that the project fell under Article 2.5 of the Tri-Council policy statement, and therefore would not require ethics review.

Figure 1. An overview of the Delphi process implemented in the current study. This figure details the iterative stages of the Delphi process and summarizes the results obtained at each stage.

The Delphi process

An initial virtual meeting with the workgroup served to establish and communicate the consensus criteria, definitions and process of the Delphi approach. 4 This included communication about (1) the definition of ‘red flag’, (2) the three quality indicators (QIs) for scoring candidate flags, and (3) the consensus threshold. ‘Red flag’ was defined as a “feature of the patient, clinician, caregiver, context/situation or other indicating that a remote cognitive diagnostic assessment likely should be avoided.” Factors relating to the patient, clinician, or caregiver were considered equally important. Three QIs were used to score each flag on a 5-point Likert scale. 17 The first QI focused on efficacy, “This flag identified patients who are not well suited for remote cognitive diagnosis.” The second QI focused on reproducibility, “This flag is reproducible at different times and by clinicians with different levels of experience.” The third QI examined feasibility, “Searching for this flag would not significantly prolong the diagnostic process.” Responses were coded as follows: Strongly disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly agree = 5. Consensus criteria were set a priori to reduce the risk of bias. We considered consensus to be met when the average score across all three QIs for a potential red flag was 4.0 or greater.

The first of the three rounds of the Delphi process generated all the potential red flags that would be voted on in subsequent rounds. To increase the number of potential red flags available for subsequent rounds of voting, and to reduce the risk of missed flags, the first survey (and only the first) was also sent to CCNA members at large. Survey prompts were designed to be as comprehensive as possible and span key domains (Supplemental Figure 1). Respondents considered prompts about each component of a clinical encounter, addressing separately the features of the patient, caregiver, clinician and context (e.g. “What are red flags about the patient's caregiver that indicate a remote assessment should be avoided?”). Participants then responded anonymously by generating as many potential red flags as possible. Information about the expert participants, including their clinical specialty and years of clinical experience, was collected in the first round only. After the first round, all responses were pooled and screened for validity (whether the potential factor met the agreed upon definition of ‘red flag’) and duplicate responses. With the feedback and approval of the CCNA workgroup members, responses that did not meet the definition of ‘red flag’ were removed, and duplicate responses were combined.

In the second round, respondents reviewed each potential red flag and were asked to score it based on the three QIs using the above Likert scale. After the second round, the group average scores for each red flag were calculated, including the average for each QI and overall scores. In the final round, respondents were shown each red flag again along with the group average scores from the previous round, for each QI and overall. They were asked to re-score each flag using the same QI Likert scale described above while considering the previous round's averages. After the third round, the group average scores for each red flag were re-calculated. The consensus threshold was applied (average total score across all QIs ≥ 4.0). Flags with scores at or above the consensus threshold were included in the final set of consensus red flags for remote cognitive assessment.
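The scoring and thresholding steps described above can be sketched in code. The Python example below is a minimal illustration and not the analysis code used in the study: the flag names and ratings are invented, and it assumes that the overall score is the unweighted mean of the three QI means (the text specifies only the average score across all QIs).

```python
from statistics import mean

CONSENSUS_THRESHOLD = 4.0  # set a priori; consensus requires an overall mean >= 4.0


def score_flag(ratings):
    """Return (per-QI means, overall mean) for one candidate flag.

    `ratings` maps each QI name to the list of 1-5 Likert ratings
    given by the respondents for that QI.
    """
    qi_means = {qi: mean(values) for qi, values in ratings.items()}
    # Assumption: overall score = unweighted mean of the QI means.
    overall = mean(list(qi_means.values()))
    return qi_means, overall


def reaches_consensus(ratings, threshold=CONSENSUS_THRESHOLD):
    """True when the overall mean is at or above the threshold."""
    _, overall = score_flag(ratings)
    return overall >= threshold


# Hypothetical third-round ratings, invented for illustration only.
example_ratings = {
    "hypothetical flag A": {
        "efficacy": [5, 4, 5, 4],
        "reproducibility": [4, 4, 5, 4],
        "feasibility": [4, 5, 4, 4],
    },
    "hypothetical flag B": {
        "efficacy": [5, 5, 4, 5],
        "reproducibility": [4, 3, 4, 4],
        "feasibility": [3, 2, 3, 3],
    },
}

consensus_flags = [
    name for name, ratings in example_ratings.items() if reaches_consensus(ratings)
]
print(consensus_flags)
```

In this invented example, flag B scores highly on efficacy but falls below the threshold overall because of its low feasibility ratings, which mirrors how a flag can be clinically relevant yet fail to reach consensus.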

Development of the clinical decision-making tool and infographic

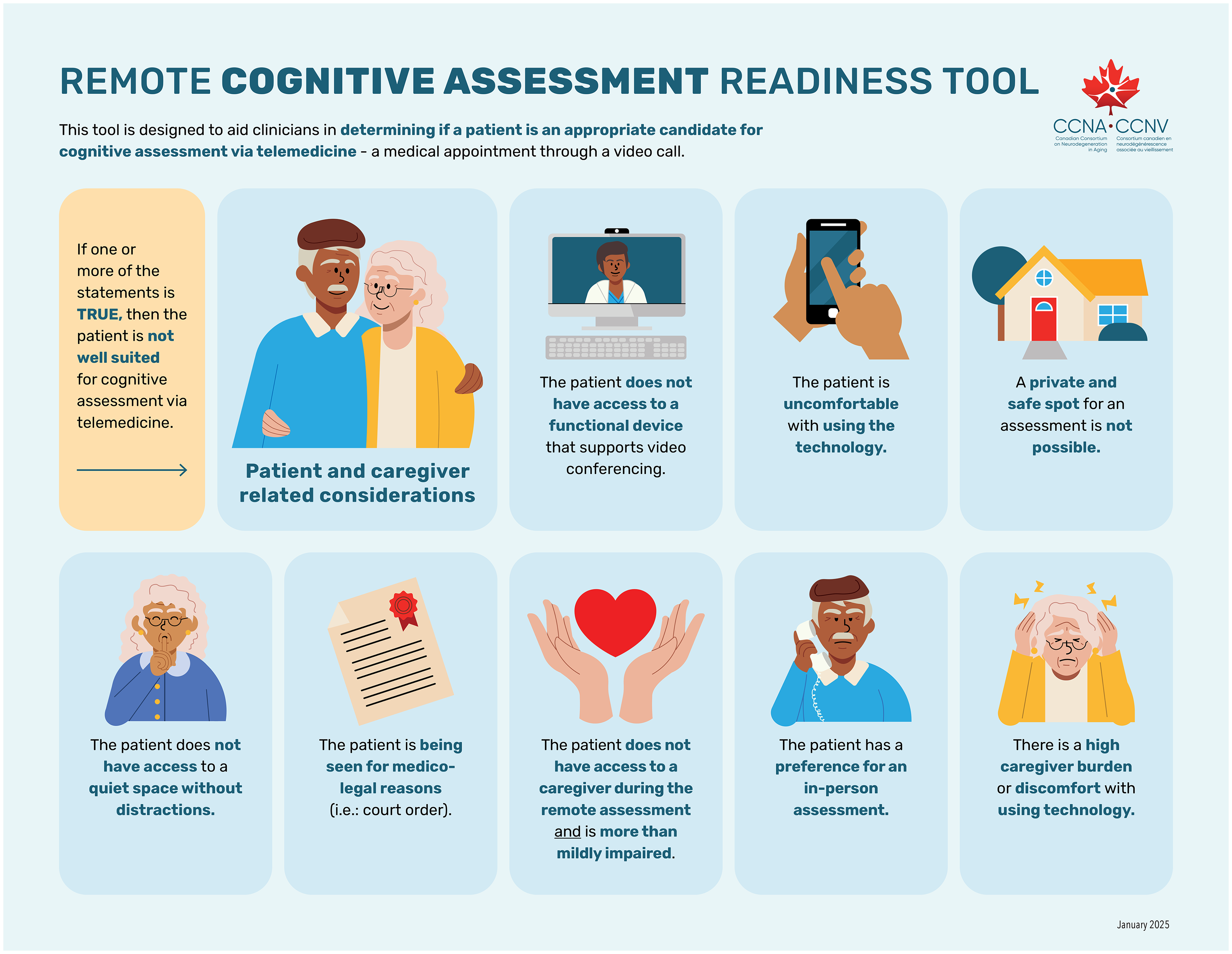

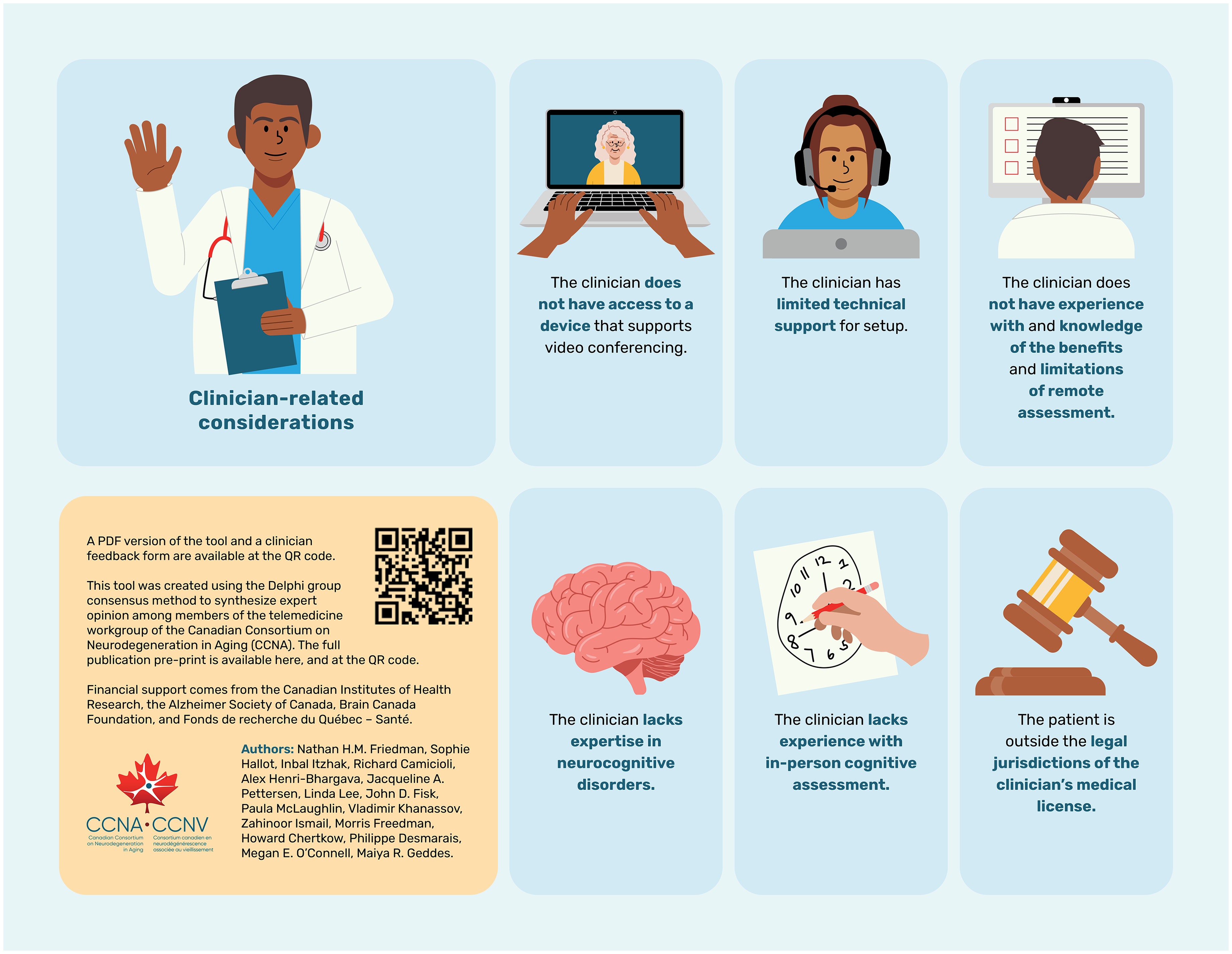

In collaboration with the CCNA Senior Knowledge Mobilization Specialist, the final set of red flags was translated into an infographic as a clinical decision-making guidance tool. This tool was designed to guide clinicians in determining if a patient is an appropriate candidate for cognitive diagnostic assessment for dementia via telemedicine (Figure 2). All flags were considered equally important, and if one of the final red flags is identified before a clinical encounter, then the patient is not well suited for remote cognitive assessment, and switching to in-person assessment is recommended.

Figure 2. A novel clinical decision-making guidance infographic to assess patient suitability to undergo remote cognitive diagnostic assessment. This infographic depicts the 14 final red flags that reached Delphi consensus criteria. If any of the flags is identified at the outset of the clinical encounter, a shift to an in-person assessment is warranted. The red flags about the patient, caregiver or clinician are considered equally important. This infographic was translated into French (Supplemental Figure 2).
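The decision rule embodied in the infographic (any single red flag warrants a shift to in-person assessment, with all flags weighted equally) can be expressed as a short sketch. This Python example is illustrative only: the function name is hypothetical, and it assumes the 14 consensus flags are identified by the numbers 1 to 14 as in Table 1. It is not a substitute for the infographic or for clinical judgment.

```python
# Assumption: the 14 consensus flags are numbered 1-14, as in Table 1.
CONSENSUS_FLAG_IDS = frozenset(range(1, 15))


def recommend_modality(identified_flag_ids):
    """Return 'in-person' if any consensus red flag was identified
    before the encounter, otherwise 'remote'.

    All flags are treated as equally important, so a single flag
    is enough to recommend switching to in-person assessment.
    """
    identified = set(identified_flag_ids)
    unknown = identified - CONSENSUS_FLAG_IDS
    if unknown:
        raise ValueError(f"Not a consensus flag: {sorted(unknown)}")
    return "in-person" if identified else "remote"


print(recommend_modality([]))       # no flags identified
print(recommend_modality([11, 12])) # two flags identified
```

The rule is deliberately simple: it does not rank or weight flags, which matches the statement that all flags were considered equally important.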

Results

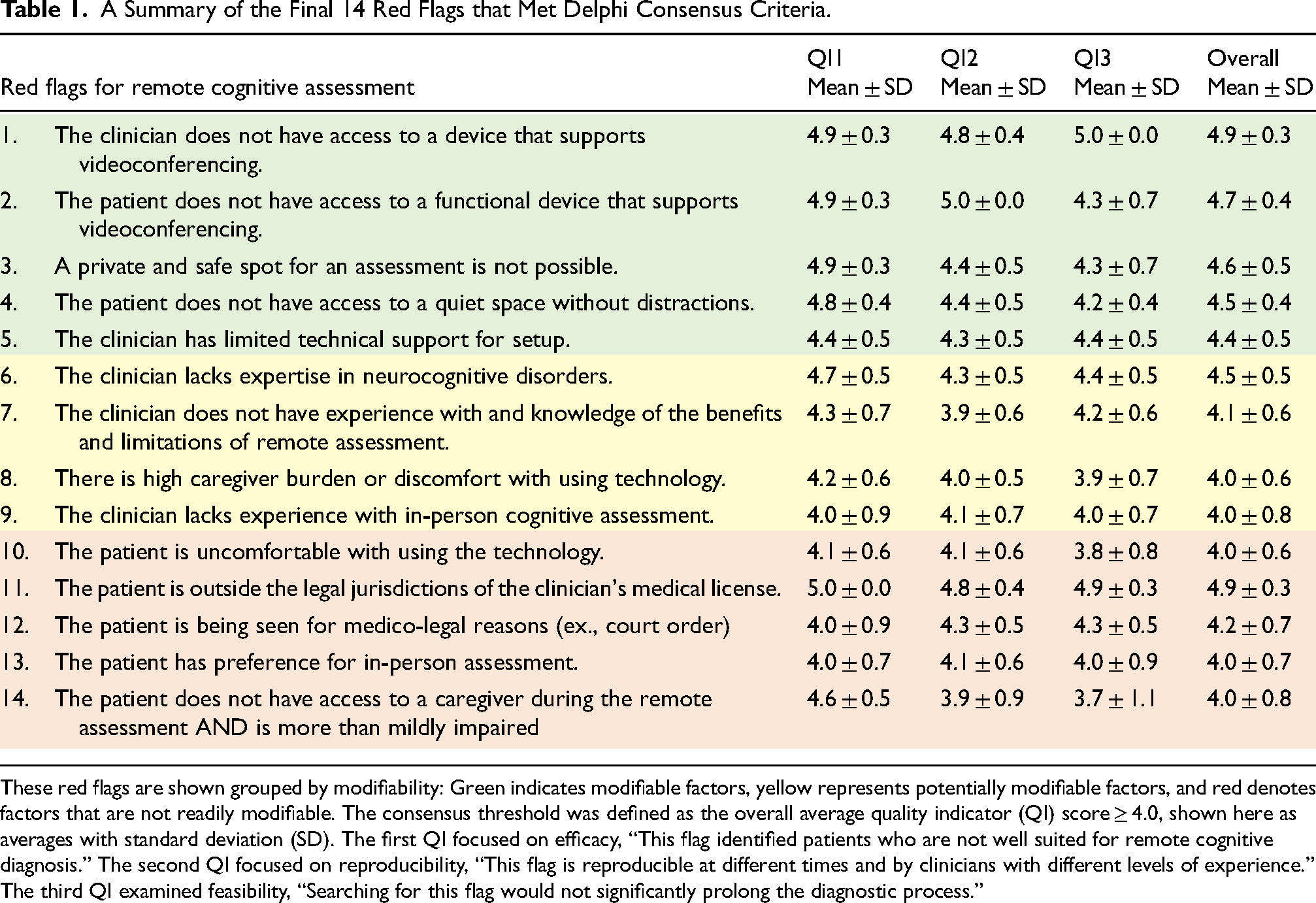

In the first round of the Delphi process, there were 12 respondents, who possessed an average of 14.6 ± 11.1 years (mean ± standard deviation) of clinical experience within behavioral neurology, neuropsychiatry, neuropsychology, social work, geriatrics, and family medicine. This group of respondents included three additional experts from the wider CCNA membership with expertise in the assessment and care of older adults with dementia (a neuropsychiatrist, behavioral neurologist and social worker). This group generated a total of 151 flags. Only 3 were considered invalid and did not meet the definition of ‘red flag’. Of the 148 remaining red flags, 81 were duplicate flags resulting in 67 unique candidate red flags. In the second round, 8 respondents from the CCNA workgroup scored each candidate red flag on the three QIs. In the third round, 9 respondents from the CCNA workgroup re-scored each flag while considering the previous round's scores. Given that information about the clinical specialty and years of clinical experience of respondents was gathered in only the first round, systematic comparison of respondent characteristics across the three rounds was not performed. The overall and individual QI mean scores and standard deviations for each potential red flag after the third round were calculated and are reported in Supplemental Table 1. Applying the consensus criterion after the third and final round yielded 14 red flags that scored at or over the mean threshold of 4.0 overall. This final set of red flags that reached consensus were grouped based on potential for modifiability and are reported in Table 1. An infographic of the 14 red flags that reached consensus was developed to provide clinical decision-making guidance about a patient's suitability for remote cognitive diagnostic assessment (Figure 2) and translated into French (Supplemental Figure 2). If any of the 14 red flags in the clinical decision-making guidance tool are identified, a decision to shift to an in-person clinical assessment is warranted.
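The flow of candidate flags through the three rounds can be checked with simple arithmetic. The short Python tally below uses only the counts reported above; the variable names are ours, and the final proportion is a derived figure rather than a result reported by the study.

```python
# Counts reported in the Results (variable names are illustrative).
generated = 151        # flags proposed in round one
invalid = 3            # did not meet the definition of 'red flag'
duplicates = 81        # duplicate flags combined during screening
final_consensus = 14   # flags at or above the 4.0 threshold after round three

valid = generated - invalid
unique_candidates = valid - duplicates

print(valid)              # 148
print(unique_candidates)  # 67
# Derived proportion of unique candidates that reached consensus.
print(f"{final_consensus / unique_candidates:.1%}")  # 20.9%
```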

Table 1. A Summary of the Final 14 Red Flags that Met Delphi Consensus Criteria.

These red flags are shown grouped by modifiability: Green indicates modifiable factors, yellow represents potentially modifiable factors, and red denotes factors that are not readily modifiable. The consensus threshold was defined as the overall average quality indicator (QI) score ≥ 4.0, shown here as averages with standard deviation (SD). The first QI focused on efficacy, “This flag identified patients who are not well suited for remote cognitive diagnosis.” The second QI focused on reproducibility, “This flag is reproducible at different times and by clinicians with different levels of experience.” The third QI examined feasibility, “Searching for this flag would not significantly prolong the diagnostic process.”

Discussion

In this study, the Delphi method was used to establish consensus among multidisciplinary experts regarding patient suitability for remote dementia diagnostic assessment in cognitively impaired adults. This approach yielded fourteen red flags for remote assessment that reached consensus criteria. These red flags were features of the patient, caregiver, clinician or the context of the clinical encounter that suggest a shift to an in-person assessment is warranted. We then translated these findings to design a novel clinical decision-making guidance tool as an infographic for clinicians considering the suitability of a given patient for remote cognitive diagnostic assessment (Figure 2; https://ccna-ccnv.ca/remote-cognitive-assessment/).

The Delphi method

The Delphi method is a versatile approach that has previously been applied across a spectrum of research domains in healthcare.4,5 Most examples of the method retain the core elements of anonymity, iteration, feedback, and consensus, with the validity of the results depending on the implementation.4,5 In this study, the overall design, number of rounds and consensus criteria were defined prior to beginning the Delphi iterative rounds, similar to prior Delphi studies in healthcare. 5 Setting a predetermined consensus threshold is recommended as it increases transparency within the workgroup, ensures that experts are aware of the proximity of a flag to achieving consensus, and also reduces the subjectivity of study operators. 5 Our aim was also to strike a balance between allowing participants to respond to the workgroup's average scores and limiting response fatigue. The iterations in this study were carried out using online software, which allowed acquisition of de-identified data and upheld the principle of anonymity. These steps helped minimize the risk of eminence bias, defined as the unequal influence on the group consensus by individual members. The online forms also allowed data collection in the context of a national workgroup where individuals were geographically dispersed, and working asynchronously across different time zones. Asynchronous rounds further minimized the burden on respondents and maximized their response rate.

Red flags for remote diagnostic dementia assessment

The majority of the red flags that reached consensus highlighted factors about the patient and caregiver that render a remote diagnostic assessment inappropriate. Many of the identified red flags were readily modifiable (depicted in green in Table 1) or partially modifiable (depicted in yellow in Table 1). Red flags involving modifiable logistic and technical considerations were identified. These modifiable red flags present opportunities to mitigate barriers and improve access to remote cognitive diagnostic assessment by catalyzing the development of innovative public health initiatives and solutions. For example, accessible internet and technological support could reduce caregiver and patient burden. Community-based and rural access to high-speed internet and health resource centers offering internet-enabled, quiet, and secure spaces are promising approaches to achieve these goals.18–20 Initiatives to enhance access to remote cognitive and behavioral assessment have the potential to keep patients at home longer and to reduce the utilization of emergency and urgent care services. 21 There are also existing initiatives that aim to increase technology literacy among older persons in the community (e.g. Older Adults Technology Service through the American Association of Retired Persons and Connected Canadians) and in residential care settings. 22 Red flags also focused on patient and caregiver comfort and familiarity with technology, and on access to mobile devices and high-speed internet. This is consistent with prior literature that showed those without high-speed internet had reduced access to telemedicine during the COVID-19 pandemic. 23 Relatedly, a cross-sectional study cited limited access and comfort with technology as key barriers to remote cognitive assessment. 24 Additional mitigating strategies for these modifiable barriers include the development of more intuitive technology interfaces for patients, and accessible community-based telemedicine hubs to support remote clinical assessments. Prior research has shown that remote medical hubs that integrate technology infrastructure represent a promising model to enhance community access to remote cognitive assessment.16,18 There are also programs that aim to increase access to high-speed internet, such as Internet for All in the United States, and the Universal Broadband Fund in Canada.25,26 This again highlights the modifiable nature of multiple red flags, whereby changes in healthcare policy and thus community access could potentially reduce the digital divide.25,26 Accessible community-based telemedicine-enabled clinics could potentially address some of the red flags identified in the current study, including access to a private, safe location, and a location that is quiet and free of distractions. Privacy during a medical encounter is critical for patient autonomy, where a patient is free to decide who is present in a clinical encounter. Additionally, the presence of distractions impedes cognitive performance, thereby reducing the validity of remote cognitive testing. 27

A systematic review of remote cognitive assessment identified the presence of a caregiver as a critical facilitating factor. 28 Multiple red flags identified by the workgroup underscore the key role played by caregivers particularly if a patient has more than mildly impaired cognition. The balance between enhanced specialist access and caregiver burden when using technology was also highlighted by the final set of red flags, a finding that is consistent with prior research: One prior study comparing in-person and remote cognitive assessment in a memory clinic found that 68% of patients required caregiver assistance to participate in a videoconferenced clinical encounter. 29 Another study showed that patients with reduced cognitive function required greater caregiver involvement to complete remotely administered computerized and standard cognitive testing. 30

Current legal guidelines allow for remote cognitive assessment, but practicing outside of the jurisdiction of a clinician's license has implications for patient privacy, quality of care, clinician liability and remuneration. 31 Thus, legal considerations are also essential to determine the appropriateness of a remote diagnostic encounter (Flag 11, Table 1). Furthermore, the group consensus identified that a court-ordered assessment is sufficient reason to switch to an in-person assessment to ensure more information about and control of factors that influence testing validity (Flag 12, Table 1).

The group consensus identified several clinician-related red flags (Flags 1, 5, 6, 7, 9, Table 1). A prerequisite for remote cognitive assessment is the clinician's access to secure, encrypted software and technology (Flags 1, 5, Table 1). 32 However, this can be costly and often requires administrative support, both of which pose potential barriers. 33 The group consensus also highlighted the importance of clinician expertise (Flags 6, 7, 9, Table 1): Competency in performing remote cognitive assessment includes knowledge of the benefits and limitations of telemedicine and expertise in neurocognitive disorders in an in-person context.8,34 A systematic review on barriers to dementia care among primary care physicians identified concerns about knowledge of dementia, lack of training in dementia care, and discomfort with conducting cognitive assessments. 35 While training clinicians in dementia diagnostic assessment is anchored by in-person learning, remote assessment is also a skill that requires exposure and practice. There is emerging research showing that integrating telemedicine training in residency programs enhances clinician comfort and competency, and also improves patient access to care.36,37

One flag that came close to, but did not reach, consensus was the presence of sensory impairment (Flag 17, Supplemental Table 1). While this flag scored highly in effectively detecting patients who are not well suited for remote cognitive diagnosis (QI1), it scored relatively low on the feasibility of screening for this potential flag (QI3). The impact of sensory impairment on the validity of remote dementia diagnostic assessment is well recognized, and this is especially important given the high prevalence of hearing and vision impairments among older adults.8,38 Previous studies have shown that participants with vision or hearing difficulty have lower performance on remote cognitive assessments, even when adjusting for their impairment.39–42 Further, sensory impairment can invalidate the results of remote cognitive assessment. 38 Thus, screening for hearing and vision impairments in advance of a remote cognitive diagnostic encounter is strongly encouraged. 38 The World Health Organization has developed tools and strategies for remote hearing and vision screening that are low cost, openly accessible and validated (e.g. hearWHO, WHOeyes).43–45 However, the test-retest reliability of existing remote hearing assessment tools is not yet fully optimized. 46 Finally, there are also validated screening questionnaires for hearing and vision that can be readily administered remotely.47,48 We recommend combining both self-reported and behavioral measures to screen for sensory impairment.49,50

Limitations and future directions

While this research provides important clinical decision-making guidance on virtual dementia diagnostic assessment readiness, it is essential to be aware of some of the limitations of this work. The final set of 14 red flags is based on expert consensus and this guidance has not yet been validated in clinical practice. An important future direction of this work is to integrate the input of individuals with lived experience of dementia, including patients and caregivers, about the barriers and potential mitigating strategies for remote dementia assessment. Opportunities to do so include future partnership with the CCNA Engagement of People with Lived Experience of Dementia Program. 51 In addition, the clinical decision-making guidance infographic and tool must be interpreted within a given clinical context. This tool does not replace clinical judgment, and the clinician must make the final decision whether telemedicine is appropriate.

Conclusion

The Delphi method was used to identify fourteen red flags about the patient, caregiver, clinician, or context that render a remote cognitive diagnostic assessment for dementia invalid. These findings were translated into a novel clinical decision-making infographic (Figure 2). This tool provides guidance about a patient's suitability to undergo remote cognitive diagnostic assessment at the outset of the clinical encounter and optimizes knowledge mobilization and uptake in clinical practice. This user-friendly and openly accessible tool aims to tailor appropriate use of telemedicine in dementia assessment and help clinicians avoid potential pitfalls. Our hope is that this work will also catalyze public health initiatives to mitigate modifiable red flags and barriers to remote dementia diagnosis and care.

Supplemental Material

sj-docx-1-alz-10.1177_13872877251338186 - Supplemental material for Red flags for remote cognitive diagnostic assessment: A Delphi expert consensus study by the Canadian Consortium on Neurodegeneration in Aging

Supplemental material, sj-docx-1-alz-10.1177_13872877251338186 for Red flags for remote cognitive diagnostic assessment: A Delphi expert consensus study by the Canadian Consortium on Neurodegeneration in Aging by Nathan HM Friedman, Sophie Hallot, Inbal Itzhak, Richard Camicioli, Alex Henri-Bhargava, Jacqueline A Pettersen, Linda Lee, John D Fisk, Paula McLaughlin, Vladimir Khanassov, Zahinoor Ismail, Morris Freedman, Howard Chertkow, Philippe Desmarais, Megan E O’Connell and Maiya R Geddes in Journal of Alzheimer's Disease

Footnotes

Acknowledgments

We are grateful for the insightful comments and resources provided by Natalie Phillips, Walter Wittich and Kathy Pichora-Fuller. We would also like to thank Shoshana Green and Annie Le Bire for their excellent administrative support, and the Operations Team of the Canadian Consortium on Neurodegeneration in Aging.

Ethical considerations

A description of the project was shared with the McGill University Research Ethics officer in advance of beginning the study, and it was concluded that the project fell under Article 2.5 of the Tri-Council policy statement, and therefore would not require ethics review.

Consent to participate

This study used the Delphi process to synthesize expert opinion. As per Article 2.5 of the Tri-Council policy statement, informed consent was therefore not obtained.

Author contributions

Nathan HM Friedman: Formal analysis; Investigation.

Sophie Hallot: Writing - original draft.

Inbal Itzhak: Methodology; Visualization.

Richard Camicioli: Investigation; Writing - original draft.

Alex Henri-Bhargava: Investigation; Writing - original draft.

Jacqueline A Pettersen: Investigation; Writing - original draft.

Linda Lee: Formal analysis; Methodology.

John D Fisk: Investigation; Methodology; Writing - original draft.

Paula McLaughlin: Investigation.

Vladimir Khanassov: Methodology; Writing - original draft.

Zahinoor Ismail: Investigation; Writing - original draft.

Morris Freedman: Investigation; Writing - original draft.

Howard Chertkow: Investigation; Project administration; Writing - original draft.

Philippe Desmarais: Investigation; Methodology; Writing - original draft.

Megan E O’Connell: Conceptualization; Investigation; Methodology; Writing - original draft.

Maiya R Geddes: Conceptualization; Formal analysis; Funding acquisition; Investigation; Methodology; Resources; Supervision; Writing - original draft.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was undertaken thanks in part to funding from a Natural Sciences and Engineering Research Council of Canada (NSERC) Discovery Grant (DGECR-2022-00299), an NSERC Early Career Researcher Supplement (RGPIN-2022-04496), a Fonds de Recherche Santé Québec (FRSQ) Salary Award, the Canada Brain Research Fund (CBRF), an innovative arrangement between the Government of Canada (through Health Canada) and Brain Canada Foundation, an Alzheimer Society Research Program (ASRP) New Investigator Grant, the Canadian Institutes of Health Research, the Canada First Research Excellence Fund, awarded through the Healthy Brains, Healthy Lives initiative at McGill University, the DeSèves Foundation, and the National Institutes of Health (P30 AG048785) to MRG. This research was undertaken thanks also to funding from a Research Trainee Award from the Canadian Consortium on Neurodegeneration in Aging (CCNA) to NHMF.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Zahinoor Ismail is an Editorial Board Member of this journal but was not involved in the peer-review process of this article nor had access to any information regarding its peer-review. The remaining authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Questions and requests for descriptive data can be sent to the corresponding author.

Supplemental material

Supplemental material for this article is available online.
