Abstract

This contribution addresses the relation between moral values, moral choices, and moral behaviors. We build on prior research that has revealed the “paradox of morality”: on the one hand, people are highly motivated to do what is moral and to appear moral in the eyes of others. On the other hand, this makes them reluctant to consider moral shortcomings of themselves and self-relevant others, which are considered socially costly and difficult to repair. Here, we highlight the implications this has for those who aim to improve the moral values, choices, or behaviors of others. We posit that the paradox of morality easily introduces a vicious cycle. This happens when people disagree with the moral values of others, criticize their moral choices, or remind them of their inadequate moral behaviors. We review a program of research documenting the counterproductive cognitive, emotional, and behavioral effects that are raised in this way. We then examine how other people’s moral values, choices, and behaviors may be addressed in ways that circumvent such counterproductive responses, resulting in a virtuous cycle. We present initial evidence of manipulations and interventions that make people more open to the possibility of reconsidering their moral values and help them improve their moral choices and moral behaviors. The model we present, together with the empirical validation of implicit mechanisms that distinguish vicious from virtuous cycles, has practical implications and gives rise to new theory and predictions to be tested in future research.

Introduction

We live in turbulent times that challenge our moral values, moral choices, and moral behaviors. Until relatively recently, political convictions, religious affiliations, or ties to local communities and family members made it easy to decide what a good and moral citizen should do and how to behave. Nowadays this is much less clear. Media reports of violent conflicts, famine, and mass migration raise questions about the meaning of key moral values such as fairness and care. Changing relations in society call into question common moral choices, for instance to prioritize business profits over social responsibility, or to protect organizational reputations against evidence of sexual harassment instead of securing socially safe working spaces. Finally, we have witnessed rapid changes in the moral meaning of lifestyle habits and everyday behaviors, for instance relating to quarantine and social distancing due to the COVID-19 pandemic, or as we become more aware of how food and transportation choices relate to global warming.

All these issues and how to respond to them go beyond individual responsibilities and individual decision-making. Instead, addressing these societal changes and global challenges is not possible without agreeing about key moral values, making collective moral choices, and coordinating everyday behaviors in groups, organizations, and communities. As a result, the changes outlined above have intensified social concerns about these issues. That is, people not only reconsider the morality of their own actions but also express passionate opinions about the actions of others and how these align with moral goals. Paradoxically, the intense debate that results as people disagree with other people’s moral values, criticize their moral choices, or express frustration about behaviors of others they consider morally inadequate does not seem to move us forward. Instead, it tends to result in polarization, hostility, and estrangement, even within formerly tight families, communities, and organizations. Importantly, recent research examining the impact of moral appeals in public messaging—for instance to influence behaviors that prevent the spread of the coronavirus disease—also reveals mixed results (Bilancini et al., 2020; Bos et al., 2020; Everett et al., 2020; Harper et al., 2021).

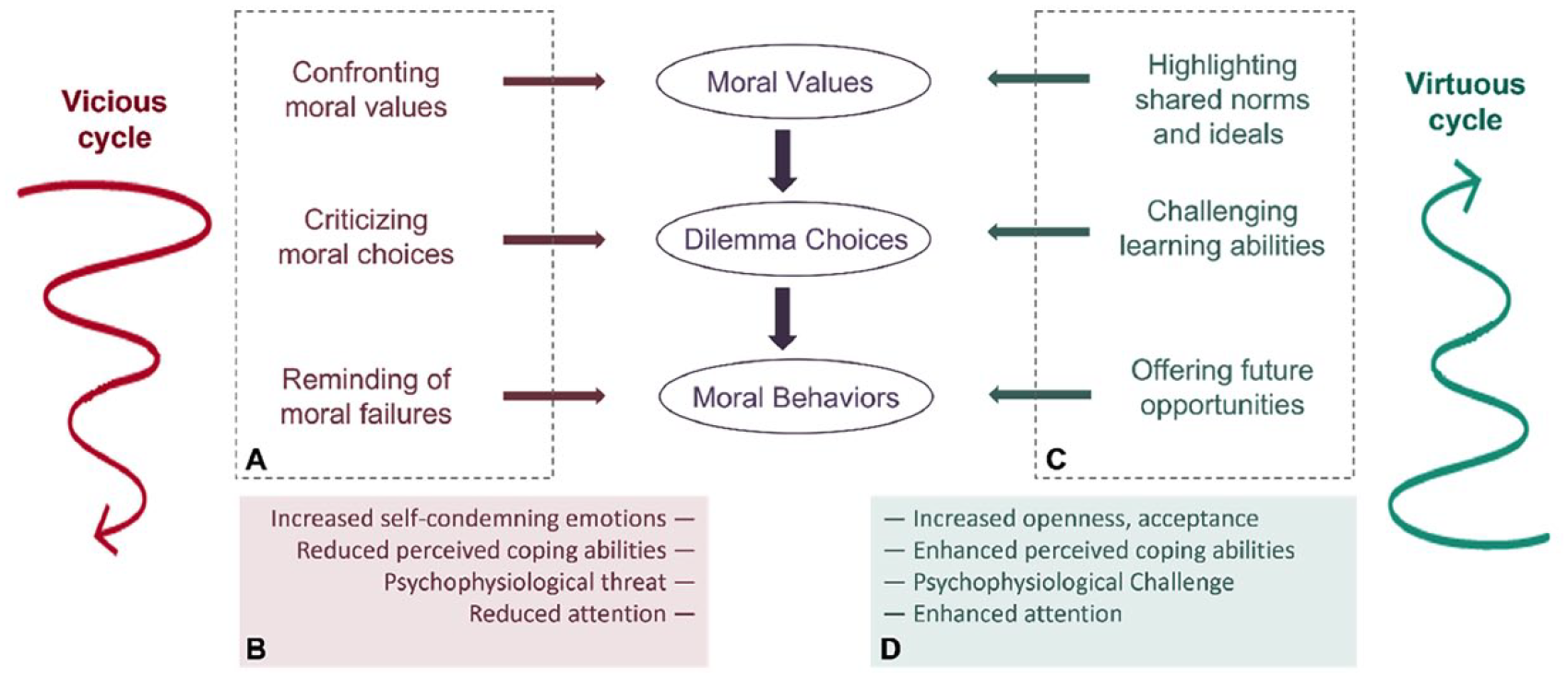

In our contribution we address this state of affairs. We examine why current moral debates appear so unproductive, and what alternative approaches may be more effective in behavioral regulation. We then use these insights to specify what comprises a successful intervention that allows people to more positively respond to moral appeals. Our analysis is based on emerging evidence on the central role of moral values, moral choices, and moral behaviors in people’s self-views and the way they consider others. We propose a theoretical model that highlights how this tendency informs different strategies that are typically used to address other people’s morality, emphasizing the added value of implicit indicators to assess whether and when such strategies are employed. Below, we first explain how people’s motivation to be (perceived as) moral affects their own behavioral intentions. Then we consider the common approaches people often use to question the moral choices and behavior of others, and summarize empirical evidence for the implicit mechanisms that reveal why such approaches tend to be counterproductive (see also Figure 1A and 1B; the vicious cycle of the regulation of moral behavior). Then, we propose a number of alternative approaches, and highlight evidence suggesting that these might elicit more productive cognitions, emotions, and behaviors (see also Figure 1C and 1D; the virtuous cycle of the regulation of moral behavior). We conclude this contribution by offering an example of an experimental intervention relevant to current moral debates in society, to demonstrate how a vicious cycle can be turned into a virtuous one.

Figure 1. Vicious and virtuous cycles in the regulation of other people’s moral behaviors.

The Motivation To Be Moral

Theories addressing moral values and moral behaviors tend to emphasize the importance of moral rules and moral guidelines as a way for individuals to successfully live together in groups and societies (for an overview, see Ellemers, 2018). Indeed, displays of altruism, helping, fairness, and care are indispensable for long term cooperation and community life. This is true even in times of increasing individualization, where the moral guidance of political and religious leaders is questioned. Accordingly, people often refer to moral values as key drivers to explain why they comply with social rules, help others in their community or organization, keep their promises, or make costly contributions to public goods.

The central role of moral concerns that drive people’s behaviors also emerges from studies examining the way people form impressions of individuals and groups (Brambilla et al., 2021). In different samples and paradigms, it is consistently found that people seek information about others’ moral traits and intentions before they turn to other relevant information, for instance, pertaining to their competence or general abilities (Brambilla & Leach, 2014; Brambilla et al., 2011, 2012). Furthermore, in the presence of multiple types of information, moral information dominates the overall impression people form of individual and group targets and predicts subsequent behavioral tendencies such as to help or to avoid these targets (Brambilla et al., 2013; Pagliaro et al., 2013).

People express great concern about their moral image in the eyes of others. Moral displays and moral failures are generally seen to express who people “really are” (Goodwin, 2015; Goodwin et al., 2014). As a result, people tend to fear moral shortcomings will be seen as predictive for their future behaviors and are more difficult to repair or compensate than other types of flaws (Pagliaro et al., 2016). Indeed, highlighting moral standards raises concerns about different types of social sanctions, such as loss of respect, hostile responses or social exclusion (Pagliaro et al., 2011). Accordingly, people generally indicate they are highly motivated to do what is moral and to appear moral in the eyes of others.

Consistent with this motivation, research participants have been found to show increased effort and enhanced performance when they think this is relevant to their morality. This was demonstrated, for instance, in an experiment where individuals performed a challenging cognitive task (a Stroop task) that was said to assess either their morality or their competence (Ståhl & Ellemers, 2016, Study 1). In this study, participants showed improved task performance when they thought they were being tested for their morality rather than their competence. However, a second study monitored the behavior of research participants after they had completed this first task. This time, their Stroop performance indicated decreased executive control after completion of a moral task. In turn, this mediated participants’ impaired performance on a third task, which addressed perspective taking, an ability arguably relevant for subsequent moral choices and moral behaviors (Ståhl & Ellemers, 2016, Study 2). Together, the results of both studies illustrate that people are motivated to demonstrate their moral concerns, but may not be able to keep up their desired moral behaviors over time and across different situations. Indeed, even for those with the best of intentions, exposure to new situations and moral dilemmas may invite moral failures and moral lapses, if only because of increasing fatigue or lack of attention (Kouchaki & Smith, 2014).

Thus, on the one hand, there is evidence showing that the motivation to be moral impacts on behavioral efforts. This suggests that the best way to impact on other people’s moral behaviors is to target their motives and intentions. On the other hand, there is ample anecdotal and research evidence revealing that moral intentions do not always translate into moral behaviors (for an overview, see Ellemers, 2017; see also Sun & Goodwin, 2020). This raises the question of whether addressing explicit moral goals is always the best way to impact on other people’s moral behaviors. Below, we address this question in more detail. We begin our analysis by exploring whether and how people actually reflect upon and acknowledge the patchy relation between moral intentions and moral behaviors. We then build on this analysis to consider how common conceptualizations of the relation between deliberate decision making and moral displays impact on the ways in which people try to influence the moral behaviors of others. After this, we continue with addressing the approaches and (underlying) processes associated with vicious and virtuous cycles in the regulation of moral behavior.

Deliberate Decisions and Post-hoc Justifications

Theory and research on moral development and moral judgment have traditionally emphasized the role of moral values as drivers of moral choices that guide moral behaviors. The assumption that individual and group behaviors originate from such underlying principles, and that moral reasoning results in moral choices is common in everyday conversations and lies at the root of many theoretical and empirical approaches (for an overview, see Ellemers et al., 2019). Nevertheless, there is emerging evidence that this is an inaccurate description of the psychological mechanisms that drive such behaviors. For instance, behaviors that have moral implications are also driven by intuitions, or unthinking compliance with group norms (Greene, 2013; Haidt, 2001). Even if explicit narratives emphasize coherence of people’s moral values with the choices they make in moral dilemmas and the behaviors they display, these are also constructed after the fact (e.g., Anand et al., 2004; Bandura, 1999). Thus, post-hoc explanations do not necessarily capture the actual sequence of events, nor can stated intentions be seen as indicators of deliberate decision making. Indeed, there is ample research evidence revealing that stated moral intentions do not necessarily predict actual behaviors. For instance, studies on moral licensing reveal that people may be tempted to relax their behavioral standards once they have convincingly displayed their good intentions (e.g., Merritt et al., 2010; Mullen & Monin, 2016). Even the awareness that another member of one’s group has made a choice that is morally right, may be sufficient to prevent people from acting in line with the moral values and intentions they explicitly endorse (e.g., Kouchaki, 2011).

At the same time, results from different studies suggest that people are often disinclined to dwell upon the discrepancies between their moral values and deliberate intentions on the one hand and their actual choices and implicit behaviors on the other. In fact, when “moral rebels” or “moral exemplars” confront people with such inconsistencies, these people typically feel resentful—putting the others down as “holier than thou” or “do-gooders” (e.g., Cramwinckel et al., 2013, 2015; Monin et al., 2008). Additionally, researchers have established that people are generally quite reluctant to consider their own shortcomings in the moral domain, or to reflect on how they can improve their moral behavior (Sun & Goodwin, 2020). Awareness of the moral lapses displayed by the self or by self-relevant others tends to raise self-condemning emotions, such as (collective) shame and guilt that stand in the way of moral improvement (for overviews, see: Branscombe & Doosje, 2004; Giner-Sorolla, 2012). The tendency to hide or deny shameful behavior also reduces the value of explicit narratives and self-reported motives as ways to capture the actual reasons for the moral choices people have made and are about to make. Instead, these narratives tend to be constructed after the fact, and are also driven by self-consistency and self-justification motives (Bandura, 1999; Mullen & Skitka, 2006). Yet, the majority of studies to date rely on self-reported intentions or post-hoc narratives to examine these issues (see Ellemers et al., 2019). Here, we build on prior insights by considering psychophysiological and neurocognitive indicators that reveal the operation of underlying, more implicit, processes. That is, we review studies where such involuntary responses are captured in real time while people display (im)moral behavioral tendencies or while others prompt them to reconsider their (im)moral choices. 
Specifically, we address work assessing these underlying mechanisms by focusing on studies that examine cardiovascular measures indicating the emergence of motivational threat vs. challenge responses, and on specific brain potentials associated with motivated attention and response-monitoring (see also Ellemers & van Nunspeet, 2020).

In sum, we note that people generally consider moral values and moral principles as plausible guidelines for their own choices and social behaviors—even if these are only considered after the fact. This is also reflected in the assumption shared by many theoretical and empirical efforts, namely that moral choices and behaviors are the result of deliberate a priori decision-making. As indicated above, we argue that this narrative may be inaccurate or even misleading, and merits closer scrutiny of underlying and implicit mechanisms that can be captured in real time. Based on this reasoning, we point to the downside of standard approaches aiming to regulate other people’s moral behaviors. We argue that these generally rely on the notion that the best way to influence people’s moral behaviors is by explicitly challenging the moral values they endorse, criticizing the moral choices they make, and pointing out moral failures displayed in their behaviors. We begin our review by considering these common approaches one by one—presenting research evidence to explain why these are likely to have adverse effects. Then we consider research on implicit mechanisms that point to alternative ways of influencing other people’s moral behaviors—that are likely to be more productive.

Strategies to Address Other People’s Moral Behaviors

Above, we have argued that common theoretical and empirical approaches tempt people to consider moral behaviors as the result of moral reasoning and deliberate decision-making. At the same time, we noted research evidence revealing that this does not do justice to the often intuitive and unthinking moral choices people make. Thus, there is a discrepancy between common narratives of how moral behaviors come about and actual psychological processes documented in research. Yet, people tend to rely on these misguided conceptions about the origins of moral behavior when they attempt to influence and change behaviors of others that are considered morally inappropriate.

We advance current insights into this problem by considering three types of strategies that people commonly use to influence the moral behavior of others. We first examine the impact of confronting other people’s values, as relevant drivers of their moral behaviors. Then we consider the effects of criticizing the moral choices people make. Finally, we examine what happens when reminding others of their past moral failures (Figure 1, part A). These three types of strategies also represent attempts to intervene in different phases of the supposedly deliberate sequence of events, as the endorsement of specific values is seen as a driving force that precedes behavioral preferences, moral choices supposedly result from these values, while behavioral displays are seen as the end result of this process. Thus, confronting people’s values typically takes place ahead of time, criticizing people’s choices is relevant while they are deciding what to do, and reminding them of behavioral flaws only happens after the fact.

For each of these strategies, we present studies to reveal both the explicit as well as the more implicit and real time psychological processes that are activated in this way. Here, we focus on research that considers the underlying neurocognitive, psychophysiological, and behavioral measures to complement existing insights based on self-stated motives, reasons, and intentions (Figure 1, part B). Together, these data illustrate the counterproductive and self-defeating responses that are elicited by common attempts to influence other people’s moral behaviors. After completing this section, we proceed to the next section where we explore how emerging insight in these (underlying) psychological mechanisms can inform more productive attempts at influencing other people’s moral behaviors (see Figure 1, parts C and D).

Unraveling the Vicious Cycle

Confronting moral values

We first consider the tendency to confront the appropriateness of other people’s moral values, as a way to change their moral behavior. This was examined in a program of research that employed different methodologies and measures in variations of the same basic paradigm. Across different studies research participants were assigned to come to an agreement about a joint course of action, together with an alleged confederate who disagreed with their preference (e.g., to travel by airplane to a holiday destination). In the experimental condition, they were led to believe that the disagreement originated from the confederate endorsing divergent moral values (due to environmental concerns; the value conflict condition). In the control condition participants were told that the disagreement pertained to different preferences in the allocation of resources (relating to financial concerns; the resource conflict condition). This research paradigm was used to examine a range of issues that might cause moral disagreement (Kouzakova et al., 2012). Results showed that, across the board, having the confederate confront their values caused participants to feel that the essence of their identity was at stake. This caused them to have less sympathy for the confederate’s value position, and to consider it less likely that an agreement might be achieved—compared to when the same disagreement did not implicate a confrontation of their moral values. This is a first indication that simply confronting people’s moral values may be a counterproductive strategy.

A follow-up study in this program examined cardiovascular indicators of motivational states of threat versus challenge that were elicited in this way (Kouzakova et al., 2014). According to the biopsychosocial model, motivated performance situations are appraised in terms of their demands and available resources to cope with these demands (Blascovich & Tomaka, 1996). If situational demands (e.g., task difficulty) appear to outweigh individual resources (e.g., skills, support), this results in a maladaptive physiological stress response associated with a motivational state of threat. If resources match or outweigh situational demands this results in an adaptive physiological stress response associated with a motivational state of challenge. Each of these states is characterized by a specific pattern of heart rate and blood pressure changes which can be measured in real time (e.g., while research participants are voicing their arguments to come to a joint agreement; see also Blascovich & Mendes, 2010; Blascovich et al., 2001; Mendes & Park, 2014; Wormwood et al., 2019). Importantly, these involuntary cardiovascular responses to situational demands are associated with downstream behaviors as well as long-term physical and mental well-being (Blascovich, 2013; Hase et al., 2019).

Findings of the study revealed that both conflict conditions led to equal ratings of the intensity of the disagreement and the likeability of the confederate. Nevertheless, in the control condition, where the disagreement seemed to stem from different preferences for the allocation of resources, participants’ cardiovascular responses indicated an adaptive state of challenge. However, when the confederate had confronted their moral values, participants’ cardiovascular responses indicated a maladaptive state of threat. In this experimental condition, participants also explicitly indicated that they engaged in the discussion with a focus on avoiding having to give in on their value position (Kouzakova et al., 2014).

Other programs of research suggest that similar mechanisms can antagonize groups that endorse different moral values. Confronting the moral values of the other group does not prompt group members to reconsider their moral behaviors. Instead, this typically works counterproductively as it tends to raise infrahumanization of those who confront one’s moral values, distancing between the groups, and mutual displays of hostility (e.g., Pacilli et al., 2016; Skitka & Mullen, 2002).

Criticizing moral choices

The second strategy that is often employed to change the moral behavior of others is criticizing the moral choices they make. A direct way of intervening when others’ decisions are not considered moral is to provide feedback the moment such decisions are observed. Here, we highlight research showing people’s instantaneous and automatic brain responses while they receive criticism on their moral choices, to complement prior insights addressing their explicit (post-hoc) reflections on the decision-making process.

In a program of research set up to examine this, responses to receiving real-time feedback were assessed in studies in which participants were first asked to make monetary contributions to a public good (Rösler et al., 2023a) or donations to a charity (Rösler et al., 2023b). These contributions were then judged by ostensible spectators, who evaluated the contributions either positively or negatively, while referring either to participants’ competence or morality. More specifically, participants were presented with the spectators’ judgments about the competence of their choices, that is, how smart and clever vs. senseless and foolish they thought the participant was to invest these amounts of money; or in terms of the morality of their choices, that is, how well-intentioned and loyal vs. irresponsible and unreliable they thought the participant was to provide these amounts of money. Besides explicitly asking participants how they felt about receiving this criticism, the researchers also examined how the criticism was immediately and automatically processed in the brain. That is, while participants viewed the judgments they received from others, brain activity (an EEG) was recorded from which particular Event-Related brain Potentials (ERPs) were derived to investigate the cognitive processes associated with receiving such criticism. Specifically, the P300 and LPP were examined. These are potentials for which fluctuations in amplitudes have been shown to be associated with selective and sustained attention when viewing stimuli (e.g., Polich, 2007; H. Schupp et al., 2004; H. T. Schupp et al., 2006).

After receiving the criticism participants reported that they felt more emotionally affected by the negative judgments of their moral choices, than by negative judgments relating to the competence of their choices. Brain potentials (P300 and LPP) associated with sustained and motivated attention additionally revealed lower activity in the moment participants were confronted with negative judgments criticizing their morality, rather than their competence. In turn, the reduced attention towards negative moral judgments aligned with the observation that participants were less able to recall details of these types of judgments after they had completed the experiment (Rösler et al., 2023a). Taken together, these findings indicate that—compared to critique on one’s competence—people experience greater negative affective responses to moral criticism, (unconsciously) devote less attention to the negative moral judgments they receive, and are less likely to remember such negative feedback about their moral choices.

This body of evidence reveals that people sub-optimally process negative judgments others make about their moral choices. But there are more situations in which people’s attention towards negative feedback on their moral behavior may be limited. This was shown in a set of studies examining the effects of social norms on rule compliance (Van Nunspeet & Ellemers, 2021). Here, each research participant received positive as well as negative feedback for different trials of a task indicating their compliance with moral standards. This procedure made it possible to compare the extent to which they focused their attention on receiving positive feedback indicating the confirmation of their moral behavior, or on negative feedback indicating the detection of their moral flaws. Additionally, this research presented participants with social norms about the right way to behave on the work floor. In the control condition, norms emphasized the importance of compliance with a set of new and complex regulations, and the priority given to adherence to those rules in daily work tasks. However, in the experimental condition, co-workers allegedly indicated that strict rule compliance was not given priority in daily work tasks. After learning about the social norm present on the work floor, participants were asked to complete a demanding computer task in which the rules had to be applied and adhered to at a rapid pace. This resembles daily work situations in which many tasks need to be executed within a limited amount of time, making it difficult to fully comply with prescribed procedures. In the experimental condition, where the social norm condoned compliance failures, participants attached less importance to rule adherence, and were actually more likely to violate these rules when performing the task.
Additionally, in this condition, their brain activity indicated that they attended more to the positive (rewarding) feedback they received on trials in which they had correctly adhered to the rules, than to the negative (aversive) feedback they received on trials where they had violated the rules. This was evident from ERPs referred to as the “Reward Positivity” (RewP), associated with reward-processing in the brain (e.g., Becker et al., 2014; Glazer et al., 2018; see also social psychological work on the similar Feedback Related Negativity potential, e.g., Kim et al., 2012). This pattern of brain activity therefore suggests that when the situation tempts them to violate moral guidelines, people are reluctant to attend to information revealing the wrongness of their choices. Rather, they prefer to focus their attention on feedback indicating what they are doing right.

Reminding people of past moral failures

In many situations it is not possible to provide immediate feedback when people are making behavioral decisions in moral dilemmas or revealing their moral choices. Thus, a third common strategy that is often used to change others’ behavior is to remind them of what they did wrong in the past. The effects of this strategy have been examined, for instance, by asking people to first recall an autobiographical memory of a situation in which a fellow employee in their workplace negatively evaluated them on either their past incompetent behavior (for instance because of being perceived as inexperienced or unskilled) or their past immoral behavior (because of being perceived as dishonest or untrustworthy). Then participants were asked to reflect on this situation and how the other’s explicit negative evaluation had affected them. This methodology revealed that participants reported more negative attributions about the critic, as well as less motivation and fewer actual attempts to improve or change their behavior, when the negative evaluation concerned their shortcomings in the moral domain rather than the competence domain (Rösler et al., 2021).

In another program of research using a similar design, participants were asked to recall a situation in which their group negatively evaluated their past immoral behavior, and to reflect on how this situation had made them feel. Results of this research show that people who were reminded of such negative evaluations about their past immoral behavior reported heightened negative affective responses, such as an increase in the experience of guilt. Moreover, besides the increase in such self-condemning emotions, negative moral judgments reduced their perceived coping abilities (Van der Lee et al., 2016). Following up on these findings, additional research used cardiovascular indicators of threat and challenge to monitor implicit responses when being prompted to recollect past moral failures. Here, participants were asked to verbally reflect on the role of morality in solving (management) dilemmas, after recalling their own past moral failures. Results showed that the recollection of past moral shortcomings was associated with a psychophysiological threat response, indicative of the appraisal that situational demands exceed one’s coping abilities (Van der Lee et al., 2023). Taken together, these research findings thus reveal that being called out for one’s past moral wrongdoings makes people feel guilty, raises a psychophysiological threat response that is generally maladaptive, and reduces people’s sense of being able to address the situation or to motivate themselves to improve their behavior in the future (Van der Lee et al., 2016, 2023).

The strands of research outlined above disclose why these three common strategies to regulate others’ moral values, choices, and behaviors, are likely to prove ineffective. The results we reviewed complement prior findings suggesting that confronting people with their (opposing) moral values, criticizing them on their moral choices, or reminding them of their past moral failures negatively affects how people explicitly reflect on such attempts of others to change the way they think and act. That is, the cardiovascular and neurocognitive indicators we presented also show how this relates to more implicit underlying processes that are relevant to downstream responses. In this way we extend insights on how such strategies likely increase others’ self-reported negative affective responses and self-condemning emotions such as guilt, and decrease their self-reported coping abilities. The research shows that such strategies also cause or increase a psychophysiological state of threat, or reduce motivated attention processes that are likely to undermine people’s ability for behavioral improvement (see also Figure 1, part B).

Below, we continue our analysis by addressing the counterparts of the strategies often used in an attempt to change other people’s (im)moral behavior, and examine whether this might be a more fruitful way to realize a virtuous cycle of moral behavior.

Establishing a Virtuous Cycle

While prior research has documented counterproductive effects of different strategies that challenge people’s moral behaviors, more productive and compensatory responses have also been observed. Which of these responses is most likely to emerge depends on a number of factors, such as individual differences in moral sensitivity or internalized moral identity (Aquino & Reed, 2002; Jordan, 2007). It has been established that especially people with strong moral identities can and do engage in compensatory strategies after moral failures, such as moral cleansing or moral compensation, as a way towards moral self-completion (Jordan et al., 2011). However, whether or not this happens also depends on relatively subtle differences in how people’s immoral behaviors are portrayed and how they are approached, for instance whether they are prompted to recall temporally close versus distant, or abstract versus concrete behaviors (Conway & Peetz, 2012).

In the next section, we therefore systematically consider the counterparts of the strategies often used in an attempt to change other people’s (im)moral behavior. We follow the same structure that we used in considering negative effects of common strategies, as we move from moral values to moral choices, and concrete behaviors. Specifically, we propose that behavioral change is more likely to occur when instead of confronting others with opposing moral values, shared moral ideals are highlighted. Likewise, instead of criticizing others’ moral choices, it might be more productive to challenge their learning abilities. Finally, rather than reminding people of their past moral failures, they may be more open to behavioral change when they are offered future opportunities to act in accordance with moral goals. We will now consider each of these more productive strategies in turn (see also Figure 1, part C), and present evidence revealing the (underlying) psychological mechanisms that explain why these are more likely to elicit behavioral change (see also Figure 1, part D).

Highlighting shared ideals and norms

The first alternative strategy we propose is highlighting or emphasizing shared moral ideals or social norms. Research on this issue has revealed that highlighting ideals (instead of reminding people of their obligations) can reduce the experience of social identity threat as well as cardiovascular threat responses. Further, an emphasis on ideals helps people to initiate action, and makes them more eager to approach their goals and desired outcomes (Does et al., 2011, 2012). Specifically, when considering difficulties in offering fair employment procedures, it made a difference whether the moral outcome of social equality was presented in terms of moral ideals (i.e., equal treatment of people with different ethnic backgrounds) or moral obligations (i.e., non-discrimination towards people with different ethnic backgrounds). When the moral ideal of equal treatment was highlighted, participants (people without a migration background, i.e., members of the advantaged group) generated more ideas for ways to promote such equal treatment and were more supportive of affirmative action policies. Moreover, these participants showed reduced social identity threat—most likely because the ideal of (rather than the obligation to achieve) social equality is less threatening to the collective self-esteem of these individuals. These findings were complemented by follow-up research which showed that participants who verbally reflected on the moral ideal (vs. obligation) of social equality, displayed a psychophysiological state of challenge—rather than a psychophysiological state of threat (Does et al., 2012).

Besides highlighting moral ideals, appeals to moral values can also be made by pointing to norms that are shared among self-relevant others, such as ingroup members. That is, research shows that people are inclined to behave in line with what the moral norms of fellow group members prescribe, even when this deviates from their personal preferences. Further, they also decide more quickly to do so—as revealed by reduced response latencies when informed about the moral, as compared to the smart, course of action according to one’s group (Ellemers et al., 2008; Pagliaro et al., 2011).

Ingroup norms that are apparent from the social evaluation by fellow group members have positive effects similar to those of moral ingroup norms that are explicitly stated. For instance, when people are asked to perform a task indicative of their moral values, they become even more motivated to perform this task well when an ingroup member is evaluating their performance. This was examined with an Implicit Association Test (IAT), developed to measure implicit biases towards social groups. The IAT was framed as a test of one’s moral values and participants were made aware that their performance was being monitored by one of their fellow group members. Under these conditions, their IAT responses showed less evidence of social bias. Additionally, measures of their brain activity showed enhanced Error-Related Negativity potentials (ERN) when they made errors on this task (Van Nunspeet, Derks, et al., 2015). This heightened neural response-monitoring is associated with an increased concern for making errors (Hajcak et al., 2005), also within social contexts (Boksem et al., 2011; Van Meel & Van Heijningen, 2010). The results of this study (Van Nunspeet, Derks, et al., 2015) therefore reveal that people were more motivated to do well, as indicated by their greater concern about erroneous responses, on a task indicative of their values while their behavior was evaluated by someone with whom they tend to share similar values (see also Van Nunspeet, Ellemers, & Derks, 2015). Thus, this research points to specific conditions that invite people to behave in line with moral values.

Challenge learning ability

Highlighting shared moral ideals and norms is one way to open up the conversation about others’ abstract or overarching, yet opposing, moral viewpoints or attitudes. But what if behavior has become concrete and one wants to intervene? We previously showed that criticizing the moral choices people make is not an effective way to make yourself heard. An alternative way to accomplish this might be to refrain from invoking the moral domain altogether, and instead, frame potential concerns in terms of competence failures, to engage others’ motivation to learn and improve. After all, addressing (issues in) the moral domain also means addressing a domain that includes values and attitudes that tend to be seen as relatively stable over time (Pagliaro et al., 2013). Challenging others’ competence displays and learning ability on the other hand, may help them to focus on a domain in which there is ample potential to adjust and to grow. We therefore now focus on this second strategy.

Evidence relevant to this strategy can be gathered from research in which the effects of receiving criticism about one’s (lack of) moral choices were compared to the effects of receiving criticism about one’s (lack of) competence (Rösler et al., 2021). Here, participants were prompted to recall situations in which they received negative feedback from a close colleague or fellow employee. In this research, referring to their lack of competence (rather than their morality) clearly increased the effectiveness of the critical feedback message. That is, these participants indicated being more open to and accepting of the feedback. They also reported that it motivated them to improve, and that they actually more often had changed their behavior based on the feedback (Rösler et al., 2021). Additional results assessed brain responses (i.e., the P300 and LPP amplitudes) associated with receiving negative feedback. When considering negative feedback about one’s competence choices these brain responses were indicative of enhanced motivated attention (Rösler et al., 2023a). In other words, negative judgments of one’s competence received more attention and are therefore likely to have more impact on people’s actual (motivation to) change, than negative judgments of one’s morality.

Other research offers additional evidence that counterproductive responses can be circumvented by avoiding moral references. In this case, changes in cortisol and testosterone levels were assessed among male research participants who interacted with a confederate. Their assignment was to make a joint decision given diverging individual preferences. As in the paradigm described above (Kouzakova et al., 2012, 2014), this was framed either as a value disagreement or as a disagreement over the allocation of valuable resources. This time, changes in endocrine responses compared to baseline were correlated with preferred conflict resolution strategies (Harinck et al., 2018). Results from this study revealed that testosterone levels increased when resolving a disagreement about resources. Further, this endocrine response was associated with a positive rating of the interaction and the self-reported willingness to engage in constructive conflict resolution responses. However, when the confederate confronted participants’ moral values, there was no evidence of such a constructive endocrine response or conflict resolution strategy (Harinck et al., 2018).

Of course, it may not always be possible to reframe disagreements or judgments in this way, as when the behavior obviously relates to (a person’s) morality. But in those instances, there is another approach that can help to reduce the emotional cost of receiving criticism on moral choices. This is one of the ground rules for providing constructive feedback (which is not always practiced), namely, to explicitly communicate one’s helpful intentions. This was shown with the research paradigm examining how people process and respond to moral criticism. Brain activity in ERPs was assessed to examine the cognitive processes associated with receiving negative judgments on one’s moral choices. In this case, critics referred to participants’ self-stated intentions to donate particular amounts of money to charities (Rösler et al., 2023b). Relevant results were observed for the P200, which is associated with attention deployment and vigilance towards emotionally salient stimuli (Kissler et al., 2006; Trauer et al., 2012). This revealed reduced brain activity in the P200 indicating less emotional vigilance in response to such negative judgments when the feedback was motivated by the critic’s intention to remind the participant of their desire to substantially support a charity. Additionally, participants explicitly reported perceiving the judgments that were accompanied by these helpful intentions as more fair. In contrast, more evidence of vigilance, and less perceived fairness of the critique were obtained when participants received negative judgments that were motivated by the critic’s intention to show moral superiority (i.e., commenting that the critic would have donated more; Rösler et al., 2023b).

Taken together, these findings reveal different ways to elicit more productive responses to moral appeals. They show that it is worthwhile to appeal to others’ potential for learning and growth—instead of criticizing their fixed morals. This can be achieved either by framing negative judgments in terms of the other person’s (lack of) competence or by explicitly communicating one’s helpful intentions. Both these approaches can help people to become more receptive of and attentive to criticism, and motivate them to improve their behavior.

Providing moral opportunities

One cannot change what happened in the past, but one can choose how to behave in the future. So, when trying to convince people that they should change their behavior, focusing on what they did wrong in the past may not be very helpful. Instead, it might be more productive to focus people’s attention on what they can do (differently) in the future. Again, the way in which possibilities for future improvement are highlighted also matters. Insisting on what people cannot or should not do (i.e., proscriptive norms) is likely to be less motivating than emphasizing what people can do (i.e., prescriptive norms; Janoff-Bulman et al., 2009). Furthermore, when people are given the opportunity to display behavior that can be considered from a moral perspective or can be seen as addressing moral decision-making, it can help to emphasize the moral implications of these actions. Evidence that this is the case was obtained from a study in which participants showed less social bias on an IAT when it was introduced as a measure to assess one’s moral values concerning egalitarianism. In addition, they showed enhanced brain activity (i.e., greater ERN amplitudes) when they miscategorized the social stimuli during the task (Van Nunspeet et al., 2014). This increase in cognitive response-monitoring indicated they were (intrinsically) concerned about the errors they made when the task was said to indicate their moral motives (see also Boksem et al., 2011; Hajcak et al., 2005; Van Meel & Van Heijningen, 2010). This research thus reveals another implicit cognitive mechanism that can help people enact their moral intentions. This mechanism was engaged by explicitly emphasizing the moral implications of people’s behavior before offering them the opportunity to show their moral motivation. This encouraged them to do well and (unconsciously) made them more alert in monitoring their own behavioral responses.

A final example of how the provision of a moral opportunity can stimulate behavioral change is derived from the work we discussed above, to reveal the negative effects of reminding people of their past moral failures. In a second phase, this research also showed that presenting participants with a subsequent opportunity to restore their self-image as a moral group member helped them increase their perceived ability to cope with the situation and reduced their feelings of guilt (Van der Lee et al., 2016). In fact, moral opportunities can even be effective in stimulating moral behavior when past moral failures were made by fellow group members (Van der Toorn et al., 2015). This was demonstrated in a study where participants were confronted with moral transgressions committed by other members of their group. After processing this information, they received the opportunity to improve the moral image of their group. Offering participants a context in which they could endorse the importance of moral conduct and contribute to achieving this ideal reduced their self-reported perception of threat—potentially alleviating the resistance to behavioral change.

From a Vicious Cycle to a Virtuous Cycle of the Regulation of Moral Behavior

In the work reviewed so far, we have explained how and why the strategies people may be inclined to use when questioning others’ moral values, choices, and behaviors can be counterproductive. We have also considered evidence for alternative approaches that appear to be more productive. In the final part of this article, we summarize a recent research endeavor in which we tested an intervention that was designed to put these insights into practice. In this intervention we took care to circumvent the potential negative effects of confronting others with negative information or judgments about their morals, and invited behavioral change by providing them with an opportunity to express their moral aspirations for the future.

In this research project (Van Nunspeet et al., 2023), we adapted an approach that is commonly offered in anti-bias interventions. These interventions tend to inform people about the existence of bias, and the persistence of implicit bias and prejudice in particular, by making them aware that they too suffer from such biased cognitive heuristics. Creating awareness of one’s implicit biases can be seen as a form of confronting people with the fact that egalitarian values do not necessarily translate into unbiased behaviors. Based on the evidence summarized above, we anticipated that this standard procedure would elicit a motivational threat response. We also anticipated negative behavioral effects such as defensiveness and reduced coping abilities which would likely limit people’s self-efficacy and motivation to address the issue by adjusting their behavior. As a counterpoint to this common approach, we explored whether the experience of threat could be reduced or even turned into a motivational state of challenge by employing specific features of the constructive strategies we discussed above. In this case we did this by emphasizing the ideal of promoting egalitarian values rather than preventing biased perceptions, and highlighted future opportunities to achieve this.

We conducted this intervention study among university students. They took part in an experiment in which they were invited to attend a webinar about the topic of gender bias in teacher evaluations. In the first part of the webinar (i.e., the intervention), all participants were introduced to the general topic and informed about the presence and pervasiveness of gender bias in students’ teacher evaluations. Additionally, half of the participants were confronted with the gender bias that was ostensibly evident in their own teacher evaluations submitted before the intervention. Thereafter, in the second part of the intervention, participants were informed about either their university’s sense of obligation to prevent such biased teacher evaluations, or their university’s ideals to promote the fair evaluation of teachers. Participants were then asked to list their own ideas about how to meet those obligations or to achieve these ideals.

Results obtained in the first phase of the study revealed that confronting students with evidence of (their own and their group members’) gender bias increased their willingness to redress this problem. However, asking them to reflect on past displays of bias also caused a cardiovascular threat response that might impede effective action. Nevertheless, when students were subsequently invited to list ideas to promote fair evaluations in the future (in the second phase of the study), they displayed a cardiovascular challenge response. Moreover, these participants were more willing to acknowledge ongoing gender discrimination while reporting enhanced ability to cope with this issue. This was less likely to be the case among participants who had listed measures aiming to meet the obligation to prevent unfair evaluations (Van Nunspeet et al., 2023).

On the one hand, this study thus revealed effects that are consistent with the negative consequences of the strategies we outlined above (e.g., confrontational moral information eliciting psychophysiological threat responses, see also Figure 1, parts A and B). On the other hand, this study shows that even when a strategy with negative consequences has already been applied (such as reminding others about their moral shortcomings), a strategy with positive outcomes (such as an outlook on future moral opportunities) can still elicit a virtuous cycle to regulate moral behavior. More interestingly, these findings seem to suggest that even though confronting others with a critical evaluation of their moral values or (lack of) moral decisions may help to raise awareness or a sense of urgency about the matter at hand, the positive outlook on future opportunities or ideals is necessary to launch the underlying processes that help people to open up and move towards improving their moral ways. This is important, as in everyday life people regularly face situations in which they are being confronted in ways that prompt defensive and avoidant responses that characterize a vicious cycle. For instance, attempts to convince company owners that they should invest in socially responsible business practices tend to question their moral values when prioritizing profits and shareholders’ interests. Likewise, programs aimed at making people aware of social bias in order to increase diversity and inclusion policies tend to criticize the moral choices currently made in human resource practices. Finally, societal debates about sexually transgressive behavior and lack of social safety suffer when people are reluctant to consider whether or how their own past behavior might have been morally wrong. The research reviewed here reminds us of the pitfalls of these common strategies and reveals why this can easily become counterproductive. At the same time, this work also suggests additional approaches that might make people more willing to engage in behavioral changes that are needed for the future.

Conclusion

In this review we have outlined common strategies often used to influence and regulate the (im)moral behavior of others. Inspired by prior evidence revealing the importance of morality for people’s own sense of self and their social identity, we first argued that people easily tend to confront others with their opposing moral values, criticize others’ moral choices, or remind them of their past moral failures. We extend prior work that has revealed the mixed effects of such strategies. We presented evidence from a program of research to address the more implicit, underlying mechanisms that explain why these may be ineffective and potentially cause a vicious cycle in the regulation of moral behavior. The research results we reviewed reveal that these common strategies may increase others’ self-condemning emotions, elicit psychophysiological threat responses, and reduce perceived coping abilities and motivated attention. Subsequently, we specified evidence showing that highlighting shared moral ideals or norms, challenging others’ learning abilities, and providing future moral opportunities may circumvent these negative effects and instigate a virtuous cycle. Our experimental intervention was designed to combine these insights and test their effects. Results reveal how the negative consequences of people’s strategies to regulate the moral behavior of others can be turned into positive processes and outcomes—and hence what comprises a successful intervention that allows people to more positively respond to moral appeals. In sum, our approach reveals the added value of a more thorough look at underlying (psychophysiological and neurocognitive) processes to distinguish between productive and counterproductive effects of strategies to regulate the moral behavior of others. This knowledge is especially beneficial when it comes to designing successful interventions to combat the potentially polarizing effects of current changes in society.