Abstract

This article argues that in the turn towards the human-centric in Finnish welfare reform, the human is a flexible signifier which arises out of technical metaphor to stand for certain neoliberal fantasies regarding welfare citizenship, market society and the state. I situate my analysis in the preceding literature on the cultural production of the citizen in market-oriented welfare reform. Through a close reading of user representations in a governmental AI Programme seeking to transform the welfare state towards human centricity, I identify three dominant articulations of the human: the machinic, the inadequate and the entrepreneurial. These articulations disambiguate the human-in-the-centre as a chimaeral fantasy representing a late-neoliberal policy regime and evince the role of the imagination of engineers in government technopolitics.

Introduction

In 2017, as a part of the national AI strategy of the centre-right coalition government, the Finnish National Artificial Intelligence Programme was announced. The moon-shot programme, which is planned to be in use by 2040, with a total planned budget of 100 million euros, and with the first development iteration ending in 2022 with an 11-million-euro budget, aims to provoke, in the words of the programme, an ‘AI transformation towards a human-centric society’. The programme is composed of dozens of vaguely related subprogrammes, the largest of which (in terms of allocated budget) is one which plans to reform the Finnish welfare state by constructing a digital market platform for well-being services called the AuroraAI Network. The platform would allow public, private and third sector consumer service providers to target citizens with service recommendations based on their algorithmically constructed welfare avatar or ‘Citizen’s Digital Twin’ (Kopponen et al., 2022a). In contrast to analogous online advertising platforms, service providers in the AuroraAI Network would not be able to compete for visibility through price-bidding, but rather market encounters would be coordinated through an algorithmic evaluation of the predicted effectiveness of specific interventions on the citizen’s computational welfare avatar. Furthermore, in marked contrast to the two decades of New Public Management reforms in the Finnish public sector, which have oriented public services around the idea of customer-centricity, the reform programme proposes to make the welfare state explicitly human-centric (Ministry of Finance, 2019).

The human is a new metaphor for the citizen, at a time when various discourses, technics and policy interventions are imposing on the social institution of citizenship. Generally, the question of citizenship is twofold, and both parts are fruitfully approached with the tools of cultural theory: the ‘who’ question of inclusion/exclusion, and how fringes are produced (e.g. Hintjens, 2007), and the ‘what’ question of rights and obligations, that is the meaning and doing of virtuous citizenship (e.g. Dahlgren, 2006). In this latter sense, the human is a concatenation on a longer line of articulations which refigure the ‘what’ of citizenship. In contemporary welfare states, the socio-liberal conception of the citizen has been overtaken by the citizen-consumer (Trentmann, 2007), a demotic construct demanding choice, personalisation and market solutions to the problem of well-being (Clarke et al., 2007). This is the result of a shift in the way citizenship is discursively framed: from a republican civic subject, holding certain hard-fought economic, political and social rights (Beiner, 1995), to a sovereign, individualised, and responsible consumer (Clarke and Newman, 2007; Coskuner-Balli, 2020; Giesler and Veresiu, 2014). In the past decade, datafication has become a significant force imposing on the welfare state (Dencik and Kaun, 2020), bringing with it a new, ordinated form of citizenship (Fourcade, 2021). Even as the changing institution of citizenship has been thoroughly theorised, the metaphor of the human presents a seemingly new shift: by situating itself in explicit contrast to the customer of customer-centric government reforms, the human proposes something totalising and transformational. Accordingly, this article seeks to disambiguate who this human-in-the-centre is.

I approach the human-in-the-centre through a close reading of the user representations produced and circulated in the Finnish National Artificial Intelligence Programme. User representations have been studied in various forms in the field of Science and Technology Studies as they pertain to the work of designing and building technological artefacts. By leveraging the concept in the study of a technopolitical intervention, I intend to move beyond the ambiguity of non-signifiers towards a substantive account of the welfare subject at stake. Analysing user representations reveals the human-in-the-centre as a fantasy of datafied consumerism in the digital welfare state. The human appears as a fantastic chimera in two ways: first in that it is an assemblage of various cultural tropes, technical metaphors and hegemonic discourses, and second, in that it is the non-existent vagary of a technical imagination, one which manifests in the human an idealised technology user to satisfy both the moloch of market capitalism and the leviathan of the paternal welfare state.

This article will proceed by foregrounding the shifting meanings of citizenship in market-oriented welfare reforms, and their relation to neoliberalism as an administrative project. I will then present user representations as an entry point into the politics of technological government intervention and analyse the case material in terms of three humanities which the material manifests: the machinic human, the inadequate human and the self-entrepreneurial human. Finally, I will discuss how this reconstituted human-in-the-centre raises not only questions pertaining to the neoliberalising welfare state but also the work of the engineer’s imagination in the making of government technopolitics.

Subjects in welfare markets: from citizens to consumers to humans

The institutions and ideologies of the welfare state have enjoyed a contentious relationship to those of market society. The basic project of the welfare state, the idea of the state recognising and protecting certain minimal conditions of a decent life, has enjoyed wide political support both on the left and right not least because it has been seen as necessary for the functioning of modern market capitalism (Offe and Keane, 1984). The welfare state compensates for the weakening of traditional social institutions under modernity (Abrahamson, 2004), protects public and private goods, tides over market fluctuations and secures the social reproduction of the labour force (Therborn, 1987). In fact, Offe and Keane (1984) argue that the destruction of the institutions of the welfare state would be a decimation of modern market capitalism. At the same time, the liberal critique of the welfare state is founded on the premise that the social protections offered by the state are detrimental to the functioning of a free market society. On this view, the welfare state causes inflation, decreases labour incentives and produces societal free-riders, and its redistributive function is unjust. The welfare state is thus both the bane and the bulwark of market capitalism.

In the midst of this liberal contradiction, the welfare state and market capitalism have struck a convenient accord: quasi-markets (Grand and Bartlett, 1993). Shifting discourses and administrative reforms since the 1980s under the moniker of New Public Management have reframed institutions of the welfare state not as exogenic, remedial or imposing on free markets, but as a possibility for building new partnerships across societal sectors, and new forms of market-like engagement in traditionally public domains (Hood, 1991). These market reforms across various welfare states have been broadly justified through the cultural construction of a new kind of citizen subject: that of the citizen as a demanding, sovereign and expert consumer. The image of the citizen as a consumer was a culturally meaningful break from the post-war construct of the citizen primarily as a civic participant (Marshall, 1992). Overtaking their civic rights claims towards welfare, education and dignity, the newly hyphenated citizen-consumer appeared in policy documents demanding personalisation, service quality and market-like choice and justifying administrative reforms in that direction (Clarke, 2007). This image of the citizen as a consumer has become a ubiquitous rationale for the modernisation of bureaucracy (Newman and Vidler, 2006), described by Clarke and Newman (2007) as a ‘revolution from above’: one which is imposed on, not arising from, the people.

Finland is considered an example of a social democratic welfare state (Alestalo, 1984; Esping-Andersen, 1990), although it has been a late-comer to the institutional welfare model when compared with its Scandinavian siblings (Alestalo, 2010). The Finnish welfare state has been traditionally characterised by a large public sector, universalist principles in service provision, tripartite labour negotiations and strong egalitarian leanings (Christiansen et al., 2006). In the early 1990s, a deep recession left Finnish welfare in not only a crisis of finance but also a crisis of legitimation (Nurminen, 1996). The post-recession austerity turned the 50 years of welfare state development towards retrenchment, so far as marking an end to universalism in the Finnish welfare state (Julkunen, 2001). New political rationalities brought with them a wave of NPM reforms such as corporatisation and privatisation of public services, and new logics of local managerialism in public agencies (Temmes, 1998), but also a new perspective on the welfare citizen, founded around discourses of workfare and activation (Karjalainen et al., 2020). This amounted to a market orientation which married the socio-political requirements of the welfare state with business interest in market society (Salminen and Viinamäki, 2001). As the citizen became identified primarily as a market actor to be tuned, social policy became paternal and therapeutic action (Kantola, 2003).

This trajectory in the welfare state can be broadly summarised as neoliberalisation. Neoliberalism has been variously and contentiously conceptualised as a set of market-liberal policy assumptions, an ideological dominant, a project of retreating the Keynesian welfare state and an authoritarian turn to liberalism, among others (Bruff and Tansel, 2019; Dean, 2008; Venugopal, 2015). While the intellectual history of neoliberalism can be traced back to a specific thought collective (Mirowski and Plehwe, 2015), in the contemporary moment, it is a wildly amorphous project, publicly discredited yet lurching ahead misidentified from crisis to crisis. This zombie phase of neoliberalism is not the Thatcherite/Reaganite politics of outspoken welfare retrenchment but rather the various and diffuse projects to fashion ‘new forms of active-and-punitive statecraft’ in liberal societies (Peck, 2010). This turns from retrenchment and privatisation to active social policy and public–private partnerships, from structural adjustment of the public economy towards the moral adjustment of the excluded. This has meant public hedging of financial risk while imposing activation on the worse-off as an eligibility condition for social support. The neoliberalisation of the welfare subject thus extends the genealogy of techniques by which citizens’ lives are conducted, specifically as free, self-responsible but governable subjects (Lemke, 2001; Rose, 1999). At the same time, these discourses produce the inadequate obverse as a central figuration for the contemporary politics of empowerment: the construction of the inadequate substrate of the underprivileged, and the techniques by which these powerless are levered into active citizens (Dean, 2010; Rose, 1999).

In Finland, neoliberalism has rarely been named as an explicit party-political orientation, but rather, it has been often identified as bubbling under the surface in the development projects of the civil service and administrative elite, especially in the Ministry of Finance (Alestalo, 2010; Patomäki, 2007). There, administrative reform can be pushed forward relatively unpoliticised. Indeed, the fact that the National AI Programme’s aim to reform the Finnish welfare state is a matter of technology politics (enacted by the Department of Government ICT) rather than social policy attests to the unkillable nature of the neoliberal project: it is reanimated in unlikely places. The metaphor of human-centric public services (reminiscent of, but separate from the discourses of human-centric AI) arose out of a think-tank collaboration producing anticipatory governance mechanisms for a future society (Hellström and Kosonen, 2016). The authors describe the legacy of turn-of-the-millennium government reforms as ‘sub-optimizing’ and ‘siloed’, implicating the NPM focus on managerial autonomy and agency-level efficiency metrics. The proposed solution is a shift from a customer-centric bureaucracy to one which sees the human holistically and through their ‘real-life needs’ (Hellström and Kosonen, 2016). In 2017, the concept of human-centric government was mobilised in the form of the Finnish National Artificial Intelligence Programme, a programme birthed from a publicly touted governmental technology strategy and quickly forgotten in the machinery of the technocratic civil service. I claim that this metaphor of the human-in-the-centre, specifically in the context of the welfare state, is in dire need of disambiguation. In this article, I intend to give the concept a substantive interpretation by analysing representations of the AuroraAI Network user as constructive articulations of the imagined citizen subject.

Analytic concepts and method: tracing user representations

Users have been of interest in technology studies since the early 1990s, iterating through many, at times contested, modes in which users and technologies intersect (Akrich, 1995; Hyysalo et al., 2016; Oudshoorn et al., 2004; Woolgar, 1991). Design methodologies approach user representations as systematically sourced bundles of facts by which to ground design as a practice (Hyysalo and Johnson, 2015). In practice though, a wealth of user representations stem from designers’ everyday self-understandings (Akrich, 1995). This is in line with the analysis of Sharrock and Anderson (1994), who propose that technology design, in practice, is an ‘irredeemably analytic’ activity, which relies on the designer’s ability to intuit and to bring in their personal experience as a shared cultural stock of knowledge. They propose the notion of the user as a scenic feature of technology production, that is as something which attempts to make collectively reasonable and shared assumptions about the design space. The scenic notion of the user avoids the pitfalls of an overly positivistic and arguably idealised story of technology design. Understood specifically in the sense of a stage rather than a vista, the scenic representations of the user form a kind of mise-en-scène of the technology project: artifice, with layers of cultural signification which contextualise the technological production. That is, users perform their rhetorical role in technology production through the way that their bricolaged, iconic, often hackneyed representations construct a contingent and culturally situated vision of a social reality at stake.

In the Finnish National Artificial Intelligence Programme, users enter into the project through various kinds of texts: technical diagrams, anecdotes, written user stories and project illustrations. These texts are differently volatile: some stabilised to the status of an officiated report in the programme, others constructed through anecdotes in situ and discarded in passing, and others yet recirculated as a kind of project folklore and reconstituted through word of mouth. The programme is led by the division of Government ICT at the Ministry of Finance but enrols a vast and ambiguously delimited stakeholder network which reportedly anybody can join, including municipalities, private consultancies, The Council of the Lutheran Church, and several non-profit organisations in the welfare sector. My research material is thus multimodal. It consists of five development documents outlining the design and planned technical details of the AuroraAI Network, persistently circulated in the programme’s open stakeholder network, and two scientific publications describing the concept of the digital twin infrastructure. I supplement this material with interviews with programme planners. In my 32 interviews snowballing from the official programme lead, most participants felt incompetent to speak about the concrete aims of the AuroraAI Network, identifying a small set of actors as the agenda-setting voices. These include the ‘Visionary’: a ministerial civil servant with a background in process automation, who is seen as the originator of the concept of a human-centric AI transformation. The ‘Expert’, a ministerial civil servant, formerly a software engineer, identified as the programme’s chief AI engineer. And the ‘Allies’, several officials in enrolled organisations who are described by The Visionary and The Expert as those who ‘truly get the transformation’.

The multimodal research material concerning the government programme presented two challenges, which are diagnostic of the culture of technocratic progressivism in the civil service: those of provisionality and unaccountability. The texts circulated in the programme are always provisional and vaguely authored, produced through an ostensibly open collaborative process through which individuals, organisations, agencies and ministries evade individual authorship. Among the wealth of provisional texts, one can still find a specific if not coherent story of the human-in-the-centre. I approach the stories of the user of AuroraAI as representations which articulate the various meanings associated with the human in the programme. Paul du Gay defines articulations as ‘connecting disparate elements together to form a temporary unity’ (Du Gay, 1997). This points us towards the various signifying practices which bring together motifs, cultural tropes and narratives to manifest not just a user but a specific kind of user, calling for a semiotic approach to its various representations (Oudshoorn et al., 2004). By reading the user of AuroraAI as the protagonist of an intertextual story told through various diagrams, oral anecdotes and written accounts, three dominant articulations arise: the machinic, the inadequate, and the self-entrepreneurial.

Analysis

The machinic human

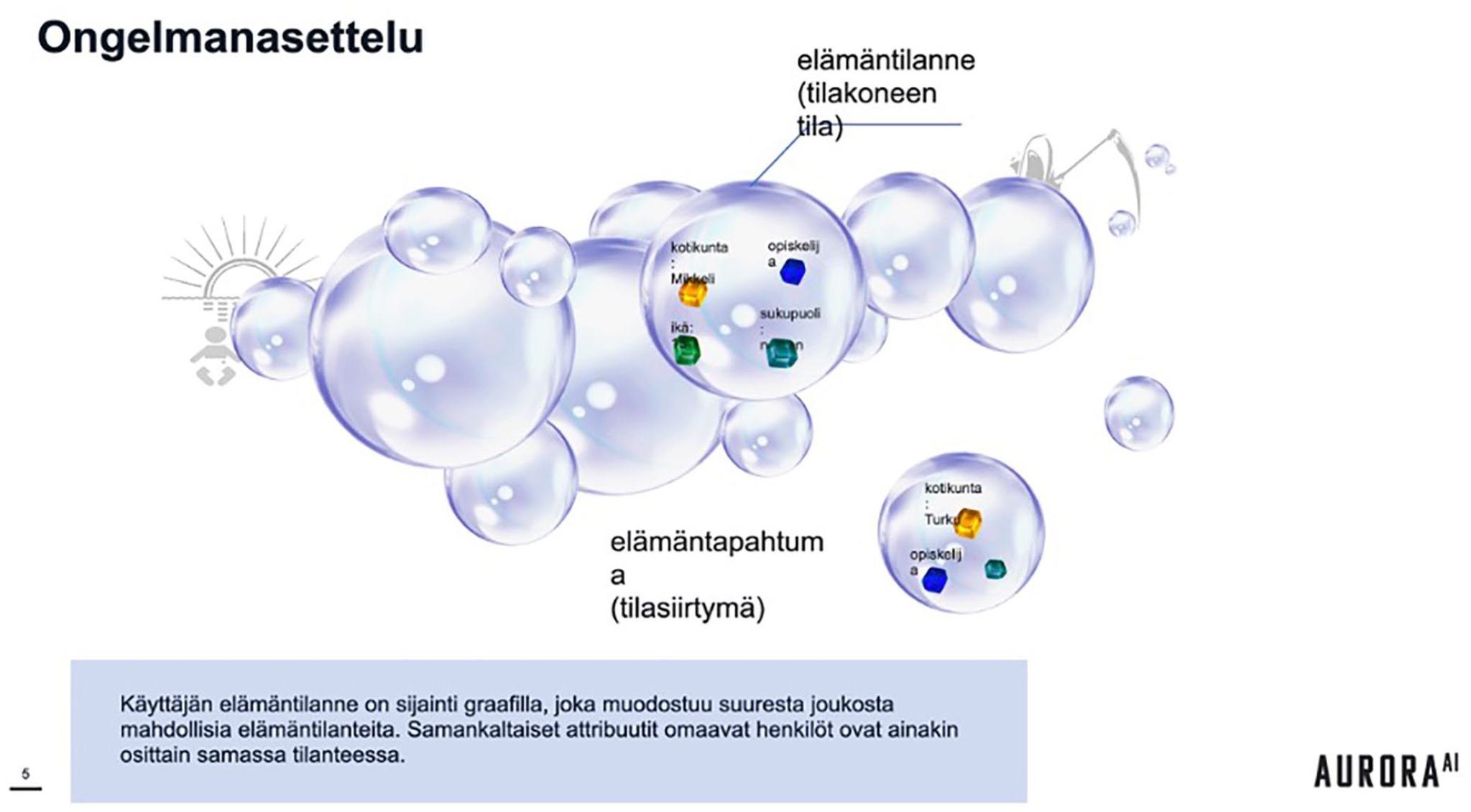

A slide in the AI Programme’s introductory material presents a diagram entitled ‘Problematisation’. It consists of a network of bubbles, labelled ‘life-states’, each connected to others by arrows labelled ‘life-state transitions’. A few of the bubbles are populated with variables, such as age, gender, hometown, employment status. One is overshadowed by a silhouette of an infant, another with a scythed, hooded reaper. In another version of the diagram later in the material, one bubble is marked with the image of a treasure chest brimming with gold.

The diagram described above, shown in Figure 1, is what the ministerial planners of the programme refer to as the life-state machine. It draws from the computational concept of a finite-state machine to produce an abstraction of human life. The life-state machine draws together both epistemological-ontological as well as prescriptive commitments in the form of a computational analogy. Invoked by cybernetician Norbert Wiener through various forms of metaphor, but owing its history to Enlightenment-era mechanistic thought, the analogy represents humans essentially as computational systems of inputs and states, informationally hooked to the external systems of control (Bowker, 1993; Hayles, 1999; Muri, 2008). The computational analogy doing work in the diagram is a commitment to the idea that the lived life of the citizen can be meaningfully modelled as a system of states and state transitions. The user’s lifeworld is situated on a graph of quantifiable attributes, by which the matter of living is made into an algorithmically calculable task: one of navigating the optimal path of transitions towards a life goal state.

Figure 1. A diagram from the document ‘AuroraAI Network: Technical considerations’ (Ministry of Finance, 2020) depicting the citizen’s life-state machine.
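To make the computational analogy concrete, the life-state machine can be sketched as a small directed graph on which the matter of living reduces to a shortest-path search. The Python below is an illustrative sketch only: the state names, the transitions and the function `shortest_path` are hypothetical, invented for this example, and are not drawn from the programme documentation, which does not specify an algorithm.

```python
from collections import deque

# Hypothetical life-states and transitions, invented for illustration only.
LIFE_STATE_TRANSITIONS = {
    "student": ["unemployed", "employed"],
    "unemployed": ["student", "employed"],
    "employed": ["unemployed", "affluent"],
    "affluent": [],
}


def shortest_path(transitions, start, goal):
    """Breadth-first search for the fewest transitions from start to goal."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        state = path[-1]
        if state == goal:
            return path
        for next_state in transitions.get(state, []):
            if next_state not in visited:
                visited.add(next_state)
                queue.append(path + [next_state])
    return None  # the goal cannot be reached from the start state


route = shortest_path(LIFE_STATE_TRANSITIONS, "unemployed", "affluent")
```

What the sketch leaves out is as telling as what it includes: nothing in the graph records the material conditions under which a transition can actually be taken.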

This life-state is further specified in the programme as a set of eight quantified metrics referred to by the planners as the individual’s ‘Personal Stiglitz Dimensions’ (depicted in Figure 2). This Stiglitz Model, as it is also referred to in the programme documentation, is a reinterpretation of a report commissioned by the Sarkozy cabinet to develop new societal metrics of progress to replace the gross domestic product (GDP). The workgroup, led by the economists Fitoussi, Sen and Stiglitz, problematises the difficulties of measuring social progress vis-à-vis contested conceptions of well-being and the tasks of the liberal state, and in an aside, mentions eight facets of population well-being which any new metric should strive to account for. For the AuroraAI Network, these eight facets are reformulated as an economist-backed, multidimensional quantification of personal well-being, providing quantitative identification of one’s life state and a set of goals for optimisation.

Figure 2. A diagram from the design proposal for the ‘How Am I Doing’ service (Ministry of Finance and Zaibatsu Interactive, 2021) depicting the AuroraAI Network user and their quantified well-being.

This computational analogy not only renders calculable, and thus intervenable, the ultimately ethicalised standing of the welfare subject but demands assent to certain articulations about the matter of living. First, it articulates one’s life situation as an unambiguously identified set of personal properties and, more specifically, properties that can be meaningfully quantified into data by the state bureaucracy. Citizens are commonly represented through various inscriptions, such as samples of statistical populations (national Pap smears as a response to actuarial population-level risks), taxable accounts (a register of incomes, occupational expenditures, debts and investment profits or losses) and welfare beneficiaries (as pay stubs, accredited diagnoses and proofs of active employment seeking activities). The work that the computational analogy does here is not framing these bureaucratic inscriptions as context-specific tools of administration, albeit ones which tend to travel outside their remit, but executing the human-centric twist of defining the human as the intersection of some such partial accounts. This makes the human lifeworld bureaucratically identifiable.

The caption for the diagram reads: ‘The user’s life-situation is a location on the network graph. People with similar attributes are in similar life states. . . . People in similar life states need similar services to advance towards favourable life-states and to avoid unwanted ones, thus the user needs to be directed in the right direction on the graph.’

The diagram articulates the matter of living as the taking up of welfare-state transitions, or changes in one’s personal properties, by consuming specific consumer services. Certain life-states in the diagram are anticipated to bring positive outcomes, such as affluence or progeny, while others are marked as end-states: game over, death. While life-states are described as favourable or unfavourable, the diagram presents itself as a map with no predefined bearing, to be traversed in any chosen direction. This is a technocratic interpretation of liberal individualism as the pursuit of personal projects, but importantly, within the AuroraAI programme, this is a descriptive and not a normative position. The Expert described it in an interview thusly: ‘Everyone has a lifegoal that they are working towards, even if they won’t admit it. The point of the life-state-machine in AuroraAI is to be the map and the compass to get there. But that goal is up to you. I am allergic to being told what to do, you know? AuroraAI is not gonna tell you how to live your life, but just help you achieve your goals, whatever they are. Like of course if you wanna get drunk every day and that’s how you wanna live your life then it’s not for the AI to tell you that’s wrong’.

Finally, the metaphor of the finite-state machine has built within it an articulation of a personal present without a history. An important feature of a finite-state machine is that it fulfils the Markov property: its next state depends only on its current state and the current input, not on what came before. The Markov property is what makes the metaphor work, by which I mean, the idea of making lifeworlds tractable and computable requires the assumption that the exponentially growing histories of individual lives can be abstracted away to a workable, calculable representation. At the same time, the Markov property can be read as a technical representation of a neoliberalised welfare subject: where well-being is simply the matter of choosing the interventions which push the socially decontextualised numbers in the right direction. That is, their life-state is a set of personal quantifications to be optimised, a kind of checking balance of human capital, divorced from the social history of its production.
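The history-erasing effect of this assumption can be shown in a few lines. In the sketch below, which is illustrative only and uses hypothetical state and event names that do not come from the programme material, the next state is computed from the current state and the incoming event alone, so two people who arrive at the same state by different routes become indistinguishable to the machine.

```python
# Hypothetical transition table: (current state, event) -> next state.
TRANSITIONS = {
    ("employed", "layoff"): "unemployed",
    ("student", "graduation"): "unemployed",
    ("unemployed", "job_offer"): "employed",
}


def step(state, event):
    """Return the next state; unknown events leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(start, events):
    """Apply events in order, keeping only the current state."""
    state = start
    for event in events:
        state = step(state, event)
    return state


# Two different histories that end in the same state.
after_layoff = run("employed", ["layoff"])
after_graduation = run("student", ["graduation"])

# From here on the machine treats both identically.
same_future = step(after_layoff, "job_offer") == step(after_graduation, "job_offer")
```

Because `run` discards every intermediate state, the laid-off worker and the recent graduate are the same object once both are 'unemployed'; any difference in how they got there would have to be encoded as an additional attribute of the state itself.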

The human-in-the-centre is ultimately a goal-optimising machine, one which is enabled through the bureaucratic production of numbers. Genealogies of political numbers show that rendering calculable is a key technique in modern governance and statecraft, but, importantly, this calculability shifts with modes of power, from domination and discipline towards ‘autonomisation and responsibilization’ (Rose, 1999). Indeed, the concept of a calculating agent evaluating choices according to their personal priorities recalls the Benthamian classical liberal subject. This subject turns neoliberal, though, when it shifts from being the ontic justification for a liberal social order into an imposed rationality which conceives of the self as a project to work the numbers and which conceives of welfare as the outcome of augmentable individual choice. Importantly, the metaphor centres the computational puzzle of finding one’s path in the life-state machine, rather than the material conditions of taking up that journey. Thus, it prefigures a cybernetic solution to welfare: that the problem is at core informational, and thus computationally solvable with the tools of algorithmic optimisation and feedback.

The inadequate human

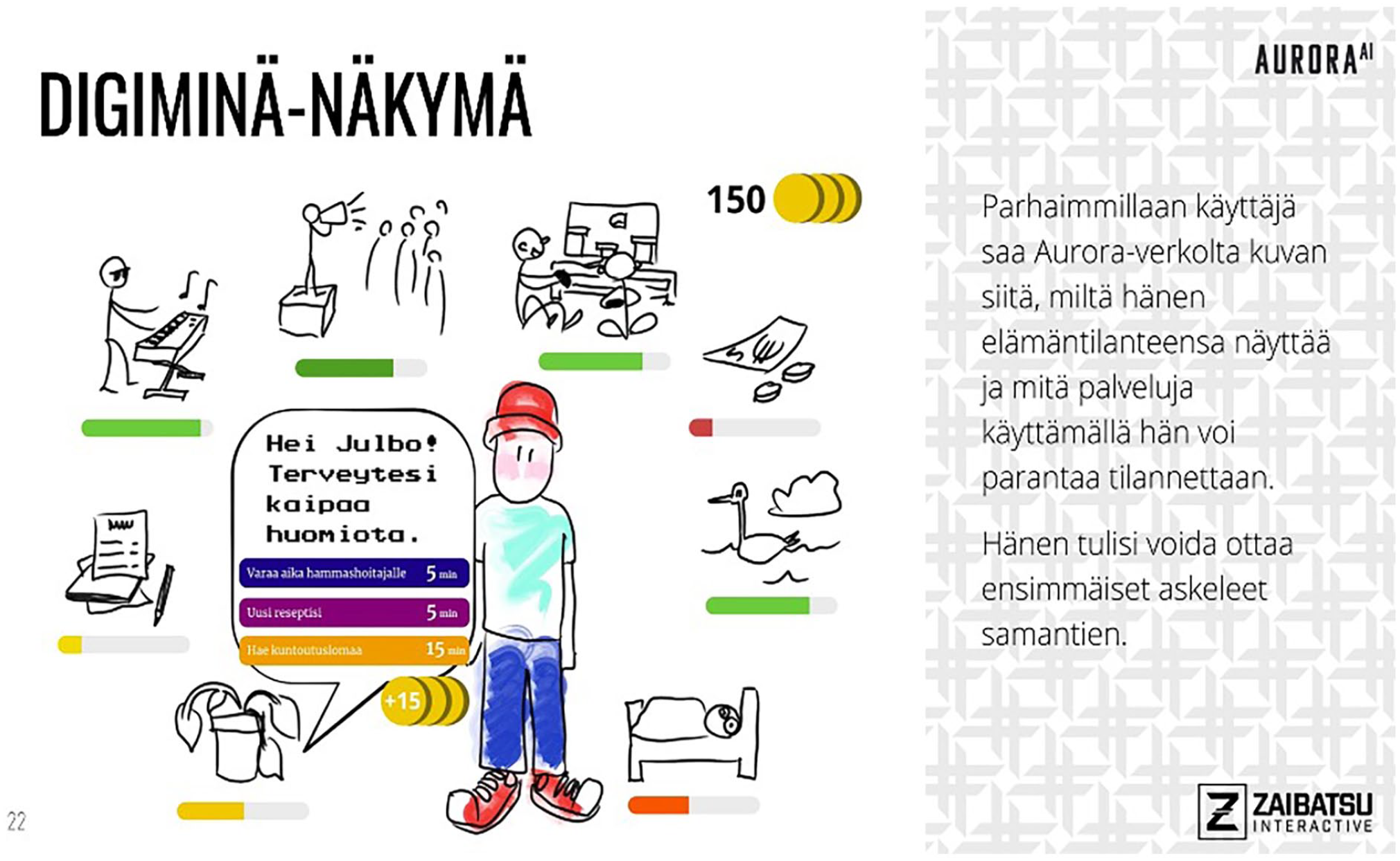

The ‘How Am I Doing’ service is the planned user interface of the digital welfare service market. It is described as a datafied visualisation of the human’s Stiglitz dimensions and a gamified interface for empowering welfare recommendations.

The user interface diagram in the design proposal for the ‘How Am I Doing?’ service of the AuroraAI Network depicts a cartoon user-avatar surrounded by eight digital gauges (i.e. the Stiglitz Model). The gauges are labelled with sketched icons depicting health, finances, sociality, civic engagement and so on. The gauges vary in their readings, from red and near-empty, through yellow and half full, to green and full. The gauge for health, yellow, manifests a speech bubble, speaking to the avatar: ‘Hello [name], your health is in need of attention!’. The speech bubble suggests three different interventions: book a dental appointment, renew your prescriptions, apply for rehabilitation leave. The options are tagged with an icon of a stack of coins and labelled ‘+15’, promising a virtual reward for taking action, to be added to the 150 coins already in the user’s wallet, as depicted in an icon in the corner of the interface.

This human-in-the-centre is an inadequately self-aware subject of governance, which can be augmented with quantified self-knowledge and directed through various forms of feedback. Three modes of feedback are implicated in the diagram. The first is quantifying one’s life situation into eight distinct metrics and displaying them as gauges to be filled. Alluding to the popular game franchise The Sims, in which the player upkeeps (or neglects) their characters’ quantified ‘needs’ gauges, the human-in-the-centre is tasked with managing the various meters of personal well-being. The second feedback is recommendation. The interface speaks directly to the human-in-the-centre, offering personalised and implicitly data-corroborated options by which to satisfy said gauges. Evoking Microsoft’s millennial digital assistant ‘Clippit’, the AuroraAI Network uses speech bubbles and a second-person voice to approach the user in an anthropomorphic way. The last feedback is reward: it is a coin of ambiguous value, a promissory payout for actions deemed responsible. The coins accumulate to track past good choices and incentivise future ones, and as currency, they evince a future benefit, a possibility to spend and gain something of value, like arcade tokens or virtual currencies in micro-transaction-oriented games.

The three forms of feedback (evaluation, recommendation and reward) amount to a paternalistic prosthesis: they seek to fix an incompetence or inadequacy in the human which is explicated by planners as both a paucity of will and a poverty of information. To describe this, The Visionary used the metaphor of a ‘control signal’ from the engineering field of process automation: a signal which synchronises parts in an automated process to work in a timely and appropriate manner, and whose malfunction is cause for inefficiency or breakage. For The Visionary, the lack of a personal control signal is the cause of poor well-being choices: ‘Take the simple case of brushing your teeth. If everyone did it in a timely manner, we wouldn’t need such expensive dental services. But if you haven’t learned that as a kid, if it’s not a part of your daily life, then the most important thing is to get that signal, that empowerment’.

The human-in-the-centre is lacking in a foundational capacity to take care of themselves, an incapacity which can be algorithmically compensated for. At the same time, the human-in-the-centre is also inadequately knowledgeable, lacking credible information which would give them the capacity to make good life choices. In the publication outlining the concept of a citizen’s digital twin, programme planners specifically tie this inadequacy to a deficit of knowledge: ‘For example, if a person wants to outline future paths towards their goals such as desired profession, they have to gather understanding from various sources, such as search engines and other online services, making the whole seem confusing, and they may not be able to make meaningful decisions for themselves. Decisions about the next place to study, for example, may be based purely on discussions in one’s own family, with a tutor and friends, and surfing on the Internet. Based on these, the decision to study a certain degree, for example, maybe on a very fragile basis’ (Kopponen et al., 2022b).

The inadequacy of the human and its amelioration work then in two ways. The first is epistemic: the informational deficit of the human is resolved with the moralistic edict to know thyself, specifically as Foucault conceives of it through ‘instituted models of self-knowledge’ (Foucault, 1997). It is a matter of reifying the (so-called) Stiglitz model into personal evaluation, adding to the proliferation of quasi-professional vocabularies mobilised by the ‘petty engineers of human conduct’ (Rose, 1999). The second is less epistemic and more cybernetic: it is the imposition of a control signal through gamification, by placing the care for oneself in the realm of the hedonic and playful, the proxy activity of gathering coins and filling gauges. This works not on self-knowledge but on the self as purely informational: creating automated feedback between data and action, turning towards the Deleuzian notion of control (Deleuze, 1992). The welfare of the human-in-the-centre is then a matter of paucity of will and lack of understanding, and their technical amelioration by quantified forms of self-knowledge, persuasive technologies and feedback loops. Importantly, this amelioration is specifically the empowerment of the human as a consumer. This is most clearly exemplified by the story of the user which one civil servant presented to me with the title ‘a princess day’.

‘[The Visionary] presented the project to us with this story: so, we have a 16-year-old girl, and the AI has detected early signs of depression. So the AuroraAI Network pre-emptively goes to action, offering the girl a discount coupon to be used for hairdressing services. This is so that they don’t fall into the purview of expensive public mental health services. In the words of [The Visionary], we really have to create demand for all kinds of businesses, from golf courses to movie theatres’.

The Visionary expanded on this: ‘Yeah we talk about hair salons, because you know there is no life situation where you don’t have both public and private services involved. Like when I go grocery shopping, I use public roads and the police keep me safe, but the store is of course a private company. This is what we call the public-private-people-partnership. [. . .] But it’s not only them, it’s also the local chess club, maybe in a small municipality where there aren’t a lot of services, it might just be the local chess club that keeps people from becoming marginalised. So, we need broadly all of society on board, so we can really make people aware of their life-situation and help them take care of themselves pre-emptively’.

For the human-in-the-centre, empowerment is the imposition of a market rationality, one which frames afflictions such as mental health issues as moments for responsible consumption. Furthermore, the ‘princess day’ elaborates on the paucity of will of the welfare subject I approached earlier: it is not simply a question of the inadequacy of self-knowledge, but specifically one’s inadequacy as a consumer in a marketplace of ample welfare opportunities. The human-in-the-centre is thus the moral obverse of the self-responsible subject. Nonetheless, he is strictly compatible with neoliberal interventionism: as markets can be constructed, so, too, can the consumer be reformed. For the human-in-the-centre, bureaucratic technologies can be mobilised for his empowerment as a choosing subject, just as the moralising orientation towards citizens-qua-consumers calls for (Giesler and Veresiu, 2014). This supports Rose’s (1999) argument that the language of empowerment in governance implies not the amelioration of social conditions which produce powerlessness, but rather the modulation of the relations individuals have with themselves. Indeed, the human-in-the-centre is in fact the ideal neoliberal subject despite their inadequacy exactly because their cybernetic nature makes them the perfectly plastic substrate of responsibilising consumer governance.

The self-entrepreneurial human

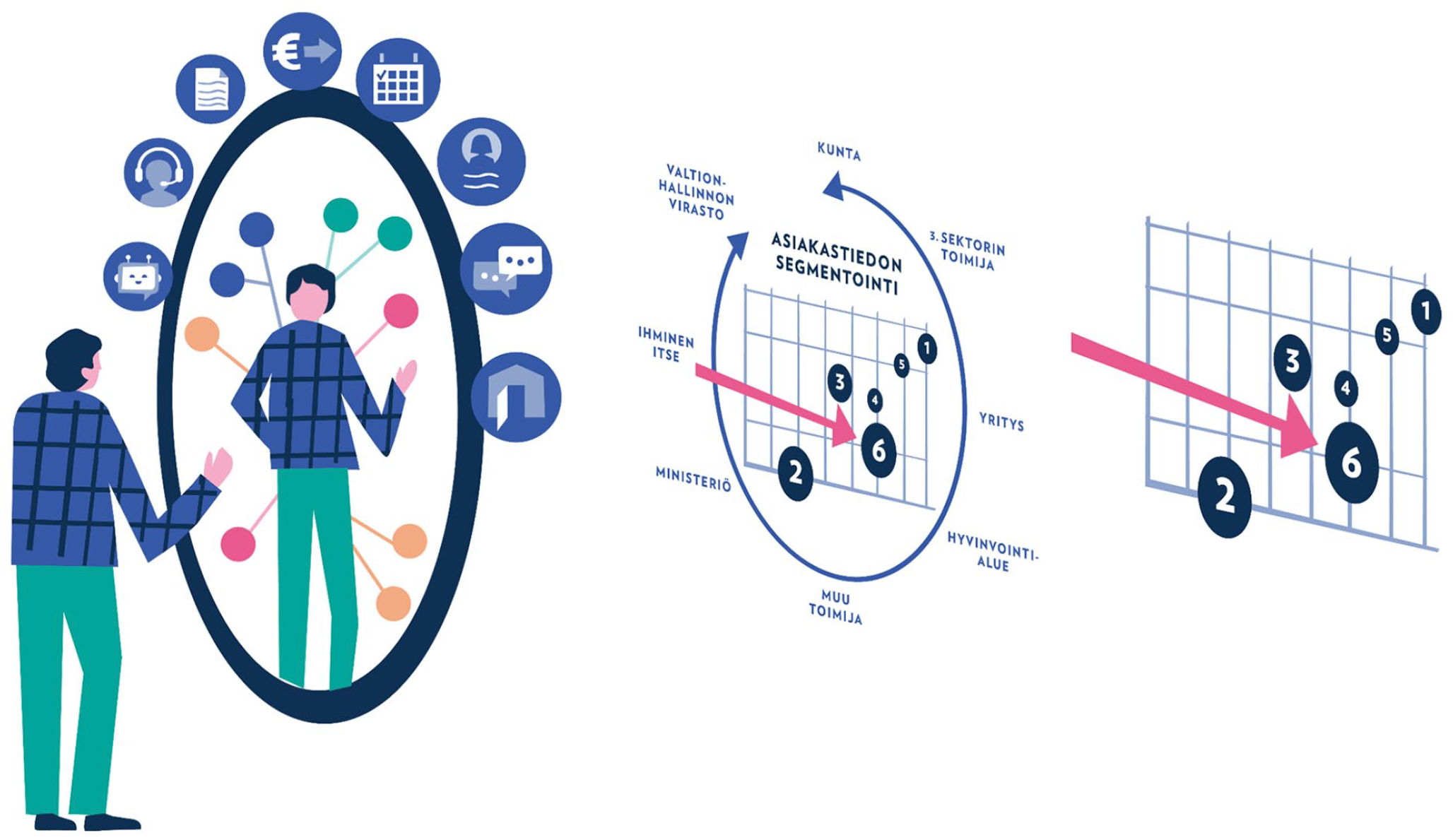

A central publication from the AuroraAI project is a document described as an operational model for a human-centric society using the AuroraAI Network. The document begins with an illustration which has been circulated in several public contexts, such as conference presentations and public seminars on the system. In the illustration, the citizen is depicted as an individual inspecting themselves in a mirror. This mirror is digitally augmented with metrics and recommendations, and beyond the glass, a statistical machinery is locating the individual in a cloud of datapoints and extrapolating clusters of the modelled population.

The human-in-the-centre of the AuroraAI programme gazes upon their algorithmic self, while the paternal algorithmic bureaucracy gazes back. In project meetings and documentation, ministerial planners refer to the AuroraAI Network as a ‘two-way mirror’ (depicted in Figure 3): the silvered surface invites algorithmically augmented self-reflection, while the transparent side allows the bureaucracy to surveil, learn and develop their services. Importantly, in both the visual and verbal metaphor, it is the citizen themselves who is peering into the mirror, looking at themselves being looked at. This user is actively interested in quantifying themselves, processing personal data and actualising that data for personal growth, embodying the neoliberal ethic of self-entrepreneurialism. In the stories of the AuroraAI user, this data-oriented self-entrepreneurialism is both a necessary result of rational analysis, and a competence to be cultivated in responsible citizens.

Figure 3. A diagram repeatedly appearing in different contexts in the programme, originally from the document “Ihmiskeskeinen toiminnan muutos” (Ministry of Finance, 2022), depicting a citizen looking at themselves in a data-augmented mirror.

The self-entrepreneurial rationality is closely tied to an ethic of self-quantification, which is personally familiar to many of the civil servants building AuroraAI. One municipal Ally compared the programme to their experience of fitness tracking: ‘There’s data produced about me all the time, like this Polar [a fitness tracker brand] is gathering data, and I could decide to give the data to the bureaucracy to describe my life-situation. And if they see that I should use some service more, like maybe go jogging, the AI can make a recommendation like, look, there’s this middle-aged men’s running club. It gathers on Tuesday, and they even have a sauna afterwards’.

This self-entrepreneurialism is marked by voluntarism: citizens freely enter into a relation with the bureaucracy in which their personal data is turned into actionable advice regarding their well-being. This voluntarism is justified by an evaluation of the mutual benefit that algorithmic recommendations provide. The Ally underlined this mutual benefit with the example of employment markets, in which entering into data relations with the government programme is seen as a common-sense rationality.

‘If we think in a visionary way, that somewhere out there there is the perfect job for me. And if I give my information into the job markets, and I get the perfect job in return, why wouldn’t I do that? Like confidentially of course, there must be that trust. But the more I give, the better recommendations I get’.

In the project documentation for the AuroraAI Network, self-quantification is translated from a personal interest into a virtuous identity by framing it as a facet of competent citizenship to be cultivated: ‘Users need to be able to use the solutions and understand their possibilities and limitations, and they will need trust and courage to try new things and look into the future. . . . Requirements relating to the development of citizen competencies will increase as the digital revolution progresses, but the change presented by the AuroraAI service model poses some additional requirements. Every citizen should have strong information literacy skills: the ability to source, interpret, understand, modify, produce, present, and use information and assess its usefulness and accuracy’ (Ministry of Finance, 2019).

The self-entrepreneurial human, specifically in terms of self-quantification, is thus framed as a normative shift which is imposed by technological progress. Importantly, it involves not only skills and competencies around data use but also an identity which is self-reflective, fearless and future-oriented. This future orientation is totalising: the scale of the user’s data-informed self-reflection is an intensification of established quantified-self practices and a distal extension of anticipatory horizons. While the logic of fitness watches predicts the physiological recovery time after a strenuous workout, a matter of hours or days, for the human-in-the-centre, the dataistic self-evaluation extends to vocational futures, for example.

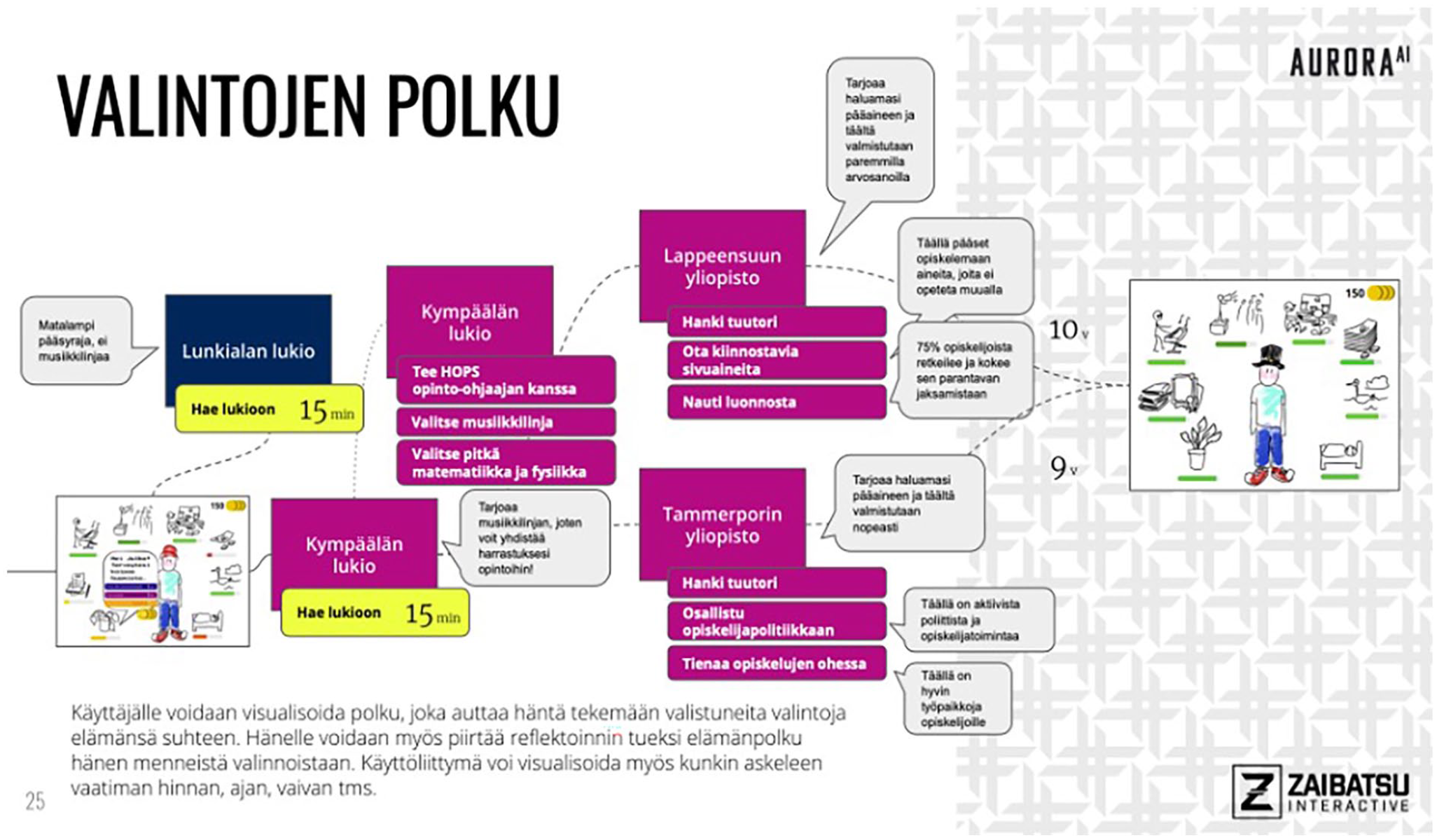

In the design proposal for the ‘How Am I Doing’ service (Ministry of Finance and Zaibatsu Interactive, 2021), a diagram entitled ‘a path of choices’ (see Figure 4) depicts a teenager at the end of her elementary school studies, reflecting on her choice of secondary education options. The diagram depicts an interface made of a branching tree of algorithmically anticipated choices. One branch, which first leads to a highly esteemed secondary school, continues growing towards a bachelor’s programme, further on towards a master’s, ending in a depiction of an avatar with all their welfare gauges in green. Each node is supplemented with more minor, data-empowered life choices, like that of a hobby (‘75% of students like you enjoy hiking’) or minor subject. Another branch of the tree starts off at a mediocre secondary school, and the branch ends there, cutting the path of life choices short.

Figure 4. A diagram from the design proposal for the ‘How Am I Doing’ service (Ministry of Finance and Zaibatsu Interactive, 2021), depicting a tree of pre-empted life choices.

Algorithmic evaluation and recommendation extend beyond the temporally proximal view into fitness and recovery, towards the large-scale biography of the human’s lived experience. While it has been argued that technologies of self-quantification shift the burden of welfare onto the neoliberal subject (e.g. Lupton, 2013), in the case of the human-in-the-centre, this does not imply a withdrawal of the state bureaucracy. Rather, the bureaucratically enabled data reflection produces a new ‘micro-locale of government’ (Rose, 1999), in which the subject of quantification becomes a locus for a kind of ‘info-liberal’ governance (Catlaw and Sandberg, 2018). The human-in-the-centre, thus, has not only a specific orientation towards himself, as a reflexive project of development, but towards the algorithmic administration, as an advisor and co-confidant invited into an intimate sphere of personal autonomy.

Discussion: the citizen turns human

The story of the human told through user representations in the Finnish National Artificial Intelligence Programme is a sequel to the sovereign consumer which replaced the welfare citizen in the 1990s, and it invokes what might be called a late-neoliberal welfare regime (McGimpsey, 2017). Contrary to common conceptions, neoliberalism was never a march towards a minimal state but rather made its distinction from classical liberalism exactly by recognising the role of the state in constructing and defending market assemblages, not least by compelling market rationalities onto non-market domains (Davies, 2018). For the welfare state, this meant not a dismantling, but a reformation towards a more market-shaped one. In part, the shift from republican citizen to sovereign citizen-consumer is just this: the cultivation of a market agency. However, as McGimpsey (2017) argues, a late-neoliberal regime may involve ‘an alteration in such subjectivating force within the public service assemblage’. That is, while the cultural construction of the inadequate welfare subject has always been a part of the welfare-state project, the techniques by which inadequate subject positions are produced demarcate shifts in welfare regimes. The metaphoric shift from the consumer to the human introduces a novel vision of paternal knowledge-making and dividuation (Deleuze, 1992) for the welfare subject and a new orientation of the role of the public administration in the lives of these citizens. The inadequate human who is computationally modellable and informationally augmentable makes space for a fantasy of direct and automatic control with tools of governance more intimate and personal than before.

Returning to the liberal contradiction in the welfare state, the push and pull between free markets and welfare interventions was to be resolved by quasi-markets, but not before the symbolic resolution of the welfare subject as primarily a sovereign consumer. The human-centric government is not so much a departure from this, as it is its intensification. The AuroraAI Network closely follows the P.A.C.T blueprint identified by Giesler and Veresiu (2014) as the governmental routine for the formation of the responsible consumer. The split between the ideal and non-ideal subject (Personalisation), the imposition of expert knowledge (Authorisation), the construction of empowering market mechanisms (Capabilisation) and the fostering of the self-entrepreneurial rationality (Transformation) situate the human-in-the-centre strictly within consumerist governance regimes. The shift, then, from citizen-consumer to human-in-the-centre is not so much in the waning of their consumer identity but rather the totalisation of the consumerist forms of governance: the extension of the moralistic administrative techniques to the whole human.

Even though the human-in-the-centre stems from a specific imagination of governance in the time of datafication, I am wary of diagnosing a new phase of citizenship in the welfare state. That is because we should not confuse the fantasies of the civil-servant-cum-engineer with an actual policy regime. A generous interpretation of the Finnish National Artificial Intelligence Programme is that, at best, it is the inconsequential exhaust of a technopopulist hubris, the hubris of an economically liberal, technopopulist coalition government in a specific age of gilded technological idealism. Nonetheless, it is an exhaust which has enrolled not insignificant financial capital behind it, and if not political support, at least no political resistance. Instead of a coherent government strategy, what this analysis points to is the widening space for a specific kind of technopolitical imagination in the technocratic bureaucracy. I argue that the human-in-the-centre is best understood as a techno-fiction, a chimerical and fantastic object manifest out of pervasive technocultural tropes, arising from a privileged position of technical expertise.

The human-in-the-centre is chimerical in that they are not new, but a new arrangement; a composition of neoliberal fantasies, dataistic vagaries and ethopolitical fetishes. The torso is what David Harvey has identified as a technological fantasy of the neoliberal imagination: the cyborg consumer (Harvey, 2003). Referring by technology to the spectacle of the global media and advertising assemblage, the cyborg consumer’s desires are technologically provoked, much like the human-in-the-centre’s consumption choices are. The human legs are the quantified self: an identity constructed through dataistic forms of knowledge-making and regimes of anticipation, control and self-responsibility (Cheney-Lippold, 2011; Mackenzie, 2013; Schüll, 2019). The head of the chimera is the ethopolitical subject of advanced liberal governmentality: the subject of the various technics by which the paternal state conducts the lives of its citizens (Rose, 1999; Rouvroy et al., 2013). Composed together, these tendrils form the idealised cyborg-citizen-consumer: a symbolic figure who resolves the liberal contradiction of the welfare state and implies its neoliberal resolution: datafied welfare markets with augmented consumers.

The human-in-the-centre is imaginal in the sense that Bottici and Challand (2011) propose, in that it is composed of images which are the products of the individual faculties of their creators and their social contexts. They manifest from a technological and social imagination of specific technically minded individuals in agenda-setting positions within the bureaucracy, who see the world through technical metaphor. At the same time, it draws from a larger collective imaginary of technology, welfare and society as well as a cultural repertoire of images, pervasive signs and their co-opted significations. In the human, we can easily recognise the ubiquitous cultural forms of personalised consumer recommendation, quantified fitness culture, gamification, and the curious human ontology of The Sims franchise. Most of all, the imaginal descends from above through institutionally produced representations, divorced from the civic demands of actual citizens. That is, the representations manifest from a demotic populism (Clarke et al., 2007), where citizens are spoken on behalf of, but not given a voice, as government datafication is wont to do (Broomfield and Reutter, 2022).

This cyborg citizen consumer is the product of creative storytelling in the bureaucracy, told specifically in the voice of the technocratic engineer and his allies. In identifying the voice of the engineer, it becomes natural that the metaphor for lived life in the programme is that of a state machine, a foundational abstraction in the field of software engineering. The self-entrepreneurial subject is easily a drawn-out self-reflection of the fitness-tracker-wearing engineer-civil-servant himself. Likewise, the inadequate human is the deficient negation of the engineer himself, the one who could be virtuous were they not lacking in the ways the engineer is not. If we turn our analytic eye to the work of fiction and fabulation in techno-science, as several semiotically minded scholars in STS have proposed (e.g. Latour, 1996), we can conceive of government digitalisation as a petri dish for certain kinds of storytelling to bloom. I contend that it is precisely the social esteem of the engineer which affords the Department of Government ICT the space to tell such stories which expand beyond the digital to impose on the citizen, the human and the organisation of the institutions of the welfare society.

Conclusion

In this article, I have approached the metaphor of the human-in-the-centre through a close reading of user representations produced and circulated in the work of building a technological human-centric welfare reform. The analysis reveals the human-in-the-centre to be a chimerical fantasy: a machinic subject who can be technically modelled, augmented and made self-responsible. Above all, this human-in-the-centre is a technologically extended consumer, around which new kinds of personalised service markets can be constructed. The narrative manifestation of this new citizen subject imposes on the rights and responsibilities associated with republican citizenship and implicates a late-neoliberal regime in the digitalising welfare state.

Although the politics of the human-in-the-centre are imminent and transformational, I argue that it cannot be fruitfully approached as a coherent policy regime. Rather, the human-in-the-centre should be seen as a kind of techno-fiction, one which is indicative of the work of engineers as narrators of social progress. The human-in-the-centre is composed of opportunistic metaphors drawn from engineering theory as well as co-opted, pervasive cultural tropes such as recommendation, gamification and self-quantification. It comes together as a fantastic narrative, divorced from citizen demands or technological realities, but one which marries an engineering fantasy of cybernetic control with the neoliberal fetish of provoked markets as a societal panacea.

As the digital is encroaching upon the world in new and creative ways, the narrative spaces for those who make claims to speak on behalf of technology are ever widening. Thus, the narratives of social change and technological development become further entwined, and the voices which speak for the digital get amplified as voices which speak on behalf of progress. At this juncture, it is then more important than ever to dissect the fabulations of the technologist, those which move resources, enrol allies and build projects, and in doing so contribute to moving the horizons of the imaginable. Approaching technologists as storytellers, I contend, opens a thick understanding of a significant force imposing upon contemporary culture and its institutions, such as citizenship.

Footnotes

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.