Abstract

Objectives:

Previous studies have shown prediction effects in language processing within a language. Yet only a few studies have specifically investigated prediction effects across languages. In this study, we examined whether prediction influences subsequent within- and cross-language production.

Methodology:

After hearing a classifier (e.g., “a cup of”), participants named a picture whose colored frame indicated which language to use. The picture names could be semantically congruent or incongruent with the classifier (e.g., tea vs brick). For the Chinese-English bilingual participants, classifier language and response language could be the same or different within each trial. Whereas both classifier language and response language varied within blocks in Experiment 1 (n = 40), classifier language was kept constant within blocks (but was manipulated across blocks) in Experiment 2 (n = 40).

Data and analysis:

We analyzed reaction times and accuracy using linear mixed-effects models.

Findings:

In both experiments, better performance was observed in semantically congruent conditions (“a cup of tea”) than in semantically incongruent conditions (“a cup of bricks”), showing a semantic classifier congruency effect. Yet this effect was found only when classifier and response language were the same; it was absent or even reversed when they were different. Our results showed that prediction facilitated subsequent within-language production but not cross-language production.

Originality:

This is the first study to test the influence of prediction on within-language and cross-language production.

Significance:

Our study adds to the evidence on prediction effects in language processing. In addition, it addresses a gap in understanding the role of prediction in language production. Our results indicate that prediction affects within-language and cross-language production in different ways, facilitating within-language production while hindering cross-language production.

Keywords

Introduction

To navigate a world saturated with information, the brain is thought to act as a predictive machine that uses inferences for information processing (Hohwy, 2013). This predictive function can also be seen in the domain of language processing (Ryskin & Nieuwland, 2023), in which predictions refer primarily to the further course of a linguistic utterance, sentence, or interaction. In contrast to previous studies, which focused on how the predictability of upcoming input influences language processing within a language (e.g., Grisoni et al., 2021; Pulvermüller & Grisoni, 2020), the current study investigated how prediction influences subsequent within-language and cross-language production.

Prediction in language processing

Previous studies have shown that prediction driven by a highly constraining context affects language comprehension (Grisoni et al., 2021; Ito et al., 2016; Kim et al., 2023; Lelonkiewicz et al., 2021; Leon-Cabrera et al., 2019; Martin et al., 2018; Ness & Meltzer-Asscher, 2018). A study by Grisoni et al. (2024) went beyond language comprehension and investigated whether prediction also affects language production. In their study, participants were asked to produce a word to complete a sentence in a highly or less constraining context, in which a specific word either could or could not be predicted. Results showed anticipatory brain activity (defined as the mean ERP amplitude during the last 200 ms before participants’ speech onset) and response facilitation in a highly constraining context, indicating an influence of prediction on subsequent language production in a monolingual (i.e., within-language) situation. To our knowledge, this is so far the only study focusing on the role of semantic prediction in language production, highlighting the need for more empirical evidence in this area.

Importantly, the role of prediction in language processing has been explored not only for participants’ first, typically native language (L1), but also for their second language (L2). In the domain of L2 processing, however, research has yielded mixed findings on the effect of prediction. While some studies did not find evidence of a prediction effect in L2 processing (Ito et al., 2017; Martin et al., 2013), others observed prediction effects in L2 as well (Chun & Kaan, 2019; Dijkgraaf et al., 2017; Foucart et al., 2014). For instance, Dijkgraaf et al. (2017) found predictive eye movements in both Dutch-English bilinguals and English monolinguals when listening to a highly constraining English sentence. Yet, the study also showed delayed predictive eye movements when comparing bilinguals in their L2 English with English monolinguals. This difference in timing might explain why other studies, which did not consider the possibility of delayed prediction effects, did not observe prediction effects in L2.

Taken together, previous studies clearly indicate prediction effects in L1 and also hint toward prediction effects in L2, although these effects might be smaller or occur later in time. However, it is also important to mention that most previous studies focused on the effect of prediction on language comprehension, while less research has explored its impact on language production (for an exception, see Grisoni et al., 2024).

Prediction by production

Although there is little specific research on the effect of prediction on production, prediction and production are assumed to be closely connected. That is, with respect to the question of how prediction occurs, the notion of prediction by production has been proposed (Dell & Chang, 2014; Federmeier, 2007; Kuperberg & Jaeger, 2016; Lelonkiewicz et al., 2021; Martin et al., 2018; for reviews, see Pickering & Gambi, 2018; Pickering & Garrod, 2007, 2013). More specifically, when processing speech input, we predict the upcoming input by using our own production system. As a result, word representations including the meaning-, grammar- and sound-related features are activated, either sequentially (Pickering & Gambi, 2018) or in parallel (Pickering & Strijkers, 2024).

To add more detail about prediction processing, previous research (DeLong et al., 2005; Wlotko & Federmeier, 2012) proposed that we, while comprehending linguistic input, generate predictions by weighing the likelihood of various possible outcomes. These probabilities can be flexibly adjusted by bottom-up input either validating or disconfirming the predictions during language processing. In a similar vein, Staub et al. (2015) proposed an activation-based race model about the predictive processes in language production. Specifically, they suggested that multiple concurrent potential responses are activated in parallel even in highly constraining contexts. These responses then independently “race” against each other to reach a response threshold. The first one to succeed determines the final output (i.e., language production). These studies imply that a collection of possibilities can be generated by prediction, which is also supported by research showing that prediction generally activates broad semantic features associated with multiple words, rather than just one specific word (Wang et al., 2020).

Prediction via classifier processing: semantic classifier congruence versus incongruence

In our study, we primarily focused on semantic prediction, that is, the prediction of the meaning of a word that has to be subsequently produced. To do so, we used the semantic classifier congruence effect as an empirical marker of prediction. Classifiers here refer to the form of existence of specific object(s) (e.g., a bowl of noodles). In language usage, a given classifier can only be associated with a limited range of nouns (Lehrer, 1986). The awareness of non-arbitrary combinations of classifiers and nouns stems from the statistical regularities learned during language acquisition. This assumption is supported by Romberg and Saffran (2010), who found that language learners are sensitive to the transitional probabilities between adjacent elements in language. In addition, it has been shown that children start to make predictions once they gain adequate language proficiency (Rabagliati et al., 2016). Building on this evidence, we assume that prediction processes can be activated by a classifier that establishes a highly constraining context.

In the current study, semantic classifier congruence and incongruence were defined by whether the noun is likely to be predicted based on a classifier (e.g., congruent: “a bowl of noodles” vs incongruent: “a bowl of students”). To make sure predictions generated by a classifier are comparable in L1 (e.g., Chinese) and L2 (e.g., English), the current study only considered those Chinese classifiers that have corresponding English translation equivalents. Although we acknowledge that, unlike Chinese, English is regarded as a non-classifier language, classifiers in English serve the same function of conveying semantic features as they do in Chinese (e.g., measure classifiers like “a box of,” arrangement classifiers like “a row of,” and collective classifiers like “a nest of”; Allan, 1977, 1978). Previous studies showed faster responses in a classifier-congruent condition than in a classifier-incongruent condition when participants performed a phrase-production task combined with a picture-word interference paradigm (i.e., classifier + noun; Huang & Schiller, 2021; Wang et al., 2019; Zhang & Liu, 2009). Furthermore, previous studies also suggested that processing a classifier activates semantically congruent conceptual/lexical representations (Huettig et al., 2010; Mitsugi, 2020). For example, in a study by Huettig et al. (2010) using a visual world paradigm, participants already directed their gaze more to a semantically congruent picture than to the semantically incongruent picture after hearing a classifier but before hearing the noun. Interestingly, this result was found in Japanese speakers and L2 Japanese learners.

In our previous study (Tong et al., 2025), we examined whether predictions can influence subsequent language production in monolingual and bilingual situations. A classifier was presented first, followed by a picture that had to be named by participants. In Experiment 1, a classifier appeared visually and English native speakers were asked to name the picture as quickly and accurately as possible. In two other experiments, the classifier was presented visually (Experiment 2) or auditorily (Experiment 3) to Chinese-English bilinguals. The language was manipulated between trials, but the response language was always the same as the classifier language (i.e., only within-language conditions). The hypothesis of this study was that if prediction via classifier processing influenced language production, then a semantic classifier congruency effect should be observed, evident in faster responses in the semantically congruent trials than in the semantically incongruent trials. The results supported this expectation by showing a semantic classifier congruency effect in English native speakers and in Chinese-English bilinguals. In addition, this effect differed in size depending on the language, with a larger effect for L1 Chinese than for L2 English. These findings demonstrated the influence of prediction driven by processing a classifier on language production and also language-specific influences during prediction. Yet the generality of these findings is limited: until now, the impact of prediction has only been explored in within-language production. A further step should be taken to investigate whether prediction also influences cross-language production.

Current study

The aim of the present study was to investigate whether prediction via processing an external input influences within-language and cross-language production. Classifiers were used as external input creating a highly constraining context. In our study, a classifier was presented to the participants first, followed by a picture that they were asked to name. By manipulating semantic classifier congruence between the classifier and the picture, the naming response could either be in line with or contradict a prediction. Importantly, classifier language and response language could be the same or different, introducing within-language and cross-language production after a prediction. Whereas both classifier and response language were manipulated within a block in Experiment 1, the classifier language was manipulated block-wise in Experiment 2.

By manipulating the response language within a block in both experiments, we included both language repetitions and language switches in consecutive naming responses. Thus, the language relation was not only variable between the classifier and response language but also between two consecutive naming responses (i.e., language switching with respect to the actual language-production task of the participants). In previous studies of language switching in language-production tasks, a language switch cost was typically observed (Christoffels et al., 2007; Declerck et al., 2015; Declerck & Koch, 2023; Kroll et al., 2008; Liu et al., 2016; Meuter & Allport, 1999; Philipp & Koch, 2009). That is, it was more effortful to switch from one language to another than to repeat the language. Since language switching was possible in the sequence of naming responses in this study, we analyzed language switching in the sequence of naming responses as well. Yet, we focused on the language relation between the classifier and the response, as this is more closely related to the actual research question (i.e., the occurrence of a semantic classifier congruency effect in within-language vs cross-language conditions).

In both experiments, we expected to observe a semantic classifier congruency effect as a marker of prediction. That is, participants were expected to respond faster in the semantically congruent condition than in the semantically incongruent condition. This result should occur when classifier and response language were the same, which would be a replication of our previous study (Tong et al., 2025). If prediction and, thus, an activation of semantically related nouns also occurs across languages, a semantic classifier congruency effect should also be found when the classifier language is different from the response language (i.e., L1-L2 language direction or L2-L1 language direction). In addition, we assumed that this effect might be weaker when the prediction is generated by an L2 classifier.

Experiment 1

The main aim of Experiment 1 was to investigate the semantic classifier congruency effect across languages. In Experiment 1, both the classifier language (L1 Chinese vs L2 English) and the response language (L1 Chinese vs L2 English) were manipulated within a block to create within-language conditions (L1-L1 and L2-L2) and cross-language conditions (L1-L2 and L2-L1).

Method

Participants

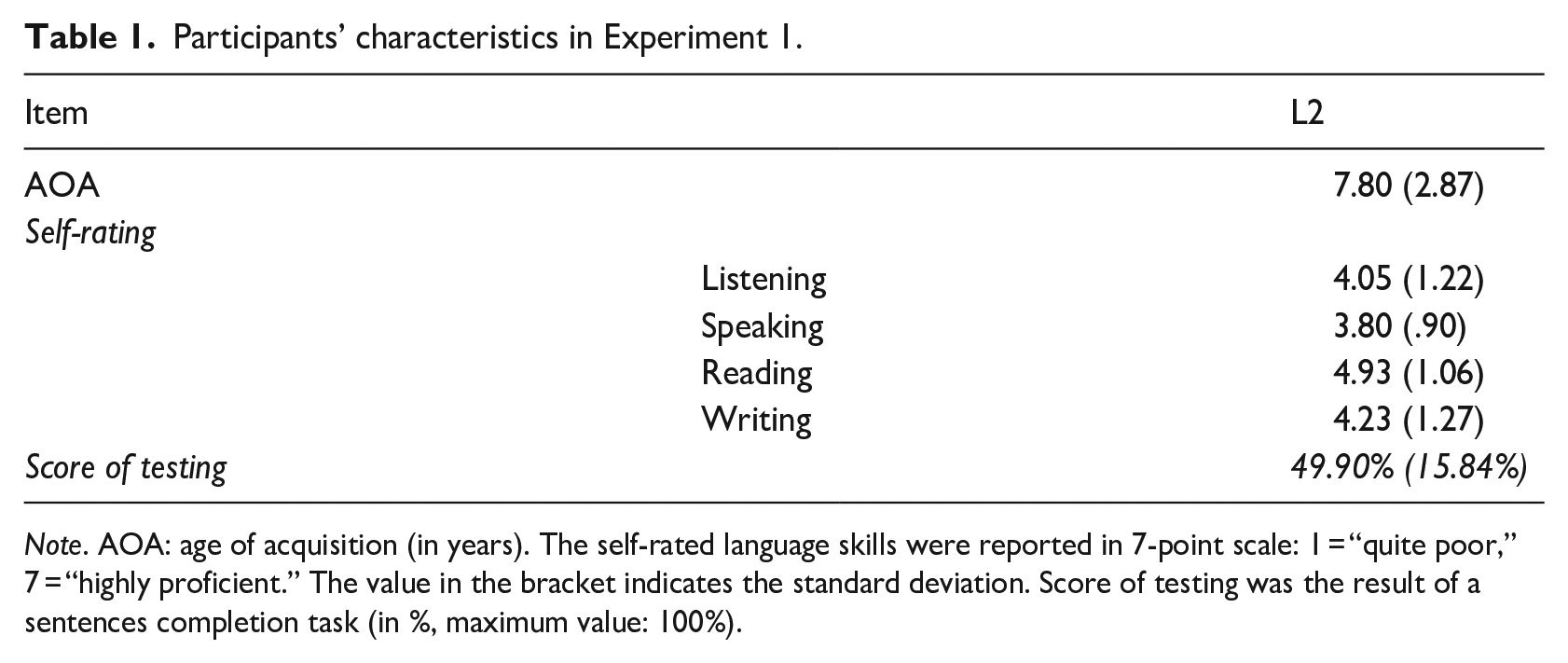

Forty participants studying at RWTH Aachen University took part (21 females, 18 males, 1 person did not report gender; 36 right-handed, 4 left-handed; mean age: M = 24.43 years, SD = 3.29). Chinese was their native language (L1) and English was their second language (L2). Before the experiment, participants filled in a questionnaire about their age of L2 acquisition and their self-rated language skills in English (7-point scale: 1 = quite poor, 7 = highly proficient, see Table 1) and completed an online sentence completion task for English as a language proficiency test (http://www.itt-leipzig.de/static/wstenglisch_01p/index.html). According to these results, the participants could be considered unbalanced bilinguals.

Participants’ characteristics in Experiment 1.

Note. AOA: age of acquisition (in years). The self-rated language skills were reported on a 7-point scale: 1 = “quite poor,” 7 = “highly proficient.” The value in the bracket indicates the standard deviation. Score of testing was the result of a sentence completion task (in %, maximum value: 100%).

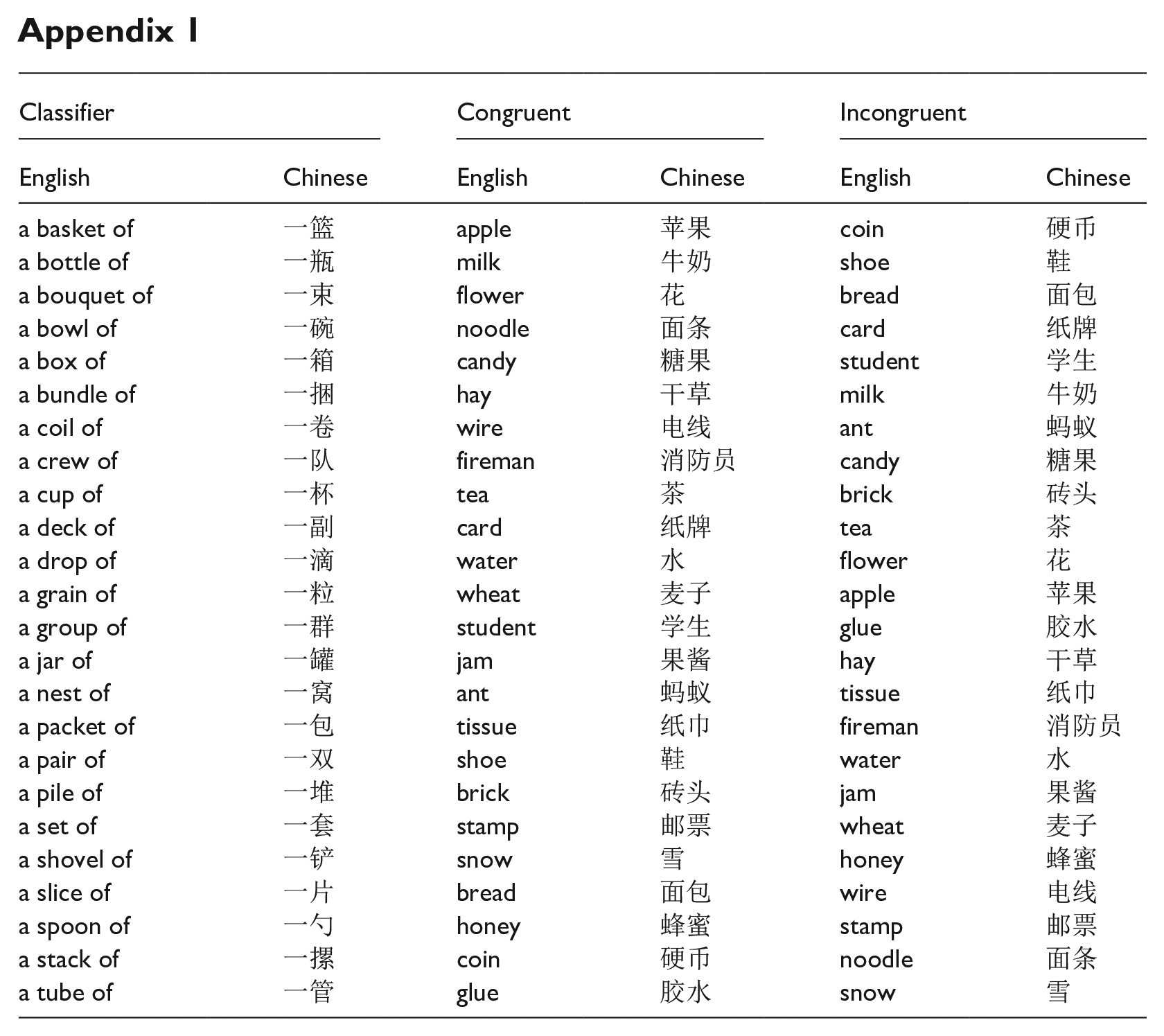

Materials and apparatus

In total, 24 classifiers and 24 color pictures were adopted from our previous study (Tong et al., 2025; see Appendix 1). All classifiers were once combined with a congruent picture/noun and once with an incongruent picture/noun (e.g., a deck of cards vs a deck of tea). Correspondingly, each picture was once used in a congruent and once in an incongruent classifier-picture/noun pair, leading to 48 different classifier-picture/noun pairs altogether.

The experiment was programmed and run using PsychoPy2 (Peirce et al., 2019), version 2021.2.3. Speech onset of vocal responses was recorded with a voice key using a microphone. Speech errors were coded offline by an experimenter during the experiment.

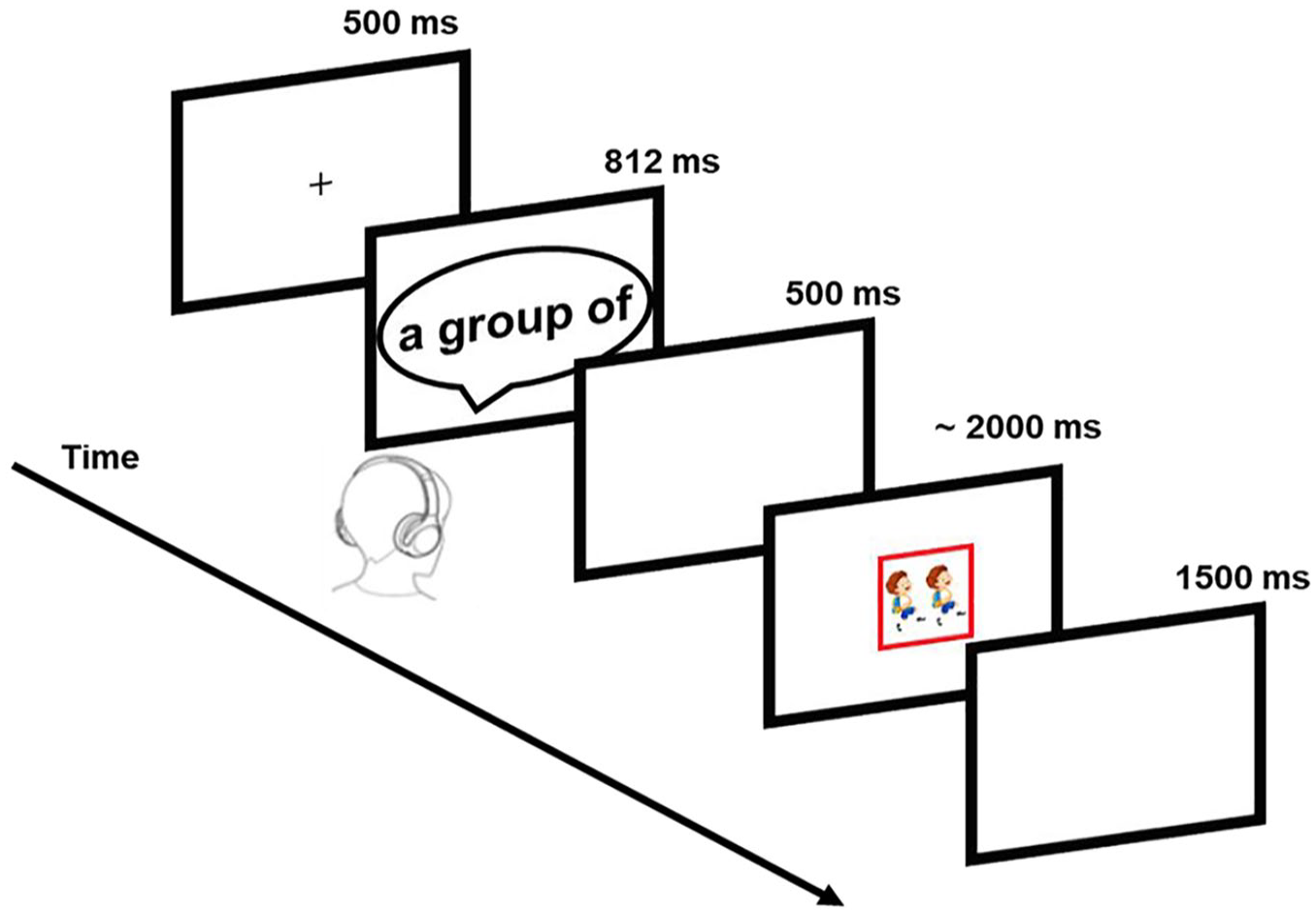

Procedure

In the picture-naming task, participants were asked to name the picture as fast and as accurately as possible (see Figure 1). Each trial started with a fixation cross for 500 ms. After this time, the classifier was presented auditorily, followed by a 500 ms blank screen. Finally, a color-framed picture was presented. The picture disappeared once participants named it. Participants were asked to use either Chinese or English for naming the picture according to the color of the frame (red vs blue, see Figure 1). Each color represented one of the languages (i.e., L1 Chinese vs L2 English). The color-language mapping was counterbalanced across participants. If a participant did not respond within 2,000 ms, the picture disappeared automatically. After the picture disappeared (i.e., after a response or when no response was given within 2,000 ms), the screen turned blank for 1,500 ms before the next trial started.

Experimental procedure of Experiments 1 and 2.

There were 16 blocks. Each block contained 48 trials and 2 warm-up trials. Each classifier-picture pair appeared once in each block. In total, there were 16 conditions resulting from the combinations of 4 factors: semantic classifier congruence (noun semantically congruent vs incongruent to the classifier), response language (L1 vs L2), classifier-response languages relation (same vs different), and consecutive response-language relation (repeat vs switch). The sequence of trials was pseudo-random to make the number of trials for each condition equal.
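The factorial structure described above can be sketched in a few lines of Python. This is only an illustration, not the authors’ experiment script: the factor labels, the helper names (`build_conditions`, `classifier_language`), and the assumption of perfectly balanced cells without warm-up trials are ours.

```python
from itertools import product

# Factor names and levels as described in the text (labels are our own).
FACTORS = {
    "congruence": ("congruent", "incongruent"),
    "response_language": ("L1", "L2"),
    "classifier_response_relation": ("same", "different"),
    "consecutive_response_relation": ("repeat", "switch"),
}


def build_conditions(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


def classifier_language(condition):
    """Derive the classifier language implied by a condition."""
    response = condition["response_language"]
    if condition["classifier_response_relation"] == "same":
        return response
    return "L2" if response == "L1" else "L1"


conditions = build_conditions(FACTORS)

# Assumption: warm-up trials are left out and all cells are perfectly balanced.
N_BLOCKS, TRIALS_PER_BLOCK = 16, 48
trials_per_condition = N_BLOCKS * TRIALS_PER_BLOCK // len(conditions)
```

Under these assumptions, the 768 experimental trials divide evenly over the 16 cells, and the classifier language of any trial follows from the response language and the classifier-response languages relation.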

Design

We used a 2 × 2 × 2 × 2 within-subjects design with the independent variables semantic classifier congruence (noun semantically congruent vs incongruent to the classifier), response language (L1 vs L2), classifier-response languages relation (same vs different), and consecutive response-language relation (repeat vs switch). The dependent variables were reaction time (RT) and accuracy.

Data analysis

The data, analysis script, and file with explanation are available at https://osf.io/6ykvf/. The function buildmer (buildmer package; version 2.8; Voeten, 2019) within R (R Core Team, 2013/2017) was used to analyze the data and to construct the best-fitting linear mixed-effects model. Model selection in this function started with the maximal model and then performed a backward stepwise elimination until the best-fitting model was obtained. After that, the lme4 package (version 1.1–32; Bates et al., 2014) was used to analyze the data.

The model with the maximal structure included semantic classifier congruence, response language, classifier-response languages relation, and consecutive response-language relation as fixed effects, as well as participants and items as random effects. Semantic classifier congruence, response language, classifier-response languages relation, and consecutive response-language relation were included as by-subject and by-item random slopes. All fixed effects were two-level categorical predictors and coded as −0.5 and 0.5. For RT analysis, RTs were log-transformed and used as dependent variable. For accuracy analysis, response type (correct trials coded as 1; error trials coded as 0) was the dependent variable.
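As a minimal sketch of the predictor coding and the dependent variables described above, the following Python function encodes a single trial. It is not the authors’ R script. The text does not state which level of each factor received −0.5 and which 0.5, so the mapping in `CONTRAST_CODES` is an assumption, as are the field names.

```python
import math

# Assumed level-to-code mapping; the text only states that the two levels of
# each factor were coded as -0.5 and 0.5, not which level received which code.
CONTRAST_CODES = {
    "congruence": {"congruent": -0.5, "incongruent": 0.5},
    "response_language": {"L1": -0.5, "L2": 0.5},
    "classifier_response_relation": {"same": -0.5, "different": 0.5},
    "consecutive_response_relation": {"repeat": -0.5, "switch": 0.5},
}


def encode_trial(trial):
    """Return contrast-coded predictors plus both dependent variables."""
    coded = {name: codes[trial[name]] for name, codes in CONTRAST_CODES.items()}
    coded["log_rt"] = math.log(trial["rt_ms"])    # natural log of RT (RT model)
    coded["correct"] = 1 if trial["correct"] else 0  # accuracy model
    return coded
```

With this kind of centered coding, each main-effect coefficient can be read as the difference between the two levels of a factor, averaged over the levels of the other factors, which is why it is a common choice for factorial designs.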

Results

The first two trials of each block (i.e., the two additional warm-up trials) and all trials with an RT outside ±3z of the mean of all trials of the respective participant were excluded. For the RT analysis, error trials (wrong word, wrong language, or no response), trials following an error response, and “empty” trials caused by voice key errors were additionally excluded. In the end, 87.92% of the data were included in the RT analysis. For the accuracy analysis, we included correct trials and wrong response trials, but not trials following an error response and “empty” trials caused by voice key errors. In total, 91.01% of trials were included in the accuracy analysis.
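The per-participant RT criterion can be illustrated with a short Python sketch. It is not taken from the authors’ analysis script: the field names (`participant`, `rt_ms`) are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not specify how z was computed.

```python
from collections import defaultdict
from statistics import mean, stdev


def exclude_rt_outliers(trials, cutoff=3.0):
    """Keep trials whose RT lies within +/- cutoff z of the participant's mean."""
    by_participant = defaultdict(list)
    for trial in trials:
        by_participant[trial["participant"]].append(trial)

    kept = []
    for participant_trials in by_participant.values():
        rts = [t["rt_ms"] for t in participant_trials]
        # A z-score is undefined with fewer than two trials or zero variance.
        if len(rts) < 2 or stdev(rts) == 0:
            kept.extend(participant_trials)
            continue
        m, sd = mean(rts), stdev(rts)  # assumption: sample SD
        kept.extend(t for t in participant_trials
                    if abs(t["rt_ms"] - m) / sd <= cutoff)
    return kept
```

Computing the mean and standard deviation separately for each participant means that generally slow or fast responders are not penalized relative to the group.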

Reaction time

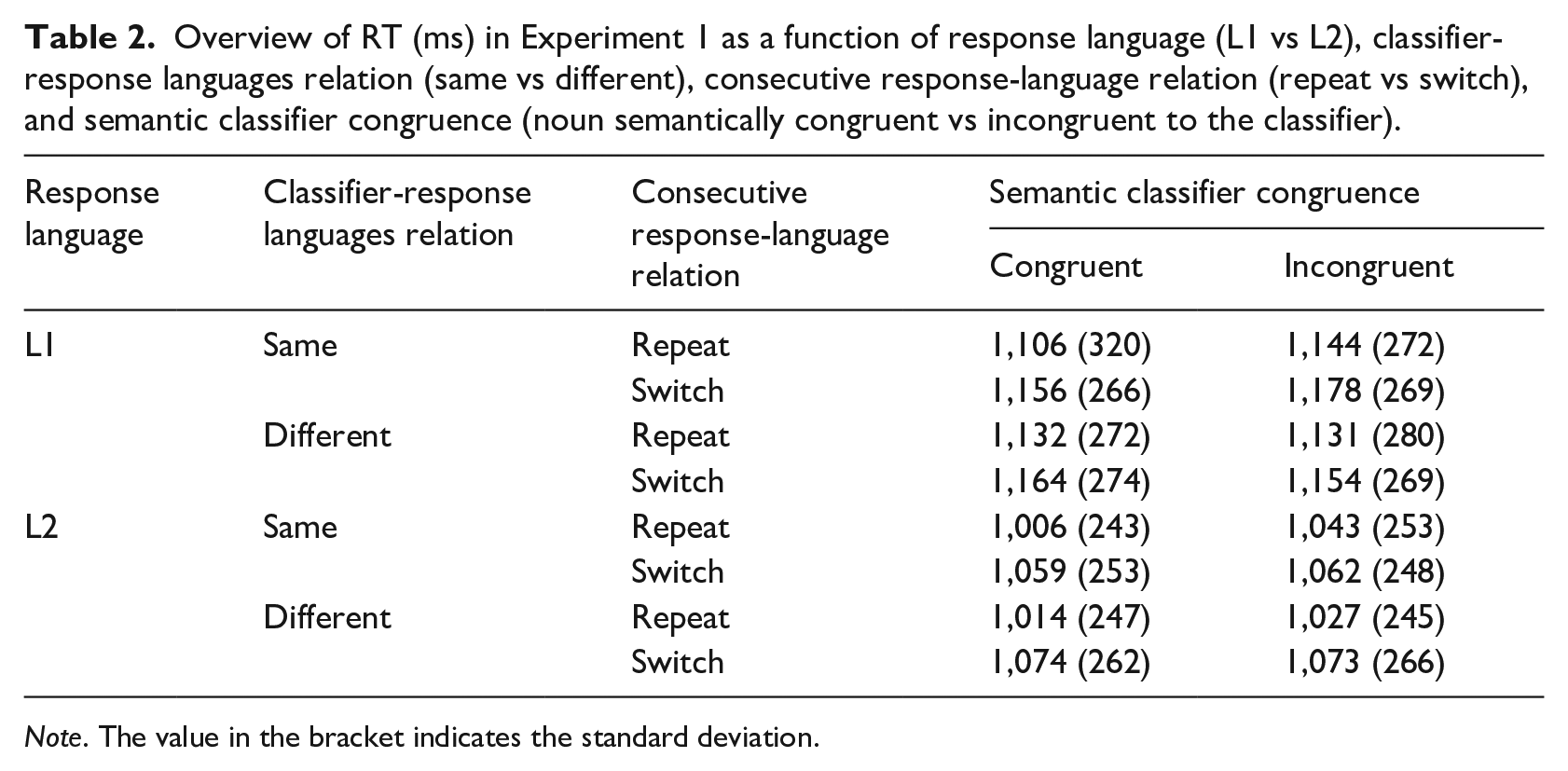

The overview of RT is shown in Table 2. The main effect of semantic classifier congruence was significant, β = .01, SE = 0.002, t = 5.33, p < .001, with 13 ms faster responses in the semantically congruent condition than in the semantically incongruent condition (1,104 ms vs 1,117 ms). The main effect of response language was significant, β = −.09, SE = 0.01, t = −8.72, p < .001, demonstrating a reversed language dominance effect with 100 ms faster responses in L2 than in L1 (1,061 ms vs 1,161 ms). The main effect of consecutive response-language relation was also significant, β = .04, SE = 0.002, t = 16.52, p < .001. Better performance was found when the consecutive response language repeated between trials than when it switched (1,091 ms vs 1,130 ms), indicating a 39 ms language switch cost for naming the picture.
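Because the RTs were log-transformed and the predictors coded as −0.5 and 0.5, each main-effect coefficient is on the log scale and corresponds roughly to the log of the ratio between the two condition means. The Python sketch below illustrates this relation with the means reported above. It is only an approximation under stated assumptions: the model operates on trial-level log RTs with random effects, whereas this check uses raw condition means, and the direction of each contrast (which level is coded 0.5) is assumed rather than taken from the text.

```python
import math

# Reported condition means in ms: (level assumed coded -0.5, level assumed coded 0.5).
REPORTED_MEANS_MS = {
    "congruence": (1104, 1117),         # congruent, incongruent
    "response_language": (1161, 1061),  # L1, L2
    "consecutive_relation": (1091, 1130),  # repeat, switch
}


def approx_log_effect(low_level_ms, high_level_ms):
    """Approximate a log-scale coefficient by the log ratio of two means."""
    return math.log(high_level_ms / low_level_ms)


approx_effects = {
    name: approx_log_effect(*means) for name, means in REPORTED_MEANS_MS.items()
}
```

Rounded to two decimals, these log ratios come out at about .01, −.09, and .04, which agrees with the reported coefficients and suggests that a coefficient of .01 corresponds to a difference of roughly 1% in RT.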

Overview of RT (ms) in Experiment 1 as a function of response language (L1 vs L2), classifier-response languages relation (same vs different), consecutive response-language relation (repeat vs switch), and semantic classifier congruence (noun semantically congruent vs incongruent to the classifier).

Note. The value in the bracket indicates the standard deviation.

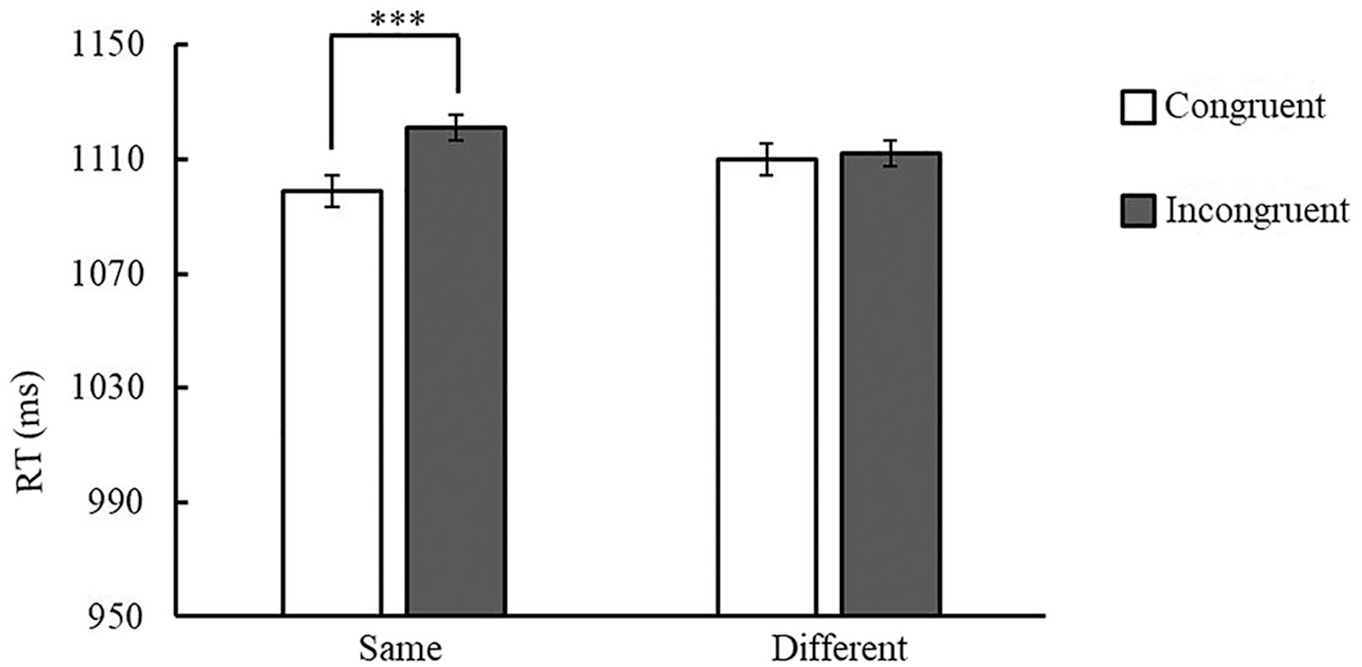

Although the main effect of classifier-response language relation was not significant (1,110 ms vs 1,111 ms, β = .002, SE = 0.002, t = .64, p = .52), the interaction of semantic classifier congruence and classifier-response language relation was significant, β = −.02, SE = 0.01, t = −5.00, p < .001. Follow-up analyses showed that participants responded 22 ms faster in semantically congruent conditions than in incongruent conditions when classifier and response languages were the same (1,099 ms vs 1,121 ms), β = −.02, SE = 0.003, z = 7.30, p < .001. Yet, the RTs in the congruent and the incongruent condition were similar when classifier and response languages were different (1,110 ms vs 1,112 ms), β = −.001, SE = 0.003, z = −.23, p = .82 (see Figure 2). Thus, a semantic classifier congruency effect of 22 ms was observed within a language, but this effect was not significant across languages (2 ms). This two-way interaction was further modulated by a significant three-way interaction of semantic classifier congruence, response language and classifier-response languages relation, β = −.02, SE = 0.01, t = 2.07, p = .04. Follow-up analyses showed a 28 ms semantic classifier congruency effect for the L1-L1 classifier-response language pairs (1,146 ms vs 1,174 ms), β = −.02, SE = 0.005, z = −6.27, p < .001. The semantic classifier congruency effect was smaller but still significant in L2-L2 pairs (1,051 ms vs 1,068 ms; 17 ms effect), β = −.02, SE = 0.005, z = −4.05, p < .001. Yet, RTs were similar between congruent and incongruent conditions in L2-L1 language pairs (1,158 ms vs 1,160 ms; 2 ms), β = −.004, SE = 0.005, z = .75, p = .45, and in L1-L2 language pairs (1,062 ms vs 1,065 ms; 3 ms), β = −.005, SE = 0.004, z = −1.10, p = .27.

Mean RT (ms) in Experiment 1 as a function of semantic classifier congruence (noun semantically congruent vs incongruent to the classifier) and classifier-response languages relation (same vs different).

The interaction of semantic classifier congruence and consecutive response-language relation was also significant, β = −.02, SE = 0.01, t = −3.58, p < .001. Follow-up analyses showed that participants responded faster in the congruent condition than in the incongruent condition in consecutive response language repeat trials (1,081 ms vs 1,101 ms), β = −.02, SE = 0.003, z = −6.32, p < .001. Yet the RTs in these two conditions were similar in consecutive response language switch trials (1,128 ms vs 1,132 ms), β = −.004, SE = 0.003, z = −1.23, p = .22.

As regards language-switch costs with respect to two consecutive naming responses, the interaction of response language and consecutive response-language relation was significant, β = −.01, SE = 0.01, t = 2.36, p = .02. Follow-up analyses showed that participants responded faster when the response languages in two consecutive trials were both L1 than when the response language switched from L2 to L1 (1,143 ms vs 1,176 ms), β = −.03, SE = 0.003, z = −9.94, p < .001, indicating a 33 ms L1 language switch cost with respect to two consecutive naming responses. Similarly, faster responses were found for L2 language repetitions in the naming response compared to language switches from L1 to L2 (1,039 ms vs 1,084 ms), β = −.04, SE = 0.003, z = −13.46, p < .001, indicating a 45 ms L2 language switch cost. Thus, the results showed language switch costs for both L1 and L2, but these switch costs were smaller in L1 than in L2 (i.e., reversed asymmetrical language-switch cost).
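The switch costs and their asymmetry follow directly from the four reported cell means. The short Python sketch below reproduces this arithmetic; the dictionary layout and function name are our own, and only the mean values are taken from the text.

```python
# Mean RTs (ms) reported above, keyed by (response language, consecutive relation).
MEAN_RT_MS = {
    ("L1", "repeat"): 1143,
    ("L1", "switch"): 1176,
    ("L2", "repeat"): 1039,
    ("L2", "switch"): 1084,
}


def switch_cost(means, language):
    """Switch cost = mean RT on switch trials minus mean RT on repeat trials."""
    return means[(language, "switch")] - means[(language, "repeat")]


costs = {language: switch_cost(MEAN_RT_MS, language) for language in ("L1", "L2")}

# A positive value means a larger cost when switching into L2 than into L1.
asymmetry = costs["L2"] - costs["L1"]
```

The resulting difference between the L2 and the L1 switch cost is the quantity that the interaction of response language and consecutive response-language relation tests on the log scale.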

Furthermore, language switch costs were also modulated by the classifier-response language relation, as indexed by the significant three-way interaction of response language, classifier-response language relation, and consecutive response-language relation, β = −.03, SE = 0.01, t = 2.88, p = .004. Follow-up analyses showed response-language switch costs of comparable size for L1 (39 ms, β = −.04, SE = 0.005, z = −8.54, p < .001) and L2 (39 ms, β = −.04, SE = 0.005, z = −8.14, p < .001) when the classifier was in L1. For L2 classifiers, in contrast, the L1 (response language) switch cost of 28 ms (β = −.03, SE = 0.005, z = −5.52, p < .001) was smaller than the L2 (response language) switch cost of 51 ms (β = −.05, SE = 0.005, z = −10.89, p < .001). There were no other significant effects (all ps > .07; more details of the final model can be found in Supplementary Materials Table S1).

Accuracy

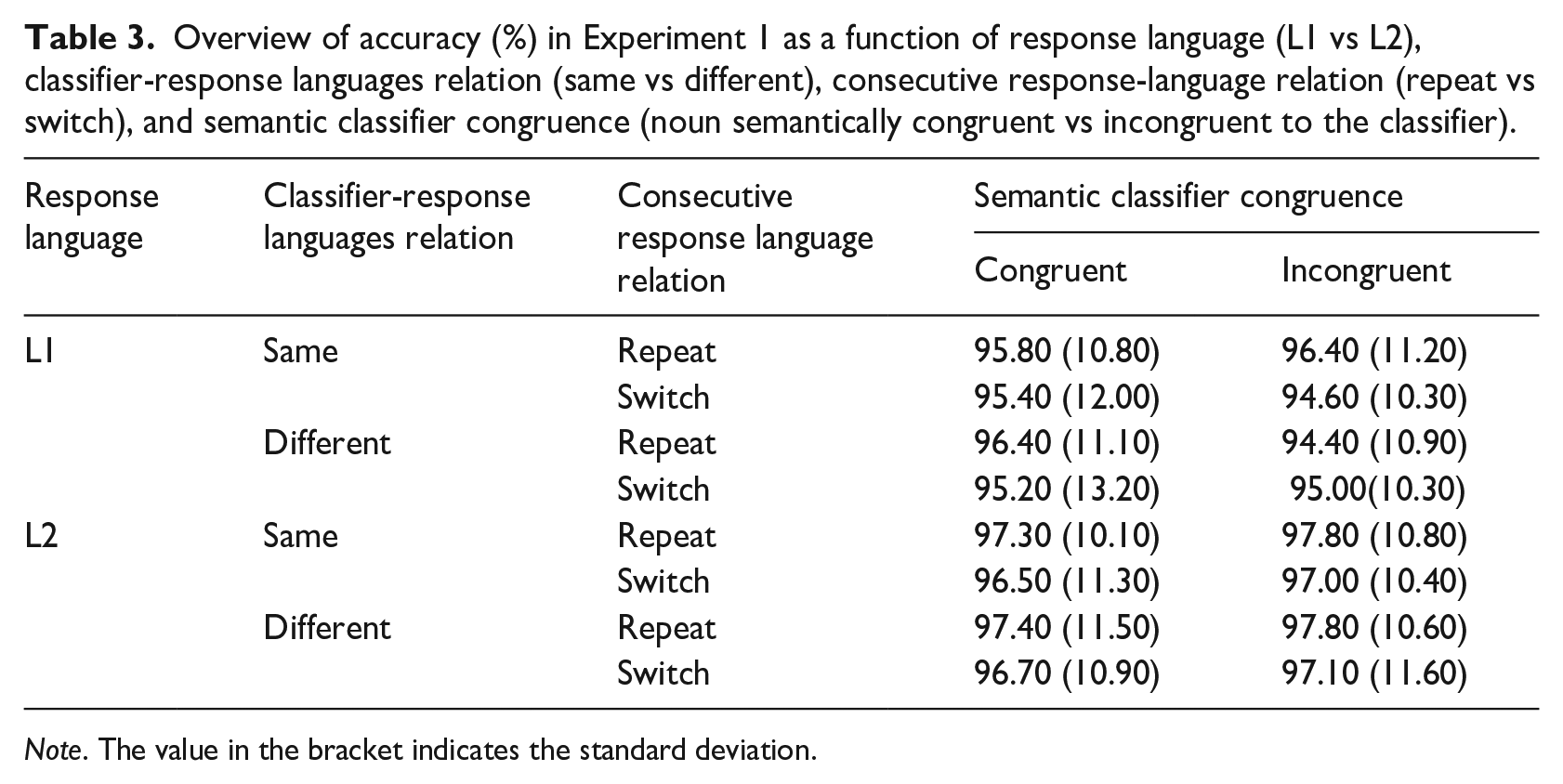

The overview of accuracy can be found in Table 3. The main effect of response language was significant, β = .50, SE = 0.12, z = 3.99, p < .001, with 1.6% higher accuracy in L2 than in L1 (97.20% vs 95.60%), suggesting a reversed language dominance effect as in the RT results. The main effect of consecutive response-language relation was significant, β = −.35, SE = 0.11, z = −3.18, p = .001, with 1.0% higher accuracy when consecutive response languages repeated than when they switched (96.90% vs 95.90%). No other significant main effects or interactions were found (ps > .10; more details of the final model can be found in Supplementary Materials Table S2).

Overview of accuracy (%) in Experiment 1 as a function of response language (L1 vs L2), classifier-response languages relation (same vs different), consecutive response-language relation (repeat vs switch), and semantic classifier congruence (noun semantically congruent vs incongruent to the classifier).

Note. The value in the bracket indicates the standard deviation.

Discussion

In line with our previous study (Tong et al., 2025), faster responses were found in the semantically congruent classifier-response condition than in the semantically incongruent condition. Importantly, this facilitation in RT was found when classifier and response language were the same (i.e., within language). However, we did not find this result when classifier language and response language were different (i.e., across languages).

A potential problem of Experiment 1 might have been that both classifier language and response language varied unpredictably between trials. As a consequence, the overall experimental setting could have been too complex for participants. More specifically, the high overall variability could have prevented the participants from making strong predictions, which might have been the case specifically for L2. To reduce the overall trial-to-trial variability, we thus kept the classifier language constant within a block in Experiment 2 and varied it only between blocks.

Experiment 2

In Experiment 2, we explored the semantic classifier congruency effect across languages when the classifier language was constant within a block. To this end, the classifier language was manipulated block-wise, while the response language was still varied within a block so that it could be the same or different from the classifier language in each trial.

Method

Participants

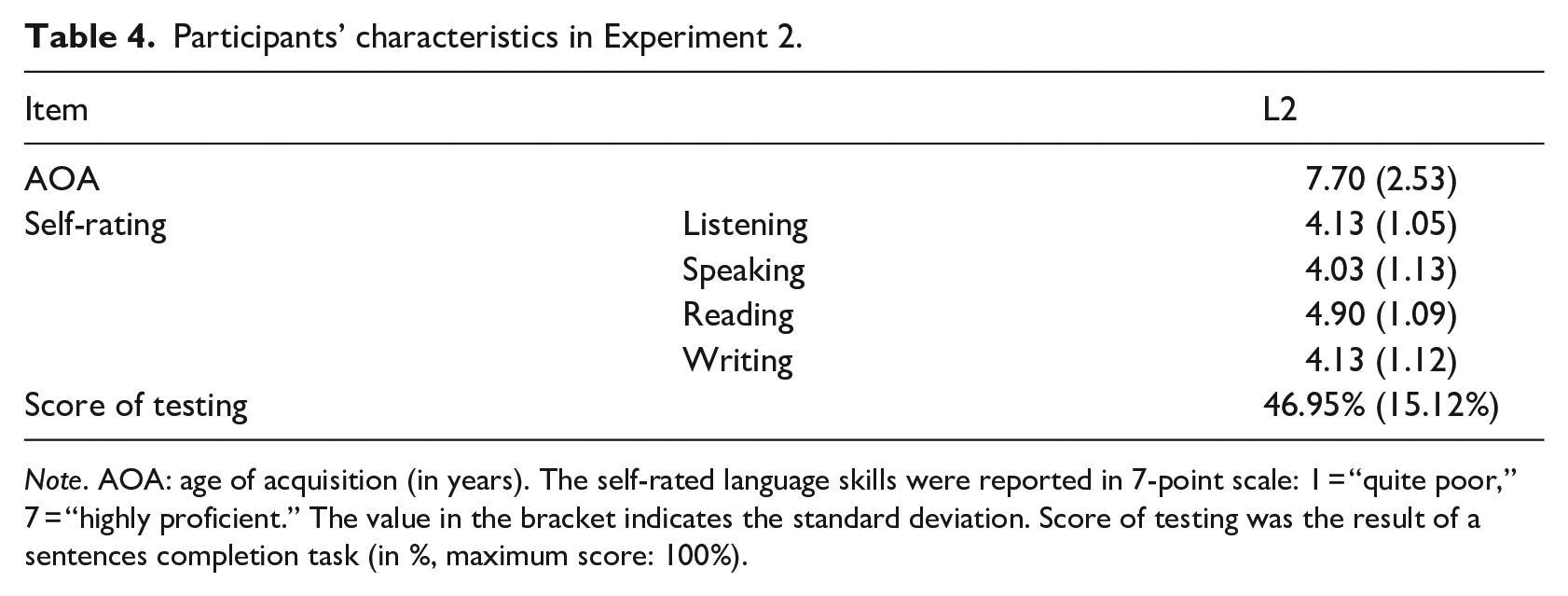

Forty participants studying at RWTH Aachen University took part (20 females, 19 males, 1 did not report gender; 40 right-handed; mean age: M = 23.93 years, SD = 3.86). Chinese was their native language (L1) and English was their second language (L2). Before the experiment, participants filled in a questionnaire about their age of L2 acquisition and their self-rated language skills in English (7-point scale: 1 = quite poor, 7 = highly proficient, see Table 4) and completed an online sentence completion task for English as a language proficiency test (http://www.itt-leipzig.de/static/wstenglisch_01p/index.html). According to these results, the participants could be considered unbalanced bilinguals. Furthermore, there were no significant differences between the participants in Experiment 1 and those in Experiment 2 regarding the age of L2 acquisition, self-rated skills, or proficiency test scores (all ps > .33). Thus, the participant groups in Experiments 1 and 2 can be considered comparable with respect to their L2 proficiency.

Participants’ characteristics in Experiment 2.

Note. AOA: age of acquisition (in years). The self-rated language skills were reported on a 7-point scale: 1 = “quite poor,” 7 = “highly proficient.” The value in the bracket indicates the standard deviation. Score of testing was the result of a sentence completion task (in %, maximum score: 100%).

Materials and apparatus

The materials and apparatus were identical to those used in Experiment 1.

Procedure

The procedure was the same as in Experiment 1 except for classifier language being manipulated block-wise and the number of blocks being reduced to 12 experimental blocks.

Design

We used a 2 × 2 × 2 × 2 within-subjects design with the independent variables semantic classifier congruence (noun semantically congruent vs incongruent to the classifier), response language (L1 vs L2), classifier-response languages relation (same vs different), and consecutive response-language relation (repeat vs switch). The dependent variables were RT and accuracy.

Data analysis

Data analysis was the same as in Experiment 1. The data, analysis script, and file with explanation are available at https://osf.io/6ykvf/.

Results

For data exclusion, the same criteria as in Experiment 1 were applied. In the end, we included 89.31% of all trials for RT analysis and 92.66% of all trials for accuracy analysis.

Reaction time

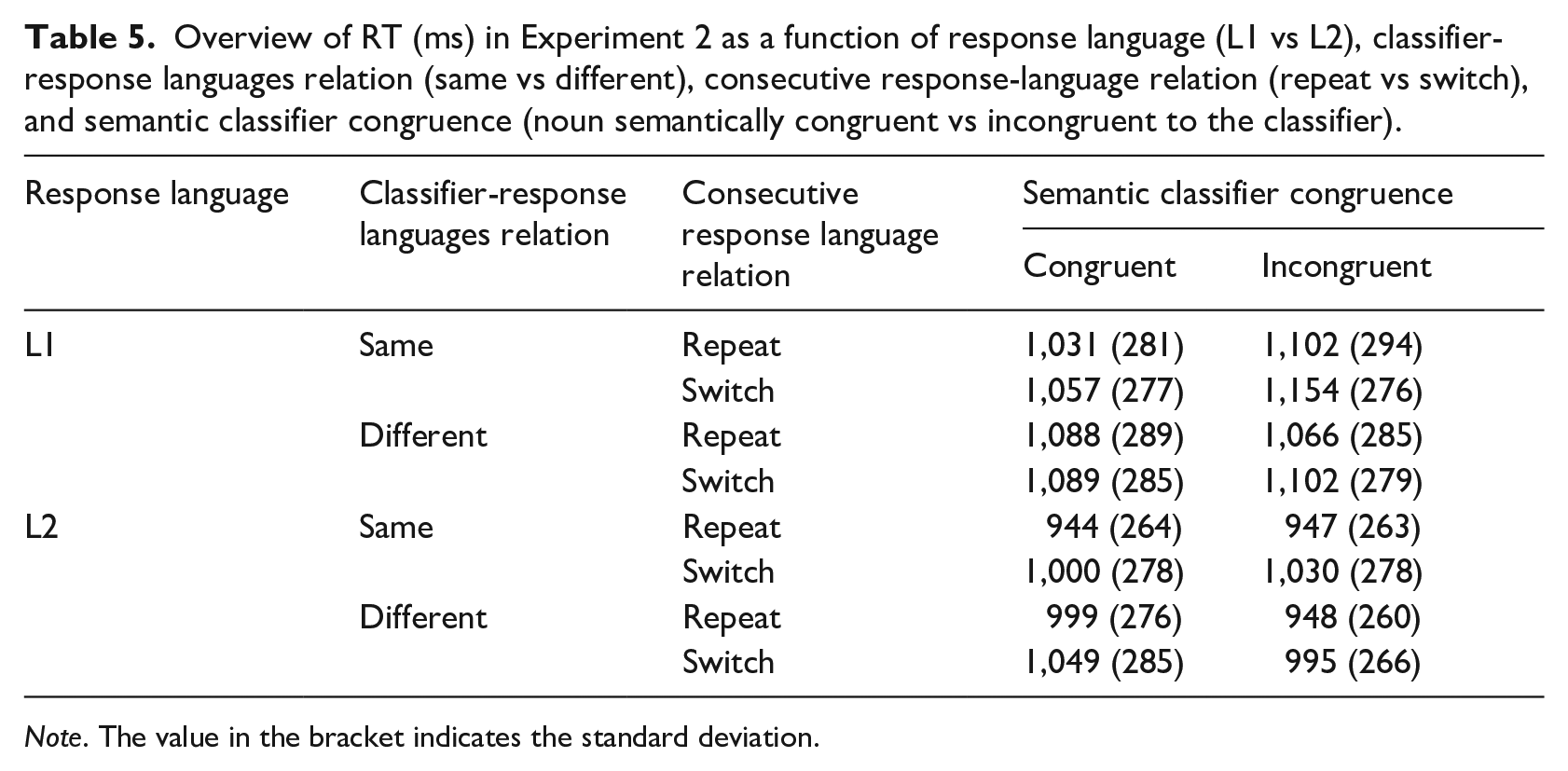

The overview of RT is shown in Table 5. The main effect of semantic classifier congruence was significant, β = .01, SE = 0.003, t = 3.58, p < .001, with 12 ms shorter RTs in the semantically congruent condition than in the incongruent condition (1,036 ms vs 1,048 ms). The main effect of response language was significant, β = −.09, SE = 0.01, t = −7.24, p < .001, demonstrating a reversed language dominance effect with faster responses in L2 than in L1 (993 ms vs 1,092 ms). The main effect of consecutive response-language relation was also significant, β = .05, SE = 0.004, t = 11.19, p < .001. Faster responses were found in trials in which the response language repeated from the previous trial than in those in which the response language switched (1,020 ms vs 1,064 ms), indicating a 44 ms language switch cost with respect to the naming response. The main effect of classifier-response languages relation was also significant, β = .01, SE = 0.003, t = 2.83, p = .005, with 8 ms faster responses in the trials in which classifier and response language were the same than in those with different classifier and response languages (1,038 ms vs 1,046 ms).

Overview of RT (ms) in Experiment 2 as a function of response language (L1 vs L2), classifier-response languages relation (same vs different), consecutive response-language relation (repeat vs switch), and semantic classifier congruence (noun semantically congruent vs incongruent to the classifier).

Note. The value in the bracket indicates the standard deviation.

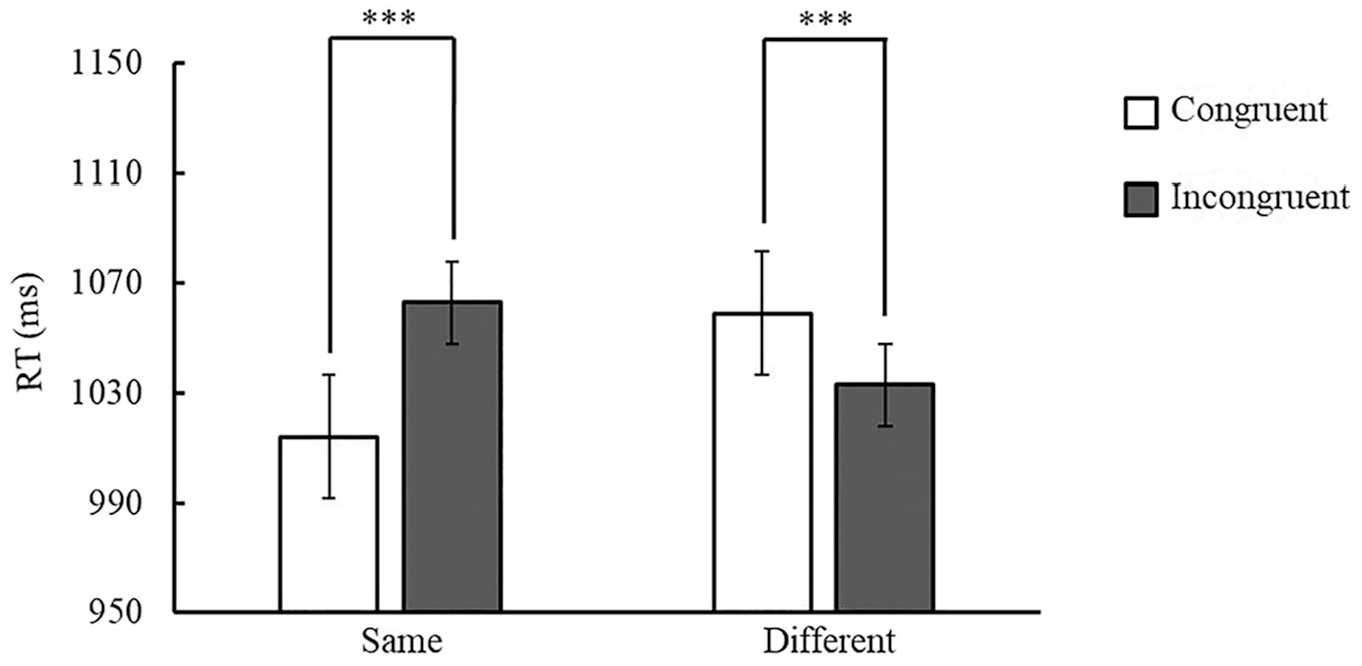

Importantly, as in Experiment 1, the interaction of semantic classifier congruence and classifier-response languages relation was significant, β = −.07, SE = 0.01, t = −11.38, p < .001. Follow-up analyses showed 49 ms faster responses in the congruent condition than in the incongruent condition when classifier and response language were the same (1,014 ms vs 1,063 ms), β = −.05, SE = 0.004, z = −10.41, p < .001. However, a reversed pattern with 26 ms faster responses in the incongruent condition than in the congruent condition was found when classifier and response languages were different (1,033 ms vs 1,059 ms), β = .02, SE = 0.004, z = 5.51, p < .001 (see Figure 3).
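The pattern behind this interaction can be checked with simple arithmetic on the reported cell means. The sketch below is descriptive only: it works on the raw mean RTs quoted above, whereas the β values come from the mixed-effects model and are on the model's own scale. The function and variable names are illustrative.

```python
# Mean RTs (ms) as reported in the text for Experiment 2,
# keyed by (classifier-response languages relation, congruence).
mean_rt = {
    ("same", "congruent"): 1014,
    ("same", "incongruent"): 1063,
    ("different", "congruent"): 1059,
    ("different", "incongruent"): 1033,
}


def congruency_effect(relation):
    """Incongruent minus congruent RT; positive means a congruent advantage."""
    return mean_rt[(relation, "incongruent")] - mean_rt[(relation, "congruent")]


same_effect = congruency_effect("same")            # congruent advantage
different_effect = congruency_effect("different")  # negative: reversed effect
interaction_contrast = same_effect - different_effect  # difference of differences

print(same_effect, different_effect, interaction_contrast)
```

A positive effect in the same-language cells together with a negative effect in the different-language cells is what the text describes as a reversal.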

Mean RT (ms) in Experiment 2 as a function of semantic classifier congruence (noun semantically congruent vs incongruent to the classifier) and classifier-response languages relation (same vs different).

In addition, the interaction of semantic classifier congruence and response language was significant, β = −.05, SE = 0.01, t = −8.87, p < .001. Follow-up analyses showed 40 ms faster responses in the congruent condition than in the incongruent condition in L1 (1,072 ms vs 1,112 ms), β = −.04, SE = 0.004, z = −8.74, p < .001. In contrast, participants responded 17 ms faster in the incongruent condition than in the congruent condition in L2 (984 ms vs 1,001 ms), β = .02, SE = 0.004, z = 3.77, p < .001.

The interaction of classifier-response languages relation and consecutive response-language relation was also significant, β = −.02, SE = 0.01, t = −3.51, p < .001. Follow-up analyses showed that when the response language repeated from the previous trial, participants responded faster in trials in which classifier and response languages were the same than in those in which they were different (1,012 ms vs 1,029 ms), β = −.02, SE = 0.004, z = −4.49, p < .001. Yet, in response-language switch trials, RTs were similar regardless of whether classifier and current response languages were the same or different (1,065 ms vs 1,063 ms), β = .002, SE = 0.004, z = .47, p = .64.

With respect to response language-switch costs, the interaction of response language and consecutive response-language relation was significant, β = .03, SE = 0.01, t = 5.15, p < .001. Follow-up analyses showed that for L1, participants responded faster in L1 repetition trials than when the language switched from L2 to L1 (1,078 ms vs 1,105 ms), β = −.03, SE = 0.005, z = −5.71, p < .001, indicating a 27 ms language switch cost for L1. An even larger language switch cost was observed for L2 (963 ms vs 1,022 ms, switch cost of 59 ms), β = −.06, SE = 0.005, z = −12.08, p < .001. Thus, these results showed a smaller switch cost in L1 than in L2 (i.e., a reversed asymmetrical language-switch cost), as in the first experiment. No other main effects or interactions were significant (ps > .06; more details of the final model can be found in Supplementary Materials Table S3).
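The switch costs and their asymmetry follow directly from the reported cell means. The following sketch recomputes them from the raw means quoted above. It is descriptive arithmetic rather than a reproduction of the model, and the names are illustrative.

```python
# Mean RTs (ms) as reported in the text for Experiment 2,
# keyed by (response language, consecutive response-language relation).
mean_rt = {
    ("L1", "repeat"): 1078,
    ("L1", "switch"): 1105,
    ("L2", "repeat"): 963,
    ("L2", "switch"): 1022,
}


def switch_cost(language):
    """Switch minus repeat RT for one response language."""
    return mean_rt[(language, "switch")] - mean_rt[(language, "repeat")]


cost_l1 = switch_cost("L1")
cost_l2 = switch_cost("L2")
# Positive asymmetry means the cost is larger in L2 than in L1,
# which is the reversed asymmetry described in the text.
asymmetry = cost_l2 - cost_l1

print(cost_l1, cost_l2, asymmetry)
```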

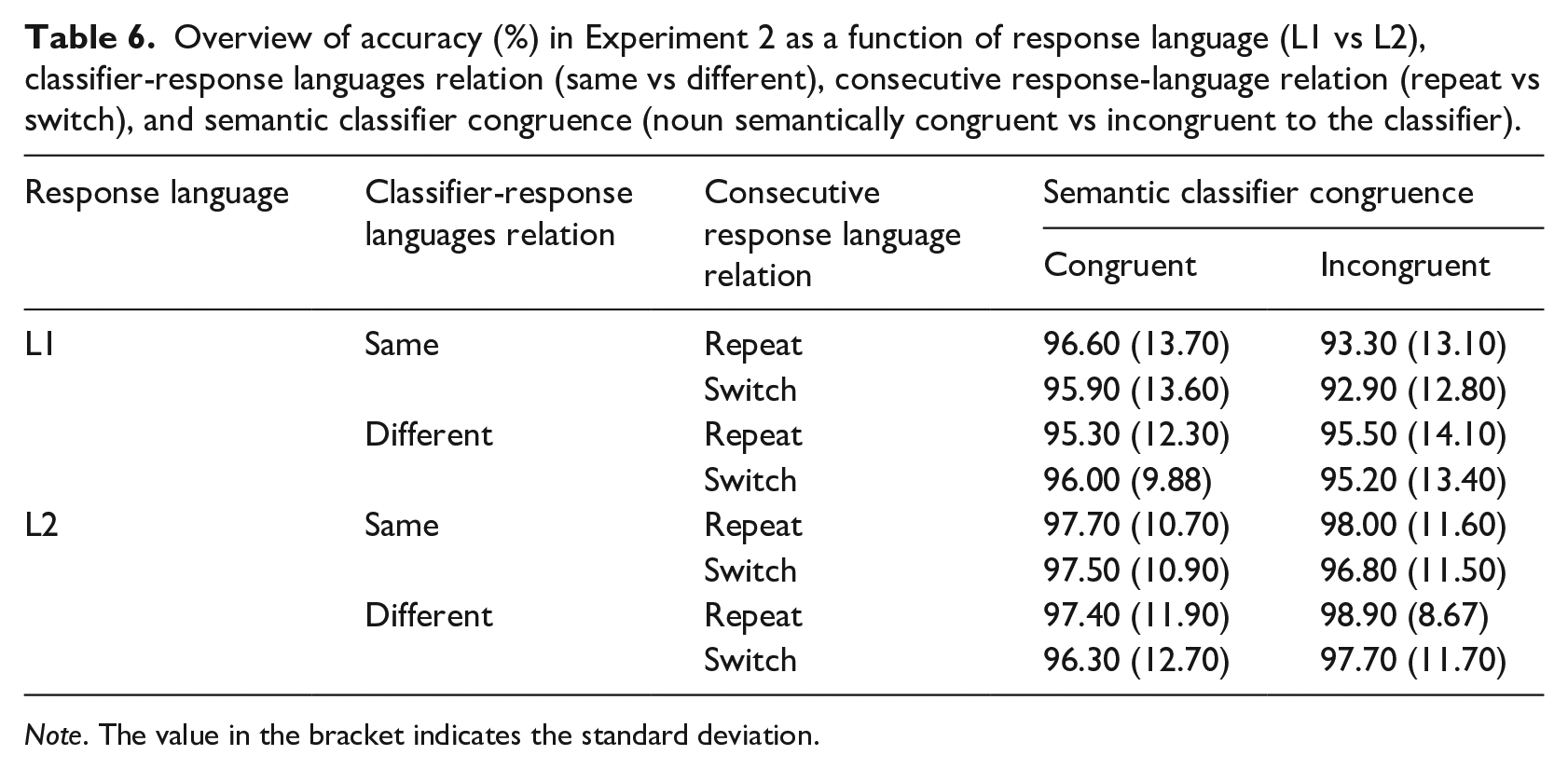

Accuracy

The overview of accuracy can be found in Table 6. The main effect of response language was significant, β = .65, SE = 0.13, z = 5.10, p < .001, with higher accuracy in L2 than in L1 (97.50% vs 95.10%). The main effect of consecutive response-language relation was significant, β = −.21, SE = 0.08, z = −2.67, p = .007. Higher accuracy was found when response languages repeated in two consecutive trials than when there was a language switch (96.60% vs 96.10%).

Overview of accuracy (%) in Experiment 2 as a function of response language (L1 vs L2), classifier-response languages relation (same vs different), consecutive response-language relation (repeat vs switch), and semantic classifier congruence (noun semantically congruent vs incongruent to the classifier).

Note. The value in the bracket indicates the standard deviation.

The interaction of semantic classifier congruence and classifier-response languages relation was significant, β = .68, SE = 0.16, z = 4.20, p < .001. Follow-up analyses showed 1.49% higher accuracy in the semantically congruent condition than in the incongruent condition when classifier-response languages were the same (96.95% vs 95.46%), β = −.34, SE = 0.11, z = 3.08, p = .002. However, when classifier and response languages were different, higher accuracy was found in the incongruent condition than in the congruent condition (96.85% vs 96.21%), β = −.34, SE = 0.12, z = −2.88, p = .004, which is consistent with the RT analysis.

The interaction of semantic classifier congruence and response language was significant, β = .71, SE = 0.16, z = 4.40, p < .001. Follow-up analyses showed higher accuracy in the congruent condition than in the incongruent condition in L1 response (95.54% vs 94.38%), β = .35, SE = 0.09, z = 3.82, p < .001. In contrast, higher accuracy was found in the incongruent condition than in the congruent condition in L2 response (98.39% vs 96.79%), β = −.36, SE = 0.13, z = −2.70, p = .01, which again is in accordance with the RT analysis.

The interaction of semantic classifier congruence and consecutive response-language relation showed a marginally significant effect, β = −.33, SE = 0.17, z = −2.00, p = .05. Follow-up analyses showed that despite a numerically larger semantic classifier congruency effect in language-repetition than in language-switch trials, accuracy did not differ statistically between the congruent and the incongruent condition when the response language repeated in two consecutive trials (96.32% vs 97.18%), β = −.17, SE = 0.12, z = −1.37, p = .17, or when it switched (96.09% vs 96.52%), β = .16, SE = 0.11, z = −1.53, p = .13.

Regarding language-switch costs with respect to the response language, the interaction of response language and consecutive response-language relation was significant, β = −.47, SE = 0.16, z = −2.91, p = .004. Follow-up analyses showed that for L1, participants' performance was similar in L1 repetition trials and in language switches from L2 to L1 (95.43% vs 95.64%), β = −.02, SE = 0.09, z = −.21, p = .83, but for L2, higher accuracy was observed in L2 repetition trials than in language switches from L1 to L2 (98.15% vs 97.04%), β = .45, SE = 0.13, z = 3.40, p < .001. No other significant main effects or interactions were found (ps > .11; more details of the final model can be found in Supplementary Materials Table S4).

Discussion

As in Experiment 1, we observed a semantic classifier congruency effect with faster responses in the semantically congruent condition than in the semantically incongruent condition. Yet, this effect was again found only when classifier and response languages were the same (i.e., within language) but was absent or even reversed when the classifier language was different from the response language (i.e., across languages).

In addition, two new findings with respect to the classifier-response languages relation and semantic classifier congruence were observed in Experiment 2. On the one hand, we observed a main effect of classifier-response languages relation, with better performance when classifier and response languages were the same than when they were different. This finding might indicate that the classifier already primes the language in which it was presented, which leads to an advantage when the same language has to be used for naming the picture. For trials in which classifier and response languages were different, however, the language primed by the classifier conflicted with the language required for production, leading to slower responses compared to trials in which both languages were the same.

On the other hand, the semantic classifier congruency effect was found only in L1 but not in L2. This effect was even reversed for L2, with better performance in the incongruent condition than in the congruent condition. We return to this effect in the General Discussion.

General discussion

In our study, we examined if predictions made by processing an external input (namely, a classifier) influenced within-language and cross-languages production. In two experiments, participants were asked to name a picture after hearing a classifier. In both Experiments 1 and 2, classifier and response language could be the same or different in a trial. Yet, we manipulated classifier language on a trial-to-trial basis in Experiment 1 and block-wise in Experiment 2.

First, we observed a semantic classifier congruency effect, which we interpret as a marker of the prediction effect. This effect was influenced by the response language so that it occurred for L1 but not consistently for L2, which resembles a previous study focusing on the semantic classifier congruency effect within language (Tong et al., 2025). Second, the semantic classifier congruency effect was also modulated by the classifier-response language relation. That is, the semantic classifier congruency effect only occurred when the classifier language was the same as the response language but it was absent (Experiment 1) or even reversed (Experiment 2) when the classifier language was different from the response language. Finally, we found a reversed language dominance effect, a switch cost in general and a smaller language-switch cost in L1 than in L2 (i.e., a reversed asymmetrical switch cost). The semantic classifier congruency effect was absent in response language switches but present in response language repetitions. These results will be discussed in the following.

Effects of prediction on within-language and cross-languages production

Previous studies suggested that prediction influences subsequent language processing (e.g., Chun & Kaan, 2019; Foucart et al., 2014; Leon-Cabrera et al., 2019). In addition, studies showed delayed prediction in L2 compared with L1 (Dijkgraaf et al., 2017). While many of these studies focused on prediction during language comprehension, one study provided evidence that prediction also influences subsequent within-language production (Grisoni et al., 2024). However, there is a lack of evidence about how prediction influences cross-languages production. In our current study, we explored whether prediction via processing an external input influences within-language and cross-languages production, with a specific focus on cross-languages production.

We expected to see the influence of prediction on both within-language and cross-languages production, with a smaller prediction effect when prediction was generated by L2 processing. In contrast to this expectation, our results only confirmed the influence of prediction on within-language production. More specifically, a semantic classifier congruency effect as an empirical marker for prediction was present only when classifier language and response language were the same in both experiments (Tong et al., 2025). When classifier language and response language were different, no semantic classifier congruency effect was found in Experiment 1, and the effect was even reversed in Experiment 2, with faster responses in the incongruent condition than in the congruent condition.

In a previous study (Huettig et al., 2010), processing a classifier activated semantically congruent conceptual/lexical representations. In our current study, hearing a classifier established a highly constraining context, which is known to trigger predictions (DeLong et al., 2005; Federmeier, 2007; Staub et al., 2015; Wlotko & Federmeier, 2012). Thus, we suspect that processing a classifier prompted participants to actively predict the corresponding picture that would be named later. This can explain the observation of a semantic classifier congruency effect in the within-language condition. To account for the absent or even reversed effect in cross-language conditions, we additionally suggest that a classifier, as a strong language cue, might not only trigger the prediction of congruent concepts but also prime the language. Due to this pre-activation of both language and concepts, participants responded faster in the congruent condition than in the incongruent condition when classifier and response language were the same, which was found in both experiments. In contrast, when only congruent concepts but not the correct language were pre-activated, as is the case when classifier and response language were different, the non-verbal pre-activation of concepts was not sufficient to result in a semantic classifier congruency effect.

Such an interpretation could also be related to the notion of different components in a task set (Philipp & Koch, 2010; Vandierendonck et al., 2008). A task set, as the cognitive representation of a task, consists of different components like stimuli, task rules, and responses (cf. Monsell, 2017). In our case, the concept and the response language can be considered as two such task-set components. When performing the picture-naming task in our study, participants then can be assumed to integrate these two components into a single task representation (i.e., a language-specific lemma) for the later task execution (i.e., language production).

If we now assume that predictions can already lead to the formation of such a specific task representation, participants just had to execute an integrated and activated task representation when both the concept and language could have been predicted based on the classifier (i.e., in a congruent within-language condition). In contrast, in the incongruent within-language condition, the predicted concept was incorrect so that a new concept had to be integrated into the task representation while the language remained the same. As a consequence, participants responded faster in the congruent condition than in the incongruent condition.

When the classifier and response language were different, at least one component of the pre-activated task set, namely the language, had to be changed. In the congruent condition, the predicted concept, however, could have been kept the same, whereas in the incongruent condition the concept also had to be changed. This resulted in a situation in which either only one task component (i.e., the language in congruent cross-language conditions) or both task components (in incongruent cross-language conditions) of the pre-activated task representation had to be changed for successful task performance. In previous studies, it was found that switching all components of a task representation was faster than switching parts of it (Philipp & Koch, 2010; Vandierendonck et al., 2008; also see the notion of partial repetition costs, for example, Hommel, 1998). For the present study, this notion is in line with the reversed semantic classifier congruency effect across languages in Experiment 2.
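The component-counting logic of this account can be sketched in a few lines. This is an illustration of the reasoning only, under the simplifying assumption that a task representation has exactly two components (concept and language) and that the classifier pre-activates one concept. The class and function names are hypothetical, and the concept labels are taken from the "a cup of" example.

```python
from typing import NamedTuple


class TaskSet(NamedTuple):
    """Illustrative two-component task representation."""
    concept: str
    language: str


def changed_components(predicted: TaskSet, required: TaskSet) -> int:
    """Count how many components differ between prediction and required response."""
    return sum(p != r for p, r in zip(predicted, required))


# Assumed prediction after hearing the classifier for "a cup of" in L1.
predicted = TaskSet(concept="tea", language="L1")

required_by_condition = {
    "within-language, congruent": TaskSet("tea", "L1"),
    "within-language, incongruent": TaskSet("brick", "L1"),
    "cross-language, congruent": TaskSet("tea", "L2"),
    "cross-language, incongruent": TaskSet("brick", "L2"),
}

changes = {
    name: changed_components(predicted, required)
    for name, required in required_by_condition.items()
}

for name, n in changes.items():
    print(f"{name}: {n} component(s) to change")
```

On this sketch, the congruent cross-language condition requires a partial change (one component), whereas the incongruent cross-language condition requires a full change (two components), which is the contrast the partial-repetition-cost argument relies on.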

However, in the present study, this data pattern was only observed in Experiment 2. In Experiment 1, we observed no performance difference between congruent and incongruent conditions when classifier and response language were different. To account for this between-experiment difference, we suppose that the experimental context also influenced performance. This is based on the adaptive control hypothesis (Green & Abutalebi, 2013), which states that control processes are adjusted with respect to the specific context. In our current study, the classifier language varied within a block in Experiment 1, whereas it was constant within a block in Experiment 2. This difference between the experiments might already have created different contexts for the participants, which, in turn, had a differential impact on control processes. In a context with a constant classifier language (at least within each block as in Experiment 2), we suspect that concept and language were strongly integrated into a task representation. However, when the classifier language varied within a block as in Experiment 1, the integration of concept and language might have resulted in a relatively less integrated task representation. Such a weak task representation could have an effect both on the execution of a pre-activated task representation (i.e., reducing performance in congruent within-language conditions) and on the execution of partially correct task representations (i.e., improving performance in incongruent within-language and congruent cross-language conditions). As a consequence, the semantic classifier congruency effect in the within-language condition should be smaller in Experiment 1 than in Experiment 2, and the reversed semantic classifier congruency effect should also be reduced in Experiment 1 as compared to Experiment 2. Both trends can be observed in the present study, as becomes obvious when comparing Figures 2 and 3.

One final important aspect that needs to be considered is that, so far, we have argued in terms of the integration of the predicted concept and the language that was indicated by the classifier. Yet, some of the classifiers that we used (e.g., a pair of or a group of) occur with a number of different nouns in real life, so that it is unlikely that they are associated with a single concept only. In addition, several previous studies suggested that predictions activate a larger number of different concepts rather than a single one (DeLong et al., 2005; Staub et al., 2015; Wlotko & Federmeier, 2012). Thus, the complete picture is most likely more complex than shown here. However, the fact that, within the experiments of the present study, each classifier was associated with only two different pictures/nouns (i.e., one congruent and one incongruent) increases the likelihood that one specific concept was predicted with a high probability while comprehending the classifier.

Within-language prediction in L1 versus L2

Both experiments of this study showed a semantic classifier congruency effect, demonstrating the effect of prediction on subsequent language production. However, in addition to a difference between within- and cross-language prediction, we also observed differences between the languages themselves. That is, when looking at within-language conditions in isolation, both experiments showed a larger semantic classifier congruency effect for L1 as compared to L2. This finding is in line with our own previous study (Tong et al., 2025) as well as with other studies demonstrating a weaker or delayed L2 prediction effect as compared to the L1 prediction effect (Dijkgraaf et al., 2017; Foucart et al., 2014).

Importantly and in accordance with other studies (Chun & Kaan, 2019; Dijkgraaf et al., 2017; Foucart et al., 2014), the present study indicates that prediction also plays a role in second language processing. This observation is also in accordance with previous reviews (Kaan, 2014; Schlenter, 2023) pointing out that the difference between prediction in L1 and L2 is quantitative rather than qualitative. The observed difference in the size of the prediction effect in L1 and L2 could be due to more delayed processing (Dijkgraaf et al., 2017; Momenian et al., 2024) or weaker predictive ability (Martin et al., 2013) in L2 than in L1. Furthermore, specific properties of a language might also have an influence on the size of the prediction effect (cf. Foucart et al., 2014). For the present study, this could mean that the prediction effect in L1 Chinese was larger than in L2 English because Chinese has a richer classifier system than English (Allan, 1977, 1978). Thus, participants may have relied more on prediction based on classifier processing in Chinese than in English. Yet, the design of the present study does not allow us to disentangle potential effects of temporal delay, weaker predictive abilities in a foreign language, and language-specific effects.

Taken together, the present study clearly demonstrates an influence of prediction on subsequent language production. Furthermore, the prediction effect was present in within-language conditions but not in cross-language conditions. Importantly for the aim of the present study, a language-specific difference was observed for within-language prediction (with a larger prediction effect for L1 as compared to L2). In contrast, results were comparable for cross-language conditions, indicating that the absence or the reversal of the prediction effect needs to be explained with respect to the difference between classifier and response languages rather than with a purely language-specific effect. In other words, the absent/reversed prediction effect in cross-language conditions cannot be accounted for by a delayed or weaker prediction in L2 classifier comprehension as it was observed for both L1(classifier)-L2(noun) and L2(classifier)-L1(noun) language combinations.

Prediction and language switching

For both experiments of the present study, the response language in each trial could be a response-language repetition or a response-language switch with respect to the previous trial. Thus, repeating or switching the response language might also have had an influence on the prediction effect. Indeed, the data pattern shows that the semantic classifier congruency effect was observed in response language repetitions but was absent in response language switches. This observation could be due to the language switching cost outweighing the semantic classifier congruency effect. However, the most important information with respect to the present study is that repeating or switching the response language had no additional effect on the difference between within-language and cross-language prediction. Thus, we cautiously conclude that response-language switching had an effect on the performance of participants but that this effect was rather general in nature.

Such a general effect is also found when looking at the typical empirical markers for language switching. That is, consistent with our own previous study (Tong et al., 2025), we found a reversed language dominance effect (i.e., better performance in L2 than in L1) and a reversed asymmetrical language-switch cost (i.e., smaller switch cost in L1 than in L2; cf. Bonfieni et al., 2019; Zheng et al., 2020; for a meta-analysis, see Gade et al., 2021). One possible explanation to account for this data pattern might be a general and proactive inhibition of L1 to facilitate L2 production (see, for example, Declerck, 2020; Declerck & Koch, 2023), temporarily enabling L2 to function as a more dominant language than L1. A reversed asymmetrical language-switch cost could arise from this reversed language dominance, making switching to L2 harder than switching to L1. Yet, we would be cautious about this explanation, since there is no systematic evidence for the presence or absence of a reversed switch cost asymmetry in combination with a general reversed language dominance effect (for a meta-analysis, see Gade et al., 2021).

Conclusion

In our current study, classifiers were presented auditorily to create a highly constraining context intended to trigger prediction of the following semantic information. We investigated whether prediction could influence subsequent within-language and cross-languages production. Both experiments of the present study confirmed an influence of prediction by demonstrating a semantic classifier congruency effect with faster responses in the congruent condition than in the incongruent condition. Furthermore, consistent with our own previous study (Tong et al., 2025), the semantic classifier congruency effect was found when classifier language and response language were the same, indicating a prediction effect for subsequent within-language production. However, when classifier language and response language were different, we did not find any semantic classifier congruency effect in Experiment 1, and this effect was even reversed in Experiment 2. We suspect that the concept (i.e., semantic information) generated by prediction and the language indicated by the classifier were integrated as two components of a task representation. Thus, at least when focusing on the influence of prediction on language production, the prediction seems to refer to a language-specific lemma rather than to a non-verbal concept.

Constraints and limitations

In our current study, three limitations can be noted that could be addressed in future research. First, our experiments were not designed to specifically disentangle the effects of consecutive response-language relation and consecutive classifier-language relation on language production. More specifically, we focused on the influence of consecutive response-language relation but did not analyze the data with respect to a possible influence of consecutive classifier-language relation (which would have been possible in Experiment 1). Yet, comprehending a classifier in the current trial might also be affected by the classifier language in the previous trial. For example, if the classifier language in the current trial differs from the classifier language in the previous trial, the prediction process might slow down due to the language switch between classifiers. Such an influence could be expected because previous research suggests that predictions can linger, with previously predicted words remaining accessible in memory for a certain period (Rommers & Federmeier, 2018), and thus potentially also their language. In addition, language switch costs were observed not only in language-production tasks but also in language-comprehension tasks (see, for example, Jackson et al., 2004; Olson, 2017; but see also Declerck et al., 2019, for evidence that language-switch costs in language comprehension are less systematically observed than in language production).

As a second limitation, it has to be noted that we used the age of L2 acquisition, the self-rated language skills in English, and an online sentence completion task to assess the L2 proficiency of the participants. Although these measures offer a certain degree of insight into the participants' language skills, they may not provide fully accurate information about participants' actual proficiency. Thus, in future research, language proficiency of participants could be tested with different measures and could also be included in the analyses to explore the influence of language proficiency (especially in L2) on prediction effects directly.

Finally, we also suggest that the cloze probability of the noun with respect to the classifier should be tested in future studies. Even though we asked a different group of participants to evaluate the congruency of the classifier-noun combination using a 5-point scale (Tong et al., 2025), this might not have been enough to represent the cloze probability.

Supplemental Material

Supplemental material, sj-docx-1-ijb-10.1177_13670069251353414 for Within-language and cross-languages prediction: Evidence from semantic classifier congruency by Jing Tong, Iring Koch and Andrea M. Philipp in International Journal of Bilingualism

Appendix

| Classifier (English) | Classifier (Chinese) | Congruent (English) | Congruent (Chinese) | Incongruent (English) | Incongruent (Chinese) |
|---|---|---|---|---|---|
| a basket of | 一篮 | apple | 苹果 | coin | 硬币 |
| a bottle of | 一瓶 | milk | 牛奶 | shoe | 鞋 |
| a bouquet of | 一束 | flower | 花 | bread | 面包 |
| a bowl of | 一碗 | noodle | 面条 | card | 纸牌 |
| a box of | 一箱 | candy | 糖果 | student | 学生 |
| a bundle of | 一捆 | hay | 干草 | milk | 牛奶 |
| a coil of | 一卷 | wire | 电线 | ant | 蚂蚁 |
| a crew of | 一队 | fireman | 消防员 | candy | 糖果 |
| a cup of | 一杯 | tea | 茶 | brick | 砖头 |
| a deck of | 一副 | card | 纸牌 | tea | 茶 |
| a drop of | 一滴 | water | 水 | flower | 花 |
| a grain of | 一粒 | wheat | 麦子 | apple | 苹果 |
| a group of | 一群 | student | 学生 | glue | 胶水 |
| a jar of | 一罐 | jam | 果酱 | hay | 干草 |
| a nest of | 一窝 | ant | 蚂蚁 | tissue | 纸巾 |
| a packet of | 一包 | tissue | 纸巾 | fireman | 消防员 |
| a pair of | 一双 | shoe | 鞋 | water | 水 |
| a pile of | 一堆 | brick | 砖头 | jam | 果酱 |
| a set of | 一套 | stamp | 邮票 | wheat | 麦子 |
| a shovel of | 一铲 | snow | 雪 | honey | 蜂蜜 |
| a slice of | 一片 | bread | 面包 | wire | 电线 |
| a spoon of | 一勺 | honey | 蜂蜜 | stamp | 邮票 |
| a stack of | 一摞 | coin | 硬币 | noodle | 面条 |
| a tube of | 一管 | glue | 胶水 | snow | 雪 |

Acknowledgements

Thanks to Anna Thomas for experiment execution.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
