Abstract

A comparison is made between probability and relative plausibility as approaches for the interpretation of evidence. It is argued that a probabilistic approach is capable of answering the criticisms of the proponents of relative plausibility. It is also shown that a probabilistic approach can answer the problem of overlapping where there is evidence that each side claims supports its theory of what happened.

Introduction

Over a period of 25 years and three editions, a seminal book Statistics and the Evaluation of Evidence for Forensic Scientists has described the underlying ideas, and mapped the progress of these ideas, in the area named in its title, an area which has come to be known as forensic statistics. The first edition was published in 1995 (Aitken, 1995). As the manuscript neared completion, the publisher made two suggestions that did not make it into the published version of the book. The first related to the title: it was suggested that the word ‘interpretation’ be included, until it was pointed out that the book was about ‘evaluation’, not ‘interpretation’, and that the two words were not synonymous. The second suggestion was that the cover could include a picture of a fingerprint. It was pointed out in this case that there was very little on fingerprints in the book. There is, however, an analogy between evidence evaluation and the scales of justice. Thus it was that the book cover included the scales of justice, images of which have also appeared on the two subsequent editions.

There may have been little on interpretation in the first edition, a reflection of the relationship between statistics and forensic science at the time. In the second edition, in 2004, there were about 80 pages on interpretation and a discussion of transfer evidence, reflecting the work on propositions done in the late 1990s; see Cook et al. (1998a, 1998b). Now, 25 years after the appearance of the first edition, there are about 300 pages on interpretation within nearly 1000 pages of material, an increase which can only partially be explained by an increase in type size and margin sizes from the previous two editions. However, the title remains unchanged to ensure continuity of ideas from the beginning.

The primary concern of the first edition was with technical matters. DNA profiling was in its relative infancy, having been around for less than 10 years. Its arrival helped to introduce probabilistic reasoning to the courts. There were many teething problems, as the court transcripts of the time show. These were being overcome and there were thoughts as to which other areas of forensic science might be amenable to probabilistic treatment. Much probabilistic work had been done on evidence in the form of the refractive index of glass fragments, with a seminal paper by Lindley (1977). The data for that paper had been provided by Ian Evett, who has published many papers on statistics, probabilistic inference and forensic science over the years. Since then, the technical aspects of the evaluation of scientific evidence have become increasingly accepted in the courts and the emphasis has shifted increasingly to interpretation and the best approach for the communication of evidential value to the fact-finder, judge or jury depending on jurisdiction.

The likelihood ratio is generally accepted as the best way of evaluating evidence. That it is the best way was first explained by Good (1989) and it is explicitly recommended by the European Network of Forensic Science Institutes (ENFSI) in their guidelines for evaluative reporting (ENFSI, 2015). One can read:

Evaluation of forensic science findings in court uses probability as a measure of uncertainty. This is based upon the findings, associated data and expert knowledge, case specific propositions and conditioning information. (ENFSI Guideline, point n. 2.3, at p. 6)

Evaluation will follow the principles outlined in Guidance note 1 (refer to paragraph 4.0). It is based on the assignment of a likelihood ratio. Reporting practice should conform to these logical principles. This framework for evaluative reporting applies to all forensic science disciplines. The likelihood ratio measures the strength of support the findings provide to discriminate between propositions of interest. It is scientifically accepted, providing a logically defensible way to deal with inferential reasoning. (ENFSI Guideline, point n. 2.4, at p. 6)

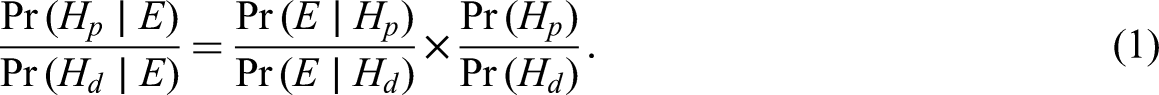

The likelihood ratio is part of the odds form of Bayes’ theorem. Consider two propositions in the context of a criminal trial,

[…] since it is absolutely impossible for us [the experts] to know the a priori probability, we cannot say: this coincidence proves that the ratio of the forgery’s probability to the inverse probability is a real value. We can only say: following the observation of this coincidence, this ratio becomes X times greater than before the observation. (1908: 504)
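As a minimal sketch of the updating described in the quotation above, the odds form of Bayes’ theorem multiplies the prior odds by the likelihood ratio to give the posterior odds. The Python fragment below is an illustration only: the function names and all numerical values are assumptions chosen for this example and do not come from the source. It shows that the same likelihood ratio makes the odds X times greater whatever the prior odds are, while the resulting posterior probability depends on a prior that the expert does not know.

```python
from fractions import Fraction


def posterior_odds(prior_odds, likelihood_ratio):
    """Odds form of Bayes' theorem: posterior odds = likelihood ratio * prior odds."""
    return likelihood_ratio * prior_odds


def odds_to_probability(odds):
    """Convert odds in favour of a proposition into a probability."""
    return odds / (1 + odds)


# Illustrative value only: the evidence is assumed to be 1000 times more
# probable under the first proposition than under the second.
likelihood_ratio = Fraction(1000)

# Three hypothetical prior odds that a fact-finder might hold.
for prior in (Fraction(1, 10000), Fraction(1, 100), Fraction(1, 1)):
    post = posterior_odds(prior, likelihood_ratio)
    print(
        f"prior odds {prior}: posterior odds {post}, "
        f"posterior probability {float(odds_to_probability(post)):.4f}"
    )
```

The expert's contribution is the multiplier alone; the choice of prior odds, and hence the posterior probability, stays with the fact-finder.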

Unfortunately, for those unfamiliar with probabilistic reasoning (and, we suggest, this may be most people in the context of a criminal trial: judge, advocates and barristers, jurors), this interpretation is far from straightforward. Thus it was that, as the technical matters became more accepted, the emphasis of research on the role of probabilistic reasoning and statistics in forensic science moved from the technical matters towards interpretation. Lay people, in a statistical sense, have difficulty understanding the statistician’s verbal description of the likelihood ratio. Many errors of interpretation are given in Aitken et al. (2021).

The underlying premise of these ideas is that legal reasoning is probabilistic in nature. In a civil trial, judgment is based on a balance of probabilities. In a criminal trial, judgment is based on a finding beyond reasonable doubt, where that concept may be considered probabilistically. However, an alternative premise has recently been suggested. Instead of probabilistic reasoning as the basis for juridical proof the suggestion is that juridical proof should be based on a concept known as explanationism. The concept of explanationism has been developed because of apparent analytic difficulties detected in the probabilistic paradigm. It has been argued that the strengths of the competing paradigm involving explanatory inferences (referred to as relative plausibility) have become too persuasive to ignore. It is explained here that probabilistic reasoning can overcome these ‘analytic difficulties’. As a consequence, probabilistic reasoning remains the best way in which to evaluate and interpret evidence.

Interpretation – probability and relative plausibility

Allen and Pardo (2019) in the introductory paper to a special issue of International Journal of Evidence and Proof discuss the merits of the approach known as relative plausibility to interpretation as a replacement for probabilistic reasoning and argue that a paradigm shift is being experienced. The remainder of the special issue contains 20 short papers discussing the relative merits of relative plausibility and a final rejoinder from Allen and Pardo. The interested reader can study these papers at their leisure. Here we comment briefly on four problems that Allen and Pardo (2019) argue render probability implausible. These are the problems of:

(i) the subjective nature of an assignation of coherent numbers to evidence; (ii) the item-by-item argument associated with a probabilistic account, rather than a holistic argument; (iii) the inconsistency between the assumptions of probability and those of legal doctrine and jury instructions; and (iv) the inconsistency of probabilistic thresholds for standards of proof with the legal process and goals for these standards.

Allen and Pardo allow for the relationship between probability and uncertainty. The terms ‘probabilistic reasoning’ and ‘probability calculus’ are synonymous. The only way to combine uncertainties about events or different items of evidence is to use the rules of probability.

The comments about reasoning under uncertainty are made in the context of the law. However, the ability to reason coherently in the presence of uncertainty is necessary in many disciplines, not just the law. Probabilistic reasoning is the best way to do it. Even critical commentators (e.g. Allen, 2013) note that ‘none of the conceptualizations of probability except that as a subjective degree of belief can function at trial’. Note also that the choice of probability as a framework for reasoning under uncertainty is not made to suggest that it provides a model for the working of the legal process as a whole. Instead, it is argued that probability helps (i) formulate principles for logical modes of reasoning, (ii) formulate relevant questions and (iii) define the boundaries between the competences of expert witnesses and legal decision-makers. There is no suggestion that the responsibility for the reasoning and the decision-making processes is to be delegated to an abstract theory or that statisticians and other reasoners with probability should comment on the administration of the law. However, there are many instances in reports of criminal cases of incorrect probabilistic reasoning. At least eight different types of fallacious probabilistic reasoning in legal cases identified in the literature are gathered together in Aitken et al. (2021). It is to aid in the reduction of these instances of fallacious reasoning that the arguments in support of probabilistic reasoning in the criminal justice system are presented here.

A fifth problem, known as the overlapping problem (Pardo, 2013), is also discussed. This problem concerns the situation where the same evidence is interpreted differently by the prosecution and defence.

The subjective nature of an assignation of coherent numbers to evidence

Allen and Pardo (2019) cite three cases: Anderson v Liberty Lobby, Inc., 477 U.S. 242, 252 (1986), which explains that summary judgment depends on whether ‘reasonable jurors could find by a preponderance of the evidence that the plaintiff is entitled to a verdict’; Reeves v Sanderson Plumbing Prods., Inc., 530 U.S. 133, 150 (2000), which explains that the standard for judgment as a matter of law ‘mirrors’ the summary-judgment standard; and Jackson v Virginia, 443 U.S. 307, 318 (1979), which explains that the sufficiency standard for criminal convictions depends on whether ‘any rational trier of fact could find guilt beyond a reasonable doubt’. Allen and Pardo argue that the legal standards for measuring sufficiency of the evidence in civil and criminal cases depend on being able to separate reasonable from unreasonable conclusions. They then argue that under the subjective account every decision is reasonable (at least so long as it is otherwise internally consistent). The subjective account thus fails to explain the central feature of the legal doctrine of reasonableness.

This argument conflates internal consistency with reasonableness. It is to be hoped that supporters of probabilistic reasoning are reasonable people who can distinguish reasonable inferences and decisions from unreasonable (though internally consistent) inferences and decisions. It is also to be hoped that the numerical assignations of probability to uncertain outcomes are chosen carefully to represent their measure of belief and are not unconstrained ‘in any way by the quality of the evidence or its probative value in proving the facts of consequence at trial’ (Allen and Pardo, 2019: 12). Also, decisions by fact-finders are ultimately subjective, whether or not numbers are involved. Those who argue numerically assign numbers to measures of uncertainty whereas those who do not argue numerically assign verbal descriptions to them. Both lines of argument are subjective. However, errors of interpretation are more easily exposed if a numerical argument is used than a verbal argument. Many errors of interpretation are listed in Aitken et al. (2021). It is not that probabilistic reasoners do not make errors, only that their reasoning is more explicit than that of those who argue verbally. An important advantage of the probabilistic approach is that the lines of reasoning of an argument expressed by an expert, a judge or a party to the case are made explicit and it is easy to argue against numerical values chosen. It is easier to analyse critically a probabilistic statement about the relationship between a certain finding and a proposition than a verbal statement that the finding is ‘consistent with’ the proposition or that a given proposition is a logical consequence of a given observation, for example.

The item-by-item argument associated with a probabilistic account rather than with a holistic argument

The probabilistic account (in both objective and subjective forms) is inconsistent with how fact-finders process and reason with evidence, which occurs holistically and not in the item-by-item fashion envisioned by the account. Although the account may nevertheless have some normative or educational value in understanding aspects of evidence, it is plainly inconsistent with how actual evidence evaluation works. This inconsistency is a problem for any such empirical account that purports to explain actual standards of proof because it appears to require or imply that fact-finders evaluate evidence in a manner that they obviously do not. (Allen and Pardo, 2019: 13)

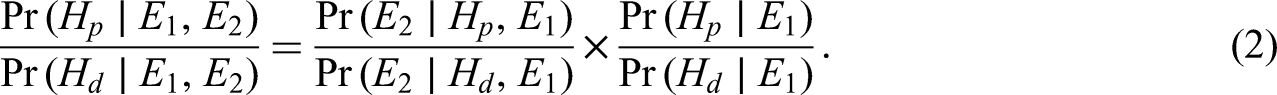

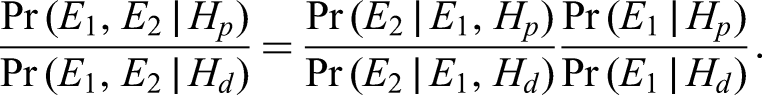

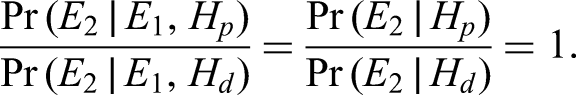

Bayes’ theorem provides a very good method for reasoning with evidence (e.g. Hahn et al., 2013) and for updating beliefs as evidence is led (e.g. Oaksford and Chater, 2007). Consider the evidence E in (1) as

Allen and Pardo (2019: 12), in footnote 43, disagree with this approach to the assimilation of evidence:

What any offer of evidence means depends on all the evidence in the case. What might appear initially to be inculpatory may actually turn out to be exculpatory. Thus, serial updating is a waste of time and also confuses what is a learning process with an updating process. Trials are educational processes where fact-finders learn about the parties’ explanations rather than updating processes that involve updating a complete and coherent set of priors. The fact-finder typically has no idea what those ‘priors’ are until all the evidence is in.

Evidence is led sequentially. It is hard to imagine a fact-finder withholding (at least privately) a belief in the ultimate issue until all the evidence has been heard. The value of each new item of evidence,

As well as incremental updating, a holistic view of the evidence is provided by so-called Bayesian networks or Bayes’ nets (Roberts and Aitken, 2014; Robertson and Vignaux, 1993; Taroni et al., 2014). Each piece of evidence at a trial and the ultimate issue (e.g. guilt or innocence) is represented by a node in the network. The relationships amongst the different pieces of evidence and the ultimate issue are represented by lines joining appropriate nodes. The strengths of the relationships are noted in an adjoining conditional probability table. Software is available to process Bayes’ nets. As each piece of evidence is considered, the Bayes’ net can be updated incrementally as with the formulaic updating of (2).
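The relationship between incremental updating and a holistic view can be sketched with a toy network. The Python fragment below is not the software referred to above; it is a hand-rolled illustration in which the three-node structure (an ultimate issue H with two items of evidence E1 and E2), the assumption that E1 and E2 are conditionally independent given H, and every probability in the tables are hypothetical choices made for this example. Under those assumptions, updating on one item of evidence at a time gives the same posterior as conditioning on all the evidence at once.

```python
# Hypothetical three-node network: H -> E1 and H -> E2.
# All numbers are illustrative assumptions, not values from the source.
prior_h = {True: 0.5, False: 0.5}   # P(H)
cpt_e1 = {True: 0.8, False: 0.1}    # P(E1 observed | H)
cpt_e2 = {True: 0.6, False: 0.3}    # P(E2 observed | H)


def update(belief, cpt, observed):
    """Update the belief in H on one item of evidence (one Bayes step)."""
    unnormalised = {
        h: belief[h] * (cpt[h] if observed else 1 - cpt[h]) for h in belief
    }
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


def joint_posterior(prior, cpts, observations):
    """Condition on all items of evidence at once by enumerating H."""
    unnormalised = {}
    for h in prior:
        p = prior[h]
        for cpt, observed in zip(cpts, observations):
            p *= cpt[h] if observed else 1 - cpt[h]
        unnormalised[h] = p
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


# Incremental route: E1 is led first, then E2.
after_e1 = update(prior_h, cpt_e1, True)
after_e2 = update(after_e1, cpt_e2, True)

# Holistic route: both items considered together.
holistic = joint_posterior(prior_h, [cpt_e1, cpt_e2], [True, True])

print(f"after E1 only:        P(H) = {after_e1[True]:.4f}")
print(f"after E1 then E2:     P(H) = {after_e2[True]:.4f}")
print(f"all evidence at once: P(H) = {holistic[True]:.4f}")
```

Where items of evidence are not conditionally independent, each step would need a table conditioned on the evidence already considered, which is the kind of bookkeeping that Bayes’ net software automates.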

It has been commented that Wigmore charts (Roberts and Aitken, 2014; Wigmore, 1937) and Bayes’ nets ‘will generally reflect the standpoint of the individual constructing the graph, rather than any putative “objective truth”’ (Dawid et al., 2011: 147). This is a valid point. A Bayes’ net helps an individual to structure the evidence and the relationships amongst different pieces of evidence better to understand the overall case. It is a benefit of the Bayes’ net that an individual can be assisted in their structuring of the evidence. Bayes’ nets from different individuals considering the same trial may be compared. Similarities and differences in their understanding of the evidence will be easy to determine. Debate amongst the individuals concerned will, hopefully, lead to a common Bayes’ net and a consensus view of the relationship between the evidence and the ultimate issue.

It is not suggested that, in consideration of a case, an evaluator of the evidence should consider all possible combinations of items of evidence with their associated uncertainties. Of course, no human being has the time to do the computations. That is why Bayes’ nets were developed (see Lauritzen and Spiegelhalter, 1988, where the phrase ‘local computations’ in the title of the paper hints at the method described). As an example of this approach in practice, consider an application to offender profiling (or, more correctly, specific case analysis); see Aitken et al. (1996). There, Bayes’ nets were developed in a collaboration amongst senior police officers, statisticians and computer scientists to add structure to the investigation of sexually motivated child homicides. Various nets were developed and subjective conditional probabilities included based on the opinions of the police officers. One of the group was a very assiduous police officer who had compiled a database of all known cases of sexually motivated child homicides (sadly, a large number). From this database, frequentist conditional probabilities could be obtained and compared with the subjective conditional probabilities of the senior police officers. The two sets of probabilities were very similar. On reflection, this result is not surprising. The officers concerned were very experienced in these types of cases and had a very good feel for the values of the conditional probabilities with which the group was trying to work.

Bayes’ nets provide the ability to deconstruct a complicated scenario into local relationships. It is then possible for the appropriate conditional probabilities to be assigned subjectively. With suitably experienced scientists or police officers these subjective probabilities will be close to reality. The ability of experienced individuals to express their uncertainties as probabilities enables Bayes’ nets to lead to a more accurate outcome than their detractors suggest. See Biedermann et al. (2011).

It is also possible to consider ‘qualitative Bayesian networks’. Qualitative Bayes’ nets have been developed and supported by scientific and legal literature (see, e.g. Robertson and Vignaux, 1993). The logic dates back to Wigmore (1913). The qualitative relationships amongst variables cover qualitative influences which define the sign of the direct influence one variable has on another. This can be easily translated into sentences like ‘variable A makes variable B more likely’.

The ability of Bayes’ nets to deal with complex scenarios is not just applicable to scientific evidence. Other examples of their use in discussions of criminal cases are given by Edwards (1991), Kadane and Schum (1996) and Thagard (2003).

The inconsistency between the assumptions of probability and those of legal doctrine and jury instructions

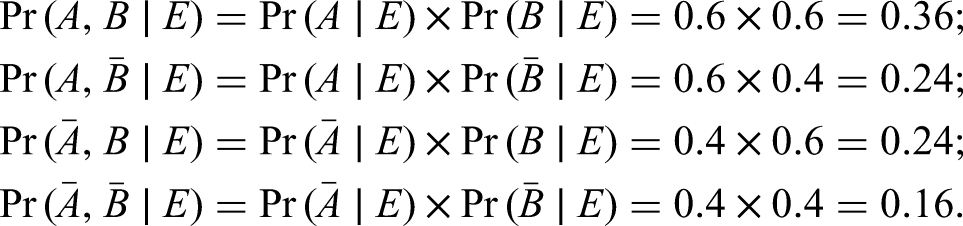

Allen and Pardo (2019) note that ‘legal doctrine and jury instructions typically dictate that the standard of proof applies to the individual elements, not to cases as a whole. Thus, in a two-element claim, A and B, if the plaintiff proves each to 0.6, then the plaintiff wins according to the law. However, if these two elements are independent of each other, then the probability of plaintiff’s claim is 0.36, not greater than 0.5. This inconsistency has come to be known as the conjunction problem.’ Many legal scholars cite this problem as a reason why probabilistic reasoning cannot be used in a court of law.
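The arithmetic behind the conjunction problem quoted above can be checked directly. The following Python sketch uses the figures from the quotation (two elements, each proven to 0.6, and a threshold of 0.5); the function name is hypothetical, and the multiplication is valid only under the independence assumption stated in the quotation.

```python
from fractions import Fraction
from math import prod


def conjunction_probability(element_probabilities):
    """Probability that every element holds, ASSUMING the elements are independent."""
    return prod(element_probabilities, start=Fraction(1))


# Figures from the quoted example: elements A and B, each proven to 0.6.
elements = [Fraction(6, 10), Fraction(6, 10)]
threshold = Fraction(1, 2)  # 'more probable than not'

each_element_proven = all(p > threshold for p in elements)
case_probability = conjunction_probability(elements)

print("each element exceeds the threshold:", each_element_proven)
print("probability of the whole claim:", float(case_probability))
print("whole claim exceeds the threshold:", case_probability > threshold)
```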

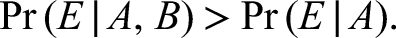

This conjunction problem was described earlier by Cohen (1977). Denote the elements A and B as in Allen and Pardo (2019)’s notation. They are both part of the plaintiff’s case. Both need to be ‘proven’ on the balance of probabilities to satisfy the burden of proof. There is one item of evidence, denoted E, and A and B are deemed independent, given E. The evidence is deemed to support both elements as it can be shown that

The example given by Cohen (1977) is that of a car driver who sues their insurance company because it refuses to compensate them after an accident. The two component issues (elements) that are disputed are, first, the circumstances of the crash (

However, further analysis (see, for example, Nesson, 1985) reveals that the plaintiff’s case (story) has a higher probability than any other case (story). Denote the complement (negation) of A as

A similar approach to this conjunction problem is best described by the illustration of what is known as Linda’s example (Sides et al., 2002). Here, the evidence E is the following information about Linda: ‘she is 31 years old, single, outspoken, and very bright; she majored in philosophy and, as a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations’.

The elements are

A majority of respondents across a variety of studies ranked the conjunction of A and B as more probable than A (see Hertwig and Chase (1998) for a review of findings; the original report is Tversky and Kahneman (1983)). This ranking is in contradiction to the laws of probability that state that, for two events A and B,

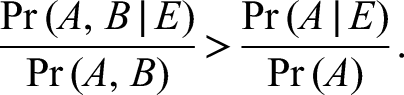

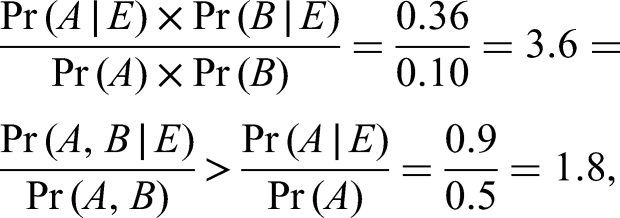

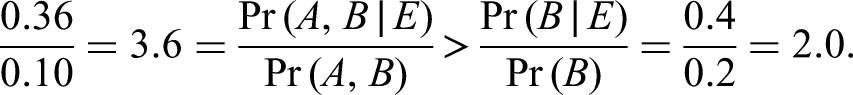

This paradoxical finding can be understood by considering relative values of unconditional and conditional probabilities. Consider a definition that evidence E favours

The numbers in the example from Nesson (1985) are for illustrative purposes only. The important principle in the discussion of Linda’s example is that it is the relative values of the probabilities, not their absolute values, that are important. For a discussion of this principle, an example is given in Cheng (2013) with a rebuttal in Allen and Stein (2013), a rebuttal which is, in turn, rebutted below. Consider (Cheng, 2013) independent elements A and B in a case, and evidence E such that

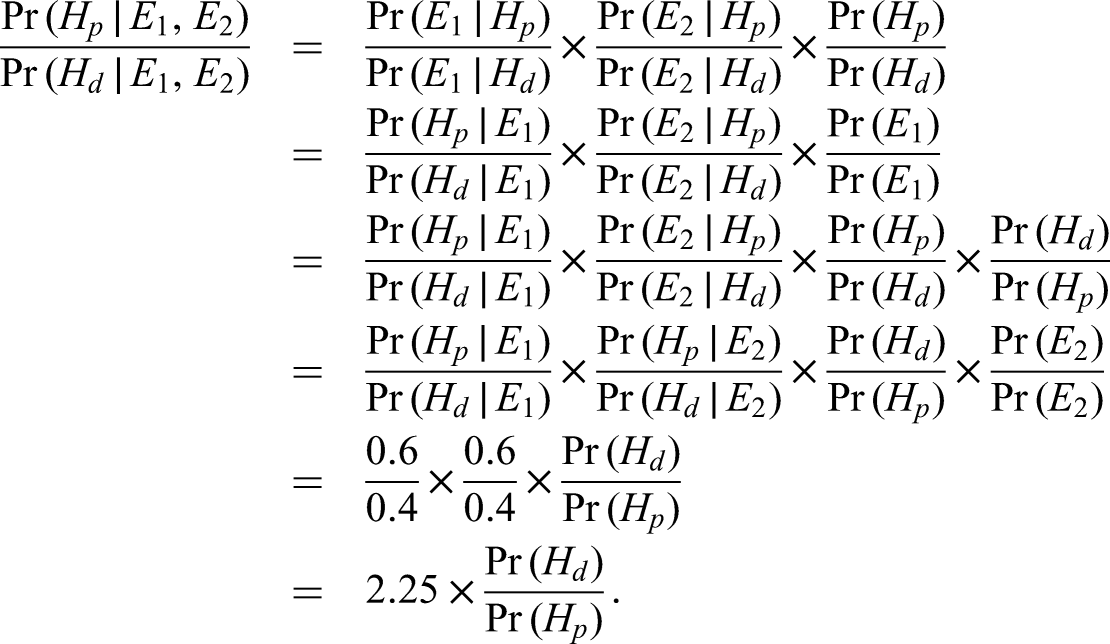

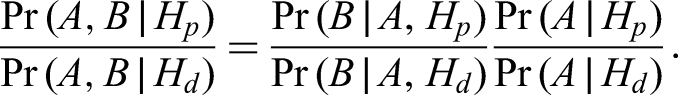

There is another conjunction problem, one which is discussed by Dawid (1987) and Cohen (1988). This is concerned with evidence evaluation, not the posterior probabilities of a case or elements of a case. This problem has only one element (better thought of here as a proposition), which is denoted

As

The first problem is faced by the triers of fact, the judges and juries. A decision has to be made, guilty or not guilty in a criminal case or a finding for the plaintiff or defendant in a civil case. In these situations, the burden of proof is, for example, beyond reasonable doubt in a criminal case, or on the balance of probabilities in a civil case. The decision should then be made on the situation which is best supported by the evidence. For those of a probabilistic bent, while the absolute values of the probabilities may be of interest, it is the relative values of the probabilities of the various stories provided by the prosecution and defence or plaintiff and defendant that are important. The second conjunction problem refers to the problem faced by an expert witness. They have to offer an opinion on the value of their evidence. This is best done with the likelihood ratio.

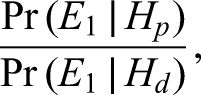

The likelihood ratio provides the best approach to the evaluation of evidence. It is a statistic which is a function of the probabilities of evidence, conditional on propositions, or elements. Consideration of this statistic is a matter for an expert witness. The inverse probabilities, those which are functions of probabilities of propositions or elements, conditional on evidence, are posterior probabilities. Consideration of these is a matter for a trier of fact.

In the absence of appropriate data, verbal summaries of relative values will suffice. The important principle is that it is relative values that matter, values that can be expressed verbally.

The inconsistency of probabilistic thresholds for standards of proof with the legal process and goals for these standards

A probabilistic approach to decision-making eases consistent decision-making. In a trial there are two sides, a plaintiff and a defendant in a civil trial and a prosecution and a defence in a criminal trial. Evidence is led and at the conclusion of the presentation of all the evidence and the summing up, a decision has to be made by the fact-finder (judge or jury) to find for the plaintiff or the defendant in a civil trial or to find the defendant in a criminal trial guilty or innocent. As before, denote the plaintiff’s or prosecution’s case

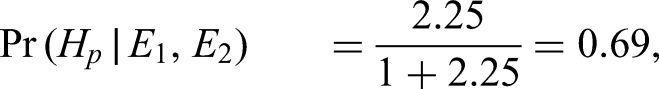

The fact-finder using this approach is presented at the conclusion of the presentation of all the evidence and the summing up with a value for the posterior odds

It is assumed that

The overlapping problem

Rule 401(a) of the Federal Rules of Evidence states that ‘[e]vidence is relevant if it has any tendency to make a fact more or less probable than it would be without the evidence’. Equation (1) shows that this is the case if the likelihood ratio is different from one. Pardo (2013) argues that this condition is not necessary. It is possible for a likelihood ratio to be one but for the evidence to be relevant. In any given trial, there may be evidence that each side claims supports its theory of what happened, an ‘overlap’ of evidence. The example given to illustrate this point is paraphrased here. The defendant, an inmate at a maximum security prison, was charged with two counts of battery on prison guards. There was an altercation between the defendant and guards after the defendant refused to return a food tray in his cell. Evidence (

For evidence interpretation, the propositions being considered need to be specified clearly in a framework of circumstances. Furthermore, it is important to separate propositions from explanations (Evett et al., 2000; Hicks et al., 2015). The importance of this separation is discussed in Aitken et al. (2021):

For example, statements like ‘the crime stain originated from the PoI (person of interest)’ and ‘the crime stain originated from some unknown person who happened to have the same genotype as the PoI’ represent explanations. The probability of the evidence that the DNA profile of the crime stain genotype matches the profile of the PoI given the first explanation is 1, but the probability of the evidence given the alternative explanation is also 1. Thus, the likelihood ratio is 1 and would not help advance resolution of the issues in the case. (2021: 489)

The quoted statements from Aitken et al. (2021) represent explanations, not propositions. Both statements explain the evidence of the DNA profile of the crime stain. Thus, it is not possible to determine the value of the evidence.

It is possible to define propositions so that the value of the evidence would be determined. For example, the prosecution proposition (

Pardo (2013) states that ‘[t]he two competing theories for the assault were that (a) the defendant was frustrated and angry about not receiving the package, withheld the tray and attacked the guard; and (b) he withheld the tray to obtain the sergeant’s attention and in response the guards attacked him.’ However, in the context of the discussion above about propositions and explanations, these two ‘competing theories’ are explanations, not propositions.

The corresponding propositions are better phrased as (a) the defendant attacked the guard (

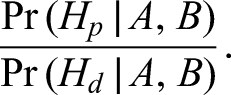

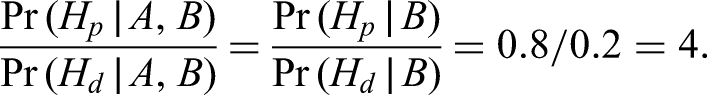

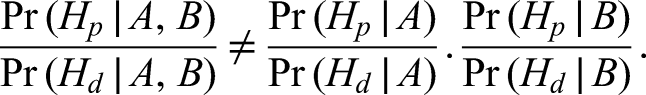

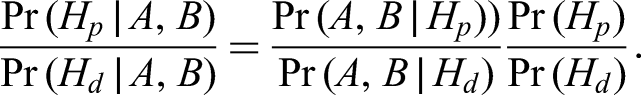

There is a reason why the ratio of probabilities,

The jury is interested in the ratio of the likelihood the defendant attacked the guard given the defendant had not received a package sent to him by his family, details of the package and the mail procedures at the prison to the likelihood the guard attacked the defendant, given the defendant had not received a package sent to him by his family, details of the package and the mail procedures at the prison. There is no particular reason why this ratio should be one. There is no reductio ad absurdum for the likelihood ratio theory. With careful consideration of, and distinction between, explanations and propositions, the overlapping problem ceases to be a problem.

Another example of evidence that has a likelihood ratio of 1 and is relevant is given by Pardo (2013).

A witness will testify that someone matching the defendant’s description was seen fleeing a crime scene. The defendant claims that it was his identical twin and introduces evidence establishing the twin’s existence. Suppose there is no reason to believe the testimony distinguishes the defendant from his twin.

In a comparison of the likelihood of the defendant’s guilt versus that of his twin, there does not appear to be any reason to think the likelihood ratio is different from 1: the ratio of the probability the person fleeing the crime scene ‘matched’ the defendant’s description given the defendant’s guilt to the probability the person fleeing the crime scene ‘matched’ the defendant’s description given the defendant’s identical twin’s guilt will be 1. However, the evidence is relevant.

There are two pieces of evidence:

Another example given by Pardo (2013) to show the difficulty of a probabilistic approach to evidence evaluation illustrates how the use of a likelihood ratio highlights the differing factors which need to be considered when assessing guilt. The example concerns two poisons. The prosecution alleges (

Assume

A final example from Pardo (2013) is that of a lottery.

There is a murder victim. The motive appears to be that the victim ran an illegal lottery and refused to pay the winner. It is unknown who actually won the lottery. The prosecution claims it was the defendant, and the defendant claims it was a rival. The defendant purchased one of the one thousand total lottery tickets and the rival purchased ninety-nine tickets. Suppose there is also evidence that the other nine hundred tickets were never sold and account has been taken of them.

The probability the defendant won the lottery is 1/100 if the other nine hundred tickets were not entered in the lottery and 1/1000 if they were. Similarly, the probability the rival won the lottery is 99/100 if the other nine hundred tickets were not entered and 99/1000 if they were. The ratio of the two probabilities is 1/99 in either case.
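The invariance of this ratio can be verified with exact fractions. The short Python sketch below uses the ticket counts from the lottery example; the function and variable names are hypothetical and are introduced only for this illustration.

```python
from fractions import Fraction


def win_probabilities(defendant_tickets, rival_tickets, other_tickets_entered):
    """Return (P(defendant won), P(rival won)) for a fair draw among the entered tickets."""
    total = defendant_tickets + rival_tickets + other_tickets_entered
    return Fraction(defendant_tickets, total), Fraction(rival_tickets, total)


# The two scenarios: the other 900 tickets were not entered, or they were.
for others in (0, 900):
    p_defendant, p_rival = win_probabilities(1, 99, others)
    ratio = p_defendant / p_rival
    print(
        f"other tickets entered: {others}; "
        f"defendant {p_defendant}, rival {p_rival}, ratio {ratio}"
    )
```

The denominator cancels when the ratio is formed, which is why the number of unsold tickets does not affect the comparison between the defendant and the rival.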

Conclusion

It has been written that ‘[w]e believe it unfortunate that the triers of fact, jurors or judges (in a bench trial), never see evidence marshalled and arguments displayed in a Wigmorean manner. Wigmore’s intended audience for his methods consisted of attorneys preparing for trial; he said almost nothing about the impact of his methods on the behaviour of triers of fact’ (Kadane and Schum, 1996: 258). Likewise, it is unfortunate that barristers, advocates and attorneys do not make better use of the insights into the structure of the entirety of the evidence at trial afforded by the use of Bayesian networks. A well-presented Bayesian network would aid prosecutors and defenders in their case summaries at the beginning and end of a trial and help triers of fact in their deliberations throughout the trial.

Probabilities are subjective beliefs, the likelihood ratio is the measure for the value of evidence and the Bayesian approach is the approach to update beliefs as evidence is presented. Bayesian networks are a tool for use in practice to clarify the structure and dependencies between propositions and masses of evidence. Quantification through probability provides an approach to the evaluation of evidence which is clearer and more easily analysed critically than a verbal description of the value. A sequential probabilistic argument provides a coherent approach to evaluation and a better assessment of the evidence than an argument that tries to consider all the evidence as a whole or one that mistakenly combines posterior probabilities.

Many have echoed Mr Justice Holmes’ 1897 opinion that ‘For the rational study of the law the black-letter man may be the man of the present, but the man of the future is the man of statistics’ (Holmes, 1897: 469). Twining (1990) added to Holmes’ statement that

[…] the calculus of chance, Bayes’ Theorem, and a general ability to spot fallacies and abuses in statistical argument should be part of the basic training of every lawyer. (1990: 14)

Twining (1990) then reiterated the point by writing:

The continuing ‘probabilities debate’ is more problematic. For, while it raises fundamental problems in probability theory and logic, it has important practical implications. The ‘unreality’ of some of these debates is in part attributable to the over-concentration of naive examples, such as the rodeo and blue and green bus problems, which do not take account of important procedural and other contextual factors. But theories of probability are central to evaluation of evidence and to elucidating basic evidentiary concepts such as ‘standard of proof’, probative value and ‘prejudicial effect’; and statistical evidence is of increasing practical importance in many different types of proceeding. […] The lawyer of today needs to be a master of elementary statistics. The general aspects of this can be accommodated within the logic of proof […]. (1990: 209)

There have been numerous calls to pay attention to these concepts, ranging from Eggleston, who wrote ‘it is the purpose of this book to discuss the part which probabilities play in the law, and the extent to which existing legal doctrine is compatible with the true role of probabilities in the conduct of human affairs’ (1983: 3), to the former President of the Italian Supreme Court, who recently observed:

The challenge shifts to the field of the concrete effectiveness of the procedural model, while a new professional figure of magistrate is emerging in judicial practice. This would be a man of all-round culture, not only juridical but also humanistic and scientific, a responsible and effective evaluator of the fact and interpreter of law, a good reasoner and quality decision maker, skilled in the exercise of the art of judging, expert in logical-inferential techniques and in the verification of statistical-probabilistic schemes, as well as in the techniques of argumentative writing. However, he should be free from constraints and conditions other than the law, the reason and the ethics of limit and doubt. (Canzio, 2021: 91)

Acknowledgement

The authors thank Professor David Kaye (Penn State Law) for very helpful comments on early drafts of this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Schweizerischer Nationalfonds zur Förderung der Wissenschaftlichen Forschung (grant number 100011_204554/1).