Abstract

Neurodiversity refers to the idea that all brains—no matter their differences—are valuable and should be accepted. Attitudes toward the neurodiversity perspective can have real-life impacts on the lives of neurodivergent people, from effects on daily interactions to how professionals deliver services for neurodivergent individuals. In order to identify negative attitudes toward neurodiversity and potentially intervene to improve them, we first need to measure these attitudes. This article describes the development and initial validation of the Neurodiversity Attitudes Questionnaire (NDAQ), including item revision based on expert review, cognitive interviews, systematic evaluation of participants’ response process, and analysis of the instrument’s internal factor structure using exploratory structural equation modeling. Pilot analysis with 351 individuals mostly living in the United States who were currently working in or intending to pursue helping professions indicates that the NDAQ has construct validity, is well understood by participants, and fits a five-factor structure. While the NDAQ represents the first instrument designed to specifically assess attitudes toward the neurodiversity perspective, further validation work is still needed.

Lay Abstract

Neurodiversity refers to the idea that brain differences (including disabilities) are valuable and should be accepted. Attitudes toward neurodiversity can have real-life impacts on the lives of neurodivergent people (those whose brains do not fit society’s “standard”). These impacts can include effects on daily interactions, as well as how professionals such as teachers and doctors deliver services to neurodivergent people. In order to identify negative attitudes toward neurodiversity and potentially improve them, we first need to measure these attitudes. This article describes the development of the Neurodiversity Attitudes Questionnaire (NDAQ). NDAQ development included revision of questionnaire items based on feedback from experts and neurodivergent people, systematically evaluating the way participants responded to questionnaire items, and analysis of how the NDAQ items are grouped into different factors. A preliminary analysis with 351 individuals mostly living in the United States who were currently working or planning to work in a helping profession (e.g. doctors, teachers, therapists, and so on) indicates that the NDAQ measures attitudes toward neurodiversity, is well understood by participants, and fits a five-factor structure. While the NDAQ represents the first instrument designed to specifically assess attitudes toward the broad idea of neurodiversity, further work is still needed.

Models of disability and neurodiversity

The predominant lens for viewing brain differences is the medical model. This model sees differences as abnormalities that need to be prevented, fixed, or cured (Marks, 1997). Widespread use of this model can lead to the public seeing disability as something sad and shameful (Chapman, 2020a). The social model, however, sees disability as being caused by one’s environment (Marks, 1997), where a psychological or physical impairment is separated from disability (i.e. problems one faces in society; Crow, 2010). A person is therefore not disabled due to an impairment; they are disabled to the extent that the world oppresses them due to a poor fit between their needs and the environment (den Houting, 2018).

Both models of disability have distinct strengths and weaknesses. The medical model has the potential to treat medical issues (e.g. gut issues and epilepsy that many people with neurodevelopmental conditions experience; Leader et al., 2022). The social model has helped push the onus of disability off individuals and onto society, leading to more rights for disabled people. However, as stated earlier, the medical model can induce shame and potentially lead to intervening on targets that have been deemed unacceptable by society but are not actually harmful (e.g. non-injurious self-stimulating such as hand flapping (see Bascom, 2011) or homosexuality, which was classified as a mental disorder until 1973 (Drescher, 2015)). The social model can have the effect of ignoring disabled people’s physical embodiment of their impairments (e.g. individuals with chronic pain may feel disabled by both an unaccommodating society and their own bodies (Crow, 2010)).

The concept of neurodiversity presents an alternative (Dwyer, 2022), and although it is often framed in terms of autism (Bridget et al., 2023), it is by no means exclusive to autism. Neurodiversity can refer to the factual reality of diversity in human neurology and cognition (Dwyer et al., 2023; Walker, 2014), but it can also refer to a political position (Dwyer et al., 2023; Ne’eman & Pellicano, 2022). This political position has been called the neurodiversity paradigm (Walker, 2014), neurodiversity perspective (Robertson, 2009), or “neurodiversity approach[es]” (Dwyer, 2022), and it includes the perspective that neurological and cognitive diversity—particularly with regards to neurodivergent people, those whose minds and brains differ from societal norms—is something to be accepted and even celebrated (Walker, 2014). Although autistic scholar Robert Chapman calls neurodiversity a “moving target” because different people define it slightly differently (Chapman, 2020b, p. 219), many generally agree that the neurodiversity perspective entails the following principles (adapted from Walker, 2014):

Differing neurology is valuable for our species

There are no “correct” brains, and therefore, individuals with different types of brains or neurological disabilities should be accepted (see Chapman’s (2020a) use of the phrase “value-neutral” to describe neurodiversity as neither good nor bad)

Differences in neurobiology are subject to social issues similarly to race or gender

The neurodiversity model thus retains and rejects aspects of both the medical and social models of disability. For example, most proponents are vehemently against “cures” for many neurodevelopmental differences, such as autism and attention-deficit/hyperactivity disorder (ADHD), as they view them not as disorders but as different neurotypes, akin to different genders. Neurodiversity advocates thus often promote acceptance of differences and environmental accommodations as opposed to interventions targeted solely at changing individuals. This rejection of the medical model nonetheless acknowledges that, sometimes, it might be worthwhile to intervene at the individual level (Dwyer, 2022).

While the neurodiversity perspective has many strengths, there are some common misconceptions. One misconception is that it is only applicable to individuals with lower support needs or higher cognitive capacity (e.g. individuals who can communicate verbally). Although representation of nonspeaking individuals in the neurodiversity movement can be improved (den Houting, 2018; Russell, 2020), neurodiversity advocates are clear that the perspective’s message of acceptance and inclusion applies regardless of cognitive ability or support needs (Bailin, 2019; den Houting, 2018; Thinking Person’s Guide to Autism, n.d.). That said, neurodiversity advocates do recognize some other conditions, for example, anencephaly, as inherently harmful and as appropriate targets for medical treatment (Chapman, 2019). Indeed, although neurodiversity advocates favor social reforms to support those with epilepsy and depression, most remain open to curing these conditions (Dwyer et al., 2023), suggesting they fall outside a full-blown neurodiversity perspective’s scope. Similarly, neurodiversity advocates are clear that adoption of the neurodiversity perspective should not erase disability (Bailin, 2019; Ballou, 2018), and the model recognizes that many neurodivergent people still need and desire supports that help improve their quality of life (Robertson, 2009; Thinking Person’s Guide to Autism, n.d.). Nonetheless, as with any model or paradigm, the neurodiversity perspective can be criticized. For example, some of Russell (2020)’s concerns include that although the perspective’s tendency to dichotomize individuals into “neurodivergent” and “neurotypical” has some practical benefits, it can oversimplify reality and encourage advocates to castigate neurotypicals, instead of striking at neurotype-based prejudice itself. In addition, neurodivergent identity often relies on psychiatric diagnosis, which itself is part of the medical model that the neurodiversity perspective seeks to disrupt. 
Finally, as an ideology, the neurodiversity movement excludes those who do not share its values. While these critiques may suggest room for refinement of the neurodiversity perspective, its emphasis on acceptance and inclusion has clear positive benefits (Cage et al., 2018; Ferenc et al., 2023).

Why are attitudes toward neurodiversity important?

The neurodiversity perspective is not simply a theoretical lens to view different brains. It also has the potential to change the way the world relates to neurodivergent people. According to Robert Chapman (2020b), the neurodiversity perspective “[c]an alter actual relations; all the way from how we empathise with neurological others on a personal level, to how we design scientific experiments or public spaces. Similarly, within and between neurominorities, it helps us foster not just solidary and resistance, but also grounds the development of shared vocabularies for making sense of our experiences and increasing our understanding of both each other and ourselves. So what starts out first as something epistemically useful, translates into the generation of different social facts, and finally into real world change” (pp. 219–220).

This real-world change can take place at all levels of interactions, and these interactions can be affected by one’s attitude toward neurodiversity. For example, Kim and Gillespie-Lynch (2023) found that endorsement of the neurodiversity movement was associated with less autism stigma. While all types of interaction are important, service providers in particular have an ethical duty to treat their clients humanely and with compassion. This is a core tenet of the ethical guidelines for many service providers (e.g. medical doctors (Orr et al., 1997), behavioral interventionists (Behavior Analyst Certification Board, 2020), teachers (California Teachers Association, n.d.)). Adopting the neurodiversity perspective is a step toward ensuring professionals provide services that are truly supportive and accepting of their clients, as opposed to inadvertently contributing to stigma and prejudice. For example, by introducing families to neurodiversity immediately upon providing a diagnosis, diagnosticians can ensure that families are aware of both the challenges and strengths that their child will experience (Brown et al., 2021). This approach can reduce parental stress and also increase the chance that their child feels accepted, which is what many autistic people call for (e.g. Sinclair, 1993; authors of the Sincerely, Your Autistic Child anthology (Ballou et al., 2021)). In addition, this early exposure to neurodiversity might open up avenues of identity for children that they otherwise might take a while to discover on their own (Dwyer, 2022). With regard to education, embracing neurodiversity can lead educators to utilize a strengths-based approach. Armstrong (2012) details how educators can support neurodivergent students by determining their strengths and interests and constructing niches in which they can flourish. 
Learning about neurodiversity can also lead teachers to reduce deficit-thinking with their own students (Lambert et al., 2021), which can lead to richer educational experiences. Viewing the neurodiversity perspective positively could also lead to interventionists (such as behavioral therapists) creating more acceptable programs (Fletcher-Watson, 2018; Schuck, Tagavi, et al., 2022).

Measuring attitudes toward neurodiversity

Measuring attitudes toward neurodiversity is needed to identify the need for or assess the effectiveness of anti-stigma programs or professional development workshops on neurodiversity. Although many instruments have been designed regarding attitudes toward specific disabilities (e.g. physical disabilities (Findler et al., 2007), autism (Derguy et al., 2021; Kim, 2020), intellectual disability (Morin, Crocker, et al., 2013)), measuring attitudes toward neurodiversity in general is still in its infancy. Even the recently developed Neurodiversity Attitudes Scale (VanDaalen, 2021) was designed with all items specific to autism. This reflects much of the neurodiversity literature to date, which ordinarily explicitly or implicitly frames neurodiversity around autism, perhaps only briefly mentioning other forms of neurodivergence (Bridget et al., 2023), such as stuttering, ADHD, and intellectual disability. Thus, a valid instrument to assess attitudes toward neurodiversity in general, in the political sense of the term described by Ne’eman and Pellicano (2022), is needed. The current study describes the development and validation of such an instrument—the Neurodiversity Attitudes Questionnaire (NDAQ).

Method

This study was approved by the University of California, Santa Barbara (UCSB) Institutional Review Board (protocol #11-23-0395). Participants in all phases of the study provided informed consent before completing any study activities.

Measure development

This study utilized established principles for designing instruments (e.g. Clark & Watson, 2019; Wilson, 2004; see also PROMIS®, 2013). These include defining a construct, designing items, and assessing the instrument’s internal structure. Initially, the first two authors developed items focused on attitudes specifically toward neurodiversity-affirming teaching practices. Cognitive interviews with three teachers revealed a tension between what teachers wanted to do in the classroom versus what they felt was feasible due to barriers such as lack of funding (Schuck, Choi, et al., 2022). It was thus decided to create items that were focused on attitudes toward neurodiversity more generally. While we were still focused on validating this measure in a sample of helping professionals, creating more general items would also allow this instrument to be validated in other samples, too, as the items could be applicable to those beyond professionals. To create these more general items, the tripartite model of attitudes (Rosenberg & Hovland, 1960) guided item development. This model elucidates three dimensions of attitudes: affective, cognitive, and behavioral. Initial items in each domain were brainstormed based on review of both academic literature and first-person accounts of neurodiversity (e.g. blogs, social media posts; in the case of two authors, lived experience of either being autistic or having an autistic child). We were also careful to include items about a variety of neurodivergences as well as the neurodiversity perspective more generally. Initial items were revised based on (1) expert review, (2) cognitive interviews, and (3) evaluation of participants’ response process. Thirty-one initial items were developed and subjected to feedback.

Expert review

Expert reviewers included an education professor with expertise in neurodiversity, an education professor with expertise in measurement, and an education research fellow with expertise in inclusive education. Experts were shown the items via email or during a Zoom meeting. Feedback included potential wording changes and topics for new items.

Cognitive interviews

Cognitive interviews (Willis, 2004) were conducted with three neurodivergent individuals (two with ADHD, one autistic) to ensure items were accurately capturing issues related to neurodiversity (i.e. assessing both content and construct validity). Interviews were conducted by the first author and took place via Zoom or email. Participants described what they thought about the item, whether it made sense, and how they would answer it. Participants were also asked whether they felt any topics were missing.

Response process evaluation

In order to more systematically understand how potential participants were interpreting items, a process inspired by response process evaluation (RPE) of Wolf et al. (2021) was used. RPE essentially moves the cognitive interview process into a questionnaire so that more participants can provide feedback in a less time- and resource-demanding way. It consists of participants answering survey items and meta-questions about the items and iterative review of responses by researchers. Items that perform poorly are revised, and more feedback is sought until items are judged as adequately understood/answerable.

Participants

RPE participants were all undergraduate students who were part of the research pool through the UCSB Communication department. Participants took the survey via Qualtrics for course credit. Although this undergraduate population is not exactly the NDAQ target population (i.e. helping professionals), we wanted the items to be relevant to individuals beyond just helping professionals. Nonetheless, it is likely that, due to their degree requirements and intended majors, participants had experiences that closely mirror those of the target population (e.g. tutoring experience, undergraduate clinical and/or research work, volunteer experience in hospitals or schools). Of the 190 students whose responses were reviewed, 11 identified as disabled. Although participants were not asked about neurodivergent identity, an additional 11 participants mentioned having ADHD in open-ended responses, none of whom identified as disabled.

Procedure

Participants were randomly assigned 7–12 items and asked two meta-questions: (1) What do you think [item] means? (2) Why did you choose the answer you did? Once approximately 25 or more participants had responded to each question, responses were assessed. Each response was rated as definitely understanding, likely understanding, or not understanding the item. All ratings were made by the first author, and judgments were made based on whether participants explained the item as it was intended and whether their answer choices aligned with their explanation. Usually, if at least 80% of participants definitely understood an item, it was considered final. A few items met this criterion, but other data indicated that they needed more work (e.g. everyone chose “disagree” or participants thought the wording was silly). For these items and for items that fewer than 80% of participants definitely understood, either the item was revised or more data were gathered. All items included in the final pilot version of the NDAQ were rated as definitely or likely understood by at least 90% of participants.
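The numeric part of this decision rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `summarize_item`, the rating labels, and the example data are hypothetical, and the sketch deliberately omits the qualitative judgment described above (e.g. items that met the 80% criterion but still needed work).

```python
from collections import Counter


def summarize_item(ratings, final_threshold=0.80):
    """Summarize comprehension ratings for one item.

    ratings: list of strings, each 'definitely', 'likely', or 'not'
             (hypothetical labels for the three rating categories).
    final_threshold: share of 'definitely' ratings needed to treat the
             item as final (0.80 in the procedure described above).
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    counts = Counter(ratings)
    n = len(ratings)
    definitely = counts["definitely"] / n
    definitely_or_likely = (counts["definitely"] + counts["likely"]) / n
    # Numeric criterion only; a human reviewer would still inspect the
    # open-ended explanations before finalizing an item.
    decision = "final" if definitely >= final_threshold else "revise_or_collect_more"
    return {
        "n": n,
        "definitely": definitely,
        "definitely_or_likely": definitely_or_likely,
        "decision": decision,
    }


# Invented example: 25 ratings for a single item.
example = ["definitely"] * 21 + ["likely"] * 3 + ["not"] * 1
summary = summarize_item(example)
```

In practice, the `definitely_or_likely` proportion corresponds to the 90% figure reported for the final pilot items, while `decision` reflects only the 80% screening rule.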

After each RPE round, the researchers met to brainstorm revisions. Depending on the RPE responses, items were revised (which could have ranged from minor wording changes to complete revamping of the question) or discarded. Revised items were then entered into Qualtrics for the next round. When RPE started, a 5-point Likert-type scale was used (strongly disagree, disagree, neutral, agree, strongly agree); after review, it was decided to use 6 points with slightly disagree/slightly agree instead of neutral, as “neutral” was being chosen for non-systematic reasons. After the fourth round of RPE, all 29 items were considered final (see Table 4; the table only includes 28 items, as one item was removed after piloting).

Pilot testing

Participants

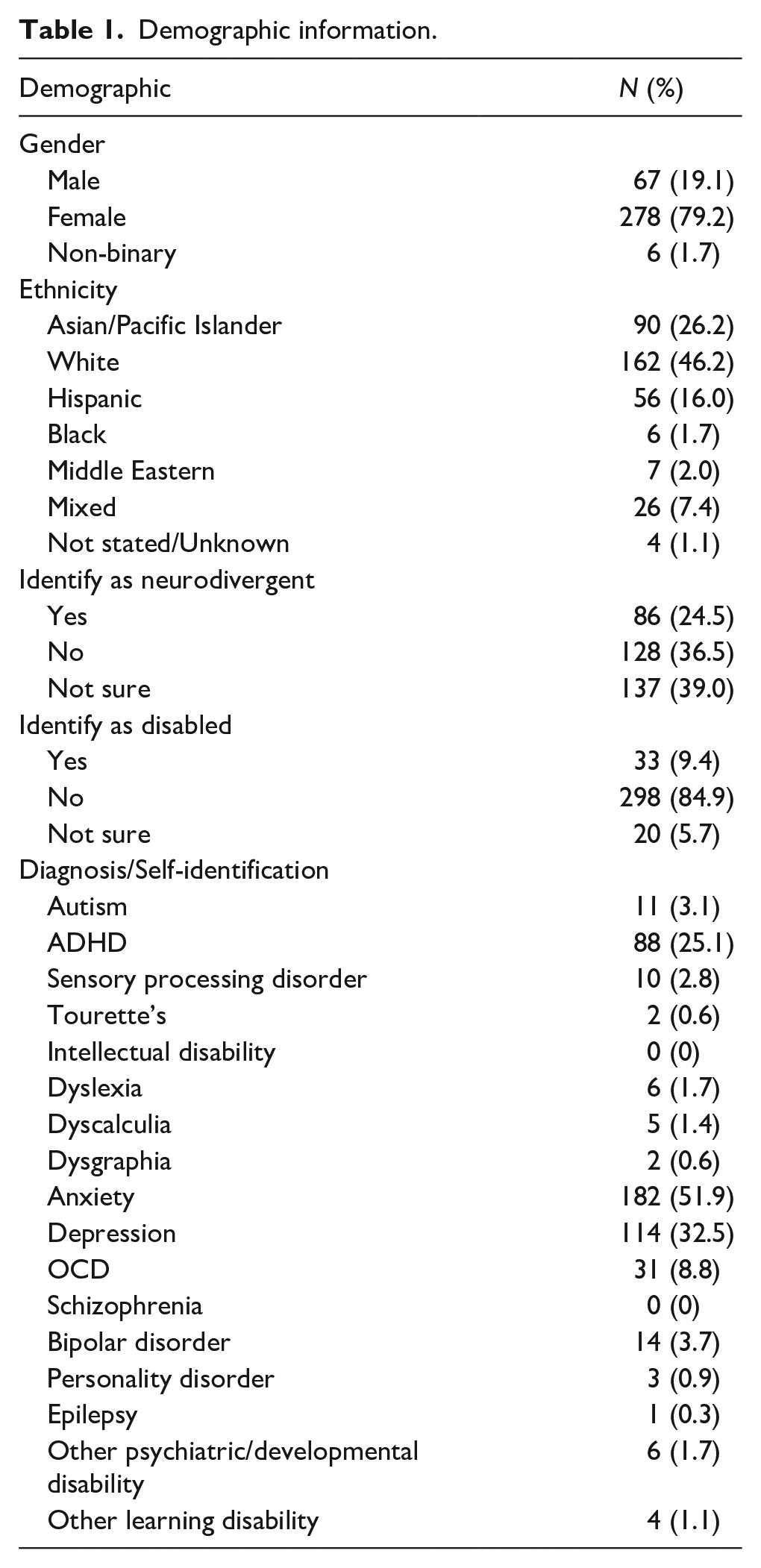

Recruitment materials for this portion of the study did not use the word “neurodiversity”; they instead referenced learning about disability and/or neurological differences. Inclusion criteria included (1) ⩾18 years old; (2) currently working or planning to work as a helping professional (defined broadly as anyone involved in education (e.g. teacher, paraprofessional), medicine (e.g. physician, nurse), or other therapy (e.g. psychologist, therapist/counselor)); and (3) able to read/type in English. To ensure a broad range of familiarity with neurodiversity, data were collected from two sources: (1) the UCSB Communication department undergraduate research pool and (2) online via listservs/social media. Undergraduates received course credit; online participants were given the opportunity to enter a gift card drawing. To be eligible to participate, undergraduate students needed to have already indicated that they wanted to go into a helping profession on a recruitment pool pre-screening survey given to them before they were able to sign up to participate in any studies. Online participants were asked the same screening question at the beginning of the survey and needed to indicate that they were currently or intended to become helping professionals in order to continue taking the survey. The sample included 259 undergraduate and 92 online participants (total N = 351) who ranged from 18 to 70 years in age (M = 23.09, SD = 7.38). All undergraduate participants were attending UCSB in the United States, and the vast majority of online participants reported living in the United States (n = 85 out of 92; other countries represented include Germany, Canada, South Africa, and Portugal); see Table 1 for sample demographics. Participants’ ratings of familiarity with neurodiversity ranged from 1 (not at all) to 4 (extremely) (M = 2.21, SD = 0.990; median = 2, both corresponding roughly to “slightly familiar”).

Table 1. Demographic information.

Online participants were asked about their current (or, if still in school, future) occupation. Answers were coded as medicine/mental health, education, professor, and other. Most (n = 55; 59.8%) were in medicine/mental health; 22 (23.9%) were in education. Five in medicine or education were also professors. Eight indicated they were professors only, and one was still in school but planned to be a professor. Four were involved in other related fields (e.g. research coordinator); one did not answer the question. Undergraduates were asked what their future occupation(s) might be. Most (n = 188, 72.5%) indicated they wanted to be in medicine/mental health; 77 (29.7%) wanted to go into education (some wanted to go into both/either and are counted twice). Thirteen wanted to be professors only; 23 wanted to be a professor in addition to medicine or education. One did not answer the question.

Measures

Participants answered demographic questions and then questions about the neurodiversity perspective, such as neurodiversity familiarity (not at all, slightly, moderately, or extremely; see Burkhart, 2019, for a similar question) and whether they identify as neurodivergent/neurodiverse and/or disabled. Participants were then presented with the NDAQ and additional questionnaires. Before taking the NDAQ, participants were presented with the following instructions/explanation in order to help them understand some of the concepts and terminology used in the questionnaire:

Instructions: All of the questions below refer to people who are neurodivergent; that is, their brains work in different ways than most other people. People who are diagnosed with autism, ADHD, intellectual disability, dyslexia, dyspraxia, and Tourette’s (among other diagnoses) are often considered neurodivergent. Please answer the following questions to the best of your ability. It is okay if you’re unsure; just choose whichever answer fits best, even if it’s not perfect.

After completing the NDAQ, participants were asked two open-ended questions related to whether they had any feedback and what they thought the purpose of the questions was.

Social Distance Scale (SDS)

Gillespie-Lynch et al.’s (2021) adaptation of Bogardus’ (1933) SDS was used to assess participants’ willingness to interact and engage with stigmatized populations. Three SDSs focused on autism, ADHD, and dyslexia. Each included 10 items rated from −2 to 2, with higher scores indicating more stigma.

Participatory Autism Knowledge Measure (PAK-M)

The PAK-M (Gillespie-Lynch et al., 2021) is a 29-item instrument designed to assess autism knowledge. Its recent updates include questions about masking/camouflaging and updated diagnostic criteria. Items were rated from −2 to 2, with higher scores indicating more knowledge.

Scale of ADHD-specific Knowledge (SASK)

The SASK (Mulholland, 2016) is a 20-item instrument designed to assess knowledge of ADHD. Although the original SASK items were designed as true/false questions, the SDS’ and PAK-M’s answer choices (5-point agree-disagree Likert-type scale) and scoring system (−2 to 2) were used.

Dyslexia Knowledge Scale

Gonzalez’s (2021) 10-item instrument is designed to assess understanding of dyslexia. Although the original instrument’s answer choices included Definitely True, Probably True, Probably False, and Definitely False, the SDS’ and PAK-M’s answer choices (5-point agree-disagree Likert-type scale) and scoring system (−2 to 2) were used.
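To illustrate the shared −2 to 2 scoring system, the following Python sketch maps 5-point agree-disagree responses onto numeric scores and averages them into a scale mean. Several details are assumptions made for illustration rather than documented features of these instruments: the label of the scale midpoint, the reverse-keying of items whose content is false (so that higher scores indicate more knowledge), and all function names.

```python
# Assumed response labels; the midpoint label in particular is a guess.
LIKERT_TO_SCORE = {
    "strongly disagree": -2,
    "disagree": -1,
    "neutral": 0,
    "agree": 1,
    "strongly agree": 2,
}


def score_item(response, keyed_true=True):
    """Convert one response to a score from -2 to 2.

    keyed_true=False flips the sign, so that disagreeing with a false
    statement earns a positive score (an assumed keying convention).
    """
    score = LIKERT_TO_SCORE[response.strip().lower()]
    return score if keyed_true else -score


def mean_scale_score(responses, keys):
    """Mean item score for one participant on one scale."""
    if len(responses) != len(keys) or not responses:
        raise ValueError("responses and keys must be non-empty and equal in length")
    scores = [score_item(r, k) for r, k in zip(responses, keys)]
    return sum(scores) / len(scores)


# Invented example: three items, the second keyed false.
participant_mean = mean_scale_score(
    ["agree", "strongly disagree", "neutral"],
    [True, False, True],
)
```

Using a mean rather than a sum keeps scores on the original −2 to 2 metric regardless of how many items each instrument contains, which is convenient when instruments of different lengths are compared.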

Data integrity

Several procedures were used to ensure data integrity (Teitcher et al., 2015; Yarrish et al., 2019): (1) survey questions designed to be difficult for bots (e.g. asking participants to spell a name backwards after being provided with the correct spelling); (2) inclusion of nonsensical answer choices (e.g. “intentionally blank” as a diagnosis option); (3) flagging of identical and/or nonsensical open-ended responses; (4) open-ended responses that indicate lack of attention (e.g. “afds”); and (5) suspicious answer choice patterns (e.g. “agree” to all items). The first author coded all responses as authentic, inauthentic, or unsure using criteria 1–4. Unsure responses were reviewed using the fifth criterion. An undergraduate research assistant double-coded responses; discrepancies were resolved by the first author. Data coded as inauthentic were excluded.
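Two of these checks (identical open-ended responses and uniform answer patterns) lend themselves to simple automated flags that could support, but not replace, human coding. The Python sketch below is a hypothetical illustration: the function names, the `min_length` cutoff, and the example data are assumptions and do not describe the procedure actually used.

```python
from collections import Counter


def is_straightlined(answers):
    """True when every closed-ended answer is identical (cf. criterion 5)."""
    return len(answers) > 1 and len(set(answers)) == 1


def duplicate_open_ended(responses_by_id, min_length=15):
    """Return IDs whose open-ended text exactly matches another participant's.

    Text is lower-cased and whitespace-normalized before comparison.
    min_length is an assumed cutoff so that short, legitimately common
    answers such as "no" are not flagged (cf. criterion 3).
    """
    normalized = {
        pid: " ".join(text.lower().split())
        for pid, text in responses_by_id.items()
    }
    counts = Counter(t for t in normalized.values() if len(t) >= min_length)
    return sorted(pid for pid, t in normalized.items() if counts[t] > 1)


# Invented example data.
open_ended = {
    "p1": "I think this was about disability attitudes.",
    "p2": "i think this was about  disability attitudes.",
    "p3": "no",
    "p4": "no",
}
flagged_ids = duplicate_open_ended(open_ended)
all_same = is_straightlined(["agree"] * 5)
```

Flags of this kind would only mark responses for review; the authentic, inauthentic, or unsure judgment would remain with the coders.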

Data analysis

To determine the factor structure of the NDAQ, exploratory structural equation modeling (ESEM; Asparouhov & Muthén, 2009; Marsh et al., 2014) with Geomin rotation was used. A robust maximum likelihood (MLR) estimator was used given that it is well suited for instruments using five or more response categories and is robust to non-normal data distributions (Rhemtulla et al., 2012). To check consistency, models were also run using the variance-adjusted weighted least squares estimator. Models with one to six factors were reviewed using the following fit indices: Comparative Fit Index (CFI), Tucker–Lewis Index (TLI), the Root Mean Square Error of Approximation (RMSEA), and Standardized Root Mean Square Residual (SRMR; Hu & Bentler, 1999). CFI and TLI values of >0.90 and >0.95 were considered indicative of adequate and excellent fit, respectively. RMSEA and SRMR values of <0.08 and <0.06 were considered indicative of adequate and excellent fit, respectively, with RMSEA 90% confidence intervals required not to cross the 0.08 boundary and the close fit test to have p > 0.05. Analyses were performed using Mplus 8.0 (Muthén & Muthén, 2007). Reliability of factors was computed using the Omega coefficient (Hayes & Coutts, 2020); general guidelines of ~0.90 = excellent, ~0.80 = very good, and ~0.70 = adequate were used (Kline, 2016).
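The fit cutoffs above can be expressed as a small Python helper for screening candidate factor solutions. This is a minimal sketch under assumptions: the function names and the "below adequate" label are illustrative, the confidence-interval rule is read here as requiring the upper bound of the RMSEA 90% interval to fall below 0.08, and the example values are invented rather than taken from the study.

```python
def classify_incremental(value):
    """Classify CFI or TLI, where higher values indicate better fit."""
    if value > 0.95:
        return "excellent"
    if value > 0.90:
        return "adequate"
    return "below adequate"


def classify_residual(value):
    """Classify RMSEA or SRMR, where lower values indicate better fit."""
    if value < 0.06:
        return "excellent"
    if value < 0.08:
        return "adequate"
    return "below adequate"


def evaluate_fit(cfi, tli, rmsea, srmr, rmsea_ci_upper, close_fit_p):
    """Apply the stated cutoffs to one model's fit statistics."""
    return {
        "CFI": classify_incremental(cfi),
        "TLI": classify_incremental(tli),
        "RMSEA": classify_residual(rmsea),
        "SRMR": classify_residual(srmr),
        # Assumed reading: the interval must stay below the 0.08 boundary.
        "rmsea_ci_ok": rmsea_ci_upper < 0.08,
        "close_fit_ok": close_fit_p > 0.05,
    }


# Invented values for a hypothetical model, not results from this study.
report = evaluate_fit(
    cfi=0.96, tli=0.93, rmsea=0.045, srmr=0.03,
    rmsea_ci_upper=0.055, close_fit_p=0.80,
)
```

A helper like this only screens models numerically; the choice among adequately fitting solutions still depends on theoretical interpretability.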

Items in the final factor solution that were deemed to misfit more than other items (i.e. factor loading of 0.30–0.32 or cross-loadings onto other factors <0.100) were reviewed using RPE data. This was done to determine whether anything was missed during our initial RPE analysis that might warrant items being removed. Even though these items’ loadings were considered statistically acceptable (Comrey & Lee, 2013), we acknowledge that fitting factor models well does not necessarily imply a meaningful instrument (Maul, 2017).

Correlations between NDAQ scores and mean scores on other measures were used to assess convergent validity. It was hypothesized that those with less stigma and more knowledge would score higher on the NDAQ and that correlations would be higher for the SDS since attitudes are more similar to stigma than knowledge. It was also hypothesized that those with more neurodiversity familiarity and those who identified as neurodivergent would also score higher (with the latter being analyzed using independent samples t-tests). All descriptive statistics and correlational analyses were conducted using IBM SPSS v. 28.0.1.0 (IBM Corp., 2021).
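For readers who wish to reproduce analyses of this kind outside SPSS, the two statistics can be computed directly. The Python sketch below uses only the standard library; the function names and data are invented for illustration, and the t-test shown assumes equal variances (pooled variance), which may or may not match the settings used in the reported analyses.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have equal length of at least 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def independent_t(group_a, group_b):
    """Student's t for two independent groups (pooled variance).

    Returns (t, degrees_of_freedom).
    """
    na, nb = len(group_a), len(group_b)
    mean_a, mean_b = sum(group_a) / na, sum(group_b) / nb
    ss_a = sum((v - mean_a) ** 2 for v in group_a)
    ss_b = sum((v - mean_b) ** 2 for v in group_b)
    df = na + nb - 2
    pooled_var = (ss_a + ss_b) / df
    t = (mean_a - mean_b) / math.sqrt(pooled_var * (1 / na + 1 / nb))
    return t, df


# Invented scores: attitude means and stigma means for five participants.
attitude = [4.2, 5.1, 3.8, 4.9, 5.5]
stigma = [0.5, -0.8, 1.0, -0.4, -1.2]
r = pearson_r(attitude, stigma)

# Invented group comparison (e.g. neurodivergent vs. not/unsure).
t_stat, df = independent_t([4, 5, 6], [1, 2, 3])
```

Under the hypotheses stated above, `r` between attitude and stigma scores would be expected to be negative, and the t statistic positive when the first group is the neurodivergent group.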

Community Involvement

One of the authors is the parent of an autistic child, and another is an autistic researcher. Both authors were involved in all aspects of the study.

Results

Factor structure

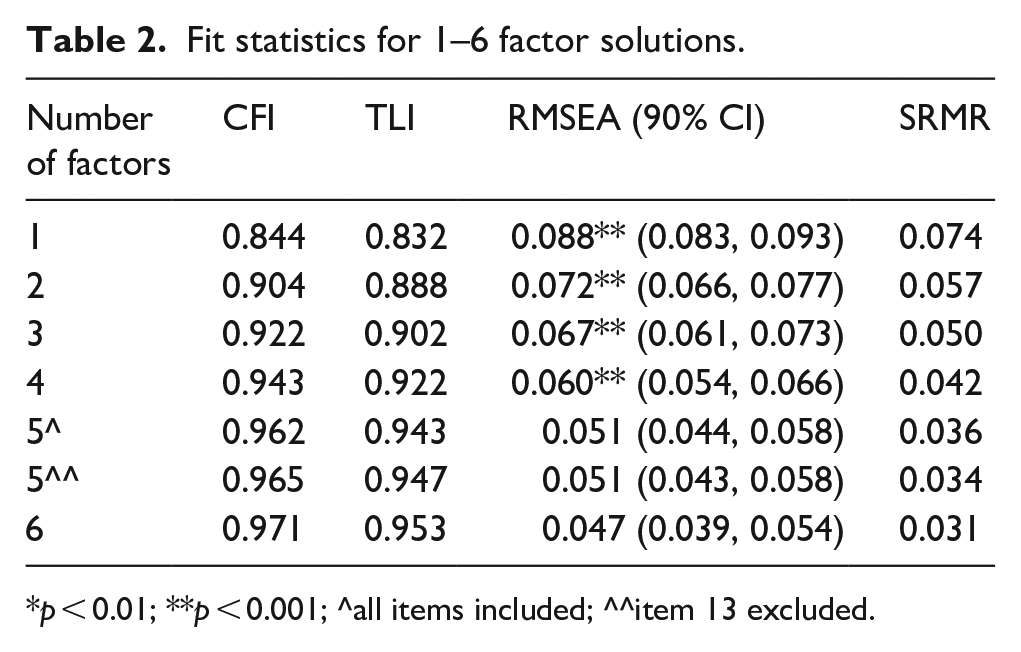

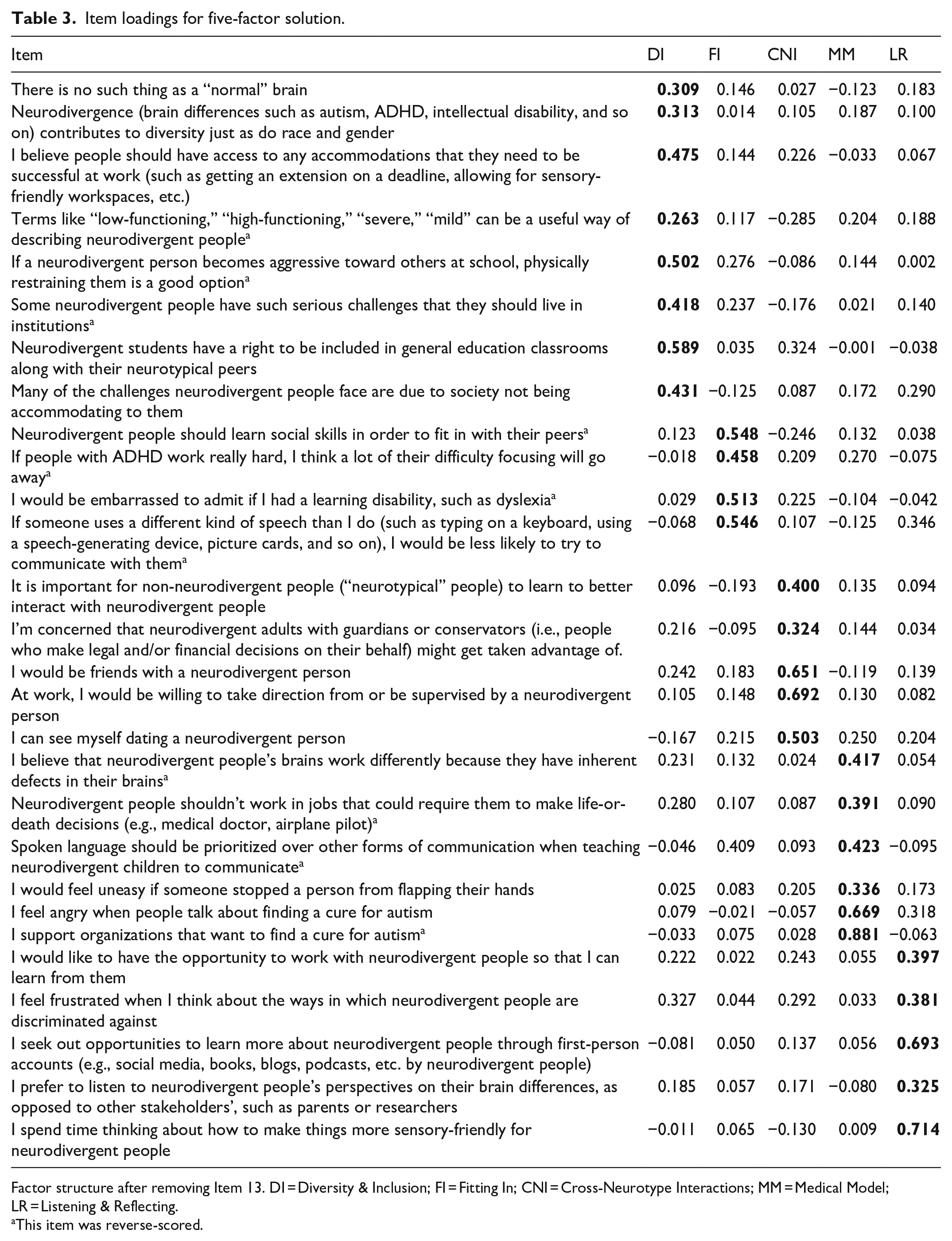

Four- to six-factor solutions showed at least adequate fit (see Table 2); these factor solutions were reviewed for theoretical relevance. The five-factor model was chosen given that, when compared to the four-factor solution, it yielded an additional theoretically meaningful factor, and an additional factor that emerged in the six-factor solution was not meaningful and consisted of all items that cross-loaded with other factors. Factor inter-correlations were ⩽0.37. The five factors were interpreted as: Diversity & Inclusion, Fitting In, Cross-Neurotype Interactions, Medical Model, and Listening & Reflecting. Two items (#13: Neurodivergent children should take medication to help them focus at school and #16: I would feel uneasy if someone stopped a person from flapping their hands) did not significantly load onto any factors. All authors agreed that item #13 was not relevant to the construct, as many neurodiversity proponents support use of medications (e.g. for ADHD; Dwyer, 2022) when medically indicated and/or desired. The five-factor model was run again without #13; #16 then loaded sufficiently onto one of the factors (see Table 3).

Table 2. Fit statistics for 1–6 factor solutions.

*p < 0.01; **p < 0.001; ^all items included; ^^item 13 excluded.

Table 3. Item loadings for five-factor solution.

Factor structure after removing Item 13. DI = Diversity & Inclusion; FI = Fitting In; CNI = Cross-Neurotype Interactions; MM = Medical Model; LR = Listening & Reflecting.

This item was reverse-scored.

Reliability was very good for the entire scale (ω = 0.88) and adequate for all factors except Fitting In (Diversity & Inclusion: ω = 0.70; Fitting In: ω = 0.61; Cross-Neurotype Interactions: ω = 0.70; Medical Model: ω = 0.79; Listening & Reflecting: ω = 0.73).
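As a rough illustration of what the omega coefficient summarizes, the Python sketch below computes omega from the standardized loadings of a single-factor model with uncorrelated residuals, where each item's residual variance is one minus its squared loading. This is a simplification and an assumption on our part: the function name and loadings are invented, and values computed this way would not be expected to reproduce the coefficients reported above, which come from a model with cross-loadings.

```python
def mcdonald_omega(loadings):
    """Omega for a one-factor model with standardized loadings.

    omega = (sum of loadings)^2 /
            ((sum of loadings)^2 + sum of residual variances),
    with each residual variance taken as 1 - loading^2.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    common = sum(loadings) ** 2
    unique = sum(1 - loading ** 2 for loading in loadings)
    return common / (common + unique)


# Invented loadings for a hypothetical four-item factor.
omega = mcdonald_omega([0.7, 0.7, 0.7, 0.7])
```

The formula makes clear why short subscales with modest loadings, such as a factor with few items, tend to yield lower omega values than the full scale.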

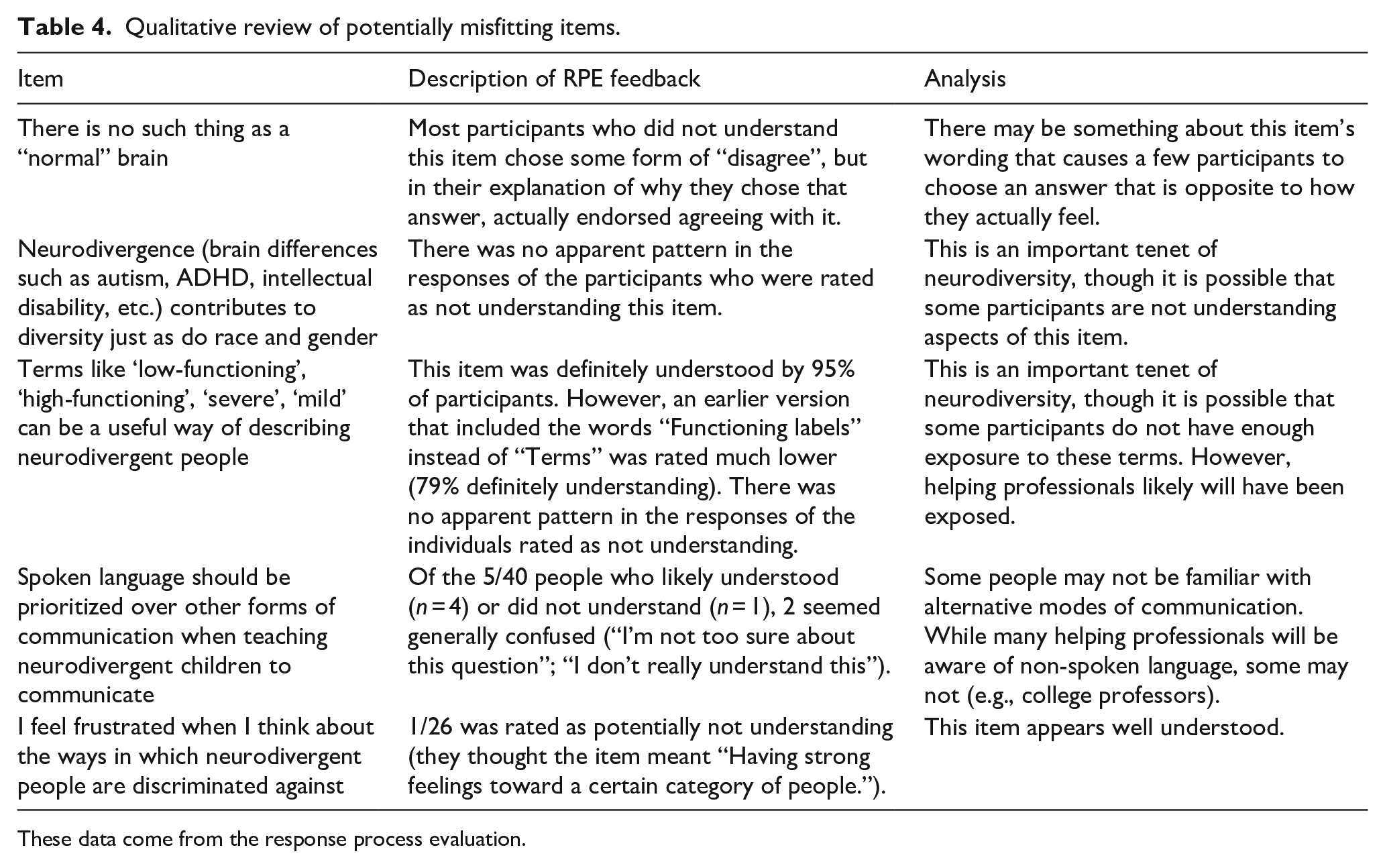

Qualitative investigation into items with less ideal fit

Five items were flagged as potentially misfitting; RPE data for these items were reviewed. Analysis of these items is presented in Table 4. It was decided not to remove any of the items at this time, although this analysis may be useful in future NDAQ investigations.

Table 4. Qualitative review of potentially misfitting items.

These data come from the response process evaluation.

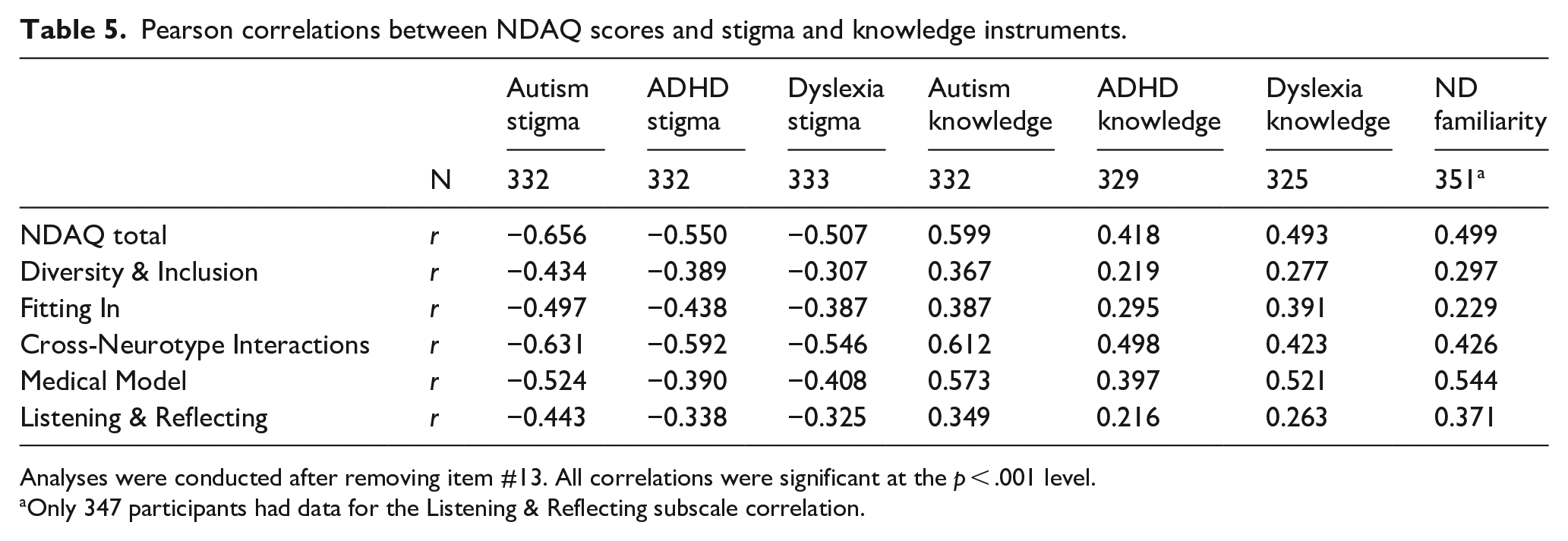

Evidence of convergent validity

NDAQ total mean scores as well as each factor mean were all significantly correlated with the SDS and knowledge scales (Table 5). All NDAQ scores were negatively correlated with the SDS (negative SDS scores correspond to less stigma) and positively correlated with knowledge scores. Among the knowledge measures, autism knowledge was most strongly correlated with NDAQ scores. NDAQ scores were also significantly correlated with reported familiarity with neurodiversity. Finally, individuals who identified as neurodivergent had higher NDAQ scores than those who did not/were unsure (ts = 2.99–6.30, ps < 0.05), except for the Fitting In factor (t = 1.82, p = 0.070).

Table 5. Pearson correlations between NDAQ scores and stigma and knowledge instruments.

Analyses were conducted after removing item #13. All correlations were significant at the p < .001 level.

Only 347 participants had data for the Listening & Reflecting subscale correlation.

Participant open-ended feedback

When asked if they had any feedback, 94 participants wrote in something (not including those who wrote “no” or “N/A”). The most common responses focused on the desire to be able to give a nuanced or “neutral” response option given the heterogeneity of neurodivergent conditions (n = 41). Seventeen participants indicated that the survey made them think more deeply about topics related to neurodiversity. Four participants indicated that at least one item was confusing, and five stated they did not have a lot of knowledge about neurodiversity.

Discussion

This study presents development and preliminary validation of the NDAQ. The NDAQ was developed by a mixed neurotype team of researchers and was subjected to both qualitative and quantitative investigation in order to address validity of the items, instrument factor structure, and convergent and discriminant validity.

NDAQ construct & content validity

Expert review and cognitive interviews helped initially revise our items and ensured content relevance. Our extensive RPE (Wolf et al., 2021) suggested strong construct validity. Future investigations will benefit from further qualitative work to determine whether additional items that tap into highly nuanced topics should be developed and included in the NDAQ. Furthermore, review of RPE data for items that had relatively lower factor loadings or somewhat high cross-loadings indicated that some items may be confusing for a small subset of participants. However, it should be noted that most participants did not mention in their overall feedback that the NDAQ was confusing, even though the majority of participants indicated they were only “slightly familiar” with neurodiversity. Notably, none of the 16 individuals who stated that the items were thought-provoking indicated that any item was confusing, suggesting that the items were accessible even to those who had spent little time thinking about the neurodiversity perspective. It is crucial that further NDAQ work continues to look carefully at how each item is behaving qualitatively.

Factor structure of the NDAQ

ESEM indicated a five-factor model fit the NDAQ data with factors interpreted as Diversity & Inclusion, Fitting In, Cross-Neurotype Interactions, Medical Model, and Listening & Reflecting.

Items in Diversity & Inclusion appear to tap into two aspects of how neurodiversity is viewed in society. The first is regarding key tenets of the neurodiversity perspective (e.g. there are no “normal” brains; Walker, 2014). The second is related to the rights of neurodivergent people (e.g. access to accommodations, inclusion in general education). While the way one defines brain differences is likely related to how they view neurodivergent people, future research should look into whether this factor may actually represent two separate factors. On the other hand, based on the analysis of participant response processes, it is possible that some items use phrasing so specific to the neurodiversity perspective that those less familiar with neurodiversity may not understand them regardless of their attitude. Nonetheless, scores on this factor will likely highlight whether individuals could benefit from basic training in what neurodiversity is.

Items that loaded onto Fitting In were all directly related to neurodivergent people not fitting neurotypical standards, such as learning social skills and perspectives on alternative communication devices. These items are related to the degree to which neurodivergent people may feel the need to mask (Perry et al., 2022) their neurodivergence. These items could be key when assessing professionals’ attitudes toward neurodiversity, as one’s score on this factor may indicate whether a professional would design intervention goals aligned with the neurodiversity perspective (see Roberts, 2020). It should be noted that the item about prioritizing spoken language, which loads onto the Medical Model factor, also has a somewhat high loading onto the Fitting In factor. It conceptually fits better with Fitting In, as it gets at the idea that diversity in communication should be accepted. Therefore, when using the NDAQ, it may be prudent to consider this item as part of the Fitting In factor or not use it at all. Future validation efforts will help clarify where this item fits best.

The Medical Model factor taps into how much participants align themselves with the medical model. Two items (about stopping someone from hand flapping and not allowing neurodivergent people to work in high-stakes jobs) might be interpreted as real-world ramifications of a medical-model orientation, although more work on these two items appears necessary in light of the complexity of the neurodiversity perspective. Scores on this factor may indicate whether someone needs education on different models of disability, including the neurodiversity perspective as well as the social model of disability (Marks, 1997).

The Cross-Neurotype Interactions and Listening & Reflecting factors map well onto the behavioral and cognitive dimensions of the tripartite model of attitudes (Rosenberg & Hovland, 1960). For example, items in the Cross-Neurotype Interactions factor ask participants to rate the likelihood of being friends with, dating, or taking direction from a neurodivergent person (all behaviors). The other two items in this factor are concerned with how people think about interacting with neurodivergent people. The Listening & Reflecting factor comprises five items that get at how active a role the participant takes in trying to understand neurodivergent people and creating more inclusive environments, the extent to which they cognitively reflect on such issues, or both. While the other NDAQ factors may be more related to how a professional works with neurodivergent people, these two factors may be particularly important in understanding how dedicated the professional is to ensuring that neurodivergent people are truly accepted in society.

Convergent and discriminant validity

While correlations between the NDAQ and measures of autism, ADHD, and dyslexia stigma and knowledge were all significant, correlations were generally stronger among the stigma measures. The finding that the NDAQ scores were most strongly related to the PAK-M compared to other knowledge measures also supports NDAQ validity, as the PAK-M was designed to be neurodiversity-aligned (Gillespie-Lynch et al., 2021), whereas the other measures were not. Neurodiversity familiarity and neurodivergent identity were also related to NDAQ scores, suggesting that endorsing positive attitudes toward neurodiversity was easier for those with prior proximity to the concept. Nonetheless, correlations between familiarity and certain NDAQ subscales (e.g. Fitting In and Diversity & Inclusion) were fairly weak, suggesting that familiarity with neurodiversity in general is not enough when trying to understand how helping professionals might approach working with neurodivergent people. These domains include items that appear to be relevant to key areas in which professionals have influence such as selection of intervention goals and provision of accommodations, emphasizing the importance of further research to explore factors driving scores on these scales. In addition, it is possible that these correlations might be stronger in the general public.

Limitations and future directions

These preliminary results should be evaluated with several limitations in mind. First, although the sample was somewhat racially diverse with over half identifying as non-White, there was little representation of Black individuals. Participants were also overwhelmingly female, which is in line with research suggesting women are more likely to participate in online surveys than men (Becker, 2022; Smith, 2008). Prior research has found that there are gender differences in attitudes toward disability (Morin, Rivard et al., 2013), with some studies finding that females have more positive attitudes overall (Goreczny et al., 2011), including among medical professionals (Edwards & Hekel, 2021). This could have reduced the amount of variability in our data, which could have impacted the factor structure. The current study’s participants were also recruited using convenience sampling. It is likely that participants were drawn to participate due to an existing interest in disability/neurological differences. While one would expect some helping professionals to be interested in this topic, it is likely that some see working with neurodivergent people as simply part of their job and are not interested in thinking much about it outside of work. Since these participants are probably underrepresented in our sample, it is crucial that future NDAQ validation work recruit these participants.

Although this study explored the NDAQ’s validity from both qualitative and quantitative angles (as suggested by the American Educational Research Association, American Psychological Association, & National Council on Measurement in Education (AERA, APA, & NCME), 2014), there are some areas that need further investigation. For instance, we did not assess differential item functioning, which can be evidence of measure bias or of more complex realities requiring further investigation. In addition, because neurodiversity is such a broad topic that is conceptualized differently by different people, it is possible our instrument is missing key concepts that are critically important to some neurodiversity advocates. Therefore, items may need to be added, which will likely affect the overall NDAQ factor structure. Other items may need rewording to be more understandable to a wider audience. In addition, while we intentionally removed the “neutral” response option during the RPE phase, it may be beneficial to include an optional open-ended question after each item so that participants have the opportunity to feel that their thought process is being heard. Our findings regarding factor structure must thus be regarded as preliminary.

Finally, this version of the NDAQ was tested and validated to gauge helping professionals’ attitudes toward neurodiversity in the hopes of identifying education/training needs. Although it would be ideal for an instrument to assess the impact of such a training, it should be made clear that the NDAQ has not yet been validated for that specific use (see the AERA, APA, & NCME, 2014, standards). An entirely new validation process is needed before it can be deemed suitable as a pre-post outcome measure. Similarly, while the NDAQ may be applicable to other populations (including lay persons participating in research on neurodiversity), it should be tested and validated with new samples before being widely used.

Conclusion

Despite noted limitations, this study provides preliminary evidence that the NDAQ is a valid measure of attitudes toward neurodiversity and could help identify helping professionals who would benefit from training to improve their attitudes toward neurodiversity. Such improvements could lead to decreased stigma and prejudice toward neurodivergent people and ultimately improve their quality of life.

Footnotes

Acknowledgements

The authors would like to thank everyone who participated throughout all phases of the study.

Author note

An earlier version of this project was part of the first author’s doctoral dissertation.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.