Abstract

Autistic people who cannot speak risk being underestimated. Their inability to speak, along with other unconventional behaviors and mannerisms, can give rise to limiting assumptions about their capacities, including their capacity to acquire literacy. In this preregistered study, we developed a task to investigate whether autistic adolescents and adults with limited or no phrase speech (N = 31) have learned English orthographic conventions. Participants played a game that involved tapping sequentially pulsing targets on an iPad as quickly as they could. Three patterns in their response times suggest they know how to spell: (a) They were faster to tap letters of the alphabet that pulsed in sequences that spelled sentences than letters or nonsense symbols that pulsed in closely matched but meaningless sequences; (b) they responded more quickly to pairs of letters in meaningful sequences the more often the letters co-occur in English; and (c) they spontaneously paused before tapping the first pulsing letter of a new word. These findings suggest that nonspeaking autistic people can acquire foundational literacy skills. With appropriate instruction and support, it might be possible to harness these skills to provide nonspeaking autistic people access to written forms of communication as an alternative to speech.

Lay abstract

Many autistic people who do not talk cannot tell other people what they know or what they are thinking. As a result, they might not be able to go to the schools they want, share feelings with friends, or get jobs they like. It might be possible to teach them to type on a computer or tablet instead of talking. But first, they would have to know how to spell. Some people do not believe that nonspeaking autistic people can learn to spell. We did a study to see if they can. We tested 31 autistic teenagers and adults who do not talk much or at all. They played a game on an iPad where they had to tap flashing letters. After they played the game, we looked at how fast they tapped the letters. They did three things that people who know how to spell would do. First, they tapped flashing letters faster when the letters spelled out sentences than when the letters made no sense. Second, they tapped letters that usually go together faster than letters that do not usually go together. This shows that they knew some spelling rules. Third, they paused before tapping the first letter of a new word. This shows that they knew where one word ended and the next word began. These results suggest that many autistic people who do not talk can learn how to spell. If they are given appropriate opportunities, they might be able to learn to communicate by typing.

About one-third of autistic children and adults cannot communicate effectively using speech, even after years or decades of interventions focused on speech (DiStefano et al., 2016; Tager-Flusberg & Kasari, 2013). For reasons that are not yet understood, some do not talk at all, some can say a few words or phrases, and others can speak but in a very limited way. Although some nonspeaking¹ autistic people can learn to use picture-based communication systems (e.g. Bondy & Frost, 2001), these systems are limited in that they are used primarily to make requests (Ostryn et al., 2008), the vocabulary available to a user is chosen by someone other than the user, and the evidence for their long-term efficacy is not robust (Howlin et al., 2007). Unfortunately, most nonspeaking autistic people are never provided access to an effective, language-based alternative to speech, significantly limiting their educational, employment, and social opportunities.

For people with acquired disabilities that make speech difficult or impossible (e.g. aphasia following a stroke), writing can become their preferred medium of expression (e.g. Thiel & Conroy, 2022). But fewer than 10% of people with congenital disabilities that significantly impact their ability to speak (including nonspeaking autism) are estimated to be literate (Foley & Wolter, 2010). In one large study, school professionals reported that fewer than 5% of their nonspeaking students from 3rd to 12th grade could write simple phrases or sentences (Erickson & Geist, 2016). Intrinsic factors (e.g. perceptual, cognitive disabilities) may contribute to the difficulties some nonspeaking people face in acquiring literacy. But nonspeaking autistic people also face at least three extrinsic challenges in acquiring literacy.

First, they have to overcome deeply entrenched assumptions that because they cannot communicate effectively using speech, they do not have the capacity for language. Speech and language have historically been conflated (Gernsbacher, 2004): A person unable to speak may be assumed to lack the capacity for symbolic thought that underlies language. But speech is only one means by which language can be expressed. Given appropriate instruction and support, deaf people, for example, can learn to convey their thoughts using sign language.

Second, most modern approaches to literacy instruction rely on speech. Recommendations for early literacy activities involve practicing letter–sound correspondences and decoding printed words by sounding them out (National Reading Panel, National Institute of Child Health and Human Development, 2000). Some literacy activities that do not rely on speech have recently been developed for nonspeaking autistic children (Benedek-Wood et al., 2016; Goh et al., 2013)—for example, computer games to teach printed word recognition or root word identification (Serret et al., 2017). But literacy activities for nonspeaking people have yet to be widely adopted (Arnold & Reed, 2019; Copeland et al., 2016).

Finally, many nonspeaking autistic people behave in ways that are inconsistent with expectations about how someone capable of becoming literate would behave. In addition to not being able to communicate effectively using speech, they may appear inattentive, have difficulty sitting still, or engage in impulsive or self-injurious behaviors (Tager-Flusberg et al., 2017). As adults, they may need support in activities like tying their shoes or crossing the street (Carter et al., 1998). They may have co-occurring movement disabilities that prevent them from being able to imitate, hold a pencil, mold their hands into signs, or consistently follow instructions (Leary & Donnellan, 1995). Their cognitive ability is vastly underestimated on conventional intelligence tests (Courchesne et al., 2015), many of which require spoken responses. As a result, nonspeaking autistic people may appear to be so disabled as to be incapable of acquiring literacy (Mirenda, 2003).

It is in this context that we conducted the preregistered study reported here. We asked whether, despite the significant challenges to acquiring literacy just outlined, some nonspeaking autistic adolescents and adults have learned English orthographic conventions. To answer this question, we developed a task to measure participants’ knowledge of spelling that did not require that they speak or generate text. Participants played a game that involved tapping sequentially pulsing targets on an iPad as quickly as they could. We compared how quickly they tapped targets on two types of trials: Trials where letters of the alphabet pulsed in a sequence that spelled a sentence the experimenter had earlier said aloud (e.g. “I should water the backyard today”) and trials where letters or nonsense symbols pulsed in closely matched but meaningless sequences. We reasoned that if participants knew how to spell, they would be faster to tap targets on trials involving sentences that had earlier been said aloud (because they could predict the sequence of pulsing letters) than trials involving meaningless sequences (where prediction was not possible).

As will be described below, the participants in our study were unable to communicate effectively using speech despite having received, on average, over 15 years of speech therapy. According to participants and their families, the most effective means of communication available to them involved spelling words and sentences by pointing to letters on a letterboard held vertically by a trained assistant. This method of communication has generated controversy because of doubts about nonspeaking autistic people’s capacity for literacy (e.g. Fein & Kamio, 2014) and concerns about whether they are pointing to letters they select themselves or letters the assistant has directed them to (e.g. American Speech-Language-Hearing Association (ASHA), 2018; but see Jaswal et al., 2020). The study here was not designed to evaluate the authenticity of this method of communication. But it is relevant to that debate insofar as it investigates whether nonspeaking autistic people who use this method know how to spell.

Method

Preregistration

The experiment was preregistered on the Open Science Framework (https://osf.io/a65u7). The preregistration was created on 13 June 2022, after data were collected from six participants, but before their data were processed or analyzed. Deviations from the preregistered procedure are described below.

Ethics and consent

This study was approved by the authors’ Institutional Review Board for the Social and Behavioral Sciences (Protocol No. 3557). The study was performed in accordance with relevant guidelines and regulations. Participants provided written informed consent or, if under 18 years of age, written informed assent. Parents additionally provided written informed consent for their own participation (i.e. to complete standardized questionnaires) and their children’s participation (regardless of whether adult children were under guardianship/conservatorship). Two adult participants were accompanied by a friend or sibling rather than a parent; these two participants were not under guardianship/conservatorship and so the participants provided written informed consent, but parent/guardian consent was not necessary or obtained. The script used during the consent process is available in the Supplemental Material.

Participants

Inclusion criteria and recruitment

There were four inclusion criteria in our preregistration: (a) at least 15 years old, (b) a diagnosis of autism, (c) an inability to communicate effectively using speech (see below), and (d) at least 2 years of experience learning to use a letterboard. After two participants’ sessions, we learned that they had been learning to use a letterboard for between 1 and 2 years. The patterns reported here remain the same with or without their data, so they are included in the final dataset.

Participants were recruited from three sources: (a) a center that specializes in supporting children and adults with limited speech to access other forms of communication; (b) a conference for nonspeaking people, their families, and professionals, held in Virginia in July 2022; and (c) an advocacy event involving nonspeaking people in Washington, DC in July 2022. With the assistance of the staff at the center and the organizers of the conference and advocacy event, we shared details about the study with potential participants and families and invited those interested to contact us for more information. We scheduled all eligible individuals who expressed interest and who could participate at one of the available appointment times. Sessions took place in clinics, homes, or hotels in Virginia, Maryland, and Washington, DC, between 26 May 2022 and 29 July 2022.

Sample

Our preregistration indicated that we would recruit at least 20 participants. Our final sample comprised 31 nonspeaking people with a clinical diagnosis of autism (mean age = 23.26 years; SD = 7.12; range = 15–52; 25 males, 6 females; for additional demographics, see Supplemental Table S1). One additional 26-year-old male participated, but he did not advance beyond the practice phase (described below) in the time allotted for his session and so did not provide any data.

Sample characteristics

A parent, friend, or sibling completed a demographic/developmental history form, including information about the kinds and duration of therapies commonly prescribed to autistic people. Supplemental Table S2 provides a summary of these data. Of note, 29 of the 31 participants had received speech therapy focused on speech for an average of 15.72 years (SD = 6.02; range = 2–28), and many were still receiving it at the time of the study. In addition, the 31 participants had, on average, 5.58 years (SD = 2.33; range = 1–9.33) of experience learning to use a letterboard.

The parent, friend, or sibling also completed three standardized measures: the Vineland Adaptive Behavior Scales-II, Parent/Caregiver Rating Form (VABS-II; Sparrow et al., 2005); the Social Communication Questionnaire, Lifetime (SCQ; Rutter et al., 2003); and the Social Responsiveness Scale-2, Parent Report (Constantino & Gruber, 2012). Supplemental Table S3 provides a summary of scores on these measures. We note here that, according to the VABS-II, our sample was significantly disabled: Their average standard score on the adaptive behavior composite was 53.77 (SD = 13.57; range = 24–78), over three standard deviations below what would be expected of people their age.

As noted earlier, participants in this study considered themselves and were considered by their families to be nonspeaking: They were unable to communicate effectively using speech. Their ability to speak ranged from not at all to a few word approximations to limited phrase speech. The first item on the SCQ (Rutter et al., 2003) asks, “Is he or she now able to talk using short phrases or sentences?” This item was used to split our sample into those with phrase speech (19 participants) and those without phrase speech (12 participants). Even among those with phrase speech, none of the participants was able to use speech to communicate effectively. For example, item 43 of the “Talking” section on the VABS-II (Sparrow et al., 2005) asks if the individual can use speech to “give simple directions (e.g. on how to play a game or how to make something).” None of the participants was able to use speech to provide directions that were clear enough to follow.

Task

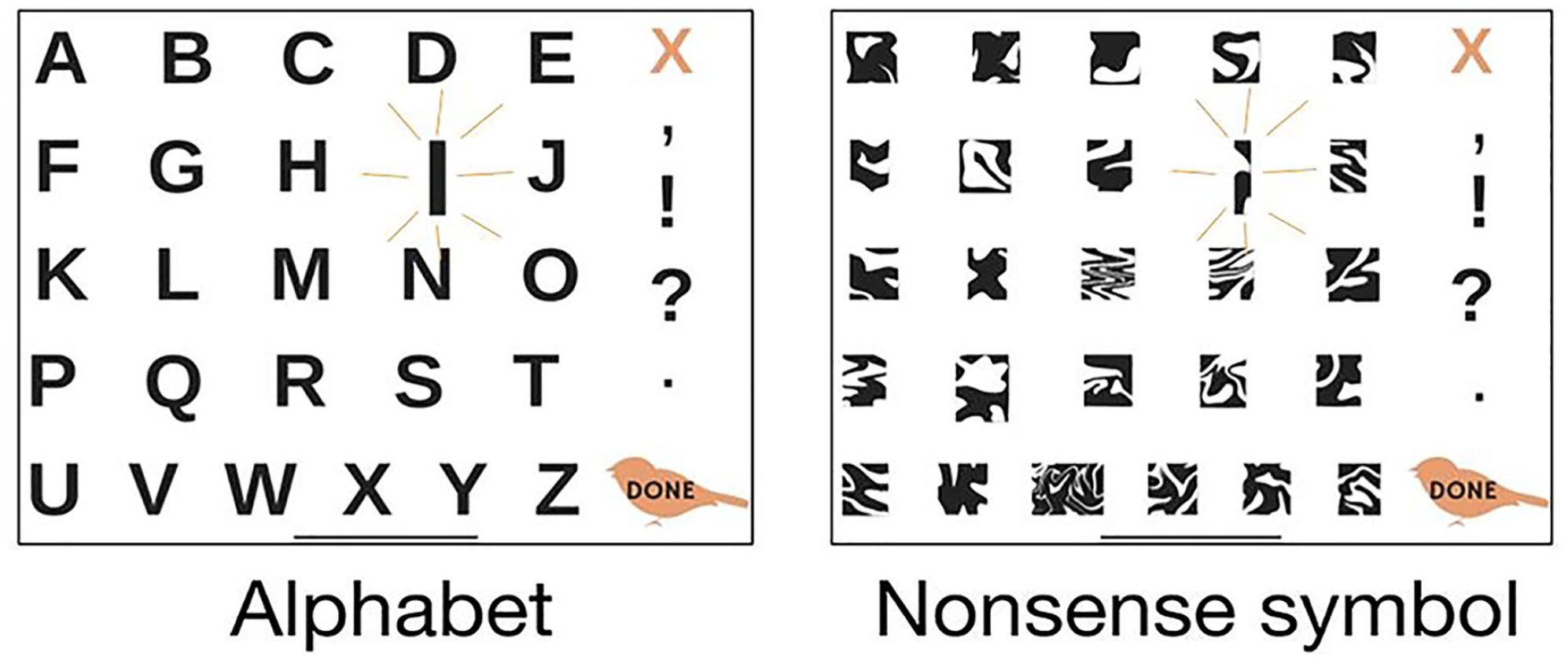

Participants viewed 26 possible targets, either letters of the English alphabet or nonsense symbols, displayed simultaneously on an Apple iPad Pro 12.9″ (8.46″ × 11.04″). Figure 1 shows the alphabet and nonsense symbol displays. When a trial started, one target began pulsing and continued to pulse until the participant tapped it, at which point another target began pulsing and continued to pulse until the participant tapped it, and so on. The task was simply to tap whichever target was pulsing as quickly as possible after it began pulsing. The sequence of pulsing targets was determined in advance and is described in the “Procedure” section. The Supplemental Material provides additional details about the task.

Figure 1. Alphabet and nonsense symbol displays used in the experimental task. The letter “I” and the nonsense symbol at the analogous physical location are shown pulsing.

Procedure

The iPad was secured on a stand in an upright position on a table in front of the seated participants; no one held the iPad or touched participants. As a warm-up exercise, participants first played a commercially available iPad game called Nine Dotz (since removed from the App Store), which had some similarities to our experimental task. Participants used the index finger of their preferred hand to tap as many colored squares in a 3 × 3 matrix as possible before a 30-s timer ran out. (Three participants used their left hand.) When the game began, one square would change color, the participant would tap it (and a counter would increase by 1), at which point it returned to a neutral color and another randomly selected square changed color, and so on. Participants were invited to play the game a second time if they wanted to attempt to beat their first score.

Next, participants received five practice trials using our task. The first four practice trials involved the alphabet display and letters that pulsed in sequences that spelled words or phrases the experimenter had earlier said aloud (i.e. the participant’s first and last names, “nonspeaking,” “presume competence,” and “brain body disconnect”). The fifth practice trial involved the nonsense symbol display and symbols that pulsed in locations that would have spelled “nonspeaking” if they had been letters (but participants were not told this). Participants were instructed to tap the pulsing targets as quickly as they could.

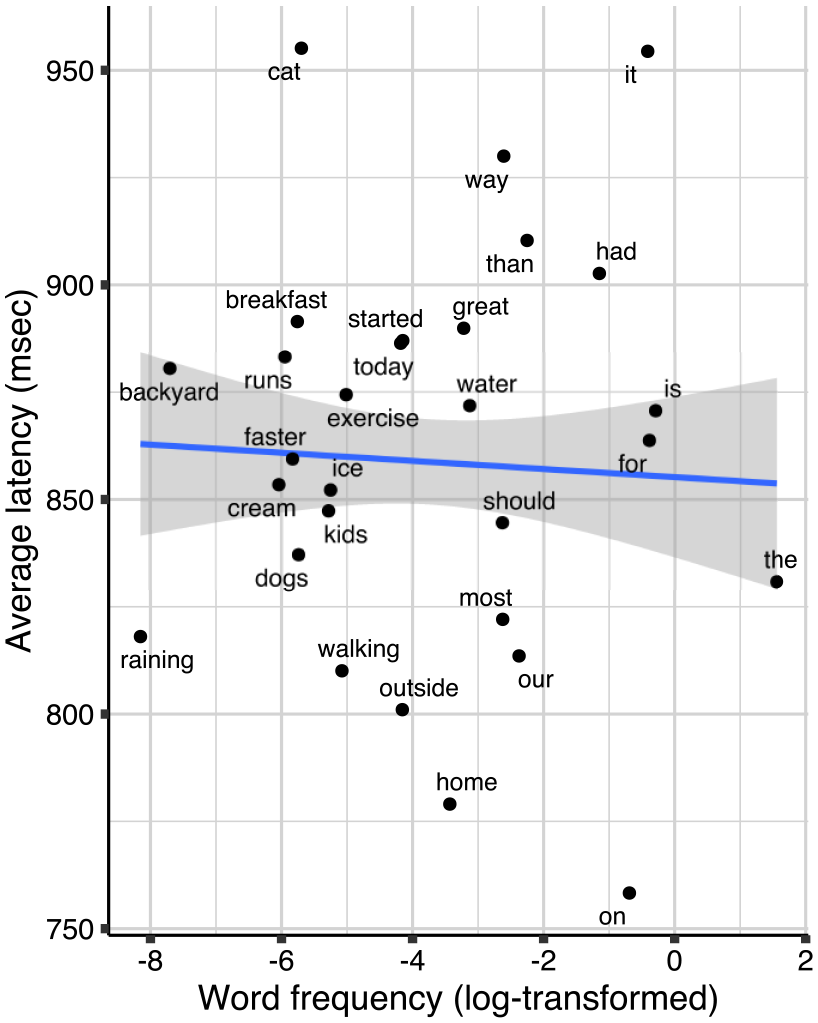

The experimenter then explained that they were going to begin the “real” game. The experimenter reminded participants that they should tap pulsing targets as quickly as they could. There were 20 trials, blocked into four conditions of five trials each (see Table 1). In the Sentences condition, letters pulsed in sequences that spelled five- to seven-word sentences of 28–30 characters each. Before initiating a Sentences trial, the experimenter twice said aloud the sentence that the sequence of pulsing letters would spell (e.g. “This time, the letters will flash in a sequence that spells, ‘I should water the backyard today. I should water the backyard today.’”). Participants were never shown a printed version of the sentence.

Table 1. Sequence of pulsing targets in the four conditions.

In the Sentences and Reversed Letter Sequences conditions, targets were letters of the alphabet. In the Matched Symbol Sequences and Reversed Symbol Sequences conditions, targets were nonsense symbols that pulsed in the same physical locations as the letter targets in the Sentences and Reversed Letter Sequences conditions, respectively. Spaces are shown in the Sentences trials of the table for ease of reading; there was no spacebar on the display.

In the other three conditions, the sequences were meaningless: In the Matched Symbol Sequences condition, nonsense symbols pulsed in the same physical locations and order as the Sentences condition; in the Reversed Letter Sequences and Reversed Symbol Sequences conditions, letters or nonsense symbols pulsed in the reversed order. In the three meaningless conditions, the experimenter did not (and indeed, could not) inform participants of the sequences before initiating the trials because the sequences were meaningless. Participants were presented with the four conditions in one of four orders. (Our preregistration indicated we would use two orders; we added two orders to achieve a Latin square design.) The Supplemental Material includes the script used to explain to participants the warm-up, practice, and test trials.
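As an illustration of the counterbalancing described above, the following minimal sketch builds four condition orders in which each condition occupies each serial position exactly once. The cyclic construction is an assumption chosen for simplicity; the source does not state which four orders were actually used, and the function name is hypothetical.

```python
# Hypothetical sketch: one way to build a 4 x 4 Latin square of condition orders.
# The actual orders used in the study are not given in the text.
CONDITIONS = [
    "Sentences",
    "Matched Symbol Sequences",
    "Reversed Letter Sequences",
    "Reversed Symbol Sequences",
]


def cyclic_latin_square(items):
    """Return len(items) orders; order r is the list rotated left by r places."""
    n = len(items)
    return [[items[(r + c) % n] for c in range(n)] for r in range(n)]


orders = cyclic_latin_square(CONDITIONS)
for i, order in enumerate(orders, start=1):
    print(f"Order {i}: " + " -> ".join(order))
```

A cyclic square balances serial position but not which condition immediately precedes which; whether the study's orders controlled for that is not stated.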

Analysis plan

Our dependent variable was the amount of time (in milliseconds) between when a participant’s finger was no longer in contact with one pulsing target and when their finger first made contact with the next pulsing target (“latency”). Each participant contributed 552 latencies, representing their responses to 138 targets in each of the four conditions (excluding their response to the first target per trial in each condition because there was no release from a previous target).² Our preregistration indicated that we would conduct analyses on untransformed and log-transformed latencies. The findings were the same, and so for ease of interpretation, we report the results of analyses on untransformed latencies.³
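The latency definition above can be sketched as follows. The `Tap` record, its field names, and the timestamps are hypothetical and serve only to show why the first target of each trial yields no latency; the source does not describe how the touch events were logged.

```python
from dataclasses import dataclass


@dataclass
class Tap:
    """One response to a pulsing target (hypothetical record layout)."""
    target: str
    contact_ms: float  # time the finger first touched the pulsing target
    release_ms: float  # time the finger left the target


def latencies(trial):
    """Latency for every target after the first: contact time minus the
    release time of the previous target. The first target has no previous
    release, so it contributes no latency."""
    return [cur.contact_ms - prev.release_ms for prev, cur in zip(trial, trial[1:])]


# Made-up timestamps for the first three targets of a trial.
trial = [
    Tap("I", 900.0, 980.0),
    Tap("S", 1650.0, 1720.0),
    Tap("H", 2300.0, 2385.0),
]
print(latencies(trial))  # [670.0, 580.0]
```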

Analyses were conducted in R (R Core Team, 2022) using the RStudio interface (RStudio Team, 2023). To eliminate latency outliers that resulted from, for example, participants taking a break or scratching their cheek in the middle of a trial, we used the filtRT function from the statxp package (Vallet, 2022). We excluded latencies for each participant that were beyond two standard deviations of that participant’s overall average latency. The average percentage of latencies excluded per participant was 3.75% (SD = 0.98%; range = 1.27%–5.98%).
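The exclusion rule described above can be sketched in a few lines. This is not the `filtRT` function itself but a stand-in that assumes the rule is applied to each participant's pooled latencies, symmetrically around that participant's mean, using the sample standard deviation; the latency values are invented for illustration.

```python
from statistics import mean, stdev


def exclude_outliers(latencies_ms, n_sd=2.0):
    """Keep latencies within n_sd standard deviations of this participant's mean.

    Assumption: sample SD (n - 1 denominator) and a symmetric cutoff.
    """
    m = mean(latencies_ms)
    sd = stdev(latencies_ms)
    low, high = m - n_sd * sd, m + n_sd * sd
    return [x for x in latencies_ms if low <= x <= high]


# Invented latencies for one participant; 3000 ms mimics a mid-trial break.
raw = [600, 620, 580, 610, 590, 605, 615, 595, 3000]
kept = exclude_outliers(raw)
print(f"excluded {len(raw) - len(kept)} of {len(raw)} latencies")
```

Because the cutoff is computed separately for each participant, a slow but consistent responder is not penalized relative to a fast one.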

We analyzed the latency data with linear mixed-effects models, using the lme4 (Bates et al., 2015) and lmerTest (Kuznetsova et al., 2017) packages in R. We describe here the common elements of our mixed-effects models; details about the factors unique to each model are described in the “Results” section, with additional details in the Supplemental Material. We included a random intercept for each participant. The Sentences condition was set as the reference level. Two variables were included as covariates: (a) cumulative tap number (to account for possible fatigue or task familiarity effects over the course of the session) and (b) the Euclidean distance in pixels between the X-Y position where the participant’s finger left a target and the X-Y position where they first made contact with the next target (to account for the fact that targets that are physically closer together will be responded to more quickly than those that are farther apart). To follow up on significant interactions involving categorical variables, we fit the model into an analysis of variance or analysis of covariance (ANCOVA) framework and used the emmeans package (Lenth, 2022) to investigate the simple effects. To follow up on significant interactions involving continuous variables, we used a traditional subsetting approach in lme4.
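The two covariates described above could be derived along these lines. The coordinate pairs, field names, and the convention that tap numbers start at 1 are assumptions made for illustration; the model specifications themselves are in the Supplemental Material and are not reproduced here.

```python
import math


def euclidean_px(release_xy, contact_xy):
    """Straight-line distance in pixels between where the finger left one
    target and where it first touched the next."""
    return math.hypot(contact_xy[0] - release_xy[0], contact_xy[1] - release_xy[1])


# Hypothetical (release point, next contact point) pairs in session order.
session = [
    ((100, 200), (400, 600)),
    ((400, 600), (400, 650)),
]

rows = [
    {"tap_number": i, "distance_px": euclidean_px(release, contact)}
    for i, (release, contact) in enumerate(session, start=1)
]
print(rows)
```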

Community involvement

There was autistic representation on the research team. In addition, the development of the study benefited considerably from autistic informants, family members of autistic people, and clinicians who work with and support nonspeaking autistic people.

Results

The data reported in this article, along with the R code used to generate the analyses and figures, are available on the Open Science Framework (https://osf.io/u3fmw). The Supplemental Material provides details of analyses (including specifications of each model) and deviations from the preregistered analysis plan.

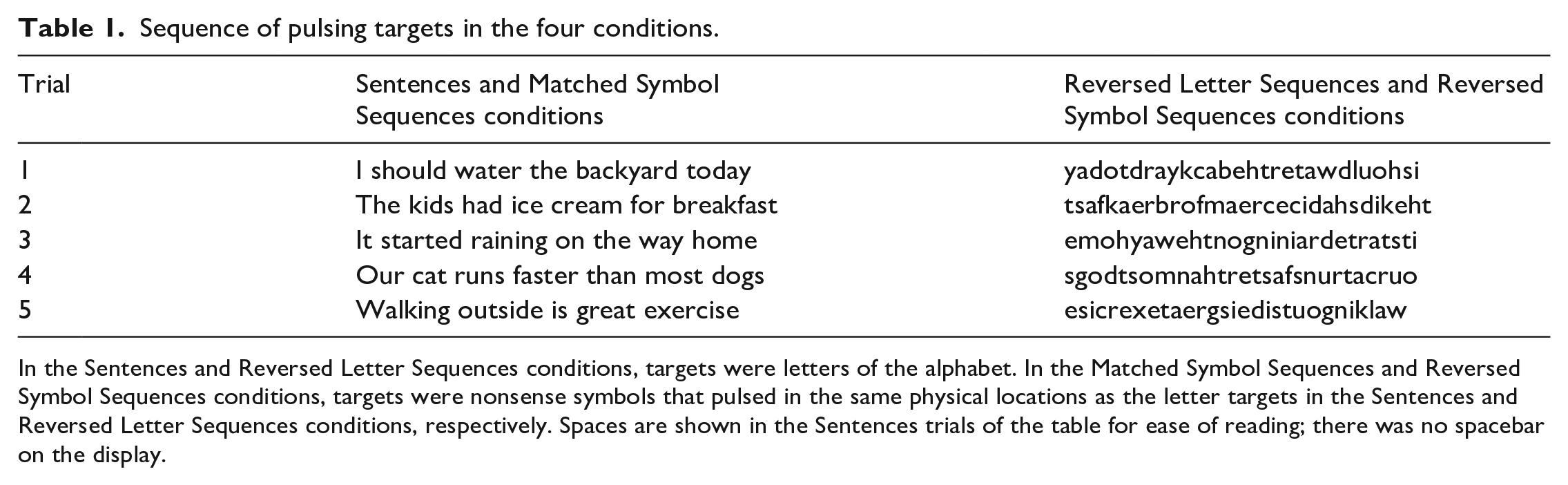

Anticipation of targets in Sentences condition

Our primary, preregistered analysis investigated whether participants were faster to tap targets in the Sentences condition than in any of the three meaningless conditions, the pattern expected of literate participants. As Figure 2 shows, participants were indeed fastest to tap targets in the Sentences condition. An ANCOVA derived from a linear mixed-effects regression on these data yielded a significant interaction between whether the targets were letters or symbols and whether the sequence of pulsing targets was in the forward direction (i.e. the Sentences and Matched Symbol Sequences conditions) or the reversed direction (i.e. the two reversed conditions), F(1, 16,409) = 73.52, p < 0.001.

Figure 2. Average latency in each condition. Shaded bars show the average latencies across participants, and thin lines show each of the 31 participants’ average latencies. Error bars represent 95% confidence intervals. ***p < 0.001.

Planned, follow-up comparisons showed that participants were faster to tap targets in the Sentences condition compared to the Matched Symbol Sequences condition, t(16,409) = –6.17, p < 0.001; estimated marginal mean (EMM) = –108.60, 95% confidence interval (CI): [–153.80, –63.40]; they were faster in the Sentences condition compared to the Reversed Letter Sequences condition, t(16,409) = –4.22, p < 0.001; EMM = –44.30, 95% CI: [–71.36, –17.30]; and they were faster in the Sentences condition compared to the Reversed Symbol Sequences condition, t(16,409) = –6.91, p < 0.001; EMM = –72.60, 95% CI: [–99.54, –45.60]. That participants were fastest to tap targets in the Sentences condition is consistent with what would be expected of people who know how to spell: They could anticipate the next pulsing target in the Sentences condition, but not in the three meaningless conditions.

Our design allows us to rule out two alternative explanations for why participants were fastest to tap targets in the Sentences condition. First, because the sequence in which targets pulsed was the same in the Sentences and Matched Symbol Sequences conditions (they only differed in whether the targets were letters or nonsense symbols), the faster latencies in the Sentences condition cannot be explained as an artifact of the particular sequence of targets in the Sentences condition. Second, because the Sentences and Reversed Letter Sequences conditions both involved letters as targets (they only differed in whether the sequences were in a meaningful or reversed direction), the faster latencies in the Sentences condition cannot be explained by the fact that it used letters as targets (e.g. that pulsing letters were more discriminable targets than pulsing nonsense symbols).

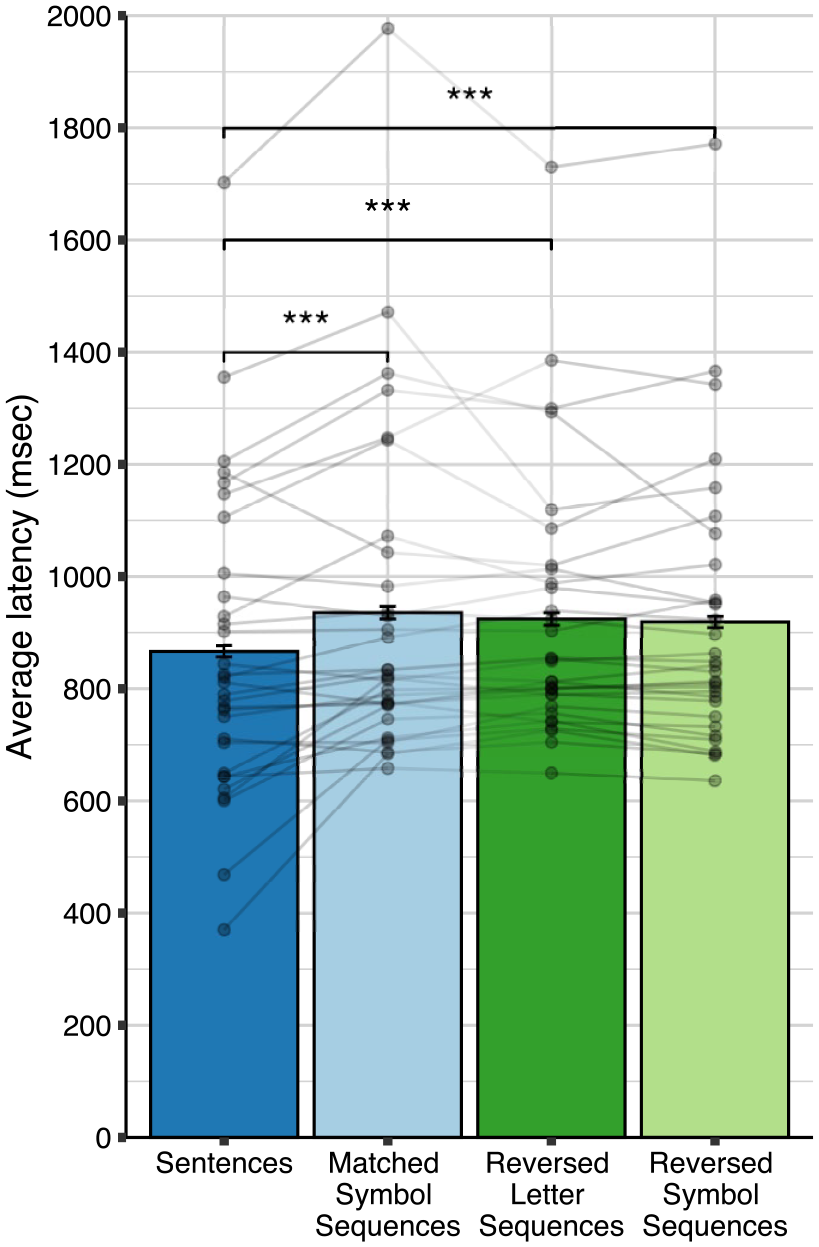

One concern could be that participants’ faster latencies in the Sentences condition compared to the three meaningless conditions were driven by only a few very frequent English words used in the Sentences condition (e.g. “the”). But as Figure 3 shows, there was no relationship between a word’s frequency in printed English (Michel et al., 2011) and the average time to tap the pulsing letters within that word. We analyzed these data using a linear mixed-effects regression, which did not show an effect of word frequency on latency, t(16) = –1.07, p = 0.299; b = –7.78, 95% CI: [–21.91, 6.42]. (This analysis was not preregistered.) Among non-autistic typists, word frequency also does not predict time to type letters within words (Baus et al., 2013; Pinet et al., 2016).

Figure 3. Average latency to tap pulsing letters within a word as a function of word frequency in the Sentences condition. Regression line shows predicted latencies from the mixed-effects model. Shading represents 95% confidence intervals.

Effects of bigram frequency and word boundaries on latency

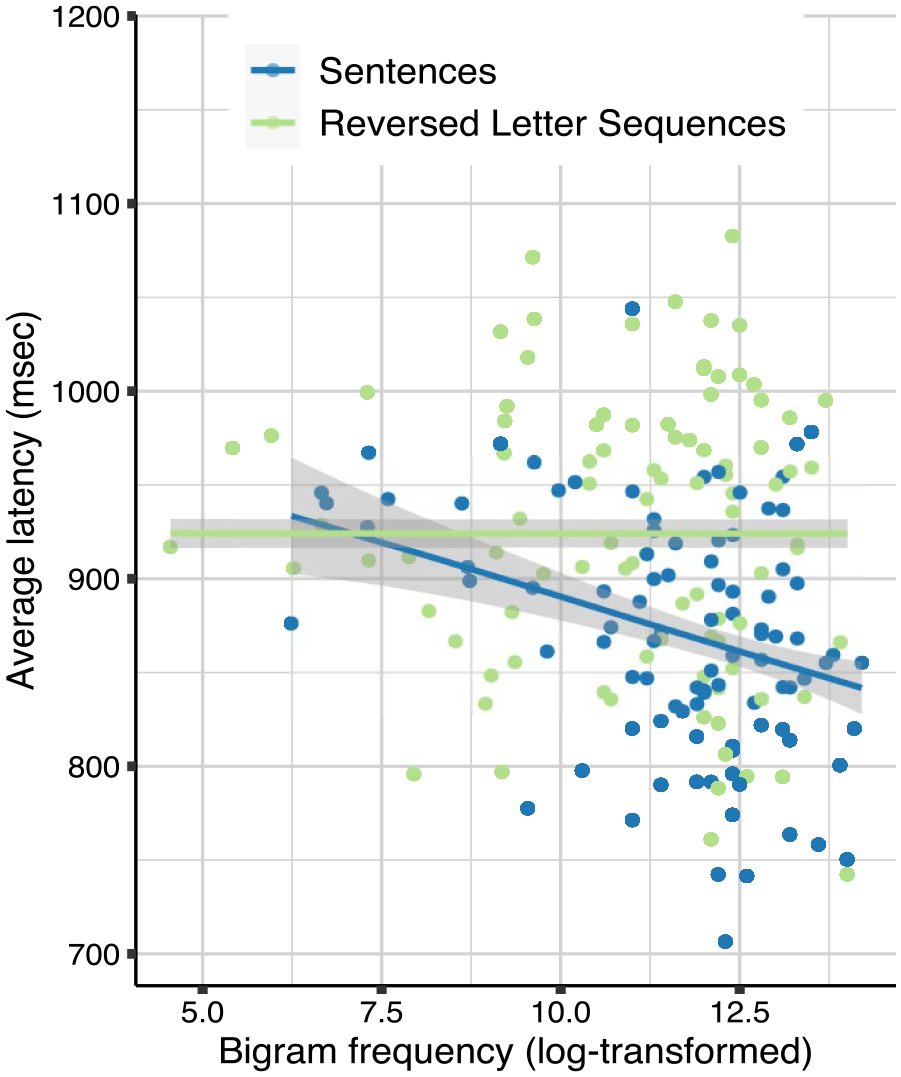

We next investigated whether participants showed two additional patterns in their latencies in the Sentences condition that would be expected of literate people. These two analyses were described as exploratory in the preregistration. First, we investigated whether the latency to tap a letter was related to how frequently that letter and the immediately preceding letter co-occur in English (i.e. bigram frequency). Non-autistic adults using a keyboard are faster to type bigrams the more frequent they are (e.g. Gagné & Spalding, 2016). This pattern is thought to reflect implicit knowledge of orthographic regularities (e.g. that the letter “h” is more often followed by “e” than by “s”), which is an important component of literacy (e.g. Conrad et al., 2013). The relationship between bigram frequency and latency is especially evident when non-autistic typists are copying from passages composed of real words compared to passages composed of meaningless strings of letters (Salthouse, 1984).

As Figure 4 shows, participants showed the pattern expected of literate people: In the Sentences condition, the more frequent a bigram is in English (Jones & Mewhort, 2004), the faster the participants were to tap the second letter in that bigram. In contrast, in the Reversed Letter Sequences condition, where the sequences of letters were meaningless, bigram frequency was not related to latency to tap the second letter in the bigram. We analyzed these data using a linear mixed-effects regression, which yielded a significant interaction between condition and bigram frequency, t(8166) = 3.34, p = 0.001; β = 23.26, 95% CI: [9.61, 36.90]. Follow-up analyses revealed a significant effect of bigram frequency on latencies in the Sentences condition, t(4028) = –4.79, p < 0.001; β = –23.44, 95% CI: [–33.02, –13.86], but not in the Reversed Letter Sequences condition, t(4106) = 0.13; p = 0.898; β = 0.62, 95% CI: [–8.81, 10.04]. Thus, implicit knowledge of orthographic regularities contributed to participants’ ability to predict the next letter in a sequence, but only when the sequence was a meaningful sentence they had earlier heard the experimenter say aloud.

Figure 4. Average latency to tap the second letter in a bigram as a function of bigram frequency in the Sentences condition and the Reversed Letter Sequences condition. Regression lines show predictions from the mixed-effects model. Shading represents 95% confidence intervals.
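To make the bigram analysis concrete, the sketch below extracts the letter pairs from the example sentence and from its reversal. Two assumptions are not confirmed by the source: that the reversed sequence is the whole letter sequence read backwards, and that bigrams spanning a word boundary (such as “IS” from “I should”) are included. Bigram frequencies themselves would come from a published norm such as Jones and Mewhort (2004) and are not reproduced here.

```python
def letter_sequence(sentence):
    """Letters in the order they would pulse; spaces are dropped because the
    display had no spacebar."""
    return [ch for ch in sentence.upper() if ch.isalpha()]


def bigrams(seq):
    """Each target paired with the immediately preceding target."""
    return [a + b for a, b in zip(seq, seq[1:])]


forward = letter_sequence("I should water the backyard today")
backward = forward[::-1]  # assumption: full-sequence reversal

print(len(forward), len(bigrams(forward)))  # 28 27
print(bigrams(forward)[:5])   # ['IS', 'SH', 'HO', 'OU', 'UL']
print(bigrams(backward)[:5])  # ['YA', 'AD', 'DO', 'OT', 'TD']
```

The reversed sequence contains the same letters at the same physical locations, but its pairs (for example “TD”) no longer follow the sentence, which is what makes it a control for anticipating a known sequence.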

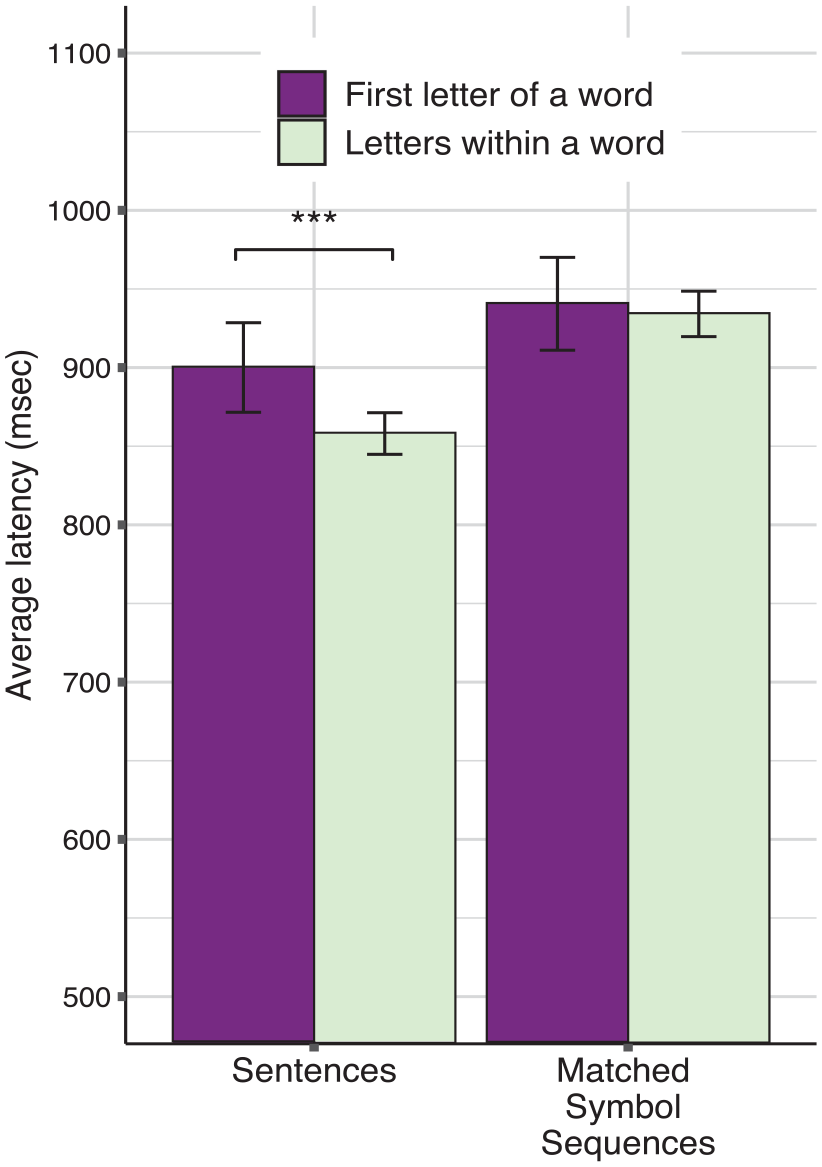

Second, we investigated whether participants paused at word boundaries in the Sentences condition. For non-autistic typists, the time between when they release the spacebar and when they type the first letter of a word tends to be longer than the time to type letters within a word, reflecting the fact that words are the natural units in reading and writing (Salthouse, 1984). There was no spacebar on the iPad display used in our task, and there was no delay between pulsing letters at word boundaries (i.e. in all four conditions, the next target in a sequence began pulsing as soon as the previous target was tapped). If participants nonetheless were slower to tap the first letter of a word compared to letters within words, it would provide evidence that they knew where one word ended and the next word began, which is inherent to literacy (Treiman & Kessler, 2014).

As Figure 5 shows, the nonspeaking autistic participants showed the pattern expected of literate people: In the Sentences condition, they were slower to tap the first letter of a word than letters within words. Also plotted, for comparison, is the average latency to tap nonsense symbols in the Matched Symbol Sequences condition at analogous serial positions and physical locations. An ANCOVA derived from a linear mixed-effects regression on these data yielded a significant interaction between condition and target position, F(1, 8116) = 4.81, p = 0.028. Follow-up analyses showed that in the Sentences condition, participants were slower to tap pulsing letters at the start of a new word than within a word, t(8116) = 4.02, p < 0.001; EMM = 47.6, 95% CI: [24.4, 70.9]. In contrast, in the Matched Symbol Sequences condition, there was no effect of target position on latency, t(8116) = 0.90, p = 0.368; EMM = 10.7, 95% CI: [–12.6, 34.1].

Figure 5. Average latency as a function of whether a target was at the start of a word or within a word in the Sentences condition or at the analogous serial positions and physical locations in the Matched Symbol Sequences condition. Error bars represent 95% confidence intervals. ***p < 0.001.
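The word-boundary coding can be illustrated with the example sentence. In this sketch each target is labeled as the start of a word or as within a word, and the first target of the trial is dropped because it has no latency. The label names are hypothetical, and the sketch assumes sentences contain only letters and spaces.

```python
def label_positions(sentence):
    """Label each target after the first as 'word_start' or 'within_word'."""
    labels = []
    for word in sentence.split():
        for i, letter in enumerate(word):
            position = "word_start" if i == 0 else "within_word"
            labels.append((letter.upper(), position))
    return labels[1:]  # the first target of a trial has no latency


labels = label_positions("I should water the backyard today")
n_start = sum(1 for _, pos in labels if pos == "word_start")
n_within = sum(1 for _, pos in labels if pos == "within_word")
print(n_start, n_within)  # 5 22
```

The same labels can be applied to the Matched Symbol Sequences condition by serial position, since its targets pulsed in the same locations and order.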

Bayesian prevalence analyses

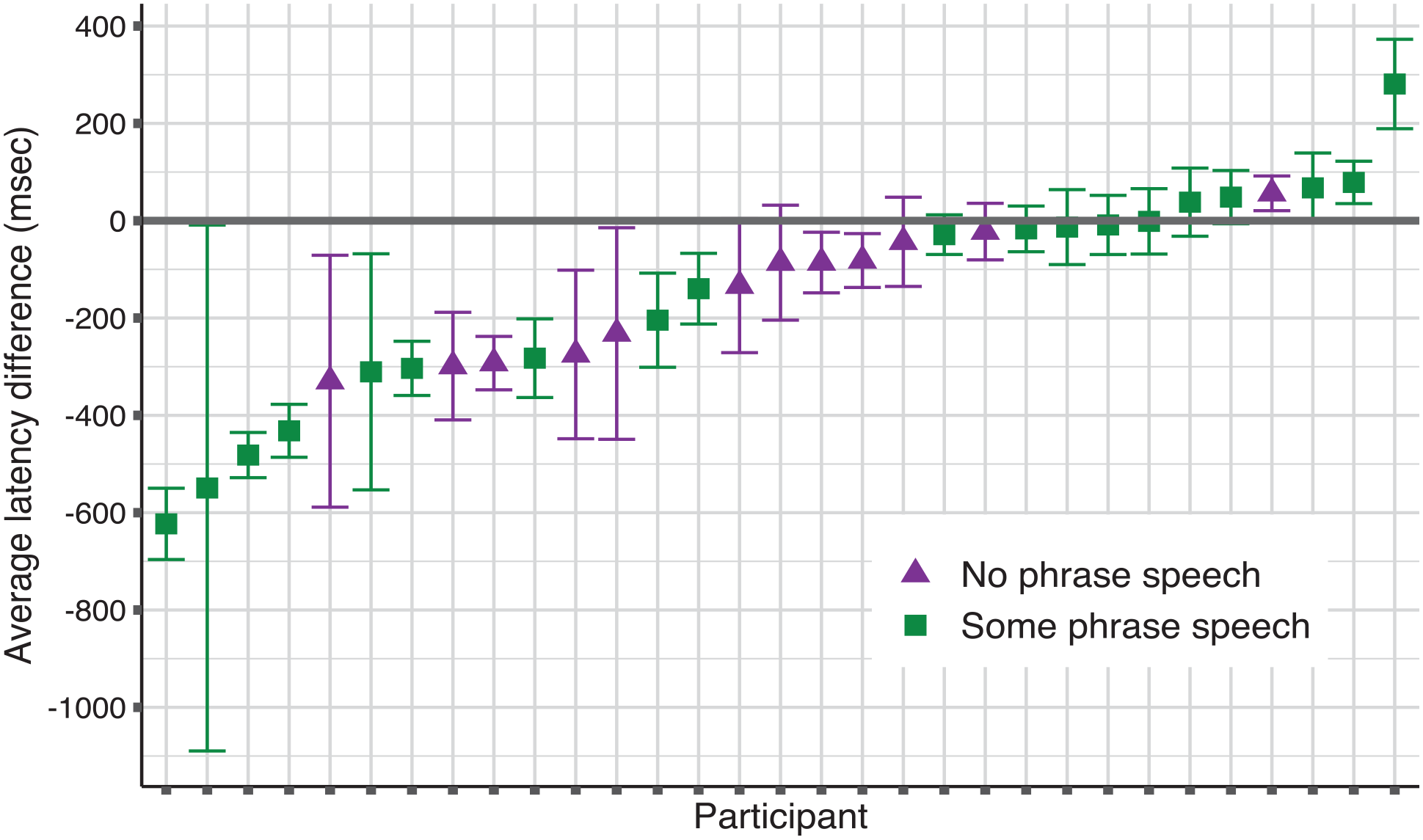

To investigate how many participants showed a latency pattern consistent with literacy and to estimate the proportion of people in the population from which our sample was drawn that would be expected to show this pattern, we used a Bayesian prevalence approach (Ince et al., 2021, 2022). The Bayesian prevalence approach is recommended as a complement to traditional null hypothesis testing for experimental studies where participants contribute multiple trials in each condition, the magnitude of the within-participant effect may vary across participants, and the number of participants is limited. It allows one to estimate the proportion of individuals in the population from which the sample was drawn who would be expected to show the effect if tested in the same experiment (the Bayesian maximum a posteriori (MAP) estimate), as well as the interval within which the true population prevalence lies with 96% probability (the 96% highest posterior density interval (HPDI)). (This analysis was not preregistered.)

For each of the 31 participants, we created a linear model to assess whether they had faster latencies to targets in the Sentences condition than the Matched Symbol Sequences condition. This yielded, for each participant, a point estimate of the average difference in latency between these two conditions, along with 95% CIs. As Figure 6 shows, 16 of the 31 participants showed the signature pattern. The MAP estimate of this signature pattern was 0.49 (96% HPDI [0.31, 0.67]). Thus, one could expect, with 96% probability, that between about one-third and two-thirds of people in the population from which our sample was drawn (i.e. nonspeaking autistic adolescents and adults with at least a year of experience learning to use a letterboard) would respond significantly faster in the Sentences condition compared to the Matched Symbol Sequences condition. The estimated prevalence rate was similar in the two sub-groups of our sample: Seven of the 12 participants without phrase speech showed the pattern; the MAP estimate was 0.56 (96% HPDI [0.28, 0.81]). Nine of the 19 participants with limited phrase speech showed the pattern; the MAP estimate was 0.45 (96% HPDI [0.22, 0.68]).

Figure 6. Average difference in predicted latency between the Sentences and Matched Symbol Sequences conditions for each participant. Each dot represents a model estimate for one participant. Error bars show 95% confidence intervals. Values below 0 represent faster latencies in the Sentences condition than the Matched Symbol Sequences condition.

Discussion

Our findings suggest that many nonspeaking autistic adolescents and adults in this study have learned conventions of English orthography. Their knowledge was reflected in (a) their ability to predict the next letter in a sentence, (b) their faster responses to more common bigrams in meaningful (but not meaningless) text, and (c) their tendency to parse a continuous stream of text into words. About half of the participants showed the signature pattern consistent with literacy; those who did not show the pattern may also know spelling conventions but be unable to demonstrate their knowledge on our task, which required sustained attention and speeded responses to over 550 targets. Regardless, our findings suggest that at least five times more participants have foundational literacy skills than would be expected from professional estimates based on nonspeaking people’s overt behavior (Erickson & Geist, 2016; Foley & Wolter, 2010).

One might object that it is not necessary to invoke literacy or knowledge of spelling to explain our findings. Perhaps the data we observed could be explained in terms of statistical learning mechanisms that allow many organisms to detect regularities in environmental input (e.g. Santolin & Saffran, 2018). According to this view, participants were fastest to tap targets in the Sentences condition because it involved sequences of pulsing targets that followed transitional probabilities to which participants had been exposed before the study, not because they understood the relation between letters, words, and language.

Statistical learning is foundational to literacy (Treiman & Kessler, 2014), and our finding that participants responded faster to more frequent compared to less frequent bigrams in the Sentences condition demonstrates that they had learned transitional probabilities in printed English prior to their participation in the study. However, and importantly, our findings cannot be explained by knowledge of transitional probabilities alone. If the speed with which participants tapped letters reflected only knowledge of transitional probabilities, participants should have been faster to tap the second letter of more frequent compared to less frequent bigrams in the Reversed Letter Sequences condition as well. They were not: Bigram frequency had no effect in the Reversed Letter Sequences condition (Figure 4).

We speculate that bigram frequency had an effect in the Sentences condition but not the Reversed Letter Sequences condition because of a difference in the approach participants took to completing the task in the two conditions. Recall that the task was simply to tap whatever target was pulsing as quickly as possible; there was no requirement that participants monitor the sequences or that they attempt to anticipate the next target. But in the Sentences condition, the experimenter told participants the sentence that was about to unfold before initiating each trial. A motivated, literate participant could convert the sentence the experimenter spoke aloud into its written form. They could actively monitor the sequence as it unfolded to anticipate subsequent letters, which would mean that their implicit knowledge of transitional probabilities would also play a role. In the Reversed Letter Sequences condition, in contrast, participants could not anticipate what the next letter would be, no matter how motivated they were. As a result, they may not even have attended to the identity of the pulsing letters as they tapped them (i.e. they might as well have been nonsense symbols), which would mean that implicit knowledge of bigram frequency would not have influenced performance.

Our finding that participants without phrase speech as well as participants with phrase speech showed the signature pattern of faster responses in the Sentences condition compared to the Matched Symbol Sequences condition is important for at least two reasons. First, it demonstrates that it is possible for nonspeaking autistic people to acquire aspects of literacy regardless of their speech proficiency. Second and relatedly, it is frequently suggested that more positive outcomes are associated with autistic children who achieve functional speech (i.e. phrase speech) by 5 years of age (e.g. Tager-Flusberg & Kasari, 2013). At least as far as learning foundational literacy skills is concerned, there was no difference in our sample of nonspeaking autistic adolescents and adults between those who had some phrase speech and those who did not.

Ours was not a prospective study, so we cannot know how participants acquired the literacy skills documented here. However, just as when non-autistic people acquire literacy, there are likely to be multiple contributing factors. As noted, the ability to detect statistical regularities clearly played a role. In addition, participants may have benefited from formal and informal literacy activities that encourage pattern-matching, including repeated exposure to language presented simultaneously in written and oral forms (e.g. closed captioning; Mottron et al., 2021). Furthermore, the year or more of practice participants had communicating on a letterboard held by a trained assistant may have highlighted orthographic regularities and sound–letter correspondences, though we note that the training our participants had received on the letterboard does not involve explicit instruction on these correspondences. Finally, nonspeaking adults with a variety of disabilities who can write without assistance have retrospectively identified the high expectations of parents and teachers as important contributors to their ability to read and write, even as other people may have doubted their capacity for literacy (Koppenhaver et al., 1991).

Our task was an indirect measure of literacy. The reaction time patterns we documented show that participants were sensitive to orthographic conventions. But it is important to note that participants demonstrated this competence by anticipating the spelling of sentences as they unfolded; participants did not generate sentences themselves. An important question for future work will be investigating why, given this underlying orthographic competence, most nonspeaking autistic people do not learn to express themselves in writing. As suggested earlier, part of the challenge is lack of opportunity because of underestimation of nonspeaking autistic people’s capacity to acquire literacy (e.g. Mirenda, 2003). The significant proprioceptive and motor challenges that many nonspeaking autistic people face can also interfere with learning to write by hand or type using conventional interfaces (e.g. Gernsbacher, 2004; Leary & Donnellan, 1995; Torres et al., 2013). Thus, another important area for future work will be leveraging advances in technology to create accessible and customizable communication interfaces as well as opportunities to practice the skills required to use them (Alabood et al., 2023; Krishnamurthy et al., 2022).

Limitations

One limitation of the current work is that due to the limited time we had with participants, we did not include a condition where letters pulsed in meaningful sequences that spelled sentences that had not been said aloud earlier. Literate participants who are expecting a sequence to be meaningful might be able to predict letters and words as the sequence unfolds even without having heard it in advance (e.g. they might predict that “happybi” will be completed as “birthday,” which could be followed by “to you”). This possibility awaits further study.

Another limitation is that ours was a unique, relatively small sample of nonspeaking autistic adolescents and adults who had at least a year of experience learning to spell words and sentences on a letterboard. We do not know whether nonspeaking autistic people who did not have that experience would perform similarly. As noted earlier, we also do not know what kinds of experiences allowed participants in the current study to obtain the skills documented here. Most of our sample was White and from relatively affluent backgrounds, which may have influenced the opportunities they were given to develop literacy skills. A third limitation is that it is possible that the literacy skills documented here could be acquired without any connection to meaning, as in some accounts of hyperlexia (Silberberg & Silberberg, 1967). We did not assess text comprehension, so we cannot rule out this possibility.

Conclusion

According to the nonspeaking autistic participants in this study and their families, the most effective means of communication available to them currently involves spelling words and sentences on a letterboard held vertically by an assistant. (All the study participants are working on the skills to be able to type without assistance.) Were it not for concerns about the role of the assistant (e.g. ASHA, 2018; but see Jaswal et al., 2020), this would serve as prima facie evidence that they are literate. We used an experimental task that did not involve an assistant (i.e. no one held the iPad or touched participants) to investigate if these participants possess foundational literacy skills. Our data provided several reasons to believe that they do. Investigating how common these skills are among nonspeaking autistic people, what kinds of experiences facilitate their acquisition, and how best to leverage the skills to provide access to writing as an alternative to speech are essential goals for future work. In conclusion, consistent with recent work suggesting that nonspeaking autistic people’s cognitive potential has been underestimated (Courchesne et al., 2015), the study here suggests that their linguistic potential has also been underestimated.

Supplemental Material

Supplemental material, sj-docx-1-aut-10.1177_13623613241230709 for Literacy in nonspeaking autistic people by Vikram K Jaswal, Andrew J Lampi and Kayden M Stockwell in Autism

Footnotes

Acknowledgements

The authors are grateful to Andrew Milivojevich, Aaron Van Haecke, the Woodbridge Digital Enablement Team, and the Woodbridge Cares initiative that allowed a group of talented engineers to develop the iPad app used in this study. They thank Emily Moore, Chelsea Rodi, Kate Kaufman, Talyn Steinmann, Skye Ferris, Zöe Robertson, D.J. Jimenez, and Ashley Sullender for research assistance, and Gus Sjobeck for statistical support. They thank many students and colleagues for helpful comments and discussions. They are indebted to the participants and their families for their enthusiastic participation.

Author contributions

V.K.J. conceived and designed the study. V.K.J. and K.M.S. collected the data. A.J.L., V.K.J., and K.M.S. carried out data analyses. A.J.L. and V.K.J. created visualizations. V.K.J. wrote the manuscript. A.J.L. and K.M.S. provided feedback.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: V.K.J. teaches a community engagement seminar at the University of Virginia in which some participants in the study have been involved. A.J.L. and K.M.S. have served as teaching assistants for this seminar.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Akhil Autism Foundation. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Supplemental material

Supplemental material for this article is available online.
