Abstract

The COVID-19 pandemic introduced significant barriers to training by limiting in-person professional activities, prompting the development of novel remote training approaches. We developed and evaluated a remote training approach for master trainers of the Caregiver Skills Training Program. Master trainers support community practitioners, who in turn deliver the Caregiver Skills Training Program to caregivers of children with developmental delays or disabilities. The aim of this study was to evaluate the remote training of master trainers on the Caregiver Skills Training Program. Twelve of the 19 practitioners who enrolled in the training completed the study. The training began with a 5-day in-person session completed before the pandemic. Participants then learned to identify Caregiver Skills Training Program strategies through supported coding of seven video recordings over 7 weekly meetings with group discussions. Finally, they independently coded a set of 10 videos for Caregiver Skills Training Program strategies. We found that master trainers’ scoring reliability varied over the 7 weeks of supported coding. All but one participant reached moderate or good independent scoring reliability, despite being unable to practice the Caregiver Skills Training Program strategies with children because of the pandemic. Taken together, our findings illustrate the feasibility and value of remote training approaches for implementing interventions.

Lay Abstract

The COVID-19 pandemic interrupted in-person professional activities. We developed and evaluated a remote training approach for master trainers of the Caregiver Skills Training Program. Master trainers support community practitioners, who in turn deliver the Caregiver Skills Training Program to caregivers of children with developmental delays or disabilities. The Caregiver Skills Training Program teaches caregivers how to use strategies to enhance learning and interactions with their child during everyday play and home activities and routines. The aim of this study was to evaluate the remote training of master trainers on the Caregiver Skills Training Program. Twelve of the 19 practitioners who enrolled in the training completed the study. The training began with a 5-day in-person session completed before the pandemic. Participants then learned to identify Caregiver Skills Training Program strategies by coding video recordings over 7 weekly meetings with group discussions. Finally, they independently coded a set of 10 videos for Caregiver Skills Training Program strategies. We found that all but one participant was able to reliably identify Caregiver Skills Training Program strategies from video recordings, despite being unable to practice the strategies with children because of the pandemic. Taken together, our findings illustrate the feasibility and value of remote training approaches for implementing interventions.

Keywords

With the objective of disseminating an evidence-based intervention for community settings, the World Health Organization (WHO) and Autism Speaks (AS) developed an open-access caregiver-mediated intervention called the “Caregiver Skills Training Program (CST).” CST targets families of children aged 2–9 years with, or at risk of, a neurodevelopmental disorder (NDD), including autism (Salomone et al., 2019). CST was developed based on a systematic review and meta-analysis, followed by consultation with experts in parent-mediated interventions (Salomone et al., 2019). Evidence for the efficacy of CST is still emerging, with promising preliminary results for improving parent skills and self-efficacy (Salomone et al., 2022). CST was designed to be delivered by non-specialist care providers (e.g. nurses, community-based workers, or peer caregivers) supported by local specialists (Salomone et al., 2019). This approach, known as the cascade training model, promises scalability and sustainment of CST. The cascade training model begins with the training of master trainers (MTs) by CST experts. MTs then train facilitators, who in turn deliver the intervention to caregivers (Salomone et al., 2019). CST experts are a group of researchers and consultants who developed the intervention, while MTs are clinicians with experience in neurodevelopment who are responsible for training and monitoring “facilitators,” that is, non-specialized staff in their local context. Finally, facilitators deliver the intervention to caregivers; they can come from various fields, such as nursing or community health care, or can themselves be caregivers of children with NDD.

The WHO/AS CST MT training was initially designed to include a 5-day in-person training followed by practitioners’ direct practice with children with special needs. Implementation fidelity was originally established based on expert ratings of videos of practitioners themselves implementing CST strategies directly with children during these practice sessions (detailed information on the strategies can be found in World Health Organization, 2022). Because of the COVID-19 pandemic, MTs engaged in the initial training were unable to complete this direct practice step during a period of maximal restrictions on in-person interactions. To support the MTs’ continued learning in a remote context, we developed a novel training protocol focusing on the MTs’ ability to reliably score CST strategies in previously recorded CST practice sessions.

Current study

This study reports on the feasibility of the novel training protocol in supporting MTs’ ability to recognize CST strategies and judge the quality of CST implementation via video coding. The project had two specific aims: first, to examine whether 7 weeks of video coding and group discussions would increase MTs’ scoring reliability from baseline; and second, to describe MTs’ scoring reliability during a follow-up independent scoring period.

Methods

Participants

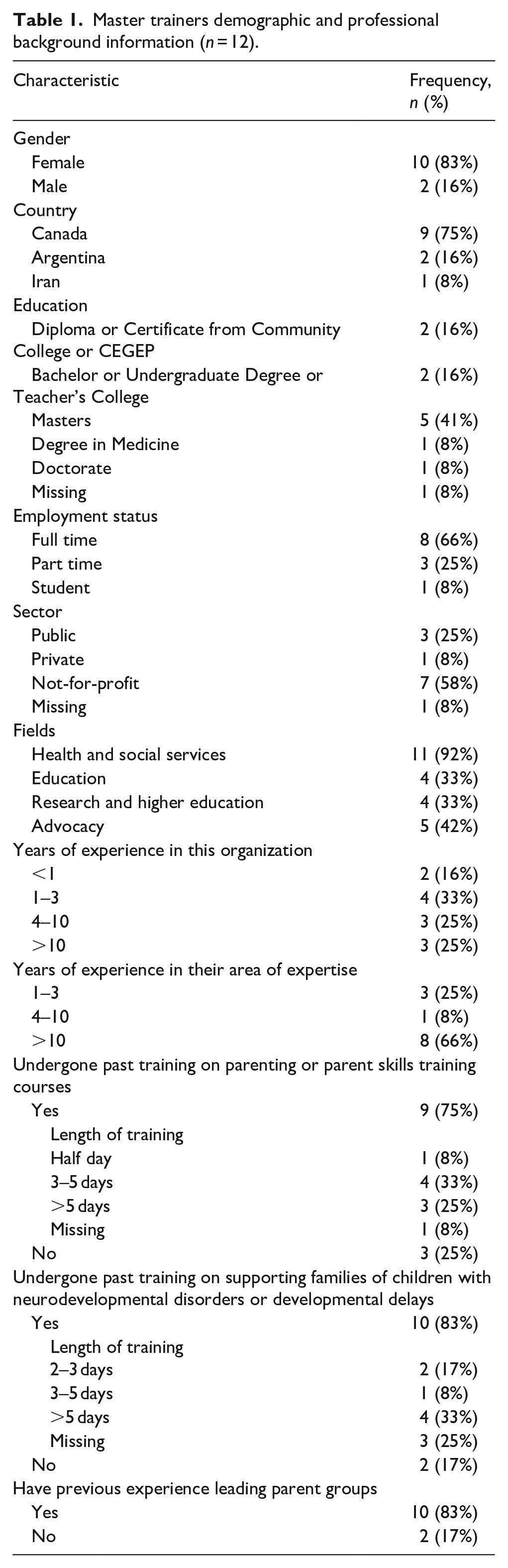

Participants were largely recruited from four community and research sites across Canada; participants from other countries who expressed interest in the remote training were also included. Nineteen MTs initially enrolled in the study, from Canada (n = 11), the United States (n = 1), Argentina (n = 3), Egypt (n = 1), Ethiopia (n = 2), and Iran (n = 1). Participating MTs were professionals providing autism intervention services in their communities (e.g. autism consultants, speech-language therapists, psychologists), and all had completed the 5-day in-person didactic training before the pandemic. Seven MTs did not complete the study because of service delivery challenges related to COVID-19 (n = 1), organizational changes (n = 1), training commencing at their own site (n = 1), or other unspecified reasons (n = 4). Demographic data for the 12 remaining MTs are presented in Table 1.

Table 1. Master trainers’ demographic and professional background information (n = 12).

Procedure

CST MT training

Because of the COVID-19 pandemic, the standard CST training was modified for remote delivery. Following a 5-day in-person didactic course completed before the pandemic (as detailed in Montiel-Nava et al., 2022), MTs were asked to watch seven 7–10 min videos of an adult–child interaction and to complete the WHO-CST Adult/Child Interaction Fidelity Scale (WHO-CST Team, unpublished; described below) after each video. After completing each scale, MTs reviewed the scoring key with comments from a CST expert and prepared their questions for a weekly 2-h virtual group meeting with two CST experts and the other MTs. This supported coding period lasted 7 weeks, with one video coded and one group meeting held each week. MTs then moved to independent coding, in which they completed the WHO-CST Adult/Child Interaction Fidelity Scale on 10 further videos on their own. After each set of five videos, they attended a 2-h video coding group discussion with a CST expert. In total, MTs coded 17 videos: 7 during the 7-week supported coding period (one per week) and 10 during the independent coding period.

Setting

Training materials

We used available videos from the Autism Speaks library showing an interaction between an adult and a child during either play or a home routine. The Autism Speaks library is a repository to which teams implementing CST around the world upload their CST practice videos for review. Seventeen videos were available, with consent from the subjects of the recordings to use them for training and education.

Platforms

All group meetings were hosted virtually via Zoom. Data were collected via the web-based data capture platform REDCap (Harris et al., 2009). MTs also had access to a personalized, password-protected website as an online learning management tool. MTs submitted their fidelity checklists through a separate secure web-based platform, OneDrive.

WHO-CST Adult/Child Interaction Fidelity Scale

MTs’ scoring reliability for adult–child interactions was assessed using the 11-item WHO-CST Adult/Child Interaction Fidelity Scale (WHO-CST Team, unpublished) on each of the 17 videos. The measure is bespoke to CST and rates the extent to which CST strategies are implemented during an interaction with a child. Higher scores correspond to higher-quality and more consistent implementation of CST strategies.

Data analysis

Intra-class correlations (ICCs) were used to examine agreement between each MT and a CST expert (gold standard) on the total score over the 11 items of the WHO-CST Adult/Child Interaction Fidelity Scale at all timepoints. ICC values typically range from 0 to 1, with values closer to 0 indicating no agreement and values closer to 1 indicating complete agreement (Rankin & Stokes, 1998); sample estimates can also fall below 0 when the variability between the rated targets is smaller than the residual (disagreement) variability. ICCs below 0.5, between 0.5 and 0.75, between 0.75 and 0.9, and higher than 0.9 are considered poor, moderate, good, and excellent reliability, respectively (Koo & Li, 2016). A linear Generalized Estimating Equation (GEE) model on ICC scores, using an independence working correlation structure, was conducted to examine the effect of training on MTs’ supported scoring reliability across the seven timepoints, controlling for MTs’ years of experience in the field and their previous training in supporting families of children with NDDs. For the independent coding period, ICC scores averaged across the 10 independently coded videos are reported descriptively.
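The text does not specify which ICC form was used. As a minimal sketch, assuming each per-video ICC treats the scale items as the rated targets and the MT and the CST expert as two raters, and assuming the two-way random-effects, absolute-agreement, single-rater form (often labelled ICC(2,1)), the agreement index and the Koo & Li (2016) bands could be computed as below. The function names, the boundary handling of the bands, and the item scores are hypothetical illustrations, not the study’s code or data.

```python
from statistics import mean


def icc_2_1(ratings):
    """Two-way random-effects, absolute-agreement, single-rater ICC.

    `ratings` has one row per rated target (assumed here: one row per
    fidelity-scale item) and one column per rater (assumed here:
    [master_trainer_score, expert_score]).
    ICC = (MS_R - MS_E) / (MS_R + (k - 1) MS_E + k (MS_C - MS_E) / n)
    """
    n = len(ratings)     # number of targets (e.g. 11 items)
    k = len(ratings[0])  # number of raters (e.g. MT + expert = 2)
    all_scores = [x for row in ratings for x in row]
    grand = mean(all_scores)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]

    # Two-way ANOVA sums of squares: targets (rows), raters (columns), residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for x in all_scores)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denom = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denom == 0:
        raise ValueError("ICC is undefined when the ratings show no variance")
    # Negative whenever ms_rows < ms_error, i.e. disagreement dominates.
    return (ms_rows - ms_error) / denom


def koo_li_band(icc):
    """Koo & Li (2016) labels; assigning 0.5 and 0.75 to the higher band
    and 0.9 to "good" is an assumption about boundary handling."""
    if icc < 0.5:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.9:
        return "good"
    return "excellent"


# Hypothetical item scores for one video, one row per item: [MT, expert].
video_scores = [[2, 2], [3, 3], [1, 2], [3, 3], [2, 2], [1, 1],
                [3, 2], [2, 2], [3, 3], [1, 1], [2, 3]]
icc = icc_2_1(video_scores)
print(round(icc, 2), koo_li_band(icc))
```

Absolute agreement (rather than consistency) is used in this sketch because an MT who scores every item one point higher than the expert should not be counted as fully reliable; other ICC forms would give different values.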

Results

Scoring reliability

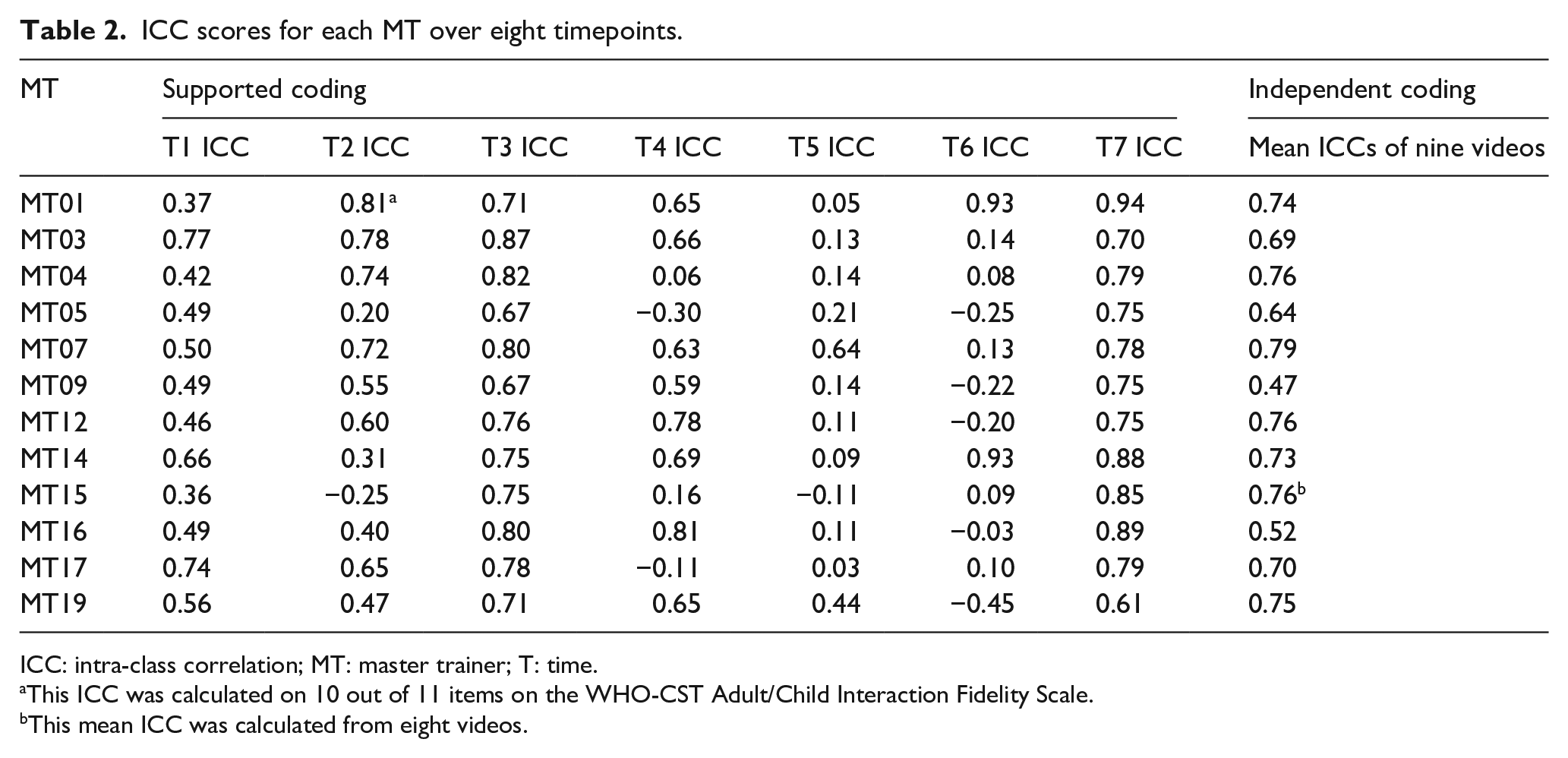

The mean ICC scores for the supported coding period are shown in Table 2. The linear GEE model showed a significant main effect of time, Wald χ2(1) = 1301.18, p < 0.01, suggesting that scoring reliability was not stable over time. Except for timepoint 3 (T3 = 0.64), the mean ICC scores at all earlier timepoints (T1 = 0.39, T2 = 0.38, T4 = 0.41, T5 = 0.04, T6 = 0.08) were significantly lower than at the final timepoint (T7 = 0.66). The effects of MTs’ years of experience and previous training did not reach significance (Wald χ2(1) = 3.347, p = 0.06, and Wald χ2(1) = 1.211, p = 0.27, respectively).

Table 2. ICC scores for each MT over eight timepoints.

ICC: intra-class correlation; MT: master trainer; T: time.

This ICC was calculated on 10 out of 11 items on the WHO-CST Adult/Child Interaction Fidelity Scale.

This mean ICC was calculated from eight videos.

All MTs had low scoring agreement with the CST expert on one video during the independent coding period (mean ICC = −0.03), which prompted further investigation of the video’s quality. The video may have been challenging to code, possibly because of the constantly moving camera and blurry images, so we excluded its data. The mean ICC scores for each MT across the nine remaining independently coded videos are shown in Table 2. Of the 12 MTs, 11 reached moderate or good reliability: seven scored between 0.5 and 0.75 (moderate reliability) and four scored above 0.75 (good reliability; Koo & Li, 2016).
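The summary steps for the independent coding period (checking each video’s mean ICC across MTs, excluding a problematic video, averaging each MT’s remaining per-video ICCs, and labelling the mean with the Koo & Li bands) can be sketched as follows. The data structure, the MT and video identifiers, and all ICC values are hypothetical and are not the study’s data; the decision to exclude a video is taken as an input here, since in the study it followed a review of the video’s quality rather than a numeric cut-off.

```python
from statistics import mean


def reliability_band(icc):
    """Koo & Li (2016) labels; boundary handling is an assumption."""
    if icc < 0.5:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.9:
        return "good"
    return "excellent"


def video_mean_icc(per_video_iccs, video_id):
    """Mean ICC across all MTs for one video, to spot a video nobody agreed on."""
    return mean(scores[video_id] for scores in per_video_iccs.values())


def summarize_independent_coding(per_video_iccs, excluded_videos):
    """Average each MT's per-video ICCs after dropping excluded videos.

    per_video_iccs: {mt_id: {video_id: icc}} (hypothetical structure).
    Returns {mt_id: (mean_icc, band)}.
    """
    summary = {}
    for mt_id, scores in per_video_iccs.items():
        kept = [icc for video_id, icc in scores.items()
                if video_id not in excluded_videos]
        mean_icc = mean(kept)
        summary[mt_id] = (mean_icc, reliability_band(mean_icc))
    return summary


# Hypothetical values only: "v3" plays the role of the hard-to-code video.
iccs = {
    "MT-A": {"v1": 0.60, "v2": 0.70, "v3": -0.10},
    "MT-B": {"v1": 0.80, "v2": 0.90, "v3": 0.05},
    "MT-C": {"v1": 0.30, "v2": 0.40, "v3": -0.05},
}
print("v3 mean ICC across MTs:", round(video_mean_icc(iccs, "v3"), 3))
summary = summarize_independent_coding(iccs, excluded_videos={"v3"})
at_least_moderate = sum(band != "poor" for _, band in summary.values())
print(summary, at_least_moderate)
```

Averaging per-video ICCs within each MT, as the text describes, gives one summary value per MT; the count of MTs at or above the moderate band then corresponds to the “11 of 12” style of reporting used above.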

Discussion

Because of the COVID-19 pandemic, it was no longer feasible for interventionists to reach implementation fidelity for CST during periods of in-person restrictions. We therefore pivoted the training to focus on scoring reliability, targeting CST intervention strategies in video-recorded practitioner–child interactions. To our knowledge, this study is the first to examine MTs’ scoring reliability on the implementation of CST intervention strategies. MTs’ scoring reliability varied over the 7 weeks of supported coding. MTs’ independent scoring reliability after training is promising, with all but one MT achieving moderate or good independent scoring reliability. While scoring reliability would typically be established after a practitioner had achieved implementation fidelity, we have illustrated that it is feasible for CST interventionists to achieve moderate to good scoring reliability before implementing the intervention themselves.

The study has a small sample (n = 12), which may limit its generalizability; despite the statistically significant findings, it may also be underpowered for the chosen analysis. The high attrition rate (7 of 19 enrolled MTs, 37%) poses a risk of attrition bias. It is unclear whether the high attrition can be attributed to factors related to the inner setting (e.g. changes in organizational priorities) or to individual fit with the program (e.g. misalignment with professional priorities). Future research detailing challenges in implementing the training program, guided by established frameworks, is important to clarify potential challenges to feasibility and to identify mitigating strategies (e.g. establishing buy-in with each participating professional by involving organizational decision-makers). Although variability in the difficulty of training videos should ideally be minimized, our choice of videos was limited. Thus, we cannot rule out the possibility that video difficulty accounts for the variability in scoring reliability over the study period. Finally, MTs’ scoring reliability was compared with a single expert’s ratings. Because inter-rater reliability depends on the type of data, the number of raters, and how raters are selected (Gisev et al., 2013), future studies assessing the impact of these variables on scoring reliability are warranted.

This study provides evidence that interventionists can reach moderate to good scoring reliability before establishing implementation fidelity. Future studies could examine whether scoring reliability affects MTs’ subsequent implementation fidelity, and could inform the optimal sequence of training for creating effective training packages that reduce cost and time burdens, two common challenges in community settings.

Conclusion

To our knowledge, this study is the first to report on community interventionists’ scoring reliability established remotely, and we found that moderate scoring reliability is achievable through remote training. Given the paucity of guidance for creating sustainable intervention packages, these findings contribute toward models for intervention packages for sustainable community practice in the autism and neurodevelopmental field.

Community involvement statement

The research involved a “Community of Practice” consisting of community practitioners serving autistic individuals and their families, parents of autistic individuals, students, and researchers. The Community of Practice contributed to the design of the training program, including its adaptation for remote delivery and its evaluation.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported by the Public Health Agency of Canada (PHAC) and Fonds de Recherche du Québec - Santé (FRQS). We are also grateful for support from the WHO-Autism Speaks CST Team, including Chiara Servili, Erica Salomone, Laura Pacione, and Felicity Brown.